Submitted: 02 May 2024

Posted: 07 May 2024

Abstract

Keywords:

1. Introduction

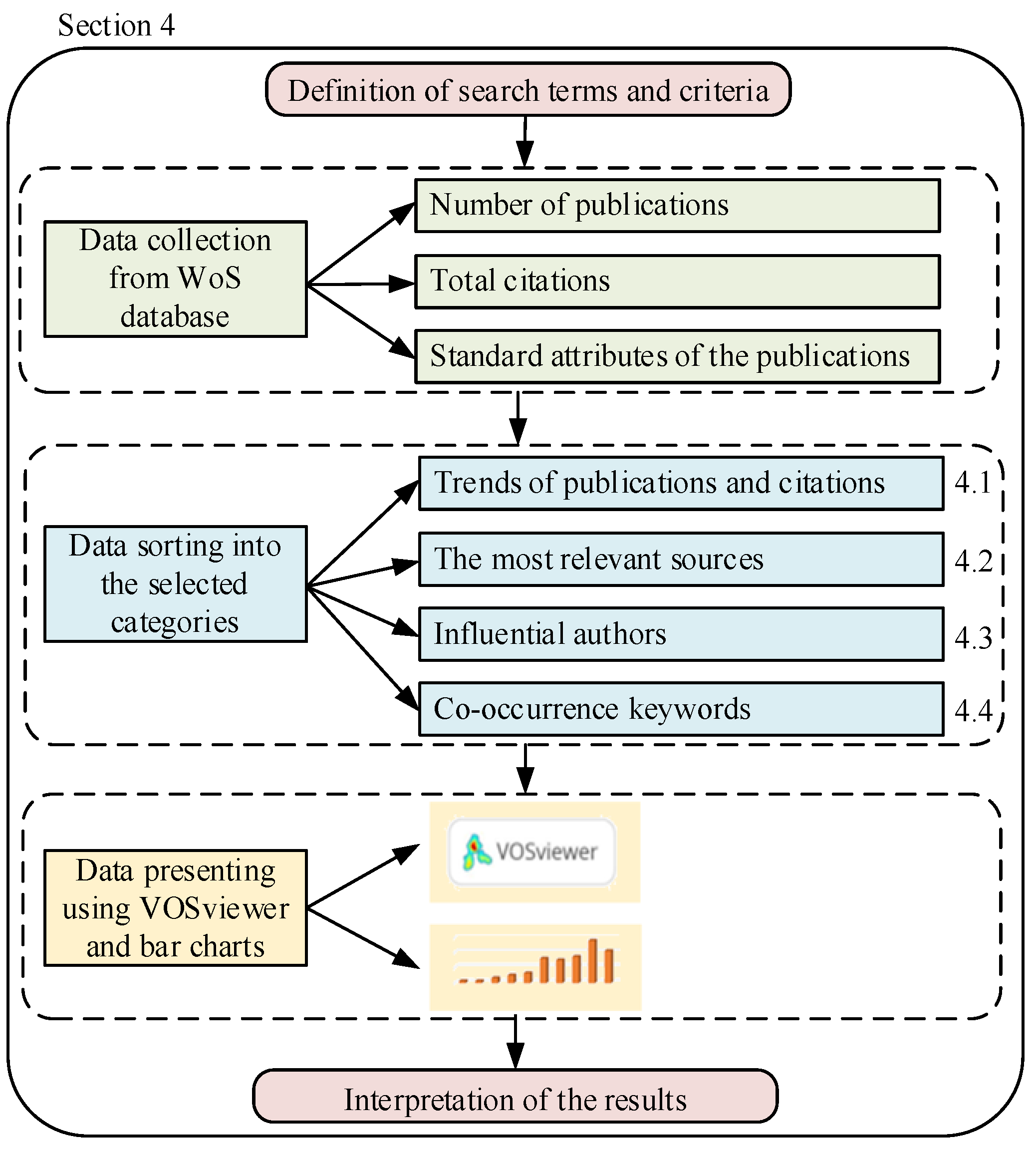

2. Materials and Methods

3. Literature Review

4. Research Findings and Results Description

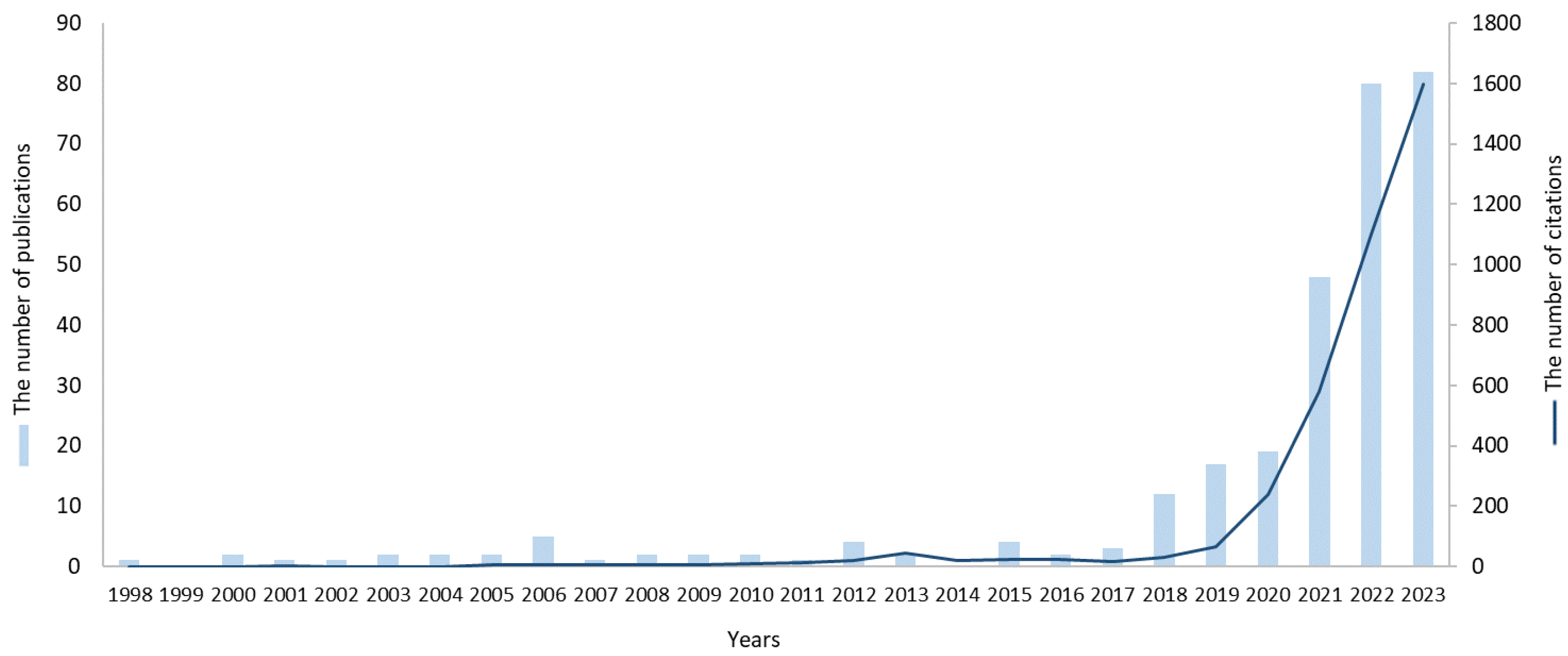

4.1. Trends of Publications and Citations

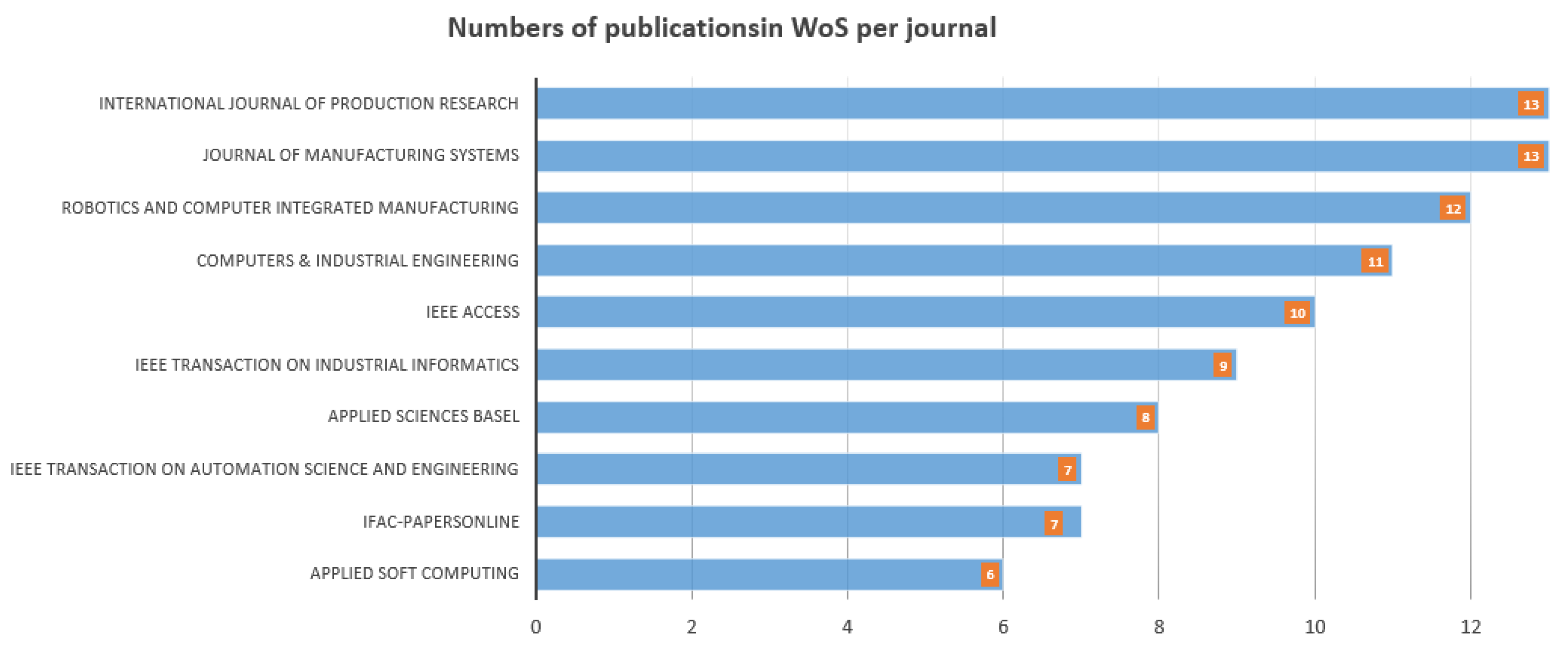

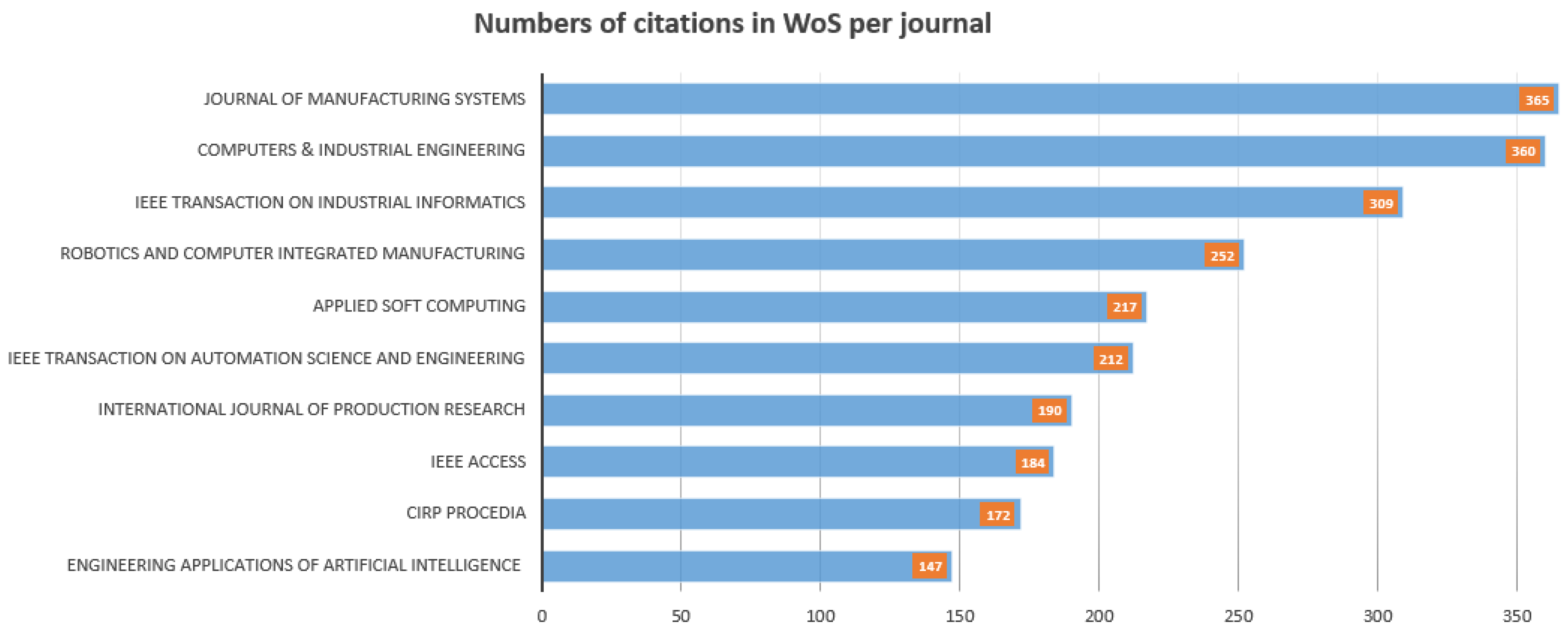

4.2. Most Relevant Sources

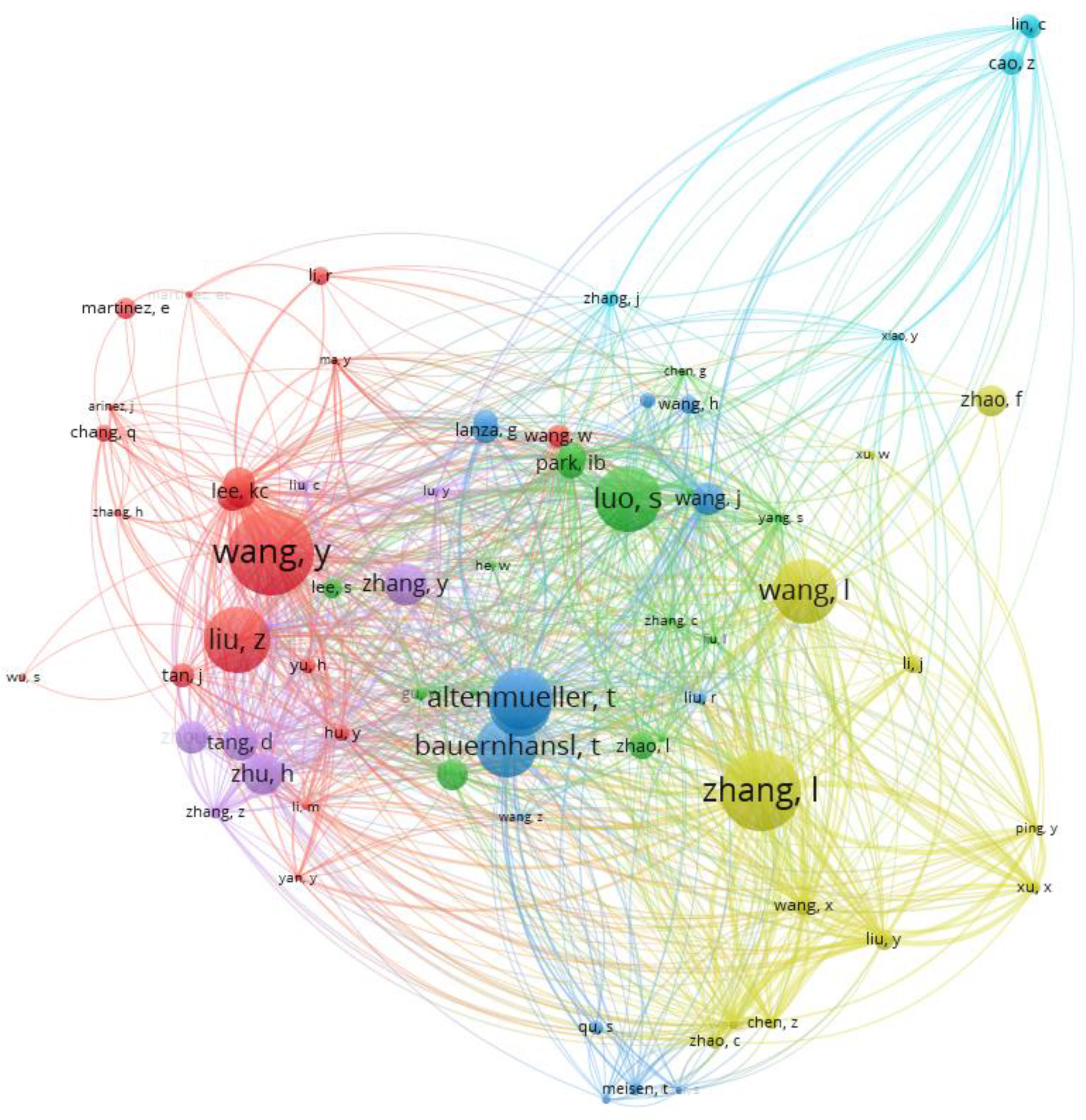

4.3. Most Cited Authors

4.4. Co-Occurrence Keywords

5. Conclusion

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

| Research area | Number of publications | Percentage |
|---|---|---|
| Manufacturing Engineering | 66 | 12.4% |
| Industrial Engineering | 59 | 11% |
| Computer Science Interdisciplinary Applications | 51 | 9.6% |
| Operations Research Management Science | 47 | 8.8% |
| Electrical Electronic Engineering | 43 | 8% |
| Computer Science and Artificial Intelligence | 41 | 7.7% |
| Automation Control Systems | 36 | 6.7% |
| Computer Science and Information Systems | 24 | 4.5% |
| Others | 167 | 31.3% |
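
As a quick consistency check on the table above, the percentage column can be recomputed from the publication counts. The sketch below assumes that each share is the row count divided by the sum of all rows, which the source does not state; the names `counts`, `share`, and `shares` are illustrative only.

```python
# Publication counts per research area, copied from the table above.
counts = {
    "Manufacturing Engineering": 66,
    "Industrial Engineering": 59,
    "Computer Science Interdisciplinary Applications": 51,
    "Operations Research Management Science": 47,
    "Electrical Electronic Engineering": 43,
    "Computer Science and Artificial Intelligence": 41,
    "Automation Control Systems": 36,
    "Computer Science and Information Systems": 24,
    "Others": 167,
}

# Assumption: the denominator is the sum of all rows in the table.
total = sum(counts.values())  # 534


def share(n: int, denominator: int) -> float:
    """Return n as a percentage of denominator, rounded to one decimal."""
    return round(100 * n / denominator, 1)


shares = {area: share(n, total) for area, n in counts.items()}

for area, pct in shares.items():
    print(f"{area}: {pct}%")
```

Under that assumption, the recomputed shares agree with the reported percentages to within 0.1 percentage point.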

| Name | Number of citations | Country | Name | Number of citations | Country |
|---|---|---|---|---|---|
| Wang, Y. | 347 | Taiwan | Altenmueller, T. | 252 | Germany |
| Zhang, L. | 325 | China | Waschneck, B. | 252 | Germany |
| Luo, S. | 272 | China | Bauernhansl, T. | 242 | Germany |
| Liu, Z. | 265 | China | Tang, D. | 161 | China |
| Wang, L. | 262 | China | Zhu, H. | 159 | China |

| Related terms/topics | Number of co-occurrences | Related terms/topics | Number of co-occurrences |
|---|---|---|---|
| Deep reinforcement learning | 69 | Dynamic scheduling | 24 |
| Optimization | 58 | Scheduling | 20 |
| Algorithm | 44 | Simulation | 19 |
| Job shop scheduling | 35 | Model | 19 |
| Genetic algorithm | 26 | Smart manufacturing | 19 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2024 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).