Submitted: 15 April 2024

Posted: 16 April 2024


Abstract

Keywords:

1. Introduction

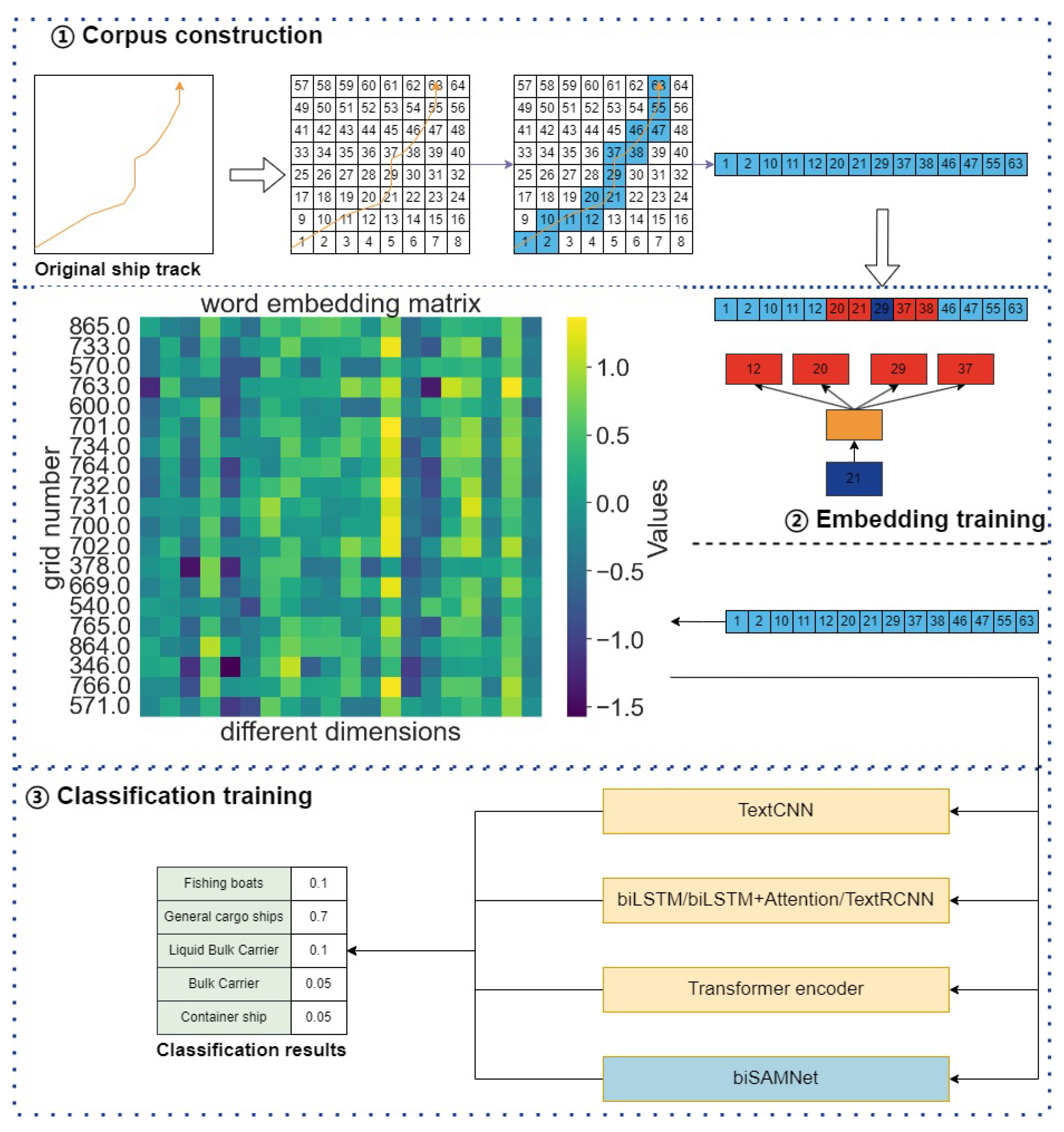

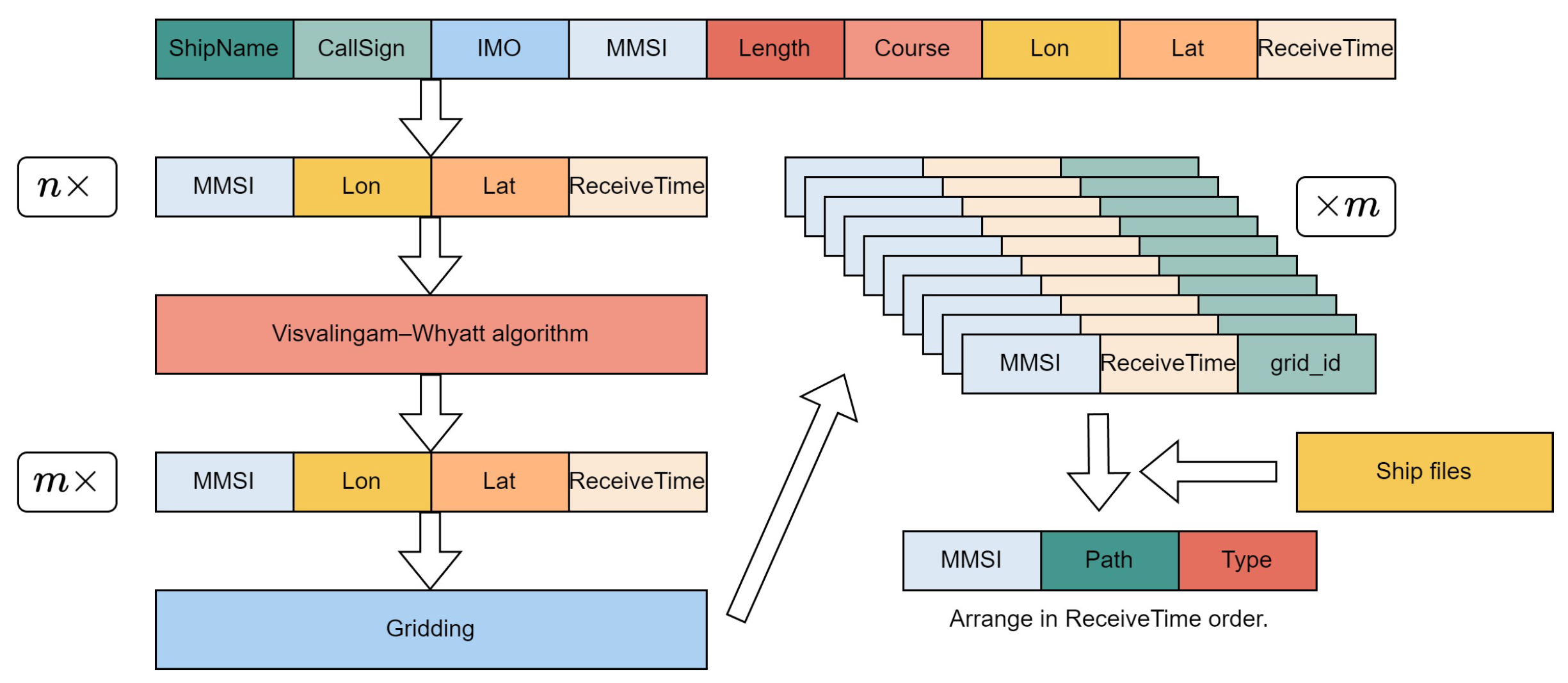

- A new method for processing vessel trajectories: This research applies the Visvalingam-Whyatt algorithm to compress vessel paths and then encodes the Automatic Identification System (AIS) data on a grid. Continuous vessel trajectories thus become discrete sequences of grid points, which are assembled into a corpus analogous to natural-language text.

- Embedding training with Word2vec: Because the available data are insufficient to train a model such as BERT from scratch, this study uses the established Word2vec technique from the field of NLP. Each grid point is treated as a word, so that static word vectors can be trained for the grid points.

- Integration of vessel trajectories with NLP techniques: The research combines ship path analysis with conventional and artificial intelligence techniques, classifying paths through supervised learning with ship types as labels. The biSAMNet model is introduced and benchmarked against several models, including RNN, CNN, and Transformer. The results indicate that biSAMNet performs well (an F1-score of 0.9504, the highest among the compared models), particularly with lower-dimensional embeddings.
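
The grid-based encoding named in the first contribution can be sketched as follows. This is only an illustration under stated assumptions, not the authors' implementation: the bounding box, the resolution of ten cells per degree, the row-major cell numbering, and the function names `grid_id` and `encode_trajectory` are all hypothetical. Repeated cells are kept, and tokens are joined with `|`, to mirror the AllRoute column of the route table.

```python
# Hypothetical study-area bounding box and resolution (assumptions, not from the paper).
LON_MIN, LON_MAX = 120, 123   # degrees east
LAT_MIN, LAT_MAX = 26, 29     # degrees north
CELLS_PER_DEG = 10            # each cell spans 0.1 degree

N_COLS = (LON_MAX - LON_MIN) * CELLS_PER_DEG  # number of cells per grid row


def grid_id(lon, lat):
    """Return the row-major cell index of a position, or None if it lies outside the box."""
    if not (LON_MIN <= lon < LON_MAX and LAT_MIN <= lat < LAT_MAX):
        return None
    col = int((lon - LON_MIN) * CELLS_PER_DEG)
    row = int((lat - LAT_MIN) * CELLS_PER_DEG)
    return row * N_COLS + col


def encode_trajectory(points):
    """Turn a list of (lon, lat) points into a '|'-separated string of cell indices."""
    ids = (grid_id(lon, lat) for lon, lat in points)
    # Points outside the study area are dropped; repeated cells are kept.
    return "|".join(str(i) for i in ids if i is not None)


# Made-up track points, used only to exercise the functions.
track = [(121.04, 27.07), (121.16, 27.08), (121.27, 27.21)]
sentence = encode_trajectory(track)
```

Each resulting string plays the role of one sentence in the corpus, with every cell index acting as a word.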

2. Materials and Methods

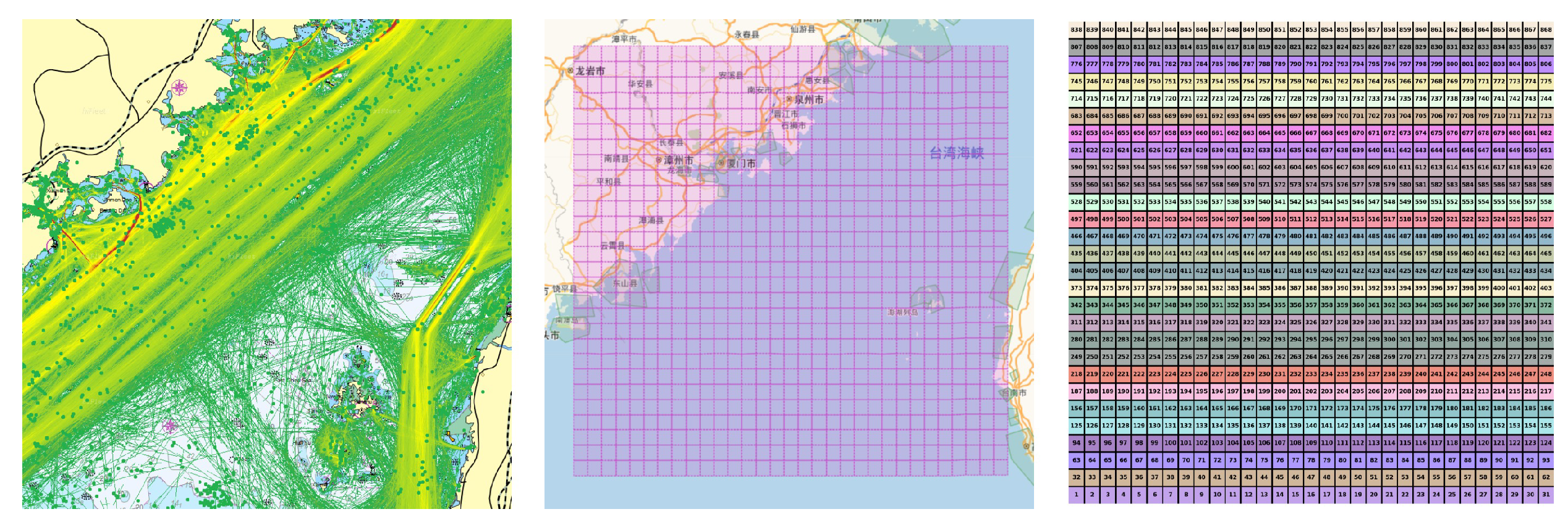

2.1. Corpus Construction and Data Pretreatment Based on Grid-Coding

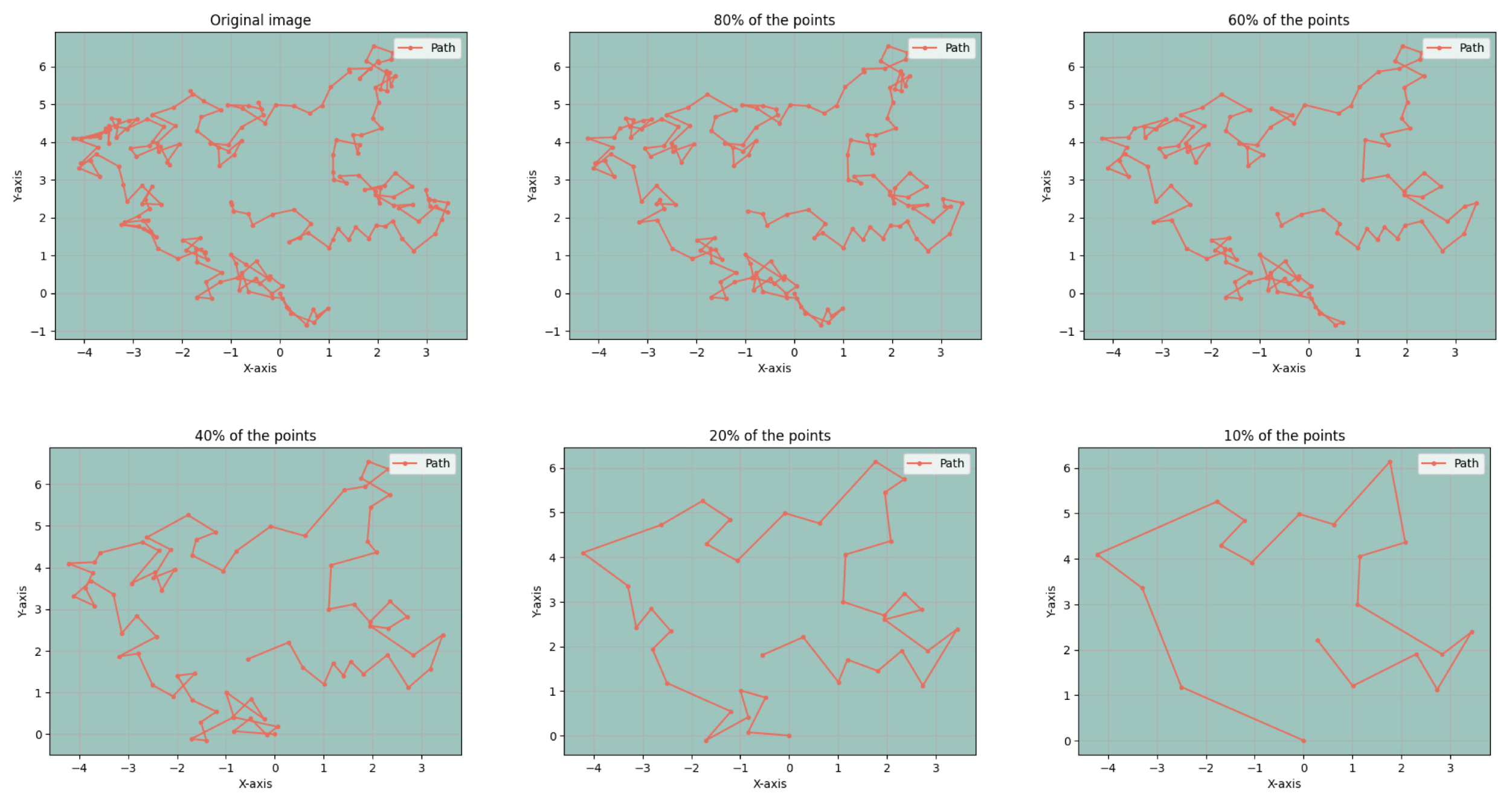

2.2. Visvalingam-Whyatt Algorithm
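
As a rough illustration of the algorithm named in this heading, the sketch below repeatedly removes the interior point whose triangle with its two neighbours has the smallest area, stopping once every remaining interior point reaches a chosen area threshold. It makes several assumptions that are not taken from the paper: coordinates are treated as planar, the threshold `min_area` and the sample track are invented, and the simple quadratic-time loop is used instead of a priority queue.

```python
def triangle_area(a, b, c):
    """Area of the triangle spanned by three (x, y) points."""
    return abs((b[0] - a[0]) * (c[1] - a[1]) - (c[0] - a[0]) * (b[1] - a[1])) / 2.0


def visvalingam_whyatt(points, min_area):
    """Drop the least significant interior point until all areas are >= min_area.

    The two endpoints are always kept.
    """
    pts = list(points)
    while len(pts) > 2:
        # Effective area of every interior point with its current neighbours.
        areas = [
            triangle_area(pts[i - 1], pts[i], pts[i + 1])
            for i in range(1, len(pts) - 1)
        ]
        smallest = min(range(len(areas)), key=areas.__getitem__)
        if areas[smallest] >= min_area:
            break
        del pts[smallest + 1]  # +1 because areas[0] belongs to pts[1]
    return pts


# Invented track: the small wiggle at (1, 0.1) is removed, the sharp turn at (3, 2) is kept.
track = [(0, 0), (1, 0.1), (2, 0), (3, 2), (4, 0), (5, 0)]
simplified = visvalingam_whyatt(track, min_area=0.5)
```

Ranking points by triangle area means that near-collinear points disappear first, while turns that change the shape of the path survive longer.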

2.3. Word Embedding Training Using Word2vec

2.4. Cosine Similarity

2.4.1. One-Hot Coding

2.4.2. Word Embedding Vector Averaging
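
One common way to compare two routes with averaged word embeddings is to take the mean of the grid-point vectors in each route and compute the cosine of the angle between the two means. The sketch below assumes that reading of the heading; the two-dimensional toy embeddings and the function names are hypothetical and do not come from the paper.

```python
import math


def mean_vector(tokens, embeddings):
    """Average the embedding vectors of the tokens that have an embedding."""
    vecs = [embeddings[t] for t in tokens if t in embeddings]
    if not vecs:
        raise ValueError("no token has an embedding")
    dim = len(vecs[0])
    return [sum(v[k] for v in vecs) / len(vecs) for k in range(dim)]


def cosine_similarity(u, v):
    """Cosine of the angle between u and v; 0.0 if either vector is all zeros."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0.0 or norm_v == 0.0:
        return 0.0
    return dot / (norm_u * norm_v)


# Toy embeddings for three grid-point tokens (invented values).
toy = {"540": [1.0, 0.0], "541": [0.0, 1.0], "572": [1.0, 1.0]}

route_a = "540|541".split("|")
route_b = "572".split("|")
similarity = cosine_similarity(mean_vector(route_a, toy), mean_vector(route_b, toy))
```

Because cosine similarity ignores vector length, routes of different lengths can be compared directly once each has been reduced to its mean vector.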

2.4.3. TF-IDF

2.5. Deep Neural Network

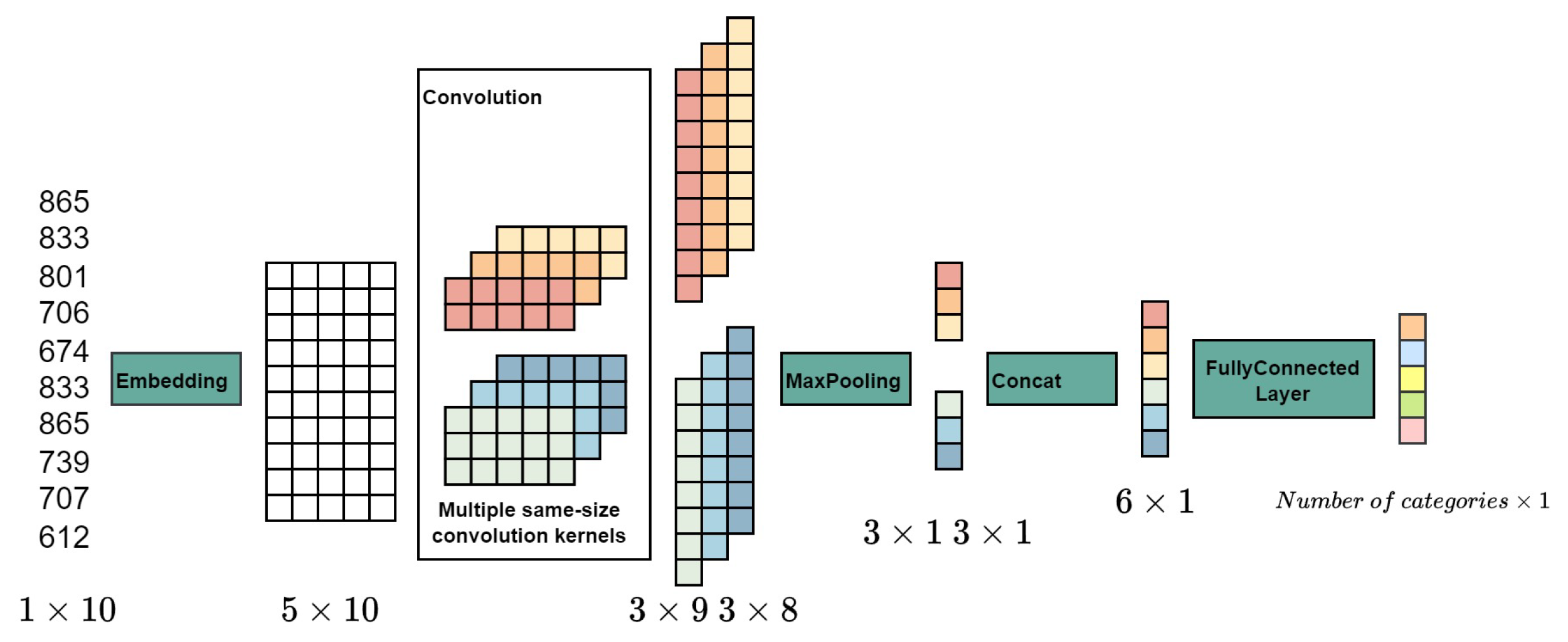

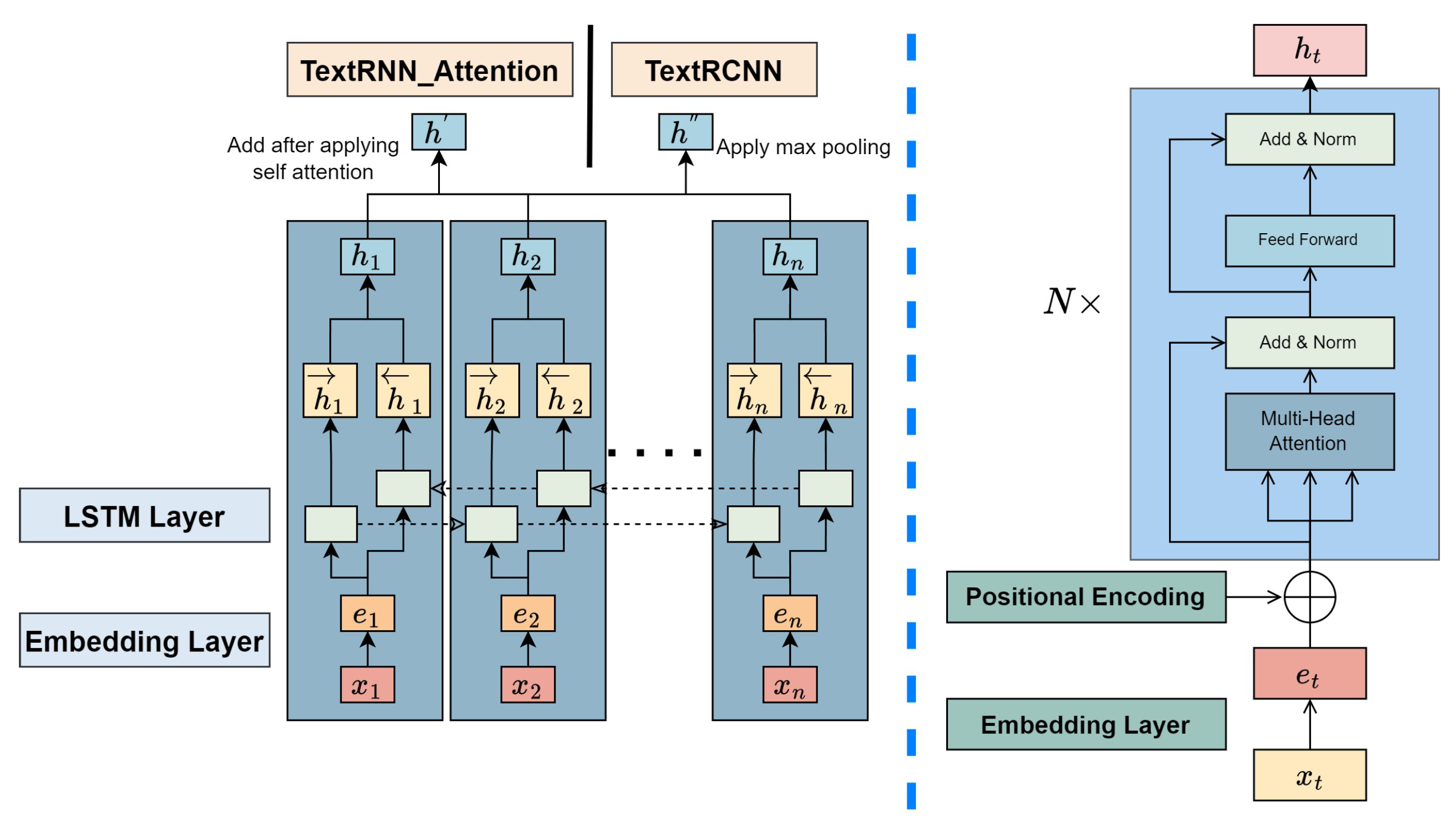

2.5.1. TextCNN

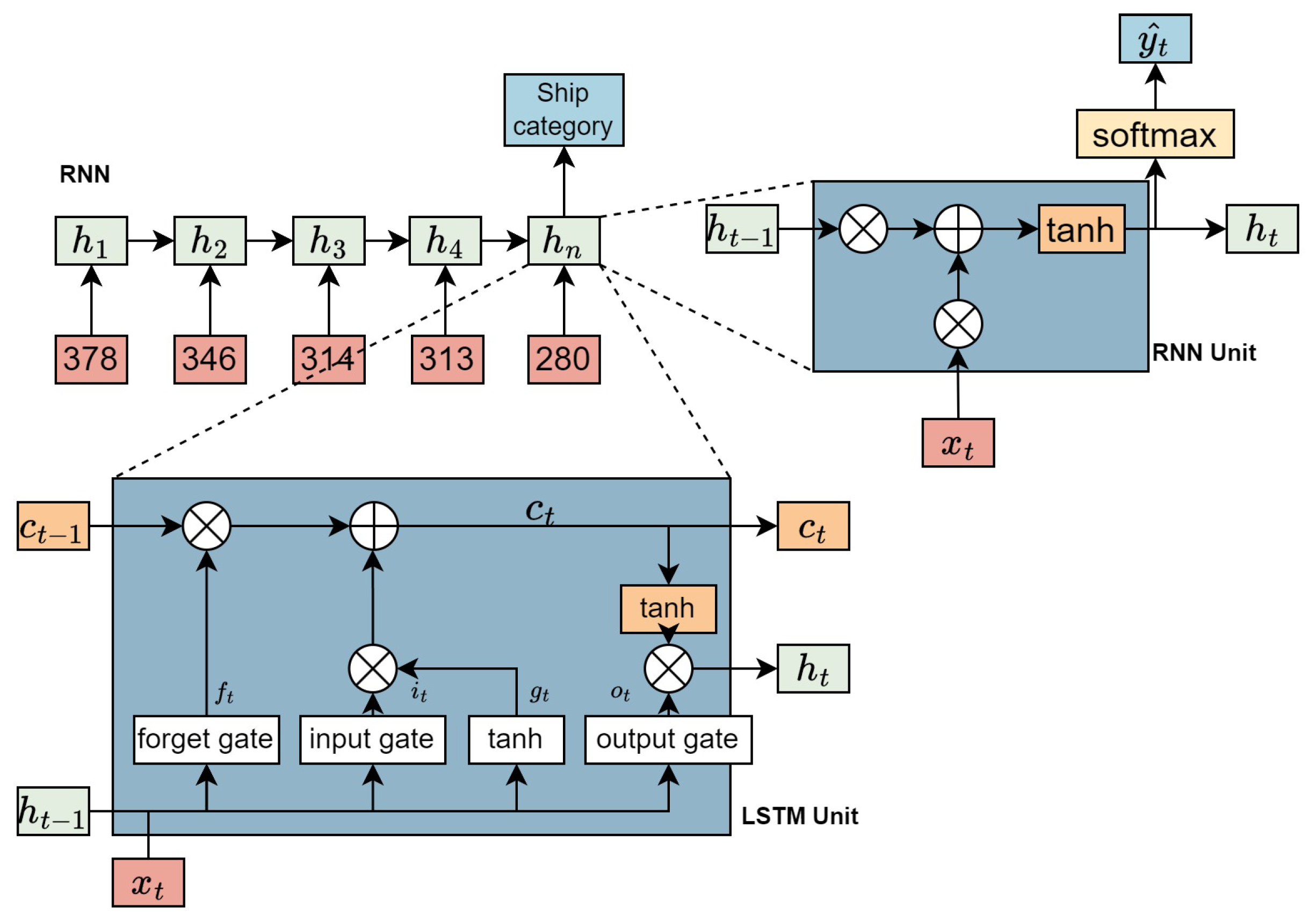

2.5.2. TextRNN

2.5.3. Encoder section of the Transformer

- Parallel processing: Self-attention computes the output for each position independently of the outputs for other positions, so all positions can be processed in parallel, which speeds up computation.

- Global information capture: The mechanism relates every pair of words directly, so the effective distance between any two words is one regardless of their positions in the sequence. It can therefore gather global information easily, matching or exceeding the ability of RNNs to capture long-range dependencies.
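
Both properties can be seen in a minimal sketch of scaled dot-product self-attention. As a simplifying assumption, the learned query, key, and value projections are omitted (Q = K = V = X), and the input matrix is invented; this is not the encoder configuration used in the study.

```python
import math


def softmax(xs):
    """Numerically stable softmax over a list of scores."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(X):
    """Scaled dot-product self-attention with Q = K = V = X (no learned projections).

    Returns the output rows and the attention-weight matrix.
    """
    d = len(X[0])
    outputs, weights = [], []
    # Each iteration uses only X and its own query row, never another output row,
    # so the iterations could run in parallel.
    for q in X:
        scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(d) for k in X]
        w = softmax(scores)  # one weight for every position in the sequence
        weights.append(w)
        outputs.append([sum(w[j] * X[j][c] for j in range(len(X))) for c in range(d)])
    return outputs, weights


# Three token vectors of dimension 2 (invented values).
X = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
out, attn = self_attention(X)
```

Every row of `attn` holds a strictly positive weight for every position, which is the sense in which any two words are one step apart.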

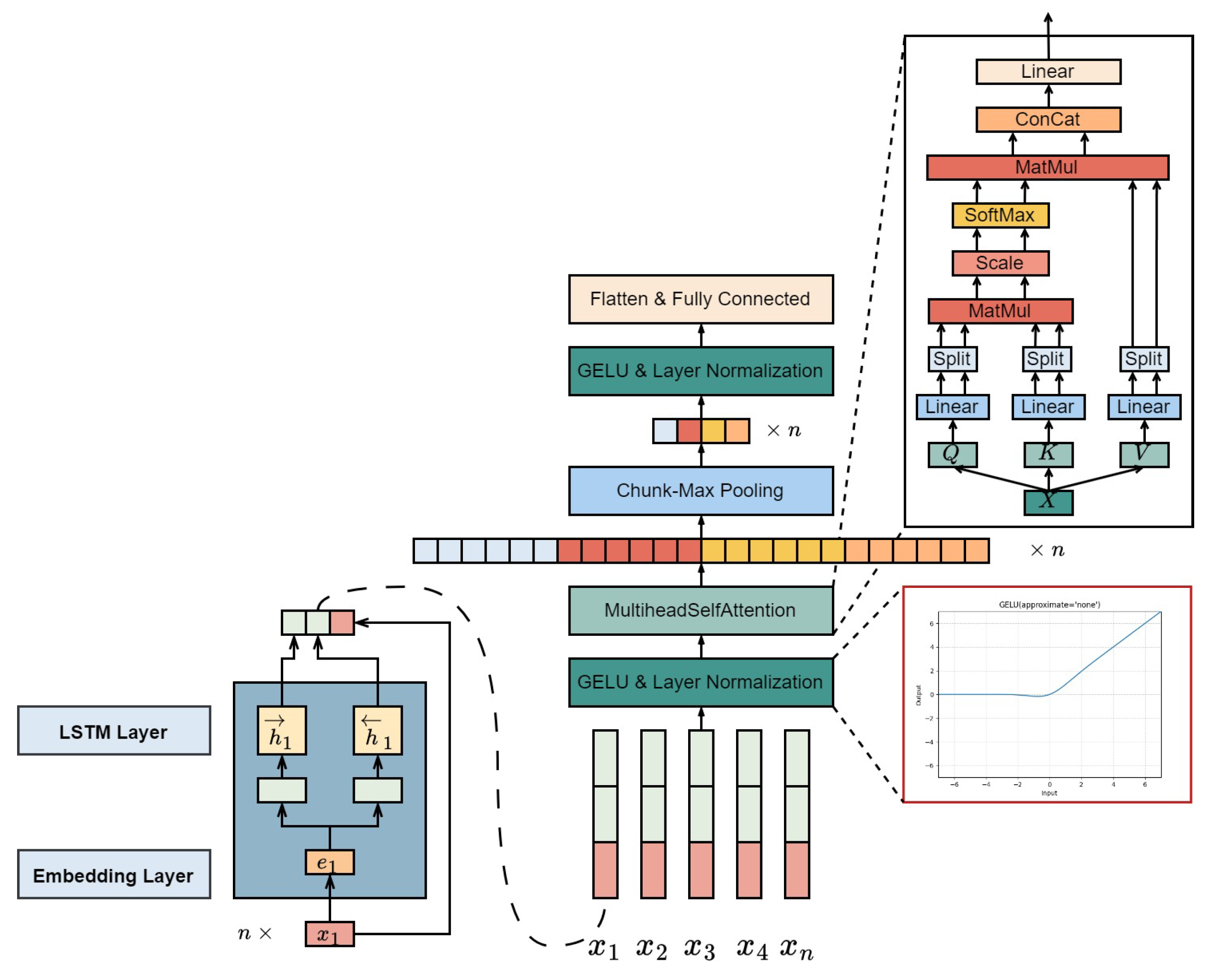

2.6. biSAMNet

3. Results

3.1. Definition of the Problem

3.2. Study Area

3.3. Experimental Data

3.4. Experimental Settings

3.5. Assessment Indicators

3.6. Calculation Results of Cosine Similarity

3.7. Path Compression Comparison

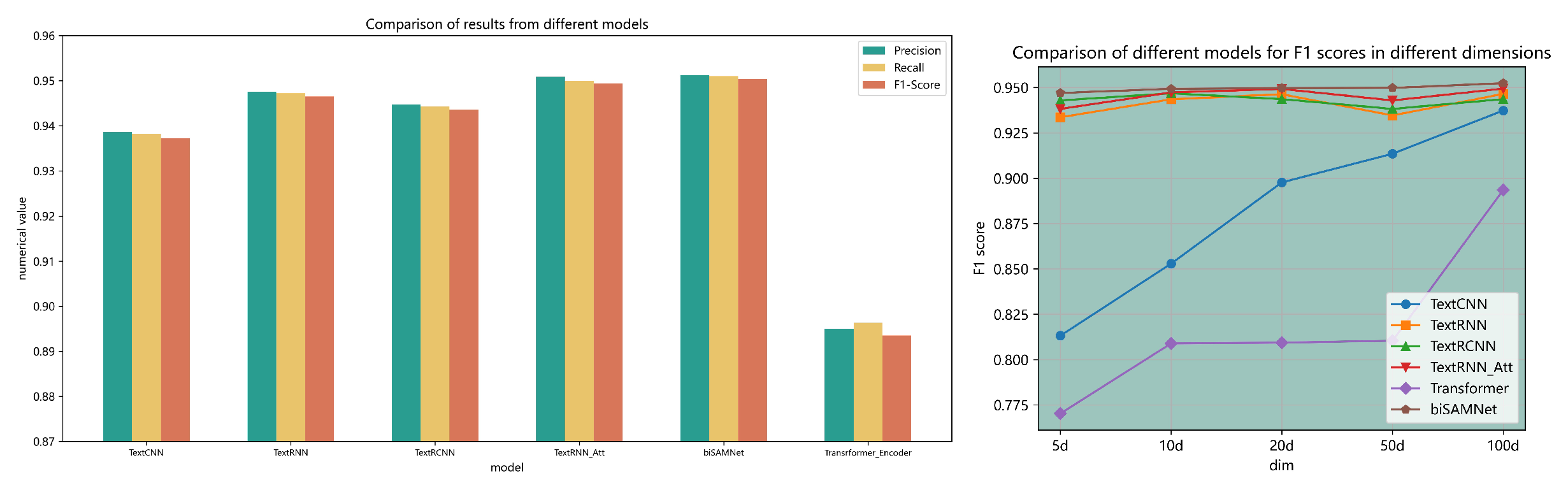

3.8. Results of Data Classification for Different Models

3.9. Ablation Analysis and Tests in Various Dimensions

4. Discussion

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

| MMSI | Time (Unix timestamp, s) | Lon (deg) | Lat (deg) |
|---|---|---|---|
| 703011305 | 1688140800 | 121.039907 | 27.072142 |
| 805701357 | 1688140807 | 121.071067 | 27.229082 |

| MMSI | AllRoute | ShipType |
|---|---|---|
| 200011305 | 632\|662\|631\|599\|600\|570\|601\|632\|633\|634 | ocean liner |
| 218820000 | 866\|833\|736\|575\|573\|542\|572\|541\|540\|540\|476\|381\|188\|188 | cargo ship |
| 211829000 | 651\|588\|432\|401\|308\|184\|184\|93\|31\|30\|28\|27\|94\|319\|382\|478\|509\|540\|540\|599\|600\|570\|570\|540\|540\|508 | Null |

| Vessel type | Number of ships | Proportion |
|---|---|---|
| Unknown | 577412 | 39.12% |
| Fishing boats | 318376 | 21.57% |
| Bulk carrier | 240337 | 16.29% |
| Other ships | 167524 | 11.35% |
| Container ship | 76864 | 5.21% |
| Liquid bulk carriers | 36612 | 2.48% |
| Oil tanker | 32790 | 2.22% |
| Passenger ship | 10759 | 0.73% |

| Method | Top-5 | Top-10 | Top-20 |
|---|---|---|---|
| One-hot | 0.814 | 0.713 | 0.693 |
| Word embedding vector averaging | 0.839 | 0.750 | 0.775 |
| TF-IDF | 0.835 | 0.753 | 0.770 |

| Method | Precision | Recall | F1-Score |
|---|---|---|---|
| TextCNN | 0.9386 | 0.9382 | 0.9373 |
| TextRNN | 0.9476 | 0.9472 | 0.9465 |
| TextRNN_Attention | 0.9509 | 0.9500 | 0.9494 |
| TextRCNN | 0.9447 | 0.9443 | 0.9436 |
| Transformer | 0.8950 | 0.8963 | 0.8935 |
| biSAMNet | 0.9512 | 0.9510 | 0.9504 |

| Method | Embedding | Precision | Recall | F1-Score |
|---|---|---|---|---|
| TextCNN | Random initialization | 0.9355 | 0.9350 | 0.9341 |
| | Trainable pre-trained layer | 0.9157 | 0.9155 | 0.9135 |
| | Frozen pre-trained layer | 0.9102 | 0.9100 | 0.9071 |
| biLSTM | Random initialization | 0.9482 | 0.9473 | 0.9467 |
| | Trainable pre-trained layer | 0.9360 | 0.9357 | 0.9346 |
| | Frozen pre-trained layer | 0.9494 | 0.9488 | 0.9482 |
| biLSTM+Attention | Random initialization | 0.9475 | 0.9467 | 0.9462 |
| | Trainable pre-trained layer | 0.9443 | 0.9436 | 0.9429 |
| | Frozen pre-trained layer | 0.9526 | 0.9518 | 0.9513 |
| TextRCNN | Random initialization | 0.9478 | 0.9474 | 0.9468 |
| | Trainable pre-trained layer | 0.9390 | 0.9389 | 0.9382 |
| | Frozen pre-trained layer | 0.9482 | 0.9476 | 0.9470 |
| Transformer | Random initialization | 0.3761 | 0.5129 | 0.4338 |
| | Trainable pre-trained layer | 0.8118 | 0.8211 | 0.8104 |
| | Frozen pre-trained layer | 0.9071 | 0.9078 | 0.9062 |
| biSAMNet | Random initialization | 0.9481 | 0.9475 | 0.9469 |
| | Trainable pre-trained layer | 0.9510 | 0.9504 | 0.9498 |
| | Frozen pre-trained layer | 0.9516 | 0.9509 | 0.9504 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2024 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).