The study investigated its three research questions using a qualitative approach augmented by quantitative methods. Based on the results, the following conclusions can be drawn regarding each of the three primary research questions.

Research Question 1: How can the NASA TRL scale be adapted to better suit the needs of technology transfer professionals in a wider range of contexts, particularly university technology transfer practitioners?

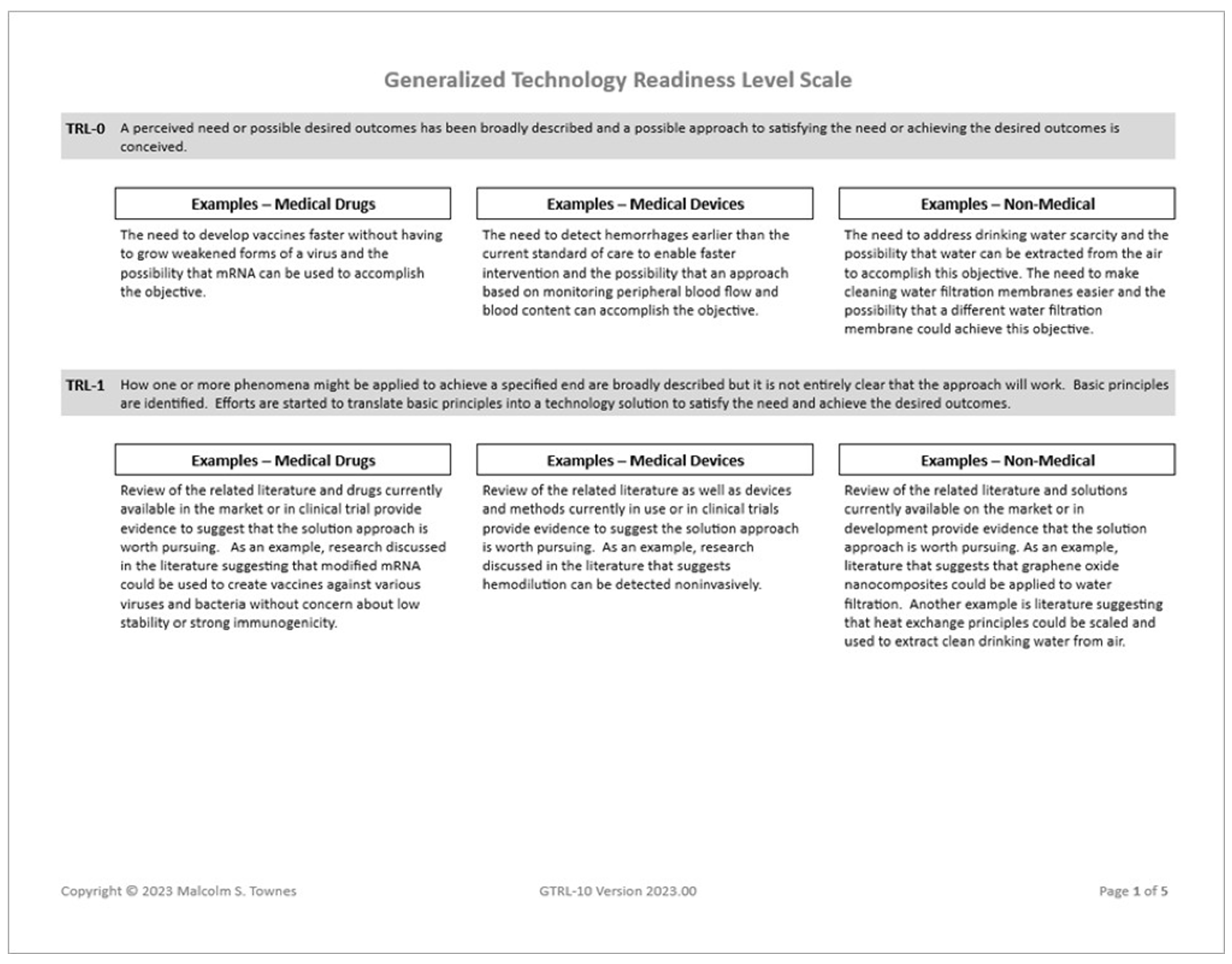

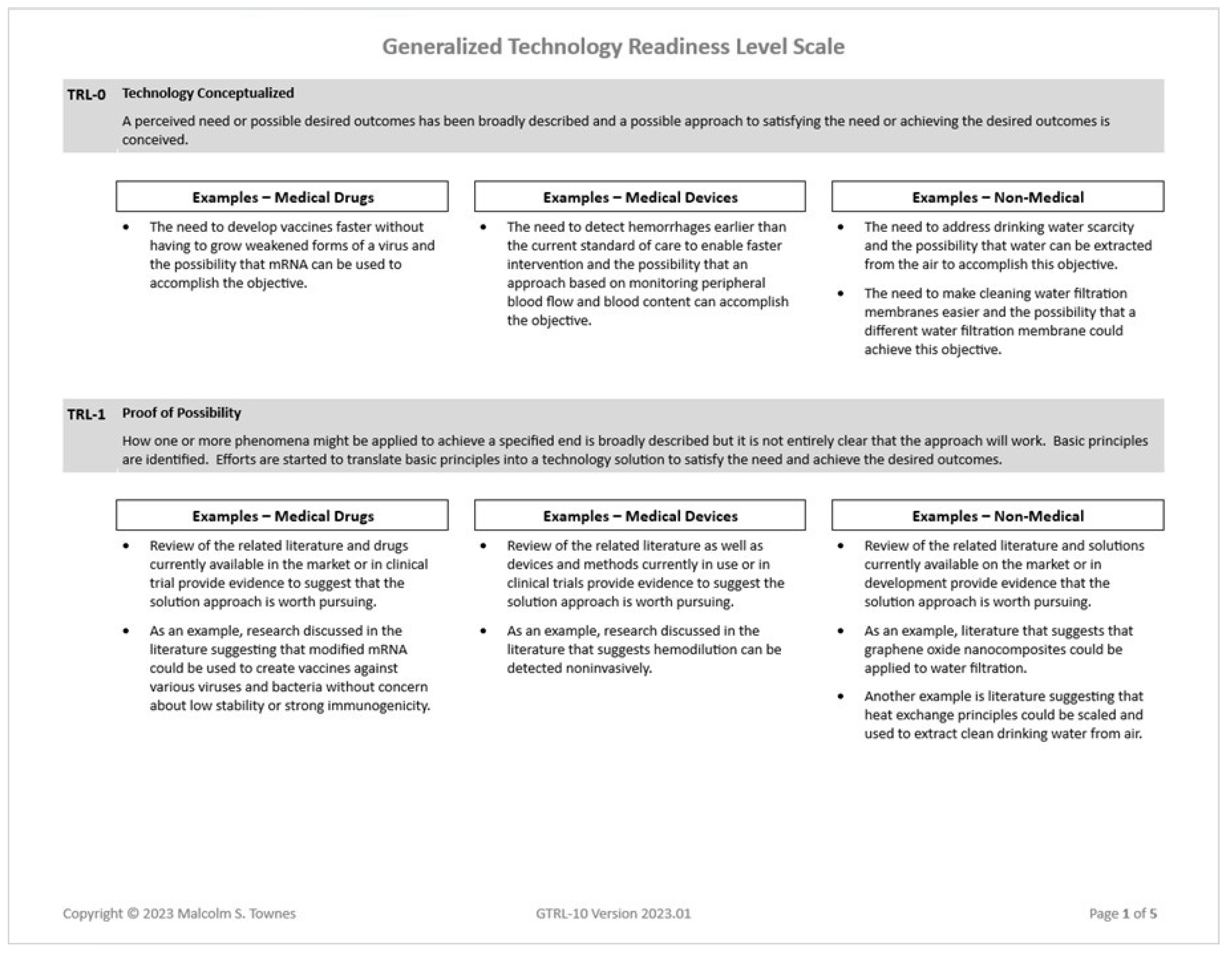

Developing the GTRL scale clarified how the NASA TRL scale could be adapted to better suit the needs of technology transfer practitioners in a wider range of contexts. The adaptation focused on minimizing ambiguity in the definition of each scale level and reducing the cognitive burden of using the scale. This involved using more precise wording in the definitions, providing context-specific examples of each readiness level, using clear and simple sentence structures, keeping sentences short where possible, avoiding misplaced modifiers, and applying a consistent style (see Guliyeva, 2023; Johnson-Sheehan, 2015; Office of the Federal Register, 2022, March 1; Yadav et al., 2021). Chunking was also used to reduce the cognitive burden on the user (see Cowan, 2010; Thalmann et al., 2019). For example, the GTRL scale comprises three broad technology categories, and no more than three context-specific examples were included for each readiness level within a category.

Research Question 2: How applicable to validating such an instrument based on “readiness levels” are the typical methods for assessing the validity of measurement instruments?

The pilot validation study provided insight into whether the typical methods for assessing the validity of measurement instruments apply to instruments that use “readiness level” scales to measure technology maturity. In short, the typical methods are quite applicable, although some minor adjustments may be necessary to apply them.

Research Question 3: How should large scale studies be structured to better ensure the proper validation of technology maturity measurement instruments that are based on “readiness levels” and intended for use at universities and in other contexts?

The pilot study provided numerous clues about how to structure large scale studies of the validity and reliability of instruments that measure technology maturity using “readiness level” scales such as the NASA TRL scale and the GTRL scale. One important lesson is that such studies should recruit participants who are somewhat homogeneous along certain dimensions, such as setting (e.g., university, federal laboratory, private sector research and development) or experience assessing technologies. Another is that participants will likely need more than casual familiarization with the scale. Finally, large scale studies will likely need to recruit between 10 and 30 participants. Email solicitations alone will probably not yield that many participants, and offering compensation will likely be necessary to minimize non-response bias.

Summary of Considerations Investigated

The study identified several challenges that efforts to validate TRL-based instruments for assessing technology maturity are likely to encounter. Investigating the following questions surfaced these challenges and revealed potential approaches for coping with them.

Consideration 1: What challenges are likely to be encountered in a larger study to assess the validity and reliability of the GTRL scale and other such instruments?

A significant challenge with using technology readiness level as a construct for measuring technology maturity is that it has no formal definition. In fact, the concept of “technology readiness” does not appear to be explicitly defined in the literature, and there is no unit or quantity of “readiness”. The concept can be operationalized contextually in numerous ways, such as the allowable failure rate for a product or system, the efficacy of a medical drug, or the variability of material properties at different design phases. But such operationalizations are context-specific proxies. Strictly speaking, they are not examples of technology readiness; they simply stand in for the concept of “technology readiness”.

Another likely difficulty is participant recruitment. Heavy workloads and recent societal changes seem to have made it harder to recruit participants for such studies. Additionally, the current environment of cyberattacks and malware makes many people hesitant to respond to email solicitations, even though such solicitations are more cost-effective for large studies.

However, the most significant challenge to performing larger validation studies is epistemological. The CVI method of assessing content validity may not be as definitive as the literature suggests. It assumes that experts are virtually omniscient, with near-complete and infallible knowledge of the construct. This cannot be true, and history is replete with examples of expert consensus that later proved wrong. When expert-based approaches are used, an instrument may incorporate dimensions that experts currently deem critical but that are later shown to be irrelevant. Content validity may also be deemed sufficiently high even when an instrument lacks relevant and useful dimensions of which the experts are simply unaware. At best, such an approach can only estimate the degree to which the content of an instrument reflects the current consensus about the dimensions of a phenomenon.

Consideration 2: Can the methods for assessing content validity be applied to the GTRL scale?

The pilot study demonstrated that the most popular methods for assessing content validity and reliability can be applied to the GTRL scale. The CVI value, the ICC value for inter-rater reliability, and Krippendorff’s alpha reliability coefficient were all successfully calculated and interpretable.
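The CVI calculation mentioned above can be sketched in a few lines. This is a minimal illustration, assuming the common convention in which each expert rates each item (here, each readiness level) on a 4-point relevance scale and an item counts as relevant when rated 3 or 4; this section does not restate the study's exact rating protocol. The helper names `item_cvi` and `scale_cvi` and the ratings are hypothetical. `item_cvi` returns the item-level CVI (I-CVI), and `scale_cvi` returns both the averaging (S-CVI/Ave) and universal-agreement (S-CVI/UA) forms of the scale-level CVI.

```python
from statistics import mean

def item_cvi(ratings, relevant=(3, 4)):
    """I-CVI: share of experts who rated the item as relevant (3 or 4 on a 4-point scale)."""
    return sum(r in relevant for r in ratings) / len(ratings)

def scale_cvi(item_ratings):
    """Return (S-CVI/Ave, S-CVI/UA) for a dict mapping item -> list of expert ratings."""
    i_cvis = [item_cvi(r) for r in item_ratings.values()]
    s_cvi_ave = mean(i_cvis)  # average of the item-level CVIs
    # share of items that every expert rated as relevant
    s_cvi_ua = sum(v == 1.0 for v in i_cvis) / len(i_cvis)
    return s_cvi_ave, s_cvi_ua

# Hypothetical ratings from two experts for four readiness levels (illustration only).
example = {
    "level 1": [4, 4],
    "level 2": [3, 4],
    "level 3": [2, 4],
    "level 4": [4, 3],
}
s_cvi_ave, s_cvi_ua = scale_cvi(example)
print(f"S-CVI/Ave = {s_cvi_ave:.2f}, S-CVI/UA = {s_cvi_ua:.2f}")
```

With only two experts, a single non-relevant rating halves an item's I-CVI, which is one reason acceptance thresholds for the CVI depend on the number of expert raters.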

Consideration 3: Should participants in a larger validation study of the reliability of the GTRL scale be limited to university and federal laboratory technology transfer professionals?

It is probably worthwhile to divide participants in larger validation studies of the GTRL scale into relatively homogeneous cohorts along various dimensions. In such a scheme, university technology transfer professionals would form one cohort and federal laboratory technology transfer professionals another. Validation studies can also be conducted with other homogeneous groups, such as private sector research and development professionals in specific industries. This will likely increase data quality and improve the internal consistency of the studies.

Consideration 4: How difficult will it be to recruit study participants?

Obtaining the participation of university technology transfer professionals appears likely to be a significant challenge in larger studies of the validity and reliability of the GTRL scale. Six (6) of the 22 other individuals (27%) solicited for the pilot study were university technology transfer professionals, but none of them responded, even though all were familiar with the researcher making the request. University technology transfer offices are notoriously understaffed and under-resourced. This may have contributed to the non-response and compounded societal trends that already tend to increase non-response. In addition, several of the 22 other individuals solicited were hesitant to click the link in the invitation email distributed via the online survey platform because they feared getting a computer virus, even though they recognized the name of the person making the request.

Consideration 5: How should a larger study familiarize participants with the GTRL scale?

Although this was only a pilot study, one interpretation of the lower-than-desired inter-rater reliability statistics is that better instruction on the proper application of the scale may be needed, both in a larger study and when the scale is used in practice. The familiarization approach used in the pilot study probably will not suffice. The nature and specifics of this instruction will likely need to vary somewhat among study groups. For example, the type and amount of instruction required by university technology transfer practitioners will likely differ non-trivially from that required by users in the private sector, such as venture capital professionals.

There are several options for familiarizing study participants with the GTRL scale. One is a video that explains the scale in more detail and gives examples of its application. Another is a live synchronous overview that not only provides a more detailed explanation with examples of how to apply the scale but also lets participants ask questions to clear up any confusion.

Consideration 6: What factors should be controlled in a larger validity and reliability study?

In larger studies of the reliability of the GTRL scale, it is probably advisable to control for the type of experience respondents have in assessing technology maturity. Although the GTRL scale is intended for a broad spectrum of users, validating the scale in more homogeneous groups will likely improve the quality and internal consistency of the validation studies. Studies can also control for the category of technology, which may yield cleaner data for a more accurate assessment of inter-rater and intra-rater reliability for a given class of technology. It may also be prudent to control for the prestige of the organization offering the technology and of the researchers who created it. All these factors could potentially affect how participants rate a technology.

Consideration 7: How viable are asynchronous web-based methods for administering a validity and reliability study?

Asynchronous web-based methods for collecting data for studies of the validity and reliability of the GTRL scale and other such instruments for measuring technology maturity level appear to be viable. There were no issues administering the questionnaires using the web-based survey platform.

Consideration 8: How viable is the approach of presenting marketing summaries of technologies to participants for them to rate?

Presenting several summaries of technologies to participants and having participants rate the technologies based on those summaries is a viable approach for conducting reliability studies. The participants did not appear to encounter any problems. This approach has the advantage of mimicking the nature and structure of how demand-side technology transfer professionals obtain initial information about technologies. Moreover, it allows one to eliminate extraneous information and control for various factors.

Consideration 9: How burdensome will it be for study participants to rate several technologies in multiple rounds?

Having study participants rate several technologies in multiple rounds does not appear to be overly burdensome. In the first round, every participant completed the questionnaire within four (4) days of starting it, and most finished within two (2) days. In the second round, all participants completed the questionnaire on the day they started it. Rating 10 technologies across multiple rounds therefore did not appear to impose an undue burden.

Consideration 10: What modifications, if any, to the GTRL scale should be considered before performing a larger validity and reliability study?

This was only a small pilot study. As such, the data and results are not sufficient to make broad generalizations. But they do provide some insights, and the results suggest that it may be prudent to consider modifications to either the instrument or the methodology, or both, before implementing larger studies of reliability.

The validation statistics provide clues to specific modifications that may be helpful. Depending on the reference, the scale-level CVI value of 0.8 is considered acceptable for two expert raters. The ICC value for inter-rater reliability had a p-value greater than 0.05 in both rounds, so the null hypothesis that there was no agreement among the raters could not be rejected. However, the inter-rater ICC value did increase from the first round of technology ratings to the second. Krippendorff’s alpha reliability coefficient also increased from the first round to the second, but remained well below 0.667, which is considered the minimal threshold for drawing tentative conclusions (see Krippendorff, 2004). The ICC value for intra-rater reliability ranged from 0.557 to 0.559 and was statistically significant, suggesting that use of the scale is moderately stable over time. However, Krippendorff’s alpha coefficient for intra-rater reliability was 0.531, which is also below the 0.667 threshold.
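For readers who want to reproduce reliability statistics of this kind, the sketch below computes two of them in plain Python. It assumes the two-way random-effects, absolute-agreement, single-rater form often written ICC(2,1) and Krippendorff’s alpha with the interval distance metric; this section does not state which ICC form or alpha metric the study used, and an ordinal metric may suit a readiness-level scale better. Ratings are arranged with one row per technology and one column per rater; `None` marks a missing rating and is handled only by the alpha function. The p-value for the ICC, which requires the F distribution, is omitted. Function names and data are hypothetical.

```python
from statistics import mean

def icc_2_1(matrix):
    """ICC(2,1): two-way random effects, absolute agreement, single rater.
    `matrix` has one row per technology and one column per rater (no missing values)."""
    n, k = len(matrix), len(matrix[0])
    grand = mean(x for row in matrix for x in row)
    row_means = [mean(row) for row in matrix]
    col_means = [mean(row[j] for row in matrix) for j in range(k)]
    ss_rows = k * sum((m - grand) ** 2 for m in row_means)  # between technologies
    ss_cols = n * sum((m - grand) ** 2 for m in col_means)  # between raters
    ss_total = sum((x - grand) ** 2 for row in matrix for x in row)
    ss_error = ss_total - ss_rows - ss_cols                 # residual
    ms_rows = ss_rows / (n - 1)
    ms_cols = ss_cols / (k - 1)
    ms_error = ss_error / ((n - 1) * (k - 1))
    return (ms_rows - ms_error) / (
        ms_rows + (k - 1) * ms_error + k * (ms_cols - ms_error) / n
    )

def krippendorff_alpha_interval(units):
    """Krippendorff's alpha with the interval metric (squared differences).
    `units` has one list of ratings per technology; None marks a missing rating."""
    # Only units with at least two ratings contribute pairable values.
    units = [[v for v in u if v is not None] for u in units]
    units = [u for u in units if len(u) >= 2]
    values = [v for u in units for v in u]
    n = len(values)
    # Observed disagreement: ordered within-unit pairs, each unit weighted by 1/(m_u - 1).
    # (Pairing a value with itself adds 0, so it does not distort the sum.)
    d_o = sum(
        sum((a - b) ** 2 for a in u for b in u) / (len(u) - 1) for u in units
    ) / n
    # Expected disagreement: ordered pairs over all pairable values, ignoring units.
    d_e = sum((a - b) ** 2 for a in values for b in values) / (n * (n - 1))
    return 1.0 - d_o / d_e

# Hypothetical ratings: four technologies (rows) rated by three raters (columns).
ratings = [[3, 4, 3], [6, 6, 7], [2, 2, 3], [8, 7, 9]]
print(f"ICC(2,1) = {icc_2_1(ratings):.3f}")
print(f"alpha (interval) = {krippendorff_alpha_interval(ratings):.3f}")
```

Both functions divide by quantities that vanish when every rating is identical, so a real analysis would need to guard against that case; dedicated statistical packages also report confidence intervals, which this sketch does not.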

There are several possible modifications to the GTRL scale that could address these issues. First, it may be worthwhile to reduce the number of indicators (i.e., readiness levels) on the GTRL scale, which will likely increase the inter-rater and intra-rater reliability of the instrument. Assessments of technology maturity in certain settings and contexts, such as university and federal laboratory technology transfer, may not need the level of precision represented by the 10-level GTRL scale and other similar scales. The GTRL scale may prove more practical and useful in its intended contexts if it is reduced from a 10-level ordinal scale to a 5-level ordinal scale (see Table 12 and Supplementary Resource 7).
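The intuition behind reducing the number of readiness levels can be illustrated with raw pairwise agreement. The sketch below assumes, for illustration only, that the 10 levels are numbered 1 to 10 and that adjacent levels are merged (1-2 into 1, 3-4 into 2, and so on); this mapping, the helper names, and the ratings are hypothetical, and the reduced scale actually proposed is the one in Table 12 and Supplementary Resource 7. Merging turns some adjacent-level disagreements into agreements, but disagreements that straddle a merge boundary (e.g., 2 versus 3) survive.

```python
from itertools import combinations

# HYPOTHETICAL 10-to-5 mapping that merges adjacent levels (1-2 -> 1, ..., 9-10 -> 5).
# The reduced scale actually proposed for the GTRL is described in Table 12.
HYPOTHETICAL_MAP = {level: (level + 1) // 2 for level in range(1, 11)}

def collapse(matrix, mapping=HYPOTHETICAL_MAP):
    """Re-express every rating on the reduced scale."""
    return [[mapping[x] for x in row] for row in matrix]

def pairwise_exact_agreement(matrix):
    """Share of rater pairs, pooled over all technologies, that chose the same level."""
    agree = total = 0
    for row in matrix:
        for a, b in combinations(row, 2):
            agree += a == b
            total += 1
    return agree / total

# Hypothetical ratings: four technologies (rows) rated by three raters (columns).
ratings = [[3, 4, 3], [6, 6, 7], [2, 2, 3], [8, 7, 9]]
print(pairwise_exact_agreement(ratings))            # 10-level scale
print(pairwise_exact_agreement(collapse(ratings)))  # hypothetical 5-level scale
```

On these made-up ratings, raw agreement rises from 0.25 to 0.5. Because chance agreement also rises when there are fewer categories, chance-corrected statistics such as the ICC and Krippendorff’s alpha would be expected to improve less than raw agreement does.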

It may also be worthwhile to reformat the examples for each readiness level as a series of bullet points (see Figure 4). This may reduce the cognitive burden of using the instrument and allow users to locate the information needed to rate a technology more quickly. Finally, it may be helpful to include the higher order concept (HOC), or theme, for each readiness level on the instrument. The HOC should be displayed conspicuously so that it is easy to locate visually (see Supplementary Resource 7 and Supplementary Resource 8). It may serve as a shorthand that helps users mentally organize the details of the instrument and apply the scale more effectively.