Submitted: 16 August 2023

Posted: 18 August 2023

Abstract

Keywords:

1. Introduction

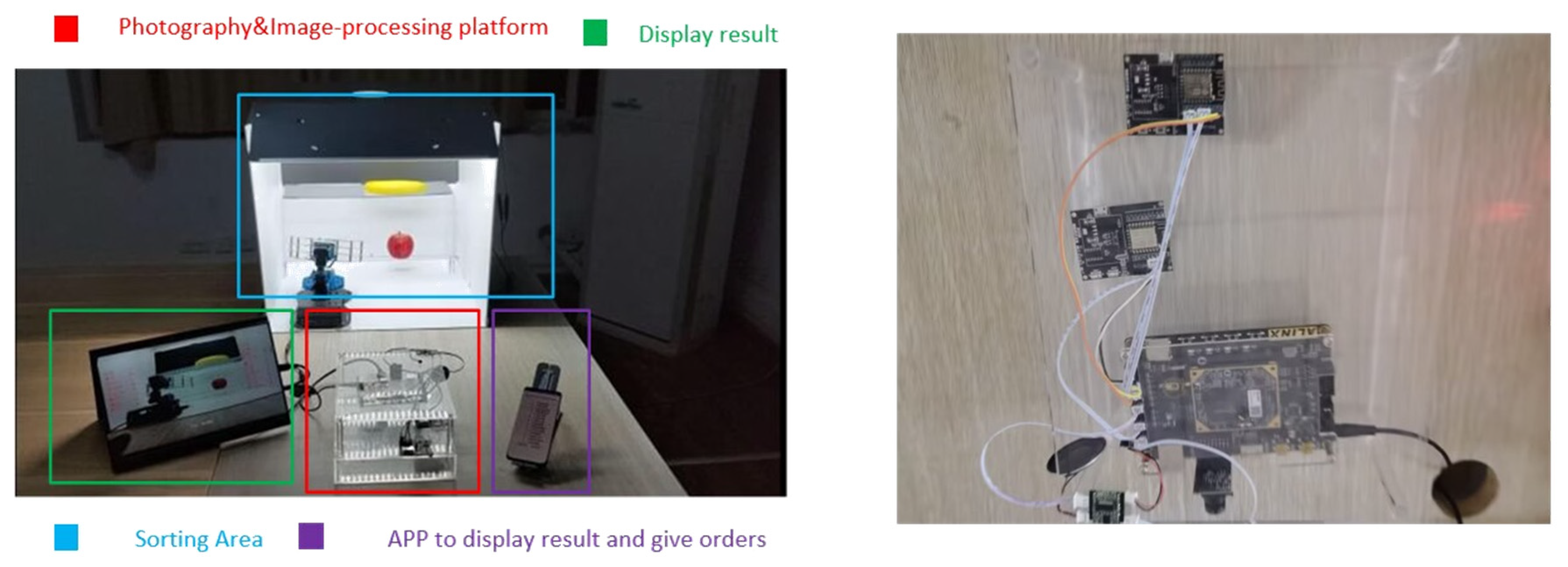

- The hardware consists of an FPGA development board, a vision camera, and a robotic arm grasping system. This setup avoids the high power consumption and bulk of a computer-based mobile platform, which makes it well suited to small enterprises or individuals;

- The entire system is built on an FPGA from fundamental components such as counters, registers, and LUT (Look-Up Table) modules. This design significantly reduces resource usage and power consumption compared with GPU-based implementations;

- In terms of algorithms, the vision component applies traditional morphological operations in a real-time, parallel pipeline, while the grasping component uses an enhanced cubic B-spline algorithm for trajectory planning. The resulting system exhibits strong real-time performance and high operational stability.
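
As an illustration of the morphological processing named in the last contribution, the following is a minimal software sketch of 3×3 binary erosion, dilation, and opening. It is not the FPGA pipeline itself: the function names, the 3×3 structuring element, and the zero padding at the image border are all assumptions made for this example. In hardware, the same window would typically be formed from line buffers and evaluated once per pixel clock.

```python
# Software model of 3x3 binary morphology (illustrative names, not from the paper).
# Assumption: pixels outside the image count as background (0).

def _window(img, r, c):
    """Yield the 3x3 neighbourhood of (r, c), padding with 0 outside the image."""
    rows, cols = len(img), len(img[0])
    for dr in (-1, 0, 1):
        for dc in (-1, 0, 1):
            rr, cc = r + dr, c + dc
            yield img[rr][cc] if 0 <= rr < rows and 0 <= cc < cols else 0


def erode(img):
    """A pixel survives only if its whole 3x3 neighbourhood is foreground."""
    return [[int(all(_window(img, r, c))) for c in range(len(img[0]))]
            for r in range(len(img))]


def dilate(img):
    """A pixel is set if any pixel in its 3x3 neighbourhood is foreground."""
    return [[int(any(_window(img, r, c))) for c in range(len(img[0]))]
            for r in range(len(img))]


def opening(img):
    """Erosion followed by dilation: removes specks smaller than the window."""
    return dilate(erode(img))


# A 3x3 blob plus one isolated noise pixel in the top-right corner.
noisy = [
    [0, 0, 0, 0, 1],
    [0, 1, 1, 1, 0],
    [0, 1, 1, 1, 0],
    [0, 1, 1, 1, 0],
    [0, 0, 0, 0, 0],
]
cleaned = opening(noisy)
```

In this example the opening removes the isolated pixel while leaving the 3×3 blob intact.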

2. Related Works Analysis

2.1. Visual Section

2.2. Grabbing Section

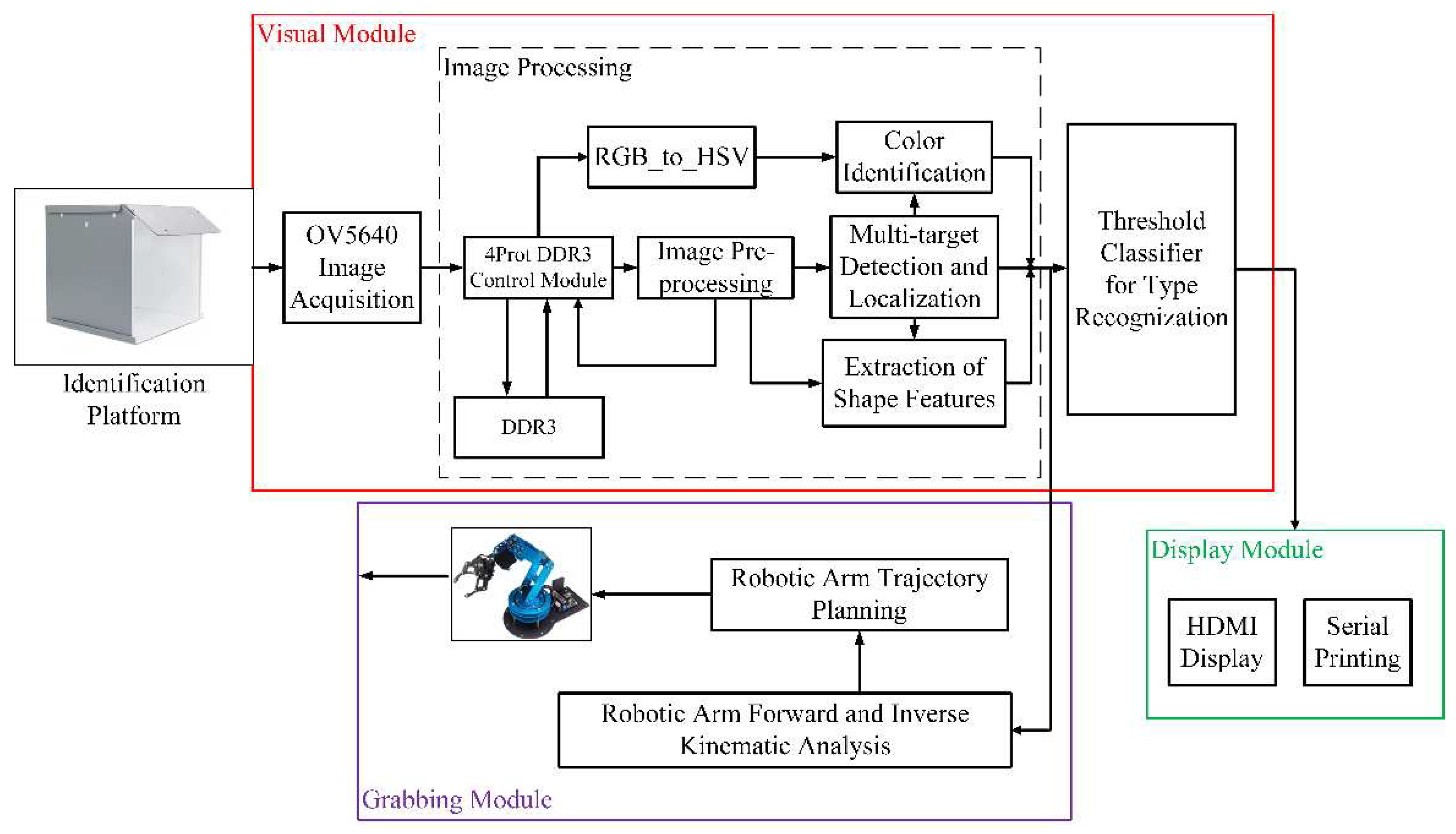

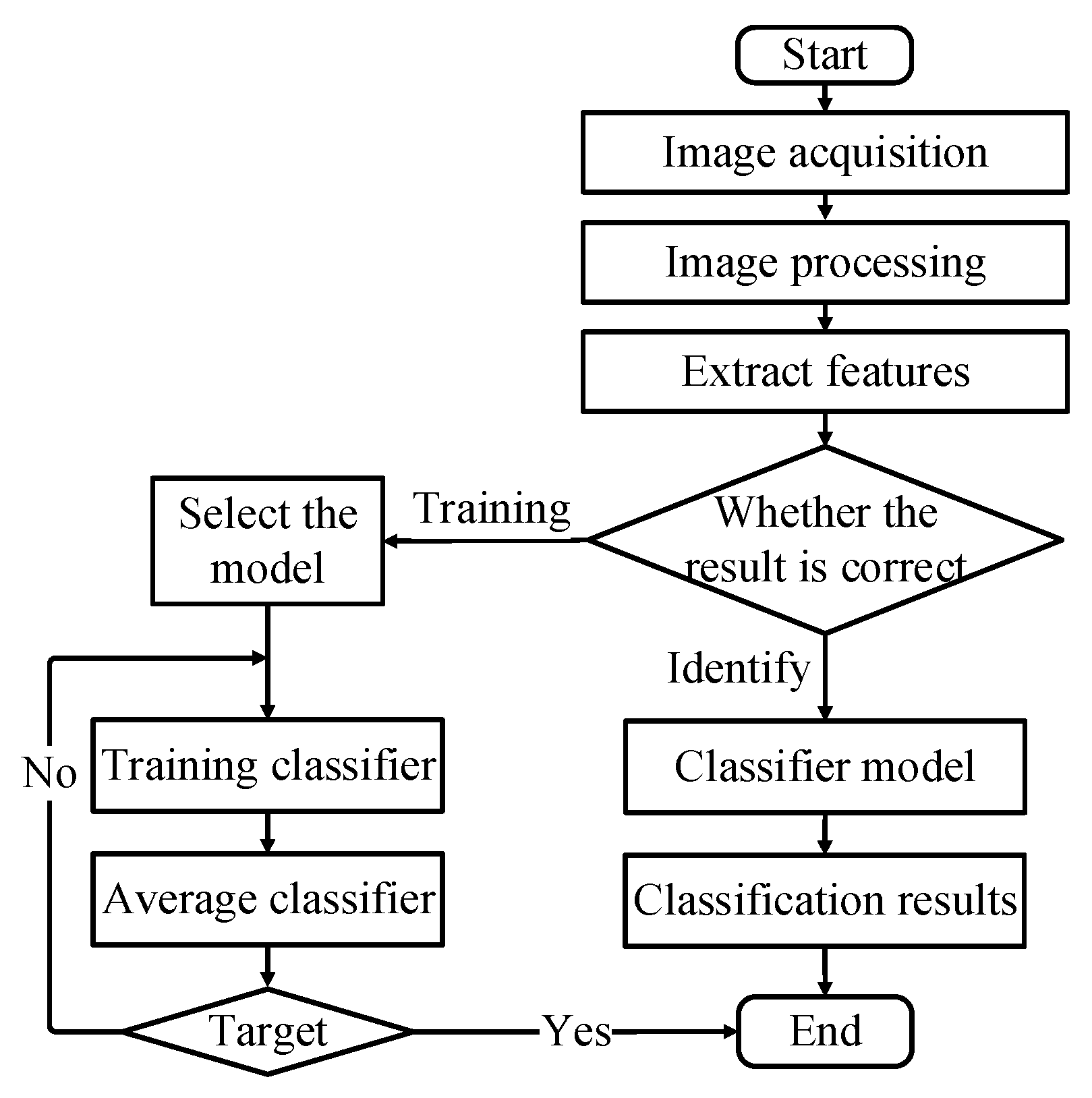

3. Overall System Design

- Image processing;

- Threshold classifier;

- Robotic arm forward and inverse kinematic analysis;

- Robotic arm trajectory planning.

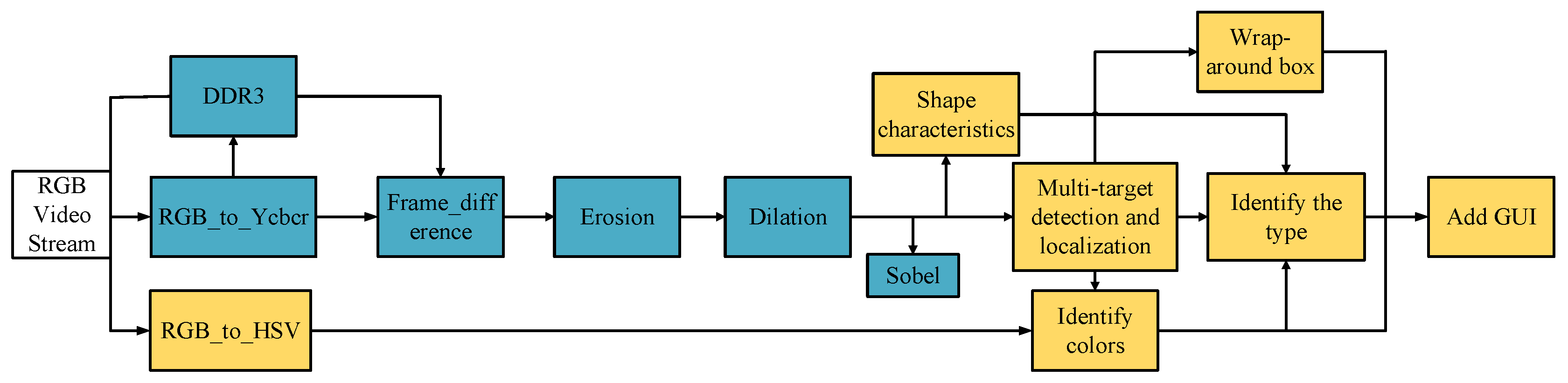

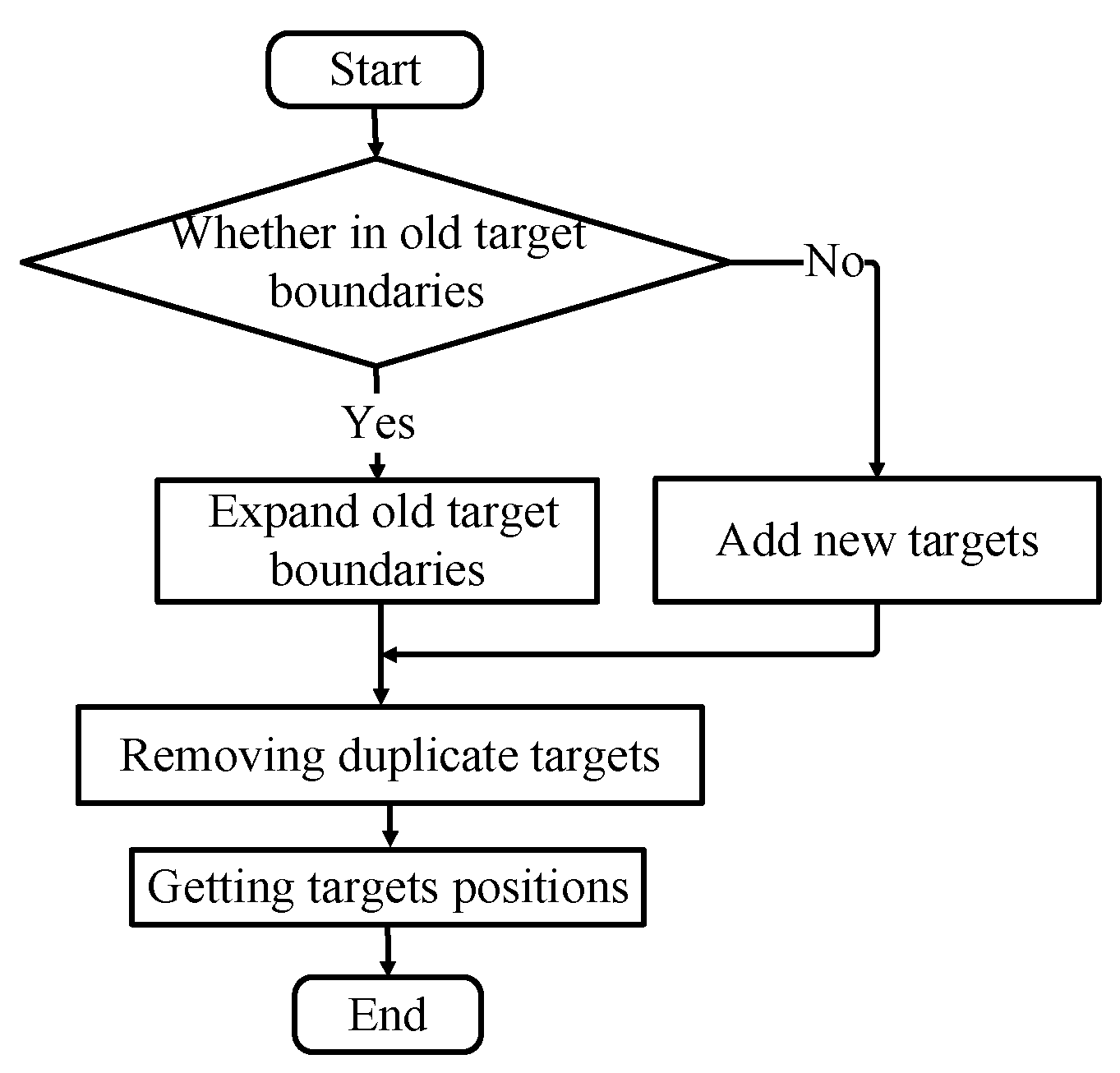

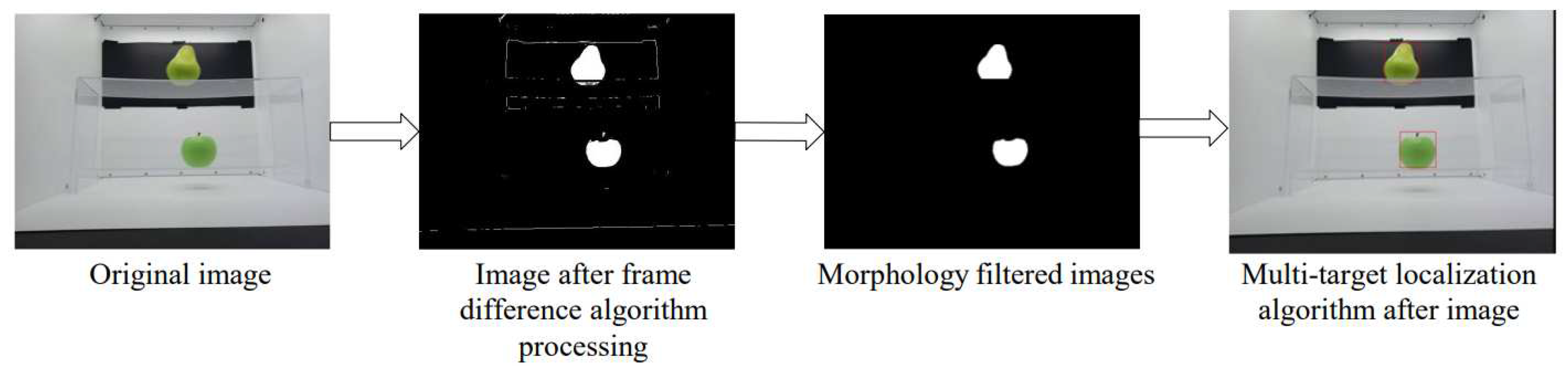

3.1. Image Processing

3.2. Threshold classifier

3.3. Robotic arm forward and inverse kinematic analysis

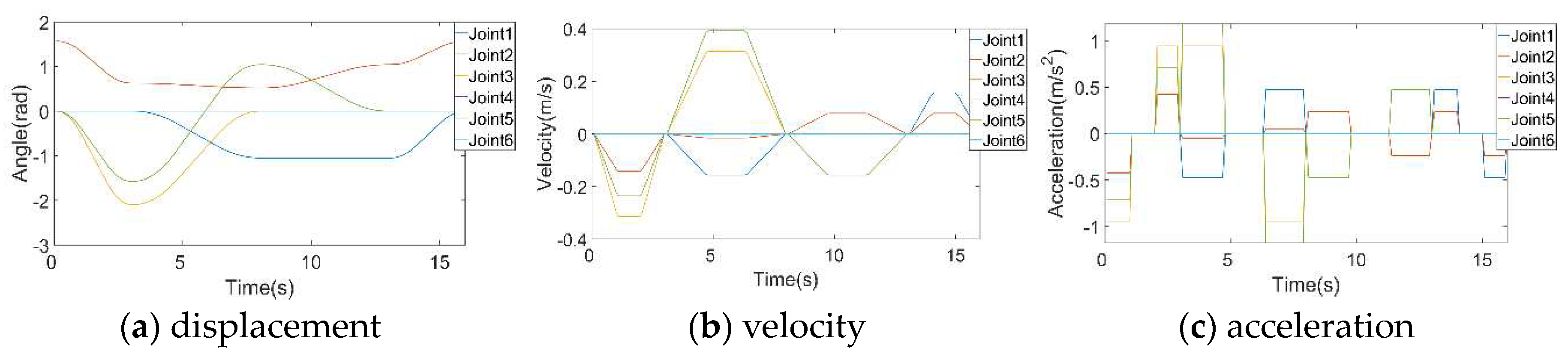

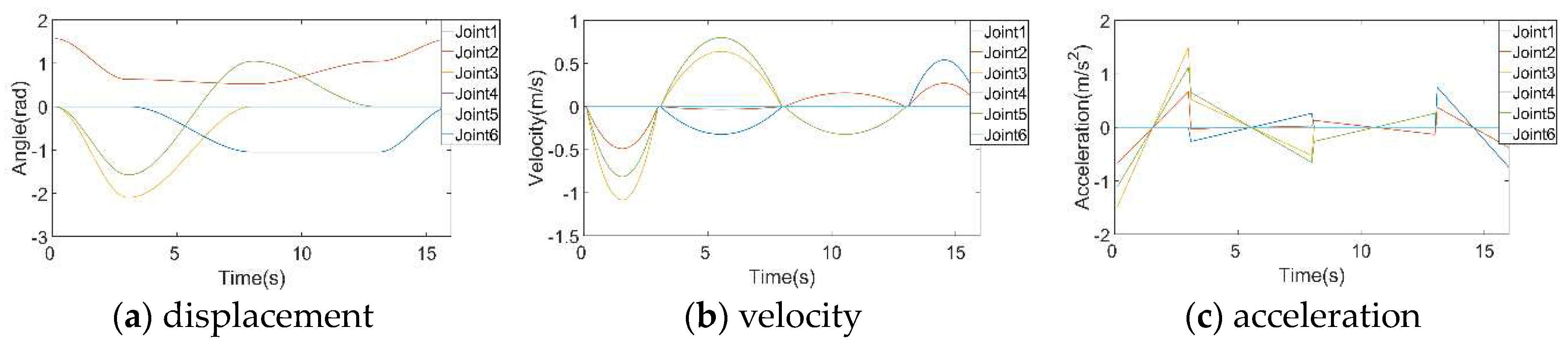

3.4. Robotic arm trajectory planning

- Path planning, which establishes the geometric profile of the path by determining a spatial curve, including complex curves such as NURBS curves [17];

- Interpolation, in which a parameter such as time or distance is densified to obtain the intermediate points along the motion path.
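
The two stages above can be sketched separately. In this minimal example the geometric path is a planar circular arc parameterised by s in [0, 1], and the interpolation stage samples it at a fixed period using a cubic time law. The arc, its radius, the time law, and the sampling period are all hypothetical choices for illustration; none of them is taken from the paper.

```python
import math


def arc_path(s, radius=0.2, sweep=math.pi / 2):
    """Stage 1 (geometry): point on a planar arc for path parameter s in [0, 1]."""
    angle = s * sweep
    return (radius * math.cos(angle), radius * math.sin(angle))


def smoothstep(tau):
    """Cubic time law s(tau) = 3*tau^2 - 2*tau^3, with zero velocity at both ends."""
    return 3 * tau ** 2 - 2 * tau ** 3


def interpolate(path, duration, period):
    """Stage 2 (interpolation): densify the path every `period` seconds."""
    steps = round(duration / period)
    return [path(smoothstep(k / steps)) for k in range(steps + 1)]


# 1 s motion sampled every 0.1 s gives 11 intermediate points along the arc.
points = interpolate(arc_path, duration=1.0, period=0.1)
```

Keeping the geometry and the time law separate means either one can be replaced without touching the other.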

4. Experimental Results

4.1. Experimental Setup and Data

4.2. Experimental Results

5. Conclusions

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

References

- Yang, Y. Z.; Wu, C. Y.; Hu, X. D. Study of Web-based integration of pneumatic manipulator and its vision positioning. Zhejiang Univ. Sci. A, 2005, 6, 543–548.

- Yin, H.; Hong, H.; Liu, J. FPGA-based Deep Learning Acceleration for Visual Grasping Control of Manipulator. In Proceedings of the 2021 IEEE International Conference on Real-time Computing and Robotics (RCAR), Xining, China; 2021; pp. 881–886.

- Yanagisawa, H.; Yamashita, T.; Watanabe, H. A study on object detection method from manga images using CNN. In Proceedings of the 2018 International Workshop on Advanced Image Technology (IWAIT), Chiang Mai, Thailand; 2018; pp. 1–4.

- Zhu, L.; Lin, H.; Wu, W.J. Machine vision-based workpiece positioning for industrial robots. J. Chinese Computer Science, 2016, 8, 1873–1877.

- Xu, W.F. Trajectory planning of vibration suppression for rigid-flexible hybrid manipulator based on PSO algorithm. J. Control and Decision, 2014, 29, 632–638.

- Lee, A. Y.; Jang, G.; Choi, Y. Infinitely differentiable and continuous trajectory planning for mobile robot control. In Proceedings of the 2013 10th International Conference on Ubiquitous Robots and Ambient Intelligence (URAI), Jeju, Korea (South); 2013; pp. 357–361.

- Wu, X.; Tan, L.; Xu, X. Three-dimensional recognition method of fruit target based on PointNet++. In Proceedings of the 2022 5th World Conference on Mechanical Engineering and Intelligent Manufacturing (WCMEIM), Ma’anshan, China; 2022; pp. 496–500.

- Tang, Y.; Zhang, Y.; Zhu, Y. A Research on the Fruit Recognition Algorithm Based on the Multi-Feature Fusion. In Proceedings of the 2020 5th International Conference on Mechanical, Control and Computer Engineering (ICMCCE), Harbin, China; 2020; pp. 1865–1869.

- Wang, X.; Huang, W.; Jin, C.; Hu, M.; Ren, F. Fruit recognition based on multi-feature and multi-decision. In Proceedings of the 2014 IEEE 3rd International Conference on Cloud Computing and Intelligence Systems, Shenzhen; 2014; pp. 113–117.

- Latha, R. S.; Sreekanth, G. R.; Rajadevi, R.; Nivetha, S. K.; Kuma, K. A.; Akash, V.; Bhuvanesh, S.; Anbarasu, P. Fruits and Vegetables Recognition using YOLO. In Proceedings of the 2022 International Conference on Computer Communication and Informatics (ICCCI), Coimbatore, India; 2022; pp. 1–6.

- Chowdhury, M. F. I.; Jeannerod, C. P.; Neiger, V.; Schost, É.; Villard, G. Faster Algorithms for Multivariate Interpolation with Multiplicities and Simultaneous Polynomial Approximations. IEEE Trans. Inf. Theory, 2015, 61, 2370–2387.

- Kyung-Jo, P.; Park, Y.S. Fourier-based optimal design of a flexible manipulator path to reduce residual vibration of the endpoint. J. Robotica, 1993, 11, 263–272.

- Cao, S.; He, X.; Zhang, R.; Xiao, J.; Shi, G. Study on the reverse design of screw rotor profiles based on a B-spline curve. J. Advances in Mechanical Engineering, 2019, 11, 10.

- Qin, Q.; Sun, L.; Feng, Y. Real-Time Image Filtering and Edge Detection Method Based on FPGA. In Proceedings of the 2022 IEEE 5th International Conference on Electronics Technology (ICET), Chengdu, China; 2022; pp. 1168–1173.

- Kim, J. R.; Jeon, J. W. Sobel Edge based Image Template Matching in FPGA. In Proceedings of the 2020 IEEE International Conference on Consumer Electronics Asia (ICCE-Asia), Seoul, Korea (South); 2020; pp. 1–2.

- Cho, B. Choi, J. Lee. Time-optimal trajectory planning for a robot system under torque and impulse constraints. In Proceedings of the 30th Annual Conference of IEEE Industrial Electronics Society (IECON 2004), Busan, Korea (South); 2004; Volume 2, pp. 1058–1063.

- Ma, H.; Wang, Y.; Yang, S.; Ming, L. Interpolation method of NURBS and its offset curve. J. Mechanical Science and Technology, 2022, 41, 433–438.

- L, X.; Gao, X.; Zhang, W.; Hao, L. Smooth and collision-free trajectory generation in cluttered environments using cubic B-spline form. J. Mechanism and Machine Theory, 2022, 169, 104606.

- Yan, P.; Xiang, Z. Acceleration and optimization of artificial intelligence CNN image recognition based on FPGA. In Proceedings of the 2022 IEEE 6th Information Technology and Mechatronics Engineering Conference (ITOEC), Chongqing, China; 2022; pp. 1946–1950.

- Kojima, A.; Nose, Y. Development of an Autonomous Driving Robot Car Using FPGA. In Proceedings of the 2018 International Conference on Field-Programmable Technology (FPT), Naha, Japan; 2018; pp. 411–414.

- Kojima, A. Autonomous Driving System implemented on Robot Car using SoC FPGA. In Proceedings of the 2021 International Conference on Field-Programmable Technology (ICFPT), Auckland, New Zealand; 2021; pp. 1–4.

- Wei, K.; Honda, K.; Amano, H. FPGA Design for Autonomous Vehicle Driving Using Binarized Neural Networks. In Proceedings of the 2018 International Conference on Field-Programmable Technology (FPT), Naha, Japan; 2018; pp. 425–428.

- Hao, C.; Sarwari, A.; Jin, Z.; Abu-Haimed, H.; Sew, D.; Li, Y.; Liu, X.; Wu, B.; Fu, D.; Gu, J.; Chen, D. A Hybrid GPU + FPGA System Design for Autonomous Driving Cars. In Proceedings of the 2019 IEEE International Workshop on Signal Processing Systems (SiPS), Nanjing, China; 2019; pp. 121–126.

- Takasaki, K.; Hisafuru, K.; Negishi, R.; Yamashita, K.; Fukada, K.; Wakaizumi, T.; Togawa, N. An autonomous driving system utilizing image processing accelerated by FPGA. In Proceedings of the 2021 International Conference on Field-Programmable Technology (ICFPT), Auckland, New Zealand; 2021; pp. 1–4.

| Joint number $i$ | $a_{i-1}$ | $\alpha_{i-1}$ | $d_i$ | $\theta_i$ |
|---|---|---|---|---|
| 1 | 0 | 0 | $d_1$ | $\theta_1$ |
| 2 | 0 | 90° | 0 | $\theta_2$ |
| 3 | $l_3$ | 0 | 0 | $\theta_3$ |
| 4 | $l_4$ | 0 | 0 | $\theta_4$ |
| 5 | $l_5$ | 0 | 0 | $\theta_5$ |
| 6 | 0 | 0 | 0 | $\theta_6$ |
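
The column headings of the table above ($a_{i-1}$, $\alpha_{i-1}$, $d_i$, $\theta_i$) match the modified Denavit-Hartenberg convention, so a forward-kinematics computation can be sketched by chaining one homogeneous transform per row. This is a minimal sketch under that assumption: the function names are illustrative, and because the table gives $d_1$, $l_3$, $l_4$, and $l_5$ only symbolically, the numeric link lengths used here are placeholders rather than the dimensions of the actual arm.

```python
import math


def dh_modified(a_prev, alpha_prev, d, theta):
    """Homogeneous transform from frame i-1 to frame i (modified DH convention)."""
    ca, sa = math.cos(alpha_prev), math.sin(alpha_prev)
    ct, st = math.cos(theta), math.sin(theta)
    return [
        [ct, -st, 0.0, a_prev],
        [st * ca, ct * ca, -sa, -sa * d],
        [st * sa, ct * sa, ca, ca * d],
        [0.0, 0.0, 0.0, 1.0],
    ]


def matmul(A, B):
    """4x4 matrix product on nested lists."""
    return [[sum(A[i][k] * B[k][j] for k in range(4)) for j in range(4)]
            for i in range(4)]


def forward_kinematics(thetas, d1, l3, l4, l5):
    """Chain the six joint transforms, one per row of the DH table."""
    rows = [
        (0.0, 0.0, d1, thetas[0]),
        (0.0, math.pi / 2, 0.0, thetas[1]),
        (l3, 0.0, 0.0, thetas[2]),
        (l4, 0.0, 0.0, thetas[3]),
        (l5, 0.0, 0.0, thetas[4]),
        (0.0, 0.0, 0.0, thetas[5]),
    ]
    T = [[float(i == j) for j in range(4)] for i in range(4)]
    for row in rows:
        T = matmul(T, dh_modified(*row))
    return T


# Placeholder link lengths in metres (assumed, not from the paper).
LINKS = dict(d1=0.10, l3=0.10, l4=0.10, l5=0.05)
upright = forward_kinematics([0, math.pi / 2, 0, 0, 0, 0], **LINKS)
position = [upright[r][3] for r in range(3)]
```

With the second joint at π/2 and all others at zero, the last frame's origin lies on the base z-axis at a height of $d_1 + l_3 + l_4 + l_5$, i.e. the arm points straight up.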

| Fruit Type | Hue | Saturation | Value |
|---|---|---|---|
| Red Apple | 0–15, 300–359 | 200–225 | 70–105 |
| Green Apple | 70–100 | 165–185 | 125–140 |
| Banana | 40–70 | 40–60 | 130–140 |
| Yellow Mango | 40–70 | 40–60 | 130–175 |
| Green Mango | 70–100 | 200–230 | 70–90 |
| Green Pear | 70–100 | 150–165 | 150–165 |
| Yellow Pear | 40–70 | 150–165 | 180–200 |
| Orange | 0–15, 300–359 | 220–240 | 150–170 |
| Dragon Fruit | 0–15, 300–359 | 170–195 | 80–100 |
| Grape | 0–15, 300–359 | 100–140 | 30–60 |
| Kiwifruit | 15–70 | 200–220 | 30–60 |
| Fig | 5–30 | 120–130 | 70–90 |
| Mangosteen | 0–15, 300–359 | 110–130 | 10–40 |
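
Several hue entries in the table above consist of two intervals because red hues wrap around the end of the hue circle. A threshold check therefore has to accept a list of intervals per channel. The sketch below shows one way to do this for three of the rows. The dictionary layout and function names are illustrative, and the inclusive bounds and the interpretation of hue as 0 to 359 degrees are assumptions. Saturation and value are compared in whatever units the table uses.

```python
# Threshold windows copied from three rows of the HSV table.
# Each channel holds a list of inclusive (low, high) intervals.
HSV_WINDOWS = {
    "Red Apple": {"h": [(0, 15), (300, 359)], "s": [(200, 225)], "v": [(70, 105)]},
    "Green Apple": {"h": [(70, 100)], "s": [(165, 185)], "v": [(125, 140)]},
    "Orange": {"h": [(0, 15), (300, 359)], "s": [(220, 240)], "v": [(150, 170)]},
}


def in_any_range(x, ranges):
    """True if x falls inside at least one inclusive (low, high) interval."""
    return any(lo <= x <= hi for lo, hi in ranges)


def colour_candidates(h, s, v, windows=HSV_WINDOWS):
    """Return every fruit whose hue, saturation, and value windows all contain the pixel."""
    return [
        name
        for name, w in windows.items()
        if in_any_range(h, w["h"]) and in_any_range(s, w["s"]) and in_any_range(v, w["v"])
    ]


# A hue of 350 falls in the upper red interval (300-359).
print(colour_candidates(350, 210, 90))
```

Because each comparison is independent, a check of this kind maps naturally onto parallel comparators in hardware.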

| Fruit Type | Roundness | Eccentricity | Body Ratio |
|---|---|---|---|
| Red Apple | 0.74–0.80 | 0.34–0.51 | 1.01–1.13 |
| Green Apple | 0.73–0.79 | 0.35–0.52 | 1.00–1.13 |
| Banana | 0.01–0.09 | 0.96–0.99 | 5.00–6.00 |
| Yellow Mango | 0.31–0.37 | 0.89–0.95 | 2.50–3.20 |
| Green Mango | 0.39–0.43 | 0.84–0.94 | 1.90–2.10 |
| Green Pear | 0.69–0.77 | 0.32–0.42 | 0.89–0.94 |
| Yellow Pear | 0.67–0.76 | 0.33–0.42 | 0.90–0.92 |
| Orange | 0.71–0.86 | 0.12–0.21 | 0.98–1.26 |
| Dragon Fruit | 0.05–0.11 | 0.71–0.85 | 1.68–1.88 |
| Grape | 0.90–0.99 | 0.09–0.16 | 0.98–1.04 |
| Kiwifruit | 0.82–0.90 | 0.64–0.76 | 1.20–1.50 |
| Fig | 0.59–0.67 | 0.54–0.62 | 0.81–0.94 |
| Mangosteen | 0.49–0.58 | 0.43–0.52 | 0.84–0.92 |
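
Read together with the HSV table, these shape windows separate fruits whose colour windows overlap. Banana and Yellow Mango, for instance, share the same hue and saturation ranges and overlap in value, but their roundness and body-ratio ranges are disjoint. The sketch below illustrates this with the two rows copied from the tables. Combining all six features with a logical AND is an assumption about how the threshold classifier works, and the sample measurement and all names are hypothetical.

```python
from typing import NamedTuple


class Window(NamedTuple):
    """Inclusive threshold interval for one feature."""
    lo: float
    hi: float

    def contains(self, x):
        return self.lo <= x <= self.hi


# Rows for two fruits, copied from the HSV and shape tables.
FEATURES = {
    "Banana": {
        "hue": Window(40, 70), "saturation": Window(40, 60), "value": Window(130, 140),
        "roundness": Window(0.01, 0.09), "eccentricity": Window(0.96, 0.99),
        "body_ratio": Window(5.00, 6.00),
    },
    "Yellow Mango": {
        "hue": Window(40, 70), "saturation": Window(40, 60), "value": Window(130, 175),
        "roundness": Window(0.31, 0.37), "eccentricity": Window(0.89, 0.95),
        "body_ratio": Window(2.50, 3.20),
    },
}

COLOUR = ("hue", "saturation", "value")
SHAPE = ("roundness", "eccentricity", "body_ratio")


def classify(sample, keys):
    """Return fruits whose windows contain the sample for every listed feature."""
    return [
        name
        for name, windows in FEATURES.items()
        if all(windows[k].contains(sample[k]) for k in keys)
    ]


# Hypothetical measurement of an elongated yellow object.
sample = {"hue": 55, "saturation": 50, "value": 135,
          "roundness": 0.05, "eccentricity": 0.97, "body_ratio": 5.4}

by_colour = classify(sample, COLOUR)
by_colour_and_shape = classify(sample, COLOUR + SHAPE)
```

Colour alone leaves both fruits as candidates for this sample. Adding the shape features narrows the result to Banana.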

| Constraint point $P_{i-1}$ | Joint 1 (rad) | Joint 2 (rad) | Joint 3 (rad) | Joint 4 (rad) | Joint 5 (rad) | Joint 6 (rad) |
|---|---|---|---|---|---|---|
| P0 | 0 | π/2 | 0 | 0 | 0 | 0 |
| P1 | 0 | π/5 | -2π/3 | 0 | -π/2 | 0 |
| P2 | -π/3 | π/6 | 0 | 0 | π/3 | 0 |
| P3 | -π/3 | π/3 | 0 | 0 | 0 | 0 |
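
To show how a cubic B-spline turns such joint-space points into a smooth trajectory, the sketch below evaluates a plain uniform cubic B-spline over the four rows of the table. This is not the enhanced algorithm used in the paper. It treats the constraint points as control points and repeats the first and last ones so that the curve starts exactly at P0 and ends exactly at P3. The curve generally only approaches P1 and P2 rather than passing through them. The function names and the number of samples per segment are assumptions.

```python
import math

PI = math.pi

# Joint angles (rad) for P0..P3, copied from the constraint-point table.
CONSTRAINT_POINTS = [
    (0.0, PI / 2, 0.0, 0.0, 0.0, 0.0),
    (0.0, PI / 5, -2 * PI / 3, 0.0, -PI / 2, 0.0),
    (-PI / 3, PI / 6, 0.0, 0.0, PI / 3, 0.0),
    (-PI / 3, PI / 3, 0.0, 0.0, 0.0, 0.0),
]


def basis(u):
    """Uniform cubic B-spline blending weights for local parameter u in [0, 1]."""
    return (
        (1 - u) ** 3 / 6,
        (3 * u ** 3 - 6 * u ** 2 + 4) / 6,
        (-3 * u ** 3 + 3 * u ** 2 + 3 * u + 1) / 6,
        u ** 3 / 6,
    )


def bspline_trajectory(points, samples_per_segment=10):
    """Sample a clamped uniform cubic B-spline through joint space."""
    # Repeating each end point two extra times pins the curve to it.
    ctrl = [points[0]] * 2 + list(points) + [points[-1]] * 2
    n_seg = len(ctrl) - 3
    n_joints = len(points[0])
    traj = []
    for seg in range(n_seg):
        window = ctrl[seg:seg + 4]
        # Only the final segment emits its end sample, to avoid duplicates.
        count = samples_per_segment + (1 if seg == n_seg - 1 else 0)
        for k in range(count):
            w = basis(k / samples_per_segment)
            traj.append(tuple(
                sum(w[i] * window[i][j] for i in range(4)) for j in range(n_joints)
            ))
    return traj


trajectory = bspline_trajectory(CONSTRAINT_POINTS)
```

Because the blending weights are non-negative and sum to one, every sampled joint angle stays within the range spanned by the control values for that joint. Joints 4 and 6, which are zero at every constraint point, therefore remain at zero throughout.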

| Fruit Type | Correct identifications / total | Correct sorts / total |
|---|---|---|
| Red Apple | 99/100 | 100/100 |
| Green Apple | 100/100 | 100/100 |
| Banana | 99/100 | 95/100 |
| Yellow Mango | 98/100 | 98/100 |
| Green Mango | 97/100 | 100/100 |
| Green Pear | 98/100 | 98/100 |
| Yellow Pear | 93/100 | 95/100 |
| Orange | 100/100 | 100/100 |
| Dragon Fruit | 98/100 | 95/100 |
| Grape | 96/100 | 88/100 |
| Kiwifruit | 98/100 | 98/100 |
| Fig | 96/100 | 92/100 |
| Mangosteen | 97/100 | 96/100 |

| System | FF (Flip-Flop) | LUT | Average recognition time (ms) | Average accuracy |
|---|---|---|---|---|
| Ours | 13K | 19K | 25.26 | 97.69% |
| Yan, et al. [19] | 148K | 118K | 900 | 99.6% |
| Yin, et al. [2] | 154K | 71K | 54.76 | 90.78% (mAP) |
| Kojima, et al. [20] | 40K | 27K | Not provided | 96.33% |
| Kojima [21] | 66K | 39K | Not provided | more than 90% (mAP) |
| Wei, et al. [22] | Not provided | Not provided | 1582 | Not provided |
| Hao, et al. [23] | Not provided | Not provided | 28.2 | 69.4% (mAP) |
| Takasaki, et al. [24] | 14K | 126K | Not provided | Not provided |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).