1. Introduction

Cloud computing gives users scalable, on-demand access to computing resources. As the number of cloud-based services and applications grows, load balancing and efficient resource allocation become increasingly important, because both are central to optimising performance and making full use of the available resources. Load balancing techniques distribute tasks dynamically over several virtual machines, which improves response times and resource utilisation. Earlier load balancing solutions concentrated on either minimising response time or maximising resource utilisation. In real-world cloud computing, however, load balancing is a multi-objective problem that requires compromises between competing goals. For instance, maximising resource utilisation may lengthen response times, whilst lowering response times may leave resources underused. Load balancing strategies are therefore needed that can deal properly with the multi-objective character of the problem.
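
The trade-off between competing goals is commonly formalised through Pareto dominance: one solution dominates another if it is no worse on every objective and strictly better on at least one. The following Python sketch is illustrative only and is not taken from the proposed method; the function names and the candidate values (mean response time, fraction of idle capacity) are assumptions, and both objectives are treated as quantities to minimise.

```python
from typing import List, Sequence


def dominates(a: Sequence[float], b: Sequence[float]) -> bool:
    """Return True if a Pareto-dominates b (all objectives are minimised)."""
    no_worse = all(x <= y for x, y in zip(a, b))
    strictly_better = any(x < y for x, y in zip(a, b))
    return no_worse and strictly_better


def pareto_front(points: List[Sequence[float]]) -> List[Sequence[float]]:
    """Keep only the points that no other point dominates."""
    return [
        p for p in points
        if not any(dominates(q, p) for q in points if q is not p)
    ]


# Hypothetical candidates: (mean response time in ms, fraction of idle capacity).
candidates = [(120.0, 0.10), (80.0, 0.35), (150.0, 0.40), (80.0, 0.30)]
front = pareto_front(candidates)
```

In this example two candidates survive: one with lower idle capacity and one with lower response time. Neither is better on both objectives, which is the compromise a multi-objective load balancer has to manage.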

Meta-heuristic algorithms, which model natural phenomena and biological processes, have been highly successful at solving difficult optimisation problems. The Harris hawk optimisation (HHO) algorithm is one such algorithm; it takes its name from the cooperative hunting behaviour of Harris's hawks. Because HHO balances exploration and exploitation, it has shown great potential in a variety of optimisation domains, which makes it a good candidate for the multi-objective load balancing and resource allocation problem in cloud computing.

Using the HHO algorithm, this work proposes a novel solution for multi-objective dynamic load balancing and resource allocation in cloud computing. Our objective is to achieve an effective and well-balanced distribution of tasks and resources in cloud environments by simultaneously optimising several objectives, such as response time, resource utilisation, energy efficiency, and cost. We do so by incorporating the multi-objective aspect of the problem directly into the HHO algorithm.
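
This section does not state how the four objectives are combined. One common option is weighted-sum scalarisation, sketched below purely as an illustration. The `Objectives` fields, the normalisation bounds, and the equal weights are all assumptions rather than values from this work.

```python
from dataclasses import dataclass
from typing import Dict, Tuple


@dataclass
class Objectives:
    response_time: float  # seconds, lower is better
    utilisation: float    # fraction in [0, 1], higher is better
    energy: float         # kWh, lower is better
    cost: float           # currency units, lower is better


def normalise(value: float, lo: float, hi: float) -> float:
    """Scale value into [0, 1] using assumed bounds lo < hi."""
    return min(max((value - lo) / (hi - lo), 0.0), 1.0)


def fitness(obj: Objectives,
            bounds: Dict[str, Tuple[float, float]],
            weights: Dict[str, float]) -> float:
    """Weighted sum to minimise; utilisation is inverted so lower is better."""
    terms = {
        "response_time": normalise(obj.response_time, *bounds["response_time"]),
        "utilisation": 1.0 - obj.utilisation,
        "energy": normalise(obj.energy, *bounds["energy"]),
        "cost": normalise(obj.cost, *bounds["cost"]),
    }
    return sum(weights[name] * terms[name] for name in terms)


# Hypothetical bounds and equal weights, chosen only for the example.
bounds = {"response_time": (0.0, 2.0), "energy": (0.0, 10.0), "cost": (0.0, 100.0)}
weights = {"response_time": 0.25, "utilisation": 0.25, "energy": 0.25, "cost": 0.25}
score = fitness(Objectives(1.0, 0.8, 5.0, 50.0), bounds, weights)
```

A weighted sum is simple to plug into a single-objective optimiser, but it fixes the trade-off in advance through the weights; Pareto-based ranking is the usual alternative when the trade-off itself is of interest.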

The proposed approach uses the HHO algorithm to distribute jobs dynamically across the available virtual machines (VMs) and to assign resources in accordance with the workload and resource utilisation [1]. The approach divides the hawks hierarchically into several roles, such as exploratory hawks, sentinel hawks, and possessive hawks, in order to emulate the cooperative behaviour of Harris's hawks while hunting. These roles allow the hawks to explore the solution space, monitor the quality of candidate solutions, and converge on the most effective load balancing and resource distribution decisions.
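
For orientation, standard HHO as published by Heidari et al. (2019) switches between phases using an escaping energy E = 2·E0·(1 − t/T) that shrinks over the iterations: |E| ≥ 1 triggers exploration, and |E| < 1 triggers exploitation, with a soft besiege when |E| ≥ 0.5 and a hard besiege otherwise. The sketch below is a simplified illustration of that rule. It omits the Lévy-flight rapid dives of the full algorithm and does not model the exploratory, sentinel, and possessive roles described above; all function names are assumptions.

```python
import random
from typing import List


def escaping_energy(t: int, max_iter: int, e0: float) -> float:
    """E = 2 * E0 * (1 - t / T), where E0 is drawn from (-1, 1)."""
    return 2.0 * e0 * (1.0 - t / max_iter)


def phase(energy: float) -> str:
    """Select the HHO phase from the magnitude of the escaping energy."""
    magnitude = abs(energy)
    if magnitude >= 1.0:
        return "exploration"
    return "soft_besiege" if magnitude >= 0.5 else "hard_besiege"


def besiege_step(hawk: List[float], rabbit: List[float],
                 energy: float, rng: random.Random) -> List[float]:
    """Move one hawk relative to the best solution found so far (the rabbit)."""
    if abs(energy) >= 0.5:
        # Soft besiege: X' = (Xr - X) - E * |J * Xr - X|, with jump strength J.
        jump = 2.0 * (1.0 - rng.random())
        return [(r - x) - energy * abs(jump * r - x) for x, r in zip(hawk, rabbit)]
    # Hard besiege: X' = Xr - E * |Xr - X|.
    return [r - energy * abs(r - x) for x, r in zip(hawk, rabbit)]


rng = random.Random(0)
early = phase(escaping_energy(0, 100, 0.8))    # large |E| early in the run
late = phase(escaping_energy(100, 100, 0.8))   # E has decayed to zero
```

Because E decays linearly with the iteration count, early iterations favour exploration and later ones favour exploitation, which is the balance referred to above.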

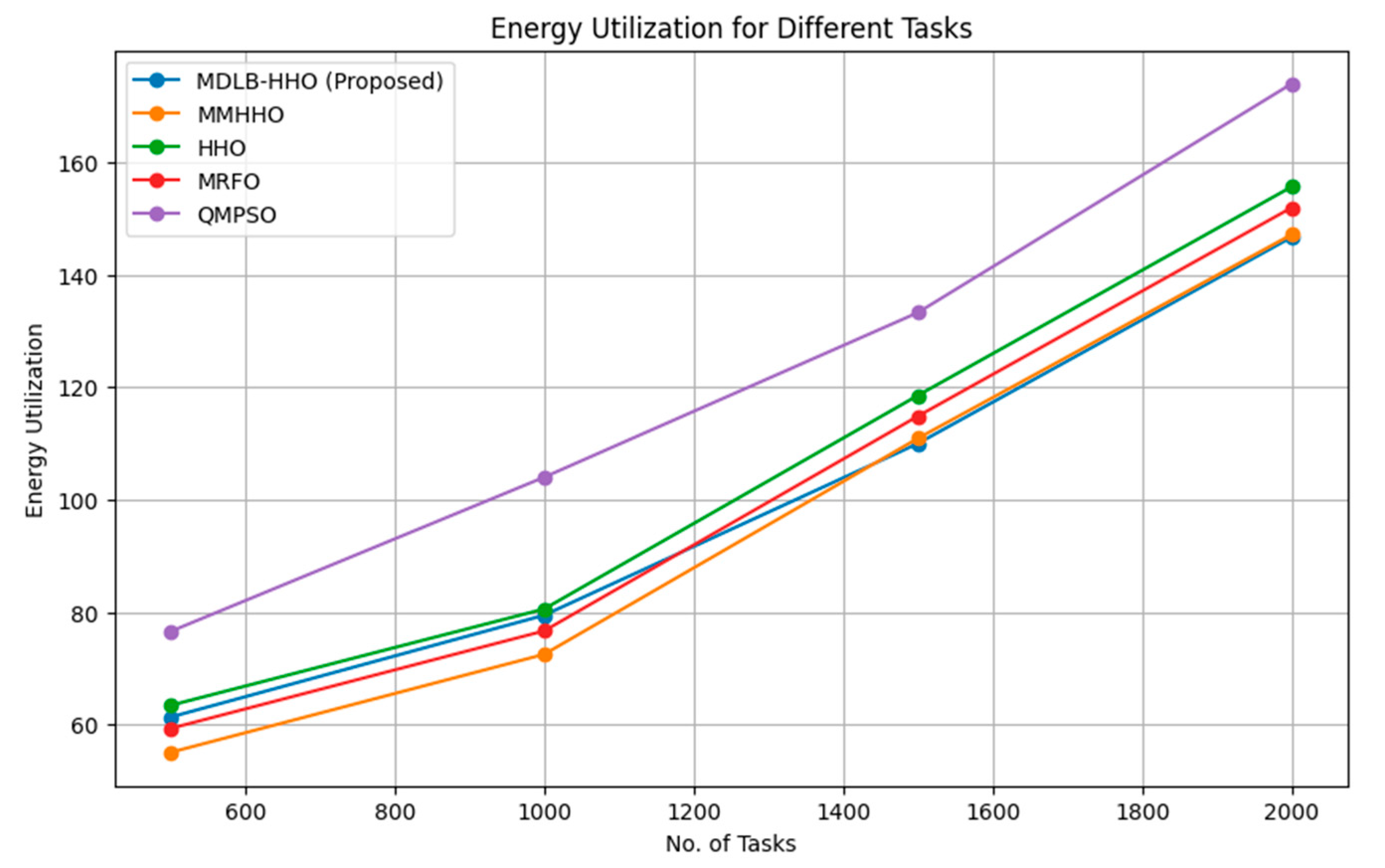

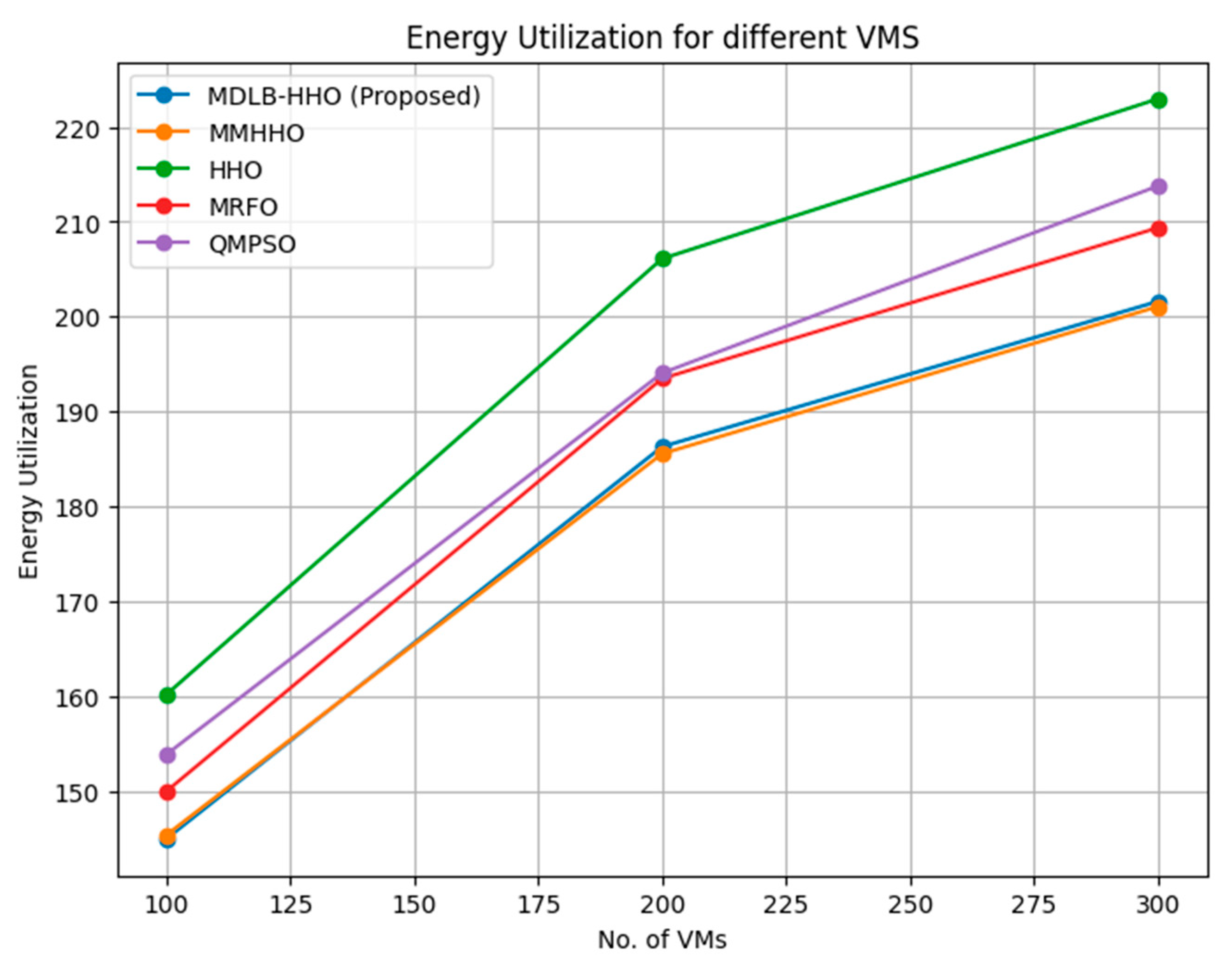

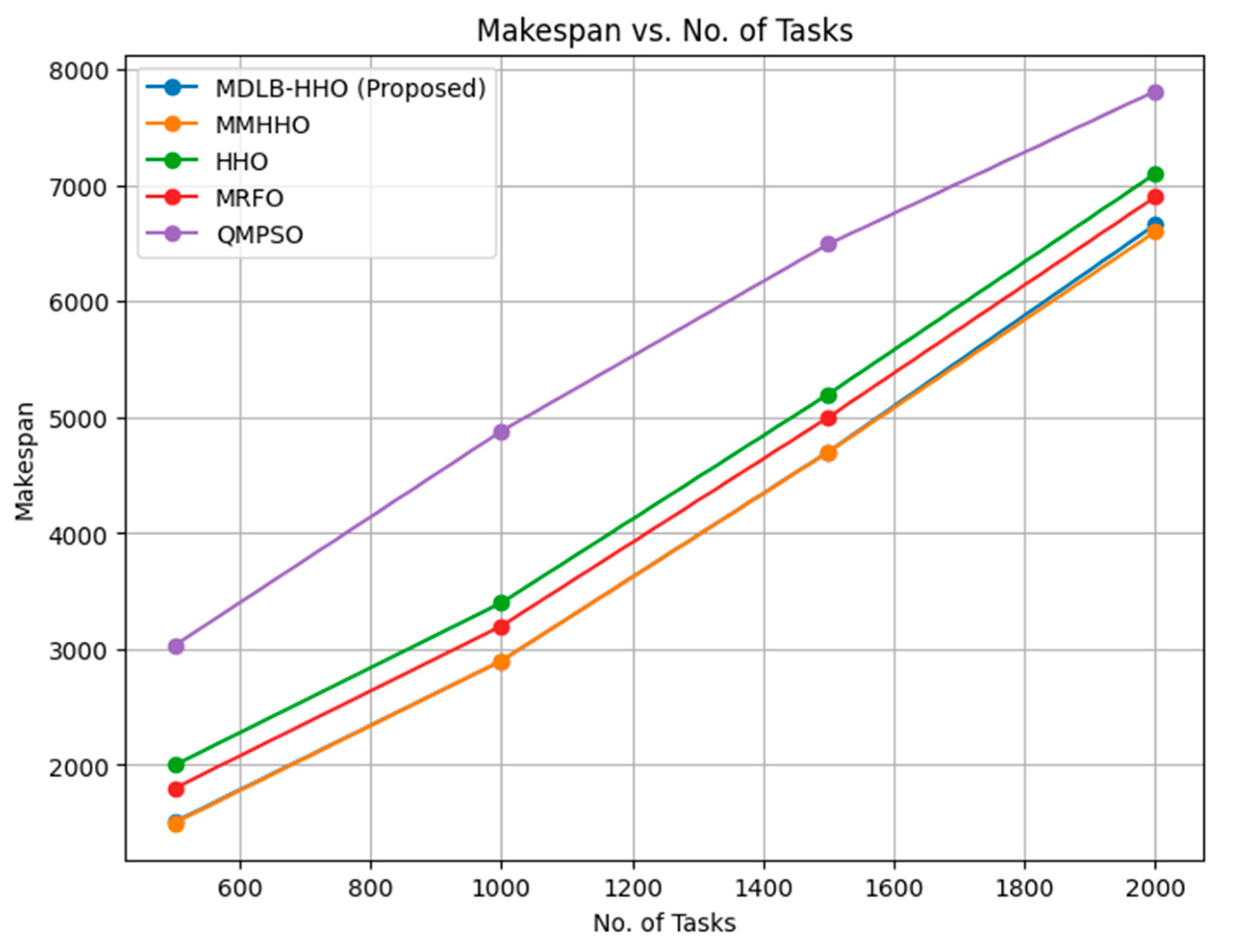

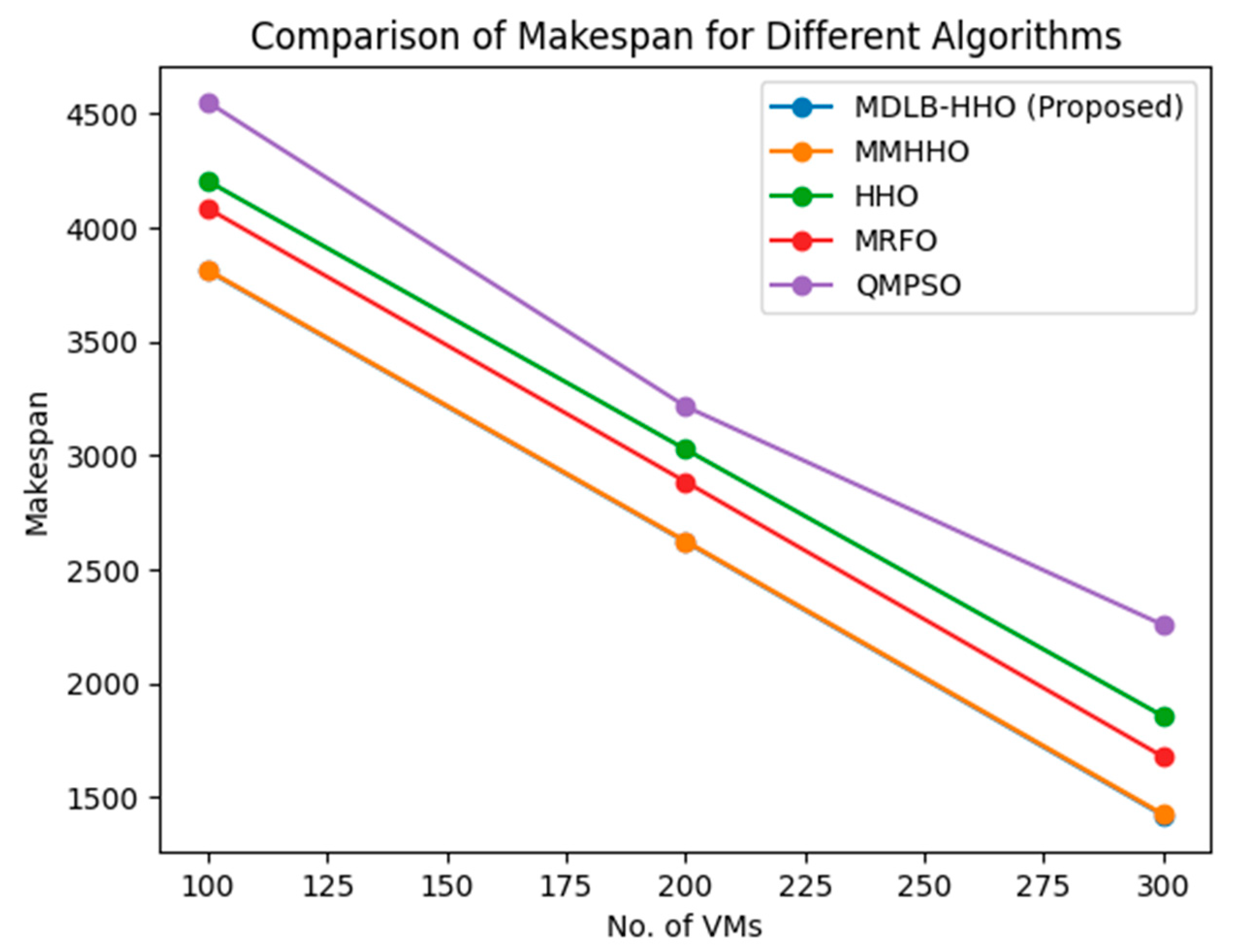

To determine how effectively the proposed approach performs, we conduct extensive experiments and compare it with several existing load balancing and resource allocation approaches in cloud computing. We evaluate key performance metrics: response time, resource utilisation, energy usage, and cost. The findings indicate that the multi-objective meta-heuristic dynamic load balancing and resource allocation strategy based on the HHO algorithm outperforms the compared approaches, achieving better trade-offs between conflicting objectives and higher overall system performance.

The remainder of the paper is organised as follows:

Section 2 provides a comprehensive literature review on cloud computing load balancing, resource allocation, and meta-heuristic algorithms.

Section 3 presents the methodology, including problem formulation, HHO algorithm details, and multi-objective optimisation integration.

Section 4 describes the experimental setup, presents the results, and provides a comprehensive analysis.

Section 5 concludes by summarising the findings, discussing the contributions of this research, and outlining prospective directions for further improvement and research in multi-objective dynamic load balancing and resource allocation using the HHO algorithm in cloud computing.

2. Literature Review

Load balancing and resource allocation are essential components of cloud computing systems because they can enhance system performance, boost resource utilisation, and ensure that resources are distributed fairly among users. Meta-heuristic algorithms, which are inspired by natural phenomena or biological behaviours, show considerable promise for both tasks. The Harris hawk optimisation (HHO) method, which takes its name from the hunting strategy of Harris's hawks, has been shown to be effective on a variety of optimisation problems. However, its application to load balancing and resource allocation in cloud computing remains largely unexplored.

This literature review focuses on meta-heuristic methods, such as genetic algorithms, particle swarm optimisation, ant colony optimisation, and simulated annealing, for load balancing and resource allocation in cloud computing. It examines their advantages and disadvantages, as well as their load balancing and resource distribution applications.

D. A. Shafiq and coworkers provide a load-balancing mechanism for data centres to ensure consistent application performance in cloud computing. Their algorithm uses a multi-objective optimisation strategy to balance load in the data centres. Its efficiency is further confirmed by a sensitivity analysis, which evaluates how changing parameters affects performance. The authors also discuss the resilience of the algorithm and its capacity to adjust to different workload conditions. The study contributes a technique that addresses load distribution and resource allocation, and it offers insights and practical implications for designing efficient load-balancing algorithms for cloud environments.

The paper [4] analyses the significance of load balancing in cloud computing and draws attention to the limitations of currently available load balancing solutions in managing load distribution in big-data cloud systems. To address the problem, the authors propose a load balancing strategy that they term the Central-Regional Architecture Based Load Balancing Technique (CRLBT). CRLBT combines a throughput maximisation technique with the Throughput Maximised-Particle Swarm Optimisation (TM-PSO) and Throughput Maximised-Firefly optimisation (TM-Firefly) algorithms, which distinguishes it from typical central, distributed, and hierarchical cloud architectures. The experimental results show considerable improvements in response time, task rejection ratio, CPU utilisation rate, and network throughput, which confirms the efficiency of the technique for load balancing in big-data cloud systems.

The paper [5] proposes a method, DEER, for load balancing in fog computing environments, taking into account the increasing significance of fog computing in managing the information flow in large and complex networks for the Internet of Things (IoT). The objective is to increase overall efficiency while decreasing energy consumption, carbon emissions, and energy costs.

The most important components of the DEER strategy are the Tasks Manager, Resource Information Provider, Resource Scheduler, Resource Load Manager, Resource Power Manager, and Resource Engine. Tasks are submitted via the Tasks Manager, and Cloud Data Centres register resource information. The Resource Scheduler organises available resources based on their utilisation, and the Resource Engine allocates work to them. The Resource Load Manager monitors the current status of the resources, while the Resource Power Manager monitors power consumption. This method optimises both energy consumption and computing costs, thereby enhancing performance and reducing environmental impact in fog-like scenarios.

Another study addresses task distribution and resource allocation in fog computing with a layer fit algorithm and a Modified Harris-Hawks Optimisation (MHHO) strategy. The proposed solutions cut expenses, make efficient use of resources, and distribute the load evenly across cloud and fog layers. The Internet of Things (IoT) enables smart devices to generate large amounts of data and computing work, which makes it difficult to distribute tasks effectively. The layer fit algorithm distributes tasks fairly between layers according to their relative importance, preventing oversaturation, degraded service, and resource failures.

In addition, a Modified Harris-Hawks Optimisation (MHHO) meta-heuristic approach is presented for assigning the best available resource within a layer to a task. The goals are to decrease makespan, task execution cost, and power consumption while increasing resource utilisation in both layers. The proposed layer fit algorithm and MHHO are compared with traditional optimisation algorithms such as Harris-Hawks Optimisation (HHO), Ant Colony Optimisation (ACO), Particle Swarm Optimisation (PSO), and the Firefly Algorithm (FA) using the iFogSim simulation toolkit.
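
To make two of these metrics concrete, the following sketch computes makespan and average resource utilisation for a given task-to-resource assignment. It is an illustration under stated assumptions, not the evaluation code of the cited study: task lengths are taken to be in million instructions, resource speeds in MIPS, makespan is the largest per-resource busy time, and utilisation is the mean busy time divided by the makespan.

```python
from typing import Dict, List


def evaluate(assignment: List[int],
             task_len: List[float],
             speed: List[float]) -> Dict[str, float]:
    """Score an assignment, where assignment[i] is the resource index of task i."""
    busy = [0.0] * len(speed)
    for task, resource in enumerate(assignment):
        busy[resource] += task_len[task] / speed[resource]
    makespan = max(busy)
    utilisation = sum(busy) / (len(speed) * makespan) if makespan > 0 else 0.0
    return {"makespan": makespan, "utilisation": utilisation}


# Hypothetical workload: four tasks on two resources.
metrics = evaluate(
    assignment=[0, 1, 1, 0],
    task_len=[400.0, 200.0, 600.0, 100.0],
    speed=[100.0, 200.0],
)
```

Here the first resource is busy for 5 time units and the second for 4, so the makespan is 5 and the utilisation is 0.9. A meta-heuristic such as HHO or MHHO would search over `assignment` vectors to improve scores of this kind.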

According to a study by Edward et al. [7], load balancing in cloud computing is essential because of the complexity produced by enormous amounts of data and the potential degradation of the entire system if a fault occurs in a connected virtual machine (VM). To overcome these issues, the study proposes a strategy for resource allocation among virtual machines that is based on transfer learning using Fruitfly. The virtual machines are first loaded with a variety of user tasks; to balance the load, the work is then distributed evenly among all linked VMs. Priority determines the order in which tasks are completed, and resources are allocated in accordance with that order. According to the study's findings, the Fruitfly-based transfer learning approach is a potentially useful option for load balancing in cloud computing. It addresses the problems of resource sharing and task scheduling, which leads to improved overall performance and enhanced cloud services.

For dynamic resource allocation in cloud computing, Praveenchandar and Tamilarasi [8] suggest a load-balancing approach that they name PBMM. By emphasising the dynamic allocation and scheduling of resources, this algorithm addresses load balancing difficulties and promotes stability and profitability. It considers factors such as the size of the task and the value of each customer's bid. The paper [9] employs resource tables and task tables to minimise average waiting time and response time for special users. The objective is to maximise profits while improving load-balancing stability, especially by increasing the number of special users. The simulation results show that the proposed load balancing method meets these goals: it increases profit by optimising resource allocation and scheduling, and it improves load balancing stability through increased participation of special users. The findings contribute to the advancement of load balancing techniques in cloud computing environments by emphasising their importance in maintaining service quality and optimising resource utilisation.

Y. Sun and others [10] propose a comprehensive answer to the difficulties of limited physical memory, resource underutilisation, and ASP profitability in MEC environments. The problem is formulated as a stochastic optimisation problem with long-term ASP profit constraints. The authors use the Lyapunov optimisation framework to convert it into a per-time-slot optimisation problem, and then employ genetic algorithms (GA) to build an online heuristic algorithm that approximates near-optimal strategies for each time slot. The resulting ASP profit-aware solution optimises resource allocation, minimises latency, and supports long-term profitability. Chetan Kumar et al. [11] propose a method for reducing system latency and increasing ASP profitability. It uses the edge network's available resources efficiently and minimises latency over time by simultaneously optimising application loading, assignment allocation, and compute resource allocation. It also attracts a greater number of ASPs by enabling them to attain their desired profitability.

Devi et al. [12] proposed a security model based on deep learning to optimise job scheduling while taking security factors into account. The study analyses the model and compares it with conventional scheduling techniques to demonstrate its effectiveness. The research demonstrates the capability of deep learning algorithms to address security issues during task scheduling and emphasises the need for security in cloud computing. The paper contributes to cloud computing scheduling by presenting a security approach based on deep learning.

The findings highlight the significance of security concerns in cloud computing environments and provide significant information on the performance of the proposed model compared to existing scheduling techniques.

The paper by Abhikriti and Sunitha [13] examines the significance of scheduling algorithms in cloud computing with regard to resource optimisation and makespan reduction. Although existing scheduling algorithms are primarily concerned with reducing makespan, they frequently cause load imbalances and wasteful resource utilisation. The paper presents a Credit-Based Resource Aware Load Balancing Scheduling algorithm (CB-RALB-SA) as a potential solution to these problems.

The CB-RALB-SA algorithm's objective is to achieve a balanced distribution of tasks according to the capabilities of the resources available. It assigns weights to jobs using a credit-based scheduling approach, and then maps those tasks to resources after taking into account the load those resources are under and the processing power they possess. The FILL and SPILL functions of the Resource Aware and Load (RAL) approach, which makes use of the Honey Bee Optimisation heuristic algorithm, are used to carry out this mapping.
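
The general idea of credit-weighted, resource-aware mapping can be sketched as follows. This is not CB-RALB-SA itself: the FILL and SPILL functions and the Honey Bee Optimisation step are not reproduced, and the credit formula (priority divided by task length) and the greedy earliest-finish rule are assumptions chosen only to illustrate how a task weight, current load, and processing power can jointly drive the mapping.

```python
from typing import List, Tuple


def credit(length: float, priority: int) -> float:
    """Hypothetical credit: higher priority and shorter tasks score higher."""
    return priority / length


def map_tasks(tasks: List[Tuple[float, int]], power: List[float]) -> List[int]:
    """Greedy mapping: highest-credit task first, to the resource finishing it soonest."""
    load = [0.0] * len(power)          # accumulated busy time per resource
    placement = [-1] * len(tasks)
    order = sorted(range(len(tasks)), key=lambda i: credit(*tasks[i]), reverse=True)
    for i in order:
        length, _priority = tasks[i]
        best = min(range(len(power)), key=lambda r: load[r] + length / power[r])
        load[best] += length / power[best]
        placement[i] = best
    return placement


# Hypothetical tasks as (length, priority) and two resources with different power.
placement = map_tasks([(50.0, 5), (100.0, 4), (100.0, 1)], [20.0, 10.0])
```

In this example the two highest-credit tasks go to the faster resource, and the third task is sent to the slower but idle resource because it would finish there sooner.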

In [14], the difficulties of load balancing in cloud computing and the significance of locating optimal solutions are explored. Swarm Intelligence (SI) is presented as a potential solution for load balancing, and several algorithms are contrasted, including the genetic algorithm, ACO, PSO, BAT, and GWO. Particle Swarm Optimisation (PSO) and Grey Wolf Optimisation (GWO) are the primary focus of the study.

Federated clouds have emerged as a combination of private and public clouds to facilitate secure access to data from both categories of clouds. However, the procedure of authorization and authentication in federated clouds can be complex.

A new algorithm called the Secured Storage and Retrieval Algorithm (ATDSRA) has been suggested [15] to allow private and public users of cloud databases to store and retrieve data securely. In addition to the Triple-DES ciphering used for encryption, it combines encrypted data using data merging and aggregation algorithms. The CRT-based Dynamic Data Auditing Algorithm (CRTDDA) is also presented for controlling access to federated cloud data and auditing it.

The ATDSRA algorithm and CRTDDA auditing scheme enhance the security of federated cloud-stored data. The proposed model addresses the complex task of authorization and authentication in cloud computing by providing secure storage, retrieval, and auditing capabilities, thereby enhancing the overall security of federated cloud environments.

To solve the load balancing problem, the authors of [16] propose a method that combines the firefly and BAT algorithms. The strategy draws on the advantages of both rapid convergence and global optimisation, and aims to improve both the effectiveness of the system and the distribution of its resources. The findings show promising results in terms of fast convergence to a global optimum and decreased total response time.

Saydul et al. [17] discuss the significance of task scheduling in cloud computing and how it affects resource utilisation and service performance. They emphasise the need for efficient job scheduling strategies to prevent resource waste and performance decline. Examining various task scheduling techniques in cloud and grid computing, the study identifies their successes, challenges, and limitations. A taxonomy is proposed to classify and analyse these techniques, with the goal of bridging the gaps between existing studies and offering a conceptual framework for more efficient job scheduling in cloud computing.

A priority-based scheduling approach is proposed by Junaid et al. [18] for achieving equitable task scheduling in cloud computing environments. The method aims to make the most efficient use of available resources and to boost overall performance. The study also raises questions for future investigation that could lead to more effective scheduling strategies. Academics, policymakers, and practitioners can use the priority-based scheduling technique to optimise cloud computing configurations, because it supports the design of more efficient task scheduling strategies.

The research of Ashawa et al. [19] focuses on resource allocation in large-scale distributed computing systems, specifically in the context of cloud computing. The goal is to maximise overall computing efficiency or throughput by allocating resources effectively. Cloud computing is distinguished from grid computing, and the challenges of allocating virtualised resources in a cloud computing paradigm are acknowledged.

Using the LSTM (Long Short-Term Memory) algorithm, a dynamic resource allocation system is implemented in this study. The system analyses application resource utilisation and determines the optimal allocation of additional resources for each application. The LSTM model is trained and evaluated in simulations that approximate real time. The study also discusses the incorporation of dynamic routing algorithms designed for cloud data centre traffic. The proposed resource allocation model [20] is compared with other load-balancing techniques and demonstrates improved accuracy rates and decreased error percentages in the average request blockage probability under heavy traffic loads. Compared with other models, the proposed method increases network utilisation and decreases processing time and resource consumption. For load balancing in energy clouds, the authors suggest that future research investigate various heuristics and machine learning techniques, specifically the use of firefly algorithms.

Cloud computing provides a variety of services to consumers, but increased demand also raises the probability of faults. Several fault tolerance techniques have been devised to mitigate their impact. The paper [21] describes a multilevel fault tolerance system designed to improve the reliability and availability of cloud environments. The proposed system operates at two levels. At the first level, a reliability assessment algorithm determines which virtual machines are trustworthy, so that tasks are assigned to the most dependable resources and the likelihood of errors is minimised. At the second level, a replication method copies data and distributes it among several physical or virtual devices, so that the data remains available even if one copy becomes inaccessible or malfunctions. By combining reliable virtual machine identification with data replication, this multilevel method improves fault tolerance in real-time cloud environments, reducing errors and increasing the efficiency of cloud services.
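
The two-level structure can be illustrated with a short sketch. It is not the algorithm of the cited paper: the reliability score (fraction of successfully completed tasks), the threshold, the VM names, and the replica count are all assumptions used to show how a reliability filter and a replication step fit together.

```python
from typing import Dict, List, Tuple


def reliability(history: Tuple[int, int]) -> float:
    """Fraction of successful tasks, where history = (successes, failures)."""
    succeeded, failed = history
    total = succeeded + failed
    return succeeded / total if total else 0.0


def trusted_vms(histories: Dict[str, Tuple[int, int]], threshold: float) -> List[str]:
    """Level 1: VMs whose reliability meets the threshold, most reliable first."""
    scored = {vm: reliability(h) for vm, h in histories.items()}
    keep = [vm for vm, score in scored.items() if score >= threshold]
    return sorted(keep, key=lambda vm: scored[vm], reverse=True)


def place_replicas(trusted: List[str], copies: int) -> List[str]:
    """Level 2: place each copy of the data on a distinct trusted VM."""
    if copies > len(trusted):
        raise ValueError("not enough trusted VMs for the requested replica count")
    return trusted[:copies]


# Hypothetical execution histories per VM.
histories = {"vm-a": (95, 5), "vm-b": (60, 40), "vm-c": (99, 1), "vm-d": (90, 10)}
trusted = trusted_vms(histories, threshold=0.9)
replicas = place_replicas(trusted, copies=2)
```

With these numbers, `vm-b` is filtered out at the first level and the two most reliable machines receive the replicas, so a failure of either one still leaves an accessible copy.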

Table 1.

Comparison of Existing Methods.

| Citation | Technique | Advantage | Limitations |
|---|---|---|---|
| Ali Asghar Heidari et al., 2019 | Meta-heuristic algorithms (e.g., HHO) | Effective optimization capabilities, near-optimal solutions | HHO implementation in cloud computing is largely unexplored |
| K Vinoth Kumar & A Rajesh, 2022 | Multi-objective optimization strategy | Load balancing method for data centers, efficient algorithm, adjustable to different workload circumstances | Limited discussion on the algorithm's resilience and adaptability |
| Shafiq D A et al., 2021 | CRLBT strategy with TM-PSO and TM-Firefly | Improved response time, task rejection ratio, CPU utilization rate, and network throughput | -- |
| Oduwole O A et al., 2022 | DEER strategy | Increased efficacy, reduced energy consumption, decreased environmental impact | Limited discussion on the dynamic nature of fog environments |
| Rehman A U et al., 2020 | Layer fit algorithm and MHHO strategy | Enhanced performance metrics, reduced costs, maximized resource utilization, load balancing in fog computing | Comparison with traditional optimization algorithms |
| Edward G, Geetha B & Ramaraj E, 2023 | Fruitfly-based transfer learning | Improved load balancing, resource sharing, and task scheduling | Limited discussion on the algorithm's performance compared to others |
| Praveenchandar & Tamilarasi, 2022 | PBMM algorithm | Dynamic resource allocation, improved load balancing stability and profitability | -- |
| Narwal A & Sunita D, 2023 | CB-RALB-SA algorithm | Balanced distribution of tasks, efficient load balancing, honey bee optimization | -- |
| Al Reshan M.S. et al., 2023 | SI, PSO, and GWO algorithms | Potential load balancing solutions, comparison with other algorithms | -- |
| Sermakanu A.M., 2020 | ATDSRA and CRTDDA algorithms | Secure storage and retrieval, restricted data access | Limited discussion on the algorithm's performance compared to others |
| Saydul A.M. et al., 2022 | Task scheduling techniques analysis | Efficiency of job scheduling, resource utilization, performance improvements | Taxonomy proposed for classification and analysis of scheduling techniques |
| Ashawa M et al., 2022 | LSTM-based dynamic resource allocation | Maximization of computing efficiency, improved resource allocation | Limited discussion on the comparison with other allocation techniques |
| Hariharan B, 2020 | Resource allocation model | Increased accuracy, decreased error percentages, improved network utilization | Suggested further research on heuristics and machine learning techniques |

2.1. Summary of the Literature Review

This literature review examines the difficulties of load balancing and resource allocation in cloud computing and the techniques that researchers have proposed to address them. Its primary emphasis is on meta-heuristic methods, such as the Harris hawk optimisation (HHO) algorithm.

It covers well-known meta-heuristic algorithms employed in cloud computing, such as genetic algorithms, particle swarm optimisation, ant colony optimisation, and simulated annealing, together with their advantages, disadvantages, and uses in load balancing and resource distribution.

One study proposes a load balancing method for data centres based on a multi-objective optimisation strategy. Another addresses load distribution in big-data cloud systems with the Central-Regional Architecture Based Load Balancing Technique (CRLBT). DEER has been presented for load balancing in fog computing environments, reflecting the growing importance of fog computing in managing information flow over large and complex networks for the Internet of Things (IoT).

A further paper provides a layer fit algorithm and a Modified Harris-Hawks Optimisation (MHHO) strategy for task distribution and resource allocation in fog computing. The review also discusses a load balancing technique based on transfer learning using Fruitfly and a load balancing algorithm known as PBMM for dynamic resource allocation in cloud computing.

A deep learning-based security model highlights the need for security in cloud computing scheduling, while the Credit-Based Resource Aware Load Balancing Scheduling algorithm (CB-RALB-SA) targets a balanced distribution of workloads. The review also covers swarm intelligence algorithms and the Secured Storage and Retrieval Algorithm (ATDSRA) for federated cloud environments.

Research on task scheduling, priority-based scheduling strategies, resource allocation in distributed computing systems, dynamic resource allocation using the LSTM algorithm, fault tolerance systems, and reliability assessment in cloud environments is covered as well.

Together, these works describe the existing body of knowledge on load balancing and resource allocation in cloud computing and point to the potential of meta-heuristic algorithms and other methods to address these problems effectively.

2.2. Research Gap

The research gap in the literature necessitates further exploration and development of load balancing and resource allocation techniques in cloud computing, taking into consideration specific environments, security concerns, combined optimisation techniques, and dynamic resource allocation strategies. In addition, there is a need for a comprehensive analysis, classification, and evaluation of existing techniques in order to provide insight and direction for the development of more effective solutions.

Although meta-heuristic algorithms, such as HHO, have demonstrated promise in solving optimisation problems, their application to load balancing and resource allocation in cloud computing is largely unexplored.

Load balancing techniques tailored to specific cloud computing environments, such as fog computing and big-data cloud systems, are required. Existing research frequently disregards the distinctive characteristics and difficulties of these environments.

Although load balancing and resource allocation are essential for optimising system performance, guaranteeing security in the scheduling process is equally crucial. In the context of task scheduling, the incorporation of security factors, such as deep learning-based security models, requires further investigation.

Federated clouds, which combine private and public clouds, present unique authorization, authentication, and secure data storage challenges. The absence of efficient algorithms and models to resolve these complexities and improve the overall security of federated cloud environments represents a research gap.

There is a need for research that investigates the effectiveness of combining various optimisation techniques, such as the firefly and BAT algorithms, to improve load balancing and resource allocation in cloud computing.

Task scheduling has a substantial impact on resource utilisation and overall system performance. Further study is required to develop more effective scheduling techniques that maximise resource utilisation and minimise Makespan time while taking load balancing and performance tradeoffs into account.

Dynamic resource allocation necessitates techniques that optimise computing efficiency, particularly in large-scale distributed computing systems. Dynamic resource allocation models, such as LSTM-based algorithms, and their integration with dynamic routing algorithms for cloud data centre traffic require additional study.