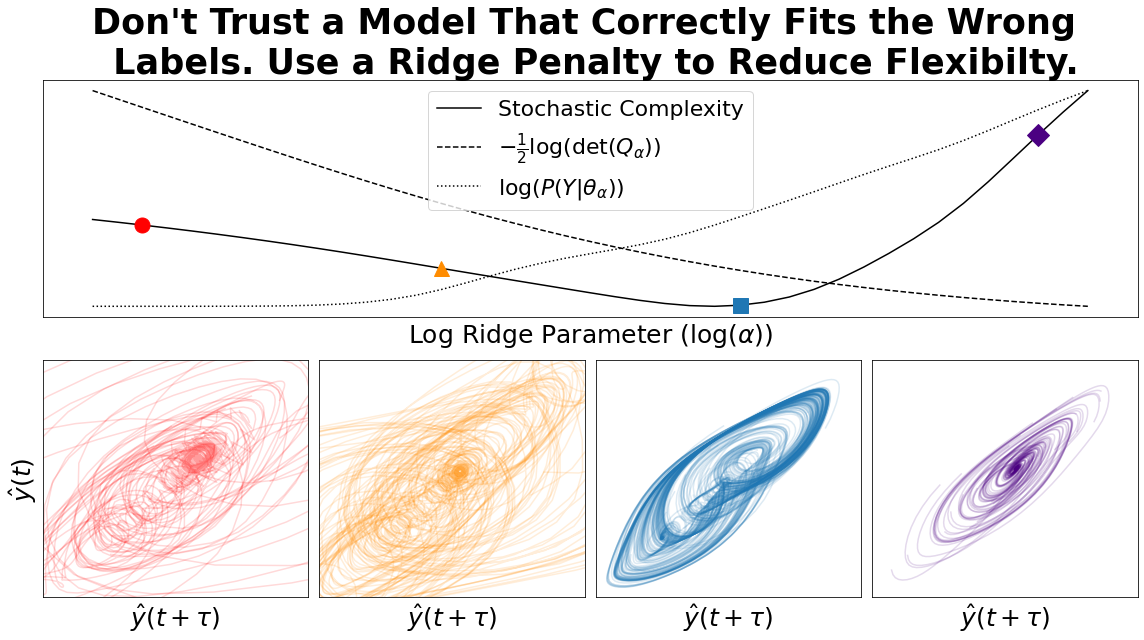

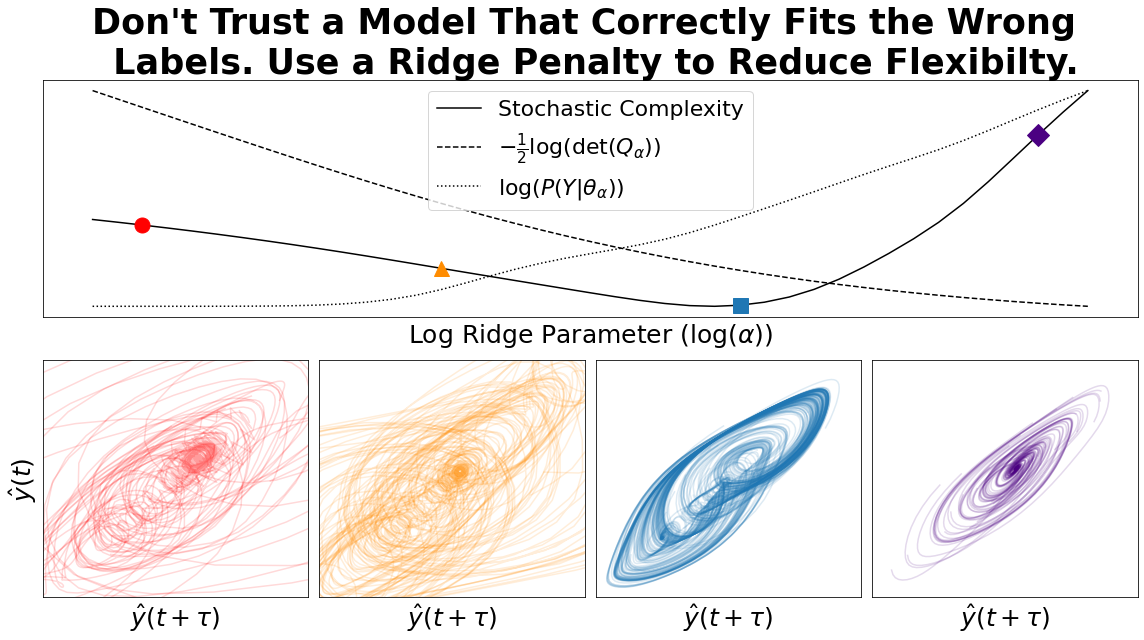

We derive a penalty strength criterion for ridge regression using stochastic complexity, a refined variant of the minimum description length principle. Stochastic complexity does not typically account for the effect of regularisation on complexity, even though regularisation can simplify models, so we make a slight modification to the underlying coding scheme. Our scheme uses a weighted ensemble of regularised model fits rather than a mixture of maximum likelihood estimates. Under this modification, regularisation is interpreted as reducing model complexity by constraining flexibility. In the case of ridge regression, the complexity penalty term that we derive can be expressed analytically as the log determinant of the residual operator. We demonstrate the effect of this complexity penalty by fitting a linear readout to a reservoir computer.
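
To make the log-determinant term concrete, the following is a minimal sketch rather than the derivation itself. It assumes the residual operator is $I - H_\lambda$, where $H_\lambda = X (X^\top X + \lambda I)^{-1} X^\top$ is the usual ridge hat matrix for a design matrix $X$ and penalty strength $\lambda > 0$. The function names are hypothetical, and the sign and scaling with which this quantity enters the full criterion are not specified here, so only the raw log determinant is computed.

```python
import numpy as np


def ridge_residual_logdet(X, lam):
    """Return log det(I - H_lam) from the singular values of X.

    Assumption: H_lam = X (X^T X + lam I)^{-1} X^T with lam > 0.
    Along each singular direction of X, I - H_lam has eigenvalue
    lam / (s_i^2 + lam); all remaining eigenvalues equal 1.
    """
    s = np.linalg.svd(X, compute_uv=False)
    return float(np.sum(np.log(lam) - np.log(s**2 + lam)))


def ridge_residual_logdet_dense(X, lam):
    """Same quantity computed by forming I - H_lam explicitly (for checking)."""
    n, p = X.shape
    H = X @ np.linalg.solve(X.T @ X + lam * np.eye(p), X.T)
    _, logdet = np.linalg.slogdet(np.eye(n) - H)
    return float(logdet)
```

Under these assumptions the log determinant is negative and rises towards zero as $\lambda$ grows, which matches the reading of regularisation as a reduction in model flexibility. The singular-value form avoids building the $n \times n$ operator, which matters when the number of samples is large.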