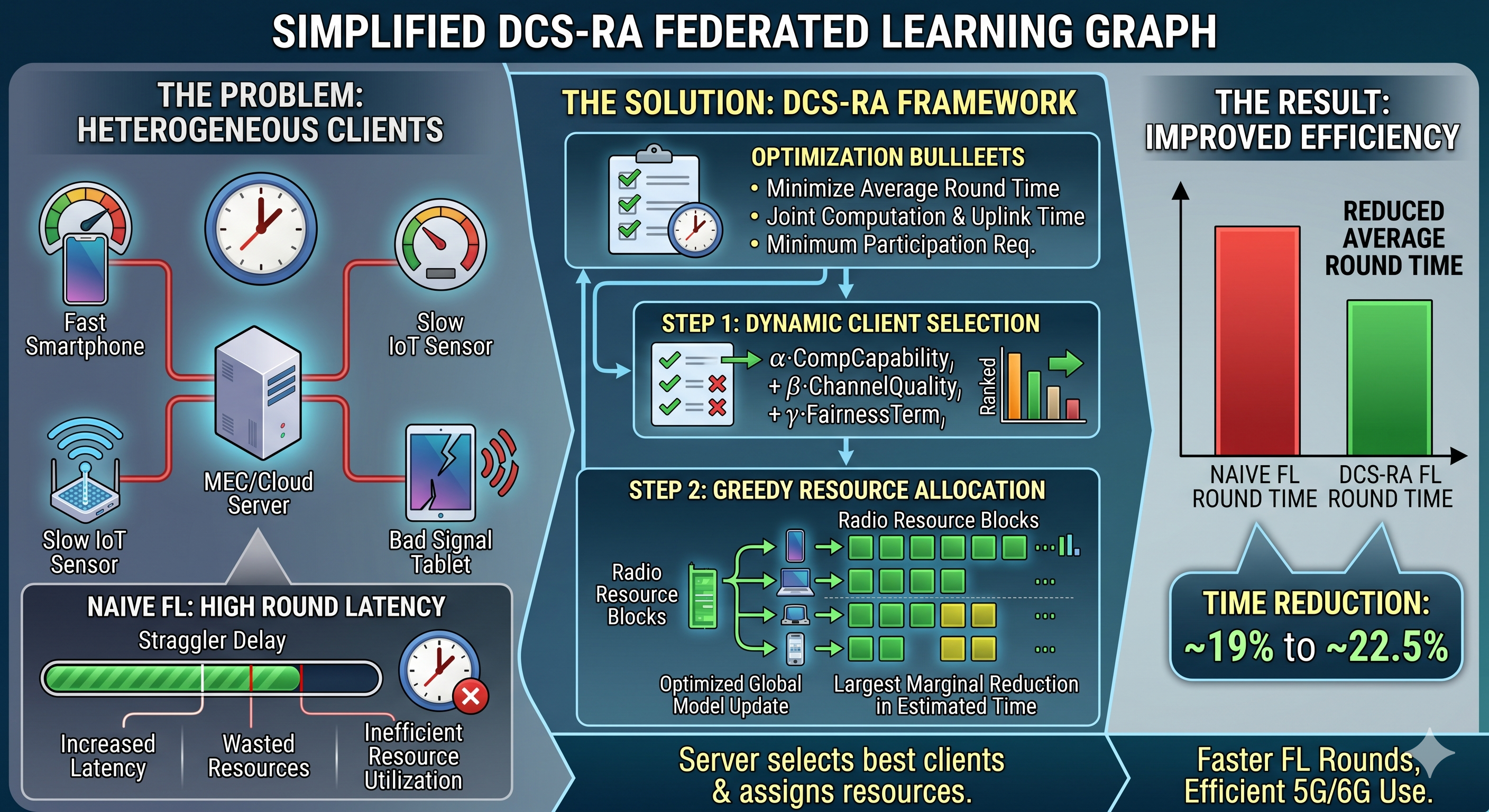

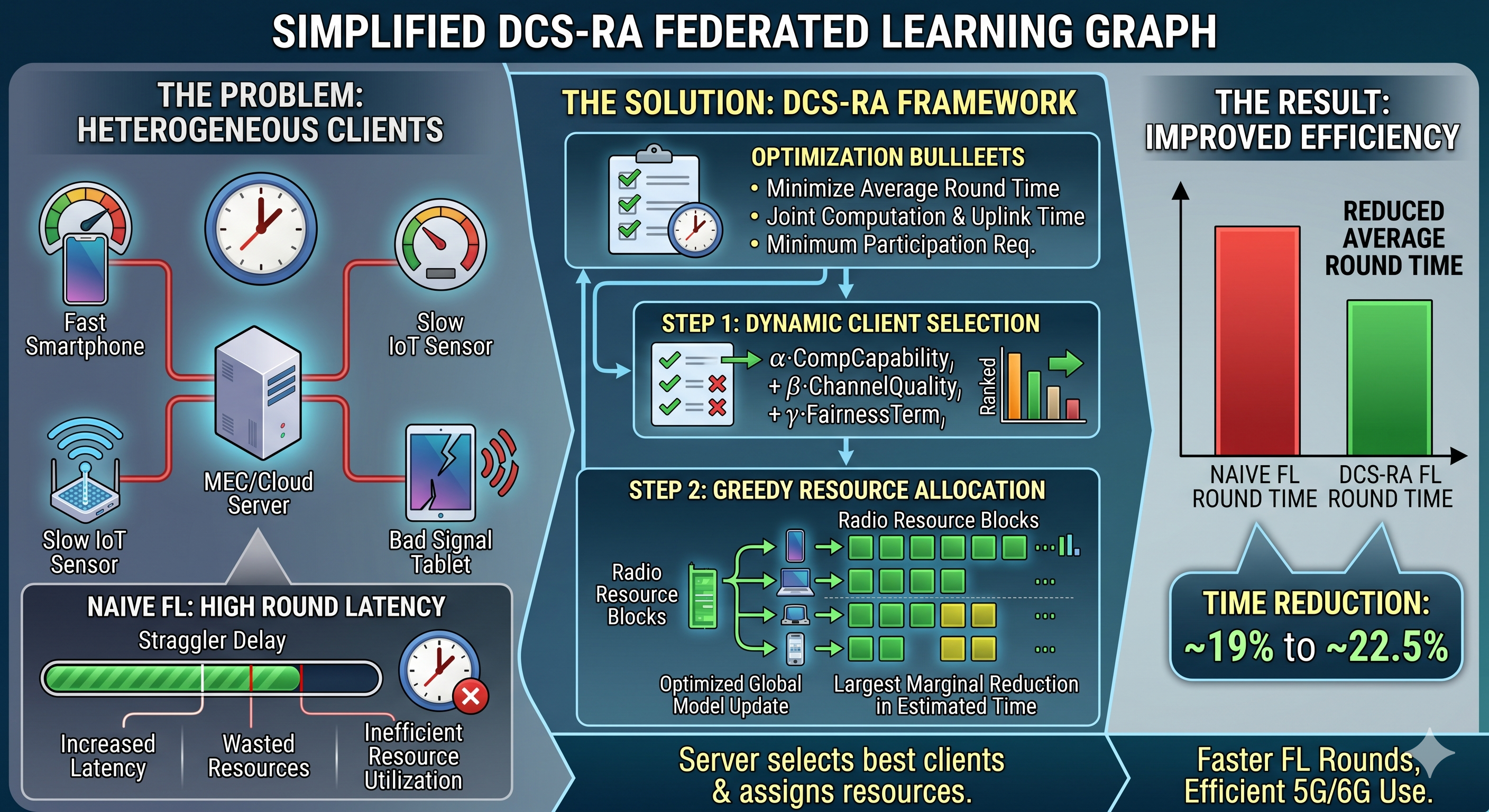

Federated learning (FL) is an attractive paradigm for privacy-preserving edge intelligence because it lets distributed devices train a shared model without moving raw data to a central server. This property is especially relevant to 5G and emerging 6G networks, where ultra-low latency, dense connectivity, and edge-native computing are expected to support large-scale intelligent services. Nevertheless, practical FL deployment remains difficult in heterogeneous wireless environments because client devices differ in processing capability, battery budget, data volume, and channel quality. These differences create stragglers, increase round latency, and waste scarce communication resources when client participation is scheduled naively. This study develops a deployment-oriented framework for dynamic client selection and resource allocation in heterogeneous edge environments. We formulate each FL round as a latency-constrained optimization problem that jointly captures computation time, uplink transmission time, and minimum participation requirements. On this basis, we propose a Dynamic Client Selection and Resource Allocation (DCS-RA) method that ranks clients by a weighted score combining computational capability, channel quality, and a fairness term, and then allocates radio resources greedily, at each step favoring the assignment that yields the largest marginal reduction in estimated completion time. In simulations with 100 clients and 20 resource blocks, DCS-RA reduces average round-completion time from 1.92 s to 1.55 s on MNIST and from 2.02 s to 1.57 s on CIFAR-10, improvements of 19.39% and 22.47%, respectively. The results indicate that lightweight joint scheduling can substantially improve wall-clock efficiency for FL over heterogeneous 5G/6G edge networks.
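To make the two stages of DCS-RA concrete, the following Python sketch illustrates one possible reading of the description above: score-based client ranking followed by greedy resource-block assignment. It is not the authors' implementation. The `Client` fields, the weights `w`, the Shannon-rate uplink model, the per-round workload `cycles`, the update size `model_bits`, the 180 kHz resource-block bandwidth, and the use of idle rounds as the fairness term are all illustrative assumptions, as is the choice to measure the marginal reduction per client rather than on the round-level maximum.

```python
import math
from dataclasses import dataclass


@dataclass
class Client:
    cid: int
    cpu_hz: float      # local processing speed in cycles/s (assumed field)
    snr: float         # linear uplink SNR, must be > 0 (assumed field)
    idle_rounds: int   # rounds since last participation (assumed fairness proxy)


def completion_time(c, n_rb, cycles=1e9, model_bits=1e6, rb_hz=180e3):
    """Estimated computation time plus uplink time when c holds n_rb blocks."""
    rate = n_rb * rb_hz * math.log2(1.0 + c.snr)  # Shannon rate in bits/s
    return cycles / c.cpu_hz + model_bits / rate


def select_clients(clients, k, w=(0.4, 0.4, 0.2)):
    """Rank clients by a weighted, max-normalized score and keep the top k."""
    max_cpu = max(c.cpu_hz for c in clients)
    max_chan = max(math.log2(1.0 + c.snr) for c in clients)
    max_idle = max(c.idle_rounds for c in clients) or 1  # avoid dividing by 0

    def score(c):
        return (w[0] * c.cpu_hz / max_cpu
                + w[1] * math.log2(1.0 + c.snr) / max_chan
                + w[2] * c.idle_rounds / max_idle)

    return sorted(clients, key=score, reverse=True)[:k]


def allocate_rbs(selected, total_rbs):
    """Give each client one block, then assign the remainder greedily."""
    if total_rbs < len(selected):
        raise ValueError("need at least one resource block per client")
    alloc = {c.cid: 1 for c in selected}

    def gain(c):
        # Reduction in c's estimated completion time from one more block.
        n = alloc[c.cid]
        return completion_time(c, n) - completion_time(c, n + 1)

    for _ in range(total_rbs - len(selected)):
        best = max(selected, key=gain)
        alloc[best.cid] += 1
    return alloc


# Hypothetical toy population, not the 100-client setting of the experiments.
clients = [
    Client(0, 2.0e9, 10.0, 0),
    Client(1, 1.0e9, 3.0, 2),
    Client(2, 0.5e9, 1.0, 5),
    Client(3, 1.5e9, 20.0, 1),
]
chosen = select_clients(clients, k=3)
alloc = allocate_rbs(chosen, total_rbs=6)
round_time = max(completion_time(c, alloc[c.cid]) for c in chosen)
print(alloc, round(round_time, 3))
```

Because a synchronous round finishes only when its slowest selected client does, `round_time` is taken as the maximum per-client completion time. A variant that always gives the next block to the current bottleneck client would target that maximum directly; the per-client gain used here is simply one plausible interpretation of "largest marginal reduction".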