Submitted: 01 May 2026

Posted: 04 May 2026

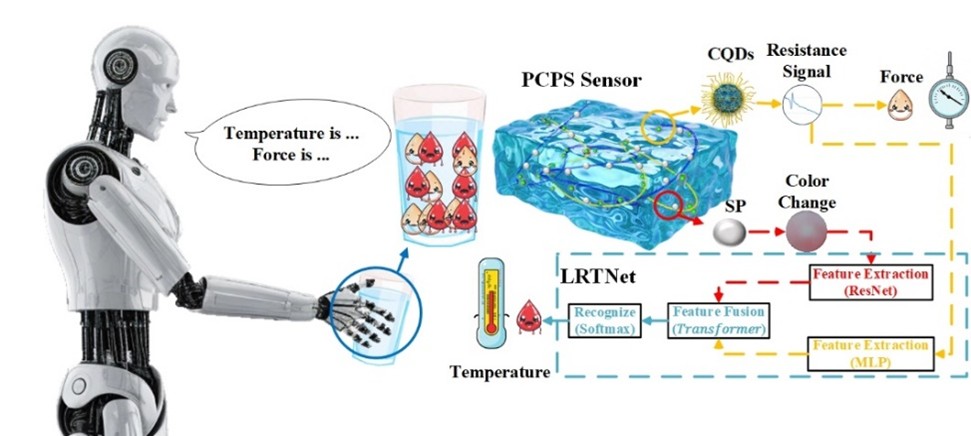

Abstract

Keywords:

1. Introduction

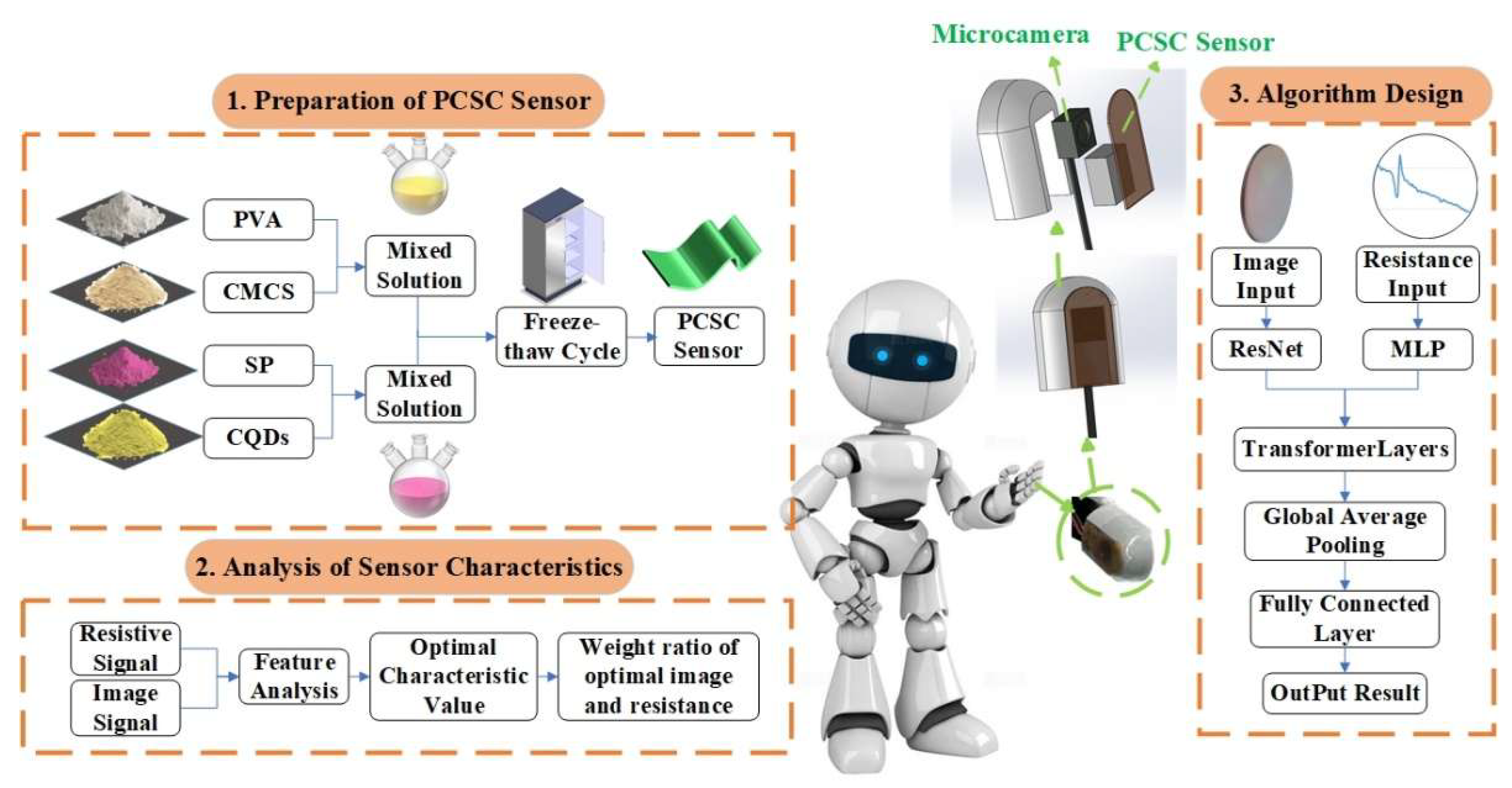

2. Methodology

2.1. Overview of the Research Methodology

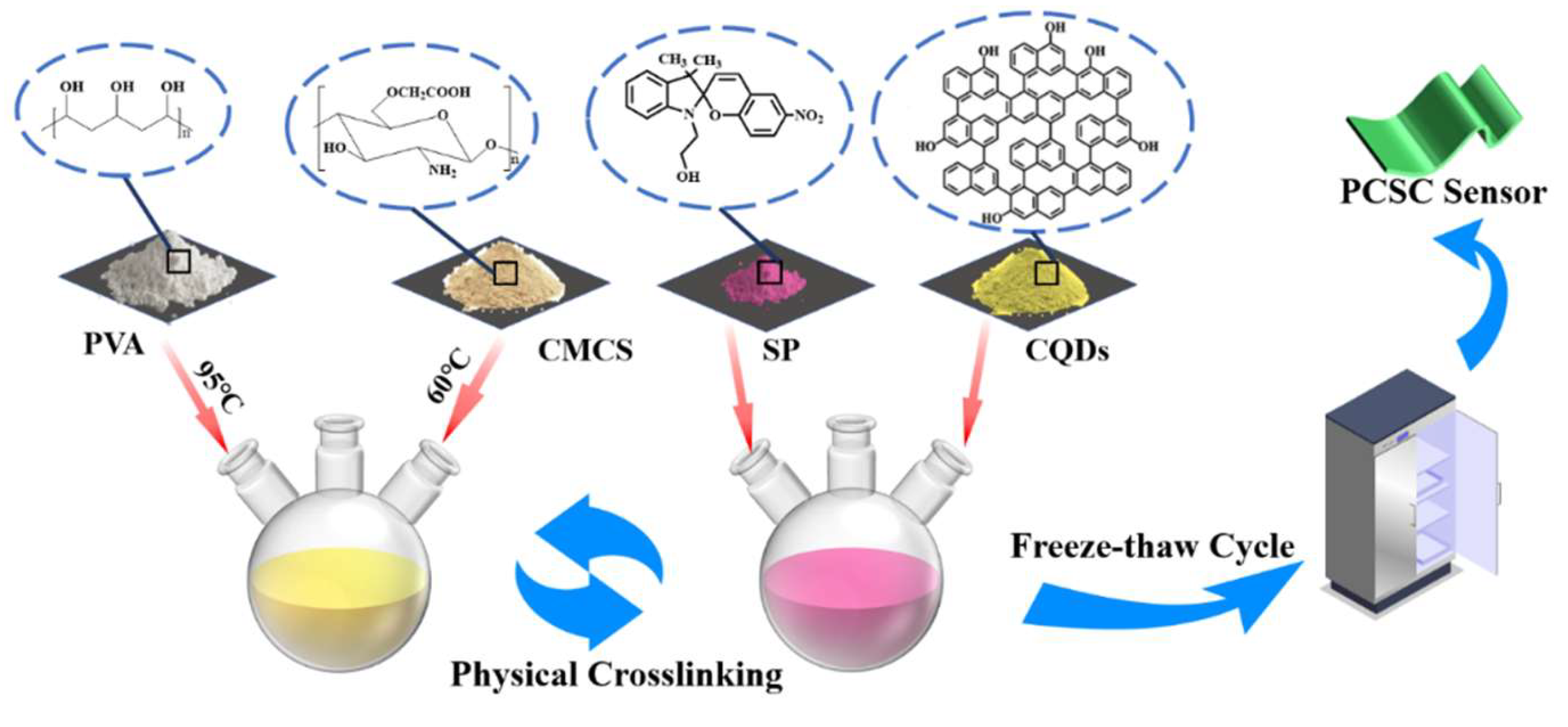

2.2. Preparation of the Sensor

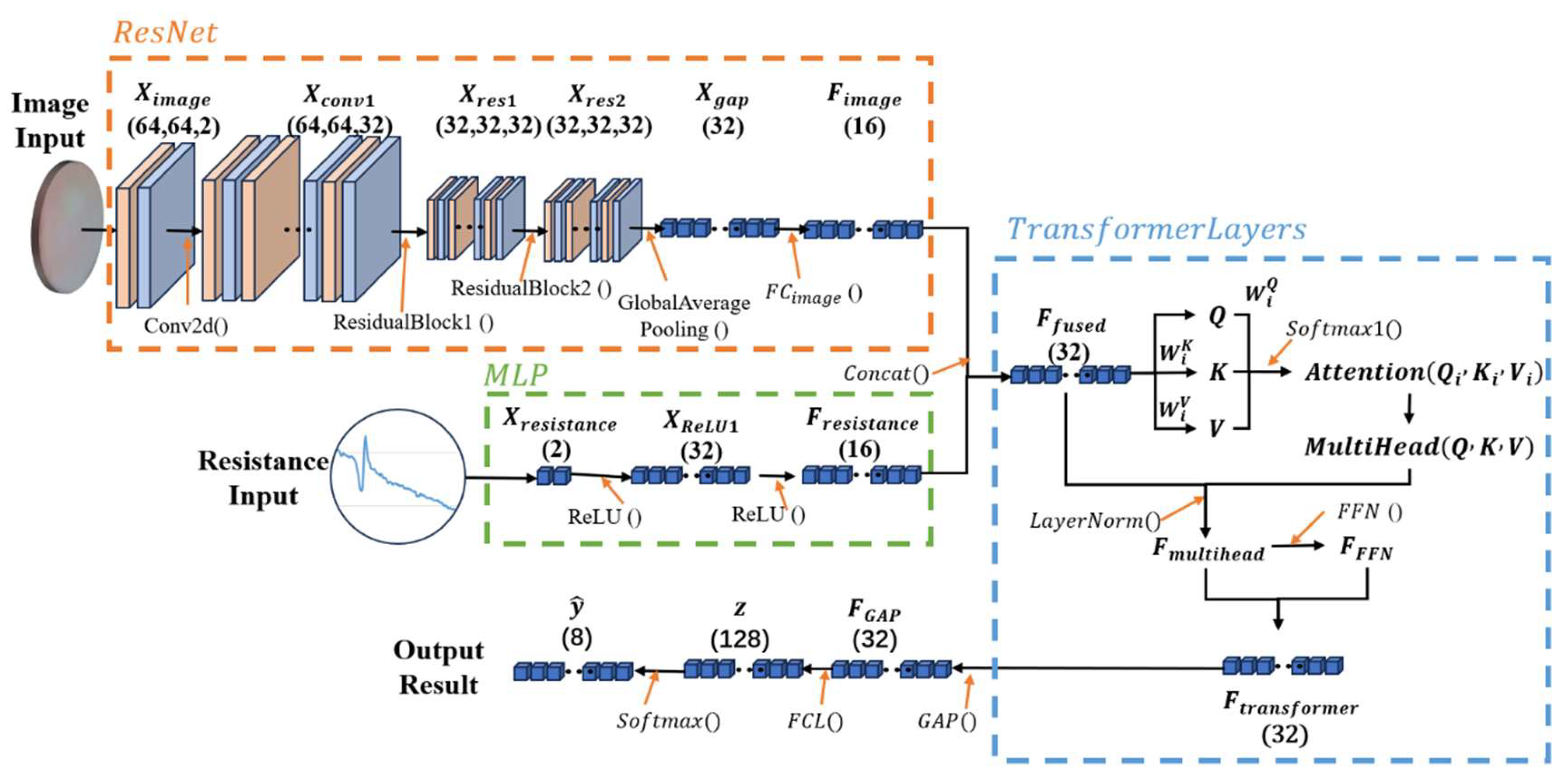

2.3. Algorithm Design

2.3.1. Image Feature Extraction – Lightweight ResNet

2.3.2. Feature Fusion Layer

3. Experimental Design

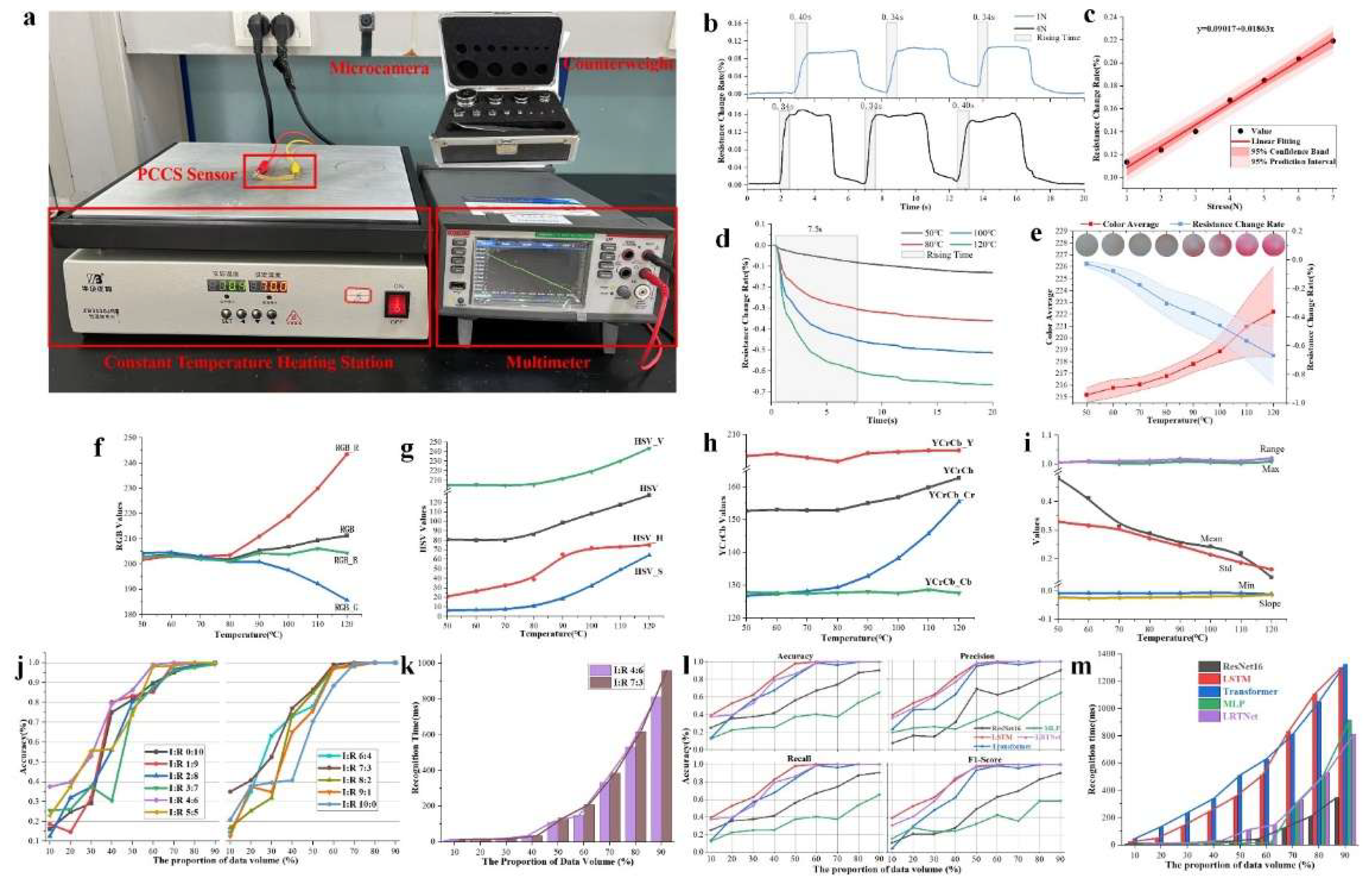

3.1. Sensor Performance Testing

3.2. Algorithm Testing and Optimization

3.2.1. Data Collection and Feature Analysis

3.2.2. Selection of Weight Ratios for Image and Resistance Data

3.2.3. Algorithm Comparison

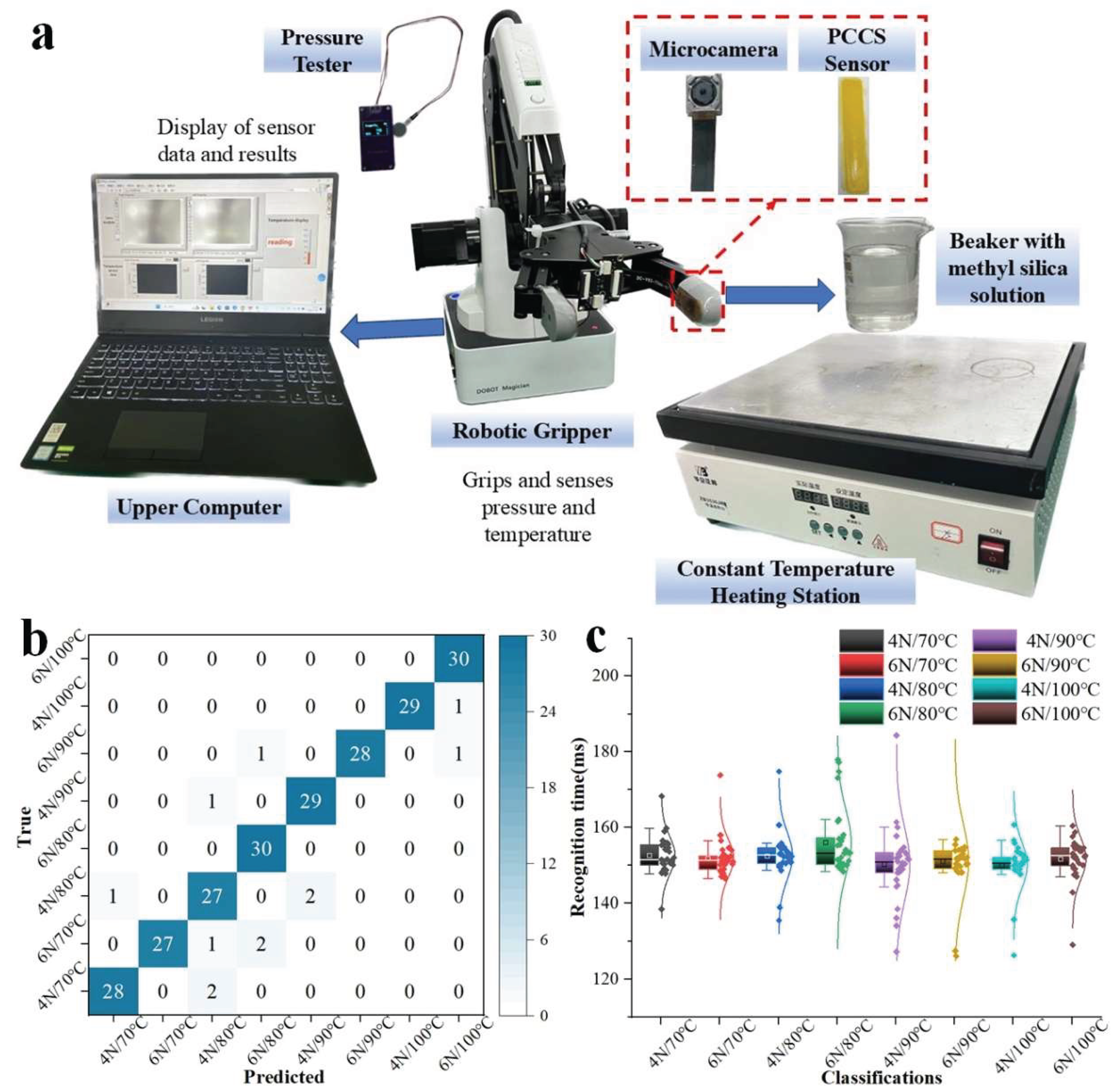

4. Real-Time Recognition Experiment on Robotic Gripper

5. Conclusions

Supplementary Materials

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest


| Feature | ARC from 50°C to 120°C (%) | ARC from 50°C to 80°C (%) | ARC from 80°C to 120°C (%) |
|---|---|---|---|
| RGB | 0.57 | 0.19 | 1.14 |
| RGB_R | 2.75 | 0.29 | 4.60 |
| RGB_G | -1.35 | -0.56 | -1.95 |
| RGB_B | 0.10 | -0.32 | 0.41 |
| HSV | 6.83 | 2.24 | 10.28 |
| HSV_H | 21.61 | 22.94 | 20.62 |
| HSV_S | 41.93 | 20.99 | 57.64 |
| HSV_V | 2.54 | 0.05 | 4.40 |
| YCrCb | 0.92 | 0.05 | 1.57 |
| YCrCb_Y | 0.12 | -0.28 | 0.42 |
| YCrCb_Cr | 2.98 | 0.65 | 4.72 |
| YCrCb_Cb | -0.02 | -0.03 | -0.01 |
| Mean | -15.76 | -15.35 | -16.06 |
| Std | -9.46 | -6.22 | -11.88 |
| Min | 6.47 | 2.10 | 9.75 |
| Max | 0.05 | -0.09 | 0.15 |
| Range | 0.21 | 0.19 | 0.22 |
| Slope | -5.89 | 0.26 | -10.51 |
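
One way to read the feature table above is to rank the features by the magnitude of their ARC over the full 50°C to 120°C range. The Python sketch below does this with values copied from the table. The helper name `rank_by_magnitude` is hypothetical, and treating a larger absolute ARC as a stronger temperature response is an assumption, since ARC is not defined in this section.

```python
# Values copied from the feature table above (ARC from 50°C to 120°C, in %).
# Assumption: a larger |ARC| means the feature responds more strongly to
# temperature; the section itself does not define ARC.
arc_50_120 = {
    "RGB": 0.57, "RGB_R": 2.75, "RGB_G": -1.35, "RGB_B": 0.10,
    "HSV": 6.83, "HSV_H": 21.61, "HSV_S": 41.93, "HSV_V": 2.54,
    "YCrCb": 0.92, "YCrCb_Y": 0.12, "YCrCb_Cr": 2.98, "YCrCb_Cb": -0.02,
    "Mean": -15.76, "Std": -9.46, "Min": 6.47, "Max": 0.05,
    "Range": 0.21, "Slope": -5.89,
}


def rank_by_magnitude(arc, top_n=5):
    """Return the top_n (feature, ARC) pairs sorted by descending |ARC|."""
    ranked = sorted(arc.items(), key=lambda kv: abs(kv[1]), reverse=True)
    return ranked[:top_n]


top_features = rank_by_magnitude(arc_50_120)
for name, value in top_features:
    print(f"{name:10s} {value:7.2f}")
```

With these values, the HSV saturation and hue channels lead the ranking, followed by the Mean and Std features.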

Columns: proportion of data volume (%).

| Algorithm | 10% | 20% | 30% | 40% | 50% | 60% | 70% | 80% | 90% |
|---|---|---|---|---|---|---|---|---|---|
| ResNet16 | 0.11 | 0.21 | 0.21 | 0.28 | 0.44 | 0.51 | 0.55 | 0.64 | 0.67 |
| LSTM | 0.21 | 0.35 | 0.30 | 0.51 | 0.58 | 0.64 | 0.60 | 0.60 | 0.60 |
| Transformer | -0.09 | 0.10 | 0.08 | 0.32 | 0.42 | 0.59 | 0.58 | 0.61 | 0.60 |
| MLP | 0.06 | 0.18 | 0.18 | 0.19 | 0.27 | 0.33 | 0.22 | 0.35 | 0.37 |
| LRTNet | 0.23 | 0.31 | 0.42 | 0.62 | 0.67 | 0.75 | 0.72 | 0.70 | 0.68 |
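
The algorithm comparison table above can be summarized by asking which algorithm scores highest at each data proportion and where each algorithm peaks. The Python sketch below does this with values copied from the table. The function names are hypothetical, and the code assumes that a higher score is better, since the metric is not named in this section.

```python
# Scores copied from the algorithm comparison table above.
# Assumption: higher is better; the section does not name the metric.
proportions = [10, 20, 30, 40, 50, 60, 70, 80, 90]  # data volume, in %
scores = {
    "ResNet16":    [0.11, 0.21, 0.21, 0.28, 0.44, 0.51, 0.55, 0.64, 0.67],
    "LSTM":        [0.21, 0.35, 0.30, 0.51, 0.58, 0.64, 0.60, 0.60, 0.60],
    "Transformer": [-0.09, 0.10, 0.08, 0.32, 0.42, 0.59, 0.58, 0.61, 0.60],
    "MLP":         [0.06, 0.18, 0.18, 0.19, 0.27, 0.33, 0.22, 0.35, 0.37],
    "LRTNet":      [0.23, 0.31, 0.42, 0.62, 0.67, 0.75, 0.72, 0.70, 0.68],
}


def best_per_proportion(scores, proportions):
    """Map each data proportion to the algorithm with the highest score."""
    best = {}
    for i, p in enumerate(proportions):
        best[p] = max(scores, key=lambda name: scores[name][i])
    return best


def peak_per_algorithm(scores, proportions):
    """Map each algorithm to (proportion, score) at its first maximum."""
    peaks = {}
    for name, values in scores.items():
        i = max(range(len(values)), key=values.__getitem__)
        peaks[name] = (proportions[i], values[i])
    return peaks


best = best_per_proportion(scores, proportions)
peaks = peak_per_algorithm(scores, proportions)
for p in proportions:
    print(f"{p:3d}%  best: {best[p]}")
for name, (p, s) in peaks.items():
    print(f"{name:12s} peaks at {p}% with {s:.2f}")
```

On these numbers, LRTNet has the highest score at every proportion except 20%, where LSTM is higher, and its own peak (0.75) falls at 60% rather than 90%.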

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).