Submitted: 01 May 2026

Posted: 04 May 2026


Abstract

Keywords:

1. Introduction: The Crisis of the Tenable and the Thermodynamics of Intelligence

1.1. Waste Management in Sensu Lato

1.2. The Mutually Uplifting Synergy

1.3. Contributions to Sustainability: The Symbiotic Covenant in Action

2. Waste Management in Sensu Lato: A Taxonomic Expansion

2.1. The Physiology of Failure: The Runner and the Drunkard

- The Exhausted Runner: If a runner starts at a pace that exceeds their reserve of energy and strength, they will inevitably collapse or quit the race owing to the premature exhaustion of resources. The current scaling paradigm for LLMs exhibits precisely this exhaustion profile [2]: training a single large model can emit as much carbon dioxide as the lifetime emissions of five automobiles [3].

- The Algorithmic Drunkard: If an algorithm is overly greedy for inputs that are increasingly rare and requires hardware that consumes resources with no regard for the rigors of computational complexity theory [24], it becomes a liability to itself and to society as a whole. The global data center industry already rivals mid-sized nations in electricity consumption [13,25].

2.2. The ENIAC Moment: A Historical Parallel of Infancy

- The Prophecy of Efficiency: We assert that current AI is in its “ENIAC phase,” a loud, resource-intensive infancy [6]. The progression from walking between Rochester and Buffalo, to driving, and ultimately to the helicopter flight of algorithmic elegance is the only path toward a truly sustainable intelligence. Research on parsimony and learning [26,27] already signals the first stirrings of this paradigm shift.

2.3. Historical Transitions Toward Algorithmic Parsimony

| Era / Entity | The “Greedy” Resource | The Successor | The Paradigm Shift |
|---|---|---|---|
| The ENIAC [22] | Vacuum Tubes / Bulk Power | The Transistor | From physical bulk to solid-state elegance [23]. |
| The Concorde | Massive Fuel (Supersonic) | Efficient Jets | Prioritizing fuel-per-passenger over raw speed. |
| Whale Oil | Perishable Bio-resources | Kerosene / Electricity | Transitioning to abundant, scalable energy. |
| AltaVista | Computational Bloat | Google PageRank [28] | Using inherent web structure as “fuel.” |
| Brute Force AI [2] | Parameters | Parsimonious AI [20] | From memorization to genuine understanding [10]. |

2.4. The Virtue of Algorithmic Parsimony

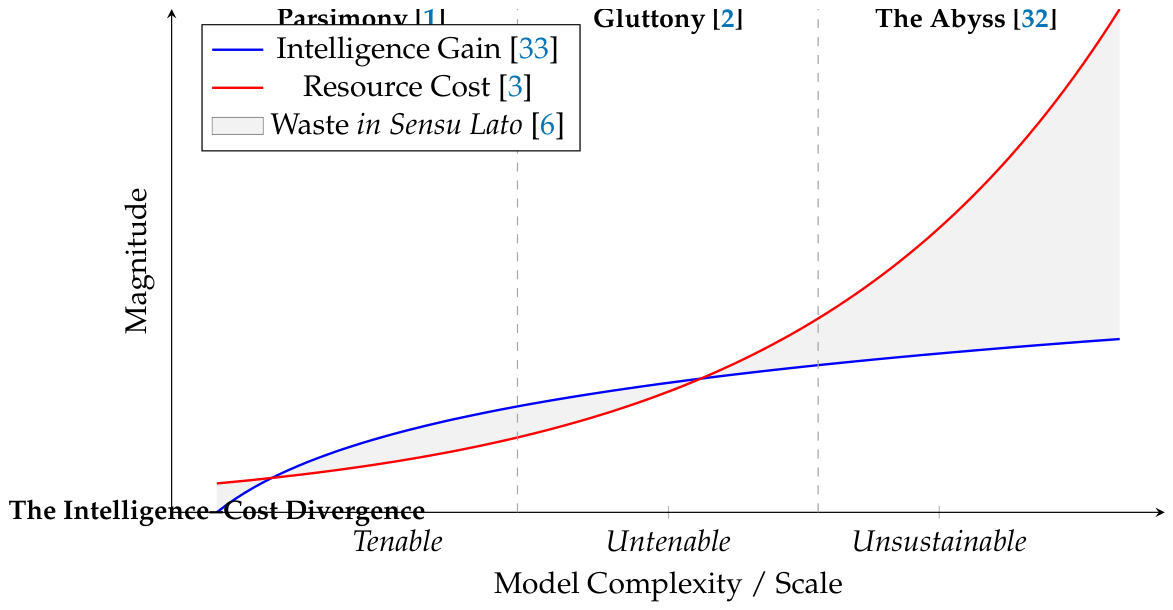

3. The Intelligence–Cost Divergence: Visualizing the Abyss

4. The Interplay: Regulatory Mandates and the Infrastructure Crisis

4.1. The Outcry of Data Centers: The New ‘Smokestacks’ of Intelligence

- Grid Instability and Energy Rates: Global data center electricity consumption rose to approximately 460 terawatt-hours in 2022, equivalent to the national consumption of France [13]. In parts of Northern Virginia and Ireland, data center demand now exceeds 20% of total domestic electricity capacity, driving residential energy rate increases [34].

- The Hydrological Footprint: Evaporative cooling for high-density GPU clusters has become an acute point of environmental contention. Large AI-focused data centers can consume up to 5 million gallons of water per day [35]. A 2025 study published in Nature Sustainability projected the annual water footprint of U.S. AI server deployment at 731 to 1,125 million cubic meters over the period 2024 to 2030 [5].
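To put the two headline figures above on a common footing, the following minimal sketch converts them into more familiar units. It assumes a 365-day year, US liquid gallons, and, for the water figure, a facility drawing the quoted upper bound every day of the year; the function names are illustrative and do not come from the cited sources.

```python
# Unit conversions for the figures quoted above.
# Inputs come from the text; the conversion constants are standard.
HOURS_PER_YEAR = 8760                 # assumption: non-leap year
LITERS_PER_US_GALLON = 3.785411784    # exact definition of the US liquid gallon
LITERS_PER_CUBIC_METER = 1000.0


def average_power_gw(annual_twh: float) -> float:
    """Average continuous power draw (GW) implied by an annual energy use (TWh)."""
    return annual_twh * 1000.0 / HOURS_PER_YEAR  # TWh -> GWh, then divide by hours


def gallons_per_day_to_m3_per_year(gallons_per_day: float, days: int = 365) -> float:
    """Annual water volume (cubic meters) for a constant daily draw in US gallons."""
    cubic_meters_per_day = gallons_per_day * LITERS_PER_US_GALLON / LITERS_PER_CUBIC_METER
    return cubic_meters_per_day * days


if __name__ == "__main__":
    # 460 TWh per year expressed as an average power draw
    print(f"{average_power_gw(460):.1f} GW average draw")
    # 5 million gallons per day, sustained for a full year
    volume = gallons_per_day_to_m3_per_year(5_000_000)
    print(f"{volume / 1e6:.2f} million cubic meters per year")
```

Under these assumptions, 460 TWh per year corresponds to an average draw of roughly 52.5 GW, and a single facility at the quoted upper bound would use about 6.9 million cubic meters of water per year.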

4.2. Adherence to 2026 International Standards

1. ISO/IEC 42001 [8] (Artificial Intelligence Management System): This standard has become the global benchmark for responsible AI governance. It mandates that organizations perform rigorous “System Impact Assessments” that include explicit environmental cost-benefit analyses before deployment.

2. The EU AI Act (2024/2026 Implementation) [9]: As of 2026, providers of General-Purpose AI (GPAI) models are legally required to provide technical documentation on the energy consumption of their models. High-impact models that exceed specific compute thresholds are classified under “Systemic Risk,” necessitating stringent efficiency audits [36].

3.

4.3. From Compliance to Synergy

5. The Synergy: Toward a Mutually Uplifting AI–Sustainability Framework

5.1. AI as the ‘Planetary Operating System’

- The Methane Sentinel: AI-coupled hyperspectral imaging is achieving unprecedented rates of global methane leak identification, allowing real-time mitigation [14].

- Optimizing the Circular Economy: Advanced AI-driven robotics are achieving high accuracy in waste sorting in sensu lato, transforming traditional landfills into “urban mines” for rare-earth minerals and high-value polymers [16].

- Precision Agriculture: Recent systematic reviews document AI-driven gains including 25% yield increases, 28% fertilizer savings, and 35% reductions in nitrogen runoff [37].

5.2. The Virtue of Algorithmic Parsimony

5.3. Safe and Sustainable: A Dual Mandate

1. AI Protects the Grid: Agentic AI systems can balance volatile renewable energy loads (solar and wind) across smart grids in real time, mitigating the very blackouts that unsustainable data centers once threatened [16].

2. AI Preserves Resources: From “Digital Twins” of entire watersheds to AI-optimized crop yields [37], we are replacing physical consumption with digital optimization.

6. The Alchemy of AI: Transmuting Leaden Brute Force into Golden Parsimony

7. The Symbiotic Policy Covenant: A Framework for Actionable Intervention

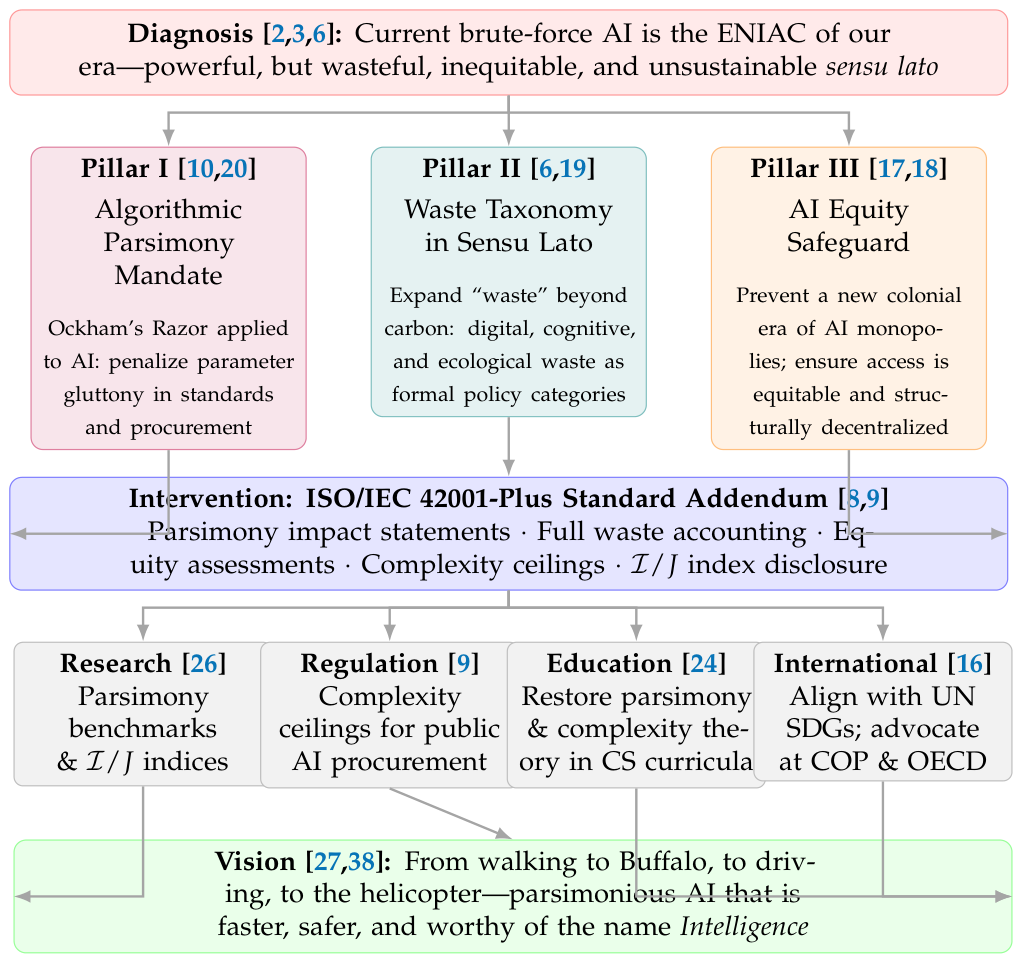

7.1. Preamble: From Diagnosis to Prescription

7.2. Pillar I: The Algorithmic Parsimony Mandate

1. Parsimony Impact Statements (PIS): Every organization seeking regulatory approval or public funding for an AI system above a defined compute threshold must file a documented justification of why the proposed parameter count is necessary for the stated objective, and of which lower-complexity alternatives were considered and rejected [9].

2. Complexity Ceilings in Public Procurement: Public sector agencies procuring AI solutions must impose complexity ceilings expressed in terms of parameter counts, FLOPs per inference, and energy-per-decision ratios [7]. This mirrors existing energy efficiency standards in procurement for physical goods.

3. An Intelligence-per-Joule Index: Funding bodies, journals, and standards organizations should adopt the Intelligence-per-Joule index as a normalized metric for evaluating AI systems [6]. Publication of this index alongside performance benchmarks should be mandatory for any system claiming to be “state-of-the-art.”
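As a rough illustration of how the complexity ceilings and an intelligence-per-joule style index might be applied in practice, the sketch below checks a system profile against three ceilings and computes a simple score-per-joule ratio. The formal definition of the index is not given in this text, so the ratio used here (benchmark score divided by energy per decision) is an assumption, as are all class names, field names, and numeric values.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SystemProfile:
    """Hypothetical description of an AI system offered for procurement."""
    name: str
    parameters: float            # parameter count
    flops_per_inference: float   # floating-point operations per inference
    joules_per_decision: float   # energy per decision, in joules
    benchmark_score: float       # score on a shared benchmark, higher is better


@dataclass(frozen=True)
class ComplexityCeiling:
    """Hypothetical procurement ceilings, one per quantity named in the text."""
    max_parameters: float
    max_flops_per_inference: float
    max_joules_per_decision: float


def ceiling_violations(profile: SystemProfile, ceiling: ComplexityCeiling) -> list[str]:
    """Return the names of the ceilings that the profile exceeds."""
    checks = [
        ("parameters", profile.parameters, ceiling.max_parameters),
        ("flops_per_inference", profile.flops_per_inference, ceiling.max_flops_per_inference),
        ("joules_per_decision", profile.joules_per_decision, ceiling.max_joules_per_decision),
    ]
    return [label for label, value, limit in checks if value > limit]


def score_per_joule(profile: SystemProfile) -> float:
    """Assumed stand-in for the index: benchmark score per joule per decision."""
    if profile.joules_per_decision <= 0:
        raise ValueError("joules_per_decision must be positive")
    return profile.benchmark_score / profile.joules_per_decision


if __name__ == "__main__":
    # Illustrative numbers only; they do not describe any real system.
    ceiling = ComplexityCeiling(1e10, 1e10, 1.0)
    candidates = [
        SystemProfile("large", 7e10, 1.4e11, 3.0, 0.86),
        SystemProfile("compact", 1e9, 2e9, 0.05, 0.80),
    ]
    for system in candidates:
        print(system.name, ceiling_violations(system, ceiling), score_per_joule(system))
```

A ratio of this kind rewards a compact system that gives up a little benchmark performance for a large energy saving, which is the trade-off the mandate is meant to make visible. Any real index would also need an agreed benchmark and an agreed energy measurement boundary.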

7.3. Pillar II: Waste Taxonomy in Sensu Lato as Policy Language

- Cognitive Waste should be addressed through national AI workforce strategies that ensure human intelligence is directed toward high-value, creative, and ethical AI development rather than toward the maintenance of bloated infrastructure [17].

7.4. Pillar III: The AI Equity Safeguard

1. Anti-Monopoly Provisions: International AI governance frameworks must include explicit provisions preventing any single entity or small consortium from controlling the critical infrastructure of foundation model training and inference [18].

2. Open Efficiency Standards: The most parsimonious and efficient AI architectures should be required, as a condition of any public subsidy or regulatory approval, to be published as open standards, ensuring that the benefits are available to the full global research community [17].

3. Distributed AI Infrastructure Investment: National and multilateral investment in AI infrastructure should be directed toward distributed, energy-efficient computing facilities rather than the consolidation of hyperscale data centers in a handful of jurisdictions [5].

7.5. The Intervention: A Proposed ISO/IEC 42001-Plus Addendum

1. Mandatory Parsimony Impact Statements for systems above defined compute thresholds [9].

2. Full Waste Accounting across all three categories of the in sensu lato taxonomy [19].

3. Equity Impact Assessments evaluating the distributional consequences of AI deployment [17].

4. Complexity Ceilings as conditions of certification [6].

5. Intelligence-per-Joule Index Disclosure as a mandatory element of system documentation [7].

7.6. The Four Action Levers

- Research [26,27]. The scientific community must develop the measurement infrastructure necessary for the Covenant: standardized parsimony benchmarks, validated indices, and open audit methodologies. This is a call to the mathematics and statistics communities to reassert their foundational role in AI [10,11].

- Regulation

- Education [24]. The curriculum of every computer science and AI program must restore the Principle of Parsimony and the rigors of computational complexity theory to their rightful centrality. The generation of engineers who will build the next era of AI must be equipped to recognize and resist the temptations of brute force.

- International Coordination [14,16]. The Covenant must be adopted as a contribution to the United Nations Sustainable Development Goals (SDGs), and its principles must be advocated at the Conference of the Parties (COP), at the OECD, and in the ITU. AI sustainability is planetary in scope; its governance must be correspondingly universal.

8. Conclusions: The Symbiotic Mandate

Author Contributions

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest

References

1. Rissanen, J. Modeling by shortest data description. Automatica 1978, 14, 465–471.

2. Strubell, E.; Ganesh, A.; McCallum, A. Energy and Policy Considerations for Deep Learning in NLP. In Proceedings of the 57th Annual Meeting of the Association for Computational Linguistics, 2019; pp. 3645–3650.

3. Patterson, D.; Gonzalez, J.; Le, Q.; Liang, C.; Munguia, L.M.; Rothchild, D.; So, D.R.; Texier, M.; Dean, J. Carbon emissions and large neural network training. arXiv 2021, arXiv:2104.10350.

4. Mytton, D. Data centre water consumption. npj Clean. Water 2021, 4, 11.

5. Xiao, T.; You, F. Environmental impact and net-zero pathways for sustainable artificial intelligence servers in the USA. Nature Sustainability 2025. Published 10 November 2025.

6. Schwartz, R.; Dodge, J.; Smith, N.A.; Etzioni, O. Green AI. Commun. ACM 2020, 63, 54–63.

7. Luccioni, A.S.; Viguier, S.; Ligozat, A.L. Estimating the carbon footprint of BLOOM, a 176B parameter language model. J. Mach. Learn. Res. 2023, 24, 253.

8. ISO/IEC. ISO/IEC 42001:2023, Artificial Intelligence Management System (AIMS); International Organization for Standardization / International Electrotechnical Commission. Technical report. 2023.

9. European Parliament and Council of the European Union. Regulation (EU) 2024/1689 of the European Parliament and of the Council laying down harmonised rules on artificial intelligence (Artificial Intelligence Act). Technical report. Official Journal of the European Union, 2024.

10. Rissanen, J. Stochastic Complexity in Statistical Inquiry; World Scientific: Singapore, 1989.

11. Fokoue, E. Model Selection for Optimal Prediction in Statistical Machine Learning. Not. Am. Math. Soc. 2020, 67, 155–165.

12. Bellman, R. Dynamic Programming; Princeton University Press: Princeton, NJ, 1957. (Introduces the “curse of dimensionality.”)

13. International Energy Agency. Energy and AI Report 2025, 2025.

14. Rolnick, D.; Donti, P.L.; Kaack, L.H.; Kochanski, K.; Lacoste, A.; Sankaran, K.; et al. Tackling climate change with machine learning. ACM Comput. Surv. 2022, 55, 1–96. Originally posted as arXiv:1906.05433.

15. van Wynsberghe, A. Sustainable AI: AI for sustainability and the sustainability of AI. AI Ethics 2021, 1, 213–218.

16. Kaack, L.H.; Donti, P.L.; Strubell, E.; Kamiya, G.; Creutzig, F.; Rolnick, D. Aligning artificial intelligence with climate change mitigation. Nat. Clim. Change 2022, 12, 518–527.

17. Bender, E.M.; Gebru, T.; McMillan-Major, A.; Shmitchell, S. On the Dangers of Stochastic Parrots: Can Language Models Be Too Big? In Proceedings of the 2021 ACM Conference on Fairness, Accountability, and Transparency (FAccT), 2021; pp. 610–623.

18. Crawford, K. Atlas of AI: Power, Politics, and the Planetary Costs of Artificial Intelligence; Yale University Press: New Haven, CT, 2021.

19. Ligozat, A.L.; Lefèvre, J.; Bugeau, A.; Combaz, J. Unraveling the hidden environmental impacts of AI solutions for environment life cycle assessment of AI solutions. Sustainability 2022, 14, 5172.

20. Donoho, D.L. Compressed sensing. IEEE Trans. Inf. Theory 2006, 52, 1289–1306.

21. International Telecommunication Union. ITU-T L.1801: Methodology for assessing the environmental impact of artificial intelligence; Technical report; ITU, 2026.

22. Haigh, T.; Priestley, M.; Rope, C. ENIAC in Action: Making and Remaking the Modern Computer; MIT Press: Cambridge, MA, 2016.

23. Riordan, M.; Hoddeson, L. Crystal Fire: The Invention of the Transistor and the Birth of the Information Age; W.W. Norton: New York, 1997.

24. Sipser, M. Introduction to the Theory of Computation, 3rd ed.; Cengage Learning: Stamford, CT, 2013.

25. Masanet, E.; Shehabi, A.; Lei, N.; Smith, S.; Koomey, J. Recalibrating global data center energy-use estimates. Science 2020, 367, 984–986.

26. Conference on Parsimony and Learning. CPAL 2026: Third Conference on Parsimony and Learning; ELLIS Institute Tübingen, in conjunction with Max Planck Institute for Intelligent Systems, 2026.

27. Wang, Y.; et al. Beyond scaleup: Knowledge-aware parsimony learning from deep networks. arXiv 2024, arXiv:2407.00478.

28. Brin, S.; Page, L. The anatomy of a large-scale hypertextual Web search engine. Comput. Netw. ISDN Syst. 1998, 30, 107–117.

29. Cover, T.M.; Thomas, J.A. Elements of Information Theory, 2nd ed.; Wiley-Interscience: Hoboken, NJ, 2006.

30. Akaike, H. A new look at the statistical model identification. IEEE Trans. Autom. Control 1974, 19, 716–723.

31. Schwarz, G. Estimating the dimension of a model. Ann. Stat. 1978, 6, 461–464.

32. de Vries-Gao, A. The carbon and water footprints of data centers and what this could mean for artificial intelligence. In ScienceDirect (Cell Press); 2025. Published December 2025.

33. Kaplan, J.; McCandlish, S.; Henighan, T.; Brown, T.B.; Chess, B.; Child, R.; Gray, S.; Radford, A.; Wu, J.; Amodei, D. Scaling laws for neural language models. arXiv 2020, arXiv:2001.08361.

34. Lincoln Institute of Land Policy. Data Drain: The Land and Water Impacts of the AI Boom. Land Lines Magazine, 2025.

35. Environmental and Energy Study Institute. Data Centers and Water Consumption. EESI Article, 2025.

36. Ebert, K.; Alder, N.; Herbrich, R.; Hacker, P. AI, climate, and regulation: From data centers to the AI act. arXiv 2024, arXiv:2410.06681.

37. Okechukwu Paul-Chima, U. Implementing artificial intelligence and machine learning algorithms for optimized crop management: a systematic review on data-driven approach to enhancing resource use and agricultural sustainability. Cogent Food Agric. 2025.

38. Dettmers, T.; Lewis, M.; Belkada, Y.; Zettlemoyer, L. LLM.int8(): 8-bit matrix multiplication for transformers at scale. Adv. Neural Inf. Process. Syst. 2022, 35.

39. Hardy, G.H. A Mathematician’s Apology; Cambridge University Press: Cambridge, 1940. (On the timelessness of mathematical ideas.)

40. Fisher, R.A. Statistical Methods for Research Workers; Oliver and Boyd: Edinburgh, 1925.

41. Thompson, N.; Ge, Y.; Ferguson, A. On the origin of algorithmic progress in AI. arXiv 2025, arXiv:2511.21622.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).