Submitted:

02 May 2026

Posted:

04 May 2026


Abstract

Keywords:

1. Introduction

1.1. Real-Time Monitoring of Tool Wear with Acoustic Emission Signals

1.2. Research Gap in the Existing Work

- Collecting multi-sensor data for traditional feature fusion is cumbersome and often impractical. SSWT, by contrast, yields high-resolution time-frequency characteristics from the AE signal and therefore reduces the complexity of data acquisition.

- Previous studies have often relied on a single feature or representation, whereas SSWT produces rich time-frequency maps that capture complex wear patterns.

- Very few studies jointly consider the temporal and spectral dimensions within a single signal; a Vision Transformer can learn global patterns in the SSWT maps automatically, bypassing the need for manual feature fusion.

- Instance-based lazy classifiers have not been applied to CNC drill bit wear classification, and Vision Transformers offer an end-to-end learning framework that outperforms such traditional classifiers.

- Deep learning methods typically require large volumes of training data; combining SSWT with a ViT mitigates this requirement through efficient representation learning, enabling effective learning even with moderately sized datasets.

- Most ML/DL methods are difficult to deploy in real-time industrial applications, whereas the SSWT + ViT framework provides computationally efficient and robust real-time tool wear classification.

1.3. Novelty in the Proposed Methodology

2.0. Literature Review

2.1. Acoustic Emission in Tool Wear Monitoring

2.2. Synchrosqueezed Wavelet Representation for Feature Extraction

2.3. Machine Learning and Tool Wear Classification

2.4. Vision Transformer Based Fault Diagnosis

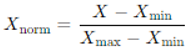

3.0. Methodology

3.1. Dataset Collection

3.2. Feature Extraction

3.3. AE Signal Pre-Processing

3.4. Feature Representation Using SSWT

3.5. Improvements over the Traditional WPD-Based Feature Extraction

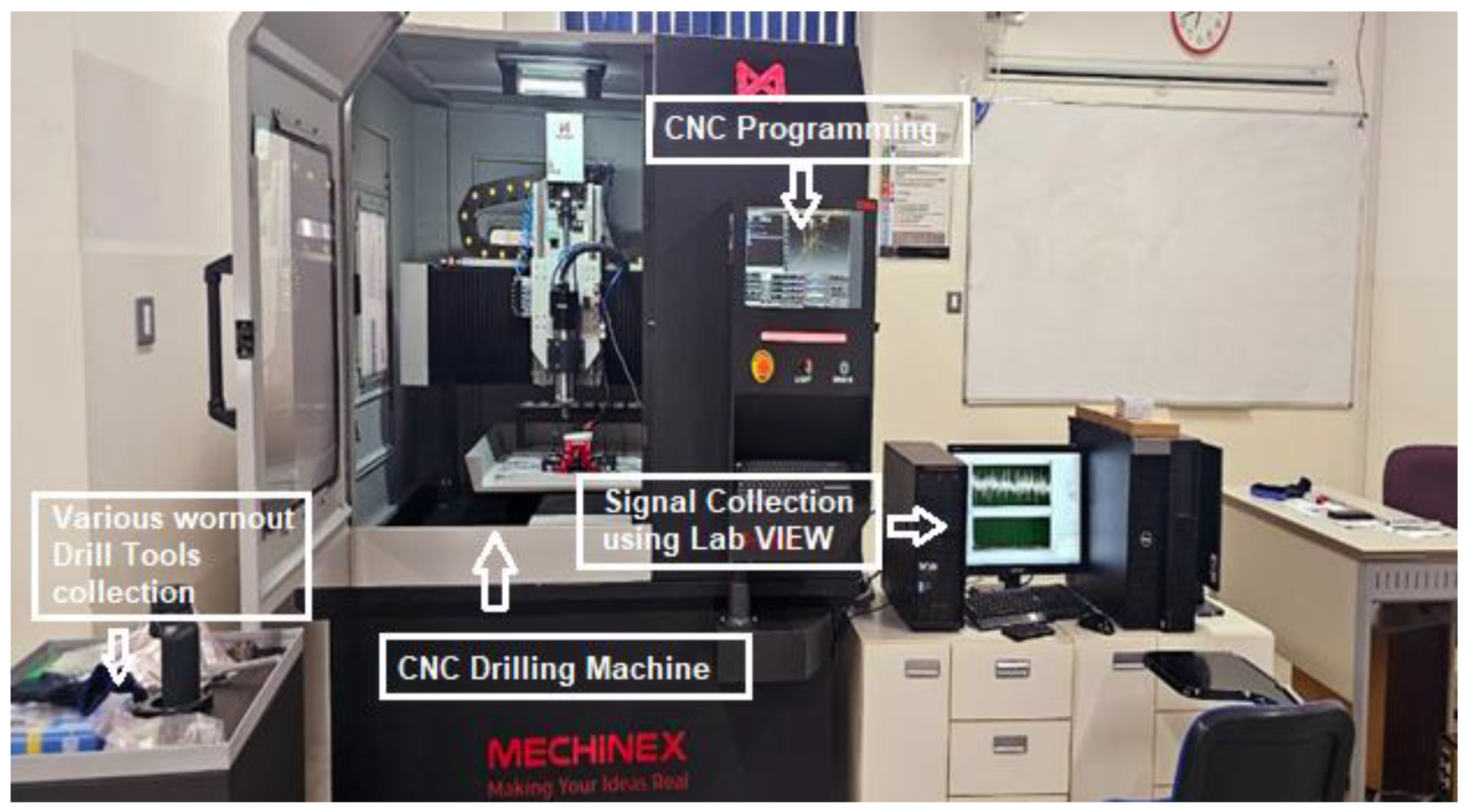

3.6. Normalisation of SSWT Maps for the Vision Transformer
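The exact normalisation used to turn an SSWT map into a ViT input is not specified here, so the following is only a minimal sketch under stated assumptions: dB log-compression of the magnitude, min-max scaling to [0, 1], nearest-neighbour resizing to 224 × 224 and replication into three channels (the input shape of common ImageNet-pretrained ViTs). The function name `normalise_sswt_map` and all of these choices are hypothetical.

```python
import numpy as np


def normalise_sswt_map(tf_map, out_size=224, eps=1e-12):
    """Turn a complex SSWT matrix (freq x time) into a (3, out_size, out_size) ViT input.

    Assumptions (illustrative only): dB log-compression, min-max scaling to
    [0, 1], nearest-neighbour resizing and channel replication.
    """
    # Log-compress the magnitude so weak high-frequency AE components stay visible.
    mag_db = 20.0 * np.log10(np.abs(tf_map) + eps)
    # Min-max scale to [0, 1]; a constant map becomes all zeros.
    lo, hi = mag_db.min(), mag_db.max()
    scaled = (mag_db - lo) / (hi - lo) if hi > lo else np.zeros_like(mag_db)
    # Nearest-neighbour resampling of both axes to out_size samples.
    rows = np.linspace(0, scaled.shape[0] - 1, out_size).round().astype(int)
    cols = np.linspace(0, scaled.shape[1] - 1, out_size).round().astype(int)
    resized = scaled[np.ix_(rows, cols)]
    # Replicate into three channels, as ImageNet-pretrained ViTs expect RGB input.
    return np.repeat(resized[np.newaxis], 3, axis=0).astype(np.float32)
```

Nearest-neighbour resizing keeps the sketch dependency-free; an interpolating resize (e.g. bilinear) would be a reasonable alternative.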

3.7. Classification Using Vision Transformer

3.8. Mathematical Formulation of SSWT and Vision Transformer

3.8.1. Acoustic Emission Signal Model

- $A(t)$: instantaneous amplitude (related to wear severity),

- $\varphi(t)$: instantaneous phase,

- $f(t) = \frac{1}{2\pi}\frac{\mathrm{d}\varphi(t)}{\mathrm{d}t}$: instantaneous frequency,

- $n(t)$: measurement noise.
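Assuming the usual AM-FM form $x(t) = A(t)\cos\varphi(t) + n(t)$ for the quantities listed above, a synthetic signal is handy for checking the later SSWT and ViT steps before using measured AE data. In this sketch the Gaussian burst envelope, the linear chirp, the 1 MHz sampling rate and the noise level are illustrative assumptions, not the experimental settings, and `synth_ae_signal` is a hypothetical helper.

```python
import numpy as np


def synth_ae_signal(fs=1_000_000, duration=0.002, f0=100e3, chirp_rate=2e7,
                    noise_std=0.05, seed=0):
    """Synthetic AM-FM burst x(t) = A(t) cos(phi(t)) + n(t) for testing a pipeline.

    All parameter values are illustrative assumptions, not measured AE data.
    Returns (t, x, f_inst) where f_inst(t) = phi'(t) / (2*pi).
    """
    t = np.arange(int(round(fs * duration))) / fs
    # A(t): Gaussian-shaped burst on a unit baseline (stand-in for wear-related amplitude).
    amp = 1.0 + 0.5 * np.exp(-(((t - duration / 2) / (duration / 8)) ** 2))
    # phi(t): linear chirp, so the instantaneous frequency rises linearly from f0.
    phase = 2 * np.pi * (f0 * t + 0.5 * chirp_rate * t ** 2)
    f_inst = f0 + chirp_rate * t
    # n(t): additive white Gaussian measurement noise.
    noise = noise_std * np.random.default_rng(seed).standard_normal(t.size)
    return t, amp * np.cos(phase) + noise, f_inst
```

With the defaults the instantaneous frequency sweeps from 100 kHz to about 140 kHz over 2 ms, which gives a known ridge against which a time-frequency map can be checked.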

3.8.2. Continuous Wavelet Transform (CWT)

- $a$: scale parameter,

- $b$: time shift,

- $\psi(\cdot)$: complex mother wavelet (the Morlet wavelet is commonly used),

- $\psi^{*}(\cdot)$: complex conjugate of $\psi$.
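Assuming the $a^{-1/2}$ normalisation of Daubechies et al. [10] and the standard Morlet wavelet, the transform described by these symbols can be written as:

$$
W_x(a,b) = \frac{1}{\sqrt{a}} \int_{-\infty}^{\infty} x(t)\,\psi^{*}\!\left(\frac{t-b}{a}\right)\mathrm{d}t, \qquad a > 0,
\qquad
\psi(t) = \pi^{-1/4}\, e^{\mathrm{i}\omega_0 t}\, e^{-t^{2}/2}.
$$

Under this convention the wavelet at scale $a$ oscillates at $\omega_0/a$ rad/s, i.e. it is centred near $f \approx \omega_0/(2\pi a)$ Hz (with $\omega_0 \approx 6$ a common choice), which is how scales are usually mapped to physical frequencies.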

3.8.3. Instantaneous Frequency Estimation

- micro-crack initiation,

- frictional rubbing,

- tool edge chipping.
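A common way to estimate the instantaneous frequency from the CWT coefficients, following the phase-derivative idea of Daubechies et al. [10] but expressed here in Hz rather than rad/s, is (notation assumed):

$$
\omega_x(a,b) = \operatorname{Re}\!\left[\frac{-\mathrm{i}}{2\pi\, W_x(a,b)}\,\frac{\partial W_x(a,b)}{\partial b}\right], \qquad |W_x(a,b)| > \gamma,
$$

where $\gamma$ discards coefficients too small for a reliable phase. As a worked check, for a pure tone $x(t) = A\cos(2\pi f_0 t)$ an (approximately) analytic wavelet such as the Morlet responds only to the positive-frequency component, so $W_x(a,b) = C(a)\,e^{\mathrm{i}2\pi f_0 b}$ and $\partial_b W_x = \mathrm{i}2\pi f_0\,W_x$; hence $\omega_x(a,b) = f_0$ at every scale with $|W_x| > \gamma$. Transient events such as those listed above would appear as local departures of $\omega_x(a,b)$ from such steady values.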

3.8.4. Synchrosqueezing Operation

- $\omega_x(a,b)$: true instantaneous frequency,

- $\Delta\omega$: frequency resolution threshold.

- This reassignment concentrates energy along the time-frequency ridges, making wear-related frequency components more separable.
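The following is a minimal, simplified sketch of synchrosqueezing, not the authors' implementation. It computes a Morlet CWT in the Fourier domain, estimates the instantaneous frequency from the phase derivative, and adds each coefficient to the frequency bin whose centre lies within half a bin of that estimate (the $\Delta\omega/2$ rule). Unlike Daubechies et al. [10], it uses an L1-normalised CWT and a plain sum instead of the $a_k^{-3/2}\Delta a_k$ weighting, so the squeezed map is not amplitude-calibrated. The function name `sswt`, the uniform frequency grid and the relative threshold are assumptions.

```python
import numpy as np


def sswt(x, fs, freqs, omega0=6.0, rel_threshold=1e-3):
    """Simplified synchrosqueezed Morlet CWT on a uniform frequency grid.

    Returns (W, T), both of shape (len(freqs), len(x)): W is the CWT (row k uses
    the scale whose Morlet centre frequency is freqs[k]) and T the squeezed map.
    """
    x = np.asarray(x, dtype=float)
    freqs = np.asarray(freqs, dtype=float)
    n, nf = x.size, freqs.size
    df = freqs[1] - freqs[0]                        # assumes uniform, ascending bins
    X = np.fft.fft(x)
    xi = 2 * np.pi * np.fft.fftfreq(n, d=1.0 / fs)  # angular frequency grid (rad/s)
    scales = omega0 / (2 * np.pi * freqs)           # Morlet: centre frequency = omega0 / (2*pi*a)

    W = np.empty((nf, n), dtype=complex)
    dW = np.empty((nf, n), dtype=complex)
    for k, a in enumerate(scales):
        # Analytic Morlet in the Fourier domain (L1-normalised, positive frequencies only).
        psi_hat = np.exp(-0.5 * (a * xi - omega0) ** 2) * (xi > 0)
        W[k] = np.fft.ifft(X * psi_hat)
        dW[k] = np.fft.ifft(X * psi_hat * 1j * xi)  # time derivative dW/db via i*xi

    # Instantaneous-frequency estimate in Hz, only where |W| is significant.
    mag = np.abs(W)
    mask = mag > rel_threshold * mag.max()
    inst_f = np.real(-1j * dW[mask] / (2 * np.pi * W[mask]))

    # Squeeze: add each coefficient to the bin whose centre is within df/2 of its IF.
    bins = np.round((inst_f - freqs[0]) / df).astype(int)
    keep = (bins >= 0) & (bins < nf)
    _, b_idx = np.nonzero(mask)                     # time index of each significant coefficient
    T = np.zeros((nf, n), dtype=complex)
    np.add.at(T, (bins[keep], b_idx[keep]), W[mask][keep])
    return W, T
```

For a 100 Hz tone sampled at 1 kHz, the CWT energy is smeared over many scales, while almost all of the squeezed energy lands in the 100 Hz row of `T`, which is the sharpening effect described above.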

3.8.5. Hilbert-Synchrosqueezed Time-Frequency Energy

- increased high-frequency energy,

- ridge broadening,

- energy migration to lower frequencies during severe wear.
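One hypothetical way to quantify the three trends listed above from a synchrosqueezed map $T$ is to work with the energy density $E = |T|^2$: the share of energy above a cutoff frequency tracks increased high-frequency energy, an energy-weighted spectral centroid tracks migration towards lower frequencies, and the energy-weighted instantaneous bandwidth tracks ridge broadening. These descriptors, the cutoff `f_cut` and the function name are illustrative assumptions rather than quantities defined in this work.

```python
import numpy as np


def tf_energy_descriptors(T, freqs, f_cut):
    """Hypothetical wear descriptors from the energy density E = |T|^2 (freq x time)."""
    E = np.abs(np.asarray(T)) ** 2
    freqs = np.asarray(freqs, dtype=float)
    # Increased high-frequency energy: share of total energy at or above f_cut.
    hf_ratio = E[freqs >= f_cut].sum() / E.sum()
    # Per-frame spectral distribution p(f | b), for frames that carry energy.
    frame_energy = E.sum(axis=0)
    active = frame_energy > 0
    p = E[:, active] / frame_energy[active]
    c_b = freqs @ p                                                  # per-frame centroid
    bw_b = np.sqrt(((freqs[:, None] - c_b) ** 2 * p).sum(axis=0))    # per-frame spread
    w = frame_energy[active] / frame_energy[active].sum()            # energy weight per frame
    # Energy migration: energy-weighted centroid (falls if energy moves to lower bins).
    # Ridge broadening: energy-weighted instantaneous bandwidth (grows as ridges widen).
    return {"hf_ratio": hf_ratio,
            "centroid": float(np.sum(w * c_b)),
            "bandwidth": float(np.sum(w * bw_b))}
```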

3.8.6. Patch Embedding

- $\mathbf{E}$: trainable embedding matrix,

- $\mathbf{E}_{\mathrm{pos}}$: positional embedding.
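Assuming the standard ViT patch embedding $\mathbf{z}_0 = [\mathbf{x}_{\mathrm{class}};\ \mathbf{x}_p^{1}\mathbf{E};\ \dots;\ \mathbf{x}_p^{N}\mathbf{E}] + \mathbf{E}_{\mathrm{pos}}$, the sketch below cuts a $(C, H, W)$ SSWT image into non-overlapping $P \times P$ patches, flattens each one, projects it with $\mathbf{E}$, prepends a class token and adds the positional embedding. The parameter names (`W_E` for $\mathbf{E}$, `cls_token`, `pos_embed` for $\mathbf{E}_{\mathrm{pos}}$) are hypothetical.

```python
import numpy as np


def patch_embed(img, patch, W_E, cls_token, pos_embed):
    """ViT patch embedding: z_0 = [x_cls; x_p^1 E; ...; x_p^N E] + E_pos.

    img: (C, H, W) normalised SSWT image; W_E: (patch*patch*C, D) plays the
    role of E; cls_token: (D,); pos_embed: (N + 1, D) plays the role of E_pos.
    """
    C, H, W = img.shape
    assert H % patch == 0 and W % patch == 0, "image must tile exactly into patches"
    # Split into non-overlapping patch x patch tiles (row-major), each flattened to P*P*C values.
    x = img.reshape(C, H // patch, patch, W // patch, patch)
    x = x.transpose(1, 3, 2, 4, 0).reshape(-1, patch * patch * C)   # (N, P*P*C)
    tokens = x @ W_E                                                 # linear projection, (N, D)
    tokens = np.vstack([cls_token[np.newaxis, :], tokens])           # prepend class token
    return tokens + pos_embed                                        # add positional embedding
```

For a 224 × 224 input with $P = 16$, this gives $N = 196$ patch tokens plus one class token.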

3.8.7. Multi-Head Self-Attention (MHSA)
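As a sketch of the standard scaled dot-product formulation used in ViT, $\mathrm{MHSA}(\mathbf{Z}) = \mathrm{Concat}(\mathrm{head}_1, \dots, \mathrm{head}_h)\,\mathbf{W}^{O}$ with $\mathrm{head}_i = \mathrm{softmax}\big(\mathbf{Q}_i\mathbf{K}_i^{\top}/\sqrt{d_k}\big)\mathbf{V}_i$, the NumPy code below projects the tokens, splits the model width into $h$ heads, and lets every patch attend to every other patch. The weight names are hypothetical and biases are omitted for brevity.

```python
import numpy as np


def softmax(x, axis=-1):
    """Numerically stable softmax along `axis`."""
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)


def multi_head_self_attention(z, W_q, W_k, W_v, W_o, num_heads):
    """Multi-head self-attention over a token sequence z of shape (n, d).

    Returns the (n, d) output and the (num_heads, n, n) attention weights.
    """
    n, d = z.shape
    assert d % num_heads == 0, "model width must divide evenly into heads"
    d_k = d // num_heads

    def split_heads(m):
        # (n, d) -> (num_heads, n, d_k)
        return m.reshape(n, num_heads, d_k).transpose(1, 0, 2)

    Q, K, V = split_heads(z @ W_q), split_heads(z @ W_k), split_heads(z @ W_v)
    scores = Q @ K.transpose(0, 2, 1) / np.sqrt(d_k)   # scaled dot products, (h, n, n)
    attn = softmax(scores, axis=-1)                     # each row sums to 1
    heads = attn @ V                                    # (h, n, d_k)
    concat = heads.transpose(1, 0, 2).reshape(n, d)     # concatenate heads -> (n, d)
    return concat @ W_o, attn
```

Because every token attends to all others, a patch covering a high-frequency burst can draw directly on patches elsewhere in time or frequency, which is the global-context property invoked in Section 1.2.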

3.8.8. Feed-Forward Network (FFN)
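Assuming the standard ViT feed-forward block, each token is processed independently by a two-layer MLP with a GELU non-linearity:

$$
\mathrm{FFN}(\mathbf{z}) = \mathrm{GELU}(\mathbf{z}\mathbf{W}_1 + \mathbf{b}_1)\,\mathbf{W}_2 + \mathbf{b}_2,
\qquad \mathbf{W}_1 \in \mathbb{R}^{D \times D_{\mathrm{mlp}}},\quad \mathbf{W}_2 \in \mathbb{R}^{D_{\mathrm{mlp}} \times D},
\qquad \mathrm{GELU}(u) = u\,\Phi(u),
$$

where $\Phi$ is the standard normal cumulative distribution function. In ViT-Base, for example, $D = 768$ and $D_{\mathrm{mlp}} = 3072$.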

3.8.9. Transformer Encoder Layer
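Assuming the pre-norm encoder of the original ViT, the two sub-blocks are combined with layer normalisation (LN) and residual connections, and the class token of the last layer feeds a four-way classifier over the HT, LW, MW and SW wear classes:

$$
\begin{aligned}
\mathbf{z}'_{\ell} &= \mathrm{MHSA}\big(\mathrm{LN}(\mathbf{z}_{\ell-1})\big) + \mathbf{z}_{\ell-1}, && \ell = 1, \dots, L,\\
\mathbf{z}_{\ell} &= \mathrm{FFN}\big(\mathrm{LN}(\mathbf{z}'_{\ell})\big) + \mathbf{z}'_{\ell}, && \ell = 1, \dots, L,\\
\hat{\mathbf{y}} &= \mathrm{softmax}\big(\mathbf{W}_{\mathrm{head}}\,\mathrm{LN}(\mathbf{z}_{L}^{0})\big) \in \mathbb{R}^{4},
\end{aligned}
$$

where $\mathbf{z}_0$ is the patch embedding, $\mathbf{z}_{L}^{0}$ is the class-token row after the last of the $L$ layers, and $\mathbf{W}_{\mathrm{head}}$ is a hypothetical name for the classification-head weights.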


3.9. Advantages over Lazy Classifiers

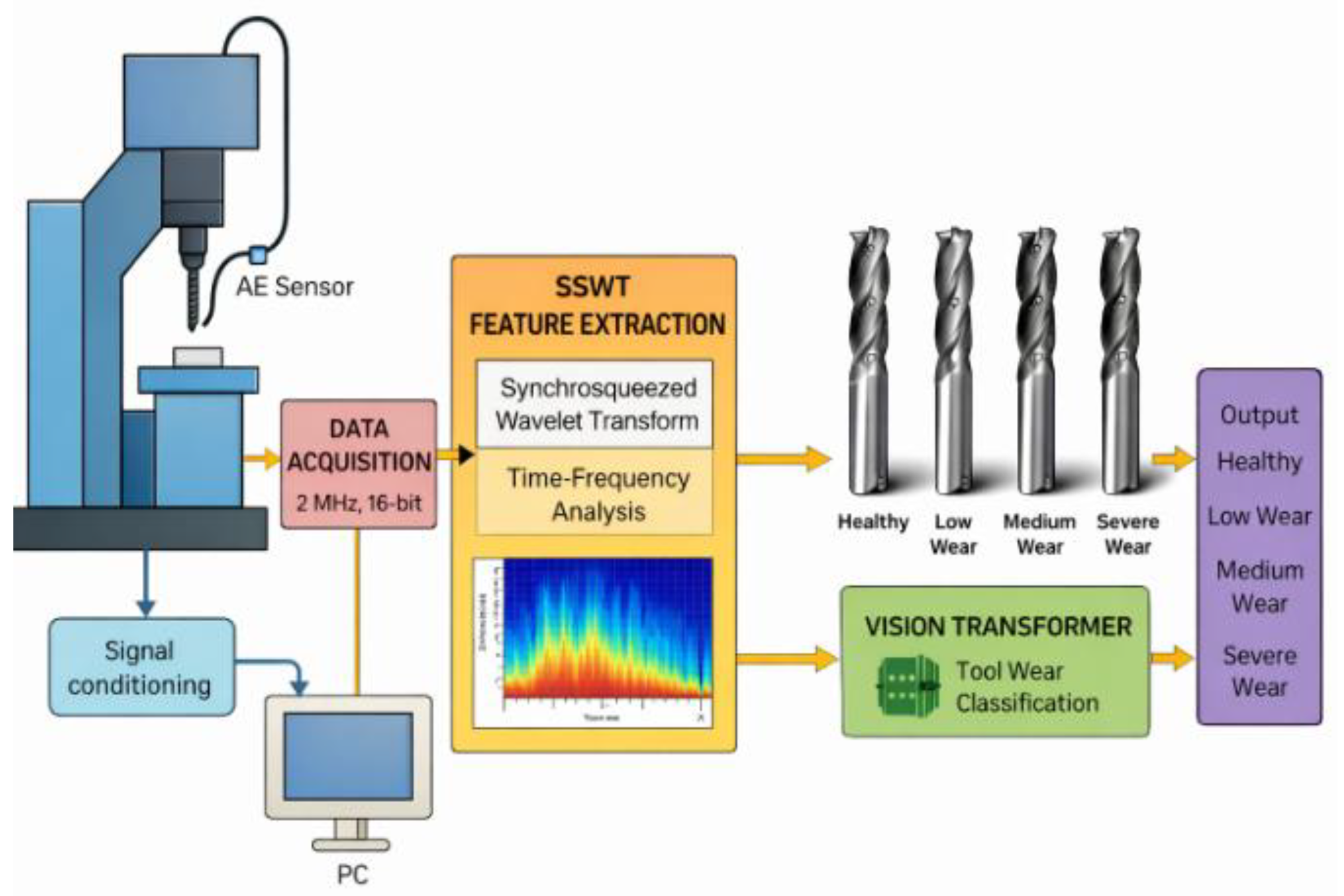

4.0. Experimental Setup

4.1. CNC Drilling Experiments and Collection of AE Data

4.2. Data Labelling and Segmentation

4.3. Feature Extraction and Fusion

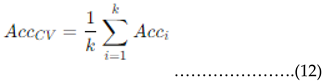

4.4. Classification and Validation

4.5. Performance Metrics
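The metrics reported for the classifier (accuracy, precision, recall, F1-score and Cohen's kappa) can all be derived from a confusion matrix of raw counts. The sketch below assumes macro averaging over the four classes, which is a common choice but is not stated in the text; the function name is hypothetical. Row-normalised percentages, such as those in the confusion matrix, would first need to be converted back to counts using the per-class sample sizes.

```python
import numpy as np


def metrics_from_confusion(cm):
    """Accuracy, macro-averaged precision/recall/F1 and Cohen's kappa.

    cm[i, j] is the count of samples of actual class i predicted as class j.
    Assumes every class is both present and predicted (no zero row/column sums).
    """
    cm = np.asarray(cm, dtype=float)
    n = cm.sum()
    tp = np.diag(cm)
    predicted = cm.sum(axis=0)                 # column sums
    actual = cm.sum(axis=1)                    # row sums
    precision = tp / predicted
    recall = tp / actual
    f1 = 2 * precision * recall / (precision + recall)
    p_o = tp.sum() / n                         # observed agreement = accuracy
    p_e = np.sum(predicted * actual) / n ** 2  # agreement expected by chance
    kappa = (p_o - p_e) / (1 - p_e)
    return {"accuracy": p_o, "precision": precision.mean(),
            "recall": recall.mean(), "f1": f1.mean(), "kappa": kappa}
```

For the two-class counts `[[45, 5], [10, 40]]`, the observed agreement is 0.85 and the chance agreement is 0.5, so kappa is (0.85 − 0.5)/(1 − 0.5) = 0.7.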

5.0. Results and Discussion

5.1. Classification Metrics and Accuracy

5.2. Confusion Matrix Analysis

5.3. Feature Representation and Analysis

5.4. Analysis of Confusion Matrices

| Actual/Predicted | HT | LW | MW | SW |
|---|---|---|---|---|
| HT | 99.4% | 0.4% | 0.1% | 0.1% |
| LW | 0.3% | 99.0% | 0.6% | 0.1% |
| MW | 0.1% | 0.5% | 98.8% | 0.6% |
| SW | 0.1% | 0.1% | 0.5% | 98.7% |

5.5. Cross-Validation of the Proposed Methodology
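The dataset contains 1000 samples with 250 per wear class, so a stratified split keeps every fold balanced. The following is a minimal sketch of stratified k-fold index generation; the number of folds (k = 5) and the function name are assumptions, since the fold count is not given here.

```python
import numpy as np


def stratified_kfold_indices(labels, k=5, seed=0):
    """Yield (train_idx, test_idx) pairs; each class is dealt evenly across k folds."""
    labels = np.asarray(labels)
    rng = np.random.default_rng(seed)
    fold_of = np.empty(labels.size, dtype=int)
    for cls in np.unique(labels):
        idx = np.flatnonzero(labels == cls)
        rng.shuffle(idx)                          # random order within the class
        fold_of[idx] = np.arange(idx.size) % k    # round-robin assignment to folds
    for f in range(k):
        yield np.flatnonzero(fold_of != f), np.flatnonzero(fold_of == f)
```

With 250 samples per class and k = 5, every test fold holds exactly 50 samples of each class (200 in total), and every sample is tested exactly once.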

5.6. Comparison with Existing Methods

5.7. Limitations and Future Considerations

| Reference | Methodology used/Core application | Obtained accuracy |
|---|---|---|
| Truong et al. [24] | BRANN (Bayesian-regularised ANN) | 73.33% |
| Gougam et al. [25] | Hybrid CNN–ResNet–BiLSTM | 98% |
| Kumar et al. [26] | Encoder–Decoder LSTM | 94.20% |
| Kumar et al. [26] | Hybrid LSTM | 97.85% |
| Bilgili et al. [27] | LSTM (with industrial edge data) | 98% |
| Zhang et al. [28] | ResNet | 97.7% |
| Hoang et al. [29] | Gaussian Process Regression + ANFIS | 97.57% |
| Proposed methodology | Synchrosqueezed Wavelet Representation and Vision Transformer | 99.3% |

5.8. Practical Application of the Proposed Methodology

6.0. Conclusion

6.1. Key Conclusions

Consent for publication

Competing interests

References

- Xiqing, M.; Chuangwen, X. Tool Wear Monitoring of Acoustic Emission Signals from Milling Processes; 2009 First International Workshop on Education Technology and Computer Science: Wuhan, China, 2009; pp. 431–435. [Google Scholar] [CrossRef]

- Vijayalakshmi, K. Reliability improvement in component-based software development environment. Int. J. Inf. Syst. Change Manag. 2011, 5(2), 99–123. [Google Scholar] [CrossRef]

- Kamarudeen, M.; Vijayalakshmi, K. A machine learning-based financial management mobile application to enhance college students’ financial literacy. Proc. Int. Conf. Res. Educ. Sci. 2023, 9(1), 1237–1253. [Google Scholar]

- Davy, R.; Ajitha Priyadarsini, S.; Jeen Robert, R. B.; Kennedy, S. M. Cutting-edge tool wear monitoring in AISI4140 steel hard turning using least-square support vector machine. J. Chin. Inst. Eng. 2024, 47(3), 1–16. [Google Scholar] [CrossRef]

- Siddique, M.F.; Umar, M.; Ahmad, W.; et al. Advanced fault diagnosis in milling cutting tools using vision transformers with semi-supervised learning and uncertainty quantification. Sci. Rep. 2025, 15, 42460. [Google Scholar] [CrossRef]

- Twardowski, P.; Tabaszewski, M.; Wiciak-Pikuła, M.; Felusiak-Czyryca, A. Identification of tool wear using acoustic emission signal and machine learning methods. Precis. Eng. 2021, 72, 738–744. [Google Scholar] [CrossRef]

- Nagaraj, S.; Diaz-Elsayed, N. Tool Condition Monitoring in the Milling of Low- to High-Yield-Strength Materials. Machines 2025, 13, 276. [Google Scholar] [CrossRef]

- Maia, L. H. A.; Abrão, A. M.; Vasconcelos, W. L.; Júnior, J. L.; Fernandes, G. H. N.; Machado, Á. R. Enhancing Machining Efficiency: Real-Time Monitoring of Tool Wear with Acoustic Emission and STFT Techniques. Lubricants 2024, 12(11), 380. [Google Scholar] [CrossRef]

- Liu, J.; Wang, Z. Hybrid fusion of acoustic emission and vibration features for tool wear diagnosis. Mech. Syst. Signal Process. 2018, 104, 468–482. [Google Scholar]

- Daubechies, I.; Lu, J.; Wu, H.-T. Synchrosqueezed wavelet transforms: An empirical mode decomposition-like tool. Appl. Comput. Harmon. Anal. 2011, 30(2), 243–261. [Google Scholar] [CrossRef]

- Guo, M.; Tu, X.; Abbas, S.; Zhuo, S.; Li, X. Time-frequency analysis-based impulse feature extraction method for quantitative evaluation of milling tool wear. Struct. Health Monit. 2023, 23(3), 1766–1778. [Google Scholar] [CrossRef]

- Peng, C.; Zheng, J.; Chen, T.; Jing, Z.; Wang, Z.; Su, Y.; Shi, Y. Tool wear feature extraction in BTA deep hole drilling process based on maximum probability multi-synchrosqueezing transform of spindle current signal. Measurement 2025, 241, 115780. [Google Scholar] [CrossRef]

- Shi, J.; Chen, G.; Zhao, Y.; Tao, R. Synchrosqueezed Fractional Wavelet Transform: A New High-Resolution Time-Frequency Representation. IEEE Trans. Signal Process. 2023, 71, 264–278. [Google Scholar] [CrossRef]

- Abdeltawab, A.; Xi, Z.; Longjia, Z.; Galal, A. M. Wavelet-based hybrid CNN-BiLSTM approach in tool wear monitoring. Digit. Signal Process. 2026, 168(Part B), 105529. [Google Scholar] [CrossRef]

- Sun, T.; Li, Q.; Chen, Y. Random forests and gradient boosting machines in tool wear prediction. Expert Syst. Appl. 2019, 116, 170–181. [Google Scholar]

- Aha, D. W.; Kibler, D.; Albert, M. K. Instance-based learning algorithms. Mach. Learn. 1991, 6(1), 37–66. [Google Scholar] [CrossRef]

- Altman, N. S. An introduction to kernel and nearest-neighbor nonparametric regression. Am. Stat. 1992, 46(3), 175–185. [Google Scholar] [CrossRef]

- Dong, S.; Meng, Y.; Yin, S.; Liu, X. Tool wear state recognition study based on an MTF and a vision transformer with a Kolmogorov–Arnold network. Mech. Syst. Signal Process. 2025, 228, 112473. [Google Scholar] [CrossRef]

- Qiu, J.; Liu, J.; Chu, Z.; Gao, Z.; Wu, X. Tool wear prediction based on LSTM-Transformer model. In Association for Computing Machinery; 2025. [Google Scholar] [CrossRef]

- Si, S.; Mu, D.; Si, Z. Intelligent tool wear prediction based on deep learning PSD-CVT model. Sci. Rep. 2024, 14, 20754. [Google Scholar] [CrossRef]

- Li, S.; Li, M.; Gao, Y. Deep Learning Tool Wear State Identification Method Based on Cutting Force Signal. Sensors 2025, 25(3), 662. [Google Scholar] [CrossRef]

- Han, N.; Pei, Y.; Song, Z. Signal Separation Operator Based on Wavelet Transform for Non-Stationary Signal Decomposition. Sensors 2024, 24, 6026. [Google Scholar] [CrossRef]

- Vijayalakshmi, K.; Sitharselvam, P. M.; Thamarai, I.; Ashok, J.; Sathish, G.; Mayakannan, S. Secure and private federated learning through encrypted parameter aggregation. In Handbook on federated learning: Advances, applications and opportunities; CRC Press, 2024; pp. 80–105. [Google Scholar] [CrossRef]

- Truong, T.T.; Airao, J.; Karras, P.; Hojati, F.; Azarhoushang, B.; Aghababaei, R. Data-driven prediction of tool wear using Bayesian-regularised artificial neural networks. arXiv. 2023. Available online: https://arxiv.org/abs/2311.18620.

- Gougam, F.; Afia, A.; Aitchikh, M.A.; Touzout, W.; Rahmoune, C.; Benazzouz, D. Computer numerical control machine tool wear monitoring through a data-driven approach. J. Intell. Manuf. 2024, 35(5), 1471–1485. [Google Scholar] [CrossRef]

- Kumar, S.; Kolekar, T.; Kotecha, K.; Patil, S.; Bongale, A. Performance evaluation for tool wear prediction based on Bi-directional, Encoder-Decoder, and Hybrid Long Short-Term Memory models. Int. J. Qual. Reliab. Manag. 2022, 39(7), 1551–1576. [Google Scholar] [CrossRef]

- Bilgili, D.; Kecibas, G.; Besirova, C.; Chehrehzad, M.R.; Burun, G.; Pehlivan, T.; Uresin, U.; Emekli, E.; Lazoglu, I. Tool flank wear prediction using high-frequency machine data from an industrial edge device. arXiv 2022, arXiv:2212.13905. [Google Scholar] [CrossRef]

- Zhang, S.; Yang, Y.; Xie, Y.; Tang, H.; Li, H.; Yao, L.; Yang, Y. GNSS Signal Extraction Using CEEMDAN–WPD for Deformation Monitoring of Ropeway Pillars. Remote Sens. 2025, 17(2), 224. [Google Scholar] [CrossRef]

- Hoang, L.V.; Tran, V.D.; Nguyen, Q.T. Adaptive neuro-fuzzy inference system and Gaussian regression model for tool wear prediction in milling with AE signal features. Eng. Technol. Q. J. 2023, 6(4), 55–65. Available online: https://www.journal.eu-jr.eu/engineering/article/view/2509 (accessed on 7 June 2025).

- Umar, M.; Siddique, M.F.; Ullah, N.; Kim, J.-M. Milling Machine Fault Diagnosis Using Acoustic Emission and Hybrid Deep Learning with Feature Optimization. Appl. Sci. 2024, 14, 10404. [Google Scholar] [CrossRef]

- Poolakkachalil, T. K.; Chandran, S.; Muralidharan, R.; Vijayalakshmi, K. Comparative analysis of lossless compression techniques in efficient DCT-based image compression system based on Laplacian transparent composite model and an innovative lossless compression method for discrete-color images. In Proceedings of the 3rd MEC International Conference on Big Data and Smart City (ICBDSC 2016); IEEE, 2016; pp. 155–160. [Google Scholar] [CrossRef]

- Piankitrungreang, P.; Chaiprabha, K.; Chungsangsatiporn, W.; Ratanasumawong, C.; Chancharoen, P.; Chancharoen, R. Acoustic-Based Machine Main State Monitoring for High-Speed CNC Drilling. Machines 2025, 13, 372. [Google Scholar] [CrossRef]

- Jayachandran, J.; Sivakumar, V.; K, V.; et al. Machine Learning-Enhanced MXene–Copper–Graphene THz Sensor for Accurate Salinity Sensing in Environmental Applications. Plasmonics 2025, 20, 11349–11359. [Google Scholar] [CrossRef]

- Wu, Z.; Huang, N. E. Ensemble empirical mode decomposition: A noise-assisted data analysis method. Adv. Adapt. Data Anal. 2009, 1(1), 1–41. [Google Scholar] [CrossRef]

- Orhan, A.; Yordanov, N.; Ertarğın, M.; Zhilevski, M.; Mikhov, M. A Comparative Study of Time–Frequency Representations for Bearing and Rotating Fault Diagnosis Using Vision Transformer. Machines 2025, 13, 737. [Google Scholar] [CrossRef]

- Suresh Babu, V.; Amuthakkannan, R.; Sriram Kumar, S.; Muruganandam, A. Optimal cutting parameters estimation to improve surface finish in turning operation in AISI 1045 using Taguchi’s robust design. Int. J. Ind. Syst. Eng. 2013, 15(1), 19–36. [Google Scholar] [CrossRef]

- Amuthakkannan, R. Effective software assembly for real-time systems using multi-level genetic algorithm. Int. J. Eng. Sci. Technol. (IJEST) 2011, 3(8), 6187–6201. [Google Scholar]

- Karuppasamy, P.; Wekalao, J.; Rajakannu, A. Graphene Terahertz Metasurface Sensor Enabled by AI for Rapid, High-Precision Sperm Detection in Fertility Assessment. Plasmonics 2026, 21, 619–641. [Google Scholar] [CrossRef]

- R, V.; Thangavel, G.; Wekalao, J.; et al. Ultra-High Sensitivity Terahertz Detection Using a 2D-Material-Based Metasurface: Design, Tuning, and Machine Learning Validation. Plasmonics 2025, 20, 6139–6150. [Google Scholar] [CrossRef]

- Muheki, J.; Elsayed, H.A.; Alfassam, H.E.; et al. Design and Optimization of a Hybrid Graphene–Gold–Silver Terahertz Metasurface Biosensor for High-Sensitivity Sperm Detection with Machine Learning for Behavior Prediction. J. Electron. Mater. 2026, 55, 2348–2371. [Google Scholar] [CrossRef]

- Wekalao, J.; Elsayed, H. A.; Bin-Jumah, M.; Alqhtani, H. A.; Abukhadra, M. R.; Bellucci, S.; Rajakannu, A.; Mehaney, A. Advanced terahertz-range dopamine detection using a 2D material-based metasurface biosensor. Appl. Opt. 2025, 64, 4625–4638. [Google Scholar] [CrossRef]

- Al Saadi, A. G. K.; Amuthakkannan, R. An impact of lean supply chain practices in oil and gas sector in Sultanate of Oman: A case study. J. Propuls. Technol. 2024, 45(1), 4224. [Google Scholar]

- Krishnasamy, O.; Thirugapillai, P.; Rajakannu, A.; Selvaraju, M. Optimization and multi-objective analysis of tensile, flexural and impact strength in nano-hybrid bio-composites reinforced with Helicteres isora and Holoptelea integrifolia fibers, and nanographene. Matéria (Rio de Janeiro) 2025, 30. [Google Scholar] [CrossRef]

- Elsayed, H. A.; Wekalao, J.; Alqhtani, H. A.; Bin-Jumah, M.; Abukhadra, M. R.; Bellucci, S.; Rajakannu, A.; Mehaney, A. Machine learning-enhanced terahertz plasmonic biosensor based on MXene-gold nanostructures for tuberculosis detection. Sens. Bio-Sens. Res. 2025, 49, 100852. [Google Scholar] [CrossRef]

- Aggarwal, K.; Wekalao, J.; Rajakannu, A. A Trimodal 2D Metasurface Biosensor with Bayesian Regression for Ultra-Sensitive Cancer Biomarker Detection. Plasmonics 2025, 20, 5977–5990. [Google Scholar] [CrossRef]

- Elsayed, H. A.; Wekalao, J.; Mehaney, A.; Alarifi, N. S.; Abukhadra, M. R.; Bellucci, S.; Hajjiah, A.; Rajakannu, A. Design and performance prediction of a multilayer metamaterial absorber for broadband solar-thermal energy conversion using random forest regression. Case Stud. Therm. Eng. 2025, 74, 106615. [Google Scholar] [CrossRef]

- Wekalao, J.; Mehaney, A.; Alarifi, N. S.; Abukhadra, M. R.; Elsayed, H. A.; Rajakannu, A. Advanced THz metasurface biosensor for label-free amino acid detection optimized with stacking ensemble algorithm. Phys. E Low.-Dimens. Syst. Nanostructures 2025, 172, 116287. [Google Scholar] [CrossRef]

- Anbazhagan, S.; U, A.K.; Rajakannu, A.; et al. AI-Augmented Terahertz Biosensor with MXene–Graphene Architecture for Sensitive Sperm Concentration Detection. Plasmonics 2025, 20, 10573–10587. [Google Scholar] [CrossRef]

- Jim Jose; Amuthakkannan, R. Design, development and analysis of FDM based portable rapid prototyping machine. Int. J. Latest Trends Eng. Technol. (IJLTET) 2014, 4(4), 324–232. [Google Scholar]

- Vijayalakshmi, K.; Ramaraj, N.; Amuthakkannan, R.; Kannan, S. M. A new algorithm in assembly for component-based software using dependency chart. Int. J. Inf. Syst. Change Manag. 2007, 2(3), 261–278. [Google Scholar] [CrossRef]

| S.No | Description | Dimensions/Details |
|---|---|---|
| 1. | Work area | 500 × 500 × 150 mm (X, Y, Z) |
| 2. | Outer size | 6.4 × 6.2 × 6.5 ft (X, Y, Z) |
| 3. | Spindle speed, power, and cooling | 24,000 rpm, 2.2 kW, ATC, water-cooled spindle |
| 4. | Weight on the table | 20 kg |
| 5. | Linear rail | 20 mm |
| 6. | Motor | Hybrid servo motors |
| 7. | Collet size | ER20 |
| 8. | Drilling rate | 80 hits/min |
| 9. | Resolution and accuracy | Resolution: 50 µm; accuracy: 50 µm |
| 10. | Rapid traverse | 7000 mm/min |
| 11. | Machine weight | 600 kg (excluding accessories) |
| 12. | Software | Millsoft V1.12 |
| 13. | Power supply | 220 V, 50 Hz, 20 A, single-phase |

| Drill diameter / Drill bit condition | Healthy | Low | Medium | Severe | Total |
|---|---|---|---|---|---|
| 3.0 mm | 50 | 50 | 50 | 50 | 200 |
| 3.2 mm | 50 | 50 | 50 | 50 | 200 |
| 3.4 mm | 50 | 50 | 50 | 50 | 200 |
| 3.6 mm | 50 | 50 | 50 | 50 | 200 |
| 3.8 mm | 50 | 50 | 50 | 50 | 200 |
| Total (overall dataset) | 250 | 250 | 250 | 250 | 1000 |

| Metric | Value |
|---|---|
| Accuracy (%) | 99.3 |
| Precision | 0.99 |
| Recall | 0.99 |
| F1-Score | 0.99 |
| Cohen’s Kappa | 0.99 |


Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).