Submitted: 02 May 2026

Posted:

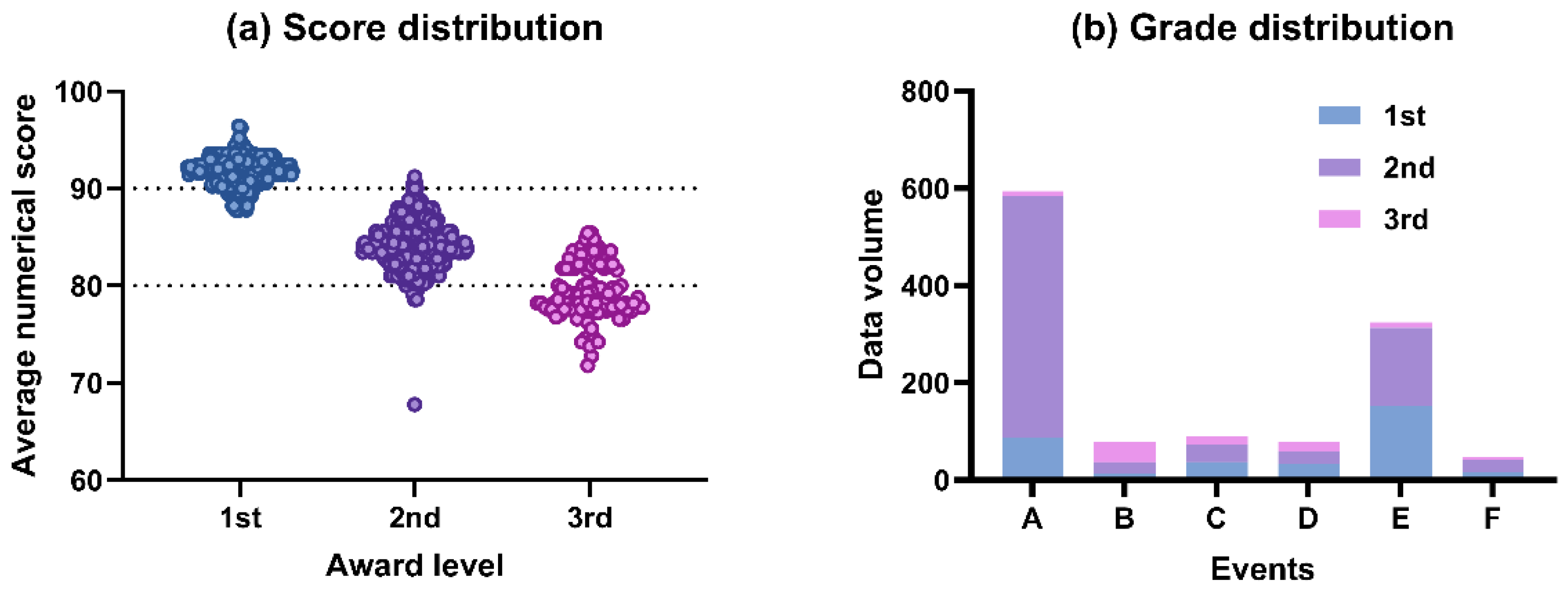

04 May 2026


Abstract

Keywords:

1. Introduction

2. Conceptual Foundations and Competition Criteria Mapping

2.1. Theoretical Foundations of the Five Dimensions

2.1.1. Innovation and Creativity

2.1.2. Artistic Aesthetics

2.1.3. Applied Technology

2.1.4. Work Normativity

2.1.5. Practical Promotion

2.2. Mapping Competition Criteria onto the Five-Dimensional Framework

3. Data and Methods

3.1. Expert Re-Evaluation Procedure

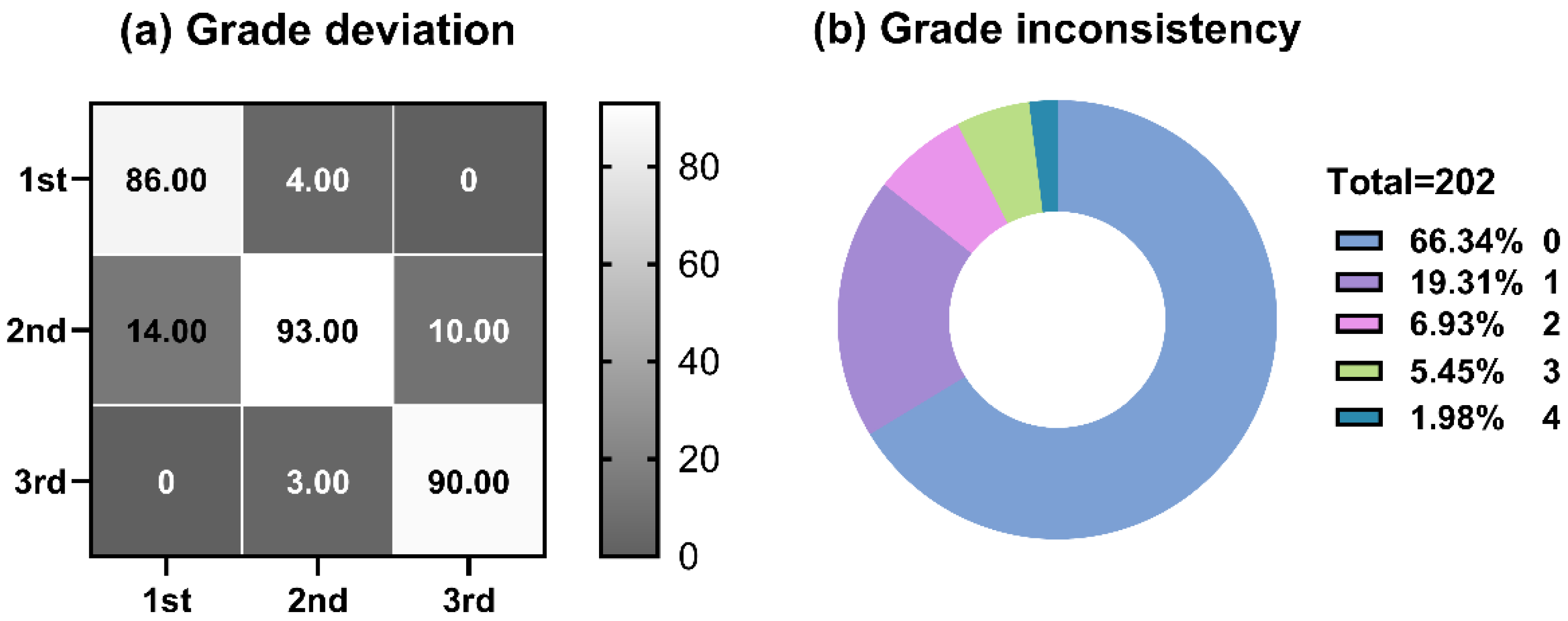

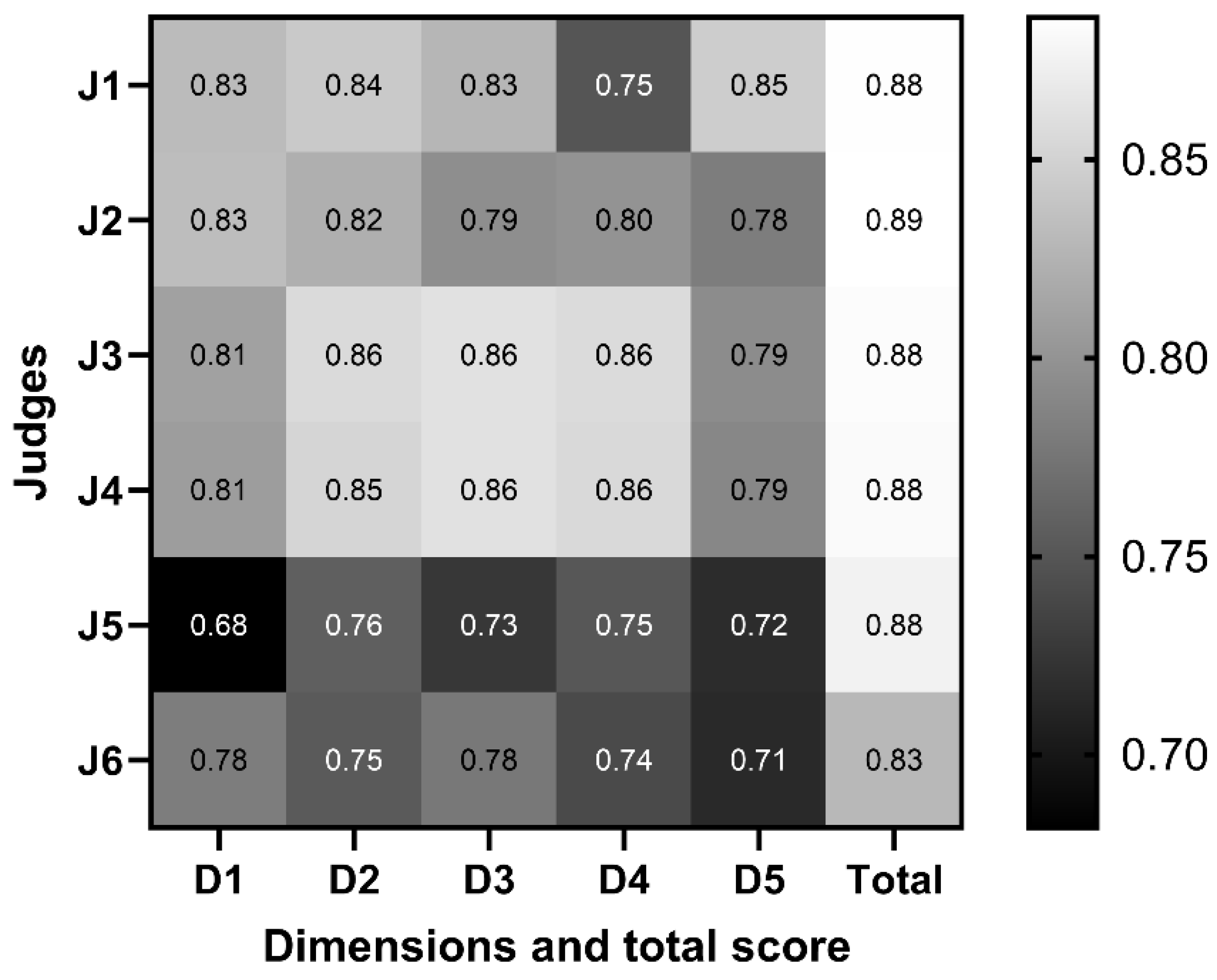

3.2. Data Processing and Reliability Assessment

3.3. Regression Modelling Strategy

3.3.1. Baseline Equal-Weight Model

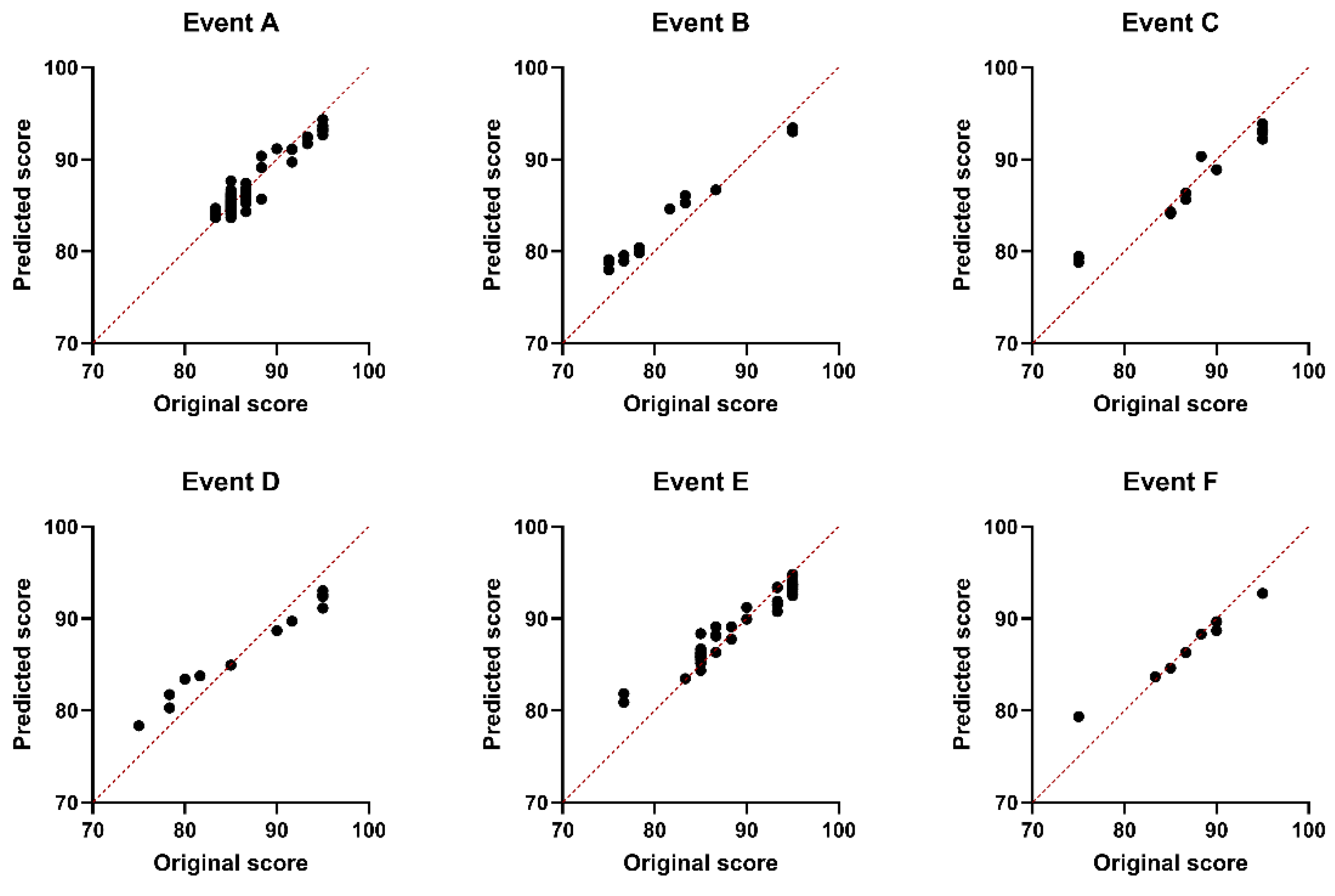

3.3.2. Event-Specific Models

3.4. Model Validation and Comparative Fit

4. Results and Discussion

4.1. Event-Specific Weighting Patterns as Evaluative Cultures

4.2. Transparency, Fairness, and Sustainable Design Education

4.3. Governance and Pedagogical Applications

5. Conclusions

Supplementary Materials

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

Appendix A


| Event | Competition name | D1 | D2 | D3 | D4 | D5 |
|---|---|---|---|---|---|---|
| A | National College Students Advertising Art Competition | Creativity; originality | Recognition | Technical feasibility | — | Promotion |
| B | Huacan Award | Creativity (30%) | Expressive aesthetics (30%) | Technical feasibility (10%) | Normativity (20%) | Practicality (10%) |
| C | Milan Design Week – China Exhibition | Creativity (30%) | Aesthetics (30%) | Technicality (30%) | Normativity (10%) | — |
| D | Future Designer Competition | Creativity (30%) | Aesthetics (30%) | Production quality (30%) | Normativity (10%) | — |
| E | China Good Ideas Competition | Originality (40%) | Value concept (30%) | Model optimality (5%) | Visual effect (10%) | Promotion rate (15%) |
| F | China Collegiate Computer Design Competition | Creativity | Feasibility | Technical breakthroughs; code quality | — | Application scenarios |
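Four of the six rubrics in the mapping table state percentage weights. As a sketch, the Python below transcribes those percentages into D1 to D5 weight vectors in the column order of the table, treats a dash as 0% (an assumption, since the table does not say whether an unmapped dimension carries any weight), and checks that each rubric totals 100%. Events A and F list criteria without percentages, so they are left out.

```python
# Rubric weights (in percent) transcribed from the mapping table, ordered D1..D5.
# A dash in the table is treated here as 0% (assumption); events A and F
# publish no percentages and are therefore omitted.
RUBRIC_PERCENT = {
    "B": [30, 30, 10, 20, 10],
    "C": [30, 30, 30, 10, 0],
    "D": [30, 30, 30, 10, 0],
    "E": [40, 30, 5, 10, 15],
}


def to_weights(percentages):
    """Convert a list of percentages into fractional weights that sum to 1."""
    total = sum(percentages)
    if total != 100:
        raise ValueError(f"rubric sums to {total}%, not 100%")
    return [p / 100 for p in percentages]


# Fractional D1..D5 weights per event, validated against the 100% total.
RUBRIC_WEIGHTS = {event: to_weights(p) for event, p in RUBRIC_PERCENT.items()}

if __name__ == "__main__":
    for event, weights in RUBRIC_WEIGHTS.items():
        print(event, weights)
```

All four rubrics pass the 100% check, so the vectors can be compared directly with fractional weights such as the fitted coefficients reported for each event.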

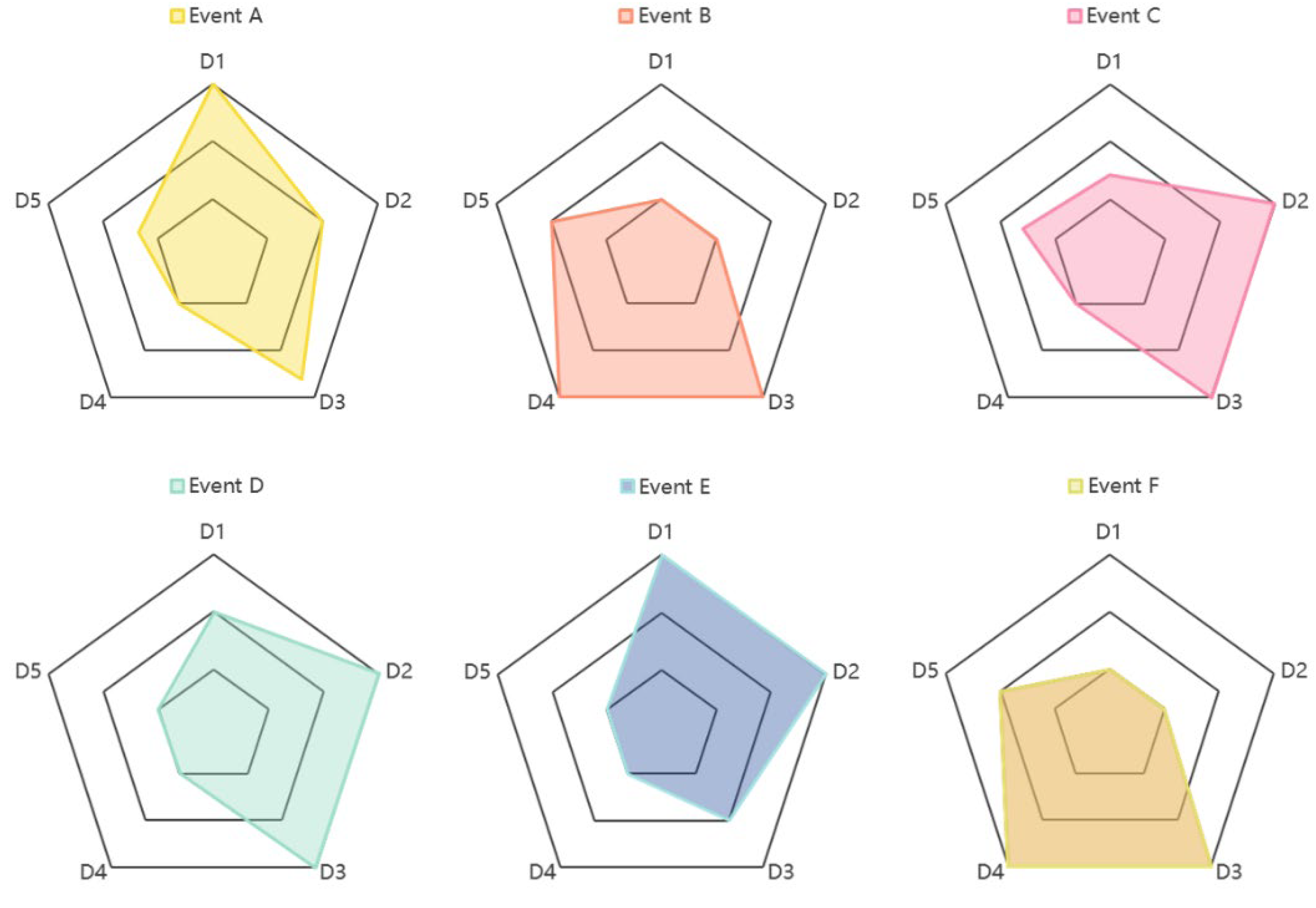

| Event | k1 | k2 | k3 | k4 | k5 | b | R² |
|---|---|---|---|---|---|---|---|
| A | 0.30 | 0.20 | 0.26 | 0.10 | 0.14 | 1.47 | 0.895 |
| B | 0.10 | 0.10 | 0.30 | 0.30 | 0.20 | 0.00 | 0.855 |
| C | 0.14 | 0.30 | 0.30 | 0.10 | 0.16 | 0.20 | 0.894 |
| D | 0.20 | 0.30 | 0.30 | 0.10 | 0.10 | 0.06 | 0.880 |
| E | 0.30 | 0.30 | 0.20 | 0.10 | 0.10 | 1.40 | 0.896 |
| F | 0.10 | 0.10 | 0.30 | 0.30 | 0.20 | 0.30 | 0.893 |
| Average | 0.19 | 0.22 | 0.28 | 0.17 | 0.15 | 0.19 | |
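Read as coefficients of a linear model, each row of the coefficient table would score an entry as k1·D1 + k2·D2 + k3·D3 + k4·D4 + k5·D5 + b, where D1 to D5 are the five dimension scores. The Python sketch below assumes exactly that form (it is inferred from the column headers, not stated here), uses an equal weight of 0.2 per dimension with a zero intercept as a stand-in for the baseline model of Section 3.3.1 (the zero intercept is an assumption), and scores a hypothetical entry whose dimension scores are invented for illustration. It also checks each event's coefficient total and recomputes the column means.

```python
from statistics import mean

# Fitted coefficients (k1..k5) and intercept b per event, transcribed from the table.
COEFFICIENTS = {
    "A": ([0.30, 0.20, 0.26, 0.10, 0.14], 1.47),
    "B": ([0.10, 0.10, 0.30, 0.30, 0.20], 0.00),
    "C": ([0.14, 0.30, 0.30, 0.10, 0.16], 0.20),
    "D": ([0.20, 0.30, 0.30, 0.10, 0.10], 0.06),
    "E": ([0.30, 0.30, 0.20, 0.10, 0.10], 1.40),
    "F": ([0.10, 0.10, 0.30, 0.30, 0.20], 0.30),
}

# Equal-weight baseline: 1/5 per dimension, with an assumed zero intercept.
BASELINE = ([0.2] * 5, 0.0)


def predict(dimension_scores, model):
    """Assumed linear form: sum of k_i * D_i plus intercept b."""
    weights, intercept = model
    if len(dimension_scores) != len(weights):
        raise ValueError("expected one score per dimension")
    return sum(k * d for k, d in zip(weights, dimension_scores)) + intercept


def column_means(models):
    """Mean of each k column and of the intercept b across all events."""
    weight_rows = [w for w, _ in models.values()]
    k_means = [mean(col) for col in zip(*weight_rows)]
    b_mean = mean(b for _, b in models.values())
    return k_means, b_mean


if __name__ == "__main__":
    # Hypothetical D1..D5 scores for one entry, for illustration only.
    entry = [85, 78, 90, 70, 60]
    for event, model in COEFFICIENTS.items():
        weights, _ = model
        print(f"{event}: sum(k) = {sum(weights):.2f}, "
              f"predicted = {predict(entry, model):.2f}")
    print(f"baseline: predicted = {predict(entry, BASELINE):.2f}")
    k_means, b_mean = column_means(COEFFICIENTS)
    print("mean k:", [round(k, 2) for k in k_means], "mean b:", round(b_mean, 2))
```

Run as a script, it prints a coefficient total of 1.00 for every event, which suggests the weights were constrained to a unit total. The recomputed k means match the Average row (0.19, 0.22, 0.28, 0.17, 0.15); the six b values, however, average about 0.57 rather than the 0.19 listed in that row.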

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).