Submitted: 28 April 2026

Posted: 29 April 2026


Abstract

Keywords:

1. Introduction

2. Evolution from Traditional to Novel Metrics: Shift from Output-Focused to Multi-Dimensional Metrics

2.1. Knowledge Diffusion and Spillover Metrics

- Hauser (1998) critiques the limitations of market-outcome metrics for all R&D types, advocating tiered metrics and “research tourism” to encourage external idea exploration.

- Kristiansen and Ritala (2018) show that traditional KPIs are ineffective for radical innovation, proposing process-based measures such as market orientation, learning, and resource dedication.

- Albuquerque et al. (2024) and Klessova et al. (2021) introduce frameworks assessing innovation maturity and upstream potential rather than output alone.

- Lin, Shih, & Chang (2024) and Autant-Bernard et al. (2013) highlight knowledge diffusion from academia to industry and across European regions as a critical but under-measured aspect of innovation performance.

- Su, Yu, & Tao (2018) develop metrics for knowledge diffusion efficiency in R&D networks, complementing traditional productivity measures.

2.2. Innovation Velocity as a Strategic Metric

- Jing (2024) reviews how innovation speed varies across industries and time periods.

- Kessler & Chakrabarti (1996) provide a conceptual model of the antecedents and outcomes of innovation speed.

- Langerak et al. (2004) link market orientation, product advantage, and launch proficiency to faster time-to-market and better performance.

2.3. Multi-Dimensional Performance Assessment Frameworks: From Single Indicators to Integrated Dashboards

- Bitman & Sharif (2008) present a project-ranking system evaluating reasonableness, attractiveness, responsiveness, competitiveness, and innovativeness.

- Mallick & Carlson (2003) emphasise integrated frameworks that recognise the relationships between design, manufacturing, and marketing metrics.

- Meyer et al. (1997) advocate multi-product, multi-period planning and cross-departmental data integration to assess technological and market leverage.

2.4. Strategic Alignment of R&D Portfolios: Strategic Alignment as a Dimension of R&D Performance

- Santiago & Soares (2020) show how “bucket” approaches can support strategic portfolio alignment.

- Martinsuo & Anttila (2022) and Martinsuo, Poskela & Killen (2022) discuss practices of strategic alignment within and between innovation portfolios.

- Cooper, Edgett & Kleinschmidt (2001) and Meskendahl (2010) show that portfolio-level selection and prioritization frameworks can enhance success when explicitly tied to business strategy.

2.5. Context-Specific Performance Management Systems: Readiness and Adaptability Frameworks

- Larsen & Lindquist (2016) develop an activity-level framework for a manufacturing R&D department with emphasis on transparency and sustainability.

- Fayek & Golabchi (2021) focus on collaborative R&D in the construction sector, aligning metrics with industry needs.

- Lee & Lee (2023) introduce a system categorizing indicators by observability and frequency, with a progress-priority matrix, for a Korean research institute.

- Holden (2022), Van Cauwenbergh et al. (2021), and Yfanti & Sakkas (2024) expand Technology Readiness Levels (TRL) into broader “institutional readiness” frameworks for sustainable and co-created innovations.

2.6. Organisational and Team Dimensions of Performance: Team, Organisational Health and Resilience Metrics

- Edmondson (1999) introduces the concept of psychological safety and learning behaviour in work teams.

- Atkinson et al. (2020), Atkinson & Singer (2021), and Hülsheger et al. (2009) link team-level factors to innovation outcomes.

- Varajão, Fernandes & Amaral (2023) show how information systems team resilience improves project success.

- Liu et al. (2024), Naderpajouh et al. (2020), and Koilakonda (2023) address R&D network resilience under risk propagation and strategies for overcoming challenges.

- Woods (2015) outlines four concepts of resilience relevant to innovation project management.

3. Conceptual Framework

3.1. Framework Principle

| Performance dimension | Description |
|---|---|
| Knowledge Creation and Diffusion | Measurement: patents, publications, datasets, methods, and evidence of reuse across other projects, complemented by stronger human-centric KPIs such as staff mobility across projects, the number of mentoring sessions or internal workshops conducted (mentoring frequency), the integration rate of research outcomes into operational workflows (adoption rate of new R&D methods), and contributions to professional standards and Sonatrach–academia linkages (Martinsuo et al., 2022; Nonaka & Takeuchi, 2019). Rationale: R&D value extends beyond immediate deliverables to the creation of knowledge assets with long-term impact. |
| Innovation Velocity and Learning | Measurement: iteration cycle time, capability accretion index, experiment-to-insight ratio, and rate of adoption of lessons across teams. Innovation performance should not be conflated with velocity: shorter cycle times may improve responsiveness, but they do not necessarily indicate deeper learning or technological advancement. Genuine innovation requires iterative experimentation, reflective learning, and the ability to transform failures into insight (Pisano, 2019). The measurement system therefore privileges evidence of validated learning, capability accumulation, and knowledge diffusion across the organization. Rationale: projects that accelerate organizational learning create competitive advantage, regardless of immediate outcomes. |
| Dynamic Strategic Alignment | Measurement: knowledge reuse rates, adaptive behavior during disruptions, and team recovery after project setbacks. Strategic alignment is the systematic coherence between organizational objectives, strategic development domains, operational processes, and the resources mobilized to achieve them. In R&D contexts, effective alignment means translating corporate strategy into actionable portfolio decisions through mechanisms such as thematic road-mapping, adaptive prioritization, and iterative alignment reviews that account for shifting strategic, regulatory, and market trends. Because strategic fit is inherently difficult to quantify, the proposed BTM framework adopts a mixed-methods triangulation approach that integrates expert judgment, quantitative indicators, and qualitative insights to make the evaluation more robust. The measurable proxies listed above (knowledge reuse, adaptive responses to technological or market disruptions, and team recovery following setbacks) together capture the dynamic and nonlinear nature of learning and resilience within R&D ecosystems (Gibson & Birkinshaw, 2020). Rationale: performance should reflect not only internal goals but also responsiveness to external dynamics. |
| Team and Organizational Health | Measurement: psychological safety, interdisciplinary collaboration, and network centrality of knowledge exchange. To reduce subjectivity in KPI interpretation, the framework employs structured expert elicitation methods, including the Delphi technique and inter-rater reliability assessment, which improve consistency across evaluators as well as transparency and replicability (Santiago & Soares, 2021). Psychological safety within R&D teams is increasingly recognized as a key driver of innovation, knowledge sharing, and problem-solving. To move from conceptual understanding to operational management, psychological safety can be tracked through a structured set of SMART indicators that reflect both behavioral dynamics and tangible outcomes: team stability, project success indicators, the number of cross-team problem resolutions, innovation output per member, and engagement and participation indices. Behavioral signals (engagement, collaboration) indicate whether a psychologically safe environment is present, while outcome-oriented indicators (patents, project successes) show its concrete impact on organizational performance. By integrating these measures into R&D management systems, organizations can monitor team dynamics and also design targeted interventions to foster trust, inclusion, and sustainable innovation. Rationale: high-performing R&D projects rely on healthy team dynamics that foster creativity and innovation. |
| Resilience and Robustness | Measurement: adaptability to shocks (budget cuts, competitor advances), robustness of outputs under uncertainty, and an obsolescence index. Measuring resilience and responsiveness to competitor advances requires multidimensional indicators, so the revised model incorporates an “Adaptability Index”, capturing project continuity under disruption, and comparative benchmarking against emerging technologies to gauge robustness relative to industry evolution (de la Torre et al., 2023). Rationale: resilience is an overlooked determinant of long-term R&D value. |
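The table mentions the Delphi technique and inter-rater reliability assessment without naming a statistic. One common way to gauge consensus between Delphi rounds is Kendall's coefficient of concordance W. The sketch below computes it in plain Python, assuming each expert scores the same set of items (KPIs or projects) and that no expert gives tied scores. The choice of W is ours, not the framework's; for continuous scores, an intraclass correlation coefficient would be an alternative.

```python
from typing import List, Sequence


def to_ranks(scores: Sequence[float]) -> List[int]:
    """Convert one expert's scores into ranks (1 = lowest score).

    Ties are rejected because the plain Kendall's W formula below
    has no tie correction.
    """
    if len(set(scores)) != len(scores):
        raise ValueError("tied scores are not supported in this sketch")
    order = sorted(range(len(scores)), key=lambda i: scores[i])
    ranks = [0] * len(scores)
    for rank, item in enumerate(order, start=1):
        ranks[item] = rank
    return ranks


def kendalls_w(ratings: Sequence[Sequence[float]]) -> float:
    """Kendall's coefficient of concordance W for m experts rating n items.

    ratings[j][i] is the score expert j gave to item i. W ranges from
    0 (no agreement) to 1 (identical rankings).
    """
    m = len(ratings)
    if m < 2:
        raise ValueError("need at least two experts")
    n = len(ratings[0])
    if n < 2 or any(len(row) != n for row in ratings):
        raise ValueError("every expert must rate the same n >= 2 items")
    rank_rows = [to_ranks(row) for row in ratings]
    # Total rank received by each item across all experts.
    rank_sums = [sum(row[i] for row in rank_rows) for i in range(n)]
    mean_sum = m * (n + 1) / 2
    s = sum((r - mean_sum) ** 2 for r in rank_sums)
    return 12 * s / (m ** 2 * (n ** 3 - n))


if __name__ == "__main__":
    # Three hypothetical experts scoring four KPIs on a 0-10 scale.
    round_one = [[7, 5, 9, 4], [8, 6, 9, 3], [6, 5, 8, 4]]
    print(kendalls_w(round_one))  # 1.0: same ordering despite different raw scores
```

Because W depends only on rank orderings, experts who use the scale differently (one scoring generously, another strictly) can still show full agreement; any stopping threshold for Delphi rounds is a choice for the evaluators rather than something the framework specifies.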

- Knowledge Creation & Diffusion (KC): a large literature covers knowledge spillovers, bibliometrics, patent-to-industry diffusion, and R&D networks. Reviews and empirical studies show how to measure diffusion through citations, patent citations, and network centrality (Jaffe et al., 1993; Audretsch & Feldman, 2004; Autant-Bernard et al., 2013; Lin et al., 2024).

- Innovation Velocity (IV): the concept (also called innovation speed or experiment velocity) is increasingly studied; research groups (e.g., Cambridge IFM’s InnVel work) and recent reviews explore composite measures such as cycle time, experiment throughput, and conversion rates. It is an emerging metric space rather than a mature single index (Kessler & Chakrabarti, 1996; Langerak et al., 2004; Jing, 2024).

- Dynamic Strategic Alignment (DA): strategic alignment of R&D and portfolios (including adaptive alignment across stages) is the subject of active empirical work showing that firms use continuous alignment practices and bucket methods to keep R&D in sync with strategy (Cooper et al., 2001; Meskendahl, 2010; Santiago & Soares, 2020; Martinsuo & Anttila, 2022; Martinsuo et al., 2022).

- Team & Organizational Health (TH): organizational and team health metrics (psychological safety, resilience, cross-disciplinary coordination) are increasingly linked to innovation outcomes in healthcare and IS projects, and growing empirical evidence suggests that team health predicts project success and resilience (Edmondson, 1999; Hülsheger et al., 2009; Atkinson et al., 2020; Atkinson & Singer, 2021; Varajão et al., 2023).

- Resilience & Robustness (RR): an expanding literature applies resilience concepts to projects and R&D through frameworks, case studies, and meta-theoretical essays (Ahern et al., 2014; Woods, 2015; Naderpajouh et al., 2020; Koilakonda, 2023). Resilience is therefore an area where BTM can legitimately claim novelty.

3.2. Framework Originality

- Integration & Equal-Status Dimensions: many studies focus on single dimensions or treat some (e.g., team health) as secondary. Presenting all five as co-equal pillars in a single, operational management framework is a clear synthetic contribution.

- Explicit Stage-Weighted Scoring + Decision Rules: how weights should change with maturity stage (via rules, piecewise functions, or ML-driven reweighting) is rarely formalized in the literature, so operationalizing it is novel.

- Innovation Velocity as a Core Dimension: treating IV as a first-class performance dimension (with a defined composite index) rather than a loose “speed” concept offers an opportunity for an original methodological contribution.

- Team & Organizational Health embedded into scoring: including TH with measurable KPIs (psychological safety, cross-functional throughput, mentoring) directly in project scoring (not just in HR dashboards) is under-explored academically.

- Value-of-Failure operationalization inside RR (measuring retained assets, abandonment reuse index): explicit KPIs for “value when abandoned” are scarce, so including them strengthens originality.
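The stage-weighted scoring idea above can be made concrete with a piecewise decision rule. The sketch below is a minimal illustration only, assuming that maturity is expressed as a Technology Readiness Level (TRL 1–9), that there are three hypothetical stages (TRL 1–3, 4–6, 7–9), and that the weight profiles are invented for illustration; the framework does not publish these values.

```python
from typing import Dict, Mapping

DIMENSIONS = ("KC", "IV", "DA", "TH", "RR")

# Hypothetical stage-specific weight profiles; each profile sums to 1.0.
# Early-stage work leans on knowledge creation and velocity, late-stage
# work on alignment and resilience. These numbers are illustrative only.
STAGE_WEIGHTS: Dict[str, Dict[str, float]] = {
    "early": {"KC": 0.30, "IV": 0.25, "DA": 0.15, "TH": 0.15, "RR": 0.15},
    "mid": {"KC": 0.20, "IV": 0.20, "DA": 0.20, "TH": 0.20, "RR": 0.20},
    "late": {"KC": 0.10, "IV": 0.15, "DA": 0.25, "TH": 0.20, "RR": 0.30},
}


def stage_for_trl(trl: int) -> str:
    """Piecewise decision rule mapping a TRL (1-9) to a maturity stage."""
    if not 1 <= trl <= 9:
        raise ValueError("TRL must be between 1 and 9")
    if trl <= 3:
        return "early"
    if trl <= 6:
        return "mid"
    return "late"


def stage_weighted_score(scores: Mapping[str, float], trl: int) -> float:
    """Weighted sum of 0-10 dimension scores using the stage's weights."""
    weights = STAGE_WEIGHTS[stage_for_trl(trl)]
    return sum(weights[d] * scores[d] for d in DIMENSIONS)
```

For a hypothetical project scored KC 8, IV 6, DA 5, TH 7, RR 4, this gives about 6.3 at TRL 2 but about 5.55 at TRL 8, because the late-stage profile shifts weight toward the project's weaker alignment and resilience scores. A smooth interpolation between profiles, or a learned reweighting, could replace the step function without changing the scoring call.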

4. Methods and Validation Plan for the BTM Framework

4.1. Methods

4.2. Scoring & Index Calculation

- Operationalisation of Dimensions: Literature review to define measurable indicators for each dimension.

- Expert Consultation: Workshops with R&D managers and policymakers to refine KPIs and scoring weights.

- Pilot Testing: Apply the framework to a representative portfolio of R&D projects.

- Multi-Criteria Decision Analysis (MCDA): Balance trade-offs between dimensions and assign weights.

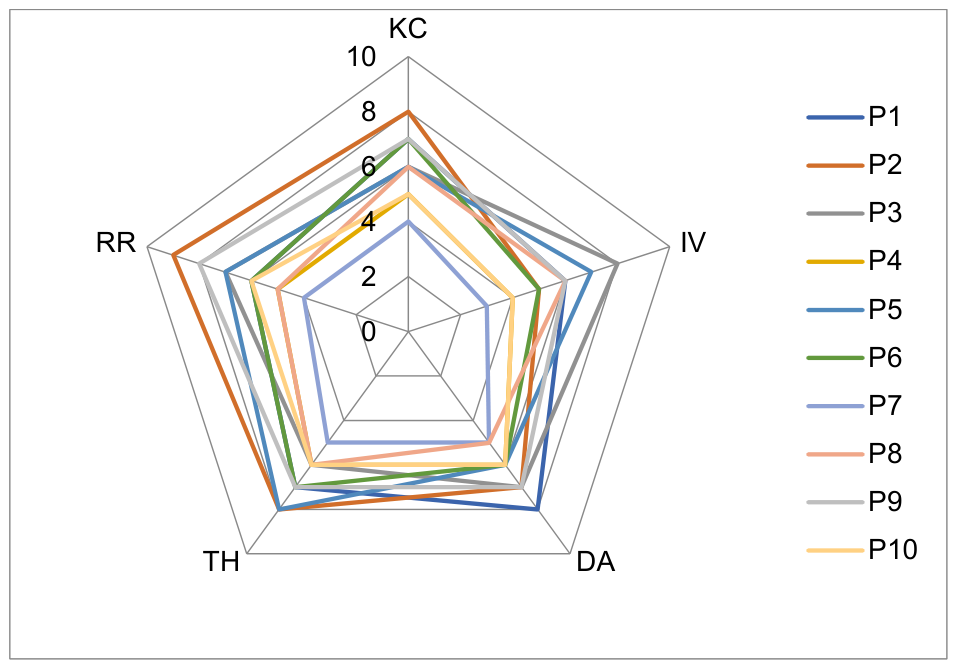

- Visualisation: Present results through radar plots, heatmaps, and portfolio dashboards for intuitive interpretation.
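The figure giving the index formula is not reproduced in this text, so the following is an assumption rather than the paper's own equation: a simple additive weighting (SAW) model, the most basic MCDA aggregation, in which the Overall Performance Index (OPI) is the weighted mean of the five dimension scores on a 0–10 scale. The percentages quoted in Section 5.1.2 (e.g., 4.2 as 42%) correspond to multiplying such a 0–10 index by 10.

```python
from typing import Mapping, Optional

DIMENSIONS = ("KC", "IV", "DA", "TH", "RR")


def overall_performance_index(
    scores: Mapping[str, float],
    weights: Optional[Mapping[str, float]] = None,
) -> float:
    """Simple additive weighting (SAW) of five 0-10 dimension scores.

    Weights are normalised by their total, so the result stays on the
    0-10 scale; equal weights are used when none are given.
    """
    if weights is None:
        weights = {d: 1.0 for d in DIMENSIONS}
    for d in DIMENSIONS:
        if d not in scores or d not in weights:
            raise KeyError(f"missing dimension {d}")
        if not 0.0 <= scores[d] <= 10.0:
            raise ValueError(f"{d} score must lie in [0, 10]")
        if weights[d] < 0:
            raise ValueError(f"{d} weight must be non-negative")
    total = sum(weights[d] for d in DIMENSIONS)
    if total == 0:
        raise ValueError("at least one weight must be positive")
    return sum(weights[d] * scores[d] for d in DIMENSIONS) / total
```

With hypothetical scores KC 4, IV 8, DA 6, TH 6, RR 6, equal weights give an OPI of 6.0, while tripling the IV weight raises it to 46/7 ≈ 6.57. Normalising by the weight total lets evaluators enter raw MCDA weights (for example, from an expert workshop) without first rescaling them to sum to 1.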

4.3. Processes and Tools for Implementation

- Planning Phase:

  - Define multi-layered KPIs combining traditional and novel metrics.

  - Establish a “performance contract” recognizing both success and “intelligent failure.”

- Execution Phase:

  - Use real-time dashboards and AI-assisted early-warning systems to detect weak signals of underperformance (scope drift, communication stress).

  - Conduct quarterly dynamic alignment reviews to enable pivots.

- Evaluation Phase:

  - Employ a multi-dimensional scorecard including Knowledge Half-Life (duration of knowledge relevance) and Portfolio Contribution Index (cross-project spillover effects).
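Knowledge Half-Life is defined above only as the duration of knowledge relevance. A minimal sketch, assuming relevance is proxied by yearly reuse counts of a knowledge asset (citations, internal reuse events, or method adoptions) and that these decay exponentially, borrows the half-life idea from radioactive decay: fit the decay rate λ on a log scale and report ln 2 / λ. Both the proxy and the exponential model are our assumptions; the Portfolio Contribution Index is not sketched because its formula is not specified.

```python
import math
from typing import Sequence


def knowledge_half_life(yearly_reuse: Sequence[float]) -> float:
    """Estimate a knowledge half-life in years from yearly reuse counts.

    Assumes reuse decays exponentially, reuse(t) = c * exp(-lam * t), and
    fits ln(reuse) = ln(c) - lam * t by ordinary least squares with
    t = 0, 1, 2, ... years since the knowledge asset was released.
    Returns math.inf when the fitted trend shows no decay.
    """
    if len(yearly_reuse) < 2:
        raise ValueError("need at least two yearly observations")
    if any(r <= 0 for r in yearly_reuse):
        raise ValueError("reuse counts must be positive to take logarithms")
    ts = range(len(yearly_reuse))
    ys = [math.log(r) for r in yearly_reuse]
    t_mean = sum(ts) / len(ts)
    y_mean = sum(ys) / len(ys)
    sxx = sum((t - t_mean) ** 2 for t in ts)
    sxy = sum((t - t_mean) * (y - y_mean) for t, y in zip(ts, ys))
    decay_rate = -sxy / sxx  # lam is minus the fitted slope
    if decay_rate <= 0:
        return math.inf
    return math.log(2) / decay_rate
```

For hypothetical yearly reuse counts of 40, 20, 10, and 5, reuse halves every year and the function returns a half-life of about 1.0 year; a rising series such as 5, 10, 20 returns infinity, signalling that relevance has not yet started to decline.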

4.4. Validation Plan

4.5. Expected Outcomes

4.6. BTM Benefits

- Reframing R&D performance as ecosystemic rather than project-isolated.

- Capturing hidden value from projects that “fail” traditionally but succeed in knowledge or capability building.

- Incorporating resilience and adaptability, critical for innovation under uncertainty.

- Offering a balanced mix of quantitative and qualitative indicators applicable across sectors.

5. A Case Study

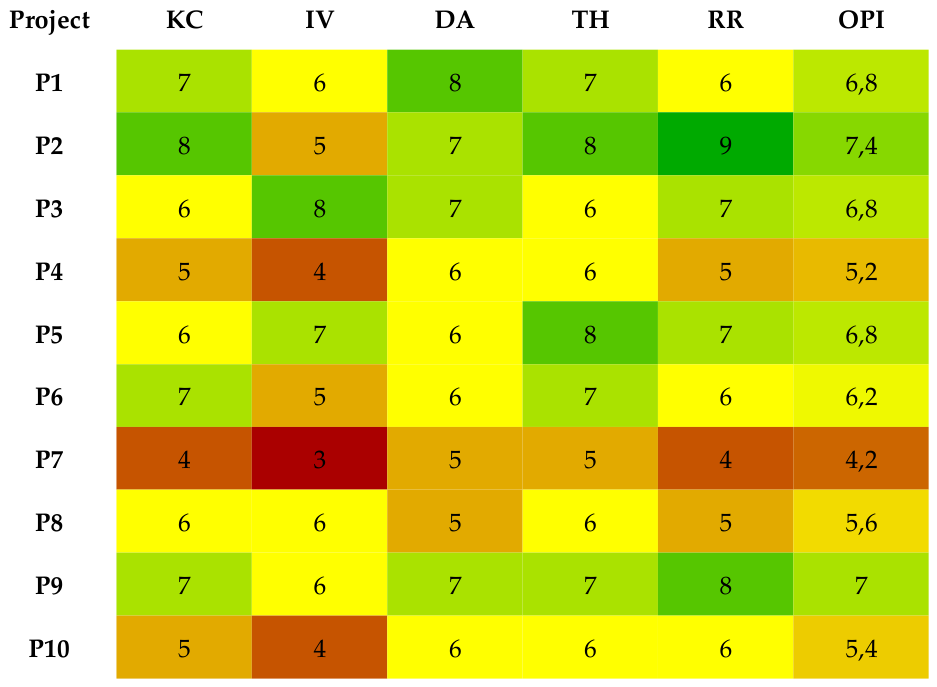

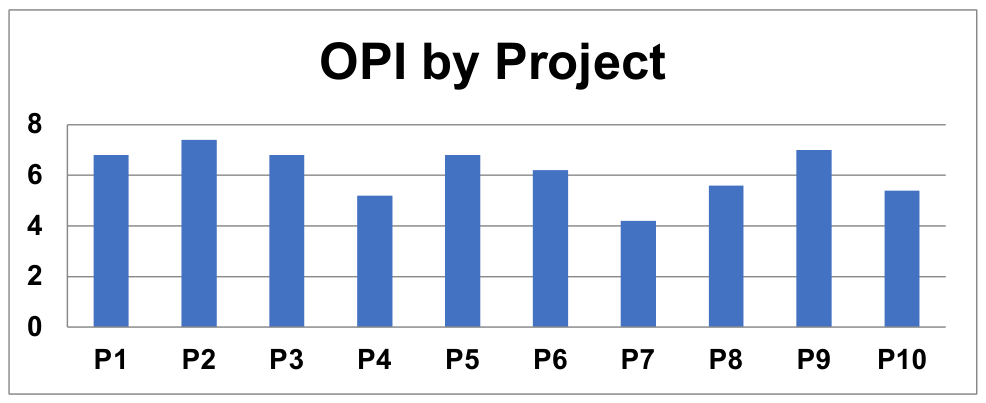

5.1. Analysis of the Results

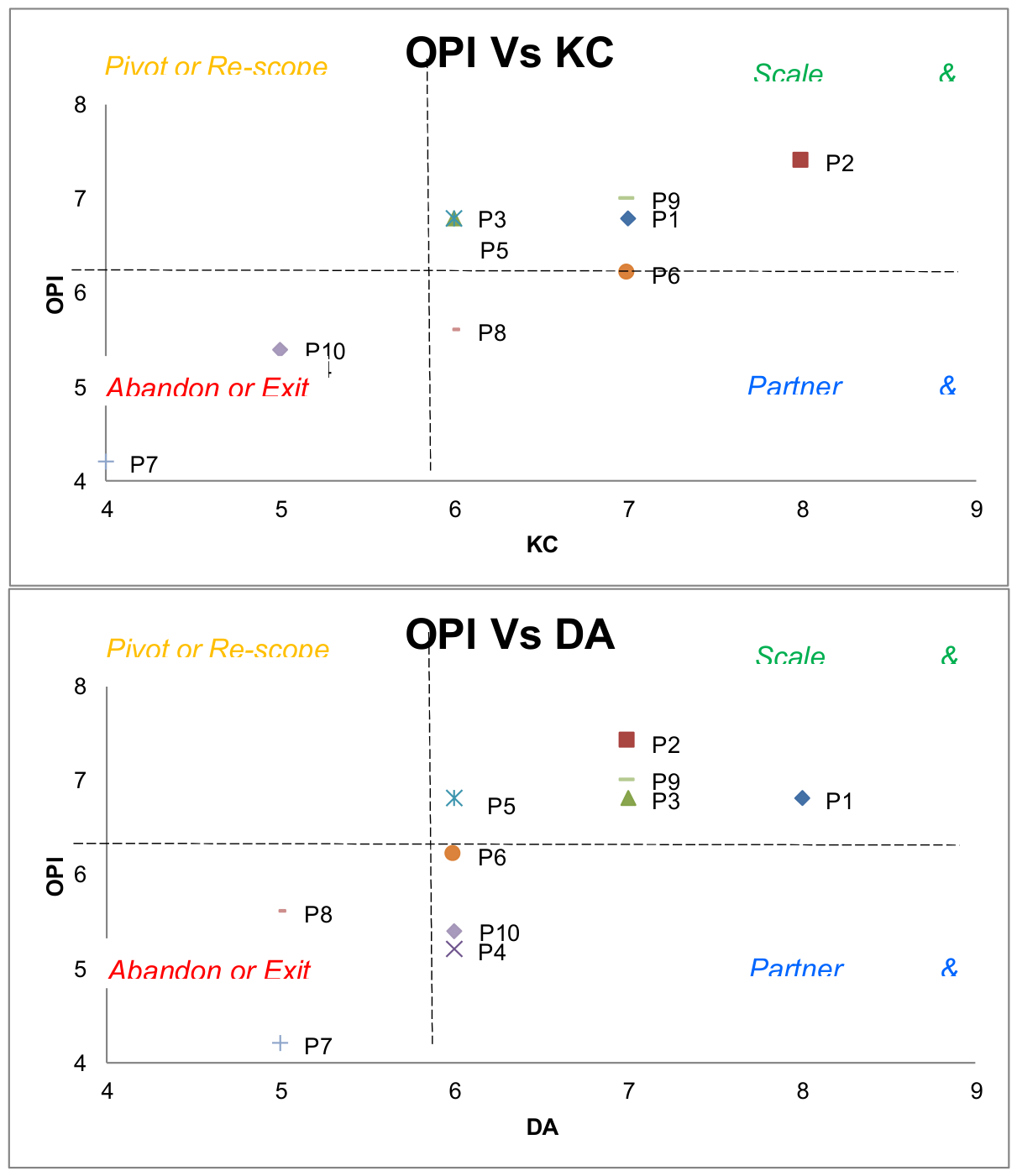

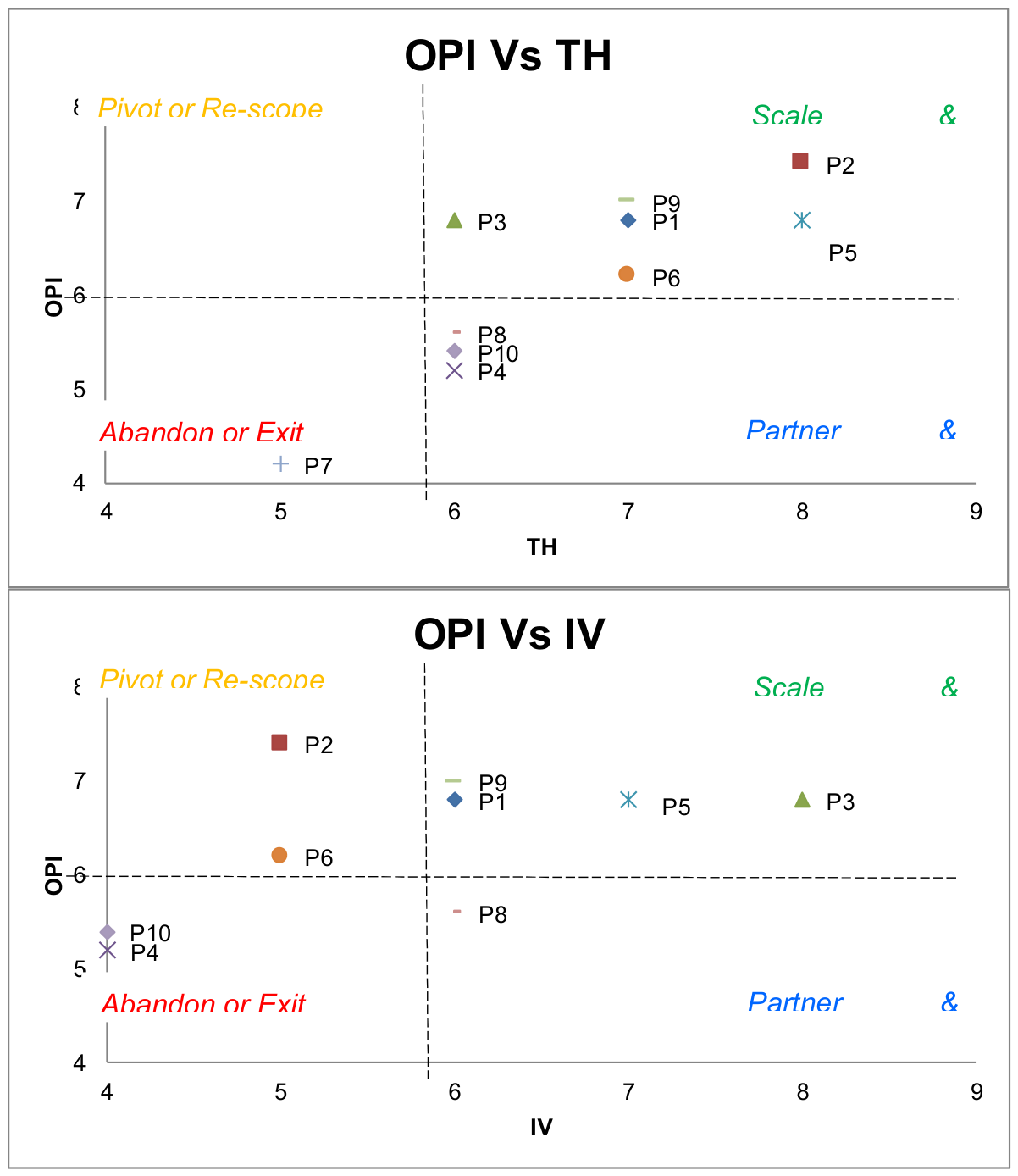

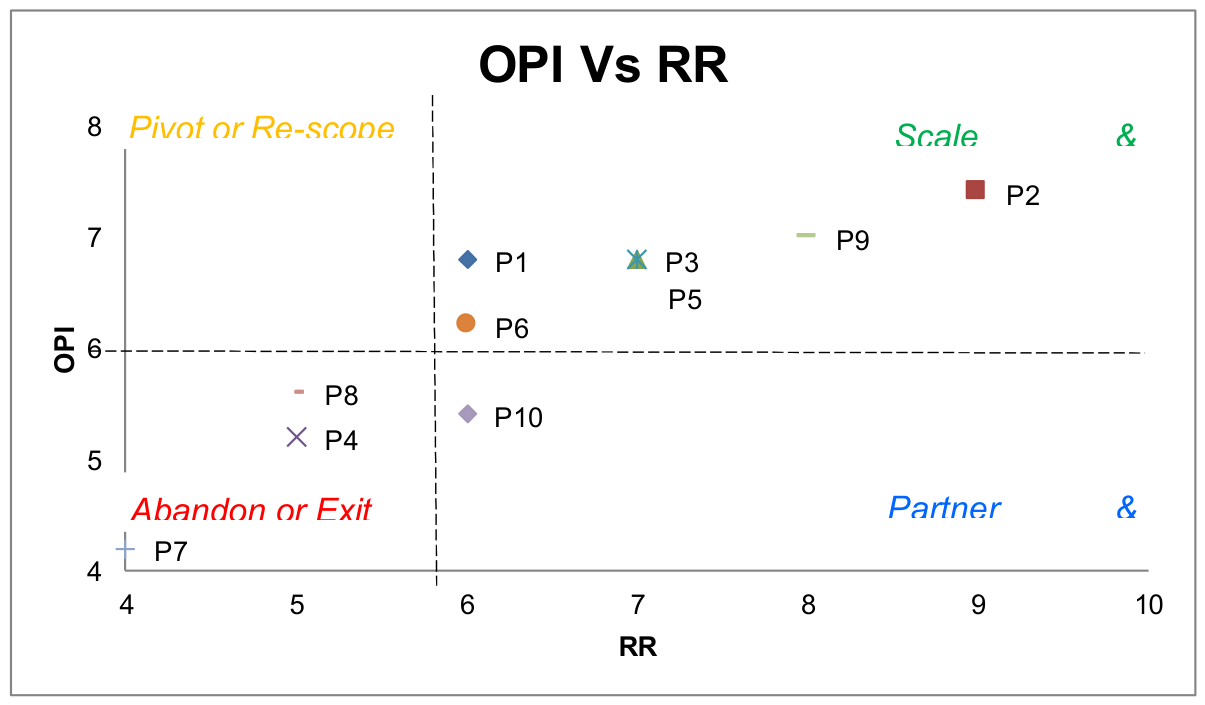

5.1.1. Strategic Portfolio Analysis

- Scale & Continue: P1, P2, P3, P6, P9. These drive both revenue and internal expertise.

- Pivot or Re-scope: P5. High performer but “Knowledge-light.” Re-scope to ensure technical insights and IP are better captured.

- Partner & Support: P8. High knowledge potential but execution is failing. Needs a support partner to turn knowledge into results.

- Abandon or Exit: P4, P7, P10. Projects providing neither actionable results nor strategic learning.

- Scale & Continue: P3, P5. True innovators. They deliver high current performance and break new ground for the future.

- Pivot or Re-scope: P1, P2, P6, P9. Efficient “Cash Cows.” High performance but low novelty. Action: Inject R&D to prevent them from becoming obsolete in the next cycle.

- Partner & Support: None.

- Abandon or Exit: P4, P7, P8, P10. Stagnant projects with no future competitive value.

- Scale & Continue: P1, P2, P3, P9 are core assets. They drive the business forward and fit the mission perfectly.

- Pivot or Re-scope: P5, P6. Successful but “maverick” projects. They perform well but are drifting from the strategy. Action: Align scope to serve the main mission.

- Partner & Support: None.

- Abandon or Exit: P4, P7, P8, P10. Low performance and no strategic fit; these are “distraction” projects.

- Scale & Continue: P1, P2, P5, P6, P9. These projects are successful and contribute to a healthy, sustainable work environment.

- Pivot or Re-scope: P3. A top performer sitting exactly on the health threshold (6.0). Action: Investigate potential team burnout or cultural friction before scaling further.

- Partner & Support: None. No projects show high team health with low performance.

- Abandon or Exit: P4, P7, P8, P10. These projects are underperforming and are likely toxic to team morale or organizational culture.

- Scale & Continue (High OPI/High RR): P1, P2, P3, P5, P9. These are “weather-proof” assets that deliver high performance.

- Pivot or Re-scope (High OPI/Low RR): None. High performance is currently backed by sufficient robustness.

- Partner & Support (Low OPI/High RR): P10. A stable project but underperforming. Seek external efficiency to boost output without altering its solid structure.

- Abandon or Exit (Low OPI/Low RR): P4, P7, P8. Fragile projects with low performance; they represent a high risk of failure under stress.
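Each of the five lists above sorts projects into the same four actions by crossing the OPI with one BTM dimension. A minimal sketch of that rule follows, assuming a cut-off of 6.0 on both axes (the “Strong” OPI threshold and the “health threshold (6.0)” mentioned for P3). The text does not state how boundary scores are treated, so the strict inequality on the dimension axis is our assumption, chosen to reproduce P3's placement.

```python
def portfolio_quadrant(opi: float, dimension_score: float,
                       threshold: float = 6.0) -> str:
    """Place a project in one of the four action quadrants.

    One axis is the Overall Performance Index (OPI); the other is a single
    BTM dimension (KC, IV, DA, TH, or RR), both on a 0-10 scale.
    """
    high_opi = opi >= threshold  # the "Strong" band starts at OPI 6.0
    # Assumption: a dimension score exactly at the threshold counts as low,
    # mirroring how P3 (TH = 6.0) is placed in "Pivot or Re-scope".
    high_dim = dimension_score > threshold
    if high_opi and high_dim:
        return "Scale & Continue"
    if high_opi:
        return "Pivot or Re-scope"
    if high_dim:
        return "Partner & Support"
    return "Abandon or Exit"
```

For example, P2 (OPI 7.4, RR 9) falls in “Scale & Continue” on the resilience matrix, as reported above. Running the same function once per dimension yields all five matrices from a single table of scores.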

5.1.2. Overall Portfolio Performance

- The Overall Performance Index (OPI) across the portfolio ranges from 4.2 (42%) to 7.4 (74%), demonstrating moderate dispersion.

- Six projects (P1, P2, P3, P5, P6, P9) are “Strong” (≥6.0 OPI, ≥60%).

- Four projects (P4, P7, P8, P10) remain “Acceptable”, indicating latent improvement potential.

- No project reached the “Outstanding” benchmark (≥8.0), revealing a strategic opportunity to elevate excellence.

- No project fell into the critical failure zone (<4.0 OPI), indicating baseline operational stability.
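The performance bands used in this section can be written as a small classification rule. The sketch assumes contiguous bands (Outstanding ≥ 8.0, Strong 6.0 to below 8.0, Acceptable 4.0 to below 6.0, Critical < 4.0), which is how the thresholds above read but is not stated explicitly; the OPI values are those reported in Sections 5.1.3 and 5.1.4.

```python
def opi_band(opi: float) -> str:
    """Map an OPI on the 0-10 scale to the portfolio performance bands."""
    if not 0.0 <= opi <= 10.0:
        raise ValueError("OPI must lie in [0, 10]")
    if opi >= 8.0:
        return "Outstanding"
    if opi >= 6.0:
        return "Strong"
    if opi >= 4.0:
        return "Acceptable"
    return "Critical"


# OPI values reported in Sections 5.1.3 and 5.1.4.
reported = {"P2": 7.4, "P9": 7.0, "P8": 5.6, "P10": 5.4, "P4": 5.2, "P7": 4.2}
bands = {project: opi_band(value) for project, value in reported.items()}
```

Applied to those six values, the rule reproduces the text: P2 and P9 are Strong, while P4, P7, P8, and P10 are Acceptable.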

5.1.3. High-Performing Projects (“Strong”)

- P2_CCS_Field_Pilot (OPI 7.4) leads the portfolio, excelling in Resilience & Robustness (RR = 9) and Team Health (TH = 8), demonstrating high adaptability and organizational maturity.

- P9_Reservoir_Simulation_Platform (OPI 7.0) shows balanced performance across all dimensions, reflecting a well-structured and strategically coherent project.

- Other “Strong” projects (P1, P3, P5, P6) perform well on IV and KC, but display opportunities to enhance resilience, risk anticipation, and cross-functional alignment.

5.1.4. Medium-Performing Projects (“Acceptable”)

- P4 (OPI 5.2) and P10 (OPI 5.4) show reasonable performance but lag in Innovation Velocity and Knowledge Creation, suggesting execution bottlenecks and low intellectual capital generation.

- P7 (OPI 4.2) is the weakest performer with consistently low scores, indicating a need for urgent strategic reassessment.

- P8 (OPI 5.6) remains constrained by weak strategic fit and modest resilience, limiting its long-term impact.

5.1.5. Dimension-Level Insights

- Knowledge Creation & Diffusion (KC): Scores vary from 4 to 8. Projects with low KC could adopt stronger publication, patenting, and cross-project knowledge-transfer policies.

- Innovation Velocity (IV): Mixed scores (3–8). Low IV in some projects highlights prototype delays, weak milestone tracking, or insufficient technical agility.

- Dynamic Strategic Alignment (DA): Scores range from 5 to 8; lower scores suggest that projects may be drifting from corporate or market priorities.

- Team & Organizational Health (TH): Scores are mostly between 5 and 8; weaker scores may stem from turnover, communication gaps, or insufficient inter-team cohesion.

- Resilience & Robustness (RR): Scores vary from 4 to 9; the best performers excel here, but others are exposed to disruption (e.g., technological change) without stronger risk management.

5.1.6. Portfolio-Level Patterns

- Dimension imbalance: Many projects show high Innovation Velocity but relatively lower Resilience & Robustness, that is, high output speed with potential fragility under uncertainty (e.g., technology disruption).

- Strategic drift: Some “Acceptable” projects have lower Dynamic Strategic Alignment, suggesting misfit with corporate priorities or market/technology trends.

- Knowledge diffusion gaps: Lower KC in several projects implies insufficient cross-project knowledge transfer, limiting learning spillovers across the portfolio.
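The dimension-imbalance pattern can be screened for automatically. A minimal sketch, assuming two hypothetical indicators: the population standard deviation of a project's five dimension scores as a general imbalance measure, and a “fast but fragile” flag raised when IV is at least 7 and RR trails it by at least 2 points. Both cut-offs are illustrative and would need calibration against the actual portfolio.

```python
import statistics
from typing import Dict, Mapping

DIMENSIONS = ("KC", "IV", "DA", "TH", "RR")


def imbalance_report(
    portfolio: Mapping[str, Mapping[str, float]],
    iv_high: float = 7.0,
    min_gap: float = 2.0,
) -> Dict[str, Dict[str, object]]:
    """Flag dimension imbalance for each project.

    spread: population standard deviation of the five dimension scores.
    fast_but_fragile: IV is high while RR trails it by at least min_gap.
    Both cut-offs (iv_high, min_gap) are illustrative assumptions.
    """
    report: Dict[str, Dict[str, object]] = {}
    for name, s in portfolio.items():
        values = [s[d] for d in DIMENSIONS]
        report[name] = {
            "spread": statistics.pstdev(values),
            "fast_but_fragile": s["IV"] >= iv_high and s["IV"] - s["RR"] >= min_gap,
        }
    return report
```

A hypothetical project scored KC 6, IV 8, DA 6, TH 6, RR 5 is flagged, while one scoring 6 on every dimension has zero spread and is not.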

5.1.7. Portfolio-Level Recommendations

- Prioritize Support for Acceptable Projects: Reassess scope, ensure strategic alignment, and enhance collaboration tools for P4, P7, P8, P10.

- Replicate Best Practices: Use the governance, team practices, and risk-management strategies of P2 and P9 as models.

- Set “Outstanding” Targets: Raise benchmarks and incentive structures to push top projects beyond OPI 8.0.

- Balance Risk/Reward: Keep a healthy mix of high-risk exploratory projects (like P7) and mature impact-driven, strategically aligned projects (like P2 and P9).

5.1.8. Benchmarking & Sensitivity

- Benchmarking: Compare high-performing P2 and P9 against existing models like Balanced Scorecard or TRL-based assessments to quantify incremental value of the BTM framework.

- Sensitivity analysis: Adjust KPI weights (e.g., raise the weight of Resilience to 30% for high-risk fields) to test ranking robustness under alternative strategic scenarios.

- Trendline: The BTM results align with literature calling for multi-dimensional performance metrics and can serve as a baseline for longitudinal monitoring of petroleum R&D portfolios.
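The sensitivity test described above (raising the Resilience weight to 30% and checking whether rankings hold) can be sketched as follows. This assumes equal 20% baseline weights, that the remaining 70% is split equally (17.5% each) across the other four dimensions, and Kendall's tau as the summary of rank agreement; the three projects are hypothetical, not case-study data.

```python
from itertools import combinations
from typing import Dict, List, Mapping, Sequence

DIMENSIONS = ("KC", "IV", "DA", "TH", "RR")

# Hypothetical projects (0-10 scores), not taken from the case study.
EXAMPLE_PORTFOLIO: Dict[str, Dict[str, float]] = {
    "A": {"KC": 8, "IV": 8, "DA": 7, "TH": 7, "RR": 4},
    "B": {"KC": 6, "IV": 6, "DA": 6, "TH": 7, "RR": 8},
    "C": {"KC": 5, "IV": 5, "DA": 5, "TH": 5, "RR": 5},
}


def reweight(dimension: str, share: float) -> Dict[str, float]:
    """Give one dimension `share` of the total weight; split the rest equally."""
    if dimension not in DIMENSIONS or not 0.0 < share < 1.0:
        raise ValueError("unknown dimension or share outside (0, 1)")
    rest = (1.0 - share) / (len(DIMENSIONS) - 1)
    return {d: (share if d == dimension else rest) for d in DIMENSIONS}


def rank_projects(portfolio: Mapping[str, Mapping[str, float]],
                  weights: Mapping[str, float]) -> List[str]:
    """Order project names from highest to lowest weighted score."""
    def score(name: str) -> float:
        return sum(weights[d] * portfolio[name][d] for d in DIMENSIONS)
    return sorted(portfolio, key=score, reverse=True)


def kendall_tau(order_a: Sequence[str], order_b: Sequence[str]) -> float:
    """Kendall's tau between two tie-free rankings of the same projects."""
    n = len(order_a)
    if n < 2:
        raise ValueError("need at least two projects")
    pos_a = {p: i for i, p in enumerate(order_a)}
    pos_b = {p: i for i, p in enumerate(order_b)}
    concordant = discordant = 0
    for x, y in combinations(order_a, 2):
        agreement = (pos_a[x] - pos_a[y]) * (pos_b[x] - pos_b[y])
        if agreement > 0:
            concordant += 1
        elif agreement < 0:
            discordant += 1
    return (concordant - discordant) / (n * (n - 1) / 2)


if __name__ == "__main__":
    baseline = {d: 0.2 for d in DIMENSIONS}  # equal 20% weights
    stressed = reweight("RR", 0.30)          # RR 30%, others 17.5% each
    before = rank_projects(EXAMPLE_PORTFOLIO, baseline)
    after = rank_projects(EXAMPLE_PORTFOLIO, stressed)
    print(before, after, kendall_tau(before, after))
```

With equal baseline weights the hypothetical projects rank A, B, C; giving RR 30% swaps A and B, and a Kendall's tau of 1/3 summarises how much the ordering moved (1 would mean no change). Tau is our choice of summary statistic; rank shifts or top-k overlap would serve the same purpose.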

5.1.9. Actionable Recommendations (Table 6)

- Scale best practices: Replicate governance, knowledge-sharing, and risk-management processes of P2 and P9 in weaker projects.

- Raise OPI targets: Set “Outstanding” goalposts (≥8.0) and tie incentives to balanced performance across all five dimensions.

- Strategic re-scope: Reposition projects with low DA to better align with emerging energy transitions or market needs.

- Embed resilience: Strengthen scenario planning, redundancy architecture, and early-warning systems for projects with low RR scores.

- Knowledge platforms: Implement structured cross-project seminars or digital platforms to enhance KC metrics portfolio-wide.

6. Conclusion

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

References

- Ahern, T.; Leavy, B.; Byrne, P. J. Complex project management as complex problem solving: A distributed knowledge management perspective. Int. J. Proj. Manag. 2014, 32(8), 1371–1381.

- Albuquerque, D.; Damásio, J.; Santos, D.; Almeida, H.; Perkusich, M.; Perkusich, A. Leveraging the innovation index (IVI): A research, development, and innovation-centric measurement approach. J. Open Innov. Technol. Mark. Complex. 2024, 10(3), 100346.

- Atkinson, M. K.; Singer, S. J. Managing Organizational Constraints in Innovation Teams: A Qualitative Study Across Four Health Systems. Med. Care Res. Rev. 2021, 78(5), 521–536.

- Atkinson, M. K.; Wessel, J. L.; Chacko, T. I.; Clark, C. Managing organizational constraints in innovation teams. Res. Policy 2020, 49(1), 103120.

- Audretsch, D. B.; Belitski, M. The role of R&D and knowledge spillovers in innovation and productivity. Eur. Econ. Rev. 2020, 130, 103391.

- Audretsch, D. B.; Belitski, M. Knowledge spillover entrepreneurship, innovation and regional development. Reg. Stud. 2022, 56(5), 775–787.

- Audretsch, D. B.; Feldman, M. P. Chapter 61: Knowledge spillovers and the geography of innovation. In Handbook of Regional and Urban Economics, Vol. 4: Cities and Geography; Elsevier, 2004; pp. 2713–2739.

- Autant-Bernard, C.; Fadairo, M.; Massard, N. Knowledge diffusion and innovation policies within the European regions: Challenges based on recent empirical evidence. Res. Policy 2013, 42(1), 196–210.

- Bican, P. M.; Brem, A. Managing innovation: What do we know about ambidexterity in innovation management? Int. J. Innov. Manag. 2020, 24(8), 2050072.

- Bitman, W. R.; Sharif, N. A Conceptual Framework for Ranking R&D Projects. IEEE Trans. Eng. Manag. 2008, 55(2), 267–278.

- Cannon, M. D.; Edmondson, A. C. Failing to learn and learning to fail (intelligently): How great organizations put failure to work to innovate and improve. Long Range Plan. 2005, 38(3), 299–319.

- Coccia, M. Evolution and convergence of technological paradigms: Toward a theory of the emergence of new technologies. Technol. Forecast. Soc. Change 2021, 166, 120614.

- Cooper, R. G.; Edgett, S. J.; Kleinschmidt, E. J. Portfolio management for new products. R&D Manag. 2001, 31(4), 361–380.

- Cooper, R. G.; Sommer, A. F. New-product portfolio management with agile: Challenges and solutions for manufacturers using Stage-Gate systems. Res.-Technol. Manag. 2020, 63(1), 29–38.

- Cross, R.; Sproull, L. The hidden power of social networks: Understanding how work really gets done in organizations; Harvard Business Review Press, 2022.

- de la Torre, E.; García, J. L.; Molina, J. Towards data-driven R&D performance measurement: Integrating analytics and decision-support systems. Technovation 2023, 125, 102707.

- Edmondson, A. C. Psychological safety and learning behavior in work teams. Adm. Sci. Q. 1999, 44(2), 350–383.

- Fleming, L.; King, C.; Juda, A. I. Small worlds and regional innovation. Organ. Sci. 2019, 30(3), 545–563.

- Gibson, C. B.; Birkinshaw, J. The antecedents, consequences, and mediating role of organizational ambidexterity. Acad. Manag. Perspect. 2020, 34(3), 287–305.

- Hauser, J. R. Research, Development, and Engineering Metrics. Manag. Sci. 1998, 44(12, Part 1), 1670–1689.

- Holden, N. M. A readiness level framework for sustainable circular bioeconomy. EFB Bioeconomy 2022, 1(2), 100031.

- Hülsheger, U. R.; Anderson, N.; Salgado, J. F. Team-level predictors of innovation at work: A comprehensive meta-analysis. Appl. Psychol. 2009, 58(1), 1–26.

- Jaffe, A. B.; Trajtenberg, M.; Henderson, R. Geographic localization of knowledge spillovers. Q. J. Econ. 1993, 108(3), 577–598.

- Jing, Z. Assessing the Metric of Innovation Speed Across Varied Industries and Time Periods: A Thorough Review. Int. J. Acad. Res. Econ. Manag. Sci. 2024, 13(1), 1–12.

- Kessler, E. H.; Chakrabarti, A. K. Innovation speed: A conceptual model of context, antecedents, and outcomes. Acad. Manag. Rev. 1996, 21(4), 1143–1191.

- Klessova, S.; Engell, S.; Thomas, C. A Framework for Measuring Market-upstream Innovations and Innovation Performance in R&D Projects. Acad. Manag. Proc. 2021.

- Koilakonda, R. R. Resilience in project management: Strategies for overcoming challenges. Int. J. Core Eng. Manag. 2023, 7(6).

- Langerak, F.; Hultink, E. J.; Robben, H. S. The impact of market orientation, product advantage, and launch proficiency on new product performance and organizational performance. J. Prod. Innov. Manag. 2004, 21(2), 79–94.

- Larsen, A.; Lindquist, P. A performance measurement framework for R&D activities: Increasing transparency of R&D value contribution. 2016.

- Lee, K.; Lee, S. Enhancing R&D Performance Management: A Case of R&D Projects in South Korea. Sustainability 2023, 15, 11752.

- Liu, H.; Su, B.; Guo, M.; Wang, J. Exploring R&D network resilience under risk propagation: An organizational learning perspective. Int. J. Prod. Econ. 2024, 278, 109266.

- Mallick, D. N.; Carlson, C. L. An Integrated Framework for Measuring Product Development Performance in High Technology Industries. 2003.

- March, J. G. Exploration and exploitation in organizational learning. Organ. Sci. 1991, 2(1), 71–87.

- Martinsuo, M.; Anttila, R. Practices of strategic alignment in and between innovation project portfolios. Proj. Leadersh. Soc. 2022, 3, 100066.

- Martinsuo, M.; Poskela, J.; Killen, C. P. Practices of strategic alignment in and between innovation portfolios. R&D Manag. 2022, 52(5), 817–835.

- Martinsuo, M.; Poskela, J.; Paasi, J. Managing uncertainty and learning in R&D projects: The role of performance measurement and feedback. Int. J. Proj. Manag. 2022, 40(4), 385–398.

- Meskendahl, S. The influence of business strategy on project portfolio management and its success: A conceptual framework. Int. J. Proj. Manag. 2010, 28(8), 807–817.

- Meyer, M. H.; Tertzakian, P.; Utterback, J. M. Metrics for Managing Research and Development in the Context of the Product Family. Manag. Sci. 1997, 43(1), 88–111.

- Naderpajouh, N.; Matinheikki, J.; Keeys, L. A.; Aldrich, D. P.; Linkov, I. Resilience and projects: An interdisciplinary crossroad. Proj. Leadersh. Soc. 2020, 1, 100001.

- Newman, A.; Obschonka, M.; Schwarz, S.; Cohen, M.; Nielsen, I. Entrepreneurial mindset: Individual and organizational learning perspective. J. Bus. Res. 2022, 145, 697–708.

- Nonaka, I.; Takeuchi, H. The wise company: How companies create continuous innovation; Oxford University Press, 2019.

- Normann Kristiansen, J.; Ritala, P. Measuring radical innovation project success: Typical metrics don’t work. J. Bus. Strategy 2018, 39(4), 34–41.

- Pisano, G. P. Creative construction: The DNA of sustained innovation; PublicAffairs, 2019.

- Robertson, J.; Caruana, A.; Ferreira, C. Innovation performance: The effect of knowledge-based dynamic capabilities in cross-country innovation ecosystems. J. Bus. Res. 2021, 32(2), 101866.

- Robinson Fayek, A.; Golabchi, A. Framework for identification of performance metrics for research and development collaborations: Construction Innovation Centre. Built Environ. Proj. Asset Manag. 2022, 12(5), 837–852.

- Roy, B. Multicriteria methodology for decision aiding; Kluwer Academic Publishers, 1996.

- Santiago, L.; Soares, V. M. O. Strategic Alignment of an R&D Portfolio by Crafting the Set of Buckets. IEEE Trans. Eng. Manag. 2020, 67(2), 309–321.

- Santiago, M.; Soares, A. L. Measuring R&D collaboration performance: A multidimensional approach. Technol. Forecast. Soc. Change 2021, 170, 120915.

- Shih, W.-; Shih, H.-Y.; Chang, Y.-Y. Knowledge Diffusion from Academia to Industry. R&D Manag. 2024, 54(5), 12884.

- Su, J.; Yu, Y.; Tao, Y. Measuring knowledge diffusion efficiency in R&D networks. Knowl. Manag. Res. Pract. 2018, 16(2), 1–12.

- Tsinopoulos, C.; Al-Zu’bi, Z. M. F. R&D management in the digital era: Rethinking processes, performance and people. Res.-Technol. Manag. 2023, 66(1), 25–35.

- Van Cauwenbergh, N.; Dourojeanni, P. A.; van der Zaag, P.; Brugnach, M.; Dartee, K.; Giordano, R.; Lopez-Gunn, E. Beyond TRL – Understanding institutional readiness for implementation of nature-based solutions. Environ. Sci. Policy 2021, 127, 293–302.

- Varajão, J.; Fernandes, G.; Amaral, A. Linking information systems team resilience to project management success. Proj. Leadersh. Soc. 2023.

- Wang, J.; Lin, W.; Huang, Y.-H. A performance-oriented risk management framework for innovative R&D projects. Technovation 2010, 30(11–12), 601–610.

- Woods, D. D. Four concepts for resilience and the implications for the future of resilience engineering. Reliab. Eng. Syst. Saf. 2015, 141, 5–9.

- Yfanti, S.; Sakkas, N. Technology Readiness Levels (TRLs) in the Era of Co-Creation. Appl. Syst. Innov. 2024, 7, 32.

| BTM dimension | Key concepts in literature | Key references |
|---|---|---|
| Knowledge Creation & Diffusion (KC) | Knowledge spillovers, R&D networks, bibliometrics, patent citation analysis, tacit knowledge transfer | Autant-Bernard et al. (2013); Audretsch & Feldman (2004); Jaffe et al. (1993) |
| Innovation Velocity (IV) | Innovation speed, time-to-market, cycle time, experiment throughput, agility in R&D | Langerak et al. (2004); Kessler & Chakrabarti (1996) |
| Dynamic Strategic Alignment (DA) | Portfolio alignment, adaptive project selection, continuous strategy updating | Martinsuo et al. (2022); Cooper et al. (2001); Meskendahl (2010) |
| Team & Organizational Health (TH) | Psychological safety, cross-functional integration, organizational learning, innovation culture | Edmondson (1999); Atkinson et al. (2020); Hülsheger et al. (2009) |
| Resilience & Robustness (RR) | Project resilience, adaptive capacity, value of failure, robustness to shocks | Naderpajouh et al. (2020); Ahern et al. (2014); Woods (2015) |

| BTM dimension | Proposed KPIs | Measurement approach |
|---|---|---|
| Knowledge Creation & Diffusion (KC) | Number of peer-reviewed publications; patent applications and citations; knowledge transfer workshops conducted; cross-team knowledge sharing sessions; % of projects reusing methods or models from other projects; % of outputs successfully applied in different business units; mentions in industry standards or internal guidelines | Quantitative metrics such as publication counts or workshop frequency do not fully capture knowledge absorption or reuse. The framework therefore includes process-oriented KPIs, such as the percentage of projects reusing methodologies or data models and the successful cross-unit application of results, to better represent knowledge integration and learning depth (Nonaka & Takeuchi, 2019). |
| Innovation Velocity (IV) | Industrial application rate (% of R&D outputs successfully deployed or scaled up in industrial operations); time from prototype to first external licensing; ratio of exploration to exploitation projects; number of projects advancing from TRL 2 to TRL 4; number of prototypes per dataset or publication | Project tracking tools, milestone reviews, time-to-market analytics. Similarly, the notion of innovation efficiency has been recalibrated: R&D metrics must distinguish between operational efficiency and innovation depth. The revised framework replaces pure speed metrics with indicators that reflect the exploration–exploitation balance, such as the ratio of exploratory to exploitative projects, the time from prototype to external licensing, and transition rates between Technology Readiness Levels (TRLs). These measures ensure that longer-gestation innovations are appropriately valued (March, 1991). |
| Dynamic Strategic Alignment (DA) | Number of projects contributing to defined strategic objectives; alignment lag time (between a strategy update and the corresponding project adjustment); % of projects addressing regulatory or market trends | Portfolio management software, strategy review reports, stakeholder surveys. To reduce subjectivity in strategic alignment assessment, quantifiable proxies were introduced, such as the percentage of projects contributing to explicit corporate objectives, alignment lag time, and responsiveness to regulatory or market trends. These indicators balance strategic intent with measurable outcomes (Bican & Brem, 2020). |
| Team & Organizational Health (TH) | Team psychological safety score; cross-functional collaboration index; employee innovation engagement score; staff retention within R&D teams; % of team members acquiring new relevant skills per year; rotation or loss rate of high-value talent (%); quality index of cross-functional collaboration based on peer review | Employee surveys, HR analytics, network analysis, engagement platforms. Collaboration metrics in the revised framework emphasize quality and outcome over frequency. Indicators such as the percentage of team members acquiring new technical skills, cross-departmental co-authorship ratios, and turnover of high-value talent provide insight into the vitality and renewal capacity of R&D teams (Cross & Sproull, 2022). |
| Resilience & Robustness (RR) | Recovery time after project setbacks; ratio of valuable learnings from failed projects; scenario stress-test performance; redundancy index in critical knowledge areas; % of projects successfully redirected after a pivot; % of failed projects with reused components; % of projects tested under ≥ 2 disruptive scenarios; % of outputs remaining useful under different market conditions | Post-mortem analyses, scenario simulations, resilience assessments, knowledge audits. The framework introduces adaptive learning KPIs such as the percentage of projects successfully redirected after failure, the reuse of outputs from terminated projects, and the validation of results under disruptive scenarios. These indicators quantify learning agility and ensure that failures contribute to cumulative knowledge capital (Cannon & Edmondson, 2005). |
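
Several of the proposed KPIs reduce to simple shares, ratios, or time lags computed over a project portfolio. The Python sketch below shows one possible way to compute five of them (percentage of projects reusing methods, exploration-to-exploitation ratio, projects advancing from TRL 2 to TRL 4, alignment lag time, and percentage of projects tested under at least two disruptive scenarios). The `ProjectRecord` fields, the four-project sample portfolio, and the rule used for "advancing from TRL 2 to 4" (started at TRL 2 or below, now at TRL 4 or above) are all hypothetical assumptions for illustration; the framework does not prescribe a data model.

```python
"""Sketch: computing a few of the proposed BTM KPIs from project records.

All names, fields and sample data are hypothetical; the TRL 2 -> 4 rule is an
assumption (start TRL <= 2 and current TRL >= 4).
"""
from dataclasses import dataclass
from datetime import date
from statistics import mean


@dataclass
class ProjectRecord:
    name: str
    reuses_prior_methods: bool        # KC: reuses methods/models from other projects
    exploratory: bool                 # IV: True = exploration, False = exploitation
    trl_start: int                    # IV: TRL at project start
    trl_now: int                      # IV: current TRL
    strategy_update: date             # DA: date of the relevant strategy update
    project_adjusted: date            # DA: date the project was re-aligned to it
    disruptive_scenarios_tested: int  # RR: number of disruptive scenarios tested


def pct(count: int, total: int) -> float:
    """Share of `total` expressed in percent."""
    return 100.0 * count / total


def portfolio_kpis(projects: list[ProjectRecord]) -> dict[str, float]:
    """Compute selected KC, IV, DA and RR indicators for a non-empty portfolio."""
    if not projects:
        raise ValueError("portfolio is empty")
    n = len(projects)
    n_explore = sum(p.exploratory for p in projects)
    n_exploit = n - n_explore
    return {
        # KC: % of projects reusing methods or models from other projects
        "pct_reusing_methods": pct(sum(p.reuses_prior_methods for p in projects), n),
        # IV: exploration-to-exploitation ratio (inf if no exploitative projects)
        "explore_exploit_ratio": n_explore / n_exploit if n_exploit else float("inf"),
        # IV: assumed rule, started at TRL <= 2 and has reached TRL >= 4
        "n_trl2_to_4": float(sum(p.trl_start <= 2 and p.trl_now >= 4 for p in projects)),
        # DA: mean alignment lag in days (strategy update -> project adjustment)
        "mean_alignment_lag_days": float(
            mean((p.project_adjusted - p.strategy_update).days for p in projects)
        ),
        # RR: % of projects tested under >= 2 disruptive scenarios
        "pct_2plus_scenarios": pct(
            sum(p.disruptive_scenarios_tested >= 2 for p in projects), n
        ),
    }


# Hypothetical four-project portfolio used only to exercise the functions.
SAMPLE_PORTFOLIO = [
    ProjectRecord("A", True, True, 2, 4, date(2025, 1, 10), date(2025, 2, 9), 3),
    ProjectRecord("B", False, False, 5, 6, date(2025, 1, 10), date(2025, 1, 20), 1),
    ProjectRecord("C", True, False, 3, 5, date(2025, 1, 10), date(2025, 3, 11), 2),
    ProjectRecord("D", False, True, 1, 2, date(2025, 1, 10), date(2025, 1, 30), 0),
]

if __name__ == "__main__":
    for name, value in portfolio_kpis(SAMPLE_PORTFOLIO).items():
        print(f"{name}: {value:.2f}")
```

Keeping each KPI as a small, explicit expression makes it easy to swap in an organization's own definitions (for example, a different TRL transition rule) without touching the rest of the calculation.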

| Dimension | Key Performance Indicators (KPIs) | Example Metrics / Measurement | Weight (%) |
|---|---|---|---|
| Knowledge Creation & Diffusion (KC) | • Number of publications / patents • Knowledge sharing sessions (impact-based metrics such as adoption rate of solutions, reuse of methodologies, and measurable improvements in project success) • External collaborations / co-publications • Knowledge reuse in other projects | • Number of peer-reviewed papers / patents filed • Number of cross-project knowledge transfers • Citation impact (h-index, patent citations) • Number of workshops/seminars delivered | 20 |
| Innovation Velocity (IV) | • Time-to-prototype • Time-to-market readiness • Iteration speed (cycles per year) • % milestones achieved on time | • Average cycle duration (idea → prototype) • Time lag between project start and deliverable • Number of sprints/iterations completed • % on-time milestone completion | 20 |
| Dynamic Strategic Alignment (DA) | • Alignment with corporate strategy • Relevance to market/technology trends • Stakeholder satisfaction (internal/external) • Portfolio synergy | • Strategic fit scoring by experts • % of KPIs aligned with corporate strategy • Survey-based stakeholder satisfaction index • Cross-project complementarity score | 20 |
| Team & Organizational Health (TH) | • Psychological safety • Team collaboration effectiveness • Talent retention rate • Learning & skill development | • Survey-based team trust/psychological safety scale (e.g., Edmondson, 1999) • Collaboration tool usage index • % staff turnover • Number of training hours / certifications per member | 20 |
| Resilience & Robustness (RR) | • Risk management effectiveness • Adaptability to change • Continuity under constraints • Intelligent failure recognition | • Number of identified/mitigated risks • Recovery time from disruptions • % deliverables maintained during crises • Documented “intelligent failures” → lessons-learned index | 20 |
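
With equal weights of 20% per dimension, each project's overall performance index (OPI) can be read as a weighted mean of its five dimension scores; the OPI values reported for projects P1 to P10 are consistent with this reading. The symbols below ($s_{p,d}$ for the 0–10 score of project $p$ on dimension $d$, and $w_d$ for the weight of dimension $d$) are introduced here for illustration and are not the authors' own notation.

$$
\mathrm{OPI}_p = \sum_{d \in \{\mathrm{KC},\,\mathrm{IV},\,\mathrm{DA},\,\mathrm{TH},\,\mathrm{RR}\}} w_d \, s_{p,d},
\qquad \sum_{d} w_d = 1, \qquad w_d = 0.20,
\qquad \mathrm{OPI}^{\%}_p = 10 \times \mathrm{OPI}_p .
$$

For example, for P1 (scores 7, 6, 8, 7, 6): $\mathrm{OPI} = 0.20 \times (7 + 6 + 8 + 7 + 6) = 0.20 \times 34 = 6.8$, i.e., 68%.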

| Project | KC | IV | DA | TH | RR | OPI (0–10) | OPI (%) | Classification |
|---|---|---|---|---|---|---|---|---|
| P1_Enhanced_Recovery_Tech | 7 | 6 | 8 | 7 | 6 | 6.8 | 68 | Strong |
| P2_CCS_Field_Pilot | 8 | 5 | 7 | 8 | 9 | 7.4 | 74 | Strong |
| P3_Digital_Well_Monitoring | 6 | 8 | 7 | 6 | 7 | 6.8 | 68 | Strong |
| P4_H2_CoProcessing | 5 | 4 | 6 | 6 | 5 | 5.2 | 52 | Acceptable |
| P5_Smart_Maintenance_AI | 6 | 7 | 6 | 8 | 7 | 6.8 | 68 | Strong |
| P6_Deepwater_Seismic_Improv | 7 | 5 | 6 | 7 | 6 | 6.2 | 62 | Strong |
| P7_Low_Cost_Geothermal | 4 | 3 | 5 | 5 | 4 | 4.2 | 42 | Acceptable |
| P8_Scaling_Up_Additives | 6 | 6 | 5 | 6 | 5 | 5.6 | 56 | Acceptable |
| P9_Reservoir_Simulation_Platform | 7 | 6 | 7 | 7 | 8 | 7.0 | 70 | Strong |
| P10_Materials_Corrosion_Study | 5 | 4 | 6 | 6 | 6 | 5.4 | 54 | Acceptable |
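
As a consistency check, the OPI and classification columns can be recomputed from the five dimension scores. The Python sketch below assumes equal weights of 0.20 (the 20% weight listed for each dimension) and uses hypothetical classification cut-offs of 6.0 for "Strong" and 4.0 for "Acceptable"; the cut-offs are not stated in this section and were chosen only because they reproduce the reported classifications.

```python
"""Recompute the overall performance index (OPI) for the ten example projects.

Assumptions for this sketch: equal weights of 0.20 per dimension, and
hypothetical classification cut-offs of 6.0 ("Strong") and 4.0 ("Acceptable").
"""

# Equal dimension weights (20% each), as listed in the KPI weighting table.
WEIGHTS = {"KC": 0.20, "IV": 0.20, "DA": 0.20, "TH": 0.20, "RR": 0.20}

# Dimension scores on a 0-10 scale, copied from the project scoring table.
SCORES = {
    "P1_Enhanced_Recovery_Tech": {"KC": 7, "IV": 6, "DA": 8, "TH": 7, "RR": 6},
    "P2_CCS_Field_Pilot": {"KC": 8, "IV": 5, "DA": 7, "TH": 8, "RR": 9},
    "P3_Digital_Well_Monitoring": {"KC": 6, "IV": 8, "DA": 7, "TH": 6, "RR": 7},
    "P4_H2_CoProcessing": {"KC": 5, "IV": 4, "DA": 6, "TH": 6, "RR": 5},
    "P5_Smart_Maintenance_AI": {"KC": 6, "IV": 7, "DA": 6, "TH": 8, "RR": 7},
    "P6_Deepwater_Seismic_Improv": {"KC": 7, "IV": 5, "DA": 6, "TH": 7, "RR": 6},
    "P7_Low_Cost_Geothermal": {"KC": 4, "IV": 3, "DA": 5, "TH": 5, "RR": 4},
    "P8_Scaling_Up_Additives": {"KC": 6, "IV": 6, "DA": 5, "TH": 6, "RR": 5},
    "P9_Reservoir_Simulation_Platform": {"KC": 7, "IV": 6, "DA": 7, "TH": 7, "RR": 8},
    "P10_Materials_Corrosion_Study": {"KC": 5, "IV": 4, "DA": 6, "TH": 6, "RR": 6},
}

STRONG_CUTOFF = 6.0      # hypothetical threshold, not taken from the article
ACCEPTABLE_CUTOFF = 4.0  # hypothetical threshold, not taken from the article


def opi(scores: dict[str, float], weights: dict[str, float] = WEIGHTS) -> float:
    """Weighted mean of the dimension scores on the 0-10 scale, one decimal."""
    total_weight = sum(weights.values())
    value = sum(weights[d] * scores[d] for d in weights) / total_weight
    return round(value, 1)


def classify(opi_value: float) -> str:
    """Map an OPI value to a class using the hypothetical cut-offs above."""
    if opi_value >= STRONG_CUTOFF:
        return "Strong"
    if opi_value >= ACCEPTABLE_CUTOFF:
        return "Acceptable"
    return "Weak"  # hypothetical label; no example project falls in this band


if __name__ == "__main__":
    for project, s in SCORES.items():
        value = opi(s)
        print(f"{project}: OPI={value:.1f} ({round(value * 10)}%), {classify(value)}")
```

Under these assumptions the recomputed values match the OPI and Classification columns of the table; changing `WEIGHTS` is enough to explore how an unequal weighting would reorder the portfolio.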

| Project priority | Project IDs | Primary strategic action |
|---|---|---|
| High Priority | P1, P2, P3, P9 | Scale & Defend (high performance across all metrics) |
| Risk/Pivot | P5, P6 | Re-scope (focus on innovation and strategic alignment) |
| Marginal | P8, P10 | Partner/Support (robust but low performance) |
| Critical Exit | P4, P7 | Immediate termination (low OPI, low resilience, low alignment) |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).