3. AI for Autonomous Vehicles

With the rapid advancement and subsequent adoption of artificial intelligence technologies, the automotive industry and science advocacy groups have begun discussing, proposing, and researching its use in autonomous automobile operation. Although consumers have shown some apprehension toward autonomous vehicle (AV) adoption, the hypothetical inclusion of AI in AVs gives some potential customers a greater sense of security [21]. In addition to the local AI/DL/ML frameworks utilized by autonomous vehicles, the infrastructure of the Internet of Vehicles (IoV) has also grown in recent years. The IoV enables the autonomous vehicles connected to it, as well as the learning frameworks on board them, to communicate and share data with pedestrians, environments, and other vehicles [18]. The IoV shows tremendous promise as infrastructure for autonomous vehicles, and its relevance cannot be overlooked.

H. Thadeshwar [6] et al. have worked on a proposal for a self-driving automobile that relies on a fusion of hardware sensors and artificial intelligence to operate. The team reviewed several sources of literature on hardware sensor technologies and AI network technologies, and combined several of the best-performing technologies for the autonomous driving operation of a 1/10 scale remote control car.

The scaled car utilizes input data read from an ultrasonic sensor and a Raspberry Pi camera. This input data is sent to a convolutional neural network (CNN) server, which makes use of the COCO dataset and of quantization to make the model run faster. The CNN processing handles the interpretation of road signs, lane detection, and steering predictions and commands. The server also utilizes the input data to further train the neural network. The server then sends the commands to an Arduino module, which in turn commands the RC car. By integrating multiple technologies onto a scale model for research and development, the team takes a cohesive and comprehensive approach to car automation instead of one focused on a single aspect of the technology. With further research, this approach can be scaled up to an actual car in the future.

S. Mishra [7] et al. have worked on developing a fully autonomous robot car model using the AlexNet AI model. Utilizing an NVIDIA Jetson Nano board and an AlexNet model to train their neural network, the team’s robot car was able to navigate a city simulation with real-time object detection. The input data from the city simulation was also useful for their deep learning and neural network training with the cost-efficient Jetson Nano board. The team plans to expand the AI functionality beyond collision and object detection to include text-to-speech and speech-to-action commands.

G. Ghanhi [8] et al. have worked on the integration of artificial intelligence with blockchain technology to better train autonomous vehicle operation and safety. They have outlined a system whereby autonomous, AI-driven vehicles utilize a public ledger on which the data taken in from a number of AI-driven vehicles is available to the other vehicles connected to the blockchain. Each individual AI-driven car can be trained on this data, allowing for cross-training across a whole network of cars. This would help eliminate the need to train each car separately, providing modularity in the AI training process, saving money, time, and resources, and reducing the need for human effort in the training process. Future work towards this goal will be aimed at embedding the training algorithms in the proposed blockchain network and exploring the extent of the modularity that the system can achieve.
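To make the shared-ledger idea concrete, the following is a minimal sketch of an append-only, hash-chained log of vehicle observations. It is not the design from [8]: the block layout, the function names (`append_block`, `verify_chain`), and the example payload fields are all assumptions made for illustration, and the sketch omits consensus, networking, and access control entirely.

```python
import hashlib
import json
from typing import Any, Dict, List

GENESIS_HASH = "0" * 64  # assumed placeholder "previous hash" for the first block


def _digest(prev_hash: str, vehicle_id: str, payload: Dict[str, Any]) -> str:
    """Hash the block contents deterministically (keys sorted before encoding)."""
    body = {"prev_hash": prev_hash, "vehicle_id": vehicle_id, "payload": payload}
    encoded = json.dumps(body, sort_keys=True).encode("utf-8")
    return hashlib.sha256(encoded).hexdigest()


def append_block(chain: List[Dict[str, Any]], vehicle_id: str,
                 payload: Dict[str, Any]) -> Dict[str, Any]:
    """Append one vehicle's observation, linking it to the previous block."""
    prev_hash = chain[-1]["hash"] if chain else GENESIS_HASH
    block = {
        "prev_hash": prev_hash,
        "vehicle_id": vehicle_id,
        "payload": payload,
        "hash": _digest(prev_hash, vehicle_id, payload),
    }
    chain.append(block)
    return block


def verify_chain(chain: List[Dict[str, Any]]) -> bool:
    """Return True only if every block links to its predecessor and is unmodified."""
    prev_hash = GENESIS_HASH
    for block in chain:
        expected = _digest(block["prev_hash"], block["vehicle_id"], block["payload"])
        if block["prev_hash"] != prev_hash or block["hash"] != expected:
            return False
        prev_hash = block["hash"]
    return True
```

Any vehicle reading the ledger could call `verify_chain` before using the shared records for training, so that tampered entries are rejected rather than learned from.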

X. Du [9] et al. have proposed a LIDAR and vision fusion system that makes use of an AI deep learning framework for vehicle detection. This system consists of three major stages: the generation of potential car location seeds from a LIDAR point cloud, refinement of the proposed locations by exploring the information within the network, and a final location detection from the network.

C. Casetti [10] et al. have surveyed recent developments in AI and Machine Learning (ML) applications and services for use in autonomous vehicle navigation. Their focus is particularly centered on the advancements in the 5G and 6G mobile ecosystems. The team emphasizes the necessity of combining the obstacle detection and classification hardware with infrastructure that merges data about the environment from a number of different automobile sensor systems (referred to as "sensor fusion"). While traditional sensor data fusion frameworks are often rigid and lack potential for growth, the integration of machine learning can provide much more flexibility and adaptation in the object detection process. One particular ML framework is HydraFusion, which outperformed older object detection approaches by 14 percent. Another featured ML-centered technique, called Feature Engineering, has been suggested to combine observed data and simulated data into a shared data pool. This yields a more complete and polished dataset for use in ML applications and could therefore increase the accuracy of the predictions.

With the advent of 5G and 6G technology, the Vehicle to Everything (V2X) model looks increasingly promising. These architectures carry the possibility of increased robustness and availability of data sharing across multiple autonomous vehicles on the road. The messages shared among the vehicles have been standardized by the European Telecommunications Standards Institute (ETSI) and can be used to build maps of local environments referred to as Local Dynamic Maps (LDMs). The LDM, located server-side, can be an integral asset for an up-to-date and detailed real-time representation of the road. With the integration of AI with these mobile technologies (particularly 6G, due to its high speeds and low latency), an even more reliable and adaptable flow of information can be achieved. 6G technology and AI/ML frameworks have both shown effectiveness in several autonomous vehicle applications such as adaptive cruise control, trajectory prediction, and cooperative lane changing. While there is still work to be done to fully integrate the AI/ML and 6G solutions, the combined framework shows substantial potential for increasing the effectiveness, reliability, and safety of autonomous car control.

X. Jia [11] et al. have worked on developing an improved image object detection algorithm based on a modified version of the YOLOv5 AI vision model. The team reworked the existing YOLOv5 algorithm by integrating structural re-parameterization and training-inference decoupling, resulting in higher accuracy in the training phase of the model and higher speeds in the inference phase. The team tested their improved YOLOv5 model on the KITTI dataset, a widely used dataset in the autonomous driving field. To evaluate the performance of their modified model, the team utilized mathematical formulas for Precision (P), Recall rate (R), accuracy (mAP, mean average precision), and frames per second (FPS). Calculations are done as follows:

$$P = \frac{TP}{TP + FP}, \qquad R = \frac{TP}{TP + FN}$$

Where TP indicates a positive predicted as positive, FP indicates a negative predicted as positive, and FN indicates a positive predicted as negative.

$$AP = \int_{0}^{1} P(R)\,dR, \qquad mAP = \frac{1}{N}\sum_{i=1}^{N} AP_i$$

Where AP is the area enclosed by the P-R curve, N is the number of categories, and AP_i is the AP of the ith category. mAP indicates model accuracy.
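The metrics defined above can be sketched in a few lines of Python. This is an illustrative sketch rather than the evaluation code used in [11]: the function names are hypothetical, and the area under the P-R curve is approximated with a plain trapezoidal rule, whereas detection benchmarks often use interpolated variants.

```python
from typing import Sequence


def precision(tp: int, fp: int) -> float:
    """P = TP / (TP + FP); returns 0.0 when nothing was predicted positive."""
    return tp / (tp + fp) if (tp + fp) else 0.0


def recall(tp: int, fn: int) -> float:
    """R = TP / (TP + FN); returns 0.0 when there are no actual positives."""
    return tp / (tp + fn) if (tp + fn) else 0.0


def average_precision(recalls: Sequence[float], precisions: Sequence[float]) -> float:
    """Approximate the area under the P-R curve with the trapezoidal rule.

    Assumes the points are sorted by increasing recall.
    """
    area = 0.0
    for i in range(1, len(recalls)):
        width = recalls[i] - recalls[i - 1]
        height = (precisions[i] + precisions[i - 1]) / 2.0
        area += width * height
    return area


def mean_average_precision(ap_per_class: Sequence[float]) -> float:
    """mAP = (1 / N) * sum of AP_i over the N categories."""
    return sum(ap_per_class) / len(ap_per_class)
```

For example, a class with 8 true positives, 2 false positives, and 2 false negatives has a precision and a recall of 0.8 each.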

Their model shows a clear improvement over the other models used within the study, showing that with continued iteration and development, existing models can be further improved to deliver better results for object detection in autonomous vehicles.

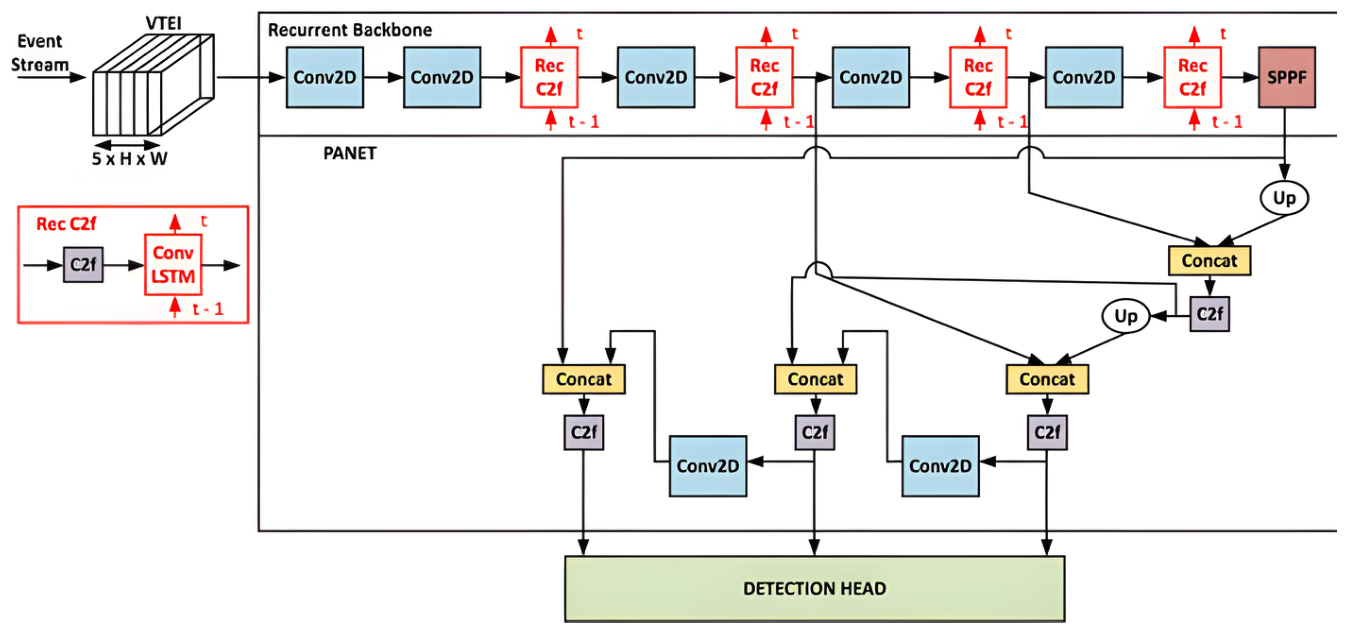

D. Silva [12] et al. have worked on developing a modified, recurrent YOLOv8 framework for object detection. The original YOLOv8 model was modified by the team to integrate a recurrent C2f block into the original framework. This allows for further refinement of the feature maps as they move onto downsampling.

Figure 4 shows the structure of this model.

Three distinct variants of ReYOLOv8 were made following the scales previously set by YOLOv8: ReYOLOv8n (nano scale), ReYOLOv8s (small scale), and ReYOLOv8m (medium scale). ReYOLOv8 was implemented with event-based cameras and a novel, lightweight memory encoding called Volume of Ternary Event Images (VTEI), which minimized latency and bandwidth while increasing sparsity and compression ratio. The ReYOLOv8 models were tested on the GEN1 and PEDRo datasets, which are commonly used for testing autonomous driving scenarios, and compared against other state-of-the-art object detection models. The ReYOLOv8 models showed a noticeable improvement in mean Average Precision over other models of similar scale, specifically a 0.7% improvement for GEN1 and 4.5% for PEDRo. The team has outlined that future work can establish benchmarks for the system-level impacts of this method and include evaluation with other datasets such as 1MegaPixel.

I. Ogunrinde [15] et al. have worked on developing an improved DeepSORT-based object detection sensor fusion network for use in autonomous vehicles in foggy weather conditions. The original DeepSORT is intended for use with the YOLOv4 object detection model, but showed errors when targets were under heavy fog, switching identities and losing distinction in predictions. The team made use of their previously proposed deep learning-based CR-YOLOnet sensor fusion network for camera and radar object detection. To further increase the network’s accuracy in harsh visual scenarios, the convolutional neural network in the original DeepSORT was replaced with an appearance feature extraction model. GhostNet was also utilized in place of traditional convolutional layers in the network, reducing computational cost and complexity while increasing performance. The method was tested with and without the previously developed CIoU and GIoU loss functions using the CARLA real-time autonomous driving simulator. The testing covered short, medium, and long distances and low, medium, and heavy fog levels to provide a comprehensive result.

Figure 5 shows some of the object detection data from the CARLA simulation.

The improved network outperforms the YOLOv5+DeepSORT combination. Specifically, multi-object tracking accuracy increased by 35.15%, multi-object tracking precision increased by 32.65%, speed increased by 37.65%, and identity switches decreased by 46.81%. Future research for the project will focus on improving the sensor fusion techniques, improving the real-time performance, and integrating state-of-the-art deep learning models to improve its real-world applications.

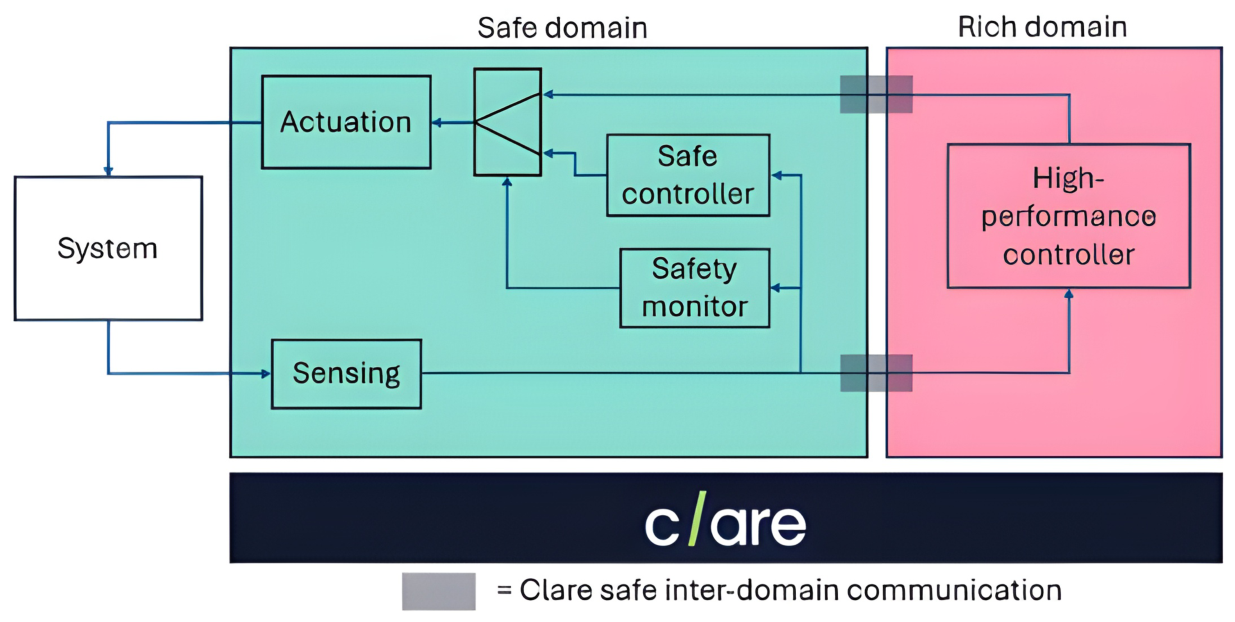

F. Nesti [16] et al. have worked on the use of the Simplex architecture within autonomous vehicle systems to provide a safer and more secure layer within the vehicle’s neural network. The architecture is composed of two execution domains running in strong isolation, the safe domain and the rich domain. The safe domain has high criticality and is responsible for safety-critical tasks such as sensing, actuation, communication, and safety monitoring, powered by a real-time operating system. The rich domain has low criticality and oversees all of the high-performance processing and computations, powered by a rich operating system. The rich domain is also considered "untrustworthy" compared to the safe domain, meaning any safety decisions made by the rich domain are superseded by the safe domain. CLARE, a type-1 real-time hypervisor, facilitates the communication between the domains, which grants the system better security. A diagram of the overall architecture of the system is presented in Figure 6.

Figure 5. Foggy weather object detection results for the DeepSort-based object detection model. Row 1 is clear weather. Row 2 is medium fog level. Row 3 is heavy fog level [15].

In addition, a safety monitor module (not shown in Figure 5) runs constantly during runtime. By default, the rich domain controls the system. However, if the safety monitor detects a potential anomaly or dangerous situation, the high-performance controller is disconnected and the safe controller takes over operation decisions. The team evaluated the architecture with two case studies, one involving a Furuta pendulum and another with an AgileX Scout Mini rover. For the pendulum study, the rich domain handled swinging the pendulum up from its resting position to the top position, while the safe domain activated in response to outside disturbances acting on the upright pendulum, such as a push, and responded with actions to return the pendulum to the upright position. This first study confirmed the architecture’s basic function, and the architecture responded successfully in line with the proposed model. The rover study consisted of the rover autonomously navigating an environment via a camera and a LIDAR sensor. The rover’s safe domain captured the data through the LIDAR sensor, ran the safety monitor module, and managed motor actuation. The rover’s rich domain read LIDAR distance measurements, captured camera image data, and sent commands to the safe domain based on the data from the sensors. The rover successfully navigated the environment while promptly and accurately responding to stationary and sudden obstacles. Due to the general nature of the proposed architecture, it can be iterated upon and used across a variety of applications. Future work on the architecture will focus on integration with deeper neural networks, implementation of vehicle-in-the-loop simulation, and further benchmarking of the real-time and power properties of the system.
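The switching behavior described above can be illustrated with a minimal sketch. It is not the implementation from [16]: the distance-based safety check, the 1.0 m threshold, and the controller signature (distance in, speed command out) are assumptions chosen only to show how a safety monitor can override an untrusted high-performance controller.

```python
from dataclasses import dataclass
from typing import Callable

# A controller maps the closest obstacle distance (m) to a speed command (m/s).
Controller = Callable[[float], float]


@dataclass
class Command:
    speed_mps: float  # speed command forwarded to the actuators
    source: str       # "rich" or "safe", i.e. which domain produced it


def simplex_step(distance_m: float,
                 rich_controller: Controller,
                 safe_controller: Controller,
                 min_safe_distance_m: float = 1.0) -> Command:
    """Run one control cycle of a Simplex-style switch.

    The rich (high-performance, untrusted) controller is used by default.
    If the monitored distance falls below the assumed safety threshold,
    its output is discarded and the safe controller takes over.
    """
    if distance_m < min_safe_distance_m:
        return Command(speed_mps=safe_controller(distance_m), source="safe")
    return Command(speed_mps=rich_controller(distance_m), source="rich")


def cruise(distance_m: float) -> float:
    """Hypothetical rich controller: always drive at 1.5 m/s."""
    return 1.5


def emergency_stop(distance_m: float) -> float:
    """Hypothetical safe controller: stop the vehicle."""
    return 0.0
```

With these example controllers, a reading of 5.0 m keeps the rich domain in control, while a reading of 0.4 m hands control to the safe domain, which commands a stop.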

Y. Li [17] et al. have worked on developing an AI-driven hierarchical routing framework with Q-learning for use in the 6G-enabled Internet of Vehicles (IoV). The framework, referred to as Hierarchical Routing with Q-learning and Structured representation (HRQSR), seeks to confront the challenges present in the 6G-enabled IoV, such as multi-objective optimization and highly dynamic network conditions. The architecture of the HRQSR framework consists of five core functional modules. First, the Vehicle Node layer collects mobility-based states (position, velocity, heading, and residual energy), which an RSU/EdgeAI controller uses to perform dynamic clustering and cluster prediction. Second, an AI-enabled multitask Graph Attention Network (GAT) based prediction module creates estimates for route decisions. Third, a hierarchical Q-learning optimization module couples the predictions from the GAT module with decision making in order to provide robust and adaptive routing decisions. Fourth, a selection module determines the final route from multiple generated candidate routes, which are evaluated with regard to delay, energy efficiency, and reliability. The final module then handles the execution of the routing back to the vehicle network, closing the architecture’s loop. The framework’s effectiveness was evaluated using both real-world maps and large-scale simulation environments, with various levels of traffic density and heterogeneous network conditions. The experiments were designed to emphasize HRQSR’s effectiveness in future 6G vehicular communication systems. The HRQSR framework achieved success rates of 94.680%, 98.870%, and 98.920% in low, medium, and high traffic scenarios, respectively, while showing improvement over other methods in the same experiment.

With its resulting high performance, the HRQSR framework shows much potential if it were to be adopted for use in the 6G IoV infrastructure, leading to better and more robust autonomous vehicle V2X communication. Future work for the team includes integrating energy and carbon efficiency objectives, extending the Q-learning mechanism to a multi-agent setting for collaborative intelligence with other points of interest, and real-world validation of the framework via the physical vehicular network.
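For readers unfamiliar with Q-learning, the sketch below shows the standard tabular update rule applied to next-hop selection. It is a generic textbook form, not the hierarchical HRQSR algorithm from [17]: the state and action encoding (node names), the learning rate `alpha`, the discount factor `gamma`, and the reward value are all assumptions for illustration.

```python
from collections import defaultdict
from typing import DefaultDict, Hashable, Sequence, Tuple

# Q-table keyed by (current node, next hop); unseen pairs default to 0.0.
QTable = DefaultDict[Tuple[Hashable, Hashable], float]


def q_update(q: QTable, state: Hashable, action: Hashable, reward: float,
             next_state: Hashable, next_actions: Sequence[Hashable],
             alpha: float = 0.1, gamma: float = 0.9) -> float:
    """Apply Q(s,a) <- Q(s,a) + alpha * (r + gamma * max_a' Q(s',a') - Q(s,a))."""
    best_next = max((q[(next_state, a)] for a in next_actions), default=0.0)
    td_target = reward + gamma * best_next
    q[(state, action)] += alpha * (td_target - q[(state, action)])
    return q[(state, action)]


def greedy_next_hop(q: QTable, state: Hashable,
                    actions: Sequence[Hashable]) -> Hashable:
    """Pick the neighbor with the highest learned Q-value."""
    return max(actions, key=lambda a: q[(state, a)])
```

In a routing setting, the reward would typically combine objectives such as delay, energy efficiency, and reliability into one scalar; how HRQSR weights these is not specified here, so the sketch leaves the reward as an input.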

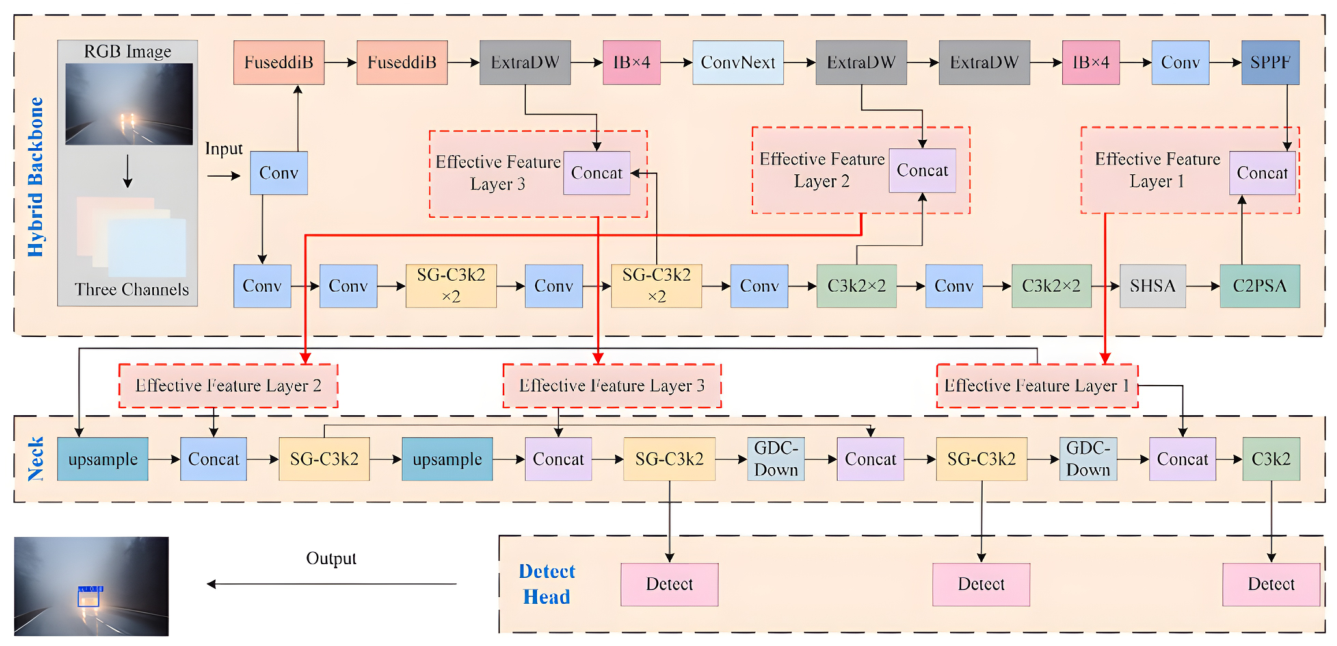

H. Yang [19] et al. have worked on improving target detection accuracy during low-visibility environmental conditions utilizing a hybrid backbone network and multi-feature fusion. The method integrates the MobileNetv4 neural network with the YOLO11 backbone feature network. This hybrid backbone module also incorporates the Single-Head Self-Attention (SHSA) module, reducing computational complexity and enhancing the model’s sensitivity, which is necessary in low-visibility conditions such as fog, rain, and nighttime. The neck portion of the architecture utilizes GDC-Down, a novel downsampling structure, along with a redesigned SG-C3k2 module that combines the C3k and Bottleneck modules. This allows for better object recognition within low-visibility scenarios. The detection head then operates on the feature maps created by the neck. The complete architecture is shown in Figure 7.

To test the proposed architecture, the KITTI, Real-Time Traffic Surveillance (RTTS), and BDD_100k datasets were used, with a particular focus on data consisting of low-visibility scenarios such as rain, haze, fog, and nighttime. The proposed method was tested against several other YOLO models, and each method was evaluated on precision (P), recall (R), and mean average precision (mAP). Precision quantifies the proportion of correctly predicted targets among all object detections, recall quantifies the proportion of true positive targets successfully identified by the model among all actual positive instances, and mean average precision is the arithmetic mean, across categories, of the area under the precision-recall curve. The proposed method showed an improvement over the other methods of object detection, namely a 2.68%, 1.52%, and 1.64% improvement in mAP on the BDD_100k, KITTI, and RTTS datasets, respectively. Future work for the proposed method includes further reducing the model’s complexity and improving real-time performance, specifically under adverse weather conditions.

X. He [22] et al. have worked on developing a neurologically inspired framework for use during AI-driven autonomous vehicle operation. The team has based their model on the amygdala, a section of the brain involved in feeling, controlling, and responding to fear, so that the vehicle may operate as cautiously as an actual human driver would. The Fear-Neuro-Inspired Reinforcement Learning (FNI-RL) framework is composed of the adversarial imagination technique, which simulates worst-case situations within the model, and the custom Fear-Constrained Actor-Critic (FC-AC) algorithm. The framework was tested against several state-of-the-art AI agents and 30 human participants. Testing was done in the Simulation of Urban MObility (SUMO) package. Overall, the FNI-RL framework outperformed the AI models and matched the performance of the human drivers in several of the critical safety scenarios, showing much promise. Future work involves further improving the response of the framework and eventually testing it outside of simulations.

R. Gutiérrez-Moreno [23] et al. have worked on developing a deep learning algorithm that seeks to provide an enhanced Decision Making (DM) module within the autonomous driving stack. The DM module has four layers. Layer 1 (Perception) focuses on receiving data via the sensors and generating the position and velocity of surrounding objects. Layer 2 (Tactical) defines a tactical trajectory based on the 3D map made by the previous layer and lays the foundation for routing and navigation. Layer 3 (Strategy) carries out the behavioral planning, making the high-level decisions. Layer 4 (Operative) combines the predicted trajectory and the decided actions, calculating the driving commands. The algorithm was tested across four key scenarios: crossroads, merges, roundabouts, and lane changes. It was also tested against the CARLA Autopilot and the AD stack Techs4AgeCar architecture. The proposed architecture outperformed the Techs4AgeCar architecture, but could not outperform the CARLA Autopilot. The team emphasizes that this architecture is a proof of concept, intended to show that a classical implementation of deep reinforcement learning can be integrated into an autonomous driving architecture. Further work would focus on refining the architecture’s accuracy and response.

S. Grigorescu [24] et al. have worked on developing an AI-based operating system for use with autonomous applications, called CyberCortex. The operating system allows several operating nodes to talk with one another and with a centralized high-performance server. Sensory and control data is streamed to the server for use in training the AI algorithms. The trained algorithms are then deployed back to the nodes for improved autonomous operation. The OS has two main components. The first is an inference system which runs in real time on the embedded hardware, utilizing DataBlock. The second, the dojo, runs on a high-powered computer in the cloud and handles the design, training, and deployment of the AI algorithms. The OS’s performance was measured on GPS data from the CARLA driving simulator. CyberCortex was able to outperform its competitor, the industry-standard Robot Operating System (ROS), showing a lower Root Mean Square Error (RMSE) across the experiments. Further work will focus on improving the modules’ interdependencies and sampling rate.

H. Zhang [26] et al. have worked on developing an improved noise-robust framework. The Noise Robust Mixture of Experts (NRMoE) framework makes use of a noise-injection pipeline which injects noise during training, allowing the model to become more noise-robust. In addition, an adaptive gating network is combined with an expert network to encode input sequences using a 2D convolutional block followed by a Squeeze-and-Excitation module for feature readability. The expert network, made of two heterogeneous Gated Recurrent Unit (GRU) models, then provides weighted outputs used for prediction results. Together, the noise-resistant training methods and the gating/expert networks form the proposed model. The NRMoE framework was tested on several datasets, both noise-free and with Gaussian noise, using RMSE as the performance metric. NRMoE achieved a much lower RMSE than several state-of-the-art frameworks, including CNN-LSTM-MA and Seq2seq-Att. Future work will focus on optimizing the model structure, improving the prediction performance, and designing further noise-robust control algorithms.
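The two building blocks mentioned above, Gaussian noise injection and RMSE evaluation, can be sketched as follows. This is a generic illustration, not the NRMoE pipeline from [26]: the function names and the noise level `sigma` are assumptions, and the sketch works on plain Python lists rather than on batched tensors.

```python
import math
import random
from typing import List, Sequence


def inject_gaussian_noise(sequence: Sequence[float], sigma: float,
                          rng: random.Random) -> List[float]:
    """Return a copy of the sequence with zero-mean Gaussian noise added."""
    return [x + rng.gauss(0.0, sigma) for x in sequence]


def rmse(predicted: Sequence[float], actual: Sequence[float]) -> float:
    """Root Mean Square Error between two equally long sequences."""
    if len(predicted) != len(actual) or not actual:
        raise ValueError("sequences must be non-empty and of equal length")
    squared_error = sum((p - a) ** 2 for p, a in zip(predicted, actual))
    return math.sqrt(squared_error / len(actual))
```

During training, a clean input sequence would be passed through `inject_gaussian_noise` (with a seeded `random.Random` for reproducibility) before being fed to the model, while `rmse` would compare the model's predictions with the clean targets.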

A. Abiko [27] et al. have worked on developing a novel generative AI framework for detecting vulnerabilities within Connected Autonomous Electric Vehicle (CAEV) software components and communication protocols. The framework, called GenSecure-CAEV, has its architecture based on the transformer-style Large Language Model GPT-3, which was tuned on a corpus built specifically for CAEV cyber-security scenarios and contexts. The corpus is derived from three primary sources: AUTOSAR-Adaptive, CAN/V2X communication logs, and security advisories and exploit write-ups. To evaluate the framework, the team measured effectiveness in detecting memory safety and protocol logic vulnerabilities, decrease in time-to-detection, scalability-accuracy trade-offs for CAEV codebases, and integration into automotive security networks. Three datasets were used in the simulations: the AUTOSAR vulnerability dataset, the CAN Bus Intrusion Detection Dataset (IDD), and the CARLA Autonomous Driving Simulation Data. The framework outperformed several peer frameworks, achieving F1 scores of 96.3% and 95.8% for AUTOSAR and CAN Bus IDD, respectively, and reducing time-to-detection to 3.8 h for AUTOSAR and 2.5 h for CAN Bus IDD. Future work on the framework can extend its scope to machine-learning vulnerabilities and incorporate continuous learning via real-time threat intelligence.

B. Lamichhane [28] et al. have worked on developing a novel roadside sensor network for object and threat perception and detection for autonomous vehicles in adverse environments. Utilizing infrastructure-based sensors such as cameras, LIDARs, radar, and weather sensors, the network uses context-sensitive fusion methodologies to dynamically assess sensor reliability in real time. The network then communicates with autonomous vehicles to provide them with threat detection support in heavy weather conditions where their onboard sensors might encounter issues. The network was assessed in a CARLA autonomous driving simulation environment. It improved camera-LIDAR object detection accuracy by 74.4% and reduced collision rates during heavy rain conditions by 28%. Future work on the network will involve adding more sensor modalities, such as infrared and ultrasonic, for better system resilience. Additionally, incorporating AI-driven sensor fusion techniques could further improve the network’s performance.
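One simple way to picture context-sensitive fusion is a reliability-weighted average of per-sensor detection confidences, sketched below. This is an assumption made for illustration and not the fusion method of [28]: the function name, the sensor names, and the idea that reliability is a weight between 0 and 1 derived from current weather are all hypothetical.

```python
from typing import Dict


def fuse_confidences(confidences: Dict[str, float],
                     reliabilities: Dict[str, float]) -> float:
    """Combine per-sensor detection confidences, weighted by sensor reliability.

    Both dictionaries are keyed by sensor name. A sensor with reliability 0.0
    (for example, a camera blinded by heavy rain) contributes nothing.
    """
    total_weight = sum(reliabilities[name] for name in confidences)
    if total_weight == 0.0:
        return 0.0  # no trustworthy sensor is available
    weighted_sum = sum(confidences[name] * reliabilities[name]
                       for name in confidences)
    return weighted_sum / total_weight
```

For instance, if heavy rain lowers the camera's reliability to 0.1 while the radar stays at 0.9, a confident radar detection dominates the fused result even when the camera barely sees the object.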

S. Sheng [29] et al. have worked on developing a low-cost pipeline that provides real-time updates to HD roadwork maps for autonomous vehicles. The pipeline consists of three major parts. First, a roadworks sign recognition model passes the input image of a road sign through text and image encoders fine-tuned from a pre-trained CLIP model, and the encoded image is then passed to the prediction component. Next, the detected roadworks signs are converted to real-world coordinates using distortion coefficients, an external reference matrix, and coordinate transformation formulas. Finally, once the real-world coordinates are determined, the pipeline updates the OpenDRIVE file with the new environment information. The pipeline was tested on a dataset of 3752 images. The model showed a 97% recognition rate and an RMSE of less than 1.2 m for positional accuracy. Future work will focus on incorporating more detailed roadworks information into the system and testing the real-time map updating capabilities on a real-life road.

T. Yufei [30] et al. have worked on a multi-modal combinatorial approach to mitigating traffic congestion and increasing safety and efficiency for autonomous vehicles. The model utilizes speed prediction by way of a Sparrow Search Algorithm (SSA) optimized Long Short-Term Memory (LSTM) network. The SSA-LSTM model can accurately determine the speed trends of vehicles in the near future and can also provide input to the Adaptive Cruise Control (ACC) in the system. Using the data from the SSA-LSTM, the ACC can provide finer, more accurate distance control with regard to other vehicles on the road. This framework was tested across three simulations: one focusing on regular weekday morning traffic patterns; another on high-density congestion scenarios, to better determine efficiency in tight traffic; and a third on accident or temporary closure areas, to determine how the model responds to irregular traffic patterns and speeds. The SSA-LSTM framework was able to drive smoothly in the simulations by effectively determining the best distance to maintain between itself and its leader vehicle. When traffic was congested and irregular, the framework reduced the average queue length by 92.86% and the maximum queue length by 78.57%. Future work for the framework involves integrating more complex sequence modeling networks for predictive purposes and adding a reinforcement learning mechanism into the controller for better self-learning and self-tuning capabilities.
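To illustrate how a predicted leader speed can feed distance control, the sketch below uses a constant time-gap spacing policy, a common textbook form of ACC. It is not the controller from [30]: the standstill gap, time gap, gains, and acceleration limits are all assumed values, and the predicted leader speed is simply passed in where an SSA-LSTM output would be used.

```python
def desired_gap(ego_speed_mps: float,
                standstill_gap_m: float = 2.0,
                time_gap_s: float = 1.5) -> float:
    """Constant time-gap policy: desired gap grows linearly with ego speed."""
    return standstill_gap_m + time_gap_s * ego_speed_mps


def acc_acceleration(gap_m: float,
                     ego_speed_mps: float,
                     predicted_leader_speed_mps: float,
                     k_gap: float = 0.2,
                     k_speed: float = 0.5,
                     a_min: float = -3.0,
                     a_max: float = 2.0) -> float:
    """Compute a bounded acceleration command (m/s^2).

    The command reacts to the gap error and to the difference between the
    leader's predicted speed and the ego speed, then is clamped to the
    assumed comfort and braking limits [a_min, a_max].
    """
    gap_error = gap_m - desired_gap(ego_speed_mps)
    speed_error = predicted_leader_speed_mps - ego_speed_mps
    accel = k_gap * gap_error + k_speed * speed_error
    return max(a_min, min(a_max, accel))
```

Feeding a predicted rather than a measured leader speed lets the controller begin slowing before the leader has actually decelerated, which is the kind of anticipation the speed-prediction model is meant to enable.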