Submitted: 19 April 2026

Posted: 27 April 2026

Abstract

Keywords:

1. Introduction

2. Materials and Methods

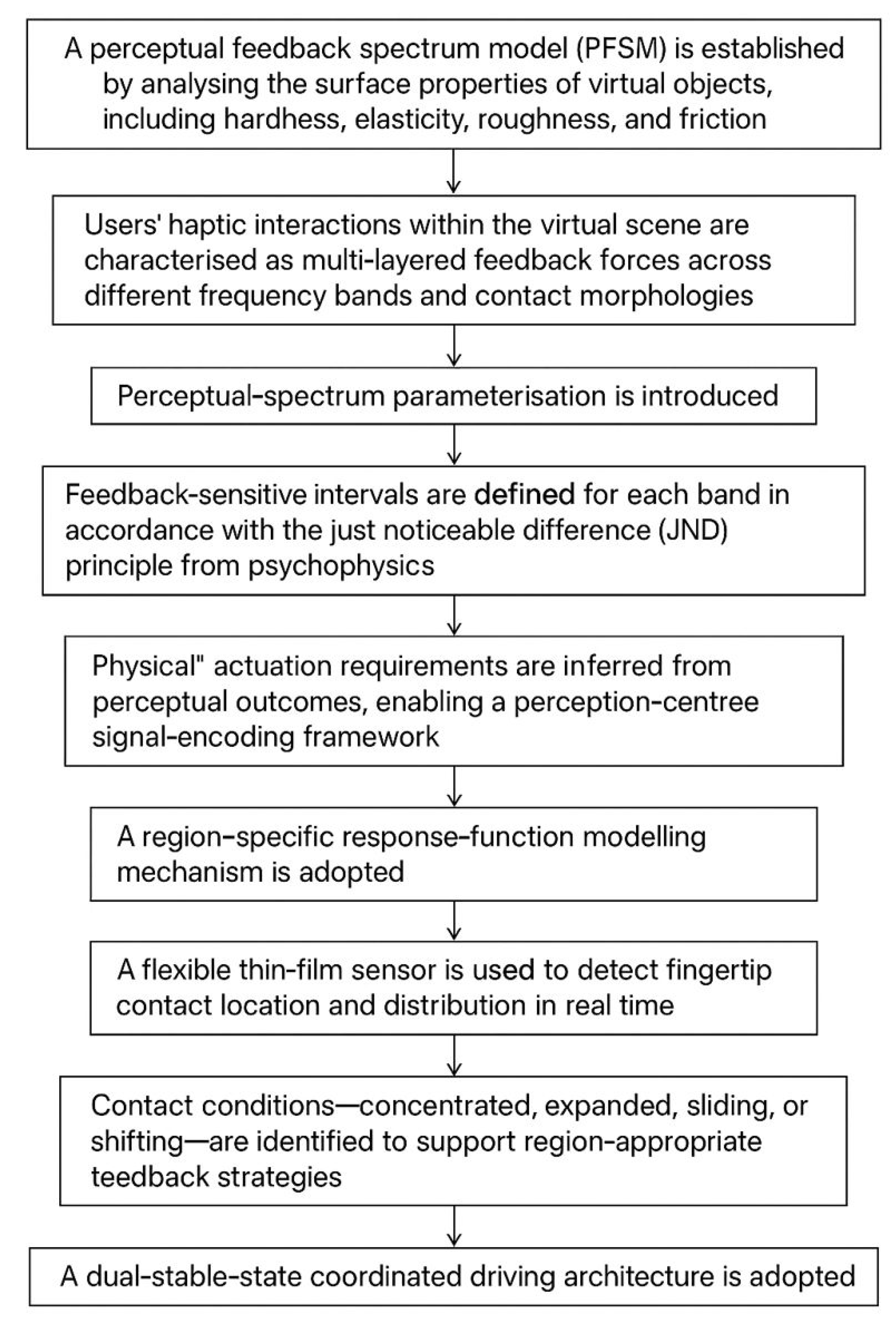

2.1. Perceptual Haptic Spectrum Model

2.2. Perceptual Spectrum Parameterisation

2.3. Region-Specific Response Functions

- Force sensitivity, defined as the magnitude of response to variations in applied pressure;

- Texture frequency sensitivity, referring to the ability to perceive high-frequency vibratory stimuli;

- Perceptual latency tolerance, reflecting subjective tolerance to response delays.
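The three parameters listed above could be grouped into one record per hand region. The following is a minimal sketch under stated assumptions: the class name `RegionResponse`, the field names, the millisecond unit for latency tolerance, and the placeholder values are all hypothetical and are not taken from the paper.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RegionResponse:
    """Hypothetical per-region parameter record (names and units are assumptions)."""
    region: str                      # illustrative label, e.g. "fingertip"
    force_sensitivity: float         # response magnitude to variations in applied pressure
    texture_freq_sensitivity: float  # ability to perceive high-frequency vibratory stimuli
    latency_tolerance_ms: float      # subjective tolerance to response delay (assumed in ms)

    def __post_init__(self):
        # Reject negative values; all three parameters are treated as non-negative here.
        for name in ("force_sensitivity", "texture_freq_sensitivity", "latency_tolerance_ms"):
            if getattr(self, name) < 0:
                raise ValueError(f"{name} must be non-negative")


# Placeholder values for illustration only; they are not measurements.
example = RegionResponse("fingertip", 1.0, 1.0, 20.0)
```

Keeping the record immutable means a region's parameters cannot be changed accidentally by downstream control code; whether the actual framework stores them this way is not stated in the source.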

2.4. Contact-State Classification and Predictive Feedforward Control

2.5. Dual-Actuation Hardware Architecture

3. Results: Prototype Demonstration in a Virtual Fabric Task

3.1. Prototype Platform and Demonstration Conditions

3.2. Demonstration of Concentrated Contact

3.3. Demonstration of Distributed Contact

3.4. Demonstration of Slip Contact

3.5. Summary of Demonstration Outcomes

4. Discussion

5. Conclusions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest


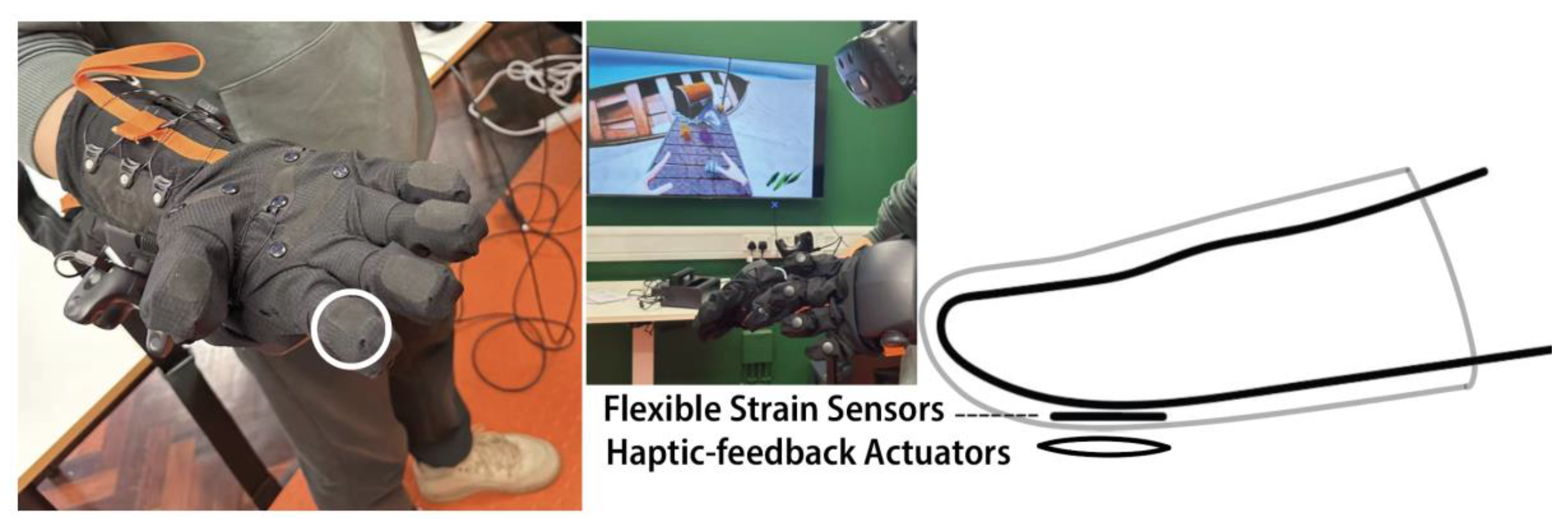

| Component | Physical role | Signal domain | Main function in the framework |

| HaptX Gloves G1 | Primary actuation | Low-frequency force support | Provides gross pressure, resistance, and compliance-related cues |

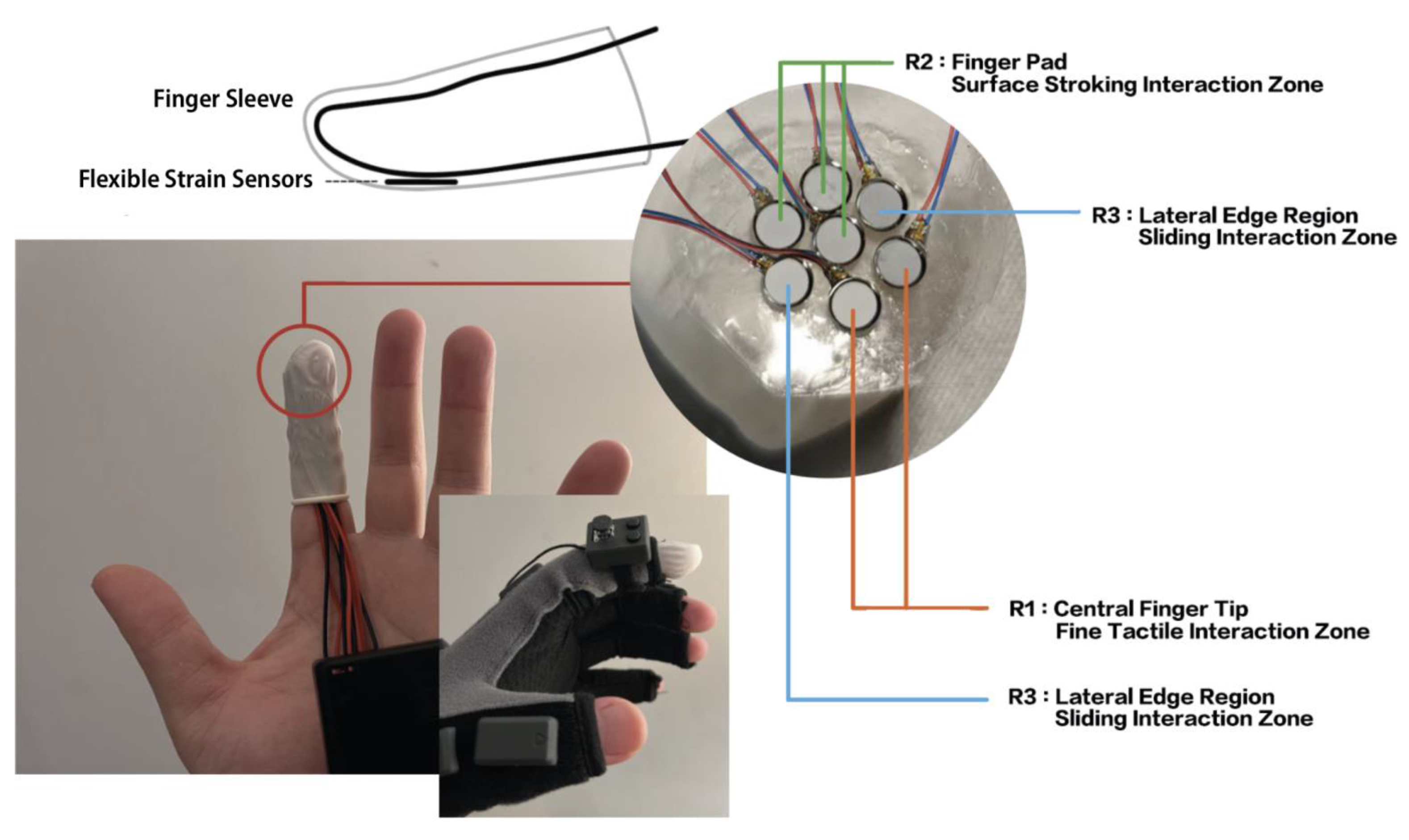

| Flexible strain-sensor array | Sensing | Pressure/deformation measurement | Detects contact location, area, pressure distribution, and temporal change |

| Local sleeve actuators | Secondary actuation | Mid-/high-frequency texture output | Delivers local roughness, boundary, and slip-related tactile cues |

| Motion-capture/VR tracking | Kinematic sensing | Pose, velocity, acceleration | Supports short-horizon predictive feedforward control |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).