Submitted: 22 April 2026

Posted: 23 April 2026


Abstract

Keywords:

Introduction

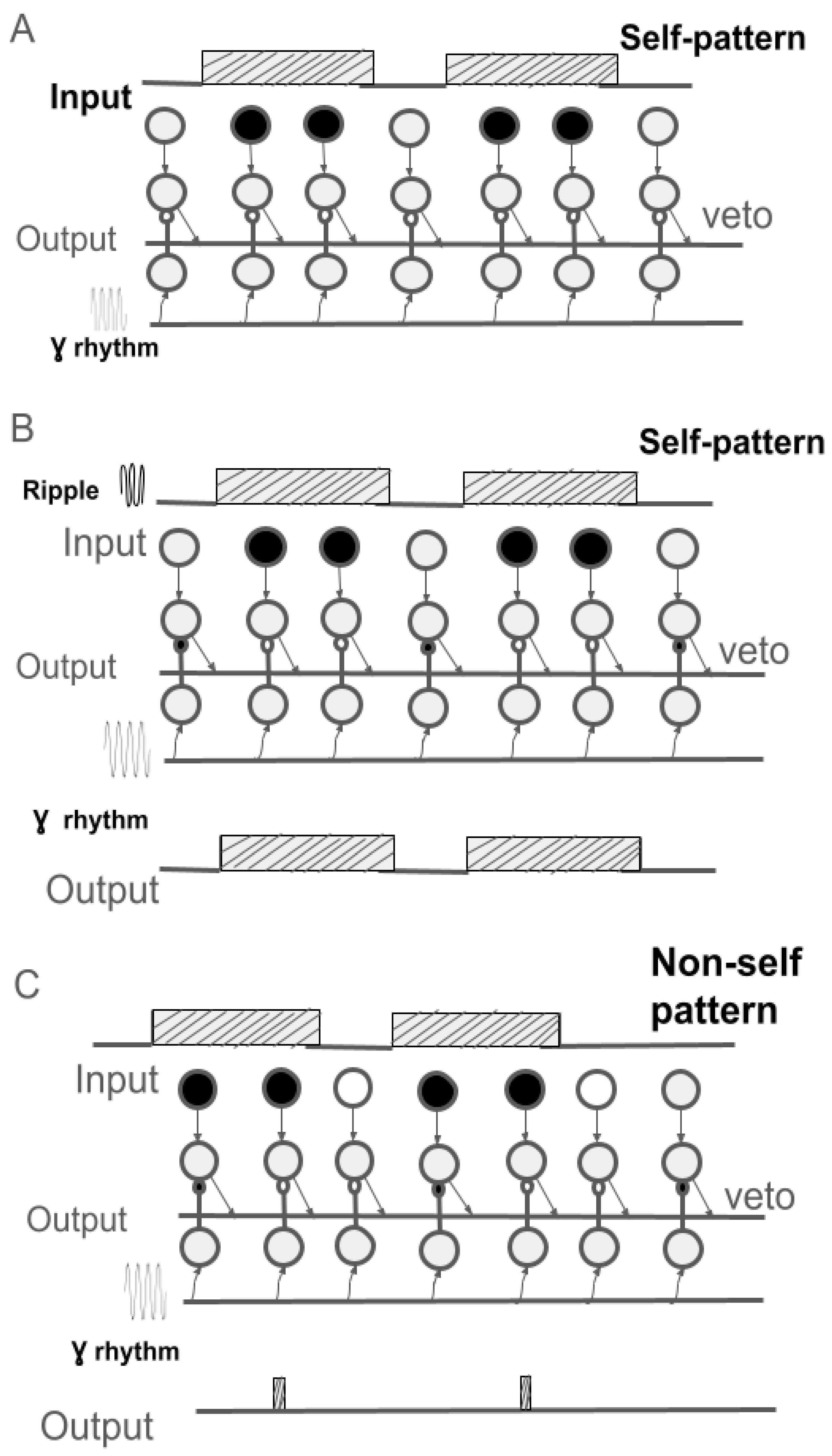

- Incorporation of inhibitory neurons, including "veto" interneurons, into the network architecture, enabling the construction of neural circuits capable of executing sophisticated processing functions (Levashov & Safiulina, 2025).

- Entrainment of the entire neural network via endogenous rhythms analogous to EEG rhythms, serving as a "clock frequency" akin to that in electronic systems (Levashov & Safiulina, 2026).

Modeling the Process of Visual Recognition in Living Systems

Magnocellular Model of Visual Recognition

- Defocusing (blurring) of the portion of the visual scene selected by central vision.

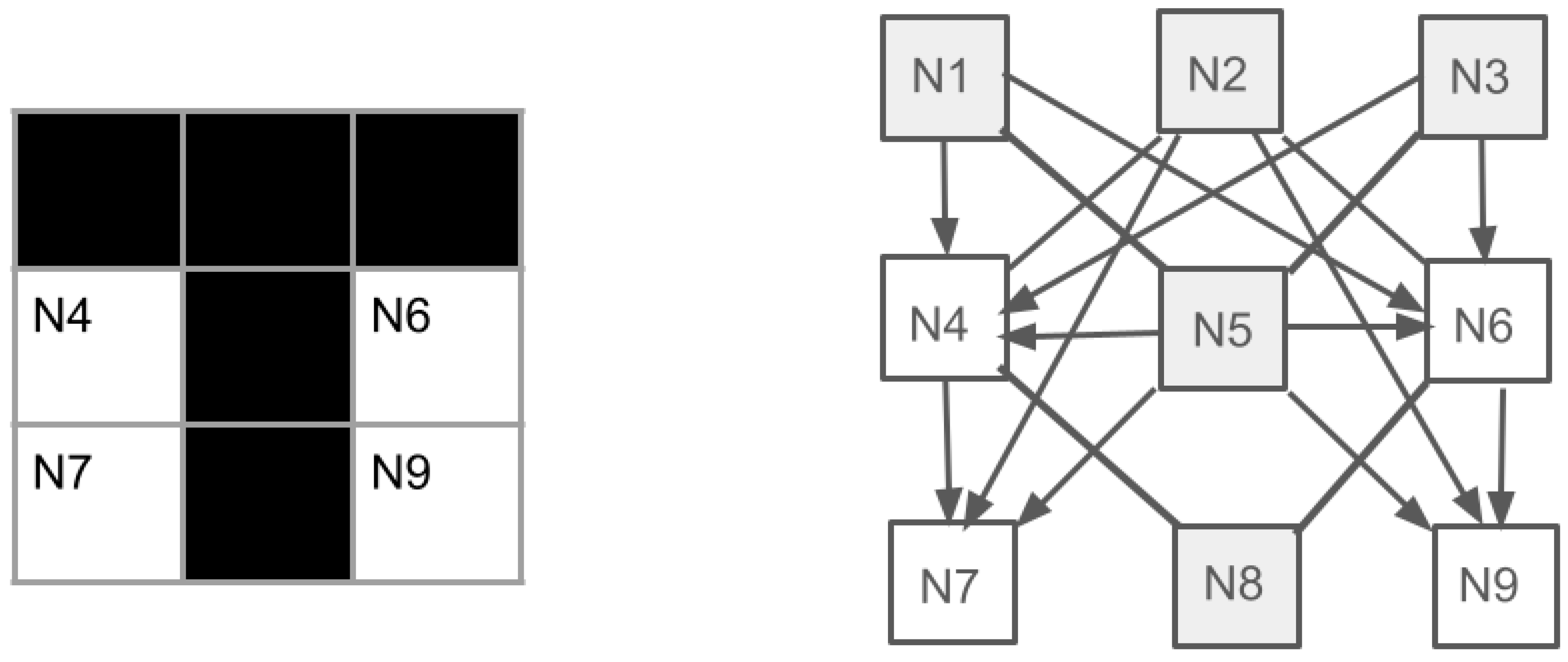

- Binarization and transformation of the image into a two-level "silhouette" that preserves the topology of the recognized object's shape.

- Mapping (matching by superimposition) with contextually relevant templates in memory.

- Selection of a single template based on the "winner-take-all" principle.

- The selected shape, as a visual hypothesis, is transmitted "down" to lower levels of the visual system and visualized.

- Thereby, the brain (frontal cortex) decides that the object is identified.

- Hypothesis verification is performed using subsequent saccades to presumed "areas of interest."

- The M-system is known to dominate in the left hemisphere (Okubo & Nicholls, 2005). That study found a left-hemisphere advantage in the temporal resolution of visual stimuli, indicating a key role of the M-system in interhemispheric asymmetry during visual information processing.

- Receptive fields of M-neurons in the retina and LGN are concentric and large; from a technical standpoint, they therefore perform an image blurring (low-pass filtering) operation (Zeki, 1993; Bar, 2003).

- According to Bullier (2001), the pattern of retinal activity is transmitted via the LGN directly to the prefrontal cortex, bypassing layers of "simple form detectors." Signals from the magnocellular layers of the LGN reach the cortex 10–20 ms faster than parvocellular signals, and the first responses in area MT (V5) appear approximately 35–45 ms after image presentation to the retina. Importantly, this route bypasses neurons with receptive fields tuned to lines, angles, and other forms, which preserves the topology of the processed pattern for mapping (see discussion of this issue in the Introduction).

- Adusei et al. (2024) found that feedback from areas V4 and MT to the macaque lateral geniculate nucleus is organized as parallel streams that specifically activate certain thalamic layers. This suggests that higher visual centers can directly modulate the transmission of visual information, i.e., it supports the "top-down" paradigm of visual recognition.

- According to M. Bar and colleagues, who studied the recognition process using combined fMRI and MEG measurements, the first area activated after image presentation to the retina is the orbitofrontal cortex of the left hemisphere (latency 130 ms), followed by the temporal cortex of the right hemisphere (latency 180 ms).
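The processing steps of the M-model listed at the start of this subsection (blurring, binarization into a silhouette, mapping onto templates, winner-take-all selection) can be sketched in code. This is a minimal illustration under stated assumptions, not the authors' implementation: the box blur, the mean-value threshold, the intersection-over-union overlap score, and all function names are hypothetical choices made only for this example.

```python
import numpy as np

def box_blur(img, radius=1):
    """Low-pass filter: separable box blur with zero padding (assumed stand-in for M-cell blurring)."""
    k = 2 * radius + 1
    kernel = np.ones(k) / k
    blur1d = lambda v: np.convolve(v, kernel, mode="same")
    out = np.apply_along_axis(blur1d, 0, np.asarray(img, dtype=float))
    return np.apply_along_axis(blur1d, 1, out)

def silhouette(img, radius=1):
    """Blur, then binarize into a two-level silhouette (threshold at the mean is an assumption)."""
    blurred = box_blur(img, radius)
    return blurred > blurred.mean()

def match_score(sil, template):
    """Superimpose silhouette and template; score overlap as intersection over union."""
    inter = np.logical_and(sil, template).sum()
    union = np.logical_or(sil, template).sum()
    return inter / union if union else 0.0

def recognize(img, templates, radius=1):
    """Score every template and pick a single winner (winner-take-all)."""
    sil = silhouette(img, radius)
    scores = {name: match_score(sil, t) for name, t in templates.items()}
    winner = max(scores, key=scores.get)
    return winner, scores

# Usage: two hypothetical templates on an 8x8 grid.
square = np.zeros((8, 8), dtype=bool)
square[2:6, 2:6] = True
bar = np.zeros((8, 8), dtype=bool)
bar[:, 3:5] = True
winner, scores = recognize(square.astype(float), {"square": square, "bar": bar})
print(winner, scores)
```

The sketch covers only the bottom-up part of the scheme; transmitting the winning hypothesis "down" and verifying it with saccades are not modeled here.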

Non-Hebbian Learning Principle in Engram Formation

- If one neuron is activated and a neighboring neuron is not activated, the activated neuron forms (activates) an inhibitory synapse on the neighboring neuron. Upon repeated activation, the synaptic weight increases by a small amount (delta).

- The process of memorizing the input pattern (learning) occurs during "reverberation" – rapid repetition of the current pattern at the input. We hypothesize that the frequency of such repetitions is set by an external rhythm, such as a "ripple" (150–200 Hz), observed during memory consolidation in the hippocampus and prefrontal cortex.

- As a result of learning, the weights of the inhibitory synapses become so large that they can be considered "veto synapses" (prohibition), blocking signal transmission along the corresponding pathway.

- This learning procedure yields a kind of "imprint" of the current pattern in the form of an "individual lock." Subsequently, such a "lock" is "opened" only upon the arrival of the "self" pattern or a part thereof at the input.
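The learning rule described above can be illustrated with a toy model. The following is a minimal sketch, not the authors' simulation. It assumes a binary pattern, all-to-all connectivity (every other neuron counts as a "neighbor"), and a fixed veto threshold. The `min_fraction` parameter, which stands in for "large fragments", and all function names are hypothetical.

```python
import numpy as np

def learn_engram(pattern, delta=0.05, repetitions=40):
    """Strengthen inhibitory synapses from active onto inactive neurons.

    Each reverberation cycle adds `delta` to w[i, j] wherever neuron i is
    active and neuron j is inactive in the presented pattern.
    """
    p = np.asarray(pattern, dtype=bool)
    w = np.zeros((p.size, p.size))
    active_to_inactive = np.outer(p, ~p)
    for _ in range(repetitions):          # rapid repeated presentation
        w[active_to_inactive] += delta
    return w

def lock_opens(w, probe, veto_threshold=1.0, min_fraction=0.5):
    """Return True if the probe passes the learned 'lock'.

    Assumptions: a synapse acts as a veto once its weight reaches
    `veto_threshold`; the probe is blocked if any of its active neurons
    receives a veto from another active neuron; and the probe must cover
    at least `min_fraction` of the stored pattern.
    """
    x = np.asarray(probe, dtype=bool)
    veto = w >= veto_threshold
    vetoed = (veto & x[:, None]).any(axis=0)   # targets hit by active senders
    blocked = bool((x & vetoed).any())
    stored = veto.any(axis=1)                  # neurons that send veto synapses
    coverage = (x & stored).sum() / max(int(stored.sum()), 1)
    return (not blocked) and bool(coverage >= min_fraction)

# Usage: store one pattern, then probe with the whole, a fragment, and a foreign pattern.
stored = [1, 1, 1, 1, 0, 0, 0, 0]
w = learn_engram(stored)
print(lock_opens(w, stored))                     # the "self" pattern
print(lock_opens(w, [1, 1, 1, 0, 0, 0, 0, 0]))   # a large fragment of it
print(lock_opens(w, [1, 1, 0, 0, 1, 1, 0, 0]))   # a pattern with foreign elements
```

In this toy version a pattern containing neurons outside the stored set is blocked because those neurons receive veto inhibition from the active members of the stored pattern, while the stored pattern and its large fragments trigger no veto at all.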

Conclusions from Computer Modeling Results

- The modeling demonstrated that the template (engram) formation procedure proposed in this article solves this problem, provided that two additional mechanisms are present: rapid repeated pattern presentation (ripple-reverberation) and a high-frequency rhythm (e.g., gamma rhythm) that activates the key elements of the neural network, namely the inhibitory synapses that become "veto synapses" (prohibitory) by the end of learning.

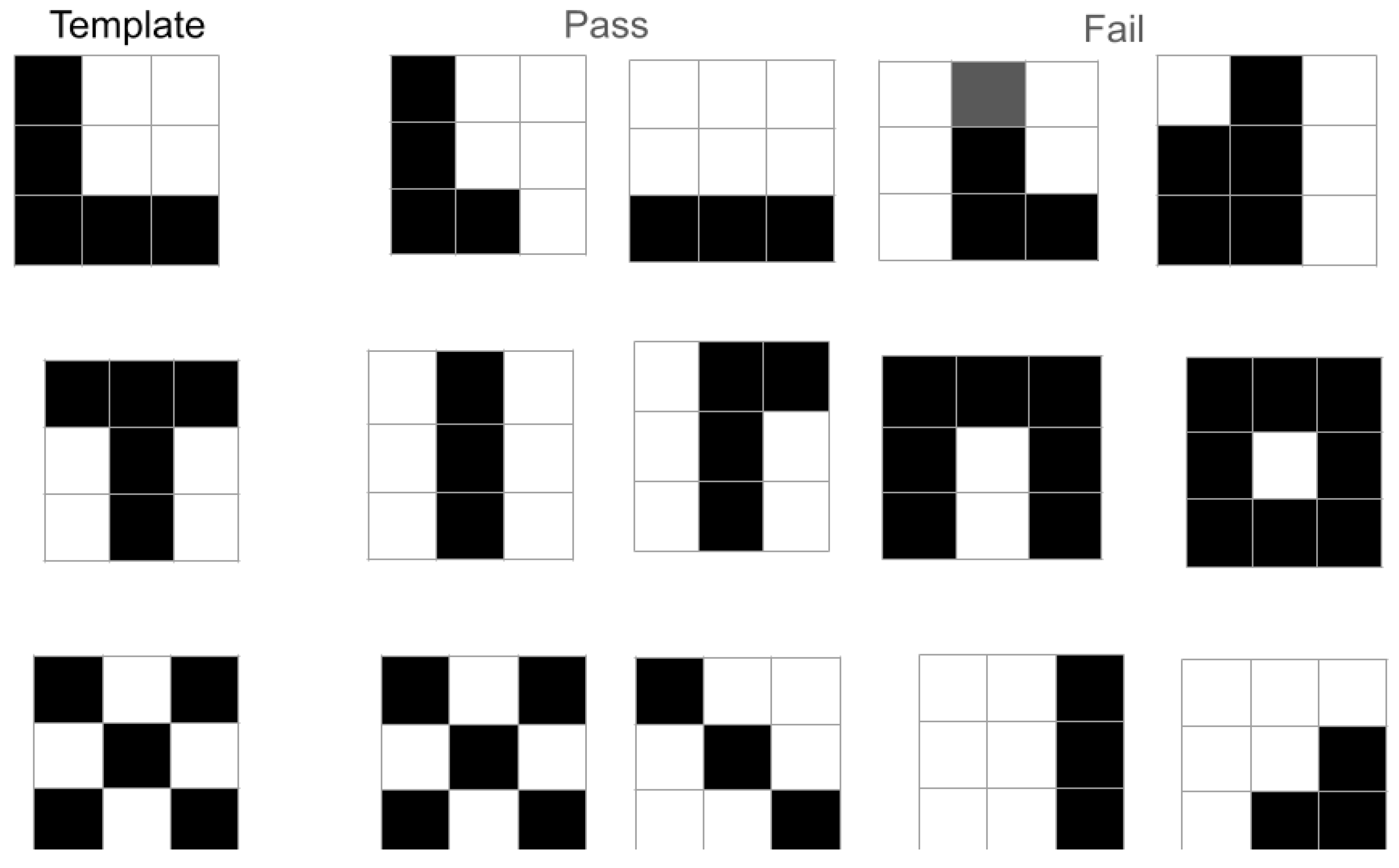

- A feature of this procedure is that the formed local neural network "passes" both the entire pattern and its large fragments (parts of the whole). Such fragments can, in essence, be considered local features. Thus, our proposed M-model of recognition acquires a new form – mapping combined with local feature extraction.

- The ability to generate plausible hypotheses based on a fragment of the whole pattern implies good noise immunity of such an algorithm under conditions of visual noise (interference), as well as when analyzing real complex scenes where object occlusion (partial overlap of the contours of the target object and distractors) is common.

General Discussion

Application

Conclusions

- The difficulty of manipulating activity patterns in real neural networks is discussed, as they are not holistic patterns but merely a mosaic of activated and inactivated neurons. A solution is proposed in the form of local synchronization of network neuron activation, which keeps the processing sequential but makes it very fast.

- A neural model of "non-Hebbian" engram formation in memory is proposed, based on the strengthening of inhibitory synapses up to a "veto" (prohibition) state. As a result, "neural locks" for "self" patterns are formed in memory.

- The task of retaining the required engram in memory is solved by means of hypothetical "ripple-reverberation" – rapid repeated presentation of the to-be-remembered pattern.

- Computer modeling demonstrated that the formed "lock"-type engrams "pass" (respond to) both the entire pattern and its large fragments (parts of the whole). Such fragments can be considered local features. Consequently, this recognition model acquires a new form – as a variant of the matching model incorporating local feature extraction.

- The ability to generate plausible hypotheses based solely on a fragment of the target pattern implies good noise immunity of such a scheme under conditions of visual noise, as well as when analyzing real complex scenes with occlusion (where object contours partially overlap).

- The plausibility of this model of engram formation for visual recognition could be tested in experiments involving rapid presentation of a fragment of a meaningful shape against random noise. According to our prediction, subjects would report perceiving the entire shape completely.
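A stimulus for the proposed experiment (a fragment of a meaningful shape embedded in random noise) could be generated along the following lines. This is only an illustrative sketch. Taking the leftmost columns of the shape as the fragment, the noise density, and the function name `fragment_in_noise` are assumptions made for this example, not parameters specified in the article.

```python
import numpy as np

def fragment_in_noise(shape_mask, keep_fraction=0.5, noise_density=0.2, seed=0):
    """Build a binary stimulus: part of a shape plus random pixel noise.

    keep_fraction  - share of the shape's columns (from the left) kept as the fragment
    noise_density  - probability that any pixel is switched on as noise
    Returns (stimulus, fragment), both boolean arrays.
    """
    rng = np.random.default_rng(seed)
    shape = np.asarray(shape_mask, dtype=bool)
    cols = np.flatnonzero(shape.any(axis=0))         # columns occupied by the shape
    n_keep = max(1, int(round(keep_fraction * cols.size)))
    fragment = np.zeros_like(shape)
    fragment[:, cols[:n_keep]] = shape[:, cols[:n_keep]]
    noise = rng.random(shape.shape) < noise_density  # random background pixels
    return fragment | noise, fragment

# Usage: half of a square shown against 20% pixel noise.
square = np.zeros((8, 8), dtype=bool)
square[2:6, 2:6] = True
stimulus, fragment = fragment_in_noise(square)
print(stimulus.astype(int))
```

Fixing the seed keeps the noise field reproducible across trials; varying it would give a different noise sample for the same fragment.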

Author Contributions

Funding

Code Availability

Conflicts of Interest

References

- Kok, E. P. Visual agnosia: syndromes of disorders of higher visual functions in unilateral lesions of the temporo-occipital and parietal-occipital regions of the brain. Moscow: URSS: LENAND Publishing House. 2022. 224 p.

- Levashov O. V. Attractors of visual attention and analysis of visual scenes. Sensory Systems. 2018. 32(3). 200–209.

- Levashov O.V. Artificial Vision. Artificial Intelligence. Neural Models of Living Sensory Systems. Moscow: URSS: LENAND Publishing House. 2022. 246 p.

- Levashov O. V., Safiulina V. F. Models of visual recognition and the problem of constancy of the shape of a visual pattern during its processing in the cortex. Asymmetry. 2025. 19(1): 6–17.

- Pozin N.V., Lyubinsky I.A., Levashov O.V. et al. (Pozin N.V., ed.) Elements of the theory of biological analyzers. Moscow: Nauka. 1978. 360 p.

- Adusei M, Callaway EM, Usrey WM, Briggs F. Parallel Streams of Direct Corticogeniculate Feedback from Mid-level Extrastriate Cortex in the Macaque Monkey. eNeuro. 2024. 11(3):ENEURO.0364-23.2024.

- Agnes EJ, Vogels TP. Co-dependent excitatory and inhibitory plasticity accounts for quick, stable and long-lasting memories in biological networks. Nat Neurosci. 2024. 27:964–974.

- Bar M. A cortical mechanism for triggering top-down facilitation in visual object recognition. J Cogn Neurosci. 2003. 15(4):600-9.

- Bergoin R, Torcini A, Deco G, Quoy M, Zamora-López G. Inhibitory neurons control the consolidation of neural assemblies via adaptation to selective stimuli. Sci Rep. 2023. 13(1):6949.

- Biederman I. Recognition-by-components: a theory of human image understanding. Psychol Rev. 1987. 94(2):115-147.

- Bullier J. Integrated model of visual processing. Brain Res Brain Res Rev. 2001. 36(2-3):96-107.

- Elstrott J, Feller MB. Vision and the establishment of direction-selectivity: a tale of two circuits. Curr Opin Neurobiol. 2009. 19(3):293-7.

- Grossberg S. Adaptive pattern classification and universal recoding: I. Parallel development and coding of neural feature detectors. Biol Cybern. 1976. 23(3):121-34.

- Hebb DO. The Organization of Behavior: A Neuropsychological Theory. Wiley. 1949.

- Hubel DH. Eye, brain, and vision. New York: Scientific American Library. 1988.

- Iwata T, Yanagisawa T, Ikegaya Y, Smallwood J, Fukuma R, Oshino S, Tani N, Khoo HM, Kishima H. Hippocampal sharp-wave ripples correlate with periods of naturally occurring self-generated thoughts in humans. Nat Commun. 2024. 15(1):4078.

- Janssen MA, Chen HT, Tritsch NX, van der Meer MAA. Ventral Striatal Dopamine Increases following Hippocampal Sharp-Wave Ripples. bioRxiv [Preprint]. 2025. 2025.07.24.666687.

- Khodagholy D, Gelinas JN, Buzsáki G. Learning-enhanced coupling between ripple oscillations in association cortices and hippocampus. Science. 2017. 358(6361):369-372.

- Kveraga K, Ghuman AS, Bar M. Top-down predictions in the cognitive brain. Brain Cogn. 2007. 65(2):145-68.

- Levashov OV, Safiulina VF. The Role of Synchronization of Neural Modules in Pattern Processing in the Visual System. bioRxiv. 2026. https://doi.org/10.64898/2025.12.29.696937.

- Malcolm GL, Henderson JM. The effects of target template specificity on visual search in real-world scenes: evidence from eye movements. J Vis. 2009. 9(11):8.1-13.

- Marr D. Vision: A computational investigation into the human representation and processing of visual information. San Francisco, CA: W.H. Freeman. 1982.

- Mishra A, Akkol S, Espinal E, Markowitz N, Tostaeva G, Freund E, Mehta AD, Bickel S. Hippocampal sharp wave ripples and coincident cortical ripples orchestrate human semantic networks. bioRxiv [Preprint]. 2024. 2024.04.10.588795.

- Okubo M, Nicholls ME. Hemispheric asymmetry in temporal resolution: contribution of the magnocellular pathway. Psychon Bull Rev. 2005. 12(4):755-9.

- Pfeffer CK, Xue M, He M, Huang ZJ, Scanziani M. Inhibition of inhibition in visual cortex: the logic of connections between molecularly distinct interneurons. Nat Neurosci. 2013. 16(8):1068-76.

- Robinson HL, Todorova R, Nagy GA, Gruzdeva A, Paudel P, Oliva A, Fernandez-Ruiz A. Large sharp-wave ripples promote hippocampo-cortical memory reactivation and consolidation during sleep. Neuron. 2025. 114(2):226-236.e6.

- Rochester N, Holland J, Haibt L, Duda W. Tests on a cell assembly theory of the action of the brain, using a large digital computer. IRE Transactions on Information Theory. 1956. 2(3):80-93.

- Trevelyan AJ, Sussillo D, Watson BO, Yuste R. Modular propagation of epileptiform activity: evidence for an inhibitory veto in neocortex. J Neurosci. 2006. 26(48):12447-55.

- Yang W, Sun C, Huszár R, Hainmueller T, Kiselev K, Buzsáki G. Selection of experience for memory by hippocampal sharp wave ripples. Science. 2024. 383(6690):1478-1483.

- Zeki S. A Vision of the Brain. Oxford: Blackwell Scientific Publications. 1993. 224 p.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).