1. Introduction

In everyday classroom practice, teachers spend countless hours grading short-answer questions, a task that is not only time-consuming but also prone to inconsistency due to fatigue or subjective judgment. This burden limits teachers’ ability to provide timely, personalized feedback, which is critical for student learning. While automated short-answer grading (ASAG) offers a promising solution, existing methods struggle with three practical challenges that hinder their adoption in real classrooms. First, student answers are diverse. The same correct idea can be expressed in countless ways, yet most automated scoring models rely on limited reference answers, leading to frequent misclassifications (Tan et al., 2022). For example, in a biology short-answer question, some students may express “mRNA leaves the nucleus” as “mRNA goes out of the nucleus”, which is semantically correct but easily flagged as incorrect by keyword-matching systems. Second, score boundaries are ambiguous. Teachers often encounter partially correct or creatively worded answers that fall into the “gray area” between score levels, making precise classification difficult. Third, data distributions are imbalanced. In real classrooms, high-scoring answers are relatively rare, resulting in training data that biases models toward lower scores and reduces their ability to recognize excellent responses (Kaldaras et al., 2022). These challenges not only reduce scoring accuracy but also undermine teachers’ trust in automated grading systems.

To address these challenges, researchers have explored various approaches to improving ASAG. The following sections review prior work on text classification methods, reference answer set construction, and data augmentation, leading to the formulation of our research hypotheses.

1.1. Text Classification Methods and Their Educational Limitations

In automated scoring research, text classification serves as the core technical framework. Early studies employed machine learning methods that relied on manually engineered features to build scoring models (Kumar et al., 2019; Saha et al., 2018; Sultan et al., 2016). These approaches are not only labor-intensive but also struggle to capture complex semantic relationships in student answers. Deep learning techniques, such as convolutional neural networks (CNNs) and long short-term memory networks (LSTMs), reduce the need for manual feature extraction but often fail to account for global semantic interactions (Alikaniotis et al., 2016; Huang et al., 2018; Riordan et al., 2017).

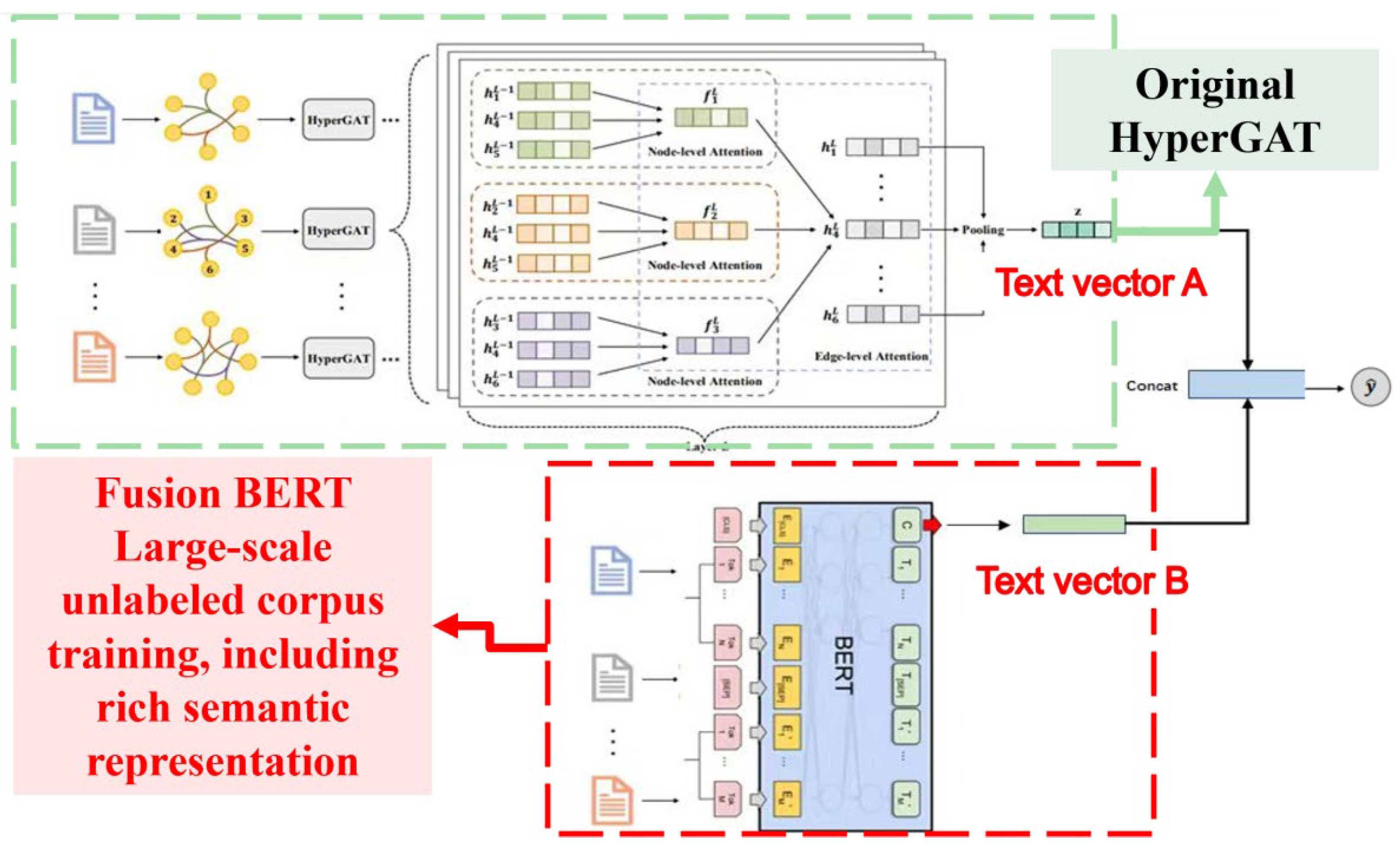

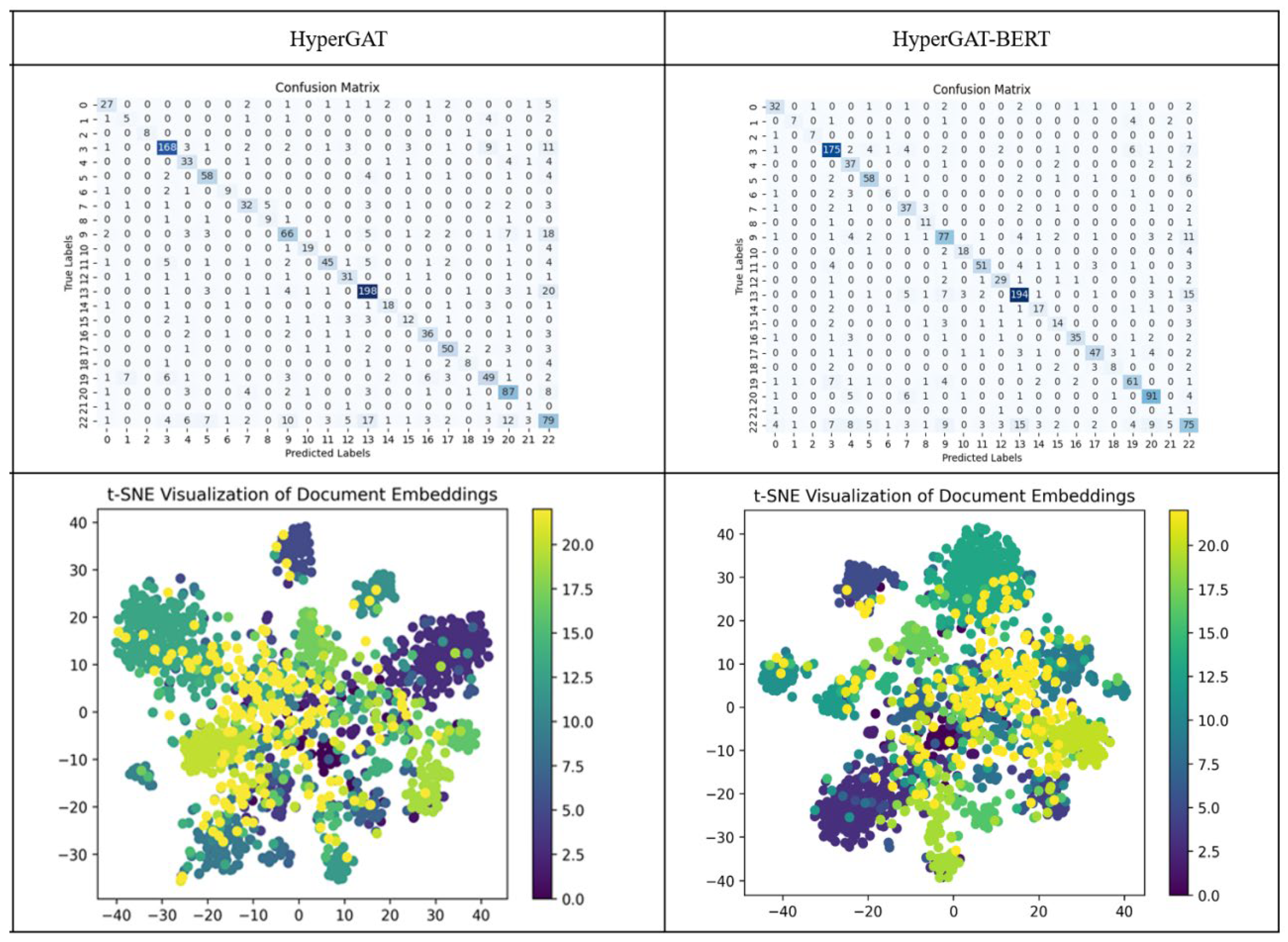

Recent studies have introduced graph convolutional networks (GCNs) to model global semantic structures in student answers (Tan et al., 2023). However, GCNs assume uniform importance among adjacent nodes, neglecting variations in how different words contribute to meaning (Gilmer et al., 2017). More importantly, traditional graphs are limited to pairwise connections, making it difficult to capture complex multi-word interactions in student answers. Hypergraphs address this limitation by allowing a single edge (hyperedge) to connect any number of nodes, enabling more effective modeling of high-order semantic relationships (Feng et al., 2019; Kim et al., 2020). Ding et al. (2020) proposed the HyperGraph Attention Network (HyperGAT), which achieved superior performance on text classification tasks compared to traditional methods, though its accuracy on the Ohsumed medical dataset remained modest at 0.69, indicating room for improvement.
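To make the contrast between pairwise edges and hyperedges concrete, the following minimal sketch builds a word-by-hyperedge incidence matrix in which each sentence of an answer forms one hyperedge. It is an illustration only: the function names, the one-hyperedge-per-sentence choice, and the second example sentence are assumptions, not the construction used in the cited work.

```python
from typing import List, Set, Tuple


def build_incidence(sentences: List[List[str]]) -> Tuple[List[str], List[List[int]]]:
    """Return (vocabulary, H) where H[i][j] = 1 if word i belongs to hyperedge j.

    Assumption for illustration: every sentence is treated as one hyperedge.
    """
    vocab = sorted({word for sent in sentences for word in sent})
    index = {word: i for i, word in enumerate(vocab)}
    H = [[0] * len(sentences) for _ in vocab]
    for j, sent in enumerate(sentences):
        for word in sent:
            H[index[word]][j] = 1
    return vocab, H


def pairwise_edges(sentences: List[List[str]]) -> Set[Tuple[str, str]]:
    """Co-occurrence edges an ordinary graph would need for the same sentences."""
    edges = set()
    for sent in sentences:
        words = sorted(set(sent))
        for a in range(len(words)):
            for b in range(a + 1, len(words)):
                edges.add((words[a], words[b]))
    return edges


# Hypothetical two-sentence answer (tokens already lower-cased).
answer = [
    ["mrna", "leaves", "the", "nucleus"],
    ["mrna", "binds", "the", "ribosome"],
]
vocab, H = build_incidence(answer)
edges = pairwise_edges(answer)
print(len(answer), "hyperedges versus", len(edges), "pairwise edges")
```

In this toy case two hyperedges carry the same co-occurrence information as eleven pairwise edges, and each hyperedge keeps the whole group of words together as a single unit.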

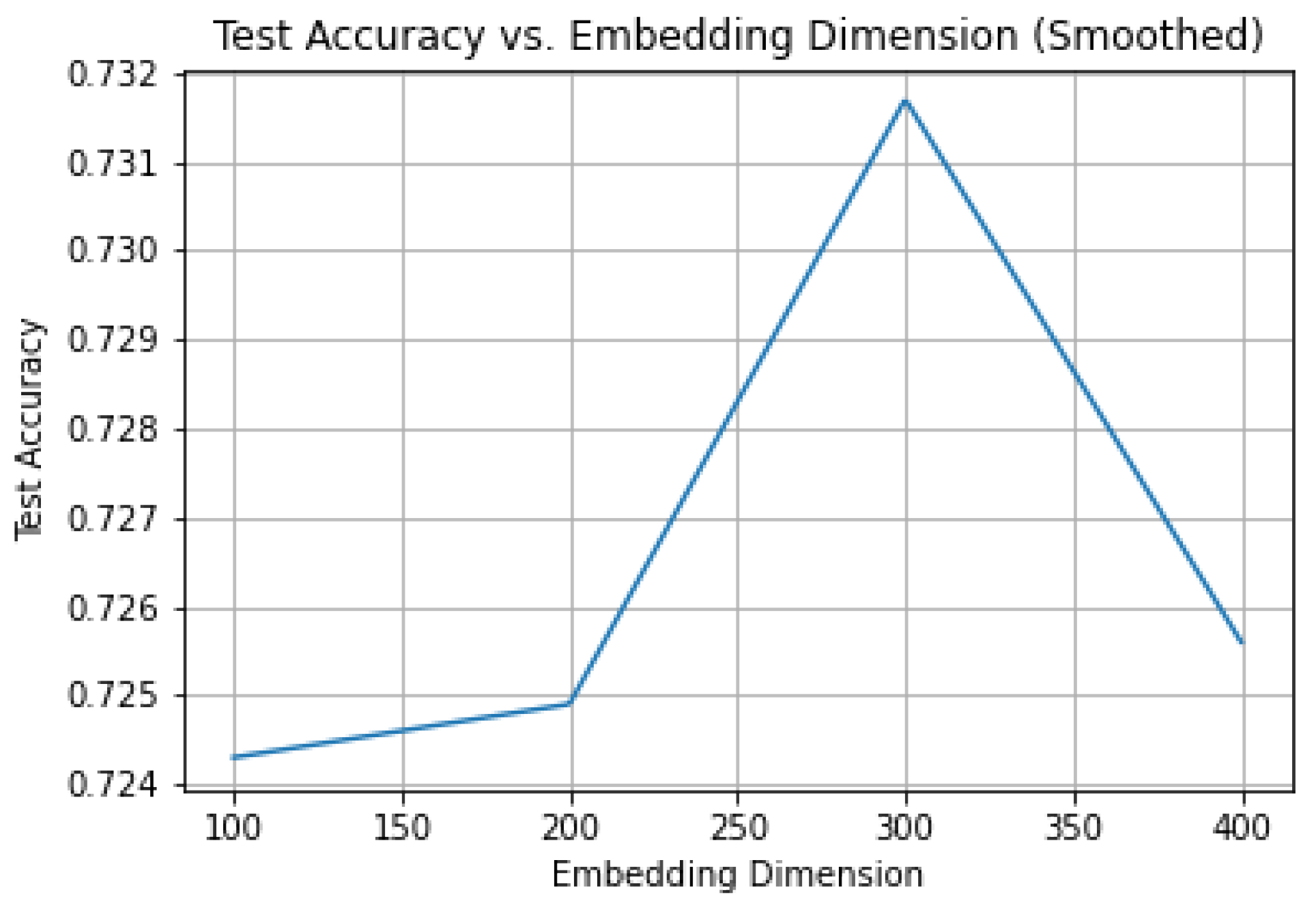

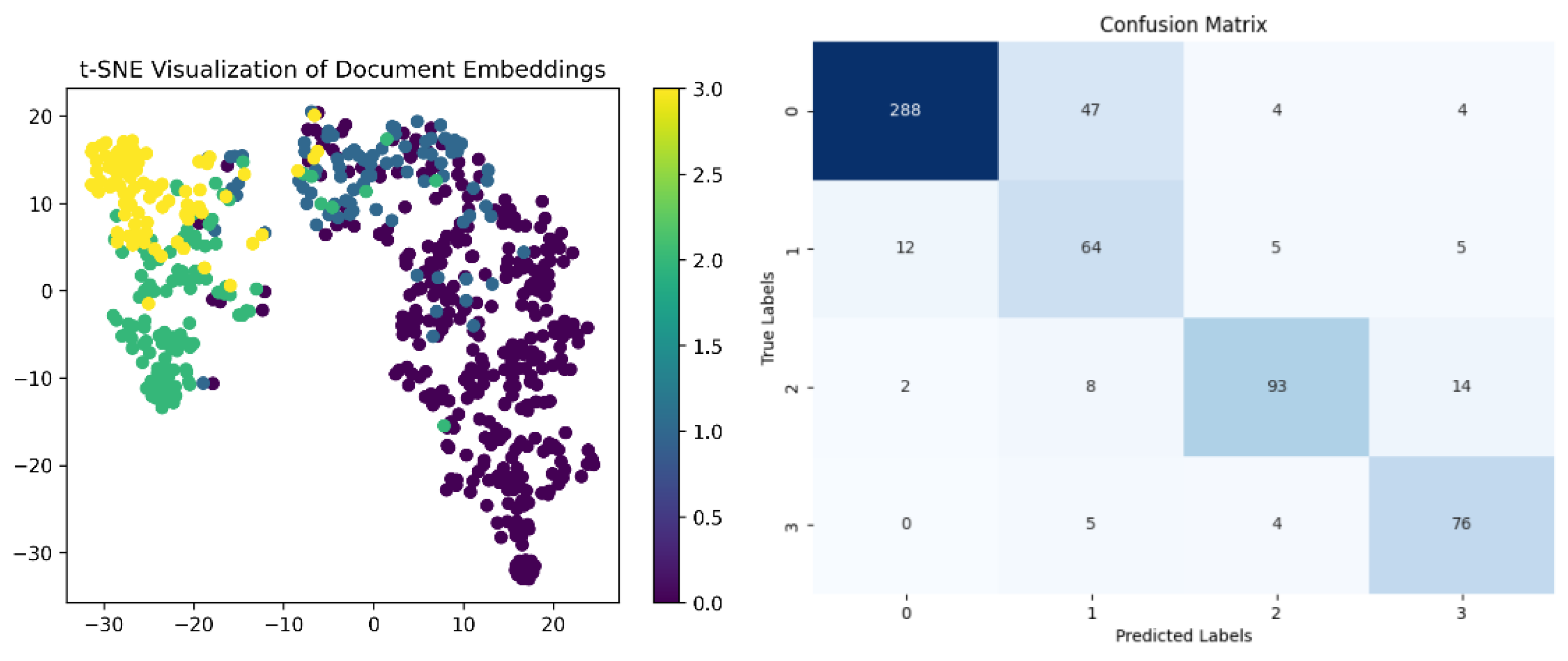

Meanwhile, the emergence of pre-trained language models such as BERT has brought significant advances in text understanding. Unlike static word embeddings, BERT generates dynamic, context-dependent representations, effectively resolving polysemy (Devlin et al., 2019). However, BERT alone struggles to capture the structured semantic relationships between student answers and reference answers. Therefore, Study 1 proposes integrating BERT with HyperGAT to develop a HyperGAT-BERT framework, with the goal of improving text classification accuracy. We hypothesize that combining BERT’s contextual awareness with HyperGAT’s ability to model high-order relationships will better represent student answer semantics and thereby enhance automated scoring performance.

1.2. Reference Answer Sets

The coverage of reference answers is a critical factor affecting ASAG accuracy (Burrows et al., 2015; Valenti et al., 2003). In practice, the diversity of student answers often exceeds the coverage of predefined reference sets, leading to many correct answers being incorrectly flagged as wrong. This problem is particularly acute for open-ended questions (Tan et al., 2022).

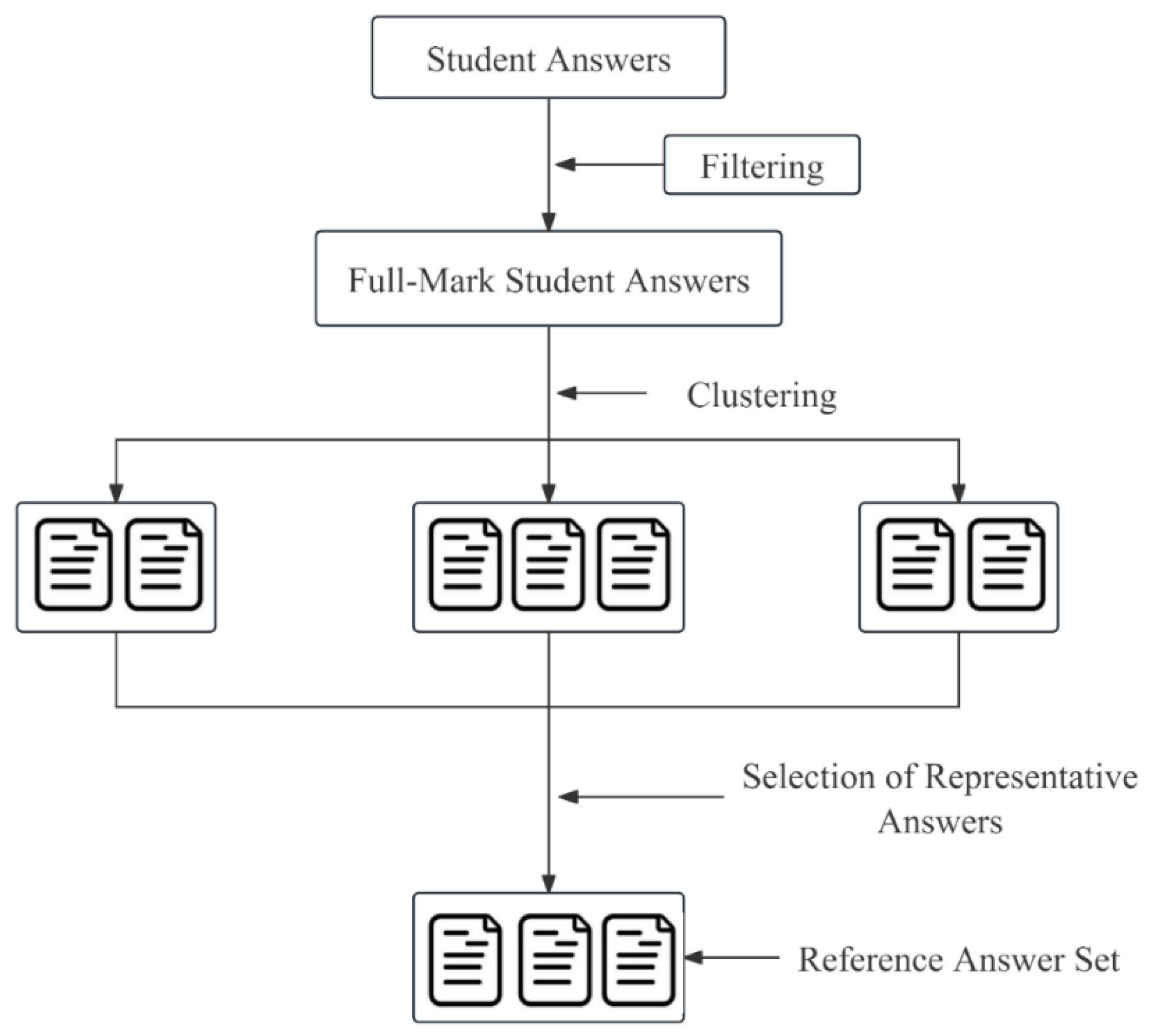

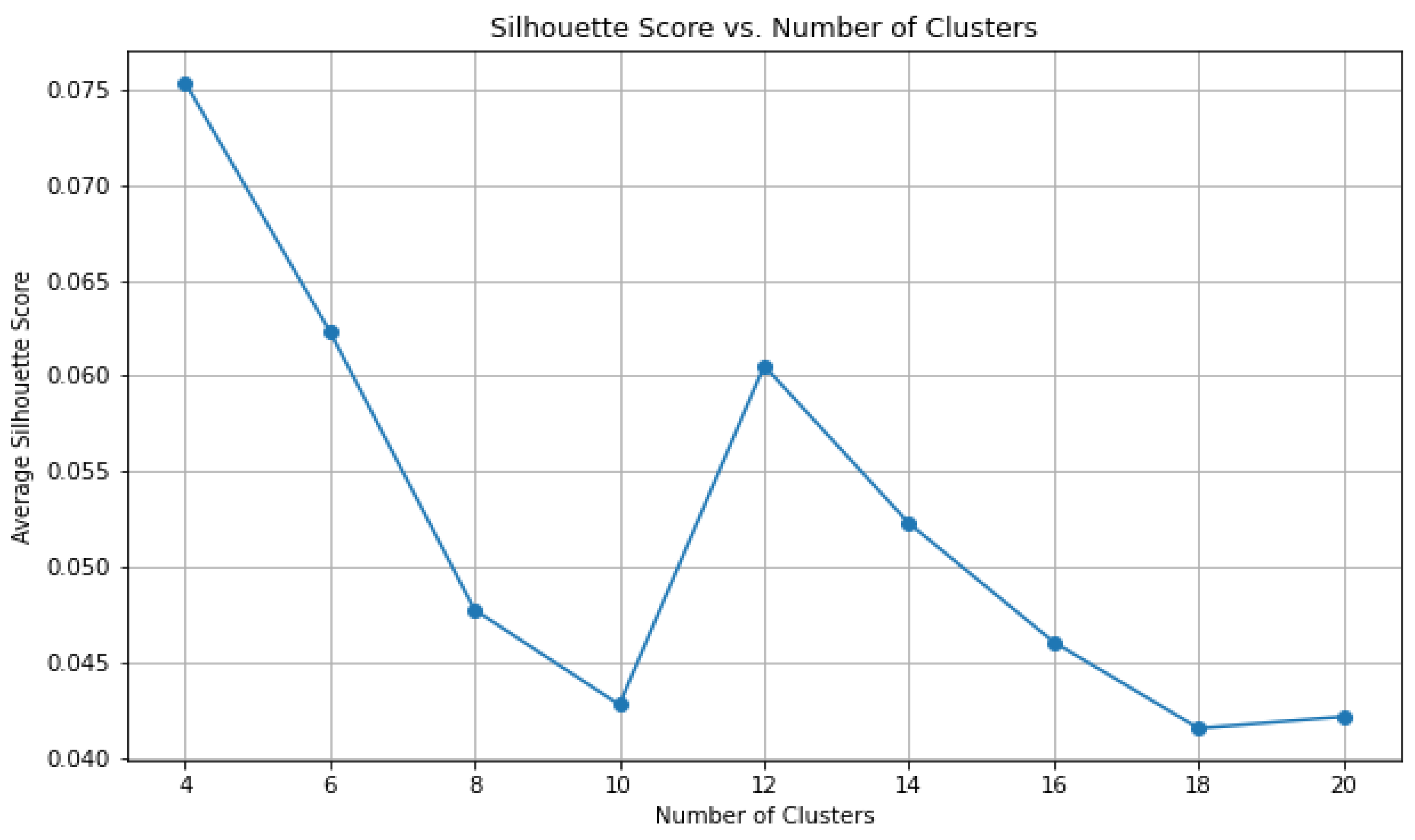

To address this issue, researchers have explored ways to construct more comprehensive reference answer sets. Lan et al. (2015) proposed clustering student answers and having experts select representative examples from each cluster, streamlining the scoring process. Marvaniya et al. (2018) further clustered answers by score level, selecting representative answers from each level. Specifically, reference answer set construction involves two key steps (Tan et al., 2019): (1) clustering analysis to identify distinct answer patterns, with each cluster representing a unique response type; and (2) selecting one or more prototypical answers from each cluster to compile the reference answer set, as illustrated in Figure 1.
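The two steps above can be sketched as follows. This is a minimal sketch under stated assumptions: token-level Jaccard similarity and a simple leader-style clustering stand in for whatever text representation and clustering algorithm a real system would use, and the function names, threshold, and example answers are hypothetical.

```python
from typing import List


def jaccard(a: str, b: str) -> float:
    """Token-set overlap between two answers (a stand-in for a learned similarity)."""
    ta, tb = set(a.lower().split()), set(b.lower().split())
    union = ta | tb
    return len(ta & tb) / len(union) if union else 1.0


def cluster_answers(answers: List[str], threshold: float = 0.4) -> List[List[str]]:
    """Step 1: group answers; each answer joins the first cluster whose leader is similar enough."""
    clusters: List[List[str]] = []
    for ans in answers:
        for cluster in clusters:
            if jaccard(ans, cluster[0]) >= threshold:
                cluster.append(ans)
                break
        else:
            clusters.append([ans])  # no similar cluster found: start a new one
    return clusters


def prototype(cluster: List[str]) -> str:
    """Step 2: pick the answer most similar, on average, to the rest of its cluster."""
    return max(cluster, key=lambda a: sum(jaccard(a, b) for b in cluster))


def build_reference_set(answers: List[str], threshold: float = 0.4) -> List[str]:
    return [prototype(c) for c in cluster_answers(answers, threshold)]


# Hypothetical student answers for illustration.
student_answers = [
    "mRNA leaves the nucleus",
    "mRNA goes out of the nucleus",
    "the mRNA leaves the nucleus",
    "tRNA brings amino acids to the ribosome",
    "tRNA carries amino acids to the ribosome",
]
reference_set = build_reference_set(student_answers)
print(reference_set)
```

In the workflow described by Lan et al. (2015), experts rather than a similarity heuristic select the representative answers, so the `prototype` function here only approximates that step.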

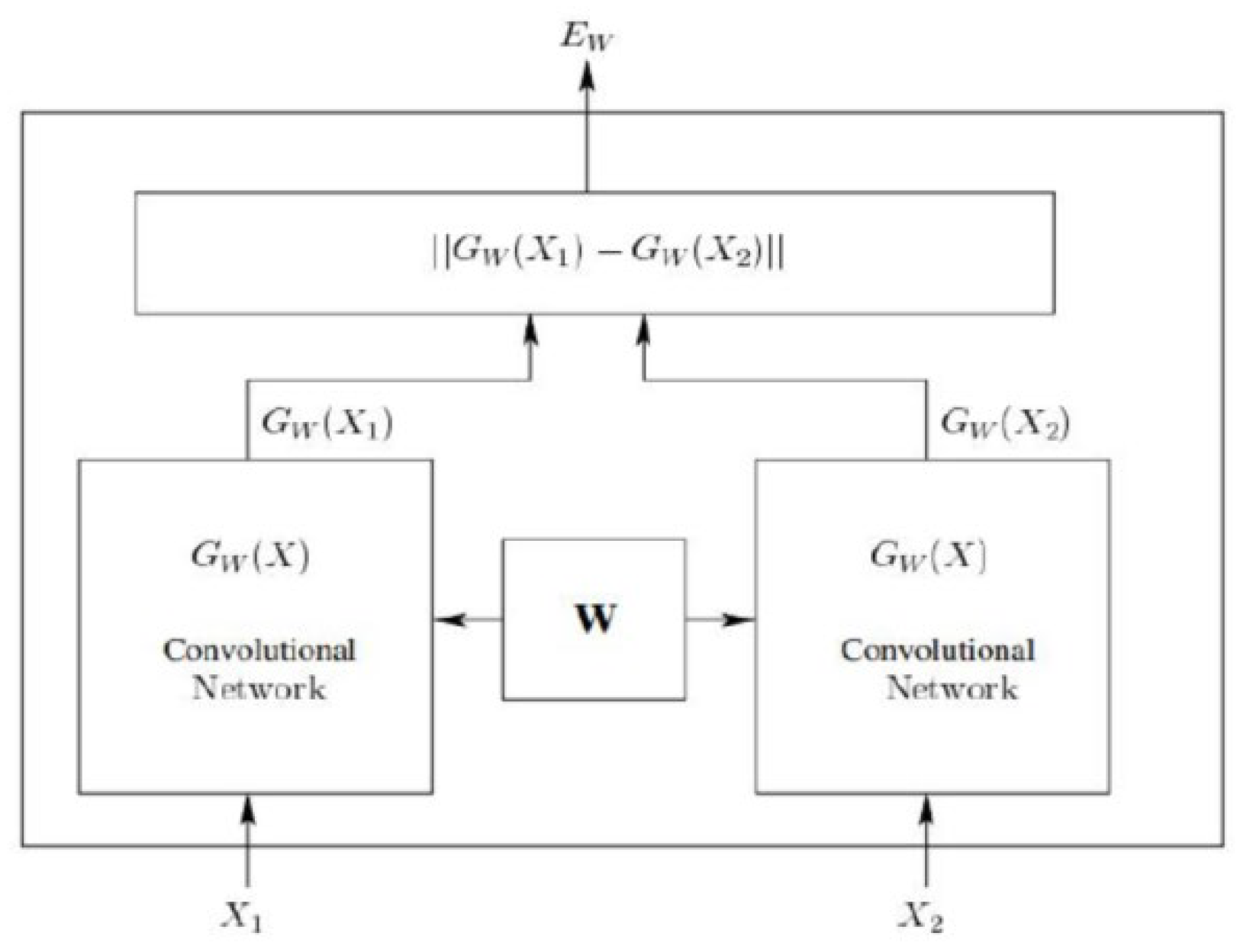

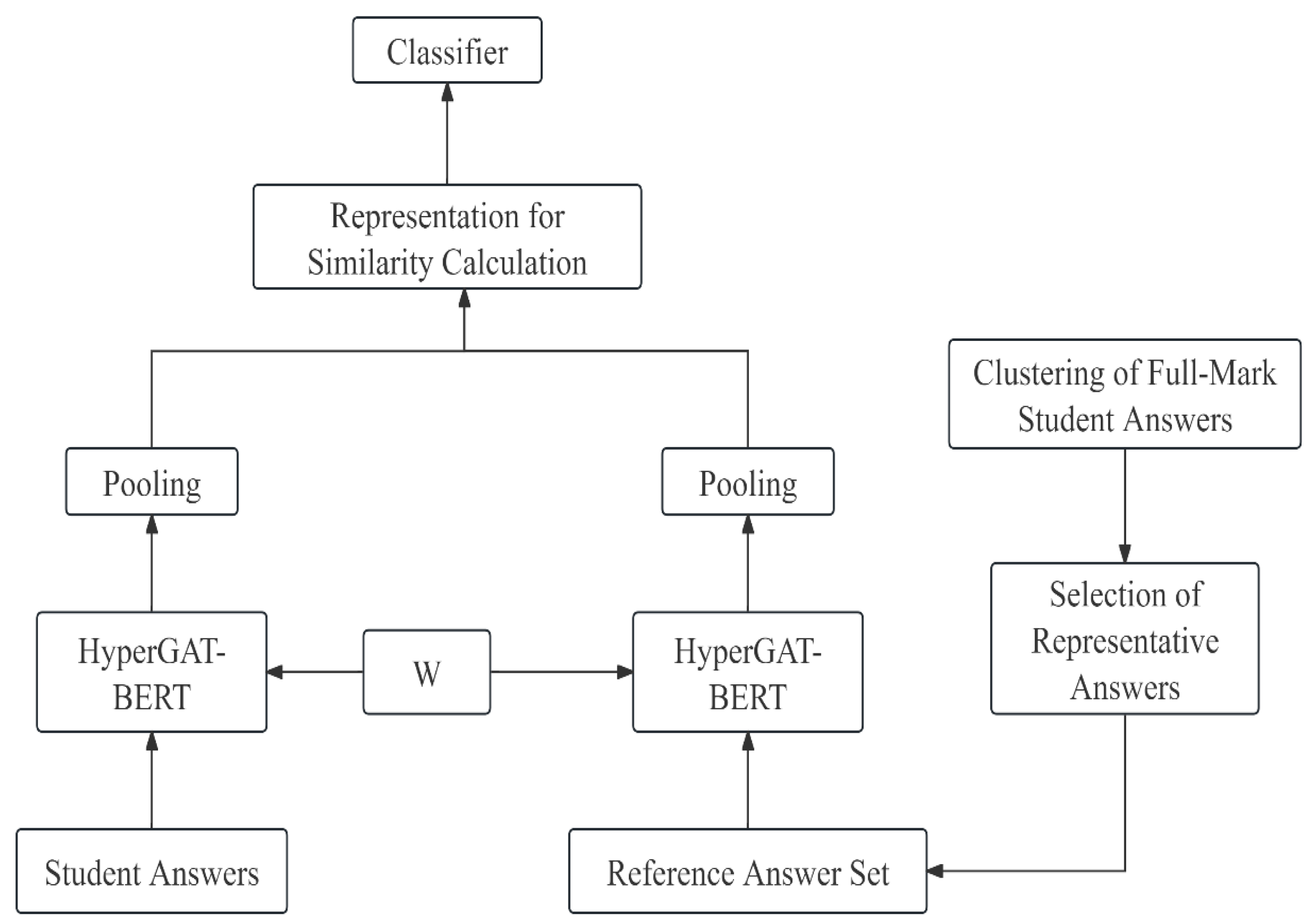

Furthermore, Siamese Neural Networks (SNNs), initially proposed for tasks such as face recognition, signature verification, and similarity learning (Bromley et al., 1993), have recently been adapted to educational applications, particularly in automated scoring of constructed-response items. The architecture comprises twin subnetworks that share identical parameters (e.g., weights and biases) but process two distinct input samples. As shown in Figure 2, this design enables SNNs to effectively quantify similarity or dissimilarity between paired inputs through comparative analysis.
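The weight-sharing idea can be illustrated with a deliberately small sketch: one encoder object is applied to both inputs, and the two resulting vectors are compared with cosine similarity. Everything here is an assumption for illustration, including the bag-of-words encoder, the hand-set (untrained) weights, and the class and function names; an actual SNN would learn its parameters from paired training data.

```python
import math
from typing import Dict, List


class SharedEncoder:
    """A single set of weights used by both branches of the Siamese comparison."""

    def __init__(self, weights: Dict[str, List[float]]):
        self.weights = weights  # token -> embedding vector (hypothetical values)
        self.dim = len(next(iter(weights.values())))

    def encode(self, text: str) -> List[float]:
        """Sum the embeddings of known tokens; unknown tokens are ignored."""
        vec = [0.0] * self.dim
        for token in text.lower().split():
            for d, value in enumerate(self.weights.get(token, [])):
                vec[d] += value
        return vec


def cosine(u: List[float], v: List[float]) -> float:
    dot = sum(x * y for x, y in zip(u, v))
    norm_u = math.sqrt(sum(x * x for x in u))
    norm_v = math.sqrt(sum(y * y for y in v))
    if norm_u == 0.0 or norm_v == 0.0:
        return 0.0
    return dot / (norm_u * norm_v)


def siamese_similarity(encoder: SharedEncoder, a: str, b: str) -> float:
    """Both inputs pass through the same encoder, so parameters are shared by construction."""
    return cosine(encoder.encode(a), encoder.encode(b))


# Hand-set weights chosen only to make the example readable.
encoder = SharedEncoder({
    "mrna": [1.0, 0.0],
    "leaves": [0.0, 1.0],
    "goes": [0.0, 0.6],
    "out": [0.0, 0.4],
    "nucleus": [1.0, 1.0],
    "binds": [-0.5, -1.0],
    "ribosome": [-1.0, 0.5],
})
reference = "mRNA leaves the nucleus"
student = "mRNA goes out of the nucleus"
unrelated = "mRNA binds the ribosome"
print(siamese_similarity(encoder, student, reference))
print(siamese_similarity(encoder, student, unrelated))
```

Because the same `encoder` instance processes both inputs, the similarity is symmetric in its arguments, which is the property that makes the twin-branch design suitable for comparing a student answer against a reference answer.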

Based on this analysis, Study 2 proposes constructing a Reference Answer Set (RAS) via clustering and integrating it with SNNs to form the HyperGAT-BERT-RAS framework. We hypothesize that the RAS will cover more diverse answer patterns, while the SNN will more precisely compute semantic similarity between student answers and reference answers. Ablation experiments will validate the contribution of RAS to scoring accuracy.

1.3. Data Augmentation

In real classrooms, the distribution of student scores is often skewed: most students cluster around middle or low scores, while high-scoring answers are relatively rare. This imbalance biases automated scoring models toward majority classes and reduces their ability to recognize minority classes (Kaldaras et al., 2022). While balanced data distribution is critical for model performance (Shorten & Khoshgoftaar, 2019), collecting more high-scoring answers in classroom settings is often impractical. Data augmentation thus emerges as a viable alternative (Cochran et al., 2022).
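As a rough illustration of how such an imbalance translates into an augmentation budget, the sketch below counts the answers at each score level and reports how many synthetic answers each level would need to match the largest class. The label counts are invented for the example and do not describe any real dataset, and balancing to the majority class is only one possible target.

```python
from collections import Counter
from typing import Dict, List


def augmentation_targets(labels: List[int]) -> Dict[int, int]:
    """Number of extra samples each score level needs to reach the majority-class count."""
    counts = Counter(labels)
    target = max(counts.values())
    return {label: target - n for label, n in sorted(counts.items())}


# Hypothetical score distribution: many low scores, few high scores.
scores = [0] * 50 + [1] * 30 + [2] * 15 + [3] * 5
needed = augmentation_targets(scores)
print(needed)
```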

Early NLP data augmentation methods included synonym replacement, back-translation, and random deletion (Wei & Zou, 2019; Yu et al., 2018). However, these approaches risk semantic distortion or over-reliance on rule-based heuristics. The advent of generative language models has opened new avenues for data augmentation (Bayer et al., 2023). Notably, GPT-4, with its powerful language understanding and generation capabilities, has demonstrated exceptional performance in text augmentation tasks (OpenAI, 2022). Studies have shown that GPT-4-generated synthetic data can effectively improve model performance in low-resource classification tasks (Dai et al., 2023; Ubani et al., 2023; Møller et al., 2023). Cochran et al. (2023) further demonstrated that ChatGPT-augmented data improves automated essay scoring accuracy in low-data regimes.
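For reference, two of the rule-based operations mentioned above can be sketched in a few lines. This is a simplified sketch in the spirit of those methods, not a reproduction of any cited implementation; the synonym table, function names, and parameter values are assumptions.

```python
import random
from typing import Dict, List


def random_deletion(tokens: List[str], p: float, rng: random.Random) -> List[str]:
    """Drop each token with probability p; keep one random token if all are dropped."""
    kept = [t for t in tokens if rng.random() >= p]
    return kept if kept else [rng.choice(tokens)]


def synonym_replacement(
    tokens: List[str], synonyms: Dict[str, List[str]], n: int, rng: random.Random
) -> List[str]:
    """Replace up to n tokens that have an entry in the (hypothetical) synonym table."""
    candidates = [i for i, t in enumerate(tokens) if t in synonyms]
    rng.shuffle(candidates)
    out = list(tokens)
    for i in candidates[:n]:
        out[i] = rng.choice(synonyms[tokens[i]])
    return out


rng = random.Random(0)  # fixed seed so repeated runs give the same result
tokens = "mrna leaves the nucleus".split()
toy_synonyms = {"leaves": ["exits"]}  # illustrative table, not a real lexicon

replaced = synonym_replacement(tokens, toy_synonyms, n=1, rng=rng)
shortened = random_deletion(tokens, p=0.25, rng=rng)
print(replaced)
print(shortened)
```

The risk of semantic distortion is easy to see here: deleting "leaves" or "nucleus" from this answer changes what it asserts, yet the augmented text would typically inherit the original score label.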

Therefore, the latter phase of Study 2 employs GPT-4 for data augmentation, generating student answers for the underrepresented score levels 2 and 3 in the ASAP-5 dataset. By balancing the data distribution, we aim to further improve the accuracy of HyperGAT-BERT-RAS for automated short-answer scoring.

1.4. Novel Contributions of This Work

Based on the above analysis, this study makes the following four contributions: (1) Technical integration. To our knowledge, we are the first to jointly optimize BERT’s contextualized representations with HyperGAT’s hypergraph structure for ASAG, addressing the challenge of precise semantic matching. (2) RAS-guided Siamese scoring. Unlike prior work that uses RAS only for answer selection, we embed RAS directly into a Siamese architecture to compute fine-grained similarity scores. (3) Controlled LLM augmentation. We provide a systematic evaluation of GPT-4 augmentation for ASAG, including prompt design, quality assessment, and leakage prevention. (4) Educational significance. By improving scoring accuracy and consistency, this study aims to reduce teacher grading burden, support formative assessment implementation, and provide a reliable tool for AI-assisted assessment in real classrooms.