Submitted: 21 April 2026

Posted: 23 April 2026

Abstract

Keywords:

1. Introduction

2. Materials and Methods

2.1. Study Design

2.2. Sample Size

2.3. Population

2.4. Emotional Arousal Image Bank

2.5. Modified Mannheim Craving Scale

2.6. Facial Expression Recognition System

2.7. Facial Expression Recognition System

2.8. Statistical Analysis

3. Results

3.1. Characteristics and Adherence of Participants

3.2. Substance Use Characteristics in the SUD Group

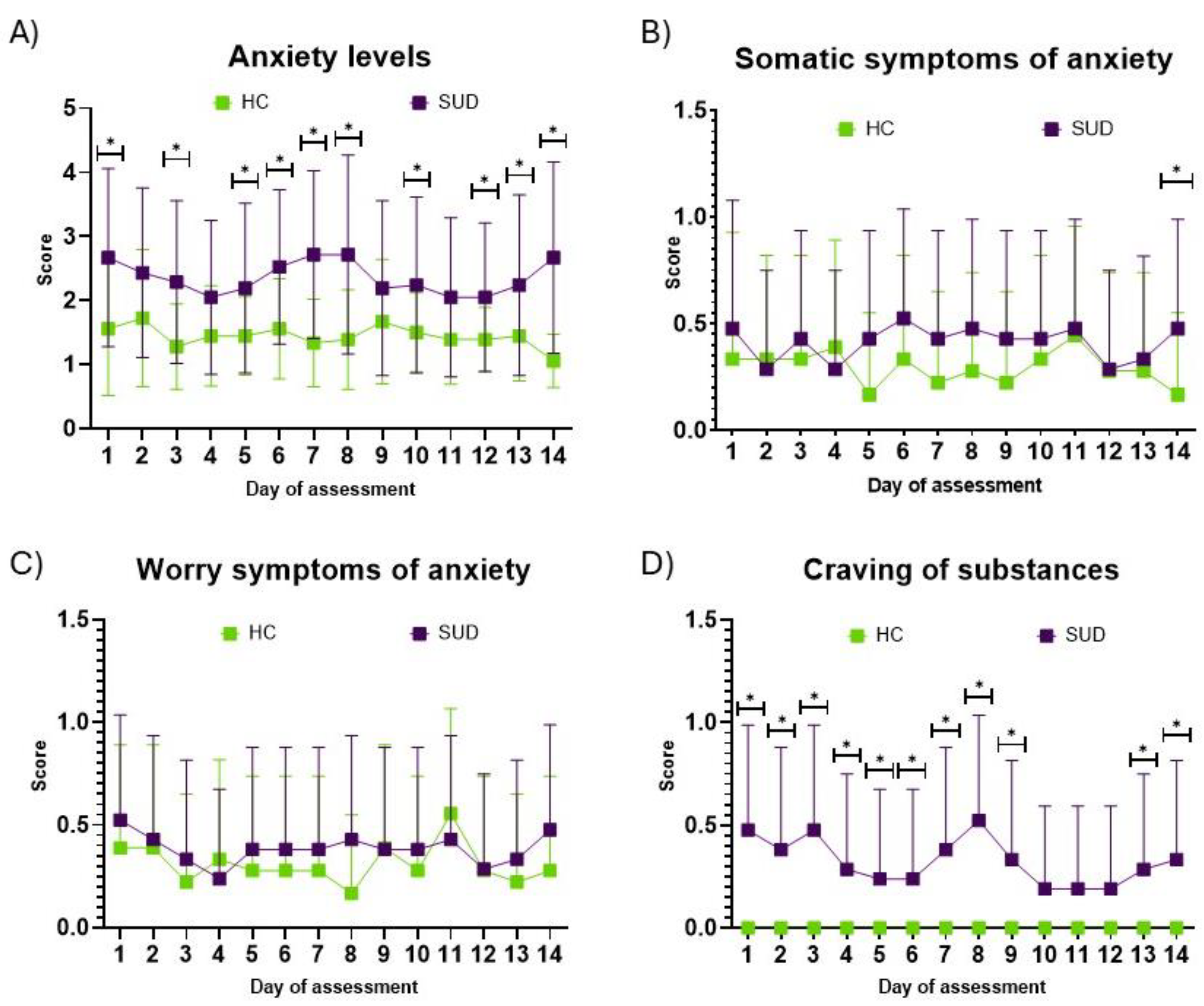

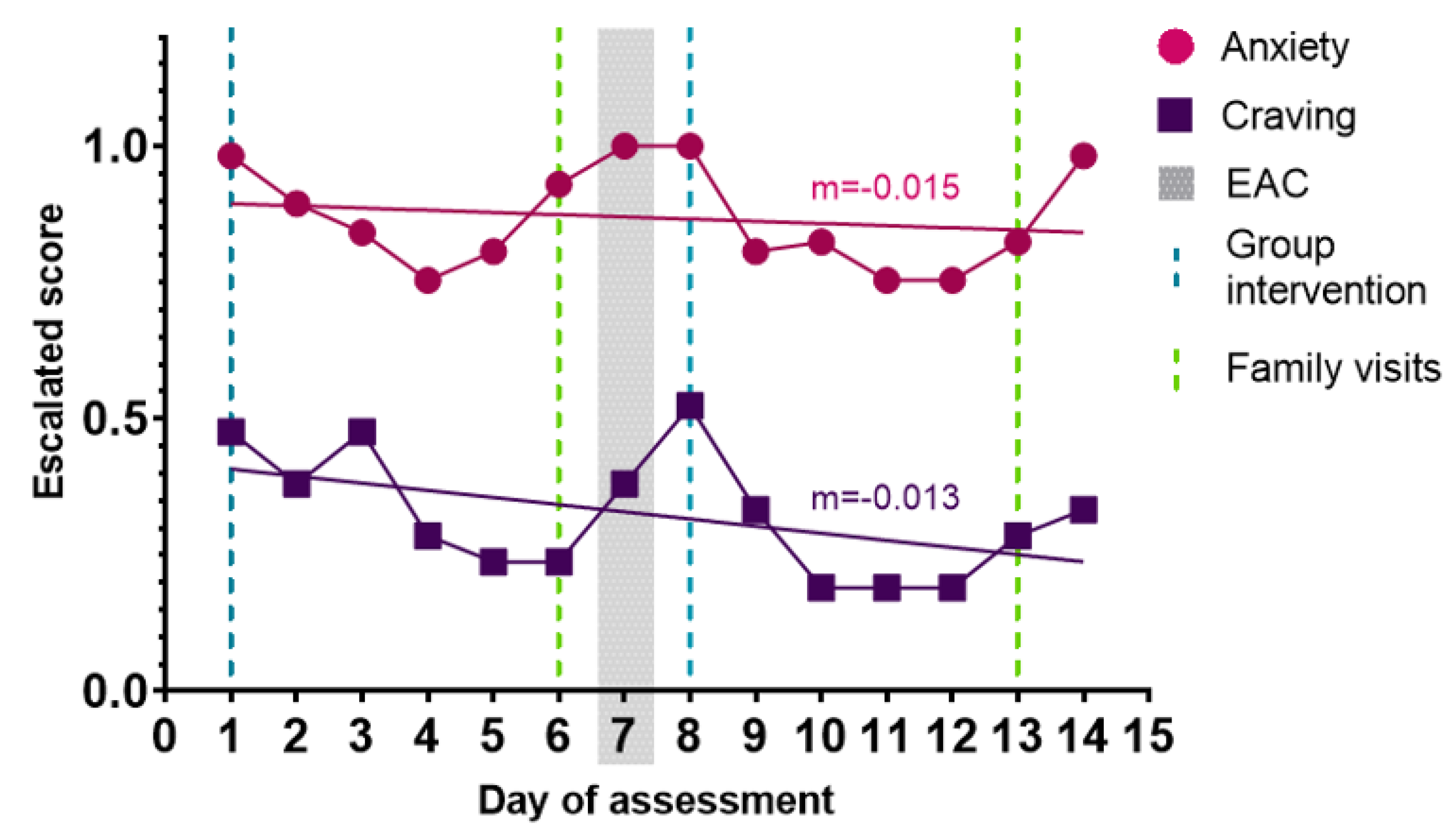

3.3. Anxiety Influences Craving Levels in SUD

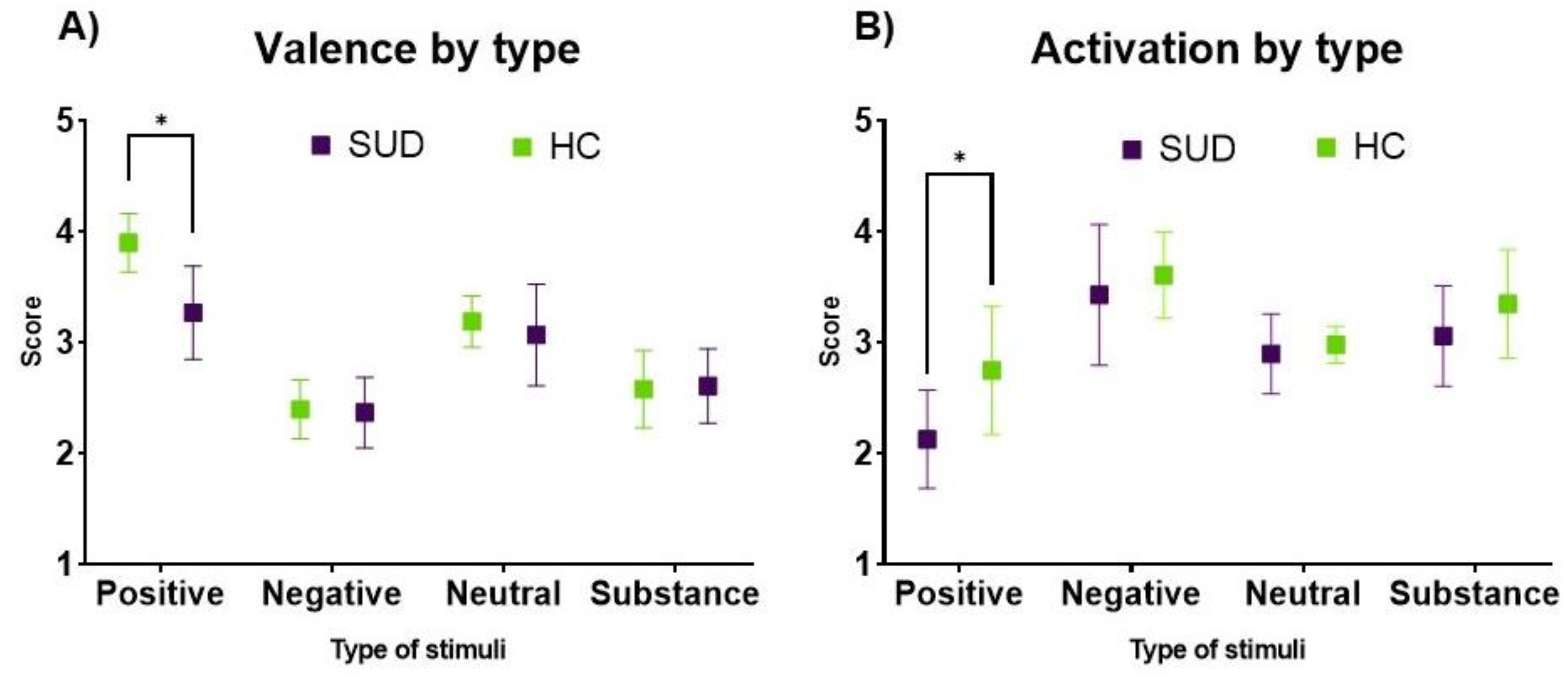

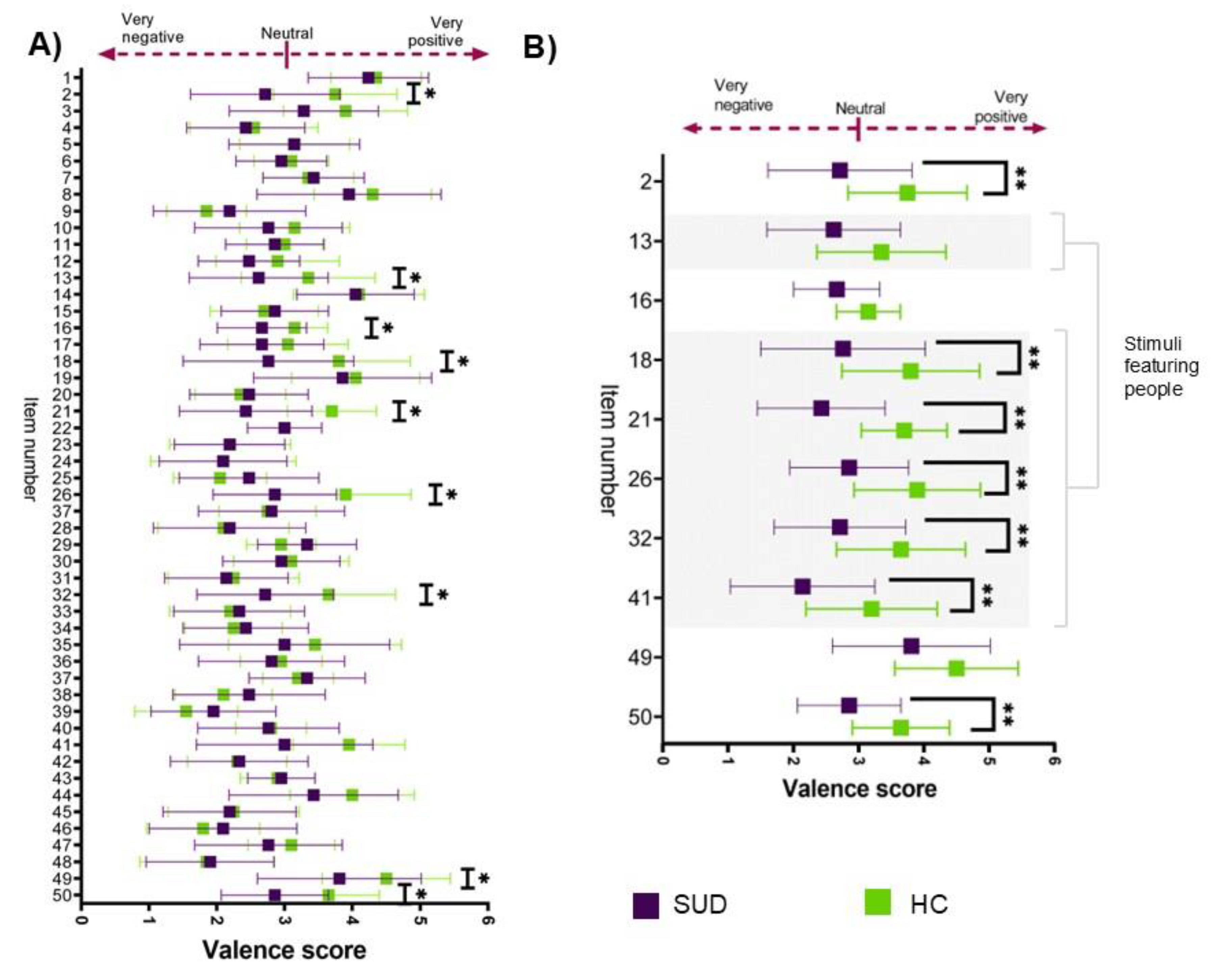

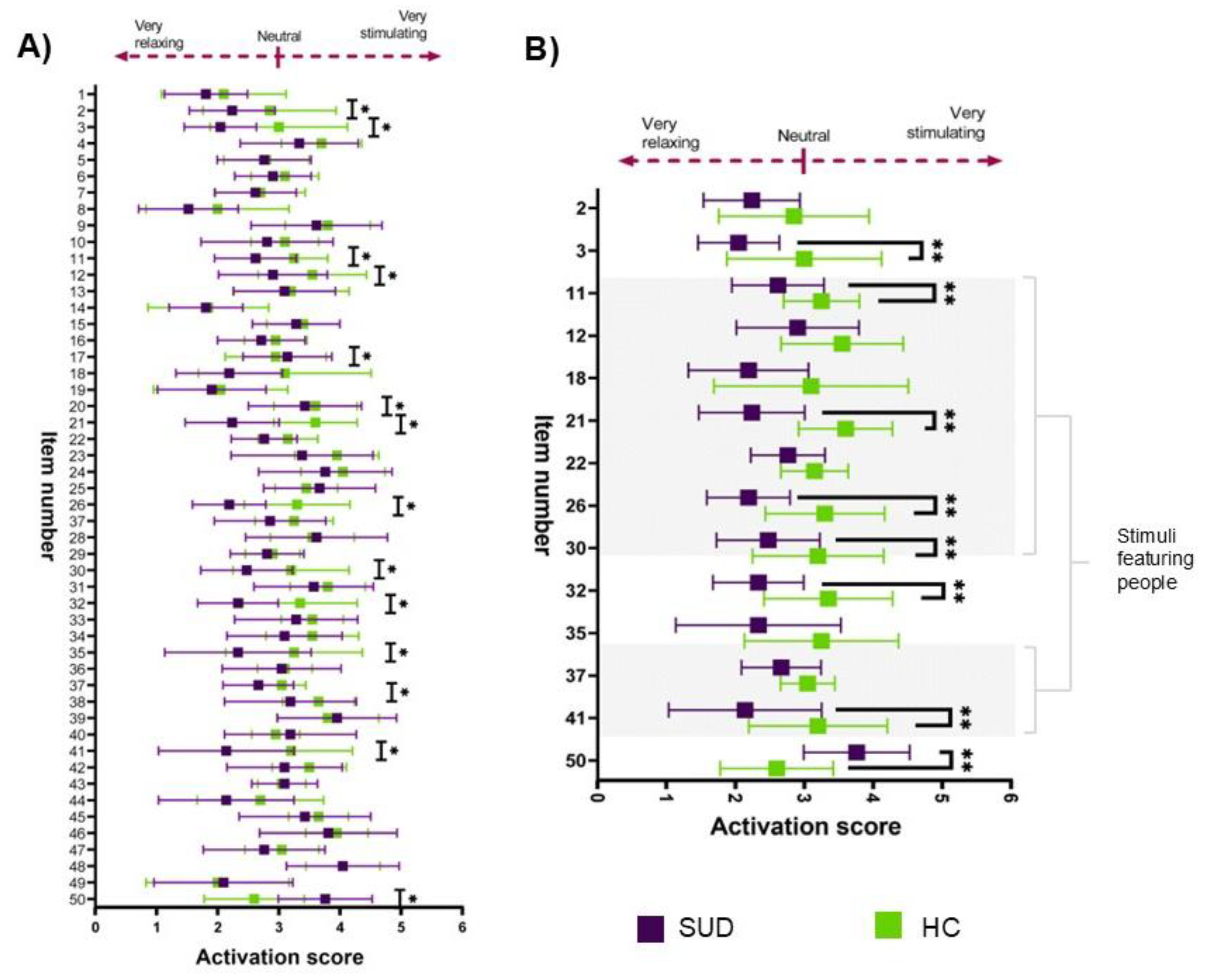

3.4. Positive Emotional Valence and Activation Are Diminished in SUD

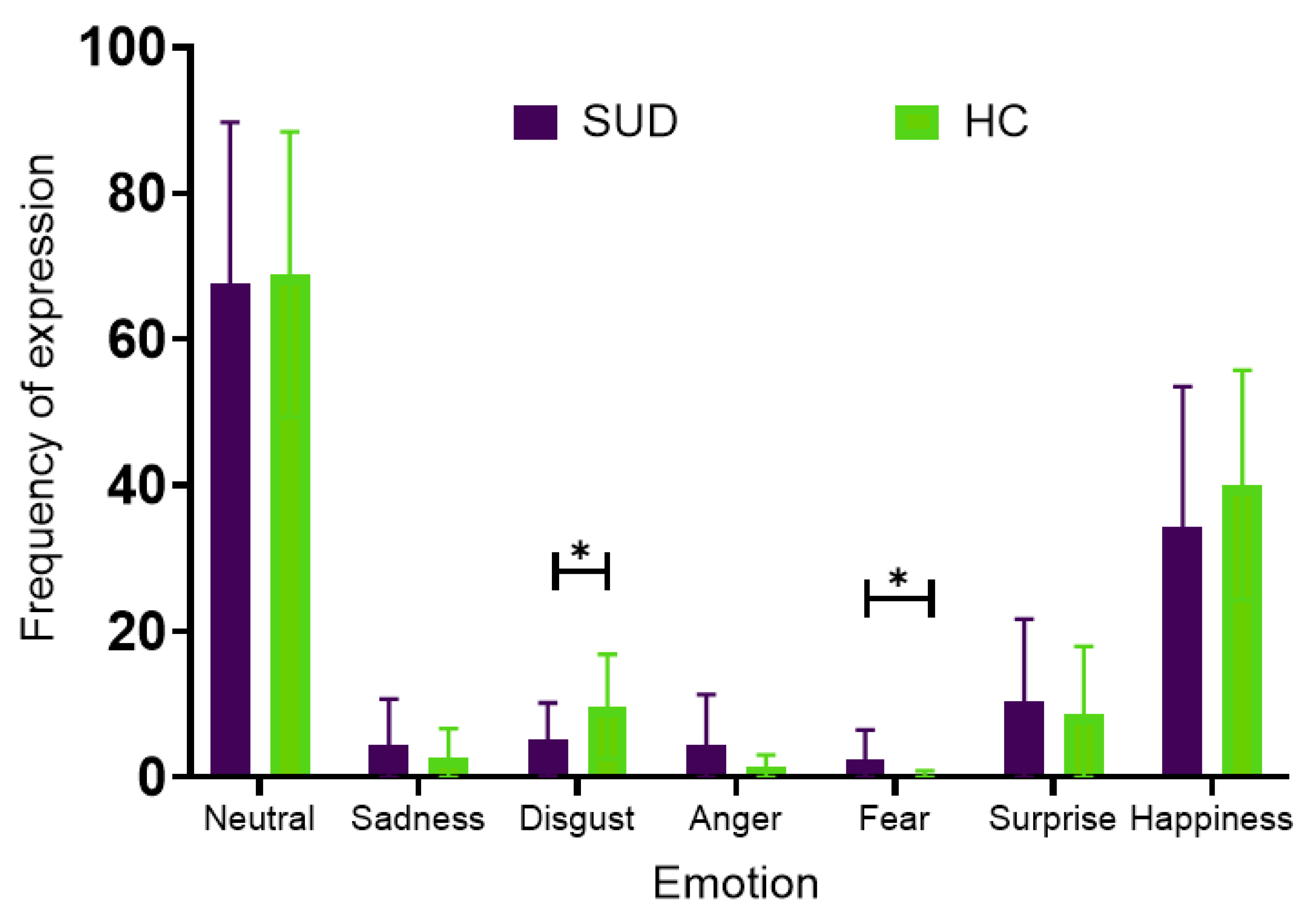

3.5. Comparison of Facial Emotion Expression Between SUD and HC Groups

4. Discussion

5. Conclusions

Supplementary Materials

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

| Variable | SUD, % or x̄ ± SD | SUD, n | HC, % or x̄ ± SD | HC, n |
|---|---|---|---|---|
| Age | 31.33 ± 10.01 | 22 | 25.79 ± 6.39 | 20 |
| Education | | | | |
| Secondary education | 14.29% | 3 | 0% | 0 |
| High school | 47.62% | 10 | 5% | 1 |
| Bachelor’s degree | 23.81% | 5 | 70% | 14 |
| Postgraduate degree | 9.52% | 2 | 25% | 5 |
| Employment | | | | |
| Manual labor | 42.86% | 9 | 5% | 1 |
| Customer service | 19.05% | 4 | 5% | 1 |
| Self-employed | 19.05% | 4 | 10% | 2 |
| Healthcare | 4.76% | 1 | 30% | 6 |
| Unemployed | 9.52% | 2 | 5% | 1 |
| Other a | 4.76% | 1 | 45% | 9 |
| Marital status | | | | |
| Single | 76.19% | 16 | 85% | 17 |
| Long-term partner or married | 4.76% | 1 | 15% | 3 |
| Separated or divorced | 19.05% | 4 | 0% | 0 |
| Housing | | | | |
| Alone | 19.05% | 4 | 5% | 1 |
| Parents and/or siblings | 47.62% | 10 | 60% | 12 |
| Partner with/without children | 9.52% | 2 | 15% | 3 |
| Relatives | 19.05% | 4 | 5% | 1 |
| Friends and/or roommates | 4.76% | 1 | 15% | 3 |
| Mental health | | | | |
| Depressive disorders | 52.38% | 11 | 10% | 2 |
| Anxiety disorders | 42.86% | 9 | 20% | 4 |
| ADHD | 28.57% | 6 | 15% | 3 |
| Bipolar disorders | 19.05% | 4 | - | - |
| Antisocial personality disorder | 4.76% | 1 | - | - |
| None | 19.05% | 4 | 70% | 14 |
| Medications | | | | |
| Antipsychotics | 42.86% | 9 | - | - |
| Antidepressants | 14.29% | 3 | - | - |
| Methylphenidate | 9.52% | 2 | 10% | 2 |
| Anxiolytics | 9.52% | 2 | 5% | 1 |
| Antiretrovirals | 4.76% | 1 | - | - |
| Cardiovascular | 4.76% | 1 | - | - |
| None | 23.81% | 5 | 85% | 17 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).