Submitted: 15 April 2026

Posted: 23 April 2026


Abstract

Keywords:

1. Introduction

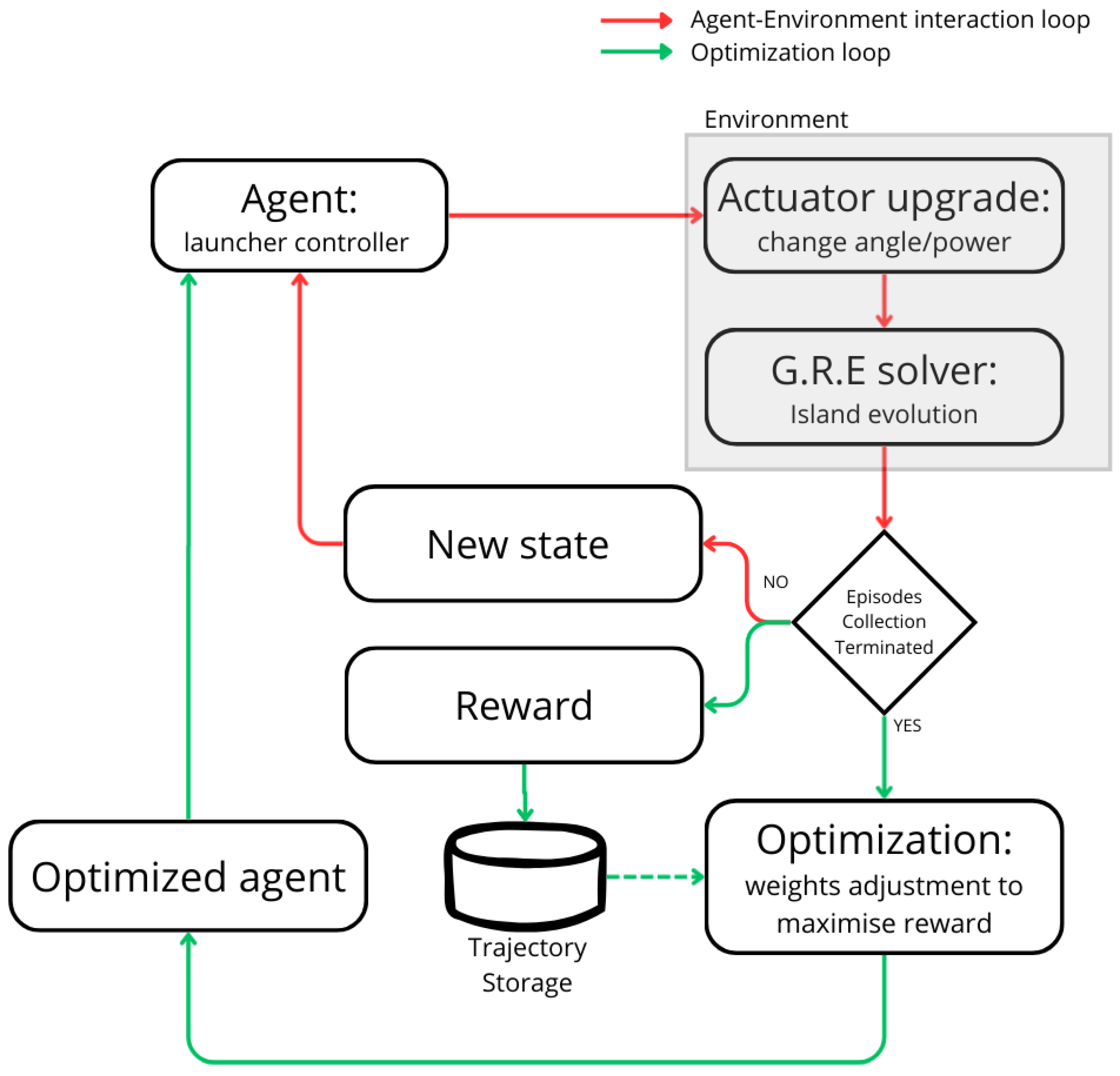

2. Reinforcement Learning Framework

2.1. Reinforcement Learning

2.2. Environment

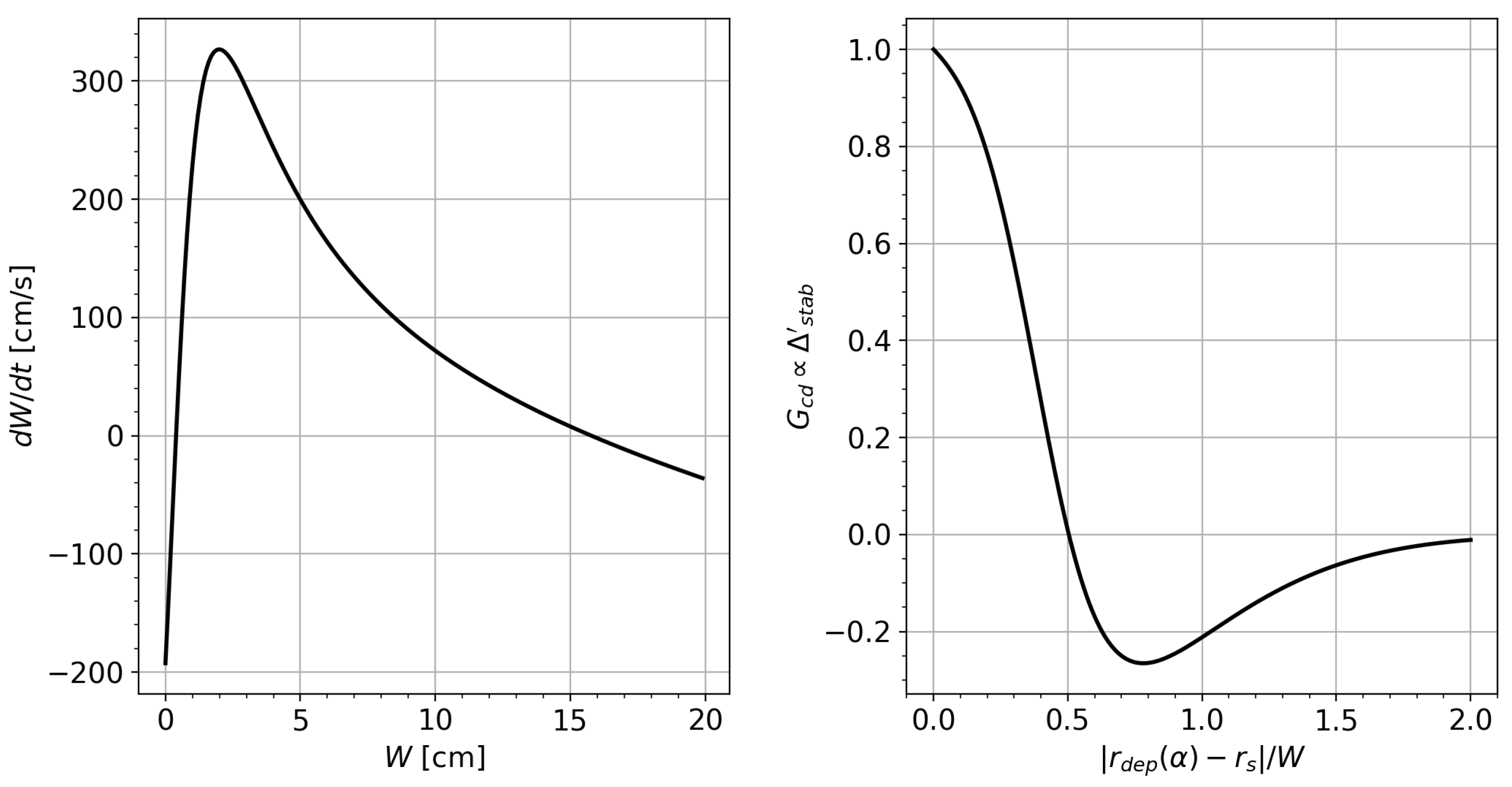

2.2.1. NTM Evolution Model

2.2.2. Reward Function

2.3. Training Algorithm

2.3.1. Loss Function

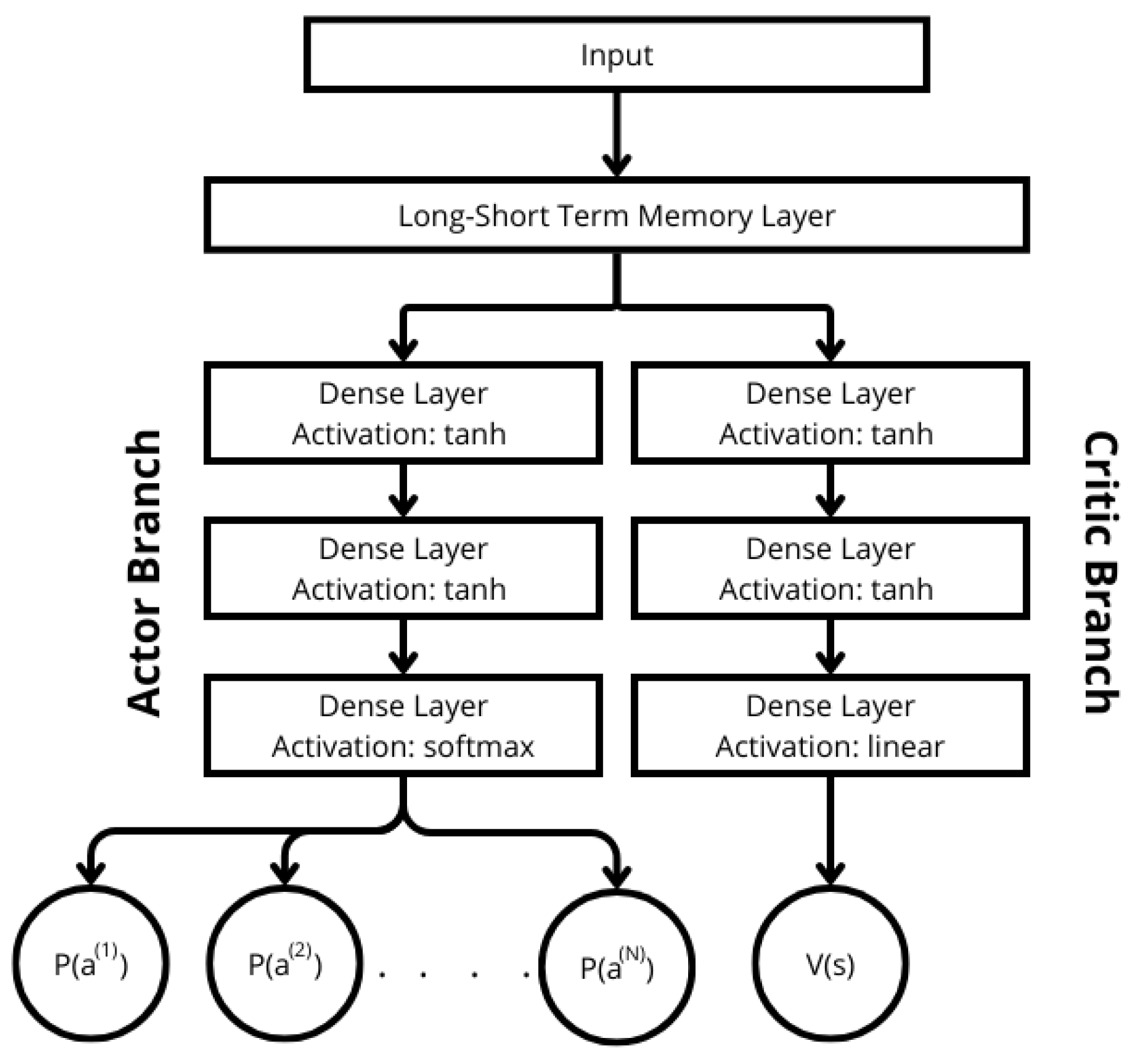

2.4. Neural Network Architecture

3. Alignment Task with Fixed Power

| Action ID | Command | Description |
|---|---|---|
| 0 | Increase launcher angle | |
| 1 | Decrease launcher angle | |
| 2 | 0 | Keep launcher angle unchanged |
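The three actions of the fixed-power task can be read as signed increments of the launcher angle. The Python sketch below shows one way to encode them. It is an illustration under stated assumptions, not the authors' implementation: the step size `ANGLE_STEP_DEG`, the angle limits, and the helper `apply_action` are hypothetical, since their values are not given in this section.

```python
from enum import IntEnum

# Hypothetical step size and launcher limits (placeholders, not values from the paper).
ANGLE_STEP_DEG = 0.5
ANGLE_MIN_DEG, ANGLE_MAX_DEG = -10.0, 10.0


class FixedPowerAction(IntEnum):
    """Discrete actions for the alignment task with fixed power."""
    INCREASE_ANGLE = 0
    DECREASE_ANGLE = 1
    KEEP_ANGLE = 2


# Signed angle increment associated with each action ID.
ANGLE_DELTA = {
    FixedPowerAction.INCREASE_ANGLE: +ANGLE_STEP_DEG,
    FixedPowerAction.DECREASE_ANGLE: -ANGLE_STEP_DEG,
    FixedPowerAction.KEEP_ANGLE: 0.0,
}


def apply_action(angle_deg: float, action_id: int) -> float:
    """Return the launcher angle after one discrete action.

    The result is clipped to the (hypothetical) mechanical limits of the launcher.
    """
    action = FixedPowerAction(action_id)  # raises ValueError for unknown IDs
    new_angle = angle_deg + ANGLE_DELTA[action]
    return min(max(new_angle, ANGLE_MIN_DEG), ANGLE_MAX_DEG)
```

For example, `apply_action(0.0, 0)` returns `0.5`, while `apply_action(10.0, 0)` stays at `10.0` because of the clipping.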

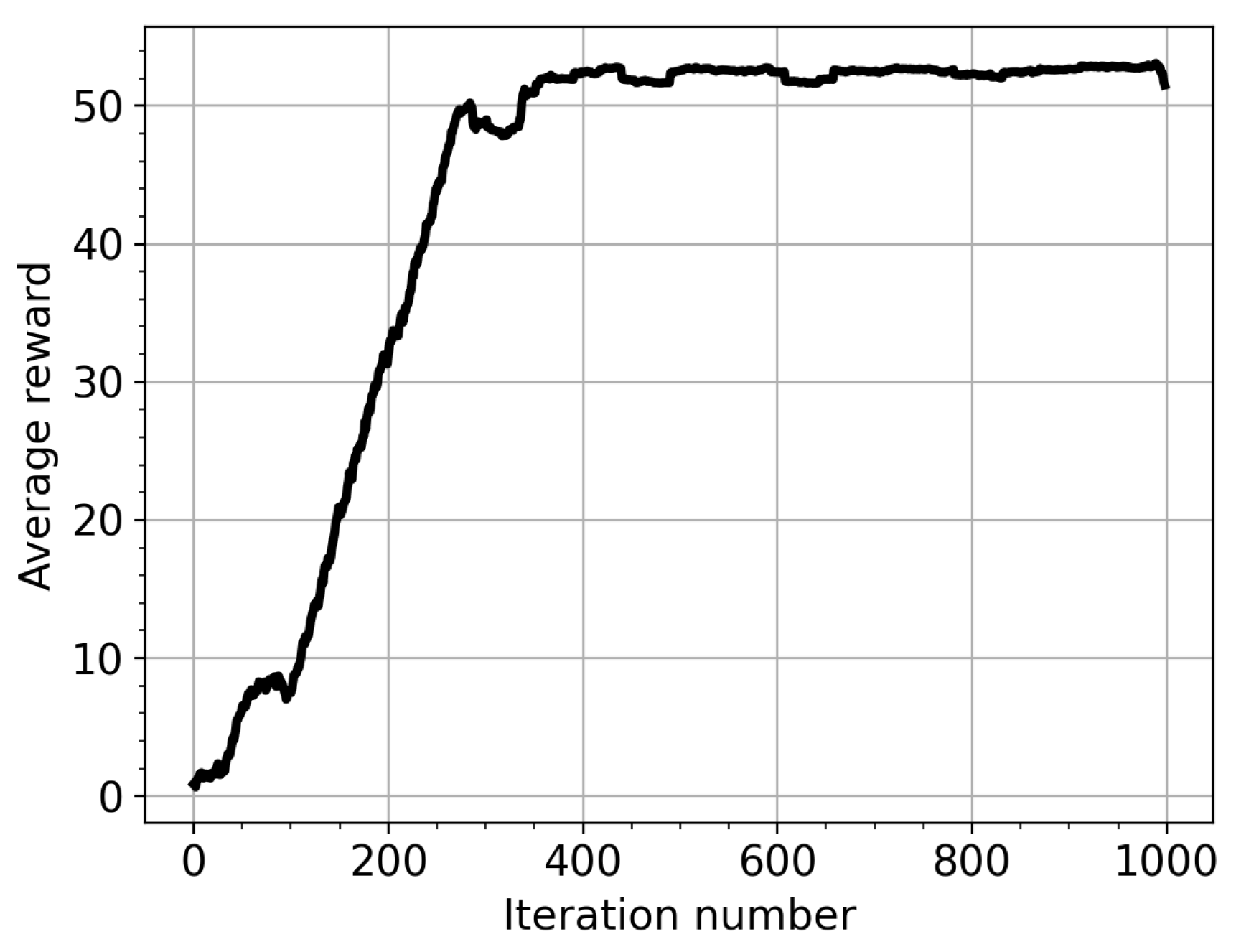

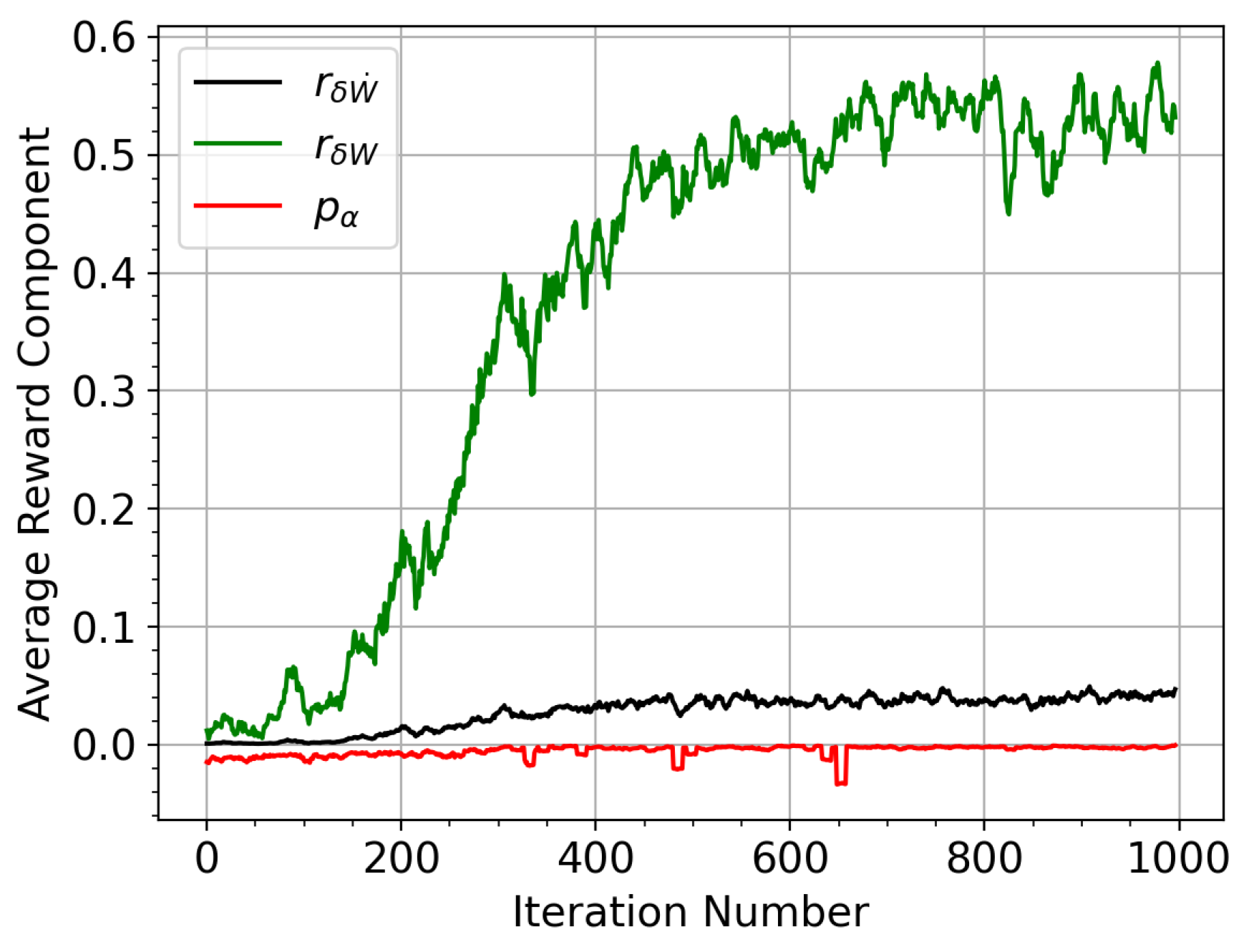

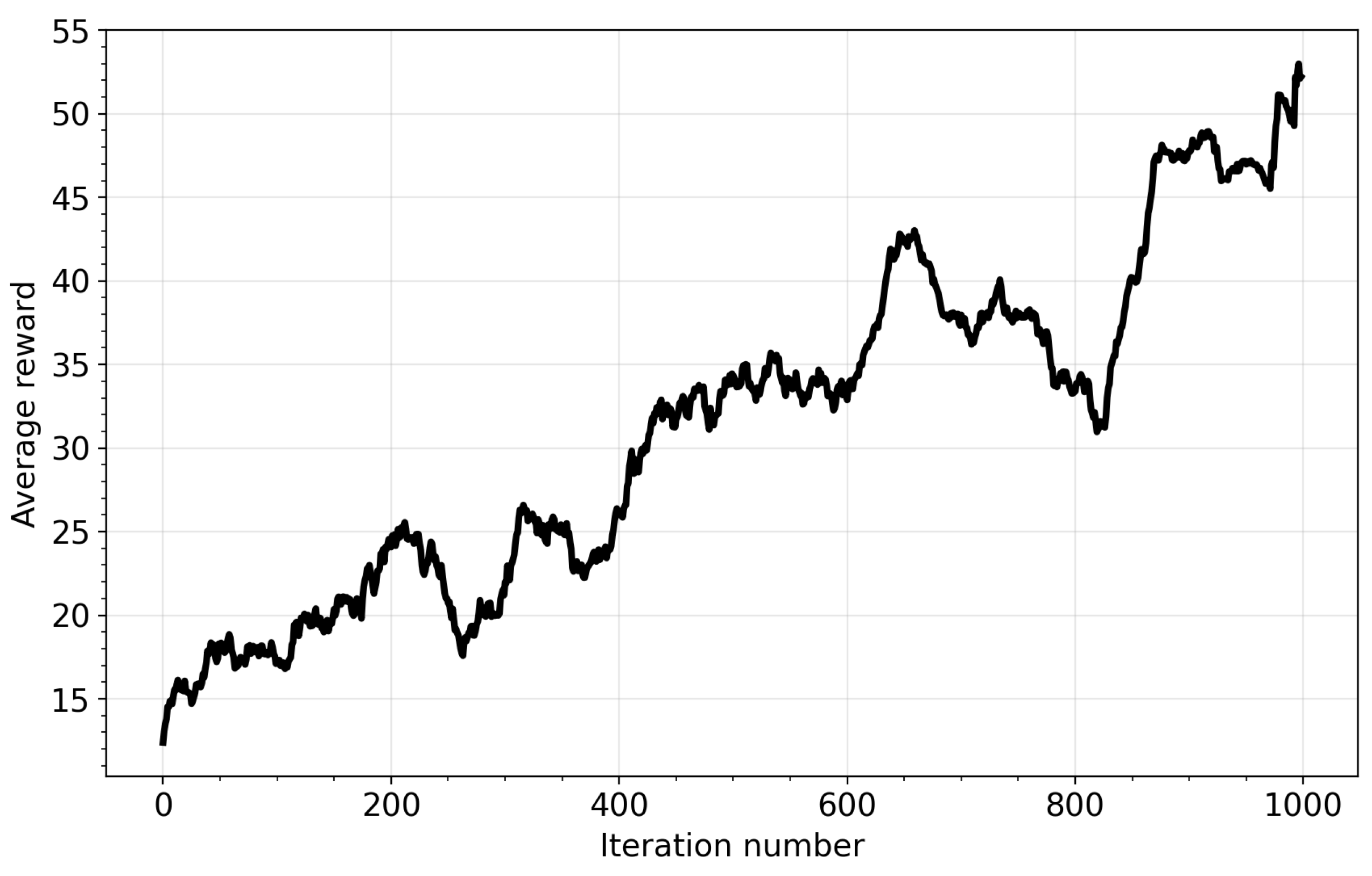

3.1. Training

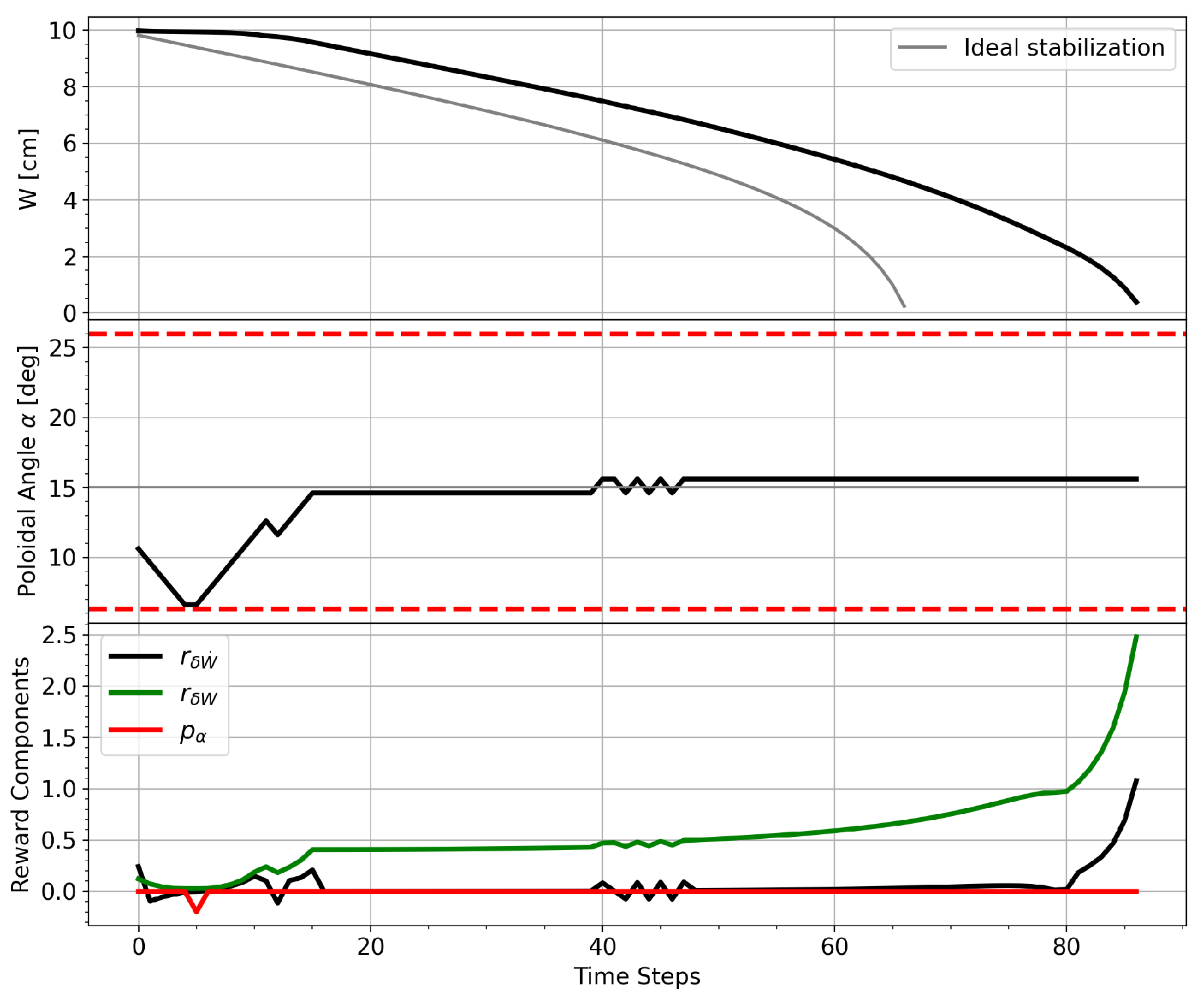

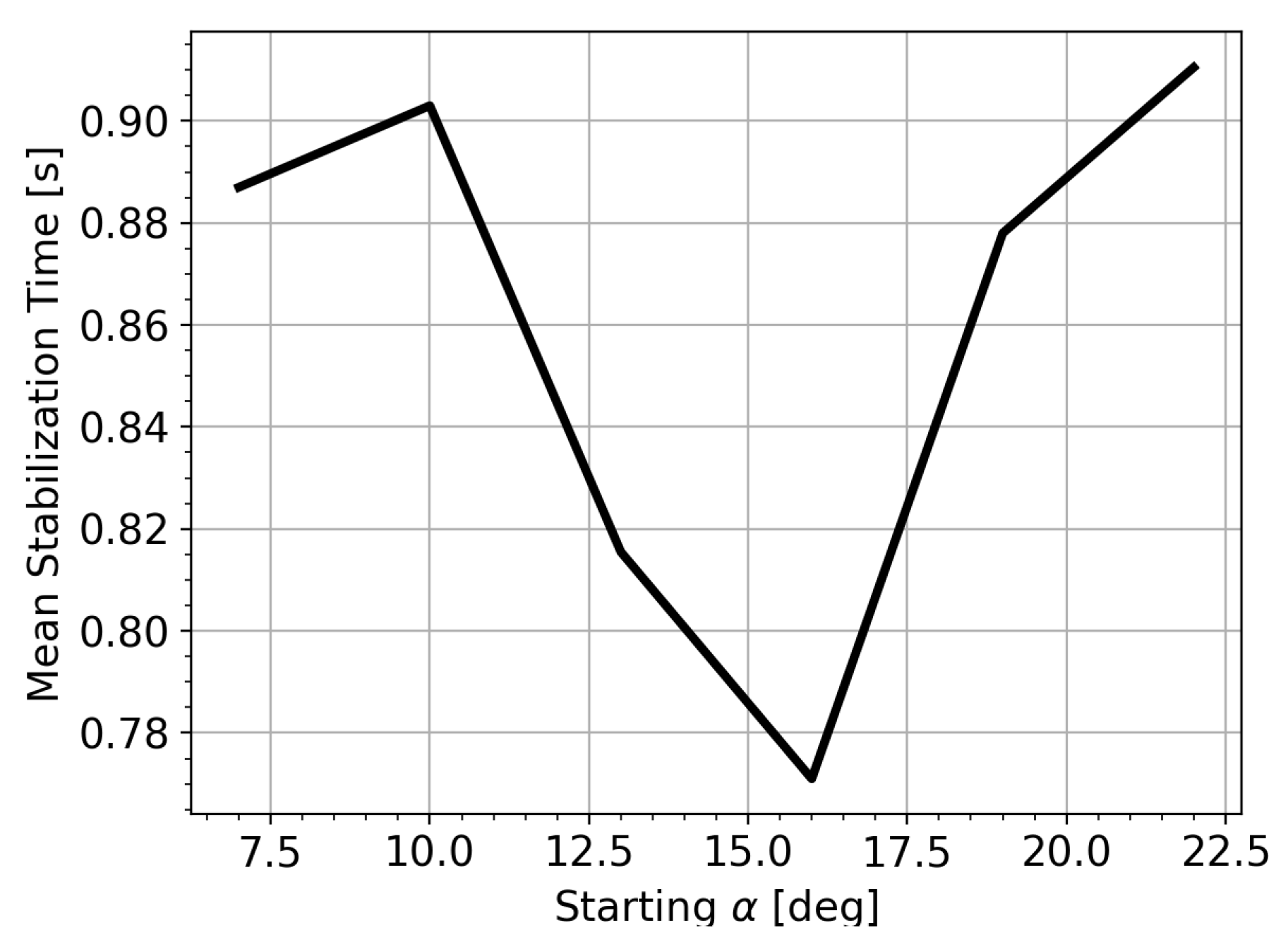

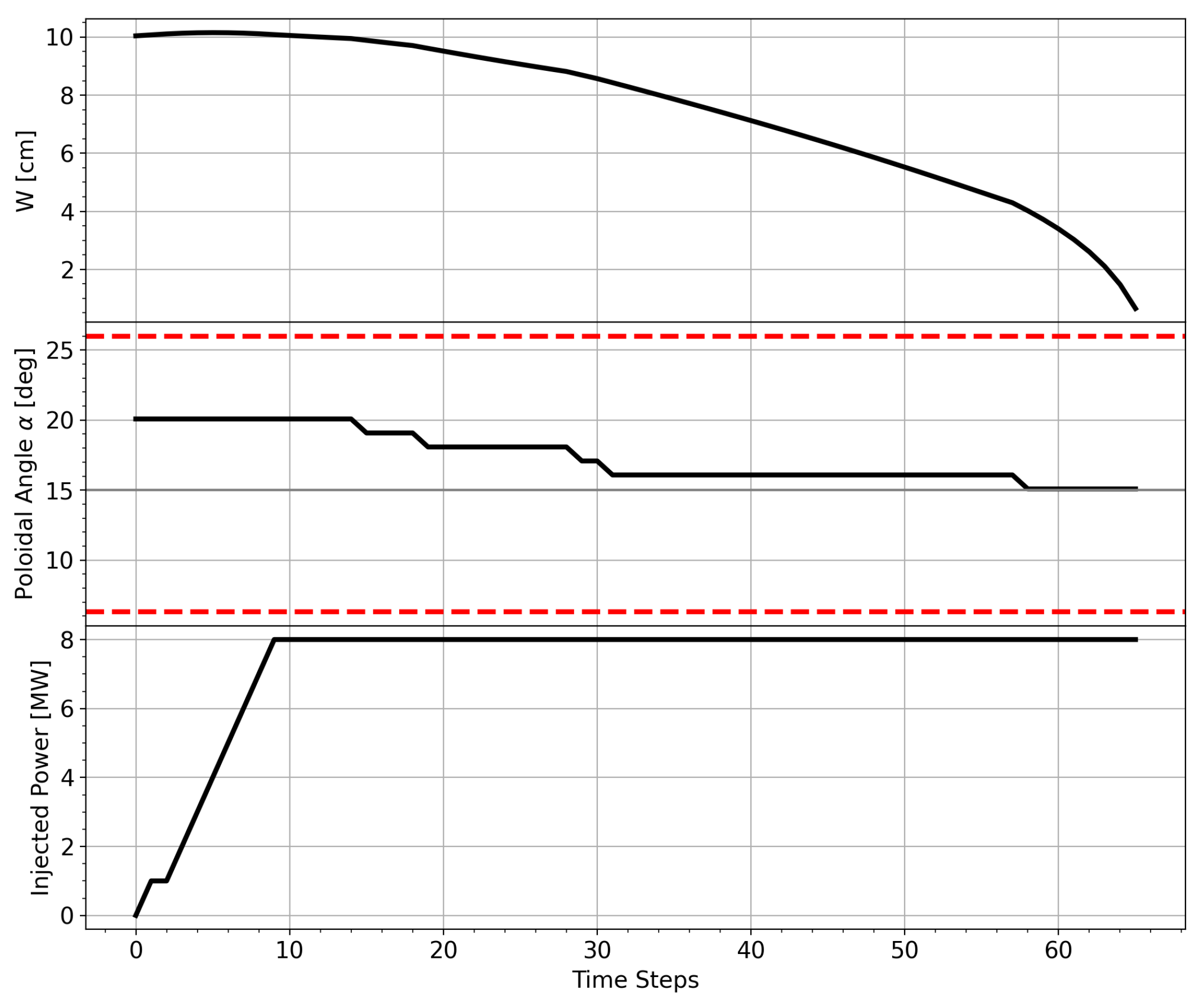

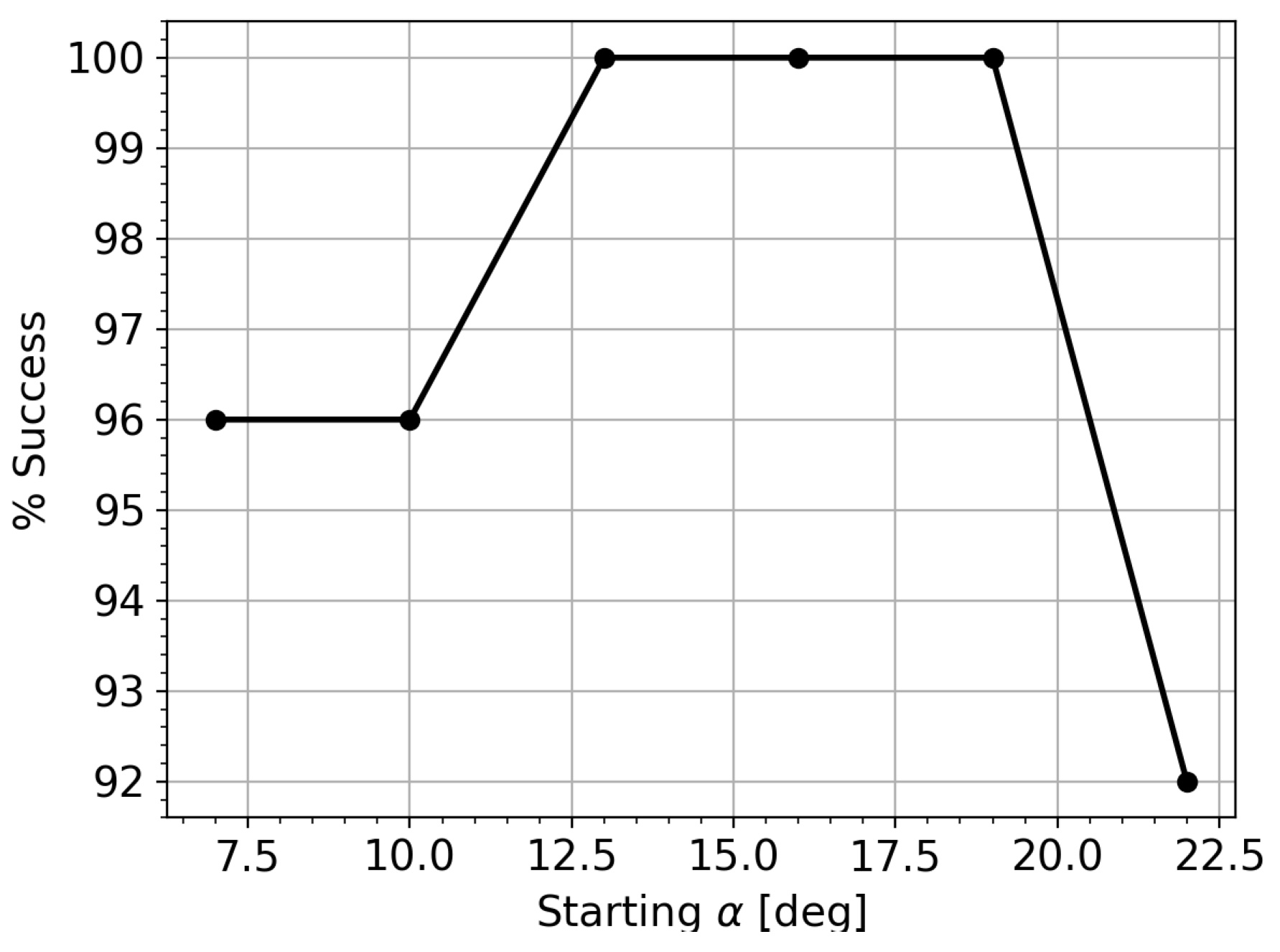

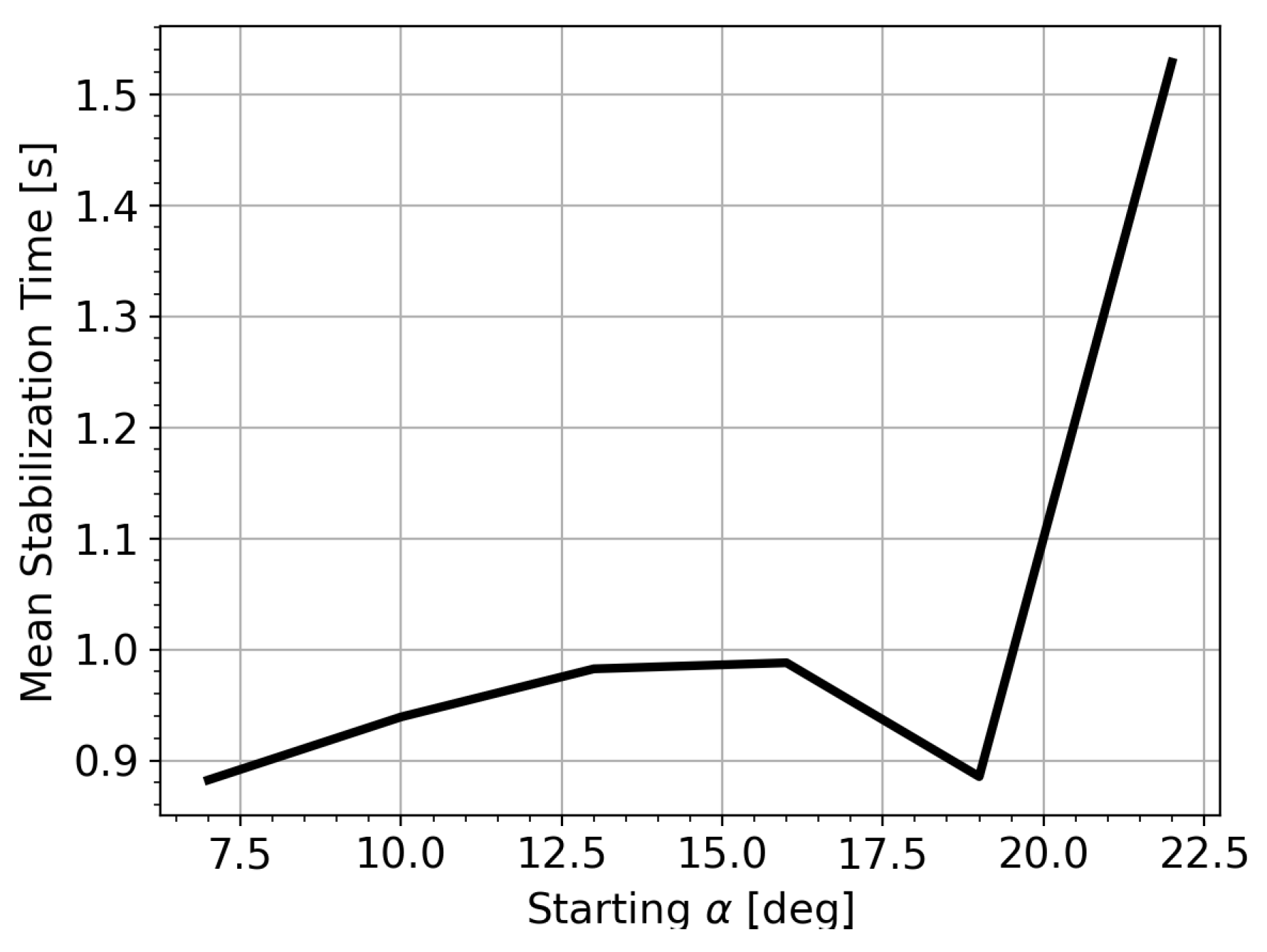

3.2. Test and Discussion

4. Alignment Task with Power Control

| Action ID | Command | Description |
|---|---|---|
| 0 | Increase launcher angle | |
| 1 | Decrease launcher angle | |
| 2 | Increase injected power | |
| 3 | Decrease injected power | |
| 4 | / | No changes in power and angle |
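With power control, each action changes either the launcher angle or the injected power by one fixed step, or leaves both unchanged. A minimal Python sketch of this transition follows, assuming hypothetical step sizes, units (degrees and MW), and bounds that are not specified in this section; `LauncherState` and `ACTION_DELTAS` are illustrative names, not the authors' code.

```python
from dataclasses import dataclass

# Hypothetical increments, units and bounds (placeholders, not values from the paper).
ANGLE_STEP_DEG = 0.5
POWER_STEP_MW = 0.1
ANGLE_LIMITS_DEG = (-10.0, 10.0)
POWER_LIMITS_MW = (0.0, 2.0)

# (angle increment, power increment) for each action ID in the table above.
ACTION_DELTAS = {
    0: (+ANGLE_STEP_DEG, 0.0),  # increase launcher angle
    1: (-ANGLE_STEP_DEG, 0.0),  # decrease launcher angle
    2: (0.0, +POWER_STEP_MW),   # increase injected power
    3: (0.0, -POWER_STEP_MW),   # decrease injected power
    4: (0.0, 0.0),              # no change in power and angle
}


def _clip(value: float, bounds: tuple) -> float:
    """Clamp value to the closed interval given by bounds."""
    lo, hi = bounds
    return min(max(value, lo), hi)


@dataclass(frozen=True)
class LauncherState:
    angle_deg: float
    power_mw: float

    def step(self, action_id: int) -> "LauncherState":
        """Apply one discrete action and return the new (clipped) launcher state."""
        if action_id not in ACTION_DELTAS:
            raise ValueError(f"unknown action ID: {action_id}")
        d_angle, d_power = ACTION_DELTAS[action_id]
        return LauncherState(
            angle_deg=_clip(self.angle_deg + d_angle, ANGLE_LIMITS_DEG),
            power_mw=_clip(self.power_mw + d_power, POWER_LIMITS_MW),
        )
```

Keeping the increments in a single lookup table lets the action space be changed (for example, with different step sizes) without touching the transition logic.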

4.1. Training

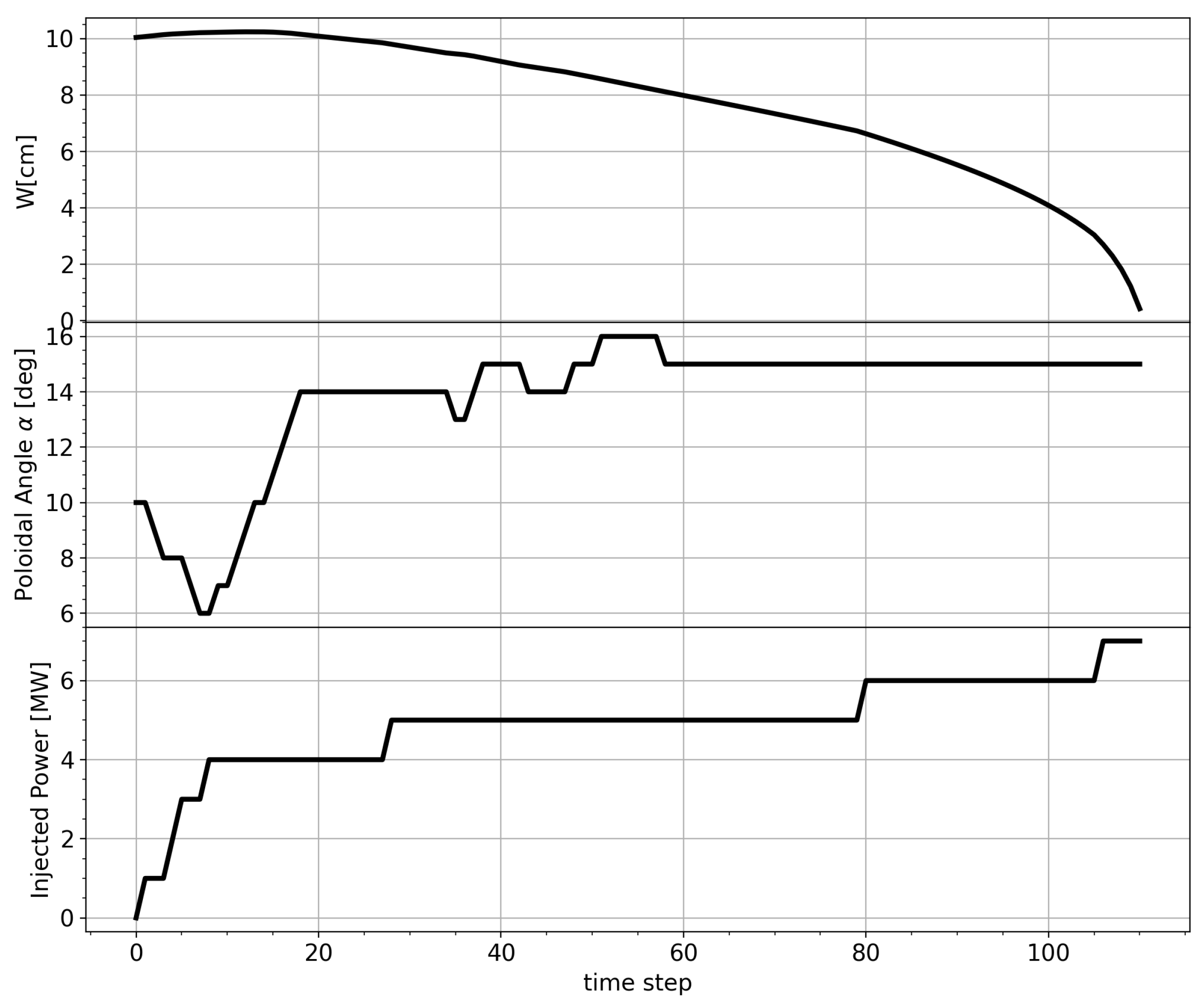

4.2. Test and Discussion

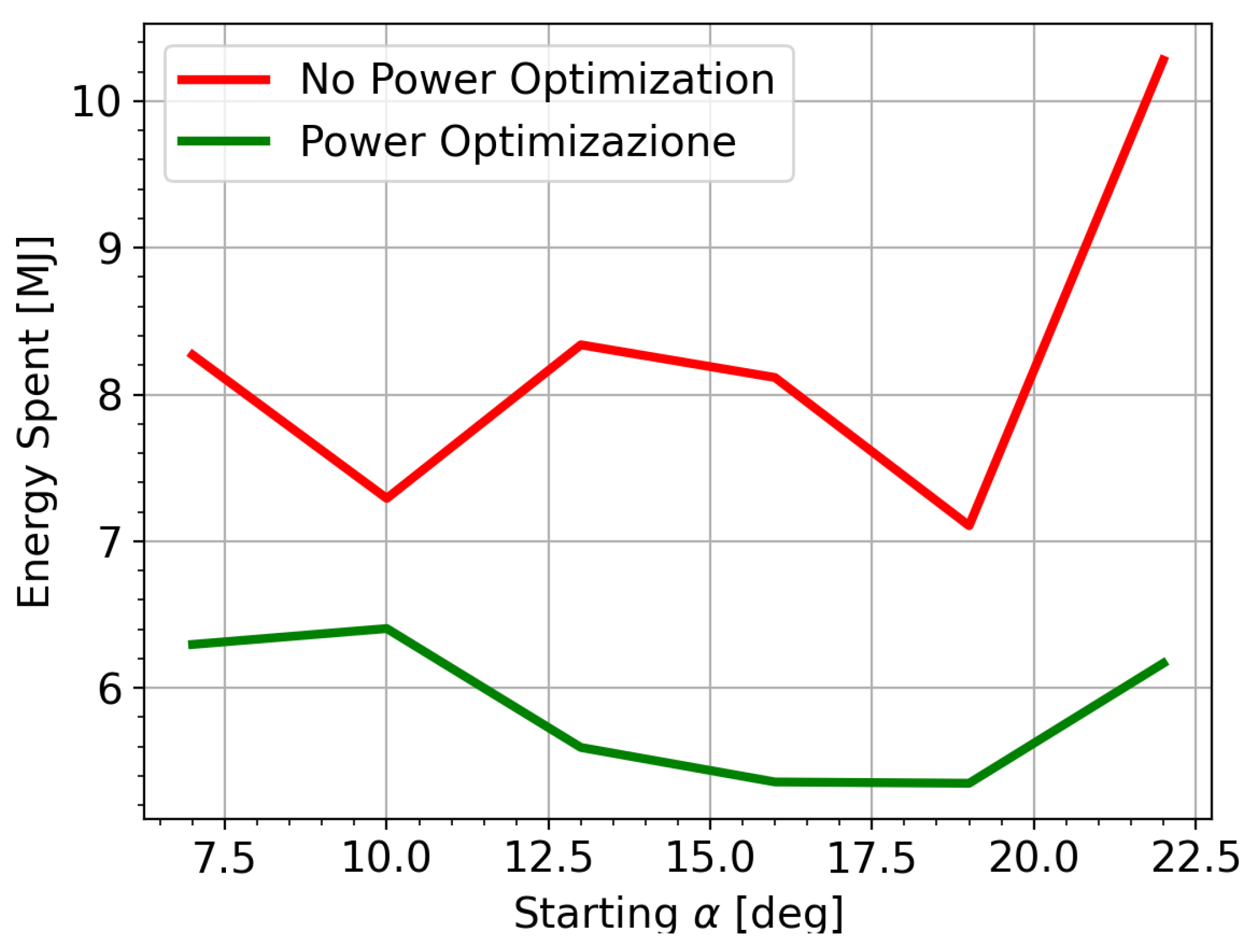

5. Power Optimization

6. Conclusions

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

References


Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).