Submitted: 20 April 2026

Posted: 21 April 2026

Abstract

Keywords:

1. Introduction

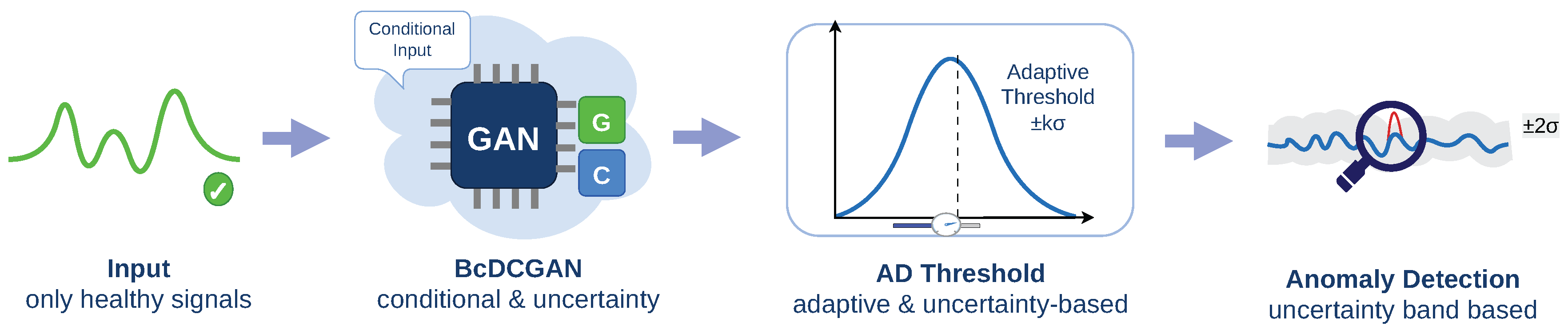

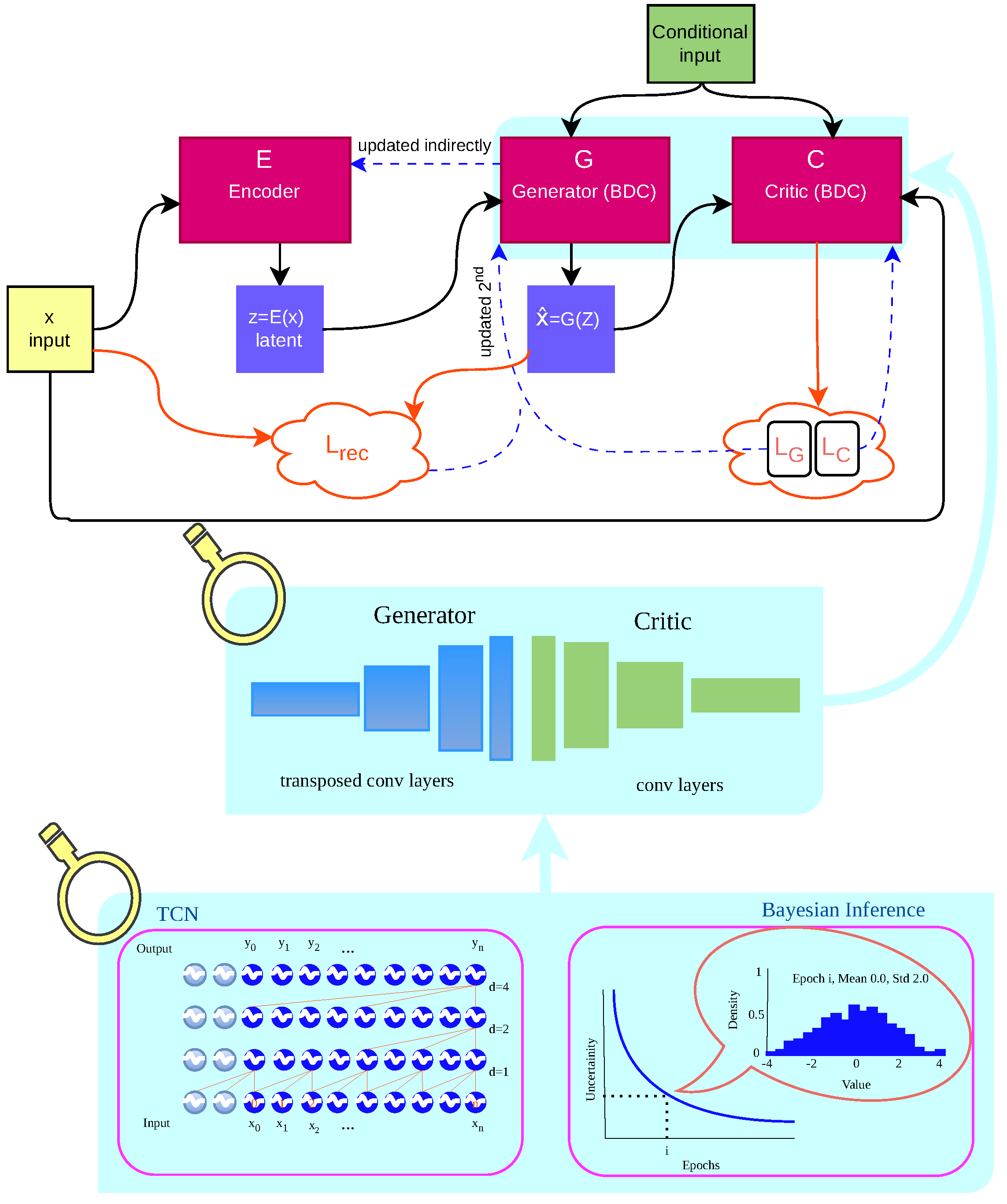

- A Bayesian conditional deep convolutional GAN (BcDCGAN) architecture tailored to multivariate vibration-based time series is proposed, enabling fully unsupervised anomaly detection using only healthy data from prestressed concrete catenary poles.

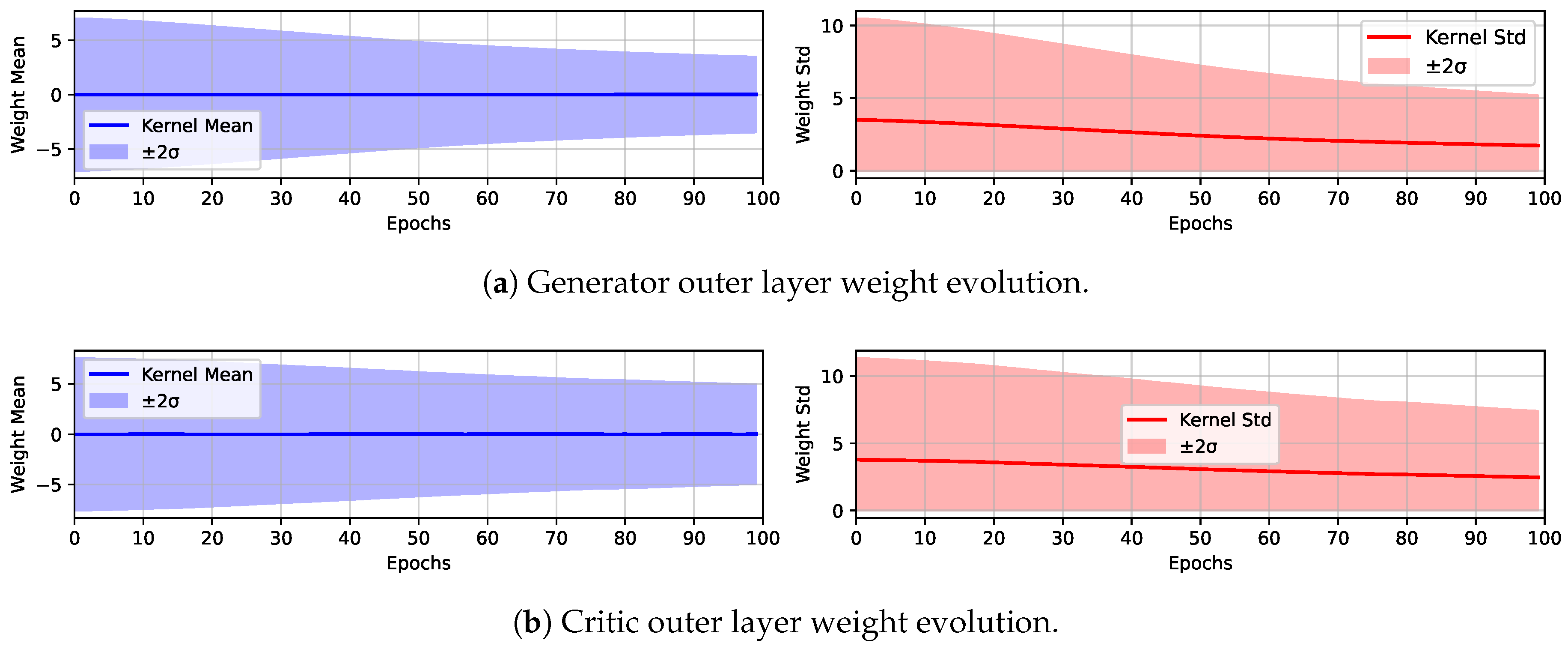

- A variational Bayesian weight distribution is integrated into the generator and critic, yielding epistemic uncertainty estimates that support risk-aware decision making in safety-critical SHM applications.

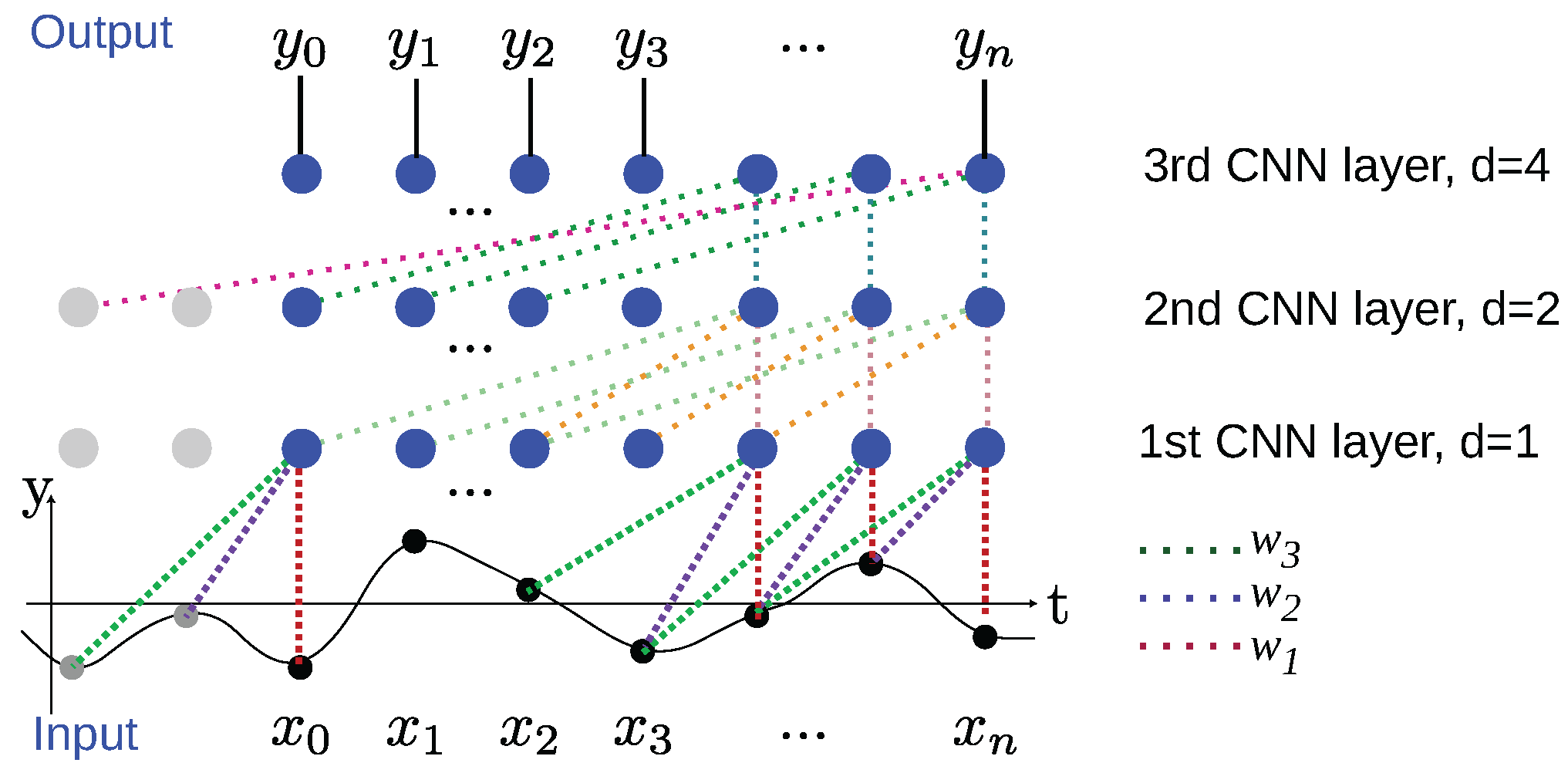

- Temporal convolutional networks with dilated causal convolutions are employed within the generator and the critic to capture long-range temporal dependencies and handle non-stationary operating conditions.

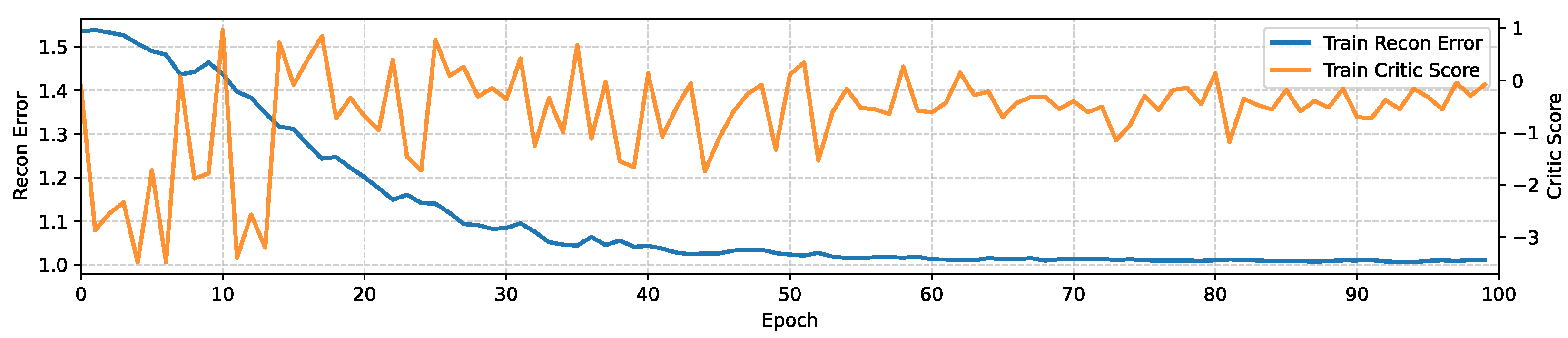

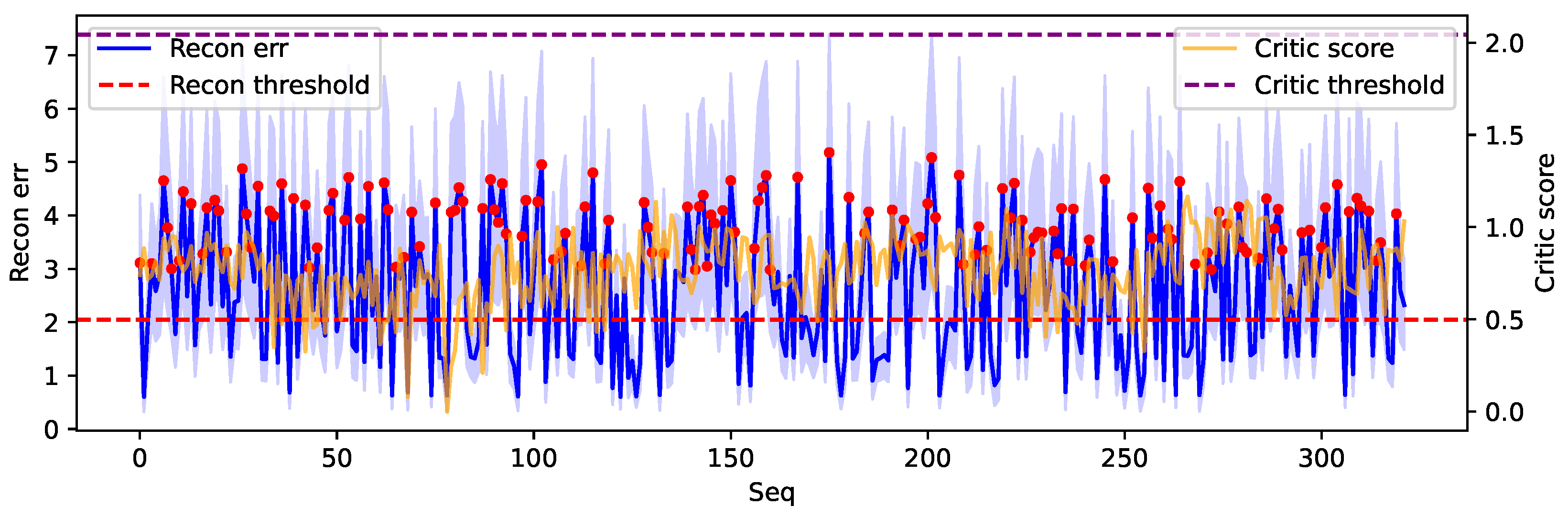

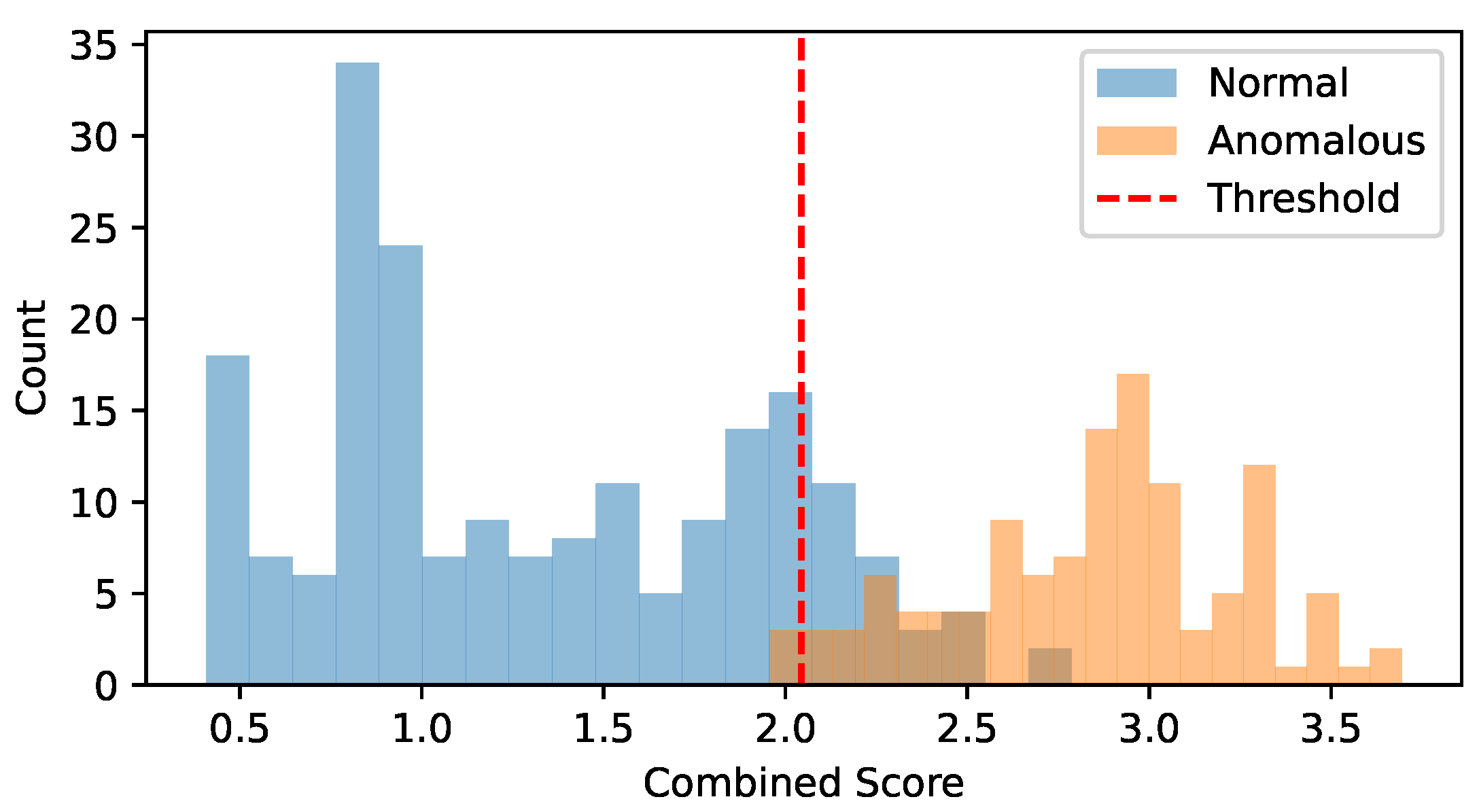

- An adaptive Bayesian anomaly scoring and thresholding scheme is introduced that combines normalized reconstruction error, critic score, and epistemic uncertainty into a single score. The decision threshold is calibrated using validation data for practical deployment.

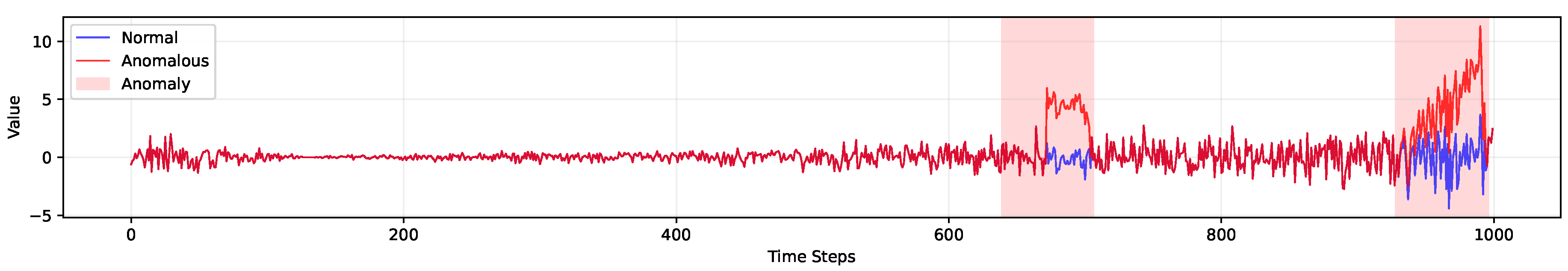

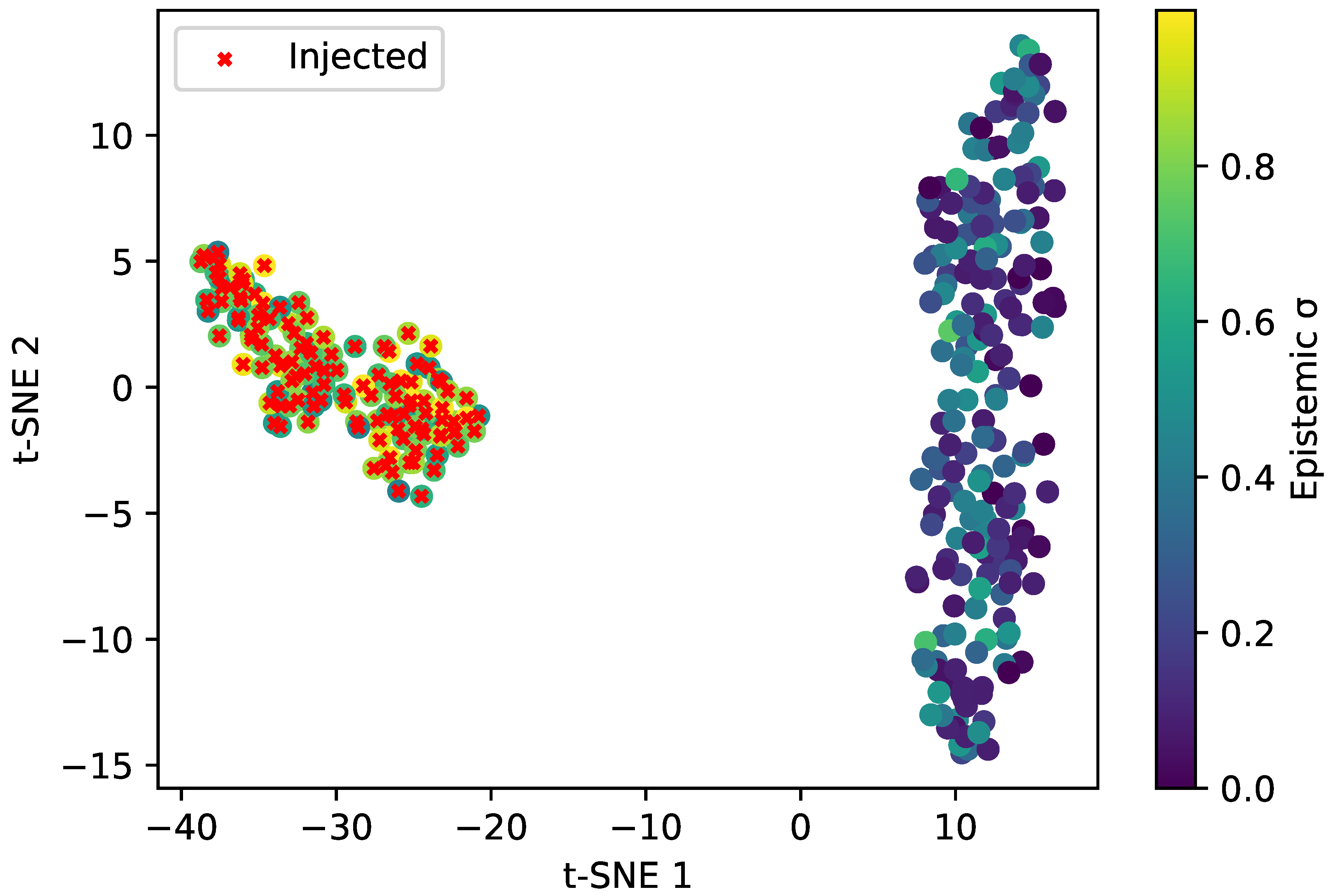

- The effectiveness of the proposed framework is demonstrated on a real catenary-pole SHM dataset with injected anomalies, showing high recall and clear separation between normal and anomalous signals in both latent representations and uncertainty measures.
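
The scoring and thresholding contribution listed above can be illustrated with a minimal sketch. Everything specific here is an assumption made for illustration and is not taken from the paper: the function names `anomaly_score` and `calibrate_threshold`, the `log1p` form of the log-space fusion, normalization by healthy validation statistics, the convention that the critic scores realistic windows higher, the equal default weights, and the 99th-percentile threshold.

```python
import numpy as np

def anomaly_score(rec_err, unc, critic, ref, weights=(1.0, 1.0, 1.0)):
    """Weighted log-space fusion of three per-window signals (illustrative form).

    rec_err, unc, critic: 1-D sequences with one value per window.
    ref: dict holding healthy validation arrays under the same three keys.
    """
    w_rec, w_unc, w_crit = weights
    eps = 1e-12
    # Normalize each signal by its healthy mean, then compress with log1p.
    rec = np.log1p(np.asarray(rec_err, float) / (np.mean(ref["rec_err"]) + eps))
    u = np.log1p(np.asarray(unc, float) / (np.mean(ref["unc"]) + eps))
    # Assumption: the critic rates realistic windows higher, so a drop below
    # the healthy mean is treated as evidence of an anomaly.
    deficit = np.maximum(0.0, np.mean(ref["critic"]) - np.asarray(critic, float))
    c = np.log1p(deficit / (np.std(ref["critic"]) + eps))
    return w_rec * rec + w_unc * u + w_crit * c

def calibrate_threshold(val_scores, percentile=99.0):
    """Set the decision threshold from scores of healthy validation windows."""
    return float(np.percentile(val_scores, percentile))

# Synthetic stand-ins for healthy validation signals (not real SHM data).
rng = np.random.default_rng(0)
ref = {
    "rec_err": rng.gamma(2.0, 0.05, 500),
    "unc": rng.gamma(2.0, 0.01, 500),
    "critic": rng.normal(1.0, 0.1, 500),
}
val_scores = anomaly_score(ref["rec_err"], ref["unc"], ref["critic"], ref)
tau = calibrate_threshold(val_scores)

# One typical window and one window that is far off on all three signals.
test_scores = anomaly_score([0.1, 1.5], [0.02, 0.3], [1.0, -0.5], ref)
flags = test_scores > tau
```

Calibrating on healthy validation windows only keeps the scheme unsupervised: the percentile fixes the expected false-alarm rate on healthy data without requiring any labeled anomalies.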

2. Literature Review

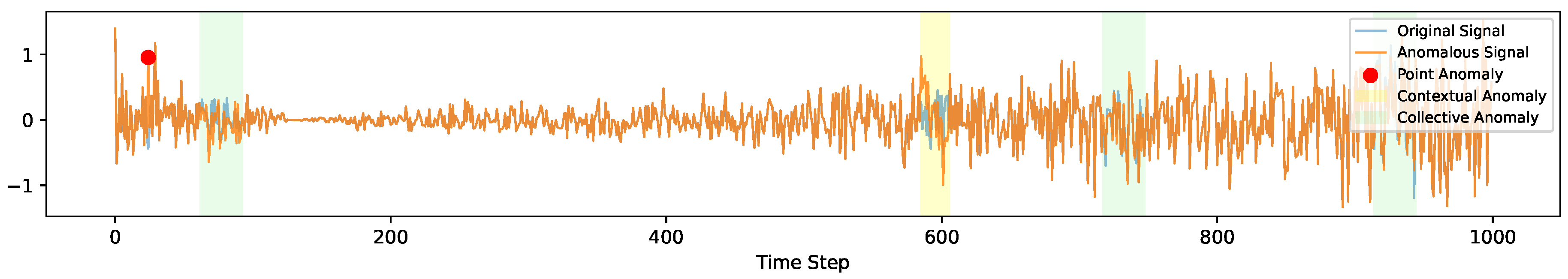

2.1. Time Series Anomalies

2.2. Traditional Anomaly Detection Limitations

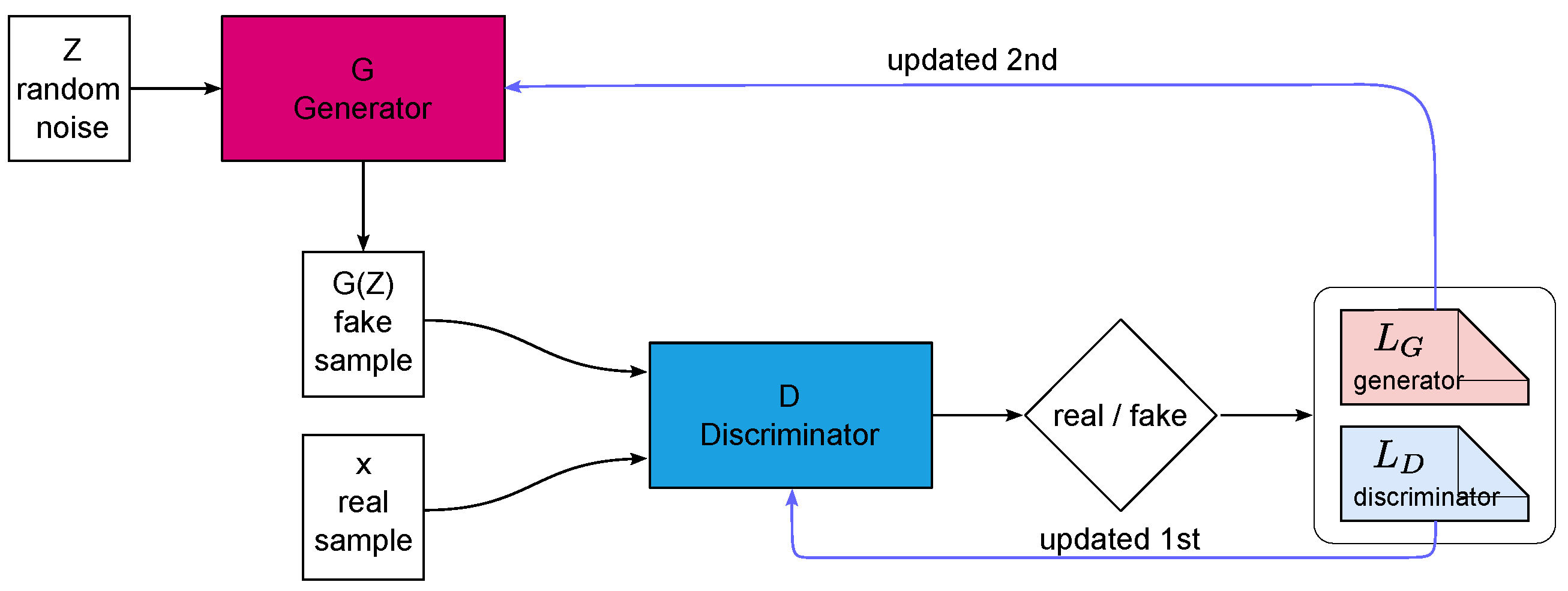

2.3. Generative Adversarial Network

| Symbol | Description |
|---|---|
| $x$ | real data sample from the true data distribution |
| $z$ | latent noise vector drawn from the prior |
| $D$ | discriminator network (outputs the probability that its input is real) |
| $G$ | generator network (maps noise to fake data) |
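
For orientation, the standard GAN minimax objective of Goodfellow et al. is reproduced below, writing $D$ for the discriminator and $G$ for the generator. The distribution names $p_{\text{data}}$ and $p_z$ are the conventional ones and are an assumption here, since the paper's own notation is not visible in this section.

```latex
\min_{G} \max_{D} \; V(D, G)
  = \mathbb{E}_{x \sim p_{\text{data}}(x)}\big[\log D(x)\big]
  + \mathbb{E}_{z \sim p_{z}(z)}\big[\log\big(1 - D(G(z))\big)\big]
```

The discriminator maximizes $V$ by assigning high probability to real samples and low probability to generated ones, while the generator minimizes it by producing samples that the discriminator accepts as real.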

2.4. GAN-Based Anomaly Detection Approaches

| Method | How It Works | Strength | Limitation |
|---|---|---|---|
| TAnoGAN | GAN with LSTM; uses reconstruction errors | Models temporal trends | Sensitive to tuning |
| DCGAN+Bi-LSTM | DCGAN and Bi-LSTM for spatial-temporal data | Accurate for sequences | Computationally heavy |
| BiGAN | Joint training of encoder, generator, and discriminator | Precise reconstruction | Overfitting risk |

2.5. Anomaly Detection Metrics

3. Motivation

4. Methodology

4.1. Bayesian Inference
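
As a reference point for the ELBO-based regularization used later in the methodology, the evidence lower bound of variational inference is commonly written as below. The symbols are assumptions chosen for illustration: $w$ for the network weights, $p(w)$ for the prior, $q_\phi(w)$ for the variational posterior, and $\mathcal{D}$ for the training data. The second line gives the closed-form KL term for a single weight under the additional assumption that both posterior and prior are univariate Gaussians.

```latex
\log p(\mathcal{D}) \;\ge\; \mathrm{ELBO}(\phi)
  = \mathbb{E}_{q_\phi(w)}\big[\log p(\mathcal{D} \mid w)\big]
  - \mathrm{KL}\big(q_\phi(w) \,\|\, p(w)\big),
\qquad
\mathcal{L}_{\mathrm{ELBO}} = -\,\mathrm{ELBO}(\phi)

\mathrm{KL}\big(\mathcal{N}(\mu, \sigma^2) \,\|\, \mathcal{N}(\mu_0, \sigma_0^2)\big)
  = \log\frac{\sigma_0}{\sigma}
  + \frac{\sigma^2 + (\mu - \mu_0)^2}{2\sigma_0^2}
  - \frac{1}{2}
```

Minimizing the negative ELBO therefore trades data fit against the distance between the learned weight distribution and the prior.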

4.2. Temporal Convolutional Networks
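
The dilated causal convolution named in the contributions can be sketched in a few lines of NumPy. This is a single-channel illustration under stated assumptions, not the paper's layer: the function names are hypothetical, and the receptive-field formula assumes one convolution per layer with a shared kernel size.

```python
import numpy as np

def causal_dilated_conv1d(x, kernel, dilation=1):
    """Compute y[t] = sum_k kernel[k] * x[t - k * dilation], with zeros before t = 0."""
    x = np.asarray(x, dtype=float)
    kernel = np.asarray(kernel, dtype=float)
    pad = (len(kernel) - 1) * dilation
    # Pad on the left only, so no output can depend on future samples.
    padded = np.concatenate([np.zeros(pad), x])
    y = np.zeros_like(x)
    for k, w in enumerate(kernel):
        start = pad - k * dilation
        y += w * padded[start:start + len(x)]
    return y

def receptive_field(kernel_size, dilations):
    """Receptive field of stacked causal layers, one convolution per layer."""
    return 1 + (kernel_size - 1) * sum(dilations)

x = np.array([1.0, 2.0, 3.0, 4.0, 5.0])
y = causal_dilated_conv1d(x, kernel=[1.0, 1.0], dilation=2)  # y[t] = x[t] + x[t-2]
rf = receptive_field(kernel_size=2, dilations=[1, 2, 4, 8])
```

Doubling the dilation at each layer makes the receptive field grow exponentially with depth, which is how such a stack covers long-range dependencies with few parameters.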

4.3. Proposed Bayesian Conditional Deep Convolutional GAN Anomaly Detection Architecture

| Symbol | Description |
|---|---|
| $x$ | real data sample from the true data distribution |
| $z$ | latent vector drawn from the prior |
| $C$ | critic network |
| $G$ | generator network |

| Symbol | Description |
|---|---|
| | critic loss |
| | generator loss |
| | reconstruction loss |
| | total generator loss |
| | reconstruction loss weight, defined based on the current and total number of epochs |
| | factor that gradually increases the strength of the ELBO regularization |
| | ELBO loss, which is the negative of the ELBO defined in Equation 3 |

4.4. Adaptive Threshold

5. Case Study

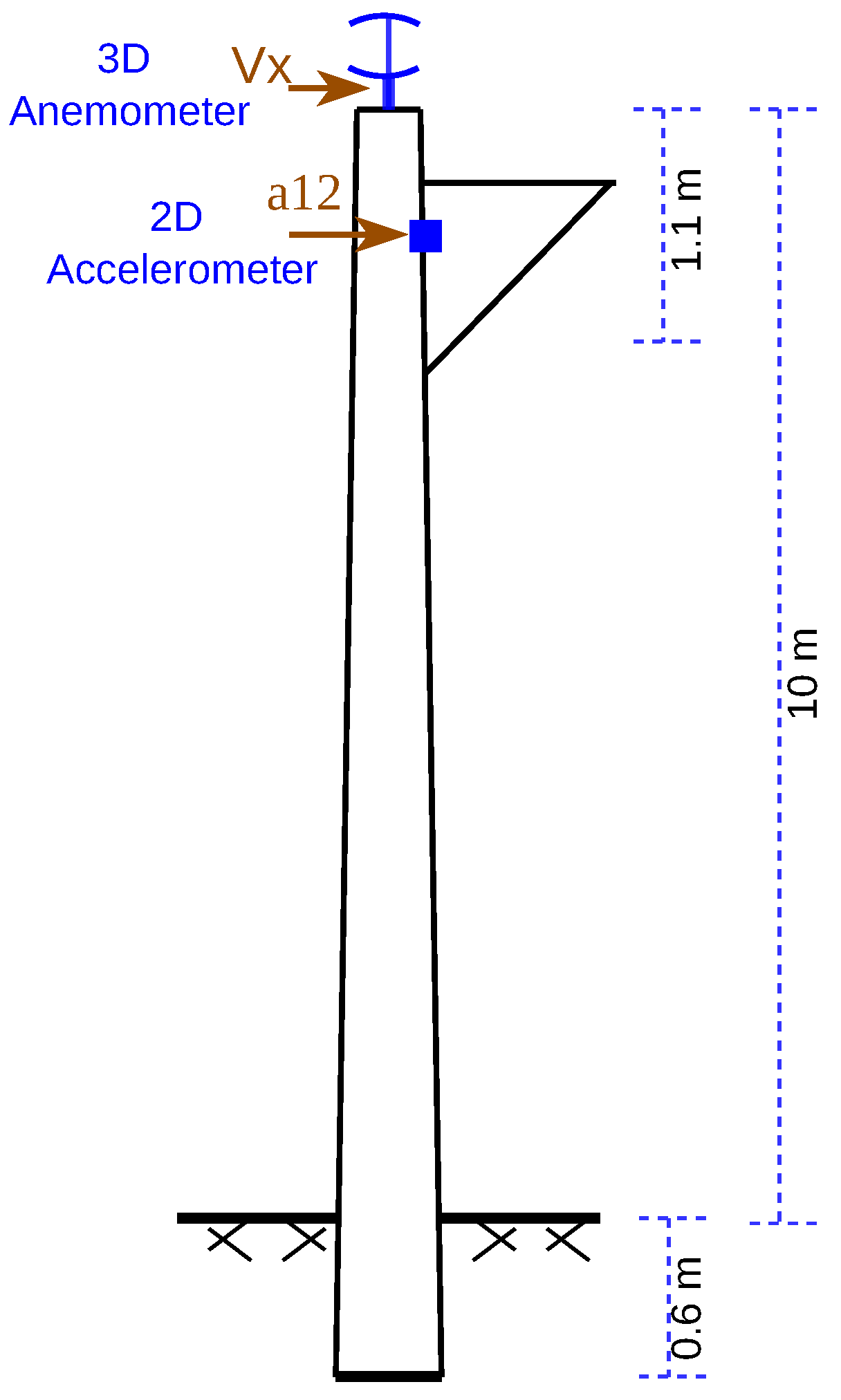

5.1. Dataset

5.2. Anomaly Injection

5.3. Model Training

5.4. Latent Space Analysis

5.5. Validation-Data-Based Threshold and Anomaly Detection

5.6. Kullback-Leibler Divergence

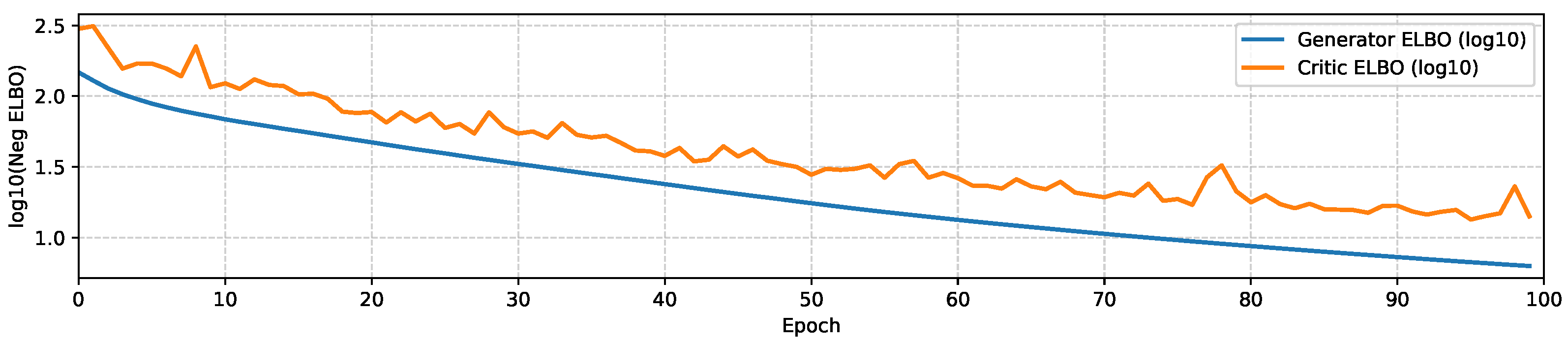

5.7. Posterior Uncertainty Monte Carlo
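
Monte Carlo estimation of epistemic uncertainty can be illustrated with a toy Bayesian linear layer. This is a sketch under stated assumptions rather than the paper's model: the function name `mc_predict`, the factorized Gaussian weight posterior given by `w_mu` and `w_sigma`, the default of 50 passes, and the use of output variance averaged over output dimensions are all chosen for illustration.

```python
import numpy as np

def mc_predict(x, w_mu, w_sigma, n_passes=50, seed=0):
    """Run repeated stochastic forward passes and summarize their spread.

    x: (batch, in_dim) inputs; w_mu, w_sigma: (in_dim, out_dim) posterior parameters.
    Returns the mean prediction and one epistemic-uncertainty value per input.
    """
    rng = np.random.default_rng(seed)
    x = np.asarray(x, dtype=float)
    outputs = []
    for _ in range(n_passes):
        # Draw one weight sample from the Gaussian posterior (reparameterized form).
        w = w_mu + w_sigma * rng.standard_normal(w_mu.shape)
        outputs.append(x @ w)
    outputs = np.stack(outputs)                     # (n_passes, batch, out_dim)
    mean = outputs.mean(axis=0)
    epistemic = outputs.var(axis=0).mean(axis=-1)   # variance across passes
    return mean, epistemic

w_mu = np.ones((3, 2))
w_sigma = 0.1 * np.ones((3, 2))
x = np.array([[1.0, 1.0, 1.0], [10.0, 10.0, 10.0]])
mean, unc = mc_predict(x, w_mu, w_sigma)
```

Inputs on which the sampled networks disagree receive a larger variance, which is the quantity that an uncertainty-aware anomaly score can draw on.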

5.8. Consistency of Results with Theoretical Expectations

6. Conclusion

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

References

- Usmani, U.A.; Aziz, I.A.; Jaafar, J.; Watada, J. Deep Learning for Anomaly Detection in Time-Series Data: An Analysis of Techniques, Review of Applications, and Guidelines for Future Research. IEEE Access 2024.

- Zamanzadeh Darban, Z.; Webb, G.I.; Pan, S.; Aggarwal, C.; Salehi, M. Deep learning for time series anomaly detection: A survey. ACM Computing Surveys 2024, 57, 1–42.

- Blázquez-García, A.; Conde, A.; Mori, U.; Lozano, J.A. A review on outlier/anomaly detection in time series data. ACM Computing Surveys (CSUR) 2021, 54, 1–33.

- Geiger, A.; Liu, D.; Alnegheimish, S.; Cuesta-Infante, A.; Veeramachaneni, K. TadGAN: Time series anomaly detection using generative adversarial networks. In Proceedings of the 2020 IEEE International Conference on Big Data (Big Data); IEEE, 2020; pp. 33–43.

- Smuha, N.A. Regulation 2024/1689 of the Eur. Parl. & Council of June 13, 2024 (EU Artificial Intelligence Act). International Legal Materials 2025, 1–148.

- Schlegl, T.; Seeböck, P.; Waldstein, S.M.; Langs, G.; Schmidt-Erfurth, U. f-AnoGAN: Fast unsupervised anomaly detection with generative adversarial networks. Medical Image Analysis 2019, 54, 30–44.

- Liang, H.; Song, L.; Wang, J.; Guo, L.; Li, X.; Liang, J. Robust unsupervised anomaly detection via multi-time scale DCGANs with forgetting mechanism for industrial multivariate time series. Neurocomputing 2021, 423, 444–462.

- Guigou, F.; Collet, P.; Parrend, P. SCHEDA: Lightweight euclidean-like heuristics for anomaly detection in periodic time series. Applied Soft Computing 2019, 82, 105594.

- Alkam, F. Vibration-based Monitoring of Concrete Catenary Poles using Bayesian Inference. PhD thesis, Bauhaus-Universität Weimar, Weimar, 2021.

- Lee, C.K.; Cheon, Y.J.; Hwang, W.Y. Studies on the GAN-Based Anomaly Detection Methods for the Time Series Data. IEEE Access 2021, 9, 73201–73215.

- Goodfellow, I.; Pouget-Abadie, J.; Mirza, M.; Xu, B.; Warde-Farley, D.; Ozair, S.; Courville, A.; Bengio, Y. Generative adversarial networks. Communications of the ACM 2020, 63, 139–144.

- Bashar, M.A.; Nayak, R. ALGAN: Time Series Anomaly Detection with Adjusted-LSTM GAN. International Journal of Data Science and Analytics 2025, 20, 5719–5737.

- Bashar, M.A.; Nayak, R. TAnoGAN: Time Series Anomaly Detection with Generative Adversarial Networks. In Proceedings of the 2020 IEEE Symposium Series on Computational Intelligence (SSCI); IEEE, 2020; pp. 1778–1785.

- Tien, T.B.; et al. Time series data recovery in SHM of large-scale bridges: Leveraging GAN and Bi-LSTM networks. Structures 2024, 63.

- Zhang, D.; Ma, M.; Xia, L. A comprehensive review on GANs for time-series signals. Neural Computing and Applications 2022, 34, 3551–3571.

- Zenati, H.; Foo, C.S.; Lecouat, B.; Manek, G.; Chandrasekhar, V.R. Efficient GAN-based anomaly detection. arXiv 2018, arXiv:1802.06222.

- Li, H.; Li, Y. Anomaly detection methods based on GAN: A survey. Applied Intelligence 2023, 53, 8209–8231.

- Blei, D.M.; Kucukelbir, A.; McAuliffe, J.D. Variational inference: A review for statisticians. Journal of the American Statistical Association 2017, 112, 859–877.

- Nalisnick, E.; Matsukawa, A.; Teh, Y.W.; Gorur, D.; Lakshminarayanan, B. Do deep generative models know what they don’t know? arXiv 2018, arXiv:1810.09136.

- Kingma, D.P.; Welling, M. Auto-encoding variational Bayes. arXiv 2013, arXiv:1312.6114.

- Murphy, K.P. Probabilistic Machine Learning: An Introduction; MIT Press, 2022.

- Lara-Benítez, P.; Carranza-García, M.; Luna-Romera, J.M.; Riquelme, J.C. Temporal convolutional networks applied to energy-related time series forecasting. Applied Sciences 2020, 10, 2322.

- Akcay, S.; Atapour-Abarghouei, A.; Breckon, T.P. GANomaly: Semi-supervised anomaly detection via adversarial training. In Proceedings of the Asian Conference on Computer Vision; Springer, 2018; pp. 622–637.

- Park, S.; Lee, K.H.; Ko, B.; Kim, N. Unsupervised anomaly detection with generative adversarial networks in mammography. Scientific Reports 2023, 13, 2925.

- Van der Maaten, L.; Hinton, G. Visualizing data using t-SNE. Journal of Machine Learning Research 2008, 9.

- Blundell, C.; Cornebise, J.; Kavukcuoglu, K.; Wierstra, D. Weight uncertainty in neural networks. In Proceedings of the International Conference on Machine Learning; PMLR, 2015; pp. 1613–1622.

- Gal, Y.; Ghahramani, Z. Dropout as a Bayesian approximation: Representing model uncertainty in deep learning. In Proceedings of the International Conference on Machine Learning; PMLR, 2016; pp. 1050–1059.

| Strategy/Metric | Description |
|---|---|
| Reconstruction Error | MSE or similar between input and reconstruction |
| Discriminator Score | Confidence near 0.5 indicates uncertainty |
| Combined Scoring | Fusion of residual and discriminative signals |
| Thresholding Approaches | Fixed, percentile, or |
| Recall | TP / (TP + FN) on injected anomalies |
| Precision, F1, and F2 | Secondary when false positives are quantifiable |
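
The recall, precision, and F-beta entries in the table above can be computed from binary labels as in the short sketch below. The function name `detection_metrics` and the 0/1 label encoding (1 for anomalous) are assumptions made for illustration. F1 and F2 are the `beta=1` and `beta=2` cases of the standard F-beta formula.

```python
def detection_metrics(y_true, y_pred, beta=2.0):
    """Recall, precision, and F-beta for binary labels (1 = anomaly, 0 = normal)."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    b2 = beta ** 2
    denom = b2 * precision + recall
    f_beta = (1 + b2) * precision * recall / denom if denom else 0.0
    return {"recall": recall, "precision": precision, "f_beta": f_beta}

# Four injected anomalies, all detected, plus two false alarms.
y_true = [1, 1, 1, 0, 0, 0, 0, 1]
y_pred = [1, 1, 1, 1, 1, 0, 0, 1]
m1 = detection_metrics(y_true, y_pred, beta=1.0)
m2 = detection_metrics(y_true, y_pred, beta=2.0)
```

With `beta=2`, recall is weighted more heavily than precision, which suits settings where a missed anomaly costs more than a false alarm.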

| Category | Parameter | Description |
|---|---|---|
| Training setup | | Total number of training epochs. |
| | | Number of critic updates per generator/encoder update. |
| Optimizers | Adam (G, E) | Optimizer and hyperparameters for generator and encoder. |
| | Adam (C) | Optimizer and hyperparameters for critic. |
| Bayesian prior | | Mean of the Gaussian prior for Bayesian TCN weights. |
| | | Standard deviation of the Gaussian prior for Bayesian TCN weights. |
| ELBO regularization | | Target weight on the ELBO-based regularization term. |
| Uncertainty | | Number of Monte Carlo forward passes per input to estimate epistemic uncertainty. |
| Anomaly scoring | | Weights for (reconstruction, uncertainty, critic) in the combined log-space anomaly score. |
| Thresholding | | Adaptive validation-based anomaly threshold. |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).