Submitted: 20 April 2026

Posted: 20 April 2026

Abstract

Keywords:

1. Introduction

1. Distinguish correct from incorrect (altered) movement signals using simple and interpretable measures;

2. Provide explainable output on why a movement is altered, using the same set of measures;

3. Identify smaller sensor sets that are more suitable for wearable system deployment;

4. Test whether this approach can support a simulated real-time corrective-feedback setting.

2. Materials and Methods

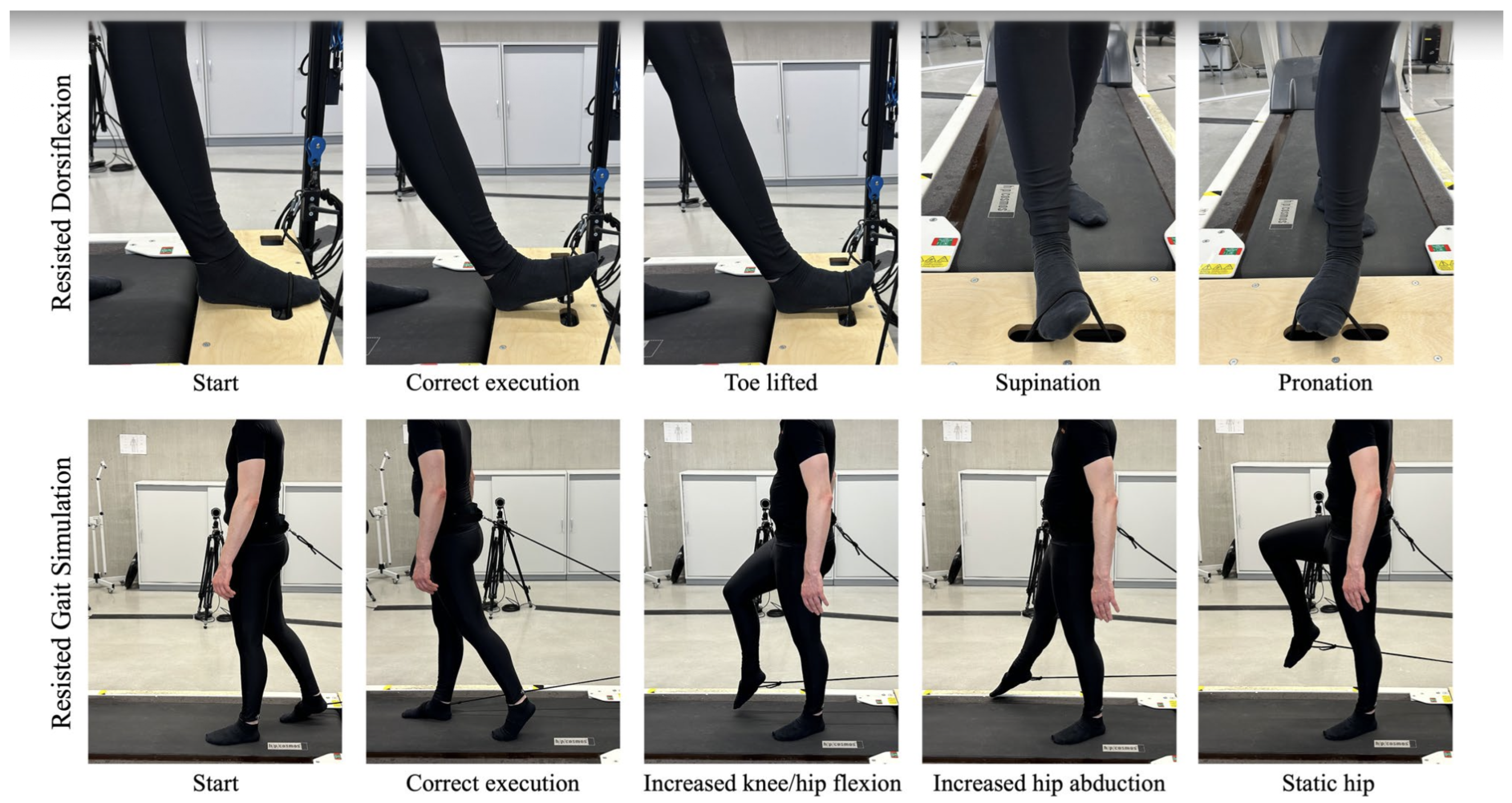

2.1. Dataset and Tasks

2.2. Feature Extraction

- mean angular speed

- RMS angular speed

- peak angular speed

- RMS angular acceleration

- rotational range.
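As an illustration of the five measures listed above, the following minimal sketch computes them for one body segment from a gyroscope-style angular velocity series. Several details are assumptions made only for this example and are not taken from the study: the function name `rotational_features`, the input layout (a list of `(wx, wy, wz)` tuples in rad/s), the finite-difference estimate of angular acceleration, and the definition of rotational range as the largest per-axis excursion of the integrated angle.

```python
import math


def rotational_features(gyro, fs):
    """Compute five rotational measures for one segment.

    gyro: list of (wx, wy, wz) angular velocity samples in rad/s (assumed layout).
    fs:   sampling rate in Hz.
    """
    if len(gyro) < 2:
        raise ValueError("need at least two samples")
    dt = 1.0 / fs

    # Angular speed: Euclidean norm of the angular velocity vector.
    speed = [math.sqrt(wx * wx + wy * wy + wz * wz) for wx, wy, wz in gyro]

    # Angular acceleration magnitude via first-order finite differences (assumption).
    accel = [math.dist(b, a) / dt for a, b in zip(gyro, gyro[1:])]

    # Rotational range (assumed definition): integrate each axis with a
    # rectangular rule and keep the largest max-minus-min excursion.
    excursions = []
    for axis in range(3):
        angle = lo = hi = 0.0
        for sample in gyro:
            angle += sample[axis] * dt
            lo, hi = min(lo, angle), max(hi, angle)
        excursions.append(hi - lo)

    def rms(values):
        return math.sqrt(sum(v * v for v in values) / len(values))

    return {
        "mean_angular_speed": sum(speed) / len(speed),
        "rms_angular_speed": rms(speed),
        "peak_angular_speed": max(speed),
        "rms_angular_acceleration": rms(accel),
        "rotational_range": max(excursions),
    }


# Toy input: constant rotation of 1 rad/s about the z axis, 11 samples at 10 Hz.
features = rotational_features([(0.0, 0.0, 1.0)] * 11, fs=10.0)
```

For a constant-rate rotation such as the toy input, the mean, RMS and peak speeds coincide and the RMS angular acceleration is zero, which makes it a convenient sanity check before applying the function to real recordings.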

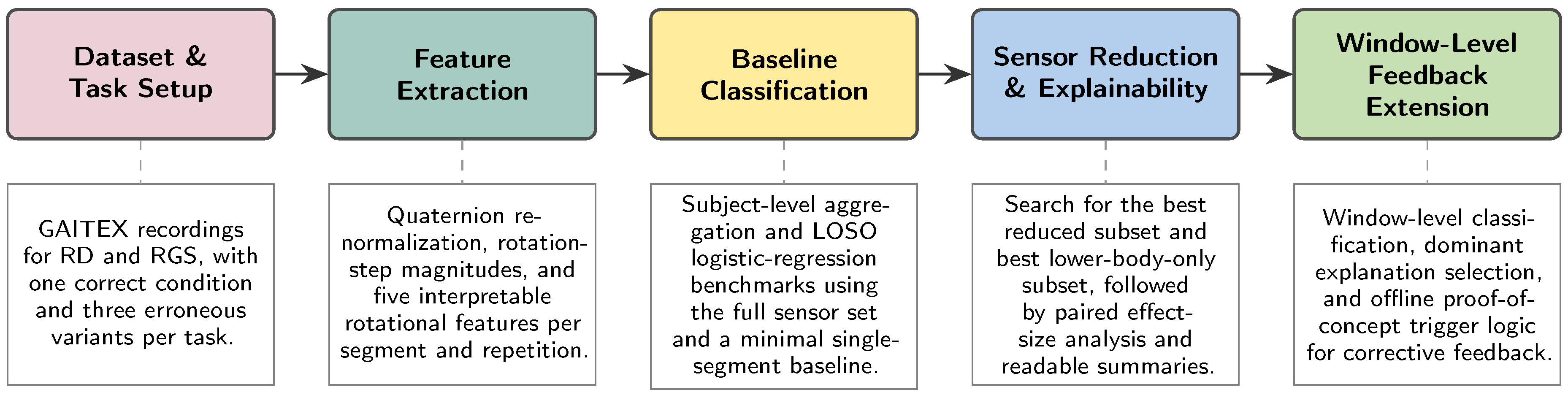

2.3. ML Pipeline

2.4. Baseline Benchmarks

2.5. Sensor Configuration Analysis

1. built a subject-level wide feature table from the selected segments;

2. trained a binary logistic-regression model with median imputation, z-score standardization, the liblinear solver, and max_iter = 5000;

3. evaluated LOSO accuracy and balanced accuracy.
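The three steps above can be sketched with scikit-learn as follows. This is a sketch under stated assumptions rather than the authors' code: the function name `loso_scores`, the synthetic feature table, and the choice to pool held-out predictions across folds before scoring (instead of averaging per-fold scores) are all illustrative. Only the model settings (median imputation, z-score standardization, liblinear solver, `max_iter=5000`) come from the text.

```python
import numpy as np
from sklearn.impute import SimpleImputer
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import accuracy_score, balanced_accuracy_score
from sklearn.model_selection import LeaveOneGroupOut, cross_val_predict
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler


def loso_scores(X, y, subjects):
    """Leave-one-subject-out accuracy and balanced accuracy.

    X: (n_rows, n_features) wide feature table, NaN allowed.
    y: binary labels (0 = correct, 1 = altered; assumed coding).
    subjects: subject identifier per row, used as the LOSO group.
    """
    # Imputer and scaler sit inside the pipeline, so they are refit on the
    # training subjects of every fold and never see the held-out subject.
    model = make_pipeline(
        SimpleImputer(strategy="median"),
        StandardScaler(),
        LogisticRegression(solver="liblinear", max_iter=5000),
    )
    y_pred = cross_val_predict(
        model, X, y, groups=subjects, cv=LeaveOneGroupOut()
    )
    # Assumption: predictions are pooled over folds before scoring.
    return accuracy_score(y, y_pred), balanced_accuracy_score(y, y_pred)


# Synthetic, well-separated toy data: 6 subjects, one row per class each.
rng = np.random.default_rng(0)
subjects = np.repeat(np.arange(6), 2)
y = np.tile([0, 1], 6)
X = rng.normal(size=(12, 4)) + 5.0 * y[:, None]
X[0, 1] = np.nan  # exercise the median imputation step
acc, bal_acc = loso_scores(X, y, subjects)
```

Keeping the preprocessing inside the pipeline matters for small cohorts: fitting the imputer or scaler on all subjects before splitting would leak information from the held-out subject into training.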

- an unconstrained analysis across all available segments;

- a lower-body-only analysis including feet, lower legs, upper legs, and pelvis.
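The two analyses listed above differ only in which segments are eligible for selection. One way to picture this is an exhaustive search over all subsets of size k, sketched below. The exhaustive strategy, the function names `best_subsets` and `is_lower_body`, and the toy scoring function are assumptions for illustration; in practice `score_fn` would return a cross-validated score for the given segments. The segment names follow the labels used in the result tables.

```python
from itertools import combinations

# Prefixes treated as lower body: feet, lower legs, upper legs, pelvis.
LOWER_BODY_PREFIXES = ("Foot", "LowerLeg", "UpperLeg", "Pelvis")


def is_lower_body(segment):
    """True if the segment label starts with a lower-body prefix."""
    return segment.startswith(LOWER_BODY_PREFIXES)


def best_subsets(segments, score_fn, max_k=4, allowed=None):
    """Return {k: (best_score, best_subset)} for k = 1..max_k.

    score_fn: callable taking a tuple of segment names and returning a
              number where higher is better (hypothetical interface).
    allowed:  optional predicate restricting the candidate pool.
    """
    pool = [s for s in segments if allowed is None or allowed(s)]
    best = {}
    for k in range(1, min(max_k, len(pool)) + 1):
        scored = ((score_fn(subset), subset) for subset in combinations(pool, k))
        # On ties, max keeps the first subset in enumeration order.
        best[k] = max(scored, key=lambda pair: pair[0])
    return best


# Toy scorer: each segment contributes a fixed, made-up amount.
toy_weights = {"Foot Left": 1.0, "Hand Right": 3.0, "LowerLeg Right": 2.0, "Pelvis": 0.5}


def toy_score(subset):
    return sum(toy_weights[s] for s in subset)


segments = list(toy_weights)
unconstrained = best_subsets(segments, toy_score, max_k=2)
lower_body = best_subsets(segments, toy_score, max_k=2, allowed=is_lower_body)
```

An exhaustive search stays cheap for around ten segments and k up to 4, but the number of candidate subsets grows combinatorially, so a greedy or heuristic search may be preferable for larger sensor sets.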

2.6. Explainability and Readable Feedback Layer

2.7. Proof-of-Concept Closed-Loop Extension

- the full sensor set;

- the best reduced subset from the subject-level unconstrained search;

- the best lower-body-only subset from the subject-level constrained search.
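For any of the configurations listed above, a simulated closed-loop evaluation needs a rule that turns per-window predictions into a feedback trigger. The sketch below shows one possible rule and how a trigger rate and a median first-detection point could be derived from it. The rule itself (a probability threshold held for a number of consecutive windows), the parameter values, and the expression of first detection as a fraction of the repetition are assumptions for illustration, not the procedure used in the study.

```python
from statistics import median


def first_trigger_fraction(window_probs, threshold=0.5, consecutive=2):
    """Fraction of the repetition elapsed when the trigger first fires.

    window_probs: per-window probabilities of "altered" for one repetition.
    Returns None if the trigger never fires.
    """
    run = 0
    for i, p in enumerate(window_probs):
        run = run + 1 if p >= threshold else 0
        if run >= consecutive:
            return (i + 1) / len(window_probs)
    return None


def summarize_triggers(repetitions, threshold=0.5, consecutive=2):
    """Trigger rate and median first-detection fraction over repetitions."""
    hits = []
    for probs in repetitions:
        fraction = first_trigger_fraction(probs, threshold, consecutive)
        if fraction is not None:
            hits.append(fraction)
    return {
        "trigger_rate": len(hits) / len(repetitions),
        "median_first_detect": median(hits) if hits else None,
    }


# Toy repetitions with four windows each (made-up probabilities).
reps = [
    [0.1, 0.6, 0.7, 0.2],  # fires at window 3 of 4
    [0.1, 0.2, 0.3, 0.4],  # never fires
    [0.9, 0.9, 0.1, 0.1],  # fires at window 2 of 4
]
summary = summarize_triggers(reps)
```

Requiring several consecutive windows above the threshold trades detection speed for fewer spurious triggers on correct repetitions, which is the kind of trade-off a tuned trigger has to balance.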

3. Results

3.1. Baseline Results

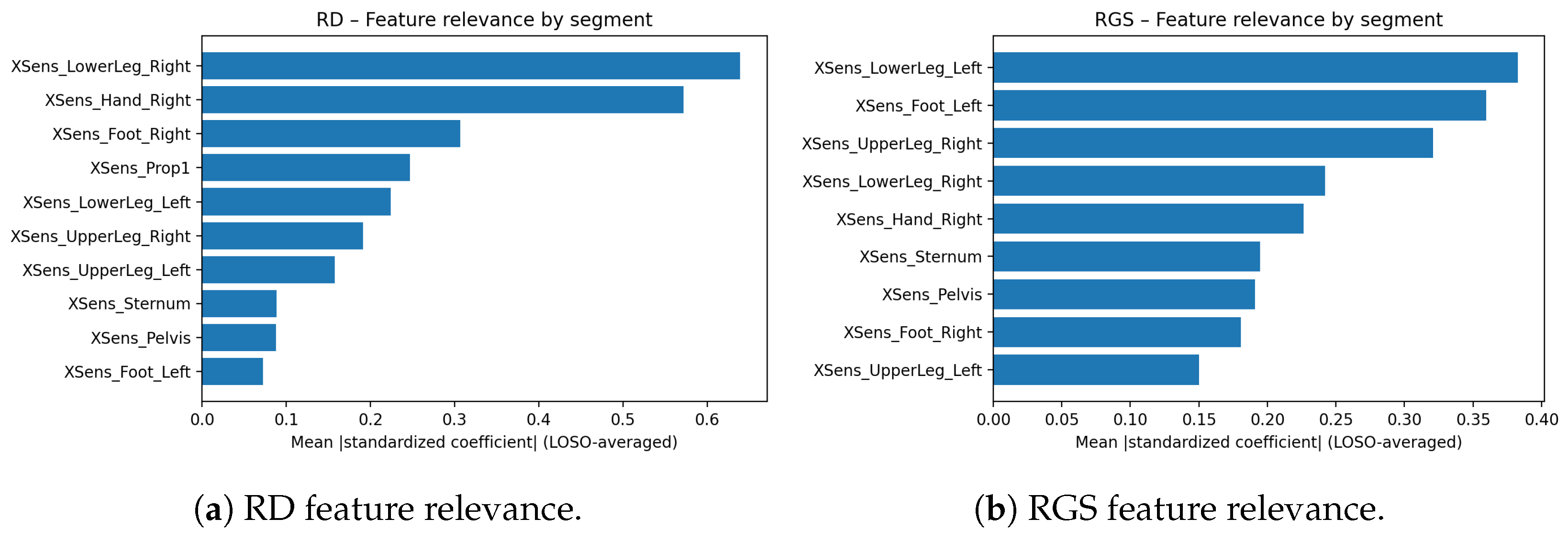

3.2. Explainable Results and Readable Movement Summaries

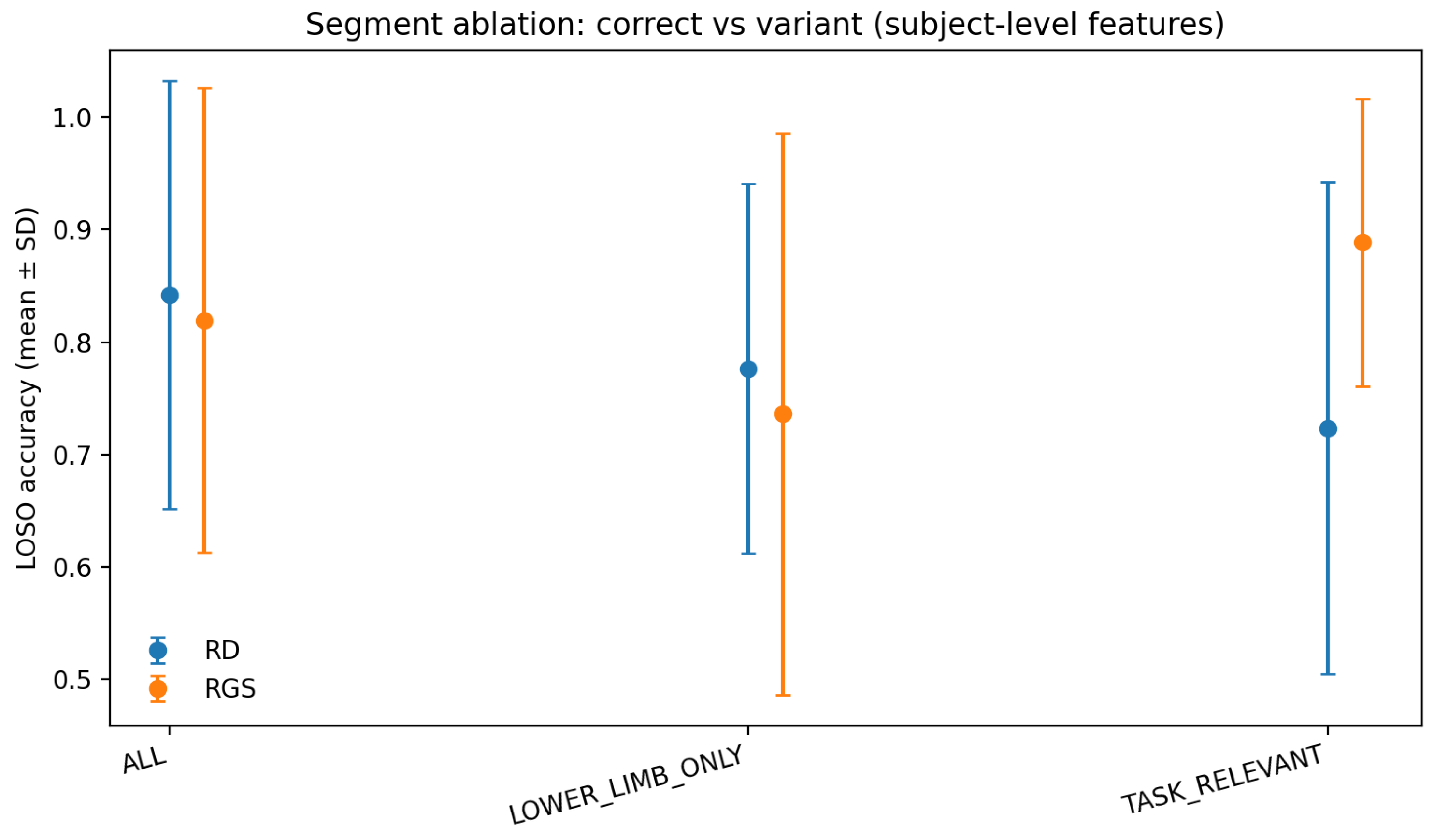

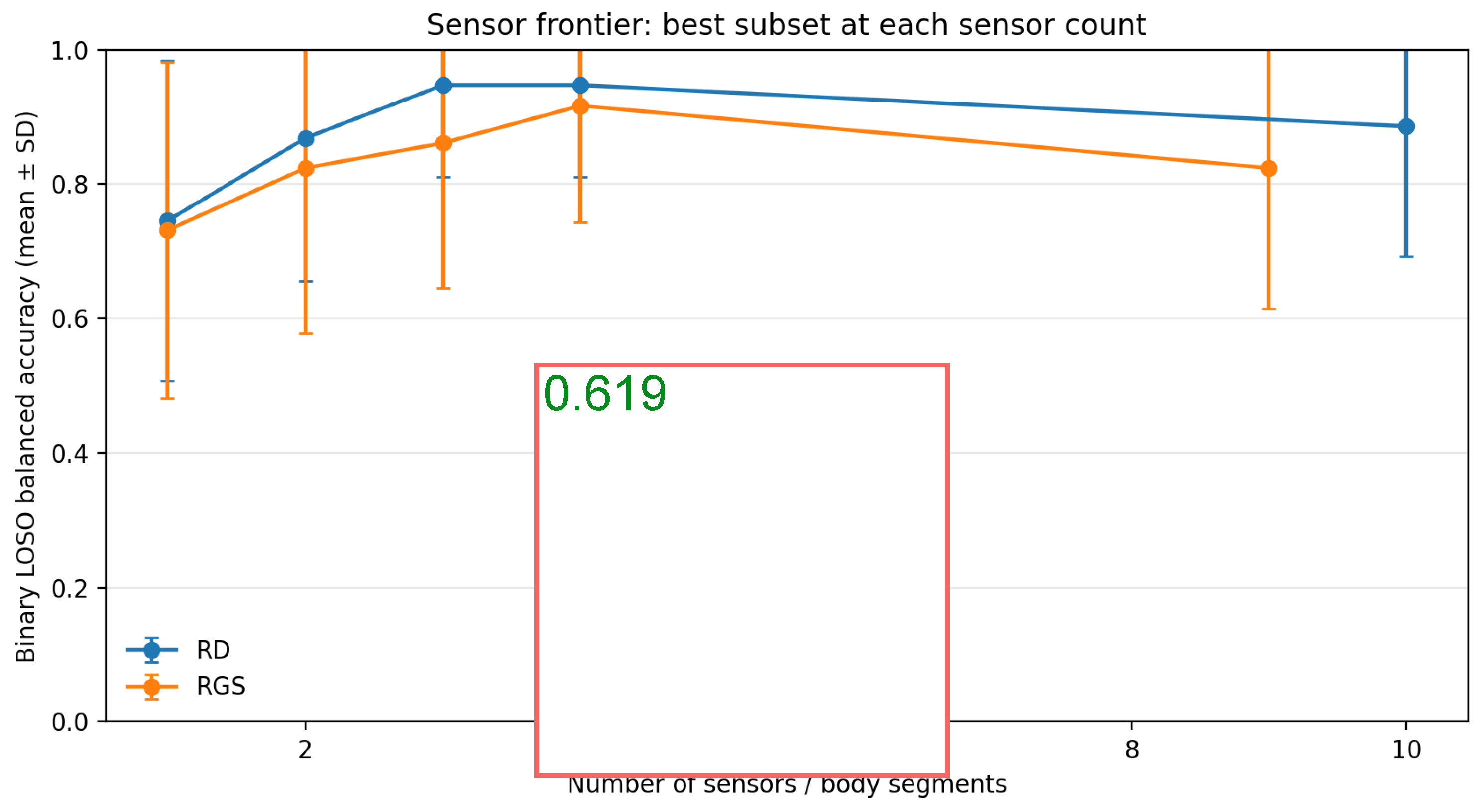

3.3. Sensor Configuration Results

3.4. Lower-Body-Only Sensor Sets

3.5. Proof-of-Concept Closed-Loop Extension Results

4. Discussion

5. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

| Task | Full acc. | Minimal acc. | Difference (Full − Min.) | Multiclass acc. |
|---|---|---|---|---|
| RD | 0.697 | 0.447 | 0.250 | 0.461 |
| RGS | 0.694 | 0.472 | 0.222 | 0.403 |

| Variant | Readable Summary |
|---|---|
| RD pronation | Very large increases in right lower-leg typical, average, and peak rotational speed. |
| RD supination | Very large increases in right-hand typical and average rotational speed, plus increased right lower-leg rotational excursion. |
| RD toes | Very large increases in right-hand peak rotational speed, rotational excursion, and typical rotational speed. |
| RGS abduction | Very large reductions in left-foot rotational speed with simultaneous pelvis speed increase. |
| RGS flexion | Marked reductions in left-foot speed and lower left-leg mean speed. |
| RGS stork | Increased right upper-leg mean speed with strong reductions in left-foot speed metrics. |

| Task | k | Best Subset | Accuracy | Balanced acc. |
|---|---|---|---|---|
| RD | 1 | Hand Right | 0.829 | 0.746 |
| RD | 2 | Hand Right + LowerLeg Right | 0.908 | 0.868 |
| RD | 3 | Hand Right + LowerLeg Left + LowerLeg Right | 0.947 | 0.947 |
| RD | 4 | Foot Left + Hand Right + LowerLeg Left + LowerLeg Right | 0.947 | 0.947 |
| RD | All | Full 10-segment set | 0.908 | 0.886 |
| RGS | 1 | LowerLeg Left | 0.847 | 0.731 |
| RGS | 2 | Foot Right + LowerLeg Left | 0.875 | 0.824 |
| RGS | 3 | Foot Left + LowerLeg Left + Pelvis | 0.903 | 0.861 |
| RGS | 4 | Foot Right + Hand Right + LowerLeg Left + Sternum | 0.903 | 0.917 |
| RGS | All | Full 9-segment set | 0.847 | 0.824 |

| Task | k | Best Lower-Body Subset | Accuracy | Balanced acc. |
|---|---|---|---|---|
| RD | 1 | LowerLeg Right | 0.750 | 0.593 |
| RD | 2 | LowerLeg Right + UpperLeg Right | 0.776 | 0.640 |
| RD | 3 | LowerLeg Left + LowerLeg Right + UpperLeg Right | 0.803 | 0.675 |
| RD | 4 | LowerLeg Left + LowerLeg Right + UpperLeg Left + UpperLeg Right | 0.816 | 0.702 |
| RGS | 1 | LowerLeg Left | 0.847 | 0.731 |
| RGS | 2 | Foot Right + LowerLeg Left | 0.875 | 0.824 |
| RGS | 3 | Foot Left + LowerLeg Left + Pelvis | 0.903 | 0.861 |
| RGS | 4 | Foot Right + LowerLeg Left + LowerLeg Right + Pelvis | 0.889 | 0.870 |

| Task | Configuration | Sensors | Window acc. | Window bal. acc. | Median First Detect | Trigger Rate |
|---|---|---|---|---|---|---|
| RD | Full | 10 | 0.772 | 0.601 | 0.093 | — |
| RD | Best compact | 3 | 0.800 | 0.605 | 0.093 | — |
| RD | Best lower-body | 4 | 0.783 | 0.557 | 0.091 | — |
| RGS | Full | 9 | 0.792 | 0.593 | 0.047 | — |
| RGS | Best compact | 4 | 0.781 | 0.527 | 0.047 | — |
| RGS | Best lower-body | 3 | 0.781 | 0.527 | 0.047 | — |
| RD | Tuned trigger, incorrect reps | 10* | — | — | 0.277† | 0.610 |
| RD | Tuned trigger, correct reps | 10* | — | — | 0.690† | 0.094 |
| RGS | Tuned trigger, incorrect reps | 9* | — | — | 0.233† | 0.622 |
| RGS | Tuned trigger, correct reps | 9* | — | — | 0.619† | 0.088 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).