1. Introduction

In many real-world applications, the same set of objects can be described by several different types of features. For example, an image can be represented by its color, texture, and shape at the same time. A web document can be described by both its text content and its link structure. A clinical patient may have genomic data, pathological images, and electronic health records. Each type of feature is called a view, and each view captures only part of the information about the data. No single view is likely to reveal the complete cluster structure on its own. Multi-view clustering aims to combine these different views to find meaningful groups of objects without any label information. This task has wide applications in areas such as social network analysis, medical informatics, remote sensing, and visual object categorization [1, 2].

Among the various approaches to this task, graph-based methods have attracted considerable attention. The basic idea is to build a graph for each view, where nodes represent data samples and edge weights reflect pairwise similarities. The cluster structure is then extracted from the spectral properties of the graph. In classical spectral clustering [3], the eigenvectors of the graph Laplacian provide a continuous approximation of the discrete cluster indicators, and K-means is applied as a post-processing step to produce hard assignments. Multi-view extensions build one graph per view and try to learn a unified representation that combines information across views. Nie et al. [4] proposed a graph learning framework that assigns view weights automatically through self-optimization. Wang et al. [5] introduced a mutual reinforcement mechanism between individual view graphs and the consensus graph. Liang et al. [6] modeled both consistency and inconsistency across views, and Huang et al. [7] separated multi-view graphs into shared and diverse components. These methods have significantly improved clustering quality, but they all rely on full $N \times N$ similarity matrices. This requires at least $O(N^2)$ storage and $O(N^3)$ eigendecomposition cost, which becomes impractical when $N$ exceeds a few thousand.
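To make this scaling concrete, the following back-of-the-envelope sketch tabulates the cost of a dense similarity matrix for a few sample counts. The 8-byte entry size (float64) and the use of $N^3$ as a bare operation count without constant factors are illustrative assumptions, not figures taken from the cited methods.

```python
def dense_graph_cost(n, bytes_per_entry=8):
    """Return (storage in bytes, rough eigendecomposition operation count)
    for a dense n x n similarity matrix.

    bytes_per_entry=8 assumes float64 entries (an assumption for illustration).
    """
    storage = n * n * bytes_per_entry
    operations = n ** 3  # order of magnitude only; constant factors are ignored
    return storage, operations


for n in (1_000, 10_000, 100_000):
    storage, operations = dense_graph_cost(n)
    print(f"N={n:>7}: {storage / 1e9:9.3f} GB, ~{operations:.1e} operations")
```

Under these assumptions, memory grows a hundredfold and the operation count a thousandfold each time $N$ increases by a factor of ten.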

Dense pairwise graphs carry a heavy computational burden. To resolve this, researchers select a small set of $r$ representative anchors ($r \ll N$). Learning an $N \times r$ affinity matrix between the samples and these anchors yields a sparse bipartite structure, so that graph construction and spectral analysis both scale linearly in $N$. Li et al. [8] first adapted this concept to multi-view data. They construct a separate graph per view and concatenate the graphs prior to spectral analysis. A related pipeline by Kang et al. [9] accelerates subspace clustering. Addressing a different issue, Nie et al. [10] designed a structured objective based on graph connectivity. This yields cluster labels directly, avoiding the K-means step altogether. Meanwhile, other studies [11, 12, 13] proposed fusion strategies without additional hyperparameters. These methods aggregate the individual bipartite graphs under a strict Laplacian rank constraint, which guarantees that the consensus graph forms exactly $c$ connected components.
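The sample-to-anchor affinity matrix described above can be sketched in a few lines. The example below is a minimal illustration and not the construction used by any specific cited method. It assumes anchors are drawn by random sampling and uses the well-known closed-form k-nearest-anchor weights from adaptive-neighbor graph learning, which make every row nonnegative and sum to one. The names `anchor_graph`, `samples`, `anchors`, and `k` are hypothetical.

```python
import random


def anchor_graph(samples, anchors, k):
    """Build an N x r affinity matrix with at most k nonzeros per row.

    Row i holds weights z_ij = (d_{i,k+1} - d_ij) / (k * d_{i,k+1} - sum of the
    k smallest d_ij), where d_ij is the squared Euclidean distance from sample i
    to anchor j. Each row is nonnegative and sums to one (simplex constraint).
    """
    if not 0 < k < len(anchors):
        raise ValueError("k must satisfy 0 < k < number of anchors")
    rows = []
    for x in samples:
        d = [sum((a - b) ** 2 for a, b in zip(x, u)) for u in anchors]
        order = sorted(range(len(anchors)), key=d.__getitem__)
        nearest, d_next = order[:k], d[order[k]]
        denom = k * d_next - sum(d[j] for j in nearest)
        z = [0.0] * len(anchors)
        for j in nearest:
            # Fall back to uniform weights if all k+1 distances coincide.
            z[j] = (d_next - d[j]) / denom if denom > 0 else 1.0 / k
        rows.append(z)
    return rows


random.seed(0)
# Two well-separated Gaussian blobs in 2-D (synthetic data for illustration).
samples = [[random.gauss(c, 0.3), random.gauss(c, 0.3)]
           for c in (0.0, 5.0) for _ in range(50)]
anchors = random.sample(samples, 10)  # assumed anchor selection: random sampling
Z = anchor_graph(samples, anchors, k=3)
print(len(Z), len(Z[0]))
```

Because each row touches only `k` anchors, the matrix stores on the order of $Nk$ nonzeros instead of $N^2$ entries.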

A recent trend attempts to solve graph construction and clustering in a single step. Fang et al. [14] reconstruct subspaces to learn bipartite graphs for each view alongside a consensus graph. They also add label learning directly into this loop. Liu et al. [15] rely on decomposition. By splitting each anchor graph into a shared core and a separate residual, they penalize the residuals to reduce discrepancies across views. Yan et al. [16] push this idea further. They isolate consistency from diversity, applying sparsity rules so that only the consistent components shape the final graph. However, these methods all build graphs in the original feature space without modifying the data representation beforehand. Li et al. [17] take a different route by fusing similarities during the spectral embedding phase. By utilizing entropy weighting and spectral rotation, their model produces discrete labels without extra clustering steps. This orthogonal rotation mechanism to replace K-means was originally designed by Luo et al. [18] for multigraph clustering. Several recent bipartite graph models have since adopted this idea [14, 17].

Tensors provide a parallel approach to capture high-order correlations. Xia et al. [19] formed a single tensor by stacking individual bipartite graphs. They then applied the tensor Schatten $p$-norm to guarantee low-rank consistency. Since full tensor decomposition is computationally expensive, Gu et al. [20] avoided it by learning a compact essential representation. Other studies broaden the scope of graph modeling. Zhao et al. [21] captured indirect relationships between samples. To maintain performance, they introduced a truncation mechanism that explicitly filters out low-quality graphs before fusion. Addressing a different challenge, Jiang et al. [22] extended the framework to handle unaligned data. Under this setting, the sample correspondences across views are unknown.

Despite this progress, two limitations remain in existing methods. The first limitation concerns the feature space in which bipartite graphs are constructed. Virtually all methods cited above learn anchor-sample affinities in the original feature space. When a view contains thousands of features, many of which are redundant or uninformative, pairwise distances suffer from the concentration phenomenon in high-dimensional spaces and become nearly indistinguishable. The resulting affinity values lose discriminative power, and the distorted affinities propagate through consensus fusion into the final partition. Although dimensionality reduction techniques such as PCA have long been available, integrating them into the bipartite graph learning objective while preserving the variance structure and maintaining closed-form updates remains unexplored.
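The concentration effect mentioned above can be observed numerically. The sketch below uses uniformly random points as an assumed stand-in for redundant high-dimensional features and measures the relative contrast, (max distance − min distance) / min distance, from one query point to the rest. The function name `relative_contrast` and all parameter values are hypothetical choices for illustration.

```python
import math
import random


def relative_contrast(dim, n_points=200, seed=0):
    """Relative spread of Euclidean distances from one random query point
    to n_points random points in the unit hypercube of dimension dim."""
    rng = random.Random(seed)
    query = [rng.random() for _ in range(dim)]
    dists = []
    for _ in range(n_points):
        p = [rng.random() for _ in range(dim)]
        dists.append(math.sqrt(sum((a - b) ** 2 for a, b in zip(query, p))))
    return (max(dists) - min(dists)) / min(dists)


for dim in (2, 20, 200, 2000):
    print(dim, round(relative_contrast(dim), 3))
```

As the dimension grows, the nearest and farthest points become almost equally distant, which is why affinities computed directly in such a space carry little discriminative information.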

The second limitation lies in the transition from continuous spectral embeddings to discrete cluster assignments. Three competing strategies coexist. The dominant approach applies K-means to the eigenvectors of the consensus Laplacian, which introduces nondeterminism through random seed selection and can yield different partitions across runs on the same graph. The constrained Laplacian rank approach [23] enforces exactly $c$ connected components, yielding deterministic labels from the connectivity pattern, but it imposes a rigid structural requirement that may not suit data whose clusters overlap or vary in density. Spectral rotation [24] aligns the eigenvector matrix to a discrete indicator through orthogonal rotation, removing K-means entirely, yet it does not by itself ensure that the underlying graph possesses a clear connected-component structure. Each strategy sacrifices one desirable property to gain another, and combining their strengths within a unified objective remains an open question.

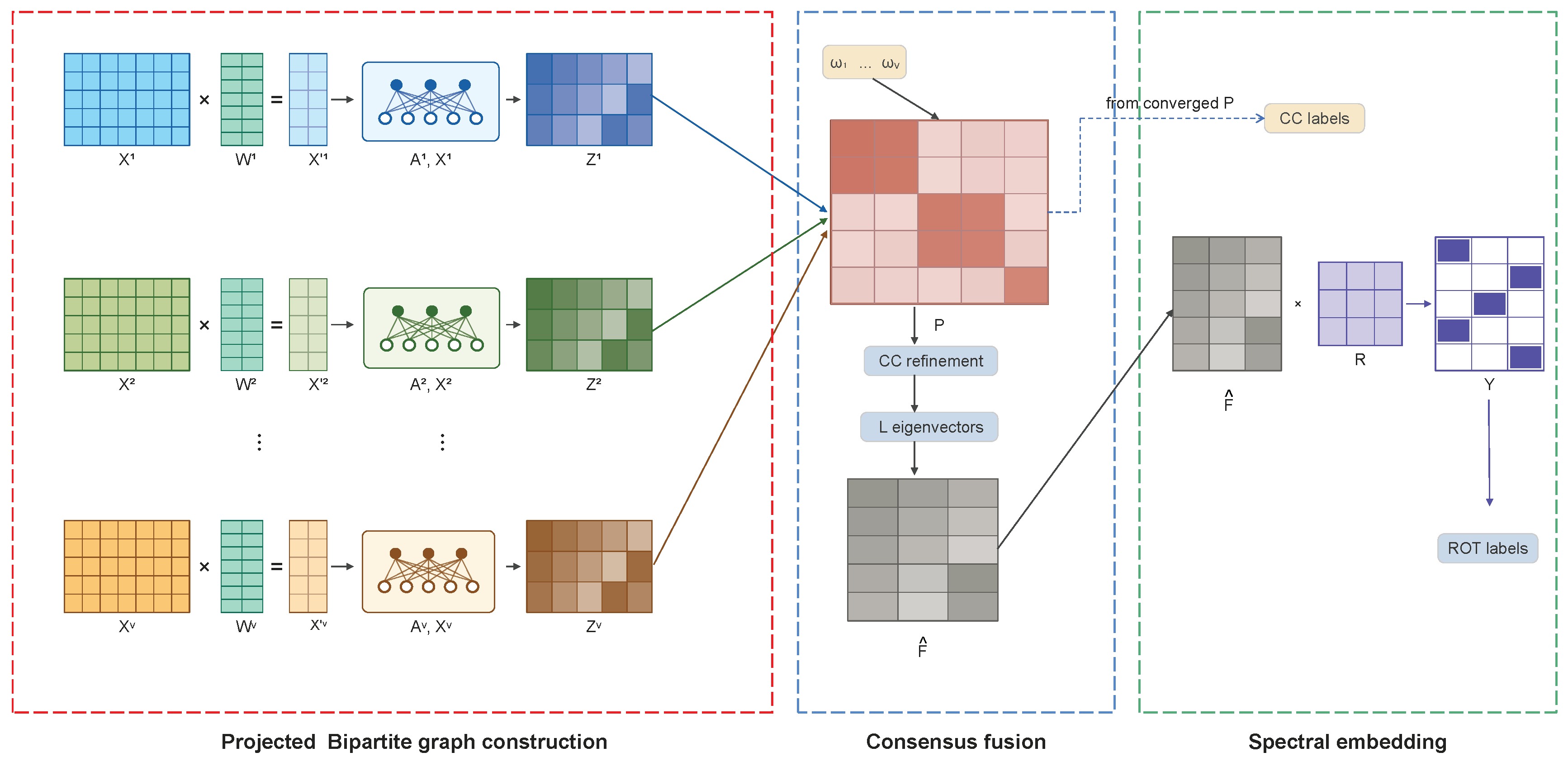

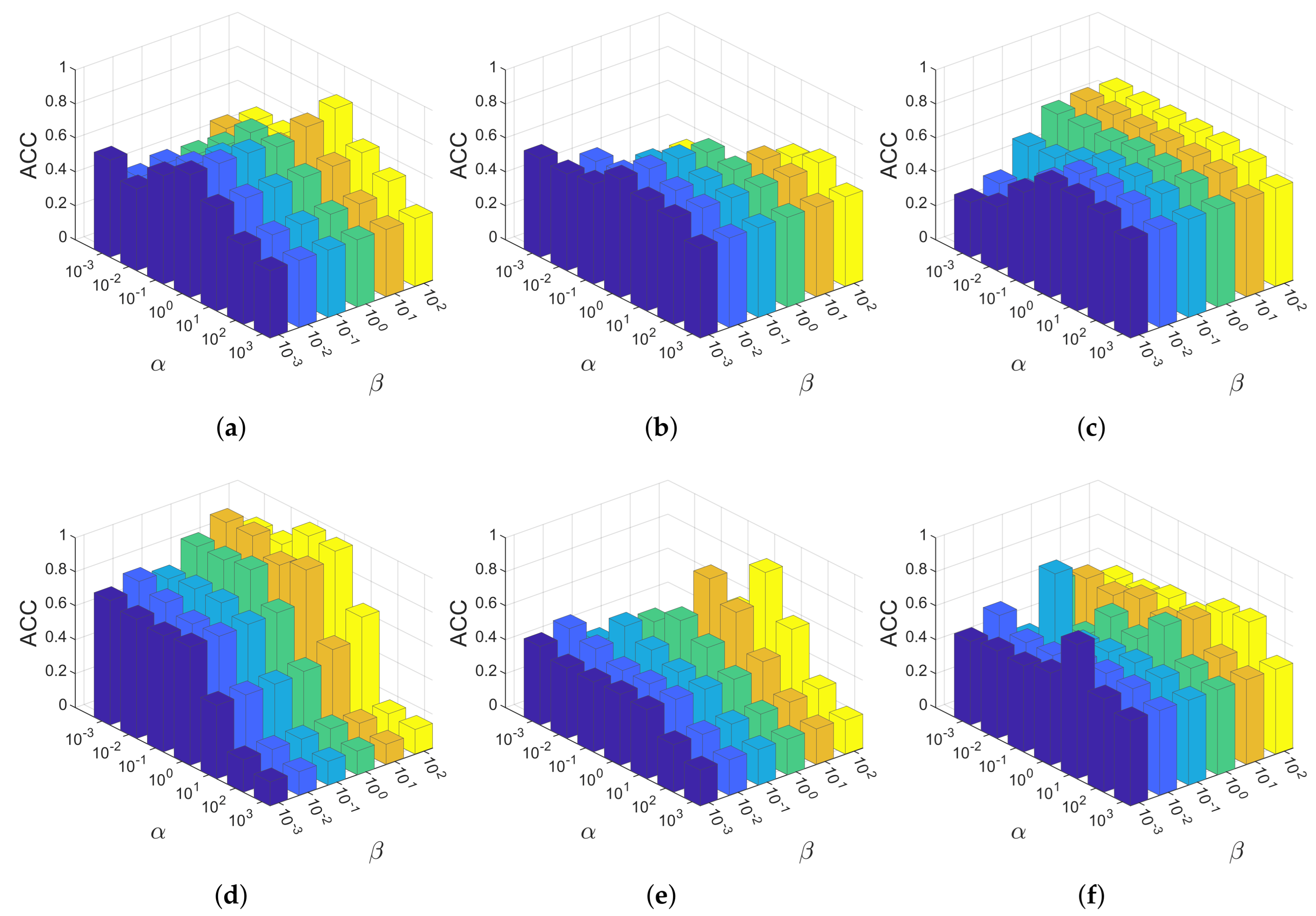

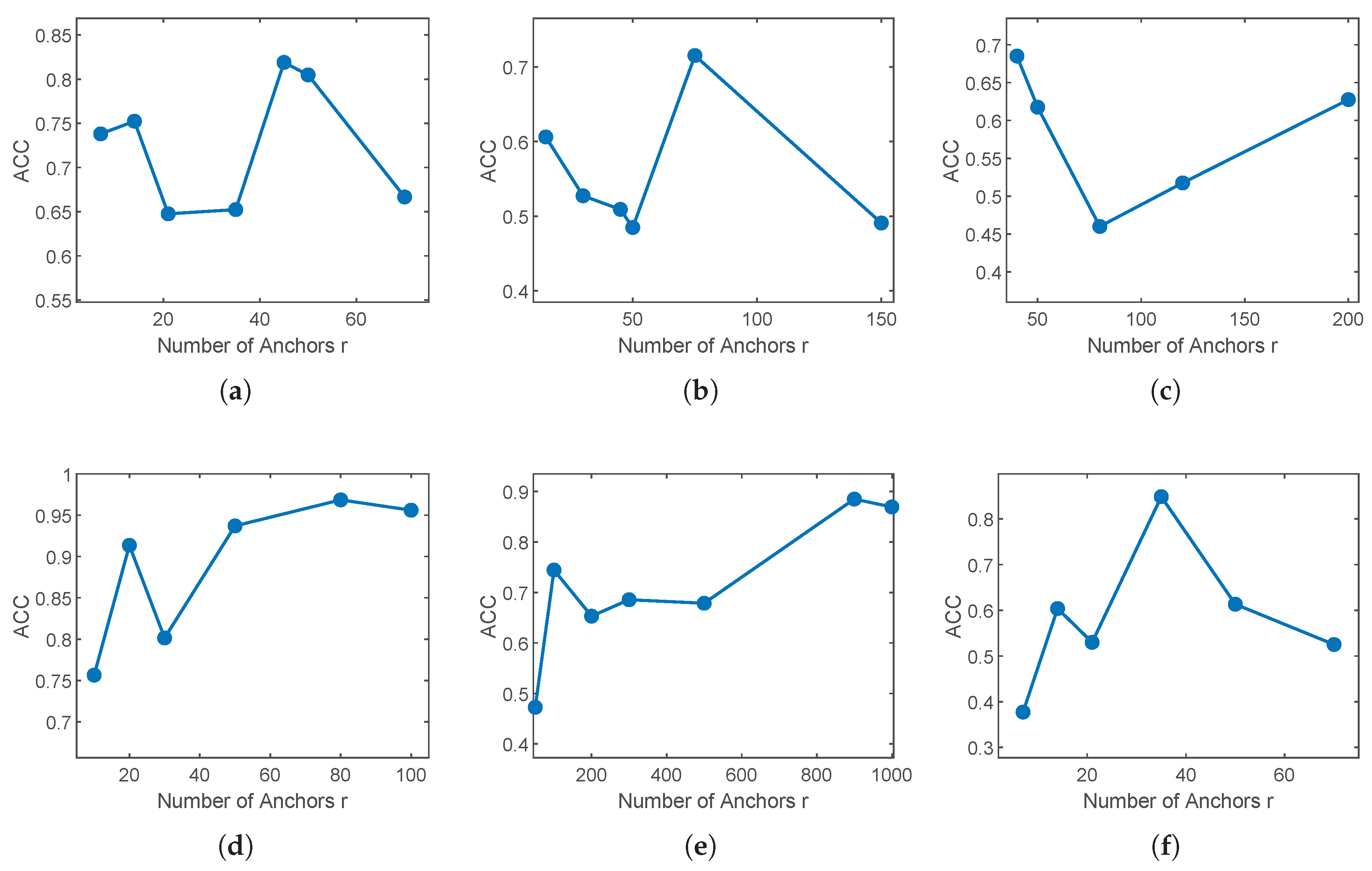

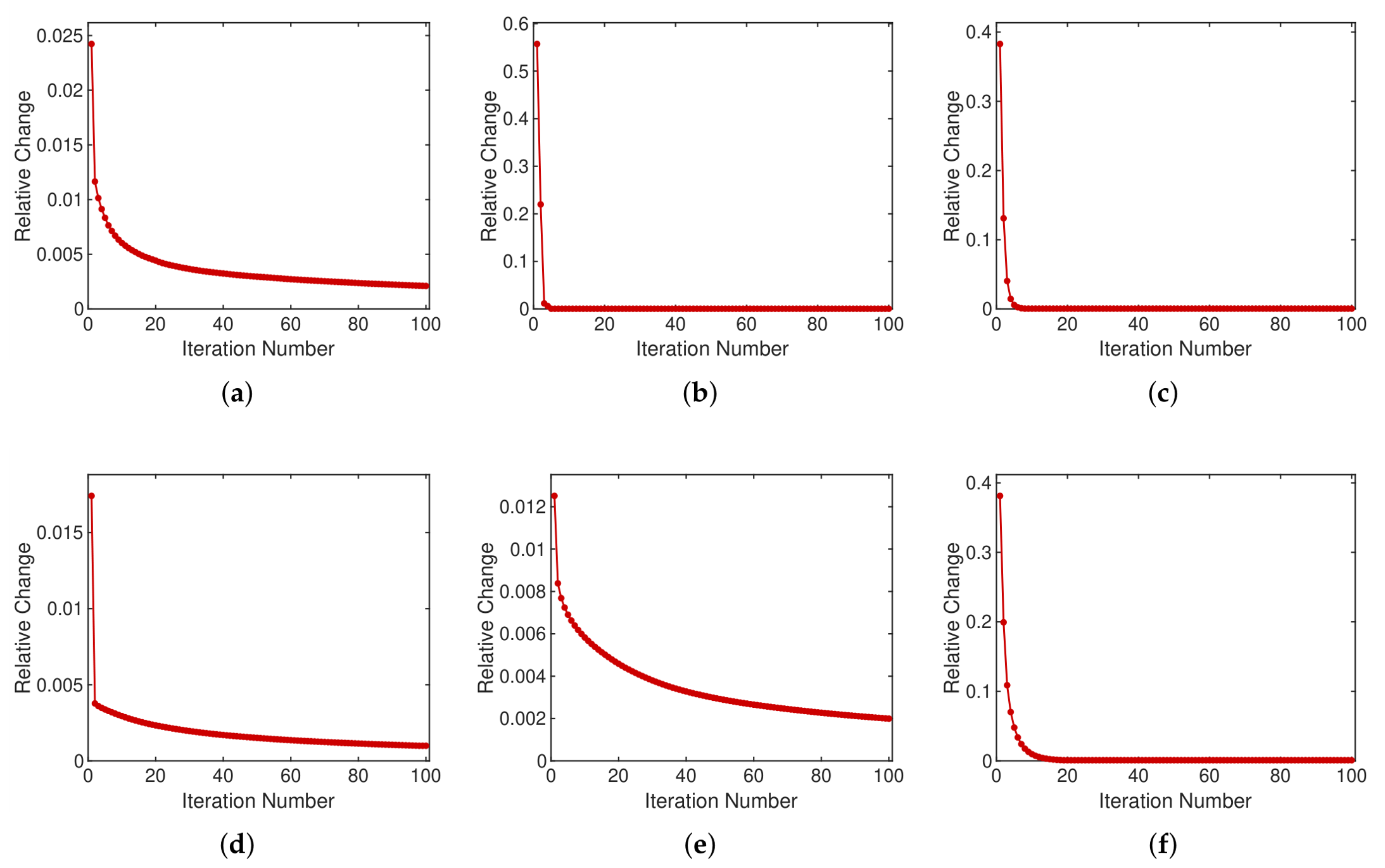

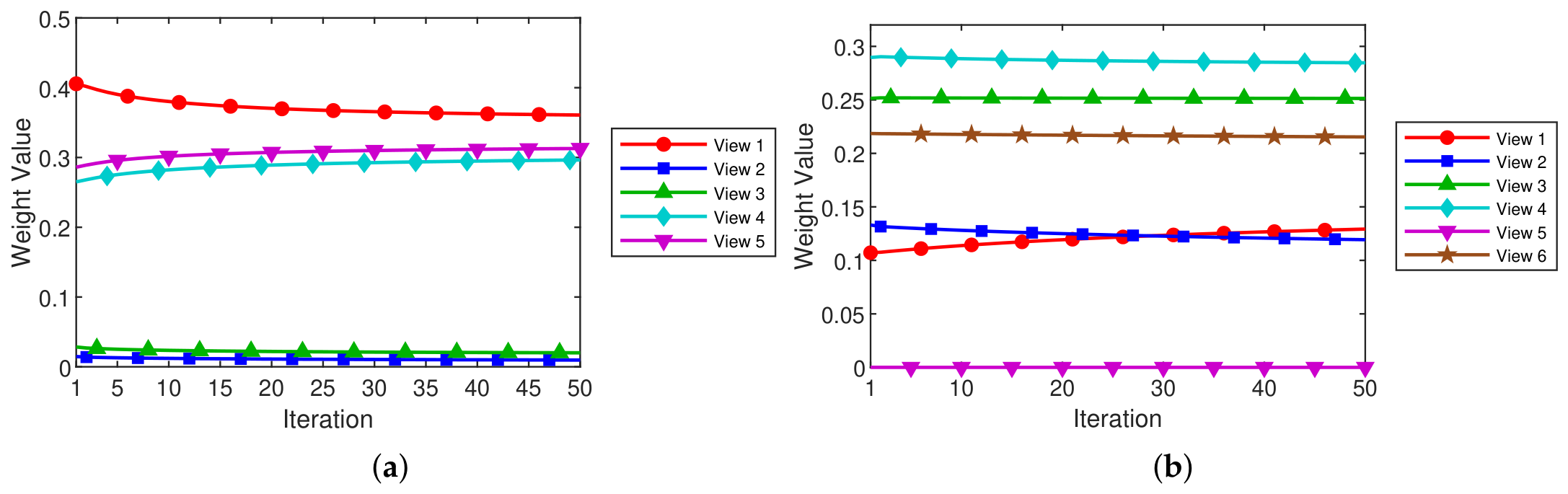

We propose Projection-Enhanced Bipartite Graph Learning (PEBGL) to resolve these limitations. Our approach places the entire learning pipeline within a single objective function. Rather than working in the original feature space, we apply an orthogonal projection to each view, which maps the data into a compact subspace while keeping the dominant variance intact. In this subspace, anchor reconstruction under simplex constraints forms the bipartite graph. The fusion objective treats all views symmetrically: an entropy penalty lets the method learn adaptive weights for the individual graphs and fuse them into one consensus structure. We then compute a spectral embedding from the symmetric normalized Laplacian of the consensus graph. An orthogonal rotation aligns this embedding with discrete cluster indicators. Here, the rotation acts as a structural regularizer rather than as the decoding step; final labels are read directly from the connected components of the converged graph. Every subproblem has a closed-form solution, and the time cost per iteration scales linearly with $N$. On six benchmark datasets, PEBGL achieves the highest accuracy on two and ranks second on three others, while producing deterministic output at competitive running times.
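Entropy-penalized view weighting typically admits a softmax-style closed form. The sketch below shows one generic version: minimizing $\sum_v w_v e_v + \gamma \sum_v w_v \log w_v$ over the probability simplex gives $w_v \propto \exp(-e_v / \gamma)$. It is offered only as an illustration and is not necessarily the exact PEBGL update, which is derived in Section 3. The per-view costs `errors`, the parameter `gamma`, and the helper names are hypothetical.

```python
import math


def entropy_weights(errors, gamma):
    """Closed-form minimizer of sum_v w_v*e_v + gamma*sum_v w_v*log(w_v)
    subject to w_v >= 0 and sum_v w_v = 1."""
    shift = min(errors)  # subtracting the minimum avoids overflow; weights are unchanged
    scores = [math.exp(-(e - shift) / gamma) for e in errors]
    total = sum(scores)
    return [s / total for s in scores]


def fuse(graphs, weights):
    """Weighted sum of same-shaped affinity matrices given as nested lists."""
    n_rows, n_cols = len(graphs[0]), len(graphs[0][0])
    return [[sum(w * g[i][j] for w, g in zip(weights, graphs))
             for j in range(n_cols)]
            for i in range(n_rows)]


# Three hypothetical views; a lower cost means closer agreement with the consensus.
weights = entropy_weights([1.0, 2.0, 4.0], gamma=1.0)
graphs = [
    [[1.0, 0.0], [0.0, 1.0]],
    [[0.8, 0.2], [0.3, 0.7]],
    [[0.5, 0.5], [0.5, 0.5]],
]
consensus = fuse(graphs, weights)
print([round(w, 3) for w in weights])
```

A small `gamma` concentrates the weight on the best-agreeing view, whereas a large `gamma` pushes the weights toward uniform. Because the weights sum to one, fusing row-stochastic graphs yields a row-stochastic consensus.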

The main contributions are summarized as follows.

- (i) A unified objective that integrates variance-preserving PCA projection, bipartite graph construction, entropy-weighted consensus fusion, spectral embedding, and discrete rotation with overall linear time complexity.

- (ii) A deterministic coclustering decoding strategy based on connected-component detection, which bypasses K-means post-processing and guarantees reproducible cluster labels for any fixed parameter configuration.

- (iii) Closed-form solutions for every subproblem, validated by experiments on six benchmarks against seven recent methods.
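Decoding labels from connected components, as named in contribution (ii), can be illustrated with a small union-find routine over the sample-anchor graph. This is a minimal sketch under the assumption that the converged graph is available as a dense nested list `Z` of sample-to-anchor affinities. The function name `bipartite_components` and the threshold `tol` are hypothetical and do not come from the paper.

```python
def bipartite_components(Z, tol=0.0):
    """Label each sample by the connected component it belongs to in the
    bipartite graph whose edges are the entries of Z greater than tol."""
    n, r = len(Z), len(Z[0])
    parent = list(range(n + r))  # nodes 0..n-1 are samples, n..n+r-1 are anchors

    def find(i):
        while parent[i] != i:
            parent[i] = parent[parent[i]]  # path halving keeps trees shallow
            i = parent[i]
        return i

    for i, row in enumerate(Z):
        for j, z in enumerate(row):
            if z > tol:
                parent[find(i)] = find(n + j)  # merge sample i with anchor j

    labels, ids = [], {}
    for i in range(n):
        root = find(i)
        labels.append(ids.setdefault(root, len(ids)))  # relabel roots as 0, 1, 2, ...
    return labels


# Samples 0 and 1 share anchors 0 and 1; samples 2 and 3 share anchor 2.
Z_demo = [
    [1.0, 0.0, 0.0],
    [0.5, 0.5, 0.0],
    [0.0, 0.0, 1.0],
    [0.0, 0.0, 1.0],
]
print(bipartite_components(Z_demo))  # prints [0, 0, 1, 1]
```

The procedure involves no random initialization, so the same graph always yields the same labels, in contrast to K-means post-processing.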

The overall framework of PEBGL is depicted in Figure 1.

Table 1 summarizes the key symbols used throughout this paper. Cluster indicators are binary matrices in which each row contains exactly one unit entry. The multi-view dataset has $V$ views, and the $v$-th view contains $N$ samples described by features of view-specific dimensionality. The goal is to partition the $N$ samples into $c$ clusters by jointly exploiting all views.

The remainder of this paper is organized as follows. Section 2 establishes the mathematical preliminaries. Section 3 develops the proposed method with complete derivations. Section 4 reports experimental comparisons and analyses on six benchmark datasets. Section 5 concludes the paper.