Submitted: 14 April 2026

Posted: 20 April 2026

Abstract

Keywords:

1. Introduction

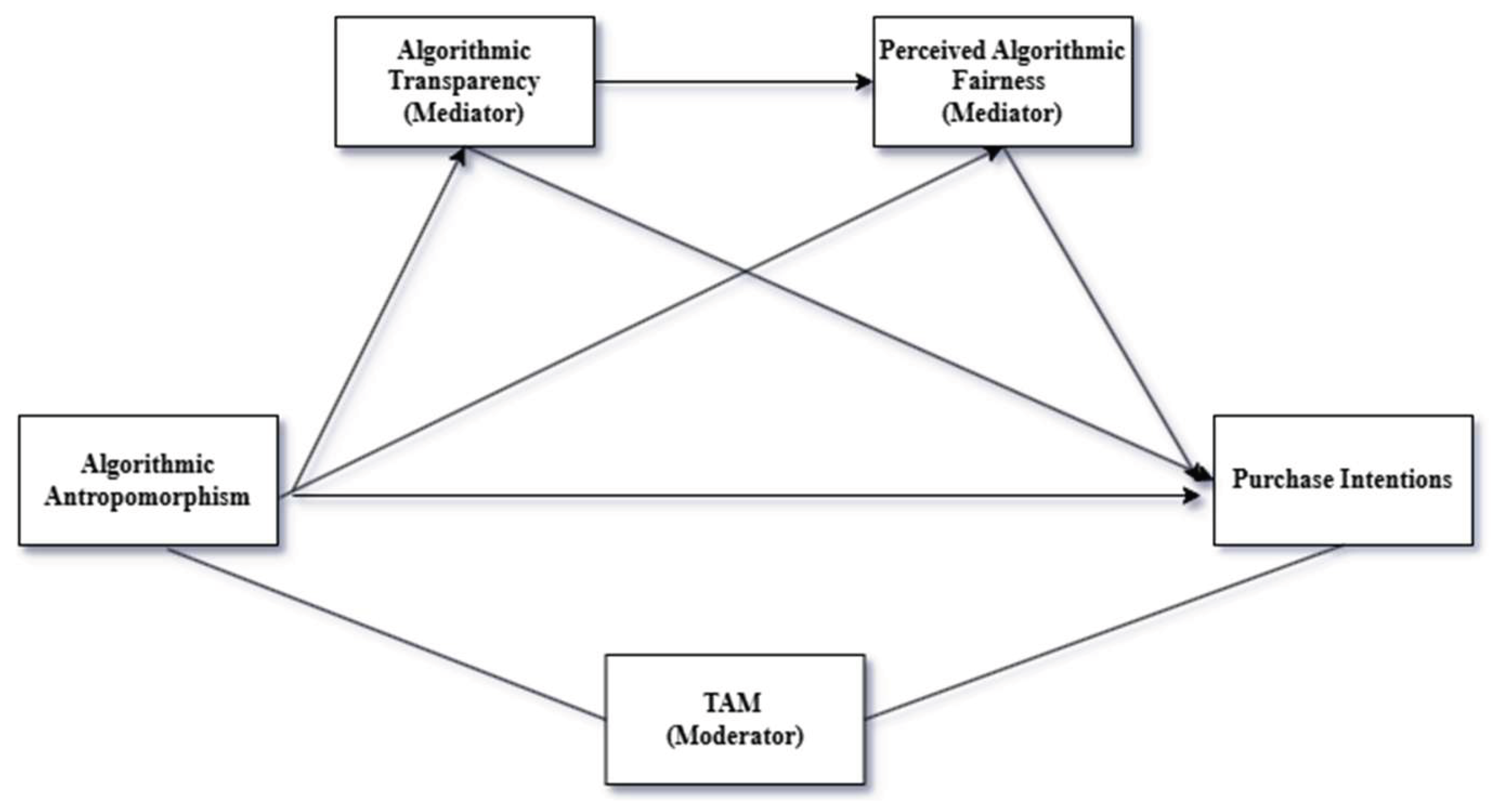

2. Literature Review and Hypothesis Development

2.1. Algorithmic Anthropomorphism and Perceived Fairness

2.2. Algorithmic Transparency and Perceived Fairness

2.3. Perceived Fairness and Purchase Intention

2.4. Algorithmic Transparency and Purchase Intention

2.5. Mediating Role of Perceived Fairness and Transparency

2.6. Algorithmic Anthropomorphism and Transparency

2.7. Algorithmic Anthropomorphism and Purchase Intention

2.8. The Moderating Role of the Technology Acceptance Model

3. Research Method

3.1. Questionnaire Design

3.2. Respondents and Data Collection

4. Data Analysis and Research Results

4.1. Reliability Analysis

4.2. Descriptive Statistics and Normality Tests

4.3. Hypothesis Testing

4.3.1. Mediation Analysis

4.3.2. Moderation Analysis

4.3.3. Summary of Hypothesis Testing

| Hypothesis | Path | Result |
|---|---|---|
| H1 | Anthropomorphism → Fairness (+) | Supported |
| H2 | Transparency → Fairness (+) | Supported |
| H3 | Fairness → Purchase Intention (+) | Supported |
| H4 | Transparency → Purchase Intention (+) | Supported |
| H5 | Anthrop. → Transp. → Fairness → PI (sequential mediation) | Supported |
| H6 | Fairness mediates Transparency → PI | Supported |
| H8 | Anthropomorphism → Transparency (+) | Supported |
| H9 | Anthropomorphism → Purchase Intention (+) | Supported |
| H10 | TAM → Purchase Intention (+) | Supported |
| H11 | TAM moderates Anthrop. → PI | Marginally Supported |

5. Discussion

5.1. Key Findings

5.2. Theoretical Contributions

5.3. Managerial Implications

5.4. Limitations and Future Research

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

References

- Adam, M.; Wessel, M.; Benlian, A. AI-based chatbots in customer service and their effects on user compliance. Electronic Markets 2021, 31(2), 427–445.

- Braga, F. M. I. The influence and impact of artificial intelligence in the consumer decision-making process; Dissertation: ISCTE Business School, 2020.

- Burrell, J. How the machine ‘thinks’: Understanding opacity in machine learning algorithms. Big Data & Society 2016, 3(1), 2053951715622512.

- Chai, F. Grading by AI makes me feel fairer? How different evaluators affect college students’ perception of fairness. Frontiers in Psychology 2024, 15.

- Cheng, X.; Zhang, X.; Cohen, J.; Mou, J. Human vs. AI: Understanding the impact of anthropomorphism on consumer response to chatbots. Journal of Retailing and Consumer Services 2022, 68, 103013.

- Conlon, D. E.; Porter, C. O.; Parks, J. M. The fairness of decision rules. Journal of Management 2004, 30(3), 329–349.

- Davis, F. D. Perceived usefulness, perceived ease of use, and user acceptance of information technology. MIS Quarterly 1989, 13(3), 319–340.

- Draws, T.; Szlávik, Z.; Timmermans, B.; Tintarev, N.; Varshney, K.; Hind, M. Disparate impact diminishes consumer trust even for advantaged users. In Proceedings of the 2021 AAAI/ACM Conference on AI, Ethics, and Society, 2021.

- Epley, N.; Waytz, A.; Cacioppo, J. T. On seeing humans: A three-factor theory of anthropomorphism. Psychological Review 2007, 114(4), 864–886.

- Eyssel, F.; Kuchenbrandt, D.; Bobinger, S. Effects of anticipated human-robot interaction and predictability of robot behavior on perceptions of anthropomorphism. In Proceedings of the 6th ACM/IEEE International Conference on HRI, 2011; pp. 373–380.

- Fedorko, I.; Bačík, R.; Gavurová, B. Technology acceptance model in e-commerce segment. Management & Marketing 2018, 13(4), 1242–1256.

- Felzmann, H.; Fosch-Villaronga, E.; Lutz, C.; Tamò-Larrieux, A. Towards transparency by design for artificial intelligence. Science and Engineering Ethics 2020, 26, 3333–3361.

- Fink, J. Anthropomorphism and human likeness in the design of robots and human-robot interaction. Social Robotics: 4th International Conference, ICSR 2012, 2012; pp. 199–208.

- Fu, S.; Liu, X.; Lamrabet, A.; Liu, H.; Huang, Y. Green production information transparency and online purchase behavior. Electronic Commerce Research and Applications 2022, 56, 101210.

- Gomes, S.; Lopes, J. M.; Nogueira, E. Anthropomorphism in artificial intelligence: A gamechanger for brand marketing. Future Business Journal 2025, 11(1).

- Goodman, B.; Flaxman, S. European Union regulations on algorithmic decision making and a “right to explanation.” AI Magazine 2017, 38(3), 50–57.

- Goundar, S.; Lal, K.; Chand, A.; Vyas, P. Consumer perception of electronic commerce: Incorporating trust and risk with the TAM. International Journal of Electronic Business 2021, 16(3), 214–235.

- Grimmelikhuijsen, S. Explaining why the computer says no: Algorithmic transparency affects the perceived trustworthiness of automated decision-making. Public Administration Review 2022, 83(2), 241–262.

- Gunning, D.; Aha, D. DARPA’s explainable artificial intelligence (XAI) program. AI Magazine 2019, 40(2), 44–58.

- Hayes, A. F. Introduction to Mediation, Moderation, and Conditional Process Analysis, 3rd ed.; The Guilford Press, 2022.

- He, S.; Wang, R. The development potential and challenges of one-click generative AI in cross-border e-commerce. International Journal of Retail & Distribution Management 2024.

- Heijden, H.; Verhagen, T.; Creemers, M. Understanding online purchase intentions: Contributions from technology and trust perspectives. European Journal of Information Systems 2003, 12(1), 41–48.

- Hossain, M.; Hussain, M.; Akther, A. E-commerce platforms in developing economies: Unveiling behavioral intentions through TAM. Journal of Electronic Commerce Research 2023, 24(2).

- Höddinghaus, M.; Sondern, D.; Hertel, G. The automation of leadership functions: Would people trust decision algorithms? Computers in Human Behavior 2021, 116, 106635.

- Hwang, E. Operational transparency as a service design: An investigation on labor/effort observation effect. Journal of Hospitality & Tourism Research 2024, 48(1), 59–77.

- Inie, N.; Druga, S.; Zukerman, P.; Bender, E. M. From “AI” to probabilistic automation: How does anthropomorphization of technical systems affect trust? Proceedings of CHI 2024, 2024.

- Kim, H.; Jang, S. Restaurant-visit intention: Do anthropomorphic cues, brand awareness and subjective social class interact? International Journal of Hospitality Management 2022, 104, 103243.

- Kizilcec, R. F. How much information? Effects of transparency on trust in an algorithmic interface. Proceedings of CHI 2016, 2016; pp. 2390–2395.

- Lee, M. Understanding perception of algorithmic decisions: Fairness, trust, and emotion in response to algorithmic management. Big Data & Society 2018, 5(1).

- Leinsle, P.; Totzek, D.; Schumann, J. How price fairness and fit affect customer tariff evaluations. Journal of Service Management 2018, 29(4), 735–764.

- Liu, A.; Lin, S. Service for specialized and digitalized: Consumer switching perceptions of e-commerce in specialty retail. Managerial and Decision Economics 2022, 43(7), 2701–2714.

- Lu, Y.; Liu, Y.; Le, T.; Ye, S. Cuteness or coolness—How does different anthropomorphic brand image accelerate consumers’ willingness to buy. Frontiers in Psychology 2021, 12.

- Mehrabi, N.; Morstatter, F.; Saxena, N.; Lerman, K.; Galstyan, A. A survey on bias and fairness in machine learning. ACM Computing Surveys 2021, 54(6), 1–35.

- Mirghaderi, L.; Sziron, M.; Hildt, E. Ethics and transparency issues in digital platforms: An overview. AI 2023, 4(4), 831–843.

- Mori, M.; MacDorman, K. F.; Kageki, N. The uncanny valley. IEEE Robotics & Automation Magazine 2012, 19(2), 98–100.

- Munnukka, J.; Talvitie-Lamberg, K.; Maity, D. Anthropomorphism and social presence in human–virtual service assistant interactions. Computers in Human Behavior 2022, 132, 107234.

- Nass, C.; Moon, Y. Machines and mindlessness: Social responses to computers. Journal of Social Issues 2000, 56(1), 81–103.

- Nelson, J. P.; Biddle, J. B.; Shapira, P. Applications and societal implications of artificial intelligence in manufacturing: A systematic review. Technology in Society 2023, 72, 102194.

- Newman, D. T.; Fast, N. J.; Harmon, D. J. When eliminating bias isn’t fair: Algorithmic reductionism and procedural justice in human resource decisions. Organizational Behavior and Human Decision Processes 2020, 160, 149–167.

- Nunnally, J. C.; Bernstein, I. H. Psychometric Theory, 3rd ed.; McGraw-Hill, 1994.

- Ochmann, J.; Michels, L.; Tiefenbeck, V.; Maier, C.; Laumer, S. Perceived algorithmic fairness: An empirical study of transparency and anthropomorphism. Electronic Markets 2024, 34(1).

- Oktaria, R.; Naibaho, S.; Muda, I.; Kesuma, S. Factors of acceptance of e-commerce technology among society: Integration of TAM. International Journal of Science, Technology & Management 2024, 5(1).

- Parboteeah, D. V.; Valacich, J. S.; Wells, J. D. The influence of website characteristics on a consumer’s urge to buy impulsively. Information Systems Research 2009, 20(1), 60–78.

- Pasquale, F. The Black Box Society: The Secret Algorithms That Control Money and Information; Harvard University Press, 2015.

- Rader, E.; Cotter, K.; Cho, J. Explanations as mechanisms for supporting algorithmic transparency. Proceedings of CHI 2018, 2018; pp. 1–13.

- Rawls, J. A Theory of Justice; Harvard University Press, 1971.

- Roesler, E. Anthropomorphic framing and failure comprehensibility influence different facets of trust towards industrial robots. Frontiers in Robotics and AI 2023.

- Soni, N.; Sharma, E. K.; Singh, N.; Kapoor, A. Impact of artificial intelligence on businesses: From research, innovation, market deployment to future shifts in business models. International Journal of Engineering and Advanced Technology 2019, 8(6S), 1–11.

- Song, Y. W. User acceptance of an artificial intelligence (AI) virtual assistant: An extension of the technology acceptance model. Doctoral Dissertation, 2019.

- Starke, C.; Baleis, J.; Keller, B.; Marcinkowski, F. Fairness perceptions of algorithmic decision-making: A systematic review of the empirical literature. Big Data & Society 2022, 9(2).

- Sun, C.; Ding, Y.; Wang, X.; Meng, X. Anthropomorphic design in mortality salience situations. International Journal of Human-Computer Interaction 2024, 40(4).

- Sun, T.; Li, Y. Effect of algorithmic transparency on gig workers’ proactive service performance. American Behavioral Scientist 2024, 68(1).

- Suryawirawan, O. The effect of college students’ technology acceptance on e-commerce adoption. Bisma 2021, 14(1), 46–62.

- Toussaint, P.; Thiebes, S.; Schmidt-Kraepelin, M.; Sunyaev, A. Perceived fairness of direct-to-consumer genetic testing business models. Electronic Markets 2022, 32, 1129–1157.

- Van Teijlingen, E.; Hundley, V. The importance of pilot studies. Social Research Update 2001, 35, 1–4.

- Venkatesh, V.; Davis, F. D. A theoretical extension of the technology acceptance model: Four longitudinal field studies. Management Science 2000, 46(2), 186–204.

- Wang, H. Transparency as manipulation? Uncovering the disciplinary power of algorithmic transparency. Philosophy & Technology 2022, 35(3).

- Yong, W.; TianZe, T.; Zhang, W.; Sun, Z.; Xiong, Q. The Achilles tendon of dynamic pricing: The effect of consumers’ fairness preferences. Frontiers in Psychology 2021, 12.

- Zhao, Y.; Wang, L.; Tang, H.; Zhang, Y. Electronic word-of-mouth and consumer purchase intentions in social e-commerce. Electronic Commerce Research and Applications 2020, 41, 100980.

| Variable | Measuring Items | Source |
|---|---|---|
| Algorithmic Anthropomorphism (5 items) | The algorithm’s behavior seemed natural rather than artificial; The algorithm felt humanlike rather than mechanical; The algorithm gave the impression of being conscious and aware; The responses felt lifelike and realistic; The algorithm interacted in a smooth and humanlike way. | Eyssel et al. (2011); Munnukka et al. (2022) |
| Algorithmic Transparency (3 items) | I think I could understand the decision-making process of platform algorithms very well; I think I can see through platform algorithms’ decision-making process; I think the decision-making process of platform algorithms is clear and transparent. | Höddinghaus et al. (2021); Sun & Li (2024) |
| Perceived Algorithmic Fairness (4 items) | The way this algorithm determined which products were displayed seems fair; The algorithm’s process for deciding which products were displayed was fair; The decision made by the algorithm was fair; The outcome of the algorithm’s decision was fair. | Conlon et al. (2004); Newman et al. (2020) |
| Purchase Intention (4 items) | I am likely to return to this store’s website in the future; I am likely to consider purchasing from this website in the short term; I am likely to consider purchasing in the longer term; I am likely to buy from this store for this purchase. | Heijden et al. (2003) |
| TAM (10 items) | Perceived Ease of Use (5 items): Easy to learn, understandable, requires little effort, minimal mental effort, easy to navigate. Perceived Usefulness (5 items): Increases productivity, helps make better decisions, effective in improving sales, frequent purchases, beneficial for sales/marketing. | Davis (1989); Heijden et al. (2003) |

| Variable | Category | Frequency | Percent |
|---|---|---|---|
| Online shopping frequency | Every day | 18 | 4.69% |
| | Several times a week | 39 | 10.16% |
| | Once a week | 51 | 13.28% |
| | Several times a month | 156 | 40.63% |
| | Once a month | 112 | 29.17% |
| | Never | 8 | 2.08% |
| Gender | Male | 158 | 41.15% |
| | Female | 207 | 53.91% |
| | Prefer not to say | 19 | 4.95% |
| Education | High school or below | 80 | 20.83% |
| | 2-year university | 27 | 7.03% |
| | Bachelor’s degree | 193 | 50.26% |
| | Master’s degree | 41 | 10.68% |
| | PhD | 43 | 11.20% |
| Profession | Student | 191 | 49.74% |
| | Employee | 115 | 29.95% |
| | Self-employment | 17 | 4.43% |
| | Other | 61 | 15.89% |
| Nationality | Turkey | 359 | 93.49% |
| | France | 3 | 0.78% |
| | Netherlands | 6 | 1.56% |
| | Other | 16 | 4.17% |
| Daily internet usage | 0–2 hours | 34 | 8.85% |
| | 2–4 hours | 106 | 27.60% |
| | 4–6 hours | 137 | 35.68% |
| | More than 6 hours | 107 | 27.86% |
| AI familiarity (1–5) | 1 (Not familiar) | 15 | 3.90% |
| | 2 | 48 | 12.50% |
| | 3 | 166 | 43.23% |
| | 4 | 99 | 25.78% |
| | 5 (Very familiar) | 56 | 14.58% |
| Impulse buying | Yes | 143 | 37.24% |
| | No | 241 | 62.76% |

| Variable | No. of Items | α (Scale) | α (All Items) |
|---|---|---|---|
| Perceived Algorithmic Fairness | 4 | 0.944 | 0.961 |
| Purchase Intention | 4 | 0.928 | 0.961 |
| Algorithmic Transparency | 3 | 0.861 | 0.961 |
| Algorithmic Anthropomorphism | 5 | 0.927 | 0.961 |
| TAM | 10 | 0.936 | 0.961 |
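
The α values in the reliability table are Cronbach's alpha coefficients. The following is a minimal sketch of how such a coefficient can be computed from item-level responses. It uses the standard formula α = k/(k − 1) · (1 − Σ item variances / variance of the summed score). The function name `cronbach_alpha` and the synthetic data are illustrative assumptions; the study's raw responses and analysis software are not given in this section.

```python
import random
from statistics import variance


def cronbach_alpha(rows):
    """Cronbach's alpha for a list of respondents, each a list of item scores."""
    k = len(rows[0])  # number of items in the scale
    # Sample variance of each item across respondents
    item_vars = [variance([r[j] for r in rows]) for j in range(k)]
    # Sample variance of each respondent's summed scale score
    total_var = variance([sum(r) for r in rows])
    return k / (k - 1) * (1 - sum(item_vars) / total_var)


# Synthetic illustration only (NOT the study's data): 384 respondents,
# 4 items that share one latent factor plus independent noise.
rng = random.Random(0)
rows = []
for _ in range(384):
    latent = rng.gauss(0, 1)
    rows.append([latent + rng.gauss(0, 0.5) for _ in range(4)])

alpha = cronbach_alpha(rows)
print(f"alpha = {alpha:.3f}")
```

Applied to the real item matrix of one scale, the same function would yield that scale's α; applied to all 26 items together, it would yield the pooled value reported in the last column.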

| Variable | N | Mean | Std. Deviation |
|---|---|---|---|
| TAM | 384 | 5.00 | 1.40 |
| Perceived Algorithmic Fairness | 384 | 4.26 | 1.51 |
| Purchase Intention | 384 | 4.58 | 1.53 |
| Algorithmic Transparency | 384 | 4.41 | 1.53 |
| Algorithmic Anthropomorphism | 384 | 4.24 | 1.53 |

| Path | β | SE | p | Result |
|---|---|---|---|---|
| Anthropomorphism → Transparency (a1) | 0.665 | – | < 0.001 | H8: Supported |
| Anthropomorphism → Fairness (a2) | 0.375 | – | < 0.001 | H1: Supported |
| Transparency → Fairness (d21) | 0.367 | – | < 0.001 | H2: Supported |
| Fairness → Purchase Intention (b2) | 0.263 | – | < 0.001 | H3: Supported |
| Transparency → Purchase Intention (b1) | 0.336 | – | < 0.001 | H4: Supported |
| Anthropomorphism → Purchase Intention (c’) | 0.168 | – | 0.002 | H9: Supported |
| Total effect (c) | 0.555 | – | < 0.001 | – |

| Indirect Path | Effect | Boot SE | Boot 95% CI |
|---|---|---|---|
| Anthrop. → Transparency → PI (a1·b1) | 0.224 | – | CI excl. zero |
| Anthrop. → Fairness → PI (a2·b2) | 0.099 | – | CI excl. zero |
| Anthrop. → Transp. → Fairness → PI (a1·d21·b2) | 0.064 | – | CI excl. zero |
| Total indirect effect | 0.386 | – | CI excl. zero |
| Direct effect (c’) | 0.168 | – | p = 0.002 |
| Total effect (c) | 0.555 | – | p < 0.001 |
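
The indirect effects in a serial two-mediator model are products of the path coefficients, and the total effect is the direct effect plus the sum of the indirect effects. As a rough check, the sketch below recomputes these quantities from the rounded coefficients reported in the path table. The variable names are illustrative, and the products agree with the tabulated effects only to within about 0.001, presumably because the published coefficients are rounded to three decimals. The bootstrap confidence intervals cannot be reproduced without the raw data.

```python
# Path coefficients as reported in the path table (rounded to 3 decimals)
a1 = 0.665       # Anthropomorphism -> Transparency
a2 = 0.375       # Anthropomorphism -> Fairness
d21 = 0.367      # Transparency -> Fairness
b1 = 0.336       # Transparency -> Purchase Intention
b2 = 0.263       # Fairness -> Purchase Intention
c_prime = 0.168  # Direct effect of Anthropomorphism on Purchase Intention

# Each specific indirect effect is the product of the paths along its route
indirect = {
    "Anthrop. -> Transparency -> PI": a1 * b1,
    "Anthrop. -> Fairness -> PI": a2 * b2,
    "Anthrop. -> Transp. -> Fairness -> PI": a1 * d21 * b2,
}

total_indirect = sum(indirect.values())
total_effect = c_prime + total_indirect
share_mediated = total_indirect / total_effect

for route, effect in indirect.items():
    print(f"{route}: {effect:.3f}")
print(f"Total indirect: {total_indirect:.3f}")
print(f"Total effect:   {total_effect:.3f}")
print(f"Share mediated: {share_mediated:.1%}")
```

On these figures, roughly two thirds of the total effect of anthropomorphism on purchase intention runs through the two mediators, with the transparency-only route the largest of the three.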

| Predictor | b | SE | t | p |
|---|---|---|---|---|
| Algorithmic Anthropomorphism | 0.449 | – | – | < 0.001 |
| TAM | 0.718 | – | – | < 0.001 |
| Anthropomorphism × TAM | −0.035 | – | – | 0.075 |
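
In a moderated regression with a product term, the effect of the focal predictor at a given moderator value is the focal coefficient plus the interaction coefficient times that value. The sketch below illustrates what the negative interaction term implies. It rests on an assumption that this section does not confirm: that TAM was mean-centered before the product term was formed, so that a moderator value of 0 corresponds to the sample mean. If TAM was entered uncentered, the conditional effects would differ. The TAM standard deviation (1.40) is taken from the descriptive statistics table, and the function name is illustrative.

```python
def conditional_effect(b_focal, b_interaction, moderator_value):
    """Effect of the focal predictor at a given value of the moderator."""
    return b_focal + b_interaction * moderator_value


B_ANTHRO = 0.449        # Anthropomorphism coefficient (moderation table)
B_INTERACTION = -0.035  # Anthropomorphism x TAM coefficient (p = 0.075)
TAM_SD = 1.40           # TAM standard deviation (descriptive statistics table)

# ASSUMPTION: TAM is mean-centered, so 0.0 represents the sample mean.
levels = {"low (-1 SD)": -TAM_SD, "mean": 0.0, "high (+1 SD)": TAM_SD}

slopes = {
    label: conditional_effect(B_ANTHRO, B_INTERACTION, w)
    for label, w in levels.items()
}

for label, slope in slopes.items():
    print(f"TAM {label}: effect of anthropomorphism on PI = {slope:.3f}")
```

Under that assumption, the effect of anthropomorphism weakens slightly as TAM scores rise, and the change across two standard deviations is small, in keeping with the marginal result for H11.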

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).