Submitted: 13 April 2026

Posted: 15 April 2026


Abstract

Keywords:

1. Summary

2. Data Description

3. Methods

3.1. Ethics Considerations

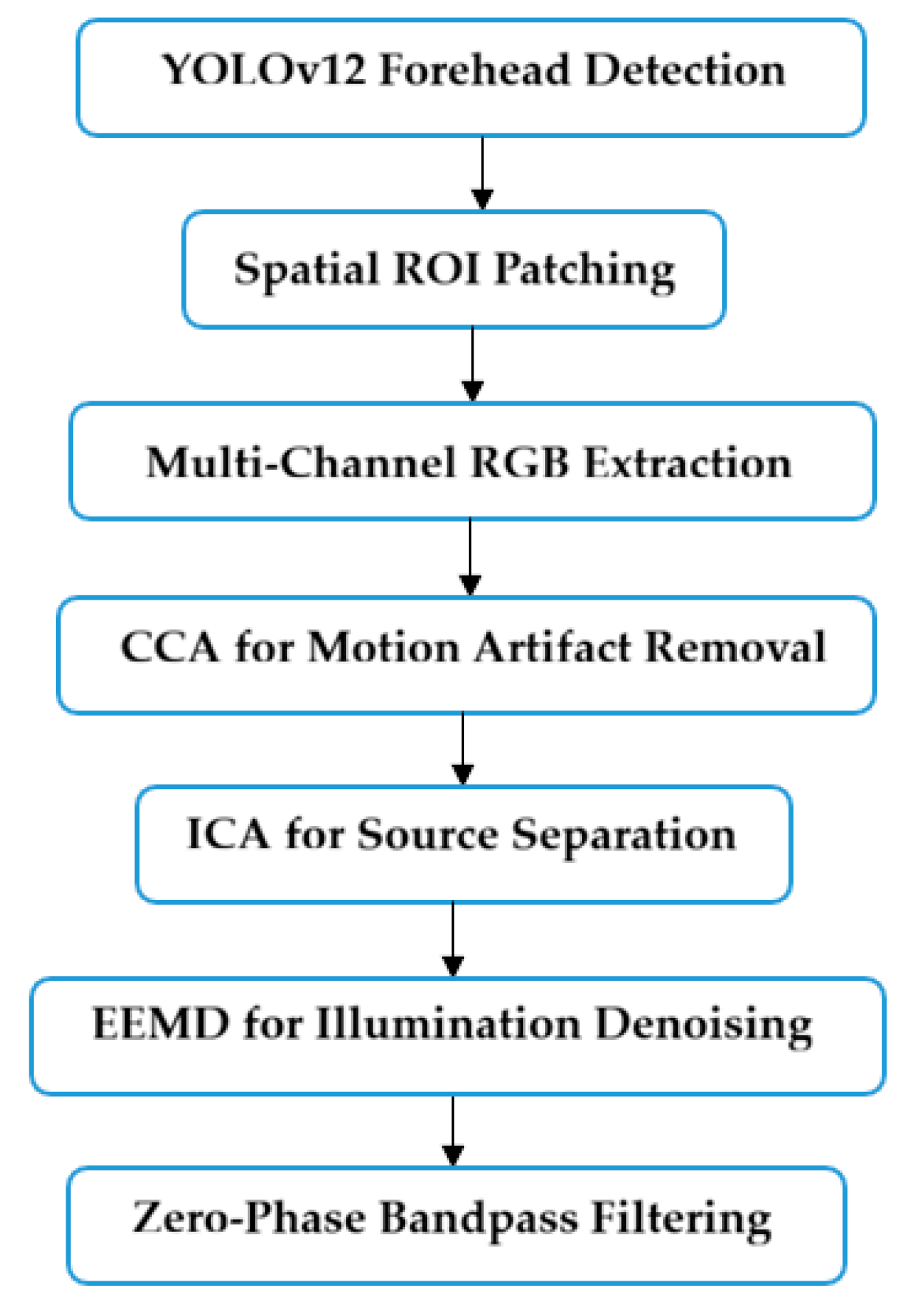

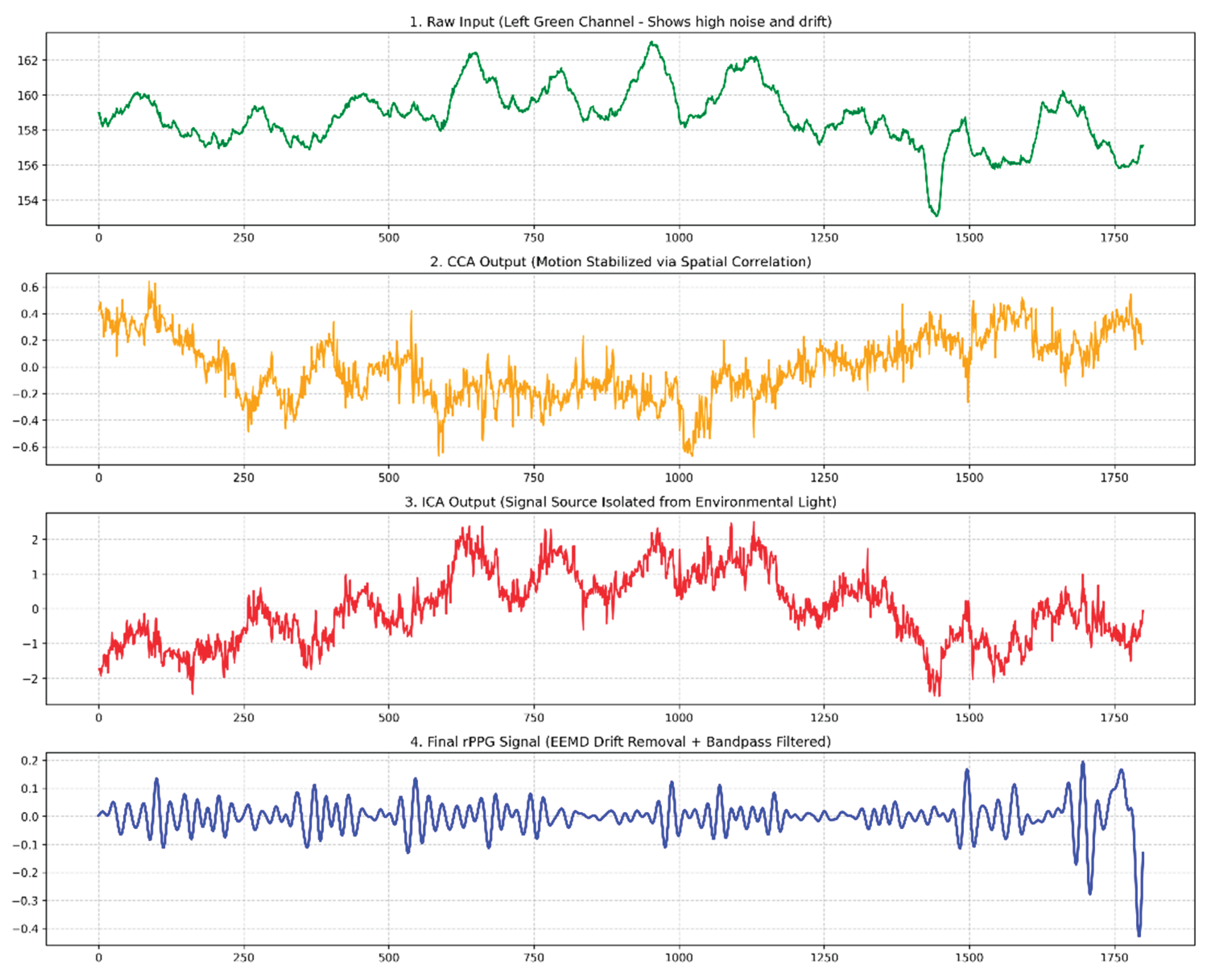

3.2. rPPG Signals Extraction

4. User Notes

- CLBP-300 is designed for AI developers and digital health researchers who are building cuff-less BP models from rPPG signals.

- Training AI models on this data for commercial products requires a separate license from the corresponding author.

- Attempting to identify any person in the videos is strictly prohibited, as is sharing or sending the original video files to anyone else. Publishing face photos is also not allowed; publications may include only signal graphs, extracted features, and tabulated results.

- Any publication, conference proceeding, or report using CLBP-300 must cite this paper: “CLBP-300: A Real-World Video Dataset for Cuff-less Blood Pressure Estimation via rPPG.”

- By using CLBP-300, researchers automatically agree to all terms in the Data Use Agreement (DUA). Any violation will lead to legal action.

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest


| Item | Description |
|---|---|
| Acronym | CLBP-300 |
| Beneficiaries | Digital health researchers, AI and computer vision developers, and computer science researchers. |
| Specific subject area | Digital health / health informatics and AI in medicine. |
| Total participants | 300 (244 males, 56 females) |
| Duration | 30-60 seconds per video. |
| Type of data | Videos and an Excel sheet providing ground-truth measurements of systolic BP (SYSBP), diastolic BP (DIABP), and heart rate (HR) for each recorded video, together with the ambient light intensity in lux and demographic attributes for each participant (i.e., age and gender). |
| How data were acquired | Videos were captured at 60 fps with an iPhone 16 Pro Max camera and a Nikon D5300 camera. |
| Data format | MOV format. |
| Experimental setup | Participants were seated at a distance of 0.5 to 1 m from the cameras. |
| Data accessibility | A sample dataset containing video recordings for three participants is publicly available on a Google Sites page (https://sites.google.com/view/clbp-300?usp=sharing). |
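
The pairing of each video with its ground-truth row can be illustrated with a short Python sketch. It assumes the Excel sheet has been exported to CSV; the column names (`video_file`, `SYSBP`, `DIABP`, `HR`, `lux`, `age`, `gender`), the units (mmHg, bpm), and the sample rows are hypothetical placeholders and are not taken from the CLBP-300 files, so they should be adjusted to the actual sheet layout.

```python
import csv
import io
from dataclasses import dataclass

# Hypothetical column names; the real sheet may label these differently.
COLUMNS = ("video_file", "SYSBP", "DIABP", "HR", "lux", "age", "gender")


@dataclass
class Recording:
    video_file: str   # name of the MOV file this row belongs to
    sys_bp: float     # systolic BP (assumed mmHg)
    dia_bp: float     # diastolic BP (assumed mmHg)
    hr: float         # heart rate (assumed beats per minute)
    lux: float        # ambient light intensity in lux
    age: int
    gender: str


def load_recordings(handle):
    """Parse ground-truth rows from a CSV export of the sheet."""
    reader = csv.DictReader(handle)
    missing = set(COLUMNS) - set(reader.fieldnames or [])
    if missing:
        raise ValueError(f"missing columns: {sorted(missing)}")
    records = []
    for row in reader:
        rec = Recording(
            video_file=row["video_file"],
            sys_bp=float(row["SYSBP"]),
            dia_bp=float(row["DIABP"]),
            hr=float(row["HR"]),
            lux=float(row["lux"]),
            age=int(row["age"]),
            gender=row["gender"],
        )
        # Basic sanity check: diastolic must be below systolic.
        if rec.dia_bp >= rec.sys_bp:
            raise ValueError(f"implausible BP pair in {rec.video_file}")
        records.append(rec)
    return records


def pulse_pressure(rec):
    """Pulse pressure is systolic minus diastolic pressure."""
    return rec.sys_bp - rec.dia_bp


# Made-up rows for illustration only; these are NOT dataset values.
SAMPLE_CSV = """video_file,SYSBP,DIABP,HR,lux,age,gender
P001.MOV,120,80,72,300,35,M
P002.MOV,135,85,80,450,52,F
"""

if __name__ == "__main__":
    for rec in load_recordings(io.StringIO(SAMPLE_CSV)):
        print(rec.video_file, pulse_pressure(rec))
```

Keeping the labels in a typed record makes it easy to reject malformed rows before they reach a training pipeline, and the same loader can be pointed at an open file handle instead of the in-memory sample.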

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).