Submitted: 14 April 2026

Posted: 15 April 2026


Abstract

Keywords:

1. Introduction

2. Materials and Methods

2.1. Study Design

2.2. Teacher Recruitment and Training

2.3. Research Kits and Materials

2.4. Classroom Implementation

2.5. Expert Verification and Identification Accuracy

2.6. Response Variables and Covariates

2.7. Statistical Analyses

3. Results

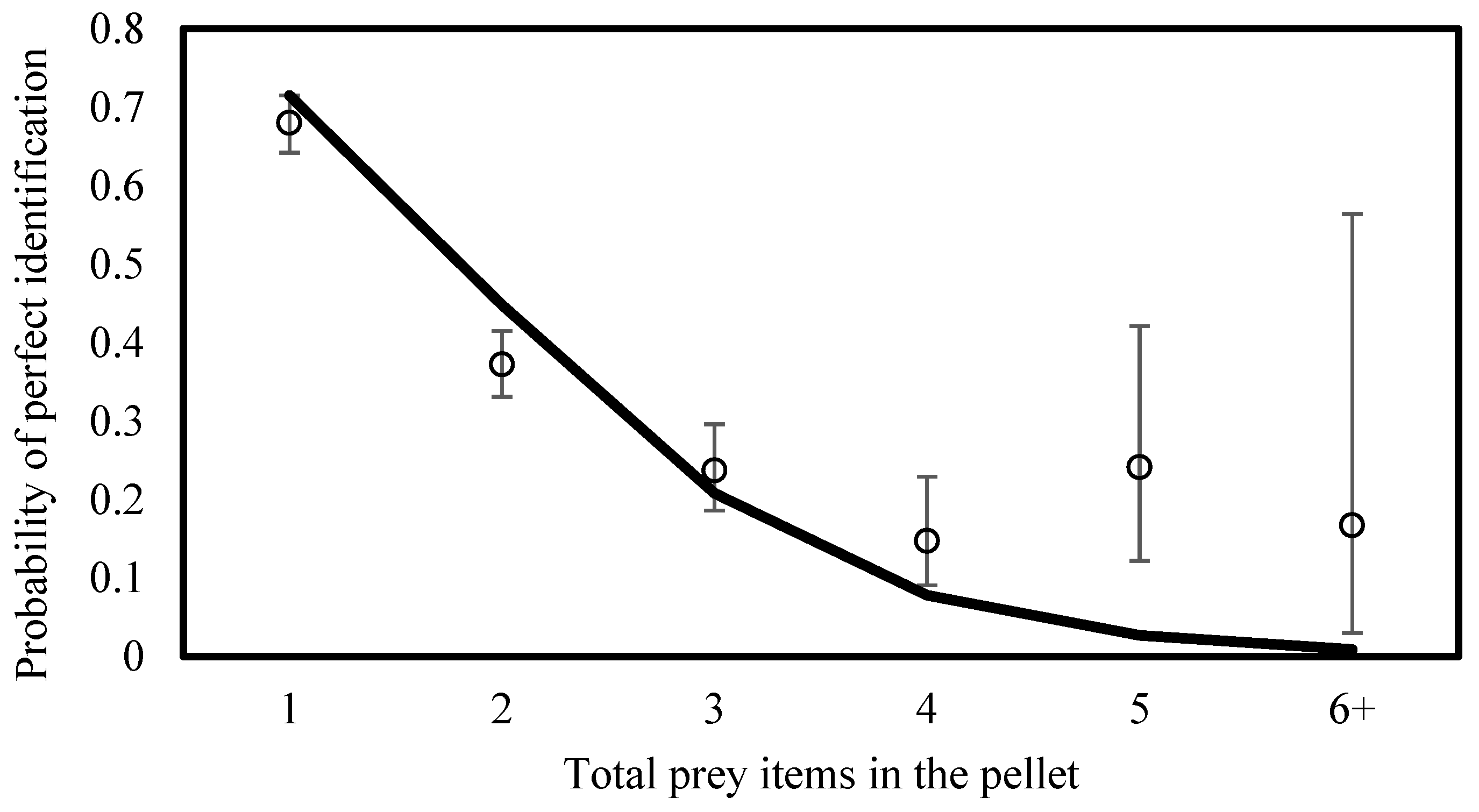

3.1. Student Prey Identification Accuracy and Success

3.2. Teacher Participation Results

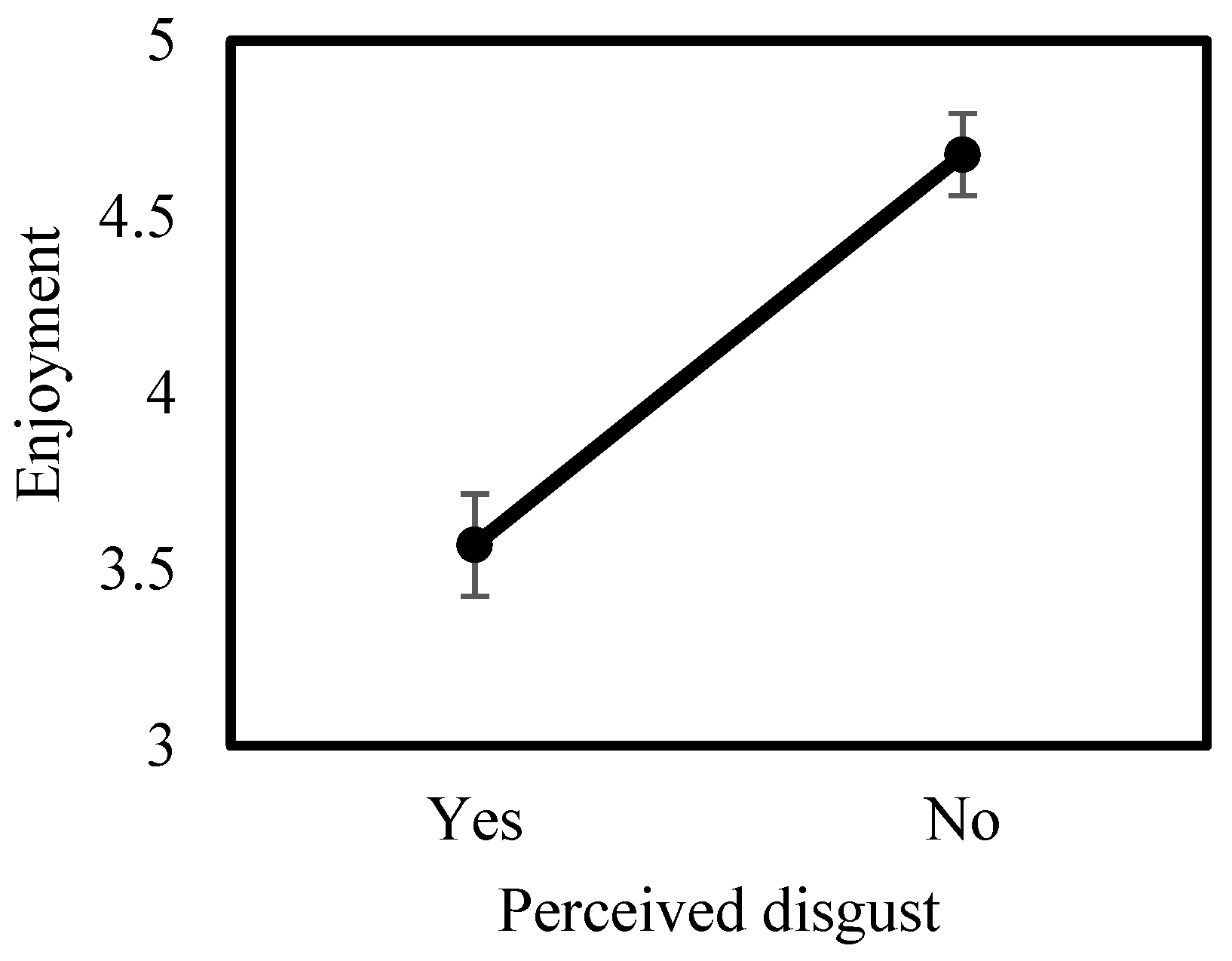

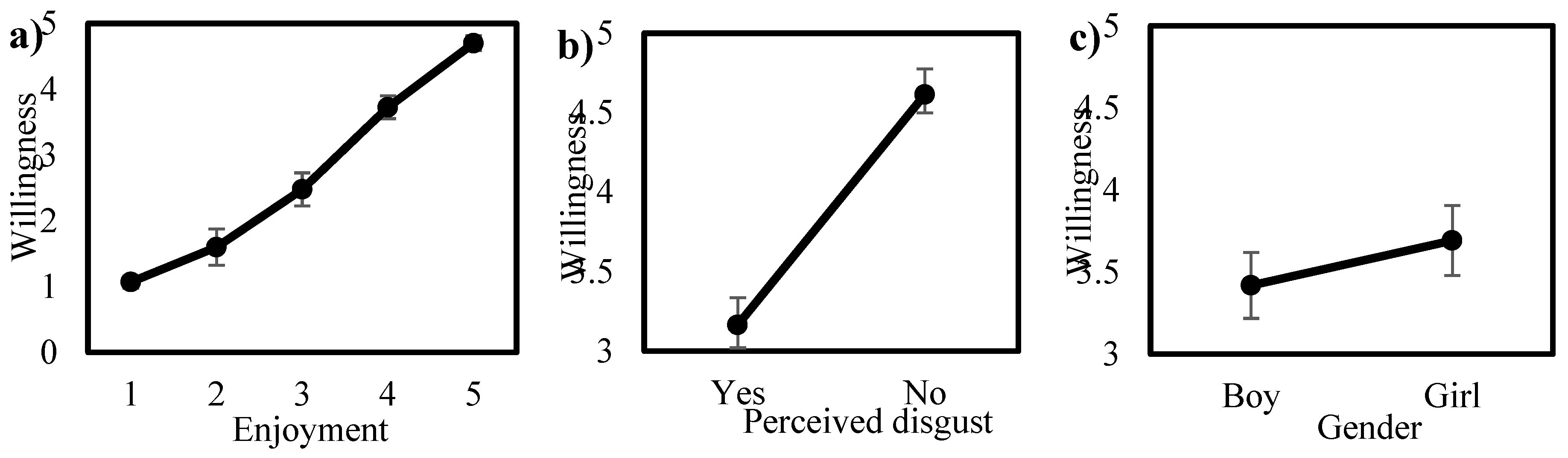

3.3. Student Perceptions and Engagement During the Pellet Activity

4. Discussion

4.1. Identification Accuracy and Limits to Ecological Inference

4.2. Implementation Feasibility in School-Based Monitoring

4.3. Task Complexity as a Structural Constraint on Citizen-Science Data Quality

4.4. Limitations and Future Directions

5. Conclusions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

References

- Anderson, C.B. Biodiversity Monitoring, Earth Observations and the Ecology of Scale. Ecol. Lett. 2018, 21, 1572–1585.

- Jetz, W.; McGeoch, M.A.; Guralnick, R.; Ferrier, S.; Beck, J.; Costello, M.J.; Fernandez, M.; Geller, G.N.; Keil, P.; Merow, C.; et al. Essential Biodiversity Variables for Mapping and Monitoring Species Populations. Nat. Ecol. Evol. 2019, 3, 539–551.

- Chandler, M.; See, L.; Copas, K.; Bonde, A.M.Z.; López, B.C.; Danielsen, F.; Legind, J.K.; Masinde, S.; Miller-Rushing, A.J.; Newman, G.; et al. Contribution of Citizen Science towards International Biodiversity Monitoring. Biol. Conserv. 2017, 213, 280–294.

- Di Cecco, G.J.; Barve, V.; Belitz, M.W.; Stucky, B.J.; Guralnick, R.P.; Hurlbert, A.H. Observing the Observers: How Participants Contribute Data to iNaturalist and Implications for Biodiversity Science. Bioscience 2021, 71, 1179–1188.

- Bowler, D.E.; Bhandari, N.; Repke, L.; Beuthner, C.; Callaghan, C.T.; Eichenberg, D.; Henle, K.; Klenke, R.; Richter, A.; Jansen, F.; et al. Decision-Making of Citizen Scientists When Recording Species Observations. Sci. Rep. 2022, 12, 11069.

- Balestrieri, A.; Gazzola, A.; Formenton, G.; Canova, L. Long-Term Impact of Agricultural Practices on the Diversity of Small Mammal Communities: A Case Study Based on Owl Pellets. Environ. Monit. Assess. 2019, 191, 725.

- Williams, S.T.; Maree, N.; Taylor, P.; Belmain, S.R.; Keith, M.; Swanepoel, L.H. Predation by Small Mammalian Carnivores in Rural Agro-Ecosystems: An Undervalued Ecosystem Service? Ecosyst. Serv. 2018, 30, 362–371.

- Hurst, Z.M.; McCleery, R.A.; Collier, B.A.; Silvy, N.J.; Taylor, P.J.; Monadjem, A. Linking Changes in Small Mammal Communities to Ecosystem Functions in an Agricultural Landscape. Mamm. Biol. 2014, 79, 17–23.

- Labuschagne, L.; Swanepoel, L.H.; Taylor, P.J.; Belmain, S.R.; Keith, M. Are Avian Predators Effective Biological Control Agents for Rodent Pest Management in Agricultural Systems? Biol. Control 2016, 101, 94–102.

- Cleary, K.A.; Bonaiuto, V.; Amulike, B.; Pearson, J.; Johnson, G. A Reduced Labor, Non-Invasive Method for Characterizing Small Mammal Communities. Mammal Res. 2025, 70, 151–158.

- Heisler, L.M.; Somers, C.M.; Poulin, R.G. Owl Pellets: A More Effective Alternative to Conventional Trapping for Broad-Scale Studies of Small Mammal Communities. Methods Ecol. Evol. 2016, 7, 96–103.

- Torre, I.; Arrizabalaga, A.; Flaquer, C. Three Methods for Assessing Richness and Composition of Small Mammal Communities. J. Mammal. 2004, 85, 524–530.

- Taylor, I. Barn Owls: Predator-Prey Relationships and Conservation; Cambridge University Press, 1994.

- Raczyński, J.; Ruprecht, A.L. Effect of Digestion on the Osteological Composition of Owl Pellets. Acta Ornithol. 1974, 14, 25–38.

- Johnston, A.; Fink, D.; Hochachka, W.M.; Kelling, S. Estimates of Observer Expertise Improve Species Distributions from Citizen Science Data. Methods Ecol. Evol. 2018, 9, 88–97.

- Gaston, K.J.; Soga, M.; Duffy, J.P.; Garrett, J.K.; Gaston, S.; Cox, D.T.C. Personalised Ecology. Trends Ecol. Evol. 2018, 33, 916–925.

- Peleg, O.; Nir, S.; Meyrom, K.; Aviel, S.; Roulin, A.; Izhaki, I.; Leshem, Y.; Charter, M. Three Decades of Satisfied Israeli Farmers: Barn Owls (Tyto alba) as Biological Pest Control of Rodents. In Proceedings of the 28th Vertebrate Pest Conference; Woods, D.M., Ed.; Univ. of Calif.: Davis, 2018; pp. 194–203.

- Ronen, N.; Brook, A.; Charter, M. Assessing Birds of Prey as Biological Pest Control: A Comparative Study with Hunting Perches and Rodenticides on Rodent Activity and Crop Health. Biology 2025, 14, 1108.

- Charter, M.; Leshem, Y.; Meyrom, K.; Peleg, O.; Roulin, A. The Importance of Micro-Habitat in the Breeding of Barn Owls Tyto alba. Bird Study 2012, 59, 368–371.

- Charter, M.; Izhaki, I.; Leshem, Y.; Meyrom, K.; Roulin, A. Relationship between Diet and Reproductive Success in the Israeli Barn Owl. J. Arid Environ. 2015, 122, 59–63.

- Charter, M.; Izhaki, I.; Roulin, A. The Relationship between Intra-Guild Diet Overlap and Breeding in Owls in Israel. Popul. Ecol. 2018, 60, 397–403.

- Bonney, R.; Cooper, C.B.; Dickinson, J.; Kelling, S.; Phillips, T.; Rosenberg, K.V.; Shirk, J. Citizen Science: A Developing Tool for Expanding Science Knowledge and Scientific Literacy. Bioscience 2009, 59, 977–984.

- Aceves-Bueno, E.; Adeleye, A.S.; Feraud, M.; Huang, Y.; Tao, M.; Yang, Y.; Anderson, S.E. The Accuracy of Citizen Science Data: A Quantitative Review. Bull. Ecol. Soc. Am. 2017, 98, 278–290.

- Schmidt, B.R.; Cruickshank, S.S.; Bühler, C.; Bergamini, A. Observers Are a Key Source of Detection Heterogeneity and Biased Occupancy Estimates in Species Monitoring. Biol. Conserv. 2023, 283, 110102.

- Farmer, R.G.; Leonard, M.L.; Horn, A.G. Observer Effects and Avian-Call-Count Survey Quality: Rare-Species Biases and Overconfidence. Auk 2012, 129, 76–86.

- Lüsse, M.; Brockhage, F.; Beeken, M.; Pietzner, V. Citizen Science and Its Potential for Science Education. Int. J. Sci. Educ. 2022, 44, 1120–1142.

- Kelemen-Finan, J.; Scheuch, M.; Winter, S. Contributions from Citizen Science to Science Education: An Examination of a Biodiversity Citizen Science Project with Schools in Central Europe. Int. J. Sci. Educ. 2018, 40, 2078–2098.

- Shah, H.R.; Martinez, L.R. Current Approaches in Implementing Citizen Science in the Classroom. J. Microbiol. Biol. Educ. 2016, 17, 17–22.

- Finger, L.; van den Bogaert, V.; Schmidt, L.; Fleischer, J.; Stadtler, M.; Sommer, K.; Wirth, J. The Science of Citizen Science: A Systematic Literature Review on Educational and Scientific Outcomes. Front. Educ. 2023, 8, 1226529.

- Ostrom, E. A General Framework for Analyzing Sustainability of Social-Ecological Systems. Science 2009, 325, 419–422.

- Brown, E.; Liu, H.-L. Citizen Science in the Classroom: Data Quality and Student Engagement. J. Community Engagem. Scholarsh. 2024, 16, 8.

- Philippoff, J.; Baumgartner, E. Addressing Common Student Technical Errors in Field Data Collection: An Analysis of a Citizen-Science Monitoring Project. J. Microbiol. Biol. Educ. 2016, 17, 51–55.

- Kosmala, M.; Wiggins, A.; Swanson, A.; Simmons, B. Assessing Data Quality in Citizen Science. Front. Ecol. Environ. 2016, 14, 551–560.

- Shirk, J.L.; Ballard, H.L.; Wilderman, C.C.; Phillips, T.; Wiggins, A.; Jordan, R.; McCallie, E.; Minarchek, M.; Lewenstein, B.V.; Krasny, M.E.; et al. Public Participation in Scientific Research: A Framework for Deliberate Design. Ecol. Soc. 2012, 17.

- Meza-Torres, C.; Jordan, M.; Zuiker, S.; Jongewaard, R.; Adeloju, E.; Spreitzer, K. Examining Teacher Supports for Visibility, Believability, and Meaningfulness in Place-Based Citizen Science. Proc. Int. Conf. Learn. Sci. ICLS 2024, 2409–2410.

- Aristeidou, M.; Lorke, J.; Ismail, N. Citizen Science: Schoolteachers’ Motivation, Experiences, and Recommendations. Int. J. Sci. Math. Educ. 2022, 21, 2067–2093.

- Carrier, S.J.; Scharen, D.R.; Hayes, M.; Smith, P.S.; Bruce, A.; Craven, L. Citizen Science in Elementary Classrooms: A Tale of Two Teachers. Front. Educ. 2024, 9, 1470070.

- Chambert, T.; Miller, D.A.W.; Nichols, J.D. Modeling False Positive Detections in Species Occurrence Data under Different Study Designs. Ecology 2015, 96, 332–339.

- Dickinson, J.L.; Zuckerberg, B.; Bonter, D.N. Citizen Science as an Ecological Research Tool: Challenges and Benefits. Annu. Rev. Ecol. Evol. Syst. 2010, 41, 149–172.

- Dorazio, R.M.; Royle, J.A. Estimating Size and Composition of Biological Communities by Modeling the Occurrence of Species. J. Am. Stat. Assoc. 2005, 100, 389–398.

- Ratnieks, F.L.W.; Schrell, F.; Sheppard, R.C.; Brown, E.; Bristow, O.E.; Garbuzov, M. Data Reliability in Citizen Science: Learning Curve and the Effects of Training Method, Volunteer Background and Experience on Identification Accuracy of Insects Visiting Ivy Flowers. Methods Ecol. Evol. 2016, 7, 1226–1235.

- National Academies of Sciences, Engineering, and Medicine; Division of Behavioral and Social Sciences and Education; Board on Science Education; Committee on Designing Citizen Science to Support Science Learning. Processes of Learning and Learning in Science. In Learning Through Citizen Science: Enhancing Opportunities by Design; Pandya, R., Dibner, K.A., Eds.; National Academies Press: Washington, DC, 2018; ISBN 9780309479165.

- Trautmann, N.M.; Shirk, J.L.; Krasny, M.E. Who Poses the Question? Using Citizen Science to Help K–12 Teachers Meet the Mandate for Inquiry. In Citizen Science; Louv, R., Fitzpatrick, J.W., Eds.; Cornell University Press, 2017; pp. 179–190.

- Bopardikar, A.; Bernstein, D.; McKenney, S. Boundary Crossing in Student-Teacher-Scientist-Partnerships: Designer Considerations and Methods to Integrate Citizen Science with School Science. Instr. Sci. 2023, 51, 847–886.

- Braz Sousa, L.; Kenneally, C.; Golumbic, Y.; Martin, J.M.; Preston, C.; Rutledge, P.; Motion, A. Teacher Experiences and Understanding of Citizen Science in Australian Classrooms. PLoS One 2024, 19, e0312680.

- Bonney, R.; Phillips, T.B.; Ballard, H.L.; Enck, J.W. Can Citizen Science Enhance Public Understanding of Science? Public Underst. Sci. 2016, 25, 2–16.

- Phillips, T.; Porticella, N.; Constas, M.; Bonney, R. A Framework for Articulating and Measuring Individual Learning Outcomes from Participation in Citizen Science. Citiz. Sci. Theory Pract. 2018, 3, 3.

- Lovell, S.; Hamer, M.; Slotow, R.; Herbert, D. An Assessment of the Use of Volunteers for Terrestrial Invertebrate Biodiversity Surveys. Biodivers. Conserv. 2009, 18, 3295–3307.

- Isaac, N.J.B.; van Strien, A.J.; August, T.A.; de Zeeuw, M.P.; Roy, D.B. Statistics for Citizen Science: Extracting Signals of Change from Noisy Ecological Data. Methods Ecol. Evol. 2014, 5, 1052–1060.

- Lukyanenko, R.; Parsons, J.; Wiersma, Y.F. Emerging Problems of Data Quality in Citizen Science. Conserv. Biol. 2016, 30, 447–449.

- Crall, A.W.; Newman, G.J.; Stohlgren, T.J.; Holfelder, K.A.; Graham, J.; Waller, D.M. Assessing Citizen Science Data Quality: An Invasive Species Case Study. Conserv. Lett. 2011, 4, 433–442.

- Bird, T.J.; Bates, A.E.; Lefcheck, J.S.; Hill, N.A.; Thomson, R.J.; Edgar, G.J.; Stuart-Smith, R.D.; Wotherspoon, S.; Krkosek, M.; Stuart-Smith, J.F.; et al. Statistical Solutions for Error and Bias in Global Citizen Science Datasets. Biol. Conserv. 2014, 173, 144–154.

- Kremen, C.; Ullman, K.S.; Thorp, R.W. Evaluating the Quality of Citizen-Scientist Data on Pollinator Communities. Conserv. Biol. 2011, 25, 607–617.

- Norouzzadeh, M.S.; Nguyen, A.; Kosmala, M.; Swanson, A.; Palmer, M.S.; Packer, C.; Clune, J. Automatically Identifying, Counting, and Describing Wild Animals in Camera-Trap Images with Deep Learning. Proc. Natl. Acad. Sci. USA 2018, 115, E5716–E5725.

- Christin, S.; Hervet, É.; Lecomte, N. Applications for Deep Learning in Ecology. Methods Ecol. Evol. 2019, 10, 1632–1644.

- Willi, M.; Pitman, R.T.; Cardoso, A.W.; Locke, C.; Swanson, A.; Boyer, A.; Veldthuis, M.; Fortson, L. Identifying Animal Species in Camera Trap Images Using Deep Learning and Citizen Science. Methods Ecol. Evol. 2019, 10, 80–91.

- Tabak, M.A.; Norouzzadeh, M.S.; Wolfson, D.W.; Sweeney, S.J.; Vercauteren, K.C.; Snow, N.P.; Halseth, J.M.; Di Salvo, P.A.; Lewis, J.S.; White, M.D.; et al. Machine Learning to Classify Animal Species in Camera Trap Images: Applications in Ecology. Methods Ecol. Evol. 2019, 10, 585–590.

- Zoellick, B.; Nelson, S.J.; Schauffler, M. Participatory Science and Education: Bringing Both Views into Focus. Front. Ecol. Environ. 2012, 10, 310–313.

- Brustenga, L.; Massetti, S.; Paletta, C.; Piccioni, E.; Di Seclì, G.; La Porta, G.; Lucentini, L. Shaping Young Naturalists, Owl Pellets Dissection to Train High-School Students in Comparative Anatomy and Molecular Biology. J. Biol. Educ. 2025, 59, 731–744.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).