2.1. Dataset

Within the scope of the study, the dataset is prepared for loose sandy soils that is known as susceptible to liquefaction, soils such as gravel, clay, unsaturated soils are excluded. Dataset used in the analyses consists of both liquefied and non-liquefied cases due to earthquakes [

12,

13,

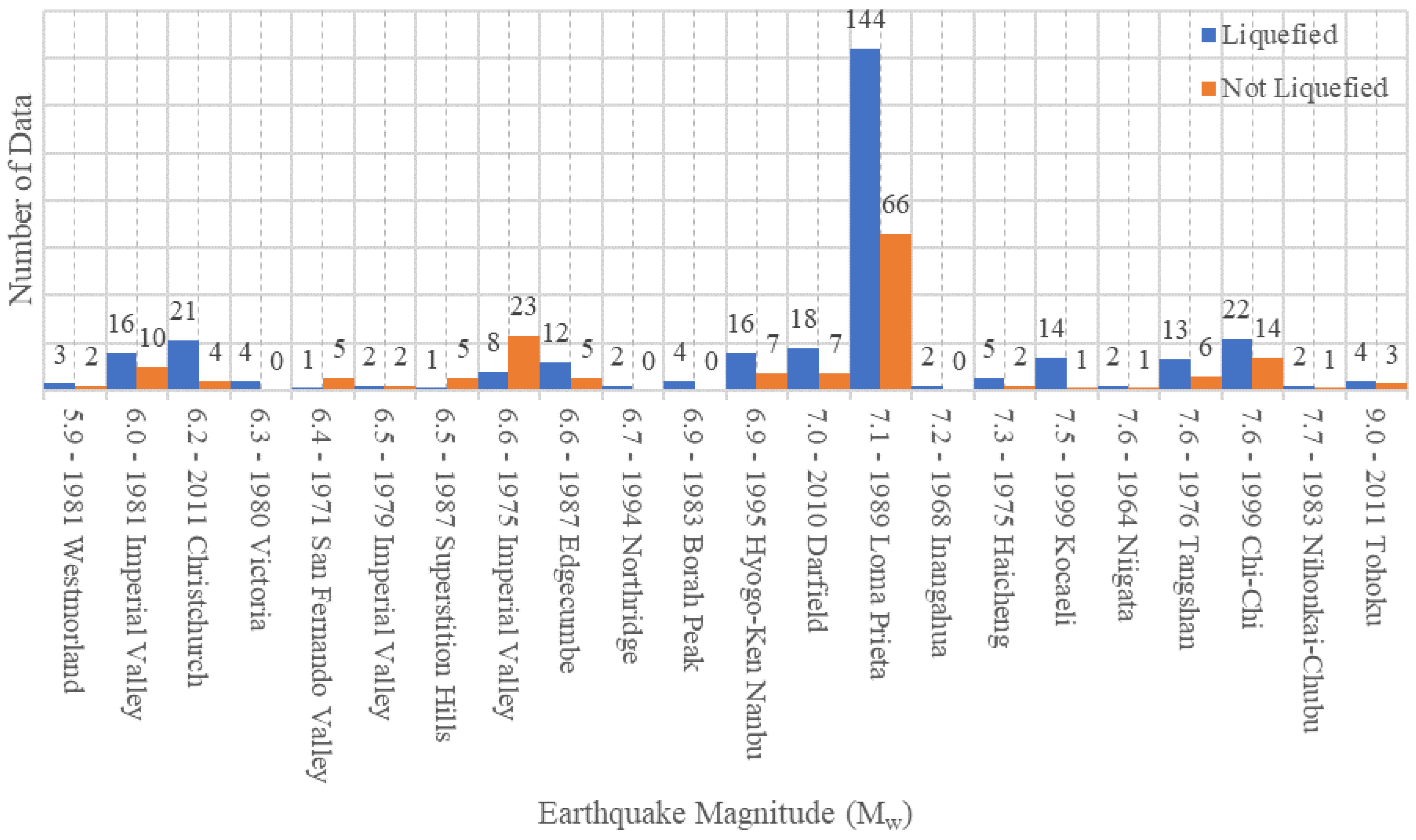

14]. The selected sources, which reported observed liquefaction and non-liquefaction cases for the 22 earthquake events, are listed in

Table 1. The number of cases reported as liquefied and non-liquefied for each earthquake event is given in

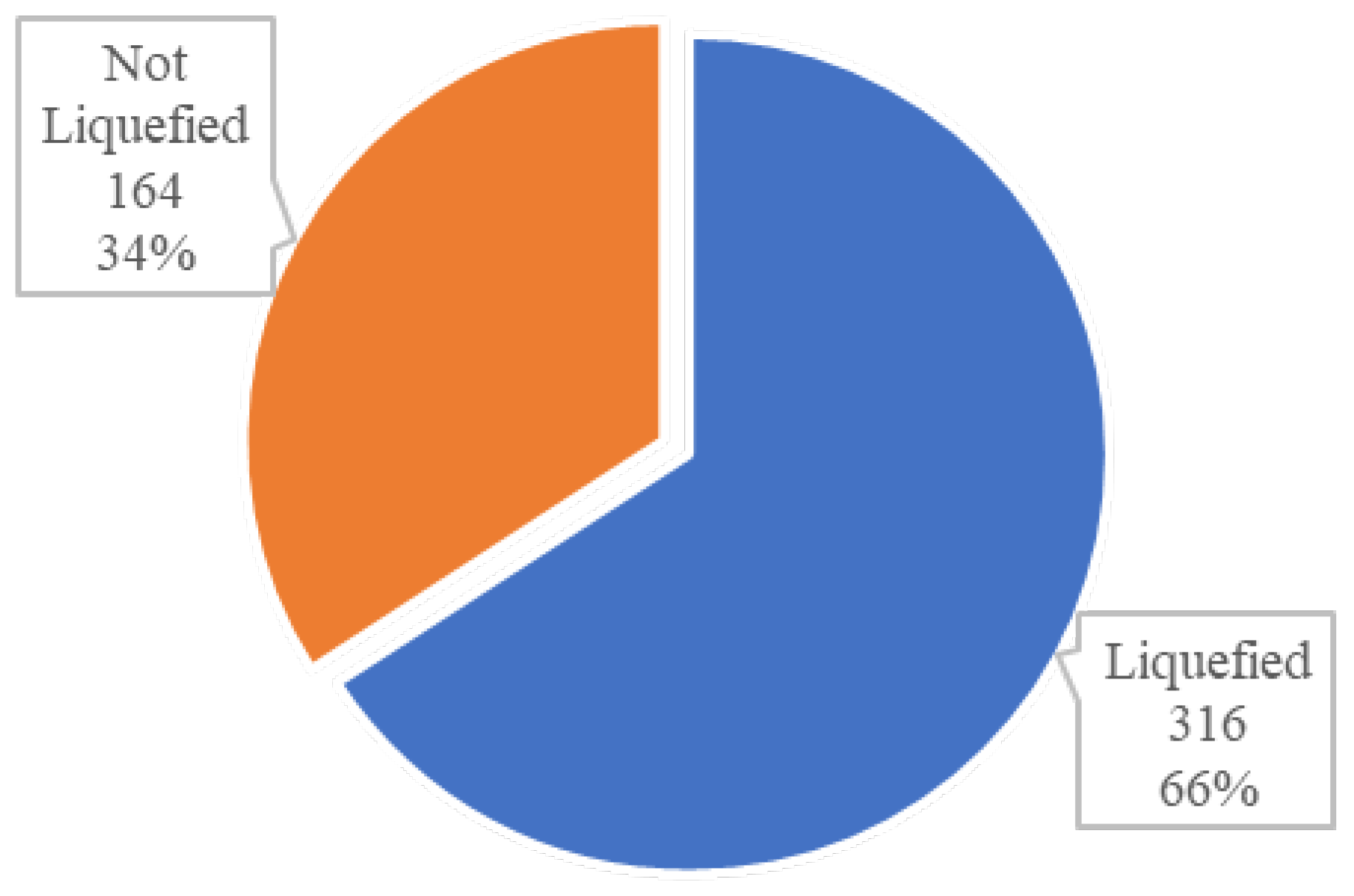

Figure 1. The total number of data points is 480, and proportions of observed liquefaction and non-liquefaction cases are 66% (316 cases) and 34% (164 cases), respectively, as shown in

Figure 2.

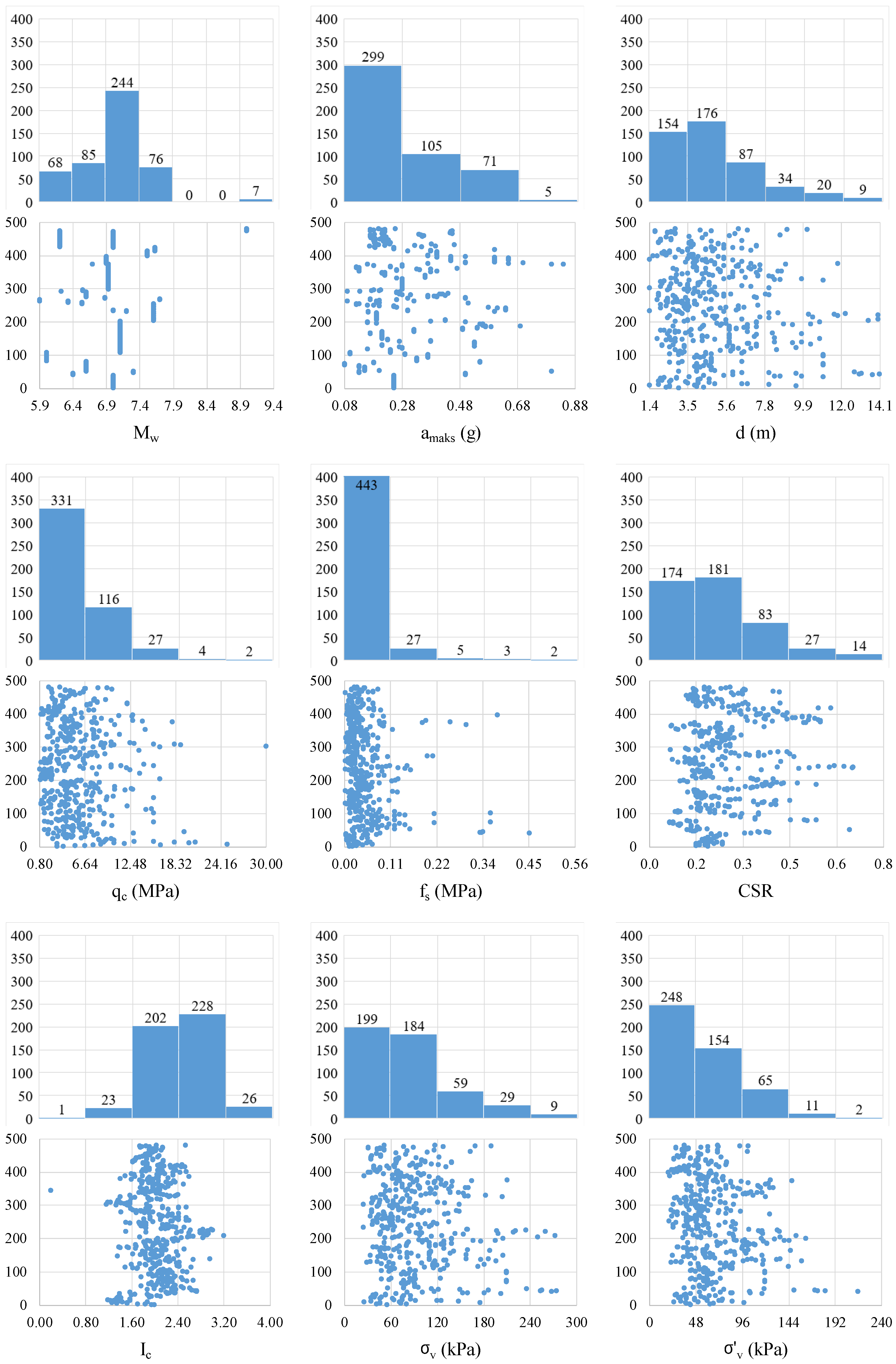

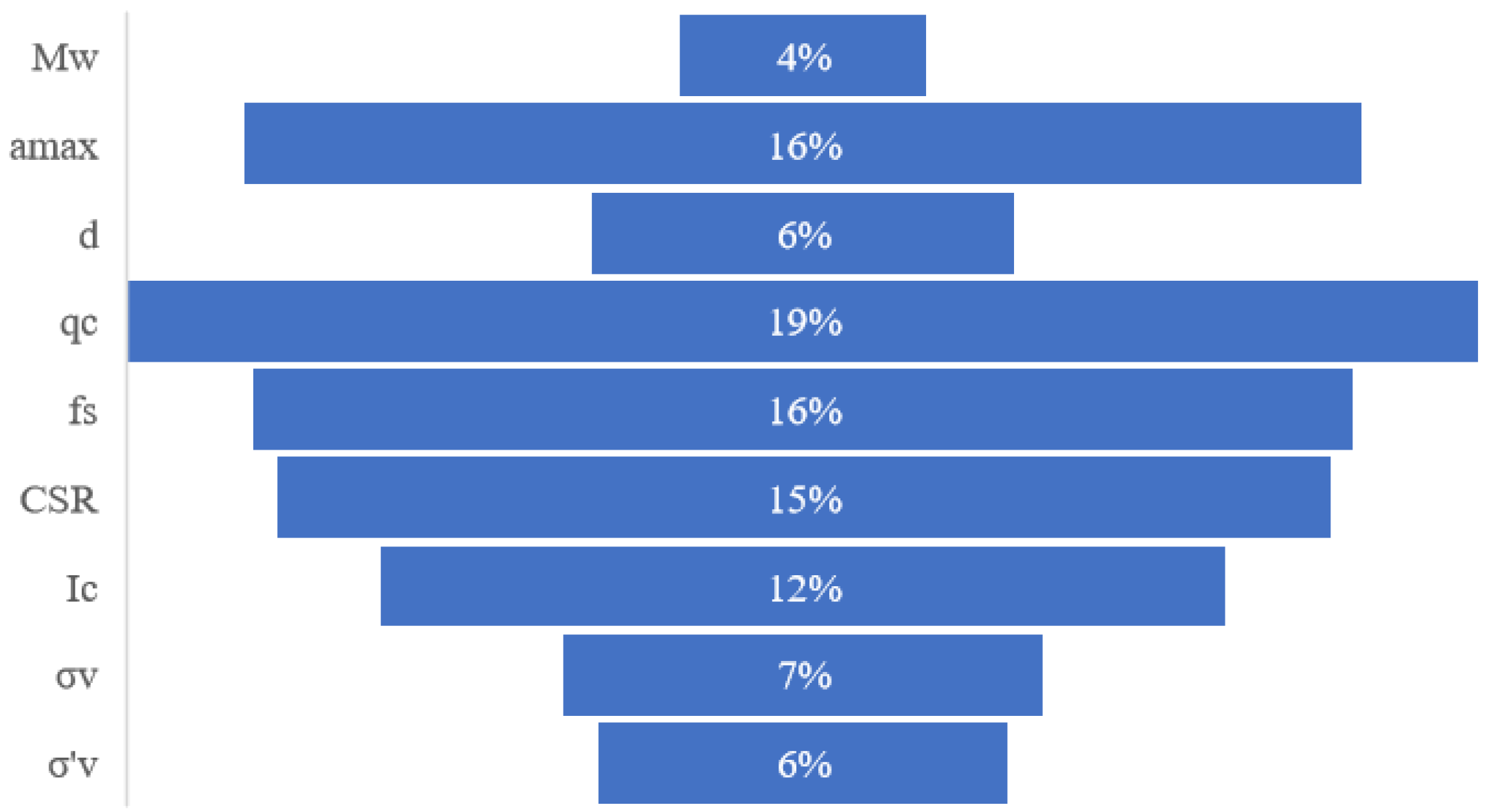

Each data point in the dataset contains nine quantities: earthquake moment magnitude ($M_w$), peak ground acceleration ($a_{max}$), measurement depth ($z$), cone tip resistance ($q_c$), cone friction resistance ($f_s$), cyclic stress ratio (CSR), soil behavior type index ($I_c$), total vertical stress ($\sigma_v$) and effective vertical stress ($\sigma'_v$). The quantities $M_w$, $a_{max}$, $z$, $q_c$, $f_s$, $\sigma_v$ and $\sigma'_v$ are obtained from measurements, whereas CSR and $I_c$ are derived from the measured quantities.

Researchers investigate cases in which liquefaction was observed after earthquakes and make valuable contributions to the literature by reporting their results. Some of these studies include laboratory results such as dynamic triaxial, monotonic triaxial, consistency limit and sieve analysis tests. Even simple tests such as sieve analysis and consistency limits require laboratory work, whereas studies based only on field data can be completed faster and more economically. Consequently, some studies in the literature do not contain laboratory results: approximately 40% of the studies evaluated within the scope of this study do not report fines content data [6, 9, 10, 11, 14, 15, 16]. Field tests, on the other hand, are performed as standard practice when liquefaction cases are investigated after an earthquake, so almost all of the case studies evaluated here include field test data. The number of data points is one of the major factors governing the accuracy and reliability of machine learning methods [17]. For this reason, this study prioritized a large number of data points, and the soil behavior type index ($I_c$) was used instead of the fines content (FC), which is missing in some studies.

$I_c$ is a parameter that indicates the soil type (gravel, sand, silt, silty sand, etc.), and it can be determined from CPT results for all data points [3].

The following parameters were evaluated as inputs of the prediction models: earthquake moment magnitude ($M_w$), peak ground acceleration ($a_{max}$), depth ($z$), cone tip resistance ($q_c$), cone friction resistance ($f_s$), total vertical stress ($\sigma_v$), effective vertical stress ($\sigma'_v$), cyclic stress ratio (CSR) and soil behavior type index ($I_c$). The output parameter, the liquefaction status recorded in the database, is treated as a binary classification problem that separates samples in which liquefaction occurred (positive) from those in which it did not (negative). Histograms and scatter plots of the input parameters used in the study are given in Figure 3.
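As a minimal sketch of how one case history could be turned into a nine-feature input vector and a binary label (1 = liquefied, positive; 0 = not liquefied, negative), the Python below uses a hypothetical `CaseRecord` type. The field names, units and feature order are illustrative assumptions, not the authors' data schema.

```python
from dataclasses import dataclass

@dataclass
class CaseRecord:
    """One CPT case history; field names and units are hypothetical."""
    mw: float               # earthquake moment magnitude M_w
    amax_g: float           # peak ground acceleration a_max, as a fraction of g
    depth_m: float          # depth z (m)
    qc_kpa: float           # cone tip resistance q_c (kPa)
    fs_kpa: float           # cone friction resistance f_s (kPa)
    sigma_v_kpa: float      # total vertical stress (kPa)
    sigma_v_eff_kpa: float  # effective vertical stress (kPa)
    csr: float              # cyclic stress ratio
    ic: float               # soil behavior type index I_c
    liquefied: bool         # observed outcome

# Assumed order of the nine input features; any fixed order works.
FEATURES = ("mw", "amax_g", "depth_m", "qc_kpa", "fs_kpa",
            "sigma_v_kpa", "sigma_v_eff_kpa", "csr", "ic")

def to_features_and_labels(records):
    """Return (X, y): X holds nine inputs per case, y is 1 (liquefied) or 0."""
    X = [[getattr(r, name) for name in FEATURES] for r in records]
    y = [1 if r.liquefied else 0 for r in records]
    return X, y
```

Keeping the feature order in one tuple means the same column order is used for every case, which matters once the columns are normalized and passed to a classifier.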

In machine learning models, variables with different scales can degrade model performance; in particular, some algorithms may be biased toward variables with larger numerical values, which makes the model unbalanced. To prevent this, normalization was applied during data preprocessing. In this study, the min-max normalization given in Equation 1 was used to rescale the variables. Min-max normalization maps every value into the range [0, 1], so that variables of different magnitudes contribute on a comparable scale.

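$$x_{norm} = \frac{x - x_{min}}{x_{max} - x_{min}} \tag{1}$$

Here $x$ represents the original value, $x_{min}$ the lowest value of the variable in the dataset, $x_{max}$ the highest value, and $x_{norm}$ the normalized value [18, 19, 20, 21].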
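A minimal Python sketch of Equation 1 applied column by column to a feature matrix stored as a list of rows. Mapping a constant column to 0 is an assumption of this sketch, since Equation 1 is undefined when $x_{max} = x_{min}$.

```python
def min_max_normalize(rows):
    """Scale each column of `rows` to [0, 1] with Equation 1.

    Returns (scaled_rows, column_minima, column_maxima).
    """
    n_cols = len(rows[0])
    mins = [min(r[j] for r in rows) for j in range(n_cols)]
    maxs = [max(r[j] for r in rows) for j in range(n_cols)]
    scaled = []
    for r in rows:
        scaled.append([
            # (x - x_min) / (x_max - x_min); constant columns become 0.0
            (r[j] - mins[j]) / (maxs[j] - mins[j]) if maxs[j] > mins[j] else 0.0
            for j in range(n_cols)
        ])
    return scaled, mins, maxs

scaled, mins, maxs = min_max_normalize([[1.0, 10.0], [3.0, 30.0], [2.0, 20.0]])
print(scaled)  # [[0.0, 0.0], [1.0, 1.0], [0.5, 0.5]]
```

The returned minima and maxima can be reused to scale new cases with the same bounds; when the data are split into training and test sets, these bounds would typically be taken from the training data only.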

As part of this study, min-max normalization was applied to all independent variables used for liquefaction prediction. This balanced the influence of variables with different scales, improved the learning efficiency of the model and reduced errors caused by scale differences between variables [7, 17, 22].

Traditional liquefaction potential prediction method [3]

Liquefaction potential has been assessed for several decades from field tests such as the SPT, the CPT and shear wave velocity measurements [23]. The cyclic stress ratio (CSR), calculated using Equation 2, was proposed by Seed and Idriss [24] and is a widely accepted and frequently used index in liquefaction assessments. The cyclic resistance ratio (CRR) can be calculated from CPT data [3].

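$$CSR = 0.65\,\frac{a_{max}}{g}\,\frac{\sigma_v}{\sigma'_v}\,r_d \tag{2}$$

Here $a_{max}$ is the maximum horizontal ground surface acceleration, $g$ is the acceleration of gravity, $\sigma_v$ is the total vertical stress, $\sigma'_v$ is the effective vertical stress and $r_d$ is the stress reduction coefficient.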
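Equation 2 can be sketched directly in Python. The function name is hypothetical; `amax_over_g` is assumed to be $a_{max}$ already divided by $g$, and both stresses are assumed to be in the same unit (for example kPa), so their ratio is dimensionless.

```python
def cyclic_stress_ratio(amax_over_g, sigma_v, sigma_v_eff, rd):
    """Equation 2: CSR = 0.65 * (a_max/g) * (sigma_v/sigma'_v) * r_d."""
    if sigma_v_eff <= 0:
        raise ValueError("effective vertical stress must be positive")
    return 0.65 * amax_over_g * (sigma_v / sigma_v_eff) * rd

# a_max = 0.2 g, sigma_v = 100 kPa, sigma'_v = 50 kPa, r_d = 1.0
print(cyclic_stress_ratio(0.2, 100.0, 50.0, 1.0))  # about 0.26
```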

The stress reduction coefficient $r_d$ in Equation 2, which accounts for the flexibility of the soil profile, is calculated using Equation 3, in which $z$ denotes the depth (m).
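Equation 3 is not reproduced in this text. As an assumption only, the sketch below uses the widely used depth-based expressions of Liao and Whitman (1986), $r_d = 1.0 - 0.00765z$ for $z \le 9.15$ m and $r_d = 1.174 - 0.0267z$ for $9.15 < z \le 23$ m; the study's own Equation 3 may differ.

```python
def stress_reduction_coefficient(z_m):
    """Assumed form of r_d(z), with depth z in metres (Liao and Whitman, 1986)."""
    if z_m < 0:
        raise ValueError("depth must be non-negative")
    if z_m <= 9.15:
        return 1.0 - 0.00765 * z_m
    if z_m <= 23.0:
        return 1.174 - 0.0267 * z_m
    # Deeper layers are outside the range covered by this sketch.
    raise ValueError("depth beyond 23 m is not handled by this sketch")

print(stress_reduction_coefficient(5.0))  # about 0.962
```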

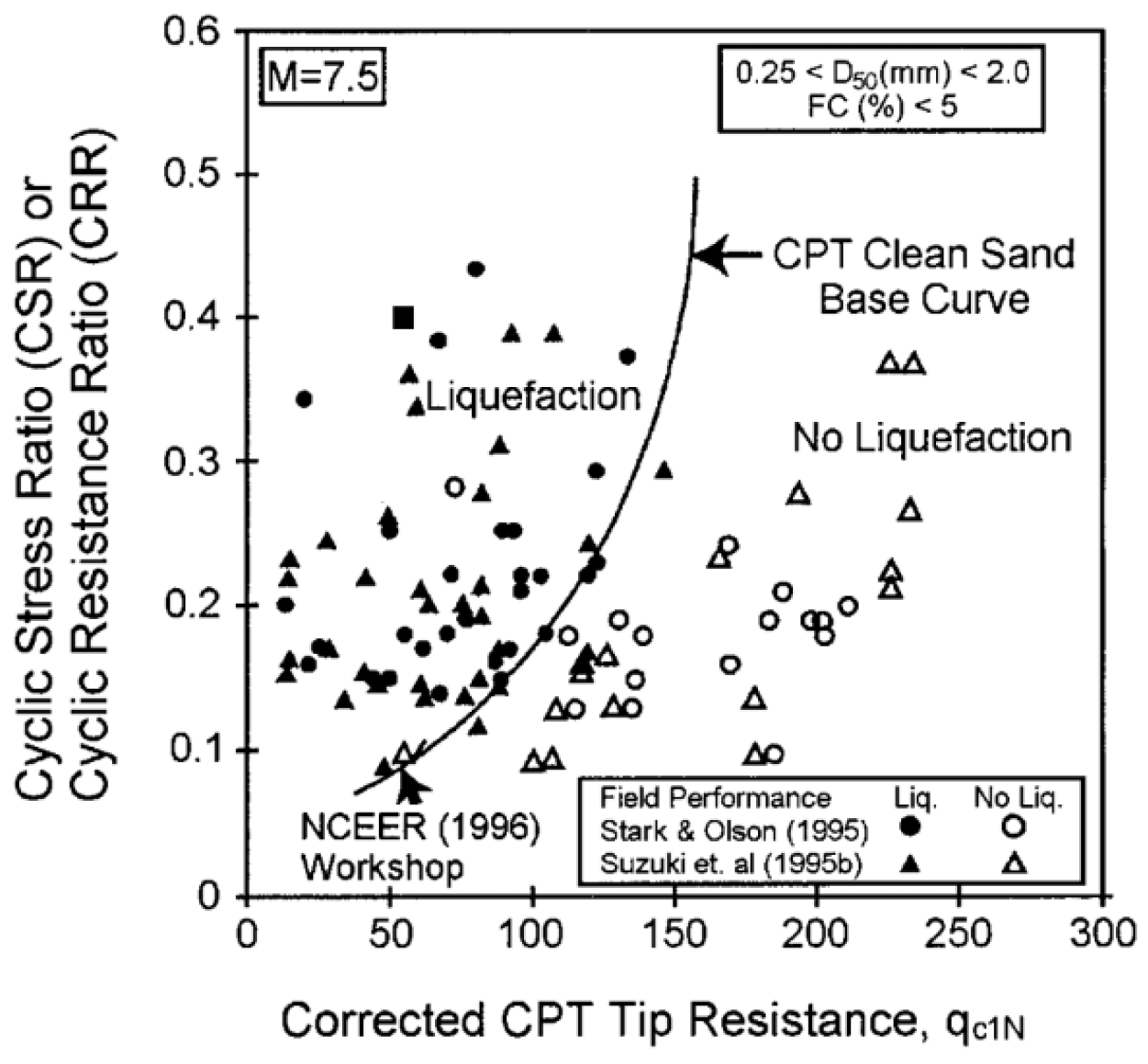

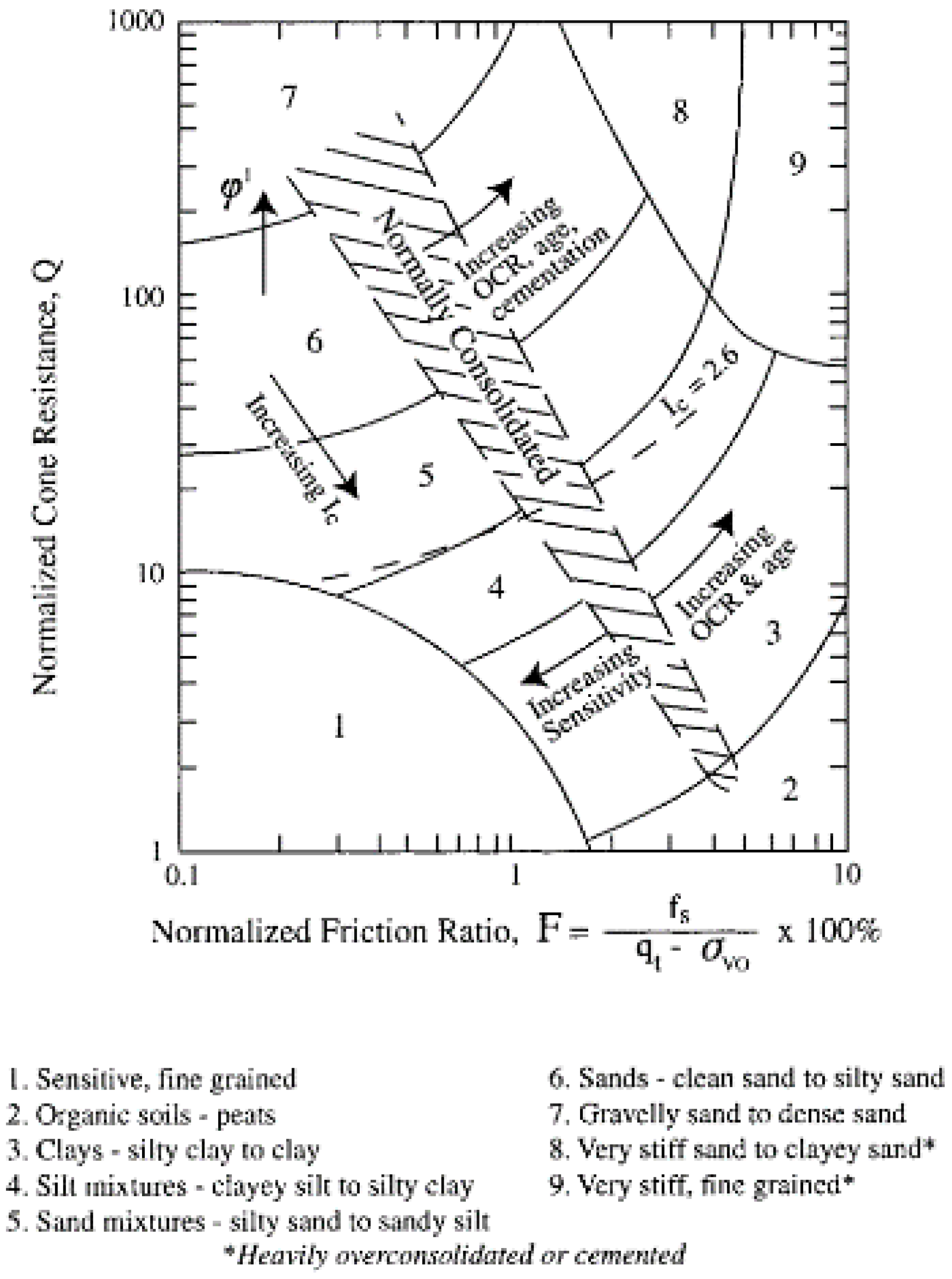

Figure 4 shows the curve separating cases in which liquefaction was and was not observed; it was compiled from studies in the literature for estimating CRR from CPT tip resistance and is approximated by Equation 10. Because this chart is intended for clean sands [3], the values used in the calculations must first be converted to equivalent clean-sand values.

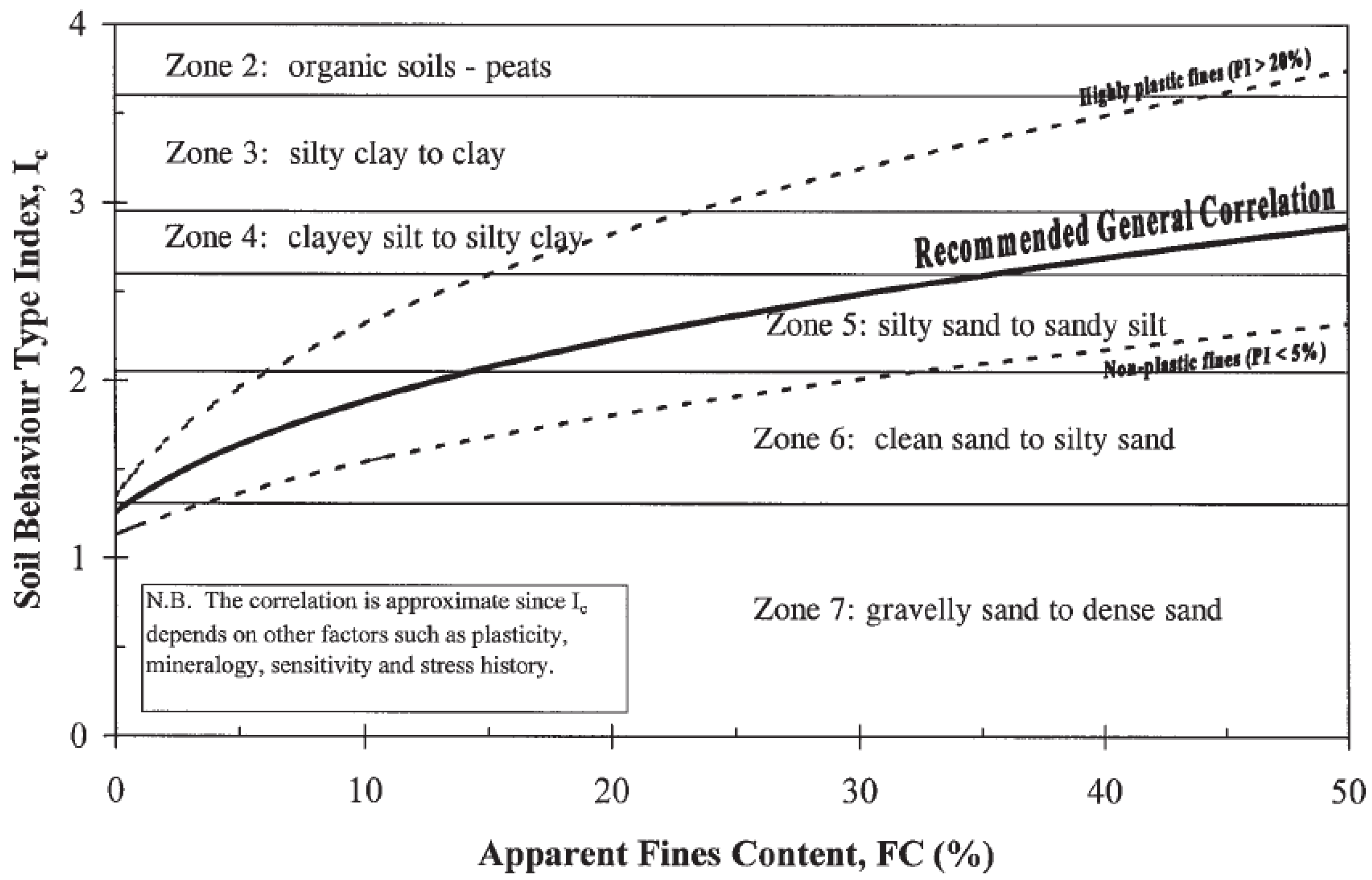

The soil behavior chart given in Figure 5 was developed using a stress exponent of $n = 1.0$, which is appropriate for clayey soil types. However, $n = 0.5$ would be appropriate for clean sands, and a value between 0.5 and 1.0 for silts and sandy silts [3]. The soil behavior type boundaries shown in Figure 5 were determined according to the $I_c$ ranges given in Table 2. The soil behavior type index $I_c$ does not apply to zones 1, 8 or 9. Throughout the normally consolidated zone, $I_c$ increases with increasing fines content and plasticity, as also shown in Figure 6.
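Table 2 itself is not reproduced here. Assuming it follows the commonly cited $I_c$ boundaries of the Robertson (1990) chart as tabulated by Robertson and Wride (1998), a lookup from $I_c$ to soil behavior type zone could be sketched as follows. The boundaries and the handling of values lying exactly on a boundary are assumptions to be checked against Table 2.

```python
# Assumed (upper I_c bound, zone, description) rows; zones 1, 8 and 9
# have no I_c range because the index does not apply to them.
SBT_ZONES = (
    (1.31, 7, "gravelly sand to dense sand"),
    (2.05, 6, "sands: clean sand to silty sand"),
    (2.60, 5, "sand mixtures: silty sand to sandy silt"),
    (2.95, 4, "silt mixtures: clayey silt to silty clay"),
    (3.60, 3, "clays: silty clay to clay"),
)

def sbt_zone(ic):
    """Return (zone, description) for a soil behavior type index I_c."""
    for upper, zone, description in SBT_ZONES:
        if ic < upper:
            return zone, description
    return 2, "organic soils: peats"

print(sbt_zone(2.3))  # (5, 'sand mixtures: silty sand to sandy silt')
```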

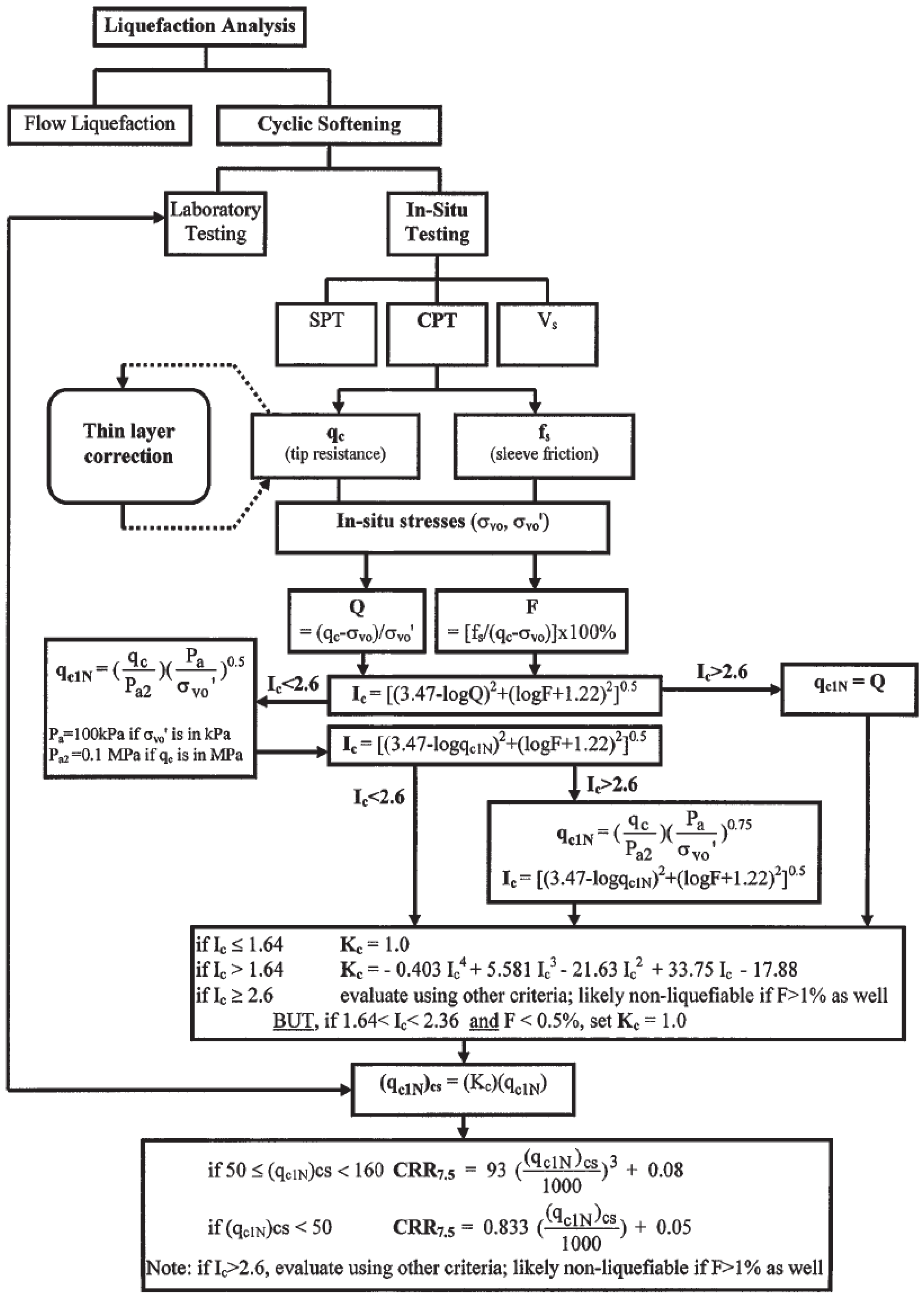

The cyclic resistance ratio (CRR) is calculated by following the steps explained below, which are summarized in the flow chart given in Figure 8 [3].

First, soils that behave like clay are distinguished from sands and silts. This distinction is made by calculating $Q$ from Equation 4 with $n = 1.0$.

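$$Q = \left[\frac{q_c - \sigma_v}{P_a}\right]\left(\frac{P_a}{\sigma'_v}\right)^{n} \tag{4}$$

Here, $Q$ is the dimensionless normalized CPT tip resistance, $q_c$ is the CPT tip resistance, $\sigma_v$ is the total vertical stress, $P_a$ is the atmospheric pressure (100 kPa ≈ 1 atm), $\sigma'_v$ is the effective vertical stress, and $n$ is an exponent ranging from 0.5 to 1.0 that depends on the soil type.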
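A minimal Python sketch of Equation 4, assuming $q_c$ and both stresses are given in kPa so that $P_a = 100$ kPa; the function and constant names are hypothetical.

```python
PA_KPA = 100.0  # atmospheric pressure P_a in kPa (about 1 atm)

def normalized_tip_resistance(qc, sigma_v, sigma_v_eff, n, pa=PA_KPA):
    """Equation 4: Q = ((q_c - sigma_v) / P_a) * (P_a / sigma'_v) ** n.

    q_c and the stresses are in kPa; n ranges from 0.5 (sands) to 1.0 (clays).
    """
    if qc <= sigma_v:
        raise ValueError("q_c must exceed the total vertical stress")
    return ((qc - sigma_v) / pa) * (pa / sigma_v_eff) ** n

# Clay check with n = 1.0: q_c = 5000 kPa, sigma_v = 100 kPa, sigma'_v = 50 kPa
print(normalized_tip_resistance(5000.0, 100.0, 50.0, 1.0))  # 98.0
```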

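The soil behavior type index $I_c$, given by Equation 6, is calculated from the $Q$ value obtained with Equation 4 and the $F$ value obtained with Equation 5:

$$F = \frac{f_s}{q_c - \sigma_v} \times 100\% \tag{5}$$

$$I_c = \left[(3.47 - \log Q)^2 + (1.22 + \log F)^2\right]^{0.5} \tag{6}$$

Here, $F$ is the dimensionless friction ratio (in percent) and $f_s$ is the CPT friction resistance.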
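Equations 5 and 6 can be sketched in Python as follows, assuming kPa inputs and reading "log" in Equation 6 as the base-10 logarithm; the function names are hypothetical.

```python
import math

def friction_ratio(fs, qc, sigma_v):
    """Equation 5: F = f_s / (q_c - sigma_v) * 100, in percent (kPa inputs)."""
    return fs / (qc - sigma_v) * 100.0

def soil_behavior_type_index(q, f):
    """Equation 6: I_c = sqrt((3.47 - log10 Q)^2 + (1.22 + log10 F)^2)."""
    return math.sqrt((3.47 - math.log10(q)) ** 2 + (1.22 + math.log10(f)) ** 2)

f = friction_ratio(49.0, 5000.0, 100.0)    # about 1.0 %
print(soil_behavior_type_index(100.0, f))  # about 1.91, below the 2.6 threshold
```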

If the calculated $I_c$ value is greater than 2.6, the soil is classified as clayey, and the analysis is completed on the assumption that the soil is too clay-rich to liquefy. However, soil samples should be taken and tested in the laboratory to verify the soil type and liquefaction resistance. If the calculated $I_c$ value is less than 2.6, the soil is classified as granular; $Q$ and $I_c$ are therefore recalculated with $n = 0.5$ in Equation 4.

If the recalculated $I_c$ value is less than 2.6, the soil is classified as non-plastic and granular, and this $I_c$ value is used to estimate the liquefaction resistance in the following steps. If the recalculated $I_c$ value is greater than 2.6, the soil is likely to be very silty and possibly plastic. In this case, the normalized CPT tip resistance for silty sands, $q_{c1N}$, given by Equation 7, should be recalculated with $n = 0.75$:

$$q_{c1N} = \left(\frac{q_c}{P_a}\right)\left(\frac{P_a}{\sigma'_v}\right)^{n} \tag{7}$$

The $I_c$ value is then recalculated from Equation 6 using this $q_{c1N}$ value in place of $Q$, and the resulting $I_c$ is used to calculate the liquefaction resistance.
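The decision sequence above can be sketched in Python as follows. It restates Equations 4 to 7 with $P_a = 100$ kPa and all stresses in kPa; the function names, the return format and treating $I_c$ exactly equal to 2.6 as granular are assumptions of this sketch.

```python
import math

PA = 100.0  # atmospheric pressure in kPa

def _q(qc, sv, sve, n):
    # Equation 4: normalized tip resistance with stress exponent n
    return ((qc - sv) / PA) * (PA / sve) ** n

def _ic(q, f):
    # Equation 6: soil behavior type index from Q (or q_c1N) and F
    return math.sqrt((3.47 - math.log10(q)) ** 2 + (1.22 + math.log10(f)) ** 2)

def select_ic(qc, fs, sv, sve):
    """Return (I_c, n, label) following the iterative choice of n."""
    f = fs / (qc - sv) * 100.0              # Equation 5
    ic = _ic(_q(qc, sv, sve, 1.0), f)       # step 1: n = 1.0 (clay check)
    if ic > 2.6:
        return ic, 1.0, "clay-like: assumed too clay-rich to liquefy"
    ic = _ic(_q(qc, sv, sve, 0.5), f)       # step 2: n = 0.5 (granular)
    if ic <= 2.6:
        return ic, 0.5, "non-plastic granular"
    qc1n = (qc / PA) * (PA / sve) ** 0.75   # step 3: Equation 7 with n = 0.75
    return _ic(qc1n, f), 0.75, "very silty, possibly plastic"

print(select_ic(10000.0, 50.0, 100.0, 60.0)[1:])  # (0.5, 'non-plastic granular')
```

The three illustrative inputs in the checks below were chosen so that each branch is reached once: a stiff sand stays at $n = 0.5$, a soft clay-like profile stops at $n = 1.0$, and a silty profile ends at $n = 0.75$.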

For silty sands, the normalized CPT tip resistance

is corrected to the equivalent clean sand value

using Equation 8.

Here

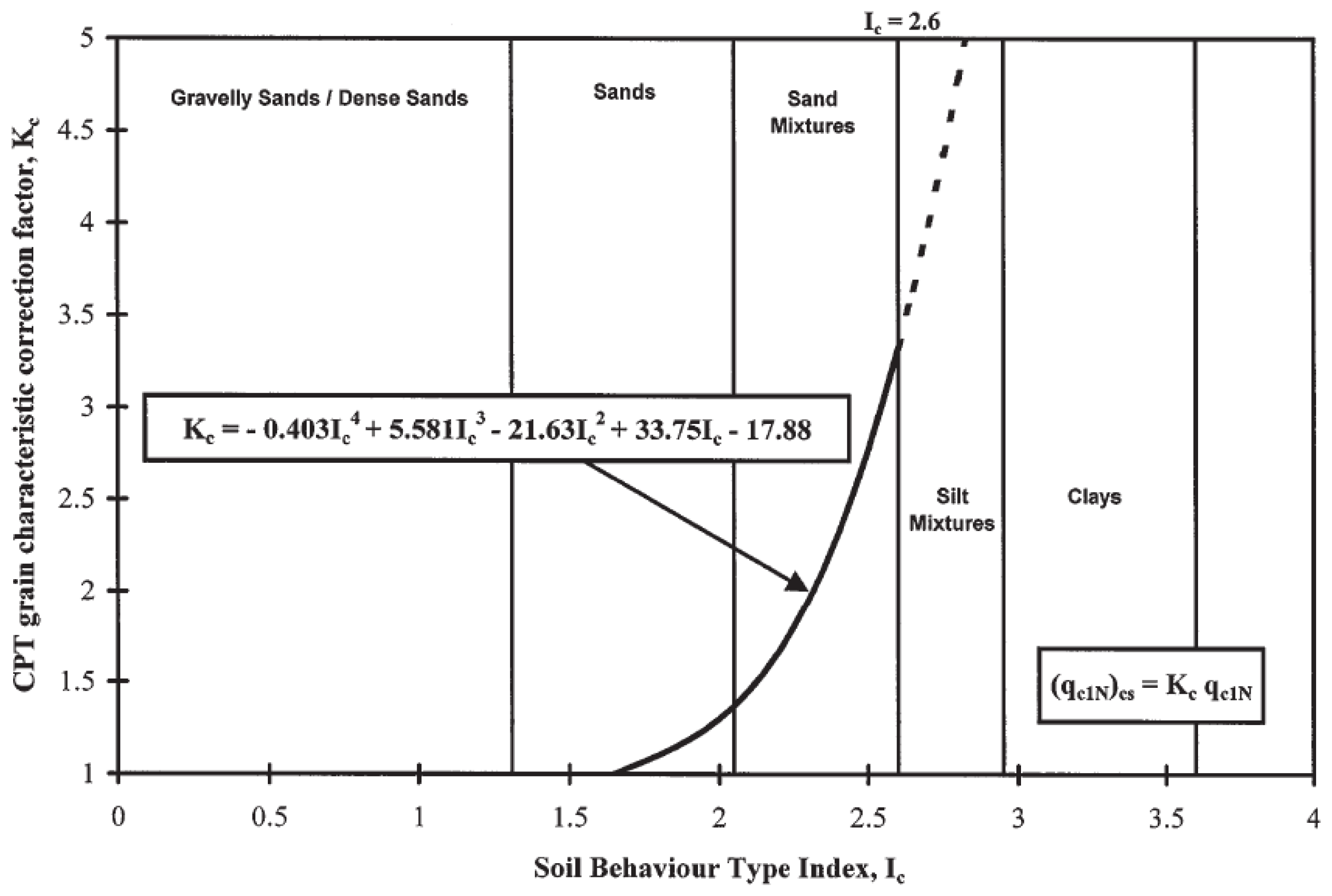

is the correction factor for the grain characteristics calculated by Equation 9.

The $K_c$ curve given by Equation 9 is shown in Figure 7. For $I_c > 2.6$, the curve is drawn as a dashed line, indicating that soils in this $I_c$ range are most likely too clay-rich or plastic to liquefy.
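Equations 8 and 9 can be sketched in Python as below, assuming the Robertson and Wride (1998) form of $K_c$; the function names are hypothetical.

```python
def kc_factor(ic):
    """Equation 9: grain characteristics correction factor K_c."""
    if ic <= 1.64:
        return 1.0
    # For I_c > 2.6 the curve is dashed: such soils are most likely too
    # clay-rich or plastic to liquefy, so the value is only indicative there.
    return (-0.403 * ic ** 4 + 5.581 * ic ** 3 - 21.63 * ic ** 2
            + 33.75 * ic - 17.88)

def clean_sand_tip_resistance(qc1n, ic):
    """Equation 8: (q_c1N)_cs = K_c * q_c1N."""
    return kc_factor(ic) * qc1n

print(kc_factor(2.0))  # about 1.3
```

The polynomial branch evaluates to roughly 1.0 just above $I_c = 1.64$, so the two branches join almost continuously.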

The obtained $(q_{c1N})_{cs}$ value is used in Equation 10 to calculate the cyclic resistance ratio:

$$CRR = \begin{cases} 0.833\left[\dfrac{(q_{c1N})_{cs}}{1000}\right] + 0.05, & (q_{c1N})_{cs} < 50 \\ 93\left[\dfrac{(q_{c1N})_{cs}}{1000}\right]^3 + 0.08, & 50 \le (q_{c1N})_{cs} < 160 \end{cases} \tag{10}$$

Here $(q_{c1N})_{cs}$ is the clean sand CPT tip resistance normalized to 100 kPa (≈ 1 atm). The cyclic resistance ratio (CRR) required for the soil to liquefy is then compared with the cyclic stress ratio (CSR) induced by the earthquake, and the liquefaction potential is estimated from their ratio, the factor of safety $FS = CRR/CSR$. A layer is considered at risk of liquefaction if $FS$ is less than 1.0.
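The last step, from $(q_{c1N})_{cs}$ to a liquefied / non-liquefied prediction, can be sketched in Python as follows. The function names are hypothetical, no magnitude scaling factor is applied (mirroring the plain ratio described above), and refusing $(q_{c1N})_{cs} \ge 160$ instead of extrapolating Equation 10 is a design choice of this sketch.

```python
def cyclic_resistance_ratio(qc1n_cs):
    """Equation 10: CRR from the clean-sand normalized tip resistance."""
    if qc1n_cs < 0:
        raise ValueError("(q_c1N)_cs must be non-negative")
    if qc1n_cs < 50:
        return 0.833 * (qc1n_cs / 1000.0) + 0.05
    if qc1n_cs < 160:
        return 93.0 * (qc1n_cs / 1000.0) ** 3 + 0.08
    # Equation 10 is defined only below 160; this sketch does not extrapolate.
    raise ValueError("(q_c1N)_cs >= 160 is outside the range of Equation 10")

def factor_of_safety(crr, csr):
    """FS = CRR / CSR."""
    return crr / csr

def liquefaction_predicted(qc1n_cs, csr):
    """True when FS < 1.0, i.e. the layer is judged at risk of liquefaction."""
    return factor_of_safety(cyclic_resistance_ratio(qc1n_cs), csr) < 1.0

# (q_c1N)_cs = 100 gives CRR of about 0.173; with CSR = 0.26, FS is about 0.67
print(liquefaction_predicted(100.0, 0.26))  # True
```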