Submitted: 10 April 2026

Posted: 14 April 2026


Abstract

Keywords:

1. Introduction

2. Materials and Methods

2.1. Tools and Comparative Feature Analysis

2.1.1. Annotix

2.1.2. Comparative Feature Analysis

2.1.3. Reference Comparators

2.2. Study Design and Participants

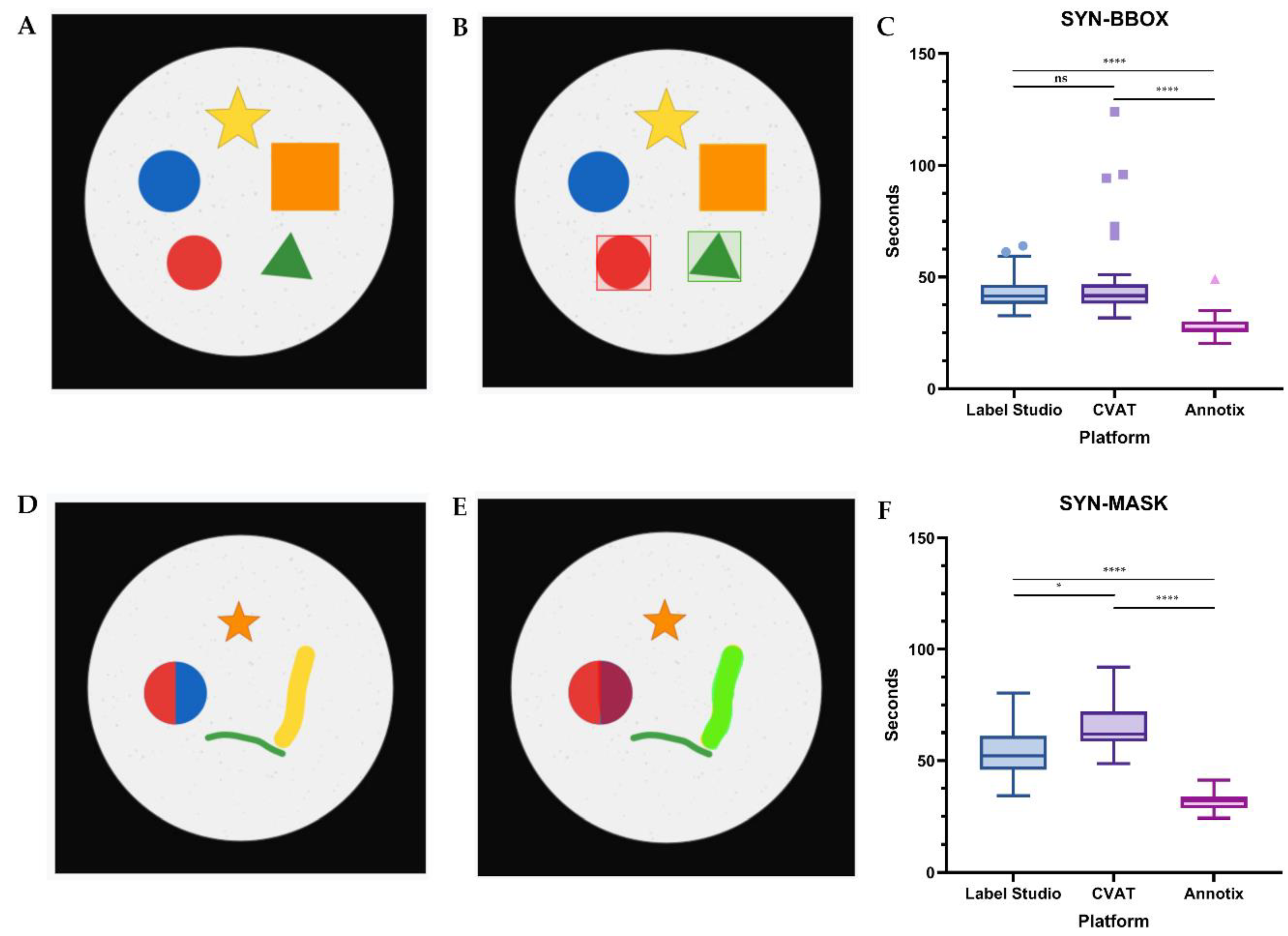

2.3. Annotation Efficiency Evaluation of Synthetic Images

2.3.1. Stimulus Materials

2.3.2. Procedure

2.3.3. Statistical Analysis

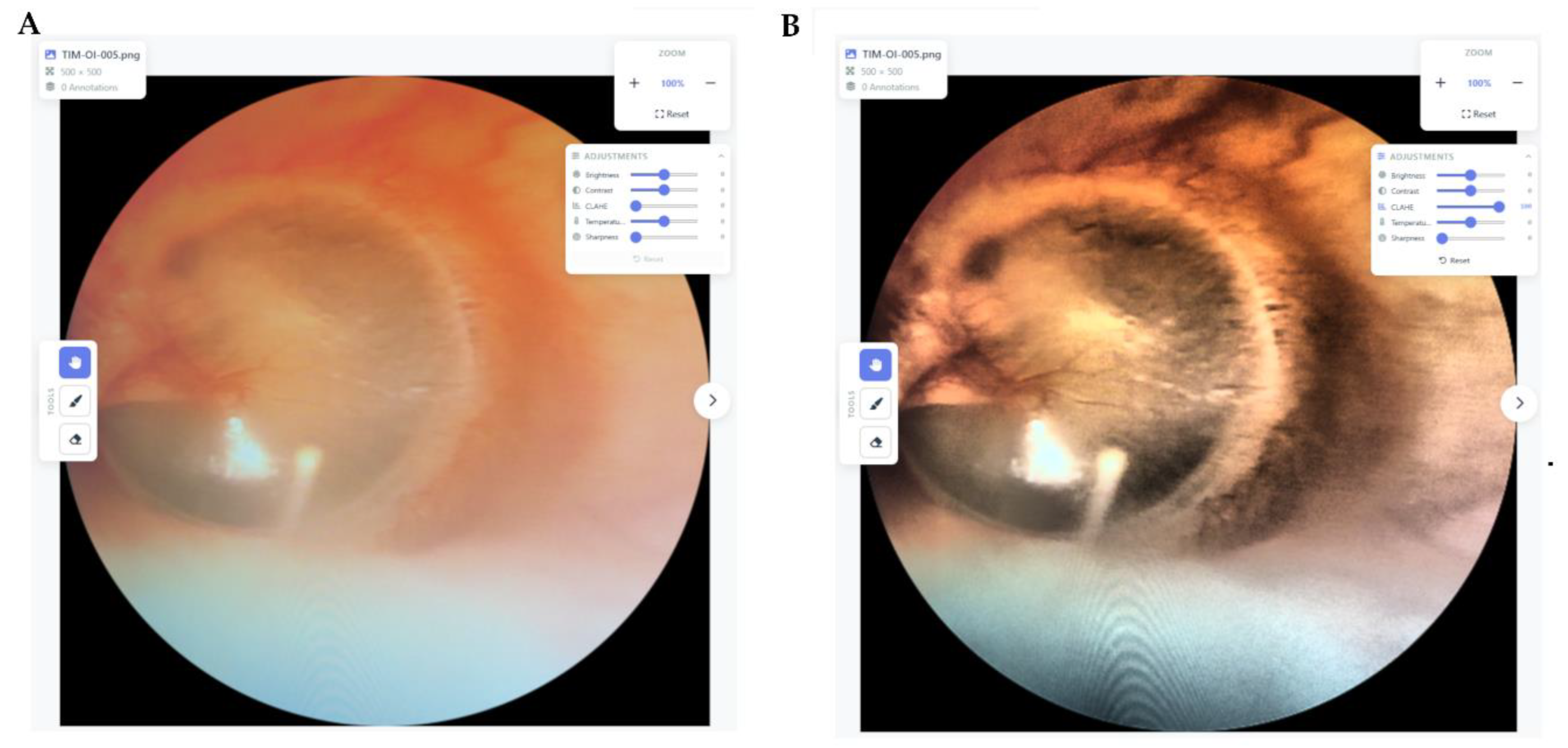

2.4. Heuristic Usability Evaluation of Medical Images

2.4.1. Method

2.4.2. Task Protocol

2.4.3. Analysis

2.5. Use of Generative AI Tools

3. Results

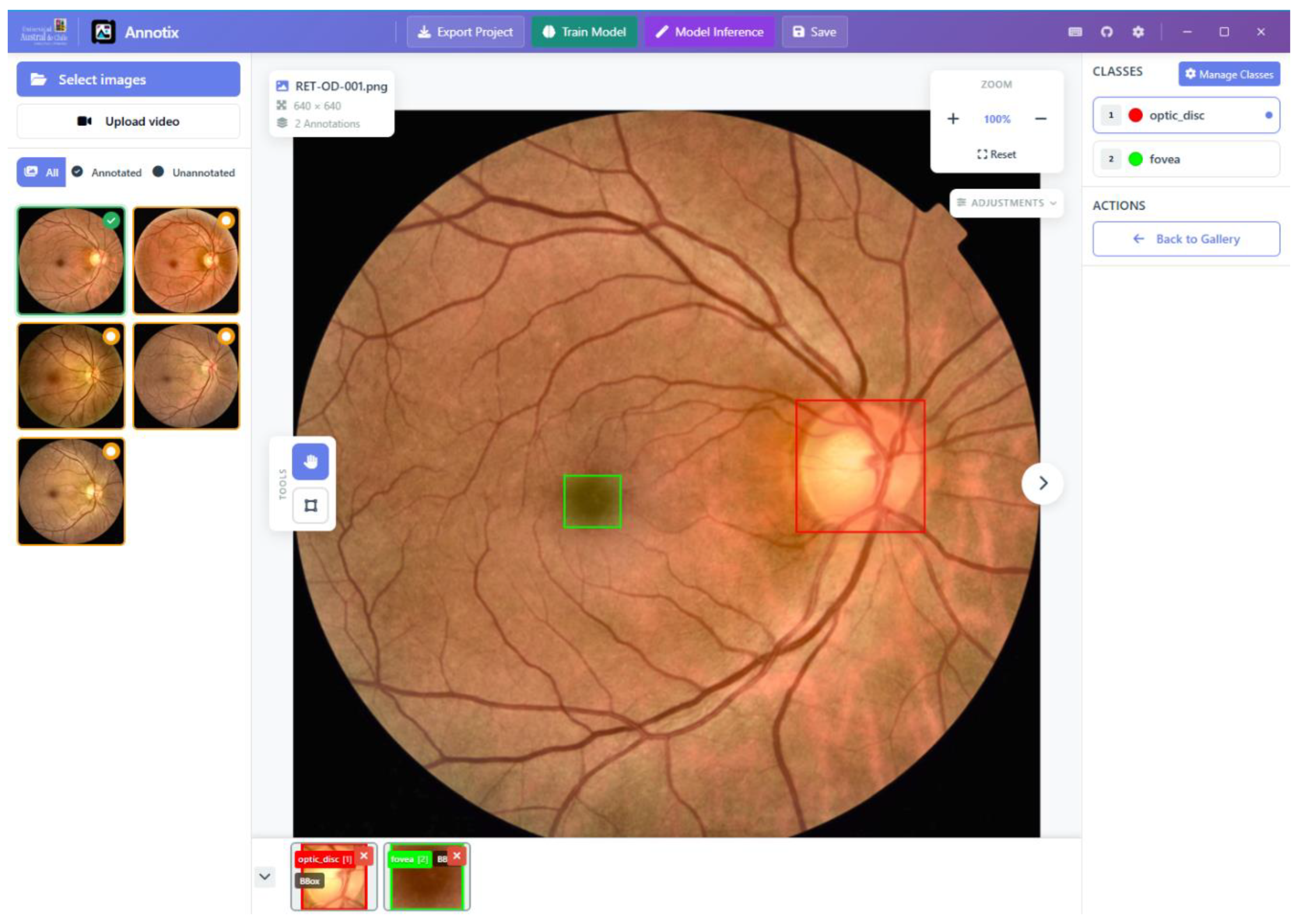

3.1. The Annotix Platform

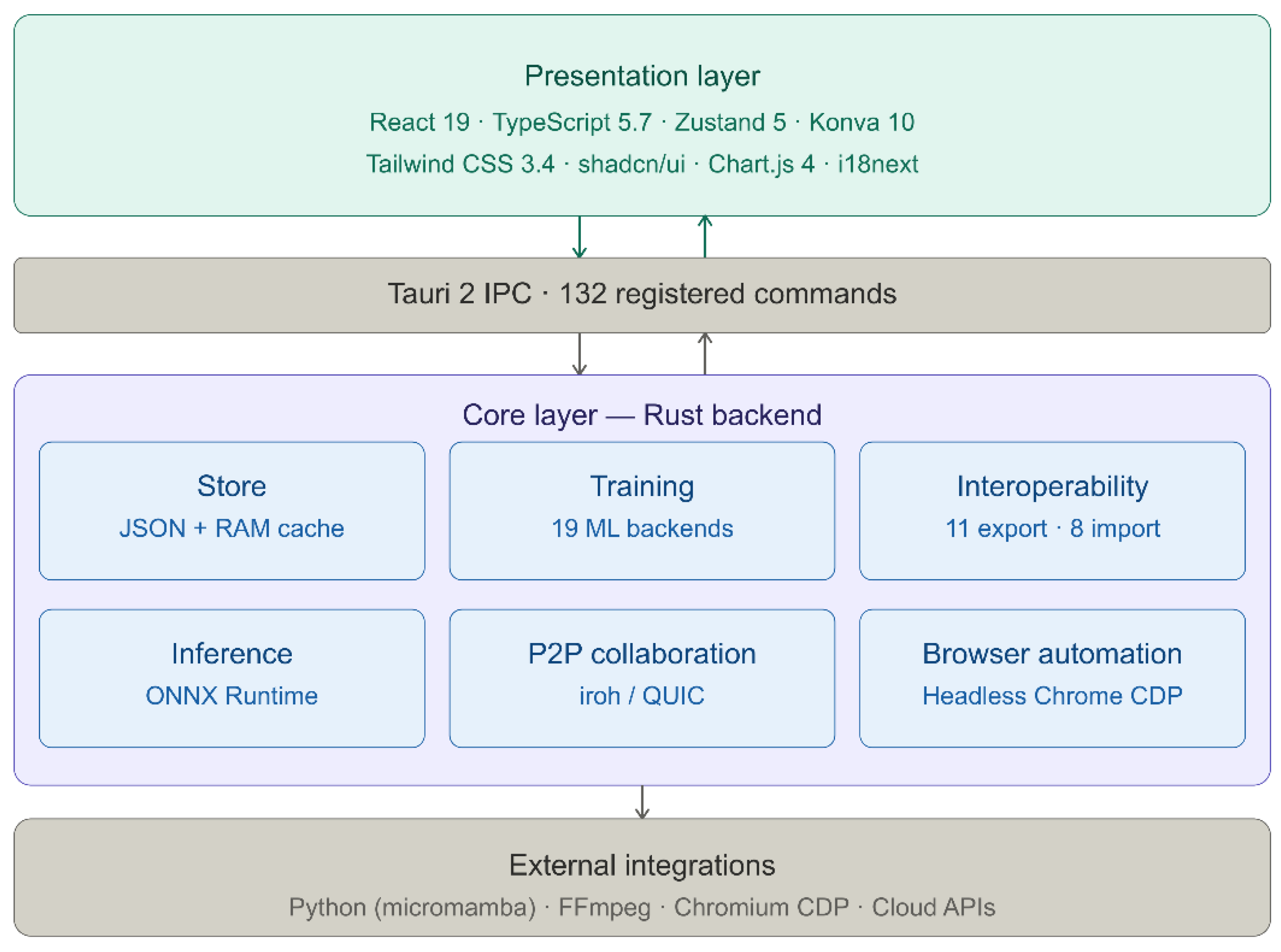

3.1.1. Architectural Overview

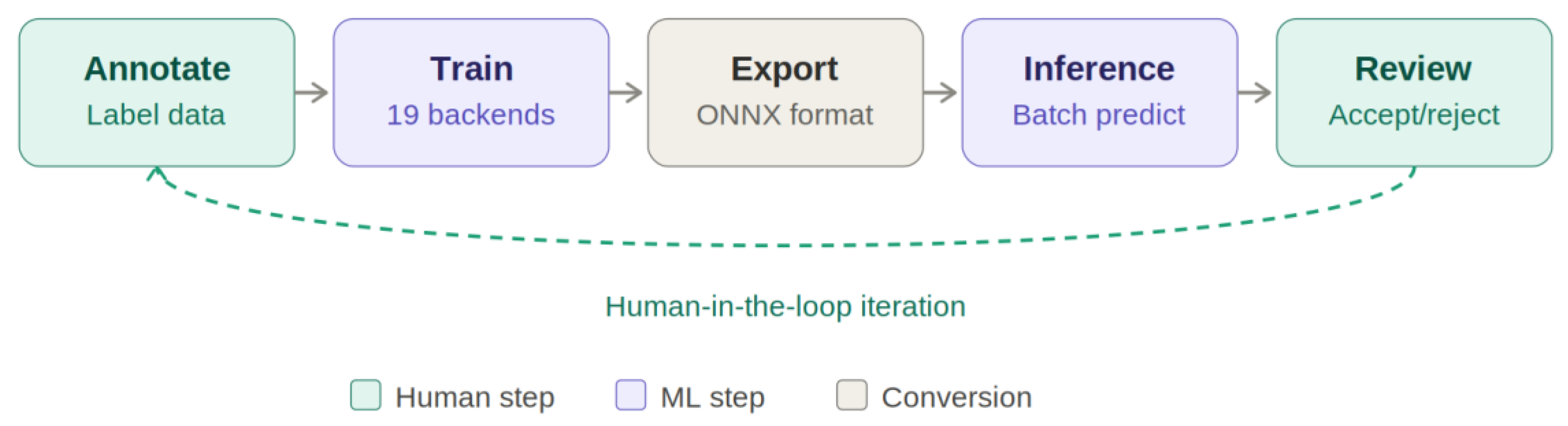

Listing 1. Rust accessor patterns for project state management in Annotix.

3.1.2. Annotation Capabilities

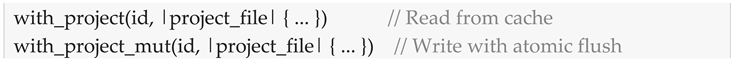

3.1.3. Training and Inference

| Task | Backend | Architectures |
|---|---|---|
| Object Detection | Ultralytics | YOLOv8, v9, v10, v11, v12 |
| Object Detection | Ultralytics | RT-DETR-l, RT-DETR-x |
| Object Detection | Roboflow | RF-DETR-base, RF-DETR-large |
| Object Detection | MMDetection | 30+ architectures |
| Semantic Segmentation | SMP | U-Net, DeepLabV3+, FPN, PSPNet, MAnet, LinkNet, PAN |
| Semantic Segmentation | HuggingFace | SegFormer, Mask2Former |
| Semantic Segmentation | MMSegmentation | Full OpenMMLab catalog |
| Instance Segmentation | Detectron2 | Mask R-CNN, Cascade Mask R-CNN |
| Pose Estimation | MMPose | HRNet, ViTPose, RTMPose |
| Oriented Detection | MMRotate | Oriented R-CNN, RoI Transformer |
| Image Classification | timm | 700+ architectures |
| Image Classification | HuggingFace | ViT, BEiT, DeiT, Swin Transformer |
| Time-Series (DL) | tsai | InceptionTime, LSTM-FCN, TSTPlus |
| Time-Series (DL) | PyTorch Forecasting | TFT, N-BEATS, DeepAR |
| Anomaly Detection | PyOD | Isolation Forest, LOF, AutoEncoder |
| Clustering | tslearn | k-Shape, DTW Barycenter |
| Imputation | PyPOTS | SAITS, Transformer-based |
| Pattern Recognition | STUMPY | Matrix Profile |
| Tabular ML | scikit-learn | RandomForest, SVM, kNN, GBM |

3.1.4. Peer-to-Peer Collaboration

3.1.5. Interoperability

3.1.6. Comparative Feature Analysis

| Feature | LabelImg | LabelMe | CVAT | Label Studio | Roboflow | Annotix |
|---|---|---|---|---|---|---|
| Offline operation | ✓ | ✓ | ✗ | ✗ | ✗ | ✓ |
| Annotation types | 1 | 2 | 5 | 4 | 3 | 7 |
| Video annotation | ✗ | ✗ | ✓ | ✗ | ✗ | ✓ |
| Time-series support | ✗ | ✗ | ✗ | ✓ | ✗ | ✓ |
| Tabular data | ✗ | ✗ | ✗ | ✗ | ✗ | ✓ |
| Integrated training | ✗ | ✗ | ✗ | ✗ | ✓¹ | ✓ |
| Training backends | 0 | 0 | 0 | 0 | 1 | 19 |
| Model-assisted labeling | ✗ | ✗ | ✓ | ✓ | ✓ | ✓ |
| P2P collaboration | ✗ | ✗ | ✗ | ✗ | ✗ | ✓ |
| Export formats | 2 | 1 | 6 | 5 | 8 | 11 |
| Import formats | 0 | 0 | 4 | 3 | 5 | 8 |
| Localization | 1 | 1 | 2 | 1 | 1 | 10 |
| Data sovereignty | ✓ | ✓ | Partial | Partial | ✗ | ✓ |

3.2. Annotation Efficiency Evaluation

3.2.1. Bounding Box Task

3.2.2. Mask Task

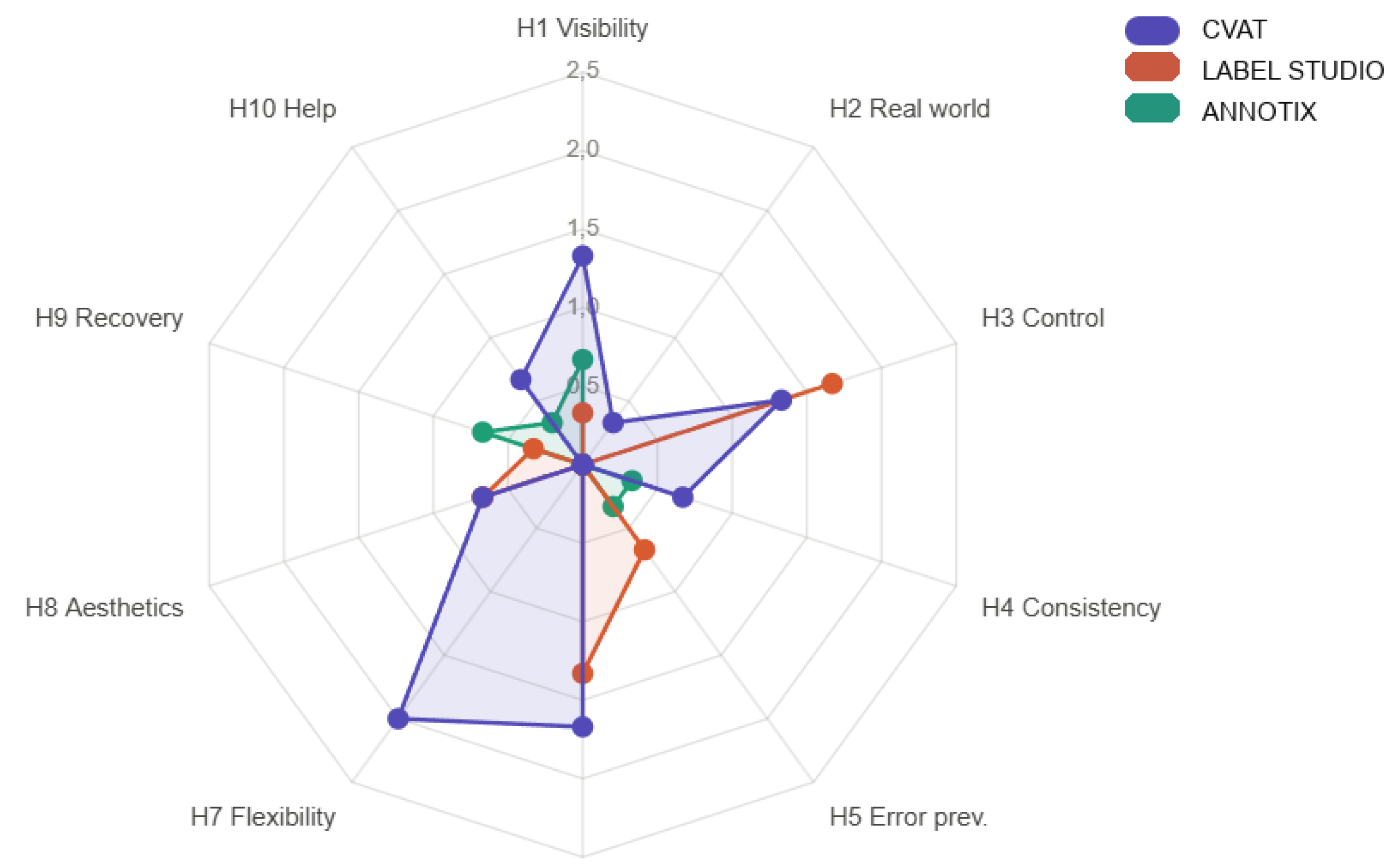

3.3. Heuristic Usability Evaluation

4. Discussion

4.1. Annotix as a Tool Paper Contribution

4.2. Annotation Efficiency

4.3. Heuristic Usability

4.4. Applicability to Medical Imaging

4.5. Limitations and Future Work

5. Conclusions

Supplementary Materials

Author Contributions

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| AE | Annotator-Evaluator (AE-1, AE-2, AE-3) |
| API | Application Programming Interface |
| CDP | Chrome DevTools Protocol |
| CLAHE | Contrast Limited Adaptive Histogram Equalization |
| COCO | Common Objects in Context |
| CRUD | Create, Read, Update, Delete (also: Create, Move, Resize, Delete in task protocol) |
| CUDA | Compute Unified Device Architecture |
| CVAT | Computer Vision Annotation Tool |
| FPS | Frames Per Second |
| GDPR | General Data Protection Regulation |
| GPU | Graphics Processing Unit |
| HIPAA | Health Insurance Portability and Accountability Act |
| ICC | Intraclass Correlation Coefficient |
| IPC | Inter-Process Communication |
| IQR | Interquartile Range |
| JSON | JavaScript Object Notation |
| ML | Machine Learning |
| NAT | Network Address Translation |
| ONNX | Open Neural Network Exchange |
| P2P | Peer-to-Peer |
| QUIC | Quick UDP Internet Connections (IETF RFC 9000) |
| RFC | Request for Comments |
| SAM | Segment Anything Model |
| SYN-BBOX | Synthetic Bounding Box stimulus dataset |
| SYN-MASK | Synthetic Mask stimulus dataset |
| UUID | Universally Unique Identifier |
| VOC | Visual Object Classes (Pascal VOC) |

References

- Ren, S.; He, K.; Girshick, R.; Sun, J. Faster R-CNN: Towards Real-Time Object Detection with Region Proposal Networks. IEEE Trans. Pattern Anal. Mach. Intell. 2017, 39, 1137–1149.

- Shelhamer, E.; Long, J.; Darrell, T. Fully Convolutional Networks for Semantic Segmentation. IEEE Trans. Pattern Anal. Mach. Intell. 2017, 39, 640–651.

- He, K.; Gkioxari, G.; Dollár, P.; Girshick, R. Mask R-CNN. In Proceedings of the IEEE International Conference on Computer Vision (ICCV), Venice, Italy, 22–29 October 2017; pp. 2980–2988.

- Lin, T.Y.; Maire, M.; Belongie, S.; Hays, J.; Perona, P.; Ramanan, D.; Dollár, P.; Zitnick, C.L. Microsoft COCO: Common Objects in Context. In Computer Vision – ECCV 2014; Fleet, D., Pajdla, T., Schiele, B., Tuytelaars, T., Eds.; Springer: Cham, Switzerland, 2014; pp. 740–755.

- Xie, X.; Cheng, G.; Wang, J.; Yao, X.; Han, J. Oriented R-CNN for Object Detection. In Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV), Montreal, QC, Canada, 11–17 October 2021; pp. 3500–3509.

- Ismail Fawaz, H.; Forestier, G.; Weber, J.; Idoumghar, L.; Muller, P.A. Deep Learning for Time Series Classification: A Review. Data Min. Knowl. Discov. 2019, 33, 917–963.

- Chalapathy, R.; Chawla, S. Deep Learning for Anomaly Detection: A Survey. arXiv 2019, arXiv:1901.03407.

- Lim, B.; Zohren, S. Time-Series Forecasting with Deep Learning: A Survey. Philos. Trans. R. Soc. A 2021, 379, 20200209.

- Roboflow. Available online: https://roboflow.com (accessed on 13 March 2026).

- V7 Labs. Available online: https://www.v7labs.com (accessed on 13 March 2026).

- Supervisely. Available online: https://supervisely.com (accessed on 13 March 2026).

- Sekachev, B.; et al. CVAT. 2020. Available online: https://github.com/opencv/cvat (accessed on 13 March 2026).

- Tkachenko, M.; et al. Label Studio: Data Labeling Software. 2020. Available online: https://github.com/heartexlabs/label-studio (accessed on 13 March 2026).

- Tzutalin. LabelImg. 2015. Available online: https://github.com/tzutalin/labelImg (accessed on 13 March 2026).

- Russell, B.C.; Torralba, A.; Murphy, K.P.; Freeman, W.T. LabelMe: A Database and Web-Based Tool for Image Annotation. Int. J. Comput. Vis. 2008, 77, 157–173.

- Makesense.ai. Available online: https://www.makesense.ai (accessed on 13 March 2026).

- Aljabri, M.; et al. Towards a Better Understanding of Annotation Tools for Medical Imaging: A Survey. Multimed. Tools Appl. 2022, 81, 25877–25911.

- Yang, F.; Zamzmi, G.; Angara, S.; Rajaraman, S.; Aquilina, A.; Xue, Z.; Jaeger, S.; Papagiannakis, E.; Antani, S.K. Assessing Inter-Annotator Agreement for Medical Image Segmentation. IEEE Access 2023, 11, 21300–21312.

- Nielsen, J.; Molich, R. Heuristic Evaluation of User Interfaces. In Proceedings of the SIGCHI Conference on Human Factors in Computing Systems (CHI '90), Seattle, WA, USA, 1–5 April 1990; pp. 249–256.

- Landis, J.R.; Koch, G.G. The Measurement of Observer Agreement for Categorical Data. Biometrics 1977, 33, 159–174.

- The Open Group. The Single UNIX Specification, Version 4: Rename. Available online: https://pubs.opengroup.org/onlinepubs/9699919799/functions/rename.html (accessed on 13 March 2026).

- Lugaresi, C.; Tang, J.; Nash, H.; McClanahan, C.; Uboweja, E.; Hays, M.; Zhang, F.; Chang, C.L.; Yong, M.; Lee, J.; et al. MediaPipe: A Framework for Building Perception Pipelines. arXiv 2019, arXiv:1906.08172.

- QuantStack. micromamba. Available online: https://github.com/mamba-org/mamba (accessed on 13 March 2026).

- ONNX Runtime. Available online: https://onnxruntime.ai (accessed on 13 March 2026).

- n0 Computer. iroh: Efficient QUIC-based Data Transfer. Available online: https://iroh.computer (accessed on 13 March 2026).

- Iyengar, J.; Thomson, M. QUIC: A UDP-Based Multiplexed and Secure Transport; RFC 9000; Internet Engineering Task Force: Fremont, CA, USA, 2021; pp. 1–151.

- Everingham, M.; Van Gool, L.; Williams, C.K.I.; Winn, J.; Zisserman, A. The Pascal Visual Object Classes (VOC) Challenge. Int. J. Comput. Vis. 2010, 88, 303–338.

- Pop, P. Comparing Web Applications with Desktop Applications: An Empirical Study. 2002.

- Nielsen, J. Enhancing the Explanatory Power of Usability Heuristics. In Proceedings of the SIGCHI Conference on Human Factors in Computing Systems (CHI '94), Boston, MA, USA, 24–28 April 1994; pp. 152–158.

- Lutz de Araujo, A.; Wu, J.; Harvey, H.; Lungren, M.P.; Graham, M.; Leiner, T.; Willemink, M.J. Medical Imaging Data Calls for a Thoughtful and Collaborative Approach to Data Governance. PLoS Digit. Health 2025, 4, e0001046.

- Ravi, N.; Gabeur, V.; Hu, Y.T.; Hu, R.; Ryali, C.; Ma, T.; Khedr, H.; Rädle, R.; Rolland, C.; Gustafson, L.; et al. SAM 2: Segment Anything in Images and Videos. arXiv 2024, arXiv:2408.00714.

- Liu, S.; Zeng, Z.; Ren, T.; Li, F.; Zhang, H.; Yang, J.; Jiang, Q.; Li, C.; Yang, J.; Su, H.; et al. Grounding DINO: Marrying DINO with Grounded Pre-training for Open-Set Object Detection. In Computer Vision – ECCV 2024; Leonardis, A., Ricci, E., Roth, S., Russakovsky, O., Sattler, T., Varol, G., Eds.; Springer: Cham, Switzerland, 2024; pp. 38–55.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).