Submitted: 11 April 2026

Posted: 13 April 2026

Abstract

Keywords:

1. Introduction

- Sensor degradation and communication degradation frequently co-occur (e.g., electromagnetic interference affecting both radar and RF links simultaneously);

- Independent failure-mode analyses underestimate compound risk when both subsystems are simultaneously stressed; and

- Regulatory frameworks (EASA, FAA, and the Japan Civil Aviation Bureau (JCAB), which governs UAV airspace integration in Japan) increasingly require demonstrable safety integration across sensing and communication layers.

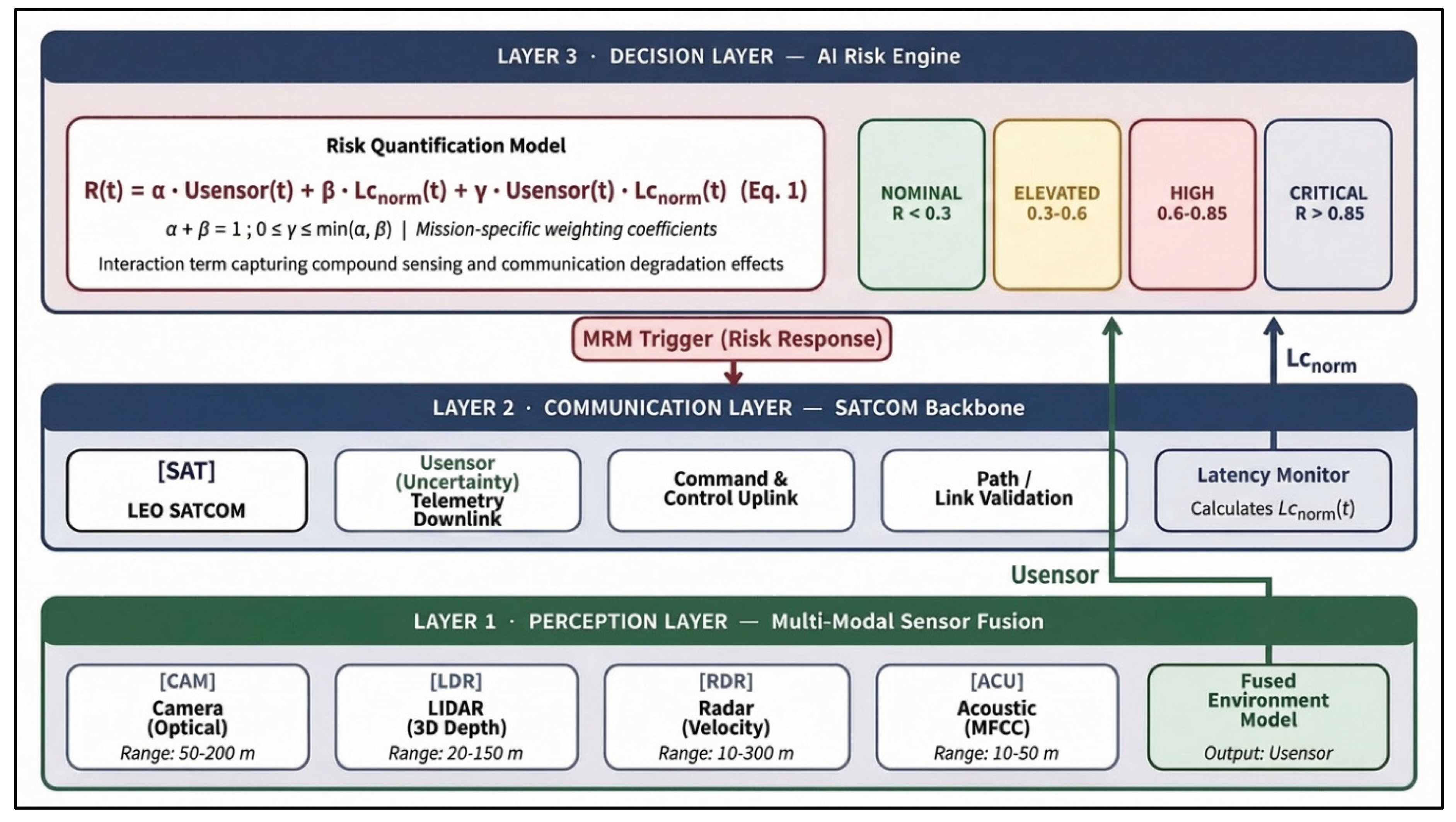

- A formalised three-layer RASA architecture for BVLOS UAV safety systems;

- A tractable risk model with interaction term: R(t) = α·U_sensor(t) + β·L_c_norm(t) + γ·U_sensor(t)·L_c_norm(t);

- Monte Carlo numerical validation across three representative BVLOS scenarios;

- Conceptual alignment with ISO 26262-derived functional safety principles; and

- Regulatory benchmarking against EASA, FAA, and JCAB BVLOS requirements.
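The risk model listed among the contributions can be sketched in a few lines of Python. This is a minimal illustration rather than the contents of the repository's `rasa_model.py`: the function name `risk_score`, the argument names, and the requirement that both inputs lie in [0, 1] are assumptions made here for the example.

```python
def risk_score(u_sensor: float, l_c_norm: float,
               alpha: float, beta: float, gamma: float) -> float:
    """Evaluate R(t) = alpha*U_sensor + beta*L_c_norm + gamma*U_sensor*L_c_norm
    for a single timestep.

    Assumption (not stated in the source): both inputs are normalised to [0, 1].
    """
    for name, value in (("u_sensor", u_sensor), ("l_c_norm", l_c_norm)):
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} must lie in [0, 1], got {value}")
    linear_part = alpha * u_sensor + beta * l_c_norm
    interaction_part = gamma * u_sensor * l_c_norm  # compound-failure term
    return linear_part + interaction_part


if __name__ == "__main__":
    # Illustrative inputs only; the weights are the S3 values from Section 5.6.
    r = risk_score(u_sensor=0.8, l_c_norm=0.7, alpha=0.40, beta=0.40, gamma=0.20)
    print(f"R(t) = {r:.3f}")
```

With these illustrative inputs the linear terms contribute 0.32 + 0.28 = 0.60 and the interaction term adds 0.20 × 0.8 × 0.7 = 0.112, so the score is 0.712. The interaction term is non-zero only when both subsystems are degraded at the same time, which is the compound case an independent failure-mode analysis would miss.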

2. Safety Challenges in BVLOS UAV Systems

2.1. Operational Risk Categories

2.2. The Communication Dependency Problem

2.3. Functional Safety Principles in UAV Contexts

3. Multi-Modal Sensor Fusion for Risk Perception

3.1. Sensor Modality Overview

3.2. Cross-Domain Validation: From Automotive to Aerial Safety

3.3. Acoustic Sensing and MFCC-Based Feature Extraction

3.4. Uncertainty Quantification

3.5. Comparison with Existing UAV Safety Architectures

4. SATCOM as the Communication Backbone for BVLOS Operations

4.1. Limitations of Terrestrial Communication

4.2. SATCOM Architecture for UAV Connectivity

4.3. SATCOM in the RASA Framework and Latency Decomposition

5. The RASA Framework: Proposed Architecture

5.1. Architectural Overview

5.2. Layer 1: Perception Layer

5.3. Layer 2: Communication Layer

5.4. Layer 3: Decision Layer — Risk Quantification

5.5. Risk State Classification

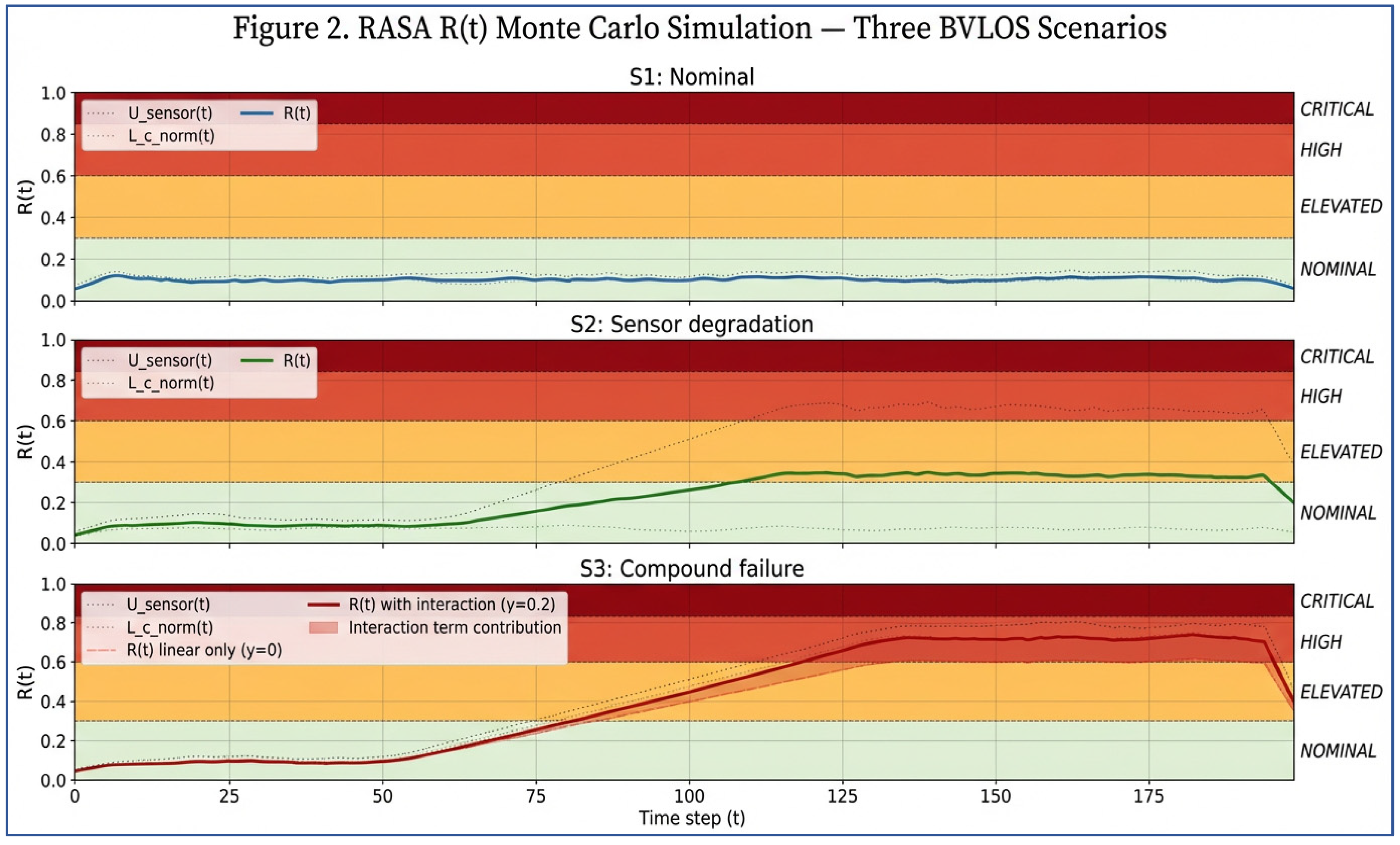

5.6. Simulation and Numerical Validation

| Scenario | U_sensor range | L_c_norm range | α | β | γ | Mean R(t) | Dominant state |
| --- | --- | --- | --- | --- | --- | --- | --- |
| S1: Nominal | 0.05–0.20 | 0.03–0.15 | 0.45 | 0.45 | 0.10 | 0.13 | NOMINAL |
| S2: Sensor degradation only | 0.50–0.80 | 0.03–0.10 | 0.45 | 0.45 | 0.10 | 0.38 | ELEVATED |
| S3: Compound failure | 0.60–0.85 | 0.55–0.80 | 0.40 | 0.40 | 0.20 | 0.74 | HIGH/CRITICAL |
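A Monte Carlo run over the three scenarios in the table above might be structured as follows. This is a sketch under stated assumptions, not the repository's `monte_carlo.py`: the sampling distribution is not given in this section, so independent uniform draws over each range are assumed, and the names `SCENARIOS` and `simulate_mean_risk`, the sample count, and the seed are all hypothetical. Because of the assumed distribution, the printed means are not expected to reproduce the Mean R(t) column.

```python
import random
from statistics import fmean

# Ranges and weights copied from the scenario table.
# "u" = U_sensor range, "l" = L_c_norm range, "w" = (alpha, beta, gamma).
SCENARIOS = {
    "S1: Nominal": {"u": (0.05, 0.20), "l": (0.03, 0.15), "w": (0.45, 0.45, 0.10)},
    "S2: Sensor degradation only": {"u": (0.50, 0.80), "l": (0.03, 0.10), "w": (0.45, 0.45, 0.10)},
    "S3: Compound failure": {"u": (0.60, 0.85), "l": (0.55, 0.80), "w": (0.40, 0.40, 0.20)},
}


def simulate_mean_risk(u_range, l_range, weights, n_samples=10_000, seed=0):
    """Estimate the mean of R(t) by sampling U_sensor and L_c_norm.

    Assumption: both variables are drawn independently and uniformly
    from their ranges; the source does not state the distribution.
    """
    rng = random.Random(seed)  # local generator keeps runs reproducible
    alpha, beta, gamma = weights
    samples = []
    for _ in range(n_samples):
        u = rng.uniform(*u_range)
        l = rng.uniform(*l_range)
        samples.append(alpha * u + beta * l + gamma * u * l)
    return fmean(samples)


if __name__ == "__main__":
    for name, cfg in SCENARIOS.items():
        mean_r = simulate_mean_risk(cfg["u"], cfg["l"], cfg["w"])
        print(f"{name}: mean R(t) = {mean_r:.3f}")
```

Swapping `rng.uniform` for another sampler (for example a truncated normal or a correlated draw) is the natural place to test how sensitive the scenario means are to the distributional assumption.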

6. Regulatory Alignment and Implementation Pathway

6.1. Current BVLOS Regulatory Landscape

6.2. RASA Alignment with Regulatory Requirements

6.3. Limitations and Scope

7. Discussion and Future Research Directions

7.1. Integration Challenges

7.2. Future Research Directions

7.3. Broader Implications

8. Conclusion

Data Availability Statement

Reproducibility Statement

| Paper Element | Repository | File / Location | Section |
| --- | --- | --- | --- |
| Risk model implementation | GitHub (RASA-core) | /rasa_model.py | Eq. 1 |
| Monte Carlo simulation scripts | GitHub (RASA-core) | /simulation/monte_carlo.py | Sec. 5.6 |
| Scenario configuration files | Zenodo | /data/bvlos_scenarios.csv | Table 5 |
| Simulation output datasets | Zenodo | /data/simulation_outputs/ | Sec. 5.6 |
| Architecture block diagram (Figure 1) | Zenodo | /figures/rasa_architecture_v2.svg | Sec. 5.1 |
| α, β, γ parameter sets | Zenodo | /params/weighting_coefficients.json | Sec. 5.4 |
| Validation figures (600 DPI) | Zenodo | /figures/ | Sec. 5.6 |
| Full source archive | Zenodo DOI 10.5281/zenodo.19200142 | N/A | All |

Acknowledgments

References

- LeCun, Y.; Bengio, Y.; Hinton, G. Deep learning. Nature 2015, 521, 436–444.

- He, K.; Zhang, X.; Ren, S.; Sun, J. Deep Residual Learning for Image Recognition. In Proceedings of the IEEE CVPR; 2016; pp. 770–778.

- Rezaul, K. M.; Jewel, M.; Islam, M. S.; Barua, N.; et al. Enhancing audio classification through MFCC feature extraction and data augmentation with CNN and RNN models. International Journal of Advanced Computer Science 2024.

- Bertrand, S.; et al. Ground risk assessment for long-range UAV flights over sparsely populated areas. Journal of Intelligent & Robotic Systems 2021, 101(3), 58.

- Lykou, G.; Moustakas, D.; Gritzalis, D. Defending Airports from UAS: A Survey on Cyber-Attacks and Counter-Drone Sensing Technologies. Sensors 2020, 20(12), 3537.

- Shakhatreh, H.; et al. Unmanned Aerial Vehicles (UAVs): A Survey on Civil Applications and Key Research Challenges. IEEE Access 2019, 7, 48572–48634.

- Mozaffari, M.; et al. A Tutorial on UAVs for Wireless Networks. IEEE Communications Surveys & Tutorials 2019, 21(3), 2334–2360.

- Gupta, L.; Jain, R.; Vaszkun, G. Survey of Important Issues in UAV Communication Networks. IEEE Communications Surveys & Tutorials 2016, 18(2), 1123–1152.

- Barua, N. Integrated Safety Architectures: Leveraging Multi-Modal AI and ISO 26262 to Protect Vulnerable Road Users. SSRN 2026.

- Barua, N.; Hitosugi, M. Advanced Multi-Modal Sensor Fusion System for Detecting Falling Humans. Vehicles 2025, 7(4), 149.

- Zeng, Y.; Zhang, R.; Lim, T.J. Wireless communications with unmanned aerial vehicles: Opportunities and challenges. IEEE Communications Magazine 2016, 54(5), 36–42.

- Wan, J.; et al. UAV Swarm Communication: A Survey on Architecture and Applications. Drones 2023, 7(2), 80.

- Del Portillo, I.; Cameron, B.G.; Crawley, E.F. A technical comparison of three LEO satellite constellation systems to provide global broadband connectivity. Acta Astronautica 2019, 159, 216–225.

- Kim, J.; Yoon, S. LEO Satellite Constellations for BVLOS UAV Command and Control. IEEE Transactions on Aerospace and Electronic Systems 2022, 58(6), 5112–5124.

- Barua, N. SATCOM, The Future UAV Communication Link. SSRN 2022.

- 3GPP. Non-Terrestrial Networks (NTN) for New Radio (NR): Technical Specification 38.821, Release 17. 3rd Generation Partnership Project. 2022.

- European Union Aviation Safety Agency. Opinion No 05/2023: High and medium risk UAS operations; EASA: Cologne, 2023.

- Federal Aviation Administration. BVLOS Aviation Rulemaking Committee Final Report; FAA: Washington, D.C., 2024.

- Schauf, M.; Longo, S. Towards Safety-Certified Autonomous UAV Systems: Challenges and Frameworks. Aerospace 2022, 9(11), 630.

- Fraga-Lamas, P.; et al. A Review on IoT Deep Learning UAV Systems for Autonomous Obstacle Detection. Remote Sensing 2019, 11(18), 2144.

- Bauranov, A.; Rakas, J. Designing airspace for urban air mobility. Progress in Aerospace Sciences 2021, 125, 100726.

- Johnson, M.; et al. Flight Test Evaluation of a UTM Concept for Urban Operations. In AIAA SciTech Forum; 2020; pp. AIAA 2020–0518.

- Barua, N. Supplementary Data for Risk-Aware AI Architecture for BVLOS UAV Safety (RASA) [Data set]; Zenodo, 2026.

- Thrun, S.; Burgard, W.; Fox, D. Probabilistic Robotics; MIT Press, 2005; ISBN 978-0-262-20162-9.

- Cesetti, A.; et al. A vision-based guidance system for UAV navigation and safe landing using natural landmarks. Journal of Intelligent & Robotic Systems 2010, 57(1–4), 233–257.

- EASA. NPA 2022-06: U-space concept of operations; European Union Aviation Safety Agency: Cologne, 2022.

- Barua, N. Estimator Collapse Theory (ECT) Framework v1.2.0 (v1.2.0). Zenodo 2026.

| Risk Category | Root Cause | Operational Consequence |
| --- | --- | --- |
| Collision Risk | Limited obstacle awareness at range | Structural damage; third-party injury |
| Communication Loss | RF interference, range limits, link fade | Loss of command authority; flyaway |
| Sensor Failure | Environmental noise; hardware fault | False-negative detection; incorrect manoeuvre |
| Perception Latency | Processing bottleneck; bandwidth saturation | Delayed response to dynamic obstacles |
| Environmental Uncertainty | Weather, terrain masking, and dynamic airspace | Unpredicted mission deviation |

| Modality | Range | Environmental Sensitivity | Primary Role in RASA | Layer |
| --- | --- | --- | --- | --- |
| Optical Camera | 50–200 m | High (light-dependent) | Object classification | Perception |
| LiDAR | 20–150 m | Moderate (rain, dust) | 3D obstacle mapping | Perception |
| Radar | 10–300 m | Low (all-weather) | Velocity/range | Perception |
| Acoustic (MFCC) | 10–50 m | Low (dark, fog) | Anomaly detection | Perception |
| SATCOM Telemetry | Global | Low | Path/link validation | Communication |

| Feature | Traditional UAV Stack | RASA |
| --- | --- | --- |
| Sensor + communication coupling | ❌ Independent modules | ✅ Unified risk model |
| Real-time scalar risk output | ❌ Not available | ✅ R(t) at each timestep |
| Compound failure modelling | ❌ Linear superposition only | ✅ Interaction term γ |
| Autonomous risk escalation | Limited / operator-dependent | ✅ Explicit MRM hierarchy |
| Regulatory audit trail | Partial | ✅ Auditable safety trace |
| Onboard without ground loop | ❌ Ground-dependent | ✅ Fully autonomous |

| Risk State | R(t) Range | Trigger Condition | MRM Response | Coverage |
| --- | --- | --- | --- | --- |
| NOMINAL | 0.00 – 0.30 | Normal ops; all variables within bounds | Autonomous mission execution | Standard |
| ELEVATED | 0.31 – 0.60 | Sensor uncertainty or latency rising | Increased reporting; pre-position contingency commands | Enhanced |
| HIGH | 0.61 – 0.85 | Combined sensor/comm degradation | Station-keeping; return-to-launch initiated | Critical |
| CRITICAL | > 0.85 | Compound sensor + comm failure | Emergency contingency landing | Emergency |
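The mapping from a scalar R(t) to a risk state and its MRM response could be implemented as a simple threshold lookup. The sketch below is illustrative only: the names `classify_risk` and `MRM_RESPONSE` are hypothetical, and because the tabulated ranges leave small gaps (for example between 0.30 and 0.31), it assumes each upper bound is inclusive and that the next state begins immediately above it.

```python
# MRM responses copied from the risk state table.
MRM_RESPONSE = {
    "NOMINAL": "Autonomous mission execution",
    "ELEVATED": "Increased reporting; pre-position contingency commands",
    "HIGH": "Station-keeping; return-to-launch initiated",
    "CRITICAL": "Emergency contingency landing",
}


def classify_risk(r: float) -> str:
    """Map a risk score R(t) to a risk state.

    Assumption: upper bounds 0.30, 0.60 and 0.85 are inclusive, which
    closes the gaps between the ranges listed in the table.
    """
    if r < 0.0:
        raise ValueError(f"risk score must be non-negative, got {r}")
    if r <= 0.30:
        return "NOMINAL"
    if r <= 0.60:
        return "ELEVATED"
    if r <= 0.85:
        return "HIGH"
    return "CRITICAL"


if __name__ == "__main__":
    # Mean R(t) values reported for scenarios S1 to S3 in Section 5.6.
    for r in (0.13, 0.38, 0.74):
        state = classify_risk(r)
        print(f"R(t) = {r:.2f} -> {state}: {MRM_RESPONSE[state]}")
```

A deployed version would likely add hysteresis or a dwell time so that a score hovering near a threshold does not toggle the MRM response on every timestep; that behaviour is not specified here and is left out of the sketch.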

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).