1. Introduction

Overall Equipment Effectiveness (OEE) is widely recognised as the gold standard for measuring manufacturing productivity. Introduced by Nakajima [1] within the Total Productive Maintenance (TPM) framework, OEE decomposes equipment losses into three multiplicative components, Availability (A), Performance (P), and Quality (Q), yielding the composite score OEE = A × P × Q. Since its formalisation in the late 1980s, OEE has achieved broad adoption across discrete and process manufacturing, serving as a foundational key performance indicator in lean manufacturing, Six Sigma, World Class Manufacturing, and Industry 4.0 digital transformation initiatives [2, 3]. Academic interest in OEE has increased substantially over the past decade, with associated research keywords evolving from maintenance and production to lean manufacturing and optimisation [3]. An OEE score of 85% is widely cited as the world-class benchmark for discrete manufacturing, while the global average across most industrial sectors lies between 55% and 65% [4, 5], indicating persistent and measurable gaps between actual and potential equipment performance.
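As a minimal sketch of the composite score OEE = A × P × Q, the following Python snippet computes the product for one asset. The function name `oee` and the component values are assumptions chosen purely for illustration; they are not data from this paper.

```python
def oee(availability: float, performance: float, quality: float) -> float:
    """Classical OEE: the product of the three component ratios, each in [0, 1]."""
    components = {
        "availability": availability,
        "performance": performance,
        "quality": quality,
    }
    for name, value in components.items():
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} must lie in [0, 1], got {value}")
    return availability * performance * quality


# Hypothetical component values, chosen only for illustration.
score = oee(0.90, 0.95, 0.99)
print(f"OEE = {score:.1%}")  # prints "OEE = 84.6%"
```

Because the components multiply, the composite score can never exceed the smallest component. In this hypothetical case, three individually strong ratios still yield a composite just below the 85% benchmark.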

Despite its widespread use, the classical OEE formula embeds a structural assumption that has received insufficient critical attention in the literature: the three components A, P and Q are treated as equally important. Because each factor enters the product with the same unit exponent, the formula is rank-equivalent to a geometric mean with implicit fixed weights of 1/3 each, irrespective of the operational context in which the equipment operates. This assumption is operationally unjustifiable across different industrial environments.

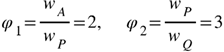

To illustrate this structural issue, three representative industrial contexts are examined, each characterised by a distinct dominant loss factor.

In pharmaceutical manufacturing, Quality constitutes the operationally critical component. Regulatory frameworks enforced by agencies such as the Food and Drug Administration impose strict conformance requirements on every production unit; non-conforming batches are subject to mandatory quarantine, investigation, and disposal [6]. Reported industry data indicate that the average quality score in pharmaceutical manufacturing is approximately 94%, implying a 6% non-conformance rate that may trigger regulatory inspections, warning letters, or product recalls [5]. The financial and reputational consequences of quality failures in this sector are disproportionate relative to equivalent losses in Availability or Performance, which result in recoverable throughput reductions rather than regulatory liability. The classical OEE formula assigns equal weight to all three components, thereby failing to reflect this asymmetry.

In continuous-flow refinery operations, Availability constitutes the operationally critical component. Process shutdowns in continuous production systems propagate simultaneously across interconnected upstream and downstream units, generating economic losses at rates that are structurally incomparable to equivalent performance or quality degradations [7]. Restart procedures following unplanned shutdowns incur additional costs associated with energy consumption, material losses, and equipment thermal cycling [7]. Performance and quality variations in refinery operations are typically absorbed within operational tolerances and do not produce losses of comparable magnitude. The equal-weight OEE formulation does not capture this difference in loss severity between components.

In high-speed automotive assembly, Performance constitutes the operationally critical component. These production systems are engineered around a defined cycle time, and minor speed reductions or micro-stoppages that fall below the threshold for classification as availability losses nonetheless generate cumulative throughput deficits across tightly synchronised stations [8]. Automated inspection systems maintain quality losses at negligible levels, and predictive maintenance programmes limit unplanned downtime [2, 8]. Assigning equal weight to A, P and Q in this production environment systematically underestimates the contribution of performance losses to total production inefficiency.

In each context, the component that dominates operational loss is different, yet the classical OEE formula assigns identical weight to all three. This fixed-weight assumption is not a neutral modelling choice; it constitutes a structural limitation with direct and measurable consequences for maintenance prioritisation decisions.
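The effect of the fixed-weight assumption on prioritisation can be illustrated with a small numerical sketch. The snippet below uses a generic weighted geometric aggregate, A^wA × P^wP × Q^wQ, which is an assumption made here for illustration only and is not the Adaptive OEE model proposed in this paper. The two assets and the availability-heavy weight vector are likewise hypothetical.

```python
import math


def weighted_score(a, p, q, weights):
    """Weighted geometric aggregate A**wA * P**wP * Q**wQ (illustrative form only).

    Requires strictly positive component values because of the logarithms.
    """
    w_a, w_p, w_q = weights
    return math.exp(w_a * math.log(a) + w_p * math.log(p) + w_q * math.log(q))


def rank(assets, weights):
    """Return asset names ordered from best to worst under the given weights."""
    return sorted(
        assets,
        key=lambda name: weighted_score(*assets[name], weights),
        reverse=True,
    )


# Hypothetical assets as (A, P, Q); not taken from the paper's case studies.
assets = {"X": (0.92, 0.90, 0.94), "Y": (0.88, 0.90, 0.99)}

equal_weights = (1 / 3, 1 / 3, 1 / 3)   # rank-equivalent to classical A * P * Q
availability_heavy = (0.6, 0.2, 0.2)    # assumed weights, availability-dominant context

print(rank(assets, equal_weights))       # ['Y', 'X']
print(rank(assets, availability_heavy))  # ['X', 'Y']
```

Under equal weights, asset Y ranks first because its quality advantage outweighs its availability deficit. Once availability is weighted more heavily, the order reverses, so the asset flagged as the weaker performer changes with the assumed context.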

Significant efforts have been made to extend the original OEE framework. Extensions such as Total Effective Equipment Performance (TEEP), Production Equipment Effectiveness (PEE), Overall Asset Effectiveness (OAE), Overall Factory Effectiveness (OFE), and Overall Production Effectiveness (OPE) have emerged to address broader perspectives on equipment and factory-level performance [2]. PEE does introduce weights on the sub-indicators, so that A, P and Q are not assigned equal importance as in classical OEE. However, no structured, formal method is prescribed for deriving those weights from the operational context; their assignment remains ad hoc, expert-dependent, and unsupported by any consistency validation mechanism. To the authors' knowledge, no published work has formally proposed replacing the fixed A×P×Q product with a structured Multi-Criteria Decision Making (MCDM) weighting model that derives context-specific weights with guaranteed consistency and minimum expert burden. Existing extensions modify the scope of measurement, but none provides a systematic, auditable methodology for determining how the three components should be weighted relative to each other in a given operational context. This methodological gap motivates the present work.

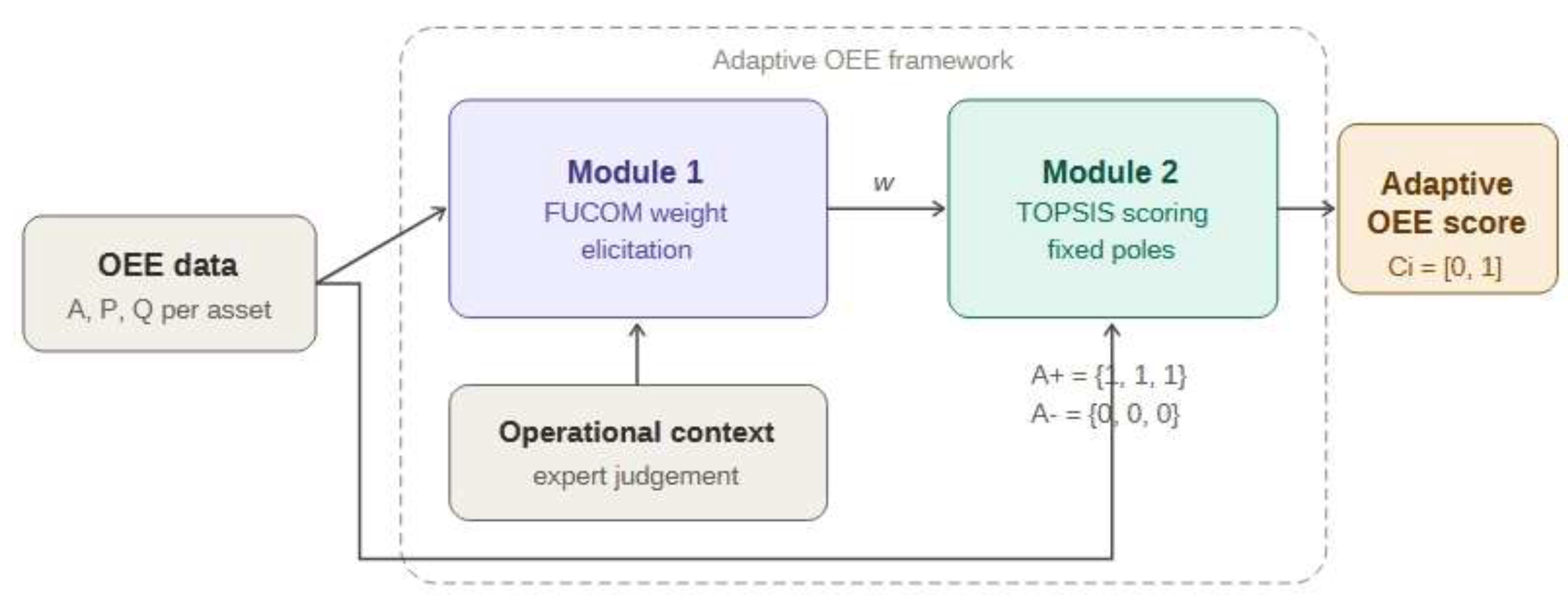

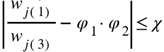

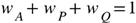

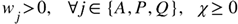

This paper addresses three research questions. The first concerns the formal characterisation of the structural limitations of the classical OEE formula and the theoretical basis upon which a multi-criteria weighting approach is justified. The second examines how the Full Consistency Method (FUCOM) and the Technique for Order Preference by Similarity to Ideal Solution (TOPSIS) can be integrated to derive context-sensitive weights for the OEE components with minimum expert elicitation burden and mathematically guaranteed consistency. The third investigates the conditions under which the proposed Adaptive OEE framework produces rankings that differ materially from those obtained under classical OEE and analyses the implications of such divergence for maintenance prioritisation decisions.
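For readers unfamiliar with TOPSIS, the following is a minimal sketch of the textbook procedure applied to benefit-type criteria such as A, P and Q. It is not the specific variant developed in this paper, which may differ in normalisation and in how the ideal points are defined. The function name `topsis` is hypothetical, and the weight vector is passed in as given; in the proposed framework it would come from FUCOM, which is not implemented here.

```python
import math


def topsis(matrix, weights):
    """Textbook TOPSIS closeness scores, assuming every criterion is a benefit.

    matrix  : list of rows, one per asset, each row holding the criterion values
    weights : one non-negative weight per criterion (assumed to sum to 1)
    """
    n = len(weights)
    # Vector normalisation: divide each column by its Euclidean norm.
    norms = [math.sqrt(sum(row[j] ** 2 for row in matrix)) for j in range(n)]
    weighted = [[weights[j] * row[j] / norms[j] for j in range(n)] for row in matrix]

    # Ideal and anti-ideal points are taken column-wise from the data itself.
    ideal = [max(row[j] for row in weighted) for j in range(n)]
    anti_ideal = [min(row[j] for row in weighted) for j in range(n)]

    scores = []
    for row in weighted:
        d_plus = math.dist(row, ideal)        # distance to the ideal point
        d_minus = math.dist(row, anti_ideal)  # distance to the anti-ideal point
        # Undefined (division by zero) if all assets are identical.
        scores.append(d_minus / (d_plus + d_minus))
    return scores


# Hypothetical (A, P, Q) profiles and weights, for illustration only.
profiles = [(0.92, 0.90, 0.94), (0.88, 0.90, 0.99), (0.85, 0.95, 0.97)]
print(topsis(profiles, (0.6, 0.2, 0.2)))
```

Each score lies in [0, 1], and a higher value indicates an asset closer to the ideal point and further from the anti-ideal point. The ranking therefore depends directly on the weight vector supplied.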

This paper makes three original contributions. The equal-weight assumption embedded in the classical OEE formula is formally characterised as a structural limitation, and its quantifiable consequences for maintenance prioritisation decisions are systematically identified. The Adaptive OEE framework is proposed as a FUCOM–TOPSIS model for context-driven equipment effectiveness measurement, designed to be applicable across heterogeneous industrial environments. Three illustrative case studies involving equipment assets evaluated across availability-dominant, performance-dominant, and quality-dominant operational contexts are presented, demonstrating the specific conditions under which Adaptive OEE produces rankings that diverge from those of classical OEE and quantifying the associated decision impact through the Divergence Index.

The remainder of this paper is organised as follows.

Section 2 reviews the classical OEE framework and its documented limitations.

Section 3 presents the Adaptive OEE framework and introduces the FUCOM and TOPSIS methods that form the methodological basis of the proposed approach, detailing its architecture, weighting procedure, and scoring mechanism.

Section 4 describes illustrative case studies, reports the results obtained across the three operational contexts, and compares Adaptive OEE rankings against those produced by the classical formula.

Section 5 discusses the findings and addresses the practical implications for industrial asset management.

Section 6 concludes the paper and identifies directions for future research.

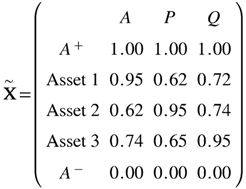

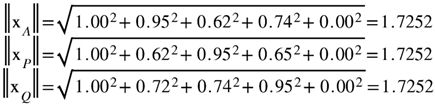

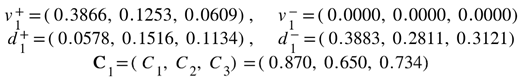

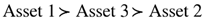

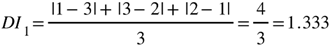

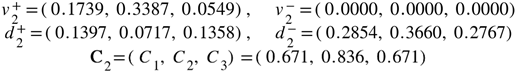

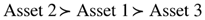

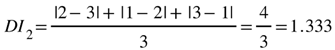

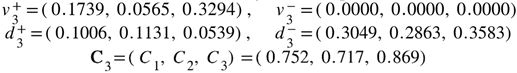

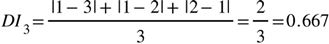

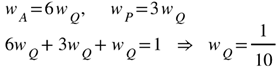

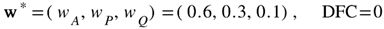

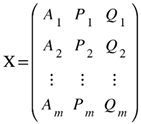

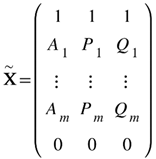

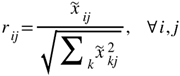

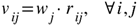

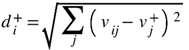

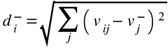

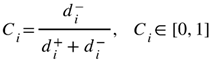

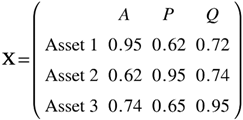

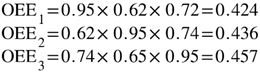

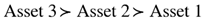

will serve as the reference against which the Adaptive OEE rankings under the three context-specific weight vectors are compared in Section 4.5. The asset profiles are deliberately constructed so that the classical ranking is not preserved under all three weight configurations, thereby illustrating the diagnostic value of the proposed framework. The augmented decision matrix , including the fixed ideal poles and , is identical across the three case studies:
