Submitted: 10 April 2026

Posted: 10 April 2026


Abstract

Keywords:

1. Introduction

- RQ1. What operational risks emerge when smart manufacturing data pipelines are deployed under constrained SME factory conditions?

- RQ2. What core requirements must an operational data foundation satisfy under such conditions, and how can these requirements be formalized into design principles and operational invariants?

- RQ3. How can the proposed framework be instantiated in a real SME manufacturing setting, and how can its practical value be evaluated through field evidence?

- 1.

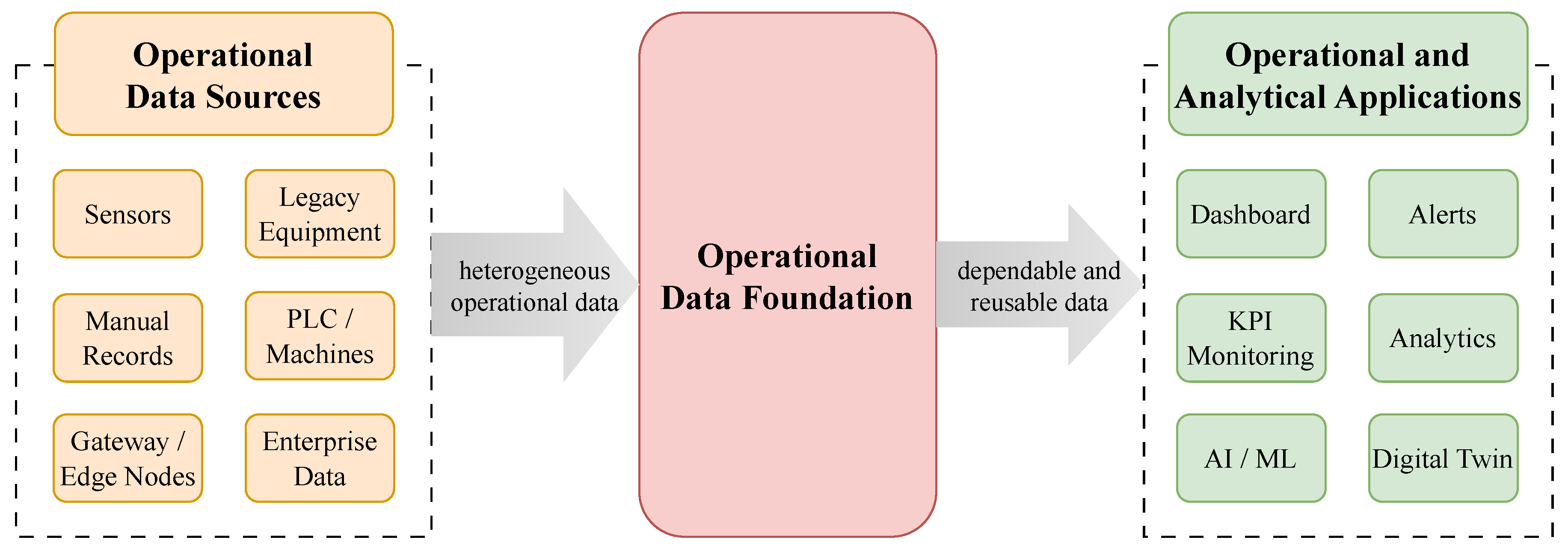

- Reframing the practical bottleneck of smart manufacturing in constrained SME factories as an operational data foundation problem.

- 2.

- Deriving the core operational risks that emerge when a general manufacturing data lifecycle is exposed to constrained SME factory conditions.

- 3.

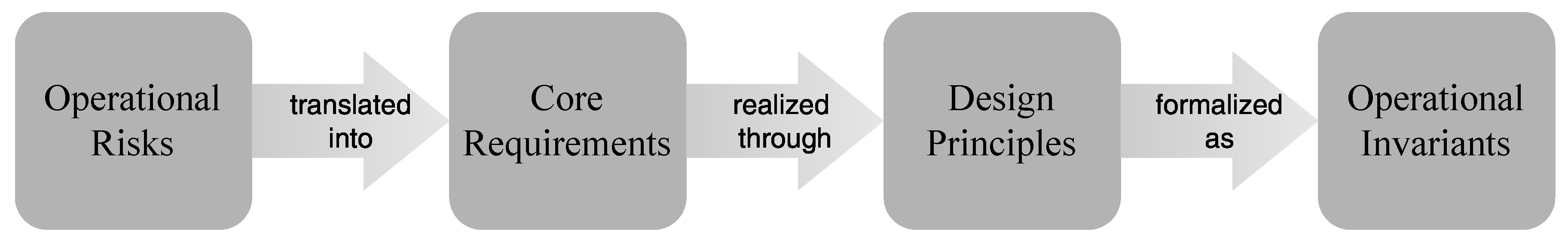

- Formalizing the Operational Data Foundation Framework in terms of core requirements, design principles, and operational invariants.

- 4.

- Demonstrating the framework’s field-grounded operational relevance through real-world implementation in an SME manufacturing environment.

2. Related Work

2.1. Smart Manufacturing Applications

2.2. Industrial Data Infrastructures

2.3. Lifecycle Connectivity

2.4. SME Deployment

3. Problem Analysis

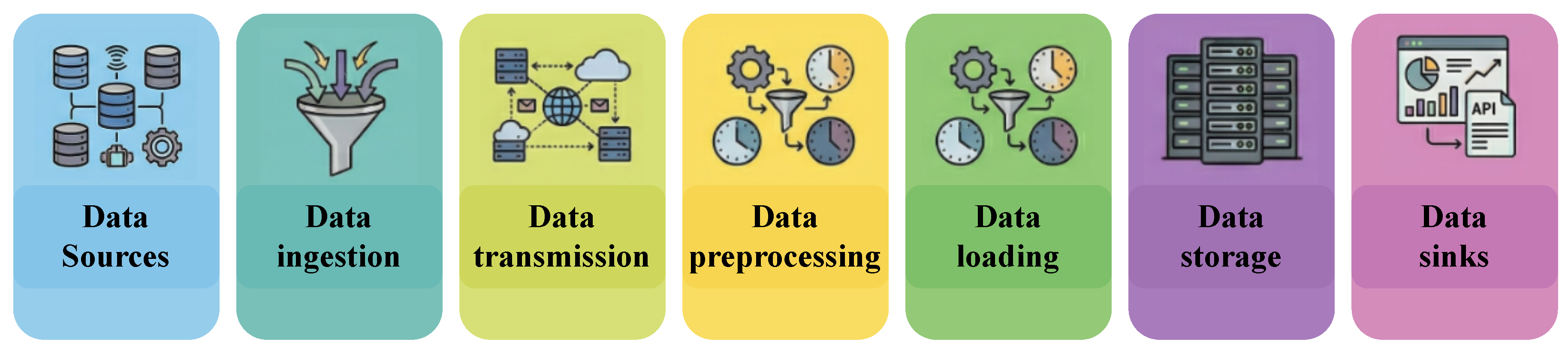

3.1. General Data Pipeline Lifecycle

- Data sources: the points at which operational data originate, including sensors, equipment states, manual records, and external systems.

- Data ingestion: the intake of source data into the pipeline.

- Data transmission: the movement of acquired data across gateway, edge, server, or cloud boundaries toward the next processing point.

- Data preprocessing: the cleaning, decoding, normalization, mapping, and transformation required to make raw inputs usable downstream.

- Data loading: the controlled insertion of processed records into the target operational layer or storage boundary.

- Data storage: the durable preservation of records for later access, recovery, reinterpretation, and reuse.

- Data sinks: the downstream endpoints that consume stored data, including dashboards, alerts, reporting, analytics, and decision support.

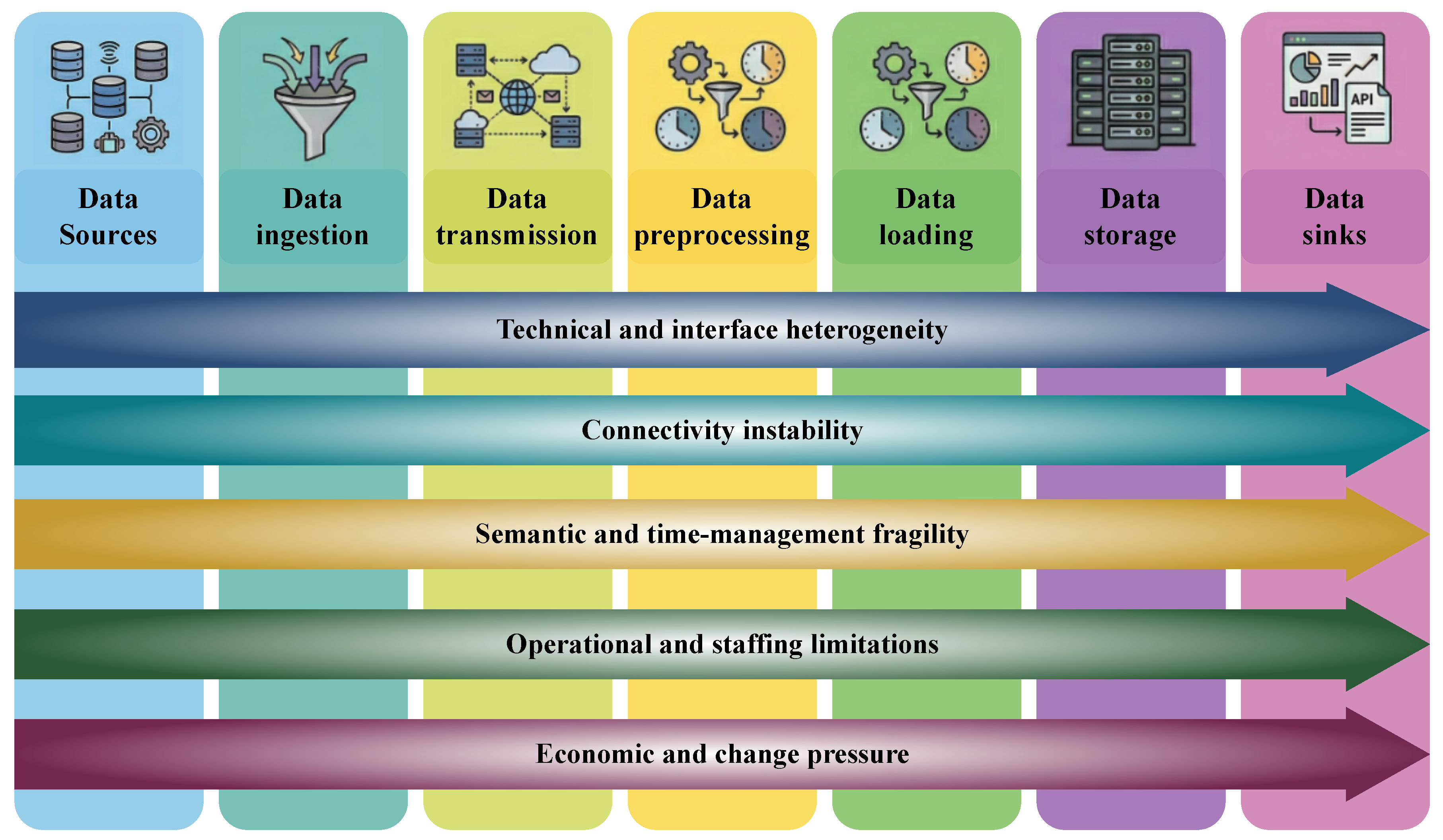

3.2. SME Constraints

3.3. Operational Risks

- RSK1. Continuity risk. Operational records may become interrupted, fragmented, or partially lost as data move from source capture to storage. In constrained manufacturing settings, the essential requirement is not one-time collection success, but the preservation of a gap-minimized and recoverable record despite source instability, transmission disruption, or delayed recovery.

- RSK2. Governance risk. Data may remain available while losing stable meaning. This occurs when source identity, metric semantics, timestamp interpretation, or transformation logic are not kept consistent across collection, preprocessing, loading, and storage. Under such conditions, downstream analysis may continue to operate, but it does so on data whose meaning is no longer reliably governed.

- RSK3. Diagnosability risk. When failure occurs, it may be difficult to determine where in the lifecycle the problem emerged and what kind of failure it is. Weak monitoring, poor stage separation, and limited observability turn degradation into a black-box symptom, making it difficult to distinguish among transmission loss, semantic corruption, storage inconsistency, and downstream mismatch in a structurally interpretable way.

- RSK4. Operability risk. A system may function technically while becoming too costly or complex to sustain in everyday practice. Tight coupling, heavy maintenance burden, unclear ownership, and non-modular structures increase the effort required to manage, modify, and repair the system, making long-term operation difficult under constrained SME staffing and budget conditions.

- RSK5. Reprocessability risk. Historical data may cease to be usable when rules, KPIs, schemas, or interpretation logic change. If raw preservation is weak, preprocessing is effectively irreversible, or lineage is incomplete, past records cannot be reliably replayed, recomputed, or reinterpreted. In that case, the data foundation loses one of its most important operational values: the ability to support correction and renewed interpretation over time.

- RSK6. Evolvability risk. New sensors, processes, lines, factories, or downstream requirements may become difficult to incorporate without disproportionate redesign cost or structural disruption. Because SME digitalization usually proceeds through incremental onboarding rather than one-shot integration, a data foundation that cannot absorb change without breaking governance and operational control cannot serve as a durable basis for growth.
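The notion of a "gap-minimized" record in RSK1 can be made concrete by measuring gaps between consecutive record timestamps. The following is a minimal sketch, not the method used in the study: the function names `find_gaps` and `mean_gap_minutes`, the expected reporting interval, and the tolerance factor of 1.5 are all illustrative assumptions.

```python
from datetime import datetime, timedelta
from typing import List, Tuple

Gap = Tuple[datetime, datetime, timedelta]


def find_gaps(
    timestamps: List[datetime],
    expected_interval: timedelta,
    tolerance: float = 1.5,  # assumed slack factor, not from the paper
) -> List[Gap]:
    """Return (start, end, duration) for every pair of consecutive
    records spaced further apart than tolerance * expected_interval."""
    ordered = sorted(timestamps)
    threshold = expected_interval * tolerance
    gaps: List[Gap] = []
    for prev, curr in zip(ordered, ordered[1:]):
        delta = curr - prev
        if delta > threshold:
            gaps.append((prev, curr, delta))
    return gaps


def mean_gap_minutes(gaps: List[Gap]) -> float:
    """Average gap duration in minutes; 0.0 when there are no gaps."""
    if not gaps:
        return 0.0
    total = sum((g[2] for g in gaps), timedelta())
    return total.total_seconds() / 60.0 / len(gaps)
```

With records at minutes 0, 1, 2, 10, and 11 and an expected interval of one minute, the sketch reports a single gap of eight minutes between the third and fourth record. A "long gap" could then be defined by a second, larger threshold chosen per site.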

4. Operational Data Foundation Framework

4.1. Core Requirements

- R1.

- Continuity. Data continuity should be preserved as far as practicable despite field disruption and connectivity instability. The essential requirement is not momentary acquisition success, but the minimization of gaps and the preservation of recoverable continuity so that the operational record remains usable even when intermediate segments are unstable.

- R2.

- Governance. Source identity, metric semantics, and time semantics should remain stable across collection, normalization, and storage. Data are not operationally usable merely because they exist; they must retain controlled meaning and interpretable context throughout the pipeline.

- R3.

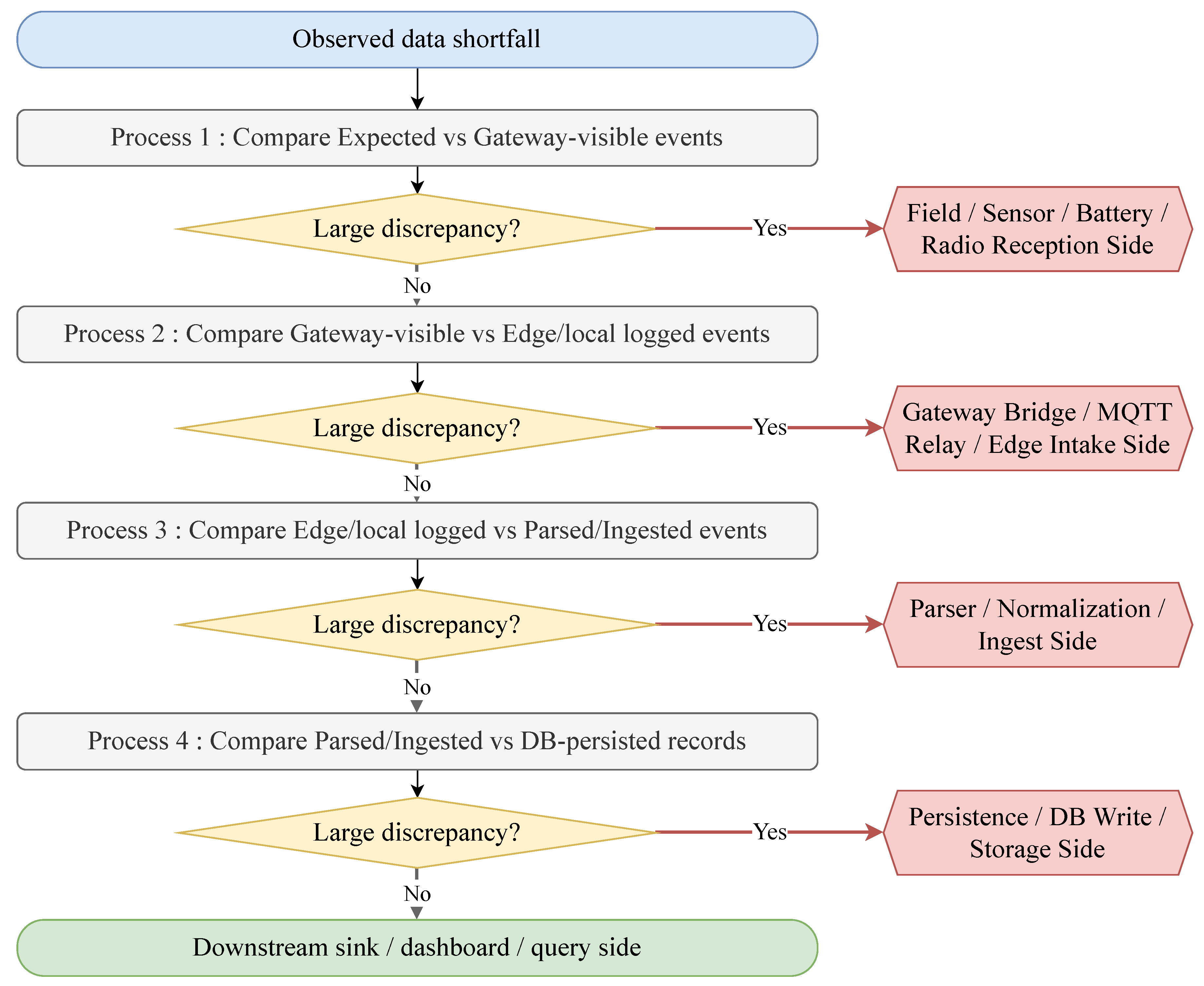

- Diagnosability. Failures should be observable as segment-level discrepancies rather than only as end-to-end symptoms. The requirement is not simply that problems become visible after downstream effects appear, but that the lifecycle location and mode of failure can be identified in a structurally interpretable manner.

- R4.

- Operability. The system should remain maintainable and manageable under constrained staffing and budget conditions. An operational data foundation must therefore be sustainable in day-to-day practice without imposing a structurally excessive maintenance burden or operational complexity.

- R5.

- Reprocessability. Historical data should remain reprocessable for rule changes, KPI revisions, correction, and recovery. Manufacturing data should not be treated as one-time consumables, but as records that can be revisited, recalculated, and reinterpreted as operational needs evolve.

- R6.

- Evolvability. The foundation should accommodate new sources, processes, sites, and applications without breaking the existing structure. Because SME digitalization typically proceeds through incremental onboarding rather than one-time integration, the data foundation must remain structurally open to change and extension.

4.2. Design Principles

- P1. Continuity responsibility should be placed close to the source. If continuity depends primarily on downstream transmission or cloud reachability, it becomes fragile under field disruption and connectivity instability. For this reason, the primary responsibility for preserving continuity should be placed as close to the source as possible through source-near capture, upstream buffering or persistence, and recovery-oriented ingestion logic.

- P2. Raw events should be preserved before abstraction. If only transformed outputs are retained, later replay, reinterpretation, and recovery become structurally constrained. Preserving raw events before irreversible abstraction is therefore a fundamental design principle, since rule changes, KPI revision, and correction all depend on access to preserved pre-abstraction records.

- P3. Semantics should be contract-defined. If source identity, metric naming, timestamp semantics, unit definition, and transformation logic are left to ad hoc implementation, semantic inconsistency accumulates across the pipeline. The design must therefore define semantics explicitly through controlled contracts, schema discipline, version-aware rules, and clear conventions for identity and time.

- P4. Observability should follow pipeline segments. Diagnosability cannot be secured through black-box end-to-end monitoring alone, because operationally meaningful failures must be localizable to specific lifecycle segments. Observability should therefore be structured along the pipeline itself through segment-level checkpoints, stage-level indicators, and explicit discrepancy visibility.

- P5. Responsibilities should be separated by function. If acquisition, normalization, storage, and downstream use are tightly coupled, both maintenance burden and change cost rise rapidly. Functional responsibilities should therefore be separated so that components remain structurally independent, interfaces remain replaceable, and modifications in one part do not unnecessarily destabilize the whole.

- P6. Operations should remain lightweight, modular, and open. In constrained SME environments, heavy operational structures, costly maintenance models, and vendor dependence undermine long-term viability. The design should therefore remain lightweight in deployment, modular in composition, manageable in maintenance scope, and open to replacement and extension. Where appropriate, open and widely supported components, including open-source tools, can help reduce lock-in and maintenance burden.

- P7. Change should be treated as a first-class assumption. Changes in KPIs, schemas, rules, source onboarding, and deployment scope are not exceptional events, but normal operating conditions in SME digitalization. The foundation should therefore be designed from the outset to accommodate and organize change through version-aware structures, replay support, incremental onboarding discipline, and change-tolerant interfaces.
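Principles P1 and P2 can be read together as a store-and-forward pattern at the edge: persist the raw event locally first, then forward it when the uplink allows. The sketch below illustrates that pattern under stated assumptions. It uses SQLite from the Python standard library as the local store; the class name `EdgeBuffer`, the table name `raw_events`, and the `send` callback are hypothetical and are not taken from the field implementation described in this paper.

```python
import json
import sqlite3
from typing import Callable, Dict


class EdgeBuffer:
    """Source-near buffer: raw events are persisted before forwarding."""

    def __init__(self, path: str = ":memory:") -> None:
        # ":memory:" keeps the sketch self-contained; a file path would be
        # needed for persistence that survives a restart.
        self.db = sqlite3.connect(path)
        self.db.execute(
            "CREATE TABLE IF NOT EXISTS raw_events ("
            "id INTEGER PRIMARY KEY AUTOINCREMENT, "
            "payload TEXT NOT NULL, "
            "forwarded INTEGER NOT NULL DEFAULT 0)"
        )
        self.db.commit()

    def capture(self, event: Dict) -> int:
        """Persist the raw event unchanged and return its local row id."""
        cur = self.db.execute(
            "INSERT INTO raw_events (payload) VALUES (?)",
            (json.dumps(event, sort_keys=True),),
        )
        self.db.commit()
        return cur.lastrowid

    def forward_pending(self, send: Callable[[Dict], bool]) -> int:
        """Try to forward unsent events in capture order.

        Stops at the first failure so that ordering is kept and the
        remaining events are retried on the next call. Rows are only
        marked as forwarded, never deleted, so raw events stay replayable.
        """
        rows = self.db.execute(
            "SELECT id, payload FROM raw_events WHERE forwarded = 0 ORDER BY id"
        ).fetchall()
        sent = 0
        for row_id, payload in rows:
            try:
                ok = send(json.loads(payload))
            except OSError:
                ok = False
            if not ok:
                break
            self.db.execute(
                "UPDATE raw_events SET forwarded = 1 WHERE id = ?", (row_id,)
            )
            self.db.commit()
            sent += 1
        return sent

    def pending_count(self) -> int:
        """Number of captured events not yet forwarded."""
        row = self.db.execute(
            "SELECT COUNT(*) FROM raw_events WHERE forwarded = 0"
        ).fetchone()
        return row[0]
```

In this sketch an uplink outage only increases `pending_count()`; nothing is lost, and a later call to `forward_pending` drains the backlog in order. Retention limits and disk-full handling are deliberately left out.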

4.3. Operational Invariants

- I1. Durable Capture Invariant. An accepted event should obtain durable persistence before any downstream failure can erase it. Continuity should therefore be judged from the point of first durable capture rather than from downstream availability alone.

- I2. Traceability Invariant. Every canonical or stored record should remain traceable to its source identity and operational context. Downstream data should not become detached from the source, metadata, and governing semantics that give them meaning.

- I3. Deterministic Normalization Invariant. The same raw event under the same contract and version conditions should always produce the same canonical output. Normalization should function as a repeatable and controlled semantic transformation rather than as ad hoc interpretation.

- I4. Segment-Localizable Failure Invariant. A failure should become visible as at least one discrepancy within a recognizable lifecycle segment. It should be possible to attribute the problem to a specific segment, such as the source, transmission, preprocessing, loading, storage, or sink, rather than observing it only as a downstream symptom.

- I5. Replayability Invariant. Preserved raw data should remain reprocessable for rule changes, KPI revision, correction, and recovery. Historical data should therefore remain usable for later reinterpretation and recomputation, rather than serving only as passive archival material.

- I6. Modular Replacement Invariant. Replacing a specific component should not break the semantic chain of the overall foundation. Changes in storage, parsing, transport, or downstream components should not destroy source identity, metric meaning, or replay context across the system.

- I7. Onboarding Consistency Invariant. A new source should be incorporable into the existing structure without breaking the prevailing governance discipline. Incremental onboarding should proceed through the same contract, schema, naming, and validation discipline, rather than through an accumulation of ad hoc exceptions.
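Invariants I2, I3, and I5 lend themselves to a small illustration: normalization as a pure function of the raw event and a versioned contract, with each canonical record carrying a fingerprint of the raw event it came from. This is a sketch under assumptions, not the paper's schema. The `Contract` fields, the record layout, and the example field names (`source_id`, `event_time`, `temp_raw`) are hypothetical.

```python
import hashlib
import json
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class Contract:
    """Hypothetical versioned contract for one metric."""
    version: str
    metric: str      # canonical metric name
    unit: str        # canonical unit
    raw_field: str   # field of the raw event that holds the reading
    scale: float     # canonical value = raw value * scale


def raw_fingerprint(raw: Dict) -> str:
    """Stable SHA-256 of the raw event, independent of key order."""
    encoded = json.dumps(raw, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(encoded.encode("utf-8")).hexdigest()


def normalize(raw: Dict, contract: Contract) -> Dict:
    """Pure function: no clock, randomness, or external state is read,
    so the same (raw, contract) pair always yields the same record."""
    return {
        "source_id": raw["source_id"],
        "event_time": raw["event_time"],
        "metric": contract.metric,
        "unit": contract.unit,
        "value": round(raw[contract.raw_field] * contract.scale, 6),
        "contract_version": contract.version,
        "raw_sha256": raw_fingerprint(raw),
    }


def replay(raw_events: List[Dict], contract: Contract) -> List[Dict]:
    """Recompute canonical records from preserved raw events,
    for example after a contract revision."""
    return [normalize(raw, contract) for raw in raw_events]
```

Because each canonical record stores both `contract_version` and `raw_sha256`, a record produced under a revised contract can still be matched to the same raw event, which is the property that replay depends on.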

4.4. Framework Synthesis

5. Field Implementation

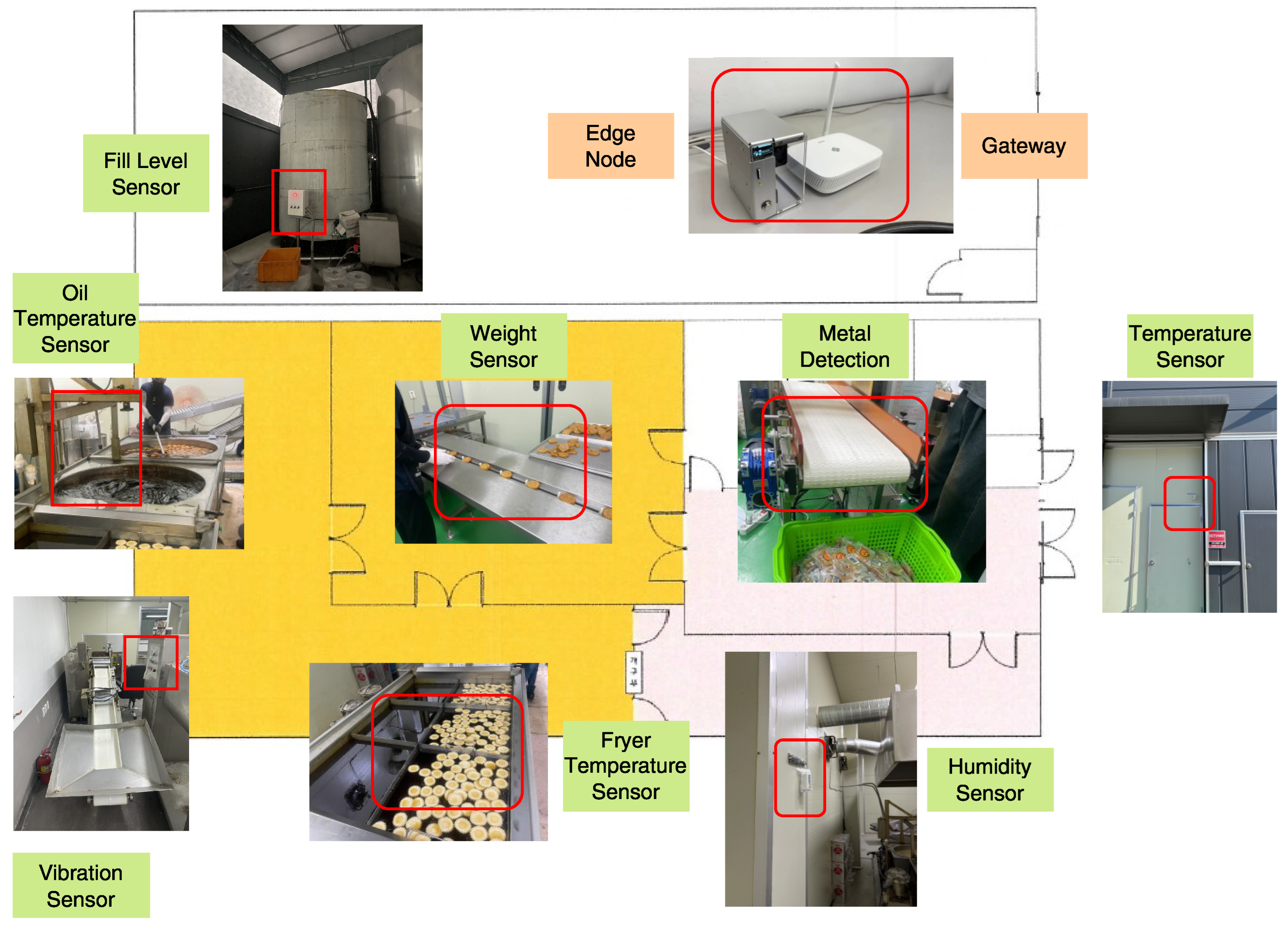

5.1. Deployment Setting

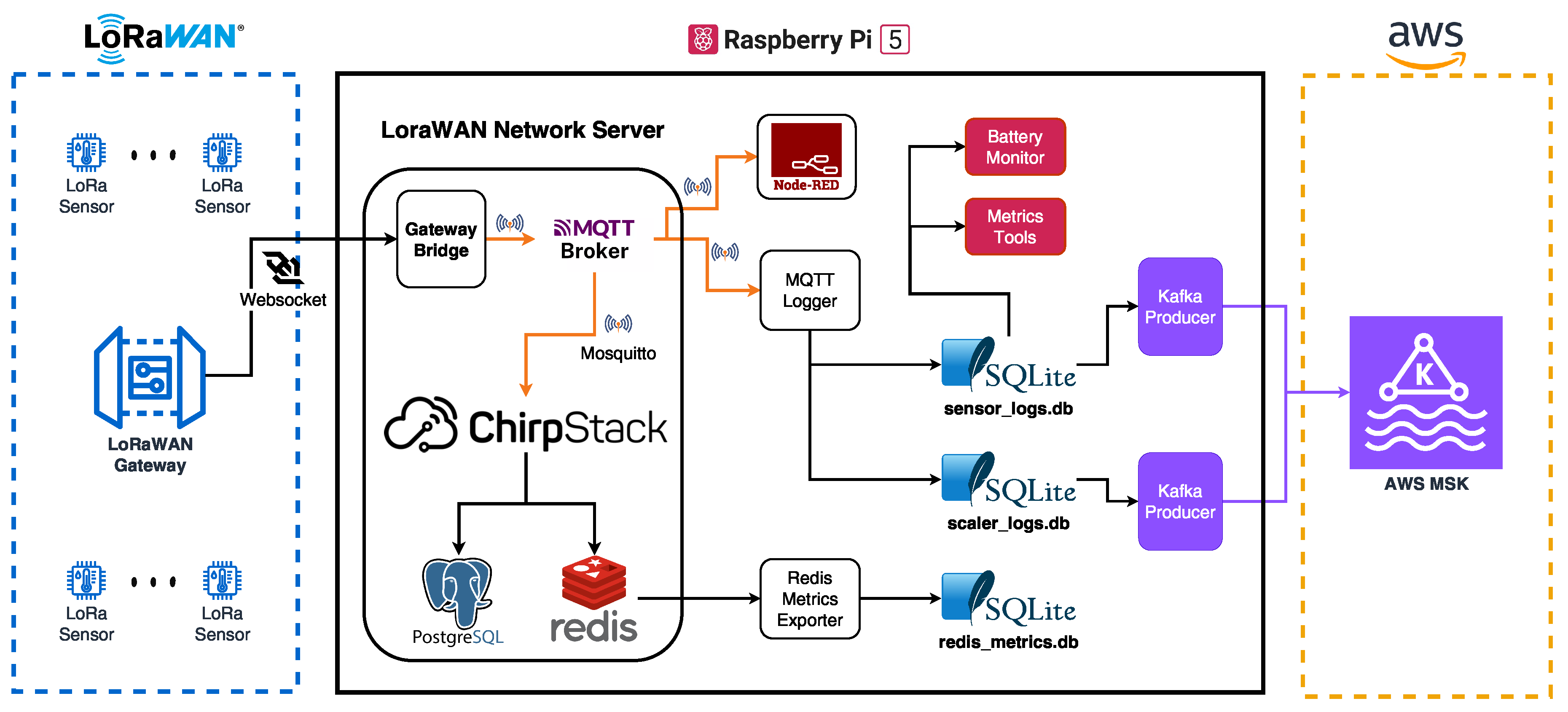

5.2. Field Data Acquisition and Edge Architecture

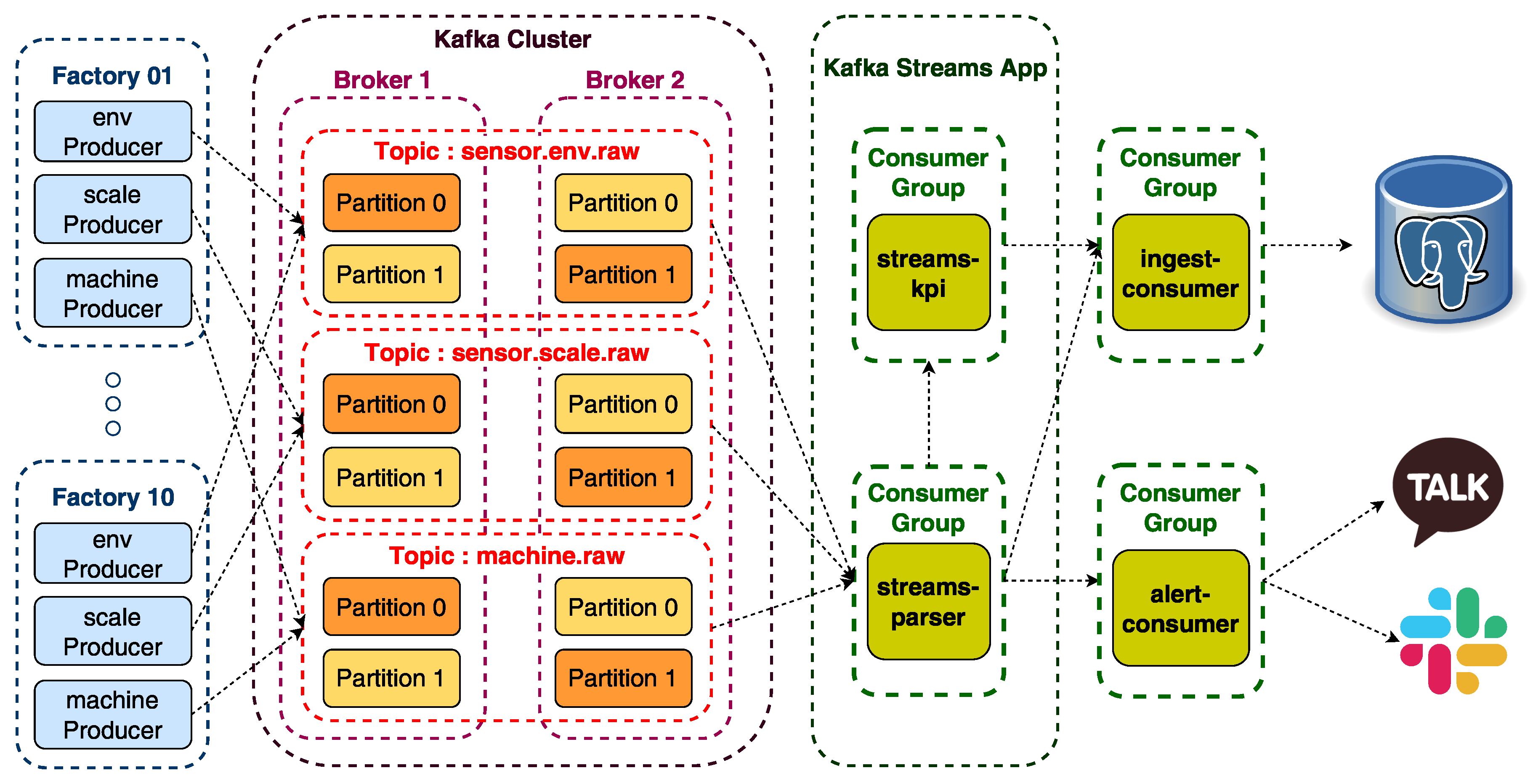

5.3. Cloud Processing and Event Flow

5.4. Operational Use

6. Evaluation of the Framework

6.1. Framework Compliance

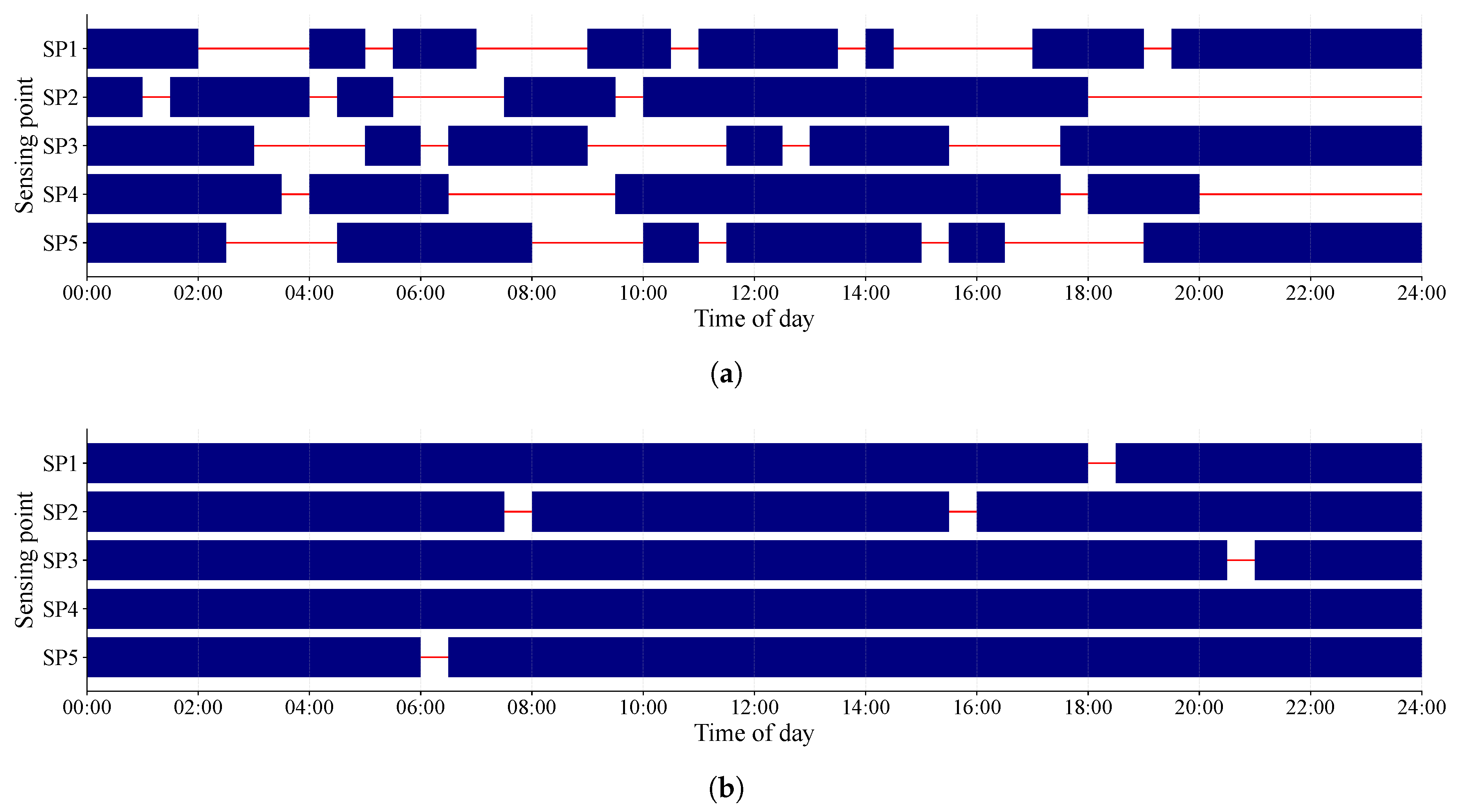

6.2. Operational Case: LoRa Reliability Improvement

7. Conclusions

Author Contributions

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest


| SME Constraint | Operational Risk | Requirements | Principles | Invariants |
|---|---|---|---|---|
| Heterogeneous devices and fragmented interfaces | RSK2 (Governance risk) | R2 (Governance) | P3 (Semantics must be contract-defined) | I2 (Traceability), I3 (Deterministic normalization) |
| Add-on sources and partial visibility | RSK2, RSK6 (Evolvability risk) | R2, R6 (Evolvability) | P3, P7 (Change as a first-class assumption) | I2, I7 (Onboarding consistency) |
| Unstable connectivity and constrained sensing | RSK1 (Continuity risk) | R1 (Continuity) | P1 (Continuity responsibility must be placed close to the source) | I1 (Durable capture) |
| Weak cross-segment observability | RSK3 (Diagnosability risk) | R3 (Diagnosability) | P4 (Observability must follow pipeline segments) | I4 (Segment-localizable failure) |
| Limited staff and high maintenance burden | RSK4 (Operability risk) | R4 (Operability) | P6 (Operations must remain lightweight, modular, and open) | I6 (Modular replacement) |
| Weak raw-data preservation | RSK5 (Reprocessability risk) | R5 (Reprocessability) | P2 (Raw events must be preserved before abstraction) | I5 (Replayability) |
| Tight functional coupling across the pipeline | RSK3, RSK4, RSK6 | R3, R4, R6 | P5 (Responsibilities must be separated by function) | I4, I6 |
| Frequent KPI, rule, schema, and source changes | RSK5, RSK6 | R5, R6 | P7 | I5, I7 |
| Budget sensitivity and lock-in concerns | RSK4, RSK6 | R4, R6 | P6 | I6, I7 |

| General Lifecycle Stage | Added Operational Meaning | Related Risks | Requirements | Principles | Invariants |
|---|---|---|---|---|---|
| Data sources | Stable source identity | RSK2, RSK6 | R2, R6 | P3, P7 | I2, I7 |
| Data ingestion | Source-near continuity and intake control | RSK1, RSK2 | R1, R2 | P1, P3 | I1, I2 |
| Data transmission | Recoverable transfer and visible discrepancies | RSK1, RSK3 | R1, R3 | P1, P4 | I1, I4 |
| Data preprocessing | Contract-defined and deterministic transformation | RSK2, RSK6 | R2, R6 | P3, P7 | I2, I3, I7 |
| Data loading | Controlled persistence with replay context | RSK5, RSK6 | R5, R6 | P2, P7 | I5, I7 |
| Data storage | Replay-ready and replaceable persistence | RSK4, RSK5 | R4, R5 | P2, P6 | I5, I6 |
| Data sinks | Governed downstream reuse | RSK2, RSK5 | R2, R5 | P3, P7 | I2, I5 |
| Monitoring and management | Lifecycle-wide observability and maintainability | RSK3, RSK4 | R3, R4 | P4, P6 | I4, I6 |

| Requirement | Representative evidence |
|---|---|
| Continuity | local persistence, recovery path, gap reduction |
| Governance | source identity, canonical semantics, time separation |
| Diagnosability | segment-wise visibility, stage discrepancy checks |
| Operability | modular stack, monitoring and intervention points |
| Reprocessability | raw-like retention, layered persistence |
| Evolvability | common event contract, extensible onboarding |

| Stage / Metric | Expected | Observed | Ratio (%) |
|---|---|---|---|
| Expected transmissions | 48,000 | – | – |
| Gateway-visible events | 48,000 | 16,400 | 34.2 |
| Edge/local logged events | 16,400 | 16,120 | 98.3 |
| Parsed/Ingested events | 16,120 | 15,980 | 99.1 |
| DB-persisted events | 15,980 | 15,870 | 99.3 |
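The ratio column in the table above follows from dividing each stage's observed count by the count observed at the stage immediately upstream. A short sketch of that arithmetic is given here, using only the counts from the table; the names `STAGE_COUNTS`, `segment_ratios`, and `weakest_segment` are illustrative.

```python
from typing import List, Tuple

# Observed counts per stage, in pipeline order, taken from the table above.
STAGE_COUNTS: List[Tuple[str, int]] = [
    ("Expected transmissions", 48_000),
    ("Gateway-visible events", 16_400),
    ("Edge/local logged events", 16_120),
    ("Parsed/Ingested events", 15_980),
    ("DB-persisted events", 15_870),
]


def segment_ratios(counts: List[Tuple[str, int]]) -> List[Tuple[str, float]]:
    """Retention of each stage relative to the stage just upstream,
    in percent, rounded to one decimal place."""
    ratios = []
    for (_, upstream), (name, observed) in zip(counts, counts[1:]):
        ratios.append((name, round(100.0 * observed / upstream, 1)))
    return ratios


def weakest_segment(counts: List[Tuple[str, int]]) -> Tuple[str, float]:
    """Stage with the lowest retention relative to its upstream stage."""
    return min(segment_ratios(counts), key=lambda item: item[1])
```

Applied to `STAGE_COUNTS`, `segment_ratios` reproduces the 34.2, 98.3, 99.1, and 99.3 percent figures, and `weakest_segment` points to the gateway-visible stage. Comparing adjacent stages rather than only the end-to-end ratio (15,870 of 48,000, about 33.1%) is what localizes the loss to a single segment.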

| Metric | Before | After | Change |
|---|---|---|---|
| Reception rate (%) | 33 | 95 | +62 pp |
| Persisted events/day | 15,870 | 45,600 | +187% |
| Average gap duration (min) | 145 | 18 | –87.6% |
| Long-gap events/day | 18 | 2 | –88.9% |
| Gateway-to-DB consistency (%) | 96.8 | 99.4 | +2.6 pp |
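The change column mixes two conventions: percentage-point differences for metrics that are already rates, and relative changes for counts and durations. The sketch below recomputes both from the before and after values in the table; the helper names `pp_change` and `pct_change` are illustrative.

```python
def pp_change(before: float, after: float) -> float:
    """Difference in percentage points between two rates given in percent."""
    return round(after - before, 1)


def pct_change(before: float, after: float) -> float:
    """Relative change in percent with respect to the 'before' value."""
    return round(100.0 * (after - before) / before, 1)


# Values taken from the table above.
reception_pp = pp_change(33, 95)              # rates: percentage points
persisted_pct = pct_change(15_870, 45_600)    # counts: relative change
avg_gap_pct = pct_change(145, 18)
long_gap_pct = pct_change(18, 2)
consistency_pp = pp_change(96.8, 99.4)
```

These evaluate to 62.0 pp, 187.3%, -87.6%, -88.9%, and 2.6 pp, which agrees with the table once the persisted-events figure is rounded to a whole percent.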

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).