Submitted: 08 April 2026

Posted: 10 April 2026


Abstract

Keywords:

1. Introduction

2. Background

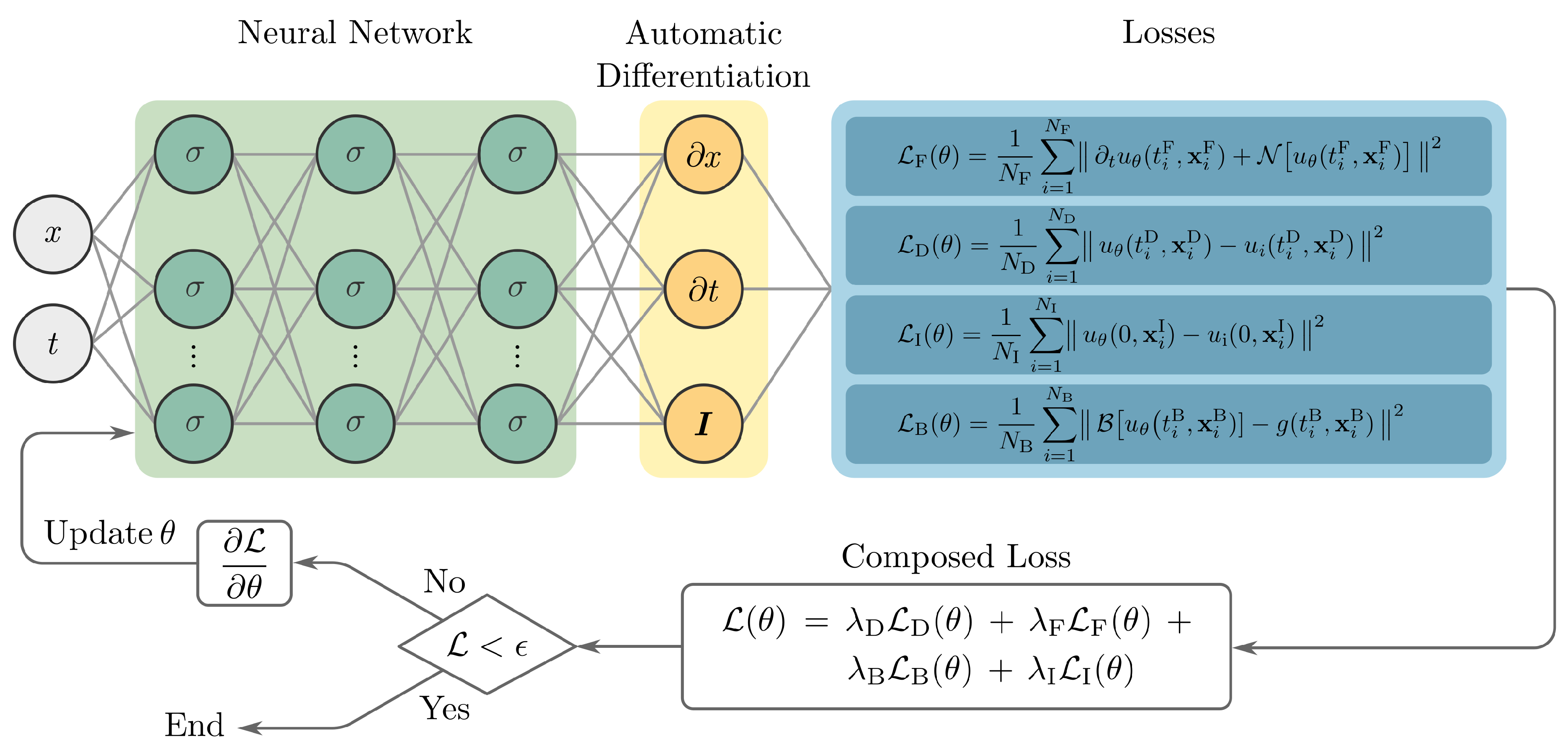

2.1. Physics-Informed Neural Networks

2.2. Electromagnetism and Maxwell’s equations

3. Methodology

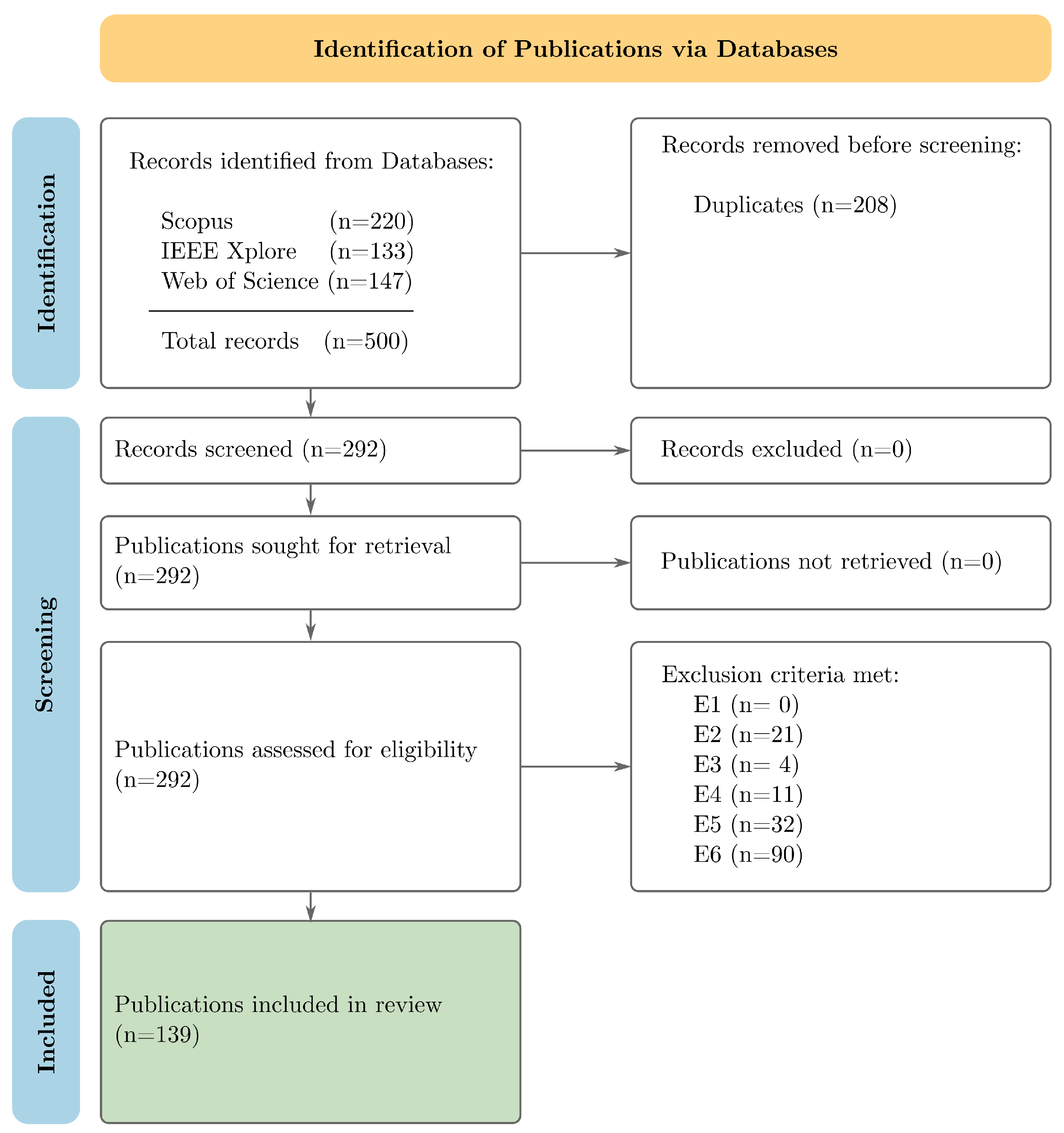

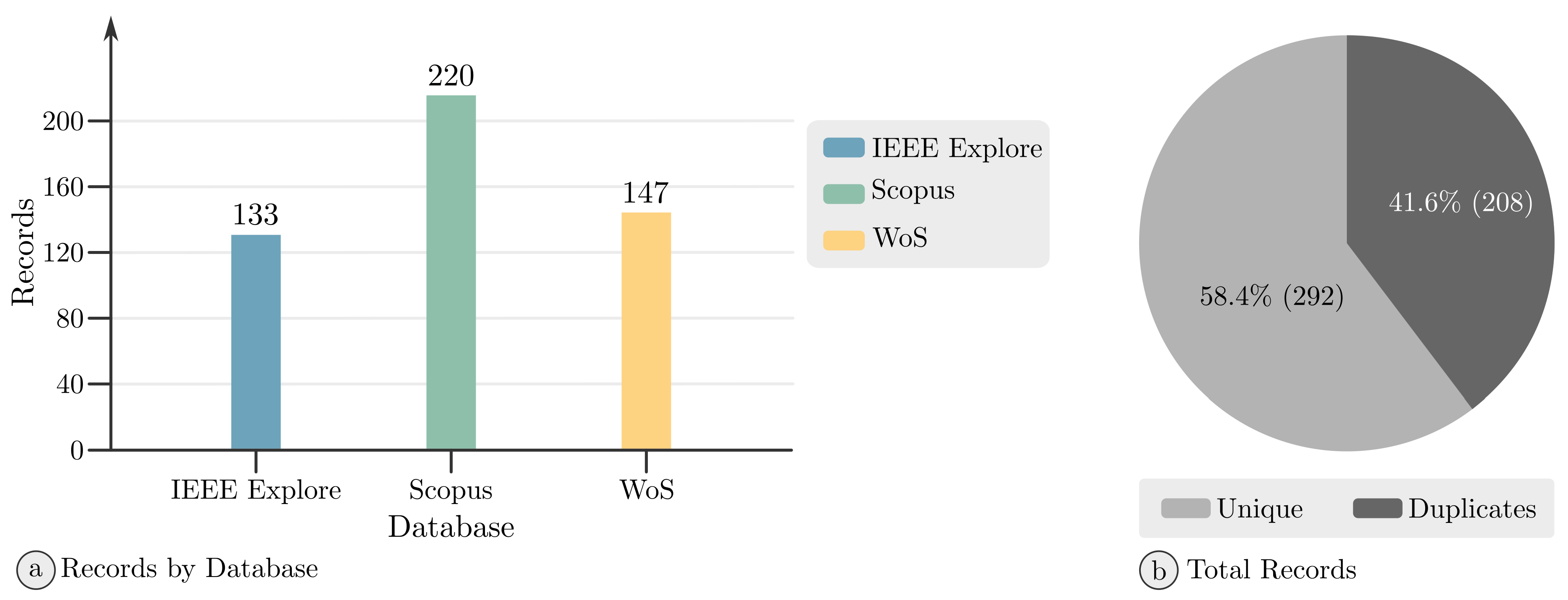

3.1. Systematic Literature Review

3.2. Research Questions

3.3. Search Strategy

- Scopus;

- Web of Science;

- IEEE Xplore.

3.4. Eligibility Criteria

3.5. Study Selection

3.6. Data Extraction

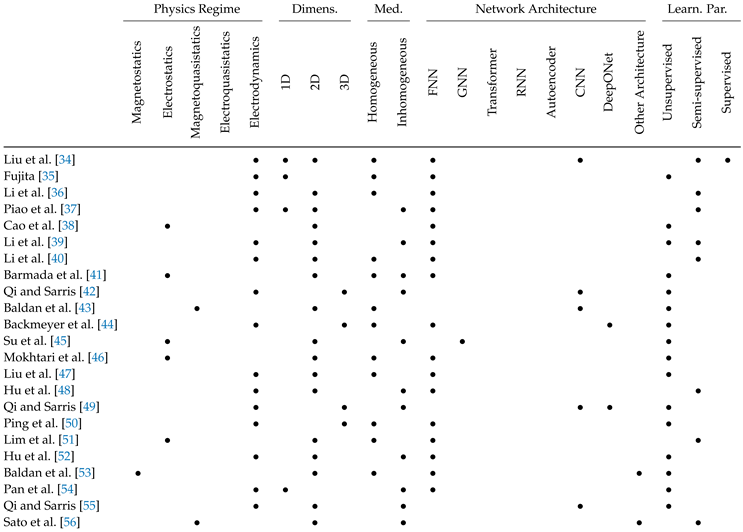

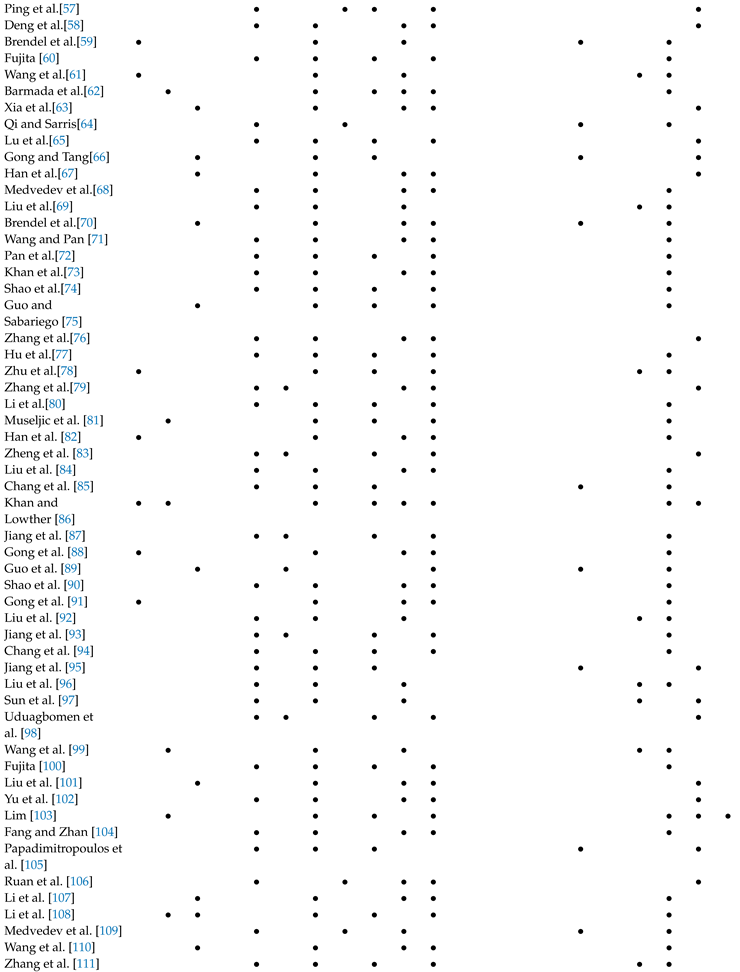

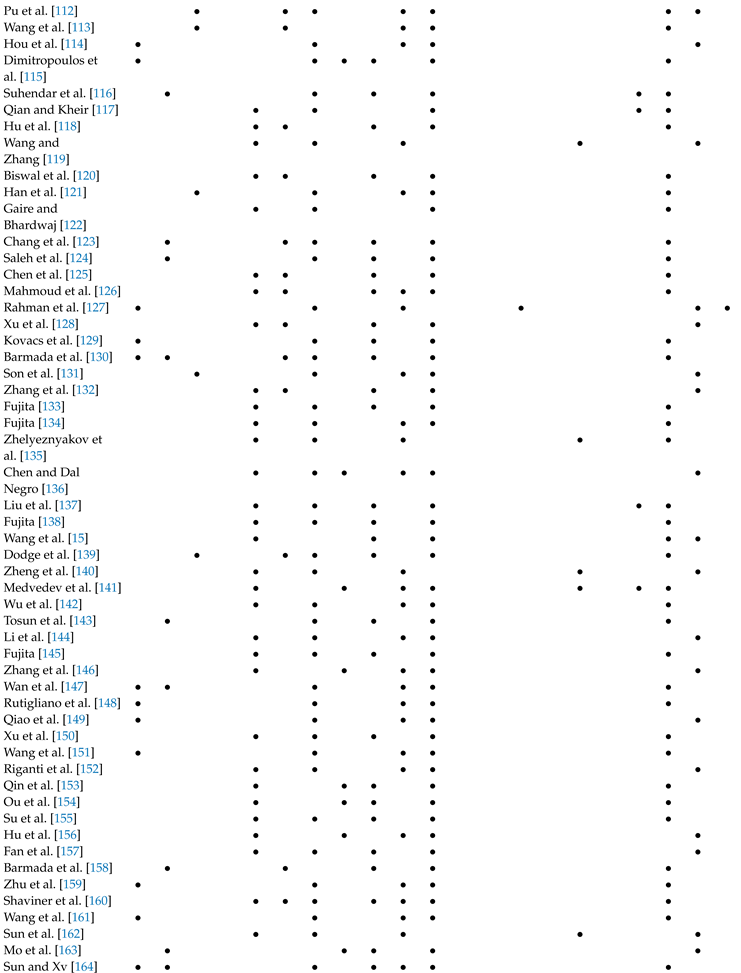

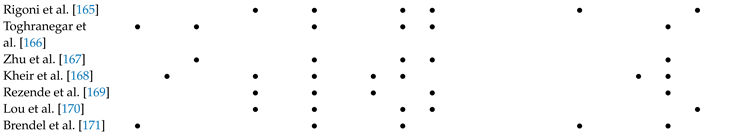

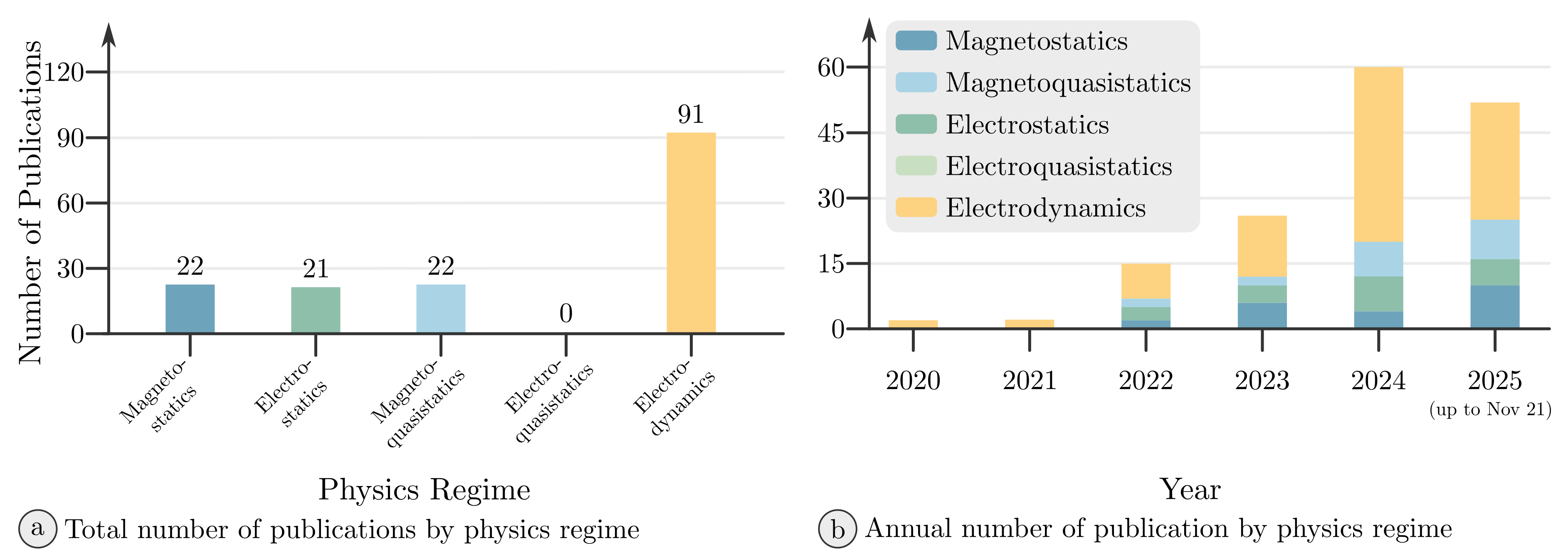

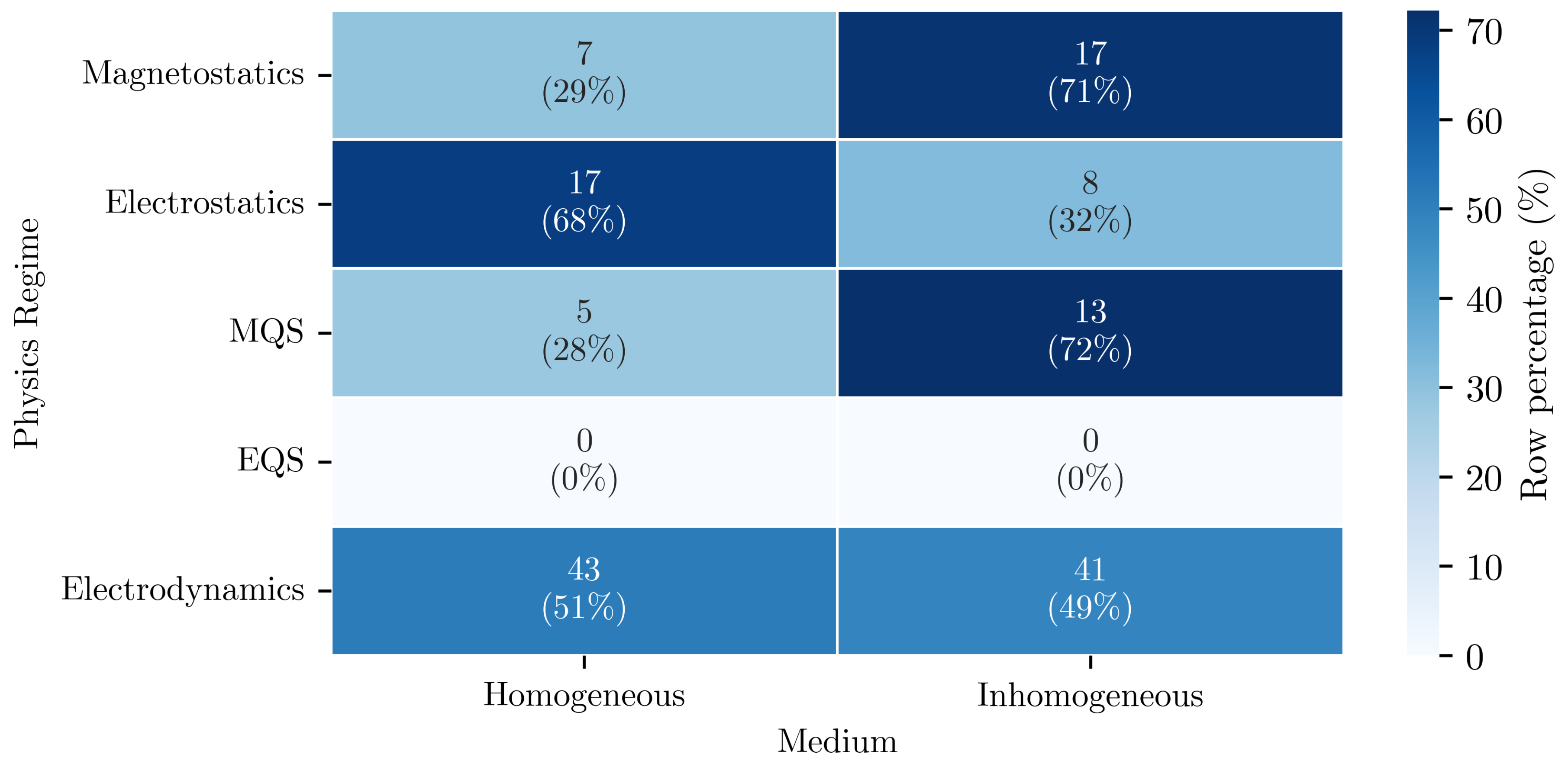

- Physics Regime: The characteristic Physics Regime records the subfield of electromagnetics addressed by a publication. To answer the research questions, five categories were introduced, namely Magnetostatics, Electrostatics, Magnetoquasistatics, Electroquasistatics, and Electrodynamics. Magnetostatics and Electrostatics deal with the purely static cases, while the quasistatic assumptions extend these as described in Section 2.2. The category Electrodynamics encompasses the dynamic regime governed by the time-dependent Maxwell’s equations, in which both the displacement current and the induction terms are retained and wave propagation is essential. Here, the term Electrodynamics does not refer to the full scope of the field theory of classical electrodynamics and therefore excludes the static regime.
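For orientation, the five regimes can be related to the macroscopic Maxwell’s equations through the terms each one neglects. The summary below is a sketch in standard textbook notation, not a reproduction of Section 2.2, whose notation may differ. It assumes linear, isotropic, time-invariant materials with D = εE, H = νB (ν = 1/μ), J = σE + J_s for an imposed source current J_s, the potentials E = −∇φ or B = ∇×A, a gauge in which the scalar-potential gradient vanishes in the magnetoquasistatic case, and no imposed current in the electroquasistatic case.

```latex
\begin{aligned}
&\text{Maxwell's equations:} && \nabla\times\mathbf{E} = -\frac{\partial\mathbf{B}}{\partial t},\quad \nabla\times\mathbf{H} = \mathbf{J} + \frac{\partial\mathbf{D}}{\partial t},\quad \nabla\cdot\mathbf{D} = \rho,\quad \nabla\cdot\mathbf{B} = 0\\
&\text{Electrostatics } (\partial_t = 0): && -\nabla\cdot(\varepsilon\nabla\varphi) = \rho\\
&\text{Magnetostatics } (\partial_t = 0): && \nabla\times(\nu\nabla\times\mathbf{A}) = \mathbf{J}\\
&\text{Magnetoquasistatics } (\partial_t\mathbf{D}\ \text{neglected}): && \nabla\times(\nu\nabla\times\mathbf{A}) + \sigma\frac{\partial\mathbf{A}}{\partial t} = \mathbf{J}_s\\
&\text{Electroquasistatics } (\partial_t\mathbf{B}\ \text{neglected}): && \nabla\cdot(\sigma\nabla\varphi) + \frac{\partial}{\partial t}\nabla\cdot(\varepsilon\nabla\varphi) = 0\\
&\text{Electrodynamics (all terms retained):} && \nabla\times(\nu\nabla\times\mathbf{E}) + \sigma\frac{\partial\mathbf{E}}{\partial t} + \varepsilon\frac{\partial^2\mathbf{E}}{\partial t^2} = -\frac{\partial\mathbf{J}_s}{\partial t}
\end{aligned}
```

The static regimes drop all time derivatives, each quasistatic regime drops exactly one of the two coupling terms between the electric and magnetic fields, and only the electrodynamic regime keeps both, which is what allows wave propagation.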

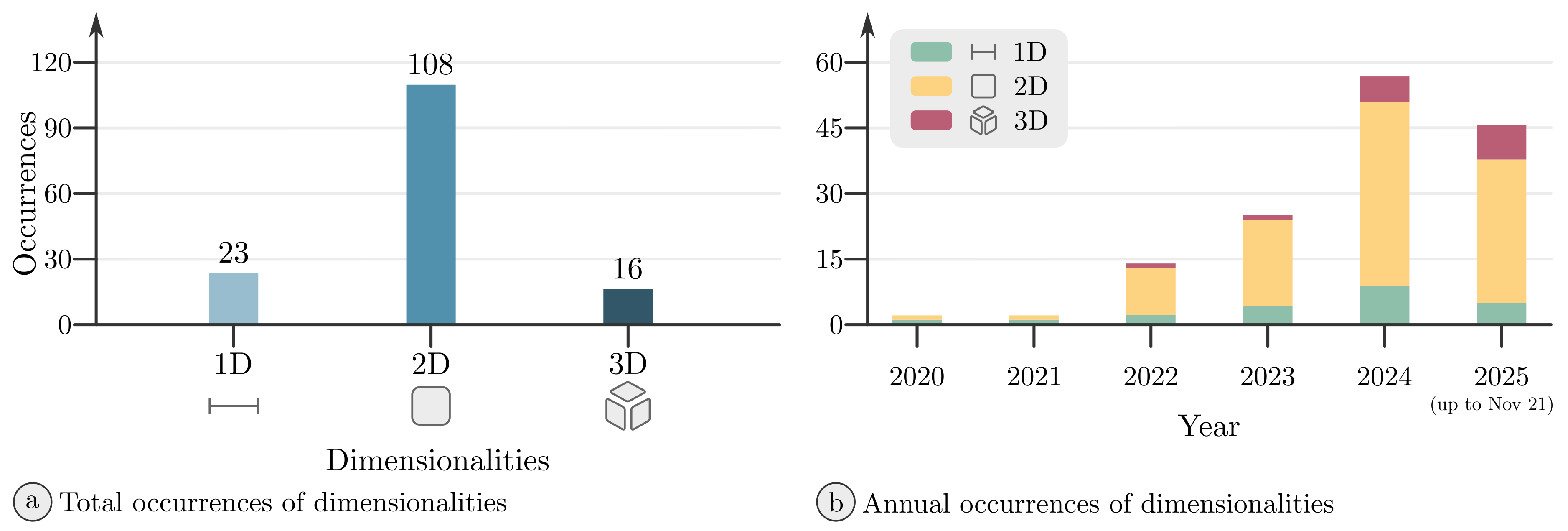

- Dimensionality: The characteristic Dimensionality delineates the spatial dimensions of the addressed problem with the categories 1D, 2D, and 3D. In this context, time is not considered a separate dimension; rather, it is inherently incorporated within the categories that define the physics regime. A category for 0D, or lumped-element, models is not included, as such problems do not resolve spatial field variations; the system is instead represented by spatially aggregated quantities (e.g., charge, current, flux, energy) or an equivalent circuit model. Such publications were excluded from further processing because they do not deal with Maxwell’s field equations. Problems with 1D, 2D, and 3D dimensionality, in contrast, explicitly resolve the spatial dimensions and consider the underlying field equations.
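The rule that time is not counted as a dimension can be illustrated with the inputs of a coordinate-based PINN. The sketch below is hypothetical: `dimensionality_category` and `sample_inputs` are illustrative names, and the unit box with uniform random sampling is an assumption, not the setup of any reviewed study.

```python
import numpy as np


def dimensionality_category(spatial_dims):
    """Map the number of resolved spatial coordinates to a Dimensionality category."""
    if spatial_dims == 0:
        # 0D (lumped-element) models resolve no field variation and were excluded
        return None
    if spatial_dims in (1, 2, 3):
        return f"{spatial_dims}D"
    raise ValueError("spatial_dims must be 0, 1, 2, or 3")


def sample_inputs(n, spatial_dims, time_dependent, seed=0):
    """Uniform collocation points in the unit box; columns are x, (y, z), then t if present."""
    rng = np.random.default_rng(seed)
    n_cols = spatial_dims + (1 if time_dependent else 0)
    return rng.uniform(0.0, 1.0, size=(n, n_cols))


# A transient 2D problem: the network receives three inputs (x, y, t) ...
points = sample_inputs(100, spatial_dims=2, time_dependent=True)
# ... but time is not counted, so the problem is still categorized as 2D
category = dimensionality_category(2)
```

The extra time column changes the network's input size but not the category; the temporal behavior is captured by the Physics Regime instead.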

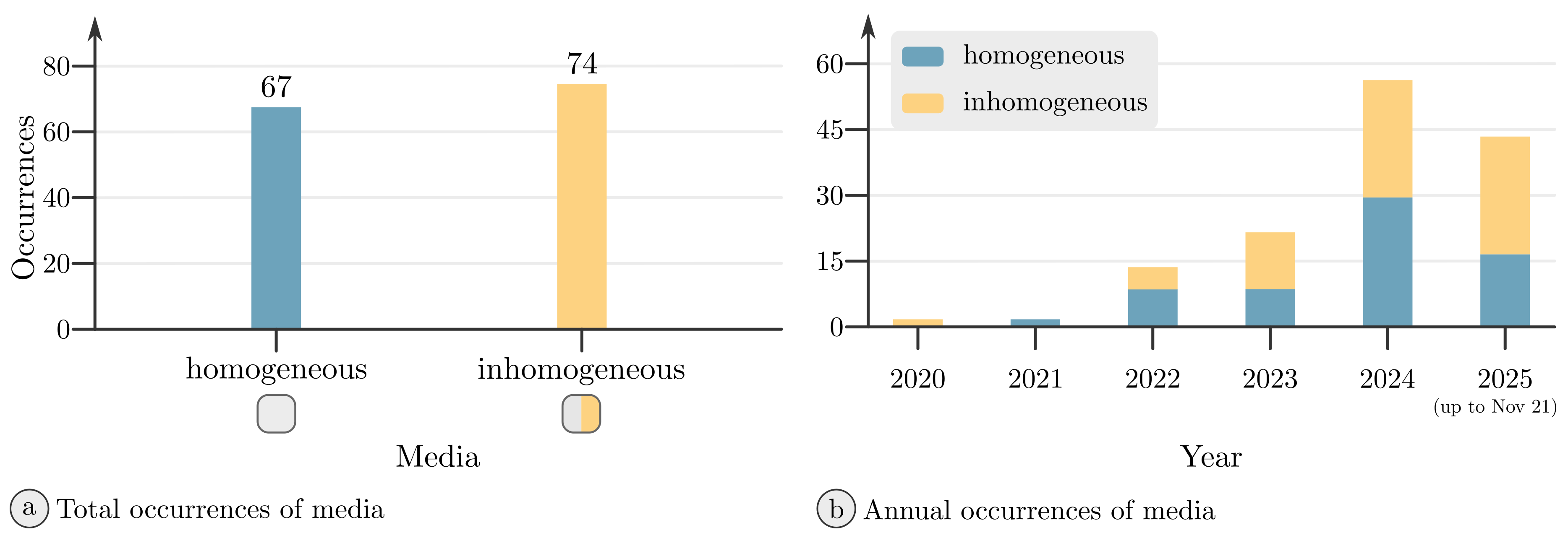

- Medium: The characteristic Medium documents the absence or presence of spatially varying material properties in the computational domain. The category Homogeneous applies when the material properties, e.g., permittivity, permeability, polarization, or magnetization, do not vary in space within the domain, while the category Inhomogeneous applies when these properties vary with position. Continuously and discretely varying properties were aggregated under the latter without further distinction. Boundary conditions that emulate material boundaries are not regarded as a variation in material properties and therefore do not lead to the classification Inhomogeneous.
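A minimal sketch of the Homogeneous/Inhomogeneous rule, assuming the material property is available as a function of position. The permittivity profiles and the sampling-based helper `medium_category` are hypothetical illustrations; a check on sample points can miss variation between them, so it only mirrors the classification logic rather than replacing a reading of the problem description.

```python
import numpy as np

EPS0 = 8.854187817e-12  # vacuum permittivity in F/m


def eps_uniform(x):
    return np.full_like(x, 4.0 * EPS0)  # constant relative permittivity of 4


def eps_layered(x):
    return np.where(x < 0.5, 1.0, 4.0) * EPS0  # discrete jump at x = 0.5


def eps_graded(x):
    return (1.0 + 3.0 * x) * EPS0  # continuous variation from 1 to 4


def medium_category(material_fn, points):
    """Inhomogeneous if the property takes different values on the sample points."""
    values = material_fn(points)
    varies = not np.allclose(values, values[0], rtol=1e-12, atol=0.0)
    # continuous and discrete variations fall into the same category;
    # a boundary condition emulating an interface would not appear in material_fn at all
    return "Inhomogeneous" if varies else "Homogeneous"


x = np.linspace(0.0, 1.0, 101)
```

Both `eps_layered` and `eps_graded` are classified as Inhomogeneous, reflecting that the review does not distinguish discrete from continuous variation.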

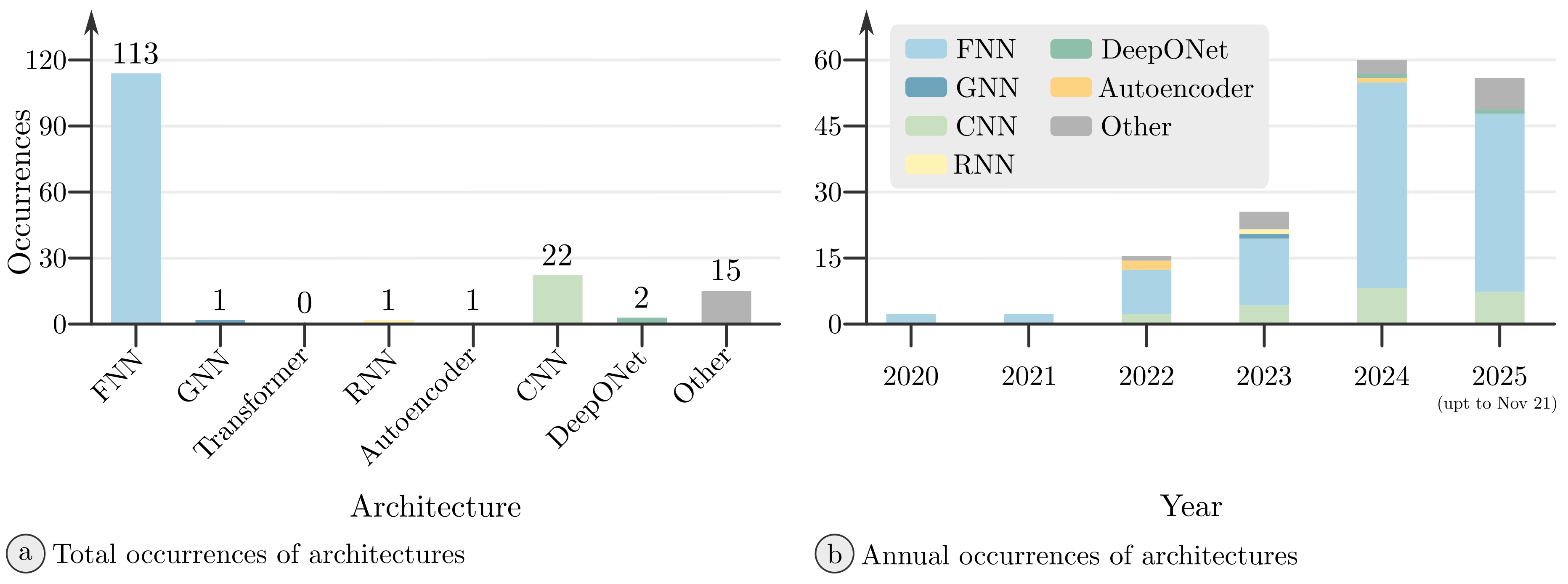

- Network Architecture: The architecture of a NN defines its high-level structural design, the organization of its computation, and the flow of information through it. It specifies the types of computational units and layers used (e.g., fully connected layers, convolutional layers, attention heads, or recurrent units) and how they are connected (e.g., forward connections, recurrent connections, parallel branches, or residual connections). The architectures on which the PINNs in the selected publications are based were recorded in the characteristic Network Architecture. Where suitable, architectures were aggregated: U-Nets and ResNets were categorized as CNNs, and LSTMs as RNNs. Architectures that distinctly differ from the generally established architectures were combined under Other.
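The aggregation step can be expressed as a lookup. Only the mappings U-Net → CNN, ResNet → CNN, and LSTM → RNN come from the text; the set of established categories (FNN, CNN, RNN, GNN, DeepONet) is an assumption drawn from the architectures named elsewhere in this review, and `aggregate_architecture` and `count_categories` are hypothetical helpers.

```python
from collections import Counter

# Sub-architectures folded into a parent category (aggregation rule from the text)
AGGREGATION = {
    "U-Net": "CNN",
    "ResNet": "CNN",
    "LSTM": "RNN",
}

# Assumed set of established categories; anything else is collected under "Other"
ESTABLISHED = {"FNN", "CNN", "RNN", "GNN", "DeepONet"}


def aggregate_architecture(name):
    """Return the Network Architecture category for a reported architecture name."""
    category = AGGREGATION.get(name, name)
    return category if category in ESTABLISHED else "Other"


def count_categories(reported):
    """Tally categories over reported architectures (one entry per PINN)."""
    return Counter(aggregate_architecture(name) for name in reported)
```

Keeping the mapping in one table makes the aggregation decisions explicit and easy to revise if a category is later split out of Other.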

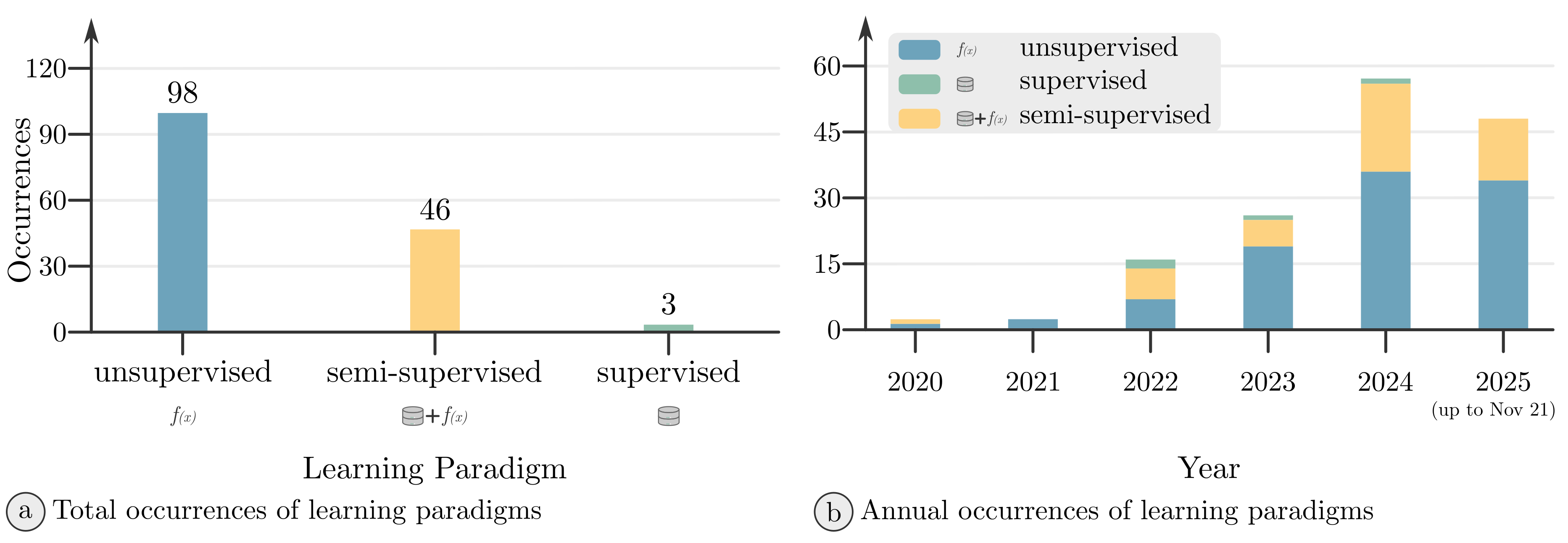

- Learning Paradigm: The characteristic Learning Paradigm describes the type and setting of learning, which governs how data are utilized in the learning process of a DL model. The categories used are supervised learning, unsupervised learning, and semi-supervised learning. In contrast to the predominant use of these terms in DL, a more specific definition suited to the context of PINNs is applied. Here, supervised learning means the utilization of labeled data as ground truth without a physics term in the loss function. Consequently, models designated as employing supervised learning are, by definition, not PINNs. This deviates from the interpretation proposed by Raissi et al. [2], who employ the more general definition used in DL: in their approach, the physics loss is conceptualized as analogous to labeled data, which results in PINNs being categorized as a form of supervised learning. In this study, semi-supervised learning is defined as utilizing labeled data as well as physics loss terms, while purely unsupervised learning uses no labeled data and relies entirely on the physics loss. Publications to which only the category Supervised Learning applied were not included in further processing.
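Under these definitions, the paradigm is determined by which loss terms are present. The following is a sketch under stated assumptions: it pairs a classification helper with a composite loss for a toy problem, the 1D Laplace equation d²φ/dx² = 0 for an electrostatic potential in a charge-free homogeneous region. The function names, weights, and labeled points are hypothetical, and the second derivative is approximated by central differences for brevity, whereas PINNs typically obtain it by automatic differentiation of the network output.

```python
import numpy as np


def learning_paradigm(uses_labeled_data, uses_physics_loss):
    """Category as defined in this review; Supervised (no physics loss) is not a PINN."""
    if uses_labeled_data and uses_physics_loss:
        return "Semi-supervised"
    if uses_physics_loss:
        return "Unsupervised"
    if uses_labeled_data:
        return "Supervised"
    raise ValueError("a model needs labeled data, a physics loss, or both")


def composite_loss(phi, x_col, x_data=None, phi_data=None, w_phys=1.0, w_data=1.0, h=1e-3):
    """Physics loss for d^2(phi)/dx^2 = 0 plus an optional data loss on labeled points."""
    # central-difference second derivative at the collocation points
    d2phi = (phi(x_col + h) - 2.0 * phi(x_col) + phi(x_col - h)) / h**2
    loss_phys = np.mean(d2phi**2)
    loss_data = 0.0
    if x_data is not None:
        loss_data = np.mean((phi(x_data) - phi_data) ** 2)
    return w_phys * loss_phys + w_data * loss_data


def candidate(x):
    return 2.0 * x + 1.0  # linear potential: satisfies Laplace and the labels below


x_col = np.linspace(0.1, 0.9, 9)  # collocation points for the physics loss
x_data = np.array([0.0, 1.0])  # hypothetical labeled points
phi_data = np.array([1.0, 3.0])

loss_unsup = composite_loss(candidate, x_col)  # physics loss only
loss_semi = composite_loss(candidate, x_col, x_data, phi_data)  # physics + data loss
```

Dropping the data term gives the unsupervised setting; dropping the physics term would give a purely supervised model, which this review does not count as a PINN.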

4. Results

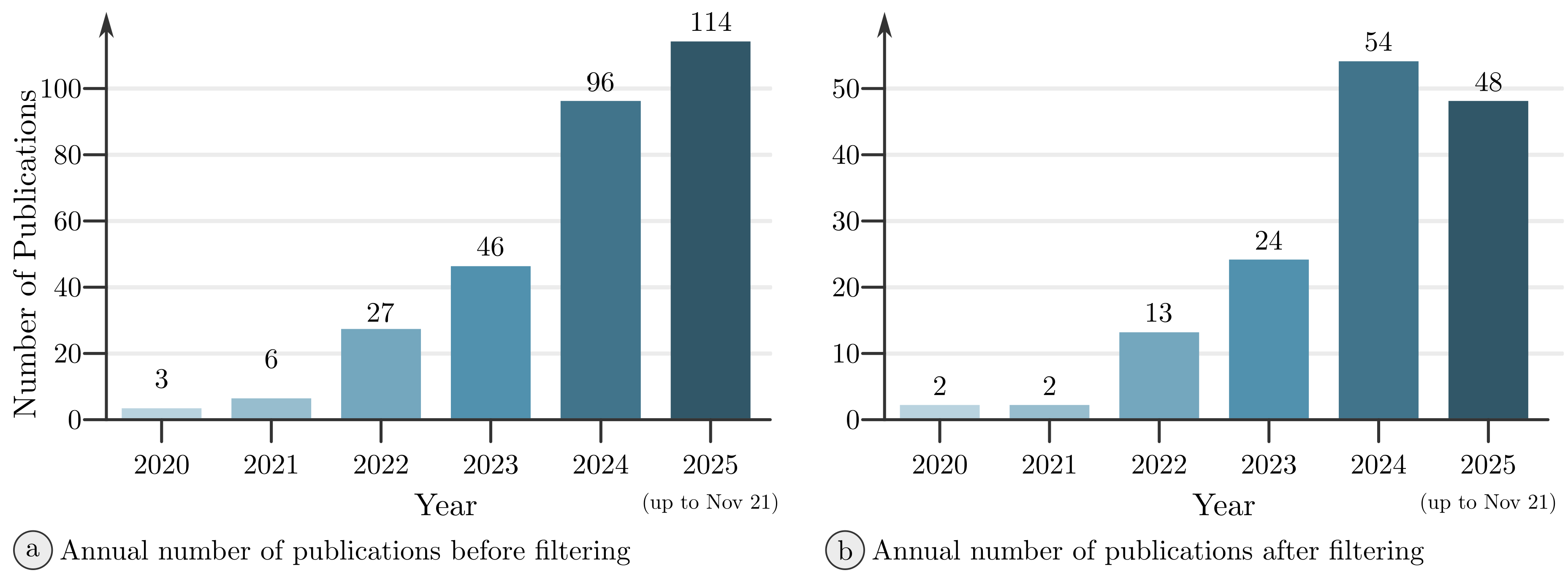

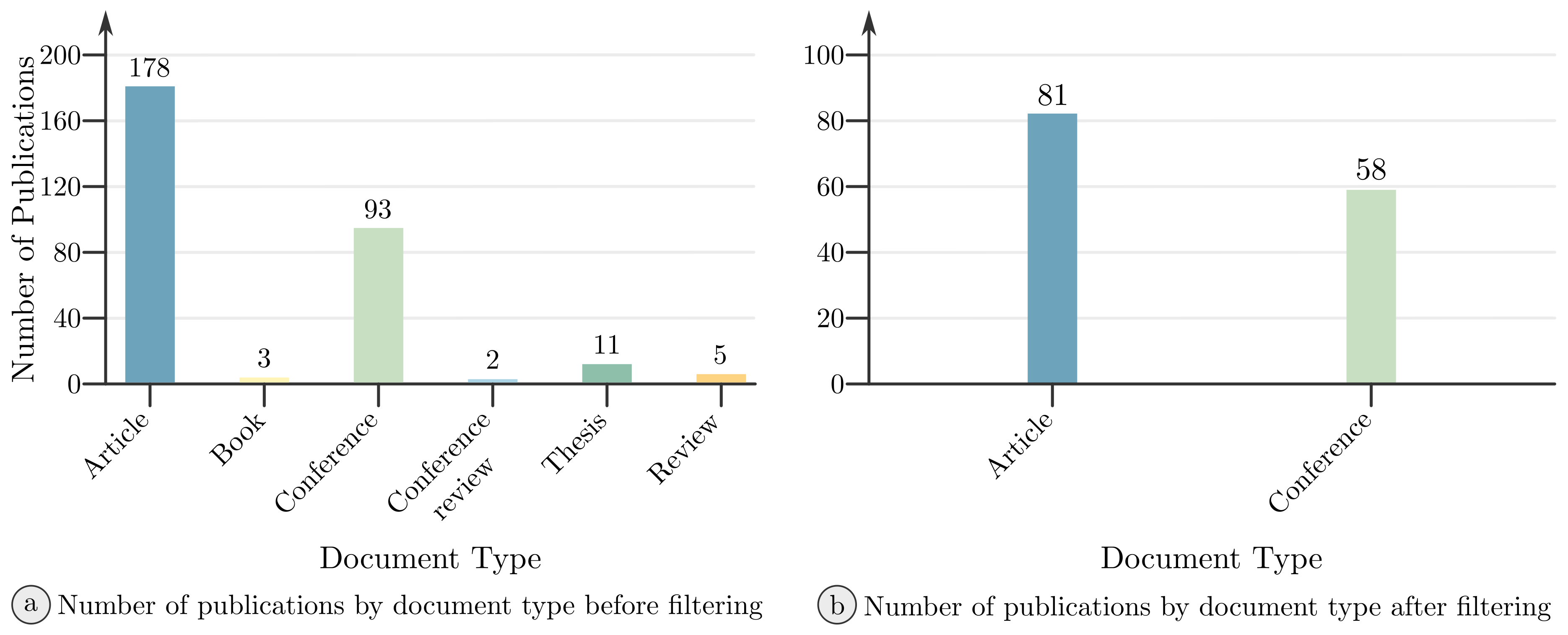

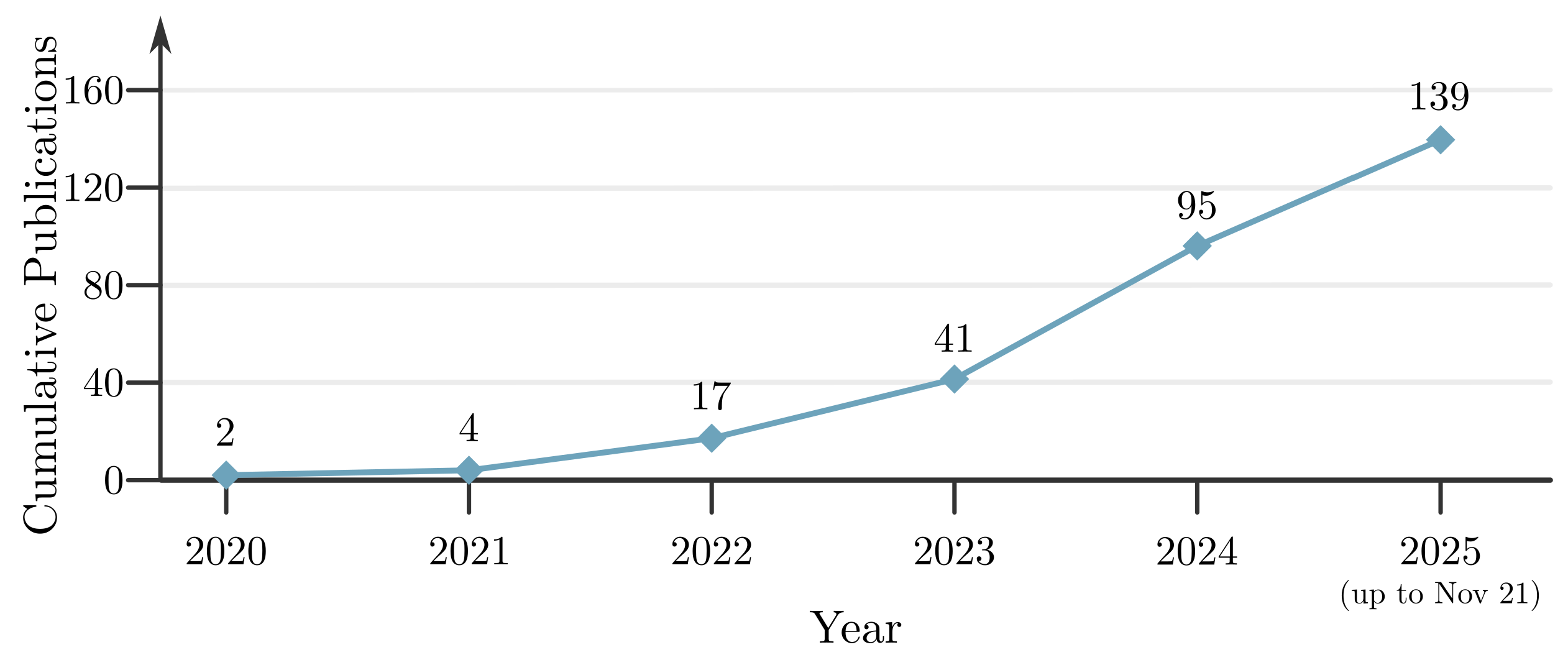

4.1. Bibliometric Analysis

4.2. RQ1: How Extensively Are PINNs Applied Within Electromagnetics?

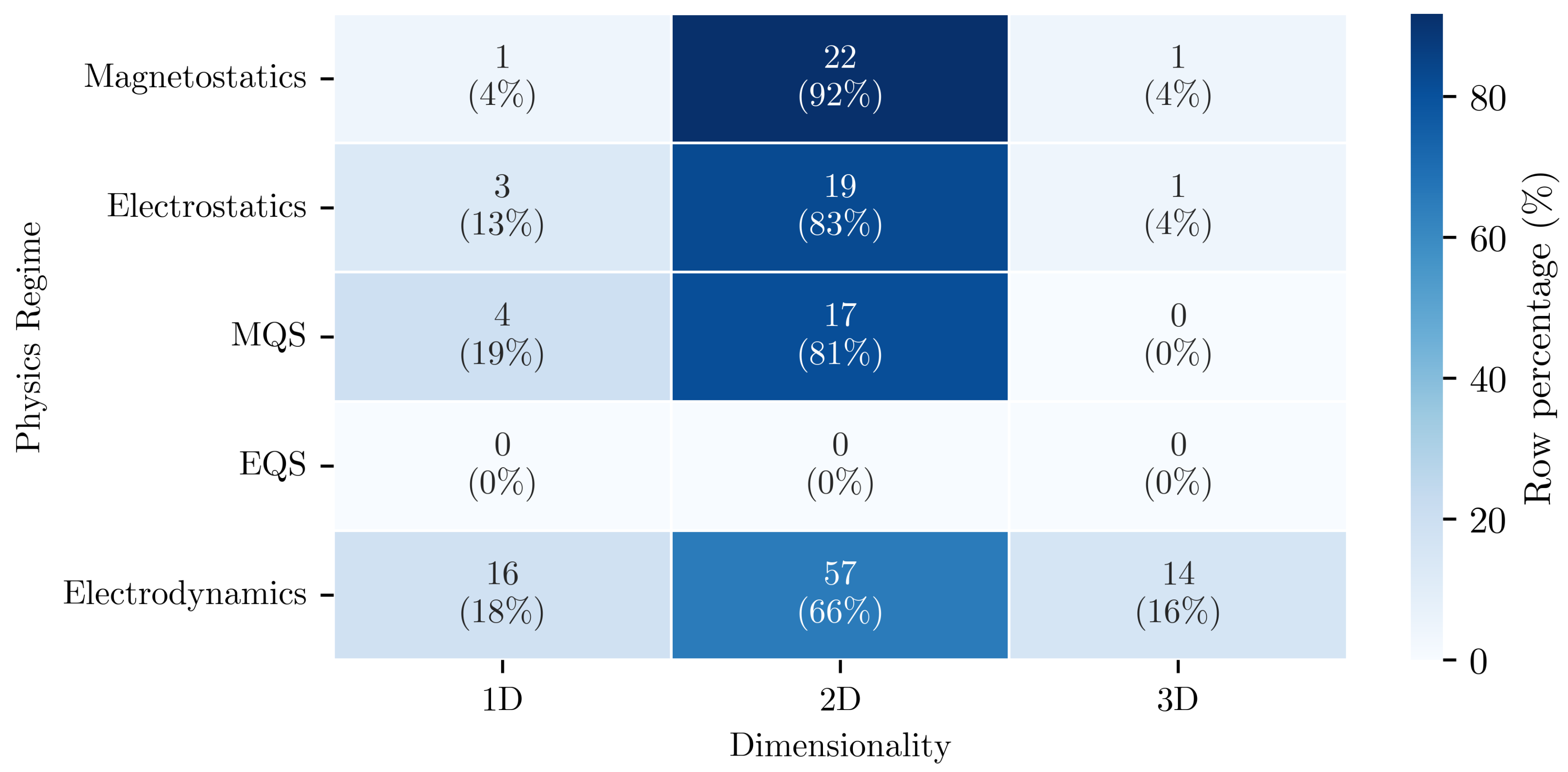

4.3. RQ2: Which Subfields of Electromagnetics Are PINNs Applied to?

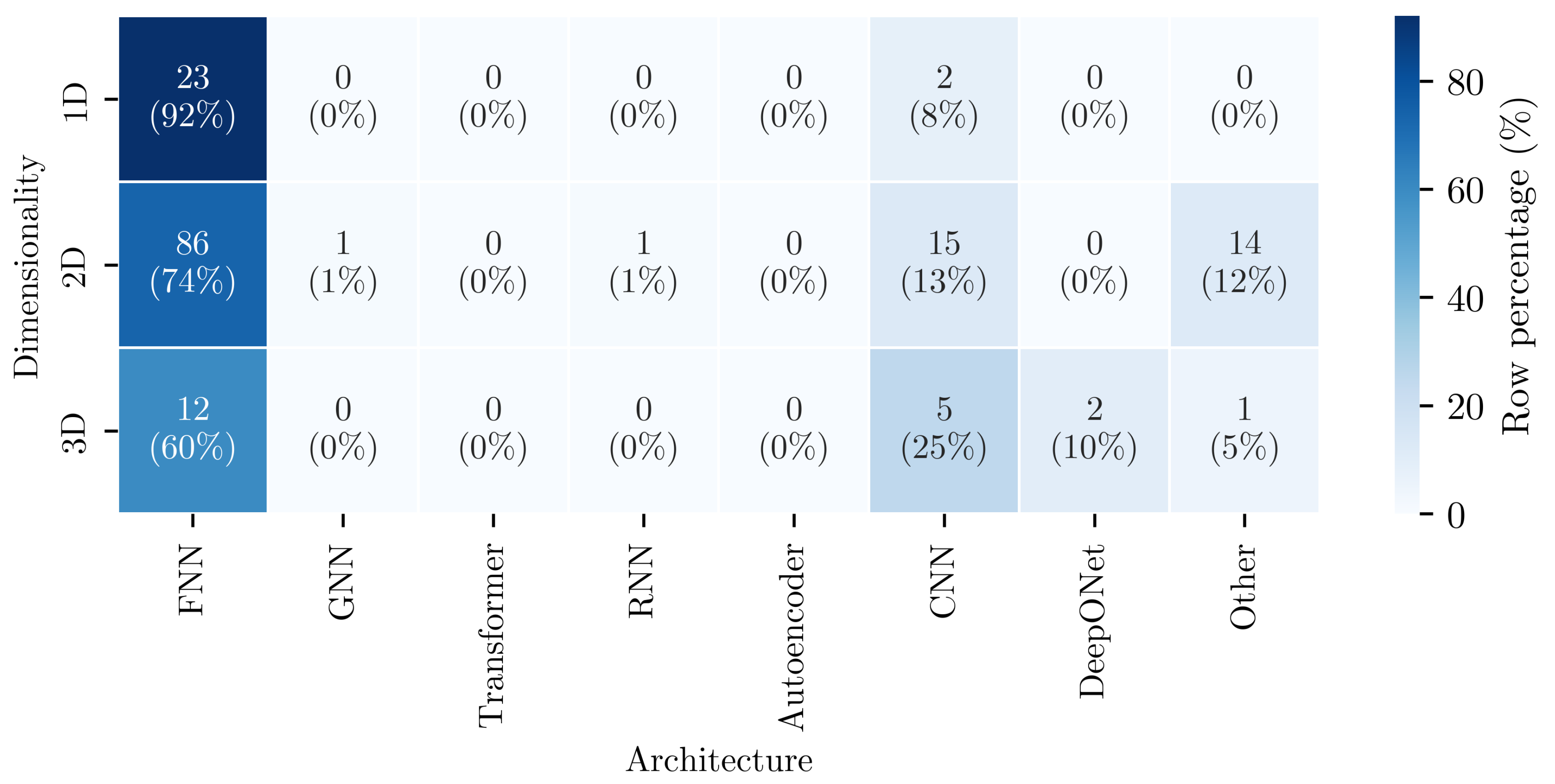

4.4. RQ3: Which Network Architectures Are Used for PINNs in Solving Maxwell’s Equations?

4.5. RQ4: In What Spatial Dimensionality Are the Electromagnetic Problems Solved?

4.6. RQ5: Are the Reviewed Domains Divided into Different Media?

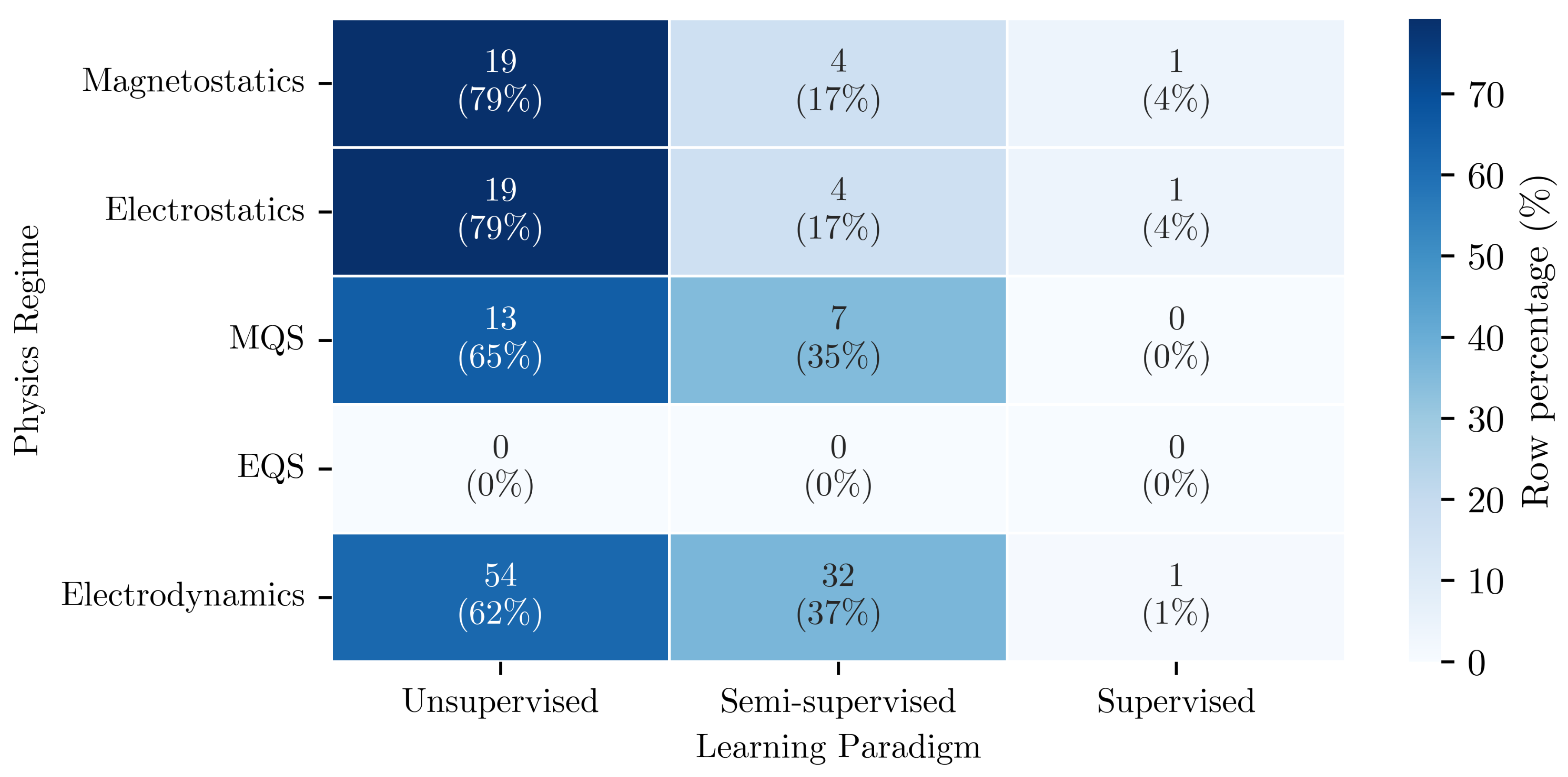

4.7. RQ6: Which Learning Paradigms Are Used for Solving Maxwell’s Equations with PINNs?

4.8. RQ7: Are There Associations Between the Extracted Characteristics of the Reviewed Publications?

5. Discussion

5.1. Interpretation of Findings

5.2. Perspective and Research Opportunities

- The absence of publications categorized as electroquasistatic suggests potential for further research. This topical gap might be addressed through targeted studies or domain outreach.

- The results show limited diversity in network architectures beyond FNNs. More advanced architectures, e.g., CNNs, GNNs, or DeepONets, are rarely deployed, although architectural diversity increases with spatial dimensionality, with 3D studies already employing a broader range of architectures. Consequently, the need for architectural experimentation to meet problem-specific requirements is most pressing in lower-dimensional settings. Furthermore, systematic comparative studies that evaluate architecture choices on representative problems involving Maxwell’s equations would be advantageous.

- With regard to learning paradigms, semi-supervised learning is applied only sparsely. While unsupervised learning is applied in most studies, labeled data from measurements or numerical simulations are rarely integrated. The use of semi-supervised learning varies by physics regime, with magnetoquasistatic and electrodynamic studies employing it most. Consequently, the integration of labeled data presents an opportunity particularly for magnetostatic and electrostatic applications, where semi-supervised approaches remain underutilized.

- The majority of publications in the SLR concentrate on 2D domains, with considerably less attention given to 3D domains. This gap is most pronounced in the static and quasistatic regimes. Extending PINN-based approaches to 3D formulations in these regimes presents a specific opportunity for future work.

- Associations were found between several of the extracted characteristics. This indicates that methodological choices are not made independently of the problem under investigation. Future studies might therefore benefit from reporting and analyzing these interactions explicitly, rather than treating characteristics such as Network Architecture, Learning Paradigm, and Medium as isolated decisions.
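How such associations are quantified is described in Section 4.8. As a hedged illustration only, one common effect size for two categorical characteristics is Cramér's V computed from their contingency table. The sketch assumes a table of publication counts with no empty rows or columns and is not necessarily the statistic used in this review; `cramers_v` is a hypothetical helper.

```python
import numpy as np


def cramers_v(table):
    """Cramér's V (without bias correction) for an r x c table of counts."""
    observed = np.asarray(table, dtype=float)
    n = observed.sum()
    # expected counts under independence: row total * column total / n
    expected = np.outer(observed.sum(axis=1), observed.sum(axis=0)) / n
    chi2 = ((observed - expected) ** 2 / expected).sum()
    r, c = observed.shape
    return float(np.sqrt(chi2 / (n * (min(r, c) - 1))))
```

V ranges from 0 (no association) to 1 (one characteristic fully determines the other); for example, the counts [[10, 0], [0, 10]] give V = 1, while [[10, 10], [10, 10]] give V = 0.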

5.3. Limitations

6. Conclusion

Author Contributions

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest

Appendix A. List of Reviewed Publications


References

- Lagaris, I.; Likas, A.; Fotiadis, D. Artificial neural networks for solving ordinary and partial differential equations. IEEE Transactions on Neural Networks 1998, 9, 987–1000. [Google Scholar] [CrossRef] [PubMed]

- Raissi, M.; Perdikaris, P.; Karniadakis, G.E. Physics Informed Deep Learning (Part I): Data-driven Solutions of Nonlinear Partial Differential Equations, 2017, [arXiv:cs.AI/1711.10561].

- Raissi, M.; Perdikaris, P.; Karniadakis, G.E. Physics Informed Deep Learning (Part II): Data-driven Discovery of Nonlinear Partial Differential Equations, 2017, [arXiv:cs.AI/1711.10566].

- Raissi, M.; Perdikaris, P.; Karniadakis, G. Physics-informed neural networks: A deep learning framework for solving forward and inverse problems involving nonlinear partial differential equations. Journal of Computational Physics 2019, 378, 686–707. [Google Scholar] [CrossRef]

- Karniadakis, G.; Kevrekidis, Y.; Lu, L.; Perdikaris, P.; Wang, S.; Yang, L. Physics-informed machine learning. Nature Reviews Physics 2021, 1–19. [Google Scholar] [CrossRef]

- Farea, A.; Yli-Harja, O.; Emmert-Streib, F. Understanding Physics-Informed Neural Networks: Techniques, Applications, Trends, and Challenges. AI 2024, 5, 1534–1557. [Google Scholar] [CrossRef]

- Michaloglou, A.; Papadimitriou, I.; Gialampoukidis, I.; Vrochidis, S.; Kompatsiaris, I. Physics-Informed Neural Networks in Materials Modeling and Design: A Review. Archives of Computational Methods in Engineering 2025. [Google Scholar] [CrossRef]

- Fan, W.; Chen, X. Embedding Physics into Machine Learning: A Review of Physics Informed Neural Networks as Partial Differential Equation Forward Solvers. Tsinghua Science and Technology 2026, 31, 1326–1364. [Google Scholar] [CrossRef]

- Pioch, F.; Harmening, J.H.; Müller, A.M.; Peitzmann, F.J.; Schramm, D.; el Moctar, O. Turbulence Modeling for Physics-Informed Neural Networks: Comparison of Different RANS Models for the Backward-Facing Step Flow. Fluids 2023, 8. [Google Scholar] [CrossRef]

- Harmening, J.H.; Peitzmann, F.J.; el Moctar, O. Effect of network architecture on physics-informed deep learning of the Reynolds-averaged turbulent flow field around cylinders without training data. Frontiers in Physics 2024, 12. [Google Scholar] [CrossRef]

- Harmening, J.H.; Pioch, F.; Fuhrig, L.; Peitzmann, F.J.; Schramm, D.; el Moctar, O. Data-assisted training of a physics-informed neural network to predict the separated Reynolds-averaged turbulent flow field around an airfoil under variable angles of attack. Neural Computing and Applications 2024, 36. [Google Scholar] [CrossRef]

- Raissi, M.; Yazdani, A.; Karniadakis, G.E. Hidden fluid mechanics: Learning velocity and pressure fields from flow visualizations. Science 2020, 367, 1026–1030. [Google Scholar] [CrossRef]

- Laubscher, R.; Rousseau, P. Application of a mixed variable physics-informed neural network to solve the incompressible steady-state and transient mass, momentum, and energy conservation equations for flow over in-line heated tubes. Applied Soft Computing 2022, 114, 108050. [Google Scholar] [CrossRef]

- Ouyang, H.; Zhu, Z.; Chen, K.; Tian, B.; Huang, B.; Hao, J. Reconstruction of hydrofoil cavitation flow based on the chain-style physics-informed neural network. Engineering Applications of Artificial Intelligence 2023, 119, 105724. [Google Scholar] [CrossRef]

- Wang, H.; Liu, Y.; Wang, S. Dense velocity reconstruction from particle image velocimetry/particle tracking velocimetry using a physics-informed neural network. Physics of Fluids 2022, 34, 017116. [Google Scholar] [CrossRef]

- Jin, X.; Cai, S.; Li, H.; Karniadakis, G.E. NSFnets (Navier-Stokes flow nets): Physics-informed neural networks for the incompressible Navier-Stokes equations. Journal of Computational Physics 2021, 426, 109951. [Google Scholar] [CrossRef]

- Cai, S.; Wang, Z.; Wang, S.; Perdikaris, P.; Karniadakis, G.E. Physics-Informed Neural Networks for Heat Transfer Problems. Journal of Heat Transfer 2021, 143, 060801. [Google Scholar] [CrossRef]

- Zobeiry, N.; Humfeld, K.D. A physics-informed machine learning approach for solving heat transfer equation in advanced manufacturing and engineering applications. Engineering Applications of Artificial Intelligence 2021, 101, 104232. [Google Scholar] [CrossRef]

- Oommen, V.; Srinivasan, B. Solving Inverse Heat Transfer Problems Without Surrogate Models: A Fast, Data-Sparse, Physics Informed Neural Network Approach. Journal of Computing and Information Science in Engineering 2022, 22, 041012. [Google Scholar] [CrossRef]

- Billah, M.M.; Khan, A.I.; Liu, J.; Dutta, P. Physics-informed deep neural network for inverse heat transfer problems in materials. Materials Today Communications 2023, 35, 106336. [Google Scholar] [CrossRef]

- Karthik, K.; Sowmya, G.; Sharma, N.; Kumar, C.; Ravikumar Shashikala, V.K.; Alur Shivaprakash, S.; Muhammad, T.; Gill, H.S. Predictive modeling through physics-informed neural networks for analyzing the thermal distribution in the partially wetted wavy fin. ZAMM - Journal of Applied Mathematics and Mechanics / Zeitschrift für Angewandte Mathematik und Mechanik 2024, 104, e202400180. [Google Scholar] [CrossRef]

- Kumar, C.; Srilatha, P.; Karthik, K.; Somashekar, C.; Nagaraja, K.V.; Varun Kumar, R.S.; Shah, N.A. A physics-informed machine learning prediction for thermal analysis in a convective-radiative concave fin with periodic boundary conditions. ZAMM - Journal of Applied Mathematics and Mechanics / Zeitschrift für Angewandte Mathematik und Mechanik 2024, 104, e202300712. [Google Scholar] [CrossRef]

- Kapoor, T.; Wang, H.; Núnez, A.; Dollevoet, R. Physics-Informed Neural Networks for Solving Forward and Inverse Problems in Complex Beam Systems. IEEE Transactions on Neural Networks and Learning Systems 2024, 35, 5981–5995. [Google Scholar] [CrossRef]

- Jin, H.; Zhang, E.; Espinosa, H.D. Recent Advances and Applications of Machine Learning in Experimental Solid Mechanics: A Review. Applied Mechanics Reviews 2023, 75, 061001. [Google Scholar] [CrossRef]

- Henkes, A.; Wessels, H.; Mahnken, R. Physics informed neural networks for continuum micromechanics. PAMM 2021, 21, e202100040. [Google Scholar] [CrossRef]

- Zhang, N.; Xu, K.; Yu Yin, Z.; Li, K.Q.; Jin, Y.F. Finite element-integrated neural network framework for elastic and elastoplastic solids. Computer Methods in Applied Mechanics and Engineering 2025, 433, 117474. [Google Scholar] [CrossRef]

- Habib, A.; Yildirim, U. Developing a physics-informed and physics-penalized neural network model for preliminary design of multi-stage friction pendulum bearings. Engineering Applications of Artificial Intelligence 2022, 113, 104953. [Google Scholar] [CrossRef]

- Rumelhart, D.E.; Hinton, G.E.; Williams, R.J. Learning representations by back-propagating errors. Nature 1986, 323, 533–536. [Google Scholar] [CrossRef]

- Maxwell, J.C., VIII. A dynamical theory of the electromagnetic field. Philosophical Transactions of the Royal Society of London 1865, 155, 459–512. [Google Scholar] [CrossRef]

- Griffiths, D.J. Introduction to Electrodynamics, 5 ed.; Cambridge University Press, 2023. [CrossRef]

- Kitchenham, B.; Charters, S. Guidelines for performing Systematic Literature Reviews in Software Engineering. 2007, 2. [Google Scholar]

- Schmeing, L.; Pioch, F. Dataset for "Advancements in Physics-Informed Neural Networks for Solving Maxwell’s Equations: A Systematic Literature Review", 2026. [CrossRef]

- Page, M.J.; McKenzie, J.E.; Bossuyt, P.M.; Boutron, I.; Hoffmann, T.C.; Mulrow, C.D.; Shamseer, L.; Tetzlaff, J.M.; Akl, E.A.; Brennan, S.E.; et al. The PRISMA 2020 statement: an updated guideline for reporting systematic reviews. BMJ 2021, 372. [Google Scholar] [CrossRef]

- Liu, Z.; Xu, F. Principle and Application of Physics-Inspired Neural Networks for Electromagnetic Problems. In Proceedings of the IGARSS 2022 - 2022 IEEE International Geoscience and Remote Sensing Symposium. IEEE, 2022, p. 5244–5247. [CrossRef]

- Fujita, K. Physics-Informed Neural Networks with Data and Equation Scaling for Time Domain Electromagnetic Fields. In Proceedings of the 2022 Asia-Pacific Microwave Conference (APMC), 2022, pp. 623–625. [CrossRef]

- Li, R.; Xiao, L.; Zhang, Y.; Shi, Z.; Jiao, Y.; Tang, H. Forward electromagnetic modeling and inverse scattering of cylinder with various cross-section using physics informed neural network. In Proceedings of the 2024 International Applied Computational Electromagnetics Society Symposium (ACES-China), 2024, pp. 1–3. [CrossRef]

- Piao, S.; Gu, H.; Wang, A.; Qin, P. A Domain-Adaptive Physics-Informed Neural Network for Inverse Problems of Maxwell’s Equations in Heterogeneous Media. IEEE Antennas and Wireless Propagation Letters 2024, 23, 2905–2909. [Google Scholar] [CrossRef]

- Cao, B.; Wang, Y.D.; Zhang, N.E.; Liang, Y.Z.; Yin, W.Y. A Physics-Informed Neural Networks Algorithm for Simulating Semiconductor Devices. In Proceedings of the 2023 International Applied Computational Electromagnetics Society Symposium (ACES-China), 2023, pp. 1–3. [CrossRef]

- Li, R.; Zhang, Y.; Tang, H.; Shi, Z.; Jiao, Y.; Xiao, L.; Wei, B.; Gong, S. Research on Electromagnetic Scattering and Inverse Scattering of Target Based on Transfer Learning Physics-Informed Neural Networks. In Proceedings of the 2024 International Applied Computational Electromagnetics Society Symposium (ACES-China), 2024, pp. 1–3. [CrossRef]

- Li, W.; Tang, H.; Li, R.; Zhang, M.; Deng, Q.; Zhang, Y.; Shi, Z. Electromagnetic Scattering of Infinitely Long Cylinder of Arbitrary Cross-section Based on PINNs. In Proceedings of the 2024 Photonics & Electromagnetics Research Symposium (PIERS), 2024, pp. 1–8. [CrossRef]

- Barmada, S.; Tucci, M.; Formisano, A.; Di Barba, P.; Mognaschi, M.E. Hybrid Boundary Element – Physics Informed Neural Network Formulation for Electromagnetics Problems. In Proceedings of the 2024 IEEE 21st Biennial Conference on Electromagnetic Field Computation (CEFC), 2024, pp. 1–2. [CrossRef]

- Qi, S.; Sarris, C.D. Hybrid Physics-Informed Neural Network for the Wave Equation With Unconditionally Stable Time-Stepping. IEEE Antennas and Wireless Propagation Letters 2024, 23, 1356–1360. [Google Scholar] [CrossRef]

- Baldan, M.; Di Barba, P.; Lowther, D.A. Physics- Informed Neural Networks for Inverse Electromagnetic Problems. In Proceedings of the 2022 IEEE 20th Biennial Conference on Electromagnetic Field Computation (CEFC), 2022, pp. 1–2. [CrossRef]

- Backmeyer, M.; Kurz, S.; Möller, M.; Schöps, S. Solving Electromagnetic Scattering Problems by Isogeometric Analysis with Deep Operator Learning. In Proceedings of the 2024 Kleinheubach Conference, 2024, pp. 1–4. [CrossRef]

- Su, Y.; Zeng, S.; Wu, X.; Huang, Y.; Chen, J. Physics-Informed Graph Neural Network for Electromagnetic Simulations. In Proceedings of the 2023 XXXVth General Assembly and Scientific Symposium of the International Union of Radio Science (URSI GASS), 2023, pp. 1–3. [CrossRef]

- Mokhtari, B.E.; Chauviere, C.; Bonnet, P. On the Importance of the Mathematical Formulation to Get PINNs Working. IEEE Transactions on Electromagnetic Compatibility 2024, 66, 2142–2149. [Google Scholar] [CrossRef]

- Liu, J.P.; Wang, B.Z.; Chen, C.S.; Wang, R. Inverse Design Method for Horn Antennas Based on Knowledge-Embedded Physics-Informed Neural Networks. IEEE Antennas and Wireless Propagation Letters 2024, 23, 1665–1669. [Google Scholar] [CrossRef]

- Hu, Y.D.; Wang, X.H.; Zhou, H.; Wang, L. A Priori Knowledge-Based Physics-Informed Neural Networks for Electromagnetic Inverse Scattering. IEEE Transactions on Geoscience and Remote Sensing 2024, 62, 1–9. [Google Scholar] [CrossRef]

- Qi, S.; Sarris, C.D. Physics-Informed Deep Operator Network for 3-D Time-Domain Electromagnetic Modeling. IEEE Transactions on Microwave Theory and Techniques 2025, 73, 3800–3812. [Google Scholar] [CrossRef]

- Ping, Y.; Zhang, Y.; Jiang, L. Uncertainty Quantification in PEEC Method: A Physics-Informed Neural Networks-Based Polynomial Chaos Expansion. IEEE Transactions on Electromagnetic Compatibility 2024, 66, 2095–2101. [Google Scholar] [CrossRef]

- Lim, K.L.; Dutta, R.; Rotaru, M. Physics Informed Neural Network using Finite Difference Method. In Proceedings of the 2022 IEEE International Conference on Systems, Man, and Cybernetics (SMC), 2022, pp. 1828–1833. [CrossRef]

- Hu, Y.D.; Wang, X.H.; Zhou, H.; Wang, L.; Wang, B.Z. A More General Electromagnetic Inverse Scattering Method Based on Physics-Informed Neural Network. IEEE Transactions on Geoscience and Remote Sensing 2023, 61, 1–9. [Google Scholar] [CrossRef]

- Baldan, M.; Di Barba, P.; Lowther, D.A. Physics-Informed Neural Networks for Inverse Electromagnetic Problems. IEEE Transactions on Magnetics 2023, 59, 1–5. [Google Scholar] [CrossRef]

- Pan, Y.Q.; Wang, R.; Wang, B.Z. Physics-Informed Neural Networks With Embedded Analytical Models: Inverse Design of Multilayer Dielectric-Loaded Rectangular Waveguide Devices. IEEE Transactions on Microwave Theory and Techniques 2024, 72, 3993–4005. [Google Scholar] [CrossRef]

- Qi, S.; Sarris, C.D. Physics-Informed Neural Networks for Multiphysics Simulations: Application to Coupled Electromagnetic-Thermal Modeling. In Proceedings of the 2023 IEEE/MTT-S International Microwave Symposium - IMS 2023, 2023, pp. 166–169. [CrossRef]

- Sato, T.; Sasaki, H.; Sato, Y. A Fast Physics-informed Neural Network Based on Extreme Learning Machine for Solving Magnetostatic Problems. In Proceedings of the 2023 24th International Conference on the Computation of Electromagnetic Fields (COMPUMAG), 2023, pp. 1–4. [CrossRef]

- Ping, Y.; Zhang, Y.; Jiang, L. Uncertainty Quantification in PEEC Method: A Physics-Informed Neural Networks-Based Polynomial Chaos Expansion. In Proceedings of the 2024 IEEE Joint International Symposium on Electromagnetic Compatibility, Signal & Power Integrity: EMC Japan / Asia-Pacific International Symposium on Electromagnetic Compatibility (EMC Japan/APEMC Okinawa), 2024, pp. 395–398. [CrossRef]

- Deng, Q.; Tang, H.; Li, R.; Li, W.; Zhang, M.; Shi, Z.; Zhang, Y. Application of PINNs in PNJ Research. In Proceedings of the 2024 Photonics & Electromagnetics Research Symposium (PIERS), 2024, pp. 1–8. [CrossRef]

- Brendel, P.; Medvedev, V.; Rosskopf, A. Convolutional Physics- Informed Neural Networks for Fast Prediction of Core Losses in Axisymmetric Transformers. In Proceedings of the 2024 IEEE 21st Biennial Conference on Electromagnetic Field Computation (CEFC), 2024, pp. 1–2. [CrossRef]

- Fujita, K. Modeling Power-Bus Structures with Physics-Informed Neural Networks. In Proceedings of the 2024 IEEE Joint International Symposium on Electromagnetic Compatibility, Signal & Power Integrity: EMC Japan / Asia-Pacific International Symposium on Electromagnetic Compatibility (EMC Japan/APEMC Okinawa), 2024, pp. 552–555. [CrossRef]

- Wang, J.; Wang, D.; Wang, S.; Li, W. Modeling of Permanent Magnet Eddy-Current Coupler Based on Unsupervised Physics-Informed Radial-Based Function Neural Networks. IEEE Transactions on Magnetics 2025, 61, 1–10. [Google Scholar] [CrossRef]

- Barmada, S.; Dodge, S.; Tucci, M.; Formisano, A.; Di Barba, P.; Evelina Mognaschi, M. A Novel Hybrid Boundary Element—Physics Informed Neural Network Method for Numerical Solutions in Electromagnetics. IEEE Access 2024, 12, 171444–171457. [Google Scholar] [CrossRef]

- Xia, C.; Du, B.; Huang, W.; Cui, S. Parameter Identification of Permanent Magnet Synchronous Motor Based on Physics-Informed Neural Network. In Proceedings of the 2024 5th International Conference on Power Engineering (ICPE), 2024, pp. 207–212. [CrossRef]

- Qi, S.; Sarris, C.D. Benchmarking Physics-Informed Neural Networks for Time-Domain Electromagnetic Simulations. In Proceedings of the 2023 IEEE International Symposium on Antennas and Propagation and USNC-URSI Radio Science Meeting (USNC-URSI), 2023, pp. 1619–1620. [CrossRef]

- Lu, W.; Duan, J.; Cheng, L.; Lu, J.; Dou, D. Electromagnetic Interference Effect Assessment Under Measuring Testability Limitation Based on Physics-Informed Neural Network and Gaussian Process Regression. IEEE Transactions on Power Electronics 2024, 39, 12413–12423. [Google Scholar] [CrossRef]

- Gong, R.; Tang, Z. Hot Spot Driven Physics-informed Neural Network via Special Designed Quantity of Interest applied to Magneto-thermal Analysis. In Proceedings of the 2022 IEEE 20th Biennial Conference on Electromagnetic Field Computation (CEFC), 2022, pp. 1–2. [CrossRef]

- Han, J.H.; Park, J.H.; Song, S.M.; Hong, S.K. Electromagnetic Field Analysis Using Physics Informed Neural Network Considering Eddy Current. In Proceedings of the 2024 IEEE 21st Biennial Conference on Electromagnetic Field Computation (CEFC), 2024, pp. 1–2. [CrossRef]

- Medvedev, V.; Erdmann, A.; Rosskopf, A. Modeling of Near-and Far-Field Diffraction from EUV Absorbers Using Physics-Informed Neural Networks. In Proceedings of the 2023 Photonics & Electromagnetics Research Symposium (PIERS), 2023, pp. 297–305. [CrossRef]

- Liu, Y.H.; Liang, J.C.; Wang, B.Z.; Wang, R. Inverse Design Method for Electromagnetic Periodic Structures Based on Physics-Informed Neural Network With Embedded Analytical Models. IEEE Transactions on Microwave Theory and Techniques 2025, 73, 844–853. [Google Scholar] [CrossRef]

- Brendel, P.; Medvedev, V.; Rosskopf, A. Physics-Informed Neural Networks for Magnetostatic Problems on Axisymmetric Transformer Geometries. IEEE Journal of Emerging and Selected Topics in Industrial Electronics 2024, 5, 700–709. [Google Scholar] [CrossRef]

- Wang, J.Y.; Pan, X.M. Universal Approximation Theorem and Deep Learning for the Solution of Frequency-Domain Electromagnetic Scattering Problems. IEEE Transactions on Antennas and Propagation 2024, 72, 9274–9285. [Google Scholar] [CrossRef]

- Pan, Y.Q.; Wang, R.; Wang, B.Z. Solving Two-Dimensional Waveguide Problem Based on Physics-Informed Neural Networks. In Proceedings of the 2023 International Conference on Microwave and Millimeter Wave Technology (ICMMT), 2023, pp. 1–3. [CrossRef]

- Khan, M.R.; Zekios, C.L.; Bhardwaj, S.; Georgakopoulos, S.V. A Physics-Informed Neural Network-Based Waveguide Eigenanalysis. IEEE Access 2024, 12, 120777–120787. [Google Scholar] [CrossRef]

- Shao, J.; Wang, R.; Wang, B.Z. Theoretical Analysis of Rotational Symmetry Models for Time-Domain PINN. In Proceedings of the 2024 IEEE International Conference on Computational Electromagnetics (ICCEM), 2024, pp. 1–2. [CrossRef]

- Guo, Z.; Sabariego, R.V. Physics-Informed Neural Network for 2D Magneto-Quasi-Static Problems in Time Domain. In Proceedings of the 2024 IEEE 21st Biennial Conference on Electromagnetic Field Computation (CEFC), 2024, pp. 1–2. [CrossRef]

- Zhang, J.B.; Yu, D.M.; Pan, X.M. Physics-Informed Neural Networks For the Solution of Electromagnetic Scattering by Integral Equations. In Proceedings of the 2022 International Applied Computational Electromagnetics Society Symposium (ACES-China), 2022, pp. 1–2. [CrossRef]

- Hu, Y.D.; Wang, X.H.; Wei, T.; Ren, H.Y. Physics-Informed Neural Networks with Dynamic Sampling Method for Solving Rectangular Waveguide Problems. In Proceedings of the 2023 International Conference on Microwave and Millimeter Wave Technology (ICMMT), 2023, pp. 1–3. [CrossRef]

- Zhu, Y.; Xu, K.; Wan, B.; Lei, G.; Zhu, J. Kolmogorov–Arnold Network for Solving 2-D Magnetostatic Problems. IEEE Transactions on Magnetics 2025, 61, 1–5. [Google Scholar] [CrossRef]

- Zhang, P.; Hu, Y.; Jin, Y.; Deng, S.; Wu, X.; Chen, J. A Maxwell’s Equations Based Deep Learning Method for Time Domain Electromagnetic Simulations. In Proceedings of the 2020 IEEE Texas Symposium on Wireless and Microwave Circuits and Systems (WMCS), 2020, pp. 1–4. [CrossRef]

- Li, H.; Liu, J.G.; Huang, X.W.; Sheng, X.Q. Physics-Informed Neural Networks with Hard Constraints for Electromagnetic Scattering Analysis. In Proceedings of the 2024 14th International Symposium on Antennas, Propagation and EM Theory (ISAPE), 2024, pp. 1–3. [CrossRef]

- Mušeljić, E.; Reinbacher-Köstinger, A.; Kaltenbacher, M. Solving the electrostatic Laplace’s equation with a parameterizable physics informed neural network. In Proceedings of the 2022 IEEE 20th Biennial Conference on Electromagnetic Field Computation (CEFC), 2022, pp. 1–2. [CrossRef]

- Han, J.H.; Choi, E.J.; Hong, S.K. A Study on Electromagnetic Field Analysis Considering Geometry Variation Using Physics-Informed Neural Network. In Proceedings of the 2023 26th International Conference on Electrical Machines and Systems (ICEMS), 2023, pp. 3345–3348. [CrossRef]

- Zheng, Y.R.; Huang, Z.Y.; Gong, X.Z.; Zheng, X.Z. Implementation of Maxwell’s equations solving algorithm based on PINN. In Proceedings of the 2024 IEEE International Conference on Computational Electromagnetics (ICCEM), 2024, pp. 1–3. [CrossRef]

- Liu, Y.H.; Wang, B.Z.; Wang, R. Inverse Design of Frequency Selective Surface Using Physics-Informed Neural Networks. In Proceedings of the 2024 IEEE International Symposium on Antennas and Propagation and INC/USNC-URSI Radio Science Meeting (AP-S/INC-USNC-URSI), 2024, pp. 1027–1028. [CrossRef]

- Chang, H.Y.; Wang, R.; Wang, B.Z. Solving Complex Electromagnetic Scattering Problem Based on Physics-Informed Neural Network with Adaptive Sampling Method. In Proceedings of the 2023 International Conference on Microwave and Millimeter Wave Technology (ICMMT), 2023, pp. 1–3. [CrossRef]

- Khan, A.; Lowther, D.A. Physics Informed Neural Networks for Electromagnetic Analysis. IEEE Transactions on Magnetics 2022, 58, 1–4. [Google Scholar] [CrossRef]

- Jiang, X.; Zhang, M.; Song, Y.; Chen, H.; Huang, D.; Wang, D. Predicting Ultrafast Nonlinear Dynamics in Fiber Optics by Enhanced Physics-Informed Neural Network. Journal of Lightwave Technology 2024, 42, 1381–1394. [Google Scholar] [CrossRef]

- Gong, Z.; Chu, Y.; Yang, S. Physics-Informed Neural Networks for Solving Two-Dimensional Magneto-Static Fields. In Proceedings of the 2023 IEEE International Magnetic Conference - Short Papers (INTERMAG Short Papers), 2023, pp. 1–2. [CrossRef]

- Guo, Z.; Nguyen, B.; Sabariego, R.V. Physics-Informed Neural Network for Solving 1-D Nonlinear Time-Domain Magneto-Quasi-Static Problems. IEEE Transactions on Magnetics 2025, 61, 1–9. [Google Scholar] [CrossRef]

- Shao, J.; Liu, Y.; Wang, R.; Wang, B.Z. Finite Difference Based PINN for Electromagnetic Forward Problem Solving. In Proceedings of the 2024 IEEE International Symposium on Antennas and Propagation and INC/USNC-URSI Radio Science Meeting (AP-S/INC-USNC-URSI), 2024, pp. 1023–1024. [CrossRef]

- Gong, Z.; Chu, Y.; Yang, S. Physics-Informed Neural Networks for Solving 2-D Magnetostatic Fields. IEEE Transactions on Magnetics 2023, 59, 1–5. [Google Scholar] [CrossRef]

- Liu, C.; Li, L.; Cui, T. Physics-informed Unsupervised Deep Learning Framework for Solving Full-Wave Inverse Scattering Problems. In Proceedings of the 2022 IEEE Conference on Antenna Measurements and Applications (CAMA), 2022, pp. 1–4. [CrossRef]

- Jiang, X.; Wang, D.; Fan, Q.; Zhang, M.; Lu, C.; Tao Lau, A.P. Solving the Nonlinear Schrödinger Equation in Optical Fibers Using Physics-informed Neural Network. In Proceedings of the 2021 Optical Fiber Communications Conference and Exhibition (OFC), 2021, pp. 1–3.

- Chang, H.; Fan, J.; Wang, R.; Wang, B.Z. Solving Electromagnetic Problems with PINN based on Scattering Equivalent Source Method. In Proceedings of the 2024 IEEE International Symposium on Antennas and Propagation and INC/USNC-URSI Radio Science Meeting (AP-S/INC-USNC-URSI), 2024, pp. 1025–1026.

- Jiang, F.; Li, T.; Lv, X.; Rui, H.; Jin, D. Physics-Informed Neural Networks for Path Loss Estimation by Solving Electromagnetic Integral Equations. IEEE Transactions on Wireless Communications 2024, 23, 15380–15393.

- Liu, C.; Zhang, H.; Li, L.; Cui, T.J. Towards Intelligent Electromagnetic Inverse Scattering Using Deep Learning Techniques and Information Metasurfaces. IEEE Journal of Microwaves 2023, 3, 509–522.

- Sun, B.; Wu, F.; Zhang, C.; Fan, W.; Gao, Z.; Liu, Y. Physics-Informed Contrast Source Inversion Learning Methods for Microwave Imaging. In Proceedings of the 2024 IEEE Asia-Pacific Microwave Conference (APMC), 2024, pp. 793–795.

- Uduagbomen, J.; Lakshminarayana, S.; Liu, Z.; Leeson, M.S.; Xu, T. Physics-Informed Neural Network for Fibre Channel Modelling in Optical Communication Systems. In Proceedings of the 2023 23rd International Conference on Transparent Optical Networks (ICTON), 2023, pp. 1–4.

- Wang, D.; Wang, S.; Kong, D.; Wang, J.; Li, W.; Pecht, M. Physics-Informed Sparse Neural Network for Permanent Magnet Eddy Current Device Modeling and Analysis. IEEE Magnetics Letters 2023, 14, 1–5.

- Fujita, K. Physics-Informed Neural Network Method for Space Charge Effect in Particle Accelerators. IEEE Access 2021, 9, 164017–164025.

- Liu, W.; Luo, W.; Cheng, X.; Zhou, M. Measurement-Physic-Constrained Neural Network for Multifield Reconstruction of PMC. IEEE Transactions on Instrumentation and Measurement 2025, 74, 1–9.

- Yu, X.; Serrallés, J.E.C.; Giannakopoulos, I.I.; Liu, Z.; Daniel, L.; Lattanzi, R.; Zhang, Z. PIFON-EPT: MR-Based Electrical Property Tomography Using Physics-Informed Fourier Networks. IEEE Journal on Multiscale and Multiphysics Computational Techniques 2024, 9, 49–60.

- Lim, K. Electrostatic Field Analysis Using Physics Informed Neural Net and Partial Differential Equation Solver Analysis. In Proceedings of the 2024 IEEE 21st Biennial Conference on Electromagnetic Field Computation (CEFC), 2024, pp. 01–02.

- Fang, Z.; Zhan, J. Deep Physical Informed Neural Networks for Metamaterial Design. IEEE Access 2020, 8, 24506–24513.

- Papadimitropoulos, S.; Tsogka, C.; Hasan, M. Synthetic Aperture Imaging Using Physically Informed Convolutional Neural Networks. In Proceedings of the 2024 IEEE Conference on Computational Imaging Using Synthetic Apertures (CISA), 2024, pp. 01–04.

- Ruan, G.; Wang, Z.; Liu, C.; Xia, L.; Wang, H.; Qi, L.; Chen, W. Magnetic Resonance Electrical Properties Tomography Based on Modified Physics-Informed Neural Network and Multiconstraints. IEEE Transactions on Medical Imaging 2024, 43, 3263–3278.

- Li, Y.; Liu, Y.; Yan, Y.; Wang, J.; Mattar, T. Deep Learning Method Based on Physics Informed Neural Networks for the Electromagnetic Stress Simulation in Transformer Windings. In Proceedings of the 19th Annual Conference of China Electrotechnical Society; Yang, Q.; Bie, Z.; Yang, X., Eds., Singapore, 2025; pp. 725–736.

- Li, X.; Wang, P.; Yang, F.; Li, X.; Fang, Y.; Tong, J. DAL-PINNs: Physics-informed neural networks based on D’Alembert principle for generalized electromagnetic field model computation. Engineering Analysis with Boundary Elements 2024, 168, 105914.

- Medvedev, V.; Erdmann, A.; Rosskopf, A. Physics-informed deep learning for 3D modeling of light diffraction from optical metasurfaces. Optics Express 2025, 33, 1371–1384.

- Tan, B.; Yi, J.; Qin, Y.; Pu, H.; Luo, J. Design Optimization of Permanent Magnet Coupler Based on Physics-Informed Neural Networks. In Proceedings of the Advances in Mechanical Design; Tan, J.; Liu, Y.; Huang, H.Z.; Yu, J.; Wang, Z., Eds., Singapore, 2024; pp. 657–670.

- Zhang, H.; Li, C.; Xia, R.; Chen, X.; Xiao, T.; Guo, X.W.; Liu, J. FE-PIRBN: Feature-Enhanced physics-informed radial basis neural networks for solving high-frequency electromagnetic scattering problems. Journal of Computational Physics 2025, 527, 113798.

- Pu, H.; Tan, B.; Yi, J.; Yuan, S.; Zhao, J.; Bai, R.; Luo, J. A novel key performance analysis method for permanent magnet coupler using physics-informed neural networks. Engineering with Computers 2023, 40, 2259–2277.

- Wang, B.; Guo, Z.; Liu, J.; Wang, Y.; Xiong, F. Geophysical Frequency Domain Electromagnetic Field Simulation Using Physics-Informed Neural Network. Mathematics 2024, 12.

- Hou, S.; Hao, X.; Pan, D.; Wu, W. Physics-informed neural network for simulating magnetic field of coaxial magnetic gear. Engineering Applications of Artificial Intelligence 2024, 133, 108302.

- Dimitropoulos, I.; Contopoulos, I.; Mpisketzis, V.; Chaniadakis, E. The pulsar magnetosphere with machine learning: methodology. Monthly Notices of the Royal Astronomical Society 2024, 528, 3141–3152.

- Suhendar, H.; Pratama, M.R.; Silambi, M.S. Mesh-Free Solution of 2D Poisson Equation with High Frequency Charge Patterns Using Data-Free Physics Informed Neural Network. In Proceedings of the Journal of Physics: Conference Series. IOP Publishing, 10 2024, Vol. 2866, p. 012053.

- Qian, K.; Kheir, M. Investigating KAN-Based Physics-Informed Neural Networks for EMI/EMC Simulations. In Proceedings of the Intelligent Systems, Blockchain, and Communication Technologies; Abdelgawad, A.; Jamil, A.; Hameed, A.A., Eds., Cham, 2025; pp. 40–48.

- Hu, Z.; Yang, A.; Xu, S.; Li, N.; Wu, Q.; Sun, Y. Prediction of soliton evolution and parameters evaluation for a high-order nonlinear Schrödinger–Maxwell–Bloch equation in the optical fiber. Physics Letters A 2025, 531, 130182.

- Wang, Y.; Zhang, S. Multi-receptive-field physics-informed neural network for complex electromagnetic media. Optical Materials Express 2024, 14, 2740–2754.

- Biswal, P.; Avdijaj, J.; Parente, A.; Coussement, A. Solving the Radiation Transfer Equation in Participating Media Using Physics Informed Neural Networks. In Proceedings of the 10th World Congress on Mechanical, Chemical, and Material Engineering (MCM’24), 8 2024, pp. HTFF 269–1–HTFF 269–10.

- Han, J.H.; Park, J.H.; Song, S.M.; Hong, S.K. Enhancing Learning Efficiency in Physics Informed Neural Network through Data Comparison and Transfer Learning. Journal of Electrical Engineering & Technology 2025, 20, 3335–3341.

- Gaire, P.; Bhardwaj, S. Physics embedded neural network: Novel data-free approach towards scientific computing and applications in transfer learning. Neurocomputing 2025, 617, 128936.

- Chang, C.; Xin, Z.; Zeng, T. A conservative hybrid deep learning method for Maxwell–Ampère–Nernst–Planck equations. Journal of Computational Physics 2024, 501, 112791.

- Saleh, E.; Ghaffari, S.; Bretl, T.; Olson, L.; West, M. Learning from Integral Losses in Physics Informed Neural Networks. In Proceedings of the 41st International Conference on Machine Learning; Salakhutdinov, R.; Kolter, Z.; Heller, K.; Weller, A.; Oliver, N.; Scarlett, J.; Berkenkamp, F., Eds. PMLR, 21–27 Jul 2024, Vol. 235, Proceedings of Machine Learning Research, pp. 43077–43111.

- Chen, Y.; Wang, C.; Hui, Y.; Shah, N.V.; Spivack, M. Surface Profile Recovery from Electromagnetic Fields with Physics-Informed Neural Networks. Remote Sensing 2024, 16.

- Mahmoud, M.G.; Hares, A.S.; Hameed, M.F.O.; El-Azab, M.S.; Obayya, S.S.A. AI-driven photonics: Unleashing the power of AI to disrupt the future of photonics. APL Photonics 2024, 9, 080902.

- Rahman, M.M.; Khan, A.; Lowther, D.; Giannacopoulos, D. Evaluating magnetic fields using deep learning. COMPEL - The international journal for computation and mathematics in electrical and electronic engineering 2023, 42, 1115–1132.

- Xu, S.Y.; Zhou, Q.; Liu, W. Prediction of soliton evolution and equation parameters for NLS–MB equation based on the phPINN algorithm. Nonlinear Dynamics 2023, 111, 18401–18417.

- Kovacs, A.; Exl, L.; Kornell, A.; Fischbacher, J.; Hovorka, M.; Gusenbauer, M.; Breth, L.; Oezelt, H.; Praetorius, D.; Suess, D.; et al. Magnetostatics and micromagnetics with physics informed neural networks. Journal of Magnetism and Magnetic Materials 2022, 548, 168951.

- Barmada, S.; Barba, P.D.; Formisano, A.; Mognaschi, M.E.; Tucci, M. Physics-informed Neural Networks for the Resolution of Analysis Problems in Electromagnetics. Applied Computational Electromagnetics Society Journal (ACES) 2023, 38, 841–848.

- Son, S.; Lee, H.; Jeong, D.; Oh, K.Y.; Ho Sun, K. A novel physics-informed neural network for modeling electromagnetism of a permanent magnet synchronous motor. Advanced Engineering Informatics 2023, 57, 102035.

- Zhang, R.; Su, J.; Feng, J. Solution of the Hirota equation using a physics-informed neural network method with embedded conservation laws. Nonlinear Dynamics 2023, 111, 13399–13414.

- Fujita, K. Impedance modeling of accelerator beams with discontinuous charge density using scattered-field physics-informed neural networks. IEICE Electronics Express 2023, 20, 20220523–20220523.

- Fujita, K. Electromagnetic field computation of multilayer vacuum chambers with physics-informed neural networks. Frontiers in Physics 2022, 10.

- Zhelyeznyakov, M.; Fröch, J.; Wirth-Singh, A.; Noh, J.; Rho, J.; Brunton, S.; Majumdar, A. Large area optimization of meta-lens via data-free machine learning. Communications Engineering 2023, 2, 60.

- Chen, Y.; Dal Negro, L. Physics-informed neural networks for imaging and parameter retrieval of photonic nanostructures from near-field data. APL Photonics 2022, 7, 010802.

- Liu, Y.H.; Liu, J.P.; Wang, B.Z.; Wang, R. A PINN framework for inverse physical design of metal-loaded electromagnetic devices. AIP Advances 2024, 14, 125201.

- Fujita, K. Comparison of physics-informed neural networks in solving electromagnetic interior scattering problems including a relativistic beam current. Journal of Advanced Simulation in Science and Engineering 2024, 11, 73–82.

- Dodge, S.; Barmada, S.; Formisano, A. A STacked Adaptive Residual PINN (STAR-PINN) Approach to 2D Time-Domain Magnetic Diffusion in Nonlinear Materials. IEEE Access 2025, 13, 141380–141394.

- Zheng, X.; Peng, T.J.; Hou, J.; Zhang, Y.; Chen, L.; Qin, S.L.; Mao, Y.Q.; Lu, W.B.; Zhang, J.N.; You, J.W.; et al. Hybrid Physics-Data-Driven Neural Network for Accurate Modeling of Scattering Problems. IEEE Transactions on Antennas and Propagation 2025, 73, 6826–6838.

- Medvedev, V.; Rosskopf, A.; Erdmann, A. Generative Inverse Design of Metamaterials Enhanced by Physics-Informed Neural Network. In Proceedings of the 2025 Nineteenth International Congress on Artificial Materials for Novel Wave Phenomena (Metamaterials), 2025, pp. X–215–X–217.

- Wu, S.; Ling, H.; Zhao, K.; Hong, D. Solving the Maxwell’s Equations From Magnetic Dipole Sources in 2.5-D TI Medium With PINNs. IEEE Transactions on Geoscience and Remote Sensing 2025, 63, 1–14.

- Tosun, R.A.; Kuzucu, D.; Durgun, A.C.; Baydoğan, M.G. Fine-Pitch Interconnect Modeling Using Physics-Informed Neural Networks. In Proceedings of the 2025 IEEE 29th Workshop on Signal and Power Integrity (SPI), 2025, pp. 1–4.

- Li, H.; Liu, J.G.; Wang, Y.; Xin, X.D.; Huang, X.W.; Sheng, X.Q. Physics-Informed Deep Learning for Inverse Scattering of Irregular Targets From Near-Field Data. IEEE Antennas and Wireless Propagation Letters 2025, 24, 3734–3738.

- Fujita, K. Physics-Informed neural networks with transfer learning for space charge impedances in particle accelerators. International Journal of Applied Electromagnetics and Mechanics 2025, 78, 40–44.

- Zhang, Y.; Li, R.; Tang, H.; Shi, Z.; Wei, B.; Gong, S.; Yang, L.; Yan, B. Electromagnetic Scattering from a Three-dimensional Object using Physics-informed Neural Network. Applied Computational Electromagnetics Society Journal (ACES) 2025, 40, 103–111.

- Wan, B.; Lei, G.; Guo, Y.; Zhu, J. Physics-Informed Neural Networks Based on Unsupervised Learning for Multidomain Electromagnetic Analysis. IET Electric Power Applications 2025, 19, e70083.

- Rutigliano, N.; Rossi, R.; Murari, A.; Gelfusa, M.; Craciunescu, T.; Mazon, D.; Gaudio, P. Physics-informed neural networks for the modelling of interferometer-polarimetry in tokamak multi-diagnostic equilibrium reconstructions. Plasma Physics and Controlled Fusion 2025, 67, 065029.

- Qiao, Z.; Wang, D.; Ni, Y.; Song, K.; Li, Y.; Wang, S. A partitioned modeling approach using a physics-informed neural network for PMSM. Engineering Analysis with Boundary Elements 2025, 179, 106379.

- Xu, W.; Zhong, Q.; Wang, M.; Wei, Z.; Wang, Z.; Cheng, X. High precision, full-vector optical mode solving in waveguides via fourth-order derivative physics-informed neural networks. Optics Express 2025, 33, 38317–38328.

- Wang, J.; Wang, D.; Wang, S.; Li, W.; Jiang, Y. Dimensionless Physics-Informed Neural Network for Electromagnetic Field Modelling of Permanent Magnet Eddy Current Coupler. IET Electric Power Applications 2025, 19, e70084.

- Riganti, R.; Zhu, Y.; Cai, W.; Torquato, S.; Negro, L.D. Multiscale Physics-Informed Neural Networks for the Inverse Design of Hyperuniform Optical Materials. Advanced Optical Materials 2025, 13, 2403304.

- Qin, H.; Zhang, T.; Bao, H.; Yu, Z.; Ding, D. Physics-Informed Neural Network for Solving Three-Dimensional Maxwell’s Equations. In Proceedings of the 2025 International Conference on Microwave and Millimeter Wave Technology (ICMMT), 2025, pp. 1–3.

- Ou, M.; Sun, Y.F.; Zhu, H.; Li, X.H. Physics-Informed Neural Network for Rapid Prediction of Wide-Angle Electromagnetic Scattering from Three-Dimensional Objects. In Proceedings of the 2025 IEEE 13th Asia-Pacific Conference on Antennas and Propagation (APCAP), 2025, pp. 178–179.

- Su, W.; Shao, W.; Cheng, X.; Ding, X. A Physics-Informed Neural Network for Unconditionally Stable Time-Domain Simulations. In Proceedings of the 2025 International Conference on Microwave and Millimeter Wave Technology (ICMMT), 2025, pp. 1–3.

- Hu, Y.D.; Zhang, K.; Zhang, L.; Du, W.; Wang, X.H. An Improved Physics-Informed Neural Networks Method for Three-Dimensional Electromagnetic Inverse Scattering Problems. In Proceedings of the 2025 International Conference on Microwave and Millimeter Wave Technology (ICMMT), 2025, pp. 1–3.

- Fan, J.; Shao, J.; Liu, J.P.; Chang, H.; Liu, Y.; Wang, R.; Wang, B.Z. Electromagnetic Inverse Design Method for 2-D Parametric-Curve-Defined Metallic Structures Based on PINNs. IEEE Transactions on Microwave Theory and Techniques 2026, 74, 1385–1395.

- Barmada, S.; Dodge, S.; Formisano, A. Weak Formulation for Physics-Informed Neural Networks in the Resolution of Analysis Problems in Electromagnetics. IEEE Transactions on Magnetics 2025, 1–1.

- Zhu, Y.; Guo, Z.; Lei, G.; Guo, Y.; Zhu, J. Self-Adaptive Physics-Informed Neural Networks for Solving 2-D Magnetostatic Fields in Open Boundaries. IEEE Transactions on Magnetics 2025, 1–1.

- Shaviner, G.G.; Chandravamsi, H.; Pisnoy, S.; Chen, Z.; Frankel, S.H. PINNs for solving unsteady Maxwell’s equations: convergence issues and comparative assessment with compact schemes. Neural Computing and Applications 2025, 37, 24103–24122.

- Wang, S.; Wang, K.; Zeng, P.; Lei, Y.; Wang, Z.; Zhang, B. Gradient-aligned physics-informed neural network for performance analysis of permanent magnet eddy current device under complex operating conditions. Expert Systems with Applications 2026, 299, 129915.

- Sun, B.; Guo, X.; Wu, F.; Gao, Z.; Su, M.; Liu, Y. A Physics-Informed Contrast Source Inversion Learning Method for Solving Full-Wave 2-D Inverse Scattering Problems. IEEE Transactions on Microwave Theory and Techniques 2025, 73, 9701–9716.

- Mo, G.; Narayanan, K.K.; Castells-Rufas, D.; Carrabina, J. Physics-Informed Neural Network Surrogate Model For Capacitive Touch Sensors By Solving Maxwell’s Equations. In Proceedings of the 39th ECMS International Conference on Modelling and Simulation, ECMS 2025, Catania, Italy, June 2025; Scarpa, M.; Cavalieri, S.; Serrano, S.; Vita, F.D., Eds. European Council for Modeling and Simulation, 2025, pp. 390–396.

- Sun, Y.; Xv, W. Application of Physical Information Neural Network Based on Fourier Features in Electromagnetic Computing. In Proceedings of the 1st Electrical Artificial Intelligence Conference, Volume 1; Qu, R.; Song, Z.; Ding, Z.; Mu, G.; Xiong, R.; Han, L., Eds., Singapore, 2025; pp. 63–76.

- Rigoni, T.; Arcieri, G.; Haywood-Alexander, M.; Haener, D.; Chatzi, E. Modeling GPR observations on railway tracks via black box and physics informed neural networks. In Proceedings of the 9th European Congress on Computational Methods in Applied Sciences and Engineering (ECCOMAS), 2024, pp. 1–12.

- Toghranegar, S.; Kazmi, H.; Deconinck, G.; Sabariego, R.V. Magnetostatic and Magnetodynamic Modeling With Unsupervised Physics-Informed Neural Networks. IEEE Transactions on Magnetics 2025, 61, 1–10.

- Zhu, R.; Cong, X.; Pu, S.; Lin, N.; Dinavahi, V. SAS-PINN: An Enhanced Physics-Informed Neural Network for 2-D Time-Domain Electromagnetic Field Computation of Power Transformer. IEEE Transactions on Magnetics 2025, 61, 1–8.

- Kheir, M.; Qian, K.; Nabi, M.; Ebel, T. Modular Meshless Electromagnetic Simulation Using KAN-Based Physics-Informed Neural Networks. IEEE Journal on Multiscale and Multiphysics Computational Techniques 2025, 10, 452–458.

- Rezende, R.S.; Piwonski, A.; Schuhmann, R. An Efficient Architecture Selection Approach for PINNs Applied to Electromagnetic Problems. IEEE Transactions on Magnetics 2025, 1–1.

- Lou, X.Y.; Zhang, J.B.; Yu, D.M.; Wang, D.F.; Pan, X.M. Solution of Electromagnetic Scattering and Inverse Scattering by Integral Equations Through Neural Networks. IEEE Transactions on Antennas and Propagation 2025, 73, 9654–9659.

- Brendel, P.; Medvedev, V.; Rosskopf, A. Convolutional Physics-Informed Neural Networks for Fast Prediction of Core Losses in Axisymmetric Transformers. IEEE Transactions on Magnetics 2024, 60, 1–4.

| ID | Research Question |
|---|---|
| RQ1 | How extensively are PINNs applied within electromagnetics? |
| RQ2 | Which subfields of electromagnetics are PINNs applied to? |
| RQ3 | Which network architectures are used for PINNs in solving Maxwell’s equations? |
| RQ4 | What is the spatial dimensionality of the electromagnetic problems solved? |
| RQ5 | Are the reviewed domains divided into different media? |
| RQ6 | Which learning paradigms are used for solving Maxwell’s equations with PINNs? |
| RQ7 | Are there associations between the extracted characteristics of the reviewed publications? |

| ID | Inclusion Criterion |
|---|---|
| I1 | Employs PINNs to solve Maxwell’s equations or related electromagnetic problems. |
| I2 | Is a peer-reviewed journal article or conference paper. |
| I3 | Describes the neural network architecture used. |
| I4 | Provides sufficient details on the application domain. |
| I5 | Provides sufficient details on the learning paradigm. |
| I6 | Provides sufficient details on the electromagnetic problem. |

| ID | Exclusion Criterion |
|---|---|
| E1 | Is not written in English. |
| E2 | Is a review, editorial, book, awarded grant, or preprint. |
| E3 | Is not peer-reviewed. |
| E4 | Full text is not accessible to the reviewers. |
| E5 | Does not utilize PINNs. |
| E6 | Addresses a problem outside of electromagnetics or does not solve field equations. |
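Since every criterion in the two tables above is a yes/no judgment, the screening decision can be stated compactly. The following is a minimal Python sketch, assuming the usual systematic-review convention that a record is retained only if all of I1–I6 hold and none of E1–E6 applies, and assuming screeners record one boolean per criterion ID. The function name `is_eligible` and the dictionary layout are hypothetical illustrations, not the authors' screening tool.

```python
# Criterion IDs taken from the inclusion and exclusion tables.
INCLUSION_IDS = ("I1", "I2", "I3", "I4", "I5", "I6")
EXCLUSION_IDS = ("E1", "E2", "E3", "E4", "E5", "E6")


def is_eligible(inclusion: dict[str, bool], exclusion: dict[str, bool]) -> bool:
    """Return True if every inclusion criterion is met and no exclusion criterion applies.

    Hypothetical layout: `inclusion` maps I1..I6 to "criterion satisfied",
    `exclusion` maps E1..E6 to "criterion applies". Unassessed criteria raise
    an error instead of silently defaulting, so incomplete screening is caught.
    """
    missing = [c for c in INCLUSION_IDS if c not in inclusion]
    missing += [c for c in EXCLUSION_IDS if c not in exclusion]
    if missing:
        raise ValueError(f"criteria not assessed: {missing}")
    meets_all_inclusion = all(inclusion[c] for c in INCLUSION_IDS)
    hits_any_exclusion = any(exclusion[c] for c in EXCLUSION_IDS)
    return meets_all_inclusion and not hits_any_exclusion


# Example: all inclusion criteria met, but the full text is not accessible (E4).
inc = {c: True for c in INCLUSION_IDS}
exc = {c: False for c in EXCLUSION_IDS}
exc["E4"] = True
print(is_eligible(inc, exc))  # False
```

Raising on missing criteria rather than treating them as `False` is a design choice of this sketch: it keeps a half-screened record from being silently included or excluded.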

| Characteristic | Description | Categories |
|---|---|---|
| Bibliographic | | |
| Title | Title of the publication | Free text (as extracted from the database) |
| Type of Publication | Document type | Journal article; Conference paper |
| Authors | Authors of the publication | Free text (as extracted from the database) |
| Publication Year | Year in which the work was published | Integer |
| DOI | Digital Object Identifier of the publication | Free text (as extracted from the database) |
| Journal | Journal or conference venue | Free text (as extracted from the database) |
| Problem | | |
| Physics Regime | Regime addressed in electromagnetics | Magnetostatics; Electrostatics; Magnetoquasistatics; Electroquasistatics; Electrodynamics |
| Dimensionality | Spatial dimensionality of the problem | 0D; 1D; 2D; 3D |
| Medium | Properties of the reviewed medium | Homogeneous; Inhomogeneous |
| Model | | |
| Network Architecture | Neural network architecture used in the PINN | Feedforward Neural Network (FNN); Graph Neural Network (GNN); Transformer; Recurrent Neural Network (RNN); Autoencoder; Convolutional Neural Network (CNN); DeepONet; Other |
| Learning Paradigm | Learning setting used to train the model and use of data | Supervised Learning; Semi-supervised Learning; Unsupervised Learning |
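The coding scheme in the characteristics table can be mirrored as a typed record, so that only the listed categories can be assigned and co-occurrence counts between characteristics (the raw material for an association question such as RQ7) can be tabulated directly. The Python sketch below rests on stated assumptions: all class, field, and function names are hypothetical; each publication is assumed to receive exactly one category per characteristic; and the authors' actual extraction tooling and association analysis are not described in this section and are not reproduced here.

```python
from collections import Counter
from dataclasses import dataclass
from enum import Enum
from typing import Iterable


class PublicationType(Enum):
    JOURNAL_ARTICLE = "Journal article"
    CONFERENCE_PAPER = "Conference paper"


class PhysicsRegime(Enum):
    MAGNETOSTATICS = "Magnetostatics"
    ELECTROSTATICS = "Electrostatics"
    MAGNETOQUASISTATICS = "Magnetoquasistatics"
    ELECTROQUASISTATICS = "Electroquasistatics"
    ELECTRODYNAMICS = "Electrodynamics"


class Dimensionality(Enum):
    D0 = "0D"
    D1 = "1D"
    D2 = "2D"
    D3 = "3D"


class Medium(Enum):
    HOMOGENEOUS = "Homogeneous"
    INHOMOGENEOUS = "Inhomogeneous"


class Architecture(Enum):
    FNN = "Feedforward Neural Network (FNN)"
    GNN = "Graph Neural Network (GNN)"
    TRANSFORMER = "Transformer"
    RNN = "Recurrent Neural Network (RNN)"
    AUTOENCODER = "Autoencoder"
    CNN = "Convolutional Neural Network (CNN)"
    DEEPONET = "DeepONet"
    OTHER = "Other"


class LearningParadigm(Enum):
    SUPERVISED = "Supervised Learning"
    SEMI_SUPERVISED = "Semi-supervised Learning"
    UNSUPERVISED = "Unsupervised Learning"


@dataclass(frozen=True)
class ExtractionRecord:
    # Bibliographic characteristics (free text except type and year)
    title: str
    publication_type: PublicationType
    authors: tuple[str, ...]
    year: int
    doi: str
    venue: str
    # Problem characteristics (assumed single-valued per publication)
    regime: PhysicsRegime
    dimensionality: Dimensionality
    medium: Medium
    # Model characteristics
    architecture: Architecture
    paradigm: LearningParadigm


def cross_tab(records: Iterable[ExtractionRecord], row: str, col: str) -> Counter:
    """Count how often each (row category, column category) pair co-occurs."""
    return Counter((getattr(r, row), getattr(r, col)) for r in records)
```

Calling `cross_tab(records, "regime", "dimensionality")` returns a `Counter` keyed by (regime, dimensionality) pairs, with unseen pairs reading as zero; any association measure would then be computed from these counts, which this sketch deliberately stops short of.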

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).