Submitted: 09 April 2026

Posted: 10 April 2026

Abstract

Keywords:

1. Introduction

2. Materials and Methods

2.1. Participants

2.2. Experimental Design and Stimuli

2.3. EEG Recording and Preprocessing

2.4. Feature Extraction

2.4.1. Time-Domain Features

2.4.2. Frequency-Domain Features

2.4.3. Nonlinear Features

2.5. Classification Analysis

2.5.1. Machine-Learning Models

2.5.2. Deep-Learning Model

2.5.3. EEGNet-Based Saliency Analysis

2.6. Source-Space Estimation

3. Results

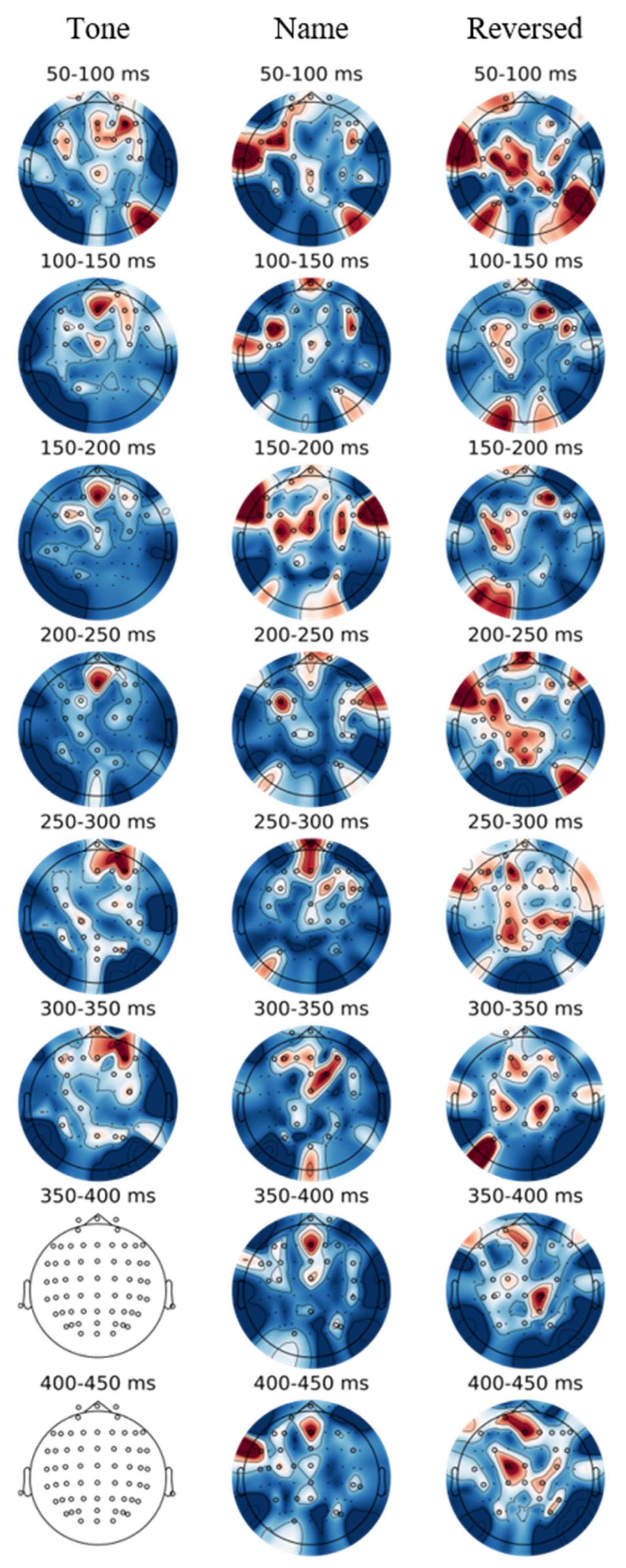

3.1. ERP Results

3.2. Source-Space Results

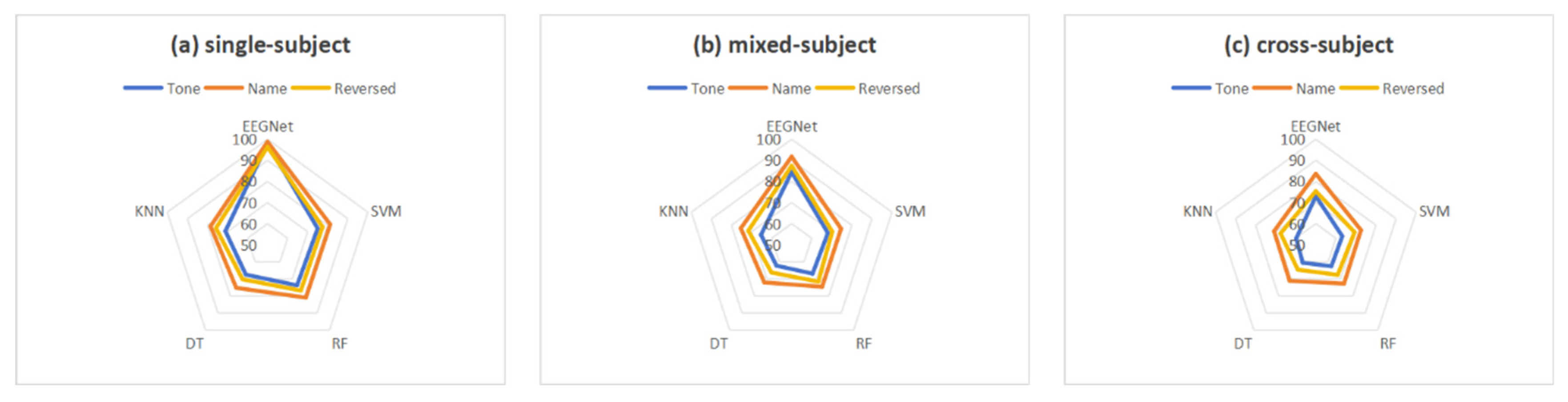

3.3. Classification Results

3.4. EEGNet Saliency Topography

4. Discussion

5. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

Appendix A

| Paradigm | Cluster ID | Time Range (ms) | Vertices | p-value | Primary Regions |
| --- | --- | --- | --- | --- | --- |
| Tone | 0 | 1.0 - 395.0 | 3889 | 0.0003 | superiorfrontal-lh: 278 (7.1%); precentral-lh: 256 (6.6%); superiorparietal-lh: 239 (6.1%); postcentral-lh: 202 (5.2%); rostralmiddlefrontal-lh: 201 (5.2%) |
| Tone | 1 | 5.0 - 450.0 | 3748 | 0.0003 | inferiorparietal-rh: 241 (6.4%); superiorfrontal-rh: 238 (6.4%); superiorparietal-rh: 229 (6.1%); precentral-rh: 220 (5.9%); rostralmiddlefrontal-rh: 197 (5.3%) |
| Tone | 2 | 37.0 - 107.0 | 97 | 0.0180 | superiorparietal-lh: 50 (51.5%); lateraloccipital-lh: 26 (26.8%); inferiorparietal-lh: 21 (21.6%) |
| Tone | 3 | 134.0 - 208.0 | 52 | 0.0233 | superiorparietal-rh: 40 (76.9%); inferiorparietal-rh: 12 (23.1%) |
| Tone | 4 | 351.0 - 398.0 | 70 | 0.0410 | inferiorparietal-rh: 35 (50.0%); superiorparietal-rh: 35 (50.0%) |
| Tone | 5 | 360.0 - 450.0 | 1920 | 0.0007 | precentral-lh: 159 (8.3%); superiortemporal-lh: 140 (7.3%); insula-lh: 118 (6.1%); postcentral-lh: 107 (5.6%); precuneus-lh: 100 (5.2%) |
| Tone | 6 | 401.0 - 430.0 | 74 | 0.0350 | superiorparietal-rh: 74 (100.0%) |
| Name | 0 | 322.0 - 397.0 | 61 | 0.0127 | lateralorbitofrontal-lh: 5 (8.2%); medialorbitofrontal-lh: 1 (1.6%) |
| Name | 1 | 34.0 - 101.0 | 117 | 0.0167 | insula-lh: 64 (54.7%); parstriangularis-lh: 20 (17.1%); parsopercularis-lh: 11 (9.4%); superiortemporal-lh: 9 (7.7%); supramarginal-lh: 8 (6.8%) |
| Name | 2 | 49.0 - 118.0 | 69 | 0.0260 | supramarginal-rh: 43 (62.3%); postcentral-rh: 16 (23.2%); insula-rh: 9 (13.0%); precentral-rh: 1 (1.4%) |
| Name | 3 | 134.0 - 208.0 | 151 | 0.0083 | posteriorcingulate-lh: 48 (31.8%); caudalanteriorcingulate-lh: 7 (4.6%); isthmuscingulate-lh: 2 (1.3%) |
| Name | 4 | 60.0 - 114.0 | 120 | 0.0153 | posteriorcingulate-rh: 22 (18.3%); caudalanteriorcingulate-rh: 12 (10.0%); rostralanteriorcingulate-rh: 1 (0.8%) |
| Name | 5 | 111.0 - 270.0 | 1913 | 0.0003 | superiortemporal-rh: 128 (6.7%); precentral-rh: 117 (6.1%); precuneus-rh: 111 (5.8%); superiorparietal-rh: 106 (5.5%); supramarginal-rh: 101 (5.3%) |
| Name | 6 | 121.0 - 265.0 | 1974 | 0.0003 | precentral-lh: 211 (10.7%); postcentral-lh: 171 (8.7%); superiorfrontal-lh: 117 (5.9%); superiortemporal-lh: 111 (5.6%); precuneus-lh: 109 (5.5%) |
| Name | 7 | 151.0 - 249.0 | 30 | 0.0340 | inferiorparietal-rh: 30 (100.0%) |
| Name | 8 | 153.0 - 235.0 | 22 | 0.0367 | precentral-rh: 22 (100.0%) |
| Name | 9 | 630.0 - 764.0 | 44 | 0.0150 | precentral-lh: 21 (47.7%); parsopercularis-lh: 14 (31.8%); caudalmiddlefrontal-lh: 8 (18.2%); rostralmiddlefrontal-lh: 1 (2.3%) |
| Name | 10 | 630.0 - 706.0 | 70 | 0.0443 | insula-rh: 46 (65.7%); supramarginal-rh: 13 (18.6%); postcentral-rh: 7 (10.0%); superiortemporal-rh: 3 (4.3%); precentral-rh: 1 (1.4%) |
| Name | 11 | 675.0 - 707.0 | 106 | 0.0170 | medialorbitofrontal-rh: 5 (4.7%); rostralanteriorcingulate-rh: 1 (0.9%) |
| Reversed | 0 | 422.0 - 508.0 | 46 | 0.0137 | caudalanteriorcingulate-lh: 29 (63.0%); rostralanteriorcingulate-lh: 6 (13.0%); posteriorcingulate-lh: 3 (6.5%); superiorfrontal-lh: 2 (4.3%) |
| Reversed | 1 | 724.0 - 800.0 | 58 | 0.0100 | none |
| Reversed | 2 | 730.0 - 800.0 | 41 | 0.0180 | posteriorcingulate-rh: 27 (65.9%); precuneus-rh: 14 (34.1%) |
| Reversed | 3 | 731.0 - 800.0 | 53 | 0.0037 | posteriorcingulate-lh: 22 (41.5%); isthmuscingulate-lh: 12 (22.6%); caudalanteriorcingulate-lh: 1 (1.9%) |
| Reversed | 4 | 733.0 - 808.0 | 120 | 0.0023 | posteriorcingulate-rh: 24 (20.0%); isthmuscingulate-rh: 16 (13.3%); caudalanteriorcingulate-rh: 2 (1.7%) |
| Reversed | 5 | 205.0 - 275.0 | 35 | 0.0283 | precentral-rh: 31 (88.6%); caudalmiddlefrontal-rh: 2 (5.7%); parsopercularis-rh: 2 (5.7%) |
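The percentages in the Primary Regions column are consistent with each region's vertex count divided by the total vertex count of its cluster, although the table itself does not state this. Under that assumption, the listed regions need not sum to 100%, and the remainder would correspond to vertices outside the listed regions. The following minimal Python sketch checks this reading against two rows of the table; the function names `region_shares` and `unlisted_vertices` are hypothetical helpers introduced here for illustration and are not part of the original analysis.

```python
def region_shares(cluster_vertices, region_counts):
    """Percentage of a cluster's vertices that fall in each listed region.

    Assumes (not stated in the table) that each percentage is
    count / cluster_vertices * 100, rounded to one decimal place.
    """
    if cluster_vertices <= 0:
        raise ValueError("cluster_vertices must be positive")
    return {
        region: round(100.0 * count / cluster_vertices, 1)
        for region, count in region_counts.items()
    }


def unlisted_vertices(cluster_vertices, region_counts):
    """Vertices in the cluster that are not attributed to any listed region."""
    return cluster_vertices - sum(region_counts.values())


# Values copied from the table: Tone cluster 0 (3889 vertices, first two
# regions only) and Name cluster 0 (61 vertices).
tone_c0 = {"superiorfrontal-lh": 278, "precentral-lh": 256}
name_c0 = {"lateralorbitofrontal-lh": 5, "medialorbitofrontal-lh": 1}

print(region_shares(3889, tone_c0))
print(region_shares(61, name_c0))
print(unlisted_vertices(61, name_c0))
```

For these rows the computed shares reproduce the tabulated values (7.1% and 6.6% for the Tone cluster; 8.2% and 1.6% for the Name cluster), and 55 of the 61 vertices in Name cluster 0 are not attributed to a listed region.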

| Paradigm | MMN latency (ms) | MMN amplitude | P300 latency (ms) | P300 amplitude |
| --- | --- | --- | --- | --- |
| Tone | 158.0 | -2.30 | 310.0 | 1.77 |
| Name | 205.0 | -6.39 | 371.0 | 3.95 |
| Reversed | 259.0 | -5.67 | 450.0 | 2.55 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).