Submitted: 09 April 2026

Posted: 09 April 2026


Abstract

Keywords:

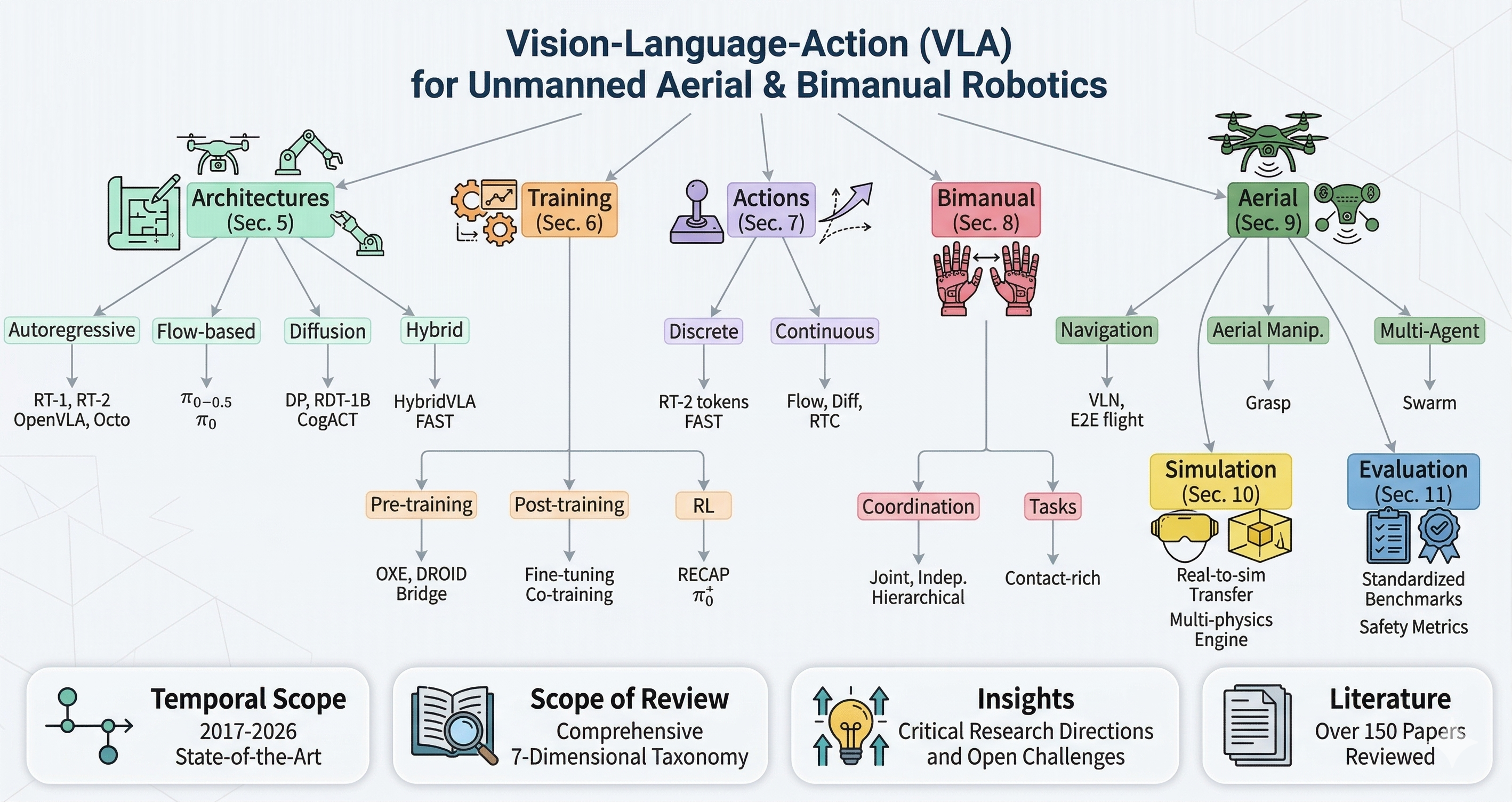

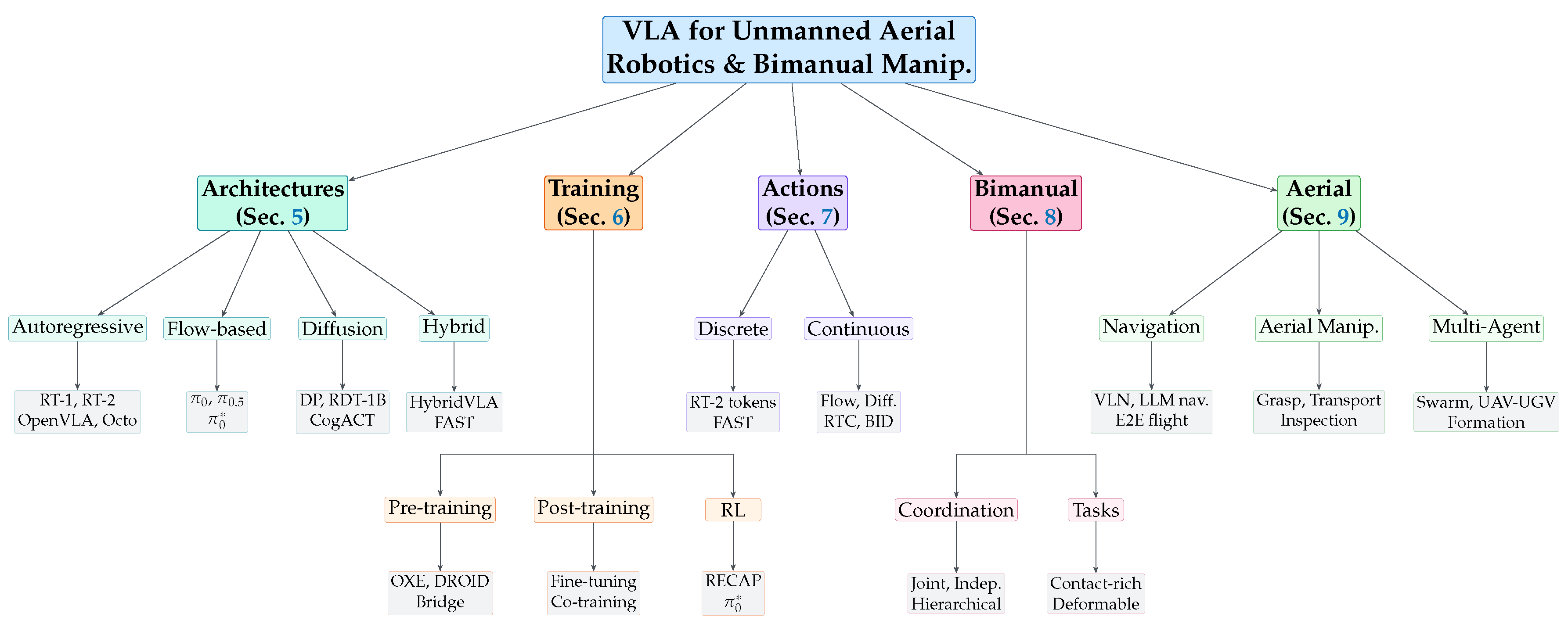

1. Introduction

- A unified taxonomy of VLA models covering architectures, training, action representations, bimanual manipulation, and unmanned aerial robotics, with comparison tables spanning 30+ methods.

- The first cross-domain analysis connecting bimanual coordination strategies to multi-drone and aerial manipulation systems, showing how insights transfer between embodiments.

- Fourteen research directions identifying open challenges across both domains, from real-time control and safety certification to end-to-end drone VLAs and bridging the research-to-production gap.

2. Problem Definition and Scope

2.1. VLA Policy Formulation

2.2. Action Chunking

2.3. Flow Matching for Action Generation

2.4. Bimanual Coordination

1. Independent: Each arm executes its own subtask without coupling (e.g., one arm picks an object while the other holds a container).

2. Loosely coupled: Arms must coordinate timing but not forces (e.g., handover tasks where one arm releases as the other grasps).

3. Tightly coupled: Arms must coordinate both motion and forces simultaneously (e.g., folding fabric where both arms must apply tension).
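The three coupling levels above can be read as a simple decision rule. The following is a minimal Python sketch, assuming two hypothetical boolean task attributes (`needs_timing_sync` and `needs_force_sync`) that are not part of the original taxonomy; it is meant as an illustration rather than a definitive classifier.

```python
from enum import Enum


class Coupling(Enum):
    """Bimanual coordination levels, as listed above."""
    INDEPENDENT = 1      # each arm runs its own subtask
    LOOSELY_COUPLED = 2  # arms coordinate timing, not forces
    TIGHTLY_COUPLED = 3  # arms coordinate motion and forces


def classify_coupling(needs_timing_sync: bool, needs_force_sync: bool) -> Coupling:
    """Map two hypothetical task attributes to a coupling level.

    Assumption: any force coordination is treated as tight coupling,
    regardless of the timing flag.
    """
    if needs_force_sync:
        return Coupling.TIGHTLY_COUPLED
    if needs_timing_sync:
        return Coupling.LOOSELY_COUPLED
    return Coupling.INDEPENDENT


# Example tasks taken from the list above.
examples = {
    "pick while other arm holds container": classify_coupling(False, False),
    "handover": classify_coupling(True, False),
    "fabric folding under tension": classify_coupling(True, True),
}
for task, level in examples.items():
    print(f"{task}: {level.name}")
```

Treating the levels as an ordered enum makes it easy to tag dataset episodes or benchmark tasks by the strictest coordination they require.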

2.5. Scope of This Review

3. Background

3.1. Vision-Language Models

3.2. Imitation Learning

3.3. Generative Modeling for Actions

3.3.1. Autoregressive Models

3.3.2. Diffusion Models

3.3.3. Flow Matching

3.4. Bimanual Robotic Systems

3.5. Aerial Robotic Systems

4. Datasets, Benchmarks, and Evaluation

4.1. Pre-training Datasets

4.2. Simulation Benchmarks

4.3. Evaluation Metrics

| Dataset | Episodes | Embodiments | Tasks | Bimanual | Language | Year |

|---|---|---|---|---|---|---|

| OXE [47] | >1M | 22 | 527 | ✓ | ✓ | 2024 |

| DROID [48] | 76K | 1 (Franka) | 86 | – | ✓ | 2024 |

| BridgeData V2 [49] | 60K | 1 (WidowX) | 13 | – | ✓ | 2023 |

| ALOHA [17] | ∼1K | 1 (ALOHA) | 6 | ✓ | – | 2023 |

| GigaBrain-0.5M [51] | 500K | Multiple | 200+ | ✓ | ✓ | 2025 |

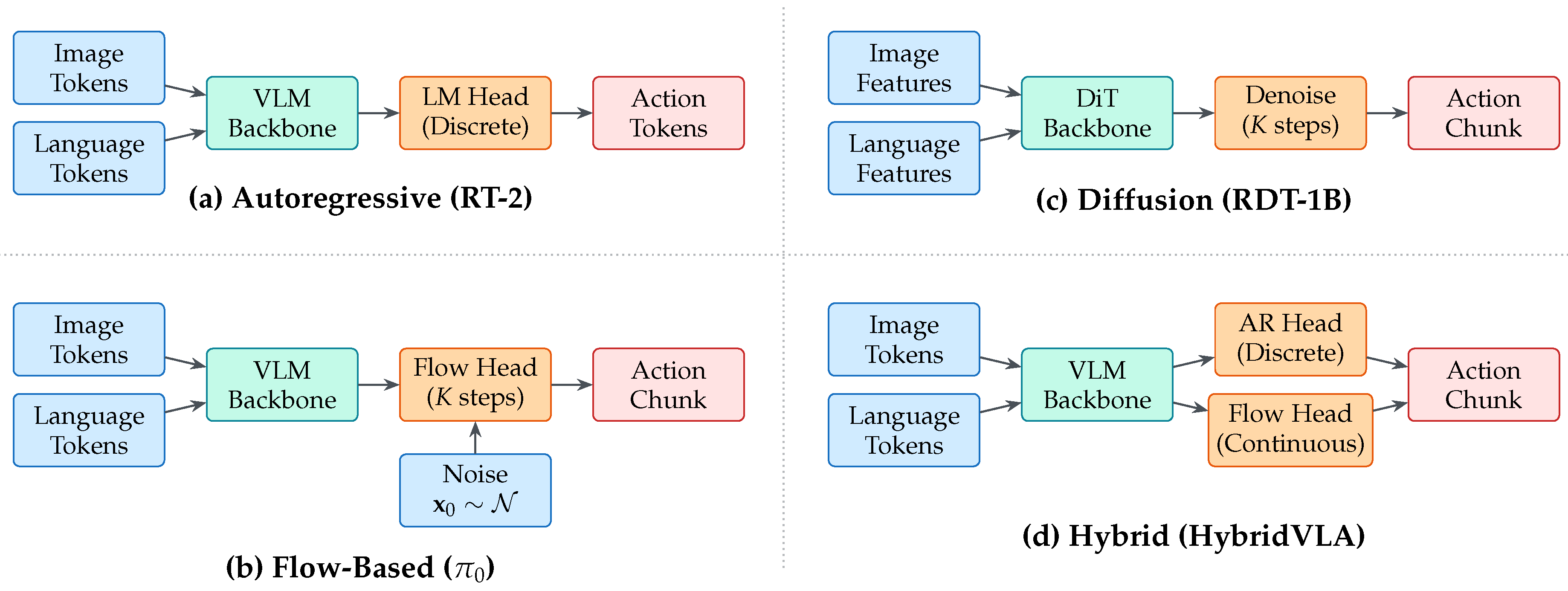

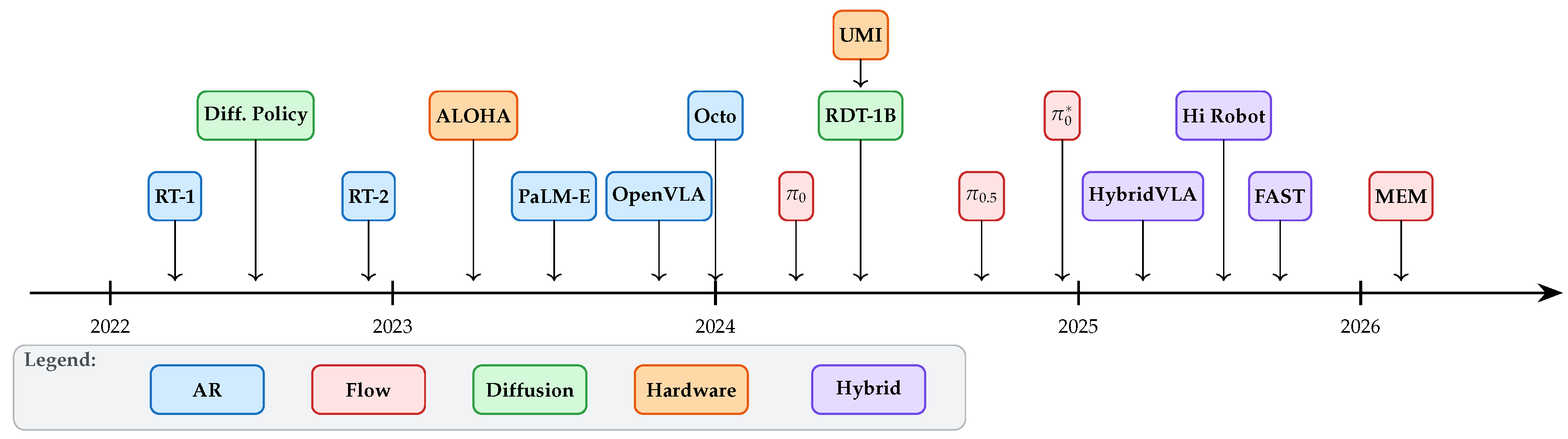

5. VLA Architectures and Foundations

5.1. Autoregressive VLAs

5.1.1. RT-1 and RT-2

5.1.2. OpenVLA

5.1.3. Octo

5.2. Flow-Based VLAs

5.2.1. π0

5.2.2. π0.5

5.2.3. π*0.6 and RECAP

5.3. Diffusion-Based VLAs

5.3.1. Diffusion Policy

5.3.2. RDT-1B

5.3.3. CogACT

5.4. Hybrid and Efficient VLAs

5.4.1. HybridVLA

5.4.2. TinyVLA

5.4.3. MiniVLA

5.4.4. FAST

| Method | Action Type | VLM Backbone | Params | Chunk H | Bimanual | Open-Source | Year |

|---|---|---|---|---|---|---|---|

| RT-1 [60] | AR (discrete) | EfficientNet | 35M | 1 | – | – | 2022 |

| RT-2 [1] | AR (discrete) | PaLI-X/PaLM-E | 55B | 1 | – | – | 2023 |

| Octo [6] | Diff head | Custom | 93M | 4 | ✓ | ✓ | 2024 |

| OpenVLA [5] | AR (discrete) | Prismatic | 7B | 1 | – | ✓ | 2024 |

| π0 [2] | FM | PaLIGemma | 3B | 50 | ✓ | – | 2024 |

| π0.5 [3] | FM (hierarchical) | PaLIGemma | 3B | 50 | ✓ | – | 2025 |

| π*0.6 [4] | FM + RL | PaLIGemma | 3B | 50 | ✓ | – | 2025 |

| RDT-1B [44] | Diff (DiT) | SigLIP + T5 | 1.2B | 64 | ✓ | ✓ | 2024 |

| CogACT [70] | Diff head | VLM | 7B | 16 | – | ✓ | 2025 |

| HybridVLA [75] | AR + FM | VLM | 7B | 50 | ✓ | – | 2025 |

| TinyVLA [7] | Distilled | Small VLM | 1B | 10 | – | ✓ | 2024 |

| MiniVLA [76] | Efficient head | Small VLM | 300M | 8 | – | ✓ | 2024 |

| FAST [8] | AR (learned tok.) | VLM | 7B | 50 | – | ✓ | 2025 |

| Hi Robot [29] | Hierarchical FM | VLM | 3B+ | 50 | ✓ | – | 2025 |

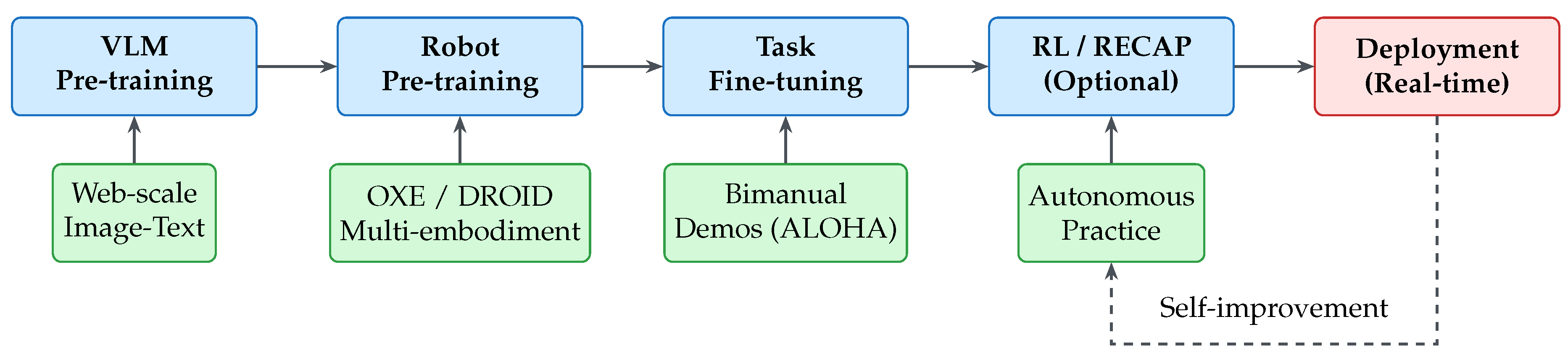

6. Training Recipes and Data Strategies

6.1. Pre-training

6.2. Post-training and Fine-tuning

6.3. Reinforcement Learning for VLAs

Algorithm 1 RECAP: RL with Experience and Corrections via Advantage-conditioned Policies

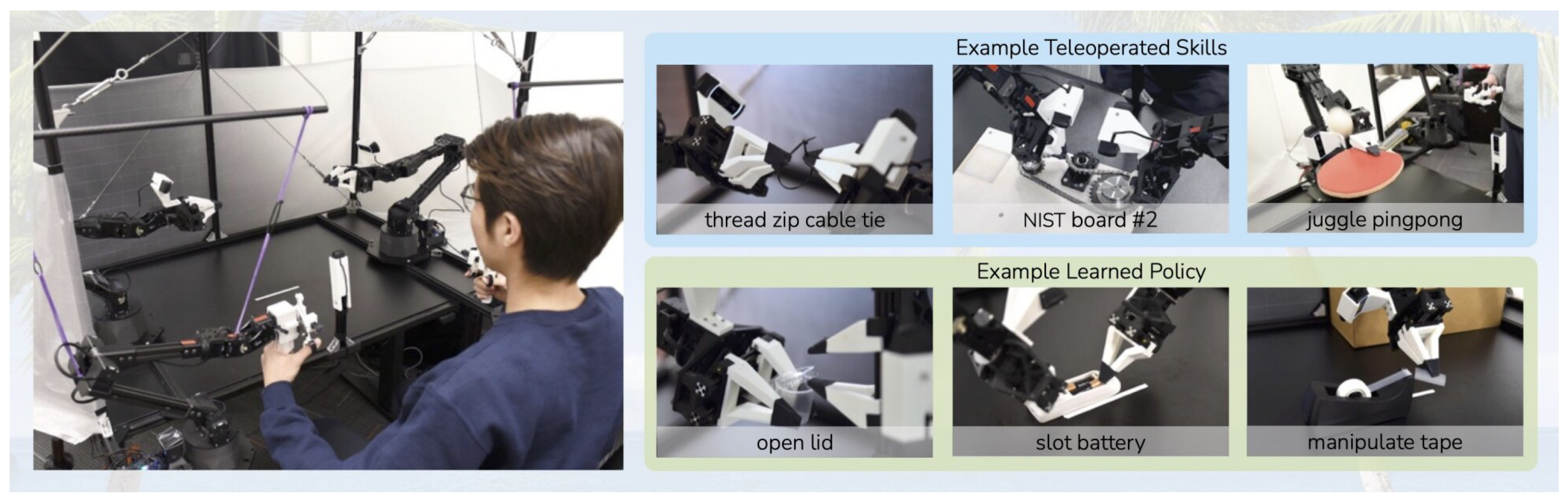

6.4. Data Collection for Bimanual Manipulation

6.5. Data Scaling Laws

7. Action Representations and Real-Time Execution

7.1. Discrete Action Tokenization

7.2. Continuous Action Generation

7.3. Action Chunking Strategies

7.4. Real-Time Chunking (RTC)

7.5. Bidirectional Decoding (BID)

7.6. Training-Time Action Conditioning

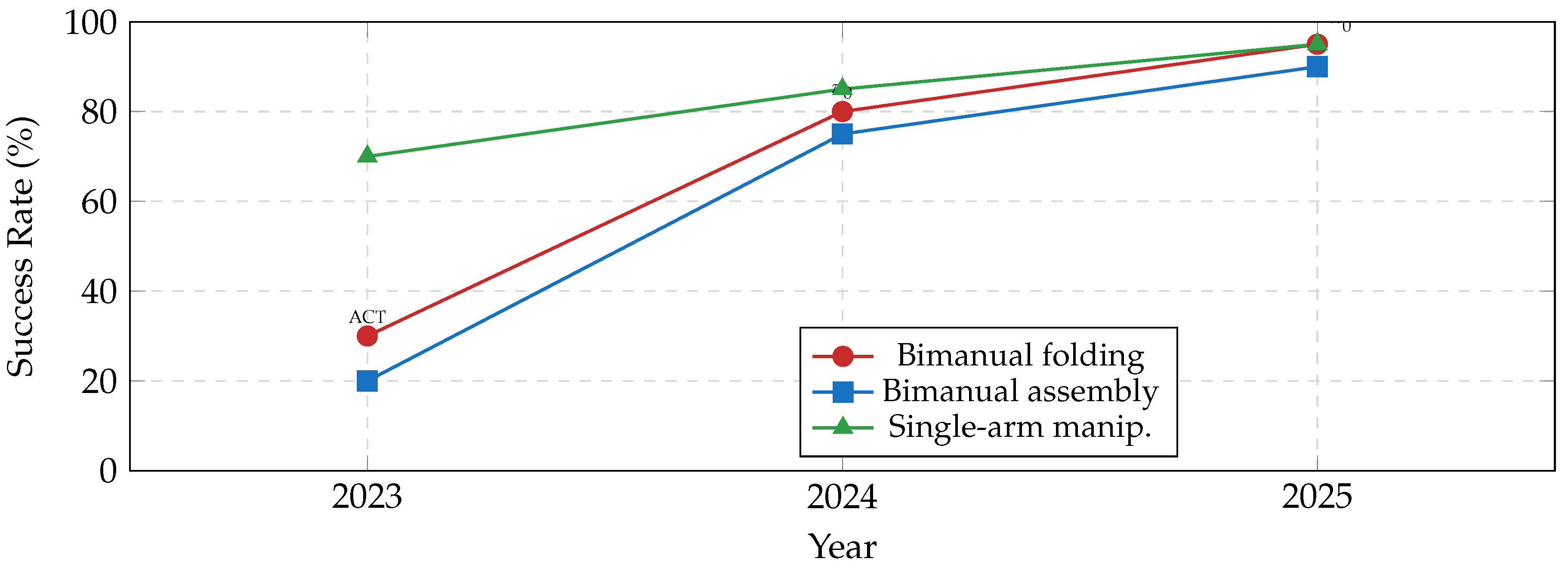

8. Bimanual Manipulation with VLAs

8.1. Coordination Strategies

8.1.1. Joint Action Space

8.1.2. Independent Policies

8.1.3. Leader-Follower

8.2. Contact-Rich Bimanual Tasks

8.3. Deformable Object Manipulation

8.4. Long-Horizon Bimanual Tasks

8.5. Mobile Bimanual Manipulation

| Task Category | Specific Task | RDT-1B | ACT | Diff. Policy | ||

|---|---|---|---|---|---|---|

| Deformable | Laundry folding | 80 | 92 | – | 50 | 35 |

| | Towel folding | 85 | 95 | 60 | 55 | 40 |

| Contact-rich | Box assembly | 75 | 88 | 55 | 40 | 30 |

| | Peg insertion (bimanual) | 70 | 85 | 50 | 45 | 35 |

| Long-horizon | Table busing | 65 | 80 | – | 30 | – |

| | Kitchen cleanup | 60 | 78 | – | – | – |

| Coordination | Object handover | 90 | 95 | 75 | 70 | 60 |

| | Collaborative lift | 85 | 93 | 70 | 60 | 50 |

| Platform | DOF/arm | Mobile | Cost |

|---|---|---|---|

| ALOHA [17] | 6+1 | – | <$20K |

| Mobile ALOHA [42] | 6+1 | ✓ | <$30K |

| Franka Dual | 7+1 | – | >$60K |

| UMI [43] | N/A* | – | <$5K |

*UMI is a data collection interface, not a robot.

| Strategy | Coupling | Methods | |

|---|---|---|---|

| Joint space | ✓ | , RDT-1B, ACT | |

| Independent | – | Hi Robot | |

| Leader-follower | Partial | Custom setups | |

| Hierarchical | Variable | ✓ | , Hi Robot |

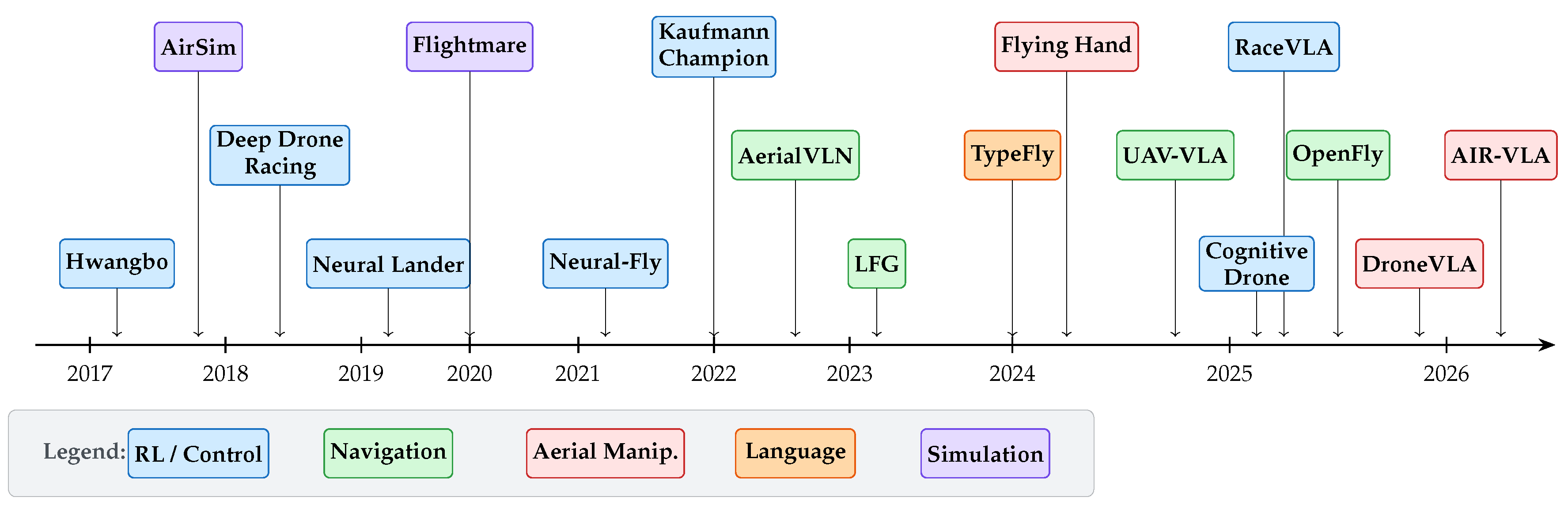

9. VLA for Unmanned Aerial Robotics and Drones

9.1. VLA-Based Drone Navigation and Control

9.1.1. Vision-Language Navigation for UAVs

9.1.2. End-to-End Learned Flight Control

9.2. Aerial Manipulation

9.2.1. Grasping and Payload Transport

9.3. Language-Guided Drone Missions

9.3.1. Natural Language to Flight Plans

9.3.2. Interactive and Corrective Language Control

9.4. Multi-Agent Aerial Systems

9.5. UAV-UGV Collaborative Systems

9.6. Sim-to-Real Transfer for Aerial VLAs

9.6.1. Simulation Environments

9.6.2. Domain Adaptation and Reality Gap

| Method | Task | Approach | Action Type | Sim | Year | Highlights |

|---|---|---|---|---|---|---|

| Navigation and Control | ||||||

| AerialVLN [129] | VL navigation | VLN baseline | Waypoints | ✓ | 2023 | First outdoor aerial VLN benchmark |

| LFG [130] | Language nav. | LLM → cost map | Waypoints | – | 2023 | Zero-shot LLM-guided navigation |

| UAV-VLA [132] | Mission gen. | VLA (sat. imagery) | Waypoints | – | 2025 | faster than human; 100K missions |

| UAV-VLN [133] | VL navigation | LLM + vision | Waypoints | ✓ | 2025 | End-to-end VLN with LLM parsing |

| OpenFly [134] | VLN benchmark | Toolchain | Waypoints | ✓ | 2025 | Large-scale aerial VLN benchmark |

| CityNavAgent [135] | City-scale nav. | Hierarchical VLN | Waypoints | ✓ | 2025 | Semantic planning + global memory |

| Hwangbo et al. [136] | Stabilization | RL | Motor cmds | ✓ | 2017 | s inference; thrown recovery |

| Kaufmann et al. [137] | Drone racing | RL | Motor cmds | ✓ | 2023 | Superhuman agile flight |

| CognitiveDrone [9] | Cognitive tasks | VLA | 4D | ✓ | 2025 | 77.2% success with VLM reasoning |

| RaceVLA [139] | Drone racing | VLA | Velocity cmds | ✓ | 2025 | Human-like racing behavior |

| Neural-Fly [141] | Agile flight | Adaptive NN | Motor cmds | – | 2022 | Online adaptation in strong winds |

| Dream to Fly [140] | Vision flight | Model-based RL | Velocity cmds | ✓ | 2025 | Learned world model for planning |

| Aerial Manipulation | ||||||

| Zhang et al. [142] | Aerial grasp | RL | Thrust + grip | ✓ | 2019 | Flight-grasp coordination |

| DroneVLA [10] | Object retrieval | VLA + servoing | EE pose + grip | – | 2026 | Language-commanded aerial manipulation |

| AIR-VLA [11] | Aerial manip. | End-to-end VLA | Flight + grip | – | 2026 | Safety-constrained; 20 Hz inference |

| Flying Hand [12] | Dexterous manip. | ACT + MPC | 6-DOF + 4-DOF arm | – | 2025 | Hexarotor; writing, peg-in-hole |

| Aerial Bimanual [143] | Harvesting | Dual-arm aerial | Dual-arm cmds | – | 2024 | Bimanual aerial manipulation |

| Language-Guided Missions | ||||||

| AeroAgent [144] | Mission plan | LLM agent | API calls | – | 2025 | LLM mission decomposition |

| TypeFly [145] | Mission plan | LLM → MiniSpec | API calls | – | 2024 | Low-latency program generation |

| Multi-Agent | ||||||

| MARL Swarms [147] | Formation | Decentralized MARL | Velocity cmds | ✓ | 2021 | Scalable to 10+ drones |

| Simulation, Datasets, and Sim-to-Real | ||||||

| AirSim [45] | Sim platform | UE4 rendering | Various | ✓ | 2018 | Photorealistic drone sim |

| Flightmare [46] | Sim platform | Parallel RL | Various | ✓ | 2021 | real-time training |

| TartanAir [150] | Dataset | Multi-modal | – | ✓ | 2020 | Diverse visual conditions; SLAM focus |

| Mid-Air [151] | Dataset | Multi-modal | – | ✓ | 2019 | Low-altitude flights; depth + semantics |

10. Language Grounding, Reasoning, and Generalization

10.1. Language-Conditioned Policies

10.2. Hierarchical Reasoning

10.3. Open-Ended Instruction Following

10.4. Cross-Embodiment Transfer

10.5. Zero-Shot and Few-Shot Generalization

11. Cross-Cutting Concerns

11.1. Visual Representation Learning

11.2. Safety

11.3. Sim-to-Real Transfer

11.4. World Models and Future State Prediction

11.5. Human-Robot Interaction

11.6. Scalability and Deployment

| Method | Visual Encoder | Multi-View | Safety | Sim-to-Real | HRI | Proprioception |

|---|---|---|---|---|---|---|

| RT-1 [60] | EfficientNet | – | Basic | – | – | – |

| RT-2 [1] | ViT (PaLI-X) | – | Basic | – | Partial | – |

| OpenVLA [5] | DINOv2+SigLIP | – | – | – | – | – |

| π0 [2] | SigLIP | ✓ | Rate limit | – | – | ✓ |

| π0.5 [3] | SigLIP | ✓ | Multi-layer | – | ✓ | ✓ |

| π*0.6 [4] | SigLIP | ✓ | Rate limit | – | – | ✓ |

| RDT-1B [44] | SigLIP | ✓ | Basic | – | – | ✓ |

| Octo [6] | Custom ViT | ✓ | – | ✓ | – | – |

12. Discussion and Conclusions

12.1. State of the Art Performance

| System | Organization | Architecture | Key Innovation | Deployment | Year |

|---|---|---|---|---|---|

| Dual-System (S1/S2) Architectures | |||||

| Gemini Robotics [178] | Google DeepMind | VLM + action decoder | Actions as native Gemini modality | Partner testing | 2025 |

| GR00T N1 [179] | NVIDIA | VLM (10 Hz) + DiT (120 Hz) | Open-source; neural trajectory augment. | Research | 2025 |

| Helix [180] | Figure AI | VLM (7 Hz) + action (200 Hz) | 35-DOF on embedded GPU | BMW factory | 2025 |

| Video-as-Action / World Models | |||||

| FutureVision [81] | Rhoda AI | Causal video → inv. dynamics | Web-scale video pre-training | Industrial pilots | 2026 |

| 1XWM [181] | 1X Technologies | Diffusion WM → IDM | 900h human video + 70h robot | Development | 2025 |

| RFM-1 [185] | Covariant | 8B AR world model | 99%+ warehouse precision | Hundreds of sites | 2024 |

| Continuous Autonomous Improvement | |||||

| DYNA-1 [182] | Dyna Robotics | FM + proprietary RM | 99.4% success, 24h autonomy | Commercial sites | 2025 |

| π*0.6 [4] | Physical Intelligence | FM + RECAP (RL) | 10–40% over demo baseline | Research | 2025 |

| Novel Data Collection | |||||

| ACT-1 [184] | Sunday Robotics | Zero robot data; glove demos | 10M episodes from 500+ homes | Beta 2026 | 2026 |

| AgiBot World [127] | AgiBot | Latent action repr. | 1M+ trajectories; 30% over OXE | Shipping at scale | 2025 |

| Open-Source VLAs | |||||

| Xiaomi-Robotics-0 [157] | Xiaomi | MoT + DiT (4.7B) | LIBERO 98.7% SOTA | Open-source | 2025 |

| GR00T N1 [179] | NVIDIA | VLM + DiT (2.2B) | Weights + data released | Open-source | 2025 |

| Method | Params | Latency | Min. GPU |

|---|---|---|---|

| RT-2 [1] | 55B | ∼1 s | TPU v4 |

| OpenVLA [5] | 7B | ∼150 ms | A100 |

| π0 [2] | 3B | ∼70 ms | A100 |

| RDT-1B [44] | 1.2B | ∼150 ms | A6000 |

| TinyVLA [7] | 1B | ∼40 ms | RTX 4090 |

| MiniVLA [76] | 300M | ∼25 ms | RTX 3090 |

| FAST [8] | 7B | ∼80 ms | A100 |
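As a rough reading of the latency column, a policy that performs one blocking inference per control cycle cannot replan faster than the reciprocal of its latency. The following minimal Python sketch works through that arithmetic. The one-inference-per-cycle assumption and the helper name `max_replan_rate_hz` are illustrative. The approximate latencies are copied from the table and keyed by the row labels as they appear there.

```python
# Approximate per-inference latencies (ms), copied from the table above.
LATENCY_MS = {
    "RT-2 [1]": 1000,
    "OpenVLA [5]": 150,
    "[2]": 70,
    "RDT-1B [44]": 150,
    "TinyVLA [7]": 40,
    "MiniVLA [76]": 25,
    "FAST [8]": 80,
}


def max_replan_rate_hz(latency_ms: float) -> float:
    """Upper bound on replans per second, assuming one blocking inference per cycle."""
    if latency_ms <= 0:
        raise ValueError("latency must be positive")
    return 1000.0 / latency_ms


for method, latency in LATENCY_MS.items():
    print(f"{method}: {max_replan_rate_hz(latency):.1f} Hz")
```

Action chunking relaxes this bound in practice, since each inference yields a chunk of H actions that can be executed while the next inference is computed.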

- Distribution shift: VLA policies still degrade when encountering out-of-distribution observations, especially for bimanual tasks where object configurations have high variability.

- Contact modeling: Precise force control during bimanual contact is not addressed by current position-space VLAs.

- Evaluation standardization: The lack of common bimanual benchmarks prevents fair comparison across methods.

- Data scarcity: High-quality bimanual demonstrations remain expensive to collect, limiting the scale of bimanual VLA training.

- Temporal credit assignment: For long-horizon bimanual tasks, determining which actions contributed to success or failure is difficult, hindering RL-based improvement.

12.2. Summary and Discussion

12.3. Research Directions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

Abbreviations

| VLA | Vision-Language-Action model |

| VLM | Vision-Language Model |

| BC | Behavioral Cloning |

| IL | Imitation Learning |

| RL | Reinforcement Learning |

| FM | Flow Matching |

| ODE | Ordinary Differential Equation |

| DOF | Degrees of Freedom |

| OXE | Open X-Embodiment |

| AR | Autoregressive |

| DiT | Diffusion Transformer |

| RECAP | RL with Experience and Corrections via Advantage-conditioned Policies |

| RTC | Real-Time Chunking |

| TTAC | Training-Time Action Conditioning |

| BID | Bidirectional Decoding |

| DVA | Direct Video Action |

| WM | World Model |

| MEM | Multi-Scale Embodied Memory |

| UAV | Unmanned Aerial Vehicle |

| UGV | Unmanned Ground Vehicle |

| VLN | Vision-Language Navigation |

| MAV | Micro Aerial Vehicle |

| IMU | Inertial Measurement Unit |

References

- Brohan, A.; Brown, N.; Carbajal, J.; Chebotar, Y.; Chen, X.; Choromanski, K.; Ding, T.; Driess, D.; Dubey, A.; Finn, C.; et al. RT-2: Vision-Language-Action Models Transfer Web Knowledge to Robotic Control. arXiv preprint arXiv:2307.15818 2023.

- Black, K.; Brown, N.; Driess, D.; Esmail, A.; Equi, M.; Finn, C.; Fusai, N.; Groom, L.; Hausman, K.; Ichter, B.; et al. π0: A Vision-Language-Action Flow Model for General Robot Control. arXiv preprint arXiv:2410.24164 2024.

- Black, K.; Brown, N.; Driess, D.; Esmail, A.; Equi, M.; Finn, C.; Fusai, N.; Groom, L.; Hausman, K.; Ichter, B.; et al. π0.5: A Vision-Language-Action Model with Open-World Generalization. In Proceedings of the Conference on Robot Learning (CoRL), 2025.

- Amin, R.; Black, K.; Brown, N.; Driess, D.; Esmail, A.; Equi, M.; Finn, C.; Fusai, N.; Groom, L.; Hausman, K.; et al. π*0.6: A VLA That Learns From Experience. arXiv preprint arXiv:2511.14759 2025.

- Kim, M.J.; Pertsch, K.; Karamcheti, S.; Xiao, T.; Balakrishna, A.; Nair, S.; Rafailov, R.; Foster, E.; Lam, G.; Nasiriany, M.; et al. OpenVLA: An Open-Source Vision-Language-Action Model. arXiv preprint arXiv:2406.09246 2024.

- Octo Model Team.; Ghosh, D.; Walke, H.; Pertsch, K.; Black, K.; Mees, O.; Dasari, S.; Hejna, J.; Kreiman, T.; Xu, C.; et al. Octo: An Open-Source Generalist Robot Policy. In Proceedings of Robotics: Science and Systems (RSS), 2024.

- Wen, J.; Zhu, Y.; Zhang, J.; Mu, M.; Qi, Z.; Peng, Z.; Wan, G.; Li, T.; Huang, J.; Lu, H. TinyVLA: Towards Fast, Data-Efficient Vision-Language-Action Models for Robotic Manipulation. arXiv preprint arXiv:2409.12514 2024.

- Pertsch, K.; Kim, M.J.; Luo, J.; Levine, S.; Finn, C. FAST: Efficient Action Tokenization for Vision-Language-Action Models. In Proceedings of Robotics: Science and Systems (RSS), 2025.

- Arshad, A.; Jia, X.; Sun, L. CognitiveDrone: A VLA Model and Evaluation Benchmark for Real-Time Cognitive Task Solving and Reasoning in UAVs. arXiv preprint arXiv:2503.01378 2025.

- Saha, A.; Mishra, S.; Fang, Y.; Liu, M.; Xu, D.; Garg, A. DroneVLA: Vision-Language-Action Model for Aerial Manipulation. arXiv preprint arXiv:2601.13809 2026.

- Liu, C.; Chen, Z.; Zhao, Y.; Liu, M.; Luo, W. AIR-VLA: End-to-End Vision-Language-Action Model for Aerial Manipulation. arXiv preprint arXiv:2601.21602 2026.

- Zhou, G.; Li, Y.C.; Pan, Y.; Huang, Z.; Lin, Y.A.; Xu, J.; Song, S. Flying Hand: End-Effector-Centric Framework for Versatile Aerial Manipulation Imitation Learning. arXiv preprint arXiv:2504.10334 2025.

- Firoozi, R.; Tucker, J.; Tian, S.; Majumdar, A.; Sun, J.; Liu, W.; Zhu, Y.; Song, S.; Kapoor, A.; Hausman, K.; et al. Foundation Models in Robotics: Applications, Challenges, and the Future. The International Journal of Robotics Research 2024, 43, 2164–2204.

- Wolf, R.; Shi, Y.; Liu, S.; Rayyes, R. Diffusion Models for Robotic Manipulation: A Survey. Frontiers in Robotics and AI 2025, 12.

- Abbas, M.; Narayan, J.; Dwivedy, S.K. A Systematic Review on Cooperative Dual-Arm Manipulators: Modeling, Planning, Control, and Vision Strategies. International Journal of Intelligent Robotics and Applications 2023, 7, 683–707.

- Kreiman, T.; Levine, S.; Finn, C. Bimanual Coordination for Robot Manipulation: A Survey. arXiv preprint 2024.

- Zhao, T.Z.; Kumar, V.; Levine, S.; Finn, C. Learning Fine-Grained Bimanual Manipulation with Low-Cost Hardware. arXiv preprint arXiv:2304.13705 2023.

- Lipman, Y.; Chen, R.T.Q.; Ben-Hamu, H.; Nickel, M. Flow Matching for Generative Modeling. arXiv preprint arXiv:2210.02747 2022.

- Vaswani, A.; Shazeer, N.; Parmar, N.; Uszkoreit, J.; Jones, L.; Gomez, A.N.; Kaiser, Ł.; Polosukhin, I. Attention Is All You Need. In Proceedings of the Advances in Neural Information Processing Systems (NeurIPS), 2017.

- Dosovitskiy, A.; Beyer, L.; Kolesnikov, A.; Weissenborn, D.; Zhai, X.; Unterthiner, T.; Dehghani, M.; Minderer, M.; Heigold, G.; Gelly, S.; et al. An Image is Worth 16x16 Words: Transformers for Image Recognition at Scale. In Proceedings of the International Conference on Learning Representations (ICLR), 2021.

- Radford, A.; Kim, J.W.; Hallacy, C.; Ramesh, A.; Goh, G.; Agarwal, S.; Sastry, G.; Askell, A.; Mishkin, P.; Clark, J.; et al. Learning Transferable Visual Models From Natural Language Supervision. In Proceedings of the International Conference on Machine Learning (ICML), 2021.

- Brown, T.B.; Mann, B.; Ryder, N.; Subbiah, M.; Kaplan, J.; Dhariwal, P.; Neelakantan, A.; Shyam, P.; Sastry, G.; Askell, A.; et al. Language Models Are Few-Shot Learners. In Proceedings of the Advances in Neural Information Processing Systems (NeurIPS), 2020.

- Ouyang, L.; Wu, J.; Jiang, X.; Almeida, D.; Wainwright, C.L.; Mishkin, P.; Zhang, C.; Agarwal, S.; Slama, K.; Ray, A.; et al. Training Language Models to Follow Instructions with Human Feedback. Advances in Neural Information Processing Systems (NeurIPS) 2022.

- Driess, D.; Xia, F.; Sajjadi, M.S.M.; Lynch, C.; Chowdhery, A.; Ichter, B.; Wahid, A.; Tompson, J.; Vuong, Q.; Yu, T.; et al. PaLM-E: An Embodied Multimodal Language Model. In Proceedings of the International Conference on Machine Learning (ICML), 2023.

- Beyer, L.; Steiner, A.; Pinto, A.S.; Kolesnikov, A.; Wang, X.; Salz, D.; Neumann, M.; Alabdulmohsin, I.; Tschannen, M.; Bugliarello, E.; et al. PaLIGemma: A Versatile 3B VLM for Transfer. arXiv preprint arXiv:2407.07726 2024.

- Gemma Team. Gemma: Open Models Based on Gemini Research and Technology. arXiv preprint arXiv:2403.08295 2024.

- Touvron, H.; Lavril, T.; Izacard, G.; Martinet, X.; Lachaux, M.A.; Lacroix, T.; Rozière, B.; Goyal, N.; Hambro, E.; Azhar, F.; et al. LLaMA: Open and Efficient Foundation Language Models. arXiv preprint arXiv:2302.13971 2023.

- Liu, H.; Li, C.; Wu, Q.; Lee, Y.J. Visual Instruction Tuning. In Proceedings of the Advances in Neural Information Processing Systems (NeurIPS), 2024.

- Shi, L.X.; Ichter, B.; Equi, M.; Levine, S.; Hausman, K. Hi Robot: Open-Ended Instruction Following with Hierarchical Vision-Language-Action Models. In Proceedings of the International Conference on Machine Learning (ICML), 2025.

- Pomerleau, D.A. ALVINN: An Autonomous Land Vehicle in a Neural Network. In Proceedings of the Advances in Neural Information Processing Systems (NeurIPS), 1989.

- Ross, S.; Gordon, G.J.; Bagnell, D. A Reduction of Imitation Learning and Structured Prediction to No-Regret Online Learning. In Proceedings of the International Conference on Artificial Intelligence and Statistics (AISTATS), 2011.

- Stepputtis, S.; Campbell, J.; Phielipp, M.; Lee, S.; Baral, C.; Ben Amor, H. Language-Conditioned Imitation Learning for Robot Manipulation Tasks. In Proceedings of the Advances in Neural Information Processing Systems (NeurIPS), 2020.

- Kingma, D.P.; Welling, M. Auto-Encoding Variational Bayes. In Proceedings of the International Conference on Learning Representations (ICLR), 2014.

- Goodfellow, I.; Pouget-Abadie, J.; Mirza, M.; Xu, B.; Warde-Farley, D.; Ozair, S.; Courville, A.; Bengio, Y. Generative Adversarial Nets. In Proceedings of the Advances in Neural Information Processing Systems (NeurIPS), 2014.

- Ho, J.; Jain, A.; Abbeel, P. Denoising Diffusion Probabilistic Models. In Proceedings of the Advances in Neural Information Processing Systems (NeurIPS), 2020.

- Song, Y.; Sohl-Dickstein, J.; Kingma, D.P.; Kumar, A.; Ermon, S.; Poole, B. Score-Based Generative Modeling through Stochastic Differential Equations. In Proceedings of the International Conference on Learning Representations (ICLR), 2021.

- Rombach, R.; Blattmann, A.; Lorenz, D.; Esser, P.; Ommer, B. High-Resolution Image Synthesis with Latent Diffusion Models. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), 2022.

- Chen, L.; Lu, K.; Rajeswaran, A.; Lee, K.; Grover, A.; Laskin, M.; Abbeel, P.; Srinivas, A.; Mordatch, I. Decision Transformer: Reinforcement Learning via Sequence Modeling. Advances in Neural Information Processing Systems (NeurIPS) 2021.

- Reed, S.; Zolna, K.; Parisotto, E.; Colmenarejo, S.G.; Novikov, A.; Barth-Maron, G.; Giménez, M.; Sulsky, Y.; Kay, J.; Springenberg, J.T.; et al. A Generalist Agent. Transactions on Machine Learning Research (TMLR) 2022.

- Chi, C.; Feng, S.; Du, Y.; Xu, Z.; Cousineau, E.; Burchfiel, B.; Song, S. Diffusion Policy: Visuomotor Policy Learning via Action Diffusion. The International Journal of Robotics Research (IJRR) 2023.

- Liu, X.; Gong, C.; Liu, Q. Flow Straight and Fast: Learning to Generate and Transfer Data with Rectified Flow. arXiv preprint arXiv:2209.14577 2022.

- Fu, Z.; Zhao, T.Z.; Finn, C. Mobile ALOHA: Learning Bimanual Mobile Manipulation with Low-Cost Whole-Body Teleoperation. In Proceedings of the Conference on Robot Learning (CoRL), 2024.

- Chi, C.; Xu, Z.; Pan, C.; Cousineau, E.; Burchfiel, B.; Feng, S.; Tedrake, R.; Song, S. Universal Manipulation Interface: In-The-Wild Robot Teaching Without In-The-Wild Robots. In Proceedings of Robotics: Science and Systems (RSS), 2024.

- Liu, S.; Wu, L.; Li, B.; Tan, H.; Chen, H.; Wang, Z.; Xu, K.; Su, H.; Zhu, J. RDT-1B: A Diffusion Foundation Model for Bimanual Manipulation. arXiv preprint arXiv:2410.07864 2024.

- Shah, S.; Dey, D.; Lovett, C.; Kapoor, A. AirSim: High-Fidelity Visual and Physical Simulation for Autonomous Vehicles. In Proceedings of the Field and Service Robotics (FSR), 2018.

- Song, Y.; Naji, S.; Kaufmann, E.; Loquercio, A.; Scaramuzza, D. Flightmare: A Flexible Quadrotor Simulator. Conference on Robot Learning (CoRL) 2021.

- Open X-Embodiment Collaboration. Open X-Embodiment: Robotic Learning Datasets and RT-X Models. arXiv preprint arXiv:2310.08864 2024.

- Khazatsky, A.; Pertsch, K.; Nair, S.; Balakrishna, A.; Dasari, S.; Karamcheti, S.; Nasiriany, M.; Srirama, M.K.; Chen, L.Y.; Ellis, K.; et al. DROID: A Large-Scale In-the-Wild Robot Manipulation Dataset. arXiv preprint arXiv:2403.12945 2024.

- Walke, H.; Black, K.; Zhao, T.Z.; Vuong, Q.; Zheng, C.; Florence, P.; Levine, S. BridgeData V2: A Dataset for Robot Learning at Scale. In Proceedings of the Conference on Robot Learning (CoRL), 2023.

- Ebert, F.; Yang, Y.; Schmeckpeper, K.; Buber, B.; Georgakis, G.; Daniilidis, K.; Finn, C.; Levine, S. Bridge Data: Boosting Generalization of Robotic Skills with Cross-Domain Datasets. In Proceedings of Robotics: Science and Systems (RSS), 2021.

- GigaAI. GigaBrain-0.5M*: A VLA with World Model-Based Reinforcement Learning. arXiv preprint arXiv:2602.12099 2025.

- Liu, B.; Zhu, Y.; Gao, C.; Feng, Y.; Liu, Q.; Zhu, Y.; Stone, P. LIBERO: Benchmarking Knowledge Transfer for Lifelong Robot Learning. Advances in Neural Information Processing Systems (NeurIPS) 2024.

- Li, X.; Hsu, K.; Liu, J.; Pertsch, K.; Vuong, Q.; Levine, S. SIMPLER: Simulated Manipulation Policy Evaluation for Real Robot Setups. arXiv preprint 2024.

- James, S.; Ma, Z.; Arrojo, D.R.; Davison, A.J. RLBench: The Robot Learning Benchmark and Learning Environment. IEEE Robotics and Automation Letters (RA-L) 2020.

- Yu, T.; Quillen, D.; He, Z.; Julian, R.; Hausman, K.; Finn, C.; Levine, S. Meta-World: A Benchmark and Evaluation for Multi-Task and Meta Reinforcement Learning. Proceedings of the Conference on Robot Learning (CoRL) 2020.

- Zhu, Y.; Wong, J.; Mandlekar, A.; Martín-Martín, R.; Joshi, A.; Nasiriany, S.; Zhu, Y. robosuite: A Modular Simulation Framework and Benchmark for Robot Learning. arXiv preprint arXiv:2009.12293 2020.

- Gu, J.; Xiang, F.; Li, X.; Ling, Z.; Liu, X.; Mu, T.; Tang, Y.; Tao, S.; Wei, X.; Yao, Y.; et al. ManiSkill2: A Unified Benchmark for Generalizable Manipulation Skills. In Proceedings of the International Conference on Learning Representations (ICLR), 2023.

- Lee, C.; Zhang, R.; Zhu, J.; Xia, F.; Ehsani, K.; Martín-Martín, R.; Li, Y.; Savarese, S.; Fei-Fei, L. BEHAVIOR-1K: A Human-Centered, Embodied AI Benchmark with 1,000 Everyday Activities and Realistic Simulation. In Proceedings of the Conference on Robot Learning (CoRL), 2024.

- Li, X.; Hsu, K.; Liu, J.; Pertsch, K.; Vuong, Q.; Levine, S. Evaluating Real-World Robot Manipulation Policies in Simulation. arXiv preprint 2024.

- Brohan, A.; Brown, N.; Carbajal, J.; Chebotar, Y.; Dabis, J.; Finn, C.; Gober, K.; Hausman, K.; Herzog, A.; Hsu, J.; et al. RT-1: Robotics Transformer for Real-World Control at Scale. arXiv preprint arXiv:2212.06817 2022.

- Zitkovich, B.; Yu, T.; Xu, S.; Xu, P.; Xiao, T.; Xia, F.; Wu, J.; Wohlhart, P.; Welker, S.; Wahid, A.; et al. RT-2-X: Learning Robot Skills from Large-Scale Data. arXiv preprint 2023.

- Open X-Embodiment Collaboration. Open X-Embodiment: Robotic Learning Datasets and RT-X Models. In Proceedings of the IEEE International Conference on Robotics and Automation (ICRA), 2024.

- Zheng, J.; Kim, M.J.; Pertsch, K.; Karamcheti, S.; Finn, C.; Levine, S.; Liang, P. OpenVLA 2.0: Advancing Vision-Language-Action Models with Updated Training Recipes and Data. arXiv preprint 2024.

- Wu, K.; Wu, X.; Zhang, R.; Duan, J.; Ni, B.; Lu, J.; Zheng, J.; Fan, H. GR-1: Unleashing Large-Scale Video Generative Pre-Training for Visual Robot Manipulation. arXiv preprint 2023.

- Li, Y.; Fang, K.; Hausman, K.; Ichter, B.; Florence, P. Hamster: Hierarchical Action Models for Open-World Robot Manipulation. arXiv preprint 2025.

- Chen, D.; Wang, J.; Xu, Y.; Zhang, R.; Li, X.; Wu, Y.; Shao, J.; Ke, L. SpatialVLA: Exploring Spatial Representations for Visual-Language-Action Model. arXiv preprint 2024.

- Haldar, S.; Pari, J.; Rao, A.; Watter, M.; Pinto, L. BAKU: An Efficient Transformer for Multi-Task Policy Learning. arXiv preprint 2024.

- Kim, M.J.; Pertsch, K.; Sadigh, D.; Levine, S.; Finn, C. KAT: Keypoint-Action Tokens for Robot Manipulation. arXiv preprint 2024.

- Zhen, H.; Qiu, Y.; Sun, S. SimpleVLA-RL: Simple Vision-Language-Action Models Meet Reinforcement Learning. arXiv preprint 2024.

- Li, Q.; Liang, Y.; Wang, Z.; Luo, L.; Chen, X.; Fang, M.; Jiang, J.; Fan, H.; Duan, N. CogACT: A Foundational Vision-Language-Action Model for Synergizing Cognition and Action in Robotic Manipulation. arXiv preprint 2025.

- Shridhar, M.; Manuelli, L.; Fox, D. Perceiver-Actor: A Multi-Task Transformer for Robotic Manipulation. In Proceedings of the Conference on Robot Learning (CoRL), 2023.

- Goyal, A.; Xu, J.; Guo, Y.; Blukis, V.; Chao, Y.W.; Fox, D. RVT: Robotic View Transformer for 3D Object Manipulation. In Proceedings of the Conference on Robot Learning (CoRL), 2023.

- Ze, Y.; Yan, G.; Wu, Y.; Jia, Y.; Zhang, R.; Hu, Y.; Wu, J.; Tan, J.; Sun, H.; Su, H.; et al. 3D Diffusion Policy: Generalizable Visuomotor Policy Learning via Simple 3D Representations. In Proceedings of Robotics: Science and Systems (RSS), 2024.

- Zhou, C.; Yu, L.; Babu, A.; Tirumala, K.; Yasunaga, M.; Shamber, L.; Kahn, J.; Ma, X.; Zettlemoyer, L.; Levy, O. Transfusion: Predict the Next Token and Diffuse Images with One Multi-Modal Model. arXiv preprint arXiv:2408.11039 2024.

- Chen, J.; Li, S.; Zhang, K.; Chen, Y.; Ding, R.; Ge, Y.; Zhao, J. HybridVLA: Collaborative Diffusion and Autoregression in a Unified Vision-Language-Action Model. arXiv preprint arXiv:2503.10631 2025.

- Belkhale, S.; Sadigh, D. MiniVLA: A Better VLA with a Smaller Footprint. arXiv preprint 2024.

- Torne, M.; Pertsch, K.; Walke, H.; Vedder, K.; Nair, S.; Ichter, B.; Ren, A.Z.; Wang, H.; Tang, J.; Stachowicz, K.; et al. MEM: Multi-Scale Embodied Memory for Vision Language Action Models. arXiv preprint arXiv:2503.02760 2026.

- Shi, H.; Bin, X.; Liu, Y.; Sun, L.; Fengrong, L.; Wang, T.; Zhou, E.; Fan, H.; Zhang, X.; Huang, G. MemoryVLA: Perceptual-Cognitive Memory in Vision-Language-Action Models for Robotic Manipulation. arXiv preprint arXiv:2508.19236 2025.

- Jang, H.; Yu, S.; Kwon, H.; Jeon, H.; Seo, Y.; Shin, J. ContextVLA: Vision-Language-Action Model with Amortized Multi-Frame Context. arXiv preprint arXiv:2510.04246 2025.

- GigaAI Team. GigaBrain-0: A World Model-Powered Vision-Language-Action Model. arXiv preprint arXiv:2510.19430 2025.

- Rhoda AI. Causal Video Models Are Data-Efficient Robot Policy Learners. https://www.rhoda.ai/research/direct-video-action, 2026.

- WorldVLA Team. WorldVLA: Towards Autoregressive Action World Model. arXiv preprint arXiv:2506.21539 2025.

- GigaAI Team. GigaWorld-0: World Models as Data Engine to Empower Embodied AI. arXiv preprint arXiv:2511.19861 2025.

- Hu, E.J.; Shen, Y.; Wallis, P.; Allen-Zhu, Z.; Li, Y.; Wang, S.; Wang, L.; Chen, W. LoRA: Low-Rank Adaptation of Large Language Models. Proceedings of the International Conference on Learning Representations (ICLR) 2022.

- Rafailov, R.; Sharma, A.; Mitchell, E.; Ermon, S.; Manning, C.D.; Finn, C. Direct Preference Optimization: Your Language Model is Secretly a Reward Model. Advances in Neural Information Processing Systems (NeurIPS) 2023.

- Driess, D.; Finn, C.; Levine, S. Knowledge Insulation for Task-Oriented Fine-Tuning of Vision-Language-Action Models. In Proceedings of the Advances in Neural Information Processing Systems (NeurIPS), 2025.

- Xiao, M.; Song, Z.; Sebe, N.; Cheng, L. Align-then-Steer: Aligning Vision-Language-Action Models for Efficient Robot Policy Fine-Tuning. arXiv preprint 2025.

- Mandlekar, A.; Xu, D.; Wong, J.; Nasiriany, S.; Wang, C.; Kulkarni, R.; Fei-Fei, L.; Savarese, S.; Zhu, Y.; Martín-Martín, R. What Matters in Learning from Offline Human Demonstrations for Robot Manipulation. In Proceedings of the Conference on Robot Learning (CoRL), 2022.

- Bharadhwaj, H.; Vakil, J.; Sharma, M.; Gupta, A.; Tulsiani, S.; Kumar, V. RoboAgent: Generalization and Efficiency in Robot Manipulation via Semantic Augmentations and Action Chunking. In Proceedings of the IEEE International Conference on Robotics and Automation (ICRA), 2024.

- Kalashnikov, D.; Irpan, A.; Pastor, P.; Ibarz, J.; Herzog, A.; Jang, E.; Quillen, D.; Holly, E.; Kalakrishnan, M.; Vanhoucke, V.; et al. QT-Opt: Scalable Deep Reinforcement Learning for Vision-Based Robotic Manipulation. In Proceedings of the Conference on Robot Learning (CoRL), 2018.

- Kumar, A.; Zhou, A.; Tucker, G.; Levine, S. Conservative Q-Learning for Offline Reinforcement Learning. In Proceedings of the Advances in Neural Information Processing Systems (NeurIPS), 2020.

- Peng, X.B.; Kumar, A.; Zhang, G.; Levine, S. Advantage-Weighted Regression: Simple and Scalable Off-Policy Reinforcement Learning. arXiv preprint arXiv:1910.00177 2019.

- Levine, S.; Kumar, A.; Tucker, G.; Fu, J. Offline Reinforcement Learning: Tutorial, Review, and Perspectives on Open Problems. arXiv preprint arXiv:2005.01643 2020.

- Schulman, J.; Wolski, F.; Dhariwal, P.; Radford, A.; Klimov, O. Proximal Policy Optimization Algorithms. arXiv preprint arXiv:1707.06347 2017.

- Ranawaka Aracchige, R.; Chi, C.; Song, S.; Burchfiel, B. SAIL: Sample-Efficient Policy Adaptation for Faster-than-Demonstration Execution. arXiv preprint 2025.

- Lu, J.; Luo, J.; Pertsch, K.; Levine, S. VLA-RL: Reinforcement Learning for Vision-Language-Action Models. arXiv preprint 2025.

- Chen, J.; Yuan, Y.; Li, J.; Qiao, Y. ConRFT: A Reinforced Fine-Tuning Method for VLA Models via Consistency Regularization. arXiv preprint 2025.

- Chebotar, Y.; Vuong, Q.; Hausman, K.; Xia, F.; Lu, Y.; Irpan, A.; Kumar, A.; Yu, T.; Herzog, A.; Pertsch, K.; et al. Q-Transformer: Scalable Offline Reinforcement Learning via Autoregressive Q-Functions. In Proceedings of the Conference on Robot Learning (CoRL), 2023.

- Ren, A.Z.; Dinh, J.; Dai, S.; Zhang, T.; Phielipp, M.; Burchfiel, B. Diffusion Policy Policy Optimization. arXiv preprint 2023.

- Ghasemipour, S.K.S.; Florence, P.; Lazzaro, S.; Levine, S. Self-Improving Foundation Models for Embodied Intelligence. arXiv preprint 2025.

- Levine, S.; Pastor, P.; Krizhevsky, A.; Ibarz, J.; Quillen, D. Learning Hand-Eye Coordination for Robotic Grasping with Deep Learning and Large-Scale Data Collection. The International Journal of Robotics Research (IJRR) 2018, 37, 421–436.

- Ha, H.; Florence, P.; Song, S. Scaling Up and Distilling Down: Language-Guided Robot Skill Acquisition. In Proceedings of the Conference on Robot Learning (CoRL), 2023.

- Zheng, J.; Yao, J.; Yu, D.; Zhao, Z.; Wang, T.; Pan, L.; Zhang, L.; Yang, Y.; Zou, J. Universal Actions for Enhanced Embodied Foundation Models. arXiv preprint 2025.

- Ke, L.; Pertsch, K.; Levine, S.; Finn, C. Consistency Models as a Rich and Efficient Policy Class for Reinforcement Learning. arXiv preprint 2024.

- Zhao, T.Z.; Kumar, V.; Levine, S.; Finn, C. Learning Bimanual Manipulation with Action Chunking Transformers. In Proceedings of Robotics: Science and Systems (RSS), 2024.

- Dai, Y.; Bahl, S.; Singh, A.; Pertsch, K.; Levine, S. RACER: Rich Language-Guided Failure Recovery Policies for Imitation Learning. arXiv preprint 2024.

- Black, K.; Pertsch, K.; Nair, S.; Levine, S. Real-Time Chunking: Real-Time Execution of Action Chunking Flow Policies. arXiv preprint 2025.

- Liu, Y.; Hamdi, A.; Gkanatsios, N.; Fragkiadaki, K. Bidirectional Decoding: Improving Action Chunking via Closed-Loop Resampling. arXiv preprint 2024.

- Black, K.; Pertsch, K.; Nair, S.; Levine, S. Training-Time Action Conditioning for Efficient Real-Time Chunking. arXiv preprint 2025.

- Grannen, J.; Chong, Y.; Zhao, T.Z.; Finn, C. Stabilize to Act: Learning to Coordinate for Bimanual Manipulation. In Proceedings of the Conference on Robot Learning (CoRL), 2023.

- Chitnis, R.; Tulsiani, S.; Gupta, S.; Gupta, A. Efficient Bimanual Manipulation Using Learned Task Schemas. In Proceedings of the IEEE International Conference on Robotics and Automation (ICRA), 2020.

- Liang, J.; Huang, W.; Xia, F.; Xu, P.; Hausman, K.; Ichter, B.; Florence, P.; Zeng, A. Code as Policies: Language Model Programs for Embodied Control. In Proceedings of the IEEE International Conference on Robotics and Automation (ICRA), 2023.

- Ahn, M.; Brohan, A.; Brown, N.; Chebotar, Y.; Cortes, O.; David, B.; Finn, C.; Fu, C.; Gober, K.; Hausman, K.; et al. Do As I Can, Not As I Say: Grounding Language in Robotic Affordances. In Proceedings of the Conference on Robot Learning (CoRL), 2022.

- Wang, C.; Fan, L.; Sun, J.; Zhang, R.; Fei-Fei, L.; Xu, D.; Zhu, Y.; Anandkumar, A. MimicPlay: Long-Horizon Imitation Learning by Watching Human Play. In Proceedings of the Conference on Robot Learning (CoRL), 2023.

- Chen, L.; Bahl, S.; Pathak, D. PlayFusion: Skill Acquisition via Diffusion from Language-Annotated Play. In Proceedings of the Conference on Robot Learning (CoRL), 2023.

- Du, Y.; Yang, S.; Dai, B.; Dai, H.; Nachum, O.; Tompson, J.; Schuurmans, D.; Abbeel, P. Learning Universal Policies via Text-Guided Video Generation. Advances in Neural Information Processing Systems (NeurIPS) 2024.

- Hu, Y.; Xie, F.; Jia, W.; Wang, G.; Zhao, J.; Gao, Y. Look Before You Leap: Unveiling the Power of GPT-4V in Robotic Vision-Language Planning. arXiv preprint 2023.

- Ma, Y.J.; Liang, W.; Wang, G.; Huang, D.A.; Bastani, O.; Jayaraman, D.; Zhu, Y.; Fan, L.; Anandkumar, A. Leveraging Internet-Scale Robotic Manipulation Data for Robotic Grasping. arXiv preprint 2024.

- Li, H.S.; Yang, Y.; Chen, X.; Chen, X.; Yang, Y.; Tian, H.; Wang, T.; Lin, D.; Zhao, F. CronusVLA: Towards Efficient and Robust Manipulation via Multi-Frame Vision-Language-Action Modeling. In Proceedings of the AAAI Conference on Artificial Intelligence, 2025.

- Mark, M.S.; Liang, J.; Attarian, M.; Fu, C.; Dwibedi, D.; Shah, D.; Kumar, A. BPP: Long-Context Robot Imitation Learning by Focusing on Key History Frames. arXiv preprint arXiv:2602.15010 2026.

- Torne, M.; Tang, A.; Liu, Y.; Finn, C. Learning Long-Context Diffusion Policies via Past-Token Prediction. arXiv preprint arXiv:2505.09561 2025.

- Fang, H.; Grotz, M.; Pumacay, W.; Wang, Y.R.; Fox, D.; Krishna, R.; Duan, J. SAM2Act: Integrating Visual Foundation Model with a Memory Architecture for Robotic Manipulation. In Proceedings of the International Conference on Machine Learning (ICML), 2025.

- Sridhar, A.; Pan, J.; Sharma, S.; Finn, C. MemER: Scaling Up Memory for Robot Control via Experience Retrieval. arXiv preprint arXiv:2510.20328 2025.

- Wei, Y.L.; Liao, H.; Lin, Y.; Wang, P.; Liang, Z.; Liu, G.; Zheng, W.S. CycleManip: Enabling Cyclic Task Manipulation via Effective Historical Perception and Understanding. arXiv preprint arXiv:2512.01022 2025.

- Chi, C.; Xu, Z.; Song, S. UMI on Legs: Making Manipulation Policies Mobile with Locomotion. arXiv preprint 2025.

- Shah, R.; Martín-Martín, R.; Zhu, Y. BUMBLE: Unifying Reasoning and Acting with Vision-Language Models for Building-wide Mobile Manipulation. arXiv preprint 2024.

- AgiBot Team. AgiBot World: A New Frontier for Generalist Robot Policies. arXiv preprint 2025.

- Physical Intelligence. Scaling Robot Policy Learning via Zero-Shot Labeling with Foundation Models. arXiv preprint 2025.

- Liu, S.; Zhang, H.; Li, Y. AerialVLN: Vision-and-Language Navigation for UAVs. In Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV), 2023.

- Shah, D.; Equi, M.; Osinski, B.; Levine, S. Navigation with Large Language Models: Semantic Guessing as a Heuristic for Planning. In Proceedings of the Conference on Robot Learning (CoRL), 2023.

- Zhang, W.; et al. SkyGPT: Autonomous UAV Navigation with Vision-Language Foundation Models. arXiv preprint 2025.

- Khasianov, A.; Manghi, T.; Nesterov, A.; Belousov, B.; Peters, J. UAV-VLA: Vision-Language-Action System for Large Scale Aerial Mission Generation. In Proceedings of the Companion of the ACM/IEEE International Conference on Human-Robot Interaction (HRI), 2025. arXiv:2501.05014.

- Gao, Z.; Wang, Y.; Sun, P. End-to-End Vision-Language Navigation for UAVs. arXiv preprint arXiv:2504.21432 2025.

- Gao, Y.; Wang, C.; Chen, Z.; Wang, Y.; Zhao, Y. OpenFly: A Versatile Toolchain and Large-Scale Benchmark for Aerial Vision-Language Navigation. arXiv preprint 2025.

- He, J.; Li, X.; Zhang, Y. CityNavAgent: Aerial Vision-Language Navigation with Hierarchical Semantic Planning and Global Memory. arXiv preprint 2025.

- Hwangbo, J.; Sa, I.; Siegwart, R.; Hutter, M. Control of a Quadrotor with Reinforcement Learning. IEEE Robotics and Automation Letters 2017, 2, 2096–2103.

- Kaufmann, E.; Loquercio, A.; Ranftl, R.; Müller, M.; Koltun, V.; Scaramuzza, D. Champion-level drone racing using deep reinforcement learning. Nature 2023, 620, 982–987.

- Shi, G.; Shi, X.; O’Connell, M.; Yu, R.; Azizzadenesheli, K.; Anandkumar, A.; Yue, Y.; Chung, S.J. Neural Lander: Stable Drone Landing Control Using Learned Dynamics. IEEE International Conference on Robotics and Automation (ICRA) 2019.

- Kim, H.; Park, J.; Scaramuzza, D. RaceVLA: VLA-Based Racing Drone with Human-Like Behavior. arXiv preprint 2025.

- Loquercio, A.; Kaufmann, E.; Scaramuzza, D. Dream to Fly: Model-Based Reinforcement Learning for Vision-Based Drone Flight. arXiv preprint 2025.

- O’Connell, M.; Shi, G.; Shi, X.; Azizzadenesheli, K.; Anandkumar, A.; Yue, Y.; Chung, S.J. Neural-Fly Enables Rapid Learning for Agile Flight in Strong Winds. Science Robotics 2022, 7.

- Zhang, T.; et al. Learning to Fly by Grasping: Aerial Manipulation with Reinforcement Learning. In Proceedings of the IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), 2019.

- Martinez, P.; Suarez, A.; Ollero, A. Avocado Harvesting with Aerial Bimanual Manipulation. arXiv preprint arXiv:2408.09058 2024.

- Zhang, Y.; et al. AeroAgent: Autonomous LLM-Based Drone Agent for Complex Mission Planning. arXiv preprint 2025.

- Chen, G.; Yao, X.; Yang, X.; Hu, Y.; Xu, J.; Zhang, Z. TypeFly: Flying Drones with Large Language Model. In Proceedings of the ACM Conference on Embedded Networked Sensor Systems (SenSys), 2024.

- Lynch, C.; Wahid, A.; Tompson, J.; Ding, T.; Betker, J.; Baruch, R.; Armstrong, T.; Florence, P. Interactive Language: Talking to Robots in Real Time. IEEE Robotics and Automation Letters (RA-L) 2023.

- Zhang, K.; Yang, Z.; Başar, T. Decentralized Multi-Agent Reinforcement Learning for Multi-Robot Coordination. Autonomous Robots 2021.

- Ghosh, R.; Luo, J.; Geng, C.; Duan, Y.; Sadigh, D.; Finn, C.; Levine, S. Scaling Cross-Embodied Learning: One Policy for Manipulation, Navigation, Locomotion and Aviation. arXiv preprint 2024.

- Furrer, F.; Burri, M.; Achtelik, M.; Siegwart, R. RotorS: A Modular Gazebo MAV Simulator Framework. In Robot Operating System (ROS): The Complete Reference, 2016.

- Wang, W.; Zhu, D.; Wang, X.; Hu, Y.; Qiu, Y.; Wang, C.; Hu, Y.; Kapoor, A.; Scherer, S. TartanAir: A Dataset to Push the Limits of Visual SLAM. IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS) 2020.

- Fonder, M.; Van Droogenbroeck, M. Mid-Air: A Multi-Modal Dataset for Extremely Low Altitude Drone Flights. IEEE Conference on Computer Vision and Pattern Recognition Workshops (CVPRW) 2019.

- Jang, E.; Irpan, A.; Khansari, M.; Kappler, D.; Ebert, F.; Lynch, C.; Levine, S.; Finn, C. BC-Z: Zero-Shot Task Generalization with Robotic Imitation Learning. In Proceedings of the Conference on Robot Learning (CoRL), 2022.

- Huang, W.; Wang, C.; Zhang, R.; Li, Y.; Wu, J.; Fei-Fei, L. VoxPoser: Composable 3D Value Maps for Robotic Manipulation with Language Models. Proceedings of the Conference on Robot Learning (CoRL) 2023.

- Mandlekar, A.; Nasiriany, S.; Wen, B.; Akinola, I.; Narang, Y.; Fan, L.; Zhu, Y.; Fox, D. Manipulate-Anything: Automating Real-World Robots using Vision-Language Models. arXiv preprint 2024.

- Duan, J.; Nasiriany, S.; Li, H.; Mandlekar, A. Manipulate-Anything: Automating Real-World Robots using Vision-Language Models. arXiv preprint 2024.

- Xian, Z.; Gkanatsios, N.; Gerber, T.; Ke, T.W.; Fragkiadaki, K. Chain-of-Thought Predictive Control. arXiv preprint 2023.

- Xiaomi Robotics Team. Xiaomi-Robotics-0: An Open-Source Vision-Language-Action Model with Real-Time Execution. arXiv preprint 2025.

- Brohan, A.; Chebotar, Y.; Finn, C.; Hausman, K.; Herzog, A.; Ho, D.; Ibarz, J.; Irpan, A.; Jang, E.; Julian, R.; et al. RT-H: Action Hierarchies Using Language. arXiv preprint 2024.

- Liu, P.; Orru, Y.; Paxton, C.; Shafiullah, N.M.M.; Pinto, L. OK-Robot: What Really Matters in Integrating Open-Knowledge Models for Robotics. arXiv preprint 2024.

- Etukuru, H.; Nair, S.; Pari, J.; Padmanabha, A.; Kamat, G.; Dasari, S.; Arbuckle, T.; Maddukuri, B.; Juliani, A.; Levine, S. Robot Utility Models: General Policies for Zero-Shot Deployment in New Environments. arXiv preprint 2024.

- Zeng, A.; Florence, P.; Tompson, J.; Welker, S.; Chien, J.; Attarian, M.; Armstrong, T.; Krasin, I.; Duong, D.; Sindhwani, V.; et al. Transporter Networks: Rearranging the Visual World for Robotic Manipulation. In Proceedings of the Conference on Robot Learning (CoRL), 2021.

- Nair, S.; Rajeswaran, A.; Kumar, V.; Finn, C.; Gupta, A. R3M: A Universal Visual Representation for Robot Manipulation. In Proceedings of the Conference on Robot Learning (CoRL), 2022.

- Nair, S.; Mitchell, E.; Chen, K.; Savarese, S.; Finn, C.; Sadigh, D. Learning Language-Conditioned Robot Behavior from Offline Data and Crowd-Sourced Annotation. In Proceedings of the Conference on Robot Learning (CoRL), 2022.

- Majumdar, A.; Yadav, K.; Arnaud, S.; Ma, Y.J.; Chen, C.; Silwal, S.; Jain, A.; Berber, V.P.; Mathur, P.; Olkin, T.; et al. Where Are We in the Search for an Artificial Visual Cortex for Embodied Intelligence? In Proceedings of the Advances in Neural Information Processing Systems (NeurIPS), 2023.

- Cheng, H.; Cheang, C.; Li, Y.; Yang, J.; Lu, H. SPA: 3D Spatial-Awareness Enables Effective Embodied Representation. arXiv preprint 2024.

- Bharadhwaj, H.; Mottaghi, R.; Tulsiani, S.; Gupta, A. Gen2Act: Human Video Generation in Novel Scenarios Enables Generalizable Robot Manipulation. arXiv preprint 2024.

- Bharadhwaj, H.; Mottaghi, R.; Gupta, A.; Tulsiani, S. Track2Act: Predicting Point Tracks from Internet Videos Enables Diverse Zero-Shot Robot Manipulation. arXiv preprint 2024.

- Wu, Y.; et al. Video Prediction Policy: A Generalist Robot Policy with Predictive Visual Representations. In Proceedings of the International Conference on Machine Learning (ICML), 2025.

- ViPRA Team. ViPRA: Video Prediction for Robot Actions. arXiv preprint arXiv:2511.07732 2025.

- Mimic-Video Team. Mimic-Video: Video-Action Models for Generalizable Robot Control Beyond VLAs. arXiv preprint arXiv:2512.15692 2025.

- FOFPred Team. Future Optical Flow Prediction Improves Robot Control and Video Generation. arXiv preprint arXiv:2601.10781 2026.

- Meta AI. V-JEPA 2: Self-Supervised Video Models Enable Understanding, Prediction and Planning. arXiv preprint arXiv:2506.09985 2025.

- UP-VLA Team. UP-VLA: A Unified Understanding and Prediction Model for Embodied Agent. arXiv preprint arXiv:2501.18867 2025.

- NVIDIA. Cosmos: World Foundation Models for Physical AI. arXiv preprint 2025.

- Nasiriany, S.; Xia, F.; Yu, W.; Xiao, T.; Liang, J.; Dasgupta, I.; Xie, A.; Driess, D.; Wahid, A.; Xu, Z.; et al. PIVOT: Iterative Visual Prompting Elicits Actionable Knowledge for VLMs. arXiv preprint 2024.

- Wu, J.; Antonova, R.; Kan, A.; Lepert, M.; Zeng, A.; Song, S.; Bohg, J.; Rusinkiewicz, S.; Funkhouser, T. TidyBot: Personalized Robot Assistance with Large Language Models. Autonomous Robots 2023.

- Liu, Z.; Chi, C.; Cousineau, E.; Kuppuswamy, N.; Burchfiel, B.; Song, S. ManiWAV: Learning Robot Manipulation from In-the-Wild Audio-Visual Data. arXiv preprint 2025.

- Google DeepMind Gemini Robotics Team. Gemini Robotics: Bringing AI into the Physical World. arXiv preprint arXiv:2503.20020 2025.

- NVIDIA Robotics Team. GR00T N1: An Open Foundation Model for Generalist Humanoid Robots. arXiv preprint arXiv:2503.14734 2025.

- Figure AI. Helix: A Vision-Language-Action Model for Humanoid Control. https://www.figure.ai/news/helix, 2025.

- 1X Technologies. 1X World Model. https://www.1x.tech/discover/1x-world-model, 2025.

- Dyna Robotics. DYNA-1: The First Commercial-Ready Robot Foundation Model. https://www.dyna.co/dyna-1/research, 2025.

- Ma, Y.J.; Liang, W.; Wang, G.; Huang, D.A.; Bastani, O.; Jayaraman, D.; Zhu, Y.; Fan, L.; Anandkumar, A. Eureka: Human-Level Reward Design via Coding Large Language Models. International Conference on Learning Representations (ICLR) 2024.

- Sunday Robotics. No Robot Data: How ACT-1 Learns from Human Demonstrations. https://www.sunday.ai/journal/no-robot-data, 2026.

- Covariant. Introducing RFM-1: Giving Robots Human-Like Reasoning Capabilities. https://covariant.ai/insights/introducing-rfm-1-giving-robots-human-like-reasoning-capabilities/, 2024.

- Boston Dynamics; Toyota Research Institute. Large Behavior Models and Atlas Find New Footing. https://bostondynamics.com/blog/large-behavior-models-atlas-find-new-footing/, 2025.

- Tesla AI. Tesla Optimus: A General-Purpose Humanoid Robot. https://www.tesla.com/optimus, 2025.

Note 1: Values are reported from the original publications under varying evaluation conditions and should be interpreted with caution; not all methods were evaluated on all benchmarks.

| Symbol | Description |
|---|---|
|  | VLA policy parameterized by |
|  | Visual observation at time t |
| ℓ | Natural-language instruction |
|  | Proprioceptive state (joint positions) |
|  | Single-step action |
|  | Action chunk of horizon H |
| H | Action chunk horizon (number of steps) |
|  | Action dimensionality |
| K | Number of denoising/flow steps |
|  | Learned velocity field (flow matching) |
|  | Left and right arm actions |
|  | Visual encoder, VLM backbone, action head |
|  | Diffusion schedule coefficients (Equation 9) |
|  | Gaussian noise, |
|  | Noise prediction network (diffusion) |

| Method | LIBERO-Spatial | LIBERO-Object | LIBERO-Goal | LIBERO-Long | Bridge V2 | SIMPLER |
|---|---|---|---|---|---|---|
| RT-1 [60] | – | – | – | – | 45.2 | – |
| RT-2 [1] | – | – | – | – | 52.1 | 55.3 |
| Octo [6] | 78.9 | 85.7 | 72.1 | 46.3 | 54.8 | 48.7 |
| OpenVLA [5] | 84.7 | 88.4 | 79.2 | 53.7 | 58.3 | 56.2 |
| [2] | 96.2 | 97.1 | 93.5 | 78.4 | 72.6 | 71.3 |
| RDT-1B [44] | 89.3 | 91.5 | 84.7 | 62.1 | 61.4 | – |
| CogACT [70] | 87.1 | 89.8 | 81.3 | 58.9 | 59.7 | – |
| FAST [8] | 91.5 | 93.2 | 86.8 | 65.3 | 64.2 | 62.8 |
| HybridVLA [75] | 93.8 | 95.4 | 90.1 | 72.6 | 68.9 | 67.4 |
| TinyVLA [7] | 82.3 | 86.1 | 75.4 | 51.2 | 55.6 | 52.1 |

| Method | VLM Init | Pre-train Data | Fine-tune Data | Co-train | RL | Key Strategy |
|---|---|---|---|---|---|---|
| RT-2 [1] | PaLI-X | Google fleet | – | – | – | VLM co-fine-tuning |
| OpenVLA [5] | Prismatic | OXE | Task-specific | – | – | Open data pre-train |
| Octo [6] | From scratch | OXE (800K) | Task-specific | – | – | Cross-embodiment init |
| [2] | PaLIGemma | OXE + proprietary | 50–200 demos | ✓ | – | Co-training mix |
| [3] | PaLIGemma | OXE + fleet | Fleet demos | ✓ | – | Hierarchical training |
| [4] | ckpt | Same as | Autonomous + demos | ✓ | ✓ | RECAP (VLM reward) |
| RDT-1B [44] | SigLIP+T5 | Multi-robot | ALOHA tasks | – | – | Scale (1.2B params) |
| FAST [8] | VLM | OXE | Task-specific | – | – | Learned tokenizer |
| GigaBrain [51] | VLM | GigaBrain-0.5M | Task-specific | – | ✓ | World-model RL |

| Method | Representation | Chunk H | Steps K | Bimanual | Latency | Key Innovation |
|---|---|---|---|---|---|---|
| RT-2 [1] | Uniform bins (256) | 1 | – | 8 | ∼200 ms | VLM token reuse |
| OpenVLA [5] | Uniform bins (256) | 1 | – | 8 | ∼150 ms | Open-source |
| FAST [8] | Learned VQ-VAE | 50 | – | 16 | ∼80 ms | Compressed tokens |
| [2] | Flow matching | 50 | 10 | 16 | ∼70 ms | VLM-conditioned flow |
| Diff. Policy [40] | DDPM/DDIM | 16 | 50–100 | 16 | ∼300 ms | Multimodal actions |
| RDT-1B [44] | DiT diffusion | 64 | 20 | 16 | ∼150 ms | Scale (1.2B) |
| RTC [107] | Flow + overlap | 50 | 10 | 16 | <50 ms* | Interleaved exec. |
| BID [108] | Bidirectional | Variable | Variable | 16 | ∼100 ms | Dual-end decode |

\* Effective latency with overlapped computation.

| Method | Env. | Obj. | Instr. | Embod. |
|---|---|---|---|---|
| RT-1 [60] | Weak | Weak | Weak | – |
| RT-2 [1] | Partial | Strong | Strong | – |
| OpenVLA [5] | Partial | Partial | Partial | Partial |
| Octo [6] | Partial | Partial | Partial | Strong |
| [2] | Partial | Strong | Strong | Partial |
| [3] | Strong | Strong | Strong | Partial |
| Hi Robot [29] | Partial | Partial | Strong | – |

| Method | Hierarchical | Novel Instr. | Novel Obj. | Open-Ended | Cross-Embod. | Zero-Shot |
|---|---|---|---|---|---|---|
| RT-1 [60] | – | Limited | Limited | – | – | – |
| RT-2 [1] | – | ✓ | ✓ | Partial | – | Partial |
| OpenVLA [5] | – | ✓ | ✓ | – | ✓ | – |
| [2] | – | ✓ | ✓ | – | ✓ | – |
| [3] | ✓ | ✓ | ✓ | ✓ | ✓ | Partial |
| Hi Robot [29] | ✓ | ✓ | ✓ | ✓ | – | – |
| SayCan [113] | ✓ | ✓ | – | ✓ | – | – |

| # | Direction | Primary Component | Gap Severity | Sections |
|---|---|---|---|---|
| 1 | Standardized bimanual benchmarks | Evaluation | Critical | Section 4, Section 8 |
| 2 | Dexterous, force-aware, multi-modal manip. | Observation/Action | High | Section 7, Section 8, Section 11 |
| 3 | Real-time reactive control | Architecture/Efficiency | Medium | Section 5, Section 7 |
| 4 | Data-efficient learning & sim-to-real | Training/Data | High | Section 6, Section 11 |
| 5 | Compositional bimanual skills | Architecture/Language | Medium | Section 10, Section 8 |
| 6 | Safety-certified VLAs | Deployment | Critical | Section 11 |
| 7 | Autonomous improvement & world models | Training/RL | Medium | Section 6, Section 5 |
| 8 | Human-robot collaboration | HRI | Low | Section 11 |
| 9 | Memory-augmented long-horizon VLAs | Architecture/Memory | High | Section 8, Section 5 |
| 10 | World models & future state prediction | World Model/Planning | High | Section 11, Section 6 |
| 11 | End-to-end VLAs for drone control | Architecture/Aerial | Critical | Section 9, Section 5 |
| 12 | Multi-agent aerial VLAs | Coordination/Aerial | High | Section 9, Section 8 |
| 13 | Aerial manipulation with VLAs | Aerial/Manipulation | High | Section 9, Section 8 |
| 14 | Bridging research-to-production gap | Deployment/Training | Critical | Section 12 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).