Submitted: 04 April 2026

Posted: 07 April 2026

Abstract

Keywords:

1. Introduction

| Task | Description | Representative Approaches |
|---|---|---|
| Modulation Classification | Identifying the modulation scheme (e.g., BPSK, QPSK, QAM) from received signals; core task in AMC. | CNNs, LSTMs, transformers [9,47,48] |
| FEC / Coding Scheme Recognition | Inferring forward error correction schemes (e.g., LDPC, convolutional codes) from received data. | CNN and DL-based LDPC recognition [10,11] |
| Interference Classification | Identifying types of interference (e.g., WiFi vs Bluetooth vs noise sources). | Semi-supervised CNNs [37] |
| Open-Set / Unknown Signal Detection | Detecting signals not seen during training and rejecting unknown classes. | Domain generalization and OTA evaluation [16,17] |
| Channel / Impairment Estimation | Estimating channel effects such as fading, frequency offset, phase noise, and hardware distortions. | DL-based correction and estimation [18,21] |
| Signal Detection / Spectrum Sensing | Determining the presence or absence of signals in a frequency band under noise and interference. | DL-based spectrum monitoring [22,32] |
| Multi-Signal / Emitter Separation | Identifying and separating overlapping signals from multiple transmitters in time/frequency. | CNN-based and radar-signal approaches [13,14] |
| Radio Access Technology (RAT) Identification | Detecting communication protocols (e.g., LTE, WiFi, LoRa) using time–frequency or spectral patterns. | CNN on spectrograms [32,33] |
| Protocol Behavior / Adaptation Detection | Characterizing adaptive behaviors such as rate control, power adjustment, or IoT link adaptation. | IoT and LoRa-based analysis [1,2] |
| Device / Transmitter Identification (RF Fingerprinting) | Identifying unique hardware signatures of transmitters based on RF impairments. | DL-based RF fingerprinting [19,20] |

2. Review Strategy

- 1.

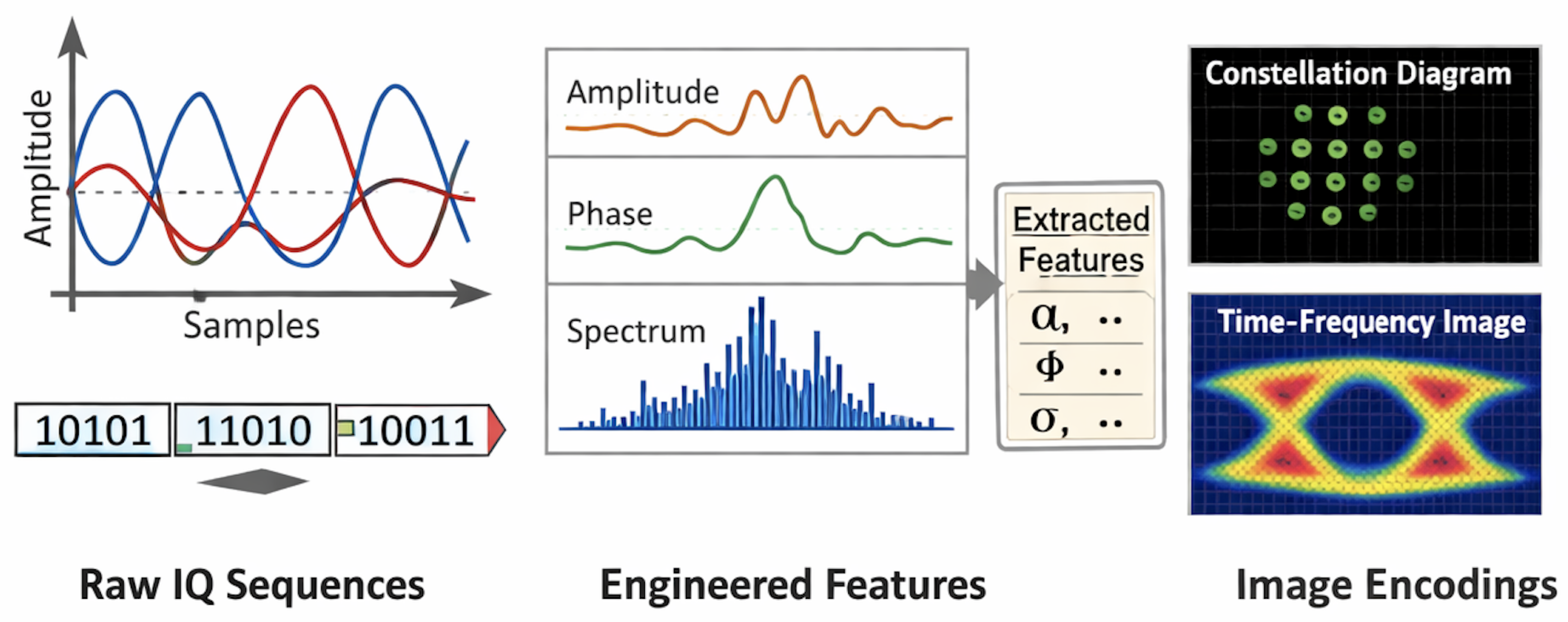

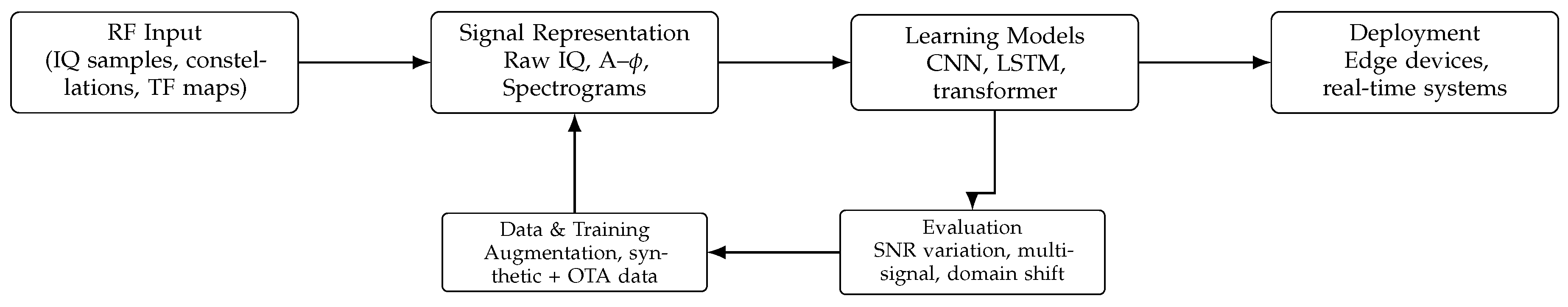

- Signal Representations and Dataset Design: Analysis of raw IQ data, engineered features, and time–frequency or constellation-based representations, along with the impact of dataset realism and evaluation protocols on reported performance.

- 2.

- Convolutional Neural Networks (CNNs): CNN-based approaches for AMC, including baseline architectures, robustness enhancements, hybrid feature integrations, and lightweight models for deployment.

- 3.

- Sequence Models (RNNs and LSTMs): Temporal modeling techniques that capture sequential dependencies in RF signals, with discussion of their strengths, limitations, and role relative to CNN-based methods.

- 4.

- Transformer-Based Architectures: Attention-driven models that enable global context learning from IQ sequences and derived representations, including design considerations and performance trade-offs.

- 5.

- Real-World Challenges and System-Level Considerations: Challenges include domain shift, low-SNR environments, multi-signal interference, and deployment constraints, alongside methods such as transfer learning and ensemble learning to improve accuracy.

2.1. Review Methodology

Search keywords: “Automatic Modulation Classification”, “RF Machine Learning”, “RF Deep Learning”, “CNN”, “RNN”, and “Transformer”.

| Representation | Advantages | Limitations |

|---|---|---|

| Raw IQ Sequences | •No preprocessing; preserves full amplitude and phase information •Enables true end-to-end learning from raw observations •Avoids bias from handcrafted features •Captures fine-grained temporal dynamics and phase transitions •Suitable for real-time/streaming systems (low latency) •Flexible across signal types and channel conditions •Retains information for joint learning of impairments (noise, drift, offsets) |

•Sensitive to noise and channel impairments (low SNR degradation) •Phase/frequency offsets can distort learned patterns •Requires large datasets to learn invariances •Harder to learn robustness without augmentation •Multipath fading and nonlinear effects corrupt structure •Higher training complexity due to limited inductive bias |

| Engineered Features | •Incorporates domain knowledge (e.g., spectral, wavelet features) •Improved robustness to noise and channel effects •Reduces learning complexity via compact representations •More stable across varying SNR conditions •Highlights physically meaningful signal characteristics •Integrates well with classical signal processing pipelines |

•Requires expert-driven feature design •Potential information loss during transformation •Limited generalization across datasets/hardware •Adds preprocessing latency and system complexity •Reduced adaptability to unseen modulation types •Possible mismatch with learned representations |

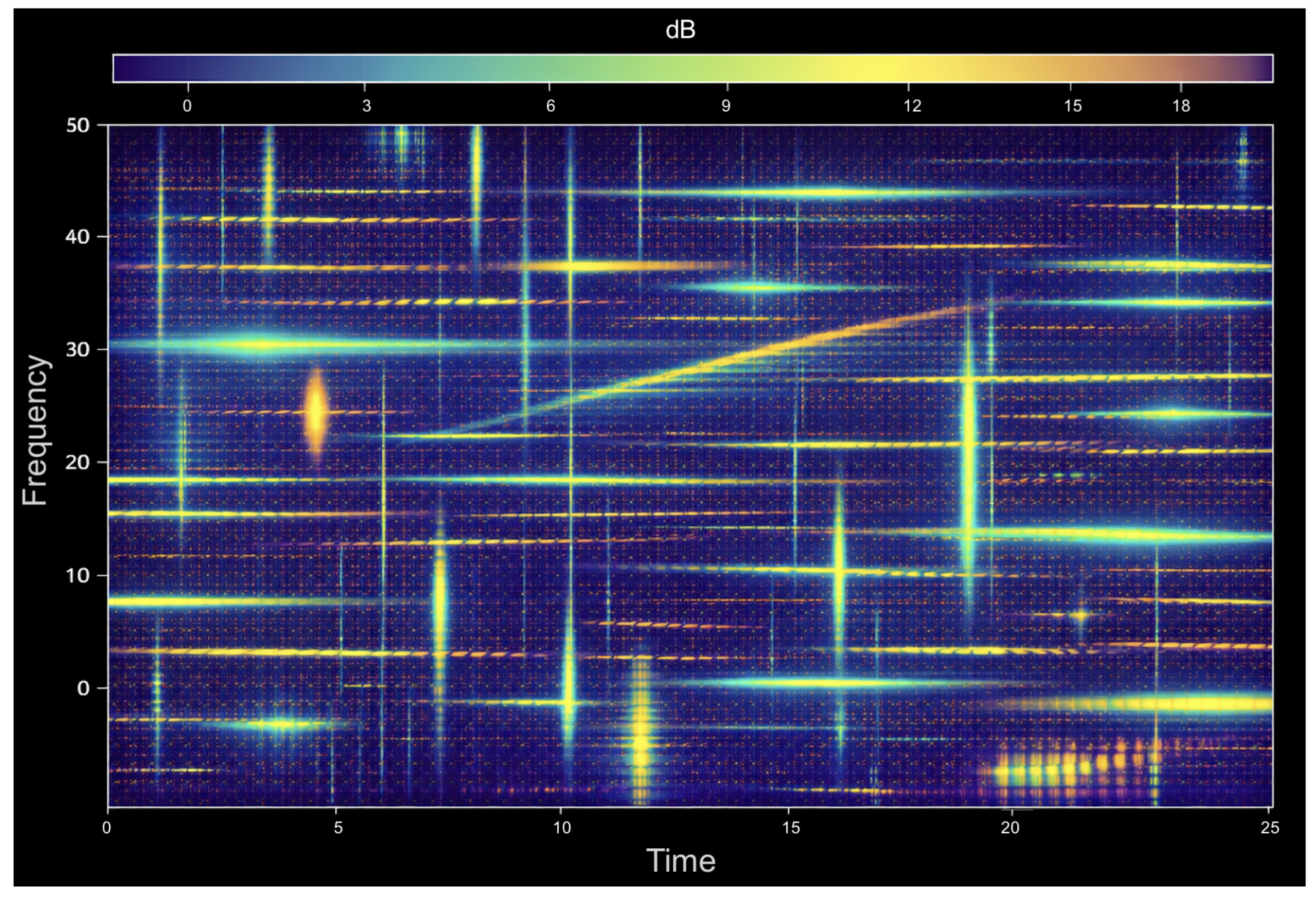

| Image Encodings | •Enables use of vision-based models (CNNs, ViTs) •Provides interpretable representations (spectrograms, constellations) •Highlights spatial modulation patterns •Leverages mature computer vision architectures •Effective in high-SNR conditions •Captures joint time–frequency structure |

•Requires preprocessing (e.g., STFT), increasing overhead •Often assumes synchronization (timing, carrier alignment) •Noise degrades constellation separability •High-order modulations become difficult under noise •Multipath and interference distort visual structure •Loss of temporal information in static images •Higher memory and compute requirements |
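As a rough illustration of the preprocessing gap between raw IQ input and a time–frequency image encoding, the sketch below builds both from the same complex samples. It is a minimal, dependency-free example and not taken from any of the surveyed works: the function names `stft_magnitude` and `to_two_channel`, the Hann window, and the window and hop sizes are all assumptions chosen for illustration, and the naive DFT would be replaced by an FFT routine in any practical pipeline.

```python
import cmath
import math


def stft_magnitude(iq, win_len=16, hop=8):
    """Return a list of frames, each holding |DFT| magnitudes of one windowed segment."""
    # Periodic Hann window (an assumed choice; other windows work as well).
    window = [0.5 - 0.5 * math.cos(2 * math.pi * n / win_len) for n in range(win_len)]
    frames = []
    for start in range(0, len(iq) - win_len + 1, hop):
        seg = [iq[start + n] * window[n] for n in range(win_len)]
        # Naive O(N^2) DFT keeps the sketch self-contained; use an FFT in practice.
        spectrum = [
            abs(sum(seg[n] * cmath.exp(-2j * math.pi * k * n / win_len)
                    for n in range(win_len)))
            for k in range(win_len)
        ]
        frames.append(spectrum)
    return frames


def to_two_channel(iq):
    """Raw IQ representation: one row of in-phase and one row of quadrature samples."""
    return [[s.real for s in iq], [s.imag for s in iq]]


# Hypothetical test signal: a complex tone centred on DFT bin 4.
tone = [cmath.exp(2j * math.pi * 4 * n / 16) for n in range(64)]
spec = stft_magnitude(tone)   # image-like input: frames x frequency bins
raw = to_two_channel(tone)    # raw input: 2 x N array, no preprocessing
peak_bin = max(range(16), key=lambda k: spec[0][k])
```

The raw path is a simple reshaping, while the time–frequency path introduces window length, hop size, and window shape as extra parameters, which is one reason such settings are worth reporting alongside results.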

3. Signal Model, Task Definitions, and Evaluation Dimensions

3.1. Closed-Set vs. Open-Set AMC

3.2. Channel Impairments and Domain Shift

3.3. Metrics and Protocols

4. Datasets and Signal Representations

4.1. Representations

- Raw IQ sequences: used in early end-to-end CNN baselines and later in transformer work.

- Image encodings: constellation diagrams, eye diagrams, or time–frequency images (e.g., spectrograms) that permit reuse of vision CNNs (AlexNet/VGG/ResNet variants) [25].
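One common way to obtain a constellation-style image is to bin the received (I, Q) points into a 2D histogram. The sketch below is a minimal illustration under stated assumptions, not the method of any cited paper: the function name `constellation_image`, the 8×8 grid, the ±1.5 amplitude extent, and the noisy QPSK test signal are all hypothetical choices.

```python
import math
import random


def constellation_image(iq, bins=8, extent=1.5):
    """2D histogram of (I, Q) points over [-extent, extent).

    Rows index Q and columns index I, with row 0 holding the lowest Q values.
    """
    image = [[0] * bins for _ in range(bins)]
    scale = bins / (2 * extent)
    for s in iq:
        col = math.floor((s.real + extent) * scale)
        row = math.floor((s.imag + extent) * scale)
        # Points outside the chosen extent are dropped rather than clipped.
        if 0 <= col < bins and 0 <= row < bins:
            image[row][col] += 1
    return image


# Hypothetical input: unit-energy QPSK symbols with mild Gaussian noise.
rng = random.Random(0)
qpsk = [complex(i, q) / math.sqrt(2) for i in (-1, 1) for q in (-1, 1)]
noisy = [rng.choice(qpsk) + complex(rng.gauss(0, 0.05), rng.gauss(0, 0.05))
         for _ in range(200)]
image = constellation_image(noisy)
```

The resulting grid can be fed to an image classifier, but it discards symbol ordering and presumes reasonable timing and carrier synchronization, so the bin count and extent act as additional preprocessing parameters.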

4.2. Labeling Scope: Modulation-Only vs. Richer Parameters

5. A Taxonomy of DL-Based AMC Approaches

5.1. CNN-Centric Modulation Recognition

5.2. From Baseline CNNs to Improved Accuracy

5.3. Robustness via Correction and Auxiliary Features

5.4. Alternative Feature Extractions and Hybrid Encodings

5.5. Architectural Variations: Dilation, Dropout, and Fusion

5.6. Efficiency and Deployment-Oriented CNNs

6. Sequence Models: RNN and LSTM Approaches

7. Attention Mechanisms and Transformers

7.1. Transformer-Based AMC

7.2. Large Language Model Meta AI (LLaMA) for AMC Adaptation in Realistic Environments

7.3. Time–Frequency Attention and Hybrid CNN-Attention Designs

7.4. IoT- and Systems-Oriented Transformer Variants

8. Ensembles, Fusion, and Distributed Spectrum Monitoring

9. Transfer Learning for AMC

10. Beyond Communications-Only AMC: Radar and Multi-Signal Mixtures

11. Practicality and Sustainability

| DL Model | Advantages | Disadvantages |
|---|---|---|
| CNN | Learns local features and translation invariance; efficient and highly parallelizable; robust to small shifts and noise | Requires fixed-size inputs; limited ability to model long-range dependencies; may need deeper architectures or large receptive fields |
| RNN | Captures temporal dependencies; processes sequential data; suitable for time-series modeling | Suffers from vanishing and exploding gradients; limited long-term memory; slow training due to sequential processing |
| LSTM | Handles long-term dependencies; improved gradient flow via gating mechanisms; more stable than vanilla RNNs | Higher computational complexity; slower training; less parallelizable than CNNs and transformers |
| Transformer | Models long-range dependencies using self-attention; highly parallelizable; captures global context effectively | High computational and memory cost; data-hungry; sensitive to tokenization and architectural design |
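The transformer row can be made concrete with a small sketch of scaled dot-product self-attention. This is an illustrative simplification rather than a full transformer layer: it assumes a single head with identity query, key, and value projections, and the function name `self_attention` and the toy token values are hypothetical.

```python
import math


def self_attention(x):
    """Single-head scaled dot-product attention with identity Q/K/V projections."""
    d = len(x[0])
    outputs, weights = [], []
    for q in x:
        # One score per (query, key) pair, so the weight matrix is N x N.
        scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(d) for k in x]
        m = max(scores)  # subtract the max for a numerically stable softmax
        exps = [math.exp(s - m) for s in scores]
        z = sum(exps)
        w = [e / z for e in exps]
        weights.append(w)
        outputs.append([sum(w[j] * x[j][c] for j in range(len(x))) for c in range(d)])
    return outputs, weights


# Hypothetical tokens: the first and last are identical, the middle one differs.
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 0.0]]
out, weights = self_attention(tokens)
```

The first token attends to the last token exactly as strongly as to itself, regardless of how far apart they are, which illustrates the global context in the table. The N×N weight matrix is also the source of the quadratic compute and memory cost listed as a disadvantage.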

11.1. Training Data Volume, Augmentation, and Synthetic-to-Real Gaps

11.2. Model Selection Under Latency and Memory Constraints

11.3. Benchmarking Pitfalls and a Minimal Reporting Checklist

| Pitfall | Impact | What to report |
|---|---|---|
| Unclear dataset versioning | Results are difficult to reproduce and compare across studies | Dataset version, number of classes, sample length, and generation procedure |
| SNR imbalance | Inflated or misleading accuracy due to dominance of high-SNR samples | Train/test SNR distribution and per-SNR accuracy curves |
| Preprocessing variability (TF, constellations) | Hidden biases and inconsistent feature representations | Exact preprocessing pipeline and parameters (e.g., STFT window, normalization) |
| No cross-domain testing | Unknown robustness under real-world deployment conditions | Cross-dataset evaluation and over-the-air (OTA) testing |
| Ignoring calibration uncertainty | Overconfident predictions and unreliable decision thresholds | Calibration method (e.g., temperature scaling) and rejection criteria |
| Single-signal assumption | Unrealistic performance in environments with overlapping signals | Evaluation on multi-signal or interference scenarios |
| Synthetic-only training data | Poor generalization to real-world RF due to domain shift | Use of OTA data or domain adaptation techniques |
| Lack of channel variability | Models fail under different propagation conditions (fading, Doppler, offsets) | Channel models used and impairment settings |
| Signal parameter variation (not modeled) | Performance degradation due to unmodeled variations in signal parameters | Baud rate, sampling rate, sideband effects, RF measurement errors, and front-end components (modulator/demodulator, imaging artifacts) |
| Fixed input assumptions | Reduced robustness to varying sampling rates, symbol lengths, or bandwidths | Input configuration and preprocessing strategy |
| Ignoring computational constraints | Models may be impractical for real-time or edge deployment | Model size, FLOPs, inference latency, and hardware setup |
| Limited evaluation metrics | Accuracy alone does not reflect real-world performance | Additional metrics (precision/recall, confusion matrix, SNR-wise results) |
| Class imbalance | Bias toward dominant modulation classes | Class distribution and balancing strategy |
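Two of the reporting items above, per-SNR accuracy curves and confusion matrices, are simple to compute once predictions are stored together with their SNR labels. The following is a minimal sketch with hypothetical function names and toy labels, intended only to show how a single overall accuracy can hide poor low-SNR performance when high-SNR samples dominate the test set.

```python
from collections import defaultdict


def per_snr_accuracy(y_true, y_pred, snr_db):
    """Return ({snr: accuracy}, overall accuracy) for aligned label lists."""
    hits = defaultdict(int)
    totals = defaultdict(int)
    for t, p, s in zip(y_true, y_pred, snr_db):
        totals[s] += 1
        hits[s] += int(t == p)
    curve = {s: hits[s] / totals[s] for s in sorted(totals)}
    overall = sum(hits.values()) / sum(totals.values())
    return curve, overall


def confusion_matrix(y_true, y_pred, classes):
    """Rows are true classes, columns are predicted classes."""
    index = {c: i for i, c in enumerate(classes)}
    matrix = [[0] * len(classes) for _ in classes]
    for t, p in zip(y_true, y_pred):
        matrix[index[t]][index[p]] += 1
    return matrix


# Hypothetical toy test set: 2 low-SNR samples and 6 high-SNR samples.
y_true = ["BPSK", "QPSK", "BPSK", "QPSK", "BPSK", "QPSK", "BPSK", "QPSK"]
y_pred = ["QPSK", "QPSK", "BPSK", "QPSK", "BPSK", "QPSK", "BPSK", "QPSK"]
snr_db = [-10, -10, 10, 10, 10, 10, 10, 10]

curve, overall = per_snr_accuracy(y_true, y_pred, snr_db)
cm = confusion_matrix(y_true, y_pred, ["BPSK", "QPSK"])
```

In this toy case the overall accuracy is 0.875 even though accuracy at -10 dB is only 0.5, which is why the SNR distribution of the test set belongs next to any headline number.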

12. Open Problems and Research Directions

12.1. Robustness and Domain Generalization

12.2. Low-SNR Recognition and Impairment-Aware Learning

12.3. Beyond Single-Signal, Closed-Set Classification

12.4. Data Realism, Reporting Standards, and Reproducibility

12.5. Edge Deployment and Distributed Sensing

12.6. Integrating DL with Classical Signal Processing

13. Conclusions

Author Contributions

Funding

Conflicts of Interest

References

1. Aldhaheri, L.; Alshehhi, N.; Manzil, I.; Khalil, R.A.; Javaid, S.; Saeed, N.; Alouini, M.-S. LoRa Communication for Agriculture 4.0: Opportunities, Challenges, and Future Directions. 2024.

2. Kufakunesu, R.; Hancke, G.P.; Abu-Mahfouz, A.M. A Survey on Adaptive Data Rate Optimization in LoRaWAN: Recent Solutions and Major Challenges. Sensors 2020, 20, 5044.

3. Thakur, A.S.; Imtiaz, M.H. Long Range (LoRa) Agriculture Network in Northern New York: A Scoping Review. Proc. 2025 IEEE 16th Annual Ubiquitous Computing, Electronics & Mobile Communication Conference (UEMCON), Yorktown Heights, NY, USA, 2025; pp. 0374–0381.

4. Bahloul, M.R.; et al. An Efficient Likelihood-Based Modulation Classification Algorithm for MIMO Systems. arXiv 2016, arXiv:1605.07505.

5. Jin, X.; Zhou, X. A New Likelihood-Based Modulation Classification Algorithm Using MCMC. J. Electron. (China) 2012, 29, 17–22.

6. Dobre, O. Survey of Automatic Modulation Classification Techniques: Classical Approaches and New Trends. IET Communications 2007.

7. Li, X.; Jiang, Z.; Ting, K.; Zhu, Y. An Online Automatic Modulation Classification Scheme Based on Isolation Distributional Kernel. arXiv 2024, arXiv:2410.02750.

8. LeCun, Y.; Boser, B.; Denker, J.S.; Henderson, D.; Howard, R.E.; Hubbard, W.; Jackel, L.D. Backpropagation Applied to Handwritten Zip Code Recognition. Neural Computation 1989, 1, 541–551.

9. O’Shea, T.; Corgan, J.; Clancy, T. Convolutional Radio Modulation Recognition Networks. Proc. IEEE International Conference on Communications (ICC), 2016. Available online: https://arxiv.org/pdf/1602.04105.

10. Ramabadran, S.; Madhu Kumar, A.S.; Guohua, W.; Kee, T. Shang. Blind Recognition of LDPC Code Parameters Over Erroneous Channel Conditions. IET Signal Processing 2019, 13, 86–95.

11. Zhang, X.; Zhang, W. A Cascade Network for Blind Recognition of LDPC Codes. Electronics 2023, 12.

12. Yan, W.; Ling, Q.; Zhang, L. Convolutional Neural Networks for Space-Time Block Coding Recognition. arXiv 2019, arXiv:1910.09952.

13. Wang, T.; Yang, G.; Chen, P.; Xu, Z.; Jiang, M.; Ye, Q. A Survey of Applications of Deep Learning in Radio Signal Modulation Recognition. Appl. Sci. 2022, 12, 12052.

14. Wan, C.; Zhang, Q. A Novel Dual-Component Radar-Signal Modulation Recognition Method Based on CNN-ST. Appl. Sci. 2024, 14, 5499.

15. Wang, Y.; Guo, J.; Liu, H.; Li, L.; Wang, Z.; Wu, H. CNN-Based Modulation Classification in the Complicated Communication Channel. Proc. IEEE Int. Conf. Electronic Measurement & Instruments (ICEMI), Yangzhou, China, Oct. 2017; pp. 512–516.

16. O’Shea, T.J.; Roy, T.; Clancy, T.C. Over-the-Air Deep Learning Based Radio Signal Classification. IEEE Journal of Selected Topics in Signal Processing 2018, 12(1), 168–179.

17. Rangaswamy, A.; Surekha, T.P. Over-the-Air Modulation Classification Using Deep Learning in Fading Channels for Cognitive Radio. Indian Journal of Science and Technology 2021, 14, 3360–3369.

18. Yashashwi, K.; Sethi, A.; Chaporkar, P. A Learnable Distortion Correction Module for Modulation Recognition. IEEE Wireless Communications Letters 2018.

19. Erpek, T.; O’Shea, T.; Sagduyu, Y.E.; Shi, Y.; Clancy, T.C. Deep Learning for Wireless Communications. arXiv 2020, arXiv:2005.06068.

20. O’Shea, T.; Hoydis, J. An Introduction to Deep Learning for the Physical Layer. IEEE Transactions on Cognitive Communications and Networking 2017, 3, 563–575.

21. Gu, H.; Wang, Y.; Hong, S.; Gui, G. Blind Channel Identification Aided Generalized Automatic Modulation Recognition Based on Deep Learning. IEEE Access 2019, PP, 1–1.

22. Rajendran, S.; Meert, W.; Giustiniano, D.; Lenders, V.; Pollin, S. Distributed Deep Learning Models for Wireless Signal Classification with Low-Cost Spectrum Sensors. IEEE Transactions on Cognitive Communications and Networking 2017.

23. Zhang, Q.; Xu, Z.; Zhang, P. Modulation Recognition Using Wavelet-Assisted Convolutional Neural Network. Proc. IEEE Int. Conf. Advanced Technologies for Communications (ATC), Ho Chi Minh City, Vietnam, Oct. 2018; pp. 100–104.

24. Zhang, F.; Luo, C.; Xu, J.; Luo, Y. An Efficient Deep Learning Model for Automatic Modulation Recognition Based on Parameter Estimation and Transformation. IEEE Communications Letters 2021.

25. Peng, S.; Jiang, H.; Wang, H.; Alwageed, H.; Yao, Y.-D. Modulation Classification Using Convolutional Neural Network Based Deep Learning Model. 2017; pp. 1–5.

26. West, N.E.; O’Shea, T. Deep Architectures for Modulation Recognition. Proc. IEEE DySPAN, Baltimore, MD, USA, Mar. 2017; pp. 1–6.

27. Du, R.; Liu, F.; Xu, J.; Gao, F.; Hu, Z.; Zhang, A. D-GF-CNN Algorithm for Modulation Recognition. Wireless Personal Communications 2022.

28. Zhang, M.; Diao, M.; Guo, L. Convolutional Neural Networks for Automatic Cognitive Radio Waveform Recognition. IEEE Access 2017, 5, 11074–11082.

29. Li, R.; Li, L.; Yang, S.; Li, S. Robust Automated VHF Modulation Recognition Based on Deep Convolutional Neural Networks. IEEE Communications Letters 2018, 22, 946–949.

30. Wu, H.; Wang, Q.; Zhou, L.; Meng, J. VHF Radio Signal Modulation Classification Based on Convolution Neural Networks. Proc. 1st Int. Symp. on Water System Operations, MATEC Web Conf., Beijing, China, Oct. 2018; vol. 246, p. 03032.

31. Wang, Y.; Liu, M.; Yang, J.; Gui, G. Data-Driven Deep Learning for Automatic Modulation Recognition in Cognitive Radios. IEEE Transactions on Vehicular Technology 2019, 68, 4074–4077.

32. Kulin, M.; Kazaz, T.; Moerman, I.; De Poorter, E. End-to-End Learning from Spectrum Data: A Deep Learning Approach for Wireless Signal Identification in Spectrum Monitoring Applications. IEEE Access 2018, 6, 18484–18501.

33. Hiremath, S.M.; Deshmukh, S.; Rakesh, R.; Patra, S.K. Blind Identification of Radio Access Techniques Based on Time-Frequency Analysis and Convolutional Neural Network. Proc. IEEE TENCON, Jeju Island, Korea, Oct. 2018; pp. 1163–1167.

34. Ghanem, H.S.; Al-Makhlasawy, R.M.; El-Shafai, W.; Elsabrouty, M.; Hamed, H.F.; Salama, G.M.; El-Samie, F.E.A. Wireless Modulation Classification Based on Radon Transform and Convolutional Neural Networks. Journal of Ambient Intelligence and Humanized Computing 2022.

35. Dileep, P.; Das, D.; Bora, P.K. Dense Layer Dropout Based CNN Architecture for Automatic Modulation Classification. Proc. IEEE NCC, Kharagpur, India, Feb. 2020; pp. 1–5.

36. Wang, Z.; Sun, D.; Gong, K.; Wang, W.; Sun, P. A Lightweight CNN Architecture for Automatic Modulation Classification. Electronics 2021, 10, 2679.

37. Longi, K.; Pulkkinen, T.; Klami, A. Semi-Supervised Convolutional Neural Networks for Identifying Wi-Fi Interference Sources. Proc. Asian Conf. Machine Learning (ACML), Seoul, Korea, Nov. 2017; pp. 391–406.

38. Shi, J.; Qi, L.; Li, K.; Lin, Y. Signal Modulation Recognition Method Based on Differential Privacy Federated Learning. Wireless Communications and Mobile Computing 2021, 2537546.

39. Bhardwaj, S.; Kim, D.-H.; Kim, D.-S. Federated Learning Based Modulation Classification for Multipath Channels. Parallel Computing 2024, 120, 103083.

40. DeepSig Inc. Datasets. Available online: https://www.deepsig.ai/datasets/ (accessed on 28 March 2026).

41. Abudeeb, M. RML2016.10b Dataset. Kaggle. Available online: https://www.kaggle.com/datasets/marwanabudeeb/ (accessed on 28 March 2026).

42. Tekbıyık, K.; et al. HisarMod: A New Challenging Modulated Signals Dataset. IEEE DataPort, 2020. Available online: https://ieee-dataport.org/open-access/hisarmod-new-challenging-modulated-signals-dataset (accessed on 28 March 2026).

43. Hochreiter, S.; Schmidhuber, J. Long Short-Term Memory. Neural Computation 1997, 9(8), 1735–1780.

44. Hong, D.; Zhang, Z.; Xu, X. Automatic Modulation Classification Using Recurrent Neural Networks. Proc. IEEE ICCC, Chengdu, China, Dec. 2017; pp. 695–700.

45. Daldal, N.; Yıldırım, Ö.; Polat, K. Deep Long Short-Term Memory Networks-Based Automatic Recognition of Six Different Digital Modulation Types Under Varying Noise Conditions. Neural Computing and Applications 2019, 31, 1967–1981.

46. Vaswani, A.; Shazeer, N.; Parmar, N.; Uszkoreit, J.; Jones, L.; Gomez, A.N.; Kaiser, Ł.; Polosukhin, I. Attention Is All You Need. Proc. NeurIPS, 2017; pp. 6000–6010.

47. Cai, J.; Gan, F.; Cao, X. Signal Modulation Classification Based on the Transformer Network. IEEE Transactions on Cognitive Communications and Networking 2022, 8(3), 1348–1357.

48. Rashvand, N.; Witham, K.; Maldonado, G.; Katariya, V.; Marer Prabhu, N.; Schirner, G.; Tabkhi, H. Enhancing Automatic Modulation Recognition for IoT Applications Using Transformers. IoT 2024, 5, 212–226.

49. Lin, S.; Zeng, Y.; Gong, Y. Learning of Time-Frequency Attention Mechanism for Automatic Modulation Recognition. IEEE Wireless Communications Letters 2022, 11, 707–711.

50. Dosovitskiy, A.; et al. An Image Is Worth 16x16 Words: Transformers for Image Recognition at Scale. arXiv 2020, arXiv:2010.11929.

51. Devlin, J.; Chang, M.-W.; Lee, K.; Toutanova, K. BERT: Pre-training of Deep Bidirectional Transformers for Language Understanding. 2018.

52. Yenduri, G.; Ramalingam, M.; Selvi, G.C.; Supriya, Y.; Srivastava, G.; Maddikunta, P.K.R.; Gadekallu, T.R. GPT (generative pre-trained transformer)—a comprehensive review on enabling technologies, potential applications, emerging challenges, and future directions. IEEE Access 2024, 12, 54608–54649.

53. Touvron, H.; et al. Llama: Open and Efficient Foundation Language Models. arXiv 2023, arXiv:2302.13971.

54. Wei, W.; Zhu, C.; Hu, L.; Liu, P. Application of a Transfer Learning Model Combining CNN and Self-Attention Mechanism in Wireless Signal Recognition. Sensors 2025, 25(13), 4202.

55. Woo, S.; Park, J.; Lee, J.-Y.; Kweon, I.S. CBAM: Convolutional Block Attention Module. Computer Vision – ECCV 2018: 15th European Conference Proceedings, Part VII, Munich, Germany, September 8–14, 2018; Springer-Verlag, Berlin, Heidelberg, 2018; pp. 3–19.

56. Thakur, A.S.; Imtiaz, M. Binary Transformer Detectors for Automatic Modulation Detection Under Realistic Radio Frequency Impairments. Preprints 2026, 2026030346.

57. Khánh, H.; Doan, S.; Hoang, V.-P. Ensemble of Convolution Neural Networks for Improving Automatic Modulation Classification Performance. Journal of Science and Technology – ICT Issue 2022, 25–32.

58. Zheng, S.; Qi, P.; Chen, S.; Yang, X. Fusion Methods for CNN-Based Automatic Modulation Classification. IEEE Access 2019, 7, 66496–66504.

59. Gao, L.; Zhang, X.; Gao, J.; You, S. Fusion Image Based Radar Signal Feature Extraction and Modulation Recognition. IEEE Access 2019, 7, 13135–13148.

60. Wu, H.; Li, Y.; Zhou, L.; Meng, J. Convolutional Neural Network and Multi-Feature Fusion for Automatic Modulation Classification. Electronics Letters 2019, 55, 895–897.

61. Shi, F.; Hu, Z.; Yue, C.; Shen, Z. Combining Neural Networks for Modulation Recognition. Digital Signal Processing 2022, 120, 103264.

62. Ji, Z.; Wang, S.; Yang, K.; Zhang, Q.; Ye, P. Transfer Learning Guided Noise Reduction for Automatic Modulation Classification. 2024.

63. Zhang, W.T.; Cui, D.; Lou, S.T. Training Images Generation for CNN Based Automatic Modulation Classification. IEEE Access 2021, 9, 62916–62925.

64. Zhang, M.; Zeng, Y.; Han, Z.; Gong, Y. Automatic Modulation Recognition Using Deep Learning Architectures. Proc. IEEE 19th Int. Workshop on Signal Processing Advances in Wireless Communications (SPAWC), Kalamata, Greece, Jun. 2018; pp. 1–5.

65. Vaidyanathan, P.P. Multirate Digital Filters, Filter Banks, Polyphase Networks, and Applications: A Tutorial. Proceedings of the IEEE 1990, 78, 56–93.

66. Sang, Y.; Li, L. Application of Novel Architectures for Modulation Recognition. Proc. IEEE Asia Pacific Conf. on Circuits and Systems (APCCAS), Chengdu, China, Oct. 2018; pp. 159–162.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).