Submitted: 01 April 2026

Posted: 02 April 2026


Abstract

Keywords:

1. Introduction

- A three-layer ML framework whose stiffness, displacement, and force layers follow the sequence of the underlying mechanics relationship, enabling hierarchical feature enrichment without additional structural analyses;

- Sensitivity-based secondary input features derived analytically from closed-form stiffness and displacement expressions, embedding domain knowledge directly into the feature engineering process;

- A member-level stiffness representation extracted as submatrices of the global stiffness matrix, encoding inter-member structural context into each member’s feature vector;

- A multi-method interpretability analysis using feature importance ranking, Spearman’s rank correlation, and mutual information, which reveals the collective discriminative role of nodal coordinate features across all three layers.
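The interpretability contribution pairs feature importance with two model-agnostic dependence measures. As an illustration only, the sketch below computes Spearman's rank correlation (the Pearson correlation of average ranks, which captures monotonic but possibly nonlinear relations) and a plug-in mutual information estimate from an equal-width 2D histogram, which also responds to non-monotonic dependence. It assumes each feature and target is a plain list of floats; the function names and the binning estimator are assumptions for this sketch and are not necessarily what was used in this study (scikit-learn's `mutual_info_regression`, for instance, uses a k-nearest-neighbour estimator for continuous variables).

```python
import math
from collections import Counter


def _average_ranks(values):
    """Return 1-based ranks, giving tied values the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j over the run of values tied with the one at position i.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1  # positions i..j hold ranks i+1..j+1
        for k in range(i, j + 1):
            ranks[order[k]] = mean_rank
        i = j + 1
    return ranks


def spearman_rho(x, y):
    """Spearman's rank correlation: Pearson correlation of the average ranks."""
    rx, ry = _average_ranks(x), _average_ranks(y)
    n = len(rx)
    mx, my = sum(rx) / n, sum(ry) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    sx = math.sqrt(sum((a - mx) ** 2 for a in rx))
    sy = math.sqrt(sum((b - my) ** 2 for b in ry))
    return cov / (sx * sy)


def _bin(values, n_bins):
    """Map each value to an equal-width bin index in [0, n_bins - 1]."""
    lo, hi = min(values), max(values)
    width = (hi - lo) / n_bins or 1.0  # guard against a constant input
    return [min(int((v - lo) / width), n_bins - 1) for v in values]


def mutual_information(x, y, n_bins=5):
    """Plug-in mutual information estimate (in nats) from a 2D histogram."""
    bx, by = _bin(x, n_bins), _bin(y, n_bins)
    n = len(bx)
    joint = Counter(zip(bx, by))
    px, py = Counter(bx), Counter(by)
    mi = 0.0
    for (i, j), c in joint.items():
        # p(i,j) * log(p(i,j) / (p(i) p(j))), written with raw counts.
        mi += (c / n) * math.log(c * n / (px[i] * py[j]))
    return mi
```

For example, with `x = list(range(10))` and `y = [v**3 for v in x]`, `spearman_rho(x, y)` equals 1 up to rounding, whereas the Pearson coefficient of the same pair is below 1 because the relation is nonlinear.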

2. Machine Learning Models for Structural Reanalysis

2.1. Support Vector Machine

2.2. Ensemble Methods

2.2.1. Random Forest

2.2.2. Gradient Boosting

2.2.3. Extreme Gradient Boosting

2.3. Neural Networks

3. The Proposed Reanalysis Framework of Machine Learning Models

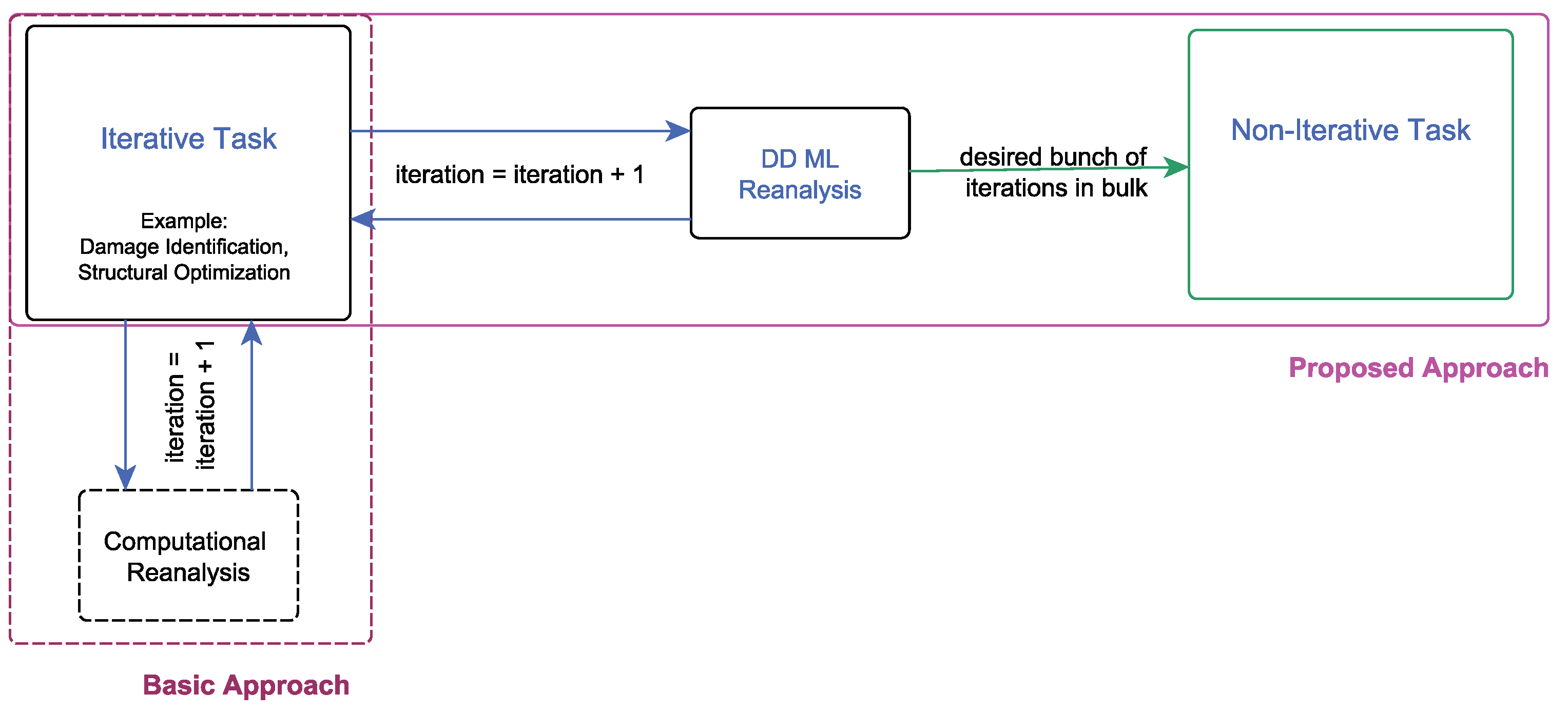

3.1. Framework Integration

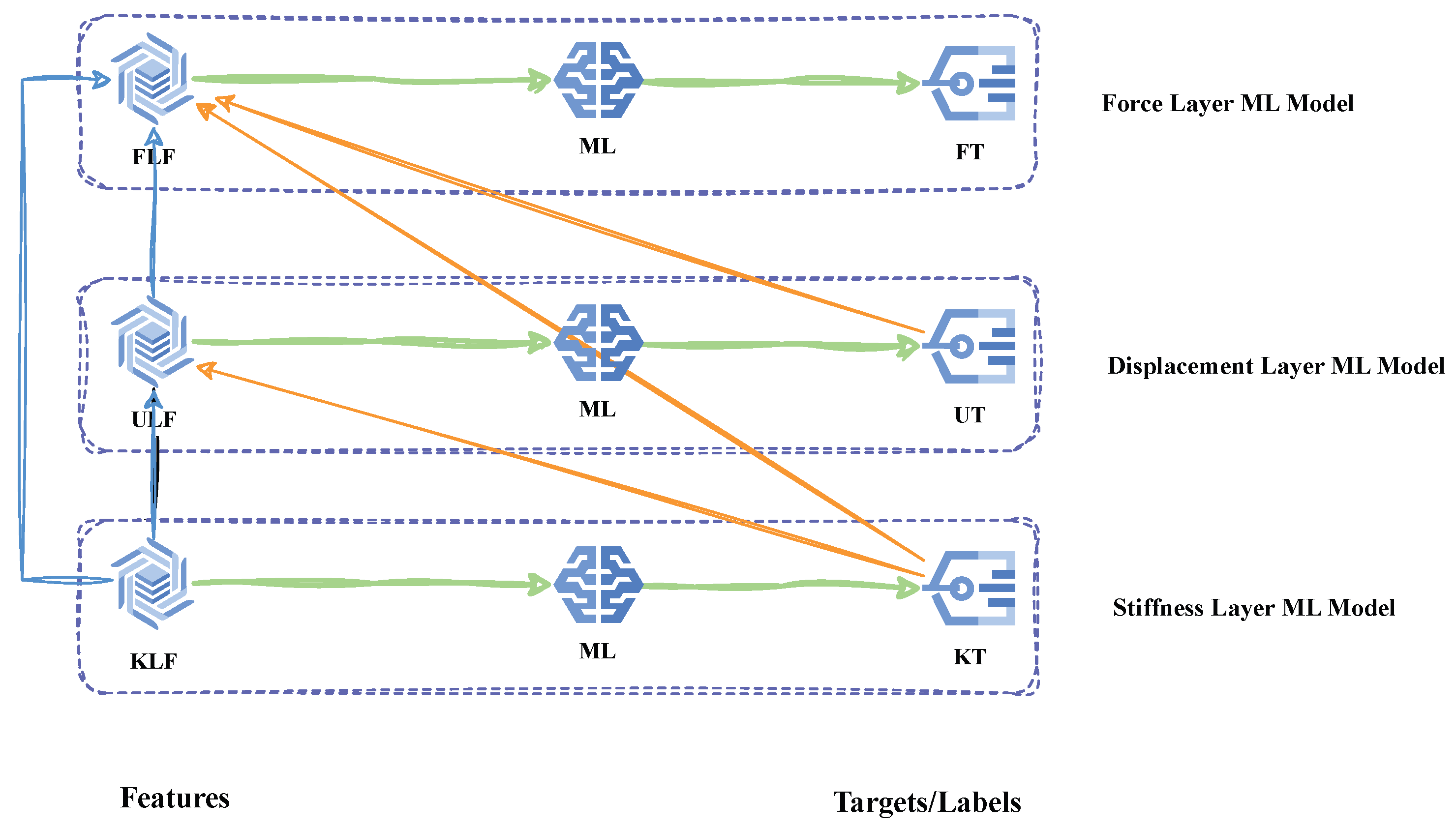

3.2. Layer-Wise Architecture

3.3. Sequential Feature Enrichment

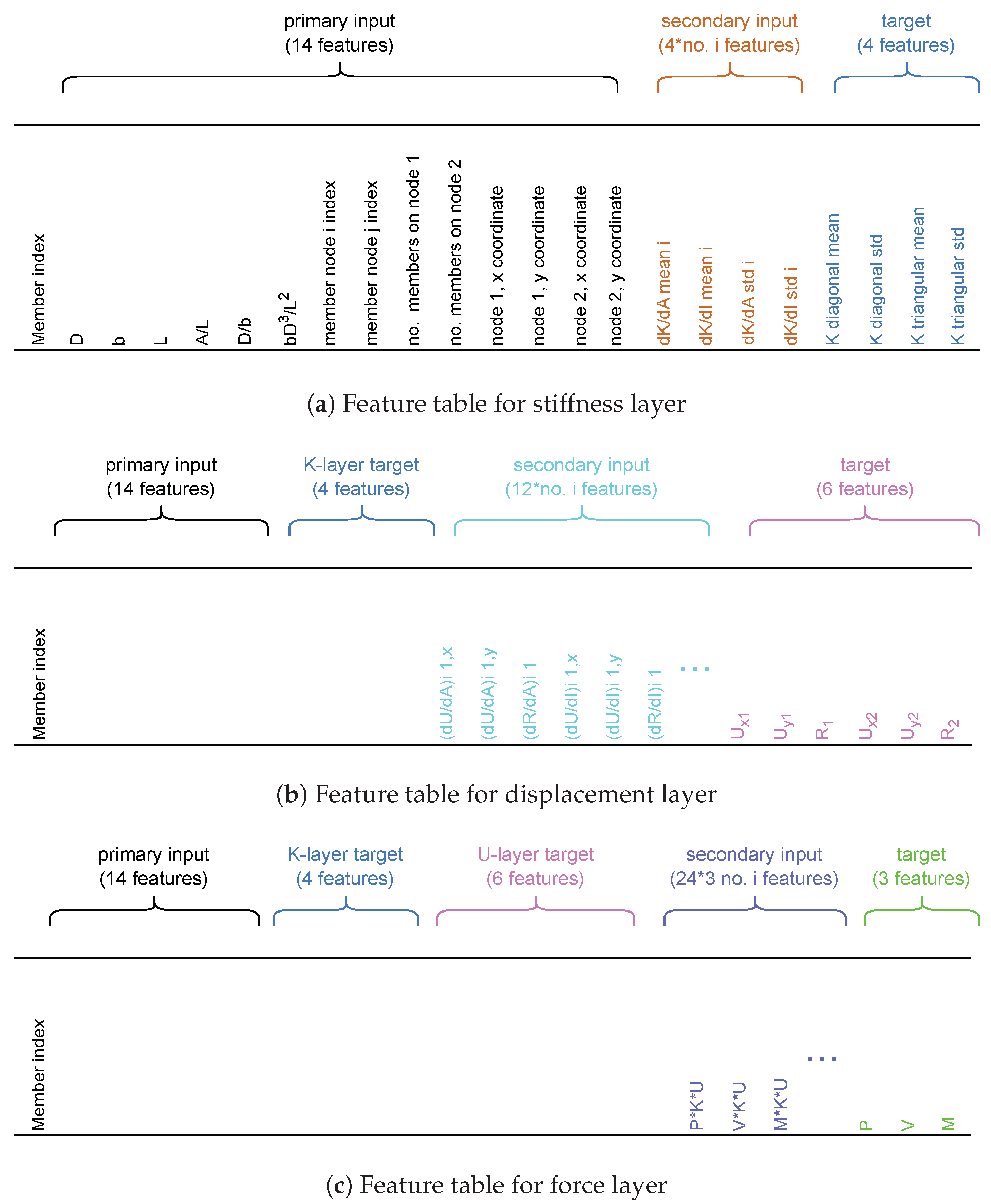

4. Features and Labels

4.1. Features

4.1.1. Stiffness Layer Sensitivity

4.1.2. Displacement Layer Sensitivity

4.1.3. Force Layer Sensitivity

4.2. Labels

4.3. Feature Tables

4.4. General Procedure

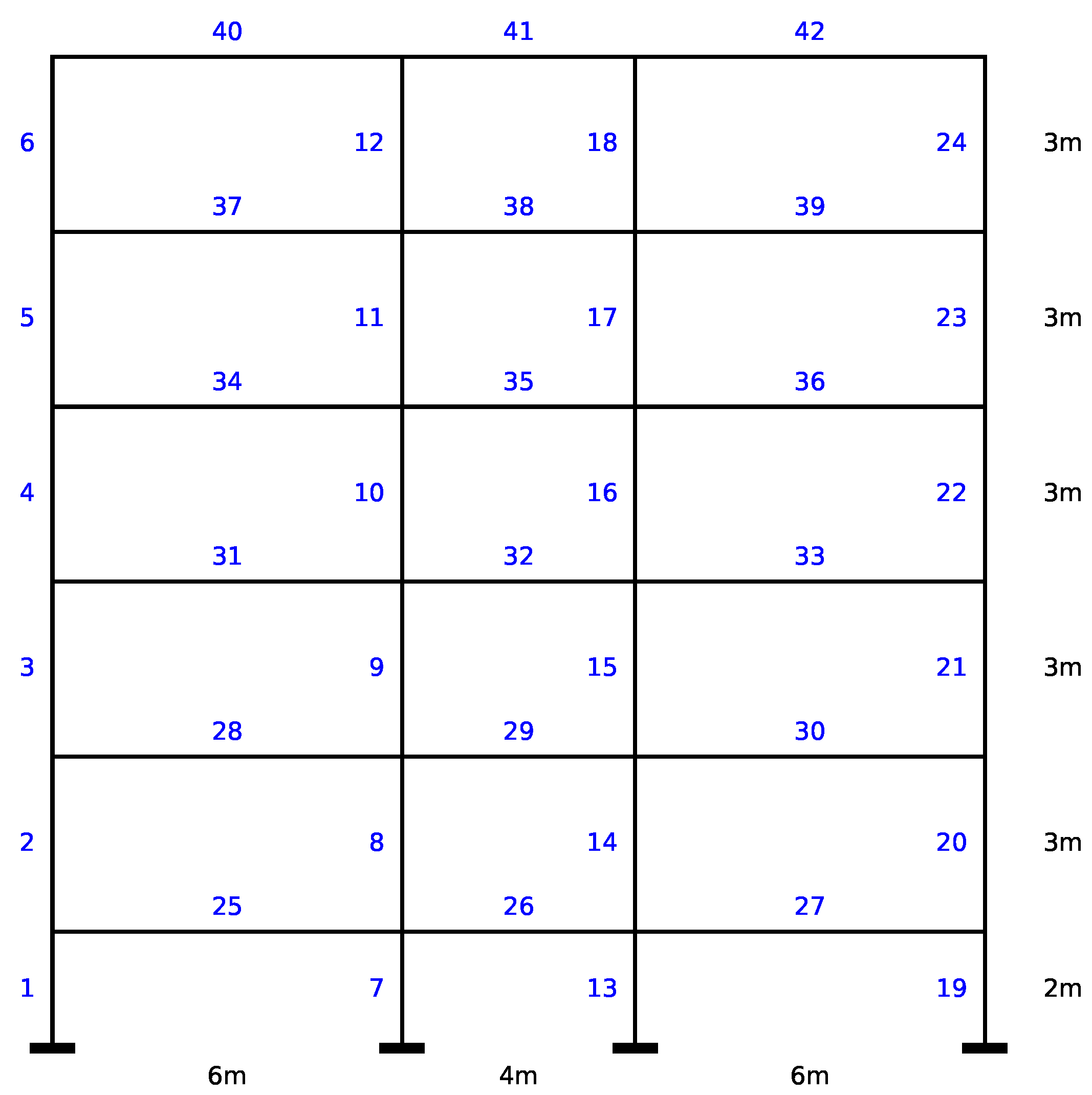

5. Application of the Framework to a 2D RC Building Frame

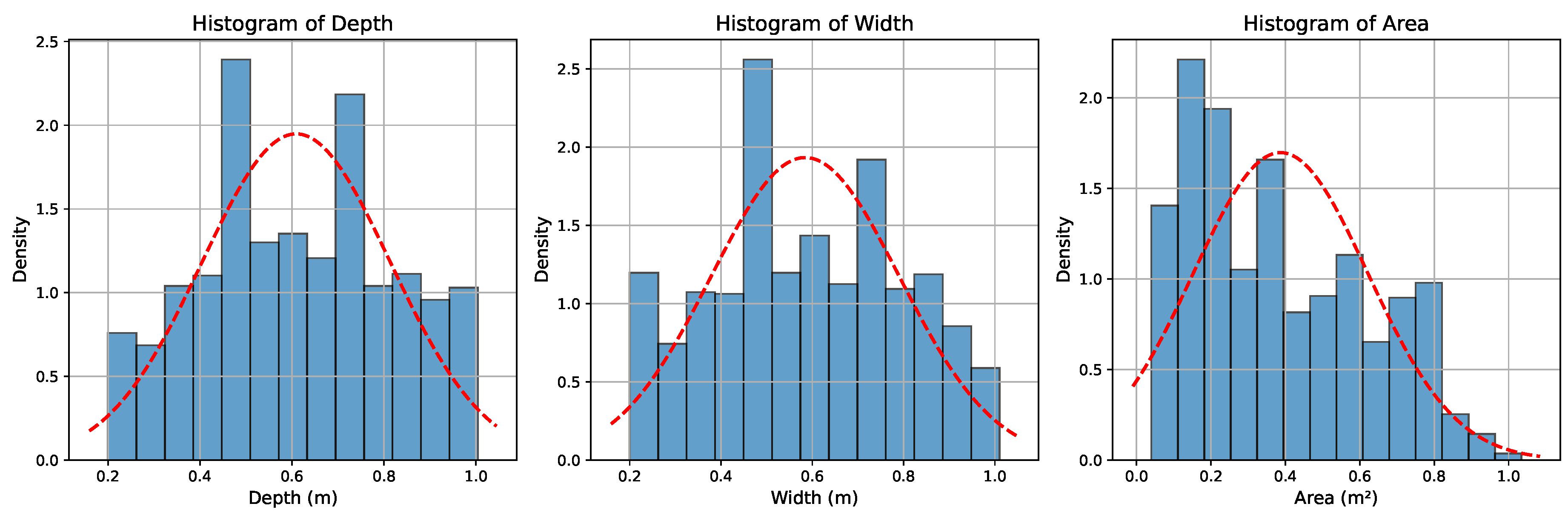

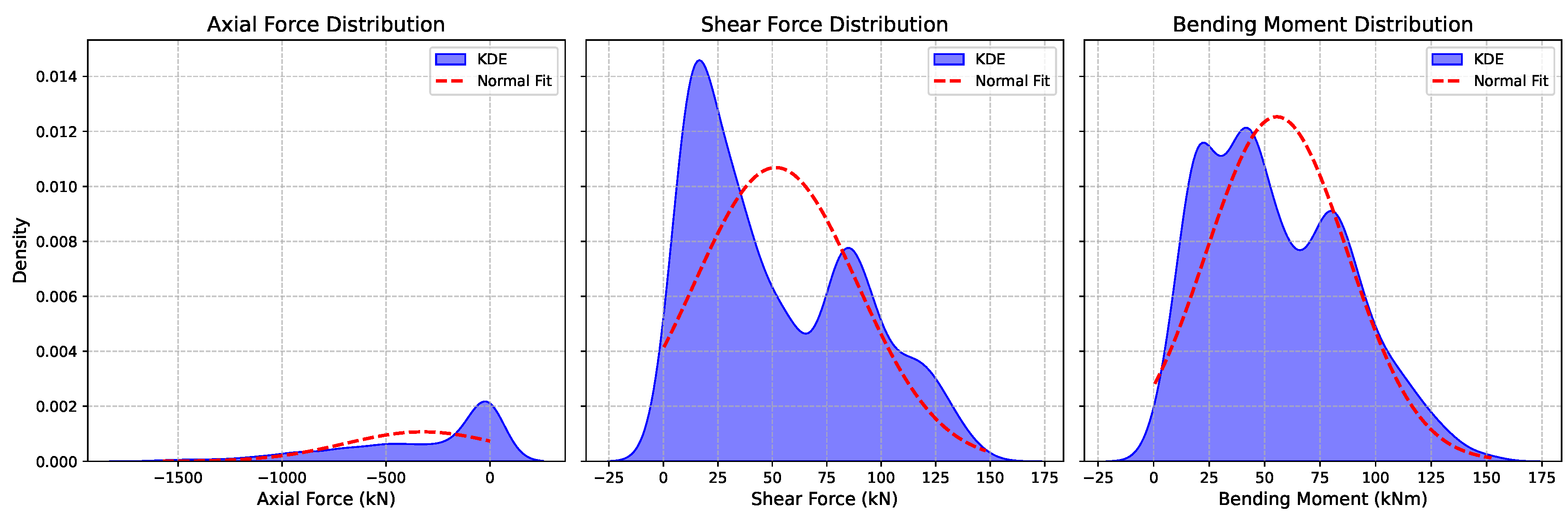

5.1. Dataset

5.2. Experimentation

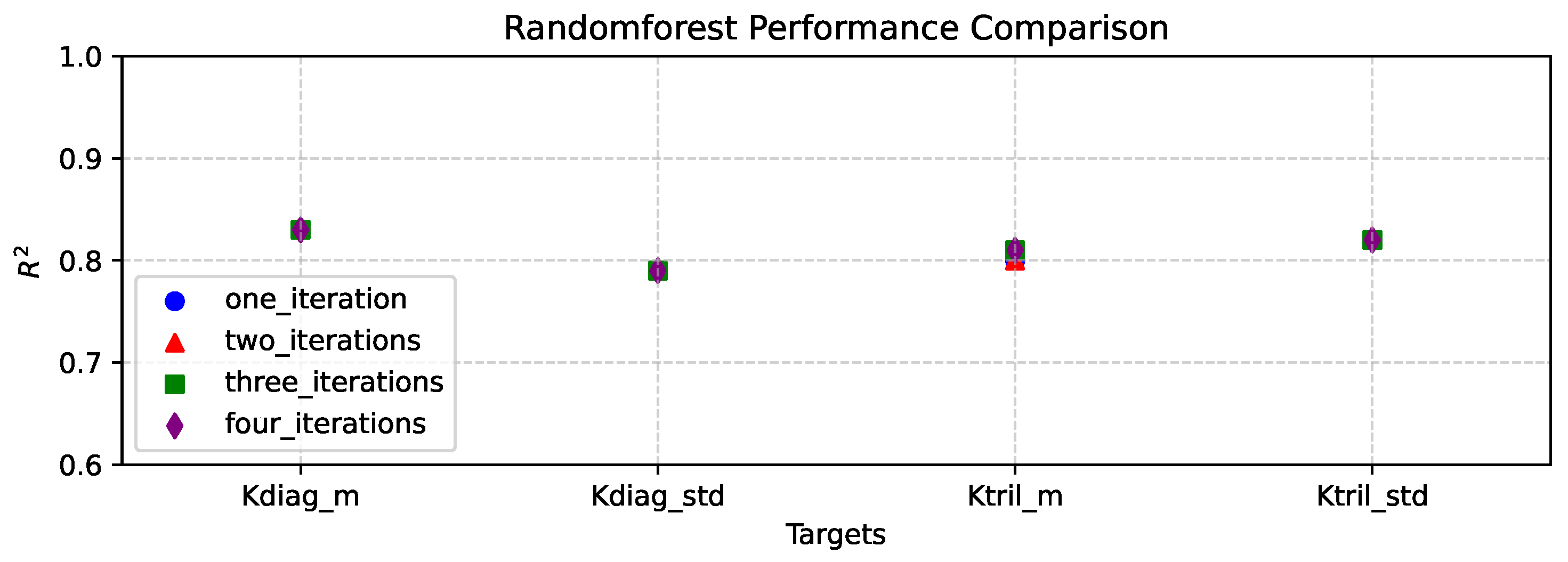

5.2.1. Number of Secondary Input Iterations

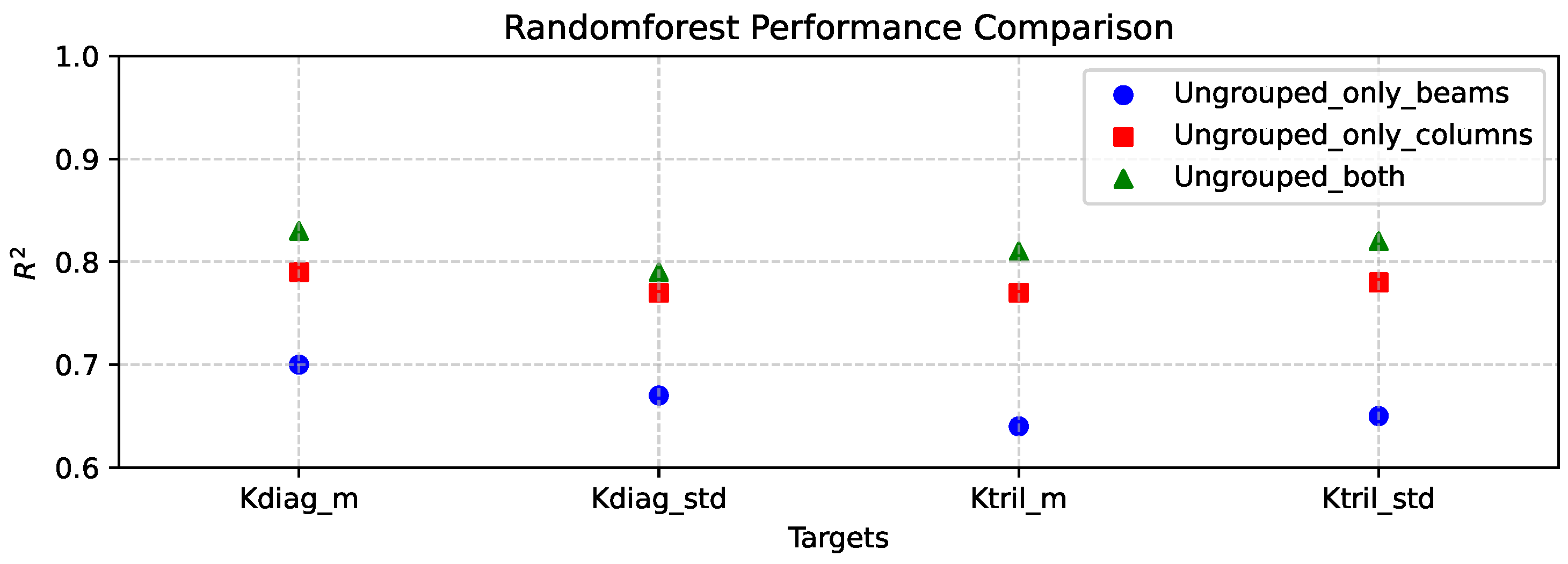

5.2.2. Separate Member Type Consideration

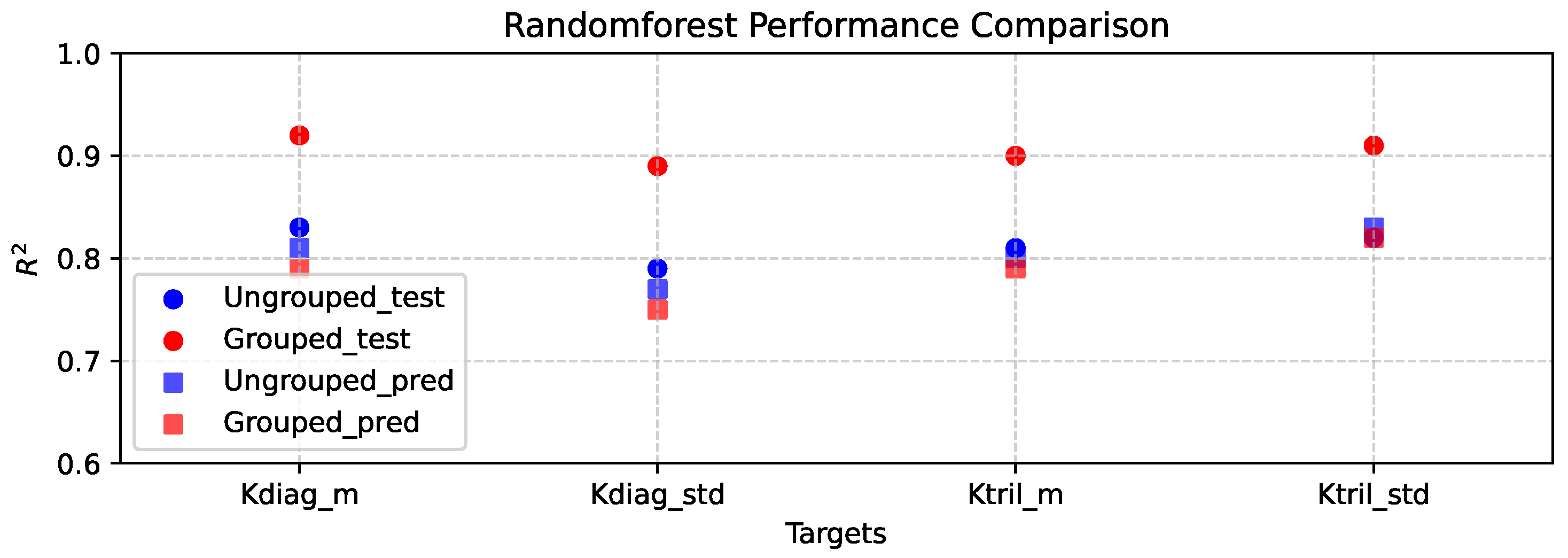

5.2.3. Grouping Members by Iteration

5.2.4. Regression Algorithm Selection

5.3. Results and Discussion

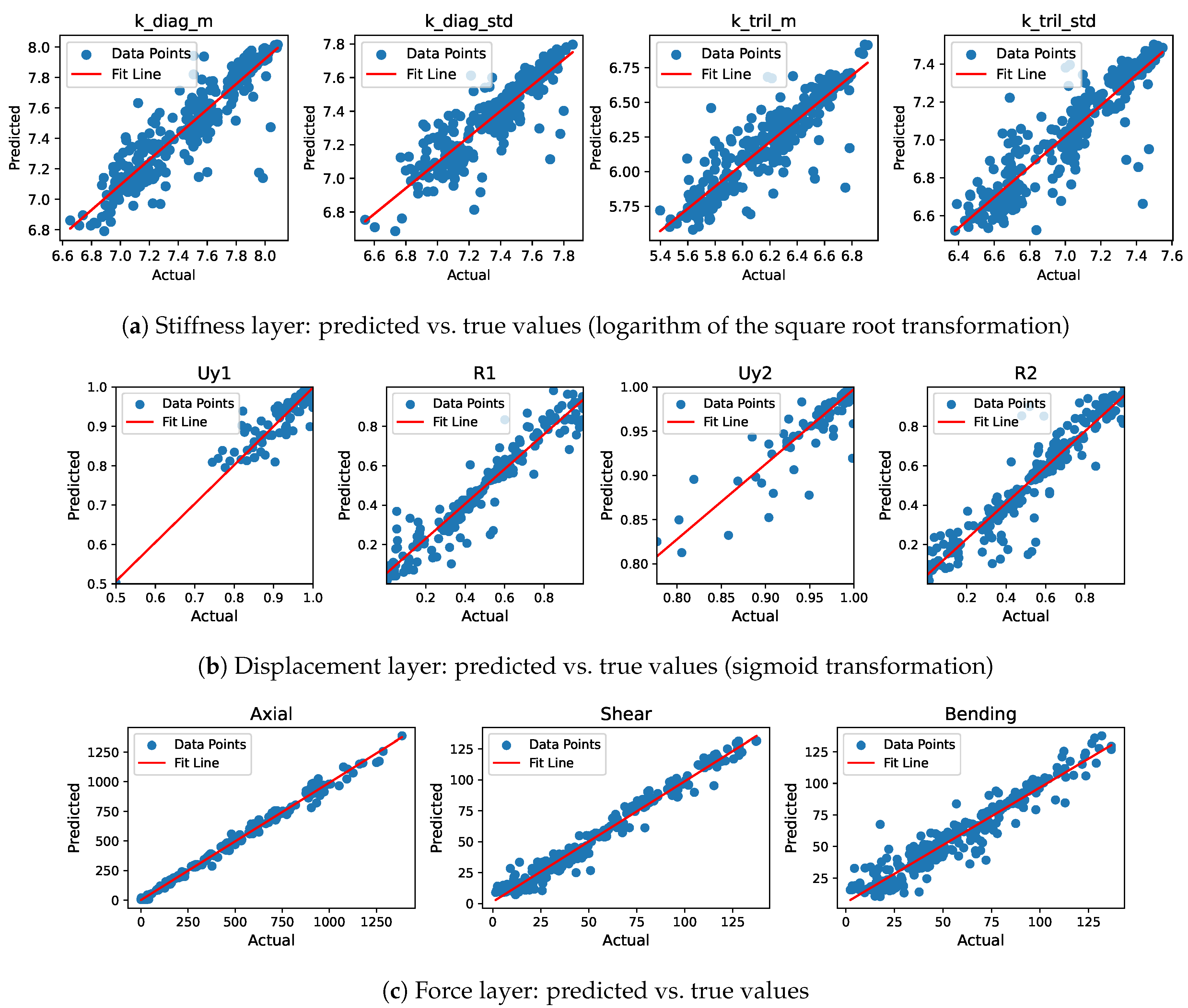

5.3.1. Layer-Wise Model Performance

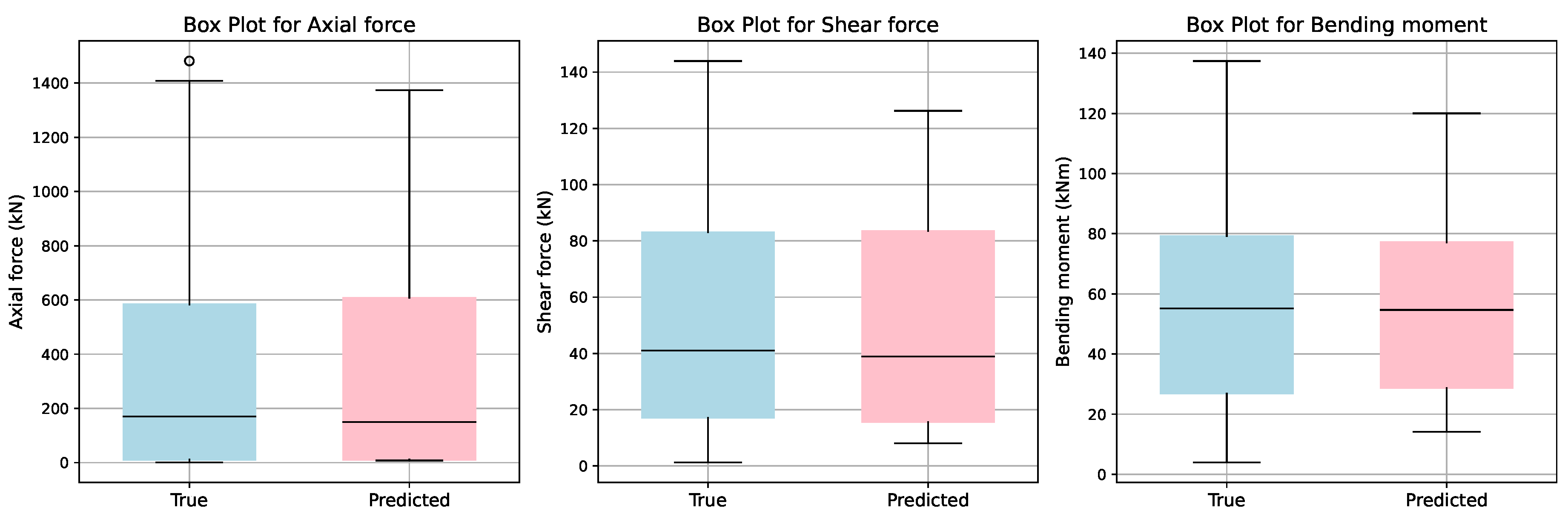

5.3.2. Force Layer Performance by Member Type

5.3.3. Multi-Iteration Prediction on Unseen Data

5.4. Interpretability

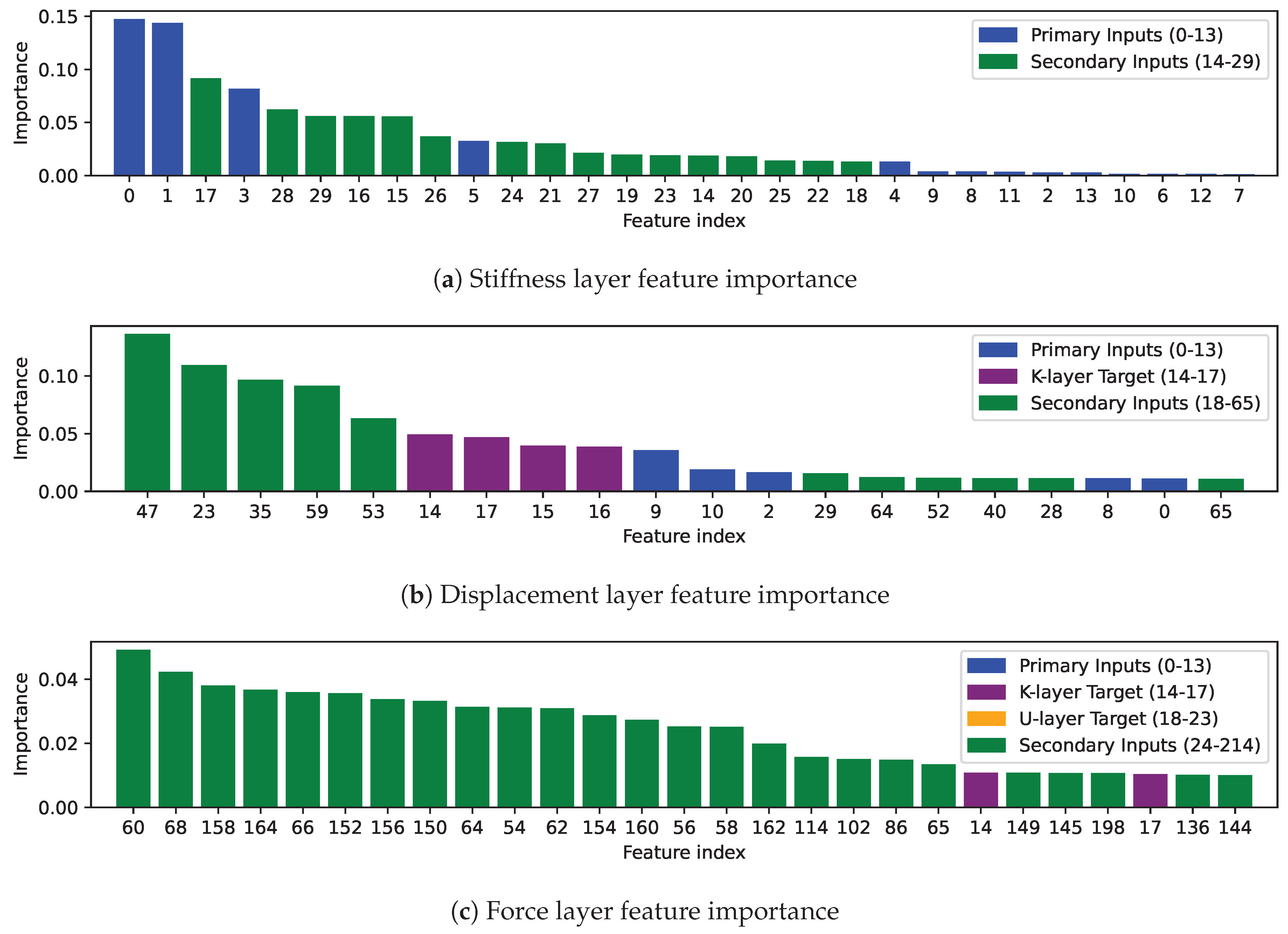

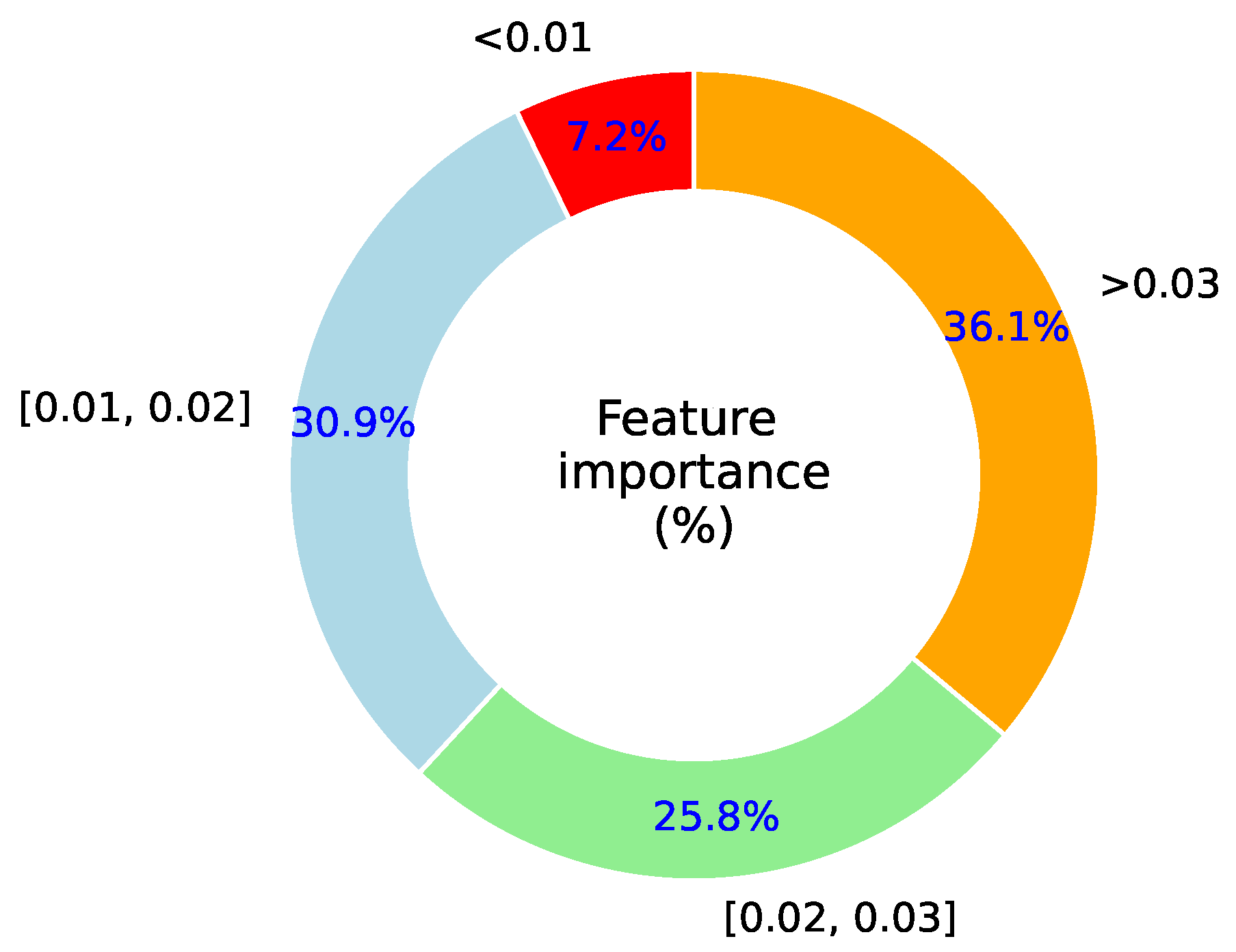

5.4.1. Feature Importance

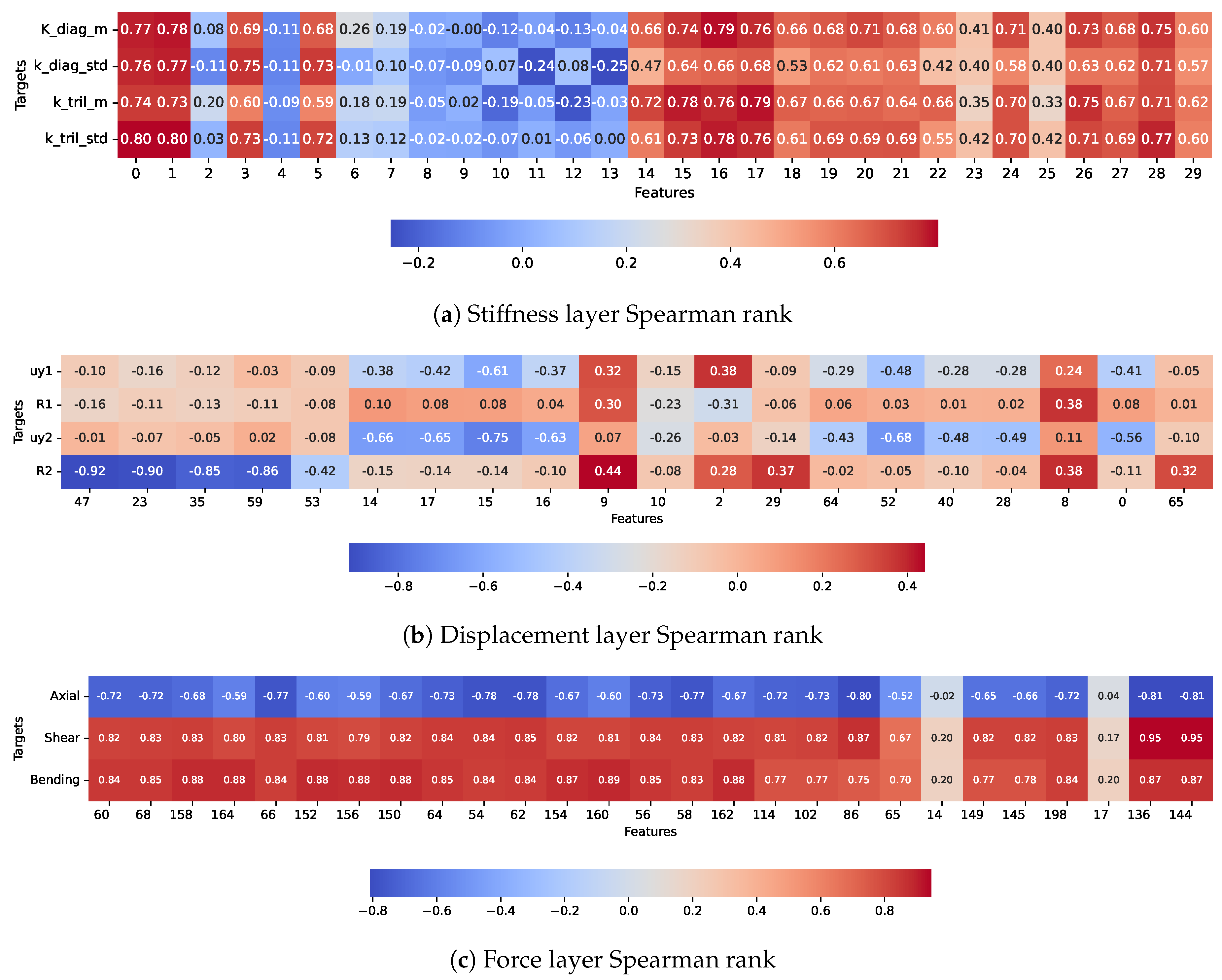

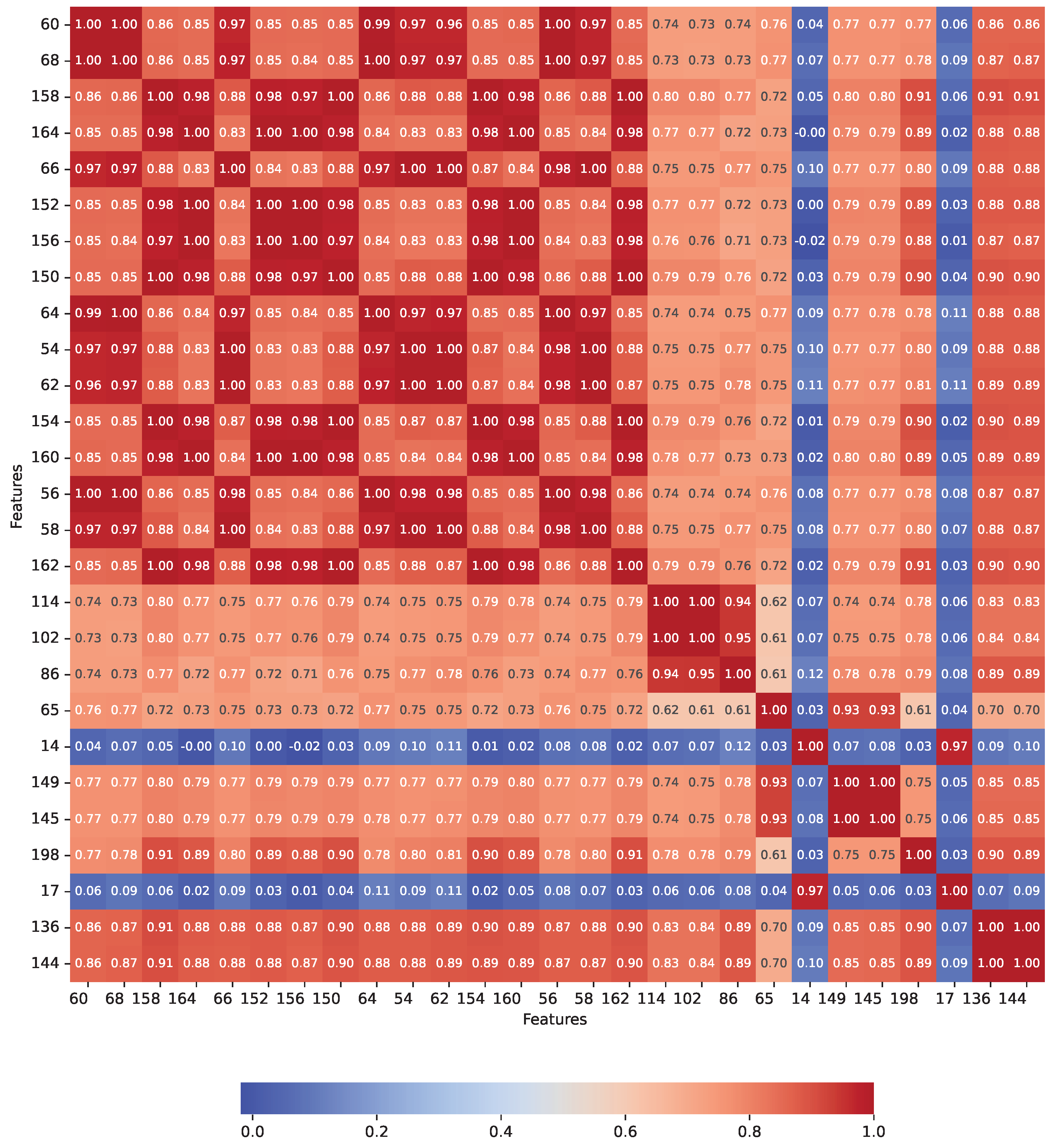

5.4.2. Spearman’s Rank Correlation

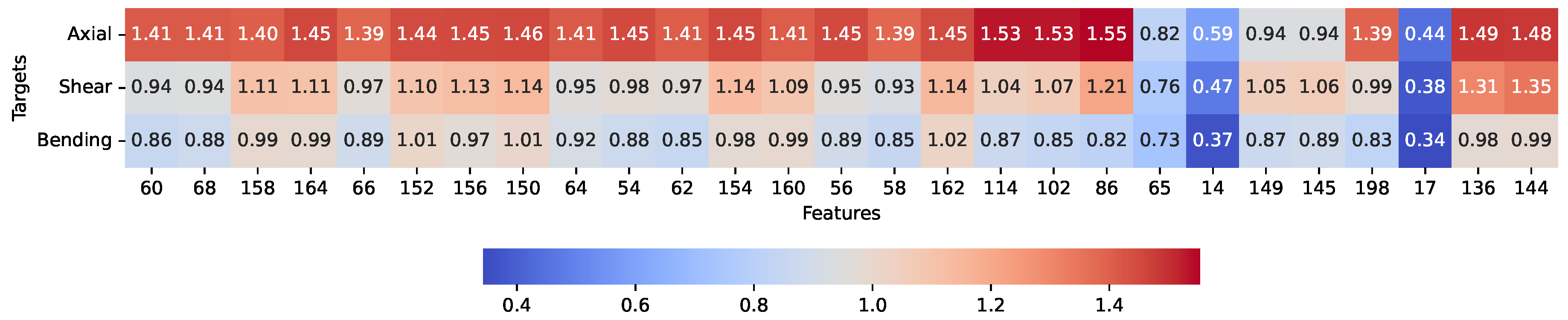

5.4.3. Mutual Information

5.4.4. Role of Nodal Coordinate Features

5.4.5. Cumulative Feature Contribution at the Force Layer

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

References

- Akgün, M.A.; Garcelon, J.H.; Haftka, R.T. Fast exact linear and non-linear structural reanalysis and the Sherman–Morrison–Woodbury formulas. International Journal for Numerical Methods in Engineering 2001, 50, 1587–1606.

- Chen, S.; Yang, X.; Lian, H. Comparison of several eigenvalue reanalysis methods for modified structures. Structural and Multidisciplinary Optimization 2000, 20, 253–259.

- Kirsch, U. Reanalysis of Structures; Springer, 2008.

- Kirsch, U. Combined approximations–a general reanalysis approach for structural optimization. Structural and Multidisciplinary Optimization 2000, 20, 97–106.

- Lee, M.; Jung, Y.; Choi, J.; Lee, I. A reanalysis-based multi-fidelity (RBMF) surrogate framework for efficient structural optimization. Computers & Structures 2022, 273, 106895.

- Jenkins, W. A neural network for structural re-analysis. Computers & Structures 1999, 72, 687–698.

- Jenkins, W. Structural reanalysis using a neural network-based iterative method. Journal of Structural Engineering 2002, 128, 946–950.

- Ahmad, A.; Cotsovos, D.M.; Lagaros, N.D. Framework for the development of artificial neural networks for predicting the load carrying capacity of RC members. SN Applied Sciences 2020, 2, 1–21.

- Tandale, S.B.; Markert, B.; Stoffel, M. Smart stiffness computation of one-dimensional finite elements. Mechanics Research Communications 2022, 119, 103817.

- Kaveh, A.; Eslamlou, A.D. Metaheuristic Optimization Algorithms in Civil Engineering: New Applications; Springer, 2020.

- Bao, Y.; Li, H. Machine learning paradigm for structural health monitoring. Structural Health Monitoring 2021, 20, 1353–1372.

- Thai, H.T. Machine learning for structural engineering: A state-of-the-art review. Structures 2022, 38, 448–491.

- Alemu, Y.L.; Lahmer, T.; Walther, C. Damage Detection with Data-Driven Machine Learning Models on an Experimental Structure. Eng 2024, 5, 629–656.

- Champaney, V.; Chinesta, F.; Cueto, E. Engineering empowered by physics-based and data-driven hybrid models: A methodological overview. International Journal of Material Forming 2022, 15, 31.

- Zhou, Y.; Meng, S.; Lou, Y.; Kong, Q. Physics-informed deep learning-based real-time structural response prediction method. Engineering 2024, 35, 140–157.

- Truong, V.H.; Pham, H.A. Support vector machine for regression of ultimate strength of trusses: A comparative study. Engineering Journal 2021, 25, 157–166.

- Yetilmezsoy, K.; Sihag, P.; Kıyan, E.; Doran, B. A benchmark comparison and optimization of Gaussian process regression, support vector machines, and M5P tree model in approximation of the lateral confinement coefficient for CFRP-wrapped rectangular/square RC columns. Engineering Structures 2021, 246, 113106.

- Lu, S.; Jiang, M.; Wang, X.; Yu, H.; Su, C. Damage degree prediction method of CFRP structure based on fiber Bragg grating and epsilon-support vector regression. Optik 2019, 180, 244–253.

- Khan, A.A.; Chaudhari, O.; Chandra, R. A review of ensemble learning and data augmentation models for class imbalanced problems: Combination, implementation and evaluation. Expert Systems with Applications 2023, 122778.

- Louk, M.H.L.; Tama, B.A. Dual-IDS: A bagging-based gradient boosting decision tree model for network anomaly intrusion detection system. Expert Systems with Applications 2023, 213, 119030.

- Bentéjac, C.; Csörgő, A.; Martínez-Muñoz, G. A comparative analysis of gradient boosting algorithms. Artificial Intelligence Review 2021, 54, 1937–1967.

- Ganaie, M.A.; Hu, M.; Malik, A.K.; Tanveer, M.; Suganthan, P.N. Ensemble deep learning: A review. Engineering Applications of Artificial Intelligence 2022, 115, 105151.

- Goodfellow, I.; Bengio, Y.; Courville, A. Deep Learning; MIT Press: Cambridge, 2016.

- Cianci, E.; Civera, M.; De Biagi, V.; Chiaia, B. Physics-informed machine learning for the structural health monitoring and early warning of a long highway viaduct with displacement transducers. Mechanical Systems and Signal Processing 2026, 242, 113659.

- Alemu, Y.L.; Habte, B.; Lahmer, T.; Urgessa, G. Priority Criteria (PC) Based Particle Swarm Optimization of Reinforced Concrete Frames (PCPSO). CivilEng 2023, 4, 679–701.

- Demir, A.; Sahin, E.K.; Demir, S. Advanced tree-based machine learning methods for predicting the seismic response of regular and irregular RC frames. Structures 2024, 64, 106524.

- Yahiaoui, A.; Dorbani, S.; Yahiaoui, L. Machine learning techniques to predict the fundamental period of infilled reinforced concrete frame buildings. Structures 2023, 54, 918–927.

| Feature category | Features | Framework layer |
|---|---|---|
| Geometry | depth, width, length and their derivatives | All layers |
| Stiffness | mean and standard deviation of the lower triangular stiffness matrix | Stiffness |
| Displacement | deflections and rotations | Displacement |
| Sensitivities | stiffness and displacement with respect to area and moment of inertia | Stiffness and Displacement |
| Force | augmentation of stiffness, displacement and force | Force |
| Structural information | members, nodal connectivity, degrees of freedom, nodal coordinates | All layers |
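The sensitivity features are derived analytically from closed-form stiffness and displacement expressions. As a hedged illustration of the idea rather than of the paper's own equations, the sketch below uses the textbook Euler–Bernoulli 2D frame element, whose distinct stiffness terms are linear in the area A or the moment of inertia I, together with the cantilever tip deflection PL³/(3EI) as a simple closed-form displacement. It assumes rectangular sections (A = bh, I = bh³/12) and consistent units; all function names are hypothetical.

```python
def rectangular_section(b, h):
    """Area and second moment of area of a b x h rectangle (neutral axis parallel to b)."""
    return b * h, b * h**3 / 12.0


def frame_element_terms(E, A, I, L):
    """Distinct entries of a 2D Euler-Bernoulli frame element stiffness matrix."""
    return {
        "EA/L": E * A / L,
        "12EI/L^3": 12 * E * I / L**3,
        "6EI/L^2": 6 * E * I / L**2,
        "4EI/L": 4 * E * I / L,
        "2EI/L": 2 * E * I / L,
    }


def frame_element_sensitivities(E, L):
    """Analytic derivatives of each term with respect to A and to I.

    Every term is linear in A or in I, so these derivatives do not depend on A or I.
    """
    d_dA = {"EA/L": E / L, "12EI/L^3": 0.0, "6EI/L^2": 0.0, "4EI/L": 0.0, "2EI/L": 0.0}
    d_dI = {
        "EA/L": 0.0,
        "12EI/L^3": 12 * E / L**3,
        "6EI/L^2": 6 * E / L**2,
        "4EI/L": 4 * E / L,
        "2EI/L": 2 * E / L,
    }
    return d_dA, d_dI


def cantilever_tip_deflection(P, E, I, L):
    """Tip deflection of a cantilever under an end load P: P L^3 / (3 E I)."""
    return P * L**3 / (3 * E * I)


def cantilever_tip_deflection_dI(P, E, I, L):
    """Analytic sensitivity of that deflection to I: -P L^3 / (3 E I^2)."""
    return -P * L**3 / (3 * E * I**2)
```

Because each stiffness term is linear in A or I, its sensitivity depends only on E and L, whereas the deflection sensitivity -PL³/(3EI²) varies with the section itself; a finite-difference check against these closed forms is a cheap way to validate such features.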

| Framework layer | Target labels | No. of targets | Transformation |
|---|---|---|---|
| Stiffness layer | Mean of the diagonal stiffness entries; standard deviation of the diagonal stiffness entries; mean of the lower triangular stiffness entries; standard deviation of the lower triangular stiffness entries | 4 | Logarithm of square root |
| Displacement layer | Translations; rotations | 6 | Sigmoid |
| Force layer | Internal forces | 3 | None |
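The target transformations in the table above can be sketched as follows, assuming they are applied element-wise to each scalar target before training and inverted after prediction; the inversion step and the function names are assumptions. Since log(√x) = ½·log x is only defined for x > 0, and the source does not state how non-positive values are handled, the sketch raises an error for them. The sigmoid is written in the usual overflow-safe form.

```python
import math


def log_sqrt(x):
    """Stiffness-layer transform: log(sqrt(x)) = 0.5 * log(x), defined only for x > 0."""
    if x <= 0:
        raise ValueError("log of square root requires a positive value")
    return 0.5 * math.log(x)


def inverse_log_sqrt(y):
    """Back-transform a stiffness-layer prediction: x = exp(2 * y)."""
    return math.exp(2.0 * y)


def sigmoid(x):
    """Displacement-layer transform 1 / (1 + exp(-x)), written to avoid overflow."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    e = math.exp(x)  # x < 0, so exp(x) cannot overflow
    return e / (1.0 + e)


def inverse_sigmoid(p):
    """Logit, the inverse of the sigmoid, valid for 0 < p < 1."""
    return math.log(p / (1.0 - p))
```

Predictions made on the transformed scale would then be passed through `inverse_log_sqrt` or `inverse_sigmoid` before being compared with FEM results in physical units.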

| No. | Main activity | Activity | Sub-activity |
|---|---|---|---|
| 1 | Data Collection | FEM-based structural simulation and analysis for a selected number of iterations; extraction of the required data from the simulation and analysis. | The main variables changing in each iteration are the cross-sectional sizes; the step size and cross-sectional limits shall be defined for each member type (beam or column). Sampling approach: a mix of random and uniform distributions. Cross-sections, member lengths, the stiffness matrix, nodal coordinates, nodal and member connectivity, degrees of freedom, deformation results, and internal force results are extracted. |
| 2 | Data Preparation | Preparing the extracted data in a data frame format. | Extracting member stiffness from the global stiffness matrix using connectivity and degree-of-freedom information. Nodal coordinates, connectivity, geometry, deformation, and force for each member are retained. |
| 3 | Feature Tables and ML Model Training | Splitting into primary and secondary inputs; setting targets for each framework layer; training the preferred regression model sequentially along the layers and evaluating the results. | Around 80% of the data is used for primary input computation. Equations 3–6 are applied to compute the secondary inputs. Targets follow Figure 3. Appropriate evaluation metrics are applied. |
| 4 | Framework Deployment for Reanalysis Prediction | For a given set of cross-sections for members of the same structure, input data are provided and predictions are generated. | The primary input is updated with the new cross-sectional sizes. Secondary inputs for the first two layers are updated based on the current cross-sectional variations. Stiffness layer targets are predicted. Displacement layer targets are predicted using the stiffness layer outputs. The force layer secondary input is updated and the force targets are predicted. |
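The data-preparation step extracts each member's stiffness from the global stiffness matrix using connectivity and degree-of-freedom information. A minimal sketch of that idea follows, assuming a 2D frame with three DOFs per node (two translations and one rotation) and a hypothetical sequential DOF table; in practice the DOF table would come from the FE model. Because global entries at a shared node accumulate contributions from every member framing into it, the extracted submatrix carries context from neighbouring members, which matches the stated motivation for the member-level stiffness representation. The summary statistics use the strictly lower triangle (diagonal excluded) and the population standard deviation; both choices are assumptions, since the source does not specify them.

```python
from statistics import fmean, pstdev


def sequential_dof_map(n_nodes, dofs_per_node=3):
    """Hypothetical DOF table: node n owns DOFs 3n, 3n+1, 3n+2 (ux, uy, rz)."""
    return {n: [n * dofs_per_node + k for k in range(dofs_per_node)] for n in range(n_nodes)}


def member_dofs(dof_map, node_i, node_j):
    """Global DOF indices of a member (start node first), taken from its connectivity."""
    return dof_map[node_i] + dof_map[node_j]


def member_submatrix(K, dofs):
    """Rows and columns of the assembled global stiffness matrix K for one member."""
    return [[K[r][c] for c in dofs] for r in dofs]


def stiffness_summary(Km):
    """Mean and population standard deviation of the diagonal and strictly lower triangle."""
    n = len(Km)
    diag = [Km[i][i] for i in range(n)]
    lower = [Km[i][j] for i in range(n) for j in range(i)]
    return {
        "diag_mean": fmean(diag),
        "diag_std": pstdev(diag),
        "lower_mean": fmean(lower),
        "lower_std": pstdev(lower),
    }
```

For a member connecting nodes 1 and 2 of a three-node model, `member_dofs(sequential_dof_map(3), 1, 2)` selects global DOFs 3 to 8, and the resulting 6×6 submatrix is reduced to four scalar features per member.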

| Model | R² | | | | RMSE | | | |
|---|---|---|---|---|---|---|---|---|
| RF | 0.83 | 0.79 | 0.81 | 0.82 | 0.14 | 0.13 | 0.16 | 0.13 |
| GB | 0.80 | 0.74 | 0.75 | 0.77 | 0.16 | 0.14 | 0.18 | 0.15 |
| XGB | 0.79 | 0.75 | 0.75 | 0.78 | 0.16 | 0.14 | 0.18 | 0.15 |
| SVM | 0.83 | 0.78 | 0.80 | 0.81 | 0.14 | 0.13 | 0.16 | 0.14 |
| NN | 0.75 | 0.66 | 0.73 | 0.73 | 0.16 | 0.14 | 0.17 | 0.12 |

| Metric | Stiffness layer | | | | Displacement layer | | | | Force layer | | |
|---|---|---|---|---|---|---|---|---|---|---|---|
| R² | 0.83 | 0.79 | 0.81 | 0.82 | 0.98 | 0.92 | 0.86 | 0.90 | 0.99 | 0.98 | 0.91 |
| RMSE | 0.14 | 0.13 | 0.16 | 0.13 | 0.018 | 0.066 | 0.013 | 0.08 | 23.94 | 5.4 | 9.17 |
| MAE | 0.11 | 0.09 | 0.11 | 0.1 | 0.008 | 0.039 | 0.005 | 0.048 | 14.56 | 3.97 | 6.41 |
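The result tables report R², RMSE, and MAE per target. For reference, the standard definitions are sketched below for one target stored as a plain list; the function name is hypothetical. RMSE and MAE carry the units of whatever scale they are computed on, and the source does not state whether the stiffness- and displacement-layer metrics refer to transformed or original targets, so their magnitudes should be compared across layers with care. R², being normalised by the variance of the true values, is the scale-free measure among the three.

```python
import math


def regression_metrics(y_true, y_pred):
    """Coefficient of determination (R^2), RMSE and MAE for a single target."""
    n = len(y_true)
    residuals = [t - p for t, p in zip(y_true, y_pred)]
    ss_res = sum(r * r for r in residuals)              # residual sum of squares
    mean_true = sum(y_true) / n
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)  # total sum of squares
    return {
        "R2": 1.0 - ss_res / ss_tot,
        "RMSE": math.sqrt(ss_res / n),
        "MAE": sum(abs(r) for r in residuals) / n,
    }
```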

| Metric | Data | Beams only | | | Columns only | | | Beams and columns | | |
|---|---|---|---|---|---|---|---|---|---|---|
| RMSE | Test | 6.93 | 5.92 | 8.99 | 33.77 | 5.28 | 9.06 | 25.27 | 5.65 | 9.01 |
| R² | Test | 0.71 | 0.93 | 0.87 | 0.99 | 0.82 | 0.84 | 0.99 | 0.98 | 0.92 |
| RMSE | Unseen | 7.81 | 5.56 | 8.90 | 30.19 | 4.90 | 9.04 | 23.94 | 5.40 | 9.17 |
| R² | Unseen | 0.66 | 0.94 | 0.90 | 0.99 | 0.85 | 0.83 | 0.99 | 0.98 | 0.91 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).