Submitted: 27 March 2026

Posted: 31 March 2026


Abstract

Keywords:

1. Introduction

2. Related Work

2.1. Battery SOH Estimation and RUL Prediction

2.2. Personalization and Transfer Learning for Batteries

2.3. Concept Drift and Lightweight Adaptation

3. Method

3.1. Problem Setup

3.2. Backbone Model and Baseline Setting

3.3. Parameter-Efficient Online Personalization

3.4. Adaptive Temporal Windowing

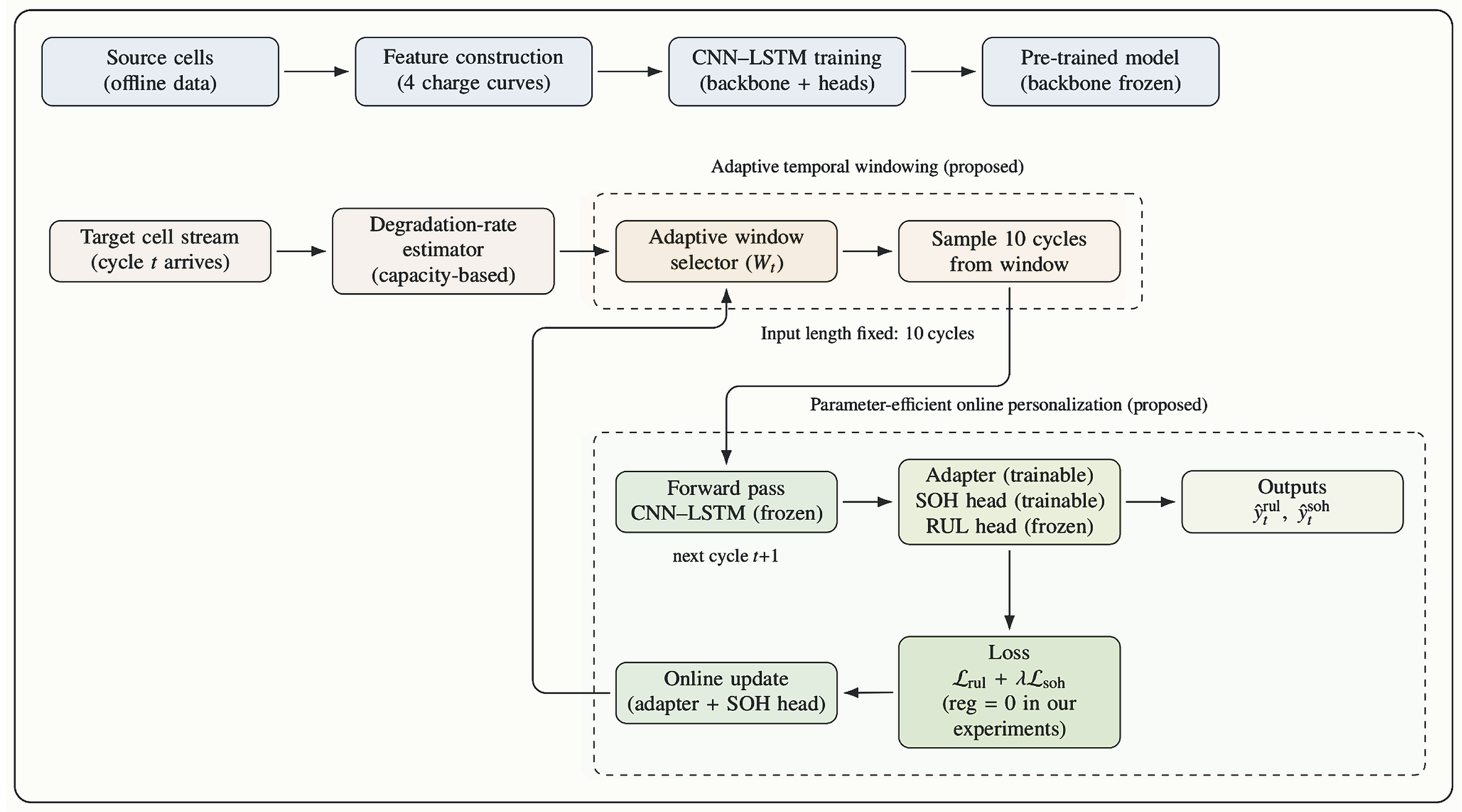
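The fixed-window baseline in Section 4.1 keeps a 30-cycle history and samples it at a fixed stride down to 10 cycles. Adaptive temporal windowing instead chooses among several candidate history spans, each with its own stride. The following is a minimal sketch only. The candidate spans, the hypothetical `window_error` callback, and the rule "pick the span whose sampled window scores lowest" are assumptions for illustration, not the selection rule defined in this section.

```python
from typing import Callable, List, Sequence, Tuple


def sample_window(history: Sequence[float], span: int, stride: int) -> List[float]:
    """Keep the newest `span` cycles and sample every `stride`-th one,
    always including the most recent cycle."""
    if span <= 0 or stride <= 0:
        raise ValueError("span and stride must be positive")
    recent = list(history[-span:])
    return recent[::-1][::stride][::-1]


def select_window(
    history: Sequence[float],
    candidates: Sequence[Tuple[int, int]],
    window_error: Callable[[List[float]], float],
) -> Tuple[int, int]:
    """Return the (span, stride) pair whose sampled window scores lowest
    under `window_error` (a hypothetical selection criterion)."""
    feasible = [(s, k) for s, k in candidates if s <= len(history)]
    if not feasible:
        raise ValueError("history is shorter than every candidate span")
    return min(feasible, key=lambda c: window_error(sample_window(history, c[0], c[1])))


def line_fit_sse(window: List[float]) -> float:
    """Hypothetical proxy error: sum of squared residuals of a straight-line fit."""
    n = len(window)
    x_mean = (n - 1) / 2
    y_mean = sum(window) / n
    sxx = sum((x - x_mean) ** 2 for x in range(n))
    sxy = sum((x - x_mean) * (y - y_mean) for x, y in enumerate(window))
    slope = sxy / sxx
    return sum((y - y_mean - slope * (x - x_mean)) ** 2 for x, y in enumerate(window))


# Toy capacity trace whose fade rate quadruples after cycle 40 (illustrative only).
history = [1.0 - 0.001 * i if i < 40 else 0.96 - 0.004 * (i - 40) for i in range(60)]

# Each hypothetical candidate keeps 10 sampled cycles, like the 30 -> 10 baseline.
candidates = [(60, 6), (30, 3), (20, 2)]
print(select_window(history, candidates, line_fit_sse))  # (20, 2): only post-knee cycles
```

In this toy trace only the 20-cycle window lies entirely after the change in fade rate, so the straight-line proxy prefers it. A real criterion would more likely score each span by prediction error on recently observed cycles.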

3.5. Overall Workflow of the Proposed Online Personalization Framework

4. Experiments and Results

4.1. Dataset and Setup

- Baseline: a fixed history span sampled at a fixed stride (30 cycles → 10 sampled cycles, i.e., every third cycle); online fine-tuning updates only the LSTM parameters while the CNN and prediction heads stay frozen (74,880 trainable parameters) [8].

- PEAT (full): adaptive temporal windowing over a set of candidate history spans, each with its own stride; parameter-efficient online adaptation updates only the adapter and SOH head while the backbone and RUL head stay frozen (2,193 trainable parameters).

- PEAT without adaptive windowing (A-only): a fixed history span and stride; online adaptation updates only the adapter and SOH head (2,193 trainable parameters).

- PEAT without parameter-efficient adaptation (B-only): adaptive temporal windowing with the same candidate spans as PEAT (full); online fine-tuning in the style of Ma et al. [8] updates only the LSTM parameters (74,880 trainable parameters).
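The compared settings differ mainly in which parameter groups the online optimizer may change. Fine-tuning in the style of Ma et al. [8] updates the LSTM (74,880 parameters), whereas PEAT updates only the adapter and SOH head (2,193 parameters). The sketch below illustrates this masking idea with plain Python lists. The group names, their sizes, and the single SGD step are hypothetical stand-ins, not the paper's architecture or optimizer.

```python
from typing import Dict, List, Set

Params = Dict[str, List[float]]


def count_trainable(params: Params, trainable: Set[str]) -> int:
    """Number of scalar parameters the online optimizer is allowed to change."""
    return sum(len(values) for name, values in params.items() if name in trainable)


def masked_sgd_step(params: Params, grads: Params, trainable: Set[str], lr: float) -> None:
    """One in-place SGD step that leaves every frozen parameter group untouched."""
    for name, values in params.items():
        if name not in trainable:
            continue  # frozen group: pretrained weights stay as they are
        values[:] = [w - lr * g for w, g in zip(values, grads[name])]


# Hypothetical toy model; group names and sizes are illustrative, not the paper's.
params: Params = {
    "cnn": [0.5] * 8,
    "lstm": [0.1] * 16,
    "adapter": [0.0] * 4,
    "soh_head": [0.2] * 2,
}
grads: Params = {name: [1.0] * len(values) for name, values in params.items()}

peat_groups = {"adapter", "soh_head"}  # PEAT-style: adapter + SOH head
finetune_groups = {"lstm"}             # Ma et al.-style: LSTM only

masked_sgd_step(params, grads, peat_groups, lr=0.01)
print(count_trainable(params, peat_groups), count_trainable(params, finetune_groups))  # 6 16
print(params["lstm"][0], params["adapter"][0])  # 0.1 -0.01
```

In a deep-learning framework the same effect is usually obtained by disabling gradients on the frozen modules and passing only the trainable parameters to the optimizer.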

4.2. Metrics
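The result tables report RMSE, MAPE, and a goodness-of-fit score for RUL and SOH. A minimal sketch of the standard definitions follows, assuming the score is the coefficient of determination R² and that every target value is non-zero (required by MAPE). The input values are hypothetical.

```python
import math
from typing import Sequence


def _check(y_true: Sequence[float], y_pred: Sequence[float]) -> int:
    if len(y_true) != len(y_pred) or not y_true:
        raise ValueError("inputs must be non-empty and of equal length")
    return len(y_true)


def rmse(y_true: Sequence[float], y_pred: Sequence[float]) -> float:
    """Root mean squared error, in the units of the target."""
    n = _check(y_true, y_pred)
    return math.sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / n)


def mape(y_true: Sequence[float], y_pred: Sequence[float]) -> float:
    """Mean absolute percentage error in %; every target must be non-zero."""
    n = _check(y_true, y_pred)
    return 100.0 * sum(abs((t - p) / t) for t, p in zip(y_true, y_pred)) / n


def r2(y_true: Sequence[float], y_pred: Sequence[float]) -> float:
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    n = _check(y_true, y_pred)
    mean_true = sum(y_true) / n
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)
    return 1.0 - ss_res / ss_tot


# Hypothetical RUL targets and predictions, for illustration only.
y_true = [100.0, 200.0, 300.0, 400.0]
y_pred = [110.0, 190.0, 300.0, 420.0]
print(round(rmse(y_true, y_pred), 2), round(r2(y_true, y_pred), 3), round(mape(y_true, y_pred), 2))
# 12.25 0.988 5.0
```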

4.3. Experimental Results for RUL

4.4. Component Study and Complementary Roles

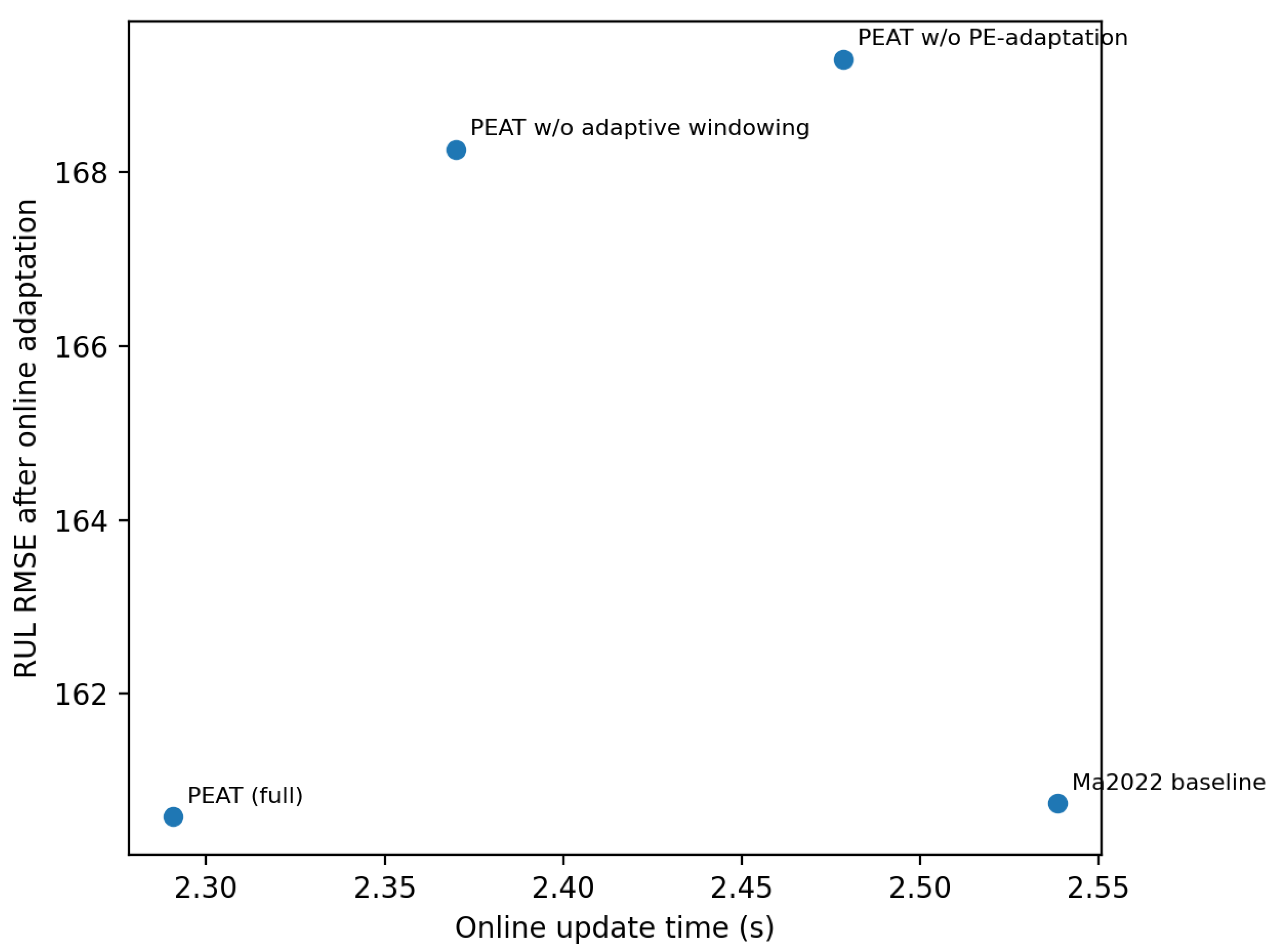
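The ablation results for PEAT without adaptive windowing, PEAT without parameter-efficient personalization, and PEAT (Full) can be compared with simple arithmetic. Relative to the Ma et al. (2022) baseline (RUL RMSE 186.00), the two single-component variants lower RMSE by 17.74 and 16.70, and the full model lowers it by 25.42. Adding the missing component on top of either variant lowers RMSE by a further 7.68 or 8.72. The snippet below recomputes these differences from the reported RMSE values.

```python
# RUL RMSE values copied from the ablation table (lower is better).
RMSE = {
    "baseline": 186.00,  # Ma et al. (2022) baseline [8]
    "a_only": 168.26,    # PEAT without adaptive windowing
    "b_only": 169.30,    # PEAT without parameter-efficient personalization
    "full": 160.58,      # PEAT (Full)
}


def reduction(reference: str, variant: str) -> float:
    """RMSE reduction of `variant` relative to `reference`, rounded to 2 decimals."""
    return round(RMSE[reference] - RMSE[variant], 2)


for variant in ("a_only", "b_only", "full"):
    print(f"{variant} vs baseline: {reduction('baseline', variant):.2f}")
# Gain from adding the missing component on top of each single-component variant.
print(f"adaptive windowing on top of A-only: {reduction('a_only', 'full'):.2f}")
print(f"parameter-efficient update on top of B-only: {reduction('b_only', 'full'):.2f}")
```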

4.5. Efficiency and Accuracy–Cost Trade-off

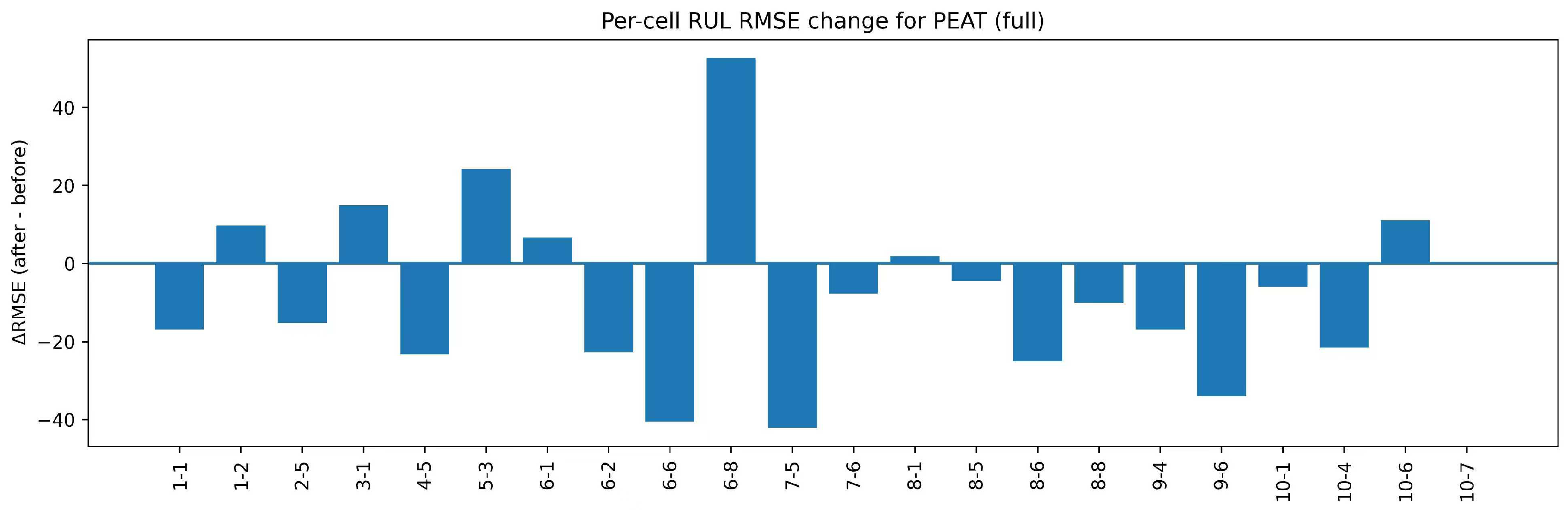

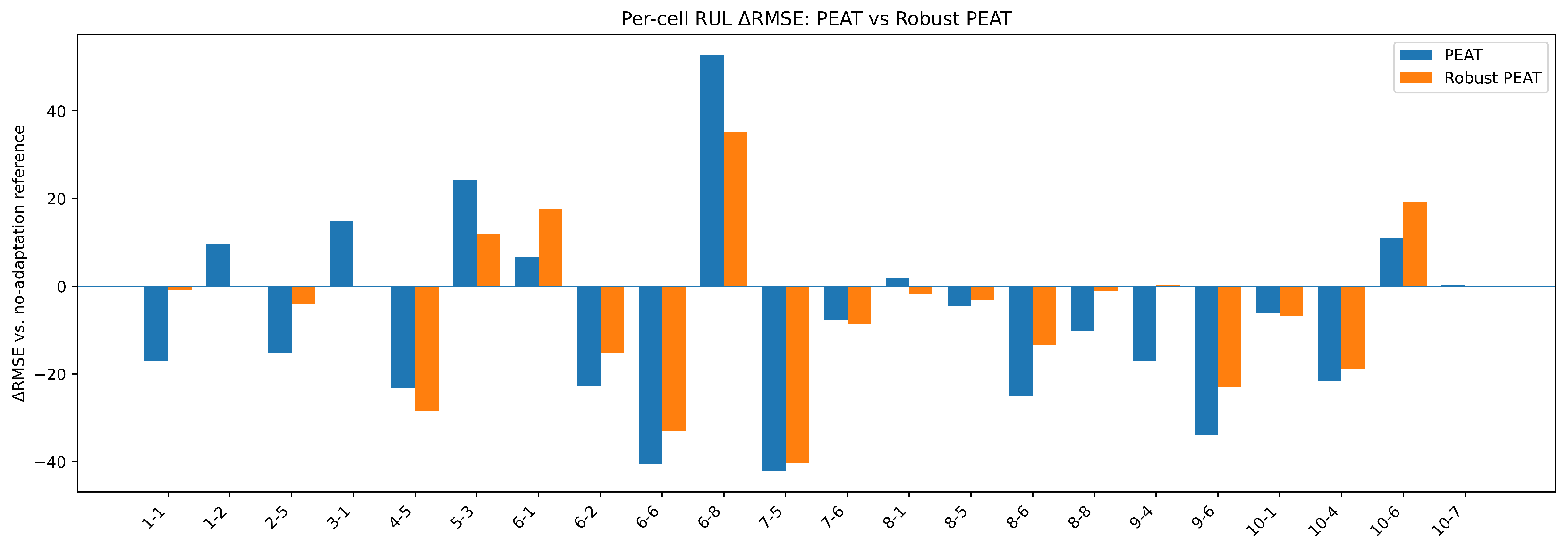
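The cost side of the trade-off follows directly from the trainable-parameter counts in Section 4.1. PEAT updates 2,193 parameters versus 74,880 for LSTM fine-tuning, about 2.9% as many (roughly 34× fewer), while RUL RMSE drops from 186.00 to 160.58, about 13.7% lower. A short check of this arithmetic:

```python
# Counts and RMSE values as reported in Section 4.1 and the RUL result tables.
peat_params, finetune_params = 2_193, 74_880
peat_rmse, baseline_rmse = 160.58, 186.00

param_fraction = peat_params / finetune_params    # share of weights PEAT updates online
param_ratio = finetune_params / peat_params       # how many times fewer weights
rmse_gain_pct = 100 * (baseline_rmse - peat_rmse) / baseline_rmse

print(f"PEAT updates {param_fraction:.1%} of the fine-tuned parameters ({param_ratio:.1f}x fewer)")
print(f"RUL RMSE is {rmse_gain_pct:.1f}% lower than the baseline")
```

Trainable-parameter count is only a proxy for online cost. Wall-clock time and memory also depend on the frozen backbone's forward pass, which both schemes still run.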

4.6. Per-Cell Error Change Under PEAT

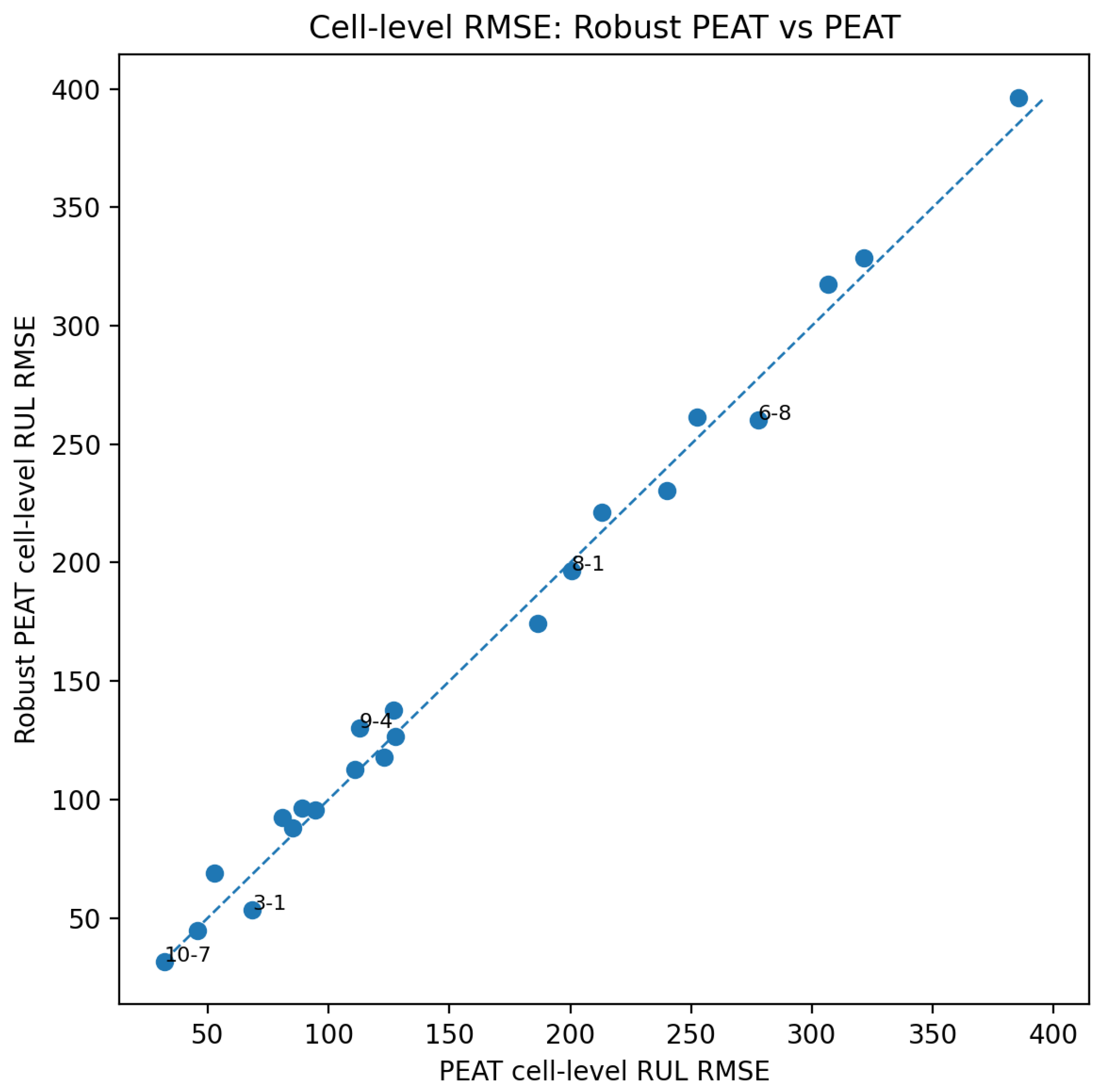

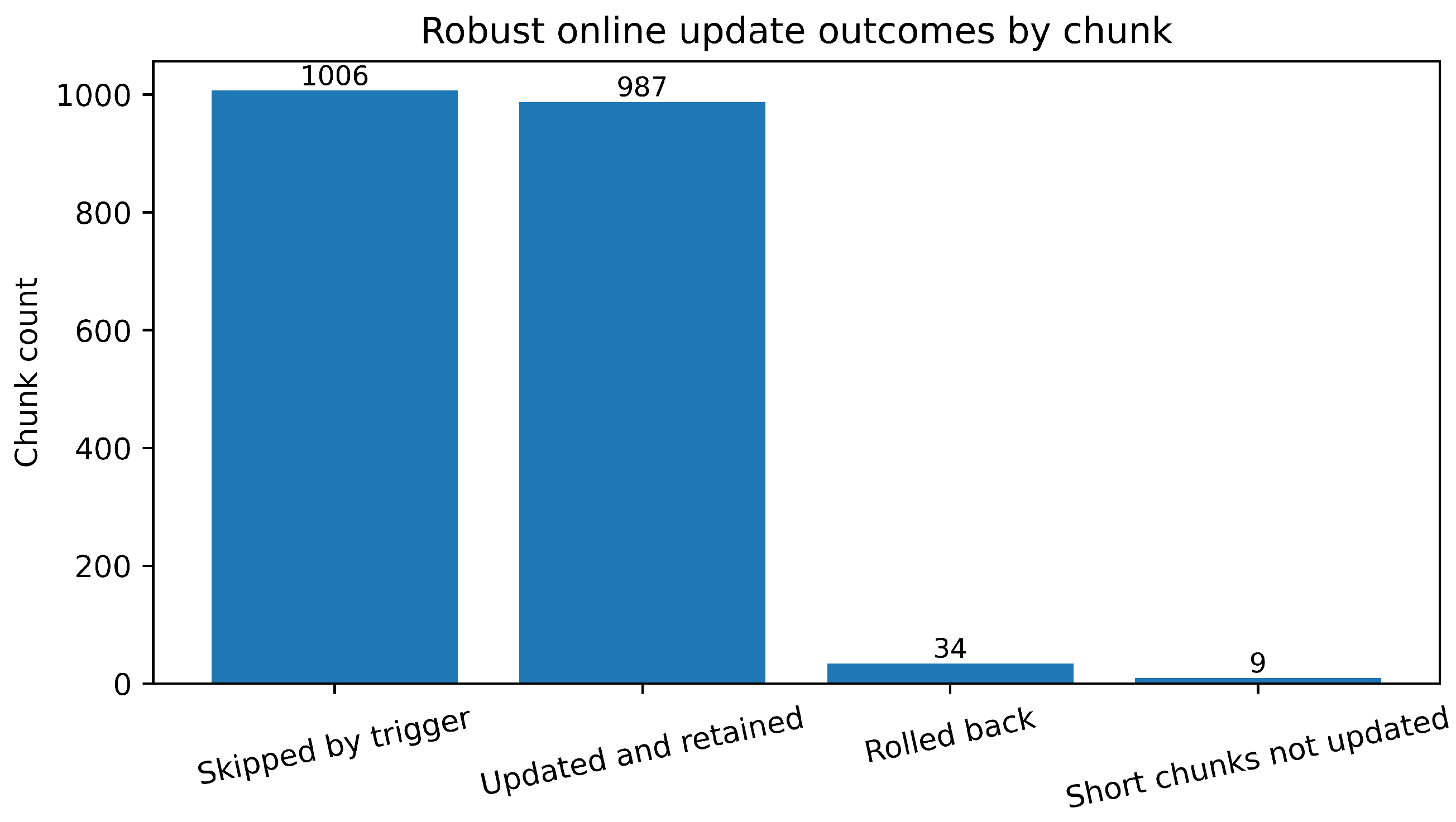
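The per-cell columns of "(a) RUL and stability summary" compare each test cell's RMSE after adaptation with its no-adaptation reference. A minimal sketch of how such a summary could be computed follows. It assumes per-cell RMSE values are available, that a cell counts as improved when its RMSE drops and as degraded when it rises, and that the mean change is averaged over cells; these definitions and the input numbers are hypothetical.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Dict


@dataclass
class CellSummary:
    improved: int       # cells whose RMSE decreased
    degraded: int       # cells whose RMSE increased
    total: int
    mean_delta: float   # mean per-cell RMSE change (negative = better)
    worst_delta: float  # largest per-cell RMSE change (positive = worse)


def summarize_cells(before: Dict[str, float], after: Dict[str, float]) -> CellSummary:
    """Compare per-cell RMSE after adaptation with the no-adaptation reference."""
    if before.keys() != after.keys():
        raise ValueError("before and after must cover the same cells")
    deltas = [after[cell] - before[cell] for cell in before]
    return CellSummary(
        improved=sum(d < 0 for d in deltas),
        degraded=sum(d > 0 for d in deltas),
        total=len(deltas),
        mean_delta=mean(deltas),
        worst_delta=max(deltas),
    )


# Hypothetical per-cell RUL RMSE values for four cells (not the paper's data).
before = {"cell_1": 100.0, "cell_2": 150.0, "cell_3": 200.0, "cell_4": 120.0}
after = {"cell_1": 90.0, "cell_2": 160.0, "cell_3": 170.0, "cell_4": 120.0}
s = summarize_cells(before, after)
print(f"{s.improved}/{s.total} improved, {s.degraded}/{s.total} degraded, "
      f"mean {s.mean_delta:+.2f}, worst {s.worst_delta:+.2f}")
```

Under these definitions a cell with unchanged RMSE (`cell_4` here) is counted as neither improved nor degraded.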

4.7. Robustness Analysis of Conservative Online Updating

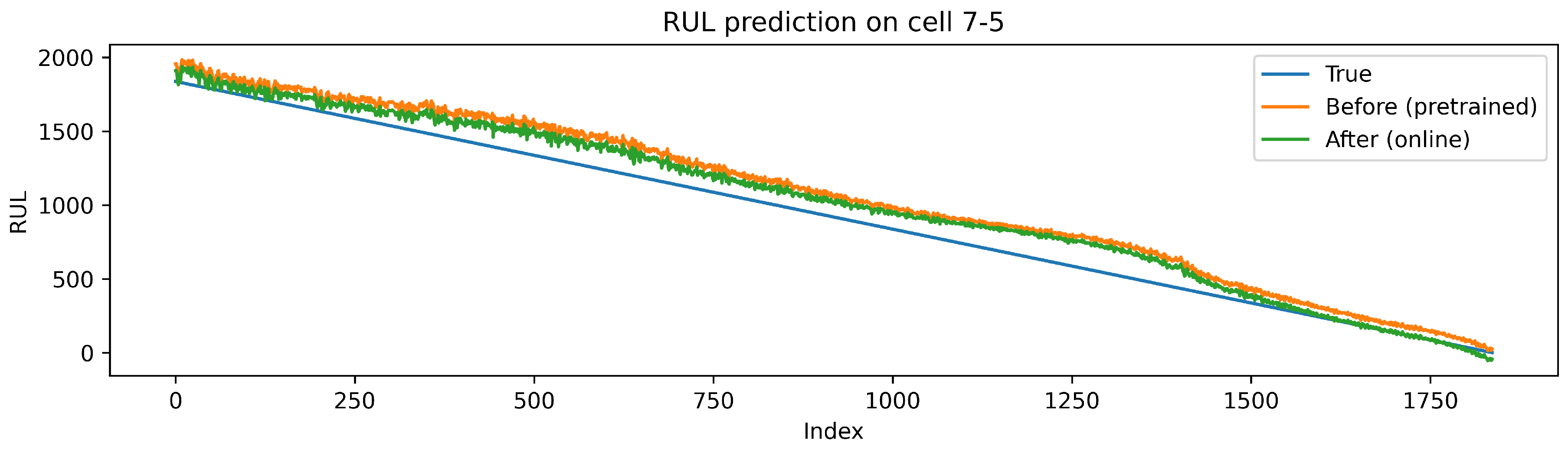

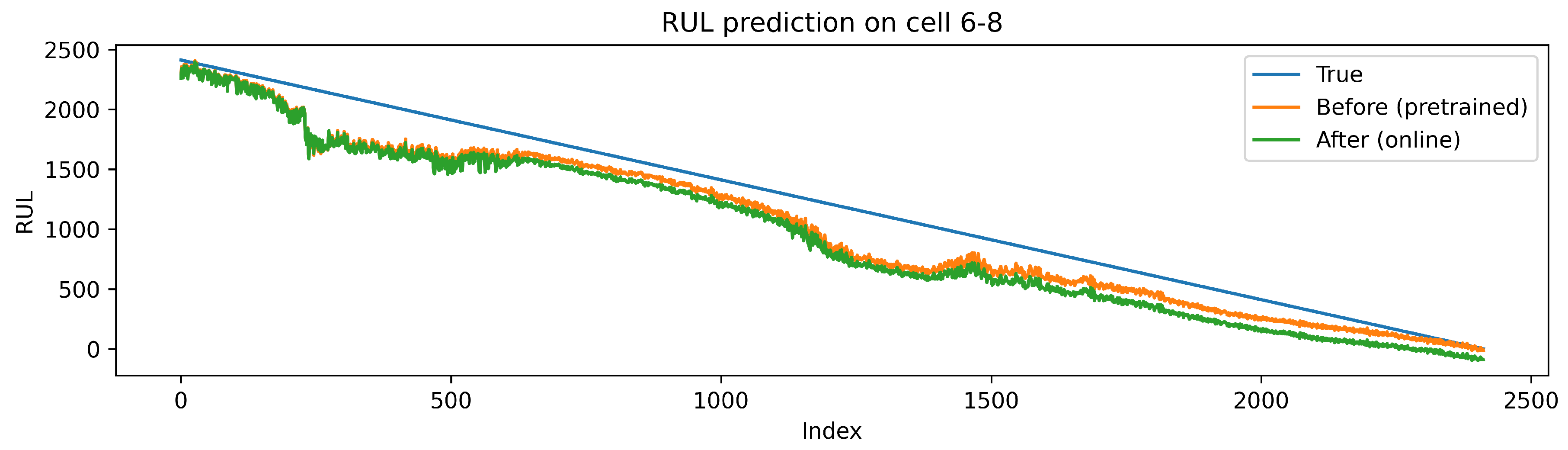
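One common way to make online updating conservative is accept-or-rollback gating. A proposed update is tried on a copy of the parameters and kept only if error on the most recent cycles does not rise by more than a tolerance. The sketch below illustrates that generic pattern under stated assumptions and is not presented as the Robust PEAT rule. The toy model, the `shift_w` proposal, the `mse` callback, and the tolerance are all hypothetical.

```python
import copy
from statistics import mean
from typing import Callable, Dict, List, Tuple

Params = Dict[str, List[float]]


def gated_update(
    params: Params,
    propose: Callable[[Params], Params],
    recent_error: Callable[[Params], float],
    tolerance: float = 0.0,
) -> Tuple[Params, bool]:
    """Try an update on a copy; keep it only if recent error does not rise
    by more than `tolerance`, otherwise roll back to `params`."""
    reference_error = recent_error(params)
    candidate = propose(copy.deepcopy(params))
    if recent_error(candidate) <= reference_error + tolerance:
        return candidate, True
    return params, False


# Toy one-weight model y = w * x on hypothetical recent cycles (true w = 2).
recent = [(1.0, 2.0), (2.0, 4.0), (3.0, 6.0)]


def mse(p: Params) -> float:
    return mean((p["w"][0] * x - y) ** 2 for x, y in recent)


def shift_w(delta: float) -> Callable[[Params], Params]:
    """Hypothetical proposal: move the weight by a fixed amount."""
    def propose(p: Params) -> Params:
        p["w"][0] += delta
        return p
    return propose


params: Params = {"w": [1.5]}
params, kept = gated_update(params, shift_w(0.25), mse)  # moves toward w = 2
print(kept, params["w"][0])   # True 1.75
params, kept = gated_update(params, shift_w(1.5), mse)   # overshoots to w = 3.25
print(kept, params["w"][0])   # False 1.75
```

Gating of this kind trades some average improvement for fewer large per-cell regressions, because an update that hurts the recent cycles is discarded instead of applied.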

4.8. Case Studies

4.9. SOH Results and Trade-off

4.10. Discussion

5. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

References

1. International Energy Agency. Batteries and Secure Energy Transitions. International Energy Agency: Paris, France, 2024.

2. Che, Y.; Hu, X.; Lin, X.; Guo, J.; Teodorescu, R. Health prognostics for lithium-ion batteries: mechanisms, methods, and prospects. Energy Environ. Sci. 2023, 16, 338–371.

3. Hu, X.; Xu, L.; Lin, X.; Pecht, M. Battery lifetime prognostics. Joule 2020, 4, 310–346.

4. Xie, Y.; Wang, S.; Zhang, G.; Takyi-Aninakwa, P.; Fernandez, C.; Blaabjerg, F. A review on data-driven whole-life state of health prediction for lithium-ion batteries: data preprocessing, aging characteristics, algorithms, and future challenges. J. Energy Chem. 2024, 97, 630–649.

5. Ge, M.-F.; Liu, Y.; Jiang, X.; Liu, J. A review on state of health estimations and remaining useful life prognostics of lithium-ion batteries. Measurement 2021, 174, 109057.

6. Devie, A.; Baure, G.; Dubarry, M. Intrinsic variability in the degradation of a batch of commercial 18650 lithium-ion cells. Energies 2018, 11, 1031.

7. Diao, W.; Kim, J.; Azarian, M.H.; Pecht, M. Degradation modes and mechanisms analysis of lithium-ion batteries with knee points. Electrochim. Acta 2022, 431, 141143.

8. Ma, G.; Xu, S.; Jiang, B.; Cheng, C.; Yang, X.; Shen, Y.; Yang, T.; Huang, Y.; Ding, H.; Yuan, Y. Real-time personalized health status prediction of lithium-ion batteries using deep transfer learning. Energy Environ. Sci. 2022, 15, 4083–4094.

9. Liu, K.; Peng, Q.; Che, Y.; Zheng, Y.; Li, K.; Teodorescu, R.; Widanage, D.; Barai, A. Transfer learning for battery smarter state estimation and ageing prognostics: recent progress, challenges, and prospects. Adv. Appl. Energy 2023, 9, 100117.

10. Che, Y.; Zheng, Y.; Onori, S.; Hu, X. Increasing generalization capability of battery health estimation using continual learning. Cell Rep. Phys. Sci. 2023, 4, 101743.

11. Kim, S.W.; Oh, K.-Y.; Lee, S. Novel informed deep learning-based prognostics framework for on-board health monitoring of lithium-ion batteries. Appl. Energy 2022, 315, 119011.

12. Qin, H.; Fan, X.; Fan, Y.; Wang, R.; Shang, Q.; Zhang, D. A computationally efficient approach for the state-of-health estimation of lithium-ion batteries. Energies 2023, 16, 5414.

13. Wu, W.; Chen, Z.; Liu, W.; Zhou, D.; Xia, T.; Pan, E. Battery health prognosis in data-deficient practical scenarios via reconstructed voltage-based machine learning. Cell Rep. Phys. Sci. 2025, 6, 102442.

14. Bifet, A.; Gavaldà, R. Learning from time-changing data with adaptive windowing. In Proceedings of the 2007 SIAM International Conference on Data Mining, Minneapolis, MN, USA, 26–28 April 2007; pp. 443–448.

15. Yang, B.; Qian, Y.; Li, Q.; Chen, Q.; Wu, J.; Luo, E.; Xie, R.; Zheng, R.; Yan, Y.; Su, S.; Wang, J. Critical summary and perspectives on state-of-health of lithium-ion battery. Renew. Sustain. Energy Rev. 2024, 190, 114077.

16. Kumar, R.; Das, K. Lithium battery prognostics and health management for electric vehicle application–a perspective review. Sustain. Energy Technol. Assess. 2024, 65, 103766.

17. dos Reis, G.; Strange, C.; Yadav, M.; Li, S. Lithium-ion battery data and where to find it. Energy and AI 2021, 5, 100081.

18. Mayemba, Q.; Mingant, R.; Li, A.; Ducret, G.; Venet, P. Aging datasets of commercial lithium-ion batteries: a review. J. Energy Storage 2024, 83, 110560.

19. Li, X.; Yu, D.; Vilsen, S.B.; Store, D.I. The development of machine learning-based remaining useful life prediction for lithium-ion batteries. J. Energy Chem. 2023, 82, 103–128.

20. Zhao, J.; Feng, X.; Pang, Q.; Wang, J.; Lian, Y.; Ouyang, M.; Burke, A.F. Battery prognostics and health management from a machine learning perspective. J. Power Sources 2023, 581, 233474.

21. Zhang, Y.; Li, Y.-F. Prognostics and health management of lithium-ion battery using deep learning methods: a review. Renew. Sustain. Energy Rev. 2022, 161, 112282.

22. Lyu, D.; Zhang, B.; Zio, E.; Xiang, J. Battery cumulative lifetime prognostics to bridge laboratory and real-life scenarios. Cell Rep. Phys. Sci. 2024, 5, 102164.

23. Shu, X.; Shen, J.; Li, G.; Zhang, Y.; Chen, Z.; Liu, Y. A flexible state-of-health prediction scheme for lithium-ion battery packs with long short-term memory network and transfer learning. IEEE Trans. Transp. Electrif. 2021, 7, 2238–2248.

24. Sahoo, S.; Hariharan, K.S.; Agarwal, S.; Swernath, S.B.; Bharti, R.; Han, S.; Lee, S. Transfer learning based generalized framework for state of health estimation of Li-ion cells. Sci. Rep. 2022, 12, 13173.

25. Yang, Y.; Xu, Y.; Nie, Y.; Li, J.; Liu, S.; Zhao, L.; Yu, Q.; Zhang, C. Deep transfer learning enables battery state of charge and state of health estimation. Energy 2024, 294, 130779.

26. Lin, T.; Chen, S.; Harris, S.J.; Zhao, T.; Liu, Y.; Wan, J. Investigating explainable transfer learning for battery lifetime prediction under state transitions. eScience 2024, 4, 100280.

27. Ji, S.; Zhang, Z.; Stein, H.S.; Zhu, J. Flexible health prognosis of battery nonlinear aging using temporal transfer learning. Appl. Energy 2025, 377, 124766.

28. Wu, X.; Chen, J.; Tang, H.; Xu, K.; Shao, M.; Long, Y. Robust online estimation of state of health for lithium-ion batteries based on capacities under dynamical operation conditions. Batteries 2024, 10, 219.

29. Gama, J.; Žliobaitė, I.; Bifet, A.; Pechenizkiy, M.; Bouchachia, A. A survey on concept drift adaptation. ACM Comput. Surv. 2014, 46, 44:1–44:37.

30. Ni, Y.; Li, X.; Zhang, H.; Wang, T.; Song, K.; Zhu, C.; Xu, J. Online identification of knee point in conventional and accelerated aging lithium-ion batteries using linear regression and Bayesian inference methods. Appl. Energy 2025, 388, 125646.

31. Houlsby, N.; Giurgiu, A.; Jastrzebski, S.; Morrone, B.; de Laroussilhe, Q.; Gesmundo, A.; Attariyan, M.; Gelly, S. Parameter-efficient transfer learning for NLP. In Proceedings of the 36th International Conference on Machine Learning, Long Beach, CA, USA, 9–15 June 2019; pp. 2790–2799.

RUL prediction results before and after online adaptation.

| Method | Before: RMSE ↓ | Before: R² ↑ | Before: MAPE (%) ↓ | After: RMSE ↓ | After: R² ↑ | After: MAPE (%) ↓ |
|---|---|---|---|---|---|---|
| Ma et al. (2022) baseline [8] | – | – | – | 186.00 | 0.804 | 8.72 |
| PEAT (Full) | 168.10 | 0.8451 | 8.22 | 160.58 | 0.8588 | 7.69 |

RUL ablation of the two PEAT components.

| Method | RMSE ↓ | R² ↑ | MAPE (%) ↓ |
|---|---|---|---|
| Ma et al. (2022) baseline [8] | 186.00 | 0.804 | 8.72 |
| PEAT without adaptive windowing | 168.26 | 0.8556 | 8.05 |
| PEAT without parameter-efficient personalization | 169.30 | 0.8472 | 8.07 |
| PEAT (Full) | 160.58 | 0.8588 | 7.69 |

(a) RUL and stability summary

| Method | RMSE ↓ | R² ↑ | Improved cells | Degraded cells | Mean ΔRMSE vs. no-adaptation reference | Worst-case ΔRMSE vs. no-adaptation reference |
|---|---|---|---|---|---|---|
| No-adaptation reference (Before) | 168.10 | 0.8451 | – | – | 0.00 | 0.00 |
| PEAT | 160.58 | 0.8588 | 14/22 | 8/22 | -7.51 | 52.69 |
| Robust PEAT | 162.91 | 0.8535 | 15/22 | 6/22 | -5.19 | 35.22 |

(b) SOH summary

| Method | SOH RMSE ↓ | SOH R² ↑ | Improved SOH cells |
|---|---|---|---|
| No-adaptation reference (Before) | 5.74 | 0.9953 | – |
| PEAT | 4.18 | 0.9972 | 16/22 |
| Robust PEAT | 3.58 | 0.9980 | 20/22 |

SOH estimation results before and after online adaptation.

| Method | Before: RMSE ↓ | Before: R² ↑ | Before: MAPE (%) ↓ | After: RMSE ↓ | After: R² ↑ | After: MAPE (%) ↓ |
|---|---|---|---|---|---|---|
| Ma et al. (2022) baseline [8] | – | – | – | 2.57 | 0.999 | 0.176 |
| PEAT (Full) | 5.74 | 0.9953 | 0.420 | 4.18 | 0.9972 | 0.294 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).