Submitted: 30 March 2026

Posted: 31 March 2026

Abstract

Keywords:

1. Introduction

2. Materials and Methods

2.1. Bat Sample Collection and Acoustic Recording

2.2. Feature Selection for Machine Learning Models

2.3. Machine Learning Model Selection

2.4. Machine Learning Model Training and Evaluation

2.5. Sound Wave Editing and Playback Experiment

3. Results

3.1. Comparison of Machine Learning Classification Results and Key Features in Acoustic Signal Analysis

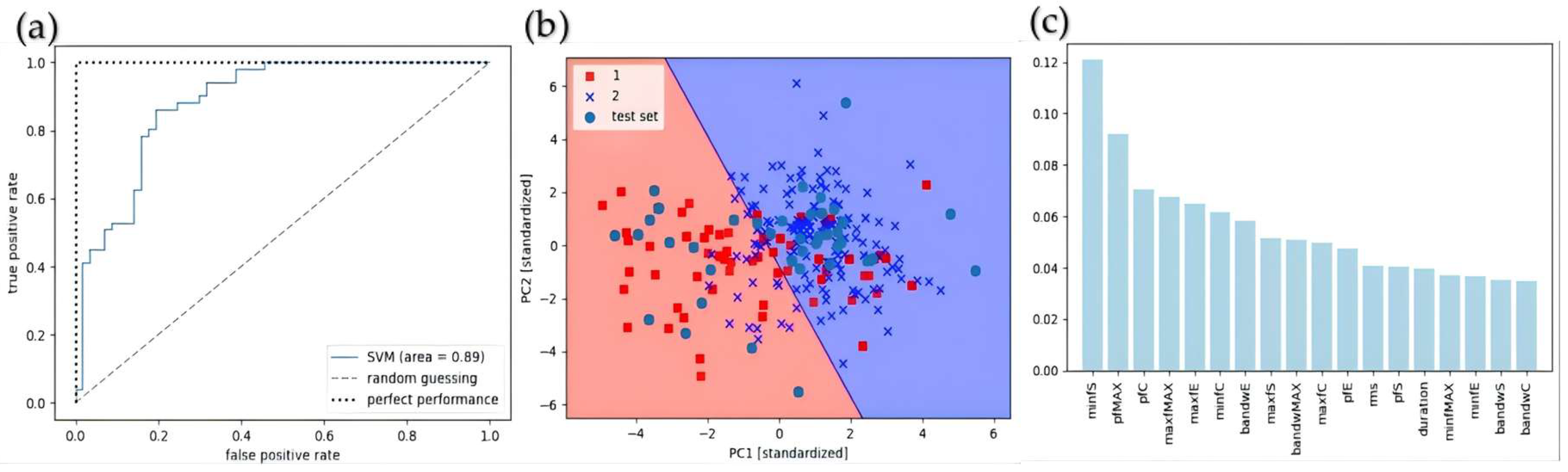

3.1.1. Comparison of Classification Results by Sex and Key Features

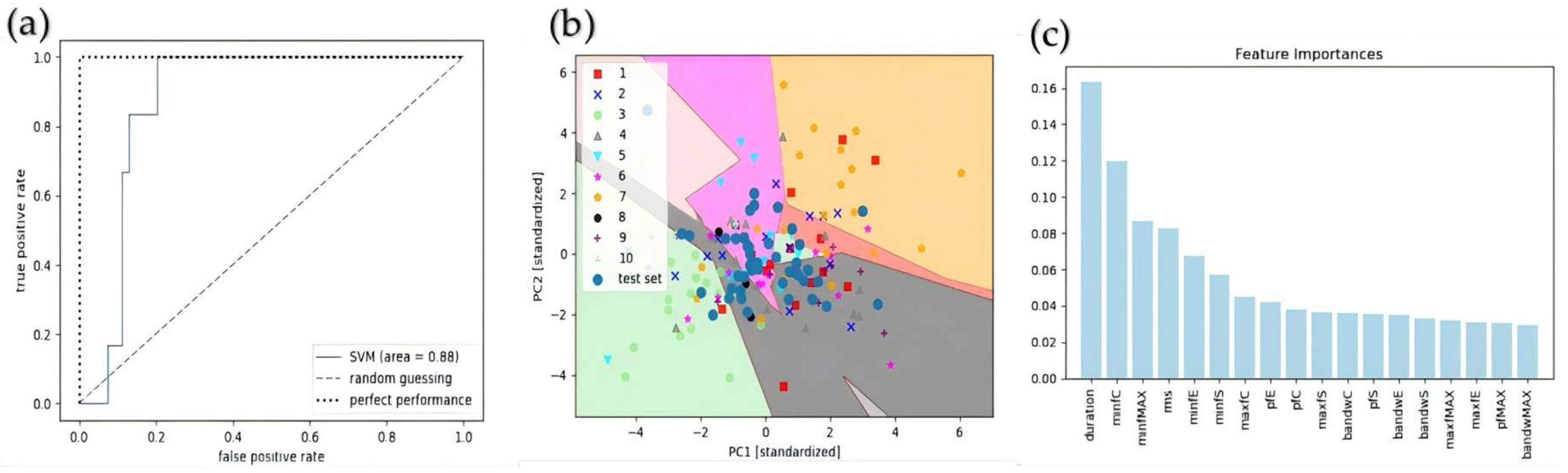

3.1.2. Comparison of Classification Results by Age and Key Features

3.1.3. Comparison of Classification Results by Individual and Key Features

3.2. Habituation Discrimination Experiment

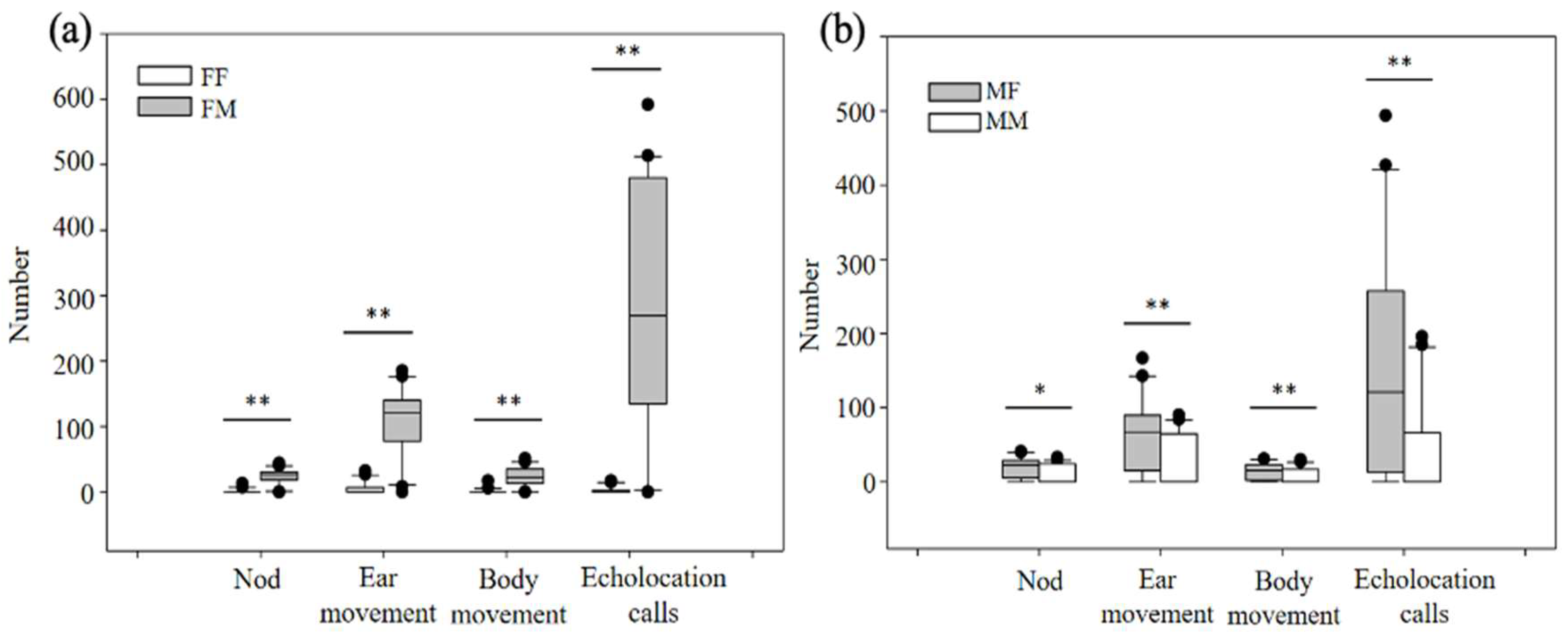

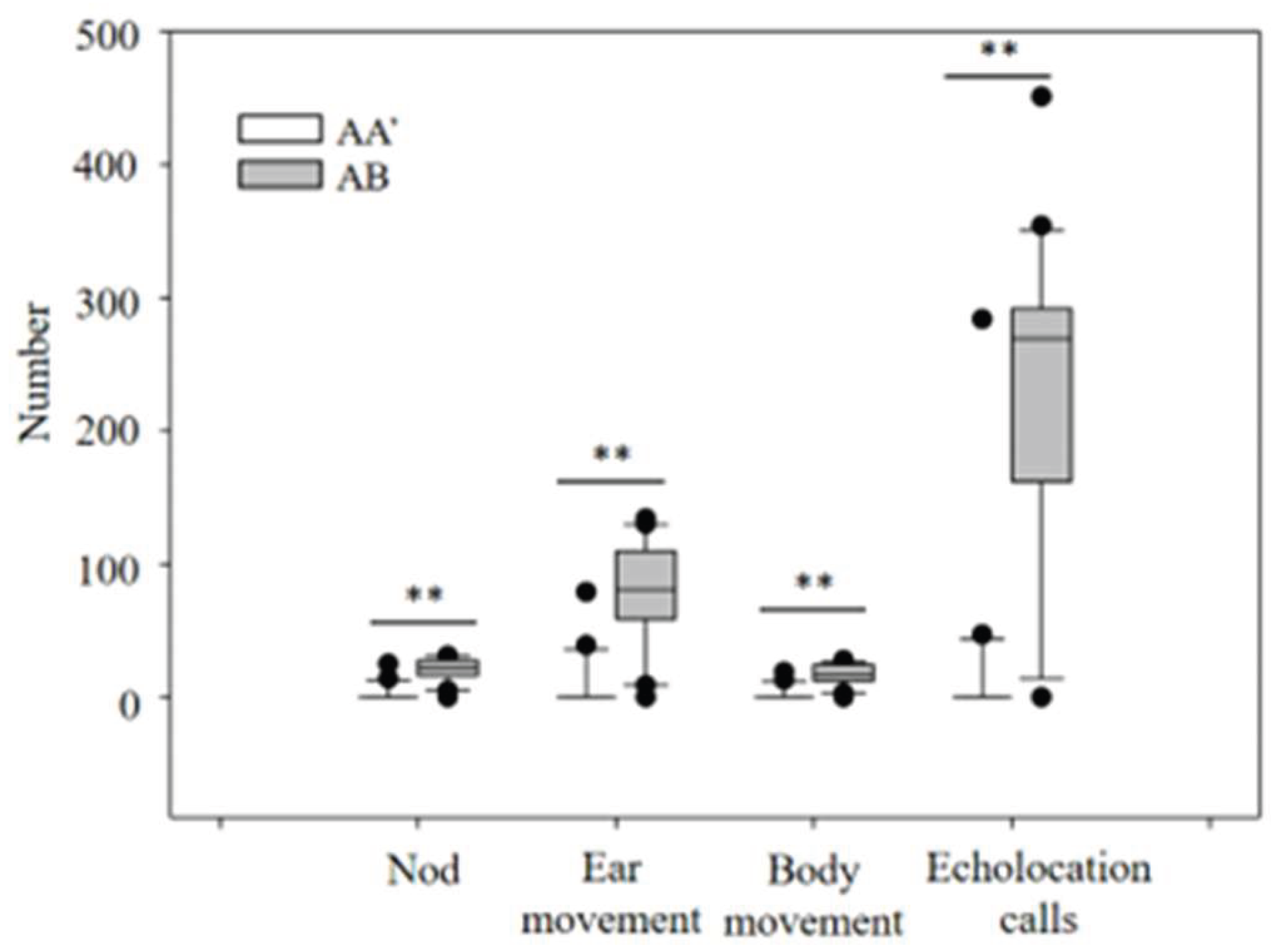

3.2.1. Differences in Behavioral Responses to Sex Recognition via Acoustic Signals

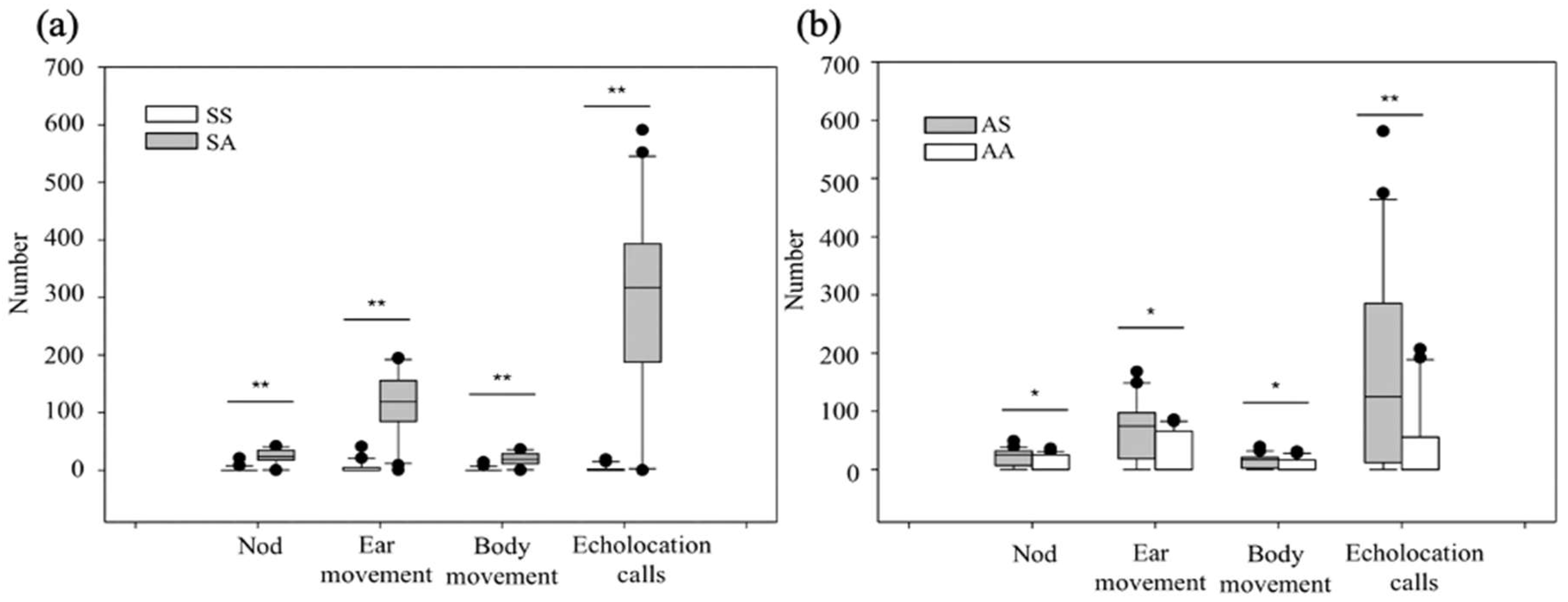

3.2.2. Differences in Behavioral Responses to Age Recognition via Acoustic Signals

3.2.3. Differences in Behavioral Responses to Individual Recognition via Acoustic Signals

4. Discussion

4.1. Classifying Bat Calls Using Machine Learning Methods

4.2. Recognition of Distress Calls

5. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Data Availability Statement

Conflicts of Interest


| Parameters | Female mean ± SD | Male mean ± SD | Minimum (female) | Minimum (male) | Maximum (female) | Maximum (male) |
|---|---|---|---|---|---|---|
| Duration | 0.11 ± 0.02 | 0.14 ± 0.05 | 0.05 | | 0.28 | 0.18 |
| RMS | -17.16 ± 2.42 | -17.76 ± 2.10 | -23.91 | | -13.90 | -12.09 |
| Peak frequency in start position | 34.35 ± 18.75 | 32.48 ± 19.39 | 11.20 | | 77.80 | 77.60 |
| Minimum frequency in start position | 14.15 ± 4.13 | 14.37 ± 4.99 | 7.50 | | 44.40 | 24.10 |
| Maximum frequency in start position | 82.24 ± 13.80 | 81.07 ± 15.86 | 18.70 | | 122.50 | 115.70 |
| Bandwidth in start position | 68.05 ± 14.12 | 66.65 ± 16.12 | 6.30 | | 110.10 | 103.20 |
| Peak frequency in end position | 37.89 ± 17.41 | 36.77 ± 15.85 | 12.20 | | 83.20 | 82.20 |
| Minimum frequency in end position | 20.23 ± 3.42 | 19.95 ± 3.19 | 11.40 | | 41.90 | 37.80 |
| Maximum frequency in end position | 81.52 ± 14.05 | 81.86 ± 12.95 | 16.80 | | 117.90 | 118.40 |
| Bandwidth in end position | 61.24 ± 13.79 | 61.85 ± 13.54 | 4.60 | | 96.90 | 96.40 |
| Peak frequency in center position | 36.71 ± 16.15 | 38.74 ± 16.56 | 20.70 | | 74.70 | 75.90 |
| Minimum frequency in center position | 20.11 ± 5.57 | 18.74 ± 4.16 | 6.10 | | 25.10 | 49.00 |
| Maximum frequency in center position | 82.96 ± 12.12 | 84.01 ± 14.66 | 52.00 | | 120.60 | 120.10 |
| Bandwidth in center position | 62.79 ± 14.23 | 65.43 ± 16.34 | 29.50 | | 111.30 | 102.20 |
| Peak frequency in maximum amplitude position | 42.18 ± 17.30 | 39.76 ± 15.17 | 19.00 | | 75.90 | 72.00 |
| Minimum frequency in maximum amplitude position | 23.31 ± 6.30 | 22.08 ± 3.70 | 11.40 | | 45.80 | 55.90 |
| Maximum frequency in maximum amplitude position | 78.35 ± 13.59 | 73.07 ± 12.34 | 47.80 | | 113.50 | 115.40 |
| Bandwidth in maximum amplitude position | 54.99 ± 15.27 | 51.47 ± 12.60 | 5.10 | | 91.70 | 95.90 |

| Parameters | Adult mean ± SD | Subadult mean ± SD | Minimum (adult) | Minimum (subadult) | Maximum (adult) | Maximum (subadult) |
|---|---|---|---|---|---|---|
| Duration | 0.11 ± 0.02 | 0.14 ± 0.05 | 0.05 | | 0.28 | 0.18 |
| RMS | -17.16 ± 2.42 | -17.76 ± 2.10 | -23.91 | | -13.90 | -12.09 |
| Peak frequency in start position | 34.35 ± 18.75 | 32.48 ± 19.39 | 11.20 | | 77.80 | 77.60 |
| Minimum frequency in start position | 14.15 ± 4.13 | 14.37 ± 4.99 | 7.50 | | 44.40 | 24.10 |
| Maximum frequency in start position | 82.24 ± 13.80 | 81.07 ± 15.86 | 18.70 | | 122.50 | 115.70 |
| Bandwidth in start position | 68.05 ± 14.12 | 66.65 ± 16.12 | 6.30 | | 110.10 | 103.20 |
| Peak frequency in end position | 37.89 ± 17.41 | 36.77 ± 15.85 | 12.20 | | 83.20 | 82.20 |
| Minimum frequency in end position | 20.23 ± 3.42 | 19.95 ± 3.19 | 11.40 | | 41.90 | 37.80 |
| Maximum frequency in end position | 81.52 ± 14.05 | 81.86 ± 12.95 | 16.80 | | 117.90 | 118.40 |
| Bandwidth in end position | 61.24 ± 13.79 | 61.85 ± 13.54 | 4.60 | | 96.90 | 96.40 |
| Peak frequency in center position | 36.71 ± 16.15 | 38.74 ± 16.56 | 20.70 | | 74.70 | 75.90 |
| Minimum frequency in center position | 20.11 ± 5.57 | 18.74 ± 4.16 | 6.10 | | 25.10 | 49.00 |
| Maximum frequency in center position | 82.96 ± 12.12 | 84.01 ± 14.66 | 52.00 | | 120.60 | 120.10 |
| Bandwidth in center position | 62.79 ± 14.23 | 65.43 ± 16.34 | 29.50 | | 111.30 | 102.20 |
| Peak frequency in maximum amplitude position | 42.18 ± 17.30 | 39.76 ± 15.17 | 19.00 | | 75.90 | 72.00 |
| Minimum frequency in maximum amplitude position | 23.31 ± 6.30 | 22.08 ± 3.70 | 11.40 | | 45.80 | 55.90 |
| Maximum frequency in maximum amplitude position | 78.35 ± 13.59 | 73.07 ± 12.34 | 47.80 | | 113.50 | 115.40 |
| Bandwidth in maximum amplitude position | 54.99 ± 15.27 | 51.47 ± 12.60 | 5.10 | | 91.70 | 95.90 |

| Parameters | Minimum | Maximum | Mean | SD |
|---|---|---|---|---|
| Duration | 0.05 | 0.28 | 0.13 | 0.04 |
| RMS | -23.94 | -12.09 | -17.46 | 2.28 |
| Peak frequency in start position | 10.20 | 77.80 | 33.41 | 19.05 |
| Minimum frequency in start position | 4.80 | 44.40 | 14.26 | 4.57 |
| Maximum frequency in start position | 16.60 | 122.50 | 81.66 | 14.85 |
| Bandwidth in start position | 6.30 | 110.10 | 67.35 | 15.14 |
| Peak frequency in end position | 12.20 | 83.20 | 37.33 | 16.62 |
| Minimum frequency in end position | 11.20 | 41.90 | 20.09 | 3.30 |
| Maximum frequency in end position | 16.80 | 118.40 | 81.69 | 13.48 |
| Bandwidth in end position | 4.60 | 96.90 | 61.54 | 13.64 |
| Peak frequency in center position | 19.20 | 75.90 | 37.73 | 16.35 |
| Minimum frequency in center position | 6.10 | 49.00 | 19.42 | 4.95 |
| Maximum frequency in center position | 46.60 | 120.60 | 83.48 | 13.43 |
| Bandwidth in center position | 26.80 | 111.30 | 64.11 | 15.34 |
| Peak frequency in maximum amplitude position | 15.10 | 75.90 | 40.97 | 16.28 |
| Minimum frequency in maximum amplitude position | 9.50 | 55.90 | 22.69 | 5.19 |
| Maximum frequency in maximum amplitude position | 45.60 | 115.40 | 75.71 | 13.22 |
| Bandwidth in maximum amplitude position | 5.10 | 95.90 | 53.23 | 14.08 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).