Submitted: 19 March 2026

Posted: 20 March 2026

Abstract

Keywords:

1. Introduction

1.1. Literature review

1.1.1. Adoption and Use of Conversational AI Among Adolescents

1.1.2. Conversational AI as Emotional Support and Coping Mechanism

1.1.3. AI and Adolescent Mental Health

1.1.4. Problematic and Compensatory Use of AI

1.2. Current study

2. Methods

2.1. Participants and procedures

2.2. Measures

- Home subscale: Assesses the child’s perceived value and support within the family environment. In the current study, this subscale demonstrated high reliability (α = 0.85).

- School subscale: Measures academic self-competence and perceived worth within the educational context. Reliability in the current sample was α = 0.71.

- Peer subscale: Evaluates social acceptance and value among age-mates. Reliability in the current sample was α = 0.63.
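The α coefficients reported for the three subscales are Cronbach's alpha values. As a minimal sketch of how such a coefficient is obtained from item-level responses, the function below applies the standard formula to a respondents-by-items matrix. The function name `cronbach_alpha` and the toy response matrix are illustrative assumptions, not the study's data or analysis code.

```python
import numpy as np


def cronbach_alpha(items) -> float:
    """Cronbach's alpha for a (respondents x items) score matrix."""
    items = np.asarray(items, dtype=float)
    k = items.shape[1]                          # number of items in the subscale
    item_vars = items.var(axis=0, ddof=1)       # sample variance of each item
    total_var = items.sum(axis=1).var(ddof=1)   # sample variance of the sum score
    return (k / (k - 1)) * (1.0 - item_vars.sum() / total_var)


# Hypothetical toy responses (5 respondents x 3 items), NOT study data.
toy = np.array([
    [1, 2, 2],
    [2, 2, 3],
    [3, 3, 3],
    [4, 4, 5],
    [5, 4, 5],
])
alpha = cronbach_alpha(toy)
print(f"alpha = {alpha:.3f}")
```

Alpha rises with the number of items and with their average inter-correlation, so coefficients from short subscales are usually interpreted with some caution.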

2.3. Statistical Analysis

3. Results

3.1. Sample

3.2. Model fit and profile selection

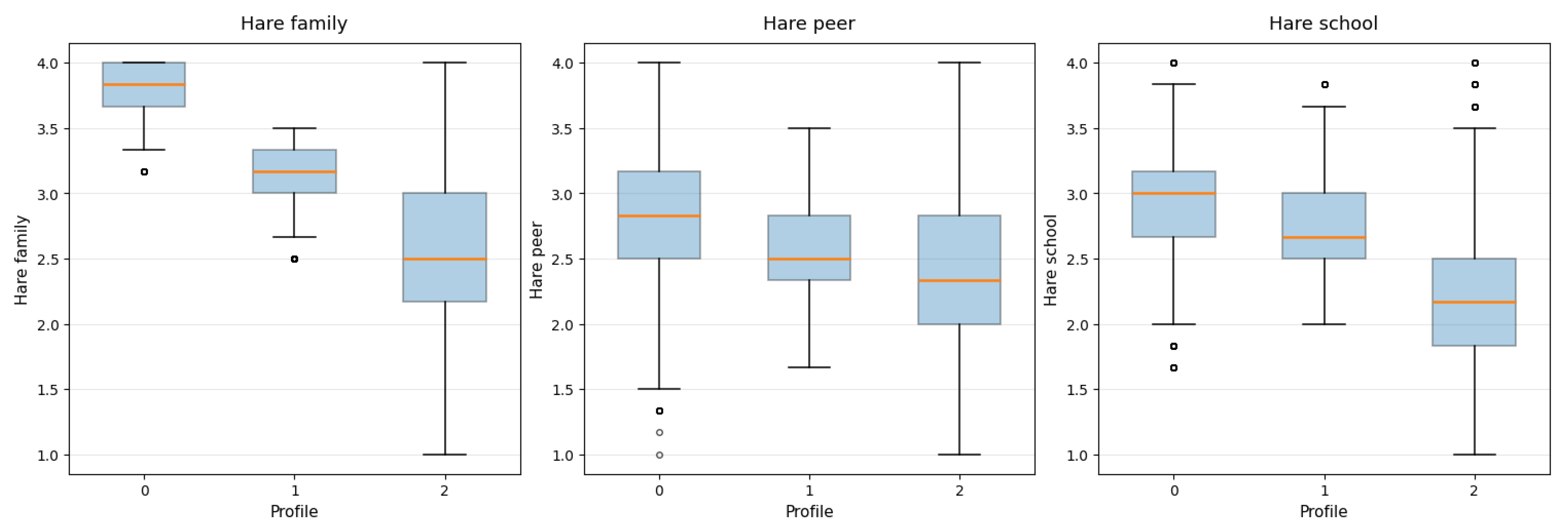

3.3. Profile characteristics

| Profile | Peer | Home | School | PHQ-4 Total |
| --- | --- | --- | --- | --- |
| Profile 0 | 2.83 | 3.83 | 3.00 | 3.0 |
| Profile 1 | 2.50 | 3.17 | 2.67 | 4.0 |
| Profile 2 | 2.33 | 2.50 | 2.17 | 6.0 |
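To help read the PHQ-4 column, the sketch below maps each profile's total onto the severity bands conventionally used for the PHQ-4 (four items scored 0 to 3, total 0 to 12; 0–2 normal, 3–5 mild, 6–8 moderate, 9–12 severe). This section does not state whether the authors applied these bands, nor whether the tabled values are means or medians, so the classification is an illustrative assumption, and the helper name `phq4_category` is hypothetical.

```python
def phq4_category(total: float) -> str:
    """Map a PHQ-4 total (0-12) to the conventional severity band."""
    if not 0 <= total <= 12:
        raise ValueError("PHQ-4 totals range from 0 to 12")
    if total < 3:
        return "normal"
    if total < 6:
        return "mild"
    if total < 9:
        return "moderate"
    return "severe"


# PHQ-4 totals copied from the profile table above.
profile_totals = {"Profile 0": 3.0, "Profile 1": 4.0, "Profile 2": 6.0}
categories = {name: phq4_category(t) for name, t in profile_totals.items()}

for name, band in categories.items():
    print(f"{name}: PHQ-4 = {profile_totals[name]} ({band})")
```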

3.4. Social substitution

3.5. Emotional Regulation and Self-Disclosure

3.6. Practising and learning in intimacy

3.7. Ease of communication and accessibility

4. Discussion

5. Limitations

| Model | BIC | AIC | Entropy |
| --- | --- | --- | --- |
| 1 profile | 364209.015 | 364157.034 | --- |
| 2 profiles | 351975.306 | 351862.679 | 0.509 |
| 3 profiles | 347664.464 | 347491.191 | 0.514 |
| 4 profiles | 347456.787 | 347222.869 | 0.491 |
| 5 profiles | 346933.671 | 346639.107 | 0.475 |
| 6 profiles | 345326.025 | 344970.816 | 0.474 |
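The fit indices in the table above can be compared with a few lines of arithmetic. The sketch below computes how much BIC falls with each added profile and which solution has the highest entropy, two heuristics commonly used in latent profile analysis. It also shows the usual normalized (relative) entropy formula for a matrix of posterior class probabilities. Whether the authors used exactly these criteria is not stated in this section, and the helper name `relative_entropy` is a hypothetical one introduced for illustration.

```python
import math

# BIC and entropy values copied from the model-fit table above.
bic = {1: 364209.015, 2: 351975.306, 3: 347664.464,
       4: 347456.787, 5: 346933.671, 6: 345326.025}
entropy = {2: 0.509, 3: 0.514, 4: 0.491, 5: 0.475, 6: 0.474}

# Drop in BIC when moving from k-1 to k profiles (larger = bigger improvement).
bic_drop = {k: round(bic[k - 1] - bic[k], 3) for k in range(2, 7)}

# Number of profiles with the highest reported entropy.
best_entropy_k = max(entropy, key=entropy.get)


def relative_entropy(posteriors) -> float:
    """Normalized entropy: 1 = perfectly separated profiles, 0 = no separation.

    `posteriors` is a list of rows, one per respondent, each holding that
    respondent's posterior probability of belonging to each profile.
    """
    n, k = len(posteriors), len(posteriors[0])
    total = -sum(p * math.log(p) for row in posteriors for p in row if p > 0)
    return 1.0 - total / (n * math.log(k))


for k, drop in bic_drop.items():
    print(f"{k - 1} -> {k} profiles: BIC drop = {drop:.3f}")
print("highest entropy at k =", best_entropy_k)
```

On the tabled values, the BIC improvement shrinks sharply after three profiles and entropy peaks at the three-profile solution, although all entropy values are modest.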

| Item | Profile 0 | Profile 1 | Profile 2 |
| --- | --- | --- | --- |
| Talked to AI as a friend | 25.1% | 29.7% | 42.8% |
| AI can replace friends | 10.7% | 13.9% | 22.6% |
| AI can replace partner | 6.4% | 8.0% | 12.3% |
| AI understands me better | 7.6% | 11.2% | 20.9% |
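The contrast between profiles in the table above is easier to see as percentage-point gaps and ratios. The sketch below computes both for Profile 2 relative to Profile 0, using only the tabled rates. These are descriptive contrasts, not significance tests, and the variable names are illustrative.

```python
# Endorsement rates (%) copied from the table above:
# (Profile 0, Profile 1, Profile 2).
rates = {
    "Talked to AI as a friend": (25.1, 29.7, 42.8),
    "AI can replace friends": (10.7, 13.9, 22.6),
    "AI can replace partner": (6.4, 8.0, 12.3),
    "AI understands me better": (7.6, 11.2, 20.9),
}

# Percentage-point gap and ratio of Profile 2 to Profile 0 for each item.
gap = {item: round(p2 - p0, 1) for item, (p0, _, p2) in rates.items()}
ratio = {item: round(p2 / p0, 2) for item, (p0, _, p2) in rates.items()}

for item in rates:
    print(f"{item}: +{gap[item]} points, x{ratio[item]} vs Profile 0")
```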

| Item | Profile 0 | Profile 1 | Profile 2 |
| --- | --- | --- | --- |
| Told AI something I was ashamed to tell others | 8% | 11% | 20% |
| Easier to confide in AI than a real person | 7% | 11% | 20% |
| AI helps when I feel lonely or sad | 6% | 9% | 17% |
| AI understands me better than people | 5% | 8% | 16% |

| Item | Profile 0 | Profile 1 | Profile 2 |
| --- | --- | --- | --- |
| Talked about sexuality | 5% | 7% | 11% |
| Talked about relationships and feelings | 11% | 14% | 22% |
| Practiced talking to a partner | 16% | 19% | 25% |
| Prefer asking AI about relationships/sex | 27% | 31% | 39% |

| Item | Profile 0 | Profile 1 | Profile 2 |
| --- | --- | --- | --- |
| Writing with AI is easier than with people | 19% | 23% | 32% |
| I like talking to AI; it responds the way I prefer | 10% | 10% | 15% |
| I can tell AI anything | 16% | 21% | 27% |
| I feel relaxed and safe with AI | 13% | 15% | 24% |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).