Submitted: 15 March 2026

Posted: 17 March 2026


Abstract

Keywords:

1. Introduction

1. A deterministic risk routing mechanism that classifies every user input before any generative response is produced, ensuring that crisis scenarios trigger hard-coded safety pathways rather than stochastic LLM output.
2. A retrieval-grounded response generation pipeline that anchors each reply in evidence drawn from indexed clinical practice guidelines.
3. A structured intake and dialogue management module that enforces therapeutic scaffolding (empathic reflection, contextual validation, and guided questioning) as explicit stages rather than emergent behaviors.
4. A cryptographically verifiable evaluation pipeline in which every stage, from dataset hashing through automated judging to statistical aggregation, produces signed manifests that allow independent verification of results.
5. A large-scale bilingual evaluation spanning 1,895 scenarios in English and Chinese, with ablation experiments that isolate the contribution of each architectural component.

2. Related Work

3. System Architecture

3.1. Risk Routing Module
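The first contribution listed in Section 1 classifies every input before any generation happens. The sketch below illustrates that control flow with a rule-based classifier. The regular expressions, the `RiskLevel` categories, and the crisis message are hypothetical placeholders, not the system's actual lexicon or wording, and the described module may use a different classifier. The property being illustrated is that a crisis match returns a fixed string and the language model is never called.

```python
import re
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class RiskLevel(Enum):
    CRISIS = "crisis"
    ROUTINE = "routine"


# Hypothetical crisis patterns, for illustration only. A deployed system would
# rely on a clinically reviewed lexicon or a validated classifier.
CRISIS_PATTERNS = [
    re.compile(r"\bsuicid(?:e|al)\b", re.IGNORECASE),
    re.compile(r"\b(?:kill|hurt|harm)\s+myself\b", re.IGNORECASE),
    re.compile(r"\bend\s+my\s+life\b", re.IGNORECASE),
    re.compile("自杀"),  # Chinese: "suicide"
]

# Hypothetical hard-coded reply; it is returned verbatim, never generated.
CRISIS_RESPONSE = (
    "I'm concerned about your safety. Please contact your local emergency "
    "number or a crisis hotline now."
)


@dataclass(frozen=True)
class RoutingDecision:
    level: RiskLevel
    fixed_response: Optional[str]  # set only when a hard-coded pathway fires


def route(user_input: str) -> RoutingDecision:
    """Classify an input before any call to the language model."""
    if any(pattern.search(user_input) for pattern in CRISIS_PATTERNS):
        # Crisis inputs short-circuit the pipeline with a fixed response.
        return RoutingDecision(RiskLevel.CRISIS, CRISIS_RESPONSE)
    # Everything else continues to retrieval and generation.
    return RoutingDecision(RiskLevel.ROUTINE, None)
```

Note that `route("I'm not suicidal, just exhausted")` is also classified as `CRISIS`, which shows how surface-pattern matching can produce crisis escalation false positives of the kind analyzed in Section 9.2.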

3.2. Retrieval-Grounded Knowledge
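Contribution 2 anchors each reply in indexed guideline excerpts, and Appendix B reports roughly three evidence chunks per response. A minimal sketch of a retrieve-then-prompt flow follows, assuming a simple token-overlap ranker in place of whatever retriever the system actually uses. The chunk texts, the `k = 3` default, the prompt wording, and the choice to return `None` when nothing is retrieved are all assumptions made for illustration.

```python
import re
from collections import Counter
from typing import List, Optional


def tokenize(text: str) -> List[str]:
    # English-only tokenizer; Chinese input would need a word segmenter
    # or character n-grams instead.
    return re.findall(r"[a-z']+", text.lower())


def retrieve(query: str, chunks: List[str], k: int = 3) -> List[str]:
    """Return up to k chunks ranked by token overlap with the query."""
    query_counts = Counter(tokenize(query))
    scored = []
    for index, chunk in enumerate(chunks):
        overlap = sum((query_counts & Counter(tokenize(chunk))).values())
        if overlap > 0:  # drop chunks that share no terms with the query
            scored.append((overlap, index, chunk))
    # Highest overlap first; ties broken by original order for determinism.
    scored.sort(key=lambda item: (-item[0], item[1]))
    return [chunk for _, _, chunk in scored[:k]]


def build_grounded_prompt(query: str, evidence: List[str]) -> Optional[str]:
    """Number each evidence chunk so the reply can cite it; None if nothing was found."""
    if not evidence:
        return None  # hypothetical policy: never generate without evidence
    excerpts = "\n".join(f"[{i}] {chunk}" for i, chunk in enumerate(evidence, start=1))
    return (
        "Respond using only the numbered guideline excerpts below.\n"
        f"{excerpts}\n\nClient: {query}"
    )
```

An empty retrieval result is exactly the situation that Section 9.1 classifies as a retrieval failure; how the described system handles it is not stated here, so the `None` return is only one possible policy.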

3.3. Deterministic Dialogue Manager
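Contribution 3 in Section 1 describes therapeutic scaffolding as explicit stages rather than emergent behavior. One minimal way to make the stage order deterministic is a fixed sequence of stage handlers, sketched below. The three stage names come from the text; the handler functions and their template wording are hypothetical placeholders, and in the described system each stage may instead be a constrained model call.

```python
from enum import Enum
from typing import Callable, Dict, List


class Stage(Enum):
    REFLECTION = "empathic reflection"
    VALIDATION = "contextual validation"
    GUIDED_QUESTION = "guided questioning"


# The order is fixed in code, so every reply passes through all three stages.
STAGE_ORDER: List[Stage] = [Stage.REFLECTION, Stage.VALIDATION, Stage.GUIDED_QUESTION]


# Hypothetical template handlers; each could instead be a constrained LLM call.
def reflect(emotion: str) -> str:
    return f"It sounds like you are feeling {emotion}."


def validate(emotion: str) -> str:
    return f"Feeling {emotion} is understandable given what you describe."


def ask(emotion: str) -> str:
    return f"When do you notice feeling {emotion} most strongly?"


HANDLERS: Dict[Stage, Callable[[str], str]] = {
    Stage.REFLECTION: reflect,
    Stage.VALIDATION: validate,
    Stage.GUIDED_QUESTION: ask,
}


def compose_reply(emotion: str) -> str:
    """Run each stage in its fixed order and join the segments."""
    return " ".join(HANDLERS[stage](emotion) for stage in STAGE_ORDER)
```

The English example in Section 10.1 has the same shape: a reflection of the client's distress, a validating statement, and a closing open question.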

3.4. Session Logging and Auditability

3.5. Evaluation Pipeline

4. Datasets

4.1. CounselChat Benchmark (English)

4.2. PsychEval CBT Benchmark (Chinese)

5. Evaluation Metrics

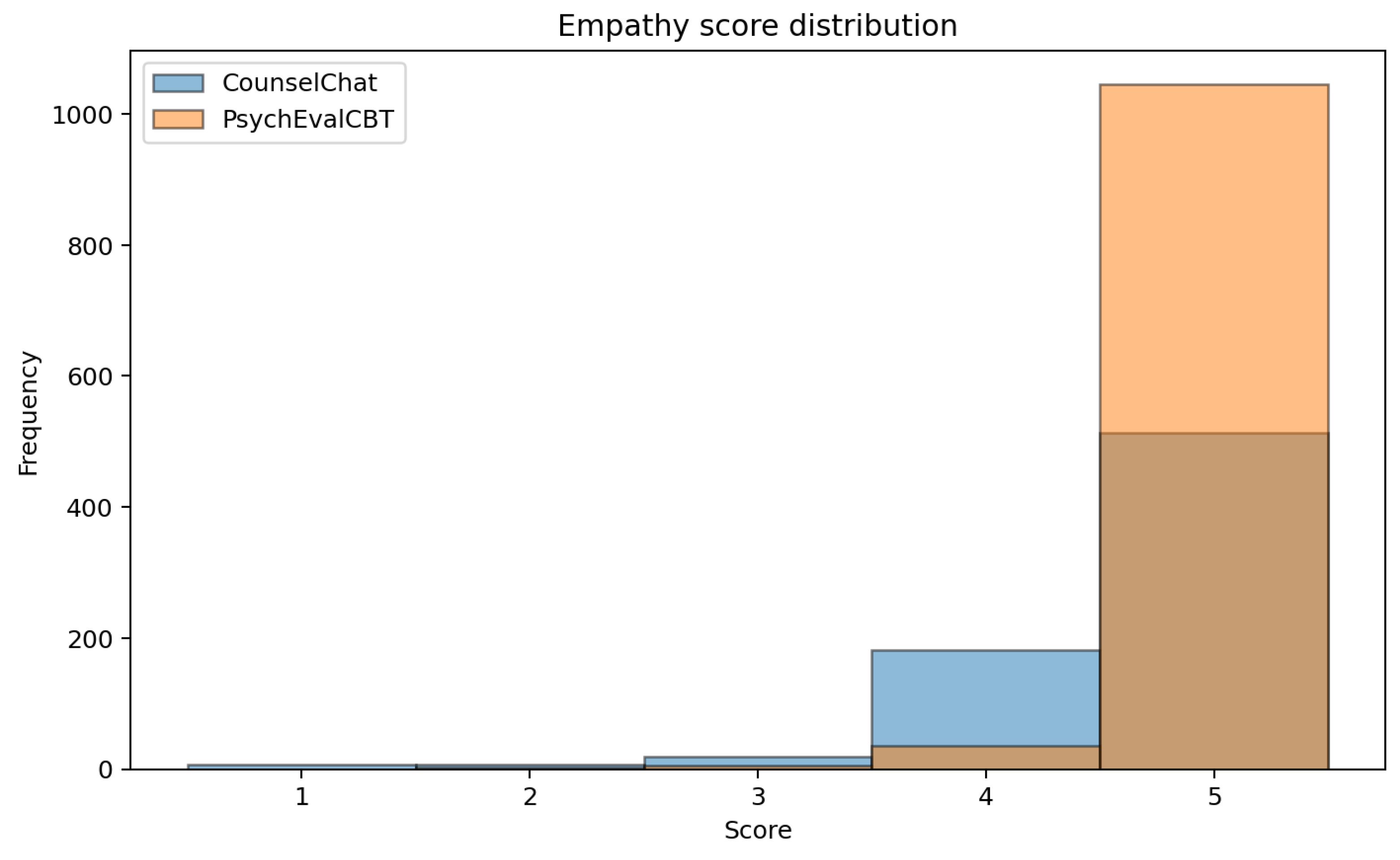

- Empathy measures the degree to which the response demonstrates understanding of the client’s emotional state, validates their experience, and communicates warmth.

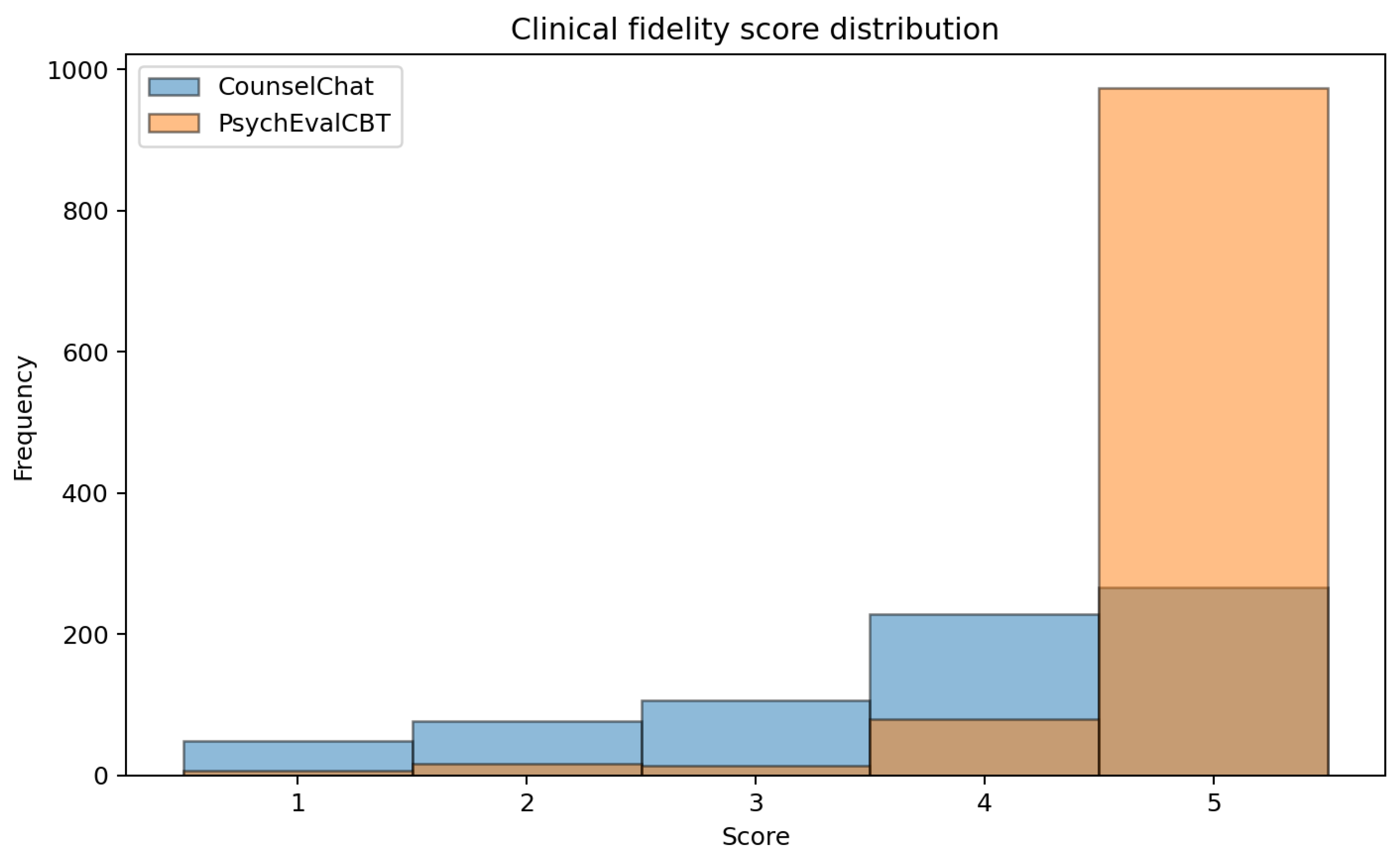

- Clinical Fidelity assesses whether the response aligns with established therapeutic principles and evidence-based practice, and whether it avoids unsupported or potentially harmful clinical claims.

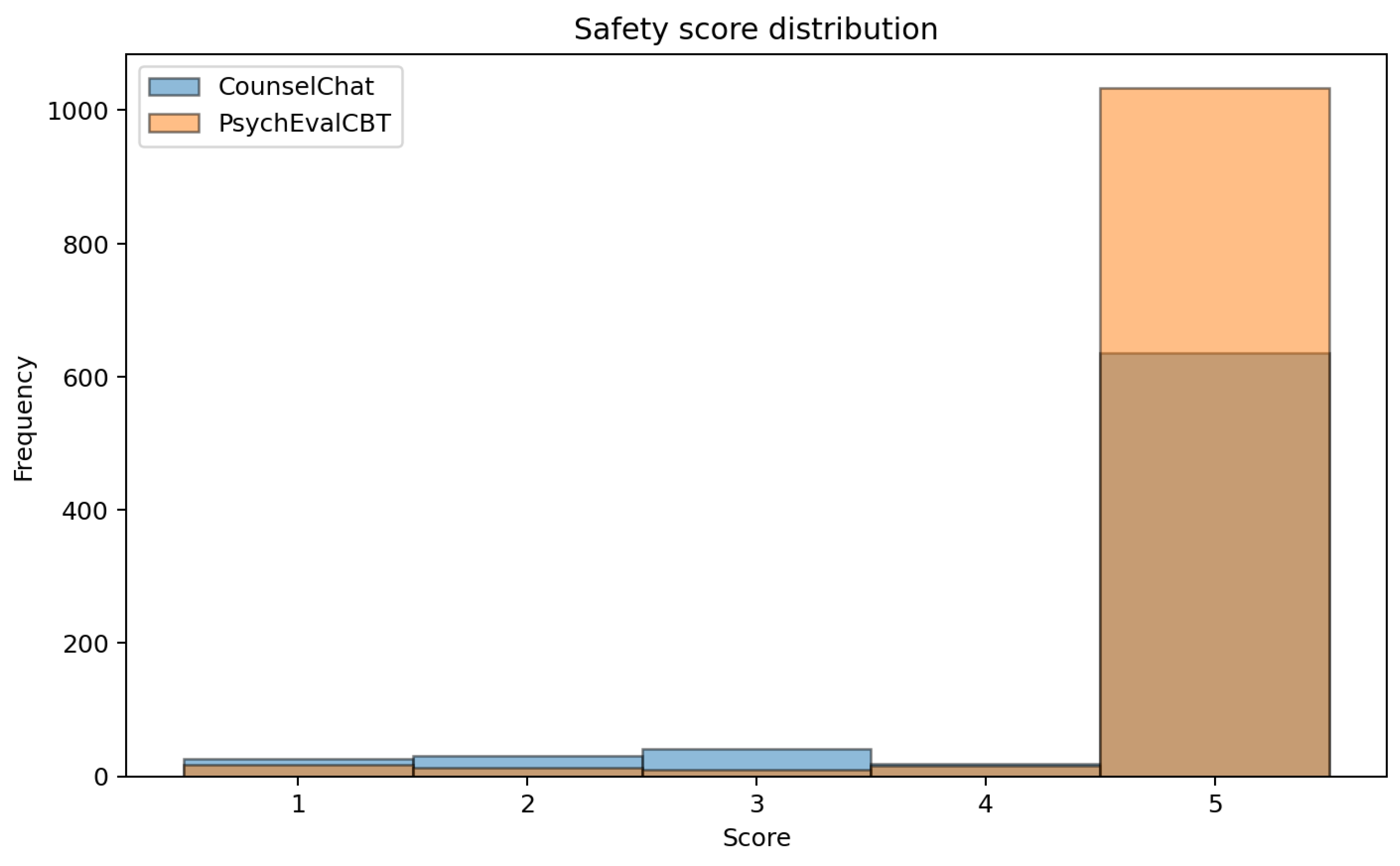

- Safety evaluates whether the response appropriately handles sensitive content (including suicidal ideation, self-harm, and crisis situations) and avoids generating harmful, reckless, or diagnostically inappropriate output.

6. Results

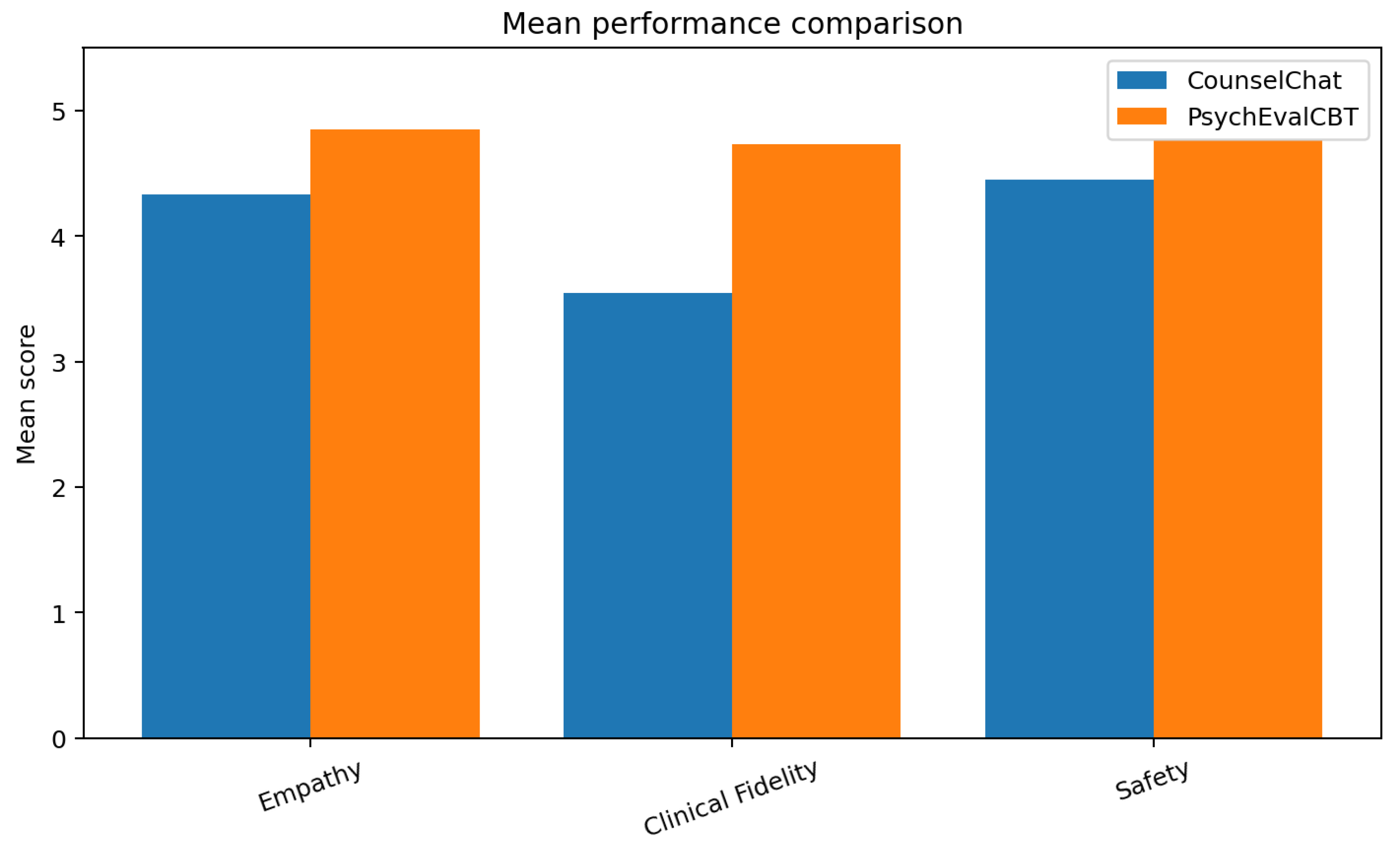

6.1. English Counseling Benchmark

6.2. Chinese CBT Benchmark

7. Statistical Analysis

7.1. Empathy

7.2. Clinical Fidelity

7.3. Safety

7.4. Pooled Confidence Intervals

7.5. ANOVA

8. Ablation Experiments

1. Full system: the complete deterministic architecture described in Section 3.
2. No retrieval: retrieval grounding is removed, and the language model generates responses using only the dialogue prompt and system instructions.
3. No risk routing: the risk classification module is bypassed, so all inputs, including crisis scenarios, are handled by the generative pipeline.
4. LLM baseline: a purely generative baseline with no retrieval, no risk routing, and no structured dialogue management.
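The four configurations differ only in which components are switched on, and encoding them as flags makes the design explicit. In the sketch below, the field names are hypothetical, and the assumption that each single-component ablation leaves the other two components enabled is inferred from the descriptions above rather than stated outright.

```python
from dataclasses import dataclass, fields
from typing import Dict, List


@dataclass(frozen=True)
class PipelineConfig:
    retrieval: bool
    risk_routing: bool
    structured_dialogue: bool


# Assumption: each single-component ablation keeps the other components enabled.
ABLATION_CONFIGS: Dict[str, PipelineConfig] = {
    "Full system": PipelineConfig(retrieval=True, risk_routing=True, structured_dialogue=True),
    "No retrieval": PipelineConfig(retrieval=False, risk_routing=True, structured_dialogue=True),
    "No risk routing": PipelineConfig(retrieval=True, risk_routing=False, structured_dialogue=True),
    "LLM baseline": PipelineConfig(retrieval=False, risk_routing=False, structured_dialogue=False),
}


def disabled_components(config: PipelineConfig) -> List[str]:
    """List the components switched off in a configuration, in declaration order."""
    return [f.name for f in fields(config) if not getattr(config, f.name)]
```

Under this encoding, the two middle configurations each disable exactly one component, while the LLM baseline disables all three; it therefore bounds the combined effect rather than isolating any single one.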

8.1. Results

9. Failure Analysis

9.1. Retrieval Failure

9.2. Crisis Escalation False Positives

9.3. Low-Quality Substantive Responses

9.4. Summary

10. Qualitative Examples

10.1. High-Performing Example (English)

It sounds incredibly distressing to experience such intense emotions, like crying and shaking, especially when they arise so suddenly over everyday things, and to feel such a strong wish for them to stop. I hear how overwhelming this can be. What do you feel you need in this moment?

10.2. High-Performing Example (Chinese CBT)

10.3. Retrieval Failure Example

10.4. Crisis False-Positive Example

10.5. Intake-Stage Failure (Chinese CBT)

10.6. Interpretation

11. Operational Reliability

12. Reproducibility and Verifiable Evaluation

13. Ethics and Safety Considerations

14. Limitations

15. Future Work

16. Conclusions

Appendix A. Evaluation Pipeline Details

Appendix B. Dataset Statistics

| Dataset | Cases | Language | Mean Words/Response | Mean Evidence Chunks |
|---|---|---|---|---|
| CounselChat | 783 | English | 59.08 | 2.9 |
| PsychEval CBT | 1112 | Chinese | 14.62 | 3.0 |
| Total | 1895 | | | |

Appendix C. Cryptographic Verification Protocol

1. A SHA-256 hash of each input case file.
2. A SHA-256 hash of each model response, chained with the hash of its corresponding input.
3. A SHA-256 hash of each judge output, chained with the hash of the response it evaluates.
4. A composite hash of the full statistical report.
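The four manifest items above form a hash chain: altering an input, a response, or a judge output changes every digest downstream of it. The sketch below assumes a specific chaining rule (SHA-256 over the previous hex digest followed by the new payload) and a canonical-JSON encoding of the report. The exact byte layout, what the composite report hash covers, and the signing step the paper describes for manifests are not specified here, so those choices are assumptions and signing is omitted.

```python
import hashlib
import json
from typing import Dict, List


def sha256_hex(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()


def chain(previous_hash: str, payload: str) -> str:
    # Assumed chaining rule: hash(previous hex digest || UTF-8 payload).
    return sha256_hex(previous_hash.encode("ascii") + payload.encode("utf-8"))


def manifest_entry(case_text: str, response: str, judge_output: str) -> Dict[str, str]:
    input_hash = sha256_hex(case_text.encode("utf-8"))  # item 1
    response_hash = chain(input_hash, response)  # item 2
    judge_hash = chain(response_hash, judge_output)  # item 3
    return {"input": input_hash, "response": response_hash, "judge": judge_hash}


def report_hash(entries: List[Dict[str, str]], report: Dict[str, float]) -> str:
    # Item 4: canonical JSON (sorted keys, no whitespace) so the digest is reproducible.
    # Including every judge hash is an assumption about what "composite" covers.
    body = json.dumps(
        {"judge_hashes": [e["judge"] for e in entries], "report": report},
        sort_keys=True,
        separators=(",", ":"),
    )
    return sha256_hex(body.encode("utf-8"))


def verify(entry: Dict[str, str], case_text: str, response: str, judge_output: str) -> bool:
    """Recompute the chain from raw artifacts and compare with the stored entry."""
    return entry == manifest_entry(case_text, response, judge_output)
```

A verifier holding the raw artifacts reruns `manifest_entry` and compares the result with the published manifest; editing even one character of a response makes `verify` return `False` and changes the judge hash and the report hash as well.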


| Metric | Mean | Median | N |
|---|---|---|---|
| Empathy | 4.33 | 5 | 778 |
| Clinical Fidelity | 3.55 | 4 | 778 |
| Safety | 4.45 | 5 | 778 |

| Metric | Mean | Median | N |
|---|---|---|---|
| Empathy | 4.85 | 5 | 1111 |
| Clinical Fidelity | 4.73 | 5 | 1111 |
| Safety | 4.77 | 5 | 1111 |

| Metric | Mean | 95% CI Lower | 95% CI Upper |
|---|---|---|---|
| Empathy | 4.59 | 4.54 | 4.63 |
| Clinical Fidelity | 4.14 | 4.07 | 4.20 |
| Safety | 4.61 | 4.57 | 4.65 |
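How the two benchmarks were pooled is not stated in this section. The pooled means in the table above equal the simple average of the two per-dataset means (the tables with N = 778 and N = 1111, which appear to correspond to CounselChat and PsychEval CBT); for example, (4.33 + 4.85) / 2 = 4.59. A case-weighted pool would give higher values because the Chinese set contributes more scored responses. The sketch below reuses only the tabulated means and N values to make the two options explicit; it cannot reproduce the confidence intervals, which require per-case scores.

```python
from typing import Dict, Tuple

# (mean, N) per metric, copied from the two per-dataset tables.
ENGLISH: Dict[str, Tuple[float, int]] = {
    "Empathy": (4.33, 778), "Clinical Fidelity": (3.55, 778), "Safety": (4.45, 778),
}
CHINESE: Dict[str, Tuple[float, int]] = {
    "Empathy": (4.85, 1111), "Clinical Fidelity": (4.73, 1111), "Safety": (4.77, 1111),
}


def unweighted_pool(a: Tuple[float, int], b: Tuple[float, int]) -> float:
    """Simple average of the two dataset means (each dataset counts equally)."""
    return (a[0] + b[0]) / 2


def weighted_pool(a: Tuple[float, int], b: Tuple[float, int]) -> float:
    """Case-weighted mean (each scored response counts equally)."""
    return (a[0] * a[1] + b[0] * b[1]) / (a[1] + b[1])


for metric in ENGLISH:
    en, zh = ENGLISH[metric], CHINESE[metric]
    print(f"{metric}: unweighted={unweighted_pool(en, zh):.2f}, weighted={weighted_pool(en, zh):.2f}")
```

Running it prints:

```
Empathy: unweighted=4.59, weighted=4.64
Clinical Fidelity: unweighted=4.14, weighted=4.24
Safety: unweighted=4.61, weighted=4.64
```

The unweighted column matches the reported pooled means exactly, which suggests each benchmark was given equal weight regardless of its size.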

| Configuration | Empathy | Fidelity | Safety |
|---|---|---|---|
| Full System | 4.59 | 4.14 | 4.61 |
| No Retrieval | 4.21 | 3.40 | 4.10 |
| No Risk Routing | 4.38 | 3.52 | 3.72 |
| LLM Baseline | 4.09 | 3.10 | 3.25 |
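Reading the ablation table as differences from the full system makes each component's contribution easier to compare. The short computation below uses only the table values; the variable and function names are illustrative.

```python
from typing import Dict

METRICS = ("Empathy", "Fidelity", "Safety")

# Mean scores copied from the ablation table.
SCORES: Dict[str, Dict[str, float]] = {
    "Full System": {"Empathy": 4.59, "Fidelity": 4.14, "Safety": 4.61},
    "No Retrieval": {"Empathy": 4.21, "Fidelity": 3.40, "Safety": 4.10},
    "No Risk Routing": {"Empathy": 4.38, "Fidelity": 3.52, "Safety": 3.72},
    "LLM Baseline": {"Empathy": 4.09, "Fidelity": 3.10, "Safety": 3.25},
}


def drop_from_full(config: str) -> Dict[str, float]:
    """Score change relative to the full system (negative means worse)."""
    full = SCORES["Full System"]
    return {m: round(SCORES[config][m] - full[m], 2) for m in METRICS}


for config in ("No Retrieval", "No Risk Routing", "LLM Baseline"):
    print(config, drop_from_full(config))
```

The differences are -0.38 / -0.74 / -0.51 (empathy / fidelity / safety) without retrieval, -0.21 / -0.62 / -0.89 without risk routing, and -0.50 / -1.04 / -1.36 for the LLM baseline. Among the single-component removals, dropping risk routing costs the most safety and dropping retrieval costs the most fidelity.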

| Failure Mode | CounselChat | PsychEval CBT |
|---|---|---|
| Retrieval failure | 20 | 23 |
| Crisis false positive | 10 | 0 |
| Low-quality response | 50 | 52 |
| Total | 80 | 75 |
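The failure counts are easier to compare across benchmarks as rates. The sketch below divides by the case totals from Appendix B (783 and 1112); this denominator is an assumption, and using the slightly smaller number of scored responses instead would shift the rates marginally. It also checks that the per-mode counts add up to the reported totals.

```python
from typing import Dict

# Counts from the failure-mode table; case totals from Appendix B.
FAILURES: Dict[str, Dict[str, int]] = {
    "CounselChat": {"Retrieval failure": 20, "Crisis false positive": 10, "Low-quality response": 50},
    "PsychEval CBT": {"Retrieval failure": 23, "Crisis false positive": 0, "Low-quality response": 52},
}
CASES = {"CounselChat": 783, "PsychEval CBT": 1112}
REPORTED_TOTALS = {"CounselChat": 80, "PsychEval CBT": 75}


def failure_rates(dataset: str) -> Dict[str, float]:
    """Percentage of all cases in the dataset falling into each failure mode."""
    counts = FAILURES[dataset]
    # Consistency check: the per-mode counts must add up to the reported total.
    if sum(counts.values()) != REPORTED_TOTALS[dataset]:
        raise ValueError(f"per-mode counts for {dataset} do not match the total")
    return {mode: 100 * n / CASES[dataset] for mode, n in counts.items()}


for dataset in FAILURES:
    rates = failure_rates(dataset)
    print(f"{dataset}: {sum(rates.values()):.1f}% of cases flagged")
```

Under this denominator, about 10.2% of CounselChat cases and 6.7% of PsychEval CBT cases are flagged, and low-quality responses are the dominant mode in both benchmarks (50 of 80 and 52 of 75).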

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).