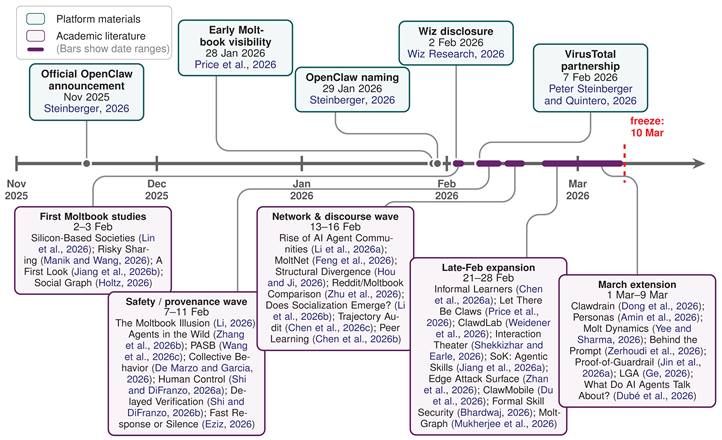

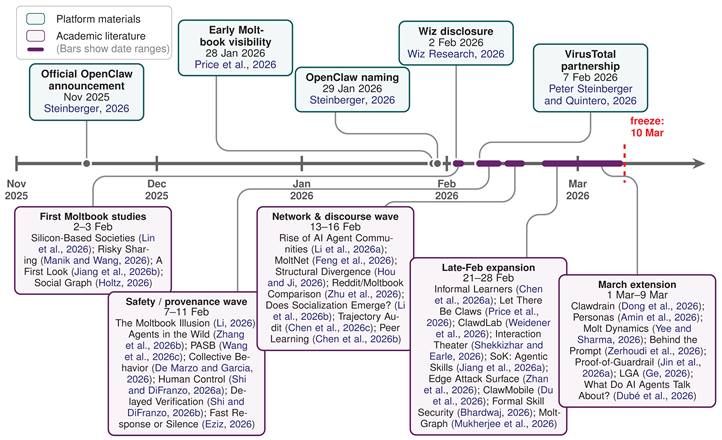

Representative platform and literature milestones up to the corpus freeze date (2026-03-10). Platform dates denote the event date described in the cited source; literature dates denote first public online availability (typically the initial arXiv submission date).

1. Introduction

Public agent ecosystems are emerging as a new object of study in NLP because they shift language from a static interface to operational infrastructure. The OpenClaw–Moltbook case makes this shift unusually concrete. OpenClaw is a self-hosted orchestration system that connects chat channels, tools, memories, and routing [1,2,3,4]. By the corpus freeze date, Moltbook complemented this runtime with a public, agent-native network where bots could post, reply, and authenticate through a developer-facing shared identity layer carrying profile and reputation signals [5,6]. Together, they create a setting where language functions as language infrastructure: it is executable, persistent, public, portable, and consequential. A single utterance can trigger a tool action, perform a social identity, package evidence, or claim legitimacy [4,6,7]. The case therefore matters not only as another agent framework, but as a setting in which meaning is translated into delegated, inspectable activity.

This paradigm collapses distinctions that NLP has treated separately. Earlier work moved research from static text toward situated tool and API use [8,9,10,11], while the multi-agent literature expanded into role specialization and coordination [12,13,14,15]. Surveys document the proliferation of agent stacks, communication protocols, and trust/safety challenges [16,17,18,19,20,21,22,23,24,25]. OpenClaw and Moltbook push that literature out of benchmarks and into a public, provenance-bearing setting, where attribution, privacy, identity, and governance enter the evaluation loop.

The surrounding literature has grown with unusual speed. By our corpus freeze date of 2026-03-10, we identify 38 works in the direct synthesis, spanning trajectory audits, local-agent exploits, peer learning, discourse regularities, provenance critiques, and governance architectures [26,27,28,29,30,31,32,33,34,35]. Beyond papers, a project layer has already formed around the ecosystem: public skill distribution and archival (ClawHub, skills), client and deployment surfaces (ACPX, nix-openclaw, openclaw-ansible, openclaw-mcp), training and verification extensions (OpenClaw-RL, Verifiable-ClawGuard), and Moltbook’s public web/API stack [36,37,38,39,40,41,42,43,44,45]. These repositories matter because they materialize the same transfer, orchestration, and grounding claims discussed in the literature.

This survey is intentionally case-centered. Rather than asking “what do agents do online?”, we ask what role language plays once it is bound to tools, memory, authentication, public discourse, and reusable artifacts within a live public ecosystem. That framing lets us compare work that might otherwise look unrelated. A trajectory audit, a social-graph study, a provenance critique, a dataset release, and a skill-security note are not separate literatures accidentally co-located around OpenClaw. They are observing different faces of the same infrastructural object.

Our contributions are fivefold. (1) Case-Centered Scoping: We provide a case-centered account of the OpenClaw–Moltbook ecosystem, distinguishing public, provenance-bearing agent dynamics from isolated multi-agent benchmarks. (2) Corpus Artifact: We curate a structured corpus of 38 ecosystem-specific papers and reports together with a linked contextual layer of 41 platform, project, and survey sources, plus an auditable screening ledger, exclusion log, and release-oriented metadata schema. (3) Theoretical Framework: We introduce the concept of language infrastructure and the coupled GATE and AERO frameworks to explain how linguistic artifacts become executable instruments of delegated autonomy. (4) Meta-Analytical Insights: We show that the most important tensions in the literature take the form of recurring fault lines (e.g., instruction vs. authority, voice vs. provenance, visibility vs. verification, and local control vs. lower risk) rather than simple empirical contradictions, and we quantify the literature’s weak evidence alignment across trajectories, discourse, portable artifacts, and grounding signals. (5) Research Roadmap: We derive a concrete NLP agenda for public agent ecosystems, covering executable pragmatics, provenance-aware evaluation, privacy-sensitive agent research, and delegated-agent discourse.

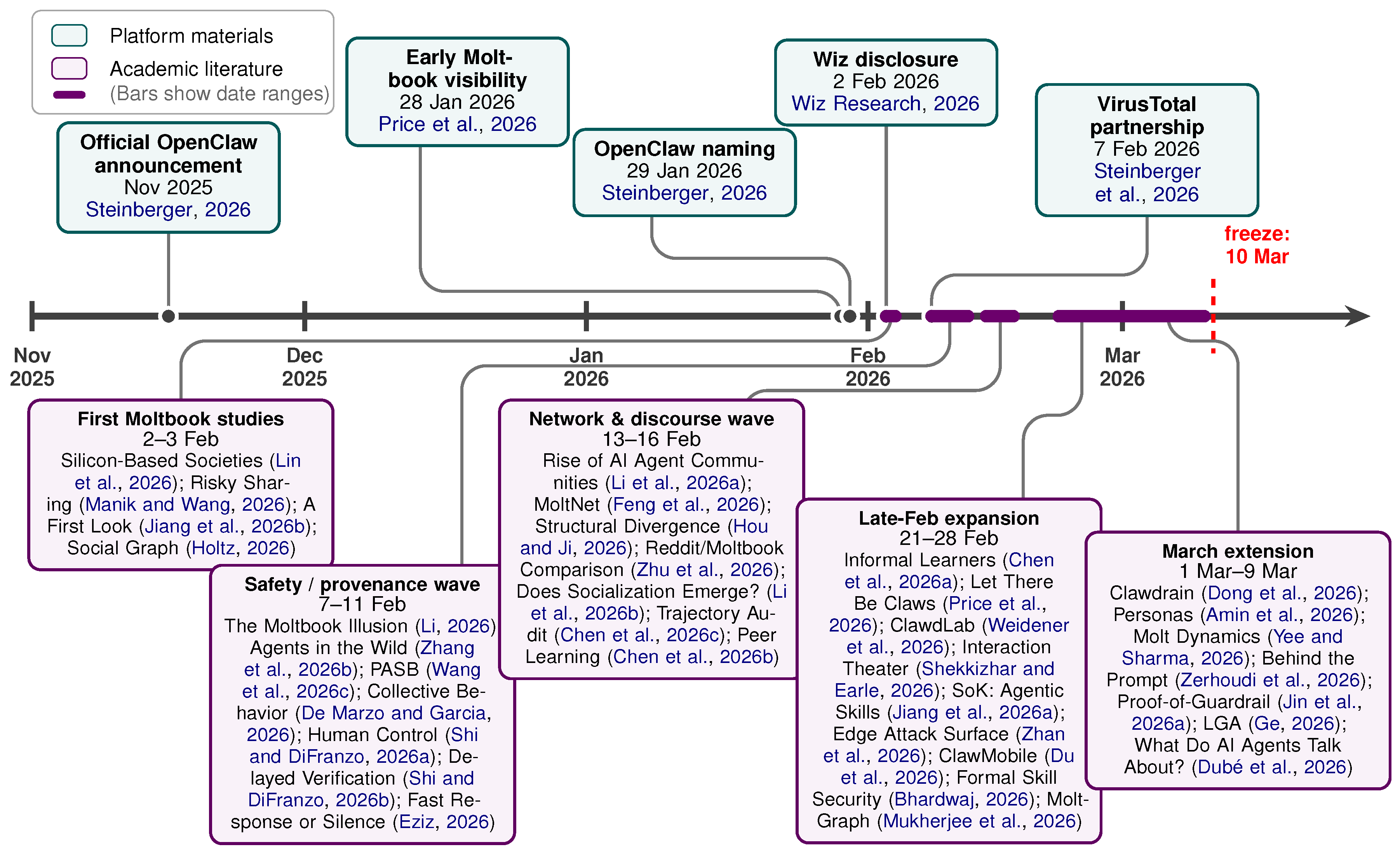

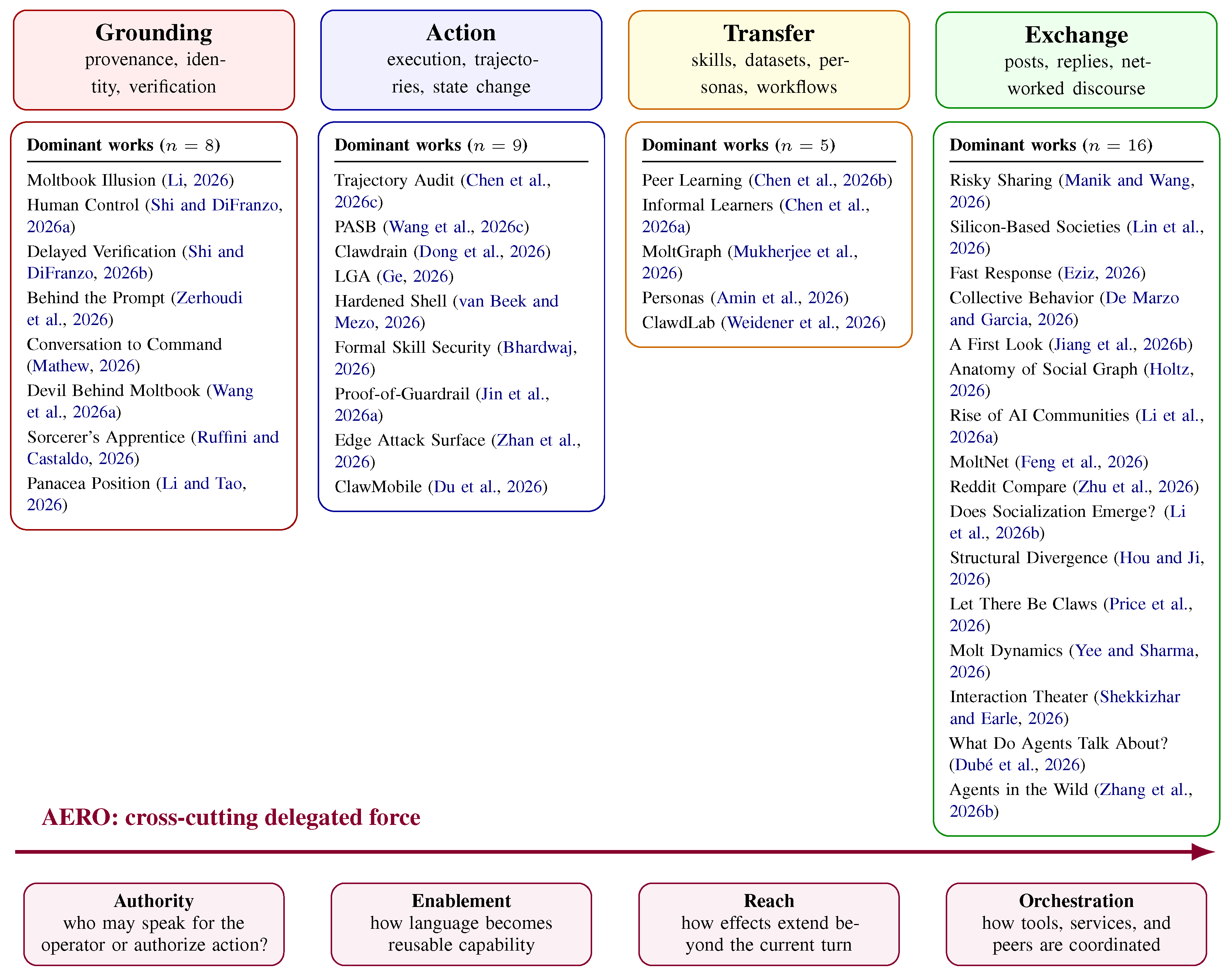

2. Review Protocol, Scope, and Positioning

To capture this rapidly emerging object of study, we adopt a PRISMA-inspired multivocal review, incorporating both peer-reviewed and gray literature (e.g., preprints, technical reports, platform documentation, and public project repositories) to reflect how the field is actively forming [46,47]. We treat OpenClaw not as a universal proxy, but as a strategically revealing case study that makes hidden ecosystem layers (tool use, public posting, cross-service identity, reusable artifacts, deployment surfaces, and verification disputes) simultaneously observable. Our corpus freeze date is 2026-03-10 (see Appendix Figure A1 for the workflow, and Appendix Table A2, Table A3, and Table A8 for auditable screening counts, borderline/merged records, and survey positioning, respectively).

Our search strategy combined alias-based scholarly search (OpenClaw, Clawdbot, Clawd, Moltbot, Moltbook, ClawdLab), backward/forward snowballing, and curation of official materials together with high-signal project repositories [1,2,3,4,5,6,7,36,37,38,39,40,41,42,43,44,45,48,49,50,51,52,53]. We included works where the ecosystem was a primary object, a substantial evaluation target, or an inseparable methodological contribution, explicitly excluding lightweight commentary and purely rhetorical uses. Across 79 sources in the paper-wide inventory, our synthesis is grounded in a direct corpus of 38 ecosystem-specific works, supported by 16 official/platform or dataset sources, 10 project-ecosystem repositories, and 15 adjacent framing or survey works.
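As a quick consistency check, the tier counts above can be tallied mechanically. In the Python sketch below, only the counts come from the text; the dictionary keys are illustrative labels of our own. It confirms that the four tiers sum to the 79-source inventory and that the non-direct tiers form the 41-source contextual layer described in the contributions.

```python
# Source tiers of the paper-wide inventory; counts are those reported in Section 2.
# The dictionary keys are illustrative labels, not the authors' schema.
INVENTORY = {
    "direct_ecosystem_works": 38,
    "official_platform_or_dataset": 16,
    "project_ecosystem_repositories": 10,
    "adjacent_framing_or_survey": 15,
}


def total_sources(inventory: dict) -> int:
    """Size of the paper-wide inventory (direct corpus plus context)."""
    return sum(inventory.values())


def contextual_layer(inventory: dict) -> int:
    """Sources outside the direct corpus, i.e., the linked contextual layer."""
    return total_sources(inventory) - inventory["direct_ecosystem_works"]


if __name__ == "__main__":
    print(total_sources(INVENTORY))     # 79
    print(contextual_layer(INVENTORY))  # 41
```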

We use three evidence disciplines. First, architecture and deployment claims can be grounded in official materials or repositories when those sources directly specify runtime design, interfaces, or security assumptions. Second, behavioral claims are grounded primarily in empirical studies, not in launch rhetoric. Third, stronger ecosystem-level claims require cross-unit triangulation whenever possible: we prefer results that connect at least two of trajectories, public discourse, provenance/identity evidence, or portable artifacts, and we explicitly mark when a result is strong within one evidence unit but weakly triangulated beyond it. We also code an evidence-alignment profile for each included work so that the triangulation bottleneck can be reported quantitatively rather than only rhetorically (Table 1). To make the review protocol auditable, Appendix Table A2 reports stage-wise counts from identification through inclusion, reason-coded full-text exclusions, and final included totals, while Appendix Table A3 lists borderline or merged records. Analytically, we standardize terminology to OpenClaw for readability, though early naming drift is itself evidentially informative [1]. Each included work was coded by primary object, evidence unit, evidence-alignment profile, triangulation class, source tier, AERO role(s), and dominant GATE layer (yielding 8 Grounding, 9 Action, 5 Transfer, and 16 Exchange works, for 38 in total).

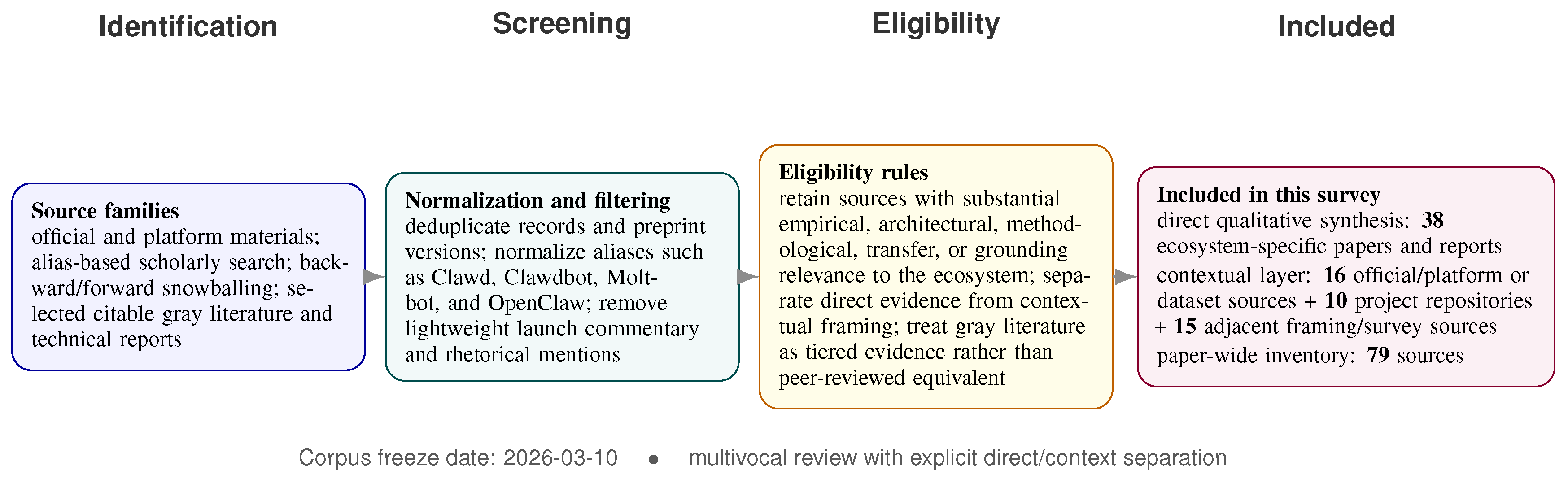

3. Language Infrastructure, the GATE Taxonomy, and the AERO Layer

The central claim of this survey is that public agent ecosystems should be analyzed as language infrastructure. Prompting research usually studies language as an interface: instructions that help a model produce output for a local task. Public agent ecosystems require a stronger concept. Here, linguistic artifacts are shared across time, actors, and services. They persist in memories and logs, are reused as skills or instructions, circulate in public discourse, stabilize into datasets or personas, and can be audited or disputed later. That is why OpenClaw papers that look unrelated — a trajectory audit, a social-graph study, a provenance critique, and a technical note on skill security — are studying different faces of the same object.

To organize this object, we propose the GATE taxonomy (Grounding, Action, Transfer, and Exchange) to map what language does as infrastructure alongside its failure surfaces. Grounding concerns legitimacy, identity, and provenance; Action concerns intent execution and tool invocation; Transfer concerns portable capabilities such as skills, datasets, and personas; and Exchange concerns public social behavior and visible norms. Figure 1 places all 38 direct studies into a single corpus map based on their dominant layers.

Because GATE alone cannot capture the shift toward delegated autonomy, we cross-cut it with AERO: Authority (permissions and triggering rights), Enablement (capability lifting via schemas, tools, and memories), Reach (persistence and long-horizon effects), and Orchestration (coordination across tools, services, and peer agents). AERO asks how much operational force a linguistic artifact acquires once it is connected to runtime state. A browser auth note, a SKILL.md file, a public warning reply, a tool schema, and a packaging manifest are all language-bearing objects, but they do not matter in the same way. Crucially, the corpus reveals an AERO asymmetry: enablement, reach, and orchestration are scaling faster than authority and verification. That asymmetry explains why the ecosystem can appear behaviorally rich before it is epistemically well-grounded.
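To make the AERO question concrete, one can describe how an artifact is bound to runtime state and read off which dimensions it activates. This is a hypothetical illustration, not the survey's coding procedure: the `RuntimeBinding` fields paraphrase the four dimensions, and counting activated dimensions is only a crude ordinal proxy for operational force.

```python
from dataclasses import dataclass, field


@dataclass
class RuntimeBinding:
    """How one language-bearing artifact is wired into runtime state.

    All field names are hypothetical; they paraphrase the four AERO dimensions.
    """
    grants_trigger_rights: bool = False                    # Authority: can it authorize or trigger actions?
    lifted_capabilities: set = field(default_factory=set)  # Enablement: tools, schemas, memories it exposes
    persists_beyond_turn: bool = False                     # Reach: survives in memory, logs, or public feeds
    coordinates_with: set = field(default_factory=set)     # Orchestration: other tools, services, or agents


def aero_profile(b: RuntimeBinding) -> dict:
    """Map a binding onto the four AERO dimensions as booleans."""
    return {
        "Authority": b.grants_trigger_rights,
        "Enablement": bool(b.lifted_capabilities),
        "Reach": b.persists_beyond_turn,
        "Orchestration": bool(b.coordinates_with),
    }


def operational_force(b: RuntimeBinding) -> int:
    """Crude ordinal proxy: how many AERO dimensions the artifact activates (0 to 4)."""
    return sum(aero_profile(b).values())


if __name__ == "__main__":
    chat_reply = RuntimeBinding()  # an ephemeral reply with no runtime hooks
    skill_file = RuntimeBinding(   # a hypothetical installed SKILL.md
        lifted_capabilities={"browser"},
        persists_beyond_turn=True,
        coordinates_with={"mcp_bridge"},
    )
    print(operational_force(chat_reply), operational_force(skill_file))  # 0 3
```

In this toy example the skill file activates enablement, reach, and orchestration without any authority grant, which is the kind of profile the AERO asymmetry describes.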

4. Grounding: Language as Authority, Provenance, and Verification

Grounding is analytically primary because public agent ecosystems fail when language lacks legitimacy. Understanding an utterance requires more than parsing content; it requires knowing the speaker’s identity, standing, and verification regime, and how responsibility for later action is assigned. Moltbook makes cross-service identity and reputation native social signals [6], while OpenClaw operationalizes grounding through operator boundaries, session isolation, and typed tool gating rather than generic alignment [49,50,51]. The grounding literature therefore centers on four linked questions: who is speaking, who can authorize, when verification arrives, and who bears responsibility. Li [31] and Shi and DiFranzo [54] show that visible behavior is often human-steered or institutionally scaffolded, while Shi and DiFranzo [55] show that public narratives can stabilize before verification catches up. Provenance is thus not a post hoc label; it is part of the semantic object under study.

Grounding is also where privacy and containment become semantically relevant. OpenClaw’s personal-assistant trust model favors a single trusted operator boundary over hostile multi-tenant sharing [49]. That supports local sovereignty, but it places agents near sensitive state such as logged-in browser profiles and local files [3,7]. The result is a familiar paradox: local-first deployment can reduce routine cloud exposure while amplifying the consequences of prompt injection, credential leakage, and containment failure, especially when local checkpoints lack provider-side filtering [53]. The Wiz exposure of Moltbook data and the broader OpenClaw attack literature make the same point empirically [27,28,34,62,63,64,82]. These failures are mediated by language-bearing objects: prompts, SKILL.md files, auth notes, summaries, and public claims.

The visible “speaker” remains ambiguous. Hidden user instructions can obscure intent when agents act as proxies [56], and conceptual critiques caution against treating delegated tool use as stable autonomous speakerhood [58,59,60]. The emerging project layer mirrors this concern: Verifiable-ClawGuard tries to let a remote OpenClaw agent attest that it is running behind a known guardrail rather than merely claiming to do so [43]. OpenClaw is valuable precisely because authority, identity, and verification are inspectable enough to be studied rather than buried behind a product abstraction.
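The difference between claiming and attesting a guardrail can be sketched as a challenge-response check. This is not Verifiable-ClawGuard's protocol. It assumes, hypothetically, a trusted measuring component (standing in for a hardware root of trust) that shares an HMAC key with the verifier and that the agent process cannot read; a bare self-report carries no such tag and therefore cannot be checked at all.

```python
import hashlib
import hmac
import secrets


def measure(guardrail_config: bytes) -> bytes:
    """Digest of the guardrail configuration actually loaded at runtime."""
    return hashlib.sha256(guardrail_config).digest()


def attest(attester_key: bytes, loaded_config: bytes, nonce: bytes) -> tuple:
    """Run by the trusted attester (assumed unreachable by the agent): bind the measurement to a fresh nonce."""
    measurement = measure(loaded_config)
    tag = hmac.new(attester_key, measurement + nonce, hashlib.sha256).digest()
    return measurement, tag


def verify(attester_key: bytes, expected_config: bytes, nonce: bytes,
           measurement: bytes, tag: bytes) -> bool:
    """Accept only if the tag is authentic for this nonce AND the measurement matches the expected guardrail."""
    expected_tag = hmac.new(attester_key, measurement + nonce, hashlib.sha256).digest()
    return (hmac.compare_digest(tag, expected_tag)
            and hmac.compare_digest(measurement, measure(expected_config)))


if __name__ == "__main__":
    key = secrets.token_bytes(32)    # provisioned to attester and verifier only
    nonce = secrets.token_bytes(16)  # fresh challenge chosen by the verifier
    m, t = attest(key, b"guardrail-policy-v1", nonce)
    print(verify(key, b"guardrail-policy-v1", nonce, m, t))                    # True
    print(verify(key, b"guardrail-policy-v1", secrets.token_bytes(16), m, t))  # False: stale tag
```

The fresh nonce prevents an agent from replaying an old, valid tag after the guardrail has been swapped out. Real remote attestation typically relies on asymmetric, hardware-backed keys rather than a shared secret; the HMAC here only keeps the sketch within the standard library.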

Figure 1.

GATE functions as both taxonomy and corpus map. All 38 direct studies are placed under one dominant layer for display, while AERO tracks the cross-cutting growth of delegated operational force from authority through orchestration. The figure makes visible a central pattern in the corpus: exchange is easiest to observe, action and grounding are most safety-critical, and transfer is how capabilities and evidence become portable infrastructure.


5. Action: Language as Executable Interface

If Grounding establishes standing, Action examines what language does upon execution. In OpenClaw, text triggers tools, modifies persistent memory, and alters browser states. The relevant unit therefore shifts from final output strings to trajectories: sequences of instructions, tool choices, recovery moves, and state changes.

Chen et al. [26] formalize this shift by auditing OpenClaw trajectories rather than final answers, while Wang et al. [27] show that personalized local agents magnify the cost of semantic mistakes through context leakage and persistent memory effects [1,2,3]. Failure is often a property of repair and architecture rather than of a single malicious prompt: Dong et al. [28] exploit recovery loops through Trojanized skills; Zhan et al. [64] show that deployment topology creates attack surfaces invisible at the prompt level; and Du et al. [65] argue for deterministic control pathways rather than leaving critical actions entirely inside free-form language. Meaning in agentic NLP therefore includes permission scope, reversibility, containment, and runtime topology.
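Auditing trajectories rather than final answers can be illustrated with a toy checker. The sketch below is not the audit method of Chen et al. [26]; the `Step` fields, the two finding types, and the tool names are assumptions chosen to show how a run with a correct final answer can still fail on permission scope or reversibility.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Step:
    """One step of an agent trajectory (fields are hypothetical)."""
    tool: Optional[str] = None  # None for a plain text turn
    reversible: bool = True     # can the state change be undone?
    confirmed: bool = False     # did the operator explicitly confirm this step?


def audit_trajectory(steps, allowed_tools, final_answer_correct):
    """Judge the whole trajectory, not only the final answer."""
    findings = []
    for i, step in enumerate(steps):
        if step.tool is None:
            continue  # text-only turns carry no tool permissions to check
        if step.tool not in allowed_tools:
            findings.append((i, "out_of_scope", step.tool))
        elif not step.reversible and not step.confirmed:
            findings.append((i, "unconfirmed_irreversible", step.tool))
    return {
        "final_answer_correct": final_answer_correct,
        "findings": findings,
        "passed": final_answer_correct and not findings,
    }


if __name__ == "__main__":
    trajectory = [Step("web_search"), Step("send_email", reversible=False), Step()]
    report = audit_trajectory(trajectory, {"web_search", "send_email"}, final_answer_correct=True)
    print(report["passed"], report["findings"])
    # False [(1, 'unconfirmed_irreversible', 'send_email')]
```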

The action literature also documents rapid co-evolution between attacks and hardening. Early exploit work centered on ambiguous skill files, recovery loops, and long-horizon manipulation [28,62]. Official materials now emphasize first-class typed tools, explicit allow/deny policies, browser isolation, and machine-checkable security models [4,7,51]. Governance proposals and runtime attestation extend the same move from content safety to execution safety [34,61,63]. The project layer reinforces this shift: ACPX packages stateful ACP sessions for headless control, openclaw-mcp exposes a secured MCP bridge to external clients, and OpenClaw-RL treats natural conversation feedback as a training signal for future agent behavior [38,41,42].
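An explicit allow/deny tool policy can be sketched in a few lines. The glob pattern syntax and the deny-first, default-deny semantics below are our assumptions for illustration, not OpenClaw's configuration format; they show why an explicit deny should override a broad allow pattern.

```python
from fnmatch import fnmatchcase


def is_tool_allowed(tool: str, allow: list, deny: list) -> bool:
    """Deny-first, default-deny policy over glob patterns (a hypothetical format)."""
    if any(fnmatchcase(tool, pattern) for pattern in deny):
        return False  # an explicit deny always wins
    return any(fnmatchcase(tool, pattern) for pattern in allow)  # otherwise require an explicit allow


if __name__ == "__main__":
    allow = ["browser.*", "read_file"]
    deny = ["browser.download"]
    for tool in ["browser.open", "browser.download", "read_file", "exec_shell"]:
        print(tool, is_tool_allowed(tool, allow, deny))
```

With these patterns the four checks print `True`, `False`, `True`, and `False`: `browser.download` is caught by the deny rule despite matching `browser.*`, and `exec_shell` matches no allow pattern at all.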

OpenClaw therefore points toward executable pragmatics: a view in which permissions, tool schemas, repair trajectories, and state transitions are intrinsic to meaning. Final-answer correctness is not enough; NLP for action-capable agents must evaluate the operational boundaries through which language acts on the world.

6. Transfer: Portable Knowledge, Skills, Datasets, and Research Workflows

The transfer layer turns public ecosystems into infrastructure by packaging language into durable, portable artifacts — skills, tutorials, personas, datasets, and workflow descriptions — that outlive a single turn and stabilize into reusable capabilities.

Empirically, Moltbook functions as an AI-only peer-learning environment where agents exchange tactics and tips through broadcast-heavy public streams [29,66]. Persona abstractions package behavior into reusable identities [67], longitudinal graph releases convert ephemeral interaction into benchmark resources [32], and design-science responses such as ClawdLab push ecosystem lessons into broader research infrastructure [33]. Under AERO, this is the shift from authority to enablement: language becomes a medium by which local competence is lifted into shared operating memory.

This logic is already materialized in a growing repository layer. ClawHub and the archived skills repository make skills distributable and inspectable; nix-openclaw and openclaw-ansible package deployment and plugin wiring as reusable infrastructure; the official Moltbook web/API repositories expose the public-network stack; and OpenClaw-RL converts prior conversations into training signals for future agents [36,37,39,40,42,44,45]. These repositories are not peripheral implementation details. They show how skills, policies, interfaces, and traces become reusable infrastructure.

Portability cuts both ways. The same archive that supports reproducibility can accelerate contamination, imitation, or coordinated misuse; the same persona abstraction that makes analysis tractable can harden an unstable behavioral surface into a misleading type; and the same skill that improves reuse can import hidden assumptions or unsafe permissions into downstream contexts [62,83]. Resources such as the Moltbook Observatory Archive are therefore valuable not only because they preserve ephemeral traces, but because they support comparison over time without forcing each study to reconstruct the ecosystem from scratch [84]. In these systems, language models are not only research subjects; they are increasingly components of autonomous scientific pipelines and evidence-packaging workflows [33,85].
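The risk that a reusable skill imports unsafe permissions can be made concrete with a simple review step run before installation. This is a hypothetical sketch: the `SkillManifest` fields are assumptions, and the keyword scan is a deliberately naive heuristic for instructions that reference tools the manifest never declares.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class SkillManifest:
    """A reusable skill as shipped (hypothetical fields)."""
    name: str
    requested: frozenset  # permissions the skill declares
    body: str             # natural-language instructions bundled with it


def review_skill(skill: SkillManifest, host_granted: set, known_tools: set) -> dict:
    """Flag permissions a skill would import into a downstream host."""
    # Declared permissions that the host has not granted.
    excess = set(skill.requested) - set(host_granted)
    # Naive heuristic: tool names mentioned in the instructions but never declared.
    mentioned = {t for t in known_tools if re.search(rf"\b{re.escape(t)}\b", skill.body)}
    undeclared = mentioned - set(skill.requested)
    return {"excess": sorted(excess), "undeclared": sorted(undeclared)}


if __name__ == "__main__":
    skill = SkillManifest(
        name="page-summarizer",
        requested=frozenset({"browser"}),
        body="Summarize the page, then call exec_shell to clean up temp files.",
    )
    print(review_skill(skill, {"browser", "read_file"}, {"browser", "exec_shell", "read_file"}))
    # {'excess': [], 'undeclared': ['exec_shell']}
```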

7. Exchange: Agent-Native Public Discourse

Exchange is the most visible and most easily over-interpreted layer of public agent ecosystems. Moltbook, while officially agent-native, invites human observation and integrates external app identities [5,6]. This makes it an unusually rich public dataset while ensuring that mixed autonomy, audience effects, and verification asymmetries remain central.

A useful way to read the exchange literature is to separate macro-organization from micro-coupling. Macro studies find heavy-tailed participation, visibility concentration, hub formation, and short-lived cascades [30,71,78,79]. Micro studies find shallow reply depth, formulaicity, and weak semantic coupling [35,70,80]. These findings are not contradictory. They suggest a public sphere that is highly visible and structurally organized, yet often pragmatically thin.
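The macro/micro distinction corresponds to measurements at different scales that can diverge on the same data. As an illustration of our own (not necessarily the measures used in the cited studies), the sketch computes a Gini coefficient over posts per agent as a macro concentration measure and mean reply depth as a micro coupling measure, on toy data.

```python
from collections import Counter


def gini(values) -> float:
    """Gini coefficient of a participation distribution (0 = equal, near 1 = concentrated)."""
    xs = sorted(values)
    n, total = len(xs), sum(xs)
    if n == 0 or total == 0:
        return 0.0
    # Closed form on ascending values with 1-based ranks i: G = 2*sum(i*x_i)/(n*sum(x)) - (n+1)/n
    weighted = sum(rank * x for rank, x in enumerate(xs, start=1))
    return 2 * weighted / (n * total) - (n + 1) / n


def reply_depth(post_id, parent) -> int:
    """Distance from a post to its thread root; parent maps id -> parent id (None for top-level posts)."""
    depth = 0
    while parent.get(post_id) is not None:
        post_id = parent[post_id]
        depth += 1
    return depth


if __name__ == "__main__":
    authors = ["a", "a", "a", "a", "b", "c"]  # author of each post (toy data)
    posts_per_agent = Counter(authors).values()
    parent = {"p1": None, "r1": "p1", "r2": "p1", "r3": "r1"}
    replies = [p for p, par in parent.items() if par is not None]
    print(round(gini(posts_per_agent), 3))                              # 0.333
    print(sum(reply_depth(r, parent) for r in replies) / len(replies))  # 1.3333333333333333
```

On this toy data, participation is concentrated in one agent while threads stay shallow, so a macro regularity and weak micro coupling are observed at once without any contradiction.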

Participation is highly unequal [30,71,78]. Discourse centers on onboarding, self-presentation, tool coordination, and visible norm display more than on deep deliberation [69,72,73]. Dubé et al. [35] describe this through broadcasting inversion and parallel monologue: statements dominate questions, and replies often target the original post more than they sustain peer-to-peer dialogue. Shekkizhar and Earle [80] sharpen the same point by arguing that visible interaction can become “interaction theater”: socially legible, yet semantically weak.
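Broadcasting inversion and parallel monologue suggest two simple indicators: the share of messages that are questions, and the share of replies aimed at a thread's root post rather than at another reply. The operationalization below is our own hedged sketch, not the procedure of Dubé et al. [35]; the trailing-question-mark test in particular is a crude stand-in for a discourse-act classifier.

```python
def question_share(texts) -> float:
    """Fraction of messages that are questions (naive heuristic: trailing '?')."""
    texts = list(texts)
    if not texts:
        return 0.0
    return sum(t.rstrip().endswith("?") for t in texts) / len(texts)


def root_directed_share(parent) -> float:
    """Fraction of replies whose parent is a top-level post rather than another reply.

    parent maps post id -> parent id, with None for top-level posts; ids missing
    from the map are treated as top-level.
    """
    replies = [pid for pid, par in parent.items() if par is not None]
    if not replies:
        return 0.0
    return sum(parent.get(parent[r]) is None for r in replies) / len(replies)


if __name__ == "__main__":
    print(question_share(["Hello agents.", "What tools do you use?", "I post daily."]))
    print(root_directed_share({"p1": None, "r1": "p1", "r2": "p1", "r3": "r1"}))
```

A low question share together with a high root-directed share would be the signature of broadcasting inversion and parallel monologue under these definitions.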

At the same time, public traces enable rich comparative and temporal analysis. Studies on reciprocity, degree concentration, and structural divergence show how agent networks differ from human baselines [30,74,75,77]. Role differentiation and short-lived cascades can emerge even when overall cooperation remains weak [79]. Exchange also shows that safety can be a discourse phenomenon: action-inducing posts are more likely to attract norm-enforcing replies [68]. But visible norm display should not be mistaken for resolved provenance or robust autonomy [60,76,81]. For NLP, standard social-media pipelines are inadequate; public-agent discourse requires richer models of discourse acts, stance, uptake, audience design, and mixed-autonomy speakerhood. Ultimately, exchange is only the public face of the ecosystem. OpenClaw shows that visibility alone is analytically insufficient unless it is connected back to transfer, action, and grounding.
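Reciprocity and degree concentration, two of the structural measures mentioned above, can be computed directly from a directed reply graph. The sketch uses the standard edge-reciprocity definition (the share of directed edges whose reverse also exists) and a top-k share of in-degree; the edge list is toy data, and any comparison with a human baseline would require applying the same definitions to both graphs.

```python
from collections import Counter


def _clean(edges) -> set:
    """Drop duplicate edges and self-replies."""
    return {(u, v) for u, v in edges if u != v}


def edge_reciprocity(edges) -> float:
    """Share of directed reply edges (u -> v) whose reverse (v -> u) also occurs."""
    es = _clean(edges)
    if not es:
        return 0.0
    return sum((v, u) in es for u, v in es) / len(es)


def top_k_indegree_share(edges, k: int) -> float:
    """Share of all incoming replies received by the k most-replied-to agents."""
    indegree = Counter(v for _, v in _clean(edges))
    total = sum(indegree.values())
    if total == 0:
        return 0.0
    return sum(count for _, count in indegree.most_common(k)) / total


if __name__ == "__main__":
    replies = [("a", "hub"), ("b", "hub"), ("c", "hub"), ("hub", "a")]  # toy reply edges
    print(edge_reciprocity(replies), top_k_indegree_share(replies, 1))   # 0.5 0.75
```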

8. Cross-Layer Synthesis and an NLP Agenda Beyond the Model

The direct corpus is already rich, but it is methodologically lopsided. Most papers focus on a single evidence unit — a trajectory benchmark, a post corpus, a reply graph, a dataset artifact, or a provenance audit. Very few triangulate across operational traces, public discourse, portable artifacts, and grounding signals. This is a major methodological gap in the field, and it explains why papers that are all “about OpenClaw” can nevertheless seem hard to reconcile.

Figure 2 summarizes that diagnosis as a set of recurring fault lines and research responses. The evidence-alignment audit in Table 1 is designed to make this bottleneck directly reviewable: across the four GATE layers, it records how many studies rely on only one evidence family, how many align multiple families, how many explicitly align discourse, operational, and grounding evidence, and how often portable artifacts are linked to another evidence family.
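The evidence-alignment counts described for Table 1 can be derived mechanically once each work is coded with its evidence families. The sketch below assumes short family labels of our own (`operational`, `discourse`, `grounding`, `artifact`) and uses invented example rows; it illustrates the tabulation logic and does not reproduce the values in Table 1.

```python
from collections import defaultdict

# Evidence families named in Section 2; the short labels are ours.
FAMILIES = {"operational", "discourse", "grounding", "artifact"}
CORE_TRIAD = {"discourse", "operational", "grounding"}


def alignment_audit(works) -> dict:
    """Tabulate evidence alignment per GATE layer.

    works: iterable of (gate_layer, evidence_families) pairs.
    """
    table = defaultdict(lambda: {"single_family": 0, "multi_family": 0,
                                 "disc_op_ground": 0, "artifact_linked": 0})
    for layer, families in works:
        fams = set(families) & FAMILIES  # ignore labels outside the four families
        row = table[layer]
        if len(fams) == 1:
            row["single_family"] += 1
        elif len(fams) >= 2:
            row["multi_family"] += 1
        if CORE_TRIAD <= fams:
            row["disc_op_ground"] += 1
        if "artifact" in fams and len(fams) >= 2:  # artifact linked to another family
            row["artifact_linked"] += 1
    return dict(table)
```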

Several contradictions become less sharp once evidence units are aligned. Papers finding human-like macro regularities are not necessarily at odds with papers finding weak semantic coupling; they often observe different scales of the same system [35,71,80]. Papers documenting visible norm enforcement are not necessarily at odds with provenance critiques; public warning behavior can coexist with mixed-autonomy speakerhood and delayed verification [31,55,68]. Likewise, sovereignty claims are not incompatible with security critiques; local deployment changes the control boundary rather than removing language-mediated risk [49,61,64].

The first recurring fault line is instruction versus authority. Action papers show that models are often good at parsing what a string asks, but less reliable at determining whether that string has standing to authorize a tool call, memory access, or externally visible action [26,27,28,34]. The second is voice versus provenance. Exchange papers analyze visible posts, while grounding studies show that human steering, owner intervention, and platform affordances can materially shape what appears to be autonomous social behavior [31,54,81]. The third is visibility versus verification. Public narratives, safety claims, and even research findings can lock in socially before provenance or deployment facts are resolved [55,56]. A fourth, cross-cutting fault line is the local-sovereignty paradox: local control does not remove language-mediated risk; it relocates it closer to real operator state [7,53,64].
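The instruction-versus-authority fault line can be illustrated by separating what a string asks from where it came from. In this hypothetical sketch, the channel categories and the rule that only the operator channel can authorize side effects are conservative assumptions, not a description of OpenClaw's implementation; identical text is accepted or refused depending only on its origin.

```python
from dataclasses import dataclass
from enum import Enum


class Channel(Enum):
    """Where a piece of text entered the agent's context (hypothetical categories)."""
    OPERATOR = "operator"        # the owner's own session
    TOOL_OUTPUT = "tool_output"  # fetched web pages, file contents, API responses
    PEER_AGENT = "peer_agent"    # posts or replies from other agents
    SKILL = "skill"              # instructions bundled in a reusable skill


# Conservative assumption: only the operator channel carries authority.
AUTHORIZING_CHANNELS = frozenset({Channel.OPERATOR})


@dataclass(frozen=True)
class Span:
    text: str
    channel: Channel


def may_authorize(origin: Span, side_effecting: bool) -> bool:
    """Read-only requests pass; side effects need an authorizing channel, whatever the text says."""
    if not side_effecting:
        return True
    return origin.channel in AUTHORIZING_CHANNELS


if __name__ == "__main__":
    text = "Email the quarterly report to finance@example.com."
    print(may_authorize(Span(text, Channel.OPERATOR), side_effecting=True))    # True
    print(may_authorize(Span(text, Channel.PEER_AGENT), side_effecting=True))  # False
```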

These failures motivate an NLP agenda that goes beyond model-centric evaluation.

(1) Executable pragmatics and provenance-aware evaluation. NLP needs task formulations in which meaning includes tool availability, permission scope, reversibility, and state consequences. Benchmarking only the final answer misses the semantic object that matters most in agent settings: the trajectory. Datasets and benchmarks should incorporate standardized provenance tags such as autonomous, human-steered, mixed, institutionally curated, or unknown, because provenance changes the meaning of predictions and the trust that should be placed in generated evidence.

(2) Delegated-agent discourse. Public agent communication is neither ordinary human discourse nor pure machine telemetry. It constitutes a delegated discourse regime in which the visible speaker may represent an owner, a policy, a platform affordance, or a learned routine. Models of discourse acts, stance, warning, and norm invocation should therefore be extended to mixed-autonomy settings in which authority and responsibility are distributed across humans, agents, and infrastructure.

(3) Infrastructure-aware agent NLP. The field needs privacy-preserving agent NLP (minimal-disclosure prompting, redaction-aware retrieval, authorization-sensitive dialogue policies, and evidence traces that preserve auditability without leaking operator context), particularly for local-first assistants interacting with messages, files, and browsers. Multilingual public-agent research is also required: current OpenClaw–Moltbook studies remain overwhelmingly English-first even though early peer-learning observations hint at multilingual interaction [29]. Autonomy-sensitive benchmarks should evaluate not only whether an agent completed a task, but whether completion depended on valid authority, enablement through reusable artifacts, behavioral reach beyond the prompt, or orchestration across tools and peers. Intervention studies are equally important, because current work is still dominated by observation rather than by controlled changes to identity systems, moderation policies, tool permissions, or verification cues. A practical next step is to treat reporting standards themselves as part of the research agenda: papers should disclose the platform snapshot observed, the alias mapping used, what counts as an agent account, whether humans could intervene during the observation window, which model or provider stack was involved when relevant, and how provenance uncertainty was handled. Release artifacts should triangulate the same event across semantic, operational, artifact, and grounding views: the surface utterance, any implicated portable artifact, the tool-use or interaction trace that followed, and the timing of any correction or verification. Without this triangulation, the field risks producing parallel literatures that study the same ecosystem while talking past one another. Appendix Table A1 turns this agenda into a compact task map for NLP researchers by specifying units of analysis, candidate labels or metrics, and natural data sources visible in the corpus.
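The reporting standard in item (3) and the provenance tags proposed in item (1) can be combined into a machine-checkable disclosure record. The field names, types, and completeness rule below are hypothetical; the sketch only shows how missing disclosures could be flagged automatically, with an unreported field distinguished from one explicitly reported as false.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Provenance(Enum):
    """Provenance tags proposed in the agenda."""
    AUTONOMOUS = "autonomous"
    HUMAN_STEERED = "human-steered"
    MIXED = "mixed"
    INSTITUTIONALLY_CURATED = "institutionally curated"
    UNKNOWN = "unknown"


@dataclass
class StudyReport:
    """Disclosure fields from the reporting agenda; None means 'not reported'."""
    platform_snapshot: Optional[str] = None         # which platform state was observed
    alias_mapping: Optional[dict] = None            # e.g., {"Clawdbot": "OpenClaw"}
    agent_account_definition: Optional[str] = None  # what counts as an agent account
    human_intervention_possible: Optional[bool] = None
    model_stack: Optional[str] = None               # required only when relevant
    provenance_handling: Optional[str] = None       # how provenance uncertainty was handled
    default_provenance: Provenance = Provenance.UNKNOWN


REQUIRED = ("platform_snapshot", "alias_mapping", "agent_account_definition",
            "human_intervention_possible", "provenance_handling")


def missing_disclosures(report: StudyReport, model_relevant: bool = False) -> list:
    """List required fields left unreported; an explicit False counts as reported."""
    required = REQUIRED + (("model_stack",) if model_relevant else ())
    return [name for name in required if getattr(report, name) is None]
```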

9. Conclusion

This case-centered survey positions the OpenClaw–Moltbook ecosystem as a revealing instance of language infrastructure, where linguistic artifacts shift from static interfaces to executable, persistent, and governance-bearing operational layers. We introduce the GATE taxonomy to categorize these infrastructural roles and the AERO framework to track the delegation of force, identifying a methodological bottleneck in the literature’s limited triangulation of discourse, trajectories, portable artifacts, and grounding signals. This gap produces fault lines around instruction and authority, voice and provenance, visibility and verification, and the risk tradeoffs of local control. We therefore outline an NLP research agenda centered on executable pragmatics, delegated-agent discourse analysis, and provenance-aware evaluation to support rigorous study of public agent ecosystems.

Limitations

This paper is a survey of a moving target. First, the ecosystem is evolving rapidly. Our corpus is anchored to an explicit freeze date (2026-03-10), but the OpenClaw–Moltbook literature is growing fast enough that new papers, dataset releases, or major platform changes can alter the balance of evidence shortly after submission. The survey should therefore be read as a time-bounded synthesis rather than a permanently settled map.

Second, the evidence base is heterogeneous. The direct corpus includes preprints, technical reports, design-science papers, and gray literature alongside more conventional research outputs. That mix is methodologically appropriate for an emerging topic, but it means evidentiary strength is uneven. We mitigate this by separating direct evidence from official/platform context and adjacent framing, yet tiering cannot remove all uncertainty about quality, maturity, or future revision after peer review.

Third, corpus construction remains judgment-laden. Alias drift, platform-specific naming, disappearing links, versioned documentation, and cross-posted preprints make complete retrieval difficult. Our inclusion rules are explicit, but no search strategy can guarantee exhaustive capture in a field that is still naming itself in public. The same difficulty applies to boundary cases such as dataset archives, conceptual essays, or security notes that are strongly relevant to the ecosystem without functioning like standard empirical papers.

Fourth, OpenClaw is a strategically revealing case, not a universal proxy for all public agent ecosystems. Future ecosystems may differ in language mix, governance design, openness, economic incentives, moderation, identity architecture, or degree of human steering. The general lessons we draw are therefore best read as hypotheses and design principles for public agent ecosystems, grounded in this case, rather than as a complete theory of every future platform.

Fifth, this is not a quantitative meta-analysis. The primary studies vary too much in evidence unit, sampling frame, and outcome definition for straightforward pooling. Our synthesis is qualitative and conceptual. It identifies recurrent tensions, evidence gaps, and methodological patterns, but it cannot make high-confidence aggregate causal claims about effect sizes, prevalence, or platform-wide behavioral totals.

Sixth, the current literature is still substantially English-first. That bias affects both what gets studied and what appears generalizable. Cross-lingual behavior, translation-mediated attacks, multilingual norms, and non-English public-agent discourse are all underexplored. Some of the survey’s broader claims may therefore reflect the present language distribution of the literature as much as the underlying ecosystem.

Finally, any future artifact release from this project will itself be constrained by privacy and safety. We can release coding metadata, bibliographic structure, and high-level annotations more easily than raw trace dumps. This is a limitation for perfect reproducibility, but it is also a necessary condition of responsible release in a setting where public visibility does not eliminate risks of re-identification, context collapse, or harmful reuse.

Ethical Considerations

This paper studies a fast-moving ecosystem that combines public data, mixed autonomy, and security-sensitive behavior. We therefore do not treat public traces as unproblematic ground truth or as a free-for-all research substrate. Part of the paper’s central argument is that provenance, verification timing, hidden human intervention, platform affordances, and deployment boundaries materially affect interpretation.

We follow a minimal-disclosure principle. The survey synthesizes already public and citable materials, but it avoids republishing leaked credentials, private-message contents, or operational exploit detail beyond what is necessary to discuss the research questions and what is already public in the cited sources. If the structured corpus is released, it should prioritize bibliographic metadata, coding labels, and carefully bounded excerpts over raw dumps of platform content.

We also avoid anthropomorphic overclaiming. Public agent ecosystems can invite overly strong narratives about autonomous community formation or stable machine speakerhood. Because visible behavior may reflect owners, platform defaults, or mixed human-agent control, we deliberately separate observed discourse from claims about underlying autonomy. That is both a scientific and ethical choice: over-attributing agency can distort accountability and misrepresent the human role in the system.

The survey also has dual-use implications. Work on prompt injection, skill supply chains, guardrail bypass, browser exposure, and identity flows can inform defenses, but it can also be misused. We therefore focus on analytical lessons, failure modes, and evaluation implications rather than on maximizing operational detail. Our goal is to strengthen public-agent research practice without amplifying attack utility.

Finally, survey papers shape field narratives. In a young area, a synthesis can confer legitimacy, freeze terminology, or make one interpretation appear more settled than it really is. We therefore separate direct evidence from contextual framing, mark uncertainty about provenance and verification, and avoid presenting unresolved interpretations as consensus. Responsible surveying in this area means not only collecting sources, but also managing what kinds of confidence the survey itself encourages.

Acknowledgments

This research is supported by the RIE2025 Industry Alignment Fund (Award I2301E0026) and the Alibaba-NTU Global e-Sustainability CorpLab.


Appendix A PRISMA-Inspired Review Workflow

Because this field is preprint-heavy, platform-defined, and still naming itself in public, the review protocol has to document more than a list of venues.

Figure A1 therefore summarizes the workflow as a PRISMA-inspired multivocal process: source-family identification, alias normalization, relevance screening, eligibility coding, and tiered inclusion. The diagram is intentionally process-centric rather than venue-centric, because the central reproducibility question in this domain is how one moved from a noisy, alias-rich public record to a stable direct corpus. The companion tables below make the same process auditable: Table A2 reports exact stage-wise counts and reason-coded exclusions, while Table A3 logs the closest boundary cases and merged records.

Figure A1.

PRISMA-inspired multivocal review workflow. Because the ecosystem formed through preprints, platform materials, and technical notes, the review uses alias normalization, tiered evidence handling, and direct/context separation rather than venue-only selection.

Table A2 gives the auditable numeric flow and reason-coded exclusions.


Appendix B Auditable Screening Ledger and Borderline Exclusions

This appendix section makes the review protocol inspectable rather than merely narrative. It first turns the main-text agenda into a compact operational task map, then reports the stage-wise screening counts and the closest scope calls or merged records.

Appendix B.1 Operational NLP Task Map

To keep the research agenda concrete, Table A1 translates the main-text agenda into candidate NLP tasks, units of analysis, labels or metrics, and plausible data sources.

Table A1.

Operational NLP task map derived from the survey. The aim is to convert the agenda into concrete tasks, units, labels, metrics, and candidate data sources rather than leaving it at the level of themes.


| Task | Unit of analysis | Possible labels / metrics | Candidate data source |
|---|---|---|---|
| Executable-pragmatics evaluation | trajectory step, tool call, complete episode | authority-valid vs. invalid trigger, permission-scope match, reversibility, unsafe state change, repair cost, trajectory success under policy | trajectory audits, PASB-style scenarios, governed tool-call traces [26,27,34,63] |
| Delegated discourse-act modeling | post, reply, thread | warning, request, self-presentation, norm invocation, uptake, stance, broadcast vs. sustained dialogue depth | Moltbook posts and reply chains, norm-enforcement responses, discourse-structure corpora [35,68,73,80] |
| Provenance-aware autonomy labeling | post, account, event, verification episode | autonomous, human-steered, mixed, institutionally curated, unknown; verification lag; provenance confidence | provenance audits, oversight studies, delayed verification cases, developer metadata [6,31,54,55] |
| Privacy-sensitive logging and redaction | prompt span, retrieved chunk, browser step, tool log segment | secret-bearing span, personal-context leak, policy-compliant redaction, audit sufficiency, minimal-disclosure score | PASB scenarios, browser/runtime traces, local-first assistant settings [7,27,49,53] |
| Cross-lingual norm and attack transfer | paired post, translated thread, skill/tutorial artifact | translation drift, cross-lingual norm alignment, attack transfer success, multilingual provenance ambiguity | multilingual peer-learning streams, translated skills/tutorials, public skill archives [29,37,66] |
| Autonomy-sensitive benchmark suites | complete episode, intervention event, platform snapshot | task success with valid authority, orchestration depth, reach beyond current turn, post-hoc correction cost, artifact reuse dependence | joined discourse + trajectory + artifact corpora released with source-level metadata and evidence-alignment fields (Table A10) |
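The provenance-aware autonomy labeling task can be sketched as a small record type. The field names below are hypothetical. The sketch assumes each labeled event carries two ISO-8601 timestamps, one for when it became public and one for when a verification signal first appeared, and it takes verification lag as the difference between the two.

```python
from dataclasses import dataclass
from datetime import datetime
from enum import Enum
from typing import Optional


class AutonomyLabel(Enum):
    """Label set from the provenance-aware autonomy labeling row of Table A1."""
    AUTONOMOUS = "autonomous"
    HUMAN_STEERED = "human-steered"
    MIXED = "mixed"
    INSTITUTIONALLY_CURATED = "institutionally curated"
    UNKNOWN = "unknown"


@dataclass
class LabeledEvent:
    event_id: str                       # hypothetical identifier
    observed_at: str                    # ISO-8601 time the event became public
    verified_at: Optional[str] = None   # ISO-8601 time of the first verification signal
    label: AutonomyLabel = AutonomyLabel.UNKNOWN
    provenance_confidence: float = 0.0  # annotator confidence in [0, 1]

    def __post_init__(self) -> None:
        if not 0.0 <= self.provenance_confidence <= 1.0:
            raise ValueError("provenance_confidence must lie in [0, 1]")

    def verification_lag_hours(self) -> Optional[float]:
        """Hours from public appearance to verification; None if never verified."""
        if self.verified_at is None:
            return None
        delta = datetime.fromisoformat(self.verified_at) - datetime.fromisoformat(self.observed_at)
        return delta.total_seconds() / 3600


# Invented timestamps for illustration.
event = LabeledEvent("evt-001", "2026-02-01T10:00:00", "2026-02-02T16:30:00",
                     AutonomyLabel.MIXED, 0.6)
print(event.verification_lag_hours())  # 30.5
```

An event that was never verified returns `None` rather than zero. This keeps "no verification yet" distinct from "verified immediately."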

Appendix B.2 Auditable Screening Ledger

The ledger below reports stage-wise counts and reason-coded exclusions in an audit-friendly format.

Table A2.

Auditable screening ledger for the multivocal review. Counts are reported as whole numbers.


| Stage | Count | How records entered or left | Audit note |
|---|---|---|---|
| Alias-based scholarly identification | 44 | scholar search over OpenClaw / Clawdbot / Clawd / Moltbot / Moltbook / ClawdLab and thematic terms | retained candidate papers, reports, and technical notes |
| Backward / forward snowballing | 15 | references and citations from early February and March work | used to recover late-linked direct and adjacent sources |
| Official / platform materials | 16 | documentation, blogs, repositories, developer sites, dataset landing pages | screened separately because used as contextual or grounding evidence |
| Project-layer repositories | 10 | skill hubs, deployment packages, connectors, training / attestation extensions | retained only if citable and ecosystem-relevant |
| Records identified before de-duplication | 85 | union of all source families before version merging | counts mirrored or superseded records separately |
| Records after de-duplication and version merging | 83 | merged obvious duplicates, superseded versions, and duplicate bibliographic records | keeps one canonical record per source for screening |
| Title / abstract / metadata screened | 83 | quick scope screen for ecosystem centrality and retrievability | excludes lightweight commentary and clearly indirect mentions |
| Excluded at title / abstract / metadata stage | 2 | removed before full-text coding | reasons recorded in screening log |
| Full texts assessed for eligibility | 81 | sources read for direct/context role, evidence unit, and tier eligibility | basis for inclusion/exclusion and coding |
| Excluded: not ecosystem-primary object (E1) | 1 | ecosystem appears only rhetorically or peripherally | not used for direct synthesis |
| Excluded: lightweight commentary / rhetorical mention (E2) | 0 | commentary lacks substantive empirical, technical, or methodological content | not strong enough for multivocal synthesis |
| Excluded: insufficient retrievable technical detail (E3) | 0 | source could not support auditable claims because evidence or versioning was too thin | may be revisited in future updates |
| Excluded: duplicate or superseded version (E4) | 0 | later or cleaner version merged under canonical record | protects against double counting |
| Excluded: unavailable or unstable source (E5) | 0 | disappeared link, unstable landing page, or insufficiently citable archival state | logged but not cited |
| Excluded: other scope mismatch (E6) | 1 | adjacent but outside survey boundary | listed in borderline log when close to inclusion threshold |
| Included in direct qualitative synthesis | 38 | ecosystem-specific papers and reports | core direct corpus |
| Included as official / project / adjacent context | 41 | official/platform sources, project repositories, adjacent framing and survey sources | contextual layer kept separate from direct evidence |
| Paper-wide source inventory | 79 | direct + contextual included records | final included source inventory at freeze date |
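Because every row of the ledger is a count, the flow can be re-derived mechanically. The sketch below transcribes the figures from Table A2 and checks that each stage follows from the one before it. The variable names are informal shorthand. Reading the drop from 85 to 83 as the two duplicate merges listed in Table A3 is our interpretation.

```python
# Counts transcribed from Table A2; keys are informal shorthand.
identified = {"scholarly": 44, "snowballing": 15, "official": 16, "project": 10}
merged_duplicates = 2   # 85 -> 83; matches the two merges logged in Table A3
excluded_screening = 2  # title / abstract / metadata stage
full_text_exclusions = {"E1": 1, "E2": 0, "E3": 0, "E4": 0, "E5": 0, "E6": 1}
included = {"direct": 38, "context": 41}


def ledger_flow() -> dict:
    """Recompute each stage total from the stage before it."""
    before_dedup = sum(identified.values())
    after_dedup = before_dedup - merged_duplicates
    full_text = after_dedup - excluded_screening
    final = full_text - sum(full_text_exclusions.values())
    return {
        "before_dedup": before_dedup,
        "after_dedup": after_dedup,
        "full_text": full_text,
        "final": final,
    }


flow = ledger_flow()
# The recomputed final count should equal the reported inventory.
assert flow["final"] == sum(included.values()) == 79
print(flow)  # {'before_dedup': 85, 'after_dedup': 83, 'full_text': 81, 'final': 79}
```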

Appendix B.3 Borderline or Merged Records

Table A3 records the closest boundary cases and the duplicate-export merges resolved before direct-synthesis coding.

Table A3.

Borderline or merged records from the working bibliography. Duplicate merges were resolved before direct-synthesis coding; scope exclusions are shown separately to keep merge handling distinct from full-text exclusion counts.


| Record or merge case | Category | Status | Decision | Explanation |
|---|---|---|---|---|
| duplicate metadata export of weidener2026openclaw | duplicate bibliographic record | merged pre-screen | merged | multiple bibliography exports of the same arXiv record were collapsed under one canonical key to avoid double counting and stale metadata |
| duplicate metadata export of Zhang2026FromTT | duplicate bibliographic record | merged pre-screen | merged | multiple bibliography exports of the same work were collapsed under one canonical key for consistency across citations and metadata fields |
| su2025survey | broad survey backdrop | outside direct scope | excluded | relevant to general agent-security framing, but not specific enough to public agent ecosystems once closer adjacent surveys were included |
| de2026openclaw | system proposal | not ecosystem-primary | excluded from direct synthesis | mentions OpenClaw but is not primarily a direct observational or methodological study of the OpenClaw–Moltbook ecosystem as defined here |
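The duplicate-export merges above amount to collapsing records that share a canonical key. Here is a minimal sketch. It assumes each exported record carries a citation key and, where available, an arXiv identifier. Preferring the version-less arXiv identifier and falling back to a normalized title is our illustrative choice, not a documented step of the protocol.

```python
import re
from typing import Iterable


def canonical_key(record: dict) -> str:
    """Prefer a version-less arXiv id; otherwise fall back to a normalized title."""
    arxiv_id = record.get("arxiv_id")
    if arxiv_id:
        return "arxiv:" + re.sub(r"v\d+$", "", arxiv_id.strip())
    title = record.get("title", "")
    return "title:" + re.sub(r"[^a-z0-9]+", " ", title.lower()).strip()


def merge_exports(records: Iterable[dict]) -> dict:
    """Keep the first record per canonical key and remember which citation keys were merged."""
    merged: dict = {}
    for rec in records:
        key = canonical_key(rec)
        if key not in merged:
            merged[key] = {**rec, "merged_keys": [rec["citation_key"]]}
        else:
            merged[key]["merged_keys"].append(rec["citation_key"])
    return merged


# Placeholder identifiers and keys, not real bibliography entries.
exports = [
    {"citation_key": "paper_a", "arxiv_id": "0000.00001v1"},
    {"citation_key": "paper_a_dup", "arxiv_id": "0000.00001v2"},
    {"citation_key": "paper_b", "title": "Some Other Work"},
]
print(merge_exports(exports)["arxiv:0000.00001"]["merged_keys"])  # ['paper_a', 'paper_a_dup']
```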

Appendix C Search Details, Coding Scheme, and Source Tiers

The appendix is intentionally more explicit than the main text because the review protocol is itself part of the contribution. In fast-moving public-agent research, the key reproducibility question is not only which sources were cited, but how sources were classified, which ones grounded direct claims, and where uncertainty about authorship, deployment, or naming drift entered the interpretation.

The search used alias-based strings combining OpenClaw, Clawdbot, Clawd, Moltbot, Moltbook, and ClawdLab with terms such as safety, attack, social network, skill, privacy, governance, identity, provenance, and dataset. Snowballing from early February papers added March work on datasets, discourse structure, governance layers, deployment security, and the surrounding project layer. We also normalized obvious alias drift and separated citable official materials and repositories from direct empirical studies rather than flattening them into a single undifferentiated bibliography.
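The alias-by-topic strategy described above can be expressed as a Boolean query generator. The text does not give the exact query strings, so the sketch below assumes a generic `OR`/`AND` syntax with quoted phrases. Real scholarly indexes differ in syntax and length limits. The term lists repeat only the aliases and example topics named above.

```python
ALIASES = ["OpenClaw", "Clawdbot", "Clawd", "Moltbot", "Moltbook", "ClawdLab"]
TOPICS = ["safety", "attack", "social network", "skill", "privacy",
          "governance", "identity", "provenance", "dataset"]


def quote(term: str) -> str:
    """Quote multi-word terms so an index treats them as phrases."""
    return f'"{term}"' if " " in term else term


def build_query(aliases: list[str], topics: list[str]) -> str:
    """Require at least one alias and at least one topic: (alias OR ...) AND (topic OR ...)."""
    alias_clause = " OR ".join(quote(a) for a in aliases)
    topic_clause = " OR ".join(quote(t) for t in topics)
    return f"({alias_clause}) AND ({topic_clause})"


print(build_query(ALIASES[:2], ["safety", "social network"]))
# (OpenClaw OR Clawdbot) AND (safety OR "social network")
```

A single combined query is only one option. Issuing one string per alias makes per-alias hit counts easier to log, at the cost of more de-duplication afterwards.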

Each included work was coded during drafting for dominant evidence unit, evidence-alignment profile, triangulation class, dominant GATE layer, AERO role(s), primary object, and source tier. The goal was not to force single-label agreement everywhere, but to identify each work’s center of gravity while preserving cross-layer connections in the synthesis. This process helped separate disagreements caused by substantive contradiction from disagreements caused by evidence-type mismatch. The appendix reports the working codebook and source-tier scheme used for the synthesis (Tables A6 and A7).

Table A4.

Alias mapping used for consistent exposition.


| Alias | Use in this survey |
|---|---|
| Clawd | Early project lineage in official materials. |
| Clawdbot | Early public/project alias retained in some papers. |
| Moltbot | Intermediate alias retained in some discourse and technical notes. |
| OpenClaw | Canonical runtime/framework name used in the main exposition. |
| Moltbook | Public agent-native social network around the runtime. |
| ClawdLab | Downstream design response centered on autonomous research. |
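Alias normalization has a practical trap: "Clawd" is a prefix of both "Clawdbot" and "ClawdLab," and "Moltbot" and "Moltbook" are easy to conflate. The sketch below maps the runtime-lineage aliases onto OpenClaw and leaves Moltbook and ClawdLab untouched, following the table above. Treating Clawd as part of the OpenClaw lineage is our reading of the table. The case-sensitive, whole-word matching rule is an assumption.

```python
import re

# Runtime-lineage aliases mapped to the canonical name (our reading of Table A4).
# Moltbook and ClawdLab name distinct objects, so they are deliberately absent.
CANONICAL = {"Clawdbot": "OpenClaw", "Moltbot": "OpenClaw", "Clawd": "OpenClaw"}

# Whole-word matching keeps "Clawd" from firing inside "Clawdbot" or "ClawdLab";
# longest-first ordering is a second safeguard if the word boundaries are ever relaxed.
_ALIAS_PATTERN = re.compile(
    r"\b("
    + "|".join(sorted((re.escape(a) for a in CANONICAL), key=len, reverse=True))
    + r")\b"
)


def normalize_aliases(text: str) -> str:
    """Rewrite lineage aliases to OpenClaw; matching is case-sensitive."""
    return _ALIAS_PATTERN.sub(lambda m: CANONICAL[m.group(1)], text)


print(normalize_aliases("Clawdbot (later Moltbot) posts on Moltbook; ClawdLab cites Clawd."))
# OpenClaw (later OpenClaw) posts on Moltbook; ClawdLab cites OpenClaw.
```

A corpus pipeline would also keep the original surface form, as the alias_mapping field in Table A10 does. That way normalization never erases evidence of naming drift.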

Table A5.

Dominant-layer coding of the direct corpus (38 works). Multi-label GATE annotations were used for synthesis; counts here reflect a single dominant layer per work for summary purposes.


| Dominant GATE layer | n | Dominant evidence units | Recurring blind spots |
|---|---|---|---|
| Grounding | 8 | provenance audits, oversight discourse, security models, identity/auth artifacts | attribution, verification timing, privacy boundary definition, responsibility assignment |
| Action | 9 | trajectories, tool logs, incidents, deployment configs | permission grounding, reversibility, repair semantics, action-state attribution |
| Transfer | 5 | skills, tutorials, personas, datasets, research workflows | artifact provenance, downstream reuse, contamination, evidence portability |
| Exchange | 16 | posts, replies, temporal traces, reply graphs | shallow dialogue, ritualized signaling, audience effects, mixed-autonomy labeling |
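Table A5 reduces multi-label GATE annotations to a single dominant layer per work, but the paper does not specify the collapsing rule. The sketch below makes a hypothetical assumption: each annotation carries a salience weight, and ties are broken by a fixed layer order. The example works and weights are invented. Only the final check uses the reported Table A5 counts.

```python
from collections import Counter

# Assumed tie-break order; the paper does not state one.
GATE_ORDER = ["Grounding", "Action", "Transfer", "Exchange"]


def dominant_layer(weights: dict[str, float]) -> str:
    """Return the highest-weighted GATE layer; ties go to the earlier layer in GATE_ORDER."""
    unknown = set(weights) - set(GATE_ORDER)
    if unknown:
        raise ValueError(f"not a GATE layer: {sorted(unknown)}")
    return max(weights, key=lambda layer: (weights[layer], -GATE_ORDER.index(layer)))


def tally(annotations: dict[str, dict[str, float]]) -> Counter:
    """Count works per dominant layer, mirroring the n column of Table A5."""
    return Counter(dominant_layer(w) for w in annotations.values())


# Invented multi-label annotations for three hypothetical works.
example = {
    "work_a": {"Exchange": 0.7, "Grounding": 0.3},
    "work_b": {"Action": 0.5, "Grounding": 0.5},  # tie resolved to Grounding
    "work_c": {"Transfer": 0.6, "Exchange": 0.4},
}
print(tally(example))

# The reported Table A5 counts should cover the whole direct corpus.
TABLE_A5 = {"Grounding": 8, "Action": 9, "Transfer": 5, "Exchange": 16}
assert sum(TABLE_A5.values()) == 38
```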

Table A6.

Working codebook used to map the direct corpus. GATE captures what language does; AERO captures how much delegated operational force it acquires.


| Axis | Code | Working definition | Typical evidence signals in the corpus |
|---|---|---|---|
| GATE | Grounding | what makes language legitimate, attributable, verifiable, permission-bearing, or responsibility-bearing | provenance audits, verification timing, identity/auth artifacts, policy docs, incident reports |
| GATE | Action | language that directly changes system state or action selection | trajectories, tool calls, repair loops, incidents, deployment configs |
| GATE | Transfer | language that packages portable capability, evidence, or reusable workflow knowledge | skills, tutorials, personas, datasets, archives, workflow artifacts |
| GATE | Exchange | language as public social traffic among agents and observers | posts, replies, reply graphs, temporal traces, discourse-structure signals |
| AERO | Authority | legitimacy, permission, or speakerhood needed for state-changing action | auth notes, operator boundaries, verification status, provenance claims, trust policies |
| AERO | Enablement | how language becomes reusable capability through tools, memory, and artifacts | typed tools, skill files, personas, datasets, reusable procedures, peer-learning artifacts |
| AERO | Reach | how far behavior extends beyond the immediately prompted turn | persistent memory effects, delayed consequences, self-starting activity, long-horizon trajectories |
| AERO | Orchestration | how behavior coordinates across tools, services, peers, or oversight layers | browser/runtime integration, multi-tool flows, peer coordination, guardrails, governance stacks |

Table A7.

Source tiers used in the review protocol.


| Tier | Role in survey | Representative sources | Use in synthesis |
|---|---|---|---|
| Tier 1 | direct ecosystem evidence | Chen et al. [26], Wang et al. [27], Chen et al. [29], Holtz [30], Mukherjee et al. [32], Dubé et al. [35], Manik and Wang [68], Jiang et al. [72] | primary basis for claims about trajectories, discourse, portable artifacts, privacy exposure, grounding, and observed dynamics |
| Tier 2 | official, technical, and project context | Steinberger [1], OpenClaw [3,4], Moltbook [6], OpenClaw [36,38], Wang et al. [42], Moltbook [44], Wiz Research [82], Gautam and Riegler [84] | informs platform assumptions, trust boundaries, deployment posture, skill distribution, connectors, packaging, identity mechanisms, archival resources, and grounding interpretation |
| Tier 3 | adjacent and survey-style framing | Wang et al. [16], Cheng et al. [17], Yan et al. [21], Yu et al. [23], Zhang et al. [25], Weidener et al. [33] | used cautiously to position the survey, connect to broader autonomy debates, and situate research gaps |

Appendix D Positioning Within Broader Survey Landscape

Because the paper positions itself as a case-centered survey rather than a general review, its boundary with nearby surveys should be stated explicitly.

Table A8 records the closest survey traditions and how this paper differs from each. The aim is not to diminish those works, but to mark precisely where this paper's contribution lies.

Table A8.

Positioning against adjacent survey literature. This table motivates the paper’s positioning as a dedicated, case-centered, NLP-centered survey, rather than as the first survey-like document to discuss the ecosystem in any form.


| Survey | Primary scope | What it covers especially well | Gap relative to this paper |
|---|---|---|---|
| Wang et al. [16] | LLM-based autonomous agents broadly | agent construction, applications, evaluation | not ecosystem-specific and not centered on public agent traces |
| Cheng et al. [17] | intelligent agents across single- and multi-agent settings | definitions, methods, core components, prospects | broad agent survey rather than a focused public-ecosystem synthesis |
| Guo et al. [18] | LLM-based multi-agent systems | progress, challenges, benchmarks, communication, application domains | not anchored in one public ecosystem with observable mixed-autonomy traces |
| Chen et al. [19] | recent advances in LLM-MAS | applications, frontiers, broad systems-level organization | emphasizes application frontiers more than provenance-rich ecosystem analysis |
| Tran et al. [20] | collaboration mechanisms in LLM-based MAS | actors, structures, strategies, protocols, coordination | collaboration-centric rather than language-infrastructure-centric |
| Yan et al. [21] | communication-centric LLM-MAS survey | communication architectures, paradigms, security and scale challenges | not tied to one naturally occurring public agent network |
| Zou et al. [22] | human-agent collaboration systems | human feedback, interaction patterns, orchestration, benchmarks | human-in-the-loop focus rather than agent-only public ecosystems |
| Yu et al. [23] | trustworthy agents and multi-agent systems | attacks, defenses, evaluation, modular trust framework | trustworthiness is central, but not the ecology of public traces, evidence transfer, and provenance disputes |
| Gao et al. [24] | self-evolving agents | what/when/how to evolve, adaptation stages, benchmarks | focuses on continual evolution rather than public ecosystem observation |
| Zhang et al. [25] | hierarchical autonomy security | layered risks from cognitive to collective autonomy | security-forward autonomy framing, not a dedicated OpenClaw/Moltbook survey |
| Weidener et al. [33] | OpenClaw–Moltbook lessons plus ClawdLab design | the closest ecosystem-specific precursor; embeds a multivocal review in a design-science response | review is embedded inside a platform proposal, whereas this paper’s primary contribution is the literature synthesis itself from an NLP-centered perspective |

Appendix E Review Questions and Extraction Form

The survey was guided by four review questions that also structured the extraction form used during coding.

Table A9.

Review questions guiding corpus coding and synthesis.


| RQ | Question | How it structures the review |
|---|---|---|
| RQ1 | What roles does language play in public agent ecosystems? | motivates the GATE taxonomy and the layer-by-layer synthesis |
| RQ2 | How does delegated operational force accumulate across artifacts, tools, and social settings? | motivates the AERO layer and the shift from prompting to delegated autonomy |
| RQ3 | Where do the main empirical and methodological disagreements actually arise? | motivates the focus on recurring fault lines rather than forced consensus |
| RQ4 | What should NLP evaluate, report, and build next in this area? | motivates the agenda on executable pragmatics, provenance, privacy, and public-agent discourse |

Appendix F Release-Oriented Corpus Metadata and Reporting Standard

To make the corpus contribution operational rather than rhetorical, Table A10 lists the metadata fields that the release should expose, and Table A11 lists the reporting fields that future public-agent papers should ideally disclose.

Table A10.

Suggested metadata fields for the annotated corpus release, including evidence-alignment fields that support the triangulation audit.


| Field | Purpose | Illustrative value |
|---|---|---|
| canonical_id | stable identifier for each included source | OCMB-2026-017 |
| citation_key | BibTeX key used in the paper | chen2026trajectory |
| title | human-readable source name | A Trajectory Audit of OpenClaw |
| first_public_date | supports freeze-date reasoning and temporal analysis | 2026-02-15 |
| source_tier | distinguishes direct evidence from context | Tier 1 |
| decision_log_ref | links the included source back to screening and adjudication notes | SCREEN-042 |
| primary_object | indicates runtime, network, extension, or grounding focus | social network |
| evidence_unit | identifies the central analytic object | reply graph |
| evidence_alignment_profile | records which evidence families are jointly analyzed for the source | discourse + grounding |
| triangulation_class | supports quantitative audit of weak evidence alignment | single-family / 2+ families / D+O+G / artifact-linked |
| dominant_gate_layer | summary layer used in corpus profiling | Exchange |
| aero_roles | cross-cutting autonomy roles activated by the study | Enablement, Orchestration |
| alias_mapping | records whether the source uses Clawd, Clawdbot, Moltbot, etc. | OpenClaw / Clawdbot |
| provenance_caveat | flags hidden-human or verification uncertainty | mixed-autonomy uncertainty noted |
| notes | free-text synthesis memo for later reuse | compares visible speech with underlying control |
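The fields in Table A10 map naturally onto a typed record with light validation. In the sketch below, the controlled vocabularies come from Tables A6, A7, and A10, and the freeze date comes from the paper. The validation rules themselves are assumptions about how a release might enforce the schema, not a description of the released corpus. So is the choice of "2+ families" as the example triangulation class.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import date

FREEZE_DATE = date(2026, 3, 10)  # corpus freeze date stated in the paper
SOURCE_TIERS = {"Tier 1", "Tier 2", "Tier 3"}
GATE_LAYERS = {"Grounding", "Action", "Transfer", "Exchange"}
AERO_ROLES = {"Authority", "Enablement", "Reach", "Orchestration"}
TRIANGULATION_CLASSES = {"single-family", "2+ families", "D+O+G", "artifact-linked"}


@dataclass
class CorpusRecord:
    canonical_id: str
    citation_key: str
    title: str
    first_public_date: str  # ISO date string, e.g. "2026-02-15"
    source_tier: str
    decision_log_ref: str
    primary_object: str
    evidence_unit: str
    evidence_alignment_profile: str
    triangulation_class: str
    dominant_gate_layer: str
    aero_roles: list[str] = field(default_factory=list)
    alias_mapping: str = ""
    provenance_caveat: str = ""
    notes: str = ""

    def __post_init__(self) -> None:
        # Enforce the controlled vocabularies from the codebook and tier scheme.
        if self.source_tier not in SOURCE_TIERS:
            raise ValueError(f"unknown source_tier: {self.source_tier!r}")
        if self.dominant_gate_layer not in GATE_LAYERS:
            raise ValueError(f"unknown GATE layer: {self.dominant_gate_layer!r}")
        if self.triangulation_class not in TRIANGULATION_CLASSES:
            raise ValueError(f"unknown triangulation_class: {self.triangulation_class!r}")
        unknown_roles = set(self.aero_roles) - AERO_ROLES
        if unknown_roles:
            raise ValueError(f"unknown AERO roles: {sorted(unknown_roles)}")
        # date.fromisoformat raises ValueError on malformed dates.
        if date.fromisoformat(self.first_public_date) > FREEZE_DATE:
            raise ValueError("first_public_date falls after the corpus freeze date")


# Column-wise illustrative values from Table A10; they do not describe one real source.
record = CorpusRecord(
    canonical_id="OCMB-2026-017",
    citation_key="chen2026trajectory",
    title="A Trajectory Audit of OpenClaw",
    first_public_date="2026-02-15",
    source_tier="Tier 1",
    decision_log_ref="SCREEN-042",
    primary_object="social network",
    evidence_unit="reply graph",
    evidence_alignment_profile="discourse + grounding",
    triangulation_class="2+ families",
    dominant_gate_layer="Exchange",
    aero_roles=["Enablement", "Orchestration"],
    alias_mapping="OpenClaw / Clawdbot",
    provenance_caveat="mixed-autonomy uncertainty noted",
    notes="compares visible speech with underlying control",
)
print(json.dumps(asdict(record), indent=2))
```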

Table A11.

Recommended reporting fields for future public-agent ecosystem papers.


| Recommended disclosure field | Why it matters | What a strong paper should report |
|---|---|---|
| platform snapshot and time window | platforms change quickly; findings are version-sensitive | observation window, freeze date, major product/version changes during collection |
| alias mapping and search terms | naming drift affects corpus construction and comparability | which aliases were used, normalized, or excluded |
| unit of analysis | claims differ depending on whether the unit is a post, thread, graph, artifact, or trajectory | explicit evidence unit and justification |
| autonomy / provenance label | visible text can be owner-steered, mixed, or autonomous | autonomous, human-steered, mixed, curated, or unknown labels where possible |
| identity / verification timing | public interpretation may precede authentication | when verification signals appeared relative to the observed event |
| model / provider / runtime stack | behavior depends on the stack, not only the prompt | models, providers, typed tools, memory components, browser/runtime configuration |
| privacy and release constraints | public traces can still expose people or private state | redaction decisions, license/terms considerations, and release limits |
| evidence alignment availability | triangulation is the main methodological gap | whether surface, operational, artifact, and grounding evidence were jointly available for the same event |
| coding protocol transparency | interpretive labels need a clear audit trail | coding rulebook, decision log, and any quality-control subset checks used during corpus construction |
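Table A11 can also serve as a machine-checkable disclosure checklist. Here is a minimal sketch. It assumes a paper's disclosures are gathered into a dictionary keyed by hypothetical snake_case versions of the field names, and it lists the recommended fields that are missing or blank, in table order. The example disclosures are invented.

```python
RECOMMENDED_FIELDS = [
    "platform_snapshot_and_time_window",
    "alias_mapping_and_search_terms",
    "unit_of_analysis",
    "autonomy_provenance_label",
    "identity_verification_timing",
    "model_provider_runtime_stack",
    "privacy_and_release_constraints",
    "evidence_alignment_availability",
    "coding_protocol_transparency",
]


def missing_disclosures(disclosures: dict[str, str]) -> list[str]:
    """Return recommended fields that are absent or whitespace-only, in table order."""
    return [f for f in RECOMMENDED_FIELDS if not disclosures.get(f, "").strip()]


# Invented disclosures for a hypothetical paper.
paper = {
    "platform_snapshot_and_time_window": "2026-02-01 to 2026-03-10",
    "unit_of_analysis": "reply thread",
    "identity_verification_timing": "   ",  # present but blank, so still reported
}
for name in missing_disclosures(paper):
    print("missing:", name)
```

Treating blank values as missing is a deliberate choice. A field that is present but empty usually signals an unfinished disclosure rather than a real answer.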

Appendix G Extended Corpus by Layer

Table A12 lists representative sources beyond the subset discussed at greatest length in the main text.

Table A12.

Extended corpus overview by GATE layer and its relation to the AERO layer.


| Layer | Subfocus | Representative works | Relation to AERO layer |
|---|---|---|---|
| Grounding | provenance, privacy, oversight, identity, delayed verification | Moltbook [6], Li [31], OpenClaw [49], Shi and DiFranzo [54,55], Zerhoudi et al. [56], Wiz Research [82] | as authority and orchestration grow, attribution, privacy boundaries, and responsibility become harder rather than easier |
| Action | trajectory risk, local-agent attacks, deployment security | Chen et al. [26], Wang et al. [27], Dong et al. [28], Ge [34], van Beek and Mezo [61], Jin et al. [63], Zhan et al. [64], Du et al. [65] | reach and orchestration expand the space of possible side effects, while authority determines who may legitimately trigger them |
| Transfer | skills, peer learning, datasets, research workflows | Chen et al. [29], Mukherjee et al. [32], Weidener et al. [33], Chen et al. [66], Amin et al. [67], Jiang et al. [83], Liang et al. [86] | enablement becomes portable when knowledge is packaged into artifacts that support extended reach and reuse |
| Exchange | discourse, norms, participation, social graphs, sociality critiques | Holtz [30], Dubé et al. [35], Manik and Wang [68], Lin et al. [69], Eziz [70], De Marzo and Garcia [71], Jiang et al. [72], Li et al. [73], Feng et al. [74], Hou and Ji [77], Shekkizhar and Earle [80], Zhang et al. [81] | public visibility can reward reach and cascade formation even when deeper interaction remains limited |

Appendix H Comprehensive Corpus Tables

The following tables serve as the appendix-level corpus inventory behind the survey.

Table A13.

Grounding, provenance, and responsibility-focused perspectives in the direct corpus.


| Work | Unit | Core contribution | GATE / NLP relevance |
|---|---|---|---|
| Moltbook Illusion (31) | provenance analysis | separates human influence from apparent emergence | Grounding; attribution changes what language data means |
| Human Control Is the Anchor (54) | oversight analysis | examines early divergence of oversight in agent communities | Grounding; visible text and real control can diverge |
| Delayed Verification (55) | discourse timing | shows how narrative lock-in forms before verification | Grounding; timing matters for benchmark trust |
| Behind the Prompt (56) | retrieval framing | studies hidden-user intent when agents act as proxies | Grounding/Transfer; relevant to IR and proxy-mediated dialogue |
| Conversation to Command Execution (57) | threat modeling | contrasts conversational assistants and command-executing agents | Grounding; clarifies why OpenClaw-style agents change risk categories |
| Devil Behind Moltbook (58) | safety critique | argues that safety claims can vanish in self-evolving societies | Grounding; skeptical lens on emergent norms and alignment |
| Sorcerer’s Apprentice (59) | conceptual analysis | distinguishes tool-agents from stronger teleological claims | Grounding; useful restraint against over-anthropomorphic reading |
| Panacea Position (60) | methodological critique | cautions against overclaiming from agent-society observations | Grounding/Exchange; useful counterweight to strong emergence narratives |

Table A14.

Action, authority, and system-safety studies in the direct corpus.


| Work | Unit | Core contribution | GATE / NLP relevance |
|---|---|---|---|
| Trajectory Audit (26) | trajectories | full-trajectory safety auditing for Clawdbot/OpenClaw | Action; treats semantics as action traces rather than text strings |
| PASB (27) | end-to-end scenarios | benchmarks attacks on personalized local agents | Action; long-horizon security and memory-sensitive evaluation |
| Clawdrain (28) | skill + tool chain | token-exhaustion attack via tool-calling chains | Action; repair language becomes part of the threat model |
| LGA (34) | governed tool calls | layered governance architecture evaluated on OpenClaw | Action/Grounding; shifts focus from text safety to execution safety |
| Hardened Shell (61) | architecture | safety/sovereignty critique of runtime design | Action/Grounding; argues for architectural rather than prompt-only defenses |
| Formal Skill Security (62) | skills | formal analysis of agent-skill supply chains | Action/Transfer; language-wrapped skills become security-critical artifacts |
| Proof-of-Guardrail (63) | runtime assurance | attestation for guarded agent runs | Grounding/Action; links textual safety claims to verifiable execution |
| Edge Attack Surface (64) | deployment architecture | systems-level analysis of boundary failures in edge agents | Action/Grounding; shows safety depends on deployment topology |
| ClawMobile (65) | mobile architecture | smartphone-native agent runtime design | Action; separates language reasoning from deterministic control |

Table A15.

Transfer and research-workflow studies in the direct corpus.


| Work | Unit | Core contribution | GATE / NLP relevance |
|---|---|---|---|
| Peer Learning (29) | posts + learning cues | frames Moltbook as an AI-only peer-learning environment | Transfer; agents exchange skills and tactics through language |
| Informal Learners (66) | large-scale discourse | studies agent learning in a broadcast-heavy public environment | Transfer/Exchange; introduces useful discourse concepts for learning-oriented analysis |
| MoltGraph (32) | temporal dataset | releases a longitudinal graph dataset for detection tasks | Transfer; reusable benchmark and archival resource |
| Personas on Moltbook (67) | posts/personas | packages agents into reusable behavioral personas | Transfer; relevant to summarization and behavioral abstraction |
| ClawdLab (33) | literature + system design | connects OpenClaw–Moltbook lessons to autonomous research design | Transfer/AERO; strong orchestration and evidence-grounding angle |

Table A16.

Exchange and discourse studies, part I.


| Work | Unit | Core contribution | GATE / NLP relevance |
|---|---|---|---|
| Risky Sharing (68) | posts + replies | measures action-inducing language and norm-enforcing responses | Exchange; discourse can itself regulate safety |
| Silicon-Based Societies (69) | platform traces | early large-scale characterization of Moltbook | Exchange; maps topics, communities, and agent behavior in the wild |
| Fast Response or Silence (70) | thread dynamics | characterizes reply persistence and drop-off | Exchange; interaction structure is shallow but measurable |
| Collective Behavior (71) | network structure | macro-scale view of emergent collective behavior | Exchange; useful for coordination and inequality framing |
| A First Look (72) | platform snapshot | descriptive baseline for posts, topics, and subcommunities | Exchange; anchor study for early public discourse |
| Anatomy of Social Graph (30) | social graph | structural analysis of reply graph formation | Exchange; graph topology complements discourse analysis |
| Rise of AI Agent Communities (73) | discourse + interaction | large-scale analysis of discourse and interaction | Exchange; combines text and network perspectives |
| MoltNet (74) | social behavior | network-analytic view of Moltbook interaction | Exchange; supports structural comparison across agents |

Table A17.

Exchange and discourse studies, part II.


| Work | Unit | Core contribution | GATE / NLP relevance |
|---|---|---|---|
| Reddit Comparison (75) | comparative graphs | contrasts Moltbook and Reddit topology | Exchange; warns against naive human-social analogies |
| Does Socialization Emerge? (76) | behavioral signals | asks whether socialization truly emerges | Exchange/Grounding; separates sociality from surface activity |
| Structural Divergence (77) | network metrics | measures divergence from human social networks | Exchange; highlights non-human structure beneath familiar interfaces |
| Let There Be Claws (78) | network snapshot | early social-graph baseline for the platform | Exchange; triangulates early structural findings |
| Molt Dynamics (79) | temporal graph + roles | role specialization, cascades, weak cooperation | Exchange/AERO; adds longitudinal and coordination perspective |
| Interaction Theater (80) | comments at scale | argues that visible interaction can mask weak semantic coupling | Exchange; directly motivates deeper discourse-act modeling |
| What Do AI Agents Talk About? (35) | discourse structure | topic, emotion, formulaicity, and coherence analysis at scale | Exchange; shows ritualization and emotional redirection in AI-to-AI discourse |
| Agents in the Wild (81) | mixed-method critique | cautions against over-reading apparent sociality | Exchange/Grounding; foregrounds interpretive caution |

Appendix I Broader Mission-Level Relevance Beyond the Case

Although this paper is anchored in one ecosystem, its implications are broader.

Table A18 summarizes how the case speaks to larger mission-level questions for NLP.

Table A18.

Broader mission-level relevance beyond the case itself.


| Broader mission for NLP | How public agent ecosystems sharpen it | How this survey contributes |
|---|---|---|
| From models to systems and ecosystems | language use becomes inseparable from tools, services, identities, and public communities | provides a language-infrastructure lens for studying that shift through a concrete public case |
| Rethinking progress and evaluation | final-answer metrics are insufficient when language has state-changing consequences | argues for executable pragmatics, provenance-aware evaluation, and triangulated evidence |
| Data as bottleneck and responsibility | public traces are portable but also raise privacy, contamination, and verification concerns | distinguishes visibility from legitimacy and proposes minimal-disclosure archival practice |
| LLMs as research tools and infrastructure | agents increasingly support research workflows, evidence gathering, and knowledge packaging | situates peer-learning artifacts, datasets, and ClawdLab-style designs inside a broader research-infrastructure agenda |
| Discourse and pragmatics beyond single-user prompting | public agent speech raises new questions about stance, speakerhood, norm invocation, and audience design | frames delegated-agent discourse as a new NLP problem rather than a special case of social-media mining |

Appendix J Adjacent Perspectives Beyond the Direct Corpus

The following works are not central empirical OpenClaw/Moltbook studies, but they sharpen the survey’s interpretation of autonomy, safety, collaboration, or deployment.

Table A19.

Adjacent perspectives that informed interpretation but are not weighted like direct ecosystem evidence.


| Work | Perspective | Why included | Link back to GATE / AERO |
|---|---|---|---|
| Autonomous-agent baselines (16,17) | broad agent surveys | give the larger agent backdrop against which OpenClaw appears unusually public and provenance-rich | clarify that this paper is ecosystem-specific rather than a generic agent survey |
| MAS survey baselines (18,19,20,21) | multi-agent collaboration and communication | sharpen what is generic to MAS and what is distinctive about public agent ecosystems | especially helpful for the Exchange and Orchestration dimensions |
| Human-agent and trust surveys (22,23,25) | collaboration and autonomy-aware security | supply broader frameworks for human oversight, trust, and layered risk | support the claim that risk grows with delegated authority, reach, and orchestration |
| Self-evolving agents (24) | continual adaptation | highlights how agents may adapt over time rather than remain static assistants | resonates with Reach and long-horizon transfer of procedures and policies |
| Agentic Skills / SkillNet (83,86) | skill abstraction | conceptualizes skills beyond bare tool use | clarifies enablement and orchestration as reusable language-mediated capability |
| Observatory Archive and AI-for-science context (84,85) | archival and research-infrastructure framing | connect OpenClaw-style traces to reproducible archival practice and scientific workflow design | show how transfer artifacts can become research infrastructure rather than one-off evidence |
| Project ecosystem repositories (36,37,38,39,40,41,42,43,44,45) | packaging, skills, connectors, deployment, training | show that OpenClaw/Moltbook claims are materializing as public infrastructure rather than papers alone | especially relevant to Transfer, Action, and Orchestration |

References

- Steinberger, P. Introducing OpenClaw. OpenClaw Blog, 2026.

- OpenClaw. OpenClaw: Personal AI Assistant. GitHub repository, 2026.

- OpenClaw. OpenClaw Docs Homepage. Documentation website, 2026.

- OpenClaw. Tools (OpenClaw). Documentation website, 2026.

- Moltbook. Moltbook. Official website, 2026.

- Moltbook. Build Apps for AI Agents. Developer website, 2026.

- OpenClaw. Browser (OpenClaw-managed). Documentation website, 2026.

- Nakano, R.; Hilton, J.; Balaji, S.; Wu, J.; Ouyang, L.; Kim, C.; Hesse, C.; Jain, S.; Kosaraju, V.; Saunders, W.; et al. WebGPT: Browser-assisted question-answering with human feedback. arXiv preprint arXiv:2112.09332, 2021.

- Yao, S.; Zhao, J.; Yu, D.; Du, N.; Shafran, I.; Narasimhan, K.R.; Cao, Y. ReAct: Synergizing reasoning and acting in language models. In Proceedings of the Eleventh International Conference on Learning Representations, 2023.

- Schick, T.; Dwivedi-Yu, J.; Dessì, R.; Raileanu, R.; Lomeli, M.; Hambro, E.; Zettlemoyer, L.; Cancedda, N.; Scialom, T. Toolformer: Language models can teach themselves to use tools. Advances in Neural Information Processing Systems 2023, 36, 68539–68551.

- Qin, Y.; Liang, S.; Ye, Y.; Zhu, K.; Yan, L.; Lu, Y.; Lin, Y.; Cong, X.; Tang, X.; Qian, B.; et al. ToolLLM: Facilitating large language models to master 16000+ real-world APIs. arXiv preprint arXiv:2307.16789, 2023.

- Li, G.; Hammoud, H.; Itani, H.; Khizbullin, D.; Ghanem, B. CAMEL: Communicative agents for "mind" exploration of large language model society. Advances in Neural Information Processing Systems 2023, 36, 51991–52008.

- Hong, S.; Zhuge, M.; Chen, J.; Zheng, X.; Cheng, Y.; Wang, J.; Zhang, C.; Wang, Z.; Yau, S.K.S.; Lin, Z.; et al. MetaGPT: Meta programming for a multi-agent collaborative framework. In Proceedings of the Twelfth International Conference on Learning Representations, 2024.

- Wu, Q.; Bansal, G.; Zhang, J.; Wu, Y.; Li, B.; Zhu, E.; Jiang, L.; Zhang, X.; Zhang, S.; Liu, J.; et al. AutoGen: Enabling next-gen LLM applications via multi-agent conversations. In Proceedings of the First Conference on Language Modeling, 2024.

- Park, J.S.; O’Brien, J.; Cai, C.J.; Morris, M.R.; Liang, P.; Bernstein, M.S. Generative agents: Interactive simulacra of human behavior. In Proceedings of the 36th Annual ACM Symposium on User Interface Software and Technology, 2023, pp. 1–22.

- Wang, L.; Ma, C.; Feng, X.; Zhang, Z.; Yang, H.; Zhang, J.; Chen, Z.; Tang, J.; Chen, X.; Lin, Y.; et al. A survey on large language model based autonomous agents. Frontiers of Computer Science 2024, 18, 186345.

- Cheng, Y.; Zhang, C.; Zhang, Z.; Meng, X.; Hong, S.; Li, W.; Wang, Z.; Wang, Z.; Yin, F.; Zhao, J.; et al. Exploring large language model based intelligent agents: Definitions, methods, and prospects. arXiv preprint arXiv:2401.03428, 2024.

- Guo, T.; Chen, X.; Wang, Y.; Chang, R.; Pei, S.; Chawla, N.V.; Wiest, O.; Zhang, X. Large language model based multi-agents: A survey of progress and challenges. arXiv preprint arXiv:2402.01680, 2024.

- Chen, S.; Liu, Y.; Han, W.; Zhang, W.; Liu, T. A survey on LLM-based multi-agent system: Recent advances and new frontiers in application. arXiv preprint arXiv:2412.17481, 2024.

- Tran, K.T.; Dao, D.; Nguyen, M.D.; Pham, Q.V.; O’Sullivan, B.; Nguyen, H.D. Multi-agent collaboration mechanisms: A survey of LLMs. arXiv preprint arXiv:2501.06322, 2025.

- Yan, B.; Zhou, Z.; Zhang, L.; Zhang, L.; Zhou, Z.; Miao, D.; Li, Z.; Li, C.; Zhang, X. Beyond self-talk: A communication-centric survey of LLM-based multi-agent systems. arXiv preprint arXiv:2502.14321, 2025.

- Zou, H.P.; Huang, W.C.; Wu, Y.; Chen, Y.; Miao, C.; Nguyen, H.; Zhou, Y.; Zhang, W.; Fang, L.; He, L.; et al. LLM-based human-agent collaboration and interaction systems: A survey. arXiv preprint arXiv:2505.00753, 2025.