Submitted: 11 March 2026

Posted: 13 March 2026

Abstract

Keywords:

1. Introduction: The AI Revolution in Education

1.1. The Landscape of AI in Education Today

- Text generation: AI can produce essays, reports, summaries, translations, and creative writing in seconds, across virtually any subject and any level of complexity.

- Code generation: AI can write, debug, and explain code in dozens of programming languages, from simple scripts to complex algorithms.

- Image generation: AI can create illustrations, diagrams, concept art, and photorealistic images from text descriptions.

- Data analysis: AI can process datasets, identify patterns, generate visualisations, and produce statistical summaries.

- Personalised tutoring: AI can adapt explanations to a student’s level, answer follow-up questions, and provide step-by-step guidance through complex problems.

- Research assistance: AI can summarise papers, identify relevant literature, and synthesise findings across multiple sources.

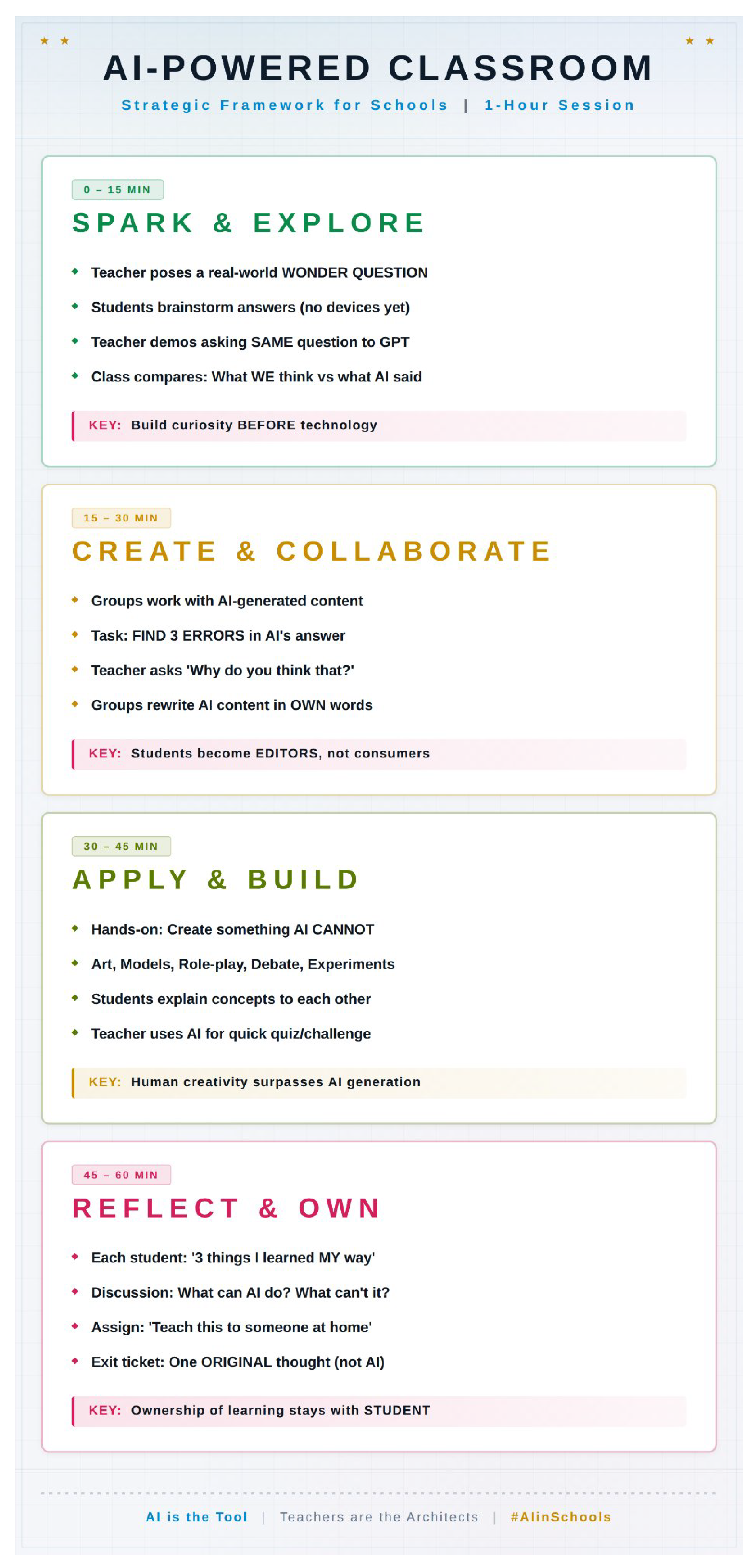

2. Part I: AI-Powered Classroom—Framework for Schools

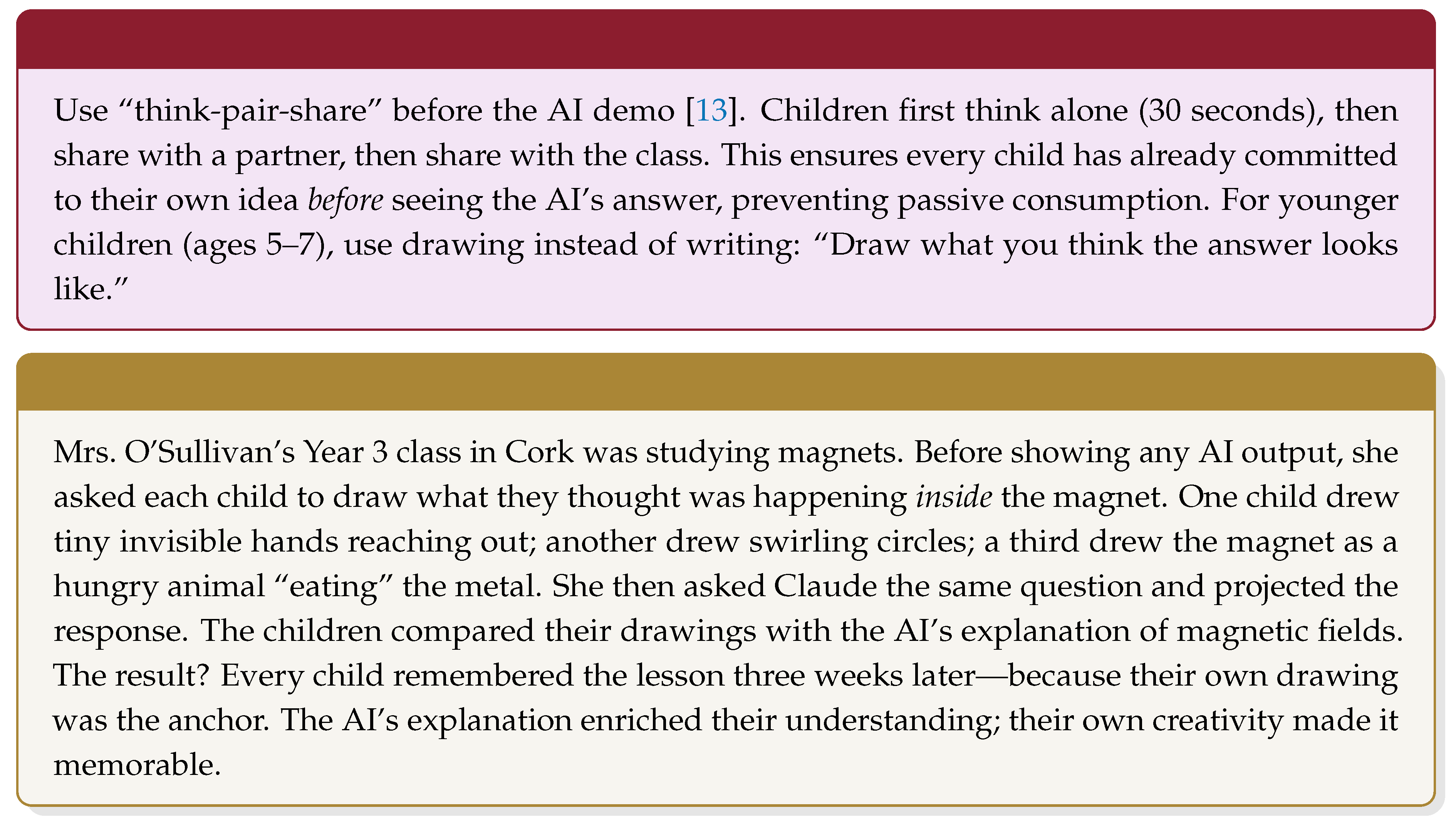

2.1. Phase 1: Spark & Explore (0–15 Minutes)

2.1.1. Elementary Level (Ages 5–11)

- “Why do leaves change colour in autumn?”

- “How does a caterpillar become a butterfly?”

- “What would happen if it never rained?”

- “Why is the ocean salty but rivers are not?”

- “How do birds know where to fly in winter?”

2.1.2. Higher Secondary Level (Ages 14–18)

- “Is social media making us more connected or more lonely?”

- “Should gene editing be allowed in human embryos?”

- “Can an AI-generated poem be considered real art?”

- “Is economic growth always good for a country?”

- “Should a self-driving car sacrifice its passenger to save five pedestrians?”

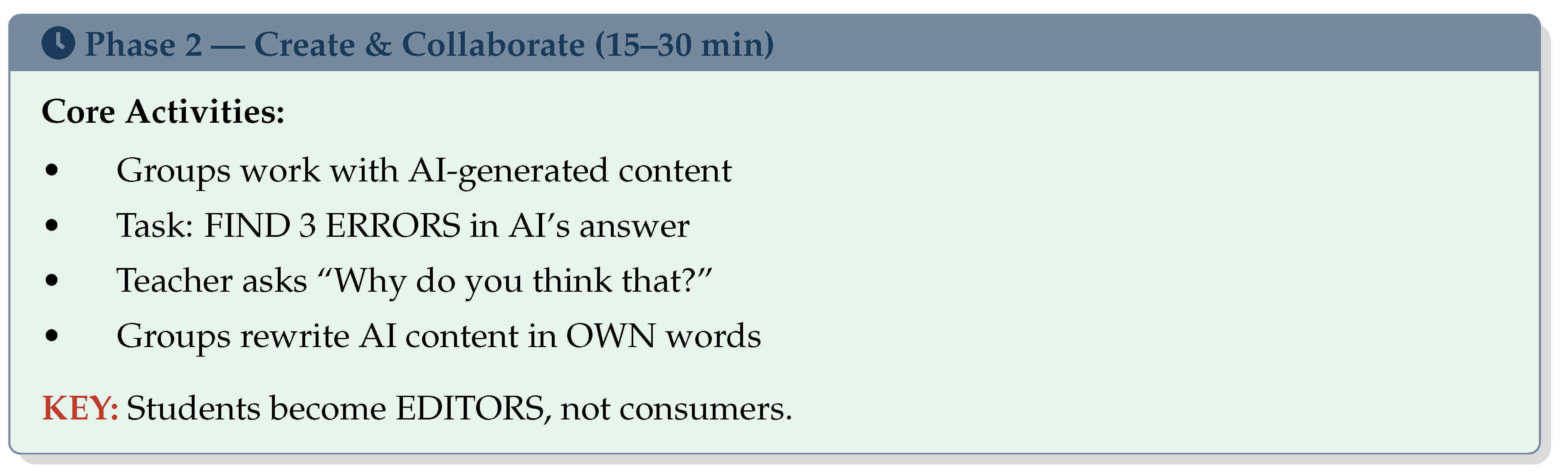

2.2. Phase 2: Create & Collaborate (15–30 Minutes)

2.2.1. Elementary Level (Ages 5–11)

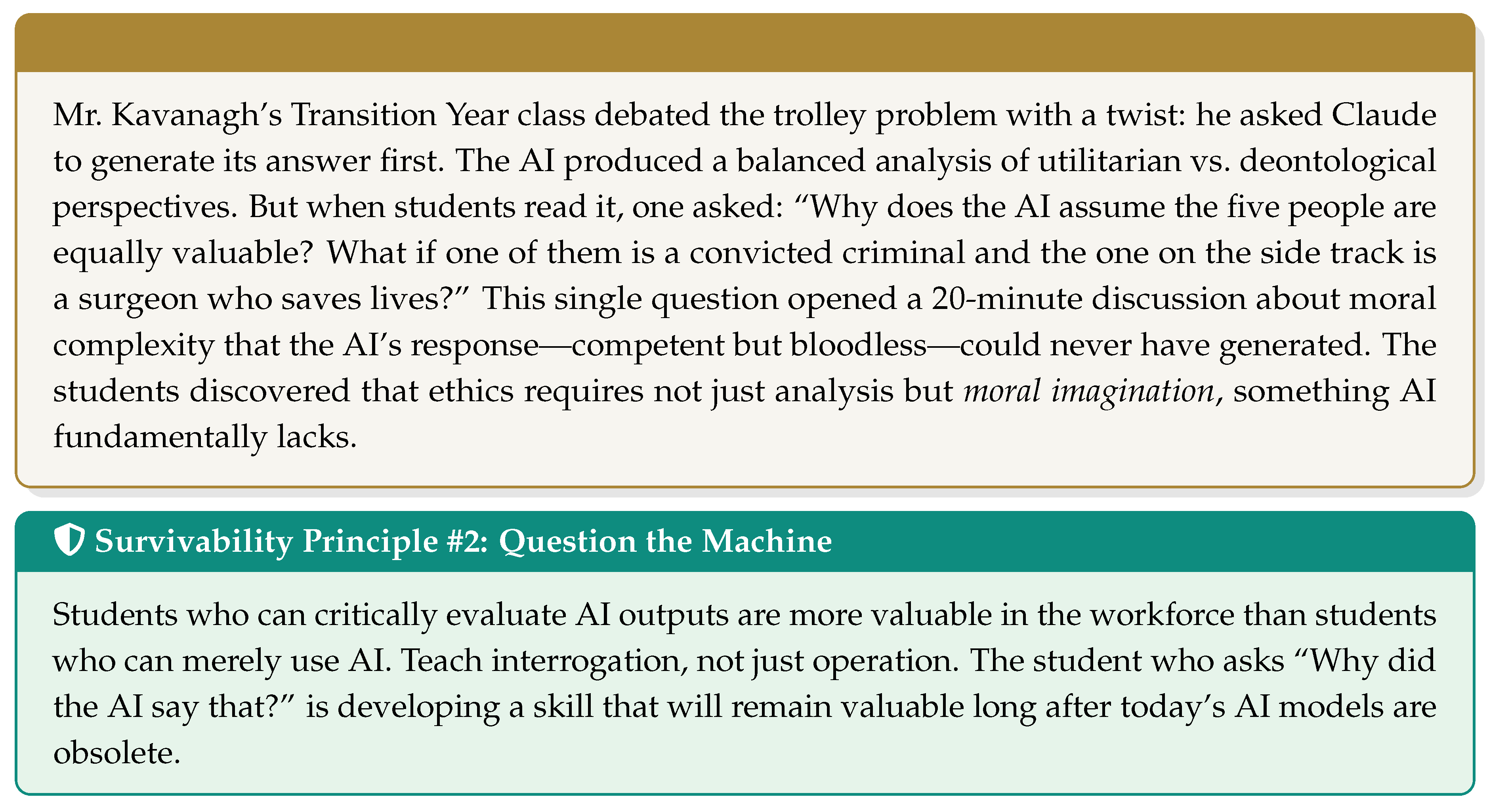

2.2.2. Higher Secondary Level (Ages 14–18)

1. Identify three substantive weaknesses (not typos or formatting issues)

2. Explain why each is a weakness, citing specific evidence or reasoning

3. Rewrite the relevant paragraph to fix it, demonstrating the improvement

4. Reflect on what the exercise reveals about AI’s limitations in this subject area

2.3. Phase 3: Apply & Build (30–45 Minutes)

2.3.1. Elementary Level (Ages 5–11)

- Science: Build a simple circuit, grow bean plants, conduct sink-or-float experiments, create a weather station from household materials

- Art: Create a collage, paint a mural, design a poster using only hands and materials, sculpt with clay or playdough

- Language: Role-play a story scene, perform a class debate on a simple motion, create a radio play with sound effects

- Maths: Use physical manipulatives (blocks, beads, fraction tiles) to solve problems the AI solved abstractly; measure real objects and compare the results with AI estimates

- Geography: Build a 3D map of the local area, conduct a mini-survey of classmates and graph the results by hand

2.3.2. Higher Secondary Level (Ages 14–18)

- Science: Design and run an actual experiment, then compare results to AI predictions. Where did reality diverge from the model? What variables did the AI not account for?

- History: Stage a mock trial of a historical figure, using AI-generated evidence that students must cross-examine for bias, anachronism, and omission

- Literature: Write a creative response to a poem—not an analysis, but a conversation with the text

- Business Studies: Develop a startup pitch for a local problem, using AI for market research but human judgment for the value proposition

- Mathematics: Model a real-world scenario using both hand calculations and AI, then compare approaches and identify where human intuition adds value

- Languages: Translate a passage using AI, then improve the translation by adding cultural nuance and idiomatic expressions that the AI missed

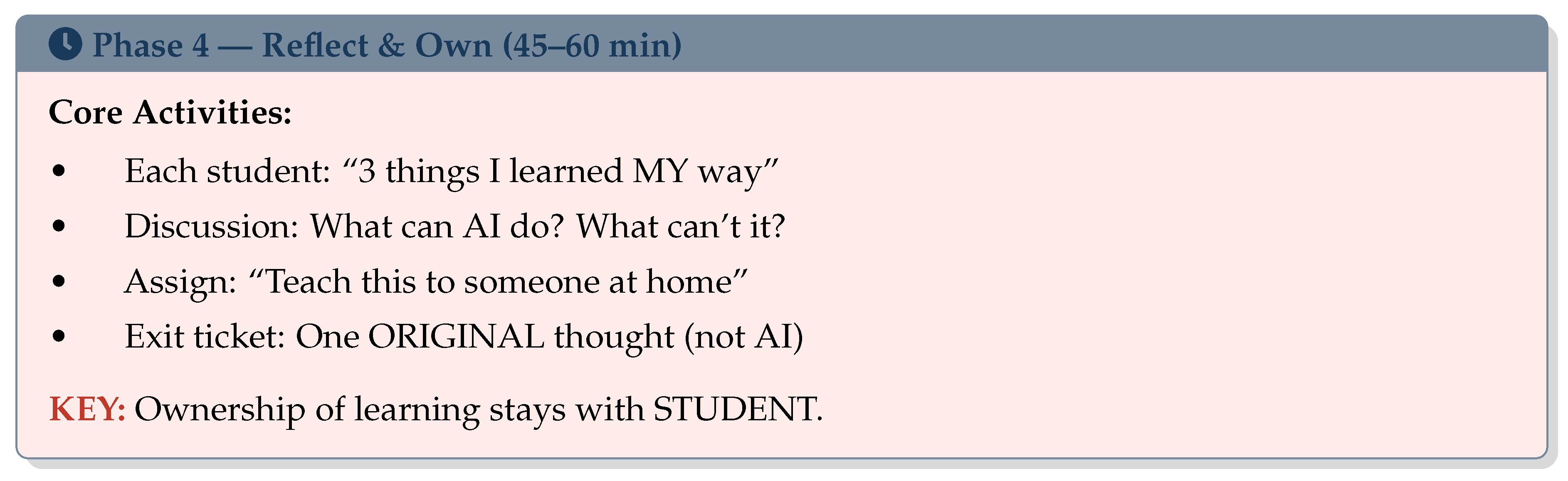

2.4. Phase 4: Reflect & Own (45–60 Minutes)

2.4.1. Elementary Level (Ages 5–11)

2.4.2. Higher Secondary Level (Ages 14–18)

- What did AI help me understand better today?

- Where did AI mislead me or give me a shallow answer?

- What can I do that AI cannot, and how do I develop that further?

- If I had to explain today’s topic to someone with no internet access, how would I do it?

- What assumptions did I make before I started, and how have they changed?

- What would I want to investigate further, and why?

3. Part II: AI-Enhanced Teaching—Framework for Colleges

3.1. Phase 1: Engage & Provoke (0–15 Minutes)

3.2. Phase 2: Deepen & Challenge (15–30 Minutes)

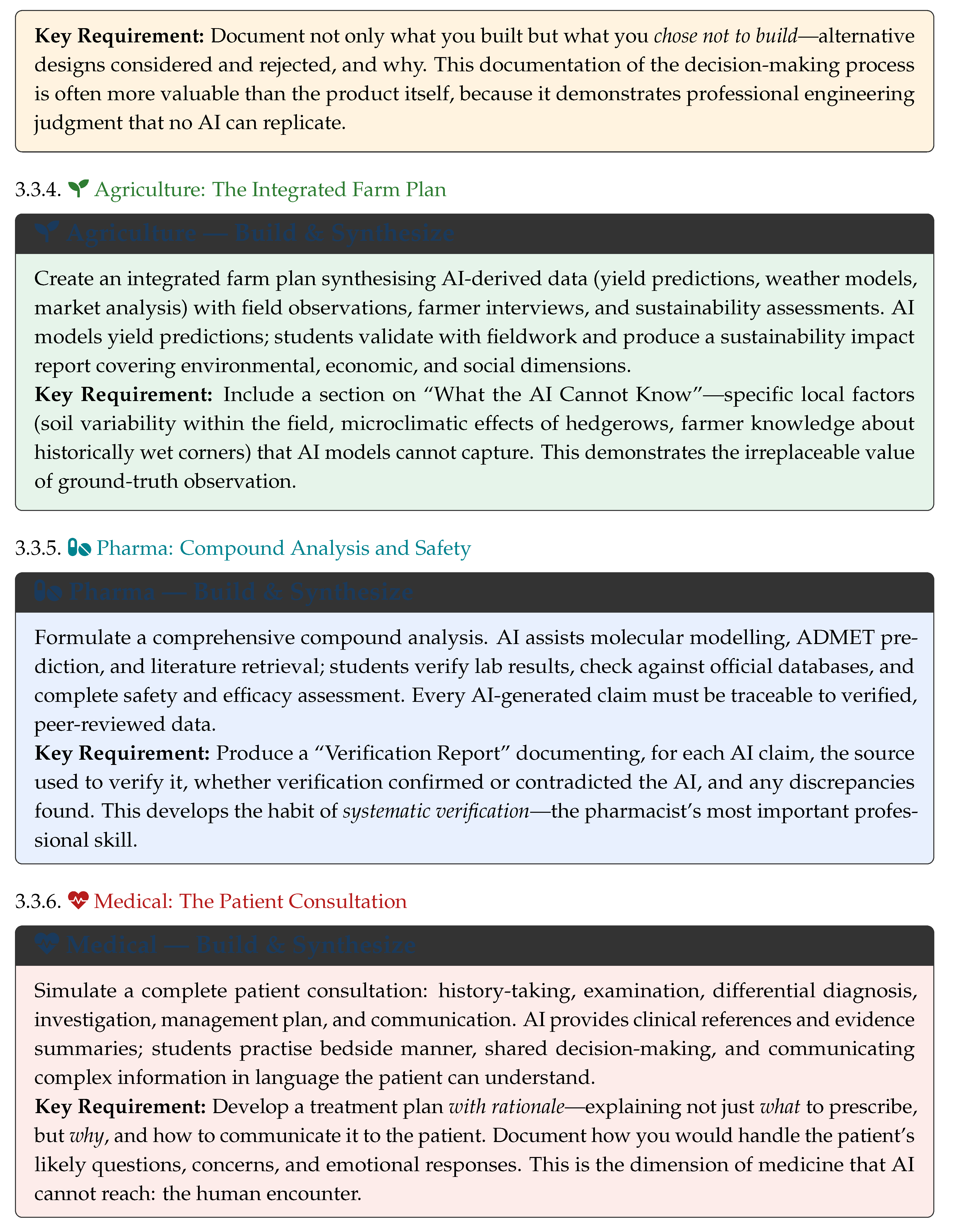

3.3. Phase 3: Build & Synthesize (30–45 Minutes)

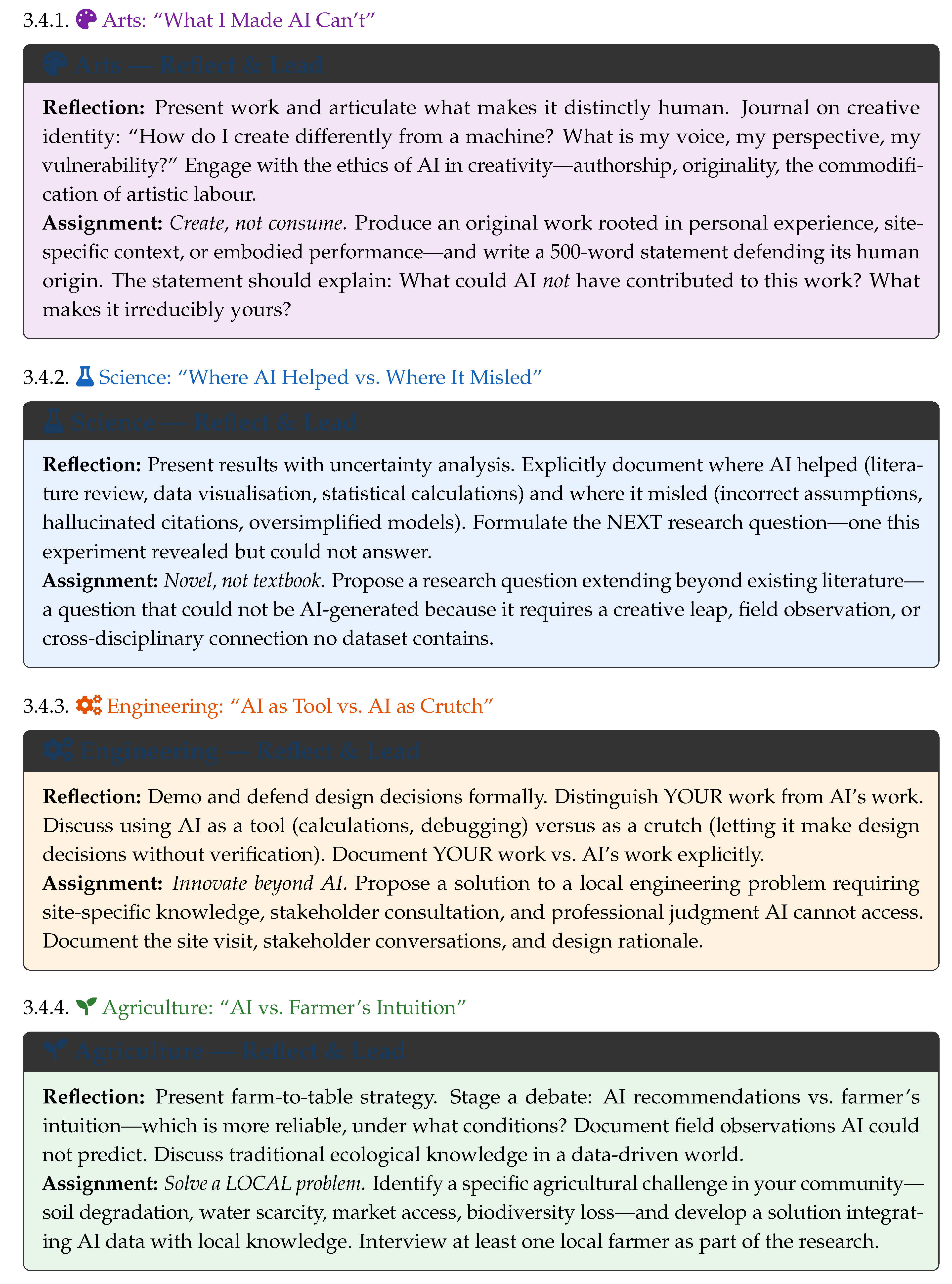

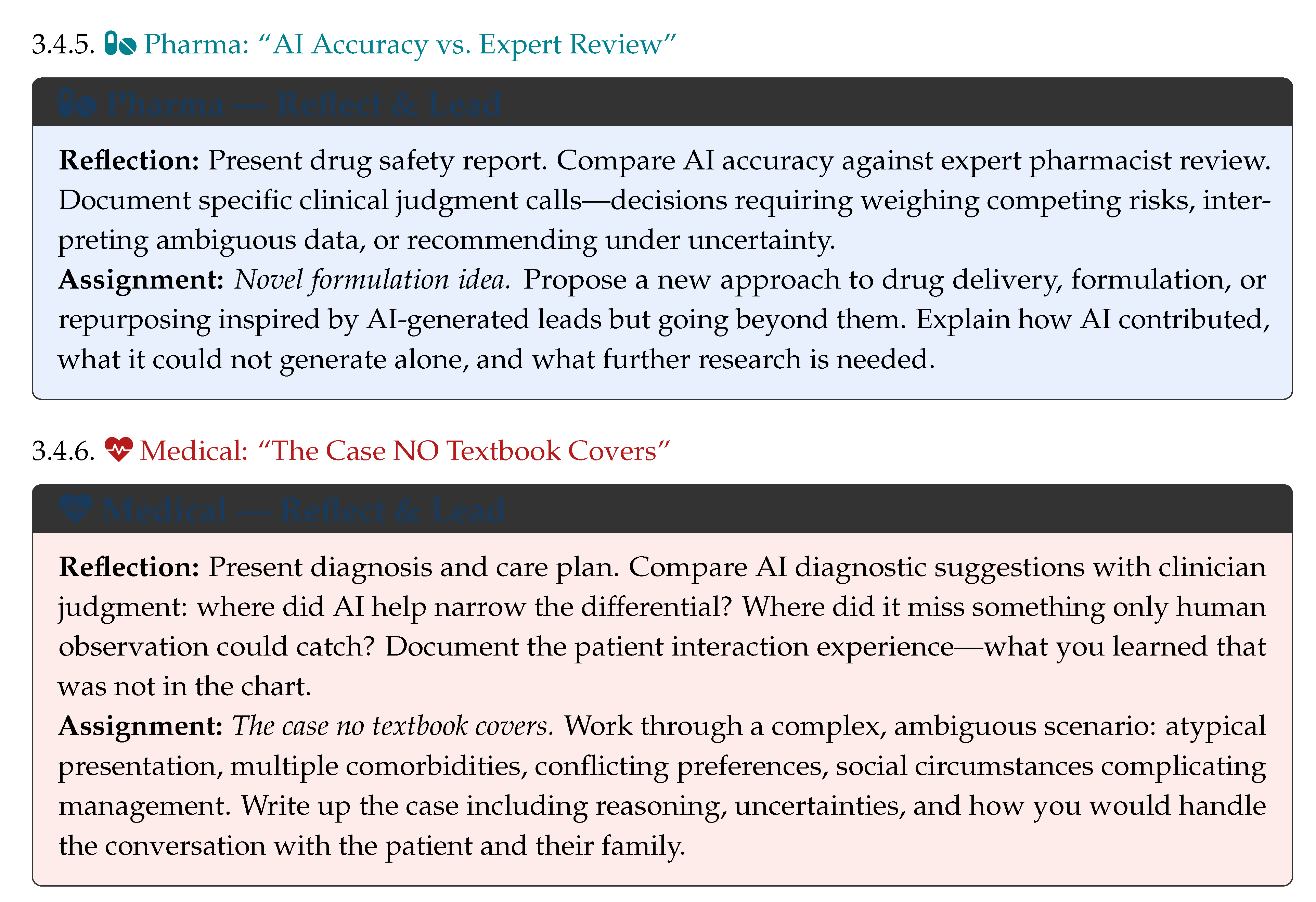

3.4. Phase 4: Reflect & Lead (45–60 Minutes)

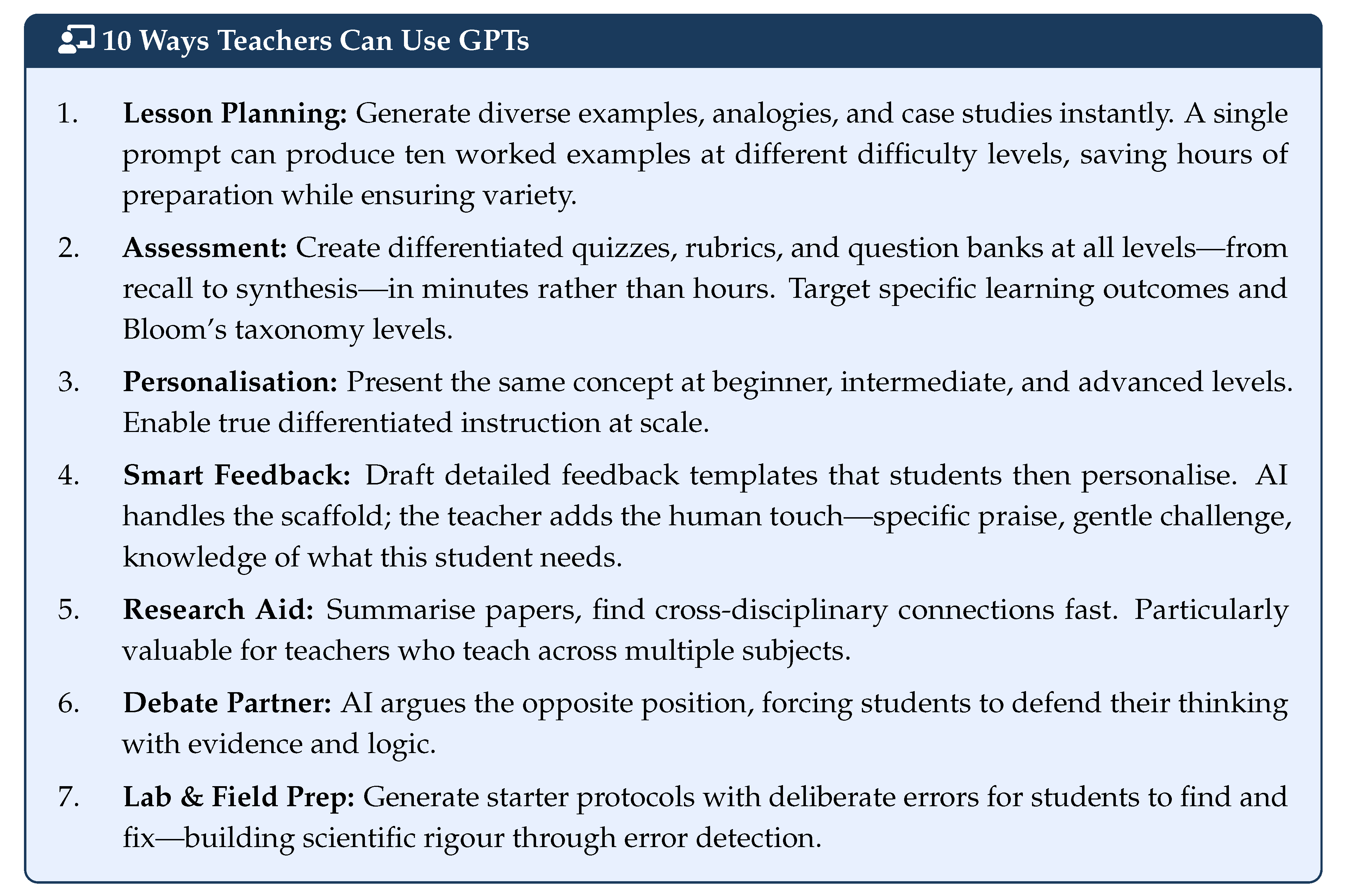

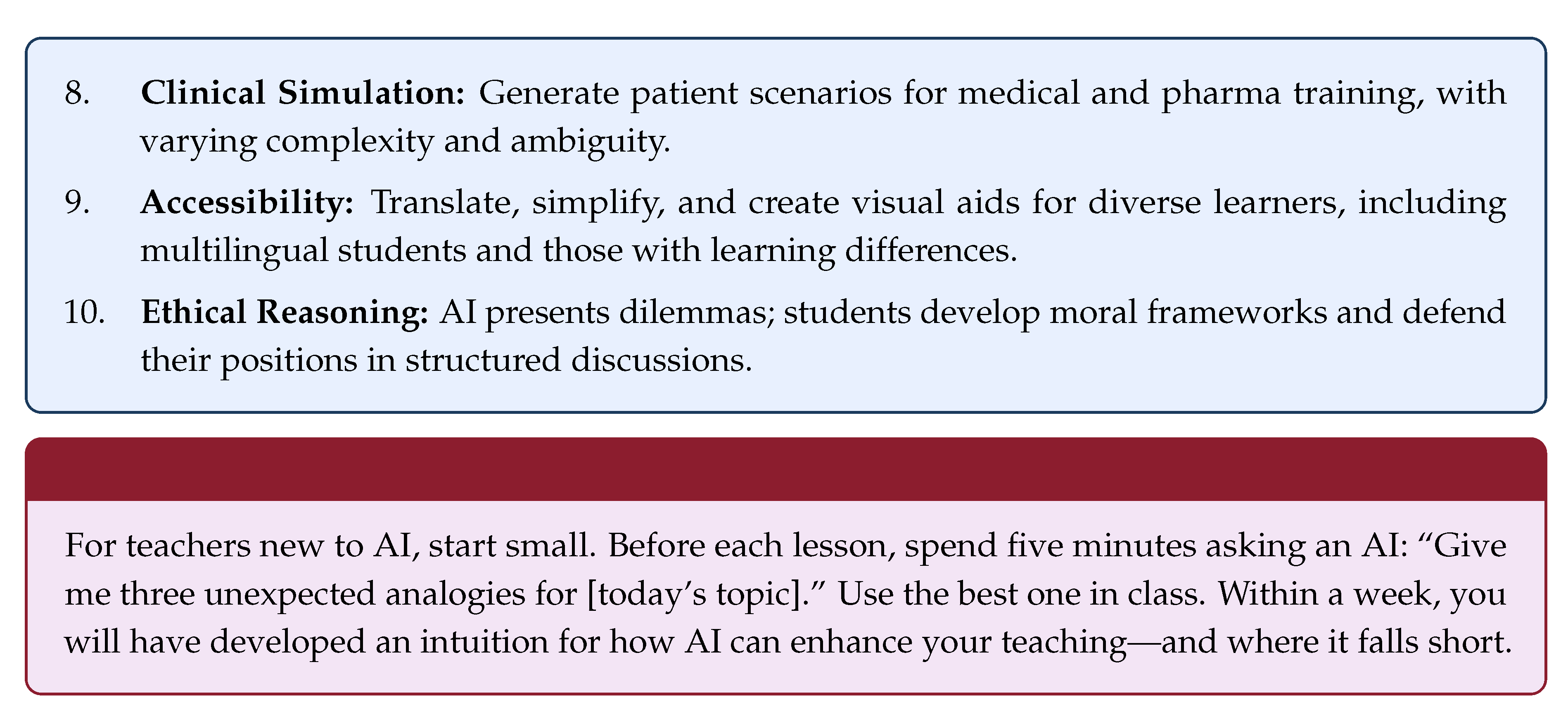

4. How Teachers Can Use GPTs to Enrich Teaching

5. Surviving and Thriving in the Age of GPTs

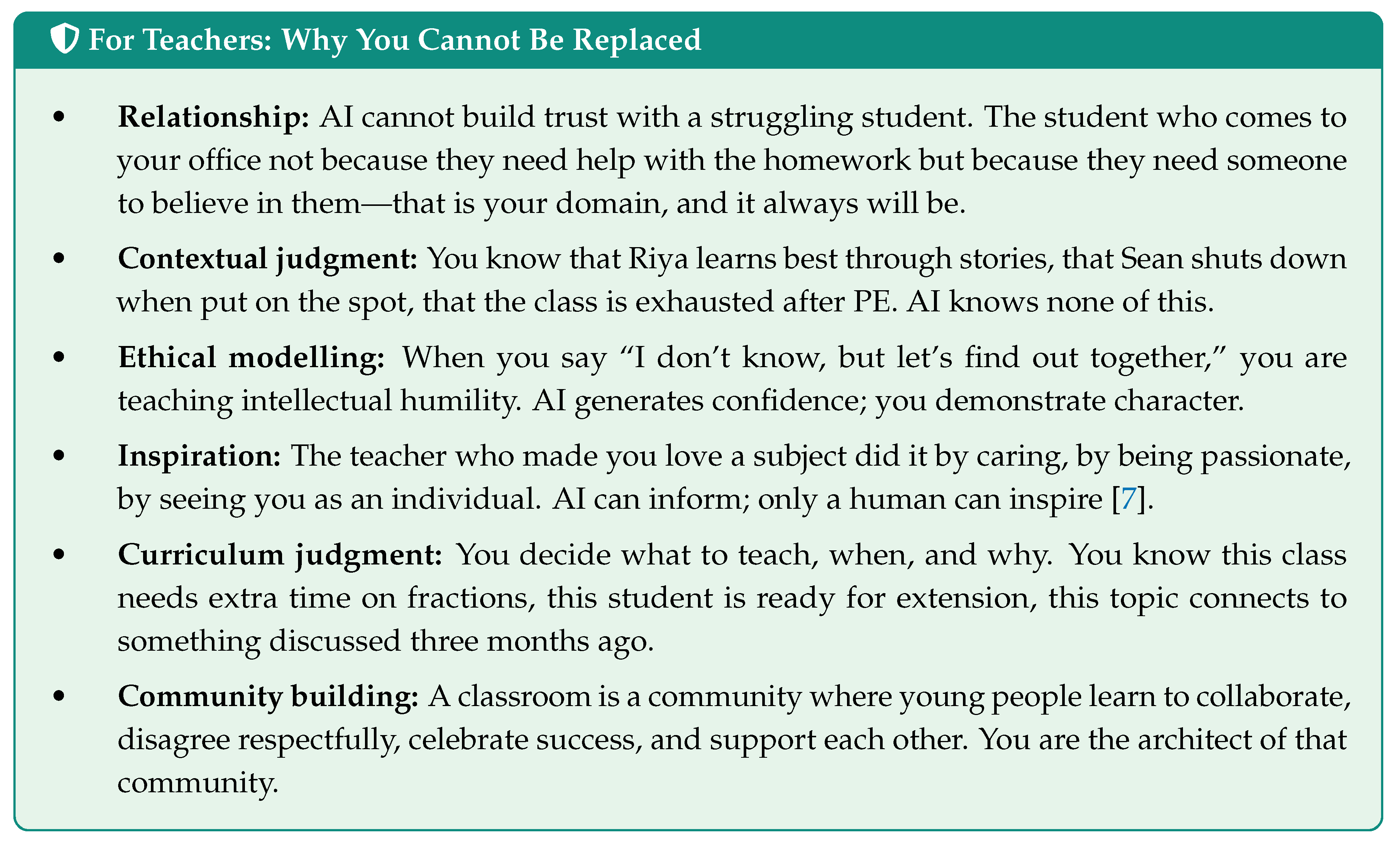

5.1. For Teachers: Your Irreplaceable Value

5.2. For Students: Skills That AI Cannot Replace

5.3. For Institutions: Strategic Imperatives

6. Conclusion: The Human at the Centre

Acknowledgments

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the author. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.