Submitted:

09 March 2026

Posted:

10 March 2026


Abstract

Keywords:

1. Introduction

2. Multimodality in Astronomy

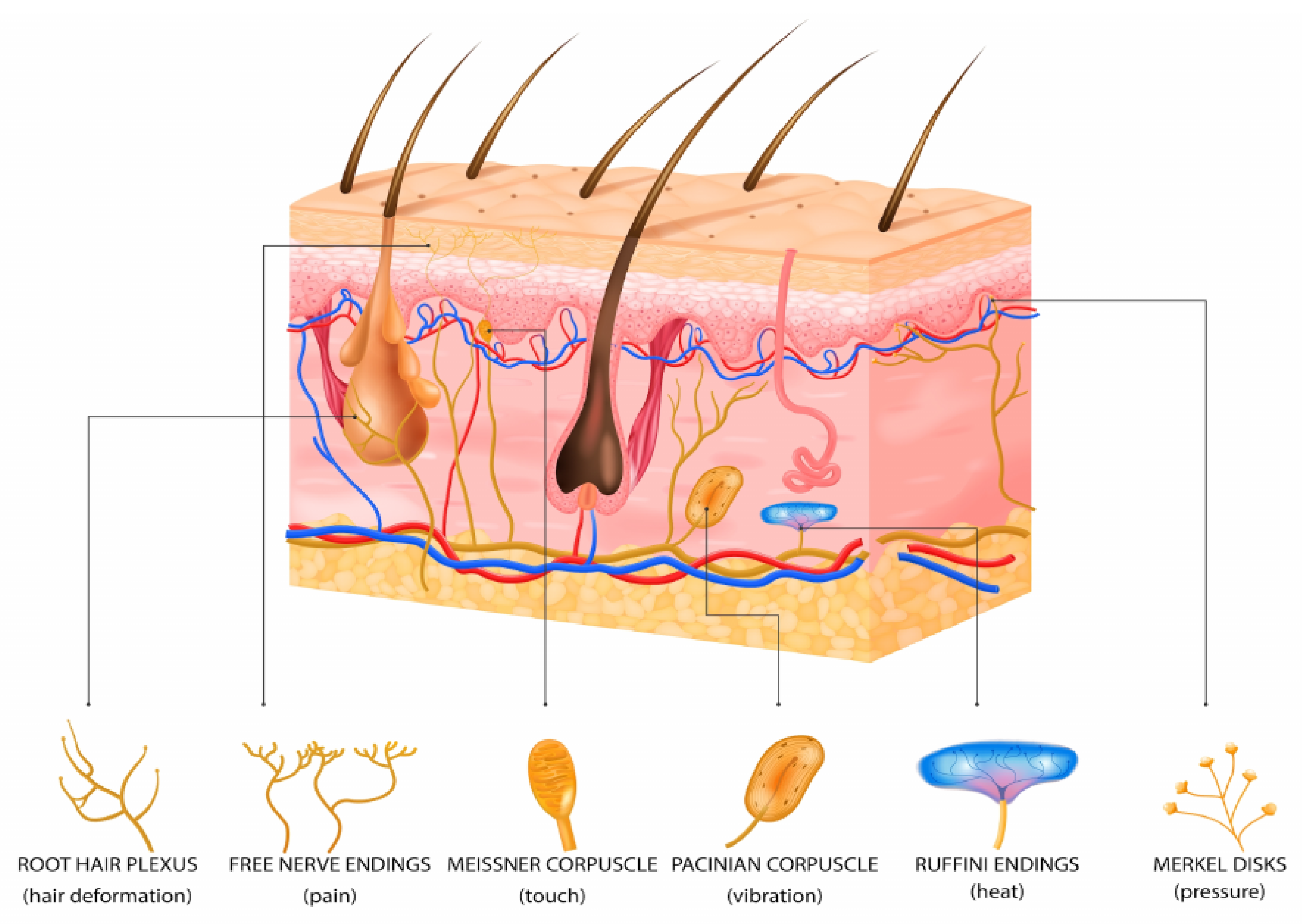

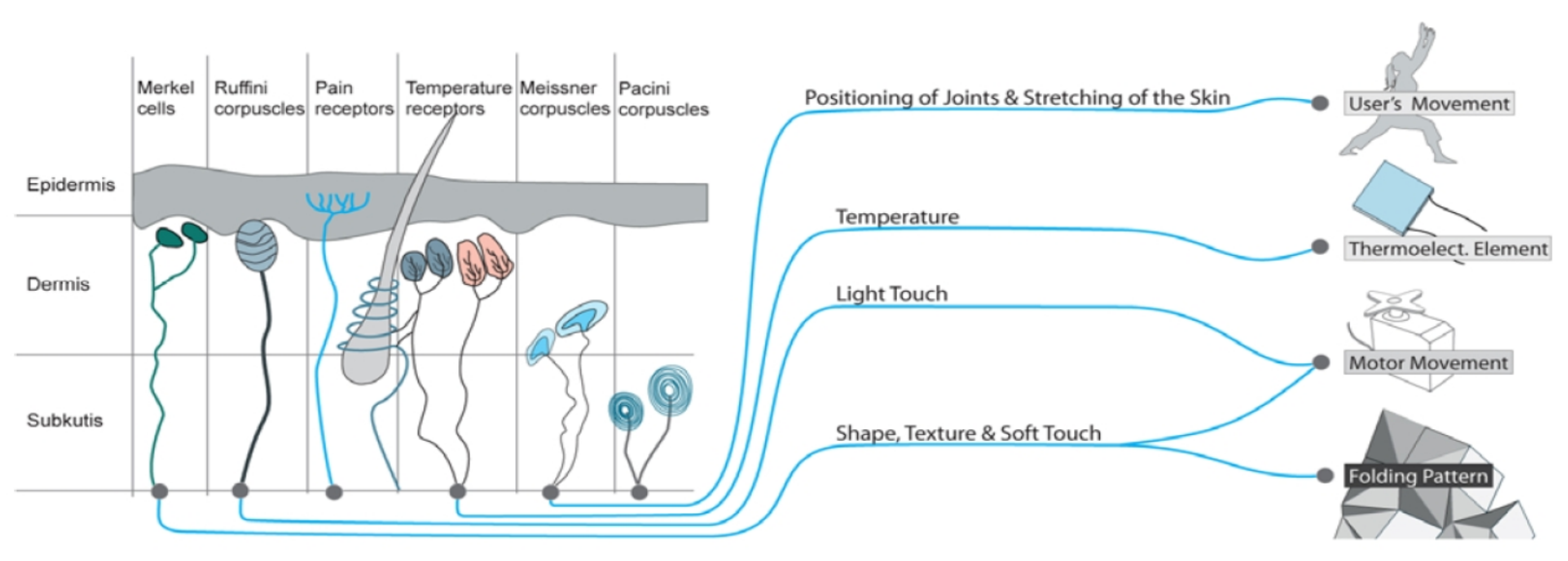

- sensor spatial resolution and sensitivity,

- temporal processing properties (e.g., adaptation and summation),

- spatial characteristics of sensory processing, and

- information processing delays.

3. Tactile Models

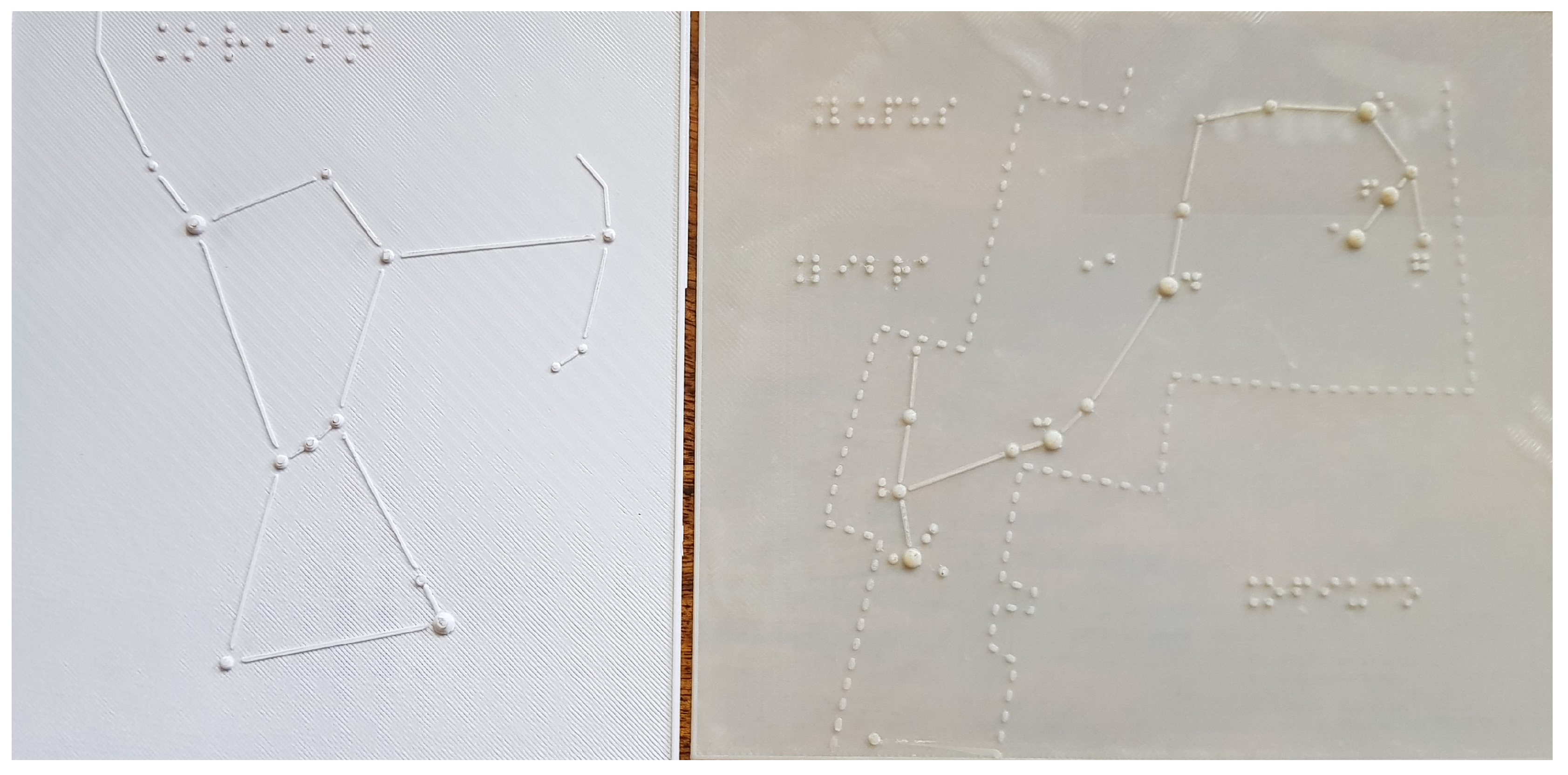

3.1. Visual and Only-Tactile-Relief Models

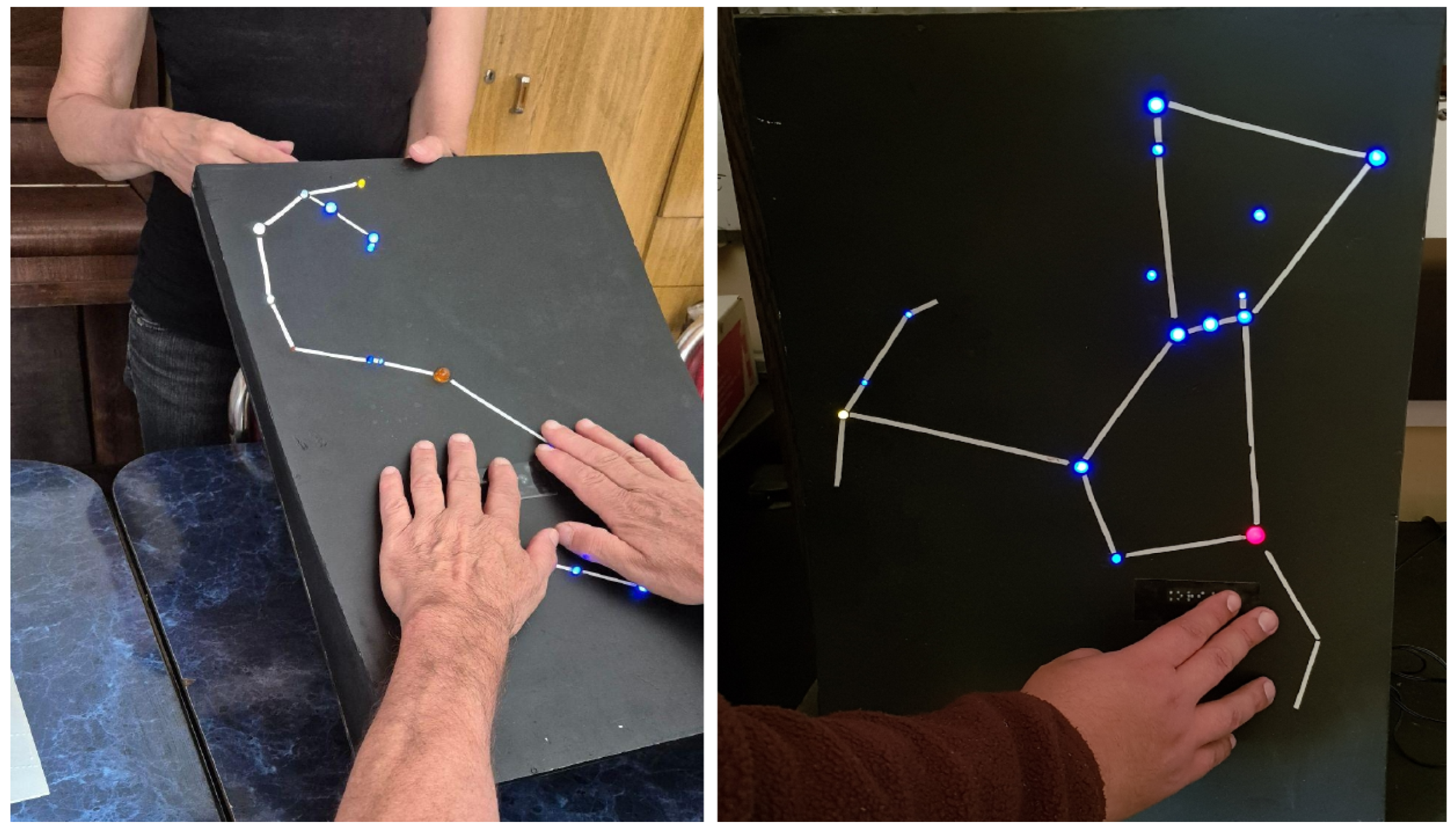

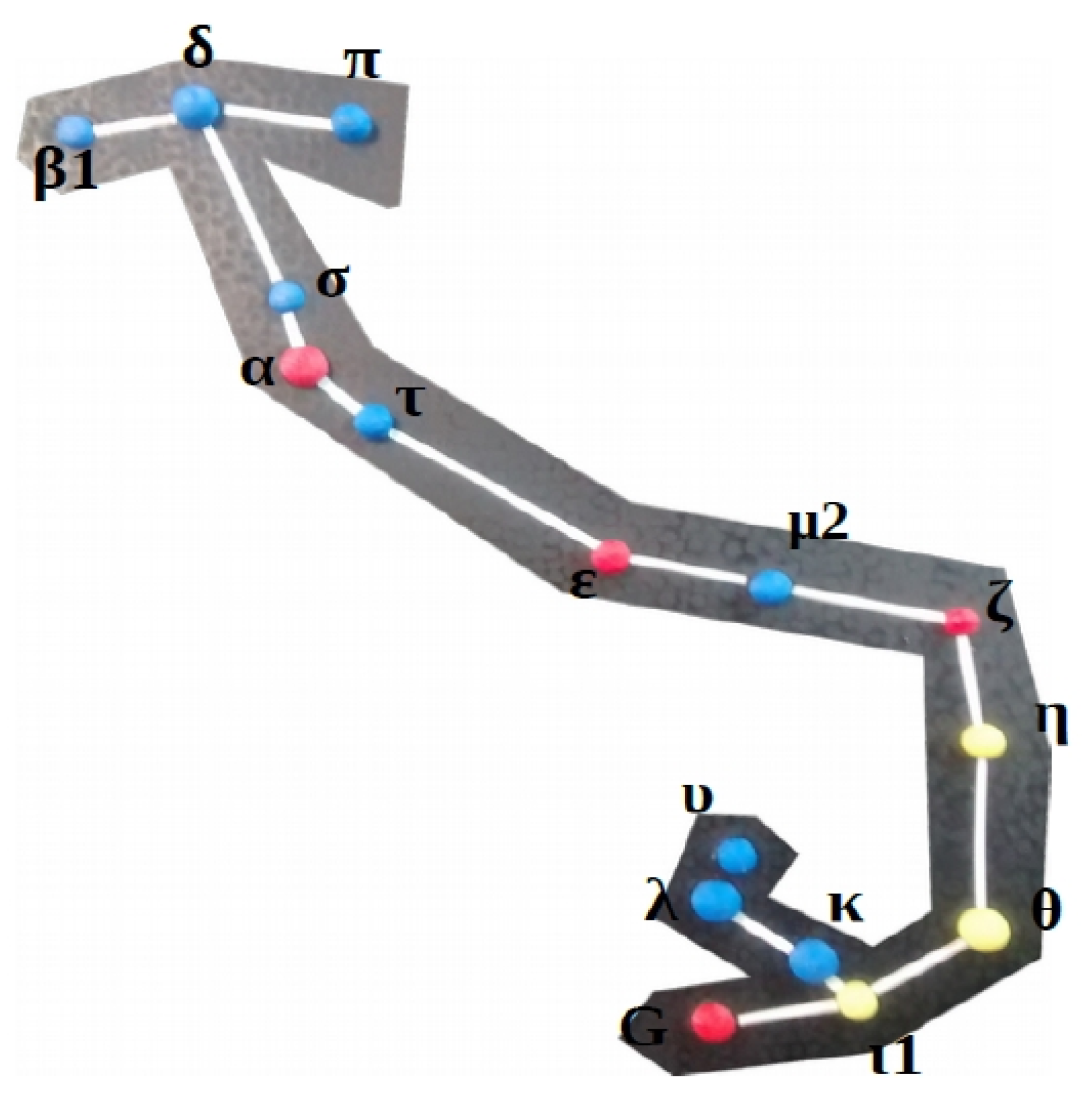

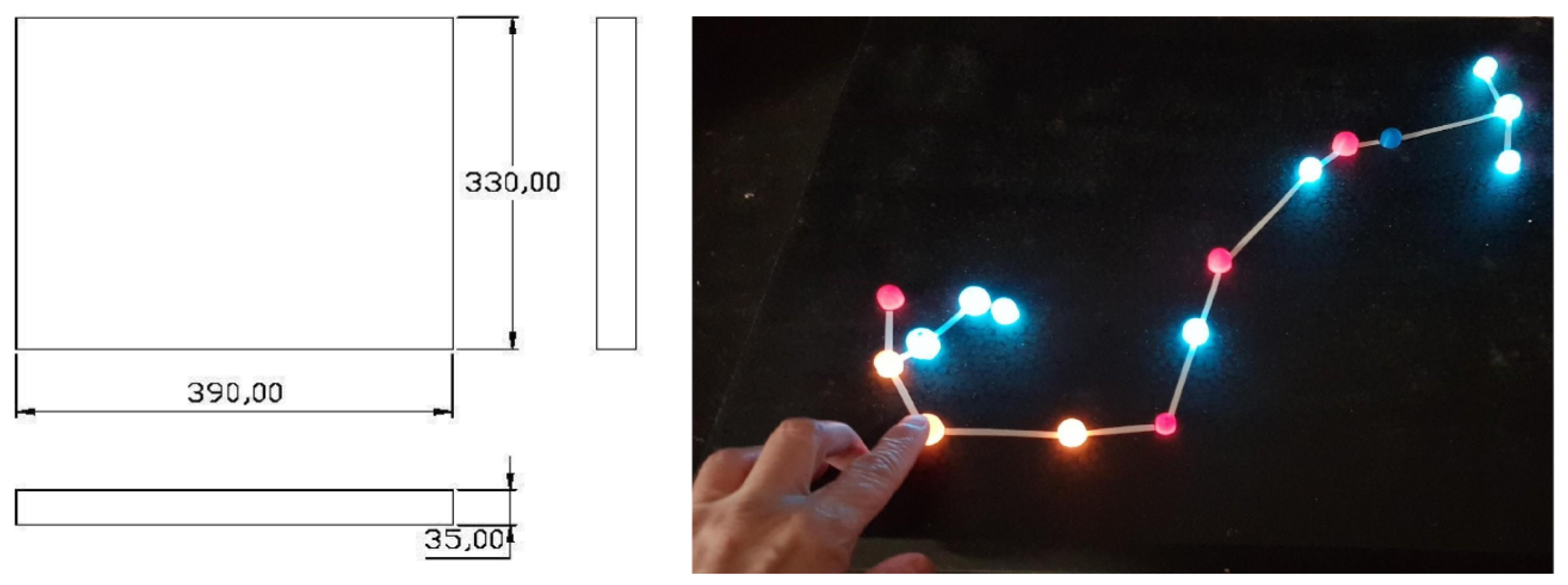

3.2. Portable Constellations

Visual and tactile-relief-thermal model implementation

- static pressure (mechanical energy), and

- thermal flow (temperature difference).

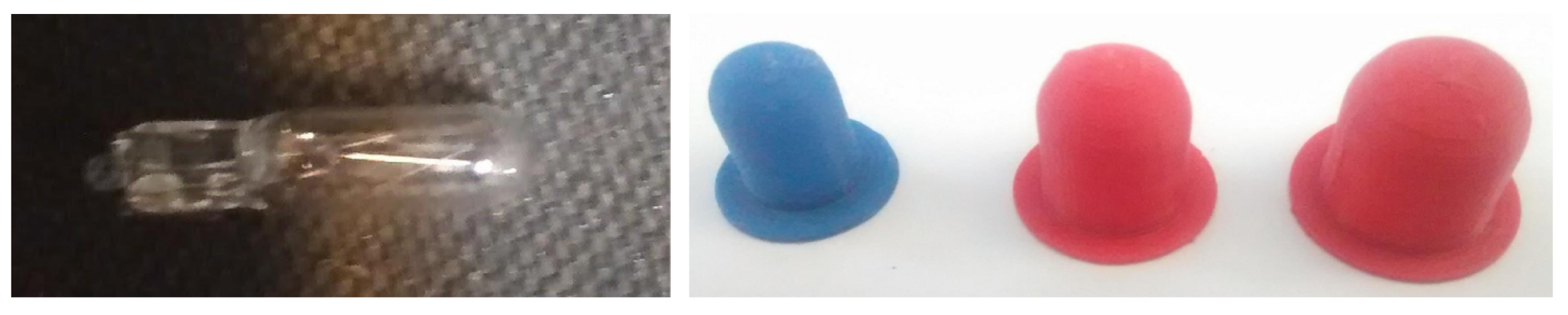

3.3. Thermal Model: Design and construction

- Thermal Actuators: Incandescent micro-lamps (T5, 12V/12W) were used as electrical-to-thermal energy transducers.

- Intensity Control: Temperature modulation was achieved using variable voltage regulators (based on the LM317 integrated circuit), allowing precise calibration of three thermal ranges representative of the spectral classes:
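As a side illustration of the LM317-based intensity control, the sketch below applies the standard adjustable-regulator relation V_out = V_ref (1 + R2/R1) + I_adj R2. It is not the authors' circuit: the 240 ohm value for R1, the nominal V_ref and I_adj figures, and the three target voltages are assumptions chosen only to show how a drive level for a 12 V lamp could be set.

```python
# Sketch only: standard LM317 adjustable-regulator relation, not the authors' circuit.
V_REF = 1.25    # volts, nominal reference between OUT and ADJ (assumed nominal value)
I_ADJ = 50e-6   # amps, typical adjustment-pin current (assumed typical value)


def lm317_vout(r1_ohm: float, r2_ohm: float) -> float:
    """Output voltage for resistor r1 (OUT to ADJ) and r2 (ADJ to ground)."""
    if r1_ohm <= 0 or r2_ohm < 0:
        raise ValueError("r1 must be positive and r2 non-negative")
    return V_REF * (1 + r2_ohm / r1_ohm) + I_ADJ * r2_ohm


def lm317_r2_for(vout: float, r1_ohm: float = 240.0) -> float:
    """Invert the relation: r2 needed to reach a target output voltage."""
    if vout < V_REF:
        raise ValueError("the LM317 cannot regulate below its reference voltage")
    return (vout - V_REF) / (V_REF / r1_ohm + I_ADJ)


# Hypothetical drive levels for three thermal ranges (illustrative values only).
for target in (6.0, 9.0, 12.0):
    r2 = lm317_r2_for(target)
    check = lm317_vout(240.0, r2)
    print(f"{target:4.1f} V -> R2 ~ {r2:6.0f} ohm (check: {check:.2f} V)")
```

In practice the surface temperature reached at each drive level would still need to be measured, since it depends on the lamp, the housing, and the contact material rather than on the voltage alone.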

4. Testing the Haptic Interface

- Selective Stimulation: The design leverages the density of Meissner’s corpuscles and Merkel discs in the fingertips for texture discrimination (magnitude), while thermoreceptors (C and A-delta fibers) decode spectral information (color).

- Kinesthetic Perception: By utilizing the Scorpius asterism, kinematic exploration is encouraged, allowing the user to construct a spatial mental map based on the relative position of the actuators.

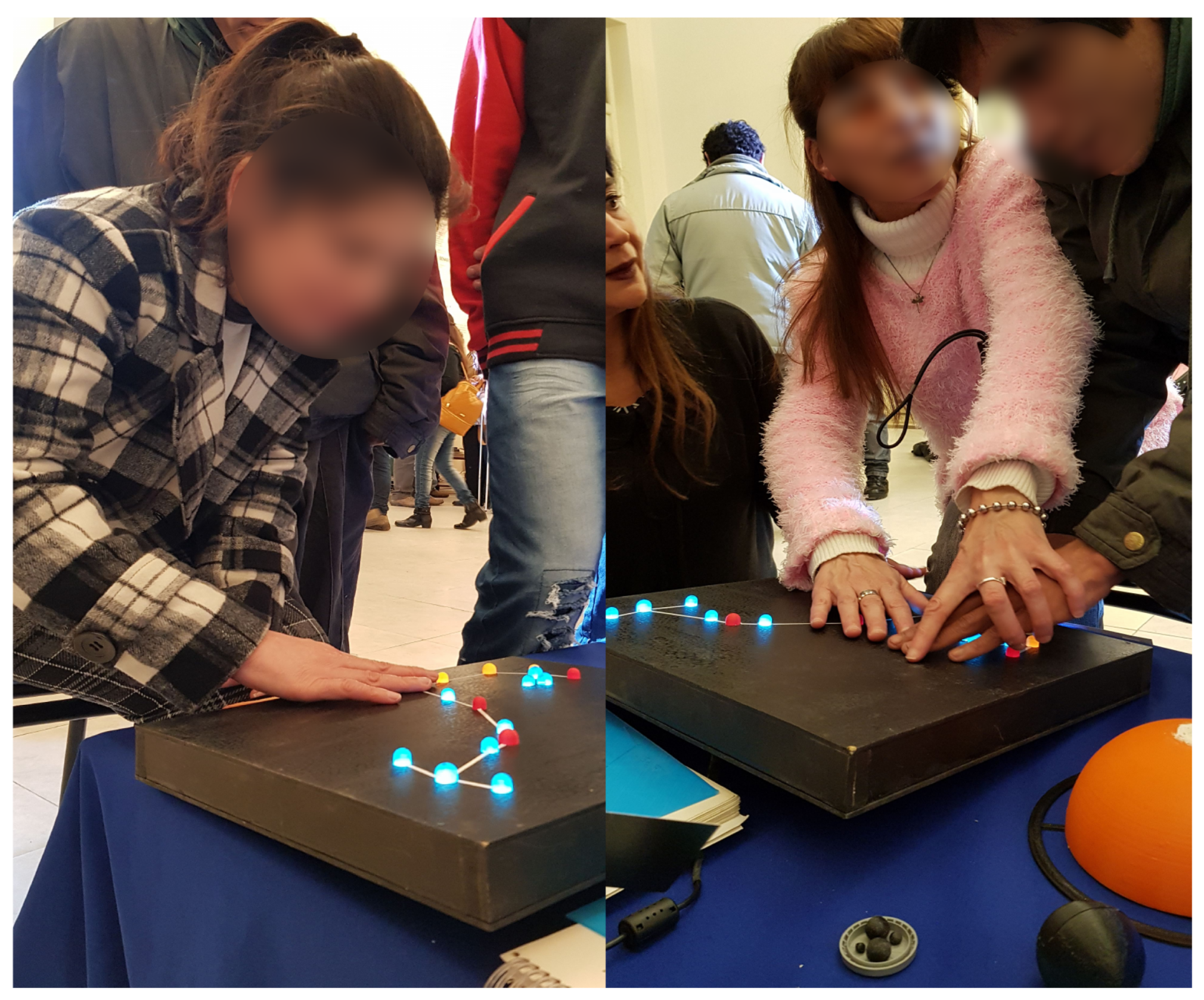

5. Pilot Qualitative Study with a Target User Group

- What did you think of the multisensory materials you used?

- What is your opinion about the relief map of the constellations?

- What is your opinion about the thermal constellation?

- Are there any aspects you would like to see improved?

- What do you think you have learned from these models?

- What would you change about their presentation?

- Do you prefer models that are only tactile, or does the addition of thermal elements aid understanding?

- What do you think about the sound effects for the colors of the stars or nebulae?

- Did working with these models change your relationship with astronomy or your understanding of science?

- reading the responses (in some cases, transcription from audio to text was needed) to identify units of meaning based on the participants’ perceptions of tactile, thermal, and auditory stimuli;

- grouping common themes into three dimensions: usability (simplicity, novelty, synergy between senses), pedagogical effectiveness (understanding and learning of scientific concepts), and emotional impact (inclusion, perception of science);

- analyzing multimodality by comparing single-stimulus and combined-stimulus models (relief only, relief-thermal, relief-sound);

- and finally, inquiring into difficulties encountered and needs for change and/or improvement.

From the raw responses, or from the data in the final categories, tables and matrices were used to arrive at the final numerical results.
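The step from coded responses to numerical results can be sketched as a simple tally. The example below uses the six improvement comments reported in the results table of this study; the keyword-based coding rule and the category names are hypothetical stand-ins for the analysts' manual coding, so the sketch illustrates the bookkeeping only.

```python
from collections import Counter

# Improvement comments per participant, as reported in the results table.
improvement = {
    "U1": "Nothing to change.",
    "U2": "Nothing to change.",
    "U3": "Nothing to change.",
    "U4": "Add smell and tactile signage.",
    "U5": "More time for exploration.",
    "U6": "Loved the course.",
}


def code_response(text: str) -> str:
    """Assign one category to a comment (hypothetical keyword rule)."""
    lowered = text.lower()
    if "nothing to change" in lowered:
        return "no change requested"
    if lowered.startswith(("add ", "more ")):
        return "suggestion"
    return "other comment"


# Participant-by-category coding, then the tally used for reporting.
codes = {pid: code_response(text) for pid, text in improvement.items()}
tally = Counter(codes.values())

for category, count in tally.most_common():
    print(f"{category}: {count}/{len(codes)}")
```

With only six participants, such counts are descriptive and are best read alongside the verbatim responses.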

5.1. Results of the Pilot Study

‘I thought the sky was off-limits to us, but it wasn’t. Working with these models made me feel included, included in science, and changed my whole perspective, showing me that I could access this knowledge. I felt like I was entering such a beautiful yet mysterious world’.

‘Before learning about these models, I thought astronomy was impossible for us, except perhaps through documentaries. These models changed my perception of astronomy and science. It’s very clever to incorporate thermal and sound data, which are fundamental for us. Regarding science, this can help change other aspects; it could be a starting point for accessing other topics’.

‘It was eye-opening to see how many mistakes I had made, and in some ways, I wish I had had the knowledge from the beginning so I wouldn’t have wasted that time. I love that I’m not the same person I was when I started the workshop’.

6. Conclusions

Author Contributions

Funding

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest


| Star ID | Mag | LED Size | SpT/Color |
|---|---|---|---|
| alpha | 1.6 | 10 | K-red |
| lambda | 1.6 | 10 | B-blue |
| theta | 1.9 | 8 | A-white/yellow |
| delta | 2.3 | 8 | B-blue |
| epsilon | 2.3 | 8 | K-red |
| kappa | 2.4 | 8 | B-blue |
| beta1 | 2.6 | 5 | B-blue |
| nu | 2.7 | 5 | B-blue |
| tau | 2.8 | 5 | B-blue |
| pi | 2.9 | 5 | B-blue |
| sigma | 3 | 5 | B-blue |
| iota1 | 3 | 5 | F-yellow |
| mu1 | 3 | 5 | B-blue |
| G | 3.2 | 5 | K-red |
| eta | 3.3 | 5 | F-yellow |
| zeta2 | 3.6 | 5 | K-red |
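The star table suggests a binned mapping from apparent magnitude to LED size. A minimal sketch of such a mapping follows; the cut points 1.75 and 2.5 are assumptions (midpoints between the tabulated groups), not values stated in the source, and the function name is hypothetical. The sketch checks itself against the tabulated rows.

```python
# Rows from the star table: (star, magnitude, LED size, spectral type letter).
STARS = [
    ("alpha", 1.6, 10, "K"), ("lambda", 1.6, 10, "B"), ("theta", 1.9, 8, "A"),
    ("delta", 2.3, 8, "B"), ("epsilon", 2.3, 8, "K"), ("kappa", 2.4, 8, "B"),
    ("beta1", 2.6, 5, "B"), ("nu", 2.7, 5, "B"), ("tau", 2.8, 5, "B"),
    ("pi", 2.9, 5, "B"), ("sigma", 3.0, 5, "B"), ("iota1", 3.0, 5, "F"),
    ("mu1", 3.0, 5, "B"), ("G", 3.2, 5, "K"), ("eta", 3.3, 5, "F"),
    ("zeta2", 3.6, 5, "K"),
]

# Color labels as they appear in the SpT/Color column of the table.
COLOR_BY_TYPE = {"B": "blue", "A": "white/yellow", "F": "yellow", "K": "red"}


def led_size_for_magnitude(mag: float) -> int:
    """Map magnitude to LED size; brighter stars (smaller mag) get larger LEDs.

    The cut points 1.75 and 2.5 are assumed, chosen to reproduce the table.
    """
    if mag < 1.75:
        return 10
    if mag < 2.5:
        return 8
    return 5


# Verify that the assumed bins reproduce every tabulated row.
mismatches = [
    name for name, mag, size, _ in STARS if led_size_for_magnitude(mag) != size
]
print("rows not reproduced by the assumed bins:", mismatches)

for name, mag, _, sp_type in STARS[:3]:
    print(name, led_size_for_magnitude(mag), COLOR_BY_TYPE[sp_type])
```

Because magnitudes between the tabulated groups do not occur in the table, any cut point inside those gaps would reproduce the same assignments.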

| ID | Complete Answer | User Experience / Sensorial Dimension: Tactile | User Experience / Sensorial Dimension: Thermal | User Experience / Sensorial Dimension: Sonification | User Experience / Sensorial Dimension: Synergy |
|---|---|---|---|---|---|
| U1 | "I thought the sky was off-limits to us, but it wasn’t. Working with these models made me feel included..." | I thought they were very good. | The thermal component completes the touch model. | Sound was very effective for learning concepts like color. | I thought the combination of materials was perfect. |
| U2 | "Before learning about these models, I thought astronomy was impossible for us..." | I thought the materials were perfect. | The thermal aspect was innovative. | Sound complements the other senses. | A perfect combination. |
| U3 | "I had never thought that there are many things that blind people cannot even imagine..." | I thought the materials were wonderful. | The thermal feature was the icing on the cake! | It is very interesting. | I saw more by touching than by looking at the sky! |
| U4 | "Until now, astronomy was, in my opinion, a science for experts..." | Very useful. | Interesting. | I didn’t know the possibility of sound reinforcement existed. | I thought the multisensory materials were good. |
| U5 | "Working with these models changed my relationship with astronomy and science..." | The relief map helped a lot to understand the constellations. | The thermal constellations helped to identify colors. | The sound system is very effective for understanding brightness. | Method helped to build a mental image of the objects. |
| U6 | "I learned things I never imagined I could learn." | Good. | The combination of tactile relief with thermal sensations was enriching. | Interesting, because I’d never heard of such data before. | The multisensory proposal is very exciting. |

| ID | Cognitive Dimension: New Learning | Cognitive Dimension: Understanding Structures | Cognitive Dimension: Ideas about Astronomy | Emotional Dimension: Interest | Emotional Dimension: Inclusion | Emotional Dimension: View of Science | Improvement: Critiques |
|---|---|---|---|---|---|---|---|
| U1 | Understood color/temp relationship. | Interested in galaxies and nebulae shapes. | Changed many concepts. | Exceeded expectations. | Felt totally included. | Now I know I can understand the universe. | Nothing to change. |
| U2 | Improved perception of stars. | Sound effects allowed knowing what nebulae are like. | Brought abstract topics closer. | Very interesting proposal. | Felt very included. | Changed perception of science in general. | Nothing to change. |
| U3 | Corrected bad concepts in all topics. | — | Eye-opening to realize past errors. | Felt very motivated. | "I could see, and I felt very included." | Changed my view of science. | Nothing to change. |
| U4 | Constellations topic was new and enriching. | Interpreted through touch. | As an artist, learned true color formation. | Inspired to communicate to others. | Felt very comfortable. | Learned to see from another perspective. | Add smell and tactile signage. |
| U5 | Relationship between brightness, distance and color. | Recognized extended objects like nebulae. | Changed relationship with research. | Very excited to know more. | Felt very included. | Changed relationship with science. | More time for exploration. |
| U6 | Novel topics related to celestial objects. | — | Sound effects for chemical composition. | Enriching experience. | Felt very included. | Made me think beyond the workshop. | Loved the course. |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).