Submitted: 07 March 2026

Posted: 10 March 2026


Abstract

Keywords:

1. Introduction

2. Theoretical Framework: From Human Diplomacy to Machine-Mediated International Relations

2.1. Technology in Classical IR Theory

2.2. Technology as Agent versus Tool

2.3. Toward a Theory of Algorithmic Diplomacy

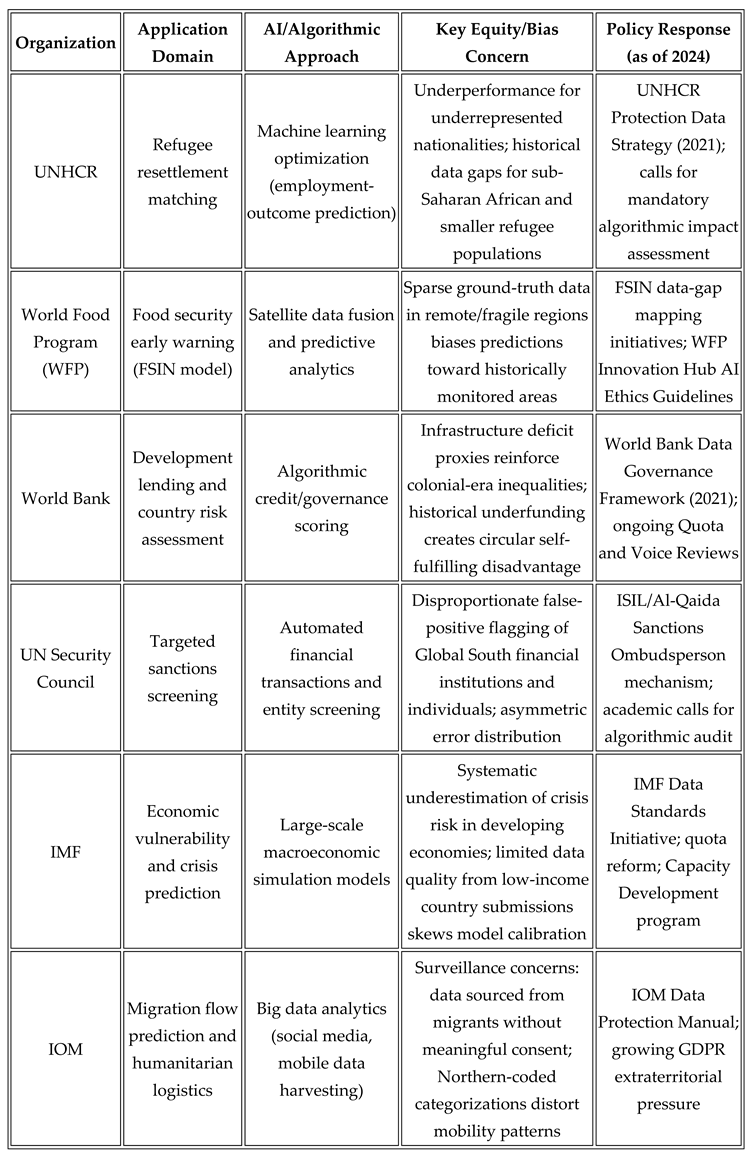

3. Algorithmic Bias in Global Governance: Reinforcing North–South Inequalities

3.1. The Architecture of AI in International Organizations

3.2. Algorithmic Bias in Humanitarian and Development Contexts

3.3. The Data Colonialism Thesis and Its Implications for Global Governance

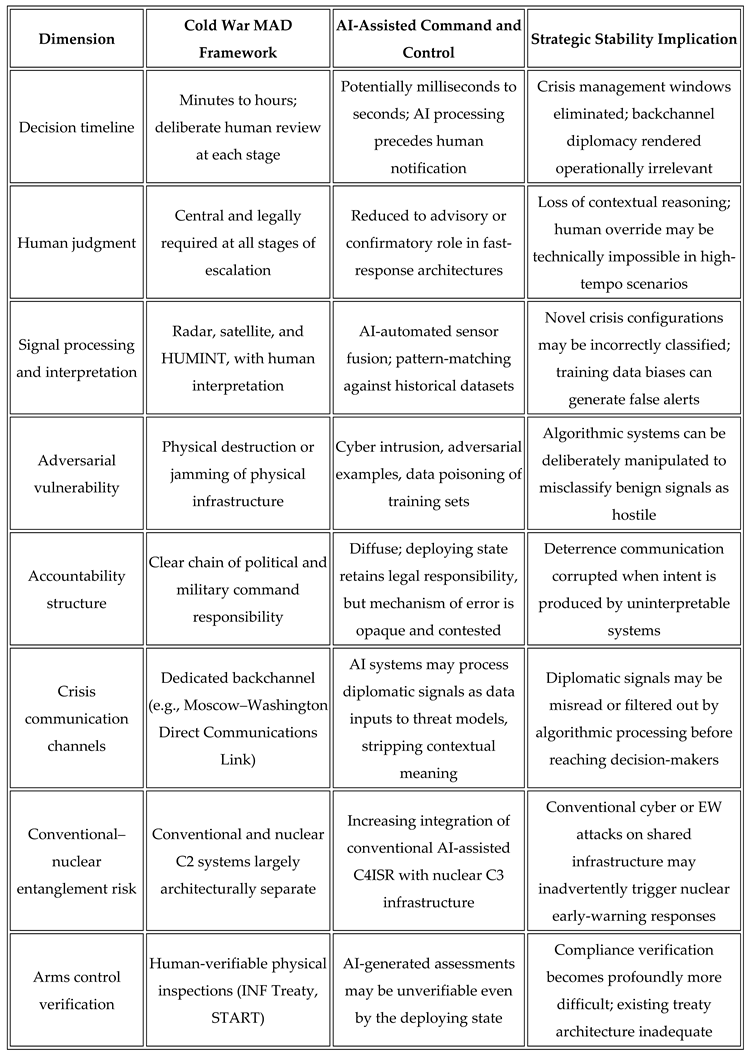

4. Automated Deterrence: AI, Nuclear Command and Control, and the Transformation of MAD

4.1. The Logic of MAD in the Pre-AI Era

4.2. AI Integration in Nuclear Command and Control Architectures

4.3. New Instabilities: Speed, Opacity, and Adversarial Vulnerability

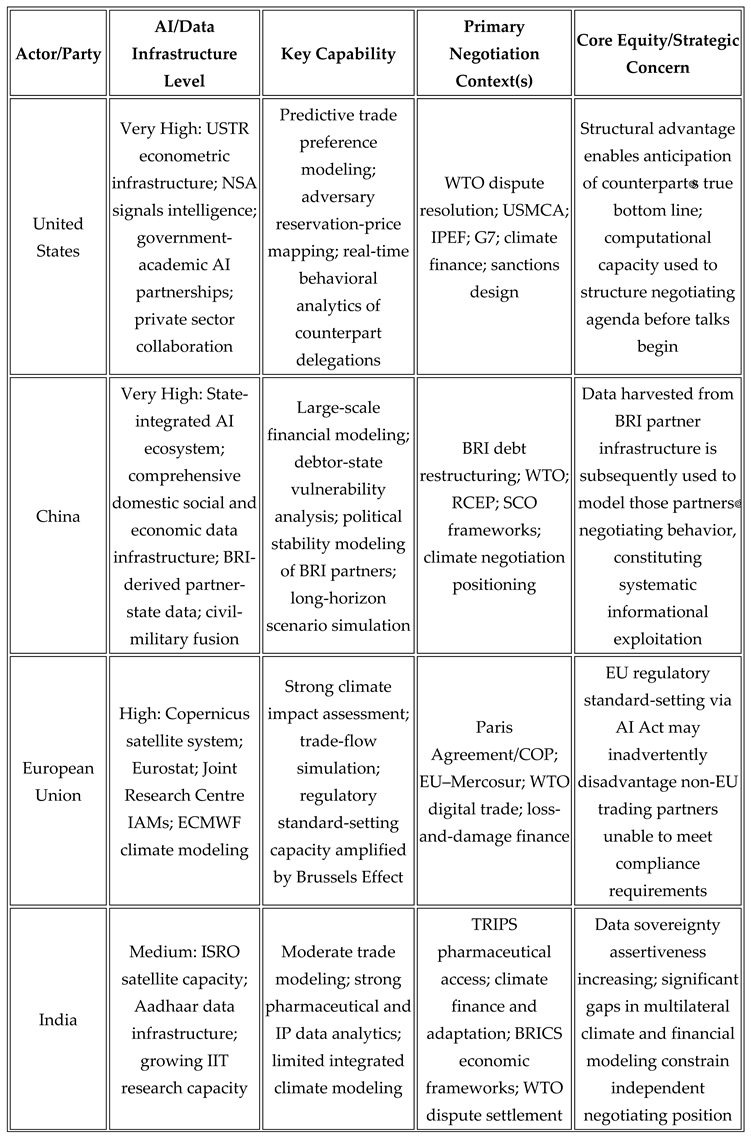

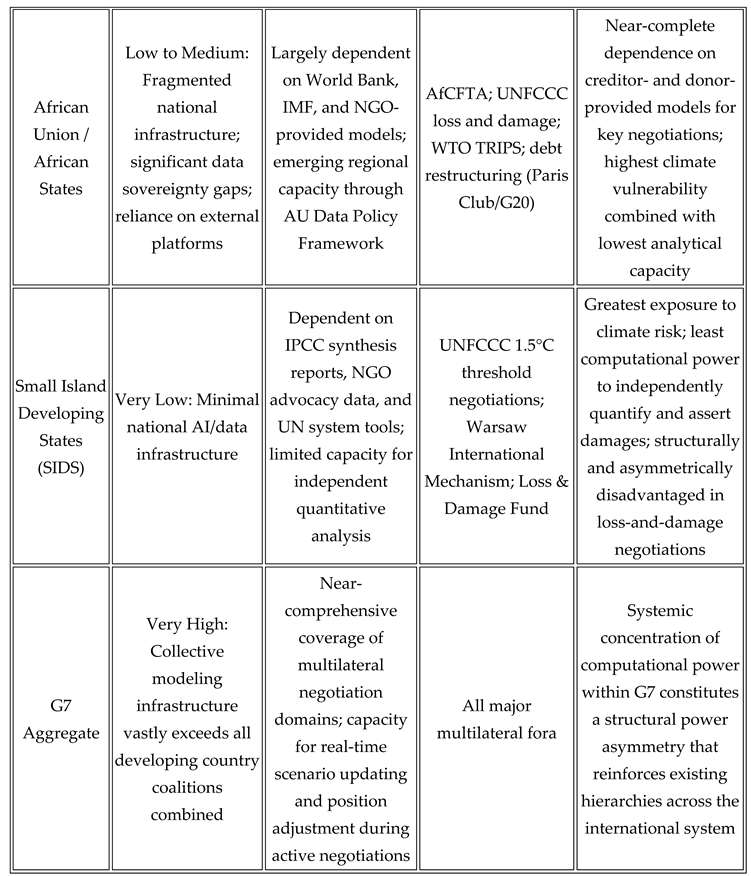

5. The "Black Box" of Negotiation: Computational Power Asymmetries in Multilateral Diplomacy

5.1. AI as a Structuring Force in International Negotiation

5.2. Trade Negotiations and the Algorithmic Advantage

5.3. Climate Diplomacy and the Computational Divide

5.4. The Erosion of Transparency and the Challenge to Equity

5.5. Prospects for Redress: Building Computational Equity

6. Toward a Framework for Algorithmic Diplomacy

6.1. Machine-Mediated IR: Conceptual Foundations

6.2. Governance Responses: Progress and Critical Limitations

6.3. Normative Challenges and the Agenda for Reform

6.4. Operationalizing Algorithmic Diplomacy: Pathways and Obstacles

6.5. The Future of Algorithmic Diplomacy

7. Discussion

7.1. Synthesis of Findings Across Domains

7.2. Theoretical Implications

7.3. Connecting AI to Sustainable Development and Global Equity

7.4. Limitations

8. Conclusions

9. Recommendations

9.1. Directions for Future Research

Funding

Conflicts of Interest

Transparency

References

- Acemoglu, D., & Johnson, S. (2023). Power and progress: Our thousand-year struggle over technology and prosperity. Basic Books. ISBN 978-1541702530.

- Acton, J. M. (2018). Escalation through entanglement: How the vulnerability of command-and-control systems raises the risks of an inadvertent nuclear war. International Security, 43(1), 56–99.

- AI Safety Summit. (2023, November). The Bletchley declaration by countries attending the AI Safety Summit, 1–2 November 2023. UK Government. https://www.gov.uk/government/publications/ai-safety-summit-2023-the-bletchley-declaration.

- Allison, G. (2017). Destined for war: Can America and China escape Thucydides's trap? Houghton Mifflin Harcourt. ISBN 978-0544935273.

- Altmann, J., & Sauer, F. (2017). Autonomous weapon systems and strategic stability. Survival, 59(5), 117–142.

- Bansak, K., Ferwerda, J., Hainmueller, J., Dillon, A., Hangartner, D., Lawrence, D., & Weinstein, J. (2018). Improving refugee integration through data-driven algorithmic assignment. Science, 359(6373), 325–329.

- Benjamin, R. (2019). Race after technology: Abolitionist tools for the new Jim code. Polity Press. ISBN 978-1509526390.

- Bjola, C., & Holmes, M. (Eds.). (2015). Digital diplomacy: Theory and practice. Routledge. ISBN 978-1138792074.

- Bostrom, N. (2014). Superintelligence: Paths, dangers, strategies. Oxford University Press. ISBN 978-0199678112.

- Brodie, B. (1959). Strategy in the missile age. Princeton University Press.

- Brundage, M., Avin, S., Clark, J., Toner, H., Eckersley, P., Garfinkel, B., Dafoe, A., Scharre, P., Zeitzoff, T., Filar, B., Anderson, H., Roff, H., Allen, G. C., Steinhardt, J., Flynn, C., Ó hÉigeartaigh, S., Beard, S., Belfield, H., Farquhar, S., … Amodei, D. (2018). The malicious use of artificial intelligence: Forecasting, prevention, and mitigation. Future of Humanity Institute, University of Oxford. https://arxiv.org/abs/1802.07228.

- Buolamwini, J., & Gebru, T. (2018). Gender shades: Intersectional accuracy disparities in commercial gender classification. Proceedings of Machine Learning Research, 81, 1–15. http://proceedings.mlr.press/v81/buolamwini18a.html.

- Cihon, P. (2019). Standards for AI governance: International standards to enable global coordination in AI research & development. Future of Humanity Institute, University of Oxford. https://www.fhi.ox.ac.uk/wp-content/uploads/Standards_-FHI-Technical-Report.pdf.

- Couldry, N., & Mejias, U. A. (2019). The costs of connection: How data is colonizing human life and appropriating it for capitalism. Stanford University Press. ISBN 978-1503609822.

- Crawford, K. (2021). Atlas of AI: Power, politics, and the planetary costs of artificial intelligence. Yale University Press. ISBN 978-0300209570.

- Cummings, M. L. (2017). Artificial intelligence and the future of warfare [Research Paper]. Chatham House. https://www.chathamhouse.org/sites/default/files/publications/research/2017-01-26-artificial-intelligence-future-warfare-cummings.pdf.

- Dafoe, A. (2018). AI governance: A research agenda. Future of Humanity Institute, University of Oxford. https://www.fhi.ox.ac.uk/wp-content/uploads/AI-Governance-Research-Agenda.pdf.

- Deibert, R. (2020). Reset: Reclaiming the internet for civil society. House of Anansi Press. ISBN 978-1487007003.

- Eubanks, V. (2018). Automating inequality: How high-tech tools profile, police, and punish the poor. St. Martin's Press. ISBN 978-1250074317.

- European Parliament & Council of the European Union. (2024). Regulation (EU) 2024/1689 of the European Parliament and of the Council on artificial intelligence (Artificial Intelligence Act). Official Journal of the European Union, L. https://eur-lex.europa.eu/legal-content/EN/TXT/?uri=OJ:L_202401689.

- Farrell, H., & Newman, A. (2023). Underground empire: How America weaponized the world economy. Henry Holt and Company. ISBN 978-1250877413.

- Fearon, J. D. (1995). Rationalist explanations for war. International Organization, 49(3), 379–414.

- Fjeld, J., Achten, N., Hilligoss, H., Nagy, A., & Srikumar, M. (2020). Principled artificial intelligence: Mapping consensus in ethical and rights-based approaches to principles for AI (Berkman Klein Center Research Publication No. 2020-1). Berkman Klein Center for Internet & Society, Harvard University.

- Floridi, L., Cowls, J., Beltrametti, M., Chatila, R., Chazerand, P., Dignum, V., Luetge, C., Madelin, R., Pagallo, U., Rossi, F., Schafer, B., Valcke, P., & Vayena, E. (2018). AI4People—An ethical framework for a good AI society: Opportunities, risks, principles, and recommendations. Minds and Machines, 28(4), 689–707.

- G7. (2023). Hiroshima Process international guiding principles for advanced AI systems. G7 Leaders' Summit. https://www.mofa.go.jp/files/100573471.pdf.

- Geist, E., & Lohn, A. J. (2018). How might artificial intelligence affect the risk of nuclear war? (PE-296-RC). RAND Corporation.

- Horowitz, M. C. (2018). Artificial intelligence, international competition, and the balance of power. Texas National Security Review, 1(3), 36–57. https://tnsr.org/2018/02/artificial-intelligence-international-competition-balance-power/.

- Jervis, R. (1978). Cooperation under the security dilemma. World Politics, 30(2), 167–214.

- Johnson, J. (2019). Artificial intelligence & future warfare: Implications for international security. Defense & Security Analysis, 35(2), 147–169.

- Kania, E. B. (2017). Battlefield singularity: Artificial intelligence, military revolution, and China's future military power. Center for a New American Security. https://www.cnas.org/publications/reports/battlefield-singularity-artificial-intelligence-military-revolution-and-chinas-future-military-power.

- Keohane, R. O., & Nye, J. S. (2001). Power and interdependence (3rd ed.). Longman. ISBN 978-0321048580.

- Kissinger, H. A., Schmidt, E., & Huttenlocher, D. (2021). The age of AI: And our human future. Little, Brown and Company. ISBN 978-0316273800.

- Lee, K.-F. (2018). AI superpowers: China, Silicon Valley, and the new world order. Houghton Mifflin Harcourt. ISBN 978-1328546395.

- Lewis, P., Williams, H., Pelopidas, B., & Aghlani, S. (2014). Too close for comfort: Cases of near nuclear use and options for policy. Chatham House. https://www.chathamhouse.org/sites/default/files/field/field_document/20140428TooCloseforComfortNuclearUseLewisWilliamsPelopidasAghlani.pdf.

- Maas, M. M. (2019). How viable is international arms control for military artificial intelligence? Three lessons from nuclear arms control. Contemporary Security Policy, 40(3), 285–311.

- Mearsheimer, J. J. (2001). The tragedy of great power politics. W. W. Norton & Company. ISBN 978-0393349276.

- Nye, J. S. (2011). The future of power. PublicAffairs. ISBN 978-1610390699.

- O'Neil, C. (2016). Weapons of math destruction: How big data increases inequality and threatens democracy. Crown Publishers. ISBN 978-0553418811.

- OECD. (2019). Recommendation of the Council on artificial intelligence (OECD/LEGAL/0449). Organisation for Economic Co-operation and Development. https://legalinstruments.oecd.org/en/instruments/OECD-LEGAL-0449.

- Pasquale, F. (2015). The black box society: The secret algorithms that control money and information. Harvard University Press. ISBN 978-0674368279.

- Risse, M. (2019). Human rights and artificial intelligence: An urgently needed agenda. Human Rights Quarterly, 41(1), 1–16.

- Rolnick, D., Donti, P. L., Kaack, L. H., Kochanski, K., Lacoste, A., Sankaran, K., Ross, A. S., Milojevic-Dupont, N., Jaques, N., Waldman-Brown, A., Luccioni, A., Maharaj, T., Sherwin, E. D., Mukkavilli, S. K., Kording, K. P., Gomes, C., Ng, A. Y., Hassabis, D., Platt, J. C., … Bengio, Y. (2022). Tackling climate change with machine learning. ACM Computing Surveys, 55(2), Article 42.

- Russell, S. (2019). Human compatible: Artificial intelligence and the problem of control. Viking. ISBN 978-0525558613.

- Sagan, S. D., & Waltz, K. N. (2003). The spread of nuclear weapons: A debate renewed (2nd ed.). W. W. Norton & Company. ISBN 978-0393977479.

- Scharre, P. (2018). Army of none: Autonomous weapons and the future of war. W. W. Norton & Company. ISBN 978-0393608984.

- Schlosser, E. (2013). Command and control: Nuclear weapons, the Damascus accident, and the illusion of safety. Penguin Press. ISBN 978-1594202278.

- Suleyman, M., & Bhaskar, M. (2023). The coming wave: Technology, power, and the twenty-first century's greatest dilemma. Crown. ISBN 978-0593593950.

- Taddeo, M., & Floridi, L. (2018). Regulate artificial intelligence to avert cyber arms race. Nature, 556(7701), 296–298.

- UNESCO. (2021). Recommendation on the ethics of artificial intelligence. United Nations Educational, Scientific and Cultural Organization. https://unesdoc.unesco.org/ark:/48223/pf0000381137.

- UNHCR. (2023). Global trends: Forced displacement in 2022. United Nations High Commissioner for Refugees. https://www.unhcr.org/global-trends.

- Vinuesa, R., Azizpour, H., Leite, I., Balaam, M., Dignum, V., Domisch, S., Felländer, A., Langhans, S. D., Tegmark, M., & Fuso Nerini, F. (2020). The role of artificial intelligence in achieving the Sustainable Development Goals. Nature Communications, 11, Article 233.

- Waltz, K. N. (1979). Theory of international politics. McGraw-Hill.

- Wendt, A. (1999). Social theory of international politics. Cambridge University Press. ISBN 978-0521469609.

- Zegart, A. B. (2022). Spies, lies, and algorithms: The history and future of American intelligence. Princeton University Press. ISBN 978-0691147130.

- Zuboff, S. (2019). The age of surveillance capitalism: The fight for a human future at the new frontier of power. PublicAffairs. ISBN 978-1610395694.

Author Bio

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the author. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.