1. Introduction

The Box–Cox transformation is widely used to stabilize variance, induce approximate normality, and facilitate Gaussian modelling in transformed coordinates. Within this framework, linear relationships are often assumed to hold in the transformed space and are subsequently mapped back to the original scale through inverse transformation.

However, the inverse mapping does not, in general, preserve conditional functional structure. Even when a strictly linear relationship holds after transformation, the conditional expectation in the original scale differs systematically from the inverse transform of the linear predictor.

The Box–Cox transformation is closely linked to the Power Normal distribution family introduced in [1]. The Power Normal framework has been studied extensively in the context of transformation-based Gaussian modelling [3,4,5,7,8]. Empirical investigations within this framework, including recent studies on the Power Normal distribution, have reported that inverse-transformed regression curves often provide excellent central approximations but exhibit systematic deviations in the tails [6]. Such behaviour suggests that the discrepancy is not merely numerical but structural. The present paper provides a formal explanation of this phenomenon by deriving an explicit second-order decomposition of the conditional expectation under nonlinear inverse transformation.

The purpose of this paper is to formally quantify this structural distortion. We derive an explicit analytical expression for the discrepancy between the true conditional expectation and the inverse-transformed linear model. The resulting distortion term depends explicitly on the curvature of the inverse transformation and the conditional variance. This phenomenon is examined in general form and then specialized to the Power Normal framework.

More importantly, the result reveals that the discrepancy is not caused by model misspecification or estimation error, but is an intrinsic structural consequence of applying a nonlinear inverse transformation to a linear Gaussian relationship.

The main contribution of this paper is the derivation of an explicit analytical decomposition of the conditional expectation in the original scale under inverse Box–Cox transformation. The result shows that the conditional mean consists of the inverse-transformed linear predictor plus a curvature-driven correction term proportional to the conditional variance. This provides a formal explanation for the systematic deviations observed empirically in transformation-based regression models.

2. Box–Cox Transformation and Inverse Geometry

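For $\lambda \neq 0$, the Box–Cox transformation is defined by

$$T_\lambda(x) = \frac{x^\lambda - 1}{\lambda}, \qquad x > 0,$$

with inverse

$$T_\lambda^{-1}(z) = (1 + \lambda z)^{1/\lambda}, \qquad 1 + \lambda z > 0.$$

For $\lambda = 0$, the transformation reduces to the logarithmic case, $T_0(x) = \log x$, with inverse $T_0^{-1}(z) = e^{z}$.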

The inverse mapping is nonlinear whenever $\lambda \neq 1$. Its curvature plays a central role in the structural properties of conditional expectations under inverse transformation.

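The first and second derivatives of the inverse are given by

$$(T_\lambda^{-1})'(z) = (1 + \lambda z)^{1/\lambda - 1}, \qquad (T_\lambda^{-1})''(z) = (1 - \lambda)\,(1 + \lambda z)^{1/\lambda - 2},$$

both of which reduce to $e^{z}$ when $\lambda = 0$.

Hence, since $(T_\lambda^{-1})''$ vanishes identically if and only if $\lambda = 1$, $T_\lambda^{-1}$ is linear only for $\lambda = 1$, and strictly nonlinear otherwise.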
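The closed-form derivatives can be checked numerically. The following Python sketch is illustrative only: the function names (`box_cox`, `inv_box_cox`, `inv_box_cox_d1`, `inv_box_cox_d2`) are hypothetical, the $\lambda = 0$ branch is treated as the logarithmic case, and the derivatives are compared with central finite differences.

```python
import math


def _admissible_base(z: float, lam: float) -> float:
    """Return 1 + lam*z, raising ValueError outside the domain of the inverse map."""
    base = 1.0 + lam * z
    if base <= 0.0:
        raise ValueError("the inverse Box-Cox map requires 1 + lam*z > 0")
    return base


def box_cox(x: float, lam: float) -> float:
    """T_lambda(x) = (x**lam - 1) / lam for lam != 0, and log(x) for lam == 0."""
    if x <= 0.0:
        raise ValueError("the Box-Cox transform requires x > 0")
    return math.log(x) if lam == 0 else (x ** lam - 1.0) / lam


def inv_box_cox(z: float, lam: float) -> float:
    """T_lambda^{-1}(z) = (1 + lam*z)**(1/lam); exp(z) when lam == 0."""
    if lam == 0:
        return math.exp(z)
    return _admissible_base(z, lam) ** (1.0 / lam)


def inv_box_cox_d1(z: float, lam: float) -> float:
    """First derivative of the inverse: (1 + lam*z)**(1/lam - 1)."""
    if lam == 0:
        return math.exp(z)
    return _admissible_base(z, lam) ** (1.0 / lam - 1.0)


def inv_box_cox_d2(z: float, lam: float) -> float:
    """Second derivative of the inverse: (1 - lam) * (1 + lam*z)**(1/lam - 2)."""
    if lam == 0:
        return math.exp(z)
    return (1.0 - lam) * _admissible_base(z, lam) ** (1.0 / lam - 2.0)


if __name__ == "__main__":
    lam, z, h = 0.3, 1.2, 1e-4
    # Central finite differences as an independent check of the closed forms.
    fd1 = (inv_box_cox(z + h, lam) - inv_box_cox(z - h, lam)) / (2 * h)
    fd2 = (inv_box_cox(z + h, lam) - 2 * inv_box_cox(z, lam) + inv_box_cox(z - h, lam)) / h**2
    print("first derivative: ", fd1, inv_box_cox_d1(z, lam))
    print("second derivative:", fd2, inv_box_cox_d2(z, lam))
    print("round trip:       ", box_cox(inv_box_cox(z, lam), lam))  # approximately z
```

For $\lambda = 1$, `inv_box_cox_d2` returns exactly zero, in line with the inverse map being affine in that case.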

3. Main Theoretical Result

Let $X, Y > 0$ be random variables and define $U = T_\lambda(X)$ and $V = T_\lambda(Y)$.

We explicitly allow .

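Assume now that the transformed variables satisfy a bivariate normal structure:

$$\begin{pmatrix} U \\ V \end{pmatrix} \sim N_2\!\left( \begin{pmatrix} \mu_U \\ \mu_V \end{pmatrix}, \begin{pmatrix} \sigma_U^2 & \rho\,\sigma_U\sigma_V \\ \rho\,\sigma_U\sigma_V & \sigma_V^2 \end{pmatrix} \right).$$

Then $V \mid U = u \sim N\big(m(u), s^2\big)$, with linear predictor $m(u) = \mu_V + \rho\,\frac{\sigma_V}{\sigma_U}\,(u - \mu_U)$ and conditional variance $s^2 = \sigma_V^2\,(1 - \rho^2)$.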
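For concreteness, the conditional moments follow from the standard conditioning formulas for a bivariate normal vector with means $\mu_U, \mu_V$, standard deviations $\sigma_U, \sigma_V$ and correlation $\rho$. This is a minimal sketch; the helper `conditional_moments` and the parameter values in the usage example are hypothetical and are not taken from the paper's numerical illustration.

```python
def conditional_moments(u, mu_u, mu_v, sigma_u, sigma_v, rho):
    """Mean m(u) and variance s^2 of V given U = u for a bivariate normal (U, V).

    m(u) = mu_v + rho * (sigma_v / sigma_u) * (u - mu_u)   (linear predictor)
    s^2  = sigma_v**2 * (1 - rho**2)                        (does not depend on u)
    """
    if sigma_u <= 0 or sigma_v <= 0 or not -1.0 <= rho <= 1.0:
        raise ValueError("need sigma_u, sigma_v > 0 and -1 <= rho <= 1")
    m = mu_v + rho * (sigma_v / sigma_u) * (u - mu_u)
    s2 = sigma_v ** 2 * (1.0 - rho ** 2)
    return m, s2


if __name__ == "__main__":
    # Hypothetical parameter values, chosen only for illustration.
    print(conditional_moments(1.5, mu_u=1.0, mu_v=2.0, sigma_u=0.5, sigma_v=0.4, rho=0.8))
```

The conditional variance $s^2$ is constant in $u$; only the linear predictor $m(u)$ moves along the regression line.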

Remark.

Strictly, the Box–Cox inverse map requires $1 + \lambda z > 0$. Thus, a latent Gaussian specification for $U$ and $V$ may need to be interpreted on an admissible region (e.g., via truncation or shifting) to ensure positivity of $X$ and $Y$. This technical constraint does not affect the structural distortion mechanism studied here, which follows from the nonlinearity of $T_\lambda^{-1}$ and the conditional variance in the transformed domain.
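The Gaussian mass that falls outside the admissible region can be quantified directly. The sketch below, with a hypothetical helper `inadmissible_mass`, computes $P(1 + \lambda V \le 0)$ for $V \sim N(\mu, \sigma^2)$, i.e. the mass a truncation or shift would have to remove; it assumes only normality of the marginal.

```python
import math


def std_normal_cdf(t: float) -> float:
    """Phi(t) via the complementary error function (accurate in the lower tail)."""
    return 0.5 * math.erfc(-t / math.sqrt(2.0))


def inadmissible_mass(mu: float, sigma: float, lam: float) -> float:
    """P(1 + lam * V <= 0) for V ~ N(mu, sigma**2)."""
    if sigma <= 0.0:
        raise ValueError("sigma must be positive")
    if lam == 0:
        return 0.0  # exp(z) is defined for every real z
    t = (-1.0 / lam - mu) / sigma
    if lam > 0:
        return std_normal_cdf(t)   # 1 + lam*V <= 0  <=>  V <= -1/lam
    return std_normal_cdf(-t)      # lam < 0: 1 + lam*V <= 0  <=>  V >= -1/lam


if __name__ == "__main__":
    for lam in (-0.5, 0.0, 0.5):
        print(lam, inadmissible_mass(mu=1.0, sigma=0.5, lam=lam))
```

With these hypothetical values the excluded mass is negligible for $\lambda = 0.5$ (about $10^{-9}$) but roughly 2% for $\lambda = -0.5$, which indicates when the truncation in the Remark matters in practice.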

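Proposition 1.

Let $(U, V)$ be bivariate normal as above, let $u = T_\lambda(x)$, and let $m = m(u)$ and $s^2$ denote the conditional mean and variance of $V$ given $U = u$. Then the conditional expectation satisfies

$$\mathbb{E}[Y \mid X = x] = T_\lambda^{-1}(m) + \tfrac{1}{2}\,(T_\lambda^{-1})''(m)\,s^2 + R.$$

In particular, whenever $s^2 > 0$, the inverse-transformed linear predictor $T_\lambda^{-1}(m)$ does not coincide with the true conditional mean unless $\lambda = 1$.

The remainder term $R$ is of higher order with respect to the conditional variance $s^2$: because the odd central moments of a Gaussian vanish, its leading contribution is of order $s^4$.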

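Proof. Since $Y = T_\lambda^{-1}(V)$ and the event $\{X = x\}$ coincides with $\{U = u\}$, it suffices to compute $\mathbb{E}[T_\lambda^{-1}(V) \mid U = u]$. A second-order Taylor expansion of $T_\lambda^{-1}(V)$ around $m$ yields

$$T_\lambda^{-1}(V) = T_\lambda^{-1}(m) + (T_\lambda^{-1})'(m)\,(V - m) + \tfrac{1}{2}\,(T_\lambda^{-1})''(m)\,(V - m)^2 + r(V),$$

where $r(V)$ collects the terms of order three and higher in $V - m$. Taking conditional expectations given $U = u$ and using $\mathbb{E}[V - m \mid U = u] = 0$ and $\mathbb{E}[(V - m)^2 \mid U = u] = s^2$ yields the result, with $R = \mathbb{E}[r(V) \mid U = u]$. □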
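Proposition 1 can be sanity-checked in two cases where the conditional mean is available in closed form. For $\lambda = 1/2$ the inverse map $(1 + v/2)^2$ is quadratic, so the second-order expansion is exact; for $\lambda = 0$ the conditional mean is the lognormal mean $e^{m + s^2/2}$, and the remainder should behave like $e^{m} s^4/8$. The helper names are hypothetical; this is a numerical check, not the authors' code.

```python
import math


def inv_box_cox(z: float, lam: float) -> float:
    """T_lambda^{-1}(z) = (1 + lam*z)**(1/lam); exp(z) when lam == 0."""
    if lam == 0:
        return math.exp(z)
    base = 1.0 + lam * z
    if base <= 0.0:
        raise ValueError("requires 1 + lam*z > 0")
    return base ** (1.0 / lam)


def inv_box_cox_d2(z: float, lam: float) -> float:
    """(T_lambda^{-1})''(z) = (1 - lam) * (1 + lam*z)**(1/lam - 2); exp(z) when lam == 0."""
    if lam == 0:
        return math.exp(z)
    base = 1.0 + lam * z
    if base <= 0.0:
        raise ValueError("requires 1 + lam*z > 0")
    return (1.0 - lam) * base ** (1.0 / lam - 2.0)


def second_order_mean(m: float, s2: float, lam: float) -> float:
    """Proposition 1 without the remainder: T^{-1}(m) + 0.5 * (T^{-1})''(m) * s2."""
    return inv_box_cox(m, lam) + 0.5 * inv_box_cox_d2(m, lam) * s2


if __name__ == "__main__":
    # lam = 1/2: T^{-1}(v) = (1 + v/2)**2 is quadratic, so the expansion is exact:
    # E[(1 + V/2)**2] = (1 + m/2)**2 + s2/4.
    m, s2 = 1.3, 0.09
    print(second_order_mean(m, s2, 0.5), (1 + m / 2) ** 2 + s2 / 4)

    # lam = 0: the exact conditional mean is exp(m + s2/2), and the remainder
    # R = exp(m) * (exp(s2/2) - 1 - s2/2) behaves like exp(m) * s2**2 / 8.
    m, s2 = 0.7, 1e-4
    remainder = math.exp(m + s2 / 2) - second_order_mean(m, s2, 0.0)
    print(remainder / s2**2, math.exp(m) / 8)
```

The second check illustrates the order statement: the remainder divided by $s^4$ approaches a constant as $s^2 \to 0$.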

4. Numerical Illustration

To illustrate the structural distortion established in Proposition 1, we consider a bivariate Power Normal model with positive support. The conditional mean $\mathbb{E}[Y \mid X = x]$ is computed numerically and compared with the inverse-transformed linear predictor obtained from the linear Gaussian structure in the Box–Cox domain. In the numerical illustration we denote the original variables by

and

, so that

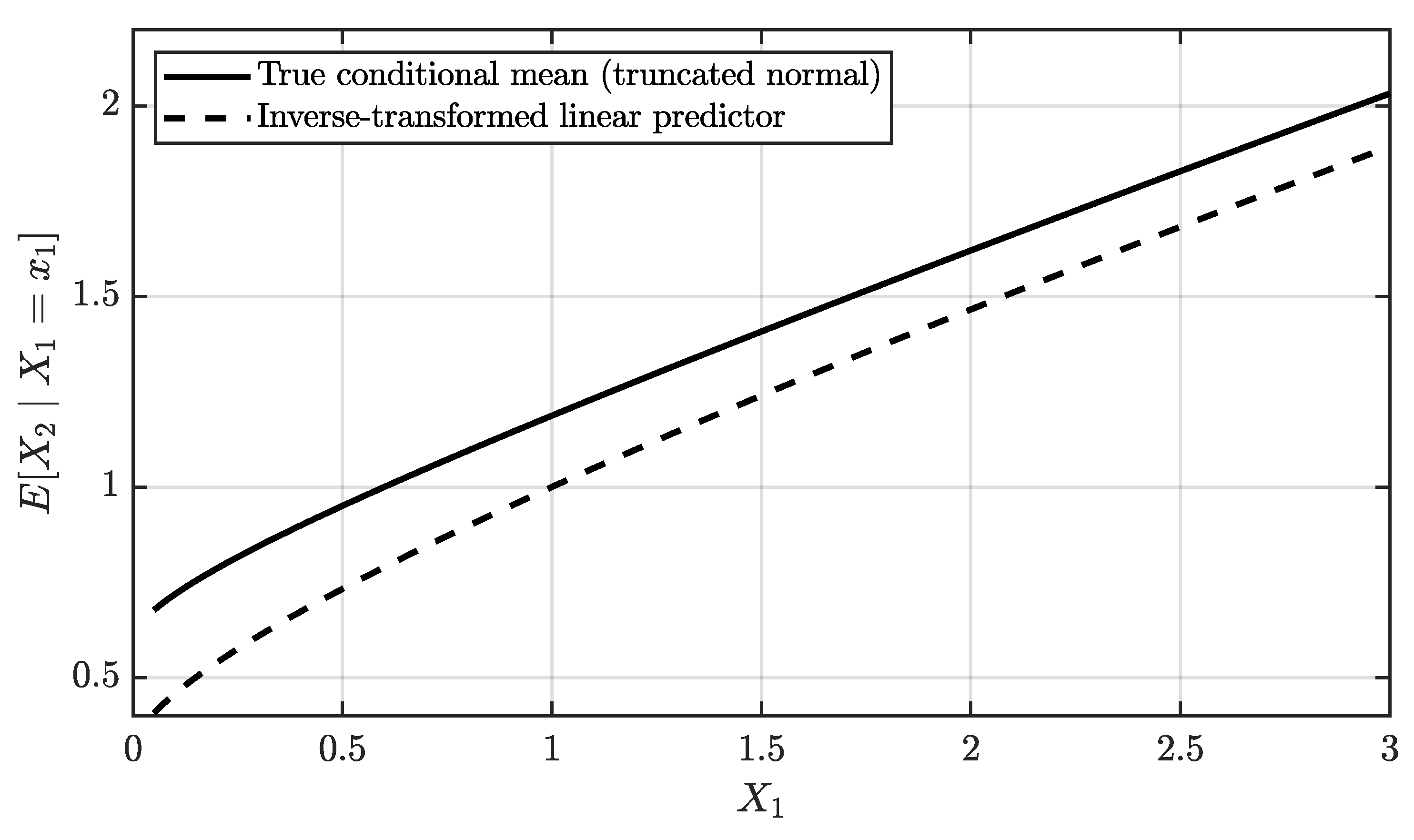

Figure 1 shows that the inverse-transformed linear predictor provides an excellent approximation in the central region. However, near the boundary of the support, the deviation becomes more noticeable, which is consistent with the curvature behaviour of the inverse Box–Cox map.
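A computation of the kind summarised in Figure 1 can be sketched as follows. The parameter values are hypothetical (the settings used for the figures are not stated here), and the conditional mean $\mathbb{E}[T_\lambda^{-1}(m + sZ)]$, $Z \sim N(0,1)$, is evaluated with a plain trapezoidal rule on $[-8, 8]$; this integration scheme is an assumption of the sketch, not a description of how the figures were produced. An error is raised if an integration point leaves the admissible region instead of silently truncating.

```python
import math


def box_cox(x: float, lam: float) -> float:
    return math.log(x) if lam == 0 else (x ** lam - 1.0) / lam


def inv_box_cox(z: float, lam: float) -> float:
    if lam == 0:
        return math.exp(z)
    base = 1.0 + lam * z
    if base <= 0.0:
        raise ValueError("an integration point left the admissible region 1 + lam*z > 0")
    return base ** (1.0 / lam)


def gaussian_expectation(f, m: float, s: float, half_width: float = 8.0, step: float = 0.05) -> float:
    """E[f(m + s*Z)], Z ~ N(0, 1), by the trapezoidal rule on [-half_width, half_width].

    For smooth integrands with Gaussian decay the trapezoidal rule converges very fast,
    and the normal mass beyond |z| = 8 is about 1e-15.
    """
    n = int(round(2 * half_width / step))
    total = 0.0
    for i in range(n + 1):
        z = -half_width + i * step
        w = 0.5 if i in (0, n) else 1.0
        total += w * f(m + s * z) * math.exp(-0.5 * z * z)
    return total * step / math.sqrt(2.0 * math.pi)


def conditional_mean_curves(xs, lam, mu_u, mu_v, sigma_u, sigma_v, rho):
    """For each x: (x, E[Y | X = x], T^{-1}(m), second-order approximation)."""
    s = sigma_v * math.sqrt(1.0 - rho ** 2)  # conditional standard deviation
    rows = []
    for x in xs:
        m = mu_v + rho * (sigma_v / sigma_u) * (box_cox(x, lam) - mu_u)  # linear predictor
        exact = gaussian_expectation(lambda v: inv_box_cox(v, lam), m, s)
        plug_in = inv_box_cox(m, lam)
        curvature = math.exp(m) if lam == 0 else (1 - lam) * (1 + lam * m) ** (1 / lam - 2)
        rows.append((x, exact, plug_in, plug_in + 0.5 * curvature * s * s))
    return rows


if __name__ == "__main__":
    params = dict(mu_u=1.0, mu_v=1.0, sigma_u=0.3, sigma_v=0.3, rho=0.8)  # hypothetical
    xs = [1.5 + 0.5 * i for i in range(6)]  # 1.5, 2.0, ..., 4.0
    for row in conditional_mean_curves(xs, 0.3, **params):
        print("x=%.2f  E[Y|X=x]=%.6f  T^-1(m)=%.6f  second-order=%.6f" % row)
```

With $\lambda = 0.3 < 1$ the numerically integrated mean lies above the back-transformed predictor at every grid point, and the second-order column tracks it closely because $s^2$ is small here.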

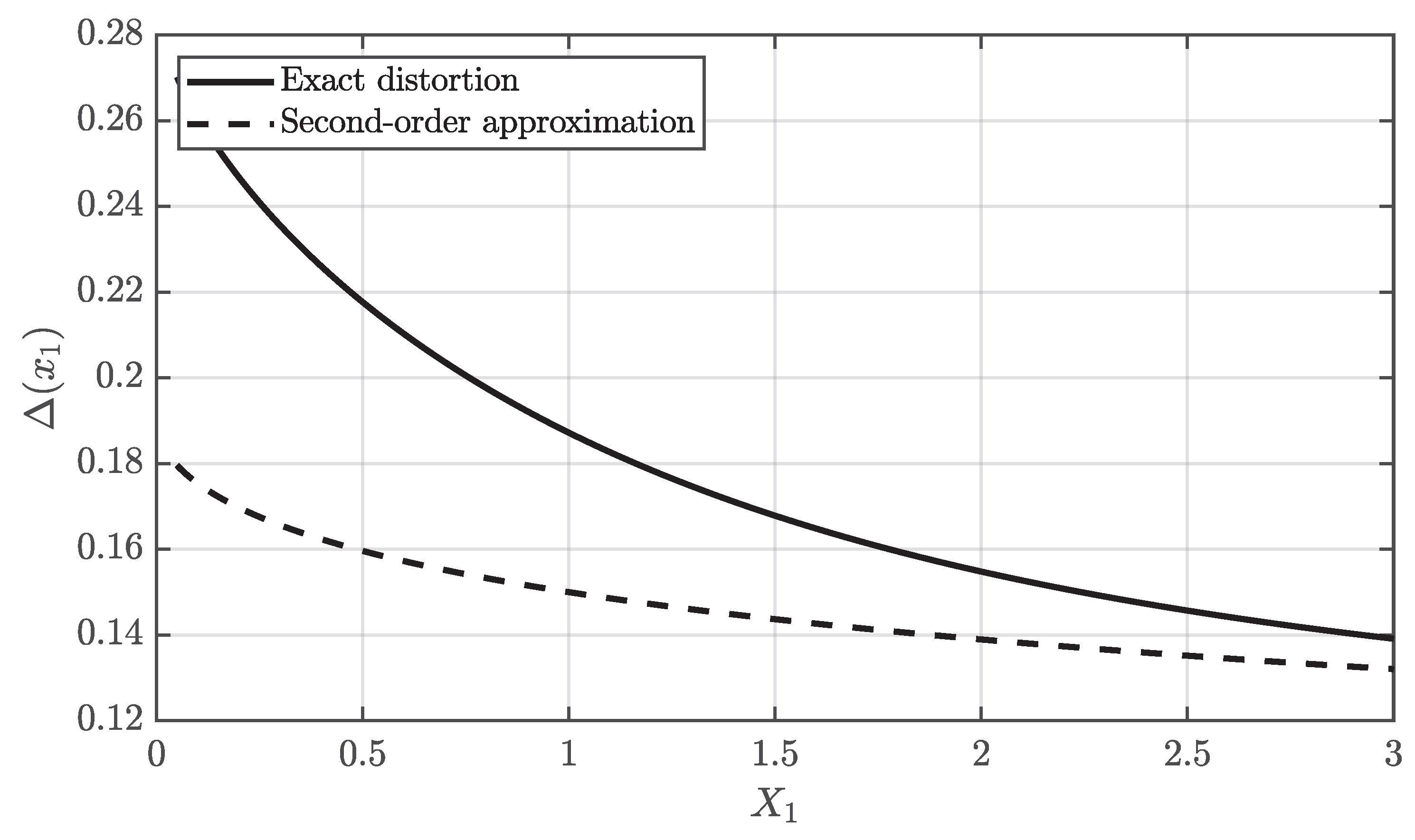

Figure 2 directly confirms the analytical decomposition predicted by Proposition 1. In the numerical illustration the distortion $\Delta(x) = \mathbb{E}[Y \mid X = x] - T_\lambda^{-1}(m)$ is represented as a function of $x$ through the transformation $u = T_\lambda(x)$. The distortion is dominated by the second-order term $\tfrac{1}{2}\,(T_\lambda^{-1})''(m)\,s^2$, and its magnitude depends on the local curvature of the inverse transformation. In this example, the largest deviation occurs near the boundary of the support. The dashed curve represents the second-order approximation of the distortion obtained from the Taylor expansion of $T_\lambda^{-1}$. Since the true distortion $\Delta(x)$ also contains the higher-order even moments of the Gaussian perturbation $V - m$, the analytical expression captures the dominant curvature effect but does not reproduce the exact magnitude of the distortion. This explains the difference between the theoretical approximation and the numerical curve.
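The even-moment contributions can be written out explicitly. Assuming enough smoothness to expand term by term, and using $\mathbb{E}[(V - m)^{2k} \mid U = u] = (2k-1)!!\,s^{2k}$ together with $(2k-1)!!/(2k)! = 1/(2^k k!)$, the distortion has the formal expansion

$$\Delta = \sum_{k \ge 1} \frac{(T_\lambda^{-1})^{(2k)}(m)}{2^k\,k!}\,s^{2k}, \qquad (T_\lambda^{-1})^{(n)}(z) = \prod_{j=0}^{n-1} (1 - j\lambda)\,(1 + \lambda z)^{1/\lambda - n},$$

whose $k = 1$ term is the curvature term of Proposition 1. In general this series is only asymptotic: it terminates when $1/\lambda$ is a positive integer and sums exactly for $\lambda = 0$, but otherwise the factorial growth of the Gaussian moments makes it diverge, so it should be truncated after a few terms. The sketch below (hypothetical helpers `inv_box_cox_deriv` and `distortion_series`) evaluates the truncated sum.

```python
import math


def inv_box_cox_deriv(z: float, lam: float, n: int) -> float:
    """n-th derivative of T_lambda^{-1}(z) = (1 + lam*z)**(1/lam).

    Closed form: prod_{j=0}^{n-1} (1 - j*lam) * (1 + lam*z)**(1/lam - n);
    every derivative equals exp(z) when lam == 0.
    """
    if lam == 0:
        return math.exp(z)
    base = 1.0 + lam * z
    if base <= 0.0:
        raise ValueError("requires 1 + lam*z > 0")
    coef = 1.0
    for j in range(n):
        coef *= 1.0 - j * lam
    return coef * base ** (1.0 / lam - n)


def distortion_series(m: float, s2: float, lam: float, n_terms: int) -> float:
    """Truncated even-moment expansion of E[T^{-1}(m + s Z)] - T^{-1}(m), Z ~ N(0, 1).

    Odd-order terms are absent because the odd Gaussian central moments vanish.
    """
    return sum(
        inv_box_cox_deriv(m, lam, 2 * k) * s2 ** k / (2 ** k * math.factorial(k))
        for k in range(1, n_terms + 1)
    )


if __name__ == "__main__":
    m, s2 = 0.4, 0.2
    # lam = 0: the series sums to exp(m) * (exp(s2/2) - 1), the exact lognormal distortion.
    for K in (1, 2, 3, 6):
        print(K, distortion_series(m, s2, 0.0, K), math.exp(m) * math.expm1(s2 / 2))
```

Comparing the $K = 1$ row with the exact value shows how much of the distortion lies beyond the second-order term.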

5. Analytical Form of the Distortion

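From Proposition 1, the structural distortion in the original scale is given by

$$\Delta(x) = \mathbb{E}[Y \mid X = x] - T_\lambda^{-1}(m) = \tfrac{1}{2}\,(T_\lambda^{-1})''(m)\,s^2 + R,$$

where $m$ and $s^2$ are the conditional mean and variance of $V$ given $U = T_\lambda(x)$. Using the expression for $(T_\lambda^{-1})''$ derived in Section 2, we obtain

$$\Delta(x) = \tfrac{1}{2}\,(1 - \lambda)\,(1 + \lambda m)^{1/\lambda - 2}\,s^2 + R,$$

which is to be read as $\tfrac{1}{2}\,e^{m} s^2 + R$ when $\lambda = 0$.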

This expression makes explicit that the magnitude of the distortion is governed by:

- the conditional variance in the transformed domain,
- the transformation parameter $\lambda$,
- and the local curvature of the inverse mapping along the linear predictor.

In particular, the distortion vanishes when $\lambda = 1$, since the inverse transformation becomes linear.
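The explicit formula makes the dependence on $\lambda$ easy to tabulate. The sketch below evaluates only the leading term $\tfrac{1}{2}(1 - \lambda)(1 + \lambda m)^{1/\lambda - 2} s^2$ for hypothetical values of $m$ and $s^2$, using $\tfrac{1}{2} e^{m} s^2$ at $\lambda = 0$; the function name `leading_distortion` is hypothetical. It shows the zero at $\lambda = 1$, the change of sign across it, and continuity as $\lambda \to 0$.

```python
import math


def leading_distortion(m: float, s2: float, lam: float) -> float:
    """Leading term 0.5 * (1 - lam) * (1 + lam*m)**(1/lam - 2) * s2 of the distortion.

    For lam == 0 the curvature of exp is exp(m), so the term is 0.5 * exp(m) * s2.
    """
    if lam == 0:
        return 0.5 * math.exp(m) * s2
    base = 1.0 + lam * m
    if base <= 0.0:
        raise ValueError("requires 1 + lam*m > 0")
    return 0.5 * (1.0 - lam) * base ** (1.0 / lam - 2.0) * s2


if __name__ == "__main__":
    m, s2 = 1.0, 0.05  # hypothetical values of the linear predictor and conditional variance
    for lam in (-0.5, 0.0, 1e-7, 0.5, 1.0, 1.5):
        print(f"lambda={lam:g}  leading distortion={leading_distortion(m, s2, lam):+.6f}")
```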

6. Discussion

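The distortion identified in this paper can be interpreted as a Jensen-type effect generated by the nonlinear inverse transformation. Because conditional expectation is a linear operator while the inverse Box–Cox map is nonlinear, the expectation of the transformed quantity does not coincide with the transformation of the expectation. More precisely, $(T_\lambda^{-1})''(z) = (1 - \lambda)(1 + \lambda z)^{1/\lambda - 2}$ is positive for $\lambda < 1$ (it equals $e^{z}$ when $\lambda = 0$) and negative for $\lambda > 1$, so the inverse-transformed linear predictor underestimates the conditional mean in the first case and overestimates it in the second.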
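The sign of this Jensen gap can be illustrated by simulation: the inverse map is convex for $\lambda < 1$ and concave for $\lambda > 1$. The Monte Carlo sketch below estimates $\mathbb{E}[T_\lambda^{-1}(V)] - T_\lambda^{-1}(m)$ for $V \sim N(m, s^2)$ using antithetic pairs $m \pm sZ$, a variance-reduction device chosen for this sketch rather than anything used in the paper. The function name, sample size and seed are arbitrary.

```python
import math
import random


def inv_box_cox(z: float, lam: float) -> float:
    """T_lambda^{-1}(z) = (1 + lam*z)**(1/lam); exp(z) when lam == 0."""
    if lam == 0:
        return math.exp(z)
    base = 1.0 + lam * z
    if base <= 0.0:
        raise ValueError("requires 1 + lam*z > 0 for every sample")
    return base ** (1.0 / lam)


def jensen_gap_mc(m: float, s: float, lam: float, n: int = 50_000, seed: int = 0) -> float:
    """Monte Carlo estimate of E[T^{-1}(V)] - T^{-1}(m) for V ~ N(m, s**2)."""
    rng = random.Random(seed)
    g_m = inv_box_cox(m, lam)
    total = 0.0
    for _ in range(n):
        d = s * rng.gauss(0.0, 1.0)
        # Antithetic pair m + d, m - d: the first-order Taylor term cancels exactly,
        # so each summand reflects only the even-order (curvature) part of the gap.
        # For a convex map every summand is >= 0; for a concave map every one is <= 0.
        total += 0.5 * (inv_box_cox(m + d, lam) + inv_box_cox(m - d, lam)) - g_m
    return total / n


if __name__ == "__main__":
    for lam in (0.0, 0.5, 1.0, 2.0):
        print(lam, jensen_gap_mc(m=2.0, s=0.2, lam=lam))
```

For $\lambda = 1/2$ each summand equals $\tfrac{1}{4} d^2$ exactly, so the estimate should be close to $\tfrac{1}{4} s^2 = 0.01$; for $\lambda = 1$ it is zero up to rounding.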

Even when the relationship between the transformed variables is perfectly linear, the corresponding relationship between the original variables is generally nonlinear, since the inverse Box–Cox map is itself nonlinear whenever $\lambda \neq 1$. Consequently, the regression curve in the original scale is not the image of a straight line.

The result derived in this paper shows a stronger structural fact. Even the conditional mean in the original scale does not coincide with the inverse image of the linear predictor in the transformed space. Instead, it contains an additional curvature-induced correction term depending on the conditional variance.

The distortion disappears only when the inverse transformation is linear. For the Box–Cox family this occurs only for $\lambda = 1$, in which case $T_1(x) = x - 1$ and $T_1^{-1}(z) = 1 + z$ are linear transformations. In this case the transformation merely shifts the variable and does not modify the structural relationship between $X$ and $Y$. The distortion analysed in this paper therefore arises only when the transformation is genuinely nonlinear.

More generally, the mechanism identified here is not specific to the Box–Cox transformation. Whenever a nonlinear monotone transformation is used to induce linear structure in a latent Gaussian domain, the inverse mapping will generally introduce curvature-induced corrections in conditional expectations. The Box–Cox family therefore provides a natural and analytically tractable setting in which this structural distortion can be made explicit.

7. Conclusions

We have provided a formal analysis of the structural distortion induced by the inverse Box–Cox transformation on conditional expectations. An explicit second-order decomposition shows that the conditional mean in the original scale consists of the inverse-transformed linear predictor plus a curvature-dependent correction term proportional to the conditional variance. This result provides a formal explanation for a phenomenon often observed in transformation-based regression models, where inverse-transformed predictors reproduce the central trend of the data but exhibit systematic deviations in the tails. The analytical decomposition derived in this work shows that such deviations arise naturally from the curvature of the inverse transformation combined with the conditional variance in the transformed domain.

The results demonstrate that transformation-based Gaussian modelling does not generally preserve structural relationships under inversion. This limitation is intrinsic to the nonlinearity of the transformation whenever $\lambda \neq 1$.

The analytical framework developed here clarifies the geometric mechanism underlying this phenomenon and may serve as a basis for further investigations into transformation-induced distortions in regression and dependence modelling.

Future research may explore the implications of higher-order corrections and their interaction with non-Gaussian conditional structures.

References

- Goto, M.; Inoue, T. Some Properties of the Power-Normal Distribution. J. Biometrics 1980, 1, 28–54.

- Box, G.E.P.; Cox, D.R. An Analysis of Transformations. J. R. Stat. Soc. Ser. B 1964, 26, 211–243.

- Goto, M.; Hamasaki, T. The bivariate power-normal distribution. Bull. Inf. Cybern. 2002, 34, 29–49.

- Freeman, J.; Modarres, R. Inverse Box-Cox: The Power-Normal Distribution. Stat. Probab. Lett. 2006, 76, 764–772.

- Gonçalves, R. The power-normal distribution. AIP Conf. Proc. 2019, 2116, 110009.

- Gonçalves, R. The power-normal distribution. J. Phys. Conf. Ser. 2019, 1334, 012014.

- Gonçalves, R. Inverse Box-Cox and the Power-Normal Distribution. In Innovation, Engineering and Entrepreneurship; Lecture Notes in Electrical Engineering; Springer: Cham, Switzerland, 2019; Volume 505, pp. 805–810.

- Goto, M.; Inoue, T.; Tsuchya, Y. On the Estimation of Parameters in the Power-Normal Distribution. Bull. Inf. Cybern. 1984, 21, 41–53.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).