1. Introduction

Autonomous driving systems, as representative cyber-physical platforms in modern automotive electronics, demand robust and reliable perception capabilities to ensure safe navigation across diverse and challenging environmental conditions [1]. Object detection, as a fundamental perception task in the on-board sensing and perception stack, must maintain high accuracy under strict latency and computational constraints regardless of illumination variations, adverse weather, and complex traffic scenarios. While RGB cameras provide rich texture and color information under well-lit conditions, their performance degrades significantly in low-light environments, fog, rain, and glare [2]. Infrared (IR) sensors, which capture thermal radiation emitted by objects, offer complementary advantages by providing reliable detection capabilities in darkness and adverse weather conditions [3]. Consequently, the fusion of RGB and IR modalities has attracted increasing research attention as a promising approach to achieve robust all-weather object detection for autonomous driving and real-time automotive perception systems [4].

Despite significant progress in multi-modal object detection, several fundamental challenges remain unresolved.

First, existing fusion methods often employ simple concatenation or element-wise addition to combine features from different modalities [5], which fails to capture the complex complementary relationships between RGB and IR features. The heterogeneous nature of these two modalities (RGB images encode appearance, texture, and color information, while IR images capture thermal signatures) necessitates more sophisticated alignment and interaction mechanisms.

Second, most current approaches rely on convolutional neural networks (CNNs) or standard Transformer architectures for feature extraction and fusion [6]. CNNs are inherently limited by their local receptive fields, making it difficult to model long-range dependencies across modalities. While Transformers can capture global relationships, their quadratic computational complexity with respect to sequence length poses significant challenges for real-time and compute-constrained automotive deployment [7].
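To make the scaling argument concrete, the following minimal sketch counts the token pairs scored by global self-attention against the per-token updates of a single linear-time scan over a flattened feature map. The feature-map sizes and the function names are illustrative assumptions, not values or code from the paper.

```python
def attention_pairs(height: int, width: int) -> int:
    """Token pairs scored by global self-attention over an H x W map."""
    tokens = height * width
    return tokens * tokens


def scan_steps(height: int, width: int) -> int:
    """Per-token updates performed by one linear-time scan over the map."""
    return height * width


if __name__ == "__main__":
    for side in (20, 40, 80):  # hypothetical feature-map side lengths
        ratio = attention_pairs(side, side) // scan_steps(side, side)
        print(f"{side}x{side}: attention/scan cost ratio = {ratio}")
```

Doubling the side length of the map quadruples the number of tokens, so the attention cost grows by a factor of 16 while the scan cost grows by a factor of 4.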

Third, the quality of object queries in detection heads significantly impacts final detection performance, yet most methods do not explicitly address the uncertainty in query selection when dealing with multi-modal inputs [8]. Beyond perception accuracy, robustness and reliability under adverse conditions have also been conceptually discussed from connectivity and diagnosability perspectives in complex networks [9,10,11,12], highlighting the importance of redundancy and complementary information sources.

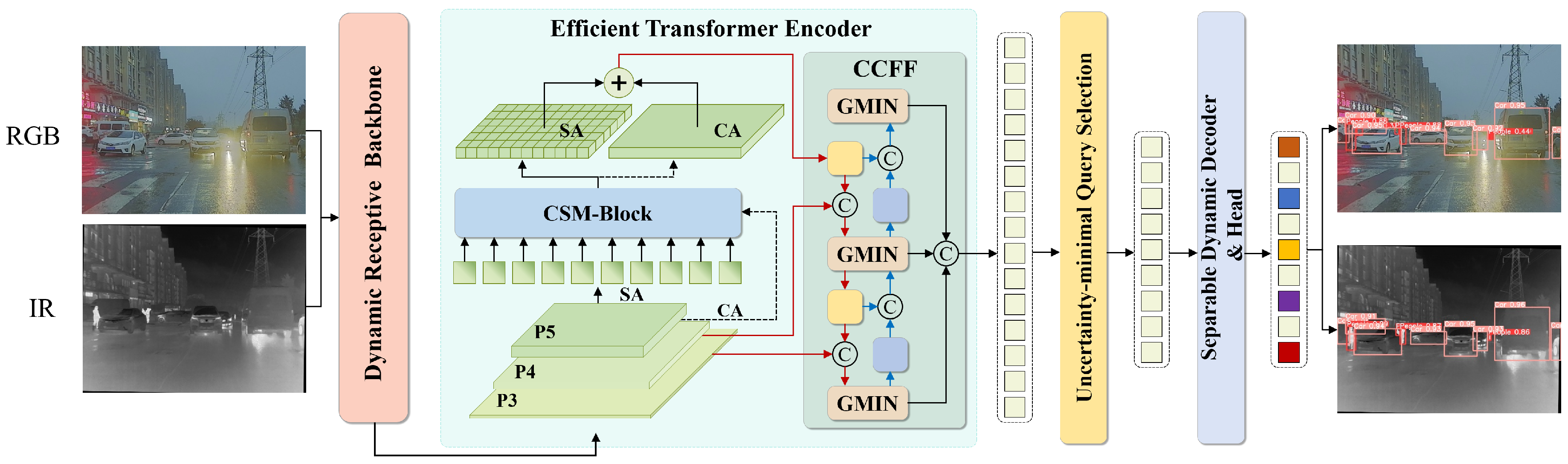

To address these challenges under practical real-time constraints, we propose CMAFNet (Cross-Modal Alignment and Fusion Network), a comprehensive yet deployment-aware framework for robust multi-modal object detection in autonomous driving. Our approach introduces several novel components that work synergistically to achieve effective cross-modal feature alignment, interaction, and fusion while maintaining a favorable accuracy–efficiency trade-off. The overall architecture of CMAFNet is illustrated in Figure 1.

Specifically, our contributions are summarized as follows:

We propose CMAFNet, a deployment-aware end-to-end multi-modal object detection framework that achieves robust cross-modal alignment and fusion for autonomous driving under real-time constraints. The framework integrates a Dynamic Receptive Backbone, Channel-Split Mamba Block, Global Multi-modal Interaction Network, and Separable Dynamic Decoder into a unified and efficiency-oriented architecture.

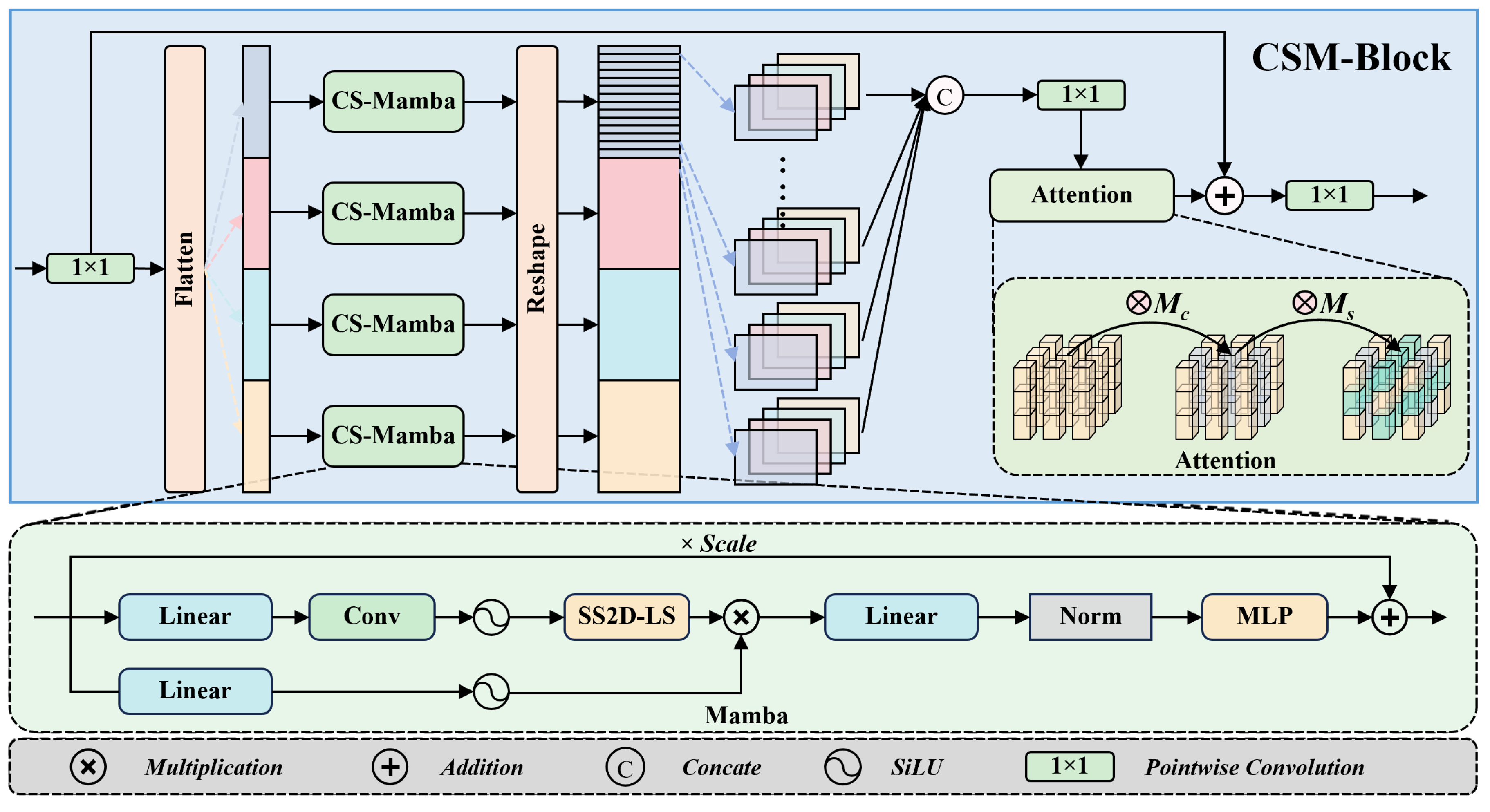

We introduce the Channel-Split Mamba Block (CSM-Block), which leverages selective state space models [13] to efficiently capture long-range cross-modal dependencies. By splitting feature channels into multiple groups and processing them through parallel CS-Mamba branches with subsequent attention-based recalibration, the CSM-Block enables effective global context modeling with linear computational complexity, making it suitable for compute-constrained automotive perception systems.

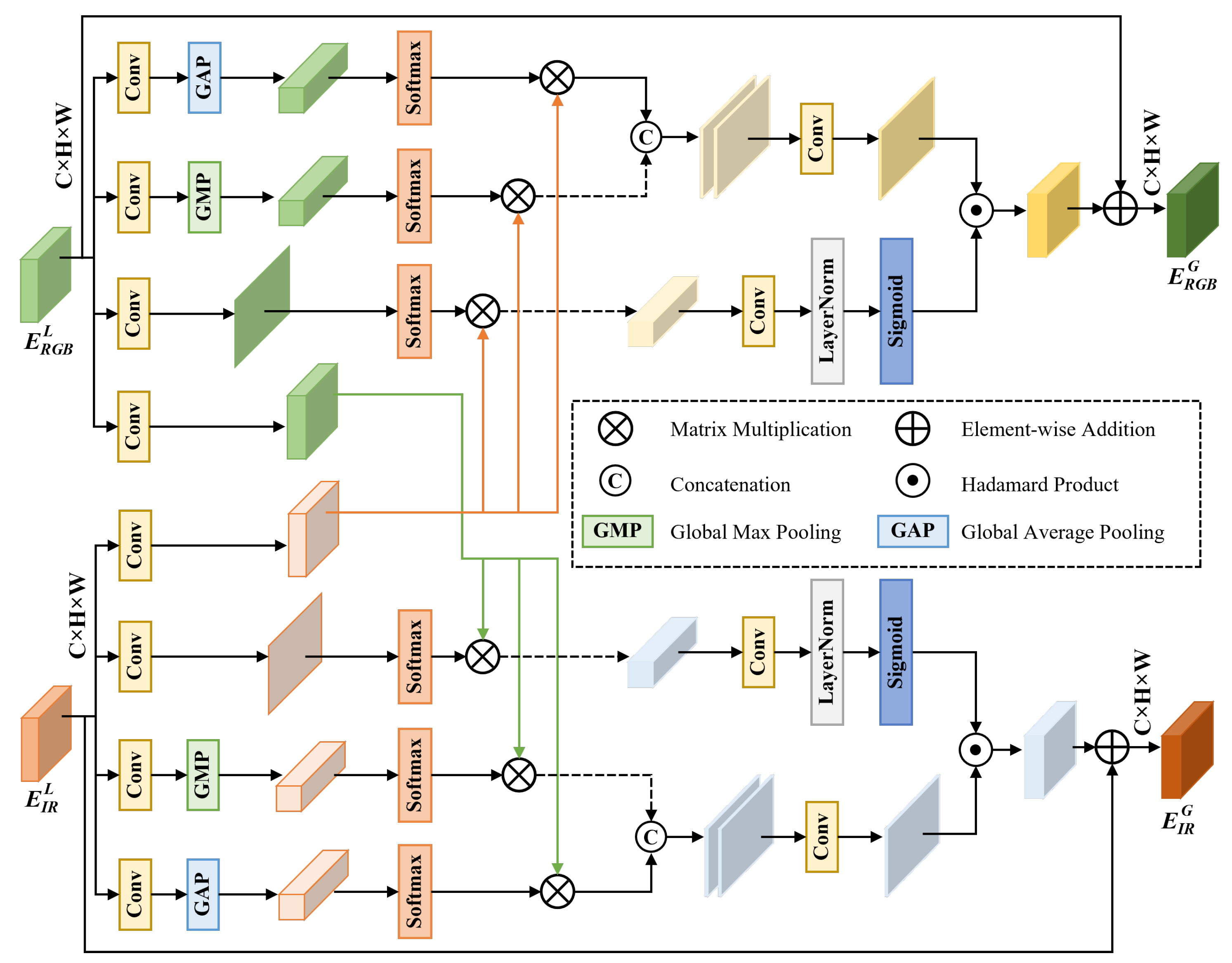

We design the Global Multi-modal Interaction Network (GMIN), a dual-branch cross-modal attention module that performs fine-grained feature alignment through global average pooling and global max pooling guided cross-attention. GMIN generates modality-specific gating signals to adaptively weight and fuse complementary information from RGB and IR features, improving robustness under adverse sensing conditions.

Extensive experiments on two widely used benchmarks (M3FD and FLIR-Aligned) demonstrate that CMAFNet achieves state-of-the-art performance while maintaining a favorable accuracy–latency trade-off, supporting practical real-time deployment in automotive embedded vision scenarios.

The remainder of this paper is organized as follows. Section 2 reviews related work on multi-modal object detection, state space models, and attention mechanisms. Section 3 presents the proposed CMAFNet framework in detail. Section 4 describes the experimental setup and presents comprehensive results, including efficiency analysis. Section 5 discusses the insights drawn from the results, together with limitations and future work. Section 6 concludes the paper.

5. Discussion

The experimental results demonstrate the effectiveness of CMAFNet for multi-modal object detection in autonomous driving. Beyond the quantitative improvements, several structural insights can be drawn from the proposed design.

Effectiveness of State Space Models for Cross-Modal Fusion. The CSM-Block demonstrates that selective state space models provide an efficient alternative to quadratic-complexity attention mechanisms for modeling long-range cross-modal dependencies. As shown in Table 6, the CSM-Block achieves an mAP50 that is 2.4 percentage points higher than standard self-attention (83.9% vs. 81.5%) while running 61% faster (29.3 vs. 18.2 FPS). This gain is not merely computational but structural: the selective scanning mechanism enables adaptive information propagation across spatial positions, while the channel-splitting strategy promotes representation diversity and reduces feature interference. These results suggest that state space modeling is particularly well suited for multi-modal fusion, where efficient global context modeling is critical.
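The channel-splitting idea can be illustrated with a toy sketch: channels are divided into groups, and each group is processed by its own linear-time recurrence with an input-dependent gate. This is a deliberately simplified stand-in, assumed for illustration only. It uses a scalar gated recurrence in place of an actual selective state space layer, and `split_channels`, `gated_scan`, `channel_split_scan`, and the per-group gate bias are hypothetical names and choices rather than the CSM-Block implementation.

```python
import math


def split_channels(features, groups):
    """Split a [channels][length] feature list into equal channel groups."""
    channels = len(features)
    if channels % groups != 0:
        raise ValueError("channel count must be divisible by groups")
    size = channels // groups
    return [features[i * size:(i + 1) * size] for i in range(groups)]


def gated_scan(sequence, bias):
    """Single pass with h_t = a_t * h_{t-1} + (1 - a_t) * x_t.

    The gate a_t = sigmoid(x_t + bias) depends on the input, a loose
    analogue of input-dependent (selective) state updates.
    """
    hidden, outputs = 0.0, []
    for x in sequence:
        gate = 1.0 / (1.0 + math.exp(-(x + bias)))
        hidden = gate * hidden + (1.0 - gate) * x
        outputs.append(hidden)
    return outputs


def channel_split_scan(features, group_biases):
    """Scan each channel group with its own (hypothetical) gate bias,
    then concatenate the groups back in channel order."""
    groups = split_channels(features, len(group_biases))
    merged = []
    for group, bias in zip(groups, group_biases):
        merged.extend(gated_scan(channel, bias) for channel in group)
    return merged


if __name__ == "__main__":
    feats = [[1.0, 2.0, 3.0], [0.0, 0.0, 0.0], [1.0, 1.0, 1.0], [2.0, 0.0, 2.0]]
    print(channel_split_scan(feats, group_biases=[0.0, 1.0]))
```

Each channel is visited once per position, so the cost of this sketch grows linearly with the sequence length, and the groups do not interact until they are concatenated.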

Importance of Fine-Grained Cross-Modal Interaction. The GMIN module addresses a common limitation in existing fusion strategies, namely insufficient explicit alignment between modalities. The ablation study (Table 7) confirms that simple fusion operations such as concatenation (80.3% mAP50) or element-wise addition (80.8%) are inadequate for capturing complex complementary relationships between RGB and IR features, whereas the full GMIN reaches 83.9%. By integrating GAP- and GMP-guided cross-attention, GMIN captures both global statistical responses and salient discriminative cues. The modality-specific gating mechanism further stabilizes fusion by dynamically regulating the contribution of each modality. This structured interaction is particularly beneficial under distribution shifts such as illumination variation.
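A small sketch may help illustrate how pooled statistics can drive a modality gate. It is not the GMIN module: it assumes a much simpler rule in which each channel's gate is a sigmoid of the difference between the RGB and IR descriptors, where a descriptor is the sum of the global average and global max responses. The function names and the descriptor itself are hypothetical choices made for illustration.

```python
import math


def gap(channel):
    """Global average pooling over one flattened channel."""
    return sum(channel) / len(channel)


def gmp(channel):
    """Global max pooling over one flattened channel."""
    return max(channel)


def gated_fusion(rgb, ir):
    """Fuse two [channels][positions] feature lists with per-channel gates.

    Returns the fused features and the gate assigned to the RGB branch;
    the IR branch receives the complementary weight (1 - gate).
    """
    fused, gates = [], []
    for rgb_ch, ir_ch in zip(rgb, ir):
        # Hypothetical descriptor: average response plus peak response.
        rgb_score = gap(rgb_ch) + gmp(rgb_ch)
        ir_score = gap(ir_ch) + gmp(ir_ch)
        gate = 1.0 / (1.0 + math.exp(-(rgb_score - ir_score)))
        gates.append(gate)
        fused.append([gate * r + (1.0 - gate) * t for r, t in zip(rgb_ch, ir_ch)])
    return fused, gates


if __name__ == "__main__":
    night_rgb = [[0.1, 0.0, 0.1, 0.0]]  # weak, low-contrast RGB response
    night_ir = [[0.2, 2.5, 0.3, 0.1]]   # strong thermal response
    fused, gates = gated_fusion(night_rgb, night_ir)
    print("RGB gate:", gates[0])
    print("fused:", fused[0])
```

When the RGB response is weak and the IR response is strong, the RGB gate falls well below 0.5 and the fused feature follows the IR branch; identical inputs give a gate of exactly 0.5.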

Robustness under Adverse Conditions. The robustness analysis (Table 10) shows that CMAFNet exhibits the largest performance gains under nighttime and challenging weather conditions. This behavior reflects the adaptive nature of the proposed fusion strategy. When one modality is degraded, the gating mechanism increases reliance on the complementary modality, effectively mitigating information loss. Such adaptive cross-modal balancing is essential for safety-critical autonomous driving systems operating under unpredictable environmental conditions.
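The per-condition margins behind this observation can be recomputed from Table 10. The sketch below compares CMAFNet with TFDet, the strongest competing method in that table; the values are copied from Table 10, while the helper name `gains` is an assumption made for illustration.

```python
# mAP50 (%) per condition on M3FD, copied from Table 10.
TFDET = {"Day": 87.3, "Night": 77.8, "Overexposure": 78.5, "Challenge": 72.6}
CMAFNET = {"Day": 88.5, "Night": 80.2, "Overexposure": 80.1, "Challenge": 74.8}


def gains(ours, baseline):
    """Per-condition margin in mAP50 percentage points."""
    return {name: round(ours[name] - baseline[name], 1) for name in ours}


if __name__ == "__main__":
    for condition, delta in gains(CMAFNET, TFDET).items():
        print(f"{condition}: +{delta} points")
```

The margins are 1.2 points in daytime, 2.4 at night, 1.6 under overexposure, and 2.2 in the challenge subset, so the two largest gains fall in the night and challenge conditions.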

Efficiency–Accuracy Trade-off. Although CMAFNet introduces additional fusion modules, it maintains competitive real-time performance (29.3 FPS) as shown in Table 9. This efficiency is largely attributed to the linear-complexity state space modeling in the CSM-Block and the dynamic convolution attention in the Separable Dynamic Decoder [38]. The results indicate that improved cross-modal interaction does not necessarily require heavy attention-based architectures; carefully designed linear mechanisms can achieve a favorable accuracy–efficiency balance.
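For deployment budgeting, throughput is often easier to reason about as per-frame latency. The sketch below converts the FPS values reported in Table 9 into milliseconds per frame, assuming frames are processed one at a time so that latency is simply the reciprocal of FPS; that assumption and the helper name `latency_ms` are illustrative and not taken from the paper.

```python
# FPS values copied from Table 9.
FPS = {
    "CFT": 22.5,
    "ICAFusion": 19.8,
    "EAEFNet": 24.1,
    "TFDet": 21.3,
    "CMAFNet": 29.3,
}


def latency_ms(fps: float) -> float:
    """Per-frame latency in milliseconds, assuming one frame at a time."""
    return 1000.0 / fps


if __name__ == "__main__":
    for method, fps in sorted(FPS.items(), key=lambda item: latency_ms(item[1])):
        print(f"{method}: {latency_ms(fps):.1f} ms/frame")
```

Under this assumption CMAFNet needs about 34.1 ms per frame, compared with about 41.5 ms for EAEFNet, the fastest of the other multi-modal methods in Table 9.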

Limitations and Future Work. Despite achieving state-of-the-art results on RGB–IR benchmarks, several limitations remain. First, the current framework focuses on dual-modality fusion; extending CMAFNet to incorporate additional sensing modalities such as LiDAR or radar may further enhance robustness. Second, the method assumes well-aligned RGB–IR pairs; developing alignment-robust or alignment-free fusion strategies is an important practical direction. Third, while the current model achieves real-time inference on high-end GPUs, further model compression and architectural simplification would be valuable for deployment on edge platforms in resource-constrained autonomous vehicles.

Figure 1.

Overall architecture of the proposed CMAFNet. The framework consists of four main components: (1) a shared Dynamic Receptive Backbone for multi-scale feature extraction from both RGB and IR modalities, (2) an Efficient Transformer Encoder with CSM-Block for cross-modal feature enhancement, (3) GMIN modules for global multi-modal interaction and fusion at multiple scales, and (4) an Uncertainty-minimal Query Selection mechanism followed by a Separable Dynamic Decoder for final detection. The architecture is designed to balance detection accuracy and computational efficiency for real-time automotive deployment.

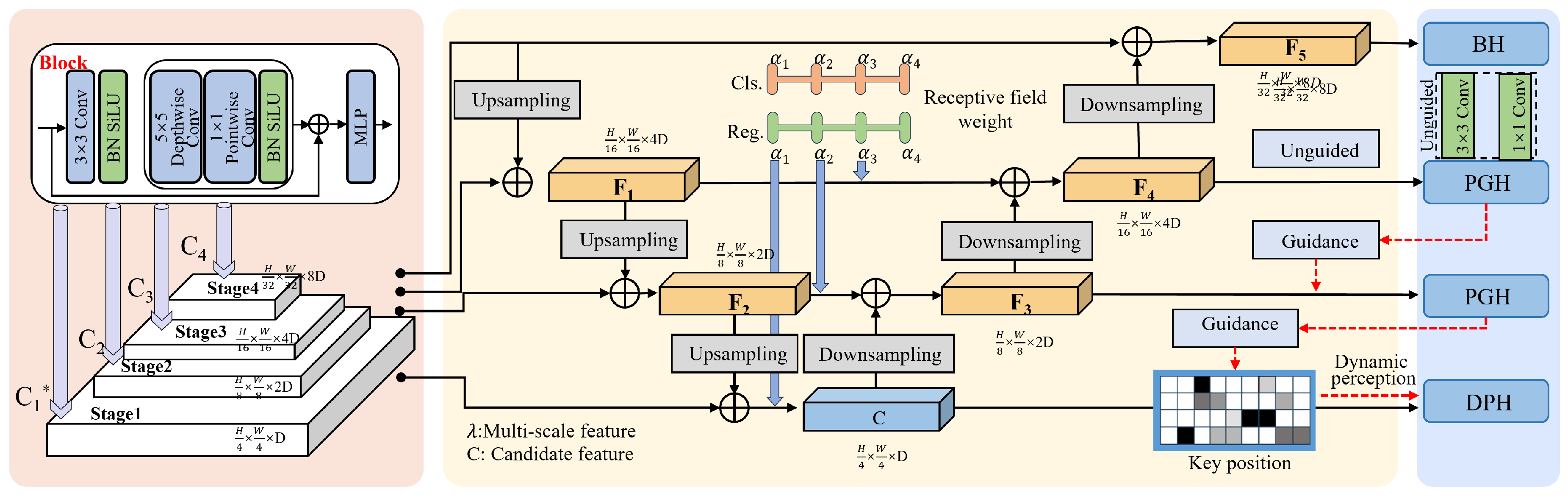

Figure 2.

Architecture of the Dynamic Receptive Backbone (DRB). The backbone consists of four stages that progressively extract multi-scale features. Each stage employs a Block module composed of convolution, depthwise separable convolution, pointwise convolution, and MLP layers. The multi-scale features are further processed through upsampling and downsampling paths with receptive field weight adaptation to generate enhanced feature maps. The backbone includes multiple detection heads: BH (Base Head), PGH (Perception-Guided Head), and DPH (Dynamic Perception Head). The design maintains a balance between representational capacity and computational cost.

Figure 3.

Architecture of the Channel-Split Mamba Block (CSM-Block). The input features are first processed by a convolution, then flattened and split into multiple channel groups. Each group is independently processed by a CS-Mamba module. The outputs are reshaped and concatenated, followed by a convolution. An attention mechanism with channel attention map and spatial attention map is applied for feature recalibration. The bottom panel shows the internal structure of the CS-Mamba module, which consists of a dual-branch architecture with Linear projection, depthwise convolution, SiLU activation, SS2D-LS (Selective Scan 2D with Local Scan), element-wise multiplication, Linear projection, Layer Normalization, and MLP with residual connection. The design balances global modeling capability and computational efficiency.

Figure 4.

Architecture of the Global Multi-modal Interaction Network (GMIN). The module takes RGB features and IR features as inputs and produces a globally enhanced feature map for each modality. Each modality branch generates attention weights through Global Average Pooling (GAP) and Global Max Pooling (GMP) guided cross-attention. The cross-modal interaction is achieved through matrix multiplication (⊗) between attention maps from different modalities. Modality-specific gating signals are generated through concatenation, convolution, LayerNorm, and Sigmoid activation to produce the final fused features via Hadamard product (⊙) and element-wise addition (⊕). The design emphasizes adaptive modality weighting and computational efficiency.

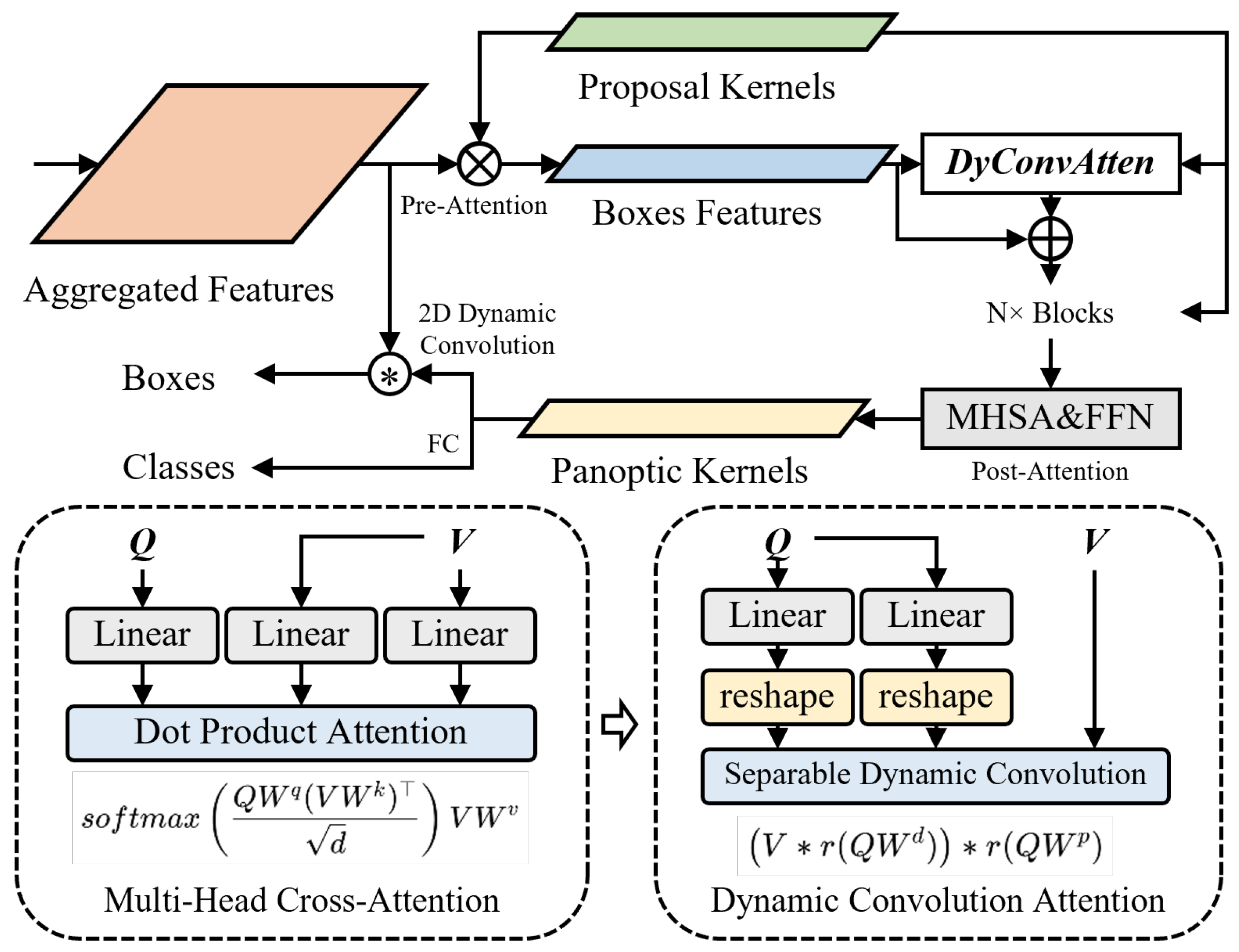

Figure 5.

Architecture of the Separable Dynamic Decoder [38]. The decoder takes aggregated features and proposal kernels as inputs. Pre-Attention generates box features through matrix multiplication. The DyConvAtten module applies dynamic convolution attention with residual connection, followed by N blocks of Multi-Head Self-Attention (MHSA) and Feed-Forward Network (FFN) in the Post-Attention stage. The bottom panels compare the standard Multi-Head Cross-Attention (left) with the proposed Dynamic Convolution Attention (right), where the attention computation is replaced by the separable dynamic convolution. The separable formulation improves computational efficiency while preserving modeling flexibility.

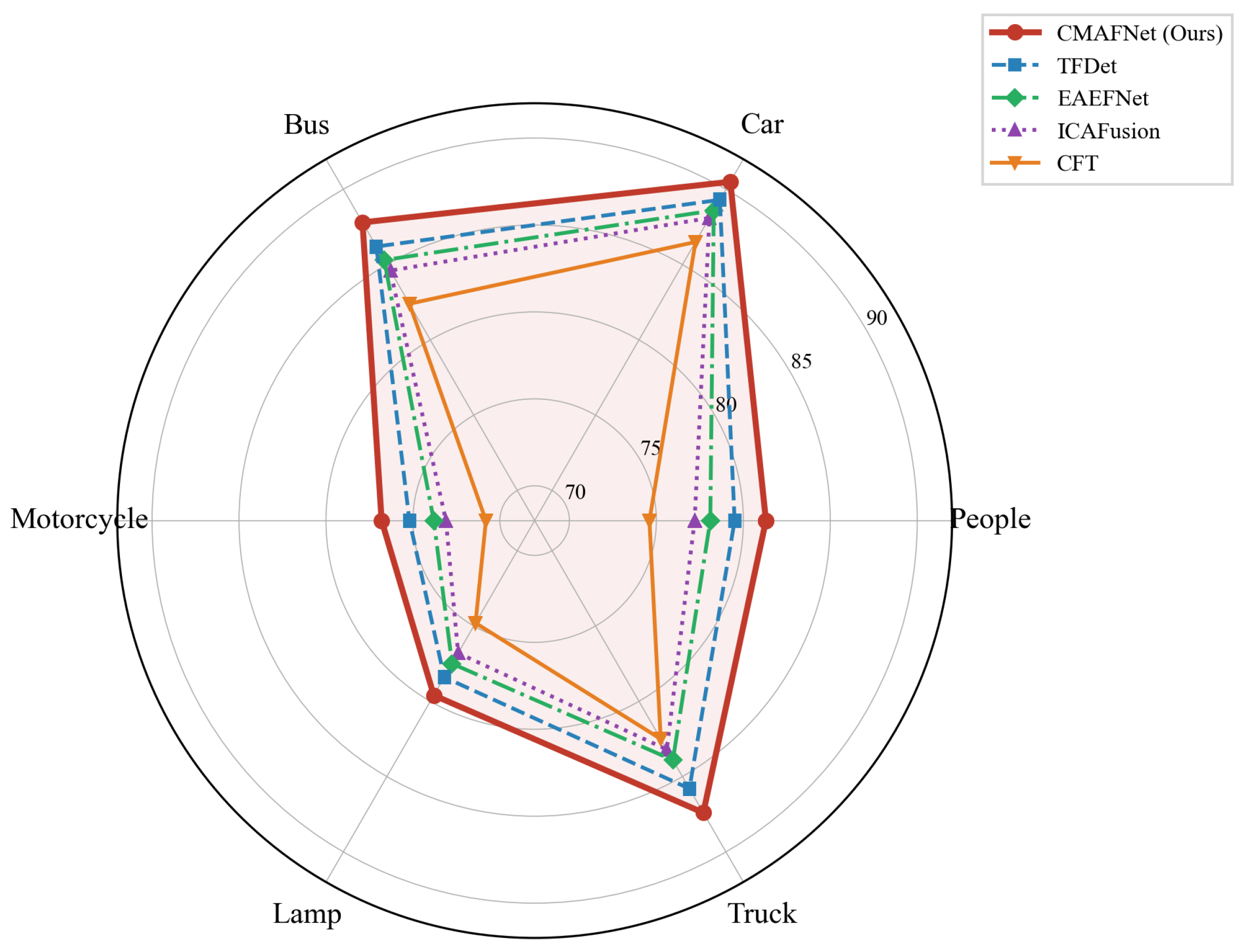

Figure 6.

Radar chart comparing per-category AP50 (%) of the top-performing methods on the M3FD dataset. CMAFNet (red solid line) consistently achieves superior balanced performance across all six object categories.

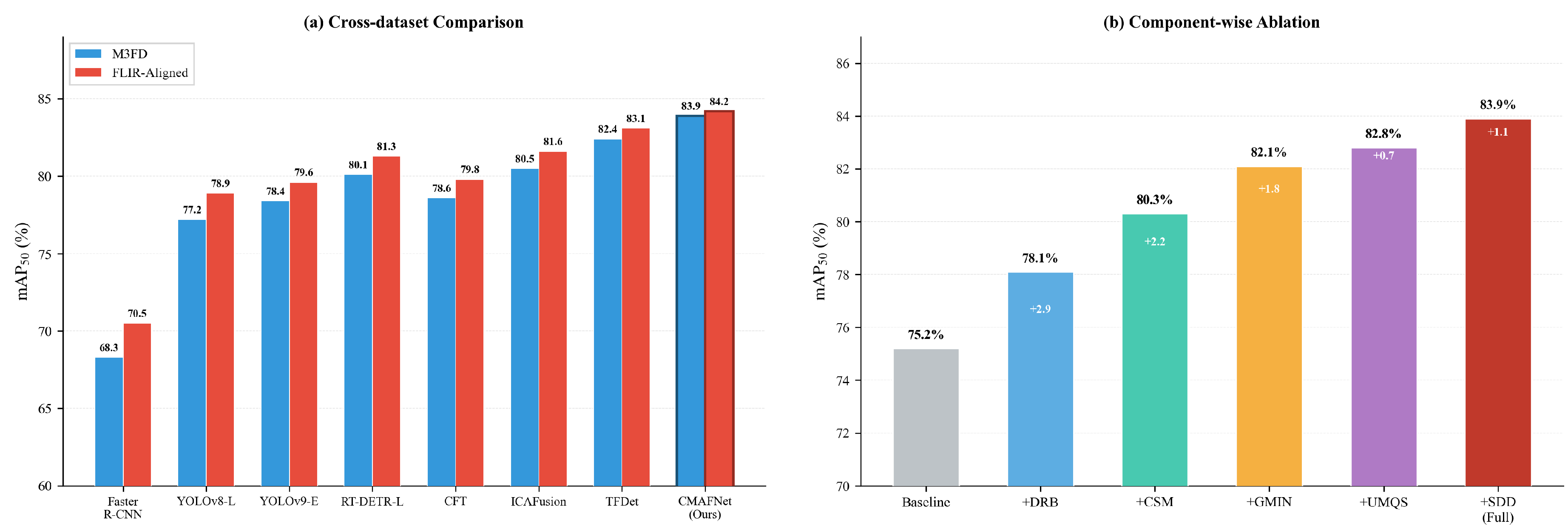

Figure 7.

(a) Cross-dataset comparison of mAP50 (%) on M3FD and FLIR-Aligned benchmarks. CMAFNet achieves the highest performance on both datasets. (b) Component-wise ablation on M3FD showing the progressive mAP50 improvement as each module is added, with incremental gains of +2.9%, +2.2%, +1.8%, +0.7%, and +1.1%.

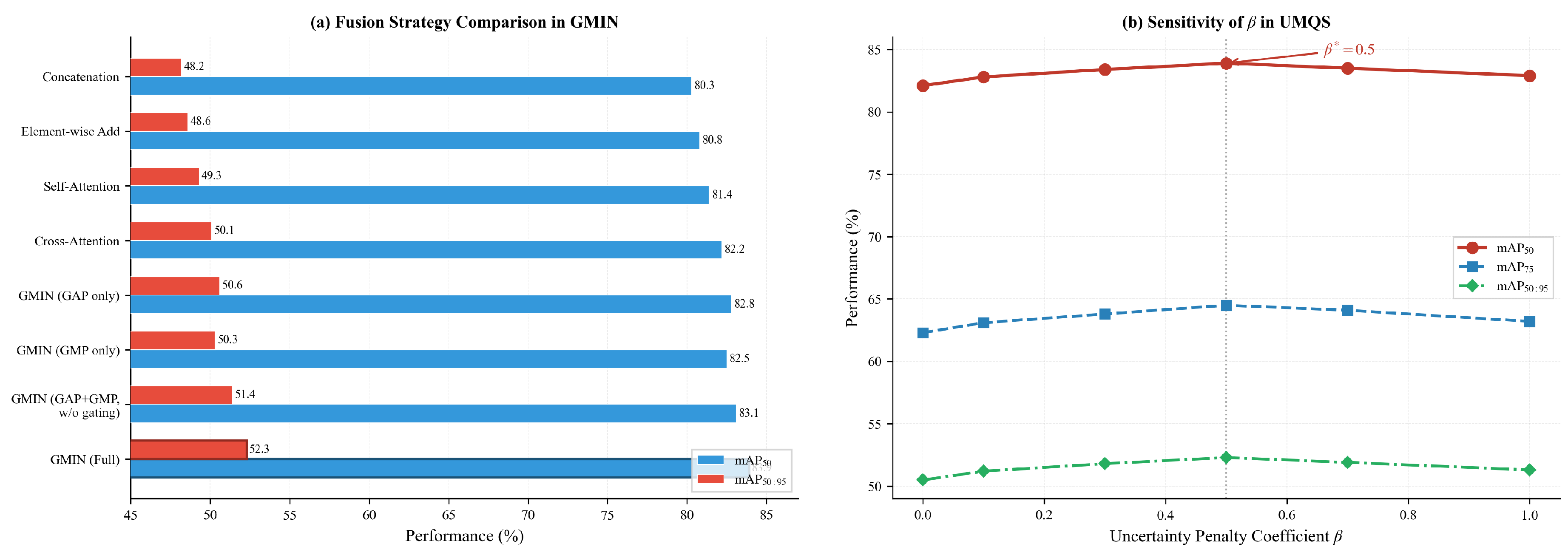

Figure 8.

(a) Horizontal bar chart comparing different fusion strategies in the GMIN module on M3FD. The full GMIN design with both GAP and GMP guided cross-attention and modality-specific gating achieves the best performance. (b) Sensitivity analysis of the uncertainty penalty coefficient in UMQS. All three metrics exhibit a consistent inverted-U pattern, with the optimal performance at a coefficient of 0.5.

Figure 9.

(a) Accuracy vs. speed trade-off on the M3FD dataset. Each point represents a detection method, with bubble size proportional to the number of parameters. CMAFNet achieves a strong balance between accuracy and inference speed. (b) Heatmap visualization of mAP50 (%) under different environmental conditions. CMAFNet achieves consistently high performance across all conditions, including nighttime and challenging weather scenarios.

Figure 10.

Detection results of CMAFNet on RGB images from the M3FD dataset. The results demonstrate robust detection across diverse scenarios including daytime, nighttime, rainy, and foggy conditions. CMAFNet accurately detects multiple object categories including People, Car, Motorcycle, Bus, and Truck with high confidence scores.

Figure 11.

Detection results of CMAFNet on IR images from the M3FD dataset. The infrared modality provides clear thermal signatures of objects even in challenging visibility conditions. CMAFNet effectively leverages the complementary thermal information to maintain high detection accuracy.

Figure 12.

Detection results of CMAFNet on RGB images from the FLIR-Aligned dataset. The model demonstrates accurate detection of Person, Car, and Bicycle categories across various urban driving scenarios with different lighting conditions and traffic densities.

Figure 13.

Detection results of CMAFNet on IR images from the FLIR-Aligned dataset. The thermal infrared images clearly reveal pedestrians and vehicles through their heat signatures, enabling reliable detection in nighttime and low-visibility conditions.

Table 1.

Comparison with state-of-the-art methods on the M3FD dataset. The best results are shown in bold and the second-best results are underlined. “Two-Stage”, “Single-Stage”, “YOLO”, “Transformer”, and “Multi-Modal” indicate the method category.

| Category | Method | Params (M) | FLOPs (G) | mAP50 (%) | mAP50:95 (%) |
|---|---|---|---|---|---|
| Two-Stage | Faster R-CNN [46] | 41.1 | 134.2 | 68.3 | 38.7 |
| | Cascade R-CNN [47] | 69.2 | 189.5 | 71.6 | 41.2 |
| | MBNet [15] | 43.8 | 142.7 | 74.2 | 43.5 |
| Single-Stage | SSD [48] | 24.4 | 62.8 | 63.5 | 34.1 |
| | RetinaNet [40] | 36.3 | 97.1 | 70.8 | 40.3 |
| | FCOS [49] | 32.1 | 88.6 | 72.1 | 41.8 |
| YOLO-based | YOLOv5-L [50] | 46.5 | 109.1 | 74.8 | 43.2 |
| | YOLOX-L [51] | 54.2 | 155.6 | 75.3 | 44.1 |
| | YOLOv7 [52] | 36.9 | 104.7 | 76.5 | 45.3 |
| | YOLOv8-L [53] | 43.7 | 165.2 | 77.2 | 46.1 |
| | YOLOv9-E [54] | 57.3 | 189.0 | 78.4 | 47.2 |
| Transformer-based | DETR [30] | 41.3 | 86.0 | 71.5 | 40.8 |
| | Deformable DETR [31] | 40.0 | 78.3 | 75.6 | 44.7 |
| | DINO [8] | 47.6 | 98.5 | 79.3 | 48.6 |
| | RT-DETR-L [32] | 32.0 | 103.4 | 80.1 | 49.3 |
| Multi-Modal | Halfway Fusion [5] | 38.5 | 112.3 | 73.8 | 42.6 |
| | CFT [6] | 44.2 | 125.8 | 78.6 | 46.8 |
| | ICAFusion [17] | 48.7 | 138.4 | 80.5 | 48.9 |
| | EAEFNet [19] | 42.3 | 118.6 | 81.2 | 49.5 |
| | TFDet [20] | 45.1 | 131.2 | 82.4 | 50.8 |
| | CMAFNet (Ours) | 48.5 | 132.6 | 83.9 | 52.3 |

Table 2.

Per-category AP50 (%) comparison on the M3FD dataset.

| Method | People | Car | Bus | Motorcycle | Lamp | Truck | mAP50 |
|---|---|---|---|---|---|---|---|
| Faster R-CNN [46] | 62.1 | 78.5 | 72.3 | 58.4 | 65.7 | 72.8 | 68.3 |
| YOLOv8-L [53] | 72.8 | 85.3 | 81.6 | 68.5 | 73.2 | 81.8 | 77.2 |
| YOLOv9-E [54] | 74.2 | 86.1 | 82.8 | 70.3 | 74.5 | 82.5 | 78.4 |
| DINO [8] | 75.8 | 87.2 | 83.5 | 71.6 | 75.3 | 82.4 | 79.3 |
| RT-DETR-L [32] | 76.5 | 87.8 | 84.2 | 72.4 | 76.1 | 83.6 | 80.1 |
| CFT [6] | 74.6 | 86.5 | 82.4 | 70.8 | 74.8 | 82.5 | 78.6 |
| ICAFusion [17] | 77.2 | 88.1 | 84.6 | 73.1 | 76.8 | 83.2 | 80.5 |
| EAEFNet [19] | 78.1 | 88.6 | 85.3 | 73.8 | 77.5 | 83.9 | 81.2 |
| TFDet [20] | 79.5 | 89.3 | 86.2 | 75.2 | 78.4 | 85.8 | 82.4 |
| CMAFNet (Ours) | 81.3 | 90.5 | 87.8 | 76.8 | 79.6 | 87.4 | 83.9 |

Table 3.

Comparison with state-of-the-art methods on the FLIR-Aligned dataset.

| Category | Method | Params (M) | FLOPs (G) | mAP50 (%) | mAP50:95 (%) |
|---|---|---|---|---|---|
| Two-Stage | Faster R-CNN [46] | 41.1 | 134.2 | 70.5 | 39.8 |
| | Cascade R-CNN [47] | 69.2 | 189.5 | 73.2 | 42.5 |
| | MBNet [15] | 43.8 | 142.7 | 75.8 | 44.6 |
| Single-Stage | SSD [48] | 24.4 | 62.8 | 65.2 | 35.6 |
| | RetinaNet [40] | 36.3 | 97.1 | 72.4 | 41.5 |
| | FCOS [49] | 32.1 | 88.6 | 73.8 | 42.9 |
| YOLO-based | YOLOv5-L [50] | 46.5 | 109.1 | 76.2 | 44.8 |
| | YOLOX-L [51] | 54.2 | 155.6 | 76.8 | 45.3 |
| | YOLOv7 [52] | 36.9 | 104.7 | 78.1 | 46.5 |
| | YOLOv8-L [53] | 43.7 | 165.2 | 78.9 | 47.3 |
| | YOLOv9-E [54] | 57.3 | 189.0 | 79.6 | 48.1 |
| Transformer-based | DETR [30] | 41.3 | 86.0 | 73.1 | 42.2 |
| | Deformable DETR [31] | 40.0 | 78.3 | 77.2 | 46.1 |
| | DINO [8] | 47.6 | 98.5 | 80.5 | 49.8 |
| | RT-DETR-L [32] | 32.0 | 103.4 | 81.3 | 50.5 |
| Multi-Modal | Halfway Fusion [5] | 38.5 | 112.3 | 75.4 | 43.8 |
| | CFT [6] | 44.2 | 125.8 | 79.8 | 47.6 |
| | ICAFusion [17] | 48.7 | 138.4 | 81.6 | 49.7 |
| | EAEFNet [19] | 42.3 | 118.6 | 82.3 | 50.8 |
| | TFDet [20] | 45.1 | 131.2 | 83.1 | 51.6 |
| | CMAFNet (Ours) | 48.5 | 132.6 | 84.2 | 53.1 |

Table 4.

Per-category AP50 (%) comparison on the FLIR-Aligned dataset.

| Method | Person | Car | Bicycle | mAP50 |
|---|---|---|---|---|
| Faster R-CNN [46] | 65.8 | 80.2 | 65.5 | 70.5 |
| YOLOv8-L [53] | 75.6 | 87.4 | 73.7 | 78.9 |
| YOLOv9-E [54] | 76.3 | 88.1 | 74.4 | 79.6 |
| DINO [8] | 77.8 | 89.2 | 74.5 | 80.5 |
| RT-DETR-L [32] | 78.5 | 89.8 | 75.6 | 81.3 |
| CFT [6] | 76.5 | 88.3 | 74.6 | 79.8 |
| ICAFusion [17] | 78.8 | 89.6 | 76.4 | 81.6 |
| EAEFNet [19] | 79.5 | 90.2 | 77.2 | 82.3 |
| TFDet [20] | 80.6 | 91.1 | 77.6 | 83.1 |
| CMAFNet (Ours) | 82.1 | 92.3 | 78.2 | 84.2 |

Table 5.

Component-wise ablation study on the M3FD dataset. “Baseline” uses a standard backbone with simple concatenation fusion and vanilla DETR decoder. DRB: Dynamic Receptive Backbone; CSM: Channel-Split Mamba Block; GMIN: Global Multi-modal Interaction Network; UMQS: Uncertainty-minimal Query Selection; SDD: Separable Dynamic Decoder.

| DRB | CSM | GMIN | UMQS | SDD | mAP50 (%) | mAP50:95 (%) |
|---|---|---|---|---|---|---|
| | | | | | 75.2 | 44.1 |
| ✓ | | | | | 78.1 | 46.5 |
| ✓ | ✓ | | | | 80.3 | 48.2 |
| ✓ | ✓ | ✓ | | | 82.1 | 50.5 |
| ✓ | ✓ | ✓ | ✓ | | 82.8 | 51.2 |
| ✓ | ✓ | ✓ | ✓ | ✓ | 83.9 | 52.3 |
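The incremental gains quoted in the Figure 7(b) caption can be recomputed from the mAP50 column of Table 5. The following sketch does this; the values are copied from the table, while the stage labels and the helper name `incremental_gains` are assumptions made for illustration.

```python
# Cumulative mAP50 (%) from Table 5, in the order modules are added.
STAGES = ["Baseline", "+DRB", "+CSM", "+GMIN", "+UMQS", "+SDD"]
MAP50 = [75.2, 78.1, 80.3, 82.1, 82.8, 83.9]


def incremental_gains(scores):
    """Difference between consecutive configurations, in percentage points."""
    return [round(after - before, 1) for before, after in zip(scores, scores[1:])]


if __name__ == "__main__":
    for stage, delta in zip(STAGES[1:], incremental_gains(MAP50)):
        print(f"{stage}: +{delta} points")
    print(f"total: +{round(MAP50[-1] - MAP50[0], 1)} points")
```

The differences are 2.9, 2.2, 1.8, 0.7, and 1.1 points, matching the figure caption, for a total improvement of 8.7 points over the baseline.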

Table 6.

Ablation study on CSM-Block design choices on the M3FD dataset. G: number of channel groups; “Attn. Recalib.”: attention-based recalibration.

| Variant | G | Attn. Recalib. | mAP50 (%) | FPS |
|---|---|---|---|---|
| Standard Self-Attention | – | – | 81.5 | 18.2 |
| Standard Mamba (no split) | 1 | ✓ | 81.8 | 28.5 |
| CSM-Block (G = 2) | 2 | ✓ | 82.6 | 30.1 |
| CSM-Block (G = 4) | 4 | ✓ | 83.9 | 29.3 |
| CSM-Block (G = 8) | 8 | ✓ | 83.2 | 29.8 |
| CSM-Block (G = 4, no recalib.) | 4 | – | 82.4 | 31.2 |
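The comparison between standard self-attention and the four-group CSM-Block follows directly from the first and fourth rows of Table 6. The sketch below reproduces the arithmetic; the numbers are copied from the table and the helper names are hypothetical.

```python
# (mAP50 %, FPS) pairs copied from Table 6.
SELF_ATTENTION = (81.5, 18.2)
CSM_BLOCK_G4 = (83.9, 29.3)


def accuracy_gain_points(ours, baseline):
    """Absolute mAP50 difference in percentage points."""
    return round(ours[0] - baseline[0], 1)


def throughput_gain_percent(ours, baseline):
    """Relative FPS increase, rounded to a whole percent."""
    return round((ours[1] / baseline[1] - 1.0) * 100.0)


if __name__ == "__main__":
    print(accuracy_gain_points(CSM_BLOCK_G4, SELF_ATTENTION), "points")
    print(throughput_gain_percent(CSM_BLOCK_G4, SELF_ATTENTION), "% faster")
```

The accuracy difference is an absolute gap in percentage points, whereas the speed difference is a relative increase in frames per second.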

Table 7.

Ablation study on GMIN design choices on the M3FD dataset.

| Fusion Strategy | mAP50 (%) | mAP50:95 (%) |
|---|---|---|
| Concatenation only | 80.3 | 48.2 |
| Element-wise addition | 80.8 | 48.6 |
| Self-attention (intra-modal) | 81.4 | 49.3 |
| Cross-attention (standard) | 82.2 | 50.1 |
| GMIN (GAP only) | 82.8 | 50.6 |
| GMIN (GMP only) | 82.5 | 50.3 |
| GMIN (GAP + GMP, w/o gating) | 83.1 | 51.4 |
| GMIN (Full) | 83.9 | 52.3 |

Table 8.

Ablation study on the uncertainty penalty coefficient in UMQS on the M3FD dataset.

| Uncertainty penalty coefficient | mAP50 (%) | mAP75 (%) | mAP50:95 (%) |
|---|---|---|---|
| 0 (no uncertainty) | 82.1 | 62.3 | 50.5 |
| 0.1 | 82.8 | 63.1 | 51.2 |
| 0.3 | 83.4 | 63.8 | 51.8 |
| 0.5 | 83.9 | 64.5 | 52.3 |
| 0.7 | 83.5 | 64.1 | 51.9 |
| 1.0 | 82.9 | 63.2 | 51.3 |

Table 9.

Computational efficiency comparison. FPS is measured on a single NVIDIA A100 GPU at a fixed input size.

| Method | Params (M) | FLOPs (G) | FPS | mAP50 (%) |
|---|---|---|---|---|
| CFT [6] | 44.2 | 125.8 | 22.5 | 78.6 |
| ICAFusion [17] | 48.7 | 138.4 | 19.8 | 80.5 |
| EAEFNet [19] | 42.3 | 118.6 | 24.1 | 81.2 |
| TFDet [20] | 45.1 | 131.2 | 21.3 | 82.4 |
| CMAFNet (Ours) | 48.5 | 132.6 | 29.3 | 83.9 |

Table 10.

Performance comparison under different environmental conditions on the M3FD dataset (mAP50, %).

| Method | Day | Night | Overexposure | Challenge | Overall |
|---|---|---|---|---|---|
| YOLOv8-L [53] | 82.3 | 68.5 | 71.8 | 65.4 | 77.2 |
| RT-DETR-L [32] | 85.6 | 72.1 | 74.5 | 68.3 | 80.1 |
| CFT [6] | 84.2 | 73.8 | 73.2 | 67.5 | 78.6 |
| ICAFusion [17] | 86.1 | 75.4 | 76.3 | 70.2 | 80.5 |
| TFDet [20] | 87.3 | 77.8 | 78.5 | 72.6 | 82.4 |
| CMAFNet (Ours) | 88.5 | 80.2 | 80.1 | 74.8 | 83.9 |