Submitted: 01 March 2026

Posted: 05 March 2026


Abstract

Keywords:

1. Introduction

1.1. The Therac-25 Catastrophe as Parable

1.2. The Limits of Static Role Taxonomies

1.3. The Need for Transition Logic

- Over-control. Organizations cling to human primacy even when AI demonstrably outperforms, sacrificing accuracy and efficiency.

- Under-control. Organizations cede authority to AI without specifying conditions for reversion, incurring safety, legitimacy, and compliance risks.

- Accountability voids. Decisions fall into gaps between human and machine responsibility, leaving neither party answerable when outcomes go awry.

1.4. Introducing the Dynamic Authority Reversal Framework

- Human-Leader/AI-Follower (HL): Humans decide; AI advises.

- AI-Leader/Human-Follower (AL): AI proposes and, absent override, enacts; humans monitor.

- Co-Leadership (CO): Authority is negotiated or shared via explicit merge protocols; neither party holds unilateral control.

- Mutual Override (MO): A protective interrupt—either party may halt or reverse the process when risk or ethical thresholds are breached.

1.5. Contributions

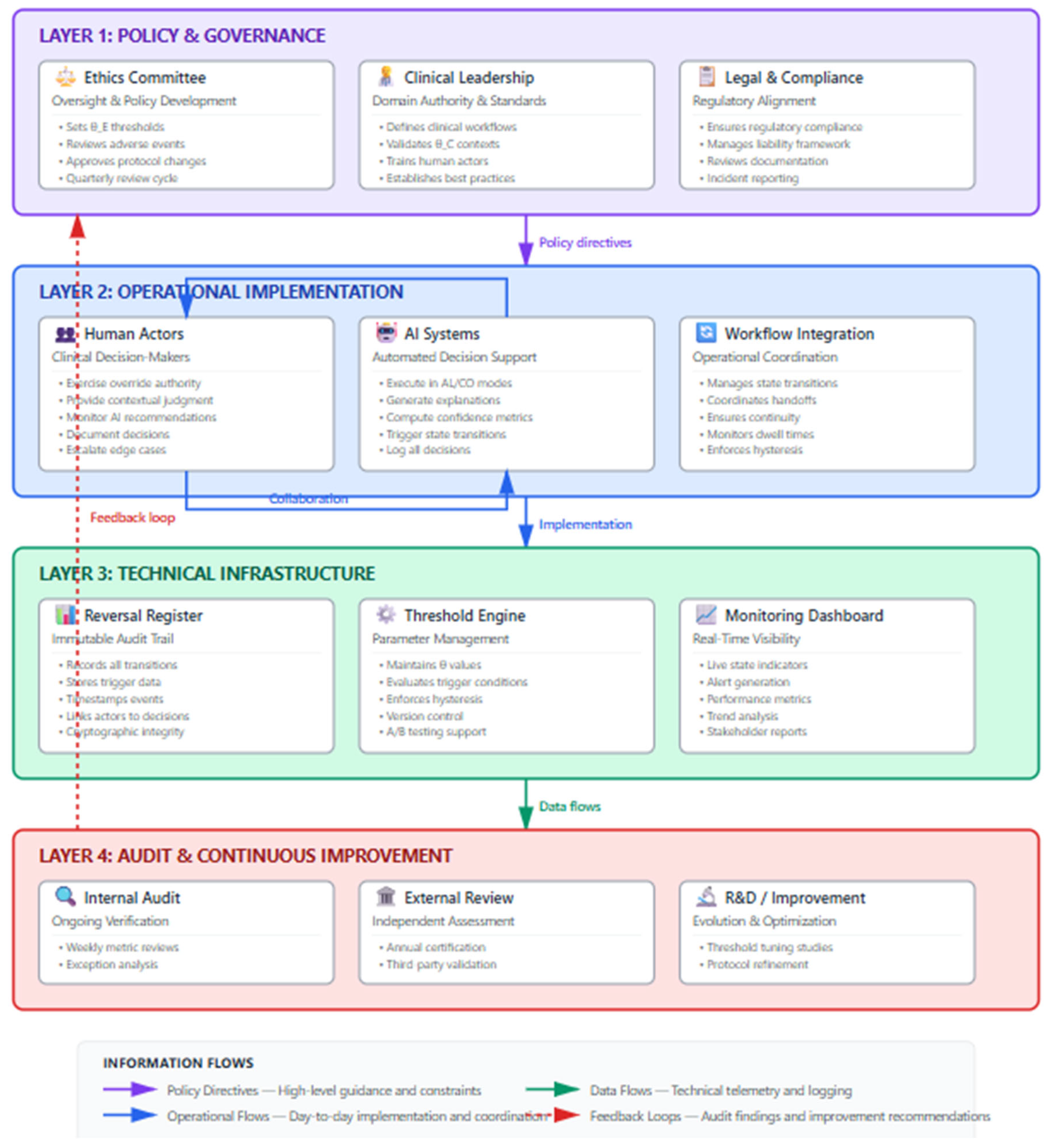

- Transition logic. DAR specifies when and why authority should shift, moving beyond the static categories that dominate existing literature and integrating insights from the adaptive-automation tradition.

- Transitional accountability. DAR couples micro-level trust calibration with macro-level legitimacy, theorizing accountability along multiple dimensions (answerability, responsibility, liability) and binding each to authority states.

- Operational instrumentation. DAR translates theoretical constructs into buildable artifacts: Authority-State Playbooks, safe-exit timers, state-contingent explanation templates, and telemetry specifications that make handovers auditable and measurable.

- Attention to human experience. DAR acknowledges that operators experience handovers phenomenologically—as potentially disorienting, contested, or empowering—and incorporates design considerations to support the human experience of transitions.

2. Literature Review

2.1. Human–AI Interaction: From Static Roles to Dynamic Handovers

2.1.1. The Augmentation–Automation Continuum

2.1.2. Adaptive Automation and Dynamic Function Allocation

2.1.3. Human-in-the-Loop and Its Discontents

2.1.4. Toward Transition Logic

2.2. Leadership Theory: Distributed, Shared, and Hybrid Forms

2.2.1. Distributed and Shared Leadership

2.2.2. Leadership as Influence Exchange

2.3. Trust Calibration in Human–AI Interaction

2.3.1. The Trust-Calibration Challenge

2.3.2. Dynamic Trust and Authority

2.4. Explainable AI: From Transparency to State-Contingent Legibility

2.4.1. The Explainability Landscape

2.4.2. Explanations as Phase-Specific Instruments

- HL state: Summative explanations that distill AI recommendations for human evaluation.

- AL state: Operational-sufficiency explanations that convey confidence, alternatives, and risk boundaries.

- CO state: Diagnostic explanations that illuminate areas of agreement and disagreement.

- MO state: Override justifications that document the rationale for halting or reversing action.
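The state-to-explanation pairing above can be read as a simple lookup. The following is a minimal sketch, not the authors' implementation; the names `AuthorityState`, `EXPLANATION_CLASS`, and `select_explanation` are hypothetical and introduced only for illustration.

```python
from enum import Enum


class AuthorityState(Enum):
    HL = "Human-Leader/AI-Follower"
    AL = "AI-Leader/Human-Follower"
    CO = "Co-Leadership"
    MO = "Mutual Override"


# Explanation class and purpose per state, taken from the list above.
# The short class labels are illustrative names, not terms fixed by the paper.
EXPLANATION_CLASS = {
    AuthorityState.HL: ("summative", "distill AI recommendations for human evaluation"),
    AuthorityState.AL: ("operational-sufficiency", "convey confidence, alternatives, and risk boundaries"),
    AuthorityState.CO: ("diagnostic", "illuminate areas of agreement and disagreement"),
    AuthorityState.MO: ("override-justification", "document the rationale for halting or reversing action"),
}


def select_explanation(state: AuthorityState) -> str:
    """Return a one-line description of the explanation owed in `state`."""
    kind, purpose = EXPLANATION_CLASS[state]
    return f"{state.name}: {kind} explanation to {purpose}"
```

Keying the lookup on an enum rather than free-form strings means an unknown state fails loudly instead of silently receiving no explanation.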

2.5. Accountability and Legitimacy in Algorithmic Governance

2.5.1. The Accountability Gap

2.5.2. Dimensions of Accountability

- Answerability: The Reversal Register documents which party held authority, what triggers prompted transitions, and what explanations were provided, enabling retrospective justification of decisions.

- Responsibility: By binding authority to states, DAR clarifies who bears the duty to act appropriately at each moment: the human in HL, the human monitor in AL, and both parties under explicit protocols in CO.

- Liability: When outcomes go awry, the Reversal Register provides an evidentiary basis for attributing liability to the party in charge at the relevant moment, subject to whether that party discharged their duties appropriately.

2.5.3. Transitional Accountability in Practice

2.6. Synthesis and Research Gap

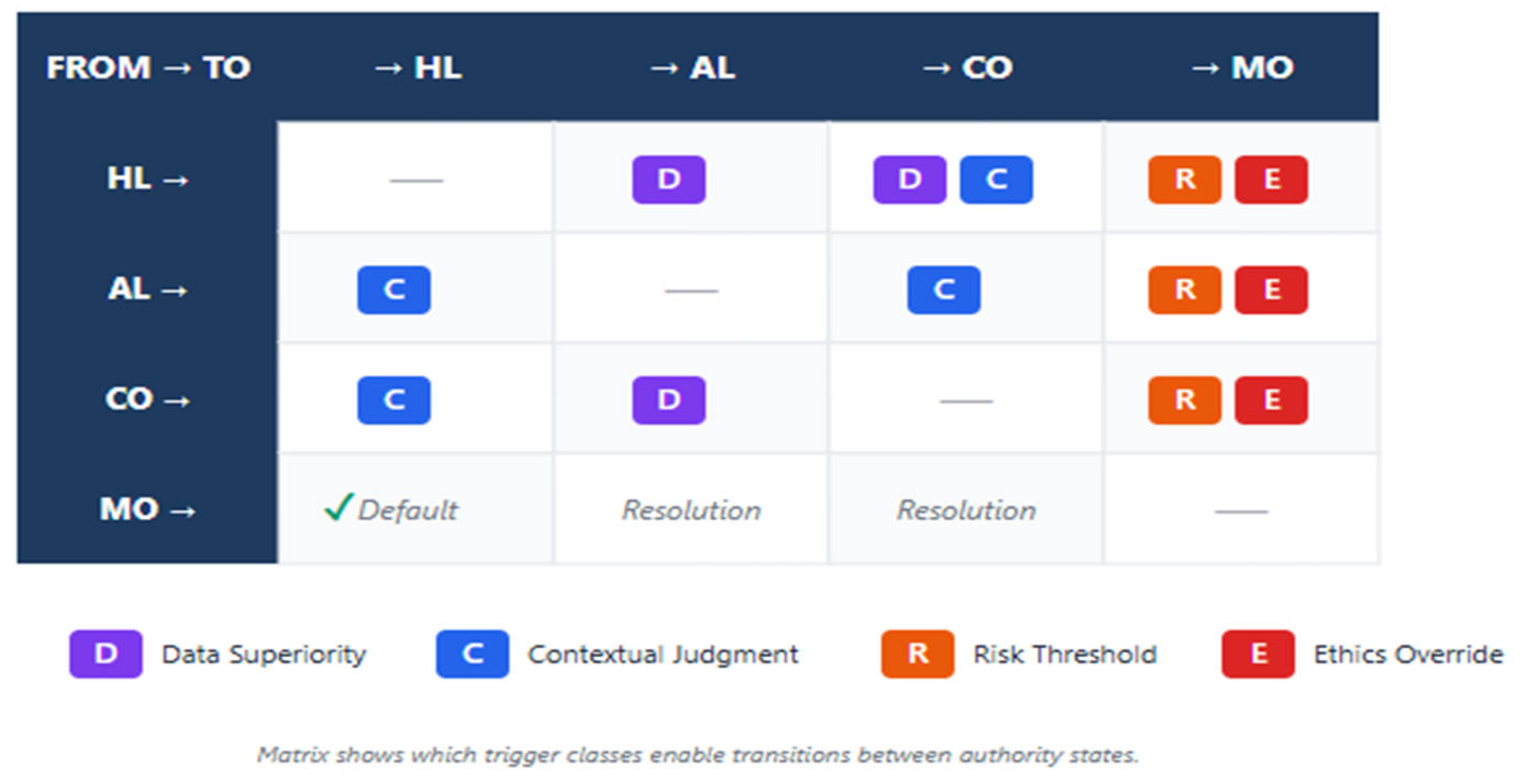

- Specifying four authority states with clear semantics and diagnostic criteria.

- Defining four trigger classes, governance mechanisms for trigger disputes, and attendant guardrails.

- Introducing instrumentation—safe-exit timers, hysteresis bands, Reversal Registers—that operationalizes transitions.

- Theorizing transitional accountability along multiple dimensions.

- Deriving falsifiable propositions that enable cumulative research.

3. The Dynamic Authority Reversal Model

3.1. Overview

3.2. Theoretical Justification for the State Taxonomy

3.2.1. Deriving States from First Principles

| | Human Enacts | AI Enacts (absent override) |
|---|---|---|
| Human Proposes | HL (Human-Leader) | — |
| AI Proposes | CO (negotiated merge) | AL (AI-Leader) |
| Either Proposes Halt | MO (protective interrupt) | MO (protective interrupt) |

- HL: Human proposes and enacts; AI advises.

- AL: AI proposes and enacts (absent override); human monitors.

- CO: AI proposes, but enactment requires human approval or negotiated merge—neither party holds unilateral authority.

- MO: Either party proposes halt; enactment is suspended pending resolution.
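The propose/enact matrix above can be expressed as a small classification function. This is a sketch under stated assumptions: `Party` and `derive_state` are hypothetical names, and the function treats the human-proposes/AI-enacts cell as undefined because the matrix leaves it empty.

```python
from enum import Enum


class Party(Enum):
    HUMAN = "human"
    AI = "ai"


def derive_state(proposer: Party, enactor: Party, halt_proposed: bool = False) -> str:
    """Map a (proposer, enactor) pair to HL, AL, CO, or MO.

    `enactor` names who enacts absent override. Human-proposes/AI-enacts is
    the empty cell of the matrix, so it raises rather than guessing a state.
    """
    if halt_proposed:
        return "MO"  # either party proposes halt; enactment is suspended
    if proposer is Party.HUMAN and enactor is Party.HUMAN:
        return "HL"  # human proposes and enacts; AI advises
    if proposer is Party.AI and enactor is Party.AI:
        return "AL"  # AI proposes and enacts absent override
    if proposer is Party.AI and enactor is Party.HUMAN:
        return "CO"  # AI proposes; enactment needs approval or merge
    raise ValueError("human-proposes/AI-enacts has no state in the matrix")
```

Checking `halt_proposed` first reflects the reading that a halt takes precedence over whichever cell the workflow would otherwise occupy.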

3.2.2. MO as Meta-Level Interrupt

3.3. States and Diagnostic Criteria

3.3.1. Human-Leader/AI-Follower (HL)

- The human must actively approve, modify, or reject the AI recommendation before action proceeds.

- The AI cannot enact action unilaterally.

- The human's approval is not merely nominal (e.g., a one-click confirmation after AI has already acted) but substantive (e.g., review of recommendation with opportunity to modify).

3.3.2. AI-Leader/Human-Follower (AL)

- The AI can enact action if the human does not intervene within the designated window.

- The human's role is monitoring and exception-handling, not routine approval.

- The human has access to sufficient information (explanations, alerts) to detect when override is warranted.

3.3.3. Co-Leadership (CO)

- An explicit merge protocol is documented (e.g., weighted voting, sequential deliberation, consensus requirement).

- Neither party can enact action without the other's input or approval.

- Disagreements are resolved through the documented protocol, not unilateral override.

3.3.4. Mutual Override (MO)

- An override condition has been signaled by either party.

- Action is halted; no enactment occurs until the condition is resolved.

- Resolution proceeds via a documented escalation protocol.

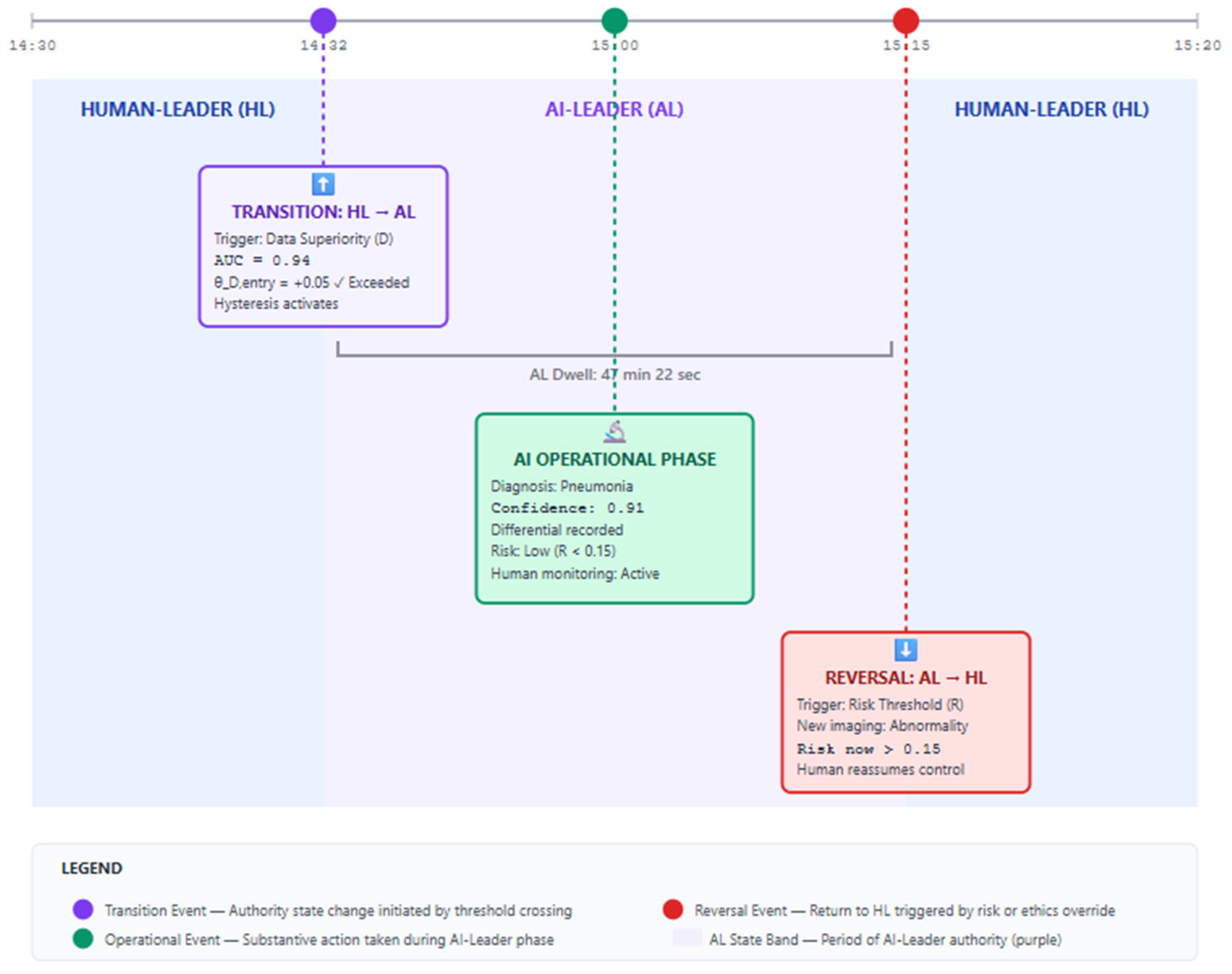

3.3.5. Worked Example: Tracing a Decision Episode

- Initial state (HL): The radiologist reviews the AI's flagged cases, examining images and AI confidence scores. For each case, the radiologist makes the diagnostic determination. Diagnostic criteria satisfied: Human must approve before diagnosis is recorded.

- Transition to AL (data-superiority trigger): Retrospective audit shows that for routine, high-confidence cases (AI confidence > 0.95), AI accuracy exceeds radiologist accuracy by 8% over 500 cases. Per the Authority-State Playbook, the workflow transitions to AL for cases meeting these criteria. Diagnostic criteria satisfied: AI diagnosis is recorded unless the radiologist overrides within 60 seconds.

- Transition to MO (risk-threshold trigger): The AI flags a case with confidence 0.97, but the patient's electronic health record indicates a history of atypical presentations. The system's out-of-distribution detector fires, triggering MO. Diagnostic criteria satisfied: Diagnosis is suspended; escalation protocol initiates.

- Resolution to HL: The radiologist reviews the case and determines that the atypical history warrants full human evaluation; the system then reverts to HL for this case.
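The episode traced above can be replayed as a small routing sketch. It is illustrative only: `Case`, `route_case`, and `resolve_override` are hypothetical names, the `al_enabled` flag stands in for the outcome of the retrospective audit, and the 0.95 confidence floor is the value quoted in the example.

```python
from dataclasses import dataclass


@dataclass
class Case:
    ai_confidence: float
    out_of_distribution: bool = False  # e.g. history of atypical presentations


def route_case(case: Case, al_enabled: bool, confidence_floor: float = 0.95) -> str:
    """Return the authority state that governs a single case."""
    if case.out_of_distribution:
        return "MO"  # risk-threshold trigger: suspend diagnosis and escalate
    if al_enabled and case.ai_confidence > confidence_floor:
        return "AL"  # AI diagnosis recorded unless overridden in the window
    return "HL"      # radiologist must approve before diagnosis is recorded


def resolve_override(human_takes_case: bool, prior_state: str) -> str:
    """Resolve MO: revert to HL if the human claims the case, else resume."""
    return "HL" if human_takes_case else prior_state


trace = [
    route_case(Case(0.90), al_enabled=False),  # before the audit
    route_case(Case(0.97), al_enabled=True),   # routine, high-confidence case
    route_case(Case(0.97, out_of_distribution=True), al_enabled=True),
    resolve_override(True, prior_state="AL"),
]
print(trace)  # ['HL', 'AL', 'MO', 'HL']
```

The out-of-distribution check runs before the confidence check, so a high confidence score cannot bypass the protective interrupt.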

3.4. Trigger Classes

3.4.1. Data Superiority (D)

3.4.2. Contextual Judgment Requirements (C)

3.4.3. Risk Threshold (R)

3.4.4. Ethics Override (E)

3.5. Guardrails

3.5.1. Hysteresis

3.5.2. Safe-Exit Timers

3.6. Trigger Governance

- Threshold calibration. Define initial thresholds based on pilot data and domain expertise.

- Dispute resolution. Adjudicate disagreements about whether a trigger has fired appropriately.

- Periodic review. Recalibrate thresholds at defined intervals (e.g., quarterly) based on performance data and stakeholder input.

- Stakeholder representation. Include representatives from operations, legal, compliance, ethics, and—where feasible—affected publics.

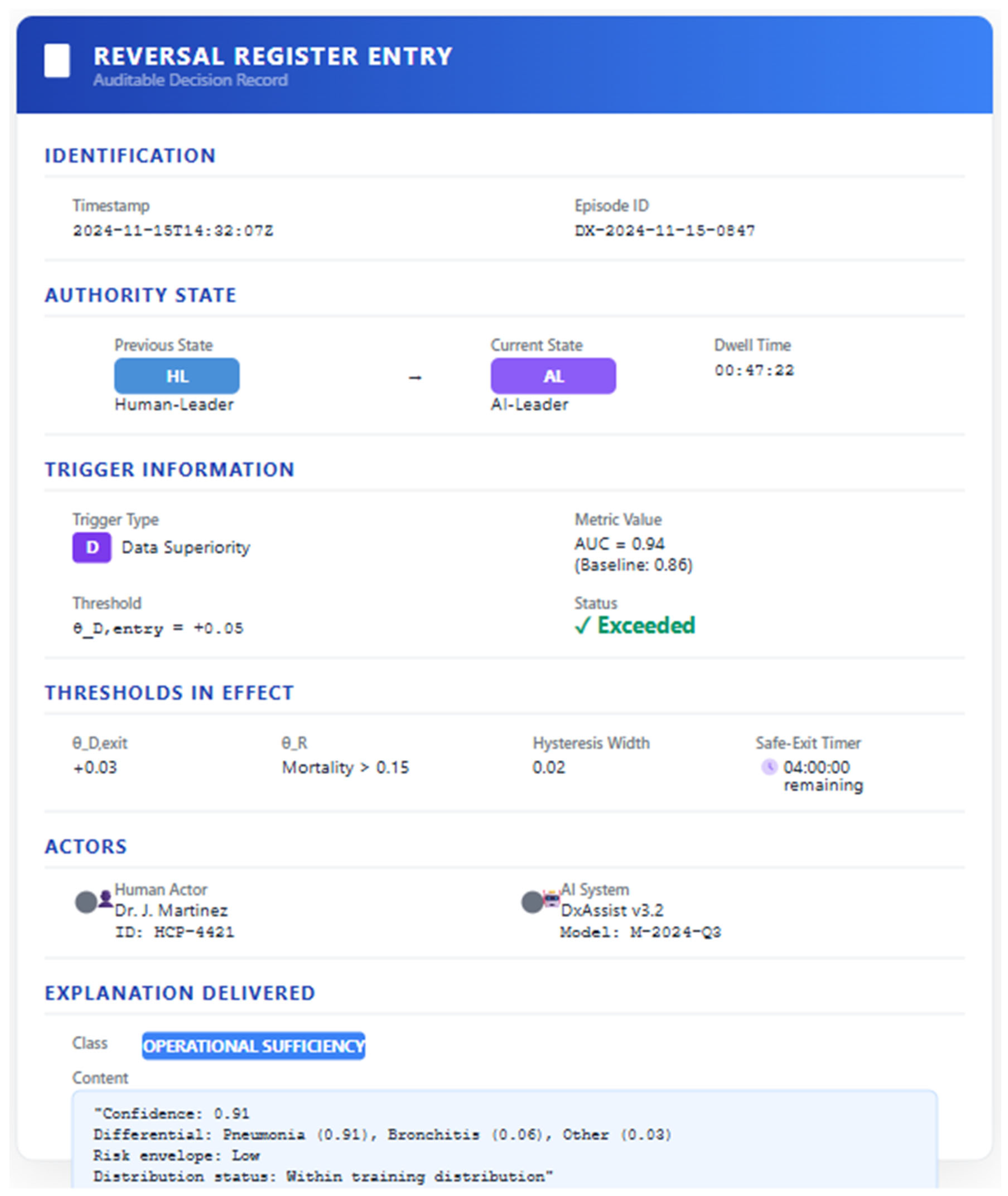

3.7. The Reversal Register

- State: The authority state at the time of decision (HL, AL, CO, MO).

- Trigger: The trigger class(es) that prompted entry into the current state.

- Thresholds: The numeric thresholds in effect (θ_D, θ_C, θ_R, hysteresis width, timer duration).

- Actors: Identifiers for the human operator(s) and AI system(s) involved.

- Explanations delivered: The class and content of explanations presented.

- Actions taken: Operator and system actions (approval, override, demand for elaboration, abort).

- Outcome (if known): Decision result and any downstream consequences.
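One way to make the fields above concrete is a typed record. The sketch below is an assumption-laden illustration rather than a prescribed schema: the class name, field names, and dictionary keys are hypothetical, and the completeness rule (every field except the outcome must be populated) is one plausible reading of "complete entry".

```python
import json
from dataclasses import asdict, dataclass
from typing import Optional


@dataclass
class ReversalRegisterEntry:
    state: str                          # "HL", "AL", "CO", or "MO"
    triggers: list[str]                 # trigger classes, e.g. ["D"] or ["R", "E"]
    thresholds: dict[str, float]        # e.g. theta_D, hysteresis width, timer duration
    actors: dict[str, str]              # hypothetical keys: "operator", "ai_system"
    explanations: list[dict[str, str]]  # each item: {"class": ..., "content": ...}
    actions: list[str]                  # approval, override, elaboration demand, abort
    outcome: Optional[str] = None       # filled in once known

    def is_complete(self) -> bool:
        """True when every field except the optional outcome is populated."""
        required = (self.state, self.triggers, self.thresholds,
                    self.actors, self.explanations, self.actions)
        return all(required)

    def to_json(self) -> str:
        """Serialize with sorted keys so entries diff cleanly in audits."""
        return json.dumps(asdict(self), sort_keys=True)
```

A usage example: an AL entry created after a data-superiority trigger would carry `state="AL"`, `triggers=["D"]`, and the thresholds in force at that moment, with `outcome` left as `None` until the result is known.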

3.8. Metrics and Telemetry

| Metric | Definition | Target Direction |
|---|---|---|
| Reversal latency | Time (ms or decision cycles) from trigger detection to effective state change | Minimize |
| Hysteresis width | Gap between entry and exit thresholds | Tune to balance stability and responsiveness |
| Trust-whiplash index | Incidence of user reliance shifts (over-trust ↔ under-trust) after reversals | Minimize |
| Thrash rate | Unwanted rapid state flips per 1,000 decisions | Near zero |
| Phase-responsibility completion | Percentage of decisions with complete Reversal Register entries | 100% |
| Auditor-satisfaction score | External-auditor rating of log completeness and traceability | Maximize |
| Operator disorientation index | Self-reported or behavioral measure of mode confusion following transitions | Minimize |
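Several of the metrics in the table are directly computable from a log of per-decision states. The following sketch shows one possible operationalization; the function names are hypothetical, and the `min_dwell` rule for what counts as a "rapid" flip is an assumption, since the table does not fix a definition.

```python
def thrash_rate(states: list[str], min_dwell: int = 3) -> float:
    """Rapid state flips per 1,000 decisions.

    A flip counts as rapid when the state being left lasted fewer than
    `min_dwell` decisions. This dwell-based rule is an assumed definition.
    """
    if not states:
        return 0.0
    rapid, dwell = 0, 1
    for prev, cur in zip(states, states[1:]):
        if cur == prev:
            dwell += 1
        else:
            if dwell < min_dwell:
                rapid += 1
            dwell = 1
    return 1000.0 * rapid / len(states)


def reversal_latency_ms(trigger_ts_ms: int, state_change_ts_ms: int) -> int:
    """Milliseconds from trigger detection to effective state change."""
    return state_change_ts_ms - trigger_ts_ms


def hysteresis_width(entry_threshold: float, exit_threshold: float) -> float:
    """Gap between the entry and exit thresholds."""
    return abs(entry_threshold - exit_threshold)


def register_completion_pct(complete_flags: list[bool]) -> float:
    """Percentage of decisions whose Reversal Register entry is complete."""
    if not complete_flags:
        return 0.0
    return 100.0 * sum(complete_flags) / len(complete_flags)


log = ["HL"] * 5 + ["AL"] + ["HL"] * 4
print(thrash_rate(log))                                    # 100.0
print(register_completion_pct([True, True, False, True]))  # 75.0
```

In the sample log only the one-decision excursion into AL counts as a rapid flip, which is why ten decisions yield a rate of 100 per 1,000.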

4. Propositions, Prioritization, and Methodological Pathways

4.1. Proposition Prioritization

| Proposition | Type | Tractability | Priority |
|---|---|---|---|
| P1 (Authority–Performance Fit) | Foundational | Moderate | High |
| P2 (Trigger Validity) | Foundational | Moderate | High |
| P3 (Hysteresis and Stability) | Derivative | High (simulation) | Medium |
| P4 (Safe-Exit Efficacy) | Derivative | High (experiment) | Medium |
| P5 (State-Contingent Explanations) | Foundational | High (experiment) | High |
| P6 (Cognitive-Load Moderation) | Derivative | High (experiment) | Low |
| P7 (Reversal Latency and Safety) | Derivative | Moderate | Medium |
| P8 (Override Friction and Error Detection) | Derivative | High (experiment) | Medium |
| P9 (Reversal Register and Legitimacy) | Derivative | Low (longitudinal) | Low |
| P10 (Transitional Accountability and Compliance) | Derivative | Low (archival) | Low |

4.2. Authority–Performance Fit

- Measurement constructs. Decision accuracy (e.g., classification accuracy, prediction error), efficiency (time-to-decision, cost), and risk-adjusted return (e.g., Sharpe ratio, harm-avoidance rate).

- Methodological pathway. Randomized controlled trial or A/B test comparing DAR-governed workflows to static-HITL baselines, controlling for task complexity and operator experience.

- Threats to validity. (a) The relevant counterfactual (what would have happened under static allocation) is not directly observable; randomization addresses this but may not capture real-world dynamics. (b) Ethical concerns may preclude randomization in high-stakes settings. Mitigations include simulation studies, stepped-wedge designs, or natural experiments exploiting exogenous variation in DAR adoption.

4.3. Stability and Responsiveness

4.4. Explainability and Trust Calibration

4.5. Safety and Latency

4.6. Accountability and Legitimacy

4.7. Research-Ethics Considerations

- Informed consent for operators participating in experiments.

- Equipoise considerations—randomization is appropriate only when there is genuine uncertainty about which condition is superior.

- Monitoring and stopping rules—experiments should include interim analyses to halt if one condition proves clearly harmful.

- Privacy protections for data in Reversal Registers.

4.8. Minimal Telemetry Specification

- Current state (HL, AL, CO, MO)

- Trigger type(s) active

- Entry/exit thresholds in effect

- Dwell time in current state

- Safe-exit timer status (time remaining)

- Explanation class delivered

- Actor action sequence (approve, override, request elaboration, abort)
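A telemetry consumer could check each event against the fields listed above before accepting it. The sketch below is one hedged way to do so; the field names, the `validate_event` function, and the snake_case action labels are hypothetical choices, not part of the specification.

```python
# Field name -> accepted Python type(s). All names are illustrative.
REQUIRED_FIELDS = {
    "state": str,
    "active_triggers": list,
    "entry_threshold": (int, float),
    "exit_threshold": (int, float),
    "dwell_time_s": (int, float),
    "safe_exit_remaining_s": (int, float),
    "explanation_class": str,
    "actor_actions": list,
}
VALID_STATES = ("HL", "AL", "CO", "MO")
VALID_ACTIONS = ("approve", "override", "request_elaboration", "abort")


def validate_event(event: dict) -> list[str]:
    """Return a list of problems; an empty list means the event is well formed."""
    problems = []
    for name, expected in REQUIRED_FIELDS.items():
        if name not in event:
            problems.append(f"missing field: {name}")
        elif not isinstance(event[name], expected):
            problems.append(f"wrong type for field: {name}")
    state = event.get("state")
    if isinstance(state, str) and state not in VALID_STATES:
        problems.append(f"unknown state: {state}")
    actions = event.get("actor_actions")
    if isinstance(actions, list):
        for action in actions:
            if action not in VALID_ACTIONS:
                problems.append(f"unknown action: {action}")
    return problems
```

Returning a list of problems rather than raising on the first one lets an ingestion pipeline log every defect in a malformed event at once.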

5. Sector-Specific Implementation Guidance

5.1. Healthcare

5.1.1. Context and Existing Governance Structures

5.1.2. DAR Implementation

- HL for complex, multi-morbid, or preference-sensitive cases, where clinician judgment is essential.

- AL for well-characterized screening tasks (e.g., diabetic-retinopathy grading) where AI meets regulatory-performance thresholds and case characteristics fall within the AI's validated operating envelope.

- CO for multidisciplinary tumor boards or shared decision-making encounters, where a documented merge protocol combines clinician and AI inputs.

- MO for patient-safety alerts (e.g., contraindication flags, drug–drug interaction warnings) or when cases fall outside the AI's validated envelope.

- Data superiority: AI accuracy exceeding physician baseline on a validated, representative test set by a pre-specified margin (e.g., +5% sensitivity). Validation should use local data reflecting the institution's case mix.

- Contextual judgment: Patient-preference signals (e.g., documented values, advance directives), novel symptom constellations, social determinants flagged by intake screening, or model uncertainty exceeding threshold.

- Risk threshold: Mortality-risk score or complication-probability exceeding threshold; severity of potential adverse outcome.

- Ethics override: Informed-consent requirements, off-label prescribing, resource-allocation dilemmas (e.g., ICU triage), protected-class considerations.

- Safe-exit timers keyed to clinical-workflow stages (e.g., 24-hour review for admitted patients, end-of-shift review for outpatient clinics). Timers ensure that even if the AI leads, a clinician reviews the case within a defined interval.

- Hysteresis on accuracy thresholds to accommodate inter-shift variability and prevent oscillation due to random fluctuations in case mix.

- A multidisciplinary committee (physicians, nurses, informaticists, ethicists, patient representatives) calibrates thresholds, adjudicates disputes, and reviews performance quarterly.

- Committee decisions are documented and accessible to the hospital's quality-improvement and compliance functions.

- Reversal Register entries are linked to electronic health record (EHR) audit logs, ensuring that each diagnostic or treatment decision is associated with the prevailing authority state.

- Entries are accessible for malpractice defense, quality-improvement review, and regulatory inspection.

- Privacy protections ensure that patient-identifiable information is handled in accordance with HIPAA and institutional policies.

- Morbidity and Mortality (M&M) Conferences: Reversal Register data can inform M&M reviews by identifying cases where authority transitions may have contributed to adverse outcomes.

- Quality-Improvement Committees: Aggregate telemetry (thrash rate, reversal latency, trigger distributions) supports continuous improvement.

- Liability Allocation: Malpractice doctrine generally holds the treating clinician responsible. DAR clarifies that in HL, the clinician is unambiguously accountable; in AL, the clinician as designated monitor is accountable for failures to override detectable errors; in CO, accountability follows the documented merge protocol; in MO, the invoking party is accountable for the halt. These clarifications align with, rather than displace, existing doctrine.
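Among the healthcare guardrails listed above, hysteresis on accuracy thresholds lends itself to a short illustration. The sketch below uses two thresholds so that AL is entered only when the AI's sensitivity advantage reaches the pre-specified margin and is left only when the advantage falls well below it. The class name `HysteresisGate` is hypothetical, the 0.05 entry margin mirrors the "+5% sensitivity" example, and the 0.02 exit margin is an assumed value chosen for illustration.

```python
class HysteresisGate:
    """Two-threshold gate: enter AL at `enter_margin`, leave below `exit_margin`."""

    def __init__(self, enter_margin: float = 0.05, exit_margin: float = 0.02):
        if exit_margin >= enter_margin:
            raise ValueError("exit_margin must be below enter_margin")
        self.enter_margin = enter_margin
        self.exit_margin = exit_margin  # assumed value; not given in the text
        self.ai_leads = False

    def update(self, ai_sensitivity: float, clinician_sensitivity: float) -> str:
        """Update the gate with one evaluation period and return HL or AL."""
        margin = ai_sensitivity - clinician_sensitivity
        if not self.ai_leads and margin >= self.enter_margin:
            self.ai_leads = True
        elif self.ai_leads and margin < self.exit_margin:
            self.ai_leads = False
        return "AL" if self.ai_leads else "HL"


gate = HysteresisGate()
per_shift_ai = [0.91, 0.89, 0.88, 0.86, 0.89]  # clinician baseline held at 0.85
states = [gate.update(ai, 0.85) for ai in per_shift_ai]
print(states)  # ['AL', 'AL', 'AL', 'HL', 'HL']
```

With a single threshold at 0.05, the second shift (margin near 0.04) would already have flipped the workflow back to HL; the band between the two margins absorbs that inter-shift fluctuation.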

5.1.3. Expected Benefits

- Reduced diagnostic delay where AI leads appropriately within its validated envelope.

- Preserved clinician authority for complex or values-laden decisions.

- Reduced alert fatigue by limiting alerts to state-relevant triggers.

- Clear documentation supporting liability attribution and quality improvement.

- Enhanced patient trust through transparent authority configurations (see Section 6.4 on affected publics).

5.1.4. Challenges and Mitigations

- Clinician resistance: Some clinicians may resist ceding authority to AI. Change management should emphasize that DAR preserves clinician authority for complex cases and that AI leadership applies only within validated envelopes with safe-exit guarantees.

- Technical integration: Integrating DAR with existing EHR systems may require significant development effort. Phased rollout—starting with a single workflow (e.g., retinal screening) before expanding—can manage complexity.

- Regulatory uncertainty: Regulatory guidance on liability for clinical decision support systems (CDSS) is still evolving. Organizations should engage with the FDA and CMS to ensure that DAR implementations align with emerging requirements.

5.2. Public Administration

5.2.1. Context and Existing Governance Structures

5.2.2. DAR Implementation

- HL for final determinations affecting fundamental rights (e.g., benefit denial, child removal, parole decisions), where due-process requirements mandate substantive human judgment.

- AL for low-stakes triage or scheduling (e.g., prioritizing case review order, scheduling appointments), where AI leadership improves efficiency without implicating fundamental rights.

- CO for intermediate-risk cases (e.g., moderate risk scores in child-welfare screening), where caseworker and model inputs are combined via a structured deliberation protocol.

- MO for civil-liberties alerts (e.g., protected-class flags, anomalous-score patterns suggesting data error), procedural-fairness requirements, or when the affected party contests the determination.

- Data superiority: Model accuracy validated on representative local data, with attention to subgroup performance (accuracy disaggregated by race, gender, geography).

- Contextual judgment: Case complexity flags (e.g., multiple interacting risk factors), out-of-distribution indicators, caseworker annotation of situational complexity.

- Risk threshold: Severity scores exceeding threshold (e.g., child safety risk exceeding 0.8), potential for irreversible harm (e.g., child removal).

- Ethics override: Protected-class sensitivity (disparate-impact flags), procedural-fairness requirements (e.g., right to hearing), whistleblower or fraud-allegation context.

- Safe-exit timers aligned with statutory deadlines (e.g., 30-day benefit determination, 48-hour child-safety response). Timers ensure that AI-led triage does not circumvent statutory requirements.

- Hysteresis to prevent rapid oscillation in risk classifications, which could confuse caseworkers and undermine trust.

- A committee including agency leadership, frontline workers, legal counsel, civil-liberties advocates, and community representatives calibrates thresholds and adjudicates disputes.

- Committee composition should reflect the diversity of affected publics to ensure that threshold-setting is not captured by any single interest.

- Decisions are documented and subject to Freedom of Information Act (FOIA) requests.

- The Reversal Register is maintained as an administrative record, subject to FOIA and judicial review.

- Entries retained for the duration required by records-retention schedules.

- The Register serves as an evidentiary basis for administrative appeals: affected parties can request documentation of the authority state, triggers, and explanations that governed their case.

- Administrative-Law Doctrine: Reasoned decision-making requirements (e.g., under the Administrative Procedure Act) demand that agencies explain the basis for determinations. The Reversal Register provides structured documentation that supports compliance.

- Appellate Review: When determinations are challenged, courts can examine the Reversal Register to assess whether the agency appropriately allocated authority and responded to triggers.

- Legislative Oversight: Aggregate telemetry (state distributions, trigger frequencies, outcome disparities) can inform legislative hearings on algorithmic governance.
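The safe-exit timers aligned with statutory deadlines, described in the implementation list above, can be sketched as a timer that forces AL back to HL well before the deadline expires. Everything named here is hypothetical: `STATUTORY_WINDOWS`, `SafeExitTimer`, and in particular `safety_fraction`, which assumes reversion at a fixed fraction of the statutory window. The 30-day and 48-hour windows are the examples quoted in the text.

```python
from datetime import datetime, timedelta

# Example windows from the text; real values depend on the jurisdiction.
STATUTORY_WINDOWS = {
    "benefit_determination": timedelta(days=30),
    "child_safety_response": timedelta(hours=48),
}


class SafeExitTimer:
    """Revert AI-led handling to human leadership before a deadline lapses."""

    def __init__(self, case_type: str, opened_at: datetime,
                 safety_fraction: float = 0.5):
        window = STATUTORY_WINDOWS[case_type]
        self.deadline = opened_at + window
        # Assumed policy: hand back to a human after this share of the window.
        self.revert_at = opened_at + window * safety_fraction

    def state(self, now: datetime, current: str = "AL") -> str:
        """Return HL once the review point passes; other states are unchanged."""
        if current == "AL" and now >= self.revert_at:
            return "HL"
        return current

    def remaining(self, now: datetime) -> timedelta:
        """Time left until the statutory deadline, floored at zero."""
        return max(self.deadline - now, timedelta(0))


opened = datetime(2026, 3, 1, 9, 0)
timer = SafeExitTimer("child_safety_response", opened)
print(timer.state(opened + timedelta(hours=23)))  # AL
print(timer.state(opened + timedelta(hours=24)))  # HL
```

Reverting partway through the window, rather than at the deadline itself, leaves the caseworker time to act, so that AI-led triage does not consume the entire statutory period.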

5.2.3. Expected Benefits

- Efficiency gains in routine processing (triage, scheduling) without sacrificing due process for consequential decisions.

- Enhanced due-process protections through documented deliberation and clear authority assignment.

- Defensible administrative record supporting judicial review and legislative oversight.

- Reduced disparate impact through disaggregated monitoring and protected-class triggers.

5.2.4. Challenges and Mitigations

- Political resistance: Threshold-setting allocates power; managers, caseworkers, and external stakeholders may resist changes that affect their authority. Inclusive governance (diverse committee composition) and transparent documentation can build buy-in.

- Capacity constraints: Frontline workers in under-resourced agencies may lack time for additional deliberation. DAR should be designed to reduce, not increase, overall workload by concentrating human effort on high-stakes cases.

- Transparency demands: Public-administration contexts face heightened transparency expectations. Organizations should consider proactive disclosure of aggregate telemetry (e.g., annual reports on state distributions and outcomes) to build public trust.

5.3. Additional Sectors: Summary Guidance

- States: HL for strategy and limit-setting; AL for execution within limits; CO for novel instruments; MO for circuit-breaker events.

- Key considerations: Integration with existing risk-management frameworks; alignment with prudential-regulation requirements; real-time telemetry for high-frequency environments.

- States: HL for final hiring/promotion decisions; AL for initial résumé screening; CO for calibration sessions; MO for adverse-impact alerts.

- Key considerations: Compliance with employment-discrimination law (e.g., Title VII, EEOC guidance); attention to candidate experience and dignity; integration with applicant-tracking systems.

6. Discussion

6.1. Theoretical Contributions

6.2. Practical Implications

6.3. The Human Experience of Handovers

- Clear signaling: Interface elements should unambiguously indicate the current authority state (e.g., color coding, status banners, auditory cues).

- Transition previews: When a trigger is approaching but has not yet fired, operators can be alerted to the impending transition, reducing surprise.

- Contestability: Operators should have a low-friction mechanism to contest a transition they believe is unwarranted. The Trigger Governance Committee can review contested transitions retrospectively.

- Training: Operators should receive training on the logic of the state taxonomy, the meaning of triggers, and the expectations for their role in each state.

- Workload management: Frequent transitions can impose cognitive load. Hysteresis and safe-exit timers should be calibrated to balance responsiveness against operator burden.

6.4. Political Dimensions of DAR Implementation

6.5. Affected Publics

- Transparency to affected parties: Should individuals be informed when their case was governed by AI leadership versus human leadership? In principle, the Reversal Register provides this information; in practice, disclosing detailed authority configurations may overwhelm or confuse non-expert audiences. Organizations should consider summary disclosures (e.g., "This decision was reviewed by a physician with assistance from an AI diagnostic tool") that convey the essential authority structure without technical detail.

- Contestability by affected parties: Affected individuals should have mechanisms to contest decisions, including access to information about the authority configuration that governed their case. In public-administration contexts, this aligns with due-process requirements; in other contexts, organizations may adopt analogous practices voluntarily.

- Participation in governance: Where feasible, affected publics—or their representatives—should participate in Trigger Governance Committees. Community representatives can ensure that threshold-setting reflects public values, not only organizational interests.

6.6. Tensions and Trade-offs

6.7. Regulatory Alignment

6.8. Limitations and Boundary Conditions

6.9. Future Research Directions

- Empirical validation. Field experiments and longitudinal studies are needed to test the propositions derived in Section 4 across diverse domains. Priority should be given to foundational propositions (P1, P2, P5).

- Parameter optimization. Machine-learning techniques (e.g., reinforcement learning, Bayesian optimization) could automate hysteresis and timer tuning based on historical performance data, subject to safety constraints.

- Multi-agent extensions. DAR currently models a single AI system and a single human party; extension to multi-AI and multi-human configurations is warranted, particularly for team-based workflows.

- Explanation-generation pipelines. Research should develop methods for generating state-contingent explanations automatically, drawing on large language models and domain ontologies.

- Cross-cultural and cross-jurisdictional variation. Comparative research can identify moderators and inform localized implementations.

- Affected-public engagement. Empirical research on how affected publics perceive and respond to different authority configurations can inform transparency and contestability design.

- Soft-DAR variants. For creative, exploratory, or low-stakes tasks, variants with softer, more negotiated transitions may be appropriate; conceptual and empirical work is needed to develop such variants.

7. Conclusions

References

- Amershi, S., Weld, D. S., Vorvoreanu, M., Fourney, A., Nushi, B., Collisson, P., Suh, J., Iqbal, S., Bennett, P. N., Inkpen, K., Teevan, J., Kikin-Gil, R., & Horvitz, E. (2019). Guidelines for human-AI interaction. Proceedings of the 2019 CHI Conference on Human Factors in Computing Systems, 1–13.

- Bansal, G., Wu, T., Zhou, J., Fok, R., Nushi, B., Kamar, E., Ribeiro, M. T., & Weld, D. S. (2021). Does the whole exceed its parts? The effect of AI explanations on complementary team performance. Proceedings of the 2021 CHI Conference on Human Factors in Computing Systems, 1–16.

- Bovens, M. (2007). Analysing and assessing accountability: A conceptual framework. European Law Journal, 13(4), 447–468.

- Buçinca, Z., Malaya, M. B., & Gajos, K. Z. (2021). To trust or to think: Cognitive forcing functions can reduce overreliance on AI in AI-assisted decision-making. Proceedings of the ACM on Human-Computer Interaction, 5(CSCW1), 1–21.

- D'Innocenzo, L., Mathieu, J. E., & Kukenberger, M. R. (2016). A meta-analysis of different forms of shared leadership–team performance relations. Journal of Management, 42(7), 1964–1991.

- Davenport, T. H., & Kirby, J. (2016). Only humans need apply: Winners and losers in the age of smart machines. Harper Business.

- DeRue, D. S., & Ashford, S. J. (2010). Who will lead and who will follow? A social process of leadership identity construction in organizations. Academy of Management Review, 35(4), 627–647.

- Dietvorst, B. J., Simmons, J. P., & Massey, C. (2015). Algorithm aversion: People erroneously avoid algorithms after seeing them err. Journal of Experimental Psychology: General, 144(1), 114–126.

- Eubanks, V. (2018). Automating inequality: How high-tech tools profile, police, and punish the poor. St. Martin's Press.

- European Union. (2024). Regulation (EU) 2024/1689 of the European Parliament and of the Council laying down harmonised rules on artificial intelligence. Official Journal of the European Union.

- Fountain, J. E. (2001). Building the virtual state: Information technology and institutional change. Brookings Institution Press.

- Goodrich, M. A., & Schultz, A. C. (2007). Human–robot interaction: A survey. Foundations and Trends in Human–Computer Interaction, 1(3), 203–275.

- Green, B., & Chen, Y. (2019). Disparate interactions: An algorithm-in-the-loop analysis of fairness in risk assessments. Proceedings of the Conference on Fairness, Accountability, and Transparency, 90–99.

- Gronn, P. (2002). Distributed leadership as a unit of analysis. The Leadership Quarterly, 13(4), 423–451.

- Kaber, D. B., & Endsley, M. R. (2004). The effects of level of automation and adaptive automation on human performance, situation awareness and workload in a dynamic control task. Theoretical Issues in Ergonomics Science, 5(2), 113–153.

- Lai, V., & Tan, C. (2019). On human predictions with explanations and predictions of machine learning models: A case study on deception detection. Proceedings of the Conference on Fairness, Accountability, and Transparency, 29–38.

- Lee, J. D., & See, K. A. (2004). Trust in automation: Designing for appropriate reliance. Human Factors, 46(1), 50–80.

- Leveson, N. G., & Turner, C. S. (1993). An investigation of the Therac-25 accidents. Computer, 26(7), 18–41.

- Liao, Q. V., Gruen, D., & Miller, S. (2020). Questioning the AI: Informing design practices for explainable AI user experiences. Proceedings of the 2020 CHI Conference on Human Factors in Computing Systems, 1–15.

- Mayer, R. C., Davis, J. H., & Schoorman, F. D. (1995). An integrative model of organizational trust. Academy of Management Review, 20(3), 709–734.

- Miller, T. (2019). Explanation in artificial intelligence: Insights from the social sciences. Artificial Intelligence, 267, 1–38.

- Mittelstadt, B. (2019). Principles alone cannot guarantee ethical AI. Nature Machine Intelligence, 1(11), 501–507.

- Parasuraman, R., & Manzey, D. H. (2010). Complacency and bias in human use of automation: An attentional integration. Human Factors, 52(3), 381–410.

- Parasuraman, R., Sheridan, T. B., & Wickens, C. D. (2000). A model for types and levels of human interaction with automation. IEEE Transactions on Systems, Man, and Cybernetics—Part A: Systems and Humans, 30(3), 286–297.

- Pearce, C. L., & Conger, J. A. (2003). Shared leadership: Reframing the hows and whys of leadership. SAGE Publications.

- Poursabzi-Sangdeh, F., Goldstein, D. G., Hofman, J. M., Wortman Vaughan, J., & Wallach, H. (2021). Manipulating and measuring model interpretability. Proceedings of the 2021 CHI Conference on Human Factors in Computing Systems, 1–52.

- Raghavan, M., Barocas, S., Kleinberg, J., & Levy, K. (2020). Mitigating bias in algorithmic hiring: Evaluating claims and practices. Proceedings of the 2020 Conference on Fairness, Accountability, and Transparency, 469–481.

- Raisch, S., & Krakowski, S. (2021). Artificial intelligence and management: The automation–augmentation paradox. Academy of Management Review, 46(1), 192–210.

- Raji, I. D., Smart, A., White, R. N., Mitchell, M., Gebru, T., Hutchinson, B., Smith-Loud, J., Theron, D., & Barnes, P. (2020). Closing the AI accountability gap: Defining an end-to-end framework for internal algorithmic auditing. Proceedings of the 2020 Conference on Fairness, Accountability, and Transparency, 33–44.

- Sarter, N. B., & Woods, D. D. (1995). How in the world did we ever get into that mode? Mode error and awareness in supervisory control. Human Factors, 37(1), 5–19.

- Seeber, I., Bittner, E., Briggs, R. O., de Vreede, T., de Vreede, G.-J., Elkins, A., Maier, R., Merz, A. B., Oeste-Reiß, S., Randrup, N., Schwabe, G., & Söllner, M. (2020). Machines as teammates: A research agenda on AI in team collaboration. Information & Management, 57(2), 103174.

- Selbst, A. D., Boyd, D., Friedler, S. A., Venkatasubramanian, S., & Vertesi, J. (2019). Fairness and abstraction in sociotechnical systems. Proceedings of the Conference on Fairness, Accountability, and Transparency, 59–68.

- Sheridan, T. B., & Verplank, W. L. (1978). Human and computer control of undersea teleoperators (Technical Report). MIT Man-Machine Systems Laboratory.

- Sittig, D. F., & Singh, H. (2010). A new sociotechnical model for studying health information technology in complex adaptive healthcare systems. Quality and Safety in Health Care, 19(Suppl 3), i68–i74.

- Topol, E. J. (2019). High-performance medicine: The convergence of human and artificial intelligence. Nature Medicine, 25(1), 44–56.

- Wieringa, M. (2020). What to account for when accounting for algorithms: A systematic literature review on algorithmic accountability. Proceedings of the 2020 Conference on Fairness, Accountability, and Transparency, 1–18.

- Yin, M., Wortman Vaughan, J., & Wallach, H. (2019). Understanding the effect of accuracy on trust in machine learning models. Proceedings of the 2019 CHI Conference on Human Factors in Computing Systems, 1–12.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).