Submitted: 16 April 2026

Posted: 20 April 2026

Abstract

Keywords:

1. Introduction

2. Related Work

3. Certification-Oriented System-Level Analysis Framework

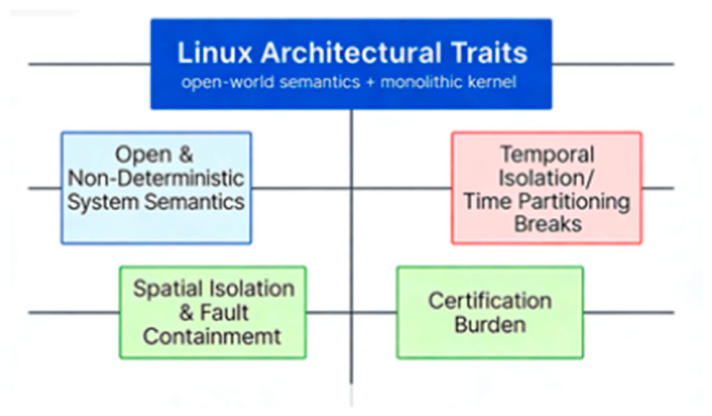

3.1. Characterization of Linux Architectural Semantics

3.2. Identification of Independently Sufficient Certification-Relevant Risk Factors

3.3. Unified Causal Framework and Examination of Mitigation Narratives

4. Certification-Relevant Architectural Risk Factors of Linux in Safety-Critical Avionics

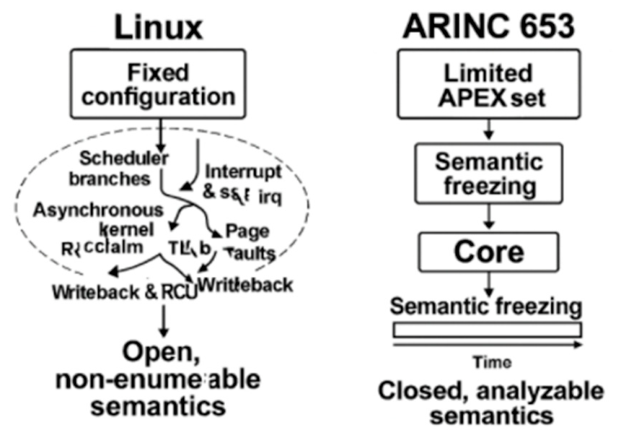

4.1. Open Execution Semantics with Difficult-to-Bound Behavior

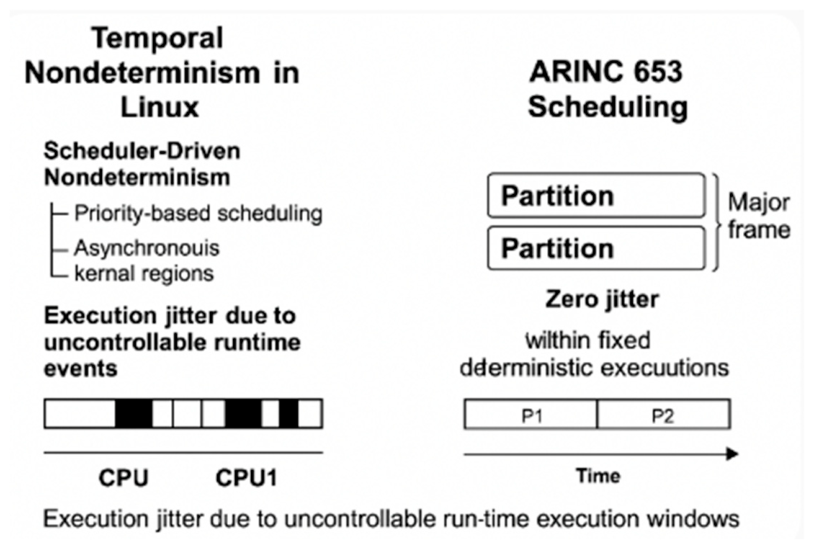

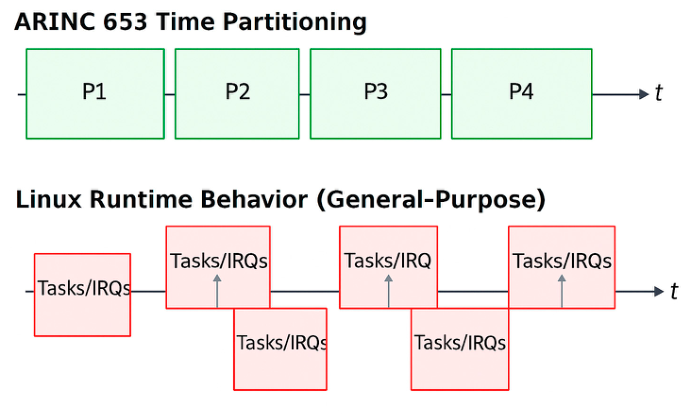

4.2. Lack of Temporal Determinism

4.2.1. Scheduler-Driven Nondeterminism

- spinlock-protected critical sections,

- per-CPU data updates,

- scheduler state transitions,

- low-level exception-handling paths.
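
Regions such as these are usually modeled as non-preemptible blocking in timing analysis. The following is a minimal sketch, assuming classic fixed-priority response-time analysis with a blocking term; this analysis is an illustrative assumption rather than a method taken from this paper, and all task parameters are hypothetical.

```python
import math

def response_time(c, b, higher, deadline):
    """Iterate R = C + B + sum(ceil(R / T_j) * C_j) to a fixed point.

    c: worst-case execution time of the task under analysis
    b: longest non-preemptible section that can block it (assumed bound)
    higher: list of (C_j, T_j) for higher-priority periodic tasks
    Returns the response time, or None if it exceeds the deadline.
    """
    r = c + b
    while True:
        nxt = c + b + sum(math.ceil(r / t) * cj for cj, t in higher)
        if nxt > deadline:
            return None
        if nxt == r:
            return r
        r = nxt

HIGHER = [(1, 5), (2, 10)]  # hypothetical higher-priority tasks (C, T)
bounded = response_time(c=3, b=1, higher=HIGHER, deadline=20)     # 8
unbounded = response_time(c=3, b=12, higher=HIGHER, deadline=20)  # None
```

The result depends directly on `b`: if the longest non-preemptible section cannot be bounded, no response-time bound can be derived.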

4.2.2. Event-Driven (Asynchronous) Nondeterminism

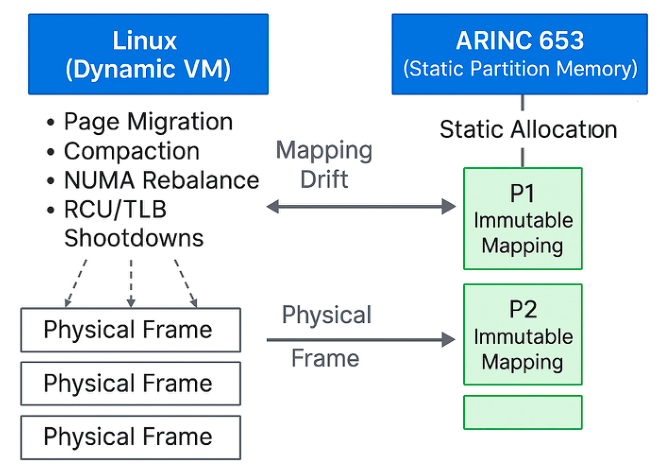

4.3. No Physical Memory Isolation

- Demand paging and on-demand allocation. Page tables are populated lazily, and physical pages may be allocated or remapped during runtime based on memory pressure and process behavior.

- Page reclaim and compaction. Under memory pressure, the kernel evicts or relocates physical pages, invoking reclaim, compaction, or write-back paths that modify page-table mappings without application involvement.

- Dynamic page-table updates and Translation Lookaside Buffer (TLB) shootdowns. Linux frequently updates page attributes, permission bits, and mapping structures, triggering cross-CPU TLB invalidations and modifying the effective physical-memory layout during operation.
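
The effect of these mechanisms on physical-memory ownership can be illustrated with a toy model. This is a simplified sketch, not kernel code: the class name, frame numbers, and reclaim policy are all hypothetical.

```python
class LazyAddressSpace:
    """Toy model of demand paging: frames are assigned on first touch."""

    def __init__(self, free_frames):
        self.free = list(free_frames)   # physical frames available
        self.table = {}                 # virtual page -> physical frame
        self.faults = 0

    def touch(self, vpage):
        if vpage not in self.table:     # page fault: populate lazily
            self.faults += 1
            self.table[vpage] = self.free.pop(0)
        return self.table[vpage]

    def reclaim(self, vpage):
        # Under memory pressure the frame is taken back without
        # involving the application; the mapping disappears.
        self.free.append(self.table.pop(vpage))

space = LazyAddressSpace(free_frames=[7, 3, 9])
first = space.touch(0)      # fault, page 0 -> frame 7
space.touch(1)              # fault, page 1 -> frame 3
space.reclaim(0)            # frame 7 returns to the free pool
second = space.touch(0)     # fault again, page 0 -> frame 9
```

The same virtual page is backed by two different physical frames over its lifetime, which is the opposite of a fixed, statically assigned partition memory region.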

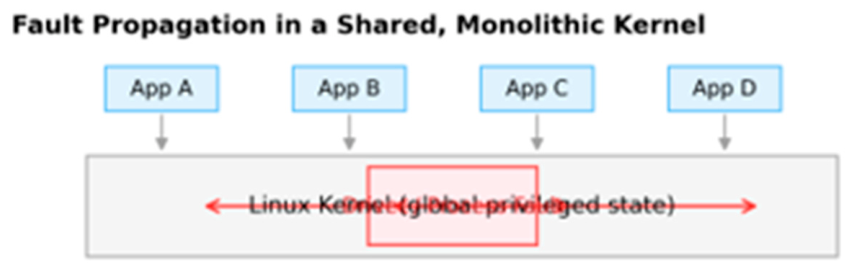

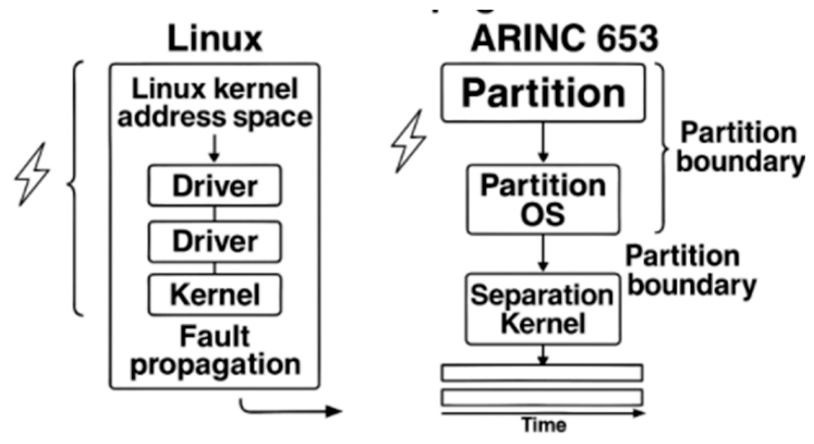

4.4. Lack of Fault Isolation

- shared kernel memory regions used by subsystems such as the scheduler, virtual file system (VFS), networking, and memory management;

- global locks and synchronization primitives that serialize access across unrelated components;

- reference counters and object life-cycle structures (e.g., file descriptors, network buffers, slab objects);

- interrupt-handling and softirq pathways, which execute in global kernel context and can therefore amplify system-wide impact once shared kernel state is corrupted.

- shared and dynamically managed physical-memory pools and page-table state, rather than fixed, non-overlapping, MMU-enforced partition memory regions;

4.5. Driver Contamination of Kernel Global State

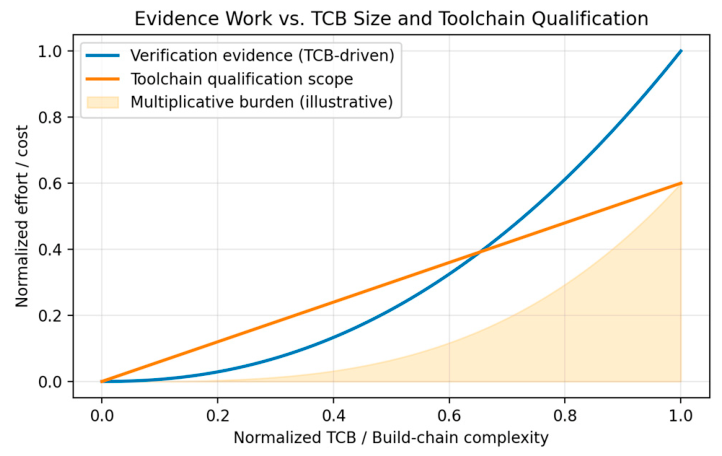

4.6. Overly Large Trusted Computing Base (TCB)

4.6.1. Planning Process Impact (PSAC, Standards, Objectives Allocation)

- Declaring all kernel subsystems, bundled drivers, and architecture-specific code paths that can affect safety objectives as part of the certifiable baseline, and assigning appropriate DAL-driven objectives in the PSAC.

- Producing planning artifacts (PSAC; SDP, Software Development Plan; SVP, Software Verification Plan; SCMP, Software Configuration Management Plan; SQAP, Software Quality Assurance Plan) that define the software life cycle, transition criteria, and feedback mechanisms, as well as the software life cycle environment (methods and tools) used to develop, verify, build, and load the kernel. These plans and standards must themselves be placed under change control and review (DO-178C 4).

- Defining and maintaining an assurance strategy for kernel-wide concurrency, shared memory, interrupts, scheduling, and dynamic allocation, while also controlling the development environment (compiler/linker/loader versions and options). DO-178C notes that introducing new compiler/linker/loader versions or changing options may invalidate prior tests and coverage and requires planned re-verification means (DO-178C 4.4.2(c)).

4.6.2. Development Process Impact (Requirements, Design, Code)

- The kernel contains vast quantities of implementation-driven code, developed incrementally without DAL-style requirements decomposition.

- Core subsystems (scheduler, memory management (MM), VFS, network stack, timers, softirq) lack formalized HLR/LLR specifications, making DO-178C-style bi-directional traceability difficult to establish at the kernel scale.

- DO-178C code-level objectives typically require abnormal and exception paths to be explicitly defined and verified. Linux does not systematically enforce complete exception-path handling across kernel decision logic; achieving DAL A/B conformance would require substantial refactoring and added defensive code.
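
The bi-directional traceability obligation can be sketched as a gap check over trace tables. This is a minimal illustration under stated assumptions: the requirement identifiers, function names, and table layout are hypothetical and do not describe any real kernel artifact or tool.

```python
def trace_gaps(hlr_to_llr, llr_to_code, code_units):
    """Report gaps in a requirements-to-code trace, in both directions."""
    known_llr = {l for llrs in hlr_to_llr.values() for l in llrs}
    # Only code reached through an LLR that itself traces to an HLR counts.
    traced_code = {c for l in known_llr for c in llr_to_code.get(l, [])}
    return {
        "hlr_without_llr": sorted(h for h, l in hlr_to_llr.items() if not l),
        "llr_without_hlr": sorted(l for l in llr_to_code if l not in known_llr),
        "llr_without_code": sorted(l for l in known_llr if not llr_to_code.get(l)),
        "code_without_requirement": sorted(set(code_units) - traced_code),
    }

gaps = trace_gaps(
    hlr_to_llr={"HLR-1": ["LLR-1", "LLR-2"], "HLR-2": []},
    llr_to_code={"LLR-1": ["sched_tick"], "LLR-2": [], "LLR-9": ["dbg_dump"]},
    code_units=["sched_tick", "dbg_dump", "legacy_ioctl"],
)
```

Every non-empty entry in `gaps` is an open item. Code developed without requirements decomposition would initially fall almost entirely into `code_without_requirement`.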

4.6.3. Verification Process Impact (Reviews, Test, MC/DC, Robustness)

- Verification of the integrated Executable Object Code against intended requirements, including normal- and abnormal-condition behavior (DO-178C 6.1(d) and 6.1(e)).

- Requirements-based testing, including robustness (abnormal-range) test cases and procedures, executed in appropriate environments (DO-178C 6.4.1, 6.4.2, and 6.4.3, especially 6.4.2.2).

- Detailed review of integration outputs (compiling/linking/loading data and memory map) to confirm that the delivered executable configuration is consistent with the approved baseline (DO-178C 6.3.5).

- Test-coverage analyses to confirm requirements-based coverage and structural coverage, up to source-level modified condition/decision coverage (MC/DC) where applicable (DO-178C 6.4.4.1 and 6.4.4.2).

- Resolving any uncovered, dead/deactivated, or requirements-unjustified code and maintaining bidirectional verification traceability (requirements ↔ tests/procedures/results), to demonstrate the absence of unintended functionality (DO-178C 6.4.4.3, 6.5, and 6.1(d)).

- Reviews/analyses (DO-178C 6.3.4): establishing verifiability and correctness across the full kernel—especially under interrupts and asynchronous execution—requires bounding and reviewing a very large set of interacting subsystems and configurations.

- Requirements-based testing and robustness (DO-178C 6.4.1–6.4.3): many kernel behaviors (e.g., reclaim, workqueues, softirq, and RCU) are triggered by workload-dependent conditions and asynchronous events, making abnormal-range testing and deterministic bounding of timing behavior (including WCET) difficult to close across operating conditions.

- Integration outputs review (DO-178C 6.3.5): the delivered executable configuration depends on large build-time configuration spaces (modules, Kconfig, architecture variants) and many intermediate artifacts, complicating consistent baselining and repeatable regeneration of integration outputs.

- Coverage analyses and closure (DO-178C 6.4.4.1–6.4.4.2): achieving MC/DC at kernel scale implies analyzing and justifying tens of thousands of decision points. Many of these decisions occur in code with high cyclomatic complexity and in paths that are configuration- and platform-dependent, which further complicates coverage closure.

- Dead/deactivated/extraneous code and traceability (DO-178C 6.4.4.3, 6.5, 6.1(d)): to support many hardware targets, the kernel source tree contains large amounts of architecture- and platform-specific driver code that is often not built for a given target configuration. Together with rarely executed fallback/error paths and debug logic, this makes it harder to demonstrate that all delivered functionality is requirement-justified, to close coverage on the enabled configuration, and to keep bidirectional traceability complete and stable across configuration variants.
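
To make the per-decision MC/DC effort concrete, the sketch below enumerates unique-cause independence pairs for a single three-condition decision. The decision is a hypothetical guard, not taken from kernel code, and the brute-force enumeration is for illustration only.

```python
from itertools import product

def independence_pairs(decision, n):
    """For each condition index, list pairs of input vectors that differ
    only in that condition and flip the decision outcome."""
    pairs = {i: [] for i in range(n)}
    for vec in product([False, True], repeat=n):
        for i in range(n):
            if vec[i]:
                continue  # visit each pair once, from its False side
            flipped = vec[:i] + (True,) + vec[i + 1:]
            if decision(*vec) != decision(*flipped):
                pairs[i].append((vec, flipped))
    return pairs

def decision(a, b, c):   # hypothetical guard
    return a and (b or c)

pairs = independence_pairs(decision, 3)
```

Here conditions `b` and `c` each have exactly one usable pair, and four test vectors (TFF, TTF, TFT, FTF) suffice to show independence of all three conditions. Each decision in the certified baseline needs this kind of argument, backed by requirements-based tests that actually exercise the chosen vectors.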

4.6.4. Configuration Management (SCMP, Baseline Control, Change Records)

4.6.5. Quality Assurance (SQAP, Process Audits, Independence Requirements)

- Upstream kernel development is performed by a large, distributed community, so an applicant cannot realistically audit the full set of life cycle processes for plan/standard compliance or systematically manage approved deviations at the scale envisioned by SQA audits (DO-178C 8.2(d)), nor can it treat upstream change sources as controlled “suppliers” in the DO-178C sense without establishing a separate, project-owned governance and audit regime (DO-178C 8.2(i)).

- The kernel’s enormous TCB makes effective independent verification and SQA oversight impractical: auditing process execution, tracking deviations and problem reports to closure, and performing a meaningful software conformity review that demonstrates completeness and regenerability of the delivered executable configuration (DO-178C 8.3) becomes prohibitively large in scope when the OS itself is part of the certified baseline.

4.7. Complex Toolchain Imposes Prohibitive DO-330 Qualification Burden

4.8. Continuous Patch Stream Destabilizes Certified Baselines

- hardware Memory Management Unit (MMU) isolation with statically defined, non-overlapping physical memory regions;

- a minimal, rigorously verified separation kernel responsible only for scheduling partitions and mediating controlled inter-process communication (IPC);

- complete disallowance of shared kernel-writable global state between partitions;

- static temporal partitioning with fixed, predefined time windows to guarantee CPU execution isolation between partitions;

- hierarchical health monitoring (HM) mechanisms supporting fault detection, containment, and recovery at process, partition, and system levels;

- fault-containment boundaries that ensure a failure inside one partition cannot corrupt the separation kernel or any other partition;

- partition placement of complex services (e.g., device drivers, networking stacks, and I/O services) outside the certified kernel, so that faults in these components do not directly mutate kernel-internal global state and can be contained by partition boundaries;

- a tightly controlled and auditable certification baseline (configuration identification, change records, and reproducible release configurations) to support long-term evidence maintenance under DO-178C DAL A/B;

- a bounded and stable build-and-load tool environment, with controlled compiling/linking/loading data, to reduce certification-relevant toolchain surface and simplify repeatability and review.
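
The static temporal partitioning item above can be sketched as a table lookup. This is a minimal illustration assuming a single fixed major frame; the frame length, window offsets, and partition names are hypothetical.

```python
from bisect import bisect_right

MAJOR_FRAME_MS = 100
# (start offset in ms, partition name); hypothetical schedule table
WINDOWS = [(0, "flight_control"), (40, "io_server"), (70, "maintenance")]
STARTS = [start for start, _ in WINDOWS]

def active_partition(t_ms):
    """Return the partition that owns the CPU at absolute time t_ms."""
    offset = t_ms % MAJOR_FRAME_MS
    return WINDOWS[bisect_right(STARTS, offset) - 1][1]
```

Because the table is fixed before run time, `active_partition(1040)` gives the same answer as `active_partition(40)` whatever the other partitions are doing, so interference can be bounded by inspecting the table.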

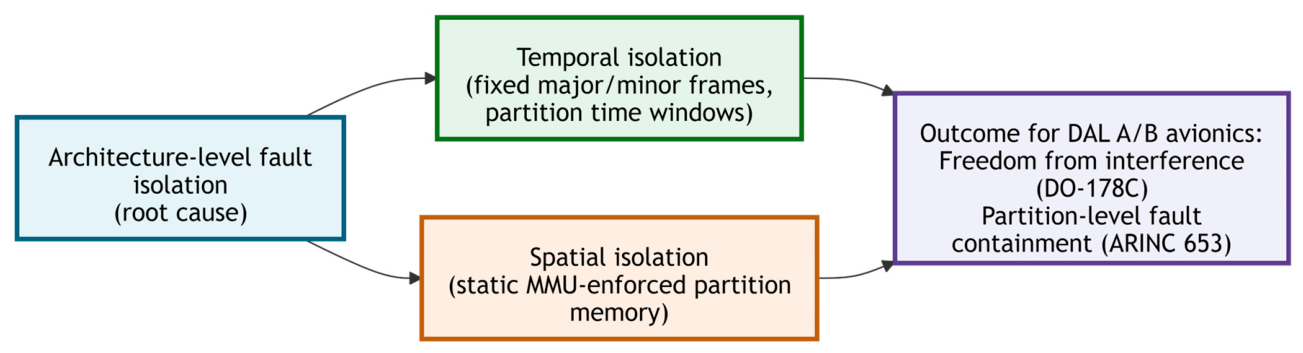

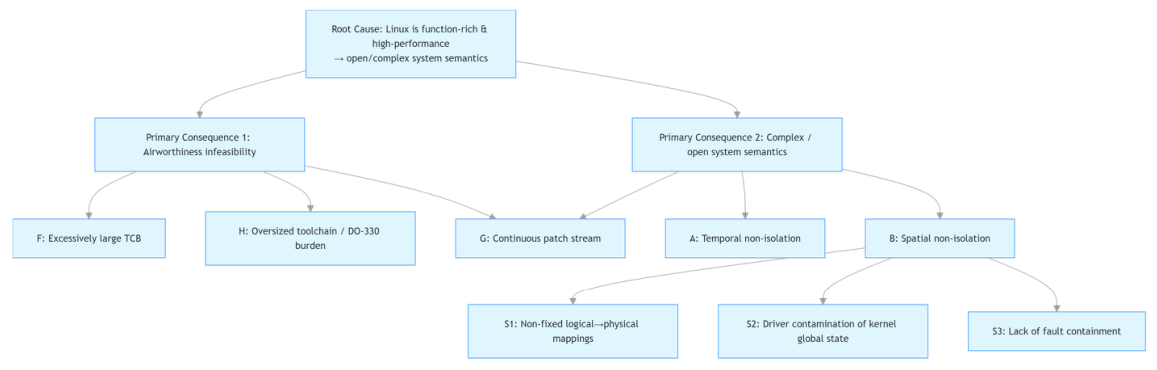

5. Unified Causal Structure of Linux’s Certification Challenges

5.1. Airworthiness Infeasibility

5.1.1. An Excessively Large Trusted Computing Base (TCB) (Section 4.6)

5.1.2. A Highly Complex Toolchain That Drives DO-330 Qualification Burdens (Section 4.7)

5.1.3. Continuous Patch-Stream Evolution (Section 4.8)

5.2. Complex and Open System Semantics (Section 4.1)

5.2.1. Temporal Non-Isolation – Arising from Asynchronous Kernel Activity, Dynamic Scheduling Behavior, and Non-Preemptible Regions (Section 4.2)

5.2.2. Spatial Non-Isolation – Further Decomposing into Three Architectural Mechanisms

- Dynamic physical-memory ownership and page-table state, i.e., non-immutable logical-to-physical mappings (Section 4.3);

- Driver-induced contamination of globally shared kernel state (Section 4.5);

- Lack of enforced fault-containment boundaries (Section 4.4).

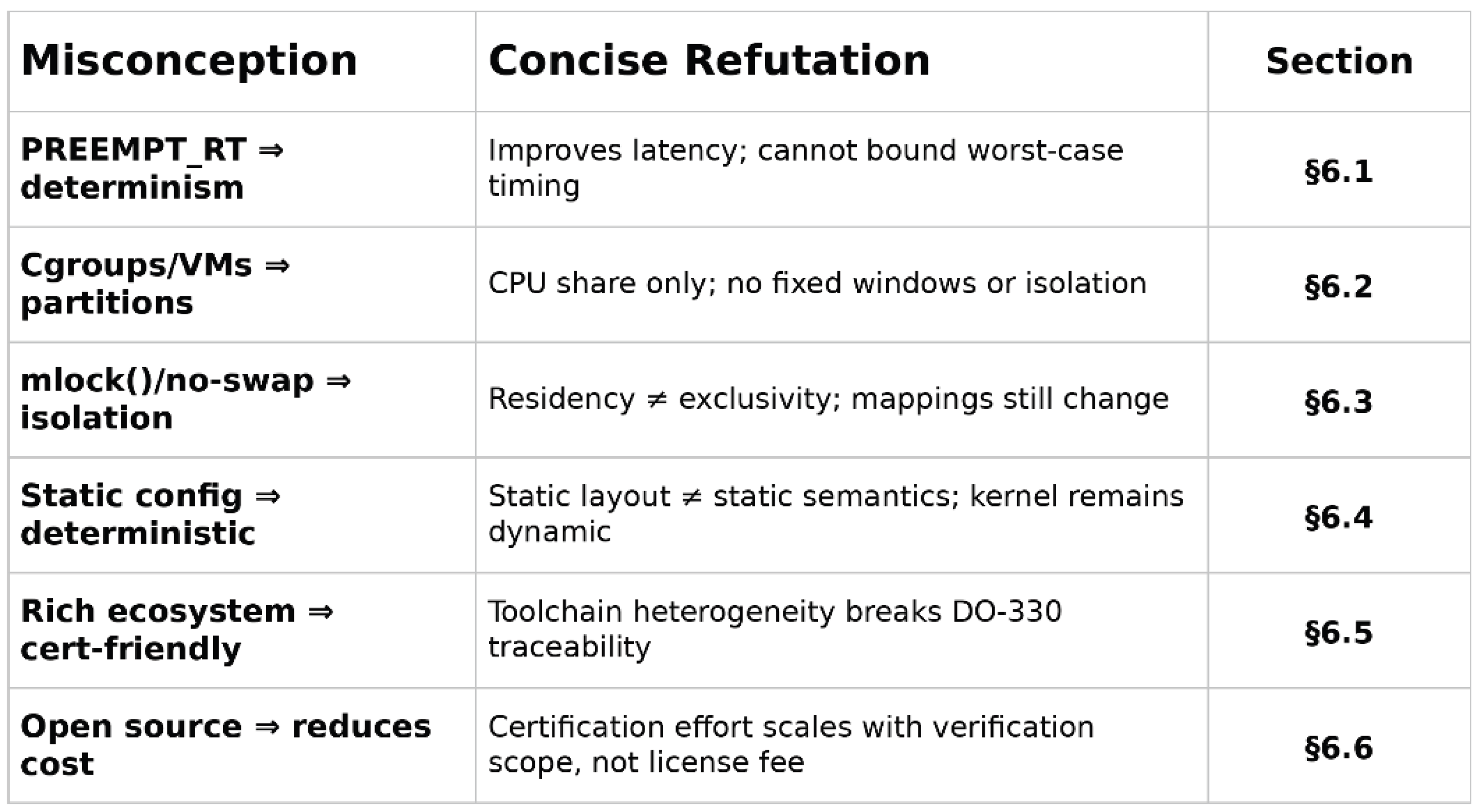

6. Common Misunderstandings and Why They Fail

6.1. PREEMPT_RT = Determinism

6.1.1. Misconception

6.1.2. Reality

- softirq cascades triggered on interrupt request (IRQ) exit;

- memory management events (page faults, reclaim, compaction);

- cross-CPU Translation Lookaside Buffer (TLB) shootdowns that can outrank RT tasks;

- driver execution time that depends on firmware behavior, direct memory access (DMA) completion, or lock contention;

- dynamic voltage and frequency scaling (DVFS) and CPU C-state exits that introduce microarchitectural stalls outside scheduler control;

- dynamic scheduler behavior (wakeup rules, load balancing, task migration, housekeeping threads) that produces runtime-dependent jitter.

6.1.3. Avionics Impact

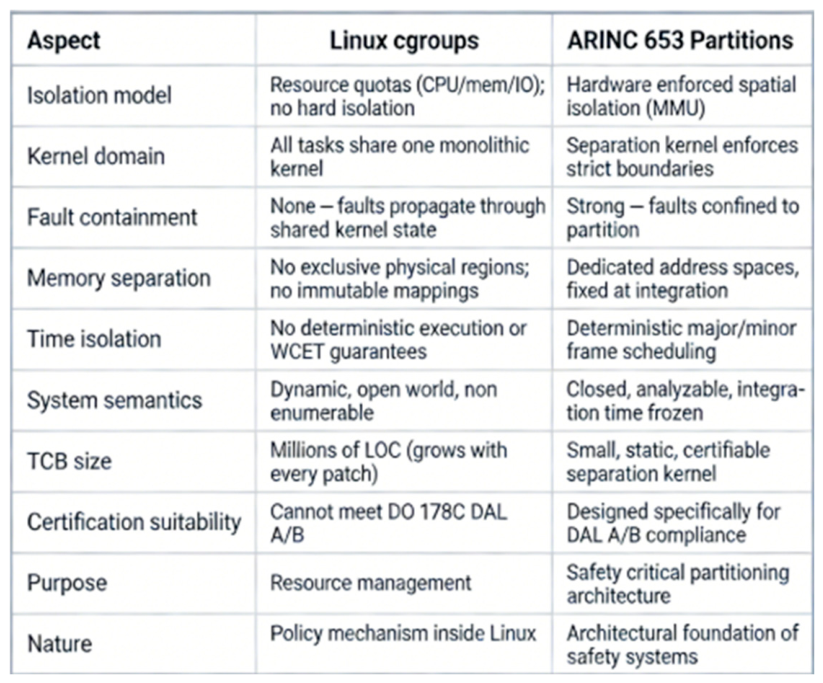

6.2. Containers/Cgroups = Partitioning

6.2.1. Misconception

6.2.2. Reality

6.2.3. Avionics Impact

- Temporal isolation: cgroup CPU control provides proportional shares rather than fixed execution windows, so it does not resolve the analyzability and interference-bounding concerns discussed in Section 4.2.

- Spatial isolation: cgroups do not provide hardware-enforced, immutable physical-memory ownership; Linux’s dynamic memory-management behavior (Section 4.3) therefore still applies.

- Fault isolation: containers/cgroups still share the same privileged kernel and driver domain, so fault-propagation concerns via shared kernel state remain (Section 4.4 and Section 4.5).
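
The temporal-isolation point can be illustrated with a simplified model of weight-proportional CPU sharing, in the spirit of the cgroup v2 `cpu.weight` control. The model is an assumption for illustration: it ignores bandwidth limits and scheduler internals, and the group names and weights are hypothetical.

```python
def proportional_share(weights, active):
    """CPU fraction per active group under weight-proportional sharing."""
    total = sum(weights[g] for g in active)
    return {g: weights[g] / total for g in active}

WEIGHTS = {"critical": 300, "payload": 100, "logging": 100}  # hypothetical

quiet = proportional_share(WEIGHTS, ["critical", "payload"])
busy = proportional_share(WEIGHTS, ["critical", "payload", "logging"])
```

In this model the `critical` group receives 75% of the CPU when `logging` is idle and 60% once it becomes runnable. The share, and the times at which it is delivered, depend on the behavior of other groups, whereas a fixed execution window does not.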

6.3. Using mlock() and Disabling Swap to Fix Partition Memory

6.3.1. Misconception

6.3.2. Reality

6.3.3. Avionics Impact

6.4. Static Configuration Makes Linux Deterministic

6.4.1. Misconception

6.4.2. Reality

6.4.3. Avionics Impact

6.5. Abundant Linux Ecosystem

6.5.1. Misconception

6.5.2. Reality

6.5.3. Avionics Impact

6.6. Open Source = Reduces Cost

6.6.1. Misconception

6.6.2. Reality

6.6.3. Avionics Impact

- Evidence volume across the full chain of requirements, design, code, verification, and coverage.

- Baseline volatility: frequent changes (e.g., patches, toolchain upgrades, configuration drift) expand impact analysis, regression testing, and evidence refresh scope.

- Independence and quality assurance activities (e.g., SQA, independent verification/validation as applicable), including reviews, compliance audits, and objective evidence management.

- Authority/assessor engagement overhead borne by the applicant: preparation and conduct of reviews/audits (e.g., Stages of Involvement, SOI-1 to SOI-4), responses and findings closure, plus supporting logistics such as secure facilities, tooling access, and on-site support.

- Supplier and version control across the lifecycle, including long-term reproducibility, auditability, and traceability of all build inputs, tools, and delivered configurations.
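
The baseline-volatility and build-input traceability items can be sketched with a content-addressed manifest. This is a minimal illustration, not a qualified configuration-management tool; the input names and contents are hypothetical stand-ins for real files.

```python
import hashlib
import json

def baseline_fingerprint(inputs):
    """Hash a mapping of build-input names to their content bytes."""
    manifest = {name: hashlib.sha256(data).hexdigest()
                for name, data in sorted(inputs.items())}
    blob = json.dumps(manifest, sort_keys=True).encode()
    return hashlib.sha256(blob).hexdigest(), manifest

INPUTS = {  # hypothetical build inputs
    "kernel.config": b"CONFIG_PREEMPT_RT=y\n",
    "toolchain.version": b"gcc 12.3.0\n",
}
fp_a, _ = baseline_fingerprint(INPUTS)
fp_b, _ = baseline_fingerprint({**INPUTS, "toolchain.version": b"gcc 13.2.0\n"})
```

Any change to a configuration option or tool version yields a different fingerprint, which flags that the delivered configuration no longer matches the approved baseline and that impact analysis is required.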

7. Recommended Avionics OS/Platform Classes

7.1. ARINC 653 Partitioning Kernels

- RTEMS + AIR

- POK

- JetOS

- Table-driven major/minor-frame scheduling

- Hardware-enforced spatial isolation

- Minimal separation-kernel TCB

- Deterministic inter-partition IPC

7.2. High-Assurance Separation Kernels with Userspace ARINC Services

- Muen SK

- Minimal trusted computing base (on the order of 10–20 KLOC)

- Strict capability-based authority

- Provable fault isolation

- Support for deterministic mixed-criticality scheduling

7.3. Commercial Certifiable RTOS Platforms

- VxWorks 653

- INTEGRITY-178B

- LynxOS-178

- PikeOS

- DeOS

- Controlled and frozen baselines

- Vendor-qualified toolchains

- Well-established certification artifacts

- Deterministic partitioning services

8. Conclusions

References

- ARINC Industry Activities, ARINC Specification 653P1-3: Avionics Application Software Standard Interface, Part 1, Annapolis, MD, USA: ARINC, 2015.

- ARINC Industry Activities, ARINC Specification 653P3-2: Avionics Application Software Standard Interface, Part 3, Annapolis, MD, USA: ARINC, 2014.

- RTCA Inc., DO-178C: Software Considerations in Airborne Systems and Equipment Certification, Washington, DC, USA: RTCA, 2011.

- EUROCAE, ED-12C: Software Considerations in Airborne Systems and Equipment Certification, Paris, France: EUROCAE, 2011.

- RTCA Inc., DO-297: Integrated Modular Avionics (IMA) Design Guidance and Certification Considerations, Washington, DC, USA: RTCA, 2005.

- RTCA Inc., DO-330: Software Tool Qualification Considerations, Washington, DC, USA: RTCA, 2011.

- SAE International, ARP4754A: Guidelines for Development of Civil Aircraft and Systems, Warrendale, PA, USA: SAE, 2010.

- P. Wang, Q. Li, and H. Xiong, “Time and space partitioning technology for integrated modular avionics systems,” J. Beijing Univ. Aeronaut. Astronaut., vol. 38, no. 6, pp. 721–726, 2012 (in Chinese).

- F. He, H. Xiong, and X. Zhou, “Overview of key technologies for ARINC 653 partitioned operating systems,” Acta Aeronaut. Astronaut. Sin., vol. 35, no. 7, pp. 1777–1796, 2014 (in Chinese).

- Y. Li, T. Zhou, and J. Li, “Research and implementation of airborne ARINC 653 partition operating system,” Comput. Eng. Appl., vol. 51, no. 20, pp. 235–240, 2015 (in Chinese).

- L. Chen, “Research on deterministic scheduling of avionics partition operating systems,” Ph.D. dissertation, Coll. Aeronaut. Eng., Nanjing Univ. Aeronaut. Astronaut., Nanjing, China, 2018 (in Chinese).

- R. Huang, “Research on ARINC 653 partition isolation mechanism for IMA,” Ph.D. dissertation, Sch. Electr. Eng., Northwestern Polytech. Univ., Xi’an, China, 2020 (in Chinese).

- Lopez, P. Parra, M. Urueña, et al., “XtratuM: a hypervisor for partitioned embedded real-time systems,” in Proc. 18th Int. Conf. Real-Time Netw. Syst. (RTNS), Paris, France: ACM, 2010, pp. 1–6.

- Crespo, P. Metge, and I. Lopez, LithOS: A Guest OS for ARINC 653 on XtratuM Hypervisor, Valencia, Spain: Univ. Politèc. Valencia, 2012.

- J. Delange, L. Pautet, and S. Faucou, “POK: an ARINC 653 compliant operating system for high-integrity systems,” in Reliable Software Technologies – Ada-Europe 2010, Berlin, Germany: Springer, 2010, pp. 172–185.

- B. Huber, A. Lackorzynski, A. Warg, et al., “seL4: formal verification of a high-assurance microkernel,” Commun. ACM, vol. 57, no. 3, pp. 107–115, 2014.

- Kuz, K. Elphinstone, G. Heiser, et al., “MCS: temporal isolation in the seL4 microkernel,” in Proc. 11th Oper. Syst. Platforms Embedded Real-Time Appl. (OSPERT), New York, NY, USA: IEEE, 2015, pp. 1–6.

- H. Härtig, A. Lackorzynski, and A. Warg, The Muen Separation Kernel: Design and Formal Verification, Dresden, Germany: Tech. Univ. Dresden, 2018.

- J. Rushby, Design and Verification of Secure Systems, Menlo Park, CA, USA: SRI Int., 1981.

- J. Rushby, “A kernelized architecture for safety-critical systems,” in Proc. IFIP Congr., Vienna, Austria, 1999, pp. 1–6.

- Wind River Systems Inc., VxWorks 653 Platform Datasheet. [Online]. Available: https://www.windriver.com. 2022.

- Green Hills Software Inc., INTEGRITY-178B RTOS for Avionics. [Online]. Available: https://www.ghs.com. 2021.

- SYSGO AG, PikeOS Safety-Certifiable RTOS and Hypervisor. [Online]. Available: https://www.sysgo.com. 2024.

- DDC-I Inc., DeOS Safety-Critical RTOS. [Online]. Available: https://www.ddci.com. 2024.

- D. Bovet and M. Cesati, Understanding the Linux Kernel, Sebastopol, CA, USA: O’Reilly Media, 2005.

- R. Love, Linux Kernel Development, Upper Saddle River, NJ, USA: Addison-Wesley, 2010.

- M. Gorman, Understanding the Linux Virtual Memory Manager, Upper Saddle River, NJ, USA: Prentice Hall, 2004.

- The Linux Kernel Organization, Linux Scheduler Documentation. [Online]. Available: https://docs.kernel.org/scheduler/. 2024.

- The Linux Kernel Organization, Linux Memory Management Documentation. [Online]. Available: https://docs.kernel.org/mm/. 2024.

- T. Gleixner, PREEMPT_RT Patch Overview and Design Philosophy. San Francisco, CA, USA: Linux Foundation, 2019. [Online]. Available: https://wiki.linuxfoundation.org/realtime/start.

- The Linux Kernel Organization, kbuild: The Linux Kernel Build System. [Online]. Available: https://docs.kernel.org/kbuild/. 2024.

- The Yocto Project, Yocto Project Mega-Manual. [Online]. Available: https://www.yoctoproject.org. 2024.

- Device Tree Working Group, Device Tree Specification. [Online]. Available: https://www.devicetree.org. 2024.

- H. Zhao, S. Gao, and Y. Yang, “Applicability analysis of airborne software based on Linux real-time extension,” Comput. Eng., vol. 43, no. S1, pp. 311–315, 2017.

- a653rs Contributors, a653rs-linux: ARINC 653 Emulation on Linux. [Online]. Available: https://github.com/a653rs. 2024.

- ELISA Project, FAQs – ELISA: Enabling Linux in Safety Applications. [Online]. Available: https://elisa.tech/about/faqs/.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the author. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.