1. Introduction

Wildfires have emerged as one of the most destructive and fastest-growing natural hazards in the U.S., with far-reaching impacts on communities, ecosystems, public health, and the economy. In 2023, the U.S. recorded 56,580 wildfires that burned 2.7 million acres, destroyed at least 4,318 structures, and caused insured losses exceeding $1.6 billion; these figures rise substantially when uninsured assets and indirect and long-term losses are taken into account (Jones Ben, n.d.; National Interagency Coordination Center Wildland Fire Summary and Statistics Annual Report 2023, n.d.). As an important part of wildfire management strategy, active-fire response and decision making require wildfire monitoring solutions that are fast, accurate, cost-effective, and spatially detailed. In operational contexts, detecting fire presence alone is insufficient; what is required is the delivery of georeferenced maps that precisely locate active fires and quantify their intensity, ideally in near-real-time. Because fires can spread rapidly, real-time monitoring is critical for situational awareness, decision making, and emergency response.

Traditional wildfire monitoring systems, such as ground-based cameras, airborne sensors, and human reports, provide localized insights but suffer from information latency, high deployment costs, coverage gaps, and logistical challenges, especially when monitoring large or inaccessible areas. In contrast, satellite remote sensing offers a scalable and consistent means of observing vast landscapes. Among available satellite sensors, those on polar-orbiting platforms, such as the Visible Infrared Imaging Radiometer Suite (VIIRS) and the Moderate Resolution Imaging Spectroradiometer (MODIS), provide global coverage at spatial resolutions relevant to wildfire management (375 m and 1 km, respectively); however, their temporal frequency is limited to a few overpasses per day, which is often insufficient for tracking the rapid progression of wildfires. Geostationary satellites offer a compelling alternative. While they observe only a portion of the Earth’s surface, they provide continuous coverage over large regions at higher temporal resolution. The Geostationary Operational Environmental Satellite-R (GOES-R) series (GOES-16, 17, and 18), for example, continuously monitors the Americas and can capture the evolution of wildfires at 5-minute intervals. Despite its coarser spatial resolution (~2 km for the infrared channels), the temporal granularity of GOES imagery makes it a valuable resource for near-real-time wildfire monitoring.

Traditional approaches to wildfire detection using GOES imagery are primarily threshold-based. One of the most widely used methods is the Wildfire Automated Biomass Burning Algorithm (WF-ABBA) (Koltunov et al., 2012), which has played a foundational role in operational fire detection. WF-ABBA is a dynamic contextual thresholding algorithm that utilizes the 3.9 μm mid-infrared and 10.7 μm thermal infrared bands, along with the visible band when available. It also incorporates the 12 μm split-window band to help distinguish hot targets from opaque cloud cover. Despite its success, WF-ABBA suffers from limitations, including a high false-alarm rate during daytime, coarse spatial resolution, and an inability to resolve small or low-intensity fires. To improve reliability, alternative thresholding-based frameworks such as the GOES Early Fire Detection (GOES-EFD) system (Koltunov et al., n.d.) have been introduced, yet these methods remain constrained by predefined rules and sensitivity to atmospheric conditions.

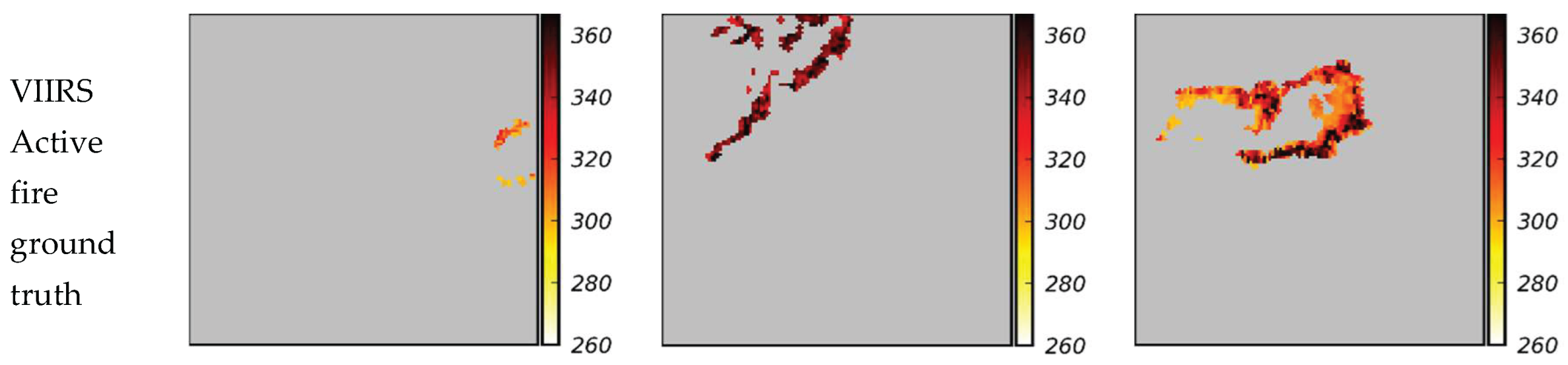

To overcome the limitations of thresholding-based detection methods, recent studies have explored deep learning (DL) models for automatic fire segmentation using satellite imagery. Toan (2019) presented one of the earliest DL studies to leverage GOES imagery for wildfire detection. This work is notable for being the first to aim toward a real-time streaming platform for wildfire monitoring based on pixel-wise classification. The authors utilized all 16 spectral bands from GOES-R, treating each multiband image as a 3D input volume. The proposed model is a simple deep convolutional neural network (CNN) composed of 3D convolutional layers followed by fully connected layers, trained to classify each pixel as either fire or non-fire. Building upon these advances, Zhao and Ban (2022) employed a temporal modeling strategy that applies Gated Recurrent Units (GRUs) to GOES-R time-series imagery so that the network captures dynamic fire behavior over time. The input to the model was preprocessed using dedicated spectral indices, including a normalized difference between the Middle Infrared (MIR) and Thermal Infrared (TIR) bands to enhance fire signals, and a custom smoke/cloud mask based on the 12 μm band (Band 15). Using VIIRS active fire points as supervisory labels, the network was trained to segment burning areas. The results showed improved early detection capability and spatial localization compared to the GOES-R active fire product, while also reducing false positive detections in mid-latitude regions. These developments demonstrate a growing shift toward data-driven and temporally aware (i.e., considering past snapshots) fire detection systems using GOES data.

However, despite these advances, past studies (e.g., Koltunov et al., 2012; Zhao and Ban, 2022) have either combined subsets of GOES bands using predefined equations and thresholds, which can lead to loss of information, or utilized all 16 channels as input, which is computationally expensive and may not fully exploit their distinct spectral properties. Additionally, past studies (e.g., Malik et al., 2021; Toan, 2019) are restricted to limited spatial domains, which hinders generalizability and operational scalability. Moreover, many models (e.g., Badhan et al., 2024; Saleh et al., 2024; Toan, 2019) rely on polar-orbiting VIIRS/MODIS data for supervision without accounting for the spatial and temporal disparities between GOES and VIIRS observations. These limitations motivate the need for an improved framework that can operate in near-real-time, leverage optimal spectral bands, and generalize across diverse fire-prone regions without requiring temporal sequences.

In a recent work (Badhan et al., 2024), a DL framework was developed to enhance the spatial resolution of GOES-17 wildfire imagery. The model was trained using contemporaneous GOES-VIIRS observation pairs. Its objective was to produce VIIRS-like resolution at GOES’s high temporal frequency, enabling more accurate estimation of fire location and brightness temperature (BT). Although the potential of this approach was demonstrated, it tends to underestimate BT values compared to the VIIRS ground truth. To address this critical challenge, here we propose a revised and more general framework for near real-time wildfire monitoring across the continental U.S. A key novelty of this work is a two-step DL pipeline, which is designed to address the underestimation of the BT values at fire locations. In this approach, segmentation is first employed to isolate fire-affected regions, followed by regression to estimate pixel-level BT. The outputs of these two steps are then combined to enable more accurate delineation of fire boundaries and mapping of BT values. The two-step pipeline allows two separate models to specialize in their respective objectives of spatial localization and BT estimation, leading to more accurate results than the single-step approach in the prior work (Badhan et al., 2024), as will be presented later in the paper.

Furthermore, several other technical advancements are introduced over the previous work (Badhan et al., 2024) and existing literature to improve the performance of the wildfire monitoring framework. These include leveraging a broader set of GOES spectral channels to correct for environmental effects and refining the data preprocessing procedure through parallax correction and normalization. We also systematically examine the effects of modeling choices, such as background value selection and loss function design, through a comprehensive ablation study summarized in the appendix. Collectively, these advancements address the key limitations of the earlier model and enable higher-fidelity BT estimation, more accurate fire boundary delineation, and a scalable framework for continuous wildfire monitoring at GOES’s 5-minute observation interval.

The remainder of the paper is organized as follows. Section 2 describes the satellite data sources, including VIIRS and GOES, and outlines the preprocessing steps for dataset construction and alignment. Section 3 details the proposed two-step segmentation-regression approach, including the DL architectures, loss functions, evaluation metrics, and ablation study setup. Section 4 presents the training and testing results, including both quantitative and visual evaluations, and discusses the impact of different background values and loss functions through ablation studies. The performance of the new framework is compared with the previous work to demonstrate the improvement in both fire boundary and BT estimation. Finally, Section 5 concludes the paper with a summary of key findings and suggestions for future work.

2. Data Sources and Preprocessing

In this study, a wildfire dataset is developed to support the training of supervised DL models for active fire monitoring. The dataset is constructed from open-source satellite imagery and products, including low-spatial-resolution GOES imagery used as the model input and the VIIRS Active Fire Product serving as the ground truth. This work advances prior efforts (Badhan et al., 2024) by improving data quality, diversifying the data through expanded spatial and temporal coverage of fire events, incorporating additional spectral features, and implementing corrections to reduce spatial and temporal misalignments. The data sources, area of study, and dataset construction and corrections are presented in this section.

2.1. Products and Data Sources

Two primary satellite products are used to construct the dataset: the VIIRS I-band Active Fire Product (Schroeder et al., 2025) and the GOES ABI Level 1B Radiance product (ABI-L1B-Rad) (Hager and Lemieux, n.d.). The VIIRS I-band Active Fire Product provides near-real-time active fire detections. VIIRS is onboard the Suomi NPP and NOAA-20 satellites, both operating in low-Earth polar orbits. Each satellite observes most locations twice per day at intervals of approximately 12 hours (once during daytime and once at night). The VIIRS I-band Active Fire Product detects fire locations using an algorithm described in the Algorithm Theoretical Basis Documents (ATBD) (Schroeder et al., 2014; Ti, 2016; Visible Infrared Imaging Radiometer Suite (VIIRS) 375 m Active Fire Detection and Characterization Algorithm Theoretical Basis Document 1.0, 2016), which integrates multiple spectral bands to identify thermal anomalies. The VIIRS fire detection algorithm identifies active fires through a sequence of fixed-threshold and contextual tests. The algorithm uses BT values from channels I4 (3.55–3.93 μm) and I5 (10.5–12.4 μm), reflectance from I1 (0.60–0.68 μm), I2 (0.846–0.885 μm), and I3 (1.58–1.64 μm), along with geometric parameters such as solar and view zenith angles and relative azimuth.

The algorithm begins by masking optically thick clouds and most water bodies using BT values and reflectance thresholds from I1, I2, and I3. To reduce false positives, additional exclusion tests are applied to identify and flag pixels affected by sun glint or bright surfaces (e.g., urban areas or sand) using geometric parameters and reflectance-based criteria. Fire candidate pixels are initially flagged based on fixed thermal thresholds in the I4 and I5 infrared channels, which capture the strong thermal anomalies typical of active fires. For each candidate pixel, a dynamic background is estimated from surrounding valid pixels, which are not obscured by clouds, are not already flagged as fire, are not marked as low quality, and share the same surface classification (land or water) as the candidate. This background is calculated as the median I4 value within a 501×501-pixel window. Contextual fire detection tests then refine the classification by comparing the candidate’s thermal signal to the background using a dynamically expanding window (11×11 to 31×31 pixels), computing both absolute (e.g., I4 BT value) and relative (e.g., I4–I5) BT differences. If insufficient valid pixels are available, the pixel is labeled “unclassified”. Candidates that pass the contextual tests are assigned a confidence level (low, nominal, or high) based on the strength of their anomaly, viewing geometry, and potential contamination. Secondary tests further identify residual low-confidence fires and screen out remaining false positives, particularly in sun glint zones and regions affected by the South Atlantic Magnetic Anomaly. The resulting detections are provided in CSV format and include fire coordinates, confidence levels, BT values from the I4 and I5 channels, and Fire Radiative Power (FRP). This product serves as the ground truth in this study.

GOES ABI-L1B-Rad, the second satellite product, provides radiance measurements from the Advanced Baseline Imager (ABI). The radiance values are converted to BT values in this study. The ABI sensor captures data across 16 spectral bands, spanning from the visible spectrum (0.47 μm) to the infrared (13.3 μm), some of which are suitable for thermal anomaly detection. The data are provided in NetCDF format and sourced from the GOES-East and GOES-West satellites. Together, the GOES satellites offer broad coverage across the western hemisphere and enable continuous multispectral observation at a temporal resolution of 5 minutes. While these observations are more frequent than those from VIIRS, they have lower spatial resolution due to the higher orbital altitude of GOES. GOES-16 operates as GOES-East; GOES-18, which replaced GOES-17 in January 2023, operates as GOES-West (“Earth from Orbit: NOAA’s GOES-18 is now GOES West,” n.d.). To ensure uninterrupted spatial and temporal coverage, data from GOES-16, GOES-17, and GOES-18 are included by selecting the appropriate satellite based on each wildfire’s location and timestamp.

We consider three spectral bands from the GOES product as input to the DL model to improve the accuracy of active fire prediction. Specifically, Bands 7 (3.80–4.00 μm), 14 (10.8–11.6 μm), and 15 (11.8–12.8 μm) are chosen to leverage complementary thermal characteristics across the middle and thermal infrared ranges. Band 7 (Middle Infrared, MIR) is sensitive to the high temperatures associated with active fires and is commonly used in operational fire detection products (Schmidt, n.d.). Bands 14 and 15 (Thermal Infrared, TIR) provide BT values that help remove cloud coverage (Barducci et al., 2004). Zhao and Ban (2022) introduced a multi-spectral fire detection approach that combines GOES Bands 7, 14, and 15 using a set of equations to identify active fires through the normalized difference between Bands 7 and 14, followed by cloud removal using Band 15. Their approach reduces the multi-band input to a single-channel representation, which is then used as input to a DL model for fire segmentation. While this single-channel input captures the general location of fire activity, the outputs often do not align precisely with ground truth fire perimeters. In the DL framework proposed in this study, GOES Bands 7, 14, and 15 are provided as three individual input channels, allowing more intricate and adaptive relationships to be learned for detecting fire locations rather than relying on a predefined combination of bands. By leveraging multispectral context, the model is anticipated to achieve higher accuracy in detecting and mapping fires than a single-channel method.
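The difference between the two input strategies can be illustrated with a minimal sketch. The normalized difference below is the generic form (B7 − B14) / (B7 + B14), which is an assumption and not necessarily the exact equation used by Zhao and Ban (2022); the function names and the toy BT values are hypothetical.

```python
def normalized_difference(b7, b14):
    """Single-channel index: generic normalized difference of two BT grids.

    Assumption: the generic (b7 - b14) / (b7 + b14) form, shown only to
    contrast a hand-crafted index with a multi-channel input.
    """
    return [[(m - t) / (m + t) for m, t in zip(row7, row14)]
            for row7, row14 in zip(b7, b14)]


def stack_bands(b7, b14, b15):
    """Three-channel input: keep each band as its own channel, shape (3, H, W)."""
    shapes = {(len(band), len(band[0])) for band in (b7, b14, b15)}
    if len(shapes) != 1:
        raise ValueError("all bands must share the same grid")
    return [b7, b14, b15]


# Toy 2x2 brightness-temperature grids in kelvin (illustrative values only).
band7 = [[300.0, 400.0], [290.0, 295.0]]
band14 = [[290.0, 300.0], [288.0, 294.0]]
band15 = [[289.0, 298.0], [287.0, 293.0]]

index = normalized_difference(band7, band14)       # one channel, information reduced
model_input = stack_bands(band7, band14, band15)   # three channels, nothing discarded
```

In the first case the model sees only the precomputed index; in the second it receives all three BT grids and can learn its own combination.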

2.2. Dataset Construction and Preprocessing

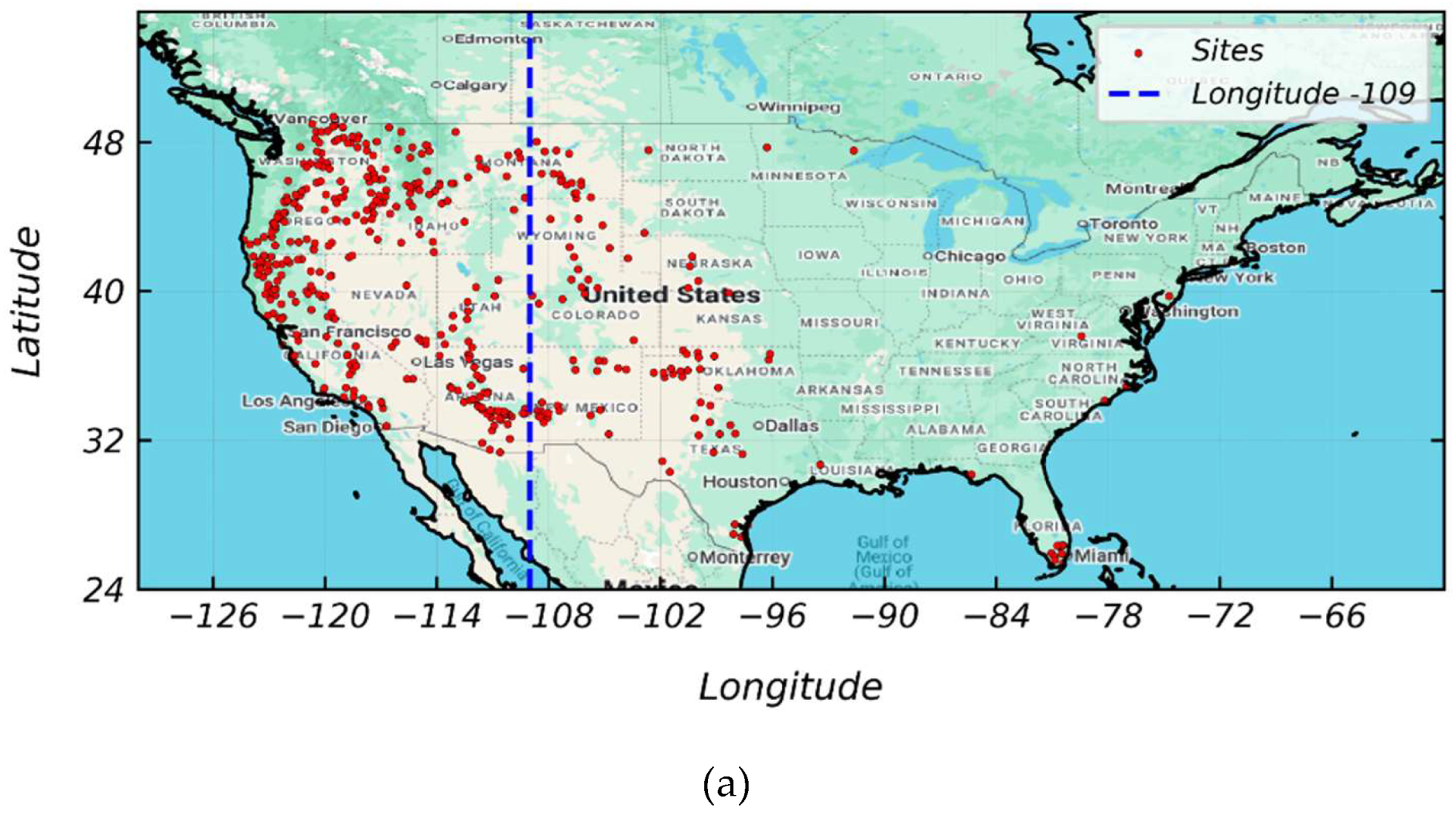

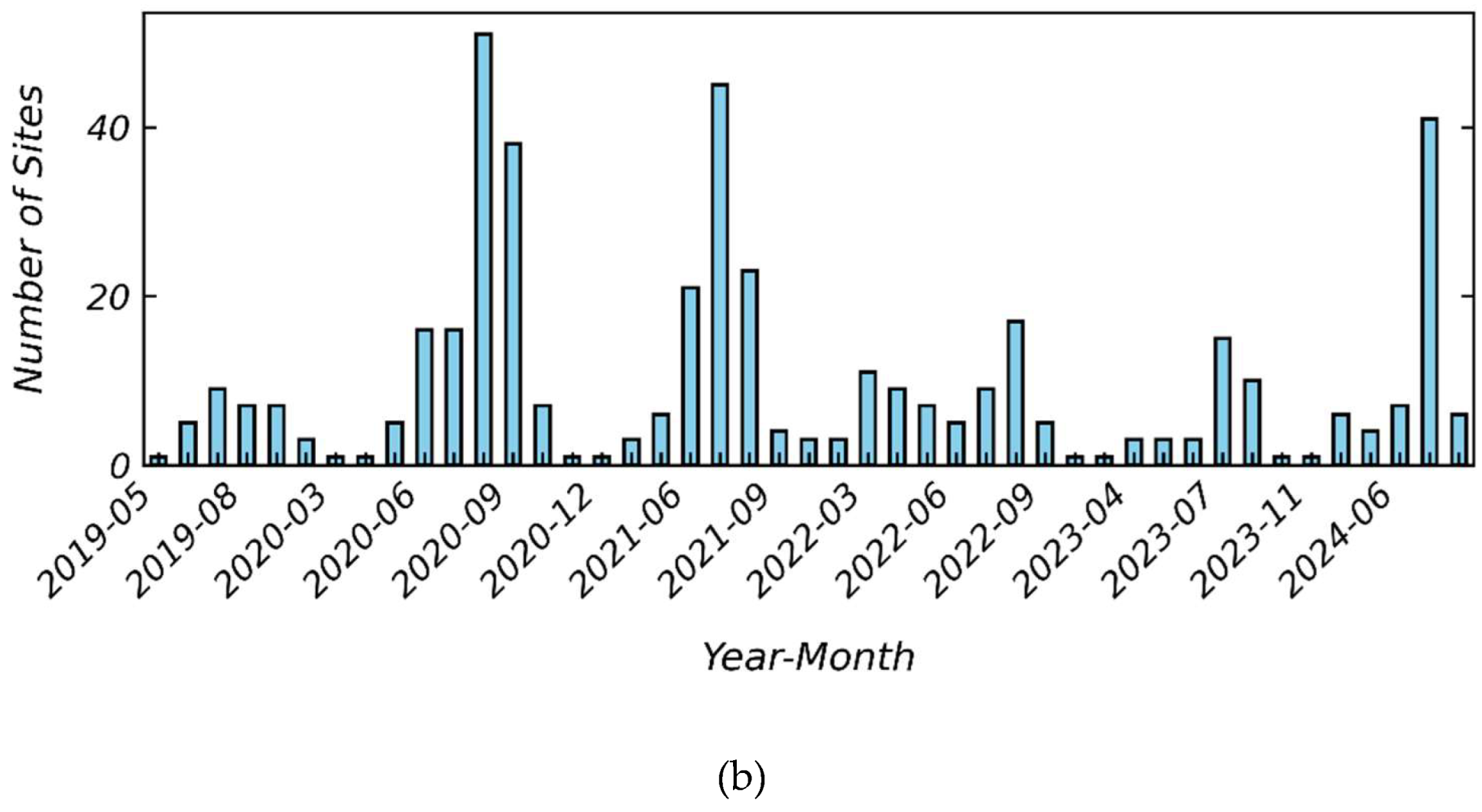

To support high-quality supervised learning and improve generalization across diverse landscapes and fire conditions, this study selects wildfire events spanning the continental U.S. (CONUS). The selected events cover a wide range of geographic regions and various times of year, supporting generalized model development. The wildfire events are selected from the Wildland Fire Incident Locations dataset provided by the National Interagency Fire Center (National Interagency Fire Center (NIFC), n.d.) database, a public platform that tracks major fire incidents. To ensure the fires are clearly visible in satellite images, only fires that burned more than 10,000 acres are included. This threshold provides a balance between the number of events and the spatial prominence of fire signals. Using this criterion, 208 wildfire events from 2019 to 2024 are selected.

Figure 1a depicts the spatial distribution of these fire events, and Figure 1b summarizes their temporal distribution by month. Together, these figures highlight the broad geographic and temporal coverage of the dataset. In Figure 1a, a dashed blue line at longitude –109° marks the boundary (as defined in this study) between the GOES-East and GOES-West coverage zones (Schmit et al., 2017).

To construct the dataset, a region of interest (ROI) is defined for each wildfire event. For simplicity and consistency, the ROI is fixed at 1.2 degrees in both latitude and longitude and centered on the wildfire site’s central coordinates. Within each ROI, data from GOES and VIIRS are extracted and paired to form a snapshot, which is defined as a contemporaneous GOES-VIIRS image pair captured at a specific timestamp. To ensure spatial alignment, GOES and VIIRS are reprojected to a common coordinate reference system identified by an appropriate EPSG code (OGP, 2012), a standardized identifier that specifies a particular coordinate reference system (e.g., the UTM zone based on the event’s longitude). GOES is resampled to match the 375-meter spatial resolution of VIIRS. VIIRS active fire (hotspot) points are rasterized into a 2D BT map, and nearest-neighbor interpolation (Badhan et al., 2024) is applied to fill gaps between detected fire pixels. Since the VIIRS product includes only detected active fire locations, a constant background BT value is assigned to all remaining pixels within the ROI. This value is selected to preserve the bimodal distribution between background and fire pixels and to maintain physical relevance. Additional details on the selection and justification of this background value are provided in Section 3.

VIIRS I4 pixels at the core of intense fires can saturate: because of the extreme thermal intensity of active fires and the sensor’s limited dynamic range, they either reach the nominal maximum of 367 K or fold to artificially low values (~208 K). This saturation condition is corrected using the procedure described in Badhan et al. (2024) to ensure physically consistent VIIRS values, after which the effective VIIRS range becomes 283–367 K. In comparison, GOES exhibits broader ranges of 209–413 K (Band 7), 202–342 K (Band 14), and 198–342 K (Band 15). These differences reflect sensor-specific characteristics, including orbital configuration, spectral response, and spatial resolution, as well as the fact that GOES covers both fire and background. It should be noted that during alignment with GOES, some VIIRS hotspots are not detected at the exact pairing timestamp but instead appear within short intervals of 2 to 10 minutes. To correct this temporal inconsistency, all VIIRS hotspots within a 10-minute window are aggregated and assigned the latest timestamp before being resampled into BT maps.
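The temporal aggregation and the conversion of hotspot points into a BT map can be sketched as follows. This is a simplified illustration under stated assumptions, not the processing code used in this study: the grid is defined in degrees rather than in a projected 375 m grid, the gap-filling interpolation is omitted, the hottest detection is kept when several points fall in one cell, and all function names are hypothetical. The default background of 240 K is the value used for training in Section 3.

```python
import math
from datetime import datetime, timedelta


def aggregate_hotspots(detections, window_minutes=10):
    """Group (timestamp, lat, lon, bt) detections that fall within
    `window_minutes` of a group's first detection, and stamp each group
    with its latest timestamp."""
    groups = []
    for det in sorted(detections, key=lambda d: d[0]):
        if groups and det[0] - groups[-1][0][0] <= timedelta(minutes=window_minutes):
            groups[-1].append(det)
        else:
            groups.append([det])
    return [(max(d[0] for d in g), [(d[1], d[2], d[3]) for d in g]) for g in groups]


def rasterize_hotspots(points, lat_max, lon_min, cell_deg, n_rows, n_cols,
                       background=240.0):
    """Place (lat, lon, bt) points on a regular grid; every other cell
    receives the constant background BT value (kelvin)."""
    grid = [[background] * n_cols for _ in range(n_rows)]
    for lat, lon, bt in points:
        row = int(math.floor((lat_max - lat) / cell_deg))
        col = int(math.floor((lon - lon_min) / cell_deg))
        if 0 <= row < n_rows and 0 <= col < n_cols:
            # Assumption: keep the hottest detection per cell.
            grid[row][col] = max(grid[row][col], bt)
    return grid


# Illustrative detections: three within one overpass, one much later.
detections = [
    (datetime(2021, 7, 20, 10, 0), 39.95, -120.95, 330.0),
    (datetime(2021, 7, 20, 10, 4), 39.75, -120.85, 350.0),
    (datetime(2021, 7, 20, 10, 9), 39.75, -120.85, 340.0),
    (datetime(2021, 7, 20, 22, 0), 39.95, -120.95, 320.0),
]
snapshots = aggregate_hotspots(detections)
stamp, points = snapshots[0]
bt_map = rasterize_hotspots(points, lat_max=40.0, lon_min=-121.0,
                            cell_deg=0.1, n_rows=3, n_cols=3)
```

Each entry of `snapshots` corresponds to one candidate VIIRS frame that would then be paired with the GOES image closest to its timestamp.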

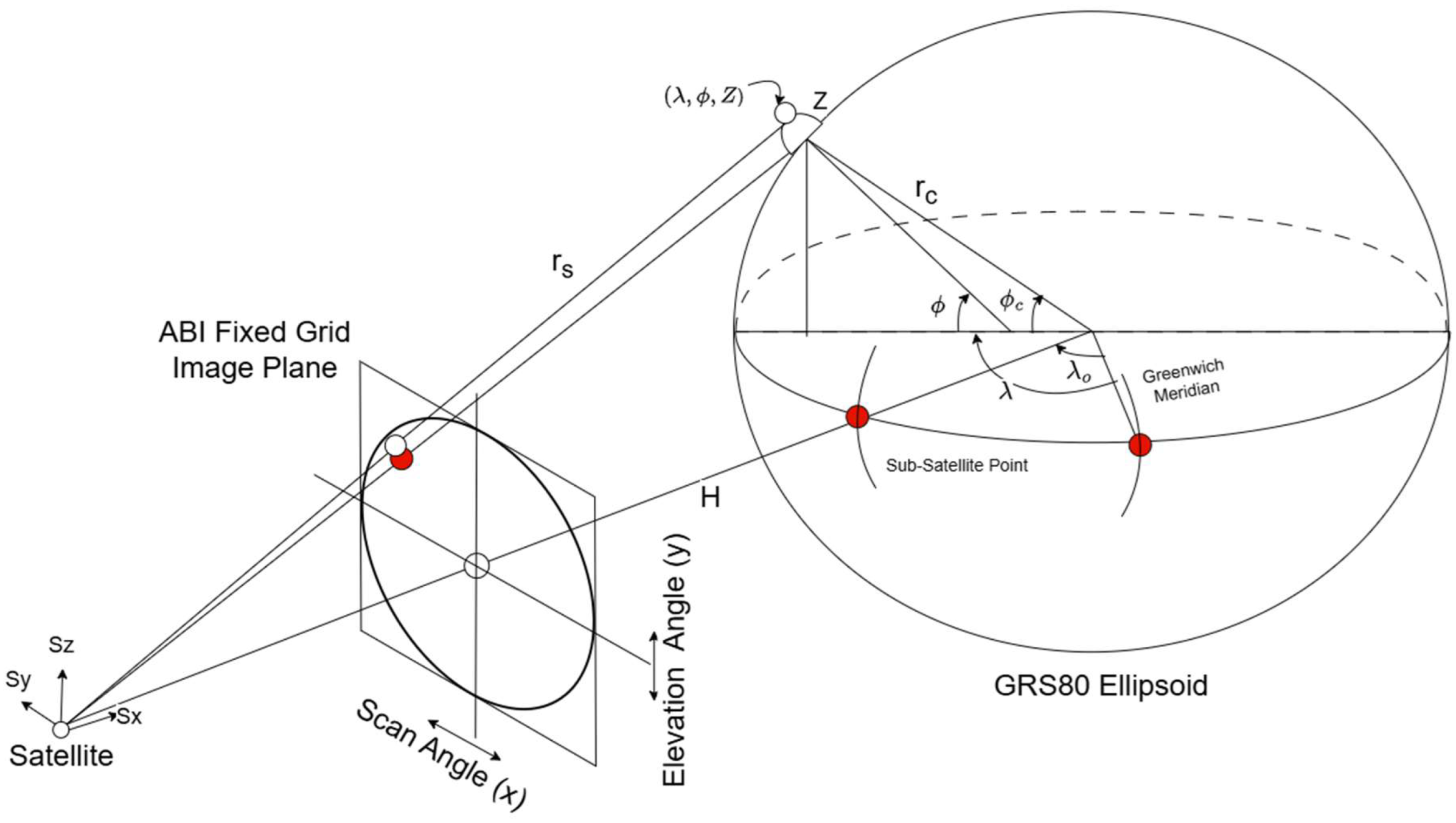

Lastly, the parallax effect (Pestana and Lundquist, 2022) in GOES ABI imagery is corrected to improve geolocation accuracy and enable reliable alignment with the higher-resolution VIIRS reference. The parallax effect arises from the satellite’s off-nadir viewing geometry, which causes elevated terrain features, such as mountains, to appear spatially displaced from their true locations. The displacement grows with both elevation and viewing angle. In mountainous regions, this can lead to geolocation errors exceeding 5 km. The consequence of this parallax is a misalignment between GOES imagery and the VIIRS ground truth. Standard ABI products project each pixel from the ABI Fixed Grid (defined by scan and elevation angles) onto Earth coordinates. This is done using a fixed satellite position and a smooth Earth ellipsoid model (GRS80) (Moritz, 2000). The GRS80 model approximates the Earth as an oblate spheroid, with a slightly larger radius at the equator than at the poles. However, the projection ignores actual land elevation and assumes all terrain lies on the reference ellipsoid. This simplification causes the parallax effect.

To correct the parallax effect, the geometric orthorectification method presented by Pestana and Lundquist (2022) is applied. To account for terrain offsets from the GRS80 ellipsoid, the GOES-R projection process is modified using a digital elevation model (DEM) to create orthorectified ABI images.

Figure 2 illustrates the orthorectification method, which computes the intersection between line-of-sight (LOS) vectors, extending from the satellite sensor through the ABI Fixed Grid image plane, and the Earth’s surface, modeled using DEM-based elevations. The satellite is positioned at a constant distance $H$ from the Earth’s center, directly above the sub-satellite point, which is the point on the Earth’s surface located directly beneath the satellite, at longitude $\lambda_0$. For each DEM grid cell, defined by geodetic coordinates (latitude $\phi$, longitude $\lambda$, and surface elevation $z$), the geocentric latitude ($\phi_c$) is defined as the angle between the equatorial plane and the line connecting the Earth’s center to the point on the surface. It differs from the geodetic latitude, which is measured relative to the ellipsoid normal. The geocentric latitude can be computed as

$$\phi_c = \arctan\left(\frac{r_{pol}^2}{r_{eq}^2}\tan\phi\right) \qquad (1)$$

where $r_{eq}$ is the equatorial radius of the Earth (semi-major axis), approximately equal to 6.378 × 10³ km, and $r_{pol}$ is the polar radius of the Earth (semi-minor axis), approximately equal to 6.356 × 10³ km. Next, the Earth-centered distance ($r_c$) to the surface point is computed as

$$r_c = \frac{r_{pol}}{\sqrt{1 - e^2\cos^2\phi_c}} + z \qquad (2)$$

where $e$ is the eccentricity of the reference ellipsoid, which quantifies how much the Earth’s shape deviates from a perfect sphere (e.g., 0.0818 for GRS80), and $z$ is the surface elevation above the ellipsoid in km.

The surface point is then expressed in the satellite-centered Cartesian coordinate system, where $S_x$, $S_y$, and $S_z$ are the components of the vector from the satellite location (the origin) to the surface point, calculated as

$$S_x = H - r_c\cos\phi_c\cos(\lambda - \lambda_0), \quad S_y = -r_c\cos\phi_c\sin(\lambda - \lambda_0), \quad S_z = r_c\sin\phi_c \qquad (3)$$

where $H$ is approximately equal to 42.164 × 10³ km for GOES, and $\lambda_0$ is –75° for GOES-East and –137° for GOES-West. Finally, the coordinates are projected onto the ABI Fixed Grid as scan angle ($x$) and elevation angle ($y$), using:

$$x = \arcsin\left(\frac{-S_y}{\sqrt{S_x^2 + S_y^2 + S_z^2}}\right), \quad y = \arctan\left(\frac{S_z}{S_x}\right) \qquad (4)$$

This transformation creates a direct link between terrain-aware surface locations and the satellite’s viewing geometry, enabling correction of the apparent location of each pixel in GOES imagery. It is applied to each fire event in our dataset to obtain accurate surface locations by accounting for both elevation and satellite viewing angle. To prevent losing edge pixels that may shift during correction, a 0.2-degree buffer is added around the ROI before applying the transformation. After correction, the buffer is removed to restore the original ROI and maintain alignment with VIIRS data. Figure A-1 is provided in Appendix A to illustrate the effects of parallax correction.
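The terrain-aware transformation described above can be sketched in a few lines. The steps (geocentric latitude, Earth-centered distance including elevation, satellite-centered coordinates, and scan and elevation angles) follow the standard GOES-R fixed-grid navigation used in the orthorectification method of Pestana and Lundquist (2022); the function name and the exact constant values are assumptions made for illustration.

```python
import math

# Assumed constants (GRS80 ellipsoid and GOES geostationary geometry), in km.
R_EQ_KM = 6378.137        # equatorial radius (semi-major axis)
R_POL_KM = 6356.752       # polar radius (semi-minor axis)
ECC = 0.0818191910435     # eccentricity of the reference ellipsoid
H_KM = 42164.16           # satellite distance from the Earth's center


def lonlat_to_abi_angles(lon_deg, lat_deg, elev_km, lon0_deg):
    """Map a terrain point (geodetic lon/lat, elevation above the ellipsoid)
    to ABI Fixed Grid scan (x) and elevation (y) angles in radians."""
    lon, lat, lon0 = (math.radians(v) for v in (lon_deg, lat_deg, lon0_deg))

    # Geocentric latitude.
    lat_c = math.atan((R_POL_KM ** 2 / R_EQ_KM ** 2) * math.tan(lat))

    # Earth-centered distance to the surface point, offset by terrain elevation.
    r_c = R_POL_KM / math.sqrt(1.0 - ECC ** 2 * math.cos(lat_c) ** 2) + elev_km

    # Satellite-centered Cartesian components.
    s_x = H_KM - r_c * math.cos(lat_c) * math.cos(lon - lon0)
    s_y = -r_c * math.cos(lat_c) * math.sin(lon - lon0)
    s_z = r_c * math.sin(lat_c)

    # Scan and elevation angles on the ABI Fixed Grid.
    x = math.asin(-s_y / math.sqrt(s_x ** 2 + s_y ** 2 + s_z ** 2))
    y = math.atan(s_z / s_x)
    return x, y


# Same horizontal location seen from GOES-West, on the ellipsoid and at 3 km.
x0, y0 = lonlat_to_abi_angles(-120.0, 40.0, 0.0, -137.0)
x3, y3 = lonlat_to_abi_angles(-120.0, 40.0, 3.0, -137.0)
print(f"scan-angle shift: {x3 - x0:.3e} rad, elevation-angle shift: {y3 - y0:.3e} rad")
```

The difference between the two angle pairs is the parallax displacement in fixed-grid coordinates; sampling the ABI image at the terrain-aware angles rather than the ellipsoid angles is what relocates each pixel to its true surface position.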

3. Proposed Deep Learning for Wildfire Remote-sensing Enhancement Approach (DL-WREN)

To enhance the resolution of GOES imagery for active fire identification and BT estimation, a two-step approach including segmentation (Jadon, 2020) and regression (Wang et al., 2021) is developed using VIIRS data as ground truth. This two-step approach addresses a key challenge identified in the single-step approach in the prior work (Badhan et al., 2024): when background suppression and BT estimation are performed simultaneously, the resulting BT values at fire locations are often underestimated. In the proposed approach, the GOES input is processed independently through segmentation and regression models. The segmentation process detects active fire pixels and filters out background noise, producing a binary fire mask. The regression process predicts BT values across the image, independently of the segmentation output. The final BT prediction is obtained by applying a pixel-wise multiplication between the fire mask and the regression output, ensuring that BT values are retained only at predicted fire locations. This decoupling allows each model to specialize in its respective objective, i.e., spatial localization in segmentation and BT estimation in regression, leading to more accurate results than the single-step approach in the prior work (Badhan et al., 2024). The proposed approach differs from single-stage methods by enabling modular optimization and flexibility in model design. It allows the integration of task-specific architectures, normalization schemes, and loss functions tailored to each sub-task.
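The final combination step can be illustrated with a minimal sketch. It assumes that the segmentation model outputs a per-pixel fire probability that is binarized at a threshold of 0.5; the threshold value, the function names, and the toy values are assumptions made for illustration only.

```python
def threshold_mask(prob_map, threshold=0.5):
    """Binarize per-pixel fire probabilities into a 0/1 fire mask.

    Assumption: a fixed 0.5 threshold; the value is illustrative."""
    return [[1 if p >= threshold else 0 for p in row] for row in prob_map]


def combine(fire_mask, bt_map):
    """Pixel-wise product: keep the regressed BT only where fire is predicted."""
    return [[m * bt for m, bt in zip(mask_row, bt_row)]
            for mask_row, bt_row in zip(fire_mask, bt_map)]


# Toy outputs of the two independent models for a 2x2 ROI.
fire_probability = [[0.1, 0.9], [0.6, 0.2]]      # segmentation output
regressed_bt = [[300.0, 350.0], [340.0, 310.0]]  # regression output, kelvin

final_bt = combine(threshold_mask(fire_probability), regressed_bt)
```

Because the two models never see each other's outputs during training, either one can be retrained or replaced without touching the other; only this final product couples them.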

Segmentation is used to classify pixels within the ROI as either active fire or background by learning spatial and spectral patterns from GOES imagery. A DL-based segmentation approach is used instead of simple thresholding because high BT values alone are not uniquely indicative of fire. Elevated BT values can occur in valley regions where lower elevations exhibit warmer surface temperatures than surrounding higher terrain, and in some cases these background temperatures can even exceed the BT values of small or cooler fires. This behavior is observed in our dataset and makes threshold-based methods unreliable. The DL-based segmentation approach can leverage contextual cues to better distinguish fire pixels from confounding background signals. It should be noted that the segmentation in this study differs from typical segmentation setups, where ground truth is directly derived from the input data. Here, VIIRS observations, collected from a different sensor, serve as approximate references rather than perfectly aligned labels. This cross-sensor discrepancy introduces uncertainty into the training process and should be considered when evaluating model performance.

To estimate accurate BT values in active fire regions, a separate regression step is introduced to address the imbalance caused by the dominance of background pixels, which comprise approximately 98% of the ROI on average. In the prior work (Badhan et al., 2024), the model was found to consistently underestimate BT values in fire regions due to the disproportionate influence of background pixels in both inputs and ground truth. This imbalance shifts the optimization toward minimizing errors in non-fire areas, resulting in suppressed BT values even where active fire is present. To mitigate the effects of this background-driven bias and to stabilize the mapping between GOES and VIIRS BT fields, two supporting strategies are introduced: z-score data normalization and background-value adjustment in the VIIRS ground truth. These strategies do not resolve the imbalance itself, but they help prevent the regression model from being pulled toward low background values and instead encourage the predicted BT in fire regions to approach their true VIIRS fire-region values.

Per-image z-score normalization is applied to both GOES inputs and VIIRS ground truth to enhance generalization across daytime and nighttime conditions and to ensure physically meaningful BT predictions. Each image is transformed by subtracting its mean BT and dividing by its standard deviation, thereby standardizing the data to a mean of zero and a standard deviation of one, as described in Eq. (5).

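$$\tilde{T}_{i,j} = \frac{T_{i,j} - \mu}{\sigma} \qquad (5)$$

where $T_{i,j}$ is the original BT value at pixel location $(i, j)$, $\mu$ is the mean of the BT values across the image, and $\sigma$ is the standard deviation of the BT values across the image.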

This normalization process preserves internal contrast between fire and background while removing absolute differences in BT associated with time of day or scene-wide variability. For example, a nighttime image with generally lower observed BT values and a daytime image with higher BT values will both be rescaled so that their internal contrasts, such as between fire and background, remain comparable. In the previous work (Badhan et al., 2024), global min–max normalization was used, which limited the model’s ability to generalize across varying conditions. In contrast, per-image z-score normalization allows the model to focus on relative BT patterns (e.g., identifying fire regions), rather than being biased by global shifts in the ambient BT value. This normalization approach is motivated by two key observations: (1) both GOES Band-7 and VIIRS fire regions exhibit a clear day–night bimodality in mean BT values, with systematically higher temperatures during the day and lower temperatures at night, and (2) contemporaneous GOES and VIIRS observations show a linear correlation in their mean BT values, as illustrated in Figure A-2 in Appendix A using data from the Dixie Fire between July 14 and August 15. These patterns guided the use of per-image z-score normalization. Because the per-image mean and standard deviation needed to de-normalize the predictions are not available at inference time, the regression model is trained to estimate these per-image statistics directly from the GOES input, as described in Section 3.1.
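The effect of per-image z-score normalization, and the role of the per-image statistics in de-normalization, can be illustrated with a short sketch. The population standard deviation is used here as an assumption, since the estimator is not specified above; the function names and toy values are hypothetical.

```python
import statistics


def zscore(image):
    """Per-image z-score: subtract the image mean, divide by the image
    standard deviation. Returns the normalized image and both statistics.

    Assumption: population standard deviation (statistics.pstdev)."""
    values = [v for row in image for v in row]
    mu = statistics.fmean(values)
    sigma = statistics.pstdev(values)
    if sigma == 0.0:
        raise ValueError("constant image: standard deviation is zero")
    normalized = [[(v - mu) / sigma for v in row] for row in image]
    return normalized, mu, sigma


def denormalize(normalized, mu, sigma):
    """Map normalized predictions back to kelvin using per-image statistics."""
    return [[v * sigma + mu for v in row] for row in normalized]


# A nighttime scene and the same scene shifted 20 K warmer (daytime-like).
night = [[270.0, 272.0], [271.0, 330.0]]
day = [[v + 20.0 for v in row] for row in night]

night_norm, night_mu, night_sigma = zscore(night)
day_norm, day_mu, day_sigma = zscore(day)
# night_norm and day_norm agree up to floating-point error: the scene-wide
# offset is removed while the fire/background contrast is kept.
restored = denormalize(day_norm, day_mu, day_sigma)
```

At inference time the statistics passed to `denormalize` would be the values predicted by the regression model rather than values computed from ground truth.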

To prevent suppression of BT values in the predicted active fire regions, a value of 240 K is assigned to the background pixels in the VIIRS ground truth for training. In the previous work (Badhan et al., 2024), background pixels were set to 0 K, which introduced a mismatch with the GOES inputs, where background BT values are typically much higher. This discrepancy, combined with the numerical dominance of background pixels, caused the model to favor lower overall predictions, especially in fire regions. The choice of 240 K as a background value helps to reduce this bias by providing a more realistic scenario, encouraging the model to produce higher BT outputs where appropriate. However, if the background value is set too high (e.g., close to fire BTs), the per-image z-score normalization compresses the contrast between fire and background, leading the model to learn unimodal predictions without clearly separating fire regions. Although the ground truth retains bimodality, the model’s predictions do not, resulting in poor fire-background discrimination during inference. Therefore, the value of 240 K represents a practical compromise: it is high enough to avoid suppressing predicted fire-region BT values, yet low enough to preserve separation between fire and background in the model’s output. The rationale for this choice is further supported by an ablation study on varying background values, which is presented in Appendix C.

In summary, the proposed two-step approach addresses critical limitations of prior single-step methods by decoupling fire localization and BT estimation into separate segmentation and regression stages. This design enables targeted strategies, such as per-image z-score normalization and background value adjustment, to counter the dominance of background (non-fire) pixels and improve prediction accuracy. The segmentation model specializes in spatial localization of fire pixels, while the regression model focuses on predicting accurate BT values. Detailed descriptions of the model architecture, loss functions, and evaluation metrics are provided in the following subsections.

3.1. DL Architecture and Model Elements

The segmentation and regression models in this study are based on the U-Net architecture (Weng and Zhu, 2021), an encoder–decoder framework originally designed for biomedical image segmentation. The U-Net used in this study features a symmetric structure, in which the encoder compresses spatial information while capturing abstract features, and the decoder restores resolution while refining predictions. A key characteristic of U-Net is the use of skip connections that pass high-resolution feature maps from the encoder to the decoder, enabling better localization. The architecture is constructed using common building blocks. 2D convolutional layers (Conv2d) (Uchida et al., 2018) extract spatial features using learnable 3×3 filters while Rectified Linear Units (ReLU) (Agarap, 2019) introduce non-linearity by zeroing out negative activations. Batch Normalization (BatchNorm2d) (Ioffe and Szegedy, 2015) normalizes the output of convolutional layers across each mini-batch to stabilize and accelerate training. Max pooling (MaxPool2d) (Zhao and Zhang, 2024) reduces spatial dimensions by selecting the maximum value in each 2×2 window, enabling spatial down-sampling while preserving important features. In the decoder, transposed convolution (TransposeConv) (Sahito et al., 2023) is used to increase spatial resolution as it learns to up-sample feature maps by applying learned kernels that reverse the effects of down-sampling. Adaptive average pooling (AdaptiveAvgPool2d) (Yang et al., 2024) reduces a feature map to a fixed spatial size by averaging over spatial regions, enabling a consistent output regardless of the input dimensions. To streamline the design, a modular unit called DoubleConv block is used throughout the encoder and decoder. Each DoubleConv block consists of two sequential 3×3 Conv2d layers, each followed by ReLU and BatchNorm2d. These blocks extract and refine spatial features and form the backbone of each stage in the U-Net.

The segmentation model uses a deep U-Net variant tailored for pixel-wise binary classification. It maps 128×128×3 GOES input images to a 128×128 binary fire mask. The encoder consists of four stages, each built with a DoubleConv block followed by MaxPool2d, progressively halving the spatial resolution and doubling the feature depth until reaching a 1024×8×8 representation at the bottleneck. The decoder mirrors this structure: TransposeConv layers up-sample the features and skip connections concatenate encoder outputs to recover spatial detail. Each decoder stage includes a DoubleConv block to refine the combined features. A final 1×1 Conv2d projects the 64-channel decoder output to a single channel, and a sigmoid activation produces a binary mask indicating active fire regions. Batch normalization is used throughout the network to ensure stable feature learning. The complete architecture of the segmentation model is detailed in Table B-1, which outlines the layers, their configurations, and output dimensions at each stage of the network.
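As a quick consistency check on the dimensions quoted above, the following sketch traces the feature-map shape through a U-Net with this layout. It performs shape arithmetic only and is not a model implementation. The function name `unet_shapes` and its parameters are hypothetical. It assumes 3×3 convolutions with padding 1, 2×2 max pooling with stride 2, and 2×2 transposed convolutions with stride 2, as listed in Table B-1.

```python
def unet_shapes(in_shape=(3, 128, 128), base=64, depth=4):
    """Trace (channels, height, width) through a U-Net of the described layout."""
    c, h, w = in_shape
    trace = [("Input", (c, h, w))]
    ch = base
    for stage in range(1, depth + 1):
        # DoubleConv: 3x3 kernels with padding 1 keep height and width unchanged.
        trace.append((f"Encoder {stage}", (ch, h, w)))
        # MaxPool2d with a 2x2 window and stride 2 halves height and width.
        h, w = h // 2, w // 2
        trace.append((f"MaxPool {stage}", (ch, h, w)))
        ch *= 2
    trace.append(("Bottleneck", (ch, h, w)))
    for stage in range(1, depth + 1):
        # Transposed convolution (2x2, stride 2) doubles height and width,
        # and the following DoubleConv halves the channel count.
        ch //= 2
        h, w = h * 2, w * 2
        trace.append((f"Decoder {stage}", (ch, h, w)))
    trace.append(("Output", (1, h, w)))  # final 1x1 convolution to one channel
    return trace

for name, shape in unet_shapes():
    print(f"{name:<12} {shape}")
```

With the default arguments, the trace reaches 1024×8×8 at the bottleneck and returns to 64×128×128 before the final 1×1 convolution, matching the description in the text.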

The regression model adopts a shallower U-Net variant designed to estimate three outputs per image: a normalized BT map (1×128×128), the mean (μ), and the standard deviation (σ) of BT values. These per-image statistics allow for recovery of BT values by z-score de-normalization during inference. The encoder consists of two DoubleConv blocks, each followed by MaxPool2d, reducing the resolution while increasing feature richness. At the bottleneck, the feature depth is expanded to 256. The decoder performs up-sampling via TransposeConv and recovers spatial detail using skip connections. Unlike the segmentation model, BatchNorm2d is removed from the final decoder stage, since our experiments have shown that it enforces batch-wise statistics that often conflict with the regression output. A 1×1 Conv2d layer outputs the normalized BT map. Separately, an AdaptiveAvgPool2d compresses the decoder’s final feature map to 64×1×1, which is passed through two fully connected layers: one for μ and another for σ. The predicted σ is passed through an exponential function to ensure it remains positive. The complete architecture is described in Table B-2 of Appendix B, which lists each layer and its function in the regression pipeline.
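The de-normalization step can be sketched as follows. This is a minimal illustration, not the authors' implementation: the function name `denormalize_bt` and the example values are hypothetical. It assumes the network's raw output for the standard deviation is exponentiated before use, as described above.

```python
import math

def denormalize_bt(z_map, mu_pred, raw_sigma_pred):
    """Recover BT in K from a z-score map, a predicted mean, and a raw sigma output."""
    sigma = math.exp(raw_sigma_pred)  # the exponential keeps sigma strictly positive
    return [[z * sigma + mu_pred for z in row] for row in z_map]

# Hypothetical 2x2 normalized map with predicted mean 250 K and sigma 20 K.
z_map = [[-0.5, 0.0], [1.0, 2.0]]
bt = denormalize_bt(z_map, mu_pred=250.0, raw_sigma_pred=math.log(20.0))
print(bt)
```

Because the exponential is positive for any real input, the recovered BT map preserves the ordering of the normalized values regardless of the raw network output.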

3.2. Loss Functions

For the segmentation task, Binary Cross-Entropy (BCE) (Mao et al., 2023) loss, as defined in Eq. (6), is used to train the model to predict a binary fire mask. BCE is commonly used in binary segmentation problems and is derived from the Bernoulli distribution. It minimizes the difference between predicted probabilities and ground truth labels.

$$\mathcal{L}_{\mathrm{BCE}} = -\frac{1}{N}\sum_{i=1}^{N}\left[g_i \log(p_i) + (1-g_i)\log(1-p_i)\right] \tag{6}$$

where $g_i$ represents the binarized label of the $i$-th pixel in the VIIRS ground truth image (1: active fire and 0: no fire), $p_i$ represents the model-predicted probability of fire at that pixel, and $N$ represents the total number of pixels across the image. The loss function is calculated after flattening the two-dimensional image into a one-dimensional array.
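A minimal sketch of the BCE computation on a flattened image is given below. The function names and the small clamping constant `eps` are assumptions added for numerical safety, not details taken from the paper.

```python
import math

def flatten(image):
    """Flatten a 2D list of pixel values into a 1D list."""
    return [v for row in image for v in row]

def bce_loss(labels, probs, eps=1e-7):
    """Mean binary cross-entropy between 0/1 labels and predicted probabilities."""
    total = 0.0
    for g, p in zip(labels, probs):
        p = min(max(p, eps), 1.0 - eps)  # clamp to avoid log(0)
        total += g * math.log(p) + (1 - g) * math.log(1.0 - p)
    return -total / len(labels)

# Hypothetical 2x2 ground truth mask and predicted fire probabilities.
truth = flatten([[1, 0], [0, 0]])
pred = flatten([[0.9, 0.2], [0.1, 0.05]])
print(round(bce_loss(truth, pred), 4))
```

An uninformative prediction of 0.5 everywhere yields a loss of ln 2, and the loss decreases as the probabilities move toward the correct labels.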

For the regression task, a statistically supervised, region-weighted, root mean square error loss ($\mathcal{L}_{\mathrm{total}}$) is used to estimate pixel-wise BT values. Here, “statistically supervised” denotes additional supervision on the mean and standard deviation, helping align the overall BT distribution between prediction and ground truth. This loss not only penalizes raw prediction error but also enforces agreement in spatial statistics (mean and standard deviation) between predicted and ground truth BT values. In addition, the ranges of predicted BT values are expected to remain consistent with VIIRS observations, which saturate at 367 K. The objective is to enhance GOES imagery such that its outputs closely mimic the dynamic range and fidelity of VIIRS. This loss encourages accurate prediction in fire regions while maintaining consistency with BT statistics. The total loss contains three terms:

$$\mathcal{L}_{\mathrm{total}} = \mathcal{L}_{\mathrm{RW}} + \mathcal{L}_{\mu} + \mathcal{L}_{\sigma} \tag{7}$$

where $\mathcal{L}_{\mu}$ measures the root mean square error between the ground truth mean ($\mu_b$) and the predicted mean ($\hat{\mu}_b$), and $\mathcal{L}_{\sigma}$ measures the root mean square error between the ground truth standard deviation ($\sigma_b$) and the predicted standard deviation ($\hat{\sigma}_b$):

$$\mathcal{L}_{\mu} = \sqrt{\frac{1}{B}\sum_{b=1}^{B}\left(\mu_b - \hat{\mu}_b\right)^2}, \qquad \mathcal{L}_{\sigma} = \sqrt{\frac{1}{B}\sum_{b=1}^{B}\left(\sigma_b - \hat{\sigma}_b\right)^2} \tag{8}$$

where $B$ represents the batch size (number of images used in each batch). The region-weighted RMSE loss, $\mathcal{L}_{\mathrm{RW}}$, addresses the class imbalance between fire and background/non-fire regions by assigning different weights to their respective errors. Specifically, higher weights can be given to fire pixels to emphasize their importance, while lower weights are assigned to background pixels to prevent them from dominating the loss:

$$\mathcal{L}_{\mathrm{RW}} = w_f\,\mathcal{L}_{\mathrm{fire}} + w_b\,\mathcal{L}_{\mathrm{bg}} \tag{9}$$

where $w_f$ and $w_b$ are hyperparameters to be tuned for the fire and background/non-fire regions, respectively. These weights allow the model to prioritize errors in fire-affected areas, which are critical for wildfire monitoring, while still maintaining stability in the surrounding background. Physically, this formulation reflects the fact that small but intense fire regions have disproportionate importance compared to the much larger background. The sensitivity of the loss function to $w_f$ and $w_b$ is further analyzed through the ablation studies, where their impact on prediction accuracy is systematically examined.

The two loss components, $\mathcal{L}_{\mathrm{fire}}$ (fire root mean square error loss) and $\mathcal{L}_{\mathrm{bg}}$ (background root mean square error loss), are defined as:

$$\mathcal{L}_{\mathrm{fire}} = \sqrt{\frac{\sum_{i=1}^{N} M_i\left(T_i - \hat{T}_i\right)^2}{\sum_{i=1}^{N} M_i}}, \qquad \mathcal{L}_{\mathrm{bg}} = \sqrt{\frac{\sum_{i=1}^{N} \left(1 - M_i\right)\left(T_i - \hat{T}_i\right)^2}{\sum_{i=1}^{N} \left(1 - M_i\right)}} \tag{10}$$

where $T_i$ is the ground truth BT of the $i$-th pixel, $\hat{T}_i$ is the predicted BT value at that pixel, $N$ is the total number of pixels, and $M_i$ is a binary fire mask indicating whether a pixel belongs to the fire region ($M_i = 1$ where the ground truth BT is nonzero, and $M_i = 0$ otherwise). Physically, $\mathcal{L}_{\mathrm{fire}}$ quantifies the prediction error only within fire regions, ensuring that localized BT within the fire region is captured accurately. In contrast, $\mathcal{L}_{\mathrm{bg}}$ measures the prediction error in the much larger background, stabilizing the overall reconstruction and preventing spurious noise. Together, these terms ensure that the contribution from sparse but critical fire pixels is not overwhelmed by the background.

When no distinction is made between fire and background regions (i.e., without assigning separate weights to each), the regression objective simplifies to a global RMSE ($\mathcal{L}_{\mathrm{global}}$), where all pixels contribute equally. In this case, the loss no longer separates fire- and background-specific errors but instead evaluates overall prediction accuracy across the entire image.

$$\mathcal{L}_{\mathrm{global}} = \sqrt{\frac{1}{N}\sum_{i=1}^{N}\left(T_i - \hat{T}_i\right)^2} \tag{11}$$

This global formulation can be seen as a baseline version of the loss, where the image is treated as a single unit without differentiating between fire and background regions.
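The structure of the regression loss can be sketched in plain Python as follows. This is an illustrative reading of the description above, not the authors' implementation. The function names are hypothetical. Normalizing each regional error by the number of pixels in that region, averaging the region-weighted term over the images in a batch, and returning zero for an empty region are all assumptions. The default weights of 0.75 and 0.25 are the values reported in Section 4.2.

```python
import math

def rmse(pairs):
    """Root mean square error over an iterable of (truth, prediction) pairs."""
    pairs = list(pairs)
    if not pairs:
        return 0.0  # assumption: an empty region contributes no error
    return math.sqrt(sum((t - p) ** 2 for t, p in pairs) / len(pairs))

def region_weighted_rmse(truth, pred, mask, w_fire=0.75, w_bg=0.25):
    """Weighted sum of the fire-region and background-region RMSE for one image."""
    fire = [(t, p) for t, p, m in zip(truth, pred, mask) if m == 1]
    bg = [(t, p) for t, p, m in zip(truth, pred, mask) if m == 0]
    return w_fire * rmse(fire) + w_bg * rmse(bg)

def total_loss(batch, w_fire=0.75, w_bg=0.25):
    """Region-weighted term plus RMSE terms on the per-image mean and std.

    batch: list of dicts with keys truth, pred, mask (flattened pixel lists)
    and mu, mu_hat, sigma, sigma_hat (per-image scalars).
    """
    rw = sum(region_weighted_rmse(s["truth"], s["pred"], s["mask"], w_fire, w_bg)
             for s in batch) / len(batch)
    l_mu = rmse((s["mu"], s["mu_hat"]) for s in batch)
    l_sigma = rmse((s["sigma"], s["sigma_hat"]) for s in batch)
    return rw + l_mu + l_sigma

# Hypothetical flattened 4-pixel image: two fire pixels, two background pixels.
sample = {
    "truth": [300.0, 300.0, 240.0, 240.0],
    "pred": [290.0, 310.0, 240.0, 244.0],
    "mask": [1, 1, 0, 0],
    "mu": 270.0, "mu_hat": 268.0,
    "sigma": 30.0, "sigma_hat": 33.0,
}
print(round(total_loss([sample]), 4))
```

Setting both weights to the same value removes the emphasis on fire pixels, which is the baseline behavior that the region-weighted term is meant to improve on.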

3.3. Evaluation Metrics

The performance of the segmentation and regression models is evaluated using a set of metrics designed to assess both the accuracy of the predicted fire locations and the BT values at those locations. For segmentation, evaluation metrics are used to quantify how well the predicted shape of active fire regions matches the corresponding regions in the ground truth. For regression, the metrics evaluate the accuracy of BT values within active fire regions and the model’s ability to suppress background BT values.

Intersection over Union (IOU) measures the agreement between the prediction and ground truth by quantifying the degree of overlap of the fire area between ground truth and model prediction. The IOU values range from 0 to 1, where 0 represents no overlap between the VIIRS ground truth and the model-predicted fire regions, 1 indicates perfect overlap (i.e., no error), and intermediate values correspond to partial disagreement in fire extent and shape. Prior to evaluation, the predicted probability map is binarized using Otsu’s thresholding (Xu et al., 2011), an adaptive method that selects an optimal threshold value based on the distribution of predicted intensities. After binarization, all pixels above the threshold are set to 1 (fire), and those below are set to 0 (no fire). The IOU metric is then computed as

$$\mathrm{IOU} = \frac{\sum_{i=1}^{N} \hat{g}_i\, g_i}{\sum_{i=1}^{N} \left(\hat{g}_i + g_i - \hat{g}_i\, g_i\right)} \tag{12}$$

where $\hat{g}_i$ and $g_i$ represent the predicted and ground truth binarized presence (1) or absence (0) of fire at the $i$-th pixel location, respectively.

The fire and background root mean square errors ($\mathrm{RMSE}_{\mathrm{fire}}$ and $\mathrm{RMSE}_{\mathrm{bg}}$) quantify the prediction error of BT values in distinct spatial regions based on the ground truth. The term $\mathrm{RMSE}_{\mathrm{fire}}$ measures the prediction error in the active fire regions (i.e., where ground truth has non-zero BT values), and $\mathrm{RMSE}_{\mathrm{bg}}$ measures the prediction error in background regions (i.e., where ground truth has zero BT values). The $\mathrm{RMSE}_{\mathrm{fire}}$ and $\mathrm{RMSE}_{\mathrm{bg}}$ values start from 0, where 0 represents zero error in the predicted BT values (i.e., perfect prediction) while larger values correspond to more error in the predicted BT values. Both metrics are expressed in K and are computed on denormalized outputs, allowing the error magnitudes to retain physical interpretability.

These metrics are calculated using the following equations:

$$\mathrm{RMSE}_{\mathrm{fire}} = \sqrt{\frac{\sum_{i=1}^{N} M_i\left(T_i - \hat{T}_i\right)^2}{\sum_{i=1}^{N} M_i}}, \qquad \mathrm{RMSE}_{\mathrm{bg}} = \sqrt{\frac{\sum_{i=1}^{N} \left(1 - M_i\right)\left(T_i - \hat{T}_i\right)^2}{\sum_{i=1}^{N} \left(1 - M_i\right)}} \tag{13}$$

where $T_i$ and $\hat{T}_i$ are the ground truth and predicted BT at the $i$-th pixel, and $M_i$ is the binary fire mask derived from the ground truth.

It should be noted that although these metrics are conceptually similar to the loss components defined in Eq. (10), they are used here solely for evaluation rather than optimization. Physically, $\mathrm{RMSE}_{\mathrm{fire}}$ captures how accurately the model reconstructs BT values within active fire regions, where temperatures are high and spatially localized. In contrast, $\mathrm{RMSE}_{\mathrm{bg}}$ measures prediction stability in the non-fire background, where BT values should remain near ambient levels.
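The binarization and overlap computation can be sketched as below. This is a simplified, histogram-based version of Otsu's method written for illustration, and it assumes the inputs are probabilities in [0, 1]. The number of bins, the function names, and the convention of returning an IOU of 1 when both masks are empty are assumptions, not details from the paper.

```python
def otsu_threshold(values, bins=256):
    """Return the threshold in [0, 1] that maximizes between-class variance."""
    hist = [0] * bins
    for v in values:
        hist[min(int(v * bins), bins - 1)] += 1
    total = len(values)
    sum_all = sum(i * h for i, h in enumerate(hist))
    best_bin, best_var = 0, -1.0
    w0, sum0 = 0, 0.0
    for t in range(bins):
        w0 += hist[t]            # pixels in bins 0..t (background class)
        sum0 += t * hist[t]
        w1 = total - w0          # pixels in bins t+1.. (fire class)
        if w0 == 0 or w1 == 0:
            continue
        m0, m1 = sum0 / w0, (sum_all - sum0) / w1
        var_between = w0 * w1 * (m0 - m1) ** 2
        if var_between > best_var:
            best_var, best_bin = var_between, t
    return (best_bin + 1) / bins  # lower edge of the first fire-class bin

def binarize(values, threshold):
    """Set values at or above the threshold to 1 (fire) and the rest to 0."""
    return [1 if v >= threshold else 0 for v in values]

def iou(pred_bin, truth_bin):
    """Intersection over union of two flattened binary masks."""
    inter = sum(p * g for p, g in zip(pred_bin, truth_bin))
    union = sum(p + g - p * g for p, g in zip(pred_bin, truth_bin))
    return inter / union if union else 1.0  # assumption: two empty masks agree

# Hypothetical flattened probability map and ground truth mask.
probs = [0.05, 0.1, 0.08, 0.9, 0.85, 0.6]
truth = [0, 0, 0, 1, 1, 0]
thr = otsu_threshold(probs)
print(thr, iou(binarize(probs, thr), truth))
```

In this example the threshold falls between the low and high probability clusters, so the pixel with probability 0.6 is labeled as fire and counts as a false positive in the union.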

4. Results

4.1. Training

All models are implemented using PyTorch (v2.0.1) with CUDA 11.7 and cuDNN 8.5 support. Training is performed on a workstation equipped with NVIDIA RTX A6000 GPUs (each with 48 GB of VRAM), running CUDA version 12.0. Separate hyperparameters are used for segmentation and regression tasks, tuned individually to optimize their respective performance. The regression model is trained for 150 epochs with a batch size of 32 and a learning rate of 3×10⁻⁵, requiring approximately one hour. For the segmentation task, a learning rate of 8×10⁻⁵ is used, with identical batch size and epoch count. The segmentation model completes training in approximately 55 minutes. The Adam optimizer (Kingma and Ba, 2017) is used for both tasks due to its efficient handling of sparse gradients and adaptive learning capabilities. To enhance generalization and training stability, the learning rate is reduced by a factor of 0.5 if the validation loss plateaus, with a minimum improvement threshold of 1×10⁻⁵. Early stopping is employed to prevent overfitting, halting training if the validation loss does not improve for 30 consecutive epochs.
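The interaction between learning-rate reduction and early stopping can be sketched with a small, framework-free controller. This is a simplified illustration rather than the training code used in the study. The class name is hypothetical. The plateau patience of 10 epochs is an assumption, since the text does not state it, and the improvement threshold is treated here as an absolute difference.

```python
class PlateauController:
    """Halve the learning rate on plateaus and signal early stopping."""

    def __init__(self, lr, factor=0.5, min_delta=1e-5,
                 lr_patience=10, stop_patience=30):
        self.lr = lr
        self.factor = factor
        self.min_delta = min_delta          # minimum improvement that counts
        self.lr_patience = lr_patience      # assumed value, not from the paper
        self.stop_patience = stop_patience  # 30 epochs, as stated in the text
        self.best = float("inf")
        self.bad_epochs = 0

    def step(self, val_loss):
        """Update state with one epoch's validation loss; return True to stop."""
        if val_loss < self.best - self.min_delta:
            self.best = val_loss
            self.bad_epochs = 0
        else:
            self.bad_epochs += 1
            if self.bad_epochs % self.lr_patience == 0:
                self.lr *= self.factor
        return self.bad_epochs >= self.stop_patience

# Hypothetical validation-loss history: brief improvement, then a plateau.
controller = PlateauController(lr=3e-5)
history = [1.0, 0.9] + [0.9] * 30
for epoch, loss in enumerate(history, start=1):
    if controller.step(loss):
        print(f"stopped at epoch {epoch} with lr={controller.lr:.3e}")
        break
```

In a PyTorch training loop, the same roles are typically played by a plateau-based learning-rate scheduler and a separate early-stopping check on the validation loss.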

Each sample in the dataset is derived from a ROI corresponding to a wildfire event snapshot. These ROIs are divided into fixed-size patches of 128 × 128 pixels (approximately 48 × 48 km) using a sliding-window technique with no overlap. To ensure complete spatial coverage, windows at the edges of each ROI that are smaller than 128 × 128 are adjusted by extending into the preceding region, allowing full inclusion without missing any portion of the scene. Additionally, a filtering step is applied to exclude samples with fires that cannot be reliably detected by GOES, as their inclusion has been found to degrade DL model performance. Specifically, a sample is discarded if the fire observed by VIIRS has an active fire coverage of less than 0.37% of the total area (approximately 506.35 km²) and a total fire FRP, which quantifies the rate of radiant heat energy emitted by the fire, below 600 MW. Whereas the 10,000-acre threshold described in Section 2.2 is applied at the event selection stage to ensure the inclusion of large and clearly visible wildfires, this filtering step is applied at the snapshot level to remove weak or uninformative samples within those events. The dataset consists of 17,061 reference samples collected from a diverse set of wildfire events across the CONUS. A predefined 64/16/20 split ratio is applied, and samples are randomly assigned to the training, validation, and testing subsets, resulting in 10,918, 2,730, and 3,413 samples, respectively. To confirm that the random assignment does not bias the results, multiple shuffling seeds are tested while maintaining the same split ratio. All seeds produced similar model performance, indicating that the dataset’s diversity makes the results robust to the specific random split.
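The patching and splitting steps can be sketched as follows. The helper names are hypothetical and the code is only an illustration. The two-stage split, which holds out 20% for testing and then 20% of the remainder for validation with held-out sizes rounded up, is an assumption. It is shown because it reproduces the reported subset sizes, not because the paper states that this procedure was used.

```python
import math

def window_starts(length, patch=128):
    """Start indices of non-overlapping windows along one axis.

    If the last window would run past the edge, it is shifted back so that
    it ends exactly at the edge and overlaps the preceding window.
    """
    if length < patch:
        raise ValueError("ROI is smaller than one patch")
    starts = list(range(0, length - patch + 1, patch))
    if starts[-1] + patch < length:
        starts.append(length - patch)
    return starts

def two_stage_split(n_samples, holdout=0.2):
    """Return (train, validation, test) counts for an assumed two-stage split."""
    n_test = math.ceil(holdout * n_samples)
    n_val = math.ceil(holdout * (n_samples - n_test))
    return n_samples - n_test - n_val, n_val, n_test

print(window_starts(300))      # a 300-pixel axis needs a shifted third window
print(two_stage_split(17061))
```

For a two-dimensional ROI, the same start indices are computed independently for rows and columns, and each (row, column) pair defines one 128 × 128 patch.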

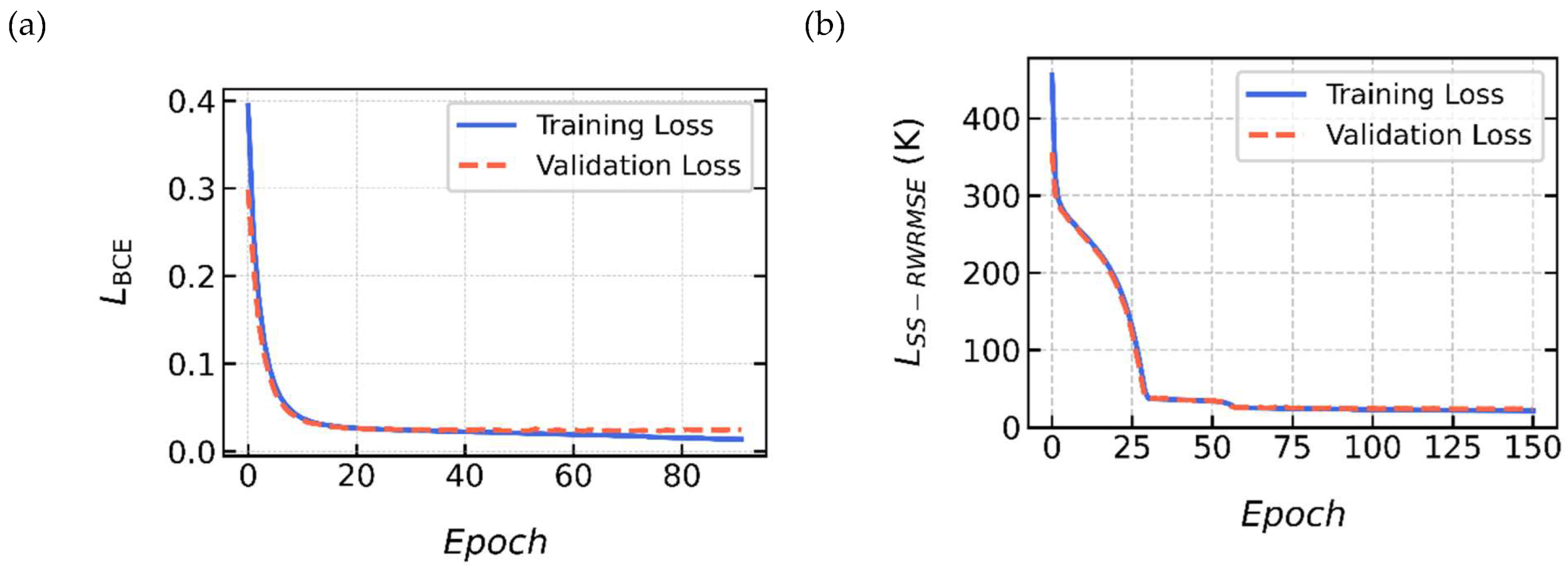

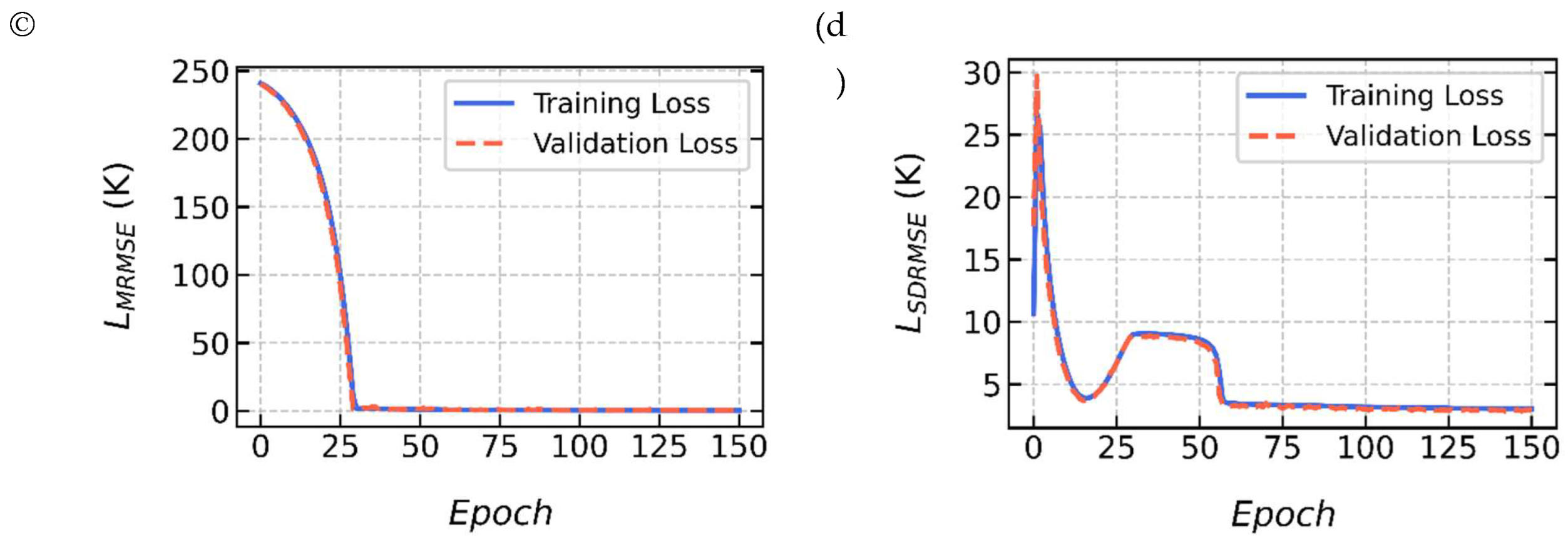

To increase diversity and reduce overfitting, basic data augmentation techniques are applied during training. These include random horizontal and vertical flipping of all input channels and corresponding ground truth. The learning curves for both regression and segmentation tasks are shown in Figure 3. These plots demonstrate smooth convergence of the training and validation losses, with no signs of overfitting, underscoring the effectiveness of the training strategy and regularization techniques.
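The key point of the flipping augmentation is that the same random flip must be applied to every input channel and to the ground truth, so that the pixels stay aligned. A minimal sketch with nested lists is shown below. The function names and the flip probability of 0.5 are assumptions.

```python
import random

def hflip(image):
    """Mirror a 2D list left to right."""
    return [row[::-1] for row in image]

def vflip(image):
    """Mirror a 2D list top to bottom."""
    return image[::-1]

def augment(channels, target, rng, p=0.5):
    """Apply the same random flips to all input channels and the ground truth."""
    if rng.random() < p:
        channels, target = [hflip(c) for c in channels], hflip(target)
    if rng.random() < p:
        channels, target = [vflip(c) for c in channels], vflip(target)
    return channels, target

# Hypothetical 2x2 example with three input channels and one target.
rng = random.Random(0)
inputs = [[[1, 2], [3, 4]] for _ in range(3)]
label = [[0, 1], [0, 0]]
aug_inputs, aug_label = augment(inputs, label, rng)
print(aug_inputs[0], aug_label)
```

Because flips only reorder pixels, the BT values and fire labels themselves are unchanged by this augmentation.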

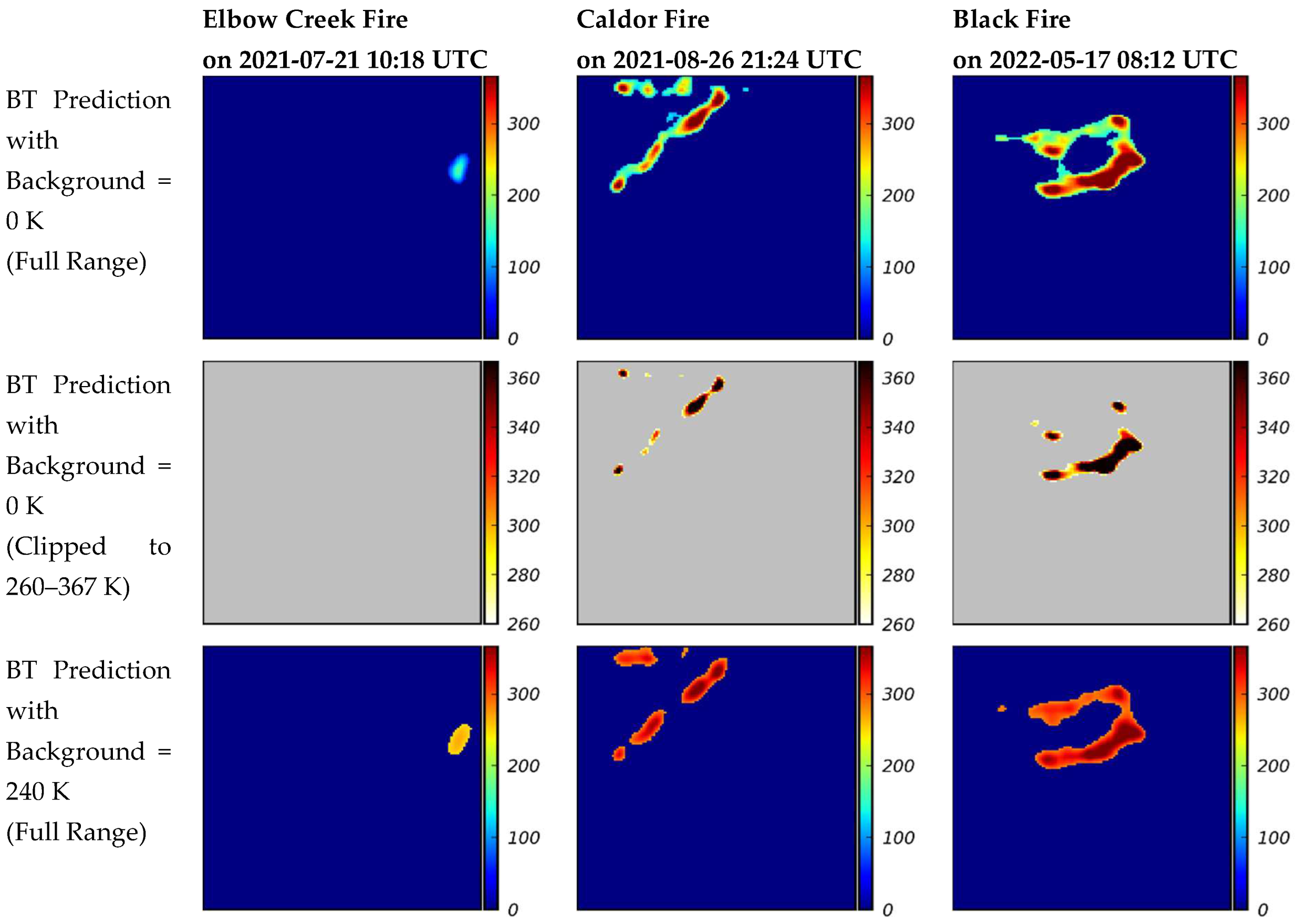

4.2. Testing of the proposed DL-WREN

This section presents the results of the proposed two-step approach described in Section 3.1. The proposed approach first employs a U-Net segmentation model trained using BCE loss to localize fire regions. It then employs a subsequent U-Net regression model trained with the statistically supervised, region-weighted RMSE loss function (see Eq. (7)), which combines the RMSE of 2D BT predictions with the RMSE between the predicted and ground truth global mean and standard deviation. The 2D prediction component uses the weighted RMSE loss (Eq. (9)), which emphasizes fire-region BT accuracy, using weights of $w_f$ = 0.75 for active fire pixels and $w_b$ = 0.25 for background pixels. The final BT prediction is obtained by element-wise multiplication of the segmentation and regression outputs, suppressing background noise while enhancing fire-specific details.
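The final combination step amounts to masking the regression output with the binarized segmentation output. A minimal sketch, with a hypothetical function name and toy values, is:

```python
def combine(fire_mask, bt_map):
    """Element-wise product of a binary fire mask and a BT map (both 2D lists)."""
    return [[m * t for m, t in zip(mask_row, bt_row)]
            for mask_row, bt_row in zip(fire_mask, bt_map)]

# Hypothetical 2x3 binarized mask and de-normalized BT predictions in K.
mask = [[0, 1, 1],
        [0, 0, 1]]
bt = [[251.0, 310.5, 342.0],
      [248.0, 262.0, 330.0]]
print(combine(mask, bt))
```

Pixels outside the predicted fire region become zero, while pixels inside it keep the BT value estimated by the regression model.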

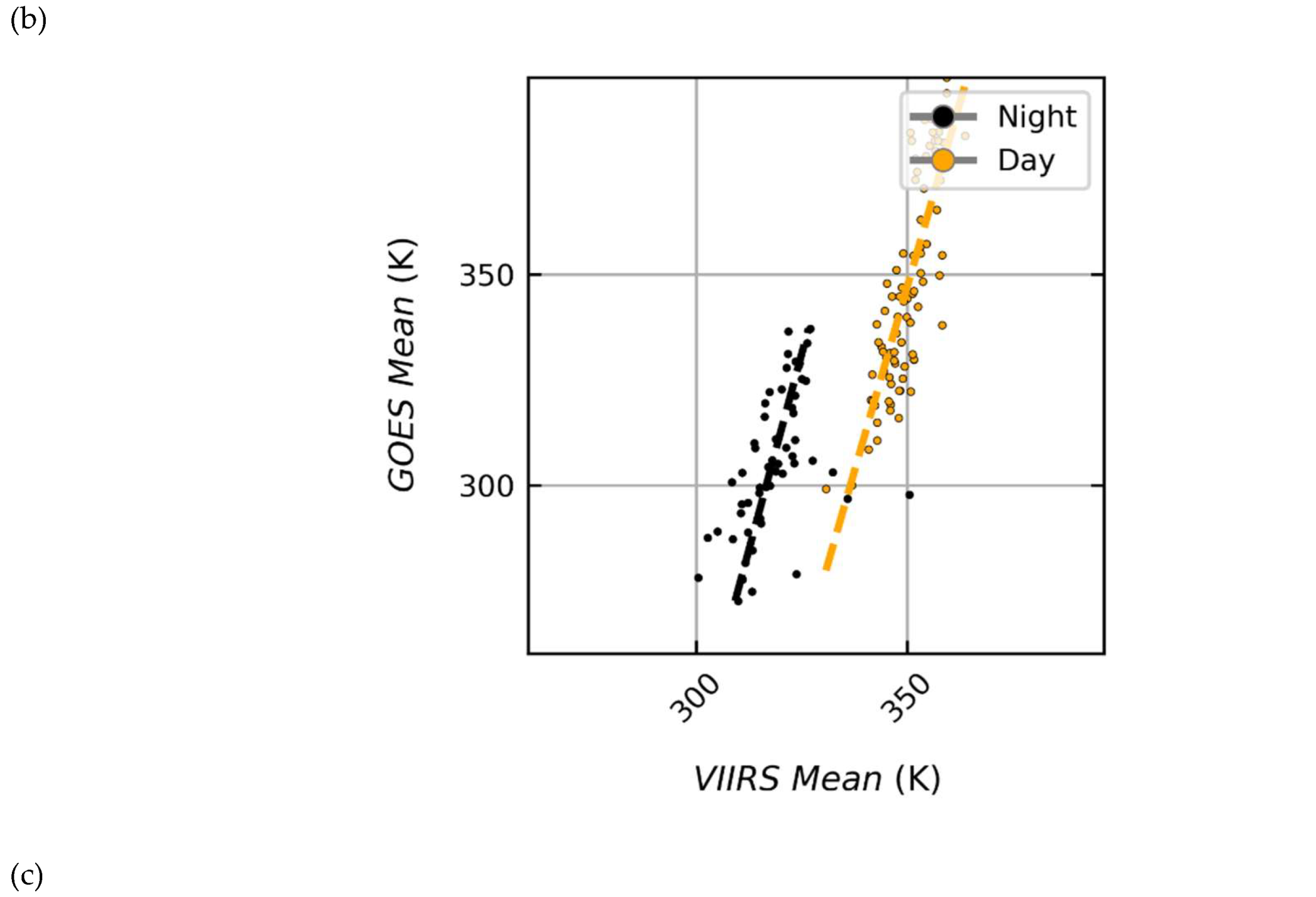

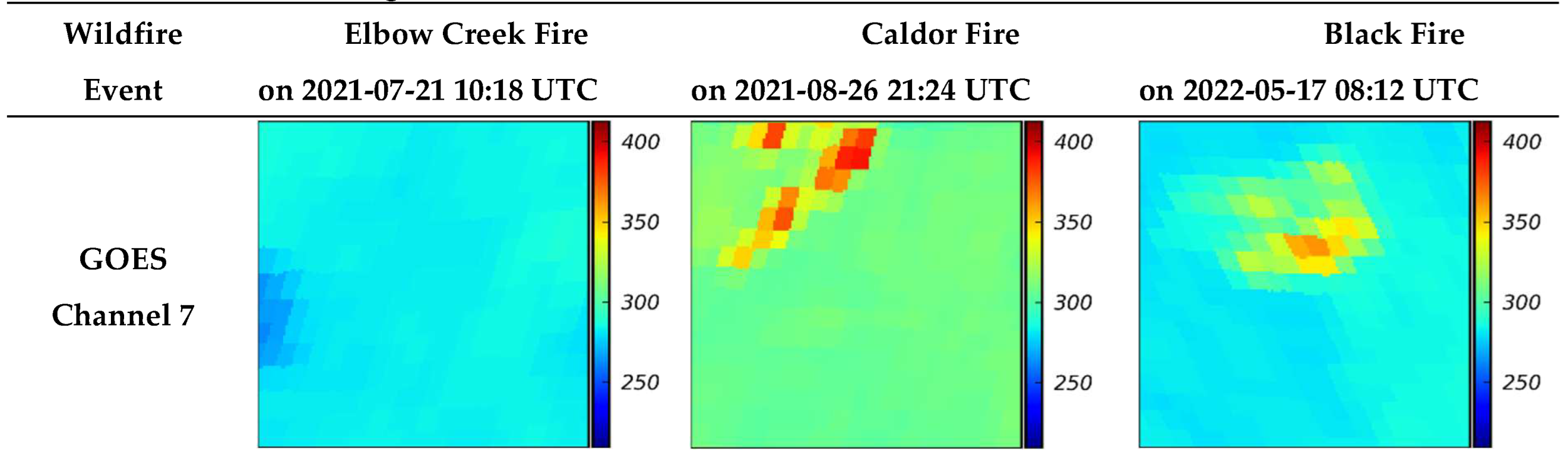

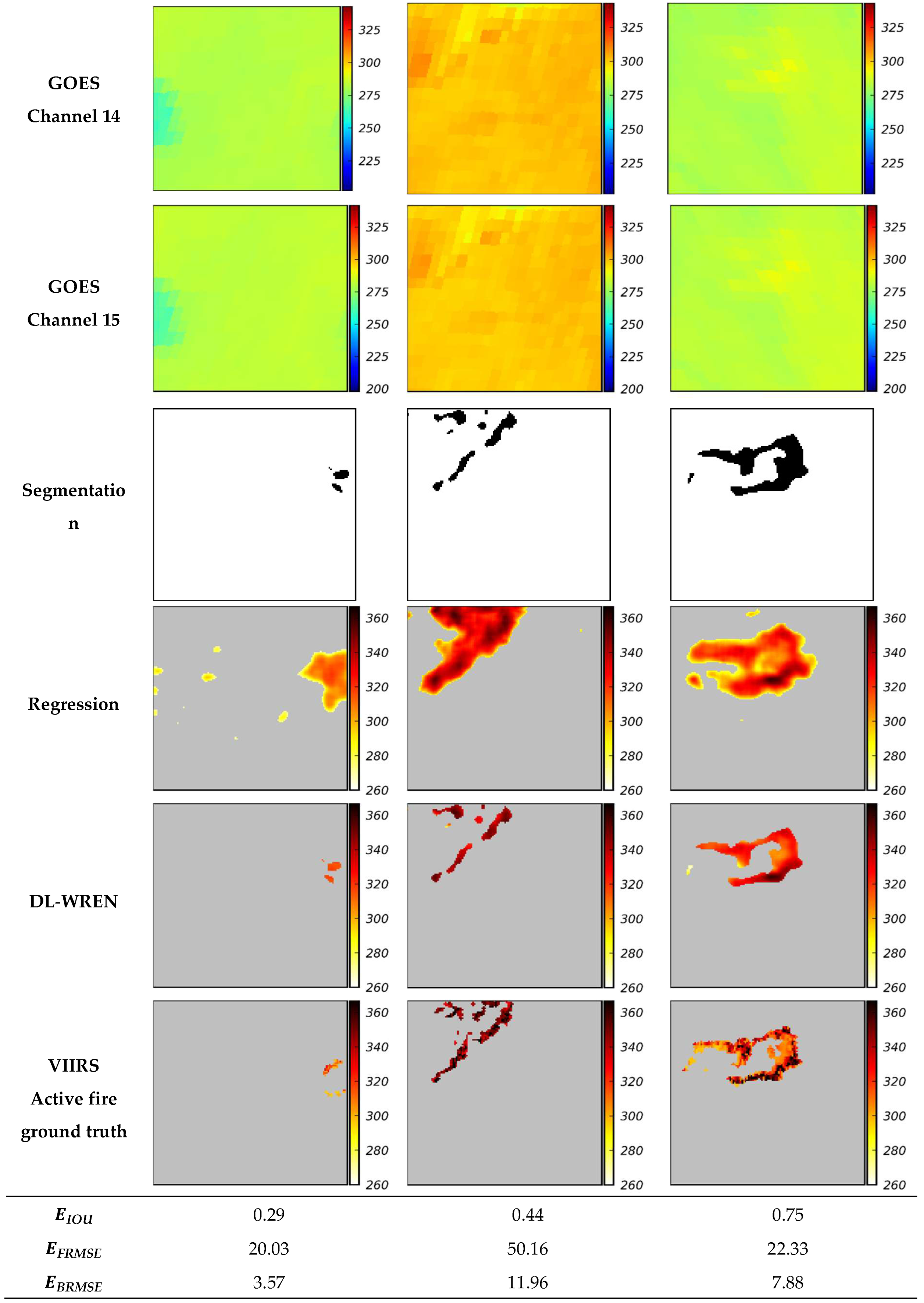

Figure 4 presents visual results for three representative wildfire events from the test set: Elbow Creek (2021-07-21), Caldor (2021-08-26), and Black Fire (2022-05-17). These events are selected to illustrate poor IOU (less than 0.33), average IOU (greater than or equal to 0.33 and less than 0.66), and good IOU (greater than or equal to 0.66), respectively. For each case, GOES input channels (7, 14, and 15) are shown alongside the segmentation prediction, regression prediction, the final element-wise product, and the VIIRS ground truth. It should be noted that the grey areas represent background pixels, which are excluded from the BT range and therefore not shown in the color bar. Otsu’s thresholding is applied to suppress small spurious values. The lower limit of the color scale is set to 260 K, instead of the non-saturated VIIRS minimum of 283 K, to enable visualization of under-predicted values that would otherwise be clipped. Evaluation metrics, including IOU for fire region segmentation, fire-pixel RMSE ($\mathrm{RMSE}_{\mathrm{fire}}$), and background RMSE ($\mathrm{RMSE}_{\mathrm{bg}}$), as introduced in Section 3.3, are also listed in the figure for each case.

As shown in Figure 4, the two-step DL-WREN framework improves both spatial localization and BT estimation. The segmentation step isolates fire regions and suppresses false activations, while the regression step provides per-pixel BT predictions reasonably close to the ground truth through region-weighted optimization (Eq. (7)). Together, these components reduce background interference and enhance detection across diverse wildfire scenarios.

Table 1 compares the performance of the DL-WREN framework with that of a previous model developed by the authors (Badhan et al., 2024), which is referred to as the baseline model herein. Both DL-WREN and the baseline model have been applied to the test dataset, and the resulting evaluation metrics are reported in Table 1. As can be seen, the proposed DL-WREN framework substantially outperforms the baseline model. DL-WREN improves spatial localization accuracy from IOU = 0.24 to 0.40, representing a 66.7% relative increase over the test dataset. In terms of BT accuracy, DL-WREN reduces the fire-region RMSE ($\mathrm{RMSE}_{\mathrm{fire}}$) from 187.2 K to 37.6 K, corresponding to a 79.9% reduction, while the background RMSE ($\mathrm{RMSE}_{\mathrm{bg}}$) decreases from 31.3 K to 5.9 K, yielding an 81.2% reduction. These gains demonstrate that the two-step design effectively mitigates the trade-off observed in single-stage models by decoupling spatial boundary refinement from BT value estimation, so that spatial cues are used for boundary refinement and BT cues for accurate BT estimation. The design choice is justified through ablation studies (Appendix C), which guide the selection of optimal configurations for segmentation and regression.
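The relative changes quoted above follow directly from the reported metric values, as the short check below shows. The function name is illustrative.

```python
def relative_change(baseline, new):
    """Relative change from baseline to new, in percent (negative = reduction)."""
    return 100.0 * (new - baseline) / baseline

print(round(relative_change(0.24, 0.40), 1))    # IOU
print(round(relative_change(187.2, 37.6), 1))   # fire-region RMSE
print(round(relative_change(31.3, 5.9), 1))     # background RMSE
```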

5. Conclusions

This study aimed to improve wildfire monitoring through deep learning (DL) by enhancing both fire location detection and BT estimation from GOES satellite imagery. Building upon our previous work, the main focus was to provide BT predictions within their physical range while improving spatial accuracy. By combining segmentation and regression into a two-step framework, fire regions and fire intensity could be estimated at 5-minute intervals, enabling practical near-real-time wildfire monitoring. Several methodological innovations distinguish this work from earlier efforts. A comprehensive dataset was constructed spanning 2019–2024 across the entire continental United States (CONUS), allowing the models to generalize across diverse geographic and climatic conditions. Preprocessing was improved through terrain-based parallax correction of GOES observations, which substantially reduced geolocation errors relative to VIIRS reference data. Following a study of spectral characteristics, three GOES bands, Band 7 (3.80–4.00 µm), Band 14 (10.8–11.6 µm), and Band 15 (11.8–12.8 µm), were selected as inputs. Separate architectures were identified for segmentation and regression, representing a two-step approach. For segmentation, a U-Net architecture was employed, while regression was carried out with a shallower U-Net variant featuring modifications such as the removal of batch normalization in later layers and additional output branches to predict mean and standard deviation alongside per-image z-score normalized values. These predicted statistics were subsequently used to de-normalize the per-image z-score normalized prediction outputs, enabling accurate brightness temperature estimation in physical units. To improve regression accuracy, a weighted RMSE loss was employed. 
Fire-region pixels were given higher weights than background pixels to counter the strong pixel imbalance, ensuring that the model learned to predict brightness temperatures in fire regions without being dominated by background signals. The framework generates fire predictions by multiplying binarized segmentation outputs with BT estimates from the regression model, effectively combining spatial localization with physical fire intensity.

Evaluation demonstrated that the proposed framework delivers predictions that are reasonably close to ground truth. For fire-region detection, the optimized segmentation design achieved an IOU of 0.40, while regression achieved a fire-region RMSE of 38.94 K. Visual comparisons with ground-truth observations further validated the quantitative findings, confirming that the framework reliably captures both fire extent and brightness temperature dynamics. Overall, this study shows that DL, when coupled with geostationary satellite observations and preprocessing steps such as terrain-based parallax correction, spectral band selection, and normalization selection, provides a viable pathway toward near-real-time wildfire monitoring. The ability to deliver fire location and BT estimates at 5-minute intervals across CONUS represents a substantial advancement for fire monitoring. The proposed framework can support firefighters, emergency managers, researchers, and policymakers by enabling refined fire progression information. Future work can incorporate temporal dependencies using past GOES observations, as well as contextual factors such as wind direction and fuel type, with the aim of further improving predictive accuracy and reliability for operational deployment.

Author Contributions

Mukul Badhan: Conceptualization, Methodology, Software, Validation, Formal Analysis, Visualization, Writing - Original Draft. Majid Bavandpour: Methodology, Formal Analysis, Writing - Review & Editing. Kasra Shamsaei: Methodology, Formal Analysis, Writing - Review & Editing. Dani Or: Methodology, Formal Analysis, Supervision, Writing - Review & Editing. George Bebis: Methodology, Formal Analysis, Supervision, Writing - Review & Editing. Neil P. Lareau: Conceptualization, Methodology, Writing - Review & Editing. Qunying Huang: Writing - Review & Editing. Hamed Ebrahimian: Conceptualization, Methodology, Formal Analysis, Supervision, Project Administration, Funding Acquisition, Writing - Review & Editing.

Funding

This work has been supported through the National Science Foundation’s Leading Engineering for America’s Prosperity, Health, and Infrastructure (LEAP-HI) program by grant number CMMI-1953333, as well as U.S. Army Engineer Research and Development Center (ERDC) contracts W912HZ24F0414 and W912HZ25CA008. The opinions and perspectives expressed in this study are those of the authors and do not necessarily reflect the views of the sponsors.

Appendix

Appendix A. GOES-VIIRS Alignment Analysis

This appendix provides additional visual results supporting the data preprocessing steps. It includes examples of GOES–VIIRS spatial misalignment before and after orthorectification, and an analysis of day–night variability and cross-sensor correlation in BT values over the active fire regions.

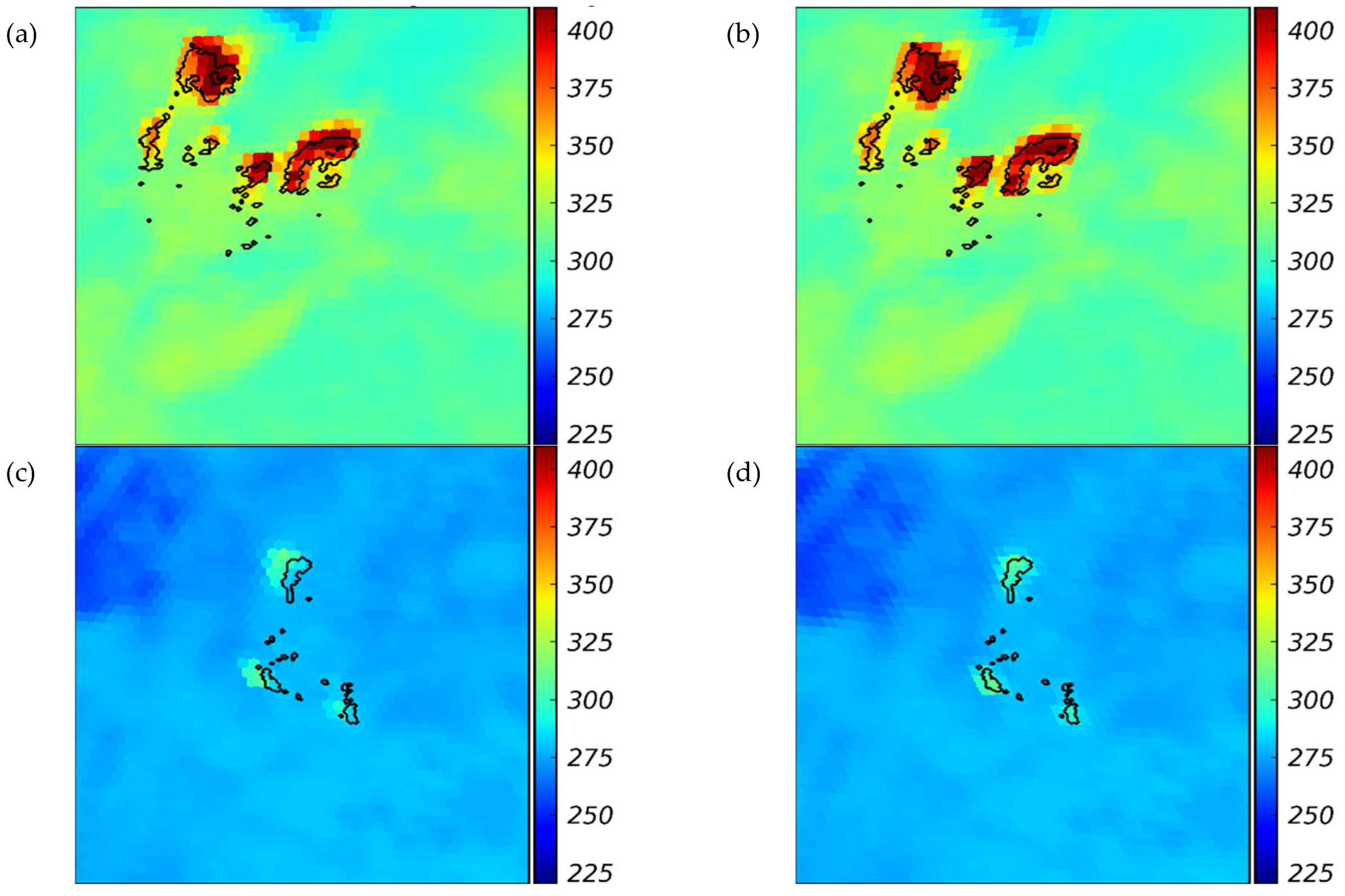

Figure A-1 illustrates examples of the misalignment of GOES-17’s band 7 and VIIRS active fire product, its corrected misalignment using orthorectification, the misalignment of GOES-16’s band 7 and VIIRS, and its corrected misalignment using orthorectification. GOES-17 exhibits a systematic eastward shift, while GOES-16 shows a westward shift relative to the VIIRS ground truth. After applying orthorectification, these misalignments are significantly reduced.

Figure A1.

Effect of orthorectification on GOES and VIIRS alignment. For Dixie Fire on August 05, 2021, 21:12 UTC (longitude: -121, latitude: 40): (a) Original GOES-West imagery overlaid with VIIRS boundaries, showing misalignment, (b) Orthorectified GOES-West imagery, demonstrating improved alignment with VIIRS. For Hermits Peak Fire May 04, 2022, 08:54 UTC (longitude: -105, latitude: 35): (c) Original GOES-East imagery overlaid with VIIRS boundaries, showing misalignment, (d) Orthorectified GOES-East imagery, demonstrating improved alignment. The results highlight how orthorectification reduces spatial displacement, improving consistency between GOES and VIIRS fire observations. In these figures, the BT observations are from GOES, while the fire boundaries extracted from VIIRS are shown with black lines.


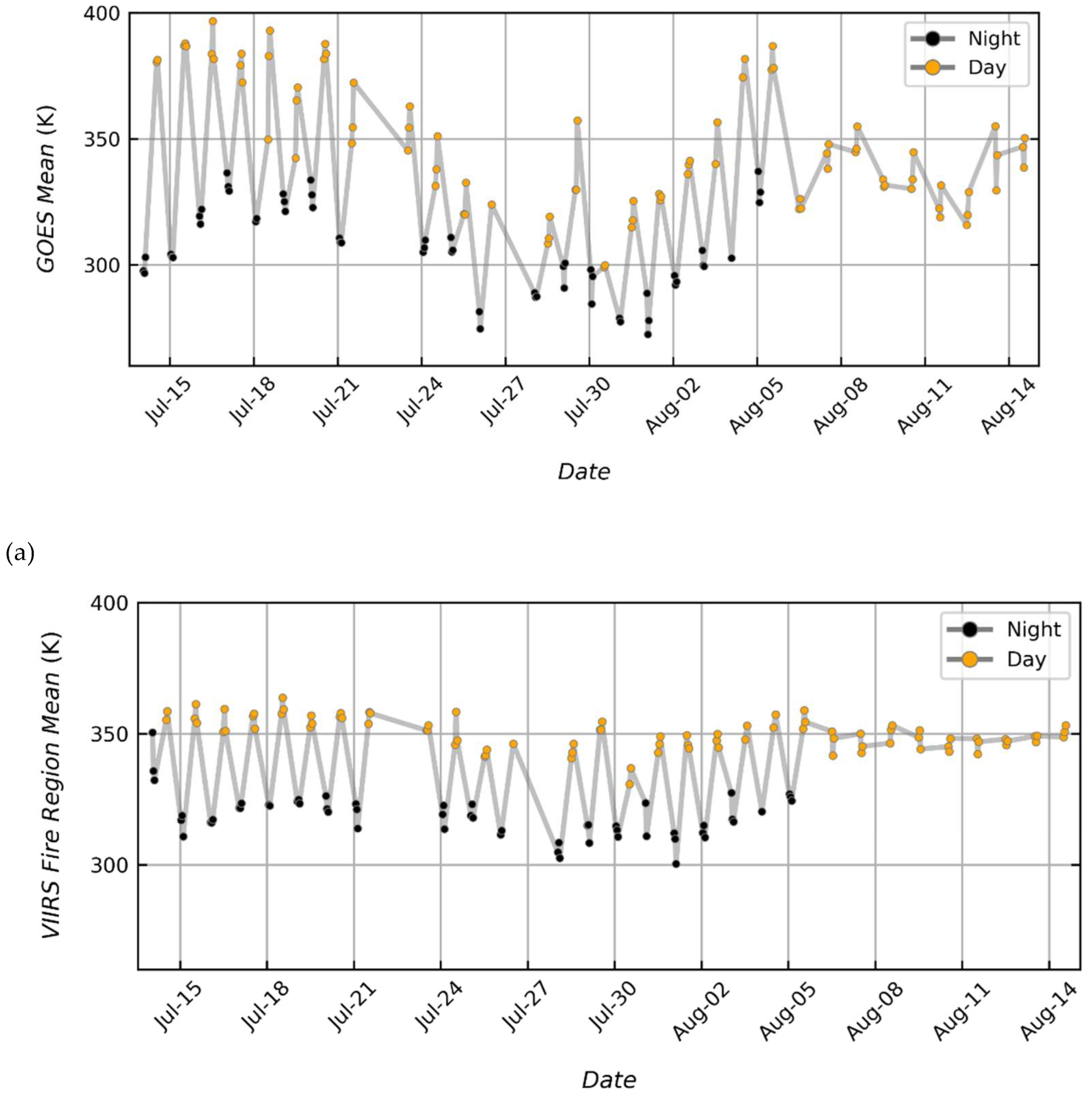

Figure A-2 provides quantitative evidence supporting the normalization strategy adopted in this study. Panels (a) and (b) show that both GOES Band-7 and VIIRS active-fire BT values exhibit a pronounced day-night bimodal distribution, with systematically higher mean BT during daytime and lower values at night when computed over the same wildfire snapshot. Panel (c) further demonstrates a strong linear relationship between contemporaneous GOES and VIIRS mean BT values over VIIRS-detected fire pixels, indicating that although the absolute BT values differ between sensors and across time of day, their relative BT values remain consistent. Together, these results indicate that absolute BT magnitudes are strongly influenced by diurnal variability, while relative contrasts within each image are more stable and informative for fire characterization. These observations motivated the use of per-image z-score normalization, which removes global BT shifts while preserving internal thermal structure. The analysis in Figure A-2 thus provides empirical justification for the normalization approach described in the main text and underpins the model design in which per-image mean and standard deviation are estimated directly from GOES inputs to enable physically consistent de-normalization at inference time.

Figure A2.

Day–night variability and cross-sensor correlation in BT expressed in K for the Dixie Fire from July 14 to August 15: (a) Mean GOES Band 7 BT values computed only over regions corresponding to VIIRS-detected active fire pixels, showing a distinct day–night bimodal pattern, (b) Mean VIIRS active fire pixels BT values over time, similarly exhibiting clear day–night separation, and (c) Scatter plot of contemporaneous GOES and VIIRS mean BT values over VIIRS active-fire region, demonstrating a strong linear relationship. Black dots represent nighttime observations while yellow dots represent daytime observations.


Appendix B. Segmentation and Regression Model Architecture used in DL-WREN

This appendix provides a detailed breakdown of the DL architecture used in DL-WREN for fire segmentation and regression. Table B-1 describes the U-Net variant used to generate pixel-wise fire masks, and Table B-2 describes the U-Net based regression model that predicts z-score–normalized BT along with per-image statistical parameters (μ and σ) needed to reconstruct full-scale temperature values.

Table B1.

Architecture of the U-Net variant used for segmentation. The model receives a 3-channel GOES input image and outputs a 1-channel binary mask representing the probability of fire presence for each pixel. “Conv2d (3→64)” denotes a 2D convolutional layer with 3 input and 64 output channels, while “×2” indicates two consecutive convolutional layers within a DoubleConv block. “TransposeConv” refers to a transposed convolution (also called deconvolution) used for up-sampling in the decoder path. Batch normalization (BatchNorm2d) is applied after each convolution unless otherwise specified. The final layer uses a sigmoid activation to produce pixel-wise probabilities between 0 and 1, later thresholded to generate binary fire masks.


| Stage | Layer Type | Output Shape | Kernel Size | Stride | Padding | Activation | Normalization | Details |
| --- | --- | --- | --- | --- | --- | --- | --- | --- |
| Input | – | 3 × 128 × 128 | – | – | – | – | – | 3 channel GOES input |
| Encoder 1 | Conv2d (3→64) ×2 | 64 × 128 × 128 | 3×3 | 1 | 1 | ReLU ×2 | BatchNorm2d ×2 | DoubleConv block |
| | MaxPool2d | 64 × 64 × 64 | 2×2 | 2 | 0 | – | – | Down-sampling |
| Encoder 2 | Conv2d (64→128) ×2 | 128 × 64 × 64 | 3×3 | 1 | 1 | ReLU ×2 | BatchNorm2d ×2 | DoubleConv block |
| | MaxPool2d | 128 × 32 × 32 | 2×2 | 2 | 0 | – | – | Down-sampling |
| Encoder 3 | Conv2d (128→256) ×2 | 256 × 32 × 32 | 3×3 | 1 | 1 | ReLU ×2 | BatchNorm2d ×2 | DoubleConv block |
| | MaxPool2d | 256 × 16 × 16 | 2×2 | 2 | 0 | – | – | Down-sampling |
| Encoder 4 | Conv2d (256→512) ×2 | 512 × 16 × 16 | 3×3 | 1 | 1 | ReLU ×2 | BatchNorm2d ×2 | DoubleConv block |
| | MaxPool2d | 512 × 8 × 8 | 2×2 | 2 | 0 | – | – | Down-sampling |
| Bottleneck | Conv2d (512→1024) ×2 | 1024 × 8 × 8 | 3×3 | 1 | 1 | ReLU ×2 | BatchNorm2d ×2 | Expands feature depth |
| Decoder 1 | TransposeConv (1024→512) | 512 × 16 × 16 | 2×2 | 2 | 0 | – | – | Up-sampling |
| | Conv2d (1024→512) ×2 | 512 × 16 × 16 | 3×3 | 1 | 1 | ReLU ×2 | BatchNorm2d ×2 | Skip from Encoder 4 |
| Decoder 2 | TransposeConv (512→256) | 256 × 32 × 32 | 2×2 | 2 | 0 | – | – | Up-sampling |
| | Conv2d (512→256) ×2 | 256 × 32 × 32 | 3×3 | 1 | 1 | ReLU ×2 | BatchNorm2d ×2 | Skip from Encoder 3 |
| Decoder 3 | TransposeConv (256→128) | 128 × 64 × 64 | 2×2 | 2 | 0 | – | – | Up-sampling |
| | Conv2d (256→128) ×2 | 128 × 64 × 64 | 3×3 | 1 | 1 | ReLU ×2 | BatchNorm2d ×2 | Skip from Encoder 2 |
| Decoder 4 | TransposeConv (128→64) | 64 × 128 × 128 | 2×2 | 2 | 0 | – | – | Up-sampling |
| | Conv2d (128→64) ×2 | 64 × 128 × 128 | 3×3 | 1 | 1 | ReLU ×2 | BatchNorm2d ×2 | Skip from Encoder 1 |
| Output | Conv2d (64→1) | 1 × 128 × 128 | 1×1 | 1 | 0 | Sigmoid | – | Final prediction (binary mask) |
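
The output shapes listed above follow from standard convolution size arithmetic. The short Python sketch below is an illustration only: the helper names `conv_out`, `tconv_out`, and `trace_unet` are hypothetical and not taken from the authors' code. It traces the spatial size through the encoder and decoder using the kernel, stride, and padding values in the table, assuming no dilation and no output padding.

```python
def conv_out(size, kernel, stride, padding):
    """Spatial size after Conv2d or MaxPool2d (assumes no dilation)."""
    return (size + 2 * padding - kernel) // stride + 1


def tconv_out(size, kernel, stride, padding):
    """Spatial size after a transposed convolution (assumes no output padding)."""
    return (size - 1) * stride - 2 * padding + kernel


def trace_unet(size=128, depth=4):
    """Return (stage name, spatial size) pairs for a U-Net with `depth` pooling steps."""
    sizes = [("input", size)]
    for level in range(1, depth + 1):
        # DoubleConv: two 3x3 convolutions with stride 1 and padding 1 keep the size
        size = conv_out(conv_out(size, 3, 1, 1), 3, 1, 1)
        sizes.append((f"encoder {level}", size))
        # 2x2 max pooling with stride 2 halves the size
        size = conv_out(size, 2, 2, 0)
        sizes.append((f"pool {level}", size))
    size = conv_out(conv_out(size, 3, 1, 1), 3, 1, 1)
    sizes.append(("bottleneck", size))
    for level in range(1, depth + 1):
        # 2x2 transposed convolution with stride 2 doubles the size,
        # then a DoubleConv on the concatenated skip features keeps it
        size = tconv_out(size, 2, 2, 0)
        size = conv_out(conv_out(size, 3, 1, 1), 3, 1, 1)
        sizes.append((f"decoder {level}", size))
    size = conv_out(size, 1, 1, 0)  # 1x1 output convolution
    sizes.append(("output", size))
    return sizes
```

With the default `depth=4`, the trace gives an 8 × 8 bottleneck and a 128 × 128 output, matching the segmentation network; `depth=2` gives the 32 × 32 bottleneck of the shallower regression variant.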

Table B2.

Architecture of the U-Net variant used for regression. The model takes a 3-channel GOES input and predicts a z-score–normalized brightness temperature (BT) map, along with the mean (μ) and standard deviation (σ) of BT for each input image. These parameters allow reconstruction of the full-scale temperature in Kelvin (K) during evaluation. “Conv2d (3→64)” indicates a 2D convolutional layer with 3 input and 64 output channels, while “×2” denotes two successive convolutional operations within the same block (commonly referred to as a DoubleConv block). “FC” refers to a fully connected (linear) layer. Two parallel fully connected branches are used: one predicts the per-image mean (μ) and the other predicts the standard deviation (σ), with an exponential activation (“Exp.”) ensuring σ remains positive. Batch normalization (BatchNorm2d) follows each convolution unless otherwise noted.

| Stage | Layer Type | Output Shape | Kernel Size | Stride | Padding | Activation | Batch Normalization | Details |
| --- | --- | --- | --- | --- | --- | --- | --- | --- |
| Input | – | 3×128×128 | – | – | – | – | – | 3 channel GOES input |
| Encoder 1 | Conv2d (3→64) ×2 | 64×128×128 | 3×3 | 1 | 1 | ReLU ×2 | BatchNorm2d ×2 | DoubleConv block |
| | MaxPool2d | 64×64×64 | 2×2 | 2 | 0 | – | – | Downsample |
| Encoder 2 | Conv2d (64→128) ×2 | 128×64×64 | 3×3 | 1 | 1 | ReLU ×2 | BatchNorm2d ×2 | DoubleConv block |
| | MaxPool2d | 128×32×32 | 2×2 | 2 | 0 | – | – | Downsample |
| Bottleneck | Conv2d (128→256) ×2 | 256×32×32 | 3×3 | 1 | 1 | ReLU ×2 | BatchNorm2d ×2 | Expands feature depth |
| Decoder 1 | ConvTranspose2d (256→128) | 128×64×64 | 2×2 | 2 | 0 | – | – | Upsample |
| | ConvTranspose2d (256→128) | 128×64×64 | 3×3 | 1 | 1 | ReLU ×2 | BatchNorm2d ×2 | Skip from Encoder 2 |
| Decoder 2 | ConvTranspose2d (128→64) | 64×128×128 | 2×2 | 2 | 0 | – | – | Upsample |
| | Conv2d (128→64) ×2 | 64×128×128 | 3×3 | 1 | 1 | ReLU ×2 | BatchNorm2d ×1 | Skip from Encoder 1 |
| Final Output | Conv2d (64→1) | 1×128×128 | 1×1 | 1 | 0 | – | – | Final z-score normalized BT prediction |
| Global Pool | AdaptiveAvgPool2d | 64×1×1 | – | – | – | – | – | Spatial pooling over full feature map |
| FC μ | Linear (64→1) | 1 | – | – | – | – | – | Predicts per-image mean |
| FC σ | Linear (64→1) | 1 | – | – | – | Exp. | – | Predicts per-image std, enforced positive |
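
The reconstruction of full-scale BT from the three network outputs can be sketched as follows. This is a minimal illustration, not the authors' code: the function name `reconstruct_bt` and the toy values are hypothetical, and it assumes the σ branch produces a raw value that the exponential activation maps to a positive standard deviation.

```python
import math


def reconstruct_bt(z_map, mu, raw_sigma):
    """Invert z-score normalization: BT = z * sigma + mu, with sigma = exp(raw_sigma)."""
    sigma = math.exp(raw_sigma)  # exponential activation keeps sigma strictly positive
    return [[z * sigma + mu for z in row] for row in z_map]


# Toy 2 x 2 z-score map with assumed per-image statistics (illustrative values only)
z_map = [[0.0, 1.0], [-1.0, 2.0]]
bt = reconstruct_bt(z_map, mu=300.0, raw_sigma=math.log(10.0))
```

Here a pixel with z-score 0 maps back to the per-image mean, and each unit of z-score shifts the reconstructed temperature by one standard deviation.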

Ablation Studies

Appendix C presents the ablation studies conducted to evaluate the impact of key design choices in the proposed approach, focusing on both the regression and segmentation tasks of the model. These studies quantify how specific modeling decisions, such as loss function design and background BT value in ground truth, affect the accuracy of fire detection and BT estimation.

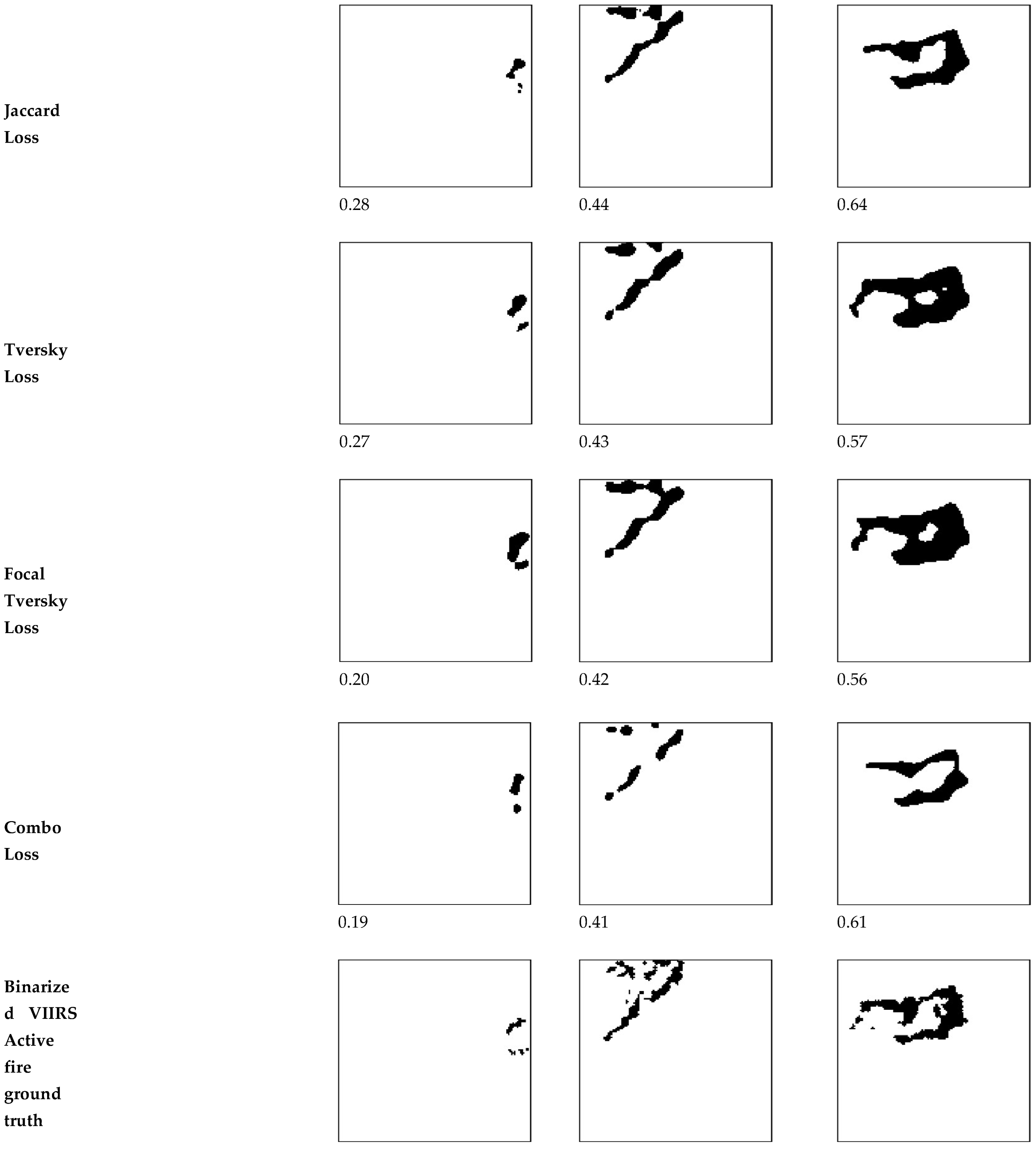

For the segmentation task, the study addresses the severe class imbalance caused by the small number of active fire pixels relative to the background. Six loss functions are considered, each chosen for its ability to handle data sparsity, class imbalance, or limited spatial overlap. In addition to the BCE loss defined in Eq. (6), these are Focal Loss, Jaccard Loss, Tversky Loss, Focal Tversky Loss, and Combo Loss (Jadon, 2020). The goal of this evaluation is to determine which loss formulation localizes fire pixels most accurately. Since each loss has a different formulation, the relevant hyperparameters (α, β, and γ) are tuned separately for each method to ensure a fair comparison. Although the same symbols (α, β, and γ) are used throughout for clarity and consistency with prior literature, their specific meaning varies across loss functions. The rationale and mathematical formulation of each loss function are discussed below.

Focal Loss (Lin et al., n.d.) integrates two mechanisms into the BCE loss to better address the challenges of fire segmentation: class weighting and a focus on hard examples. These mechanisms are controlled by the hyperparameters α and γ, respectively. The class weighting mechanism, governed by α (ranging from 0 to 1), adjusts the relative importance of fire (foreground) versus background pixels. A higher α increases the penalty for misclassifying fire pixels, which are scarce relative to the vast number of background pixels, thereby directly addressing class imbalance. The focus on hard examples, controlled by γ (typically between 1 and 3), reduces the contribution of well-classified pixels (those where the predicted probability ŷ is close to the true label) by down-weighting their loss using a modulating factor $(1-\hat{y})^{\gamma}$ or $\hat{y}^{\gamma}$. This shifts the training focus toward hard-to-classify pixels, such as low-intensity or small fires, which are often misclassified due to their similarity to background. Compared to standard BCE, which treats all pixels equally, Focal Loss emphasizes learning from rare and hard-to-classify examples, making it particularly effective for tasks with high class imbalance and ambiguous boundaries. The Focal Loss is defined as:
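
In the α-balanced form of Lin et al., and under notation assumed here ($y_i \in \{0, 1\}$ for the ground-truth label and $\hat{y}_i$ for the predicted fire probability of pixel $i$, with $N$ pixels in total), a standard expression consistent with the description above is:

```latex
L_{\mathrm{Focal}} = -\frac{1}{N}\sum_{i=1}^{N}\Big[\alpha\,(1-\hat{y}_i)^{\gamma}\,y_i\log\hat{y}_i
  + (1-\alpha)\,\hat{y}_i^{\gamma}\,(1-y_i)\log\big(1-\hat{y}_i\big)\Big]
```

Setting γ = 0 removes the modulating factors and leaves a class-weighted BCE.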

The Jaccard Loss, as defined in the following equation, is a region-based loss function that encourages better overlap between predicted and ground truth binary masks. By directly optimizing the IOU score, it is particularly effective for segmentation tasks that require precise spatial alignment.

In the above equation, the numerator represents the intersection between the predicted fire region and the ground truth, i.e., the number of pixels correctly identified as fire. The denominator includes the union of the predicted and ground truth regions. Specifically, it sums all pixels identified as fire by either the ground truth or the model (or both), then subtracts the overlapping pixels to avoid double-counting. Physically, this means the model is rewarded when it accurately predicts fire pixels and penalized when it predicts too many false positives or misses fire pixels.
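
The intersection-over-union computation described above can be sketched in a few lines of Python. This is a minimal soft (probability-based) version under stated assumptions: the function name `jaccard_loss` and the small `smooth` constant that guards against division by zero are illustrative choices, not details given in the text.

```python
def jaccard_loss(y_true, y_prob, smooth=1e-6):
    """Soft Jaccard loss over flattened pixels: 1 - intersection / union."""
    # Intersection: fire probability mass that falls on true fire pixels
    intersection = sum(y * p for y, p in zip(y_true, y_prob))
    # Union: all fire in either mask, minus the overlap that was counted twice
    union = sum(y_true) + sum(y_prob) - intersection
    return 1.0 - (intersection + smooth) / (union + smooth)


# One correct fire pixel, one missed fire pixel, one false alarm
loss = jaccard_loss([1, 1, 0, 0], [1.0, 0.0, 1.0, 0.0])
```

In this toy case the intersection is 1 pixel and the union is 3 pixels, so the loss is close to 2/3.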

Tversky Loss (Salehi et al., 2017), as defined in Eq. (C-3), is a region-based loss function and extends the Jaccard loss by introducing adjustable weights to balance the importance of false positives (FP) and false negatives (FN). This flexibility is important in fire segmentation tasks, where missing a fire pixel (FN) may be more critical than incorrectly predicting one (FP). The Tversky Loss can be calculated as follows:

where β (ranging from 0 to 1) is a hyperparameter that controls the trade-off between penalizing false positives and false negatives. The second term in the denominator increases when the model predicts fire where there is no fire (FP), while the third term increases when it fails to predict fire where it exists (FN). By adjusting β, the loss function can be biased toward the desired behavior. For example, setting β > 0.5 emphasizes reducing false positives, promoting conservative predictions, whereas β < 0.5 favors reducing false negatives, encouraging the model to detect more fire pixels even at the risk of overprediction. Physically, this loss encourages the model to learn according to the relative severity of the two types of errors. In fire detection, where missing an active fire can have serious consequences, Tversky Loss enables the network to prioritize fire predictions over overall accuracy, making it well-suited for highly imbalanced segmentation problems.
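
The effect of the trade-off parameter can be checked numerically. The sketch below assumes the form given by Jadon (2020), in which β weights the false-positive term and 1 − β the false-negative term; the function name `tversky_loss` and the `smooth` constant are illustrative assumptions.

```python
def tversky_loss(y_true, y_prob, beta=0.75, smooth=1e-6):
    """Soft Tversky loss: 1 - TP / (TP + beta * FP + (1 - beta) * FN)."""
    tp = sum(y * p for y, p in zip(y_true, y_prob))          # fire predicted on fire
    fp = sum((1 - y) * p for y, p in zip(y_true, y_prob))    # fire predicted on background
    fn = sum(y * (1 - p) for y, p in zip(y_true, y_prob))    # fire missed
    index = (tp + smooth) / (tp + beta * fp + (1.0 - beta) * fn + smooth)
    return 1.0 - index


y = [1, 1, 0, 0]
over_prediction = [1.0, 1.0, 1.0, 0.0]   # one false positive
under_prediction = [1.0, 0.0, 0.0, 0.0]  # one false negative

loss_over_high_beta = tversky_loss(y, over_prediction, beta=0.75)
loss_over_low_beta = tversky_loss(y, over_prediction, beta=0.25)
loss_under_high_beta = tversky_loss(y, under_prediction, beta=0.75)
loss_under_low_beta = tversky_loss(y, under_prediction, beta=0.25)
```

Under this assumed form, the over-predicting mask is penalized more at β = 0.75 than at β = 0.25, while the under-predicting mask is penalized more at β = 0.25, in line with the behavior described above.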

Focal Tversky Loss (Abraham and Khan, 2019) builds on Tversky Loss by adding a focusing parameter γ, which acts as a spotlight, intensifying the penalty for incorrect or uncertain predictions while dimming the influence of easy, confidently correct ones. By raising the Tversky Loss to the power of γ > 1, the loss becomes more sensitive to pixels where the model struggles, such as small, faint, or partially detected fires, forcing the network to pay more attention to these cases during training. The Focal Tversky Loss is defined as follows.
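
Written out from the description above, and assuming the Tversky index TI takes the form given by Jadon (2020), with β weighting false positives and 1 − β weighting false negatives ($y_i$ and $\hat{y}_i$ are notation assumed here for the ground-truth label and predicted probability of pixel $i$), the loss can be sketched as:

```latex
\mathrm{TI} = \frac{\sum_i y_i\,\hat{y}_i}
  {\sum_i y_i\,\hat{y}_i + \beta\sum_i (1-y_i)\,\hat{y}_i + (1-\beta)\sum_i y_i\,(1-\hat{y}_i)},
\qquad
L_{\mathrm{FTL}} = \left(1-\mathrm{TI}\right)^{\gamma}
```

With γ = 1 this expression reduces to the Tversky Loss.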

Finally, Combo Loss (Taghanaki et al., 2019) is formulated as a weighted sum of modified binary cross-entropy (MBCE) (Jadon, 2020) and Dice Loss to combine their complementary strengths (Eq. (C-7)). The MBCE component (Eq. (C-5)) introduces a weighting factor β to address class imbalance by adjusting the relative importance of the foreground/fire and background/non-fire terms. This loss has smooth gradients, meaning it changes gradually with respect to the model’s predictions, which helps the model train steadily and avoid unstable jumps. Dice Loss (Eq. (C-6)), a special case of the Tversky Loss with β = 0.5, enhances the learning signal from overlapping regions by doubling their weight in both numerator and denominator. This gives Dice Loss a high sensitivity to spatial overlap and makes it particularly effective for segmenting small or sparse regions, such as active-fire pixels. By combining MBCE’s smooth gradient behavior with Dice Loss’s sensitivity to spatial overlap, Combo Loss balances pixel-wise classification and region-level accuracy. The hyperparameter α controls the contribution of each component.
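
The way the two components combine can be illustrated with a short Python sketch. It assumes a plain convex combination, α · MBCE + (1 − α) · Dice Loss, with β weighting the fire term inside MBCE; the function names, the clamping constant `eps`, and the `smooth` term are illustrative assumptions rather than details confirmed by the text.

```python
import math


def mbce(y_true, y_prob, beta=0.25, eps=1e-7):
    """Modified BCE: beta weights the fire term, (1 - beta) the background term."""
    total = 0.0
    for y, p in zip(y_true, y_prob):
        p = min(max(p, eps), 1.0 - eps)  # clamp to avoid log(0)
        total -= beta * y * math.log(p) + (1.0 - beta) * (1 - y) * math.log(1.0 - p)
    return total / len(y_true)


def dice_loss(y_true, y_prob, smooth=1e-6):
    """Soft Dice loss: 1 - 2 * intersection / (sum of both masks)."""
    intersection = sum(y * p for y, p in zip(y_true, y_prob))
    return 1.0 - (2.0 * intersection + smooth) / (sum(y_true) + sum(y_prob) + smooth)


def combo_loss(y_true, y_prob, alpha=0.25, beta=0.25):
    """Assumed form: alpha * MBCE + (1 - alpha) * Dice Loss."""
    return alpha * mbce(y_true, y_prob, beta) + (1.0 - alpha) * dice_loss(y_true, y_prob)


y = [1, 0, 0, 0]
p = [0.6, 0.1, 0.2, 0.1]
loss = combo_loss(y, p)
```

Setting `alpha=1` recovers the pixel-wise MBCE term alone, and `alpha=0` recovers the region-based Dice term alone.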

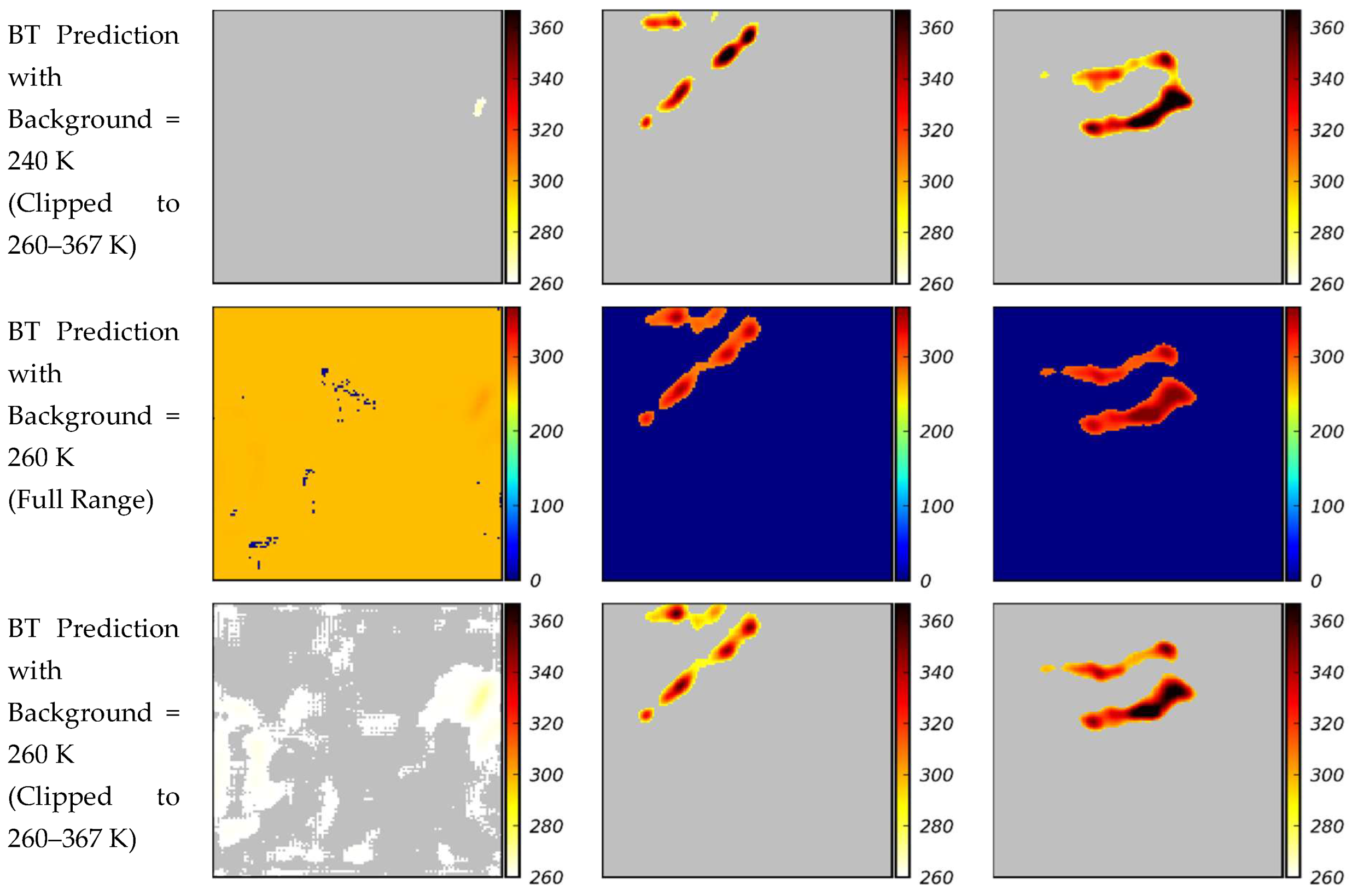

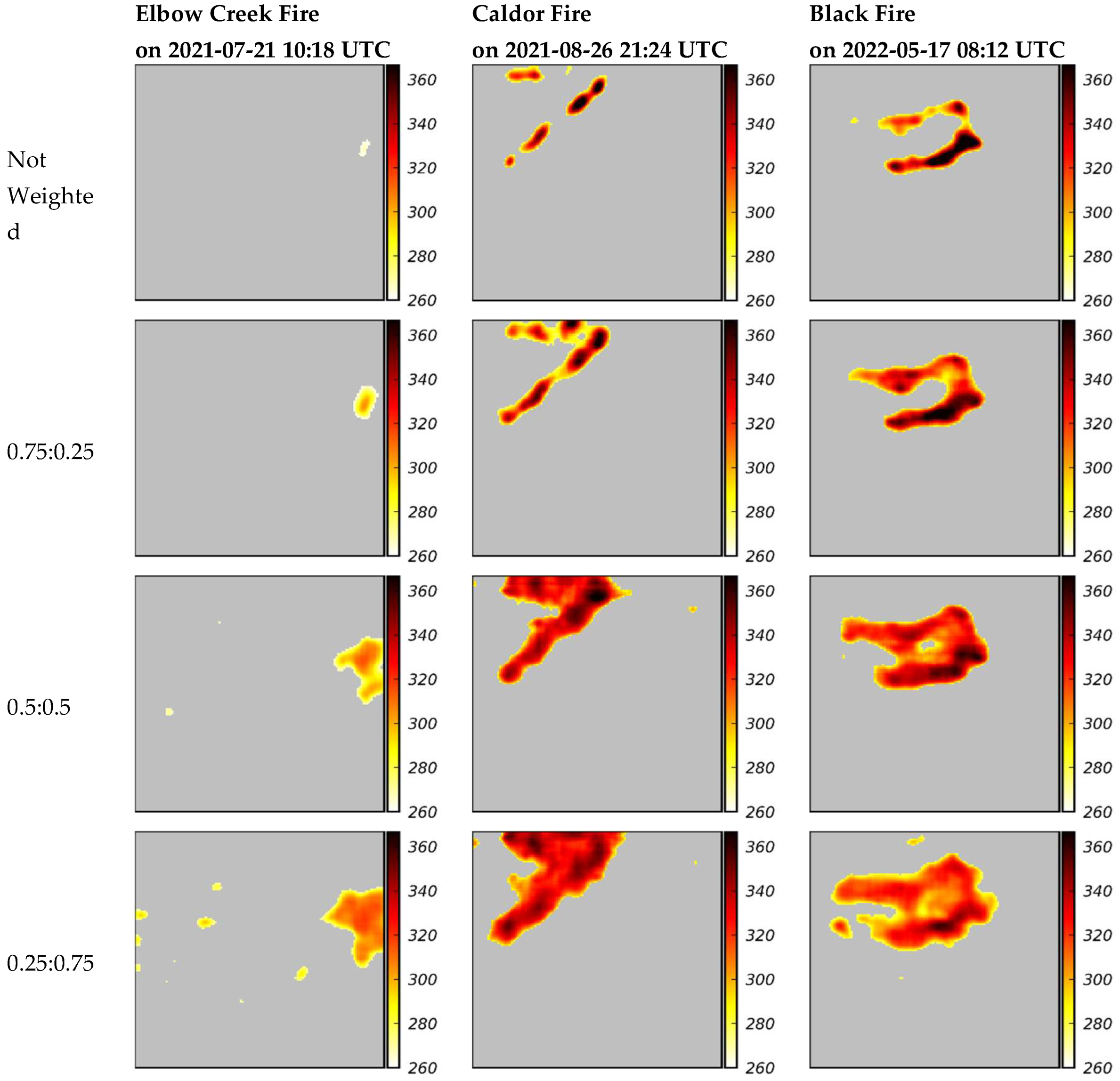

For the regression task, two factors are examined for their influence on BT prediction accuracy. First, the effect of assigning different constant values to background/non-fire pixels in the VIIRS ground truth is examined, with the aim of reducing the underestimation of BT in fire regions caused by mismatched background intensities. Several background values are tested during experimentation, with 0 K, 220 K, 240 K, and 260 K reported in the next appendix. Second, the effect of spatially selective weighting of fire regions in the loss function is examined. For this ablation study, the model is trained with the loss in Eq. (9), varying the fire-region weight among 0.25, 0.5, and 0.75 to emphasize learning from the sparse but critical fire regions. Quantitative and qualitative results from these evaluations are presented in the following section to guide the final model design.
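
The two regression ablation factors can be illustrated with a small Python sketch. The exact form of Eq. (9) is not reproduced here, so the weighting below is an assumption: a fire-region mean squared error weighted by `w_fire` plus a background mean squared error weighted by `1 - w_fire`. The function names `fill_background` and `weighted_mse` and all numeric values are hypothetical.

```python
def fill_background(bt_map, fire_mask, background_k):
    """Replace non-fire pixels of a ground-truth BT map with a constant value in Kelvin."""
    return [
        [bt if is_fire else background_k for bt, is_fire in zip(bt_row, mask_row)]
        for bt_row, mask_row in zip(bt_map, fire_mask)
    ]


def weighted_mse(pred, target, fire_mask, w_fire=0.5):
    """Assumed weighting: w_fire * MSE(fire pixels) + (1 - w_fire) * MSE(background pixels)."""
    fire_err, bg_err = [], []
    for p_row, t_row, m_row in zip(pred, target, fire_mask):
        for p, t, is_fire in zip(p_row, t_row, m_row):
            (fire_err if is_fire else bg_err).append((p - t) ** 2)
    mse_fire = sum(fire_err) / len(fire_err) if fire_err else 0.0
    mse_bg = sum(bg_err) / len(bg_err) if bg_err else 0.0
    return w_fire * mse_fire + (1.0 - w_fire) * mse_bg


# Toy 2 x 2 example with two fire pixels (illustrative values only)
fire_mask = [[1, 0], [0, 1]]
target = fill_background([[330.0, 295.0], [290.0, 350.0]], fire_mask, background_k=240.0)
pred = [[320.0, 240.0], [240.0, 350.0]]
loss_high = weighted_mse(pred, target, fire_mask, w_fire=0.75)
loss_low = weighted_mse(pred, target, fire_mask, w_fire=0.25)
```

In this toy case the only error is a 10 K underestimate on one fire pixel, so raising the fire-region weight from 0.25 to 0.75 triples the loss.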

Ablation Study Results

Appendix D provides the results of the ablation studies. It reports performance values, shows visual comparisons, and explains how each tested option (loss functions, background settings, and spatial weighting) affects model performance. These results are used to justify the final configuration of the model.

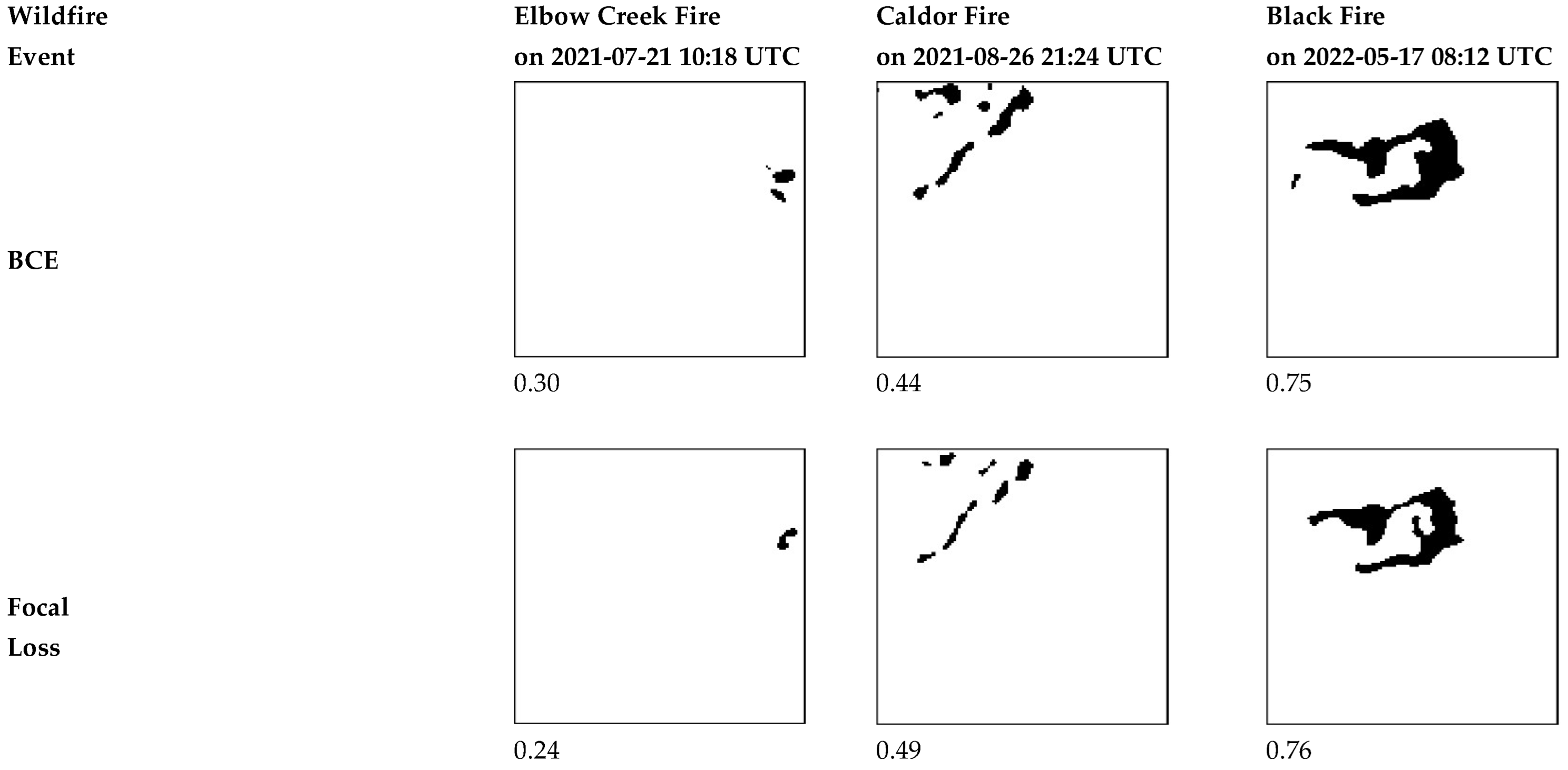

Results for the loss functions evaluated for fire region segmentation are presented in Table D1, with IOU used as the performance metric. For each loss function, the relevant hyperparameters (α, β, and γ) are tuned separately, since their roles differ depending on the formulation (e.g., class weighting in Focal Loss versus imbalance control in Tversky-based losses).

Table D1.

Evaluation of loss functions for fire region segmentation.

| Loss Function | Alpha | Beta | Gamma | IOU |
| --- | --- | --- | --- | --- |
| BCE | N/A | N/A | N/A | 0.40 |
| Focal Loss | 0.25 | N/A | 1 | 0.40 |
| Jaccard Loss | N/A | N/A | N/A | 0.37 |
| Tversky Loss | N/A | 0.75 | N/A | 0.38 |
| Focal Tversky Loss | N/A | 0.75 | 2 | 0.38 |
| Combo Loss | 0.25 | 0.25 | N/A | 0.38 |
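
For reference, the IOU metric used in the table can be computed from thresholded predictions as in the sketch below. The function name `iou_score`, the 0.5 threshold, and the convention of returning 1.0 when both masks are empty are assumptions for illustration; the values actually used in the experiments are not stated here.

```python
def iou_score(y_true, y_prob, threshold=0.5):
    """Intersection over union between a binary ground truth and thresholded probabilities."""
    pred = [1 if p >= threshold else 0 for p in y_prob]
    intersection = sum(1 for y, q in zip(y_true, pred) if y == 1 and q == 1)
    union = sum(1 for y, q in zip(y_true, pred) if y == 1 or q == 1)
    # Assumed convention: two empty masks count as a perfect match
    return intersection / union if union else 1.0


# One hit, one miss, and one false alarm out of four pixels
score = iou_score([1, 1, 0, 0], [0.9, 0.2, 0.7, 0.1])
```

Here one fire pixel is matched out of three pixels flagged by either mask, giving an IOU of 1/3.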