Submitted:

03 March 2026

Posted:

04 March 2026


Abstract

Keywords:

1. Toward AI-Augmented Technical Interviewing: A Conceptual Framework for Assessing Developer Competencies in AI-Mediated Software Development

1.1. I. Introduction

1.1.1. A. Motivation

1.1.2. B. Problem Statement

1.1.3. C. Approach Overview

1.1.4. D. Novelty Statement

1.1.5. E. Contributions


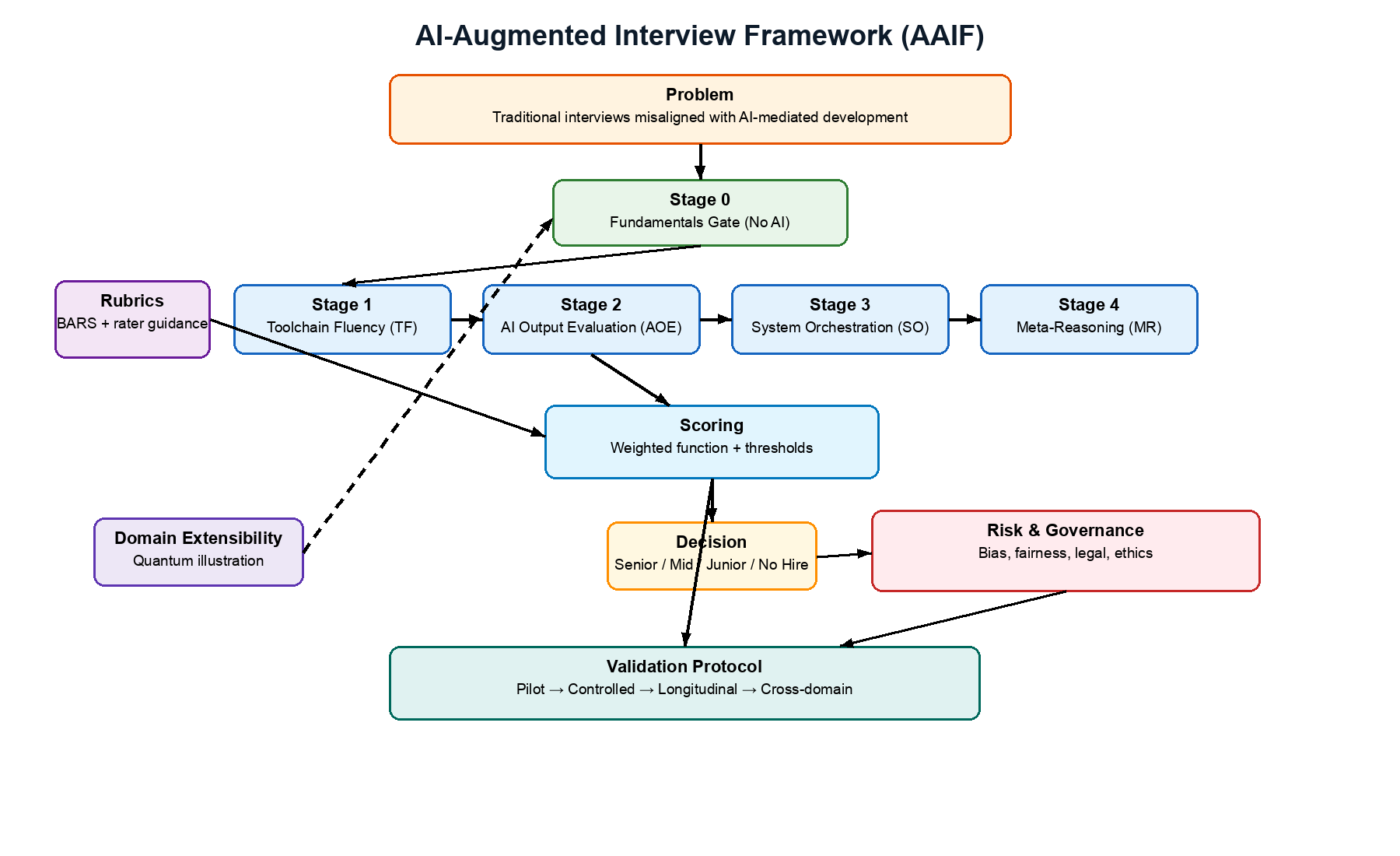

- C1: AI-Augmented Interview Framework (AAIF): A structured five-stage interview methodology (Stage 0 gate plus four AI-augmented stages) for AI-augmented development roles, with formal competency definitions, a weighted assessment function, and configurable decision criteria. We position AAIF against existing industry platforms through a structured comparison (Table II). (Section III)


- C2: Evaluation Rubrics with Behavioral Anchors: Detailed rubrics with behaviorally anchored rating scales (BARS) for each competency level (1–5), providing specific observable behaviors that distinguish adjacent performance levels, including common rater errors to avoid. We propose hypothesized linkages between AAIF stages and organizational KPIs, labeled as conjectures pending empirical calibration. (Section IV)


- C3: Illustration of Potential Domain Extensibility: Application of AAIF to quantum computing, illustrating that the core assessment principles may transfer to highly specialized domains when adapted to domain-specific constraints. We frame this as an illustrative demonstration requiring independent empirical validation. (Section VI)


- C4: Integrated Risk Framework and Empirical Validation Protocol: A practitioner-oriented synthesis of bias, fairness, legal compliance, and ethical considerations for AI-augmented hiring, together with a detailed four-phase empirical validation protocol specifying study designs, sample sizes with power analysis justifications, target metrics, and ethical safeguards for future research. (Sections V and VII.D)

1.1.6. F. Paper Organization

1.2. II. Research Methodology

1.2.1. A. Design Science Research Approach

1. Problem Identification and Motivation: The misalignment between traditional technical interviews and AI-augmented development workflows (Section I.A).

2. Objectives of a Solution: A structured interview framework that assesses AI collaboration competencies while maintaining evaluation of fundamental understanding (Section I.B).

3. Design and Development: The AAIF five-stage framework with formal definitions, rubrics, and decision criteria (Sections III–IV).

4. Demonstration: Application of AAIF to quantum computing as a specialized domain, and an expanded illustrative use case with scoring (Sections VI, VIII).

5. Evaluation: Threats-to-validity analysis following Messick’s [52] unified validity framework, and a proposed empirical validation protocol (Sections V.C–V.D, VII.D). We acknowledge that DSR evaluation ideally includes empirical testing of the artifact [14]; the current work provides analytical evaluation (threats to validity) and descriptive evaluation (scenario-based demonstrations) but defers empirical evaluation to future work. The DSR process is therefore incomplete, and the framework’s validity remains unestablished pending empirical testing.

6. Communication: This manuscript.

1.2.2. B. Theoretical Lenses

- Hiring managers: High PU (better signal on AI collaboration skills) but low PEOU (more complex interview logistics, tool provisioning). This analysis informed the phased rollout recommendation (Section VII.A) and quick-start guide, which progressively introduce complexity.

- Candidates: PU depends on perceived fairness; PEOU depends on prior AI tool experience. This informed the pre-interview orientation provision (Section V.A.3) and advance tool notification (Section IV.F).

- HR professionals: Low PU if compliance burden is unclear; moderate PEOU if rubrics are interpretable. This informed the regulatory compliance matrix (Table VII) and the design of BARS rubrics for HR accessibility.

- Interviewers: PU depends on whether framework improves hiring signal; PEOU depends on calibration burden. This informed the interviewer certification and calibration protocol (Section IV.F).

1.2.3. C. Literature Synthesis Method

1.2.4. D. Guiding Research Questions

- GQ1: Are the four competencies defined in the AAIF model (TF, AOE, SO, MR) sufficient and distinct constructs for assessing AI collaboration skills in software development roles?

- GQ2: How can interview frameworks evaluate AI fluency while maintaining rigorous assessment of foundational technical understanding?

- GQ3: How might a general-purpose AI-augmented interview framework be adapted for specialized domains (e.g., quantum computing)?

- GQ4: What bias, fairness, legal, and ethical risks arise from AI-augmented hiring, and how can they be systematically mitigated?

1.3. III. The AI-Augmented Interview Framework (AAIF)

1.3.1. A. Background: The Changing Landscape of Software Development

| Traditional Skills | Emerging Skills |

| Data structures and algorithms | Problem framing and decomposition |

| Manual system design | AI orchestration and prompt engineering |

| Syntax-driven coding | Toolchain integration and evaluation |

| Manual debugging | Model steering and interpretability |

| API memorization | AI output analysis and critique |

| Solo code authoring | Collaborative human-AI workflows |

1.3.2. B. Related Work, Industry Comparison, and Research Gap

| Feature | HackerRank | CodeSignal | Karat | AAIF |

| AI tool access in assessment | Emerging (AI-allowed tracks) | AI-powered assessment generation | Limited | Core design principle |

| Fundamentals gate (no AI) | Yes (traditional track) | Yes (traditional track) | Yes | Yes (Stage 0, explicit gate with defined threshold) |

| Prompt engineering evaluation | No dedicated assessment | Experimental | No | Yes (Stage 1, dedicated rubric) |

| AI output critical evaluation | No | Partial (code review tasks) | No | Yes (Stage 2, dedicated rubric) |

| System-level AI orchestration | No | No | Partial (system design rounds) | Yes (Stage 3, dedicated rubric) |

| Meta-reasoning assessment | No | No | No | Yes (Stage 4, dedicated rubric) |

| Behaviorally anchored rubrics | Proprietary scoring | Proprietary scoring | Structured rubrics | Open BARS with published anchors |

| Theoretical grounding | Not published | Not published | Not published | STS, TAM, Activity Theory |

| Published risk framework | Annual reports | Limited | Not published | Integrated (bias, legal, ethical) |

| Regulatory compliance matrix | Not published | Not published | Not published | Published (Table VII) |

| Empirical validation protocol | Internal (not published) | Internal (not published) | Internal (not published) | Published four-phase protocol |

| Domain extensibility method | Platform-dependent | Platform-dependent | Interview-dependent | Explicit adaptation template |

1.3.3. C. Competency Model

- c_1 = Toolchain Fluency (TF): Proficiency with AI development tools and prompt engineering

- c_2 = AI Output Evaluation (AOE): Critical assessment of AI-generated outputs

- c_3 = System Orchestration (SO): Integration of AI tools into larger systems and workflows

- c_4 = Meta-Reasoning (MR): Reflection on AI limitations, ethics, and failure modes

- Collaborative AI usage (pair programming with AI suggestions, team-based AI workflows) spans TF, AOE, and SO simultaneously.

- AI-assisted debugging requires AOE (evaluating AI diagnostic suggestions) and TF (formulating debugging prompts) in combination.

- Knowledge management with AI (using AI for documentation, onboarding, knowledge transfer) is partially captured by SO but may warrant independent assessment in knowledge-intensive roles.

- AI tool configuration and customization (setting up code completion rules, training custom models) is partially captured by TF but may emerge as a distinct competency as AI tools mature.

1.3.4. D. Assessment Function and Psychometric Analysis

A(k) = w_TF ∗ S_TF(k) + w_AOE ∗ S_AOE(k) + w_SO ∗ S_SO(k) + w_MR ∗ S_MR(k)

| Competency | Weight | Rationale |

| Toolchain Fluency (TF) | w_TF = 0.25 | Foundation for AI-augmented work |

| AI Output Evaluation (AOE) | w_AOE = 0.25 | Critical for code quality and safety |

| System Orchestration (SO) | w_SO = 0.30 | Highest complexity; closest to senior-level work |

| Meta-Reasoning (MR) | w_MR = 0.20 | Important for long-term success and trust |
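The assessment function and weights above can be sketched in a few lines. This is a minimal illustration, not a reference implementation: the names `WEIGHTS` and `composite_score` are hypothetical, and exact fractions are used only so that later threshold comparisons are not affected by floating-point rounding.

```python
from fractions import Fraction

# Weights from the table above, stored as exact fractions (illustrative choice).
WEIGHTS = {
    "TF": Fraction(25, 100),
    "AOE": Fraction(25, 100),
    "SO": Fraction(30, 100),
    "MR": Fraction(20, 100),
}


def composite_score(scores):
    """Return A(k) for a dict of per-competency scores on the 1-5 scale."""
    if set(scores) != set(WEIGHTS):
        raise ValueError("scores must cover exactly TF, AOE, SO, MR")
    return sum(WEIGHTS[c] * Fraction(scores[c]) for c in WEIGHTS)


# Hypothetical candidate, for illustration only.
example = {"TF": 4, "AOE": 3, "SO": 4, "MR": 3}
print(float(composite_score(example)))  # 3.55
```

Because the weights sum to 1, A(k) stays on the same 1–5 scale as the individual competency scores.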

1. Compensatory model: The weighted average is compensatory: a high score in one competency can offset a low score in another, subject to minimum threshold constraints (Specification 2). We chose a compensatory model because AI-augmented development roles typically allow individuals to compensate for moderate weakness in one area through strength in others. However, the minimum-score floors in the decision criteria provide a partial non-compensatory constraint. Example of a potential limitation: A candidate scoring TF=5, AOE=5, SO=5, MR=1 would receive A(k) = 0.25(5) + 0.25(5) + 0.30(5) + 0.20(1) = 4.2, which exceeds the composite cutoff for “Hire (Mid-level)” (3.5) despite the candidate showing no awareness of AI limitations. Under the decision criteria as specified, the floors block this outcome (min(S_i) >= 3 fails, and any S_i < 2 triggers No Hire), but an organization that relaxes or removes the floors would hire this candidate. Organizations should evaluate whether such outcomes are acceptable and adjust minimum thresholds accordingly.

2. Equal-interval assumption: The model treats the difference between scores 1 and 2 as equivalent to the difference between scores 4 and 5. This equal-interval assumption is standard for Likert-type scales but is rarely validated and may be violated in practice. If the behavioral distance between “Insufficient” and “Developing” is substantially larger than between “Strong” and “Exceptional,” the linear combination may be distorted. Empirical calibration in Phase 2 should include analysis of score distributions to test this assumption.

3. Independence assumption: The model assumes competencies contribute independently to the overall score. In practice, competency interactions are likely significant: strong SO likely requires adequate TF, and effective AOE may be a prerequisite for meaningful MR. A multiplicative or interaction-based model (e.g., A(k) = product of weighted scores, or inclusion of interaction terms) could capture these dependencies. We defer to empirical evidence from the validation protocol to determine whether interaction effects are large enough to warrant a more complex model.

4. Ordinal vs. interval scaling: The 1–5 BARS scores are technically ordinal data. Treating ordinal scores as interval data in a weighted average is a common but methodologically questionable practice. Item Response Theory (IRT) models, which account for item difficulty and rater severity, would be more psychometrically rigorous. We recommend IRT analysis as part of Phase 2 validation to determine whether the simpler weighted average provides an adequate approximation.

5. Sensitivity to weight changes: Small changes in weights can shift candidates across decision thresholds. For example, changing w_SO from 0.30 to 0.25 (and redistributing 0.05 to MR) could change a borderline candidate’s outcome. Organizations should conduct sensitivity analyses before deploying specific weight configurations.
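A sensitivity check of the kind recommended in the last item can be sketched as follows. It compares the baseline weights with the alternative named in the text (0.05 moved from SO to MR) against the Junior composite cutoff of 3.0. The function names and the candidate are hypothetical; the half-point score assumes a panel mean of two raters.

```python
from fractions import Fraction

BASELINE = {"TF": Fraction(25, 100), "AOE": Fraction(25, 100),
            "SO": Fraction(30, 100), "MR": Fraction(20, 100)}
# Alternative from the text: move 0.05 of weight from SO to MR.
SHIFTED = {"TF": Fraction(25, 100), "AOE": Fraction(25, 100),
           "SO": Fraction(25, 100), "MR": Fraction(25, 100)}


def composite(scores, weights):
    """Weighted composite A(k), computed exactly."""
    return sum(weights[c] * Fraction(scores[c]) for c in weights)


def crosses_threshold(scores, threshold):
    """True if the two weightings land on opposite sides of the threshold."""
    meets_baseline = composite(scores, BASELINE) >= threshold
    meets_shifted = composite(scores, SHIFTED) >= threshold
    return meets_baseline != meets_shifted


# Hypothetical panel-mean scores, for illustration only.
candidate = {"TF": 3, "AOE": 2, "SO": 4.5, "MR": 2}
print(float(composite(candidate, BASELINE)))      # 3.0
print(float(composite(candidate, SHIFTED)))       # 2.875
print(crosses_threshold(candidate, Fraction(3)))  # True
```

Running the same check over a grid of plausible score profiles would show how many candidates a proposed weight change could reclassify.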

- Preferred method: Discussion to consensus, where interviewers compare observations and agree on a single score. This produces the most reliable assessments but requires scheduling coordination.

- Alternative method: Mean of independent scores, used when consensus discussion is impractical. Report the standard deviation; if SD > 1.0, convene a discussion to resolve the disagreement.

- Minimum panel size: Two interviewers per stage minimum; three recommended for senior-level assessments. Single-interviewer scores should be flagged as lower-reliability.

- Disagreement resolution: If interviewers disagree by 2+ points after discussion, a third interviewer independently evaluates the same stage using recorded session materials. The modal score is used.
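The alternative aggregation method (mean of independent scores with a reported SD) could look like the sketch below. The function name and return structure are hypothetical, and the text does not say whether the sample or population SD is intended; the sample SD is assumed here.

```python
from statistics import mean, stdev

SD_DISCUSSION_THRESHOLD = 1.0  # from the text: SD > 1.0 calls for a discussion


def aggregate_stage_scores(scores):
    """Combine independent interviewer scores (1-5) for a single stage."""
    if not scores:
        raise ValueError("at least one interviewer score is required")
    if len(scores) == 1:
        # A lone score has no spread to report; the text flags it as lower-reliability.
        return {"score": float(scores[0]), "sd": None,
                "needs_discussion": False, "lower_reliability": True}
    sd = stdev(scores)  # assumption: sample SD (n - 1 denominator)
    return {"score": mean(scores), "sd": sd,
            "needs_discussion": sd > SD_DISCUSSION_THRESHOLD,
            "lower_reliability": False}


print(aggregate_stage_scores([3, 5]))     # two raters, two points apart
print(aggregate_stage_scores([4, 4, 5]))  # three raters in close agreement
```

With two raters, the sample SD exceeds 1.0 exactly when the scores differ by two or more points, so under this assumption the SD rule and the 2+ point disagreement rule coincide for the minimum panel size.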

- Hire (Senior): A(k) >= 4.5 AND min(S_i) >= 4 AND Stage 0 passed

- Hire (Mid-level): A(k) >= 3.5 AND min(S_i) >= 3 AND Stage 0 passed

- Hire (Junior): A(k) >= 3.0 AND S_TF >= 3 AND learning velocity score >= 3 AND Stage 0 passed

- No Hire: A(k) < 3.0 OR any S_i < 2 OR Stage 0 not passed
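The decision criteria above can be expressed as a single function, which also makes the interaction between the composite cutoffs and the floors easy to test. This is a sketch under stated assumptions: the function name is hypothetical, the learning velocity score is passed in as a separate input because its measurement is not defined here, and the "Unclassified" label for profiles that match none of the four rules is our addition, not part of the criteria.

```python
from fractions import Fraction

WEIGHTS = {"TF": Fraction(25, 100), "AOE": Fraction(25, 100),
           "SO": Fraction(30, 100), "MR": Fraction(20, 100)}


def decide(scores, stage0_passed, learning_velocity=None):
    """Apply the listed decision criteria to one candidate."""
    if not stage0_passed:
        return "No Hire"
    a_k = sum(WEIGHTS[c] * Fraction(scores[c]) for c in WEIGHTS)
    lowest = min(scores.values())
    if a_k < 3 or lowest < 2:
        return "No Hire"
    if a_k >= Fraction(9, 2) and lowest >= 4:
        return "Hire (Senior)"
    if a_k >= Fraction(7, 2) and lowest >= 3:
        return "Hire (Mid-level)"
    # A(k) >= 3.0 is already guaranteed at this point.
    if scores["TF"] >= 3 and learning_velocity is not None and learning_velocity >= 3:
        return "Hire (Junior)"
    # Assumption: the criteria do not name an outcome for this case.
    return "Unclassified"


# The TF=5, AOE=5, SO=5, MR=1 profile scores 4.2 but is stopped by the floor.
print(decide({"TF": 5, "AOE": 5, "SO": 5, "MR": 1}, stage0_passed=True))  # No Hire
print(decide({"TF": 4, "AOE": 3, "SO": 4, "MR": 3}, stage0_passed=True))  # Hire (Mid-level)
```

A profile such as TF=2, AOE=4, SO=4, MR=4 (composite 3.5, lowest score 2) matches none of the four rules, so an organization adopting the criteria would need to decide how to treat it.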

1.3.5. E. Interview Stages

- Stage 0: Fundamentals Assessment (No AI Tools)

| Score | Label | Criteria |

| 5 | Exceptional | Demonstrates deep understanding across all assessed areas; explains trade-offs fluently |

| 4 | Strong | Solid understanding with minor gaps; can reason through unfamiliar problems |

| 3 | Adequate | Demonstrates working knowledge of core concepts; may struggle with advanced topics |

| 2 | Developing | Significant gaps in foundational knowledge; can explain basic concepts only |

| 1 | Insufficient | Cannot demonstrate basic understanding of core concepts |

- Stage 1: Toolchain Fluency and Prompt Engineering (TF)

- Stage 2: AI Output Evaluation (AOE)

- Stage 3: System-Oriented Problem Solving (SO)

- Stage 4: Meta-Reasoning and Reflection (MR)

1.3.6. F. Comparison: AAIF vs. Traditional Interviews

| Aspect | Traditional Interviews | AAIF |

| AI Tool Access | Prohibited | Provided and assessed |

| Fundamentals Check | Implicit in coding tasks | Explicit Stage 0 with defined pass/fail threshold |

| Primary Focus | Algorithm implementation | AI orchestration and evaluation |

| Evaluation Target | Code correctness | Judgment and critical thinking |

| Failure Mode Testing | Edge cases in code | AI hallucinations and limitations |

| Theoretical Grounding | Implicit/none | Explicit (STS, TAM, Activity Theory) |

| KPI Linkage | Weak or absent | Hypothesized (pending validation) |

| Risk Framework | Ad hoc | Integrated (bias, legal, ethical) |

| Score Aggregation | Varies | Defined protocol (consensus or mean with SD) |

1.4. IV. Behaviorally Anchored Evaluation Rubrics

1.4.1. A. Rubric Design Principles

1.4.2. B. Toolchain Fluency (TF) Behavioral Anchors

| Score | Label | Observable Behaviors |

| 5 | Exceptional | Demonstrates sophisticated prompt strategies (decomposition, few-shot examples, constraint specification) that show deep understanding of AI tool capabilities. Strategically combines multiple AI tools (e.g., ChatGPT for architecture, Copilot for implementation). Adapts tool selection based on task characteristics. Proactively anticipates edge cases AI might miss. May achieve effective results on first attempt or through deliberate iterative refinement; both patterns indicate expertise. |

| 4 | Strong | Writes effective prompts within 2–3 iterations. Validates AI output against requirements before accepting. Uses AI tools appropriately for task context. Shows comfort with multiple tools and can explain tool selection rationale. |

| 3 | Adequate | Writes functional prompts after multiple iterations. Checks AI output for obvious errors. Can use at least one AI tool competently. Relies on trial-and-error but eventually achieves desired results. |

| 2 | Developing | Struggles to craft effective prompts; prompts are vague, overly broad, or miss critical constraints. Accepts AI output without validation. Limited experience with AI development tools. Requires guidance to use tools productively. |

| 1 | Insufficient | Cannot use AI tools effectively even with guidance. Shows no understanding of prompt quality factors. Unable to iterate on prompts based on output quality. |

| – | Common Rater Errors | Do not confuse rapid prompting with effective prompting; speed is not a proxy for skill. Do not penalize candidates who ask clarifying questions before prompting; this may indicate thoughtful problem decomposition. Do not reward memorized prompt templates over genuine understanding of when and why to use specific techniques. |

1.4.3. C. AI Output Evaluation (AOE) Behavioral Anchors

| Score | Label | Observable Behaviors |

| 5 | Exceptional | Identifies subtle logical, security, and design flaws in AI-generated code. Explains root causes with reference to underlying principles. Proposes improvements that address systemic issues, not just surface symptoms. Articulates confidence levels in AI outputs with reasoning. |

| 4 | Strong | Identifies most errors in AI-generated code, including non-obvious issues. Explains why errors occur and proposes corrections. Demonstrates balanced skepticism, neither blindly trusting nor blindly rejecting AI outputs. |

| 3 | Adequate | Identifies obvious errors (syntax, basic logic). May miss subtle design or security issues. Can explain some error causes. Asks clarifying questions about AI-generated code. |

| 2 | Developing | Identifies only syntax-level errors. Misses logical and design flaws. Tends to accept AI output if it compiles/runs without deeper inspection. Cannot articulate why a particular output might be problematic beyond surface-level observations. |

| 1 | Insufficient | Cannot distinguish correct from incorrect AI output. Does not attempt to review or question AI-generated code. No framework for evaluating AI output quality. Accepts AI outputs without any critical examination. |

| – | Common Rater Errors | Do not confuse excessive skepticism (rejecting everything) with genuine critical evaluation. Do not penalize candidates who initially accept an output and then revise their assessment upon deeper analysis; this shows intellectual honesty. Do not reward candidates who identify many minor issues (style, naming) while missing critical flaws (security, correctness). |

1.4.4. D. System Orchestration (SO) Behavioral Anchors

| Score | Label | Observable Behaviors |

| 5 | Exceptional | Designs end-to-end systems that integrate AI components with clear interfaces, error handling, and fallback strategies. Identifies which subproblems benefit from AI vs. manual implementation. Considers scalability, maintainability, and production constraints. |

| 4 | Strong | Designs functional multi-component systems with AI integration. Addresses error handling and defines clear component boundaries. Demonstrates pragmatic trade-off reasoning between AI-generated and manual components. |

| 3 | Adequate | Produces a basic system design incorporating AI tools. May lack error handling or fallback strategies. Can articulate component interactions but misses edge cases. |

| 2 | Developing | Produces incomplete or unrealistic system designs. Over-relies on AI for all components without considering integration challenges. Limited awareness of production constraints. |

| 1 | Insufficient | Cannot produce a coherent system design. Does not understand how to integrate AI components into larger workflows. No awareness of system-level concerns (reliability, scalability). |

| – | Common Rater Errors | Do not penalize unconventional but valid architectural approaches. Do not over-weight whiteboard diagram quality; focus on reasoning about component interactions and trade-offs. Do not confuse familiarity with specific technologies (e.g., Kubernetes) with system orchestration competency. |

1.4.5. E. Meta-Reasoning (MR) Behavioral Anchors

| Score | Label | Observable Behaviors |

| 5 | Exceptional | Demonstrates nuanced understanding of AI limitations, bias sources, and failure modes. Provides concrete examples from experience. Articulates governance strategies and stakeholder communication approaches. Reasons about long-term implications of AI tool adoption. |

| 4 | Strong | Identifies key AI limitations and ethical concerns. Proposes reasonable mitigation strategies. Can discuss trade-offs between AI speed and reliability. Aware of regulatory landscape (GDPR, EEOC). |

| 3 | Adequate | Aware of common AI limitations (hallucinations, bias). Can discuss basic ethical concerns. Limited depth in mitigation strategies. May recite talking points rather than demonstrate genuine reasoning. |

| 2 | Developing | Vague awareness of AI limitations. Cannot articulate specific risks or mitigations. Over-trusts AI or dismisses AI concerns without nuance. |

| 1 | Insufficient | No awareness of AI limitations or ethical concerns. Treats AI as infallible. Cannot discuss failure modes or responsible use. |

| – | Common Rater Errors | Do not confuse verbal fluency or articulateness with genuine ethical reasoning; focus on the substance of the argument, not the polish of delivery. Be aware that communication norms vary across cultures; measured or indirect responses may indicate thoughtful reasoning in some cultural contexts. Do not penalize candidates who acknowledge uncertainty; this may indicate intellectual honesty. |

1.4.6. F. Scoring Process and Calibration

1. Interviewer certification: Interviewers must demonstrate proficiency with AI development tools (scoring 4+ on TF and AOE) before evaluating candidates. Bootstrapping strategy: For the first cohort, organizations should identify 3–5 senior engineers with demonstrated AI tool proficiency and have them cross-evaluate each other using the BARS rubrics. Those achieving consistent scores (within 1 point of each other) become the founding calibration panel. Subsequent interviewers are certified by this panel.

2. Calibration sessions: Quarterly sessions where multiple interviewers independently evaluate the same candidate (recorded or live) and compare scores. Interviewers who consistently deviate from consensus by 1 or more points should receive retraining. After two consecutive quarters of deviation, interviewers should be removed from the panel until recalibration is achieved. Recommended minimum: Three calibration exercises per quarter, using anonymized recorded sessions to avoid demographic bias in calibration. This is reportedly analogous to calibration practices at major technology companies.

3. Progressive rollout: Begin with Stage 0 and Stage 1 for 3–6 months, measure outcomes, then incrementally add stages. Do not attempt to deploy all AAIF stages at once.

4. Tool standardization: Standardize on a defined set of AI tools per role and give candidates 5–7 days of advance notice to familiarize themselves, so that the assessment measures underlying competency rather than tool familiarity. Specify tool versions and access dates for reproducibility.
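The calibration rule in item 2 can be made operational in several ways. The sketch below assumes one reading: "consistently deviating" means that an interviewer's mean absolute deviation from the consensus score, across a quarter's calibration exercises, is at least 1 point. That interpretation, the function names, and the action labels are all assumptions for illustration.

```python
from statistics import mean


def mean_abs_deviation(rater_scores, consensus_scores):
    """Average absolute gap between one rater and consensus over a quarter's exercises."""
    if len(rater_scores) != len(consensus_scores) or not rater_scores:
        raise ValueError("need one rater score per consensus score")
    return mean(abs(r - c) for r, c in zip(rater_scores, consensus_scores))


def calibration_action(quarterly_deviations, limit=1.0):
    """Map a rater's per-quarter deviations (oldest first) to a follow-up action."""
    flagged = [d >= limit for d in quarterly_deviations]
    if len(flagged) >= 2 and flagged[-1] and flagged[-2]:
        return "remove from panel until recalibrated"
    if flagged and flagged[-1]:
        return "retrain"
    return "no action"


# Hypothetical quarter: three exercises, rater vs. consensus.
this_quarter = mean_abs_deviation([4, 2, 5], [3, 3, 4])
print(this_quarter)                             # 1
print(calibration_action([0.3, this_quarter]))  # retrain
```

Other readings, such as flagging signed bias (systematic leniency or severity) rather than absolute deviation, would need a different statistic.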

1.5. V. Risk Framework and Threats to Validity

1.5.1. A. Integrated Risk Analysis

- 1) Bias and Discrimination

- Cultural mismatch in prompt interpretation (prompts favoring Western engineering education conventions)

- AI model output preferences for certain problem-solving approaches or communication styles

- Underrepresentation of minority perspectives in AI-generated assessment content

- Language bias: AI tools perform best in English, disadvantaging non-native English speakers and developers in non-Anglophone countries. Research on LLM performance across languages [56] provides empirical evidence of significant performance disparities, with non-English languages showing substantially lower accuracy in code generation and explanation tasks

- 2) The Superficial Correctness Problem

- 3) Fairness Across AI Literacy Backgrounds

- 4) Legal and Regulatory Compliance

| Regulation | Jurisdiction | Key Requirements | Penalties |

| GDPR [31] | European Union | Right to explanation of automated decisions; consent required; data minimization | Up to 20M EUR or 4% of global annual turnover, whichever is higher |

| CCPA/CPRA [32] | California, USA | Opt-out rights; disclosure of profiling; data access rights | $2,500–$7,500 per violation |

| EEOC Guidelines [28] | United States | Disparate impact analysis; reasonable accommodation; documentation | Back pay, reinstatement, damages |

| NYC Local Law 144 [33] | New York City | Bias audits required for automated employment decision tools; public disclosure | $500–$1,500 per violation |

| EU AI Act [24] | European Union | Employment AI classified as “high-risk”; conformity assessment required | Up to 35M EUR or 7% revenue |

| DPDP Act [57] | India | Consent-based processing; data localization requirements; fiduciary obligations | Up to 250 crore INR |

| China AI Regulations [58] | China | Algorithm registration; fairness requirements; labeling of AI-generated content | Varies by regulation |

- 5) Surveillance and Power Asymmetry Concerns

1.5.2. B. Ethical Considerations

- Transparency (ACM 1.3, IEEE P7001): Candidates should know they are being assessed on AI tool usage and understand evaluation criteria

- Autonomy (ACM 1.2): Candidates should not be penalized for choosing not to use AI tools if the role does not require them

- Dignity (ACM 1.1): Assessment should respect candidates’ professional identities regardless of AI tool adoption level

- Proportionality (IEEE Principle 5): AI fluency requirements should be proportional to actual job requirements

- Informed consent (ACM 1.6): Candidates should understand what data is collected, how it is used, and their rights regarding that data

1.5.3. C. Threats to Framework Validity

- 1) Construct Validity

- TF stage may conflate tool-specific knowledge with general AI orchestration ability

- AOE stage may reward excessive skepticism over balanced critical thinking

- SO stage may favor candidates with prior exposure to specific problem domains

- MR stage may confuse verbal fluency with genuine ethical reasoning

- 2) Criterion Validity

- The assessment function (Specification 1) may not capture the competencies that actually predict success in AI-augmented development roles

- The weighted combination of four competency scores may not be the correct functional form for predicting performance

- Job performance itself is multidimensional and difficult to measure, introducing criterion contamination and deficiency

- 3) Content Validity

- Framework may omit emerging competencies as AI tools evolve

- Four-stage model may not capture all dimensions (e.g., collaborative AI usage, production debugging with AI tools)

- Stage weights may not reflect actual job requirements

- 4) Convergent and Discriminant Validity

- 5) Consequential Validity

- AAIF could systematically disadvantage candidates from backgrounds with less AI tool access

- The framework could incentivize shallow AI tool proficiency over deep domain expertise

- Organizations might over-rely on AAIF scores, reducing holistic evaluation

- 6) Face Validity and Ecological Validity

- Time constraints (60–90 minutes per stage) may not reflect realistic project timelines

- Interview pressure may distort performance relative to actual work behavior

- AI tools provided may differ from those used in target organization

1.5.4. D. Validity Threat Summary

| Validity Type | Primary Threat | Key Proposed Mitigation | Target Metric |

| Construct | Surface vs. underlying competency | Multi-method assessment + BARS | Inter-rater reliability kappa > 0.70 |

| Criterion | Scores may not predict job performance | Regression on multiple performance indicators | Predictive validity r > 0.35 |

| Content | Competency coverage gaps | Expert validation panels (Delphi) | Coverage gap analysis |

| Convergent/Discriminant | Competencies may not be distinct | Confirmatory factor analysis | Inter-stage r < 0.85 |

| Consequential | Adverse demographic impact | Continuous adverse impact monitoring | Disparate impact ratio > 0.80 |

| Face/Ecological | Perceived irrelevance; artificial conditions | Candidate experience surveys; realistic tasks | Candidate satisfaction > 3.5/5 |
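The disparate impact target in the table can be monitored with a simple ratio of selection rates. The sketch below divides each group's selection rate by the highest group's rate and flags groups that fall below 0.80. The function names, group labels, and counts are hypothetical, and how a ratio of exactly 0.80 is treated is an assumption.

```python
ADVERSE_IMPACT_CUTOFF = 0.80  # target from the summary table


def impact_ratios(outcomes):
    """Map each group to its selection rate divided by the highest group's rate.

    outcomes: {group_label: (number_selected, number_assessed)}
    """
    rates = {}
    for group, (selected, assessed) in outcomes.items():
        if assessed <= 0:
            raise ValueError(f"group {group!r} has no assessed candidates")
        rates[group] = selected / assessed
    highest = max(rates.values())
    if highest == 0:
        raise ValueError("no group has any selected candidates")
    return {group: rate / highest for group, rate in rates.items()}


def flagged_groups(outcomes):
    """Groups whose impact ratio falls below the cutoff."""
    return sorted(g for g, r in impact_ratios(outcomes).items()
                  if r < ADVERSE_IMPACT_CUTOFF)


# Hypothetical counts, for illustration only.
sample = {"group_a": (30, 100), "group_b": (20, 100)}
print(impact_ratios(sample))
print(flagged_groups(sample))  # ['group_b']
```

With small groups the ratio is unstable, so a flag from this check is a prompt for closer statistical review rather than a finding in itself.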

1.6. VI. Domain Extensibility: Quantum Computing Illustration

1.6.1. A. Rationale and Scope

1.6.2. B. Quantum AAIF Adaptation

| AAIF Competency | Quantum Adaptation | Key Domain-Specific Concern |

| Toolchain Fluency (TF) | Quantum Fundamentals + AI Learning (QF) | AI-generated quantum explanations may be subtly incorrect |

| AI Output Evaluation (AOE) | Quantum Algorithm Design (QAD) | Circuits may appear valid but violate quantum mechanical principles |

| System Orchestration (SO) | Hybrid System Orchestration (HSO) | Three-paradigm orchestration (classical + quantum + AI) |

| Meta-Reasoning (MR) | Critical Evaluation and Meta-Reasoning (CEM) | Quantum hype vs. NISQ reality assessment |

1.6.3. C. Extensibility Template

1. Identify domain fundamentals that AI cannot replace

2. Characterize domain-specific AI hallucination risks

3. Define hybrid system orchestration patterns for the domain

4. Establish domain-specific meta-reasoning challenges

5. Calibrate rubrics with domain experts

1.7. VII. Discussion

1.7.1. A. Implications for Practice

- 2-week adoption: Add a take-home challenge with AI tools permitted. Use the TF rubric (Table IV) to evaluate. Train interviewers to ask “How did you verify this AI output?” after every coding question.

- 3-month adoption: Implement Stage 0 (fundamentals) and Stage 1 (toolchain fluency). Pilot Stage 2 (AI output evaluation) with 10–20 candidates. Measure interviewer agreement using the calibration process in Section IV.F.

- 12-month adoption: Full AAIF implementation with calibration sessions. Begin collecting correlation data between AAIF scores and job performance for empirical weight calibration.

1.7.2. B. Framework Boundary Conditions

1. AI development tools are not used in daily work: If the target role does not involve AI-augmented development, assessing AI collaboration competencies is not relevant and may introduce construct-irrelevant variance.

2. Legacy system maintenance roles: Roles focused exclusively on maintaining legacy systems where AI tools are inapplicable or restricted should use traditional assessment methods aligned with actual job requirements.

3. Security-constrained environments: In defense, classified-systems, and regulated healthcare environments where AI tool access is restricted or prohibited on the job, candidates should not be assessed on competencies they cannot use.

4. Early-career/internship hiring: AI fluency expectations may be inappropriate for entry-level candidates. A simplified version focusing on Stage 0 and basic TF may be more appropriate, with the understanding that AI fluency will develop on the job.

5. Very small organizations: Organizations without resources for multi-stage interviews or calibration panels may find the full AAIF impractical. The quick-start guide (Section VII.A) offers abbreviated alternatives.

6. Rapid hiring scenarios: Contract roles or urgent staffing needs where 4–6 hours of assessment is impractical. A single-stage abbreviated assessment focusing on AOE may provide the highest signal-to-effort ratio.

7. Cultural contexts without validation: AAIF has been designed from a Western technology industry perspective. Deployment in non-Western contexts should be preceded by cultural adaptation and local pilot validation.

1.7.3. C. Limitations

- 1.

- No empirical validation: The framework is entirely conceptual. No interviews have been conducted, no inter-rater reliability has been measured, no predictive validity has been established. All proposed KPI linkages, decision thresholds, and stage weights are conjectures. This is the most significant limitation.

- 2.

- Single-author perspective: The framework reflects one author’s industry experience. The BARS rubrics were not developed using standard psychometric methods (critical incident technique, expert retranslation). Expert panel validation is a priority recommendation.

- 3.

- Western-centric context: The framework assumes Western technology industry practices, English-language AI tools, and US/EU regulatory frameworks. All AI tools referenced (Copilot, ChatGPT, Claude, CodeWhisperer) are from US-based companies. Regional AI tools with significant market share in non-Western markets (e.g., Tongyi Lingma, CodeGeeX in China) are not addressed. The MR behavioral anchors (Table VII) may reward communication styles aligned with Western professional norms; in cultures emphasizing indirect communication or deference to seniority, these rubrics may systematically disadvantage qualified candidates.

- 4.

- Rapidly evolving landscape: AI development tools and capabilities are changing rapidly. Framework elements may require frequent updating. The framework includes no explicit sunset mechanism or evolution pathway for when AI tools become so capable that certain stages (e.g., TF) become trivially easy or irrelevant.

- 5.

- Scope boundaries: AAIF focuses on software development roles. Extension to other knowledge work requires separate adaptation.

- 6.

- Interview time burden: The full framework requires 4–6 hours. Abbreviated versions are possible but their validity is unknown.

- 7.

- Tool cost: Providing enterprise AI tool access to candidates creates per-interview costs. At enterprise scale (10,000+ candidates per year), per-candidate tool licensing at $5–20 per session could cost $50,000–200,000 annually. Organizations should include tool costs in adoption planning and consider negotiating enterprise assessment licenses with AI tool providers.
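The tool-cost range quoted above follows from simple multiplication. The sketch below reproduces it under the assumption of one licensed session per candidate; the function name and the `sessions_per_candidate` parameter are illustrative and not part of AAIF.

```python
def annual_tool_cost(candidates_per_year, cost_per_session, sessions_per_candidate=1):
    """Estimated yearly licensing cost in dollars.

    Assumption: every candidate consumes the same number of paid sessions.
    """
    return candidates_per_year * cost_per_session * sessions_per_candidate


# Figures taken from the limitation above: 10,000 candidates, $5-20 per session.
low_estimate = annual_tool_cost(10_000, 5)
high_estimate = annual_tool_cost(10_000, 20)
print(f"Estimated annual cost: ${low_estimate:,} to ${high_estimate:,}")
```

If the four AI-augmented stages were licensed as separate sessions, the estimate would scale linearly with `sessions_per_candidate`, so adoption planning may want to treat that parameter as a key unknown.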

1.7.4. D. Proposed Empirical Validation Protocol

Phase 1

- Participants: 50–100 candidates across 3–5 organizations (technology companies, financial institutions, and at least one non-Western organization).

- Sample size justification: For Cohen’s kappa > 0.70 with a 5-category BARS scale, assuming moderate marginal distributions, Sim and Wright (2005) [62] recommend a minimum of n = 40 per rater pair. We target n = 50–100 to provide adequate precision for reliability estimation and to account for incomplete data.

- Design: Within-subjects; all candidates complete all AAIF stages plus a traditional interview track.

- Primary outcomes: Inter-rater reliability (target: Cohen’s kappa > 0.70); candidate completion rates; interviewer satisfaction; time-to-administer.

- Analysis: Descriptive statistics; reliability analysis; thematic analysis of qualitative feedback from interviewers and candidates.

- Deliverables: Refined instruments, preliminary reliability data, feasibility assessment.
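To make the primary reliability outcome concrete, the following is a minimal sketch of unweighted Cohen's kappa for one rater pair scoring candidates on the 1–5 BARS scale. The ratings are invented for illustration only. Because BARS levels are ordinal, a weighted kappa may turn out to be the more appropriate statistic; that choice is left open here.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Unweighted Cohen's kappa for two raters scoring the same candidates."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("Both raters must score the same non-empty set of candidates")
    n = len(rater_a)
    # Observed agreement: share of candidates given the identical level.
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Chance agreement: product of the raters' marginal proportions per level.
    counts_a, counts_b = Counter(rater_a), Counter(rater_b)
    expected = sum(counts_a[level] * counts_b[level] for level in counts_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both raters used one and the same level throughout
    return (observed - expected) / (1 - expected)


# Hypothetical BARS ratings (levels 1-5) for ten candidates.
ratings_a = [1, 2, 3, 4, 5, 3, 3, 4, 2, 5]
ratings_b = [1, 2, 3, 4, 5, 3, 4, 4, 2, 4]
kappa = cohens_kappa(ratings_a, ratings_b)
print(f"kappa = {kappa:.3f}, meets 0.70 target: {kappa > 0.70}")
```

In this toy example the raters agree on eight of ten candidates, yet the chance-corrected value is noticeably lower than 0.80, which is why the target is stated in terms of kappa rather than raw agreement.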

Phase 2

- Participants: 300–500 candidates across 10–15 organizations, with deliberate inclusion of organizations in at least 3 countries and 2 industry sectors.

- Sample size justification: For regression analysis with a target effect size of r = 0.35 (revised from 0.40 to align with Sackett et al.’s [51] updated validity estimates), alpha = 0.05, and power = 0.80, G*Power analysis indicates a minimum of n = 62. We target n = 300–500 to enable subgroup analyses (by role level, organization size, cultural context) and to provide adequate power for confirmatory factor analysis (recommended minimum n = 200 for four-factor models).

- Design: Randomized comparison of AAIF vs. traditional interview tracks. Stratified by role level and organization size.

- Statistical analysis: Regression of AAIF scores on job performance; adverse impact analysis (disparate impact ratio > 0.80 required); confirmatory factor analysis; discriminant validity between AAIF stages. Bonferroni correction applied for multiple comparisons across the primary analysis family.

- Feasibility risks: Recruiting 10–15 organizations willing to randomize hiring is extremely challenging. Fallback design: quasi-experimental with matched comparison groups (organizations using AAIF vs. traditional methods), acknowledging reduced internal validity.

- Deliverables: Predictive validity evidence, adverse impact data, refined thresholds.
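Two of the quantities in this phase can be sketched in a few lines. The first function approximates the minimum sample size for detecting a correlation using the Fisher z transformation; this is a textbook approximation rather than G*Power's own routine, and it assumes a two-sided test. The second computes a disparate impact ratio from selection counts. Group labels and counts are hypothetical.

```python
import math
from statistics import NormalDist


def required_n_for_correlation(r, alpha=0.05, power=0.80):
    """Approximate n needed to detect correlation r (two-sided), via Fisher z."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_power = NormalDist().inv_cdf(power)
    return math.ceil(((z_alpha + z_power) / math.atanh(r)) ** 2 + 3)


def disparate_impact_ratio(selected, applicants):
    """Lowest group selection rate divided by the highest group selection rate."""
    rates = {group: selected[group] / applicants[group] for group in applicants}
    highest = max(rates.values())
    if highest == 0:
        raise ValueError("No group has any selected candidates")
    return min(rates.values()) / highest


print("n for r = 0.35:", required_n_for_correlation(0.35))
print("n for r = 0.40:", required_n_for_correlation(0.40))

# Hypothetical counts: 30 of 100 selected in group_x, 20 of 100 in group_y.
ratio = disparate_impact_ratio({"group_x": 30, "group_y": 20},
                               {"group_x": 100, "group_y": 100})
# The protocol above requires the ratio to exceed 0.80.
print(f"disparate impact ratio = {ratio:.2f}, passes: {ratio > 0.80}")
```

Under these assumptions the approximation agrees with the n = 62 stated above and shows how lowering the target effect size from 0.40 to 0.35 raises the minimum sample.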

Phase 3

- Participants: Follow-up of Phase 2 hires (n = 150–250).

- Expected attrition: Industry software developer turnover rates of 20–30% annually suggest 30–50% attrition over the 17-month tracking period. If attrition exceeds 40%, the effective sample may be insufficient for the planned hierarchical regression and survival analyses. Multiple imputation will be used for missing data under the missing-at-random assumption. If attrition is non-random (e.g., low AAIF scorers leave at higher rates), sensitivity analyses using pattern-mixture models will be conducted.

- Outcomes: Validate hypothesized KPI linkages.

- Analysis: Hierarchical regression; survival analysis for retention; mediation analysis.

- Deliverables: Empirically calibrated weights, validated KPI linkages.
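The attrition concern can be explored with a short projection. The sketch below assumes a constant monthly hazard, so that annual turnover compounds over the 17-month tracking period; real attrition among new hires may be front-loaded, so this is a simplifying assumption rather than part of the protocol.

```python
def cumulative_attrition(annual_turnover, months):
    """Share of hires expected to leave within `months` under a constant hazard."""
    return 1 - (1 - annual_turnover) ** (months / 12)


def retained_sample(n_hires, attrition):
    """Expected number of hires still employed after the given attrition share."""
    return n_hires * (1 - attrition)


for annual in (0.20, 0.30):
    share = cumulative_attrition(annual, 17)
    print(f"annual turnover {annual:.0%}: about {share:.1%} leave within 17 months")

# Effective sample at the 40% attrition threshold named above.
for n_hires in (150, 250):
    print(f"{n_hires} hires: about {round(retained_sample(n_hires, 0.40))} retained")
```

Under this assumption the projection gives roughly 27% and 40% attrition for 20% and 30% annual turnover, so the 30–50% range quoted above leaves some margin for higher early-tenure departures. At the 40% threshold, the Phase 2 follow-up of 150–250 hires would shrink to about 90–150.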

Phase 4

- Domains: 2–3 specialized domains (quantum computing, computational biology, cybersecurity).

- Participants: 30–50 candidates per domain.

- Power limitation: n = 30–50 per domain is likely underpowered for detecting moderate effect sizes in domain-specific analyses. Phase 4 results should be treated as exploratory, identifying domains where adapted AAIF shows promise for larger-scale validation.

- Deliverables: Domain adaptation guidelines, preliminary cross-domain validity evidence.
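The power limitation can be quantified with the same Fisher z approximation commonly used for correlation tests. The sketch below assumes that a "moderate" effect corresponds to r = 0.30 (Cohen's conventional medium value) and a two-sided test at alpha = 0.05; both choices are assumptions made for illustration.

```python
import math
from statistics import NormalDist


def approx_power_for_correlation(r, n, alpha=0.05):
    """Approximate power to detect correlation r with n candidates (two-sided).

    Uses the Fisher z approximation and ignores the negligible opposite tail.
    """
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    signal = math.atanh(r) * math.sqrt(n - 3)
    return NormalDist().cdf(signal - z_alpha)


for n in (30, 50):
    power = approx_power_for_correlation(0.30, n)
    print(f"n = {n}: approximate power {power:.2f}")
```

Under these assumptions, power stays well below the conventional 0.80 at both ends of the planned per-domain range, which supports treating the domain results as exploratory.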

1.7.5. E. Future Directions

- 1.

- Automated scoring support: Developing AI-assisted evaluation tools that help interviewers apply BARS consistently

- 2.

- Non-linear assessment models: Exploring multiplicative or interaction-based scoring functions that capture competency dependencies

- 3.

- Continuous assessment platforms: Moving from point-in-time interviews to continuous evaluation environments

- 4.

- International adaptation: Developing AAIF variants for non-English-speaking contexts and non-Western organizational cultures

- 5.

- Longitudinal role evolution: Tracking how AI-augmented roles and required competencies evolve over time

- 6.

- Systematic literature review: Conducting a PRISMA-compliant systematic review of AI-augmented assessment practices

1.8. VIII. Illustrative Use Case: Kafka Performance Testing

1.8.1. A. Scenario Description

1.8.2. B. Candidate A: Strong Performance (Overall Score: 4.35)

1.8.3. C. Candidate B: Weak Performance (Overall Score: 2.55)

1.8.4. D. Scoring Observations

- 1.

- Discriminative power: The BARS anchors differentiate Candidate A (strategic tool use, genuine critical evaluation) from Candidate B (passive tool use, surface-level evaluation) at each stage.

- 2.

- Compensatory model in action: Candidate B’s adequate SO and MR scores (3/5) are not high enough to offset weak TF and AOE scores (2/5), resulting in a No Hire decision.

- 3.

- Stage 0 gate function: Both candidates pass Stage 0, but Candidate B’s weaker fundamentals predict weaker performance in AI-augmented stages.

- 4.

- Common rater error illustration: An inexperienced interviewer might rate Candidate B’s Stage 1 higher because the candidate produced output quickly, confusing speed with effectiveness (see Common Rater Errors, Table IV).
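The compensatory scoring can be illustrated with a small weighted-average sketch. The stage weights below are hypothetical: assuming a linear weighted average over the four AI-augmented stages with weights summing to 1, Candidate B's stage scores and overall score of 2.55 only constrain the combined SO and MR weight to 0.55, and the individual split shown here is chosen purely for illustration.

```python
import math

# HYPOTHETICAL weights, not the framework's actual values. Only the combined
# SO + MR share (0.55) is implied by the reported scores under the stated assumptions.
ASSUMED_WEIGHTS = {"TF": 0.20, "AOE": 0.25, "SO": 0.30, "MR": 0.25}


def overall_score(stage_scores, weights=ASSUMED_WEIGHTS):
    """Linear compensatory score: weighted average of the stage ratings."""
    if not math.isclose(sum(weights.values()), 1.0):
        raise ValueError("Stage weights must sum to 1")
    return sum(weights[stage] * stage_scores[stage] for stage in weights)


candidate_b = {"TF": 2, "AOE": 2, "SO": 3, "MR": 3}
print(f"Candidate B overall score: {overall_score(candidate_b):.2f}")
```

With scores of 2 on two stages and 3 on the other two, the weighted average is bounded between 2 and 3 whatever the weights, so adequate ratings alone cannot lift the candidate far above the weaker ones.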

1.9. IX. Conclusion

1.10. Replicability and Supplementary Materials

1.11. Data Availability Statement

Funding

Acknowledgments

Conflicts of Interest

| 1 | This term is preferred over “Model-Centric Programming” (which may be confused with Model Context Protocol) and over “AI-assisted coding” (which understates the scope of transformation). |

References

- Peng, S.; Kalliamvakou, E.; Cihon, P.; Demirer, M. The impact of AI on developer productivity: Evidence from GitHub Copilot. arXiv 2023, arXiv:2302.06590.

- Stack Overflow. 2024 Developer Survey: AI Tools. 2024. Available online: https://survey.stackoverflow.co/2024/ai.

- GitHub. GitHub Copilot: Your AI Pair Programmer. 2024. Available online: https://github.com/features/copilot.

- OpenAI. GPT-4 Technical Report. arXiv 2023, arXiv:2303.08774.

- Chen, M.; Tworek, J.; Jun, H.; Yuan, Q.; de Oliveira Pinto, H.P.; Kaplan, J.; et al. Evaluating large language models trained on code. arXiv 2021, arXiv:2107.03374.

- OECD. The impact of AI on the workplace: Main findings from the OECD AI surveys of employers and workers. In OECD Social, Employment and Migration Working Papers; OECD: Paris, France, 2023.

- Behroozi, M.; Shirolkar, S.; Barik, T.; Parnin, C. Does stress impact technical interview performance? In Proceedings of the 28th ACM Joint Meeting on European Software Engineering Conference and Symposium on the Foundations of Software Engineering (ESEC/FSE), Virtual Event, 13–18 November 2020; pp. 481–492.

- Raghavan, M.; Barocas, S.; Kleinberg, J.; Levy, K. Mitigating bias in algorithmic hiring: Evaluating claims and practices. In Proceedings of the 2020 Conference on Fairness, Accountability, and Transparency (FAccT), Barcelona, Spain, 27–30 January 2020; pp. 469–481.

- Vaithilingam, A.; Zhang, T.; Glassman, E.L. Expectation vs. experience: Evaluating the usability of code generation tools powered by large language models. In Proceedings of the CHI Conference on Human Factors in Computing Systems Extended Abstracts, New Orleans, LA, USA, 29 April–5 May 2022; pp. 1–7.

- Trist, E.; Bamforth, K.W. Some social and psychological consequences of the longwall method of coal-getting. Human Relations 1951, 4, 3–38.

- Baxter, G.; Sommerville, I. Socio-technical systems: From design methods to systems engineering. Interacting with Computers 2011, 23, 4–17.

- Davis, F.D. Perceived usefulness, perceived ease of use, and user acceptance of information technology. MIS Quarterly 1989, 13, 319–340.

- Engeström, Y. Learning by Expanding: An Activity-Theoretical Approach to Developmental Research; Orienta-Konsultit: Helsinki, Finland, 1987.

- Hevner, A.R.; March, S.T.; Park, J.; Ram, S. Design science in information systems research. MIS Quarterly 2004, 28, 75–105.

- Peffers, K.; Tuunanen, T.; Rothenberger, M.A.; Chatterjee, S. A design science research methodology for information systems research. J. Management Information Systems 2007, 24, 45–77.

- Venkatesh, V.; Morris, M.G.; Davis, G.B.; Davis, F.D. User acceptance of information technology: Toward a unified view. MIS Quarterly 2003, 27, 425–478.

- Kuutti, K. Activity theory as a potential framework for human-computer interaction research. In Context and Consciousness: Activity Theory and Human-Computer Interaction; Nardi, B.A., Ed.; MIT Press: Cambridge, MA, USA, 1996; pp. 17–44.

- Brown, T.; Mann, B.; Ryder, N.; Subbiah, M.; Kaplan, J.; Dhariwal, P.; et al. Language models are few-shot learners. Advances in Neural Information Processing Systems (NeurIPS) 2020, 33, 1877–1901.

- Amazon Web Services. Amazon CodeWhisperer. 2024. Available online: https://aws.amazon.com/codewhisperer/.

- Liang, P.; Bommasani, R.; Lee, T.; Tsipras, D.; Soylu, D.; Yasunaga, M.; et al. Holistic evaluation of language models. Transactions on Machine Learning Research 2023.

- Schmidt, F.L.; Hunter, J.E. The validity and utility of selection methods in personnel psychology: Practical and theoretical implications of 85 years of research findings. Psychological Bulletin 1998, 124, 262–274.

- Huffcutt, A.I.; Arthur, W., Jr. Hunter and Hunter (1984) revisited: Interview validity for entry-level jobs. J. Applied Psychology 1994, 79, 184–190.

- Sánchez-Monedero, J.; Dencik, L.; Edwards, L. What does it mean to ‘solve’ the problem of discrimination in hiring? Social, technical, and legal perspectives from the UK on automated hiring systems. In Proceedings of the 2020 Conference on Fairness, Accountability, and Transparency, Barcelona, Spain, 27–30 January 2020; pp. 458–468.

- European Parliament. Regulation (EU) 2024/1689 laying down harmonised rules on artificial intelligence (EU AI Act). Official Journal of the European Union 2024.

- Barke, S.; James, M.B.; Polikarpova, N. Grounded copilot: How programmers interact with code-generating models. Proc. ACM Programming Languages (OOPSLA) 2023, 7, 85–111.

- Smith, P.C.; Kendall, L.M. Retranslation of expectations: An approach to the construction of unambiguous anchors for rating scales. J. Applied Psychology 1963, 47, 149–155.

- Latham, G.P.; Wexley, K.N. Increasing Productivity Through Performance Appraisal, 2nd ed.; Addison-Wesley: Reading, MA, USA, 1994.

- U.S. Equal Employment Opportunity Commission. The Americans with Disabilities Act and the Use of Software, Algorithms, and Artificial Intelligence to Assess Job Applicants and Employees. EEOC Technical Assistance Document, 2022.

- Mittelstadt, B.; Allo, P.; Taddeo, M.; Wachter, S.; Floridi, L. The ethics of algorithms: Mapping the debate. Big Data and Society 2016, 3.

- Morley, J.; Floridi, L.; Kinsey, L.; Elhalal, A. From what to how: An initial review of publicly available AI ethics tools, methods and research to translate principles into practices. Science and Engineering Ethics 2020, 26, 2141–2168.

- European Parliament and Council of the European Union. Regulation (EU) 2016/679 (General Data Protection Regulation). Official Journal of the European Union 2016.

- California State Legislature. California Consumer Privacy Act (CCPA) as Amended by CPRA. 2020.

- New York City Council. Local Law 144: Automated Employment Decision Tools. 2021.

- Wohlin, C.; Runeson, P.; Höst, M.; Ohlsson, M.C.; Regnell, B.; Wesslén, A. Experimentation in Software Engineering; Springer: Berlin, Germany, 2012.

- Wootters, W.K.; Zurek, W.H. A single quantum cannot be cloned. Nature 1982, 299, 802–803.

- McKinsey and Company. Quantum Computing: An Emerging Ecosystem and Industry Use Cases; McKinsey Digital: Berlin, Germany, Dec 2021.

- Preskill, J. Quantum computing in the NISQ era and beyond. Quantum 2018, 2, 79.

- Cerezo, M.; Arrasmith, A.; Babbush, R.; Benjamin, S.C.; Endo, S.; Fujii, K.; et al. Variational quantum algorithms. Nature Reviews Physics 2021, 3, 625–644.

- Arute, F.; Arya, K.; Babbush, R.; Bacon, D.; Bardin, J.C.; Barends, R.; et al. Quantum supremacy using a programmable superconducting processor. Nature 2019, 574, 505–510.

- Dunjko, V.; Briegel, H.J. Machine learning and artificial intelligence in the quantum domain: A review of recent progress. Reports on Progress in Physics 2018, 81.

- Schuld, M.; Killoran, N. Quantum machine learning in feature Hilbert spaces. Physical Review Letters 2019, 122, 040504.

- Biamonte, J.; Wittek, P.; Pancotti, N.; Rebentrost, P.; Wiebe, N.; Lloyd, S. Quantum machine learning. Nature 2017, 549, 195–202.

- Runeson, P.; Höst, M. Guidelines for conducting and reporting case study research in software engineering. Empirical Software Engineering 2009, 14, 131–164.

- Straub, D. Validating instruments in MIS research. MIS Quarterly 1989, 13, 147–169.

- Churchill, G.A., Jr. A paradigm for developing better measures of marketing constructs. J. Marketing Research 1979, 16, 64–73.

- MacKenzie, S.B.; Podsakoff, P.M.; Podsakoff, N.P. Construct measurement and validation procedures in MIS and behavioral research: Integrating new and existing techniques. MIS Quarterly 2011, 35, 293–334.

- McKinsey Global Institute. The Economic Potential of Generative AI: The Next Productivity Frontier; McKinsey and Company: New York, NY, USA, Jun 2023.

- Kaddour, J.; Harris, J.; Mozes, M.; Bradley, H.; Raileanu, R.; McHardy, R. Challenges and applications of large language models. arXiv 2023, arXiv:2307.10169.

- Boston Consulting Group. The Next Decade in Quantum Computing—And How to Play; BCG: Boston, MA, USA, 2018.

- Anthropic. Claude: AI Assistant. 2024. Available online: https://www.anthropic.com/claude.

- Sackett, P.R.; Zhang, C.; Berry, C.M.; Lievens, F. Revisiting meta-analytic estimates of validity in personnel selection: Addressing systematic overcorrection for restriction of range. J. Applied Psychology 2022, 107, 2040–2068.

- Messick, S. Validity. In Educational Measurement, 3rd ed.; Linn, R.L., Ed.; Macmillan: New York, NY, USA, 1989; pp. 13–103.

- Kamar, E.; Hacker, S.; Horvitz, E. Combining human and machine intelligence in large-scale crowdsourcing. In Proceedings of the 11th International Conference on Autonomous Agents and Multiagent Systems, Valencia, Spain, 4–8 June 2012; pp. 467–474.

- Amershi, S.; Weld, D.; Vorvoreanu, M.; Fourney, A.; Nushi, B.; Collisson, P.; et al. Guidelines for human-AI interaction. In Proceedings of the 2019 CHI Conference on Human Factors in Computing Systems, Glasgow, Scotland, UK, 4–9 May 2019; pp. 1–13.

- Gajos, K.Z.; Weld, D.S.; Wobbrock, J.O. Automatically generating personalized user interfaces with Supple. Artificial Intelligence 2010, 174, 910–950.

- Lai, V.D.; Ngo, N.; Veyseh, A.P.B.; Man, H.; Dernoncourt, F.; Bui, T.; Nguyen, T.H. ChatGPT beyond English: Towards a comprehensive evaluation of large language models in multilingual learning. In Proceedings of the Findings of EMNLP, Singapore, 6–10 December 2023; pp. 13171–13189.

- Government of India. Digital Personal Data Protection Act, 2023. In The Gazette of India; Government of India: New Delhi, India, 2023.

- Cyberspace Administration of China. Interim Measures for the Management of Generative Artificial Intelligence Services; Cyberspace Administration of China: Beijing, China, 2023.

- ACM. ACM Code of Ethics and Professional Conduct. Association for Computing Machinery, 2018. Available online: https://www.acm.org/code-of-ethics.

- IEEE. Ethically Aligned Design: A Vision for Prioritizing Human Well-being with Autonomous and Intelligent Systems. In IEEE Global Initiative; IEEE: New York, NY, USA, 2019.

- Landis, J.R.; Koch, G.G. The measurement of observer agreement for categorical data. Biometrics 1977, 33, 159–174.

- Sim, J.; Wright, C.C. The kappa statistic in reliability studies: Use, interpretation, and sample size requirements. Physical Therapy 2005, 85, 257–268.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).