1. Introduction

Artificial intelligence (AI) has emerged as one of the most consequential technological forces reshaping organizational systems, workforce capabilities, and institutional performance across sectors. Driven by advances in machine learning, natural language processing, and automated decision-support systems, AI technologies are increasingly being integrated into organizational routines, offering the potential to automate repetitive tasks, optimize resource allocation, and enhance decision-making processes [1,2]. Beyond their operational implications, these technologies are highly relevant to organizational sustainability, as they reconfigure the nature of work, alter workforce competency demands, and reshape the social conditions under which employees operate [3,4].

From the perspective of organizational sustainability, the relevance of AI extends beyond environmental efficiency and encompasses social and economic dimensions, including workforce adaptability, institutional productivity, and the psychological conditions of employment [5,6]. Sustainable organizations must ensure that technological integration strengthens, rather than undermines, human capabilities, institutional resilience, and long-term workforce development [7,8]. In this regard, AI adoption is a double-edged phenomenon: when strategically implemented, it can drive sustainable organizational performance; when poorly managed, it may generate resistance, erode professional autonomy, and deepen skill gaps [9,10].

Educational institutions represent a particularly significant yet underexplored context for examining the organizational implications of AI adoption. As public and private educational systems undergo accelerating digital transformation, administrative personnel (those responsible for institutional coordination, resource management, and operational support) face profound changes in their work roles, competency requirements, and decision-making environments [11,12]. The integration of AI-driven tools into administrative functions has the potential to improve productivity, streamline processes, and strengthen institutional effectiveness. At the same time, adoption may require the development of new technical competencies and alter established patterns of decision-making autonomy [13,14].

From a social sustainability perspective, workforce adaptability, administrative productivity, and decision-making autonomy constitute critical indicators of sustainable organizational systems [15,16]. Sustainable organizations must maintain human-centered work environments that support employee professional development, psychological agency, and meaningful participation in organizational processes [17]. Artificial intelligence, when integrated strategically, can function as an enabling mechanism that supports these conditions by reducing administrative burden, providing decision support, and expanding employee capacity for higher-value tasks [18,19]. However, this positive trajectory is not automatic; it depends on organizational readiness, training provision, and the extent to which AI is positioned as a complement to, rather than a replacement of, human judgment [20].

Despite the growing body of literature on AI and organizational transformation, empirical research specifically examining the effects of AI adoption on administrative workforce outcomes in educational institutions remains limited, particularly in developing country contexts [21,22]. Most extant studies have concentrated on AI applications for student learning or pedagogical processes, while fewer studies have examined how AI reshapes the competencies, productivity, and decision-making autonomy of administrative staff [23,24]. This gap is particularly acute in Latin America, where educational institutions operate under conditions of resource constraint, institutional informality, and limited digital infrastructure [25,26].

Addressing this gap, the present study examines the predictive relationships between AI adoption and three organizational sustainability outcomes, namely job competencies (CL), administrative productivity (PA), and decision-making autonomy (ATD), among administrative personnel in educational institutions in Chimbote, Ancash Region, Peru. Using partial least squares structural equation modeling (PLS-SEM), this research provides predictive empirical evidence on how AI functions as a driver of sustainable organizational performance in an emerging economy educational context.

This study makes three primary contributions. First, it advances the theoretical understanding of AI as a driver of sustainable organizational performance by integrating the Unified Theory of Acceptance and Use of Technology (UTAUT), Labor Productivity Theory, and Self-Determination Theory (SDT) within a predictive PLS-SEM model contextualized in educational administration. Second, it contributes methodologically by operationalizing AI adoption through a multidimensional UTAUT-based instrument and modeling its effects on multiple sustainability indicators simultaneously. Third, it provides contextual evidence from Peruvian educational institutions—an underrepresented setting in the AI and organizational sustainability literature—extending empirical knowledge to emerging economy contexts.

The remainder of the article is organized as follows. Section 2 presents the theoretical background and literature review, including hypothesis development. Section 3 describes the methodological framework. Section 4 reports the measurement model and structural model results. Section 5 discusses the findings in relation to prior literature and their sustainability implications. Section 6 concludes with theoretical and practical contributions and directions for future research.

It is important to note that the sustainability framework adopted in this study is anchored in the social and economic dimensions of organizational sustainability, consistent with the triple bottom line conceptualization [5] and its operationalization in the organizational sustainability literature [6,7]. Specifically, this study focuses on human capital resilience (operationalized through job competency development) and administrative efficiency (operationalized through productivity and decision-making autonomy) as core indicators of organizational sustainability in educational institutions. Although environmental sustainability is acknowledged as a critical dimension of the broader sustainability agenda, the examination of AI’s environmental impacts falls outside the scope and design of the present study. This delimitation is theoretically coherent: the organizational sustainability outcomes examined here are rooted in Social Sustainability Theory and in the Sustainable Development Goals (SDGs) related to quality education (SDG 4) and decent work (SDG 8), rather than in environmental impact assessment frameworks. Future research should address the environmental sustainability implications of AI adoption in educational settings as a complementary line of inquiry.

2. Literature Review

2.1. Theoretical Background

The theoretical framework integrates four complementary bodies of knowledge that collectively explain how AI adoption relates to organizational sustainability outcomes in educational administration. These frameworks converge on a shared premise: that technological integration is not a purely technical phenomenon but a socially embedded process whose effects are mediated by individual perceptions, organizational conditions, and contextual factors.

The Unified Theory of Acceptance and Use of Technology (UTAUT), developed by Venkatesh et al. [27], provides the primary lens for understanding AI adoption. UTAUT synthesizes eight prior technology acceptance models, proposing that technology adoption and use are governed by performance expectancy, effort expectancy, social influence, and facilitating conditions. In the educational administrative context, performance expectancy relates to the perceived utility of AI tools in improving task outcomes; effort expectancy concerns the ease of interaction with AI systems; social influence reflects organizational and peer pressure toward adoption; and facilitating conditions encompass institutional support, training availability, and technological infrastructure [27,28,29].

The Theory of Organizational Change, as developed by Lewin [30] and later extended by Kotter [31], provides a structural lens for understanding how individuals and organizations respond to technological innovation. Lewin’s three-stage model (unfreezing, change, and refreezing) explains the psychological and organizational mechanisms through which change is introduced, accepted, and stabilized. Kotter’s eight-step model elaborates the leadership and communication strategies required for effective change implementation. In the context of AI adoption in educational institutions, resistance to change can be understood as a theoretically grounded response to uncertainty, perceived threats to employment security, and insufficient digital literacy, conditions that are particularly salient where administrative staff have limited prior exposure to advanced digital technologies [32,33]. When these concerns are not addressed during the unfreezing stage, resistance is likely to intensify, because workers perceive the change as externally imposed rather than organizationally supported.

Labor Productivity Theory, grounded in Drucker’s [34] conception of knowledge work, holds that in knowledge-intensive organizational environments, productivity depends on how effectively workers access, process, and apply knowledge. By automating routine administrative tasks and providing real-time information support, artificial intelligence functions as a productivity-enabling technology that redirects human cognitive effort toward higher-value strategic activities. However, the productivity-enhancing effects of AI are not automatic; they depend on workers’ capacity to integrate AI tools effectively, which in turn requires adequate training, organizational support, and institutional readiness [35].

Self-Determination Theory (SDT), articulated by Deci and Ryan [36], posits that human motivation and well-being are sustained by the satisfaction of three basic psychological needs: autonomy, competence, and relatedness. In organizational contexts, autonomy refers to self-direction in work, competence to feeling effective in task performance, and relatedness to meaningful connection with others. AI adoption may satisfy or undermine these needs depending on how it is implemented. When AI is experienced as a decision-support tool that enhances employee capacity, it may strengthen perceived autonomy and competence. Conversely, when it is perceived as imposing automated routines that displace professional judgment, it may generate perceptions of external control and reduced self-determination [37,38].

These four frameworks together provide an integrated theoretical foundation for this study. Critically, the dependent variables examined (job competencies, resistance to change, administrative productivity, and decision-making autonomy) are not merely operational outcomes; they represent dimensions of organizational sustainability in its social and economic expressions. Within sustainable human resource management (SHRM) scholarship, these constructs are recognized as indicators of institutional capacity, workforce resilience, and long-term organizational viability [7,8,17]. Job competency development sustains human capital, supporting organizational adaptability under changing environmental conditions. Resistance to change, when present, constitutes a critical barrier to sustainable technological transformation, signaling gaps in organizational readiness, training provision, and change management capacity. Administrative productivity ensures institutional efficiency and resource optimization, which are foundations of economic sustainability in public educational organizations. Decision-making autonomy underpins professional agency and psychological well-being, which are central to social sustainability and to the maintenance of decent, human-centered work environments [15,16]. Accordingly, the present model connects micro-level individual perceptions to macro-level sustainability outcomes, positioning AI adoption as an organizational governance capability whose implications extend beyond operational efficiency to the social and economic dimensions of institutional sustainability.

2.2. Artificial Intelligence Adoption in Organizational Contexts

Artificial intelligence encompasses computational systems capable of performing tasks that traditionally required human cognitive intervention, including pattern recognition, problem-solving, data analysis, and adaptive decision support [39]. In organizational settings, AI applications range from machine learning algorithms and natural language processing tools to robotic process automation and intelligent decision-support systems [1,40]. Across sectors, AI adoption has been associated with improvements in operational efficiency, decision quality, and organizational responsiveness [2,41].

In educational institutions, AI has primarily been examined in relation to student learning outcomes and pedagogical innovation [23,42]. More recently, research has begun to address the administrative dimension of educational AI adoption, recognizing that administrative personnel play a critical operational role and that their capacity to integrate AI tools shapes overall institutional performance [12,43]. Administrative tasks in educational settings, including academic scheduling, student data management, regulatory compliance, and resource allocation, represent functional areas where AI offers efficiency gains while requiring workers to develop new competencies and adapt to transformed work roles [11,44].

The McKinsey Global Institute [45] estimated that generative AI could add between USD 2.6 and 4.4 trillion in economic value annually worldwide. In Latin America, the International Labour Organization [46] projected that generative AI could affect between 27% and 38% of the labor market in countries including Peru, underscoring the urgency of examining AI’s organizational implications in this regional context. In Peru, studies indicate that AI adoption is advancing but unevenly, with significant gaps in digital infrastructure, workforce training, and institutional readiness, particularly in public educational institutions [47,48].

From a sustainability perspective, AI adoption represents an organizational capability that intersects with the economic and social dimensions of the triple bottom line [5]. Economically, AI can enhance institutional efficiency and resource utilization. Socially (the dimension most directly examined in this study), AI shapes the conditions of employment, the psychological experience of work, and the development of human capabilities [3,15]. Sustainable AI adoption therefore requires balancing efficiency gains with the protection and development of human competencies, professional agency, and organizational resilience [17,74].

2.3. AI Adoption and Job Competencies

Job competencies are defined as the integrated set of knowledge, skills, attitudes, and values that enable individuals to perform effectively in their organizational roles and adapt to evolving work environment demands [49]. Competency frameworks distinguish between technical competencies (domain-specific knowledge and functional skills) and transversal competencies (including communication, adaptability, critical thinking, and leadership), both of which are subject to modification under conditions of technological transformation [50].

Within sustainable human resource management, job competency development represents a foundational dimension of human capital sustainability: the capacity of organizations to continuously renew and strengthen workforce capabilities in response to environmental demands [7,8]. When AI is integrated into administrative work, it creates two opposing competency dynamics. On the one hand, AI tools that automate routine tasks may redirect cognitive resources toward higher-order activities, creating opportunities for competency development in data interpretation, strategic analysis, and digital system management [51,52]. On the other hand, without structured training, AI adoption risks generating skill displacement or competency anxiety among workers who lack the digital literacy to engage critically with AI-generated outputs [53].

Empirical research on AI and workforce competencies reveals context-dependent findings. A bibliometric analysis of 1,647 academic articles identified "demand for new competencies" as a dominant theme in AI and labor market research, with digitized work environments consistently identified as drivers of competency transformation [54]. Studies in Peruvian educational contexts indicate that administrative staff who receive structured AI training report higher digital competency and greater self-efficacy in technology-mediated work [55,56]. The UTAUT framework further supports this relationship: when facilitating conditions, including training provision, are present, AI tool use is more likely to translate into competency development rather than competency displacement [27].

Hypothesis 1 (H1): AI adoption is positively associated with job competencies among administrative staff in educational institutions.

2.4. AI Adoption and Resistance to Change

Resistance to change is conceptualized as the natural human response to perceived alterations in established work routines, organizational roles, or professional identities, driven by uncertainty, fear of job displacement, distrust of new systems, or perceived inadequacy in adapting to technological demands [30,32]. In the context of AI adoption, resistance to change represents one of the most commonly documented barriers to effective technology integration, particularly in organizations where digital literacy is limited and where workers have not been systematically prepared for technological transformation [31,58].

The Theory of Organizational Change provides a foundational explanation for resistance to AI adoption. Lewin’s [30] model suggests that effective change requires a deliberate unfreezing of existing behavioral patterns through transparent communication, participatory processes, and the provision of psychological safety during the transition period. When these conditions are absent, as is common in resource-constrained educational institutions undergoing rapid AI integration, resistance is predictably heightened, because workers perceive the change as externally imposed rather than organizationally supported [33]. Kotter’s [31] extended framework further indicates that poorly managed change initiatives, characterized by insufficient training, unclear communication of purpose, and a lack of participatory co-design, tend to produce behavioral resistance even when workers are not intrinsically opposed to technological modernization.

Empirical evidence consistently supports a negative relationship between AI tool adoption and resistance to change when adoption is accompanied by adequate information, training, and participatory implementation strategies. Research in organizational settings in Peru and broader Latin America indicates that workers who receive structured preparation for AI-assisted work demonstrate significantly lower levels of resistance, greater technology acceptance, and more adaptive attitudes toward organizational change [59,60]. By contrast, administrative personnel in educational institutions who report limited AI training and unclear institutional communication about AI purposes exhibit higher levels of resistance, manifested as avoidance behaviors, reduced motivation, and skepticism toward AI-assisted decision-making [47,61]. The UTAUT framework further clarifies this dynamic: performance expectancy and effort expectancy, two core UTAUT determinants, directly influence the extent to which workers perceive AI adoption as threatening or enabling. When workers expect AI to improve their performance without excessive additional effort, resistance is diminished; when AI is perceived as demanding unfamiliar skills without clear performance benefits, resistance is reinforced [27,62]. Consequently, AI adoption, particularly when accompanied by facilitating conditions including training and institutional support, should be negatively associated with resistance to change.

Hypothesis 4 (H4): AI adoption is negatively associated with resistance to change among administrative staff in educational institutions.

2.5. AI Adoption and Administrative Productivity

Administrative productivity is defined as the capacity to perform organizational management functions effectively and efficiently, optimizing available resources to achieve institutional objectives [34]. In knowledge-intensive organizations, productivity increasingly depends on the quality of information processing, decision speed, and the effective allocation of cognitive resources, all dimensions in which AI tools hold particular relevance [1,40].

From a social sustainability standpoint, administrative productivity is not merely an economic performance indicator; it constitutes a dimension of organizational resilience and long-term institutional viability. Institutions that fail to maintain adequate administrative productivity face resource inefficiency, governance deterioration, and a reduced capacity to fulfill their educational mission, consequences that ultimately compromise their social sustainability [15,16]. Drucker’s [34] knowledge productivity framework holds that in the knowledge economy, productivity is determined by the strategic application of knowledge to organizational challenges, a process that AI tools can substantially enhance.

Research in administrative contexts indicates that AI-assisted automation of repetitive tasks reduces processing time, decreases error rates, and improves the consistency of institutional outputs [2,65]. In educational administrative settings, AI systems have been found to accelerate student record management, facilitate regulatory reporting, and support scheduling optimization [11,42,66]. Studies in Peruvian organizational contexts suggest that AI adoption is associated with improvements in operational efficiency, including reduced processing times and faster task completion [48]. However, these benefits are conditioned by training quality and by the organizational culture surrounding technology adoption [67].

Hypothesis 2 (H2): AI adoption is positively associated with administrative productivity among administrative staff in educational institutions.

2.6. AI Adoption and Decision-Making Autonomy

Decision-making autonomy is defined as the capacity of organizational members to exercise independent professional judgment and make decisions within their role boundaries without excessive external control or technological substitution of their cognitive agency [36,68]. Self-Determination Theory identifies autonomy as a fundamental psychological need whose satisfaction is essential for intrinsic motivation, professional well-being, and effective work performance [36]. Within organizational sustainability scholarship, decision-making autonomy constitutes a core dimension of the social sustainability of work: it determines whether organizational members experience their employment as empowering or constraining, and whether institutional governance structures support or undermine professional agency [17].

The relationship between AI adoption and decision-making autonomy is theoretically nuanced. When AI is positioned as a complementary decision-support tool providing relevant, real-time information, it may expand the informational basis of human decisions, enabling workers to make better-grounded choices and effectively enhancing functional autonomy [18,19]. When AI is experienced as expanding access to evidence and analysis, workers may perceive greater decision efficacy and reduced dependence on hierarchical approval for routine choices [38]. This pathway aligns with SDT: AI that strengthens perceived competence and informational access simultaneously supports the autonomy need, producing conditions favorable to enhanced self-determination in organizational roles [37].

Empirical evidence on AI and decision-making autonomy is mixed and context-dependent [71,72]. Some studies report that AI adoption enhances perceived decisional capacity among workers who receive structured AI literacy training and who operate in organizational cultures that position AI as complementary to professional judgment [71]. In emerging economy educational contexts specifically, where administrative personnel have historically operated under hierarchical governance structures with limited access to systematic information support, AI decision-support tools may represent a qualitative expansion of workers’ practical decision-making capacity [47]. This contextual dynamic, which differs from that of more formalized organizational environments, provides a rationale for expecting a positive association between AI adoption and decision-making autonomy in the present research setting.

Hypothesis 3 (H3): AI adoption is positively associated with decision-making autonomy among administrative staff in educational institutions.

2.7. AI, Organizational Sustainability, and Sustainable Development Goals

The organizational sustainability implications of AI adoption in educational institutions extend beyond individual workforce outcomes and connect with broader sustainable development frameworks. This study explicitly aligns with three Sustainable Development Goals of the United Nations 2030 Agenda [73]: SDG 4 (Quality Education), SDG 8 (Decent Work and Economic Growth), and SDG 9 (Industry, Innovation, and Infrastructure). SDG 4, Target 4.4, calls for substantially increasing the number of youth and adults who have relevant skills, including technical and vocational skills, for employment and decent jobs, a goal directly advanced when AI adoption promotes job competency development. SDG 8, Target 8.2, promotes higher levels of economic productivity through technological upgrading and innovation, a dimension captured by the administrative productivity construct. SDG 9, Target 9.5, supports the strengthening of scientific research and technological capabilities, which, extended to the educational sector, encompasses the development of robust AI governance frameworks enabling sustainable digital transformation [15,16].

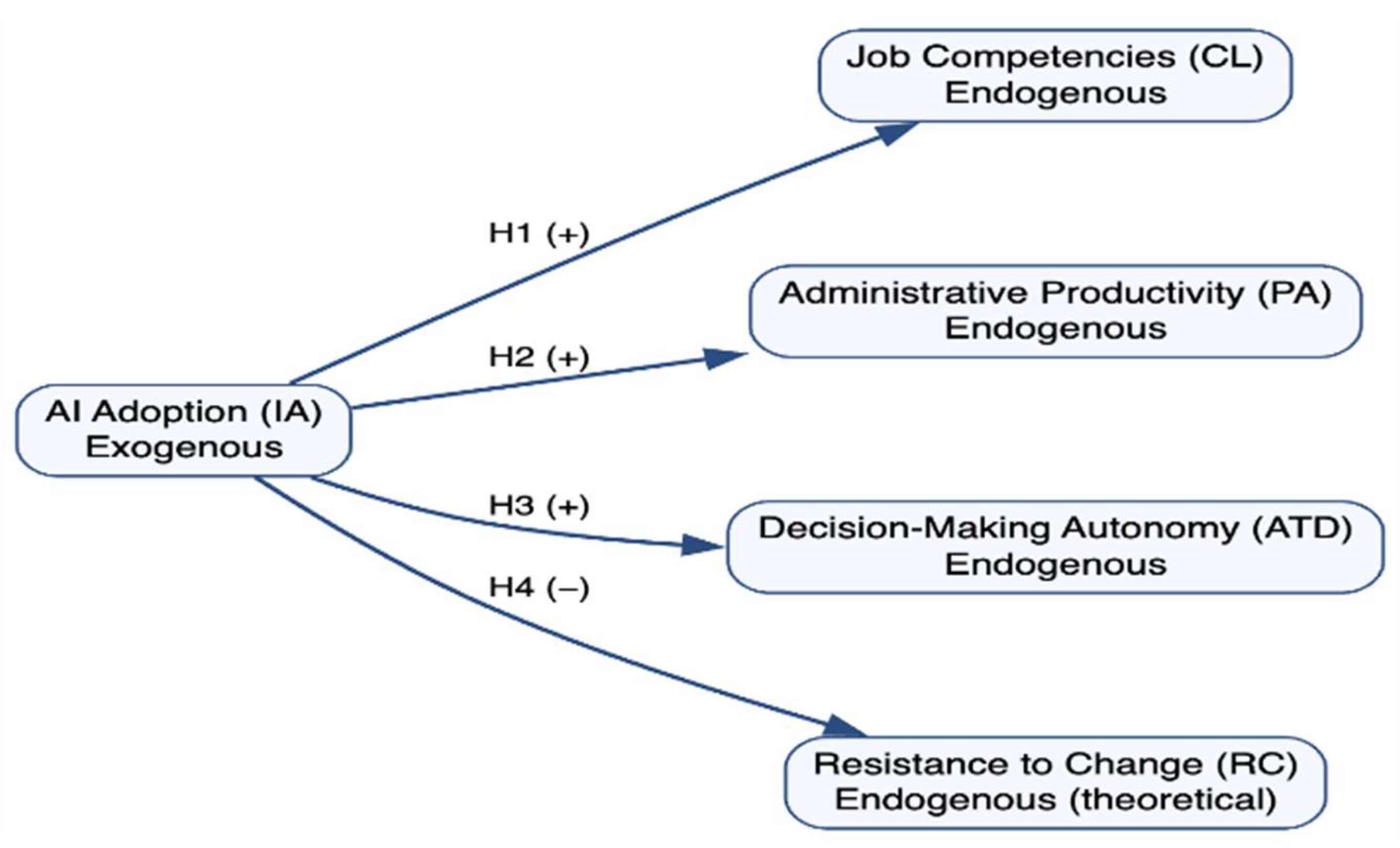

The conceptual model proposed in this study (Figure 1) treats AI adoption as the independent variable and examines its predictive relationships with three organizational sustainability outcomes: job competencies (H1), administrative productivity (H2), and decision-making autonomy (H3). Resistance to change (H4) was theoretically proposed as a fourth outcome but was subsequently excluded from the structural analysis because of insufficient psychometric reliability.
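For readers less familiar with PLS-SEM notation, the hypothesized relations in Figure 1 can be written as a small system of equations. This is an illustrative sketch only: the symbols (path coefficients $\beta_j$, structural residuals $\zeta_j$, outer loadings $\lambda$, and indicator errors $\varepsilon$) are conventional notation introduced here rather than taken from the study, the reflective specification and the eight IA items follow Section 3.3, and the excluded resistance-to-change path (H4) is omitted.

```latex
\begin{aligned}
\mathrm{CL}  &= \beta_1\,\mathrm{IA} + \zeta_1, && \text{H1: } \beta_1 > 0\\
\mathrm{PA}  &= \beta_2\,\mathrm{IA} + \zeta_2, && \text{H2: } \beta_2 > 0\\
\mathrm{ATD} &= \beta_3\,\mathrm{IA} + \zeta_3, && \text{H3: } \beta_3 > 0\\
x_{\mathrm{IA},k} &= \lambda_{\mathrm{IA},k}\,\mathrm{IA} + \varepsilon_{\mathrm{IA},k}, && k = 1,\dots,8
\end{aligned}
```

Because each endogenous construct has a single predictor, each standardized $\beta_j$ equals the correlation between the IA and outcome construct scores, and the corresponding $R^2_j$ equals $\beta_j^2$.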

Grounded in the social and economic dimensions of sustainability, this study adopts a human-centered perspective on organizational performance, emphasizing workforce capability development, institutional efficiency, and employee agency. This framework aligns with the organizational sustainability and human resource management literature and is consistent with the SDG agenda’s emphasis on decent work, productivity enhancement, and institutional strengthening. Environmental sustainability dimensions of AI, such as energy consumption and carbon footprint, fall beyond the empirical scope of this investigation and are proposed as avenues for future research.

3. Materials and Methods

3.1. Research Design

This study employed a quantitative, non-experimental, cross-sectional, and explanatory research design. The quantitative approach was selected to enable the systematic measurement of perceptual constructs and the statistical evaluation of predictive relationships between AI adoption and organizational sustainability outcomes [75,76]. The non-experimental design was appropriate given that variables were observed as they naturally occurred within institutional settings, without researcher manipulation or intervention. The cross-sectional design allowed data to be collected at a single point in time, providing a contemporaneous snapshot of the relationships among the constructs under study. The explanatory scope aimed to determine the extent to which AI adoption predicted variation in job competencies, administrative productivity, and decision-making autonomy, consistent with a causal-predictive research orientation [75].

3.2. Population, Sample, and Data Collection

The target population consisted of formal administrative staff members working in regular basic education institutions (Instituciones Educativas de Educación Básica Regular) in Chimbote, Ancash Region, Peru. The final sample comprised 98 administrative staff members drawn from 54 educational institutions. Participants were selected through a multi-stage sampling strategy. At the institutional level, educational institutions were identified from the official registry maintained by the Unidad de Gestión Educativa Local (UGEL; Local Educational Management Unit) of Santa Province, and participating institutions were selected through simple random sampling. At the individual level, administrative personnel within each selected institution were invited to participate voluntarily, with proportional allocation across institutions to ensure balanced representation.
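The two-stage procedure described above (simple random selection of institutions from the registry, then proportional allocation of respondents) can be sketched as follows. This is a hypothetical illustration, not the authors’ procedure: the registry size, institution codes, staff counts, and seed are invented, and because the text does not say how fractional quotas were rounded, largest-remainder rounding is an assumption.

```python
import math
import random


def select_institutions(registry, k, seed=None):
    """Stage 1: simple random sample of k institutions, without replacement."""
    rng = random.Random(seed)
    return rng.sample(registry, k)


def proportional_allocation(staff_counts, n):
    """Stage 2: split n respondents across institutions in proportion to staff size.

    Uses largest-remainder rounding so the allocations sum exactly to n.
    """
    total = sum(staff_counts.values())
    if n > total:
        raise ValueError("requested sample exceeds the available staff")
    quotas = {inst: n * size / total for inst, size in staff_counts.items()}
    alloc = {inst: math.floor(q) for inst, q in quotas.items()}
    shortfall = n - sum(alloc.values())
    # Give the remaining places to the largest fractional remainders
    # (sorted() is stable, so ties keep their original order).
    order = sorted(quotas, key=lambda inst: quotas[inst] - alloc[inst], reverse=True)
    for inst in order[:shortfall]:
        alloc[inst] += 1
    return alloc


if __name__ == "__main__":
    # Hypothetical registry of 120 institutions with 2 to 5 administrative staff each.
    registry = [f"IE-{i:03d}" for i in range(1, 121)]
    staff = {inst: 2 + i % 4 for i, inst in enumerate(registry)}
    chosen = select_institutions(registry, 54, seed=2025)
    plan = proportional_allocation({inst: staff[inst] for inst in chosen}, 98)
    print(len(chosen), "institutions,", sum(plan.values()), "respondents")
```

Largest-remainder rounding keeps the total exactly at the target sample size, which simple per-institution rounding does not guarantee.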

This combined sampling approach is consistent with methodological recommendations for organizational research conducted in emerging economy contexts, where exhaustive employee-level sampling frames are not always available [77,78]. Primary data were collected through a self-administered online questionnaire deployed via Google Forms between March and April 2025. Participation was voluntary and anonymous, and informed consent was obtained from all participants prior to their involvement in the study.

The adequacy of the sample size was assessed using an a priori statistical power analysis conducted with G*Power 3.1.9.7 (Heinrich Heine University Düsseldorf, Düsseldorf, Germany). Specifying a medium effect size (f² = 0.15), a significance level of α = 0.05, and a desired power of 0.80 for a regression model with three predictors, the minimum required sample size was estimated at 77 observations. The final sample of 98 respondents exceeds this threshold, providing adequate statistical power.

In addition to the G*Power-based power analysis, the sample size was evaluated against PLS-SEM-specific adequacy criteria. Under the 10-times rule recommended by Hair et al. [77,81], the minimum required sample size is ten times the maximum number of structural paths directed at any single endogenous construct in the model. In the present structural model, each endogenous construct (CL, PA, and ATD) receives a single predictor path from AI adoption (IA), yielding a minimum threshold of 10 observations. The final sample of 98 participants substantially exceeds this criterion. Furthermore, PLS-SEM is widely regarded as appropriate for research contexts in which sample sizes are moderate, distributional assumptions are uncertain, and the research objective is predictive rather than strictly confirmatory [77,81,82]. Taken together, the G*Power analysis and compliance with the 10-times rule support the adequacy of the present sample for the structural estimation conducted.
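Both sample-size checks can be reproduced approximately in a few lines. The sketch below is a stdlib-only approximation of G*Power’s "Linear multiple regression: Fixed model, R² deviation from zero" F test, assuming a noncentrality parameter λ = f²·N with numerator df equal to the number of predictors and denominator df equal to N minus predictors minus 1; the helper names and the path list used for the 10-times rule are hypothetical illustrations, not the authors’ code. Under the settings reported above it should reproduce the minimum of 77 observations.

```python
import math


def _betacf(a, b, x, max_iter=1000, eps=1e-14):
    """Continued fraction for the incomplete beta function (modified Lentz method)."""
    tiny = 1e-300
    qab, qap, qam = a + b, a + 1.0, a - 1.0
    c = 1.0
    d = 1.0 - qab * x / qap
    d = 1.0 / (d if abs(d) > tiny else tiny)
    h = d
    for m in range(1, max_iter + 1):
        m2 = 2 * m
        # Even and odd steps of the continued fraction.
        for aa in (m * (b - m) * x / ((qam + m2) * (a + m2)),
                   -(a + m) * (qab + m) * x / ((a + m2) * (qap + m2))):
            d = 1.0 + aa * d
            d = 1.0 / (d if abs(d) > tiny else tiny)
            c = 1.0 + aa / c
            c = c if abs(c) > tiny else tiny
            h *= d * c
        if abs(d * c - 1.0) < eps:
            break
    return h


def reg_inc_beta(a, b, x):
    """Regularized incomplete beta function I_x(a, b)."""
    if x <= 0.0:
        return 0.0
    if x >= 1.0:
        return 1.0
    front = math.exp(math.lgamma(a + b) - math.lgamma(a) - math.lgamma(b)
                     + a * math.log(x) + b * math.log1p(-x))
    if x < (a + 1.0) / (a + b + 2.0):
        return front * _betacf(a, b, x) / a
    return 1.0 - front * _betacf(b, a, 1.0 - x) / b


def f_cdf(f, df1, df2, nc=0.0):
    """CDF of the (noncentral) F distribution: a Poisson mixture of beta CDFs."""
    if f <= 0.0:
        return 0.0
    x = df1 * f / (df1 * f + df2)
    if nc == 0.0:
        return reg_inc_beta(df1 / 2.0, df2 / 2.0, x)
    mu = nc / 2.0
    j_max = int(mu + 20.0 * math.sqrt(mu) + 50)
    return sum(math.exp(-mu + j * math.log(mu) - math.lgamma(j + 1))
               * reg_inc_beta(df1 / 2.0 + j, df2 / 2.0, x)
               for j in range(j_max + 1))


def f_critical(alpha, df1, df2):
    """Upper-tail critical value of the central F distribution, by bisection."""
    target = 1.0 - alpha
    lo, hi = 0.0, 1.0
    while f_cdf(hi, df1, df2) < target:
        hi *= 2.0
    for _ in range(100):
        mid = (lo + hi) / 2.0
        if f_cdf(mid, df1, df2) < target:
            lo = mid
        else:
            hi = mid
    return hi


def regression_power(n, f2, predictors, alpha):
    """Power of the F test of R^2 = 0 with noncentrality lambda = f2 * n."""
    df1, df2 = predictors, n - predictors - 1
    crit = f_critical(alpha, df1, df2)
    return 1.0 - f_cdf(crit, df1, df2, nc=f2 * n)


def min_sample_size(f2=0.15, predictors=3, alpha=0.05, power=0.80, max_n=10000):
    """Smallest n whose power reaches the target."""
    for n in range(predictors + 2, max_n + 1):  # need at least 1 denominator df
        if regression_power(n, f2, predictors, alpha) >= power:
            return n
    raise ValueError("target power not reached below max_n")


def ten_times_rule(paths):
    """Ten times the largest number of structural paths pointing at one construct."""
    indegree = {}
    for _source, target in paths:
        indegree[target] = indegree.get(target, 0) + 1
    return 10 * max(indegree.values())


if __name__ == "__main__":
    paths = [("IA", "CL"), ("IA", "PA"), ("IA", "ATD")]  # hypothetical path list
    print("10-times rule minimum:", ten_times_rule(paths))
    print("A priori minimum N:", min_sample_size())
    print("Power at N = 98:", round(regression_power(98, 0.15, 3, 0.05), 3))
```

The noncentral F CDF is computed as a Poisson-weighted mixture of regularized incomplete beta functions to avoid a SciPy dependency; where SciPy is available, `scipy.stats.f.ppf` and `scipy.stats.ncf.sf` are the more natural choice. Lowering `predictors` lowers the required N for the same f², so the three-predictor specification is the more conservative choice for a model in which each endogenous construct has one incoming path.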

3.3. Measurement Instruments and Construct Operationalization

All measurement instruments were developed by the research team based on established theoretical frameworks and adapted to the Peruvian educational administrative context. All items were measured using a five-point Likert scale ranging from 1 ("strongly disagree") to 5 ("strongly agree"). Prior to full data collection, the questionnaire underwent content validity review by subject-matter experts. All constructs were modeled as reflective measurement models, consistent with the theoretical specification in which observed indicators are conceptualized as manifestations of their respective underlying latent constructs [81,82]. This reflective specification is appropriate when construct indicators are expected to be highly intercorrelated and interchangeable, and when the causal direction flows from the latent variable to the indicators, conditions that apply to all constructs in the present study [81].

Artificial intelligence adoption (IA) was operationalized through a multidimensional instrument grounded in the UTAUT framework [27], comprising eight items (IA1–IA8) that capture four dimensions: AI tool use (frequency, type, and purpose of AI tool engagement); knowledge and familiarity (prior experience, digital self-efficacy, and ease of interaction); areas of application (administrative domains of AI implementation); and AI training (access to development opportunities that build AI-related competencies).

Job competencies (CL) were assessed through six items (CL1–CL6) capturing technical competencies (digital tool management, software use, data processing) and transversal competencies (communication, adaptability, leadership, teamwork), consistent with Spencer and Spencer’s [49] competency framework. Administrative productivity (PA) was measured through six items (PA1–PA6) addressing management effectiveness (perceived improvement in task completion quality and output consistency) and task efficiency (perceived reduction in processing time and optimization of resource use), grounded in Drucker’s [34] knowledge productivity framework. Decision-making autonomy (ATD) was operationalized through three items (ATD1–ATD3) grounded in Self-Determination Theory [36], assessing the extent to which AI was experienced as expanding versus contracting workers’ capacity for independent professional judgment. Resistance to change (RC) was operationalized through items grounded in Lewin’s [30] organizational change model and adapted to the context of AI adoption in educational institutions, drawing on Oreg’s [32] resistance to change scale. As reported in Section 4.1, the RC construct did not achieve the minimum psychometric thresholds required for inclusion in the structural model and was therefore excluded from the second-stage structural estimation.

3.4. Common Method Variance Assessment

Because all constructs were measured with self-reported perceptual items collected at the same time, potential common method variance (CMV) was assessed using two complementary procedures. First, Harman’s single-factor test was performed through an unrotated principal component analysis including all measurement items [79]. The first unrotated factor accounted for less than 50% of the total variance, suggesting that no single factor dominated the data structure and that common method bias did not represent a critical threat to validity [80].
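Harman’s test, as described above, amounts to the share of total variance captured by the first unrotated principal component: the largest eigenvalue of the item correlation matrix divided by the number of items (its trace). The sketch below estimates that eigenvalue by power iteration with the standard library only. It assumes a dominant largest eigenvalue, which is typical when items correlate positively. The 10-item equicorrelated matrix is hypothetical because item-level correlations are not reported; for such a matrix the exact share is (1 + (p − 1)r)/p.

```python
import math


def first_component_share(corr, max_iter=10_000, tol=1e-12):
    """Largest eigenvalue of a correlation matrix divided by its trace (= number of items)."""
    p = len(corr)
    v = [1.0 / math.sqrt(p)] * p  # unit-length starting vector
    eigenvalue = 0.0
    for _ in range(max_iter):
        w = [sum(corr[i][j] * v[j] for j in range(p)) for i in range(p)]
        estimate = sum(vi * wi for vi, wi in zip(v, w))  # Rayleigh quotient, |v| = 1
        norm = math.sqrt(sum(wi * wi for wi in w))
        v = [wi / norm for wi in w]
        converged = abs(estimate - eigenvalue) < tol
        eigenvalue = estimate
        if converged:
            break
    return eigenvalue / p


# Hypothetical example: 10 items that all correlate at r = 0.30.
p, r = 10, 0.30
example = [[1.0 if i == j else r for j in range(p)] for i in range(p)]
share = first_component_share(example)
print(f"First unrotated component explains {share:.1%} of total variance")
```

A share above 0.50 would correspond to the single-factor warning sign used in the text.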

Second, a full collinearity assessment was conducted following the approach recommended by Kock [81] as part of the PLS-SEM analysis. This procedure assesses CMV through Variance Inflation Factor (VIF) values computed for the latent variables, with each latent variable regressed on all of the others. VIF values below 3.3 indicate that common method bias is unlikely to substantially inflate the observed relationships between constructs; higher values would suggest that inter-construct correlations are inflated beyond what the theoretical model predicts [81]. As reported in Table 5 below, all inner-model VIF values in the present study were equal to 1.00, below the threshold of 3.3. Because each endogenous construct receives a single predictor (IA), these inner-model values equal 1.00 by construction.
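The full collinearity test can be sketched as follows. The sketch assumes, as in Kock’s procedure, that each construct’s VIF is 1/(1 − R2) from regressing its latent variable scores on all other latent variables. This equals the corresponding diagonal element of the inverse latent variable correlation matrix. By contrast, a VIF computed for an endogenous construct with only one predictor is 1.00 by construction, because there are no other predictors to explain that predictor. The example input uses the inter-construct correlations from Table 4 with the diagonal set to 1, assuming these are latent variable score correlations rounded to three decimals. It illustrates the computation only and is not a re-analysis of the study data. The helper names are hypothetical.

```python
def invert(matrix):
    """Gauss-Jordan inversion with partial pivoting."""
    n = len(matrix)
    aug = [list(row) + [1.0 if i == j else 0.0 for j in range(n)]
           for i, row in enumerate(matrix)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(aug[r][col]))
        aug[col], aug[pivot] = aug[pivot], aug[col]
        pivot_value = aug[col][col]
        aug[col] = [x / pivot_value for x in aug[col]]
        for r in range(n):
            if r != col:
                factor = aug[r][col]
                aug[r] = [x - factor * y for x, y in zip(aug[r], aug[col])]
    return [row[n:] for row in aug]


def full_collinearity_vifs(names, corr):
    """VIF_j = 1 / (1 - R2_j) from regressing construct j on all others,
    i.e. the j-th diagonal element of the inverse correlation matrix."""
    inverse = invert(corr)
    return {name: inverse[i][i] for i, name in enumerate(names)}


names = ["IA", "CL", "PA", "ATD"]
corr = [  # off-diagonal values from Table 4; diagonal set to 1
    [1.000, 0.627, 0.589, 0.398],
    [0.627, 1.000, 0.815, 0.440],
    [0.589, 0.815, 1.000, 0.342],
    [0.398, 0.440, 0.342, 1.000],
]
for name, vif in full_collinearity_vifs(names, corr).items():
    print(f"{name}: VIF = {vif:.2f}")
```

Each returned value can then be compared with the 3.3 cut-off cited above.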

3.5. Data Analysis Method

The empirical analysis was conducted using Partial Least Squares Structural Equation Modeling (PLS-SEM) with SmartPLS 4.0 software (SmartPLS GmbH, Oststeinbek, Germany). PLS-SEM was selected for three reasons. First, PLS-SEM is designed for prediction-oriented research, making it particularly suitable for the causal-predictive epistemological framework of this study [81,82]. Second, PLS-SEM is robust to non-normal data distributions and smaller sample sizes, characteristics common in organizational research in emerging economy contexts [83]. Third, PLS-SEM enables the simultaneous evaluation of complex latent variable models with multiple dependent constructs [81].

The analysis followed the standard two-stage approach recommended for PLS-SEM research [81]. In the first stage, the measurement model was evaluated by assessing (a) indicator reliability through standardized outer loadings (λ ≥ 0.70 threshold), (b) internal consistency reliability through Cronbach’s alpha (α ≥ 0.70) and composite reliability (CR ≥ 0.70), (c) convergent validity through average variance extracted (AVE ≥ 0.50), and (d) discriminant validity through the heterotrait–monotrait ratio of correlations (HTMT < 0.85 conservative threshold; < 0.90 acceptable threshold), supplemented by the Fornell–Larcker criterion [81,84]. In the second stage, the structural model was evaluated through path coefficients (β), t-values and p-values obtained through bootstrapping with 5,000 subsamples, coefficients of determination (R2), effect sizes (f2: small = 0.02, medium = 0.15, large = 0.35), predictive relevance through blindfolding Q2 values (Q2 > 0), out-of-sample predictive power through PLS Predict (Q2_predict), and global model fit through the standardized root mean square residual (SRMR ≤ 0.08). All structural relationships are interpreted as predictive associations consistent with the cross-sectional design.

3.6. Ethical Considerations

The study adhered to ethical standards for research involving human participants, consistent with the Declaration of Helsinki. Participation was voluntary, and anonymity and confidentiality were guaranteed throughout the data collection and analysis process. No personally identifiable information was collected. The research protocol was approved by the Vice-Rectorate for Research of Universidad César Vallejo. All participants provided informed consent prior to their participation.

3.7. Endogeneity Assessment (Gaussian Copula)

To address potential endogeneity concerns arising from omitted variable bias or reverse causality, a Gaussian copula approach was implemented following Park and Gupta (2012), as applied in PLS-SEM research by Hult et al. (2018). Copula terms were generated for the independent variable (AI adoption) and added to auxiliary regression models for each endogenous construct.
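A minimal sketch of the copula control term, assuming the common construction in which the empirical CDF of the regressor is passed through the inverse standard normal CDF, with ranks divided by n + 1 so that the largest observation stays finite. The identification condition for this approach is that the regressor is non-normally distributed; with a normally distributed regressor the copula term is nearly collinear with it. The significance of the copula coefficient is typically assessed by bootstrapping, which is omitted here. The function names and the ten IA and ATD scores are hypothetical.

```python
import bisect
import statistics
from statistics import NormalDist


def copula_term(x):
    """Inverse standard normal CDF of the empirical CDF of x (ties share the upper rank).
    Dividing by n + 1 keeps the largest observation away from probability 1."""
    ordered = sorted(x)
    n = len(x)
    std_normal = NormalDist()
    return [std_normal.inv_cdf(bisect.bisect_right(ordered, xi) / (n + 1)) for xi in x]


def ols_two_predictors(y, x1, x2):
    """Slopes of y on x1 and x2 (intercept included) from the centred normal equations."""
    my, m1, m2 = statistics.fmean(y), statistics.fmean(x1), statistics.fmean(x2)
    s11 = sum((a - m1) ** 2 for a in x1)
    s22 = sum((b - m2) ** 2 for b in x2)
    s12 = sum((a - m1) * (b - m2) for a, b in zip(x1, x2))
    s1y = sum((a - m1) * (c - my) for a, c in zip(x1, y))
    s2y = sum((b - m2) * (c - my) for b, c in zip(x2, y))
    det = s11 * s22 - s12 ** 2
    return (s22 * s1y - s12 * s2y) / det, (s11 * s2y - s12 * s1y) / det


# Hypothetical IA (regressor) and ATD (outcome) construct scores.
ia = [2.1, 3.4, 4.0, 4.4, 3.9, 1.8, 4.8, 4.6, 2.9, 4.2]
atd = [2.5, 3.0, 3.9, 4.1, 3.6, 2.2, 4.5, 4.7, 3.1, 3.8]
beta_ia, beta_copula = ols_two_predictors(atd, ia, copula_term(ia))
print(f"IA slope = {beta_ia:.3f}, copula term slope = {beta_copula:.3f}")
```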

4. Results

4.1. Measurement Model Assessment

The measurement model was initially evaluated for all five proposed constructs: AI adoption (IA), job competencies (CL), administrative productivity (PA), decision-making autonomy (ATD), and resistance to change (RC). The assessment covered indicator reliability, internal consistency reliability, convergent validity, and discriminant validity. Results for the four retained constructs are presented in Table 1, Table 2, Table 3 and Table 4.

It is important to note that the Resistance to Change (RC) construct exhibited insufficient psychometric properties during the measurement model evaluation. Specifically, RC returned a Cronbach’s alpha below the recommended threshold of 0.70 (Hair et al., 2019; 2021), and multiple indicator loadings fell below the minimum acceptable value of λ = 0.70 [81], indicating weak indicator reliability. The RC construct therefore failed to meet the minimum PLS-SEM thresholds for internal consistency reliability and indicator reliability recommended by Hair et al. [77,81]. In accordance with established psychometric practice, a construct that does not demonstrate adequate reliability cannot be meaningfully included in the structural model without risk of biasing path coefficient estimates and compromising model validity. Accordingly, the Resistance to Change construct was excluded from the structural model estimation. This exclusion is reported transparently and is discussed further in Section 5 and Section 7.

This exclusion decision was governed exclusively by objective psychometric criteria established before structural model estimation, consistent with the principle that measurement quality must be confirmed before structural relationships can be validly interpreted [77,81]. Including a construct with demonstrated reliability deficiencies would have introduced systematic measurement error into the structural model, distorting path coefficient estimates and compromising the validity of all reported relationships [80,84].
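For reference, Cronbach’s alpha compares the sum of the item variances with the variance of the summed scale: α = k/(k − 1) × (1 − Σ item variances / total-score variance). The sketch below computes it from raw item scores using the standard library. The three-item response matrix is hypothetical, since neither the RC item-level data nor the number of RC items is reported in this section.

```python
import statistics


def cronbach_alpha(items):
    """items: one list of respondent scores per indicator (all lists the same length)."""
    k = len(items)
    sum_item_var = sum(statistics.variance(col) for col in items)
    totals = [sum(row) for row in zip(*items)]  # summed scale score per respondent
    return k / (k - 1) * (1 - sum_item_var / statistics.variance(totals))


# Hypothetical five-point Likert responses: three indicators, six respondents.
rc_items = [
    [2, 4, 3, 5, 1, 4],
    [3, 2, 4, 4, 2, 5],
    [1, 4, 2, 3, 3, 2],
]
alpha = cronbach_alpha(rc_items)
print(f"alpha = {alpha:.3f} ({'meets' if alpha >= 0.70 else 'below'} the 0.70 threshold)")
```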

Table 1.

Reliability and Convergent Validity of the Measurement Model.

| Construct | Cronbach’s α | ρA | CR | AVE |
|---|---|---|---|---|
| IA (AI Adoption) | 0.901 | 0.904 | 0.920 | 0.590 |
| CL (Job Competencies) | 0.930 | 0.934 | 0.945 | 0.742 |
| PA (Administrative Productivity) | 0.919 | 0.922 | 0.937 | 0.714 |
| ATD (Decision-Making Autonomy) | 0.824 | 0.835 | 0.895 | 0.739 |

As shown in Table 1, all constructs demonstrated adequate internal consistency reliability. Cronbach’s alpha values ranged from 0.824 (ATD) to 0.930 (CL), and composite reliability values ranged from 0.895 (ATD) to 0.945 (CL), all exceeding the recommended threshold of 0.70 [81]. Dijkstra–Henseler rho (ρA) values, ranging from 0.835 (ATD) to 0.934 (CL), similarly confirmed reliability across all constructs. Convergent validity was confirmed for all constructs, with AVE values ranging from 0.590 (IA) to 0.742 (CL), all exceeding the 0.50 threshold [81].

As reported in Table 2, all indicator loadings met the recommended threshold of λ ≥ 0.70 [81]. Loadings ranged from 0.719 to 0.813 for IA, from 0.811 to 0.879 for CL, from 0.774 to 0.894 for PA, and from 0.830 to 0.883 for ATD. These results confirm adequate indicator reliability across all reflective constructs.
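The composite reliability and AVE values in Table 1 follow from the standardized loadings in Table 2. A minimal sketch, assuming the usual formulas for reflective constructs, CR = (Σλ)² / ((Σλ)² + Σ(1 − λ²)) and AVE = mean of λ². Applied to the rounded loadings, it gives values that agree with Table 1 to within rounding. The function names are illustrative.

```python
def composite_reliability(loadings):
    """CR = (sum of loadings)^2 / ((sum of loadings)^2 + sum of error variances)."""
    total = sum(loadings)
    error = sum(1 - lam * lam for lam in loadings)  # 1 - lambda^2 per standardized indicator
    return total * total / (total * total + error)


def average_variance_extracted(loadings):
    """AVE = mean of squared standardized loadings."""
    return sum(lam * lam for lam in loadings) / len(loadings)


# Standardized outer loadings as reported in Table 2.
table2 = {
    "IA": [0.787, 0.722, 0.813, 0.779, 0.786, 0.796, 0.738, 0.719],
    "CL": [0.874, 0.850, 0.874, 0.811, 0.879, 0.877],
    "PA": [0.830, 0.894, 0.830, 0.849, 0.774, 0.887],
    "ATD": [0.866, 0.830, 0.883],
}
for construct, loadings in table2.items():
    cr = composite_reliability(loadings)
    ave = average_variance_extracted(loadings)
    print(f"{construct}: CR = {cr:.3f}, AVE = {ave:.3f}")
```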

Table 2.

Outer Loadings of Reflective Indicators.

| Item | Construct | Loading (λ) | Status |
|---|---|---|---|
| IA1 | IA (AI Adoption) | 0.787 | Pass |
| IA2 | IA (AI Adoption) | 0.722 | Pass |
| IA3 | IA (AI Adoption) | 0.813 | Pass |
| IA4 | IA (AI Adoption) | 0.779 | Pass |
| IA5 | IA (AI Adoption) | 0.786 | Pass |
| IA6 | IA (AI Adoption) | 0.796 | Pass |
| IA7 | IA (AI Adoption) | 0.738 | Pass |
| IA8 | IA (AI Adoption) | 0.719 | Pass |
| CL1 | CL (Job Competencies) | 0.874 | Pass |
| CL2 | CL (Job Competencies) | 0.850 | Pass |
| CL3 | CL (Job Competencies) | 0.874 | Pass |
| CL4 | CL (Job Competencies) | 0.811 | Pass |
| CL5 | CL (Job Competencies) | 0.879 | Pass |
| CL6 | CL (Job Competencies) | 0.877 | Pass |
| PA1 | PA (Administrative Productivity) | 0.830 | Pass |
| PA2 | PA (Administrative Productivity) | 0.894 | Pass |
| PA3 | PA (Administrative Productivity) | 0.830 | Pass |
| PA4 | PA (Administrative Productivity) | 0.849 | Pass |
| PA5 | PA (Administrative Productivity) | 0.774 | Pass |
| PA6 | PA (Administrative Productivity) | 0.887 | Pass |
| ATD1 | ATD (Decision-Making Autonomy) | 0.866 | Pass |
| ATD2 | ATD (Decision-Making Autonomy) | 0.830 | Pass |
| ATD3 | ATD (Decision-Making Autonomy) | 0.883 | Pass |

Discriminant validity was assessed using the heterotrait–monotrait ratio of correlations (HTMT), as reported in Table 3. Five of the six construct pairs had HTMT values below the conservative threshold of 0.85. The PA–CL pair yielded HTMT = 0.879, which falls below the more lenient threshold of 0.90 [84], indicating adequate discriminant validity. This relatively high value is theoretically consistent with the conceptual proximity of PA and CL: both constructs reflect organizational sustainability outcomes that share antecedents related to AI adoption and workforce capability development. The Fornell–Larcker criterion (Table 4) is also satisfied, as the square root of each construct’s AVE (diagonal) exceeds its correlations with all other constructs. Together, these results indicate that the constructs in the measurement model are empirically distinct, supporting the validity of the proposed structural model.
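Both discriminant validity checks can be restated as simple comparisons. The sketch below encodes the AVE values from Table 1, the inter-construct correlations from Table 4, and the HTMT values from Table 3. It then applies the Fornell–Larcker rule (the square root of each construct’s AVE must exceed its correlation with every other construct) and the 0.85/0.90 HTMT thresholds. It operates on the reported, rounded values only; computing HTMT itself would require the item-level correlation matrix. The helper names are illustrative.

```python
import math

ave = {"IA": 0.590, "CL": 0.742, "PA": 0.714, "ATD": 0.739}  # Table 1
correlations = {  # Table 4, off-diagonal entries
    ("IA", "CL"): 0.627, ("IA", "PA"): 0.589, ("IA", "ATD"): 0.398,
    ("CL", "PA"): 0.815, ("CL", "ATD"): 0.440, ("PA", "ATD"): 0.342,
}
htmt = {  # Table 3
    ("CL", "IA"): 0.670, ("PA", "IA"): 0.635, ("ATD", "IA"): 0.451,
    ("PA", "CL"): 0.879, ("ATD", "CL"): 0.505, ("ATD", "PA"): 0.388,
}


def fornell_larcker_ok(a, b):
    """The smaller sqrt(AVE) of the two constructs must exceed their correlation."""
    return math.sqrt(min(ave[a], ave[b])) > correlations[(a, b)]


def htmt_verdict(value, conservative=0.85, lenient=0.90):
    if value < conservative:
        return "below 0.85"
    if value < lenient:
        return "below 0.90"
    return "above 0.90"


for pair in correlations:
    print(pair, "Fornell-Larcker satisfied:", fornell_larcker_ok(*pair))
for pair, value in htmt.items():
    print(pair, value, htmt_verdict(value))
```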

Table 3.

Heterotrait–Monotrait Ratio of Correlations (HTMT).

| Construct Pair | Construct 1 | Construct 2 | HTMT | Assessment |
|---|---|---|---|---|
| CL–IA | CL | IA | 0.670 | < 0.85 ✓ |
| PA–IA | PA | IA | 0.635 | < 0.85 ✓ |
| ATD–IA | ATD | IA | 0.451 | < 0.85 ✓ |
| PA–CL | PA | CL | 0.879 | < 0.90 ✓ |
| ATD–CL | ATD | CL | 0.505 | < 0.85 ✓ |
| ATD–PA | ATD | PA | 0.388 | < 0.85 ✓ |

Table 4.

Fornell–Larcker Criterion (Discriminant Validity).

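| Construct | IA | CL | PA | ATD |
|---|---|---|---|---|
| IA | 0.768 | 0.627 | 0.589 | 0.398 |
| CL | 0.627 | 0.861 | 0.815 | 0.440 |
| PA | 0.589 | 0.815 | 0.845 | 0.342 |
| ATD | 0.398 | 0.440 | 0.342 | 0.860 |

Note: Diagonal entries are the square roots of the AVE values; off-diagonal entries are inter-construct correlations.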

Table 5.

Collinearity Statistics (VIF) for Common Method Bias Assessment.

| Endogenous Construct | Predictor | VIF | Assessment |
|---|---|---|---|
| CL | IA | 1.00 | < 3.3 ✓ |
| PA | IA | 1.00 | < 3.3 ✓ |
| ATD | IA | 1.00 | < 3.3 ✓ |

4.2. Structural Model Assessment

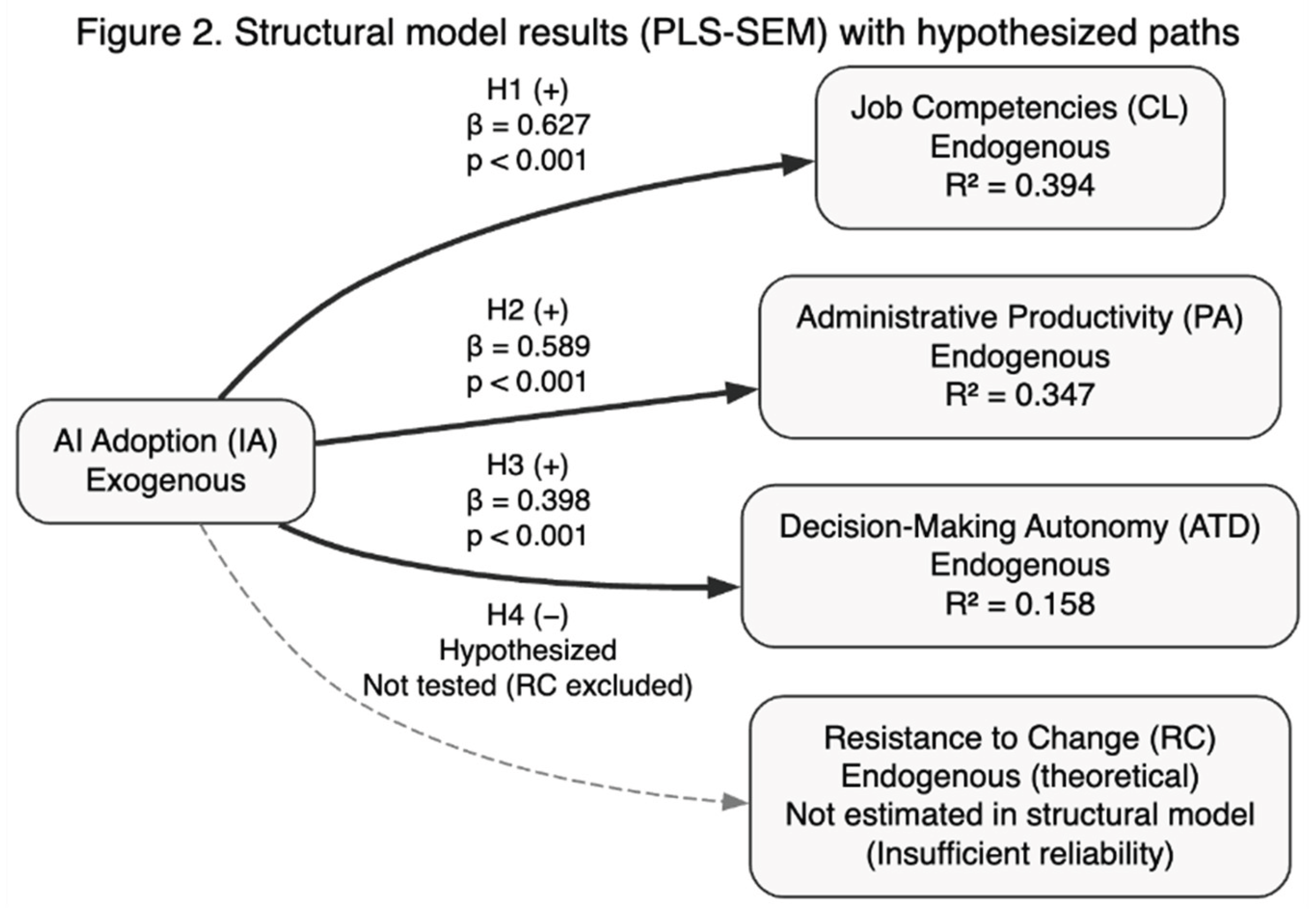

The structural model was evaluated by examining path coefficients, statistical significance (via bootstrapping with 5,000 subsamples), effect sizes (f2), coefficients of determination (R2), predictive relevance (Q2), out-of-sample predictive accuracy (PLS Predict), and global model fit (SRMR). The estimated structural relationships are visually summarized in Figure 2, while detailed statistical results are presented in Table 6, Table 7, Table 8 and Table 9; the Gaussian copula endogeneity test is reported in Table 10.

Table 10.

Gaussian Copula Endogeneity Test.

| Path | Copula Coefficient | 95% CI | t-value | p-value | Interpretation |
|---|---|---|---|---|---|
| IA → CL | 1.1617 | [-0.7071, 3.0304] | 1.218 | 0.2261 | No evidence of endogeneity |
| IA → PA | 1.7567 | [-0.1658, 3.6792] | 1.791 | 0.0765 | No evidence of endogeneity |
| IA → ATD | 2.8898 | [0.7487, 5.031] | 2.645 | 0.0096 | Potential endogeneity detected |

As reported in Table 10, the Gaussian copula test revealed no significant endogeneity for the IA → CL and IA → PA paths (p ≥ 0.05). However, the copula term for the IA → ATD path was significant (p < 0.05), indicating potential endogeneity in this specific structural path. This suggests that the relationship between AI adoption and decision-making autonomy may involve reciprocal dynamics or omitted variables.

4.3. Structural Model Interpretation

Although four hypotheses were initially proposed, the structural model evaluated only the three hypotheses for which measurement reliability was confirmed (H1, H2, H3). As noted in Section 4.1, H4 (AI adoption → Resistance to Change) could not be tested in the structural model because of the insufficient psychometric reliability of the RC construct; the SmartPLS path model accordingly includes only the three validated structural paths. The structural results, reported in Table 6, support all three tested hypotheses. H1 (IA → CL) was supported (β = 0.627, t = 11.55, p < 0.001, 95% CI [0.519, 0.731]), indicating a strong positive predictive association between AI adoption and job competencies. The effect size (f2 = 0.649) is classified as large [81], indicating that AI adoption is a substantively important predictor of job competency development among administrative staff.

H2 (IA → PA) was also supported (β = 0.589, t = 9.885, p < 0.001, 95% CI [0.470, 0.705]), confirming a positive and statistically significant association between AI adoption and administrative productivity, with a large effect size (f2 = 0.531). H3 (IA → ATD) was supported (β = 0.398, t = 5.267, p < 0.001, 95% CI [0.249, 0.544]), indicating a positive association between AI adoption and decision-making autonomy, with a medium effect size (f2 = 0.188).
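Because each endogenous construct has a single predictor, the reported explanatory power and effect sizes follow directly from the path coefficients. With one standardized predictor, R2 equals β², and since the model without IA explains no variance, f2 = R2 / (1 − R2). The short check below assumes these single-predictor identities; the function name is illustrative. It reproduces the reported R2 and f2 values from the β estimates within rounding.

```python
def single_predictor_effects(beta):
    """R2 and f2 for an endogenous construct explained by one standardized predictor."""
    r2 = beta ** 2        # R2 = beta^2 when there is only one predictor
    f2 = r2 / (1.0 - r2)  # (R2_included - R2_excluded) / (1 - R2_included), R2_excluded = 0
    return r2, f2


reported_betas = {"IA -> CL": 0.627, "IA -> PA": 0.589, "IA -> ATD": 0.398}
for path, beta in reported_betas.items():
    r2, f2 = single_predictor_effects(beta)
    print(f"{path}: R2 = {r2:.3f}, f2 = {f2:.3f}")
```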

Regarding explanatory power, AI adoption explained 39.4% of the variance in job competencies (R2 = 0.394), 34.7% of the variance in administrative productivity (R2 = 0.347), and 15.8% of the variance in decision-making autonomy (R2 = 0.158; Table 7). All Q2 values exceeded zero (CL: Q2 = 0.379; PA: Q2 = 0.309; ATD: Q2 = 0.131), confirming the predictive relevance of the model for all three outcomes. The PLS Predict analysis (Table 8) further indicated that the model outperforms the naïve benchmark for all constructs. Using the commonly applied benchmarks of 0, 0.25, and 0.50 for small, medium, and large predictive relevance, out-of-sample predictive accuracy was medium for CL (Q2_predict = 0.371) and PA (Q2_predict = 0.309) and small for ATD (Q2_predict = 0.107). Global model fit was supported by SRMR = 0.0686 ≤ 0.08 (Table 9).
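PLS Predict’s Q2_predict compares the model’s out-of-sample prediction errors with those of a naïve benchmark that predicts each holdout case with the training-sample mean. A minimal sketch of that ratio, assuming squared-error loss as in the usual definition. The holdout values, predictions, and training mean are hypothetical, and the k-fold cross-validation that generates the predictions is not shown.

```python
def q2_predict(actual, predicted, training_mean):
    """1 - SSE(model) / SSE(naive benchmark that always predicts the training-sample mean)."""
    sse_model = sum((a - p) ** 2 for a, p in zip(actual, predicted))
    sse_naive = sum((a - training_mean) ** 2 for a in actual)
    return 1.0 - sse_model / sse_naive


# Hypothetical holdout scores and model predictions for one indicator.
holdout = [3.2, 4.1, 2.8, 4.6, 3.9]
predictions = [3.4, 3.9, 3.1, 4.2, 3.7]
print(f"Q2_predict = {q2_predict(holdout, predictions, training_mean=3.6):.3f}")
```

A value above zero means the model’s predictions beat the mean benchmark; a value of exactly zero means they are no better.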

4.4. Robustness and Endogeneity Assessment

The model demonstrated satisfactory robustness across multiple diagnostics. Common method bias was not detected, and collinearity diagnostics were within acceptable thresholds. Predictive assessment (PLS-Predict) confirmed that the model outperformed naïve benchmarks across all constructs, supporting its out-of-sample predictive capability.

Regarding endogeneity, the Gaussian copula procedure revealed no significant bias for the IA → CL and IA → PA paths. However, the IA → ATD relationship exhibited a significant copula term (p < 0.05), indicating potential endogeneity in this specific structural path. This finding may reflect a bidirectional relationship between technological adoption and perceived decision-making autonomy, which is theoretically plausible in digital transformation contexts. The structural coefficient for IA → ATD is positive and statistically significant; however, because the significant copula term indicates that this estimate may be biased, its magnitude should be interpreted with caution.

5. Discussion

5.1. Overview of Principal Findings

This study provides robust empirical evidence that AI adoption is a significant positive predictor of three organizational sustainability outcomes (job competencies, administrative productivity, and decision-making autonomy) among administrative staff in educational institutions in Peru. The three empirically tested hypotheses (H1, H2, H3) were supported at the p < 0.001 level, with path coefficients and effect sizes that indicate substantively meaningful rather than merely statistically detectable relationships. H4, which proposed a negative association between AI adoption and resistance to change, could not be evaluated in the structural model because the Resistance to Change construct showed insufficient psychometric reliability during the measurement phase; this issue is discussed separately in Section 5.5. Collectively, the structural results position AI adoption as a viable driver of sustainable organizational performance in resource-constrained, emerging economy educational contexts.

5.2. AI Adoption and Job Competencies

The strong positive association between AI adoption and job competencies (β = 0.627, f2 = 0.649) is one of the most substantively significant findings of this study. This result extends prior research by demonstrating that, even in educational institutions operating under institutional and resource constraints, AI adoption is associated with competency development rather than competency displacement. This finding aligns with studies in Peruvian educational contexts showing that workers who engage with digital and AI tools develop higher digital self-efficacy and broader technical repertoires [55,56], and with bibliometric evidence identifying competency transformation as a dominant theme in AI-labor market research [54].

From a sustainable human resource management perspective, this finding carries particular significance. Job competency development represents a foundational dimension of human capital sustainability, that is, the capacity of organizations to continuously renew their workforce capabilities in response to environmental change [7,8]. In educational institutions, where administrative staff are increasingly required to navigate complex regulatory, data management, and communication tasks, AI-enabled competency enhancement can strengthen institutional capacity and long-term resilience. The large effect size (f2 = 0.649) suggests that AI adoption may be an important organizational lever for workforce capability development among educational administrators in emerging economy contexts.

A plausible mechanism underlying this association is the competency learning effect embedded in AI tool use. As administrative staff engage with AI-driven systems for scheduling, data management, and communication, they are compelled to develop practical digital literacies and critical data interpretation skills, competencies that extend beyond the immediate task context. This process aligns with UTAUT’s facilitating conditions dimension: when institutional support and training provision accompany AI adoption, competency development is reinforced [27].

Before turning to the remaining findings, it is appropriate to reaffirm the sustainability scope of this study. The conceptualization of organizational sustainability adopted here centers on social and economic sustainability, understood as the capacity of organizations to develop human capital, sustain operational effectiveness, and preserve employee agency over time. Environmental sustainability, though integral to the broader sustainability discourse, falls outside the measurement framework and theoretical boundaries of the present research. This delimitation does not diminish the study’s sustainability relevance; rather, it reflects a deliberate theoretical positioning consistent with the social sustainability literature [7,15] and the SDG agenda for quality education and decent work [73]. The three outcomes examined (job competency development, administrative productivity, and decision-making autonomy) each represent theoretically grounded dimensions of sustainable organizational performance that are particularly salient in resource-constrained educational institutions in emerging economy contexts.

5.3. AI Adoption and Administrative Productivity

The positive and significant association between AI adoption and administrative productivity (β = 0.589, f2 = 0.531) indicates that AI adoption is associated with perceived efficiency gains in educational administrative work. This result is consistent with prior organizational research demonstrating that AI-assisted automation of routine tasks, including data entry, scheduling, and report generation, reduces processing times, decreases errors, and frees cognitive resources for higher-value activities [2,65,66]. The finding also corroborates Drucker’s [34] knowledge productivity framework: in knowledge-intensive organizational environments, AI serves as a productivity multiplier by enabling workers to focus on strategic rather than procedural work.

The contextual implications of this finding for emerging economy educational institutions are particularly noteworthy. In Chimbote, where public educational institutions operate with constrained budgets, limited administrative personnel, and high regulatory compliance demands, productivity improvements associated with AI adoption represent a material contribution to institutional sustainability. Administrative efficiency gains directly affect the institution’s capacity to fulfill its educational mission, allocate resources strategically, and maintain service quality in contexts where staff workload is high and human capital is limited. This connection between micro-level productivity and macro-level institutional sustainability underscores the value of examining AI’s organizational effects through a sustainability lens [15,16].

5.4. AI Adoption and Decision-Making Autonomy

The positive association between AI adoption and decision-making autonomy (β = 0.398, f2 = 0.188) is theoretically significant, as it suggests that AI adoption in this context functions as an autonomy-supporting rather than autonomy-constraining mechanism. This result contrasts with theoretical concerns raised by Brynjolfsson and McAfee [70] regarding the risk of AI eroding cognitive agency, and with findings from contexts where AI is experienced as externally imposing automated decisions. Instead, the present findings align with Davenport and Kirby’s [38] complementary intelligence model: when AI is positioned as a decision-support tool that provides relevant information rather than as a decision-replacement system, workers experience expanded rather than reduced decision capacity.

The smaller effect size (f2 = 0.188) relative to the competency and productivity paths is theoretically interpretable. Decision-making autonomy is a more psychologically embedded outcome than task performance or competency acquisition: it depends not only on the functional availability of AI tools but also on the organizational culture, power structures, and individual psychological readiness surrounding their use [36,37]. In emerging economy educational institutions characterized by historically hierarchical governance and limited prior exposure to advanced digital decision-support systems, the autonomy-enhancing effects of AI are likely to be more moderate and contingent on factors not fully captured by the current model. This interpretation is consistent with the smaller but still meaningful R2 (0.158) and Q2 (0.131) values for ATD relative to the other constructs.

The coefficient of determination for decision-making autonomy (R2 = 0.158) should be interpreted not as a methodological limitation but as a theoretically informative finding that refines the model’s explanatory scope. In social science organizational research, R2 values in the range of 0.10 to 0.25 are widely considered meaningful for single-predictor models examining psychologically complex outcomes [81], and PLS-SEM research in organizational and educational contexts regularly reports R2 values of comparable or lower magnitude [83]. Autonomy is a multidimensional psychological construct whose variance is distributed across a range of organizational, structural, and individual antecedents, including institutional governance structures, managerial leadership style, role clarity, and prior digital experience, none of which are captured by the current model by design [36,37]. The R2 of 0.158 thus indicates that AI adoption is a statistically significant and theoretically meaningful contributor to decision-making autonomy, while acknowledging that autonomy is co-determined by broader contextual factors. This interpretation is consistent with Self-Determination Theory [36,68], which positions autonomy as a deeply embedded psychological need shaped by multiple layers of organizational context. Future research incorporating organizational culture, change management quality, and role design as additional predictors could substantially improve explanatory power for this outcome.

Importantly, from a social sustainability perspective, the positive AI–autonomy association suggests that AI adoption in this context strengthens rather than undermines the psychological conditions of employment, specifically the self-determination that SDT identifies as fundamental to well-being and intrinsic motivation at work [36]. This finding has direct implications for the social dimension of organizational sustainability in educational institutions, where preserving the professional agency of administrative staff is essential for maintaining work quality, institutional stability, and employee well-being.

5.5. Resistance to Change: Measurement Challenges and Theoretical Implications

Although H4 (AI adoption → Resistance to Change) was theoretically grounded and conceptually integrated into the research model, the Resistance to Change construct could not be incorporated into the structural model due to insufficient internal consistency reliability. This finding warrants a contextually situated discussion, as it holds theoretical and methodological implications beyond a simple instrumentation failure.

Several contextual explanations may account for the weak reliability observed for the Resistance to Change scale in this sample. First, respondent heterogeneity may have played a significant role. Administrative staff in the sampled educational institutions varied substantially in age, digital experience, and institutional tenure, so the construct may manifest differently across subgroups in ways that attenuate inter-item correlations and reduce overall scale coherence. Resistance to change is a psychologically complex and multidimensional phenomenon; in a heterogeneous sample, different respondents may understand and experience resistance through qualitatively different mechanisms (behavioral for some, affective or cognitive for others), potentially undermining the assumption of unidimensionality that underlies reflective measurement [30,31].

Second, the organizational context may reflect a transitional or adaptation phase of AI integration in which resistance attitudes have not yet crystallized into stable, measurable patterns. When organizations are at early stages of AI adoption, workers’ responses may be ambivalent, context-dependent, and temporally unstable, producing inconsistent item responses that lower reliability estimates [32,33]. In this reading, the weak reliability does not necessarily mean that resistance is absent from this context, but rather that it may not yet be sufficiently formed or uniformly conceptualized by respondents to be captured reliably by a standardized scale.

Third, social desirability bias, a recognized threat in organizational survey research [80], may have contributed to response distortion on resistance items. In institutional settings where AI adoption is positively framed by management, respondents may have underreported resistance attitudes to align with perceived organizational norms, even though participation in the study was voluntary and anonymous. This reporting dynamic would attenuate variance on resistance items, reducing covariance among indicators and consequently depressing reliability estimates.
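The mechanism described above, in which attenuated covariance among indicators depresses reliability, can be made concrete with the standardized form of Cronbach’s alpha, α = k·r̄ / (1 + (k − 1)·r̄), where r̄ is the mean inter-item correlation. The five-item scale and correlation values below are hypothetical. They simply show how a drop in r̄ can move alpha below the 0.70 threshold.

```python
def standardized_alpha(k, mean_r):
    """Standardized Cronbach's alpha from the number of items k and the mean inter-item correlation."""
    return k * mean_r / (1 + (k - 1) * mean_r)


# Hypothetical 5-item scale: weaker inter-item correlation lowers alpha.
for mean_r in (0.50, 0.40, 0.30, 0.20):
    alpha = standardized_alpha(5, mean_r)
    print(f"mean r = {mean_r:.2f} -> alpha = {alpha:.3f} ({'>= 0.70' if alpha >= 0.70 else '< 0.70'})")
```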

From a theoretical standpoint, this finding reinforces the importance of contextually validating organizational behavior constructs before applying them in emerging economy settings. Resistance to change scales developed and validated in Western, high-digitalization organizational contexts may not transfer directly to Latin American educational institutions, where the nature, visibility, and expression of resistance may be culturally and institutionally modulated. This observation is consistent with calls in the cross-cultural organizational research literature for context-sensitive measurement instrument development, particularly for psychologically complex constructs in transitional digital environments [77,81]. Future research should prioritize the development and validation of contextually adapted Resistance to Change scales for educational administrative settings in emerging economies.

5.6. Sustainability and SDG Implications

Considered collectively, the findings of this study demonstrate that AI adoption is associated with improvements across three complementary dimensions of organizational sustainability in educational institutions. First, competency development directly advances SDG 4 (Quality Education), Target 4.4, by strengthening the technical and professional skills of educational administrative personnel, thereby enhancing institutional human capital and workforce sustainability [73]. Second, productivity enhancement supports SDG 8 (Decent Work and Economic Growth), Target 8.2, by contributing to higher levels of institutional efficiency and economic performance through technological modernization, a critical sustainability objective for resource-constrained public institutions [15]. Third, the positive AI–autonomy association advances the social sustainability of work by preserving and enhancing professional agency, contributing to decent work conditions consistent with SDG 8’s broader mandate and with social sustainability frameworks emphasizing human-centered organizational governance [17].

More broadly, these findings contribute to the growing literature on responsible AI adoption in organizations by showing that, when AI is implemented in a context where workers engage actively with AI tools and have access to institutional support, its adoption does not necessarily generate the negative sustainability consequences that are theoretically possible (competency displacement, autonomy erosion, institutional fragility). Instead, in this emerging-economy educational context, AI adoption appears to function as an organizational capability that simultaneously advances workforce development, institutional efficiency, and professional self-determination.

6. Conclusions

This study examined the predictive relationships between AI adoption and three organizational sustainability outcomes (job competencies, administrative productivity, and decision-making autonomy) among administrative staff in educational institutions in Peru, using a PLS-SEM approach. The findings provide robust empirical support for all three hypotheses: AI adoption is a positive and significant predictor of job competencies (β = 0.627, f² = 0.649), administrative productivity (β = 0.589, f² = 0.531), and decision-making autonomy (β = 0.398, f² = 0.188).
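As a consistency check on the effect sizes reported above, Cohen's f² can be recovered from the path coefficients under one assumption: that each outcome construct has AI adoption as its only predictor, which the direct-effects specification implies. Under that assumption the standardized path coefficient equals the correlation, so R² = β², and f² = (R²_included − R²_excluded)/(1 − R²_included) reduces to β²/(1 − β²). The Python sketch below applies this to the three reported coefficients and labels each with Cohen's conventional benchmarks (0.02 small, 0.15 medium, 0.35 large). It is an illustrative recomputation with hypothetical helper names, not the authors' software output.

```python
def f_squared_single_predictor(beta: float) -> float:
    """Cohen's f^2 when one standardized predictor explains the outcome.

    General form: f^2 = (R2_included - R2_excluded) / (1 - R2_included).
    With a single predictor, R2_excluded = 0 and R2_included = beta ** 2.
    """
    r_squared = beta ** 2
    return r_squared / (1.0 - r_squared)


def cohen_label(f2: float) -> str:
    """Conventional benchmarks: 0.02 small, 0.15 medium, 0.35 large."""
    if f2 >= 0.35:
        return "large"
    if f2 >= 0.15:
        return "medium"
    if f2 >= 0.02:
        return "small"
    return "negligible"


# Path coefficients and f^2 values as reported in the conclusions.
reported = {
    "job competencies": (0.627, 0.649),
    "administrative productivity": (0.589, 0.531),
    "decision-making autonomy": (0.398, 0.188),
}

for outcome, (beta, f2_reported) in reported.items():
    f2 = f_squared_single_predictor(beta)
    print(f"{outcome}: recomputed f2 = {f2:.3f}, reported {f2_reported}, {cohen_label(f2)}")
```

Under this assumption the recomputed values (about 0.648, 0.531, and 0.188) match the reported f² within the rounding of β. The effects on job competencies and administrative productivity classify as large, and the effect on decision-making autonomy as medium.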

From a theoretical perspective, this study advances the organizational sustainability literature by providing empirical evidence that AI adoption, operationalized through a multidimensional UTAUT-grounded instrument, is a substantive predictor of workforce capability development, institutional efficiency, and professional agency in educational administrative contexts. The integration of UTAUT, Labor Productivity Theory, and Self-Determination Theory within a predictive PLS-SEM framework offers a coherent theoretical account of how AI adoption relates to organizational sustainability outcomes, extending prior research beyond large organizations and developed-economy settings.

Methodologically, this study demonstrates the value of PLS-SEM for examining complex, multi-outcome predictive models in organizational research conducted in emerging-economy contexts characterized by smaller sample sizes and non-normal data distributions. The measurement model confirmed strong reliability and validity for three of the four proposed constructs. The Resistance to Change construct was excluded from structural estimation because of insufficient internal consistency reliability; this finding itself constitutes a methodological contribution, underscoring the importance of rigorous psychometric validation before structural model estimation in PLS-SEM research conducted in novel organizational contexts [77,81]. The PLSpredict analysis confirmed that the retained model provides meaningful out-of-sample predictive accuracy beyond a naïve benchmark for all three confirmed outcomes.
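The out-of-sample logic behind the PLSpredict result can be sketched as follows. Q²_predict compares the model's squared prediction errors with those of a naive benchmark that predicts each holdout case with the training-sample mean: Q²_predict = 1 − SSE_model / SSE_naive, so positive values indicate that the model outperforms the benchmark. The Python sketch below is a simplified stand-in, not the PLSpredict procedure itself. A single ordinary least squares regression on one simulated predictor replaces the full PLS path model. The 98 simulated cases, the 10 folds, and the function name `q2_predict` are assumptions for illustration only.

```python
import numpy as np


def q2_predict(x: np.ndarray, y: np.ndarray, n_folds: int = 10, seed: int = 0) -> float:
    """Cross-validated Q^2 in the spirit of PLSpredict (one predictor, one outcome).

    Each holdout fold is predicted (a) by a linear model fitted on the other folds
    and (b) by the naive benchmark, the training-fold mean of y.
    Q^2 = 1 - SSE(model) / SSE(naive); values above 0 beat the naive benchmark.
    """
    rng = np.random.default_rng(seed)
    folds = np.array_split(rng.permutation(len(y)), n_folds)
    sse_model = 0.0
    sse_naive = 0.0
    for test_idx in folds:
        train_mask = np.ones(len(y), dtype=bool)
        train_mask[test_idx] = False
        # OLS fit on the training folds only; polyfit returns [slope, intercept].
        slope, intercept = np.polyfit(x[train_mask], y[train_mask], deg=1)
        y_hat = intercept + slope * x[test_idx]
        sse_model += float(np.sum((y[test_idx] - y_hat) ** 2))
        sse_naive += float(np.sum((y[test_idx] - y[train_mask].mean()) ** 2))
    return 1.0 - sse_model / sse_naive


# Hypothetical standardized scores for 98 respondents (simulated, not study data).
data_rng = np.random.default_rng(42)
ai_adoption = data_rng.standard_normal(98)
indicator = 0.6 * ai_adoption + 0.8 * data_rng.standard_normal(98)
print(f"Q2_predict on simulated data: {q2_predict(ai_adoption, indicator):.3f}")
```

As a sanity check, a perfectly linear outcome such as `q2_predict(ai_adoption, 2 * ai_adoption + 1)` returns a value of essentially 1, because the model's holdout errors vanish while the mean benchmark's do not.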

From a practical perspective, the findings offer actionable implications for educational administrators, institutional policymakers, and AI governance frameworks. Rather than viewing AI adoption as a peripheral operational tool, educational institutions should treat it as a strategic sustainability capability, one that requires deliberate investment in training, participatory implementation, and organizational cultures that position AI as a complement to, rather than a replacement for, professional judgment. For policymakers, the results suggest that supporting AI integration in educational administration through infrastructure investment, training programs, and governance frameworks can generate simultaneous gains across the workforce, productivity, and autonomy dimensions of institutional sustainability, advancing SDGs 4, 8, and 9 at the organizational level.

7. Limitations and Future Research Directions

Despite its theoretical and empirical contributions, this study presents several limitations that should be acknowledged when interpreting its findings. First, the cross-sectional research design limits the ability to establish temporal precedence and definitive causal relationships between AI adoption and organizational sustainability outcomes. Although the structural model reveals strong predictive associations, the simultaneous measurement of all constructs does not allow for conclusive causal inference. It remains possible that workers with higher baseline competencies, productivity, and autonomy may perceive AI tools more favorably—a reverse-causation dynamic that cannot be ruled out with cross-sectional data. Future research employing longitudinal or cross-lagged panel designs would provide stronger evidence regarding causal directionality.

Second, the reliance on self-reported perceptual measures introduces a potential risk of common method variance and perceptual inflation. Although statistical diagnostics, including Harman’s single-factor test and a full collinearity VIF assessment, indicated that common method bias is unlikely to constitute a severe threat, the use of a single, methodologically homogeneous data source may nonetheless amplify observed associations. Future research should incorporate multi-source data collection approaches, including institutional records, supervisor assessments, or objective performance indicators, to strengthen methodological robustness.
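The full collinearity assessment mentioned above regresses each latent variable score on all the others and computes VIF_j = 1/(1 − R²_j). Uniformly low values are read as evidence against a dominant common-method factor. The Python sketch below implements that computation with NumPy least squares on simulated scores for four hypothetical constructs. Both the simulated data and the 3.3 cutoff are illustrative assumptions. The cutoff is a threshold commonly used in PLS-SEM practice, chosen here because the text does not state which one was applied.

```python
import numpy as np


def full_collinearity_vifs(scores: np.ndarray) -> np.ndarray:
    """VIF of each column when regressed on all other columns (with intercept).

    scores: (n_respondents, n_constructs) matrix of latent variable scores.
    VIF_j = 1 / (1 - R2_j), where R2_j comes from an OLS regression of
    column j on the remaining columns.
    """
    n, p = scores.shape
    vifs = np.empty(p)
    for j in range(p):
        y = scores[:, j]
        others = np.delete(scores, j, axis=1)
        design = np.column_stack([np.ones(n), others])
        coef, *_ = np.linalg.lstsq(design, y, rcond=None)
        resid = y - design @ coef
        r_squared = 1.0 - (resid @ resid) / np.sum((y - y.mean()) ** 2)
        vifs[j] = 1.0 / (1.0 - r_squared)
    return vifs


# Hypothetical scores for four constructs sharing a weak common factor (simulated).
rng = np.random.default_rng(7)
common = rng.standard_normal(98)
scores = np.column_stack([0.5 * common + rng.standard_normal(98) for _ in range(4)])
vifs = full_collinearity_vifs(scores)
print(np.round(vifs, 2), "all below 3.3:", bool(np.all(vifs < 3.3)))
```

Replacing one column with a near copy of another (for example, column 0 plus a small amount of noise) drives both of those VIFs far above the cutoff, which is the pattern the diagnostic is designed to flag.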

Third, the regional scope of this study—limited to educational institutions in Chimbote, Ancash, Peru—constrains the generalizability of the findings. Although Chimbote provides a theoretically relevant and underresearched emerging economy context, institutional conditions, cultural norms, and managerial practices in other regions or countries may substantially differ, potentially moderating the AI–sustainability relationships observed in this study. Cross-national and cross-regional comparative studies would help assess the external validity of the proposed model.

Fourth, while the sample of 98 respondents exceeds the minimum required by statistical power analysis, the overall sample size remains relatively modest in absolute terms. Future studies should target larger samples, ideally spanning multiple institutional types (public and private) and educational levels, to enable multi-group analyses and improve model generalizability.
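The text does not specify which power analysis produced the minimum sample size. An independent cross-check can use a different, swapped-in technique: the inverse square root method of Kock and Hadaya, which at a 5% significance level and 80% power requires n > (2.486/|β_min|)², where β_min is the smallest path coefficient in the model. The Python sketch below applies it to the smallest reported coefficient, β = 0.398 for decision-making autonomy. The function name is hypothetical.

```python
import math


def inverse_square_root_min_n(beta_min: float, constant: float = 2.486) -> int:
    """Smallest integer n satisfying n > (constant / |beta_min|) ** 2.

    The constant 2.486 corresponds to a 5% significance level and 80% power
    in the inverse square root method (Kock & Hadaya).
    """
    threshold = (constant / abs(beta_min)) ** 2
    return math.floor(threshold) + 1


smallest_path = 0.398  # decision-making autonomy, the weakest reported path
n_min = inverse_square_root_min_n(smallest_path)
print(f"minimum n = {n_min}; observed n = 98; adequate: {98 >= n_min}")
```

This yields a minimum of 40 respondents, which the sample of 98 exceeds. Applying the method post hoc to an observed coefficient is a simplification, so the check is indicative only.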

Fifth, the structural model examined direct relationships between AI adoption and the three sustainability outcomes without explicitly modeling potential mediating or moderating mechanisms. Future research should investigate mediating variables—such as organizational learning culture, digital self-efficacy, or AI governance quality—as well as moderators including leadership style, institutional formalization, and workforce AI literacy levels, to develop a more comprehensive understanding of the boundary conditions under which AI adoption generates positive sustainability outcomes. Cross-country comparisons examining whether the AI–sustainability relationships observed in Peru generalize to other Latin American or emerging economy educational contexts would constitute a particularly valuable extension of this research.

Sixth, and importantly, the Resistance to Change construct was excluded from the structural model due to insufficient psychometric robustness. The RC scale failed to reach the minimum recommended Cronbach’s alpha threshold of .70 and showed weak indicator loadings across multiple items, precluding its valid inclusion in the structural estimation [77,81]. This exclusion should not be interpreted as evidence that resistance to change is theoretically irrelevant in this context; rather, it reflects the psychometric limitations of the measurement instrument as deployed in this specific organizational and cultural setting. The construct remains theoretically important, and its exclusion represents a substantive gap that future research must address. Specifically, there is a clear need for: (a) the development and contextual validation of resistance to change scales adapted to emerging-economy educational settings; (b) the use of validated multidimensional RC scales that distinguish between affective, cognitive, and behavioral resistance components; and (c) longitudinal research designs capable of capturing change dynamics and resistance trajectories as AI adoption matures in these institutions. Moderation models that include organizational change readiness, change management quality, or institutional culture as boundary conditions may also illuminate the conditions under which resistance to AI adoption is most and least pronounced in educational contexts.

As noted in the fifth limitation, the present model focuses on direct predictive relationships to preserve parsimony and predictive clarity; the mediating mechanisms identified there remain a priority for future research.

Moreover, although the RC construct was excluded because of insufficient internal consistency reliability under a reflective specification, future research may consider modeling resistance to change as a formative construct.

Author Contributions

Conceptualization, J.A.M. and A.V.G.; methodology, J.A.M. and A.V.G.; software, M.A.C.-P.; validation, M.A.C.-P., J.A.M. and A.V.G.; formal analysis, M.A.C.-P.; investigation, J.A.M. and A.V.G.; resources, J.A.M. and A.V.G.; data curation, M.A.C.-P.; writing – original draft preparation, M.A.C.-P., J.A.M. and A.V.G.; writing – review and editing, M.A.C.-P., J.A.M. and A.V.G.; visualization, J.A.M. and A.V.G.; supervision, M.A.C.-P.; project administration, M.A.C.-P., J.A.M. and A.V.G. All authors have read and agreed to the published version of the manuscript.

Funding

The authors declare that financial support was received for the publication of this article. The APC was funded by Universidad César Vallejo.

Institutional Review Board Statement

The study was conducted in accordance with the Declaration of Helsinki and approved by the Institutional Review Board of Universidad César Vallejo (School of Business Administration), approval code N.° 00142-2025/CEI-AE.

Informed Consent Statement

All participants provided informed consent prior to their participation in the study.

Data Availability Statement

The data presented in this study are available on request from the corresponding author. The data are not publicly available due to privacy and ethical restrictions.

Generative AI Statement

Generative artificial intelligence tools were used to support language editing and improve clarity during manuscript preparation. No generative AI was used for data collection, data generation, or data manipulation. All statistical analyses were conducted using the original dataset collected from the participants.

Acknowledgments

The authors gratefully acknowledge the participation of the organizations and individuals who contributed to this study and generously shared their time and insights.

Conflicts of Interest

The authors declare no conflicts of interest. The funders had no role in the study.

References

- Dwivedi, Y.K.; Hughes, L.; Ismagilova, E.; Aarts, G.; Coombs, C.; Crick, T.; Duan, Y.; Dwivedi, R.; Edwards, J.; Eirug, A.; et al. Artificial intelligence (AI): Multidisciplinary perspectives on emerging challenges, opportunities, and agenda for research, practice and policy. Int. J. Inf. Manag. 2021, 57, 101994.

- Davenport, T.H.; Ronanki, R. Artificial intelligence for the real world. Harv. Bus. Rev. 2018, 96, 108–116. Available online: https://hbr.org/2018/01/artificial-intelligence-for-the-real-world (accessed on 28 February 2026).