1. Introduction

1.1. Overview

Wind speed forecasting techniques are applied in many fields that involve integrating green energy, power systems, and climate change analysis. However, wind exhibits complex, intermittent spatial and temporal dynamics that depend on atmospheric conditions, topography, and local turbulent eddies. While linear models effectively explain global behaviour and ignore any local dependencies, data-driven forecasting techniques lack interpretability and any quantification of uncertainty.

In the context of spatio-temporal forecasting, Gaussian Process (GP) approaches offer the advantage of a probabilistic treatment of uncertainty while enabling the covariance structure to be modelled economically. Despite these advantages, GPs tend to be computationally expensive, especially for large datasets, and are also computationally redundant. A hybrid approach to combining GPs is to tailor the models to the residuals of a linear mean model. Generally, wind speed is characterised differently across the country, with trends ranging from low-elevation coastal regions to high-elevation interior regions. Therefore, unless the spatial variability is modelled appropriately, the forecasting could be inaccurate. To address the above challenges, a model is proposed that uses a hybrid method to model regional wind speed. The model partitions regional wind speeds into a mean model and a GP model for the errors.

1.2. Literature Review

Accurate forecasting of wind speeds is critical for integrating wind energy into the grid and for various meteorological needs. Unfortunately, the chaotic behaviour of wind, influenced by large-scale atmospheric conditions, local topography, and turbulence, makes wind speed forecasting a complex problem. This review traces the chronological and thematic developments of wind speed forecasting techniques, from earlier physical and statistical models to modern hybrid and probabilistic models. It focuses on the developments of Gaussian Process Regression (GPR) and the utilisation of clustering techniques, both of which are significant to the proposed research. A brief overview of the major models is presented in

Table 1.

1.2.1. Classical Methods: Physical and Statistical Models

Traditionally, wind speed forecasting was carried out using Numerical Weather Prediction (NWP) models, which simulate atmospheric physics to predict wind speeds. These are certainly essential in wind speed forecasting; however, these models are subject to systematic errors and lack spatial resolution, which are often overcome by post-processing these results to increase their accuracy locally [

1]. To overcome these problems, statistical time-series models such as ARIMA and SARIMA were widely applied. These models are effective in capturing linear trends and seasonality in wind speeds; however, they are not effective in capturing rapid, non-linear changes in wind speeds, which are characteristic of wind speeds in general [

2]. More adaptive models, such as Kalman Filters, were then applied to correct errors in short-term forecasts; however, these filters rely on linear assumptions and are prone to noise, limiting their application to wind speed forecasting over longer time scales and in spatially heterogeneous domains [

3].

1.2.2. The Ascent of Machine Learning and Deep Learning

The limitations of linear models triggered the development and application of machine learning (ML) and deep learning (DL) techniques. ML and DL models, including random forests, Convolutional Neural Networks (CNNs), and Long Short-Term Memory (LSTM), are very effective at learning complex, non-linear relationships directly from historical data, often resulting in significant accuracy gains in forecasts [

4]. The hybrid model, combining NWP model results and LSTM, is also very effective for forecasting complex terrain, including non-linear relationships and temporal dependencies [

5]. However, ML/DL-based models are computationally intensive, require careful tuning, and often struggle to generalise across different locations. Additionally, ML/DL models are considered "black box" models, which limits their interpretability, making interpretability a driving need for finding models that are effective, yet transparent and physically plausible [

4,

5].

1.2.3. Probabilistic Forecasting and Gaussian Process Regression

As the need to quantify uncertainty in grid operations increases, probabilistic forecasting has become a crucial area of study. Unlike point forecasts, probabilistic forecasting can offer predictive distributions for better risk-informed decision-making [

6]. Bayesian methods, including Bayesian Neural Networks and Bayesian Optimised models, inherently offer uncertainty quantification. However, their site-specific calibration can be a hindrance for real-time applications [

7].

Within this paradigm, Gaussian Process Regression (GPR) has emerged as a prominent non-parametric tool for probabilistic forecasting. GPR is particularly prized for its capacity to yield a mean estimate and a well-founded interval estimate, which capture the essential randomness of wind phenomena [

8]. The efficacy of GPR is critically dependent on its covariance function (kernel), which embodies assumptions regarding the underlying process, e.g., smoothness, periodicity, etc. GPR has been successfully utilised in hybrid frameworks, e.g., coupling GPR with support vector regression to post-process NWP forecasts for short-term forecasting (1 to 6 hours ahead) [

9]. Another hybrid model, which is a focus of this research, is a linear mean model coupled with a GPR model for forecasting residuals. This model balances interpretability with the power of GPR for modelling complex, spatially correlated error structures. However, standard GPR is known to scale poorly to large datasets, requiring a cubic time complexity (

) for its implementation [

9,

10].

1.2.4. Spatio-Temporal Modelling and the Role of Clustering

The spatial correlations in wind fields suggest that using data from adjacent areas can improve local predictions. A more advanced version of GPR, known as kriging, which is analogous to geostatistics, has been employed to interpolate wind fields while accounting for spatial correlations. It provides predictions along with their uncertainties [

9]. More recent models seek to address complex spatio-temporal interactions. For example, dynamic versions of GPR that incorporate local clustering for improving accuracy have been presented [

11]. Bayesian models for spatio-temporal forecasting for renewable energy forecasting have also been presented, but their success relies on the availability of dense data [

12].

A notable result of the recent research is the recognition that wind speed statistical properties are not necessarily homogeneous over vast geographical areas. Various regimes, depending on the presence or absence of factors such as coastal areas and altitude, exhibit unique properties, including levels of non-stationarity, turbulence, and autocorrelation. This means that rather than seeking an optimal model applicable over the global domain, the regime-based approach of dividing the domain into homogeneous areas is more advisable. Grouping stations by their physical properties, such as geographic coordinates and altitude, is a statistically sound approach for dividing the domain.

Recent research emphasises the potential of using the latest GP variants. For example, the application of hybrid GP approaches, which integrate spatial correlation with corrected NWP forecast information, has been found to offer better accuracy than the standard GP model [

13]. Another GP model variant, the multi-task GP, is also found to be beneficial, particularly when applied to data-scarce locations, where information from data-rich locations can be exploited [

14]. However, the current GP model variants, including the complex residual model, have the limitation that their added value is assumed to be applicable uniformly across the study area.

1.3. Summary of Literature Review

This research addresses the limitation of the complex residual model directly by proposing a hybrid linear-GP model, but not over the entire study area, but over geographically and elevation-based clusters. This is to evaluate the added value of the complex residual model across regimes, providing a physically informed, computationally efficient tool to improve the accuracy of wind speed forecasts.

A summary of the comparison of wind speed forecasting methods, including the key studies discussed, highlighting their temporal focus, uncertainty quantification, computational cost and limitations, is given in

Table 1.

1.4. Contribution and Research Highlights

Spatiotemporal wind forecasting models have a critical limitation in accounting for wake effects from wind turbines, which can significantly reduce forecast accuracy and the operational efficiency of wind farms [

15]. Deep learning–based wind forecasting models have demonstrated strong capability to learn nonlinear temporal dependencies and improve short-term prediction accuracy; however, their black-box nature, high data and computational demands, and lack of inherent uncertainty quantification limit their interpretability and reliability for operational wind energy decision-making [

16]. Additionally, the scalability of Gaussian Process Regression (GPR) limits its direct application in large wind farms, as computational demands reduce operational efficiency.

Multi-kernel Learning/Regression: Unified Spatio-Temporal Kernel which combines Matérn kernel (space) + Periodic kernel (time) to model the interactions between the turbines. This kernel identifies where and when interactions between the turbines occur. Bayesian uncertainty provides a measure of predictive variance on how confident a model is in its predictions. For grid operators, this helps quantify risk in power demand/supply forecasts, make data-driven decisions under uncertainty, prioritise responses to high-variance (less certain) scenarios, and improve the grid’s resilience and reliability. In essence, it informs operators how much trust they can place in a forecast. GPR’s superiority in uncertainty-aware forecasting is well-established, but scalability remains a hurdle. Bayesian methods (such as GPR, BNNs) are gaining traction over deterministic ML in energy applications.

The hybrid linear–Gaussian Process model presented here addresses key limitations of existing wind forecasting approaches by decomposing wind prediction into a deterministic linear component and a spatial GP residual component. This formulation preserves interpretability, explicitly models spatial dependence, and provides rigorous uncertainty quantification, making it particularly suitable for sparse wind station networks. Clustering stations based on elevation and geographic coordinates (longitude and latitude) further improves model performance by capturing location-specific wind patterns and local topographic effects. Model accuracy is enhanced through careful kernel selection, where candidate spatial and temporal kernels are evaluated, and hyperparameter optimisation via grid search ensures that the Gaussian Process component is well-calibrated to the underlying data. Together, these strategies improve predictive performance and provide reliable uncertainty estimates.

The rest of the paper is organised as follows.

Section 2 briefly discusses materials and methods, including the Hopkins variable selection method, temporal GP regression, linear temporal regression, the hybrid, and the evaluation metrics.

Section 3 presents the results, and the discussion is presented in

Section 4, and the conclusion in

Section 5.

2. Materials and Methods

2.1. Data Description and Clustering

The data used in this study are from the Wind Atlas South Africa (WASA) at

https://wasadata.csir.co.za/wasa1/WASAData. The WASA data was obtained from the website [

17]. Wind speed data from 10 stations were collected, each with multiple meteorological predictors (e.g., temperature, pressure). The variables included are as follows: Wind speed (m/s) at hub height (62m). The data also feature the temporal aspect, which is the time of the day, the spatial aspect, which is the coordinates, and the meteorological aspect, which includes temperature, barometric pressure, relative humidity, and wind direction. These measurements were recorded every 10 minutes for all variables. For ensuring comparability and removing scale bias: Imputing missing data - Feature-wise means were used to replace missing values; Standardisation - Numerical features are standardised to have a zero average and unit variance; Normalisation - Normalisation of spatial coordinates to remove bias in scales of latitude and longitude. This preprocessing helps stabilise the training process for both the Linear and the Gaussian Process models.

Clustering

Wind conditions in several neighbouring wind farms are likely similar to each other because they are subject to similar weather conditions. To reduce computation, several wind farms can be combined into clusters, enabling forecasts based on clusters rather than individual wind farms. There is an advantage to combining information from several wind farms in the cluster, as it may help iron out local weather extremes.

To capture regime-dependent wind dynamics, stations were clustered using geographical coordinates (longitude, latitude) and elevation. We first used the Hopkins test to assess the dataset’s tendency to form clusters and determine whether the data are meaningfully clustered or randomly distributed (i.e., not clusterable). According to the method proposed by Hopkins and Skellam [

18], the clustering tendency can be quantified using the Hopkins statistic

H defined as:

where

suggests strong evidence of the cluster structure, while

suggests little or no cluster tendency. Thereafter, we will use hierarchical clustering to group the data. This is a method of cluster analysis that builds a hierarchy of clusters to uncover the underlying structure in a dataset. It does not rely on probability distributions, making it a nonparametric method, suitable even when the underlying data distribution is unknown. It also produces a dendrogram, a tree diagram, that provides a visual, flexible representation of how clusters form at different levels. This allows users to choose the number of clusters by cutting the tree at the desired level.

Variable Selection - Relaxed Lasso

The Relaxed Lasso is a two-stage modification of the standard Lasso regression that aims to reduce the bias introduced by Lasso’s shrinkage, particularly in high-dimensional settings [

19]. Lasso performs both variable selection and shrinkage by minimising Equation

2: Lasso estimates regression coefficients by solving the Equation

2. As shown in Equation

2, the Lasso introduces an

-penalty to enforce sparsity.

The factor

expresses the loss as average empirical risk; alternative scalings (e.g.,

) are equivalent up to a rescaling of the tuning parameter

. While effective for variable selection, the Lasso tends to over-shrink coefficient estimates, leading to biased results. The Relaxed Lasso addresses this by decoupling the variable selection and coefficient estimation steps. Specifically, it first uses the Lasso to select a subset of predictors and then performs a second estimation step with reduced shrinkage on those selected variables. The Relaxed Lasso estimator is defined as Equation

3:

where

is the Lasso solution at regularisation level

,

is the ordinary least squares estimate on the set of variables selected by the Lasso,

is the relaxation parameter, controlling the degree of shrinkage. When

, the relaxed estimator reduces to the standard Lasso; when

, it corresponds to unregularised least squares on the Lasso-selected model. Intermediate values of

provide a bias-variance trade-off.

2.2. GPR Models for Wind Forecasting

Gaussian processes are a powerful nonparametric Bayesian approach to regression and classification introduced by [

20]. These processes are conceptualised as components of spatial-temporal modelling, specifically within a defined region where stations are located at different sites. Spatial analysis seeks to construct an optimal model for generating outputs by considering inputs from diverse locations over various time frames. Equation (

4) describes a spatio-temporal GP model:

1. Model equation:

where

,

,

is the observed data at location

and time

t. and

2. Prior to the latent process:

The independent Gaussian process (GP) model is specified hierarchically by:

for each

and

, where we assume that

and

are independent, and each is normally distributed with its respective parameters. The notation

will be used to denote the so-called

nugget effect or the pure error term, which is assumed to be independently normally distributed as

where

is the unknown pure error variance and

I is the identity matrix.

The spatio-temporal random effects will be denoted by

, and these will be assumed to follow

independently in time

Let

denote all the random effects

, for

and

. Let

denote all the parameters of this model, and let

denote the prior distribution.

The logarithm of the joint posterior distribution of the parameters and the missing data for this GP model is given by:

3. Linear models:

The very first attempt at modelling a spatio-temporal response variable is to consider the linear regression models. The multiple linear regression model is written in Equation (

10) as:

where

is the spatio-temporal error term. The error term

is assumed to be a zero-mean spatio-temporal Gaussian process with a separable covariance structure, given by:

where

is an anisotropic correlation function of the spatial distance

between two locations

and

, which may depend on the parameter

, which may be more than one in number.

2.3. Hybrid Model

The proposed Hybrid GP Regression combines a linear regression model with a Gaussian Process (GP) applied to residuals, enabling both interpretable trend estimation and flexible modelling of spatio-temporal dependencies.

Gaussian Process Residual Model

The residual process ( ) is modelled using a zero-mean GP with covariance function ( k ). Multiple covariance structures are considered, including: Matérn covariance with smoothness parameters ( ), Exponential covariance, and Gaussian (squared exponential) covariance. Each covariance structure encodes different assumptions about the smoothness of the residual wind-speed field.

Covariance Selection Procedure

For every validation split of residuals, GP models with different covariance functions are employed. Estimation of the hyperparameters is conducted through maximum likelihood optimisation. When selecting the best covariance function, a choice is made based on validation RMSE and probabilistic performance measures.

Validation Strategy and Evaluation Metrics

A leave-one-station-out cross-validation method was used to evaluate the model’s performance. In each iteration, validation was performed on one station, and training was conducted on the remaining stations. This repetition continued until all stations were used for validation once. Hence, for four stations, 25% of the data was utilised for validation; for six stations, 16.7% was utilised. This checks the model’s ability to make predictions at unobserved locations of wind measurement.

2.4. Validation Metrics

Bayesian evaluation metrics are used to evaluate the performance of Bayesian models, such as Bayesian neural networks. These metrics often go beyond traditional accuracy measures to incorporate uncertainty estimates, which are a key feature of Bayesian methods. The following techniques are used to evaluate the models and are classified as deterministic and probabilistic. The deterministic metrics are the Root Mean Square Error (RMSE) and Mean Absolute Error (MAE), and the probabilistic metrics are the continuous ranked probability score (CRPS), the coverage probability (CVG), the negative log predictive density (NLPD), PINAD and PINAW.

RMSE (Root Mean Squared Error):

MAE (Mean absolute error):

where

is the wind speed,

is the forecast of the wind speed, and

N is the number of forecasts. RMSE measures the average magnitude of forecast errors, giving a higher weight to larger errors, whereas MAE measures the average absolute difference between predicted and actual values.

The Continuous Ranked Probability Score (CRPS) can be written in expectation form [

21] as

where:

F is the predictive cumulative distribution function (CDF),

y is the observed value

,

are independent random variables drawn from the forecast distribution

F and

denotes the expectation under the distribution

F. CRPS evaluates the quality of a probabilistic forecast by comparing the forecast’s cumulative distribution function (CDF) with the observed value. CVG measures the proportion of true observations that fall within a predicted confidence interval of a given nominal level (e.g., 95%). The predictive coverage of the

confidence interval is measured using the Coverage (CVG) metric. For

n observations, let

and

denote the lower and upper bounds of the predicted

confidence interval for the

ith observation. The empirical coverage is then

where

is the observed value at test point

i,

is the indicator function, returning 1 if the condition is true and 0 otherwise. CVG represents the proportion of observations falling within their corresponding predictive intervals. A well-calibrated model should achieve

, reflecting accurate uncertainty quantification.

Negative Log Predictive Density (NLPD) to evaluate uncertainty calibration. For a single forecast-observation pair:

where

y is the observed value,

is the predictive probability density at

y given training data

.

For

N test points, the average NLPD is:

The negative log predictive density (NLPD) measures how likely the model thinks the observed data are, accounting for both the mean and the uncertainty. The Prediction Interval Normalised Average Width (PINAW) measures the average width of the prediction interval, normalised by the range of observed values. Narrower intervals indicate higher precision. It is defined as:

where: k is the number of validation points, and are the upper and lower bounds of the prediction interval for the i-th observation, is the range of the observed values.

The Prediction Interval Normalised Average Deviation (PINAD) measures the average deviation of the midpoint of the prediction interval from the true values, normalised by the range. It indicates the calibration of the prediction intervals. It is defined as:

where the symbols have the same meaning as above, lower values of PINAD indicate that the prediction interval is well centred around the observed values.

3. Results

In this section, the exploratory data analysis will be outlined and evaluated. R statistical software version 4.5.2 was used for data analysis.

3.1. Exploratory Data Analysis (EDA)

As part of analysing wind speed data, Exploratory Data Analysis (EDA) uses statistical measures and various forms of data visualisation. EDA, in particular, becomes important when forming spatio-temporal data models, as it reveals inherent data trends, structures, and patterns, providing the foundation for selecting an ideal model and specifying parameters.

3.1.1. Clustering

In spatio-temporal predictions, clustering the locations balances efficiency, accuracy, and interpretability. This makes clustering a powerful strategy for large-scale forecasting tasks such as weather, traffic, and energy production.

Hopkins Test

The Hopkins Statistic is a method for evaluating whether a dataset has a meaningful clustering structure, i.e., its tendency to form clusters. It is often used before applying clustering algorithms to spatial data. The Hopkins test is used to assess the tendency for clustering in a dataset.

Null Hypothesis (): The data is uniformly randomly distributed in the feature space (i.e., there is no meaningful cluster structure).

Alternative Hypothesis (): The data is not uniformly random and exhibits a clustering tendency.

Decision Rule:

If the Hopkins statistic , we fail to reject — the data is likely random.

If (typically ), we reject — the data shows a significant cluster tendency.

Since the Hopkins statistic is , we reject the null hypothesis and conclude that the data is clusterable.

Clustering

Since we established that our data is clusterable, the next step is to estimate the optimal or maximum number of clusters that can be meaningfully formed. The silhouette measures how well each point fits within its own cluster relative to other points. It provides a more quantitative evaluation and suggests that 2 clusters can be used for the data.

Table 2 shows the stations grouped into two clusters according to their geographic location and elevation.

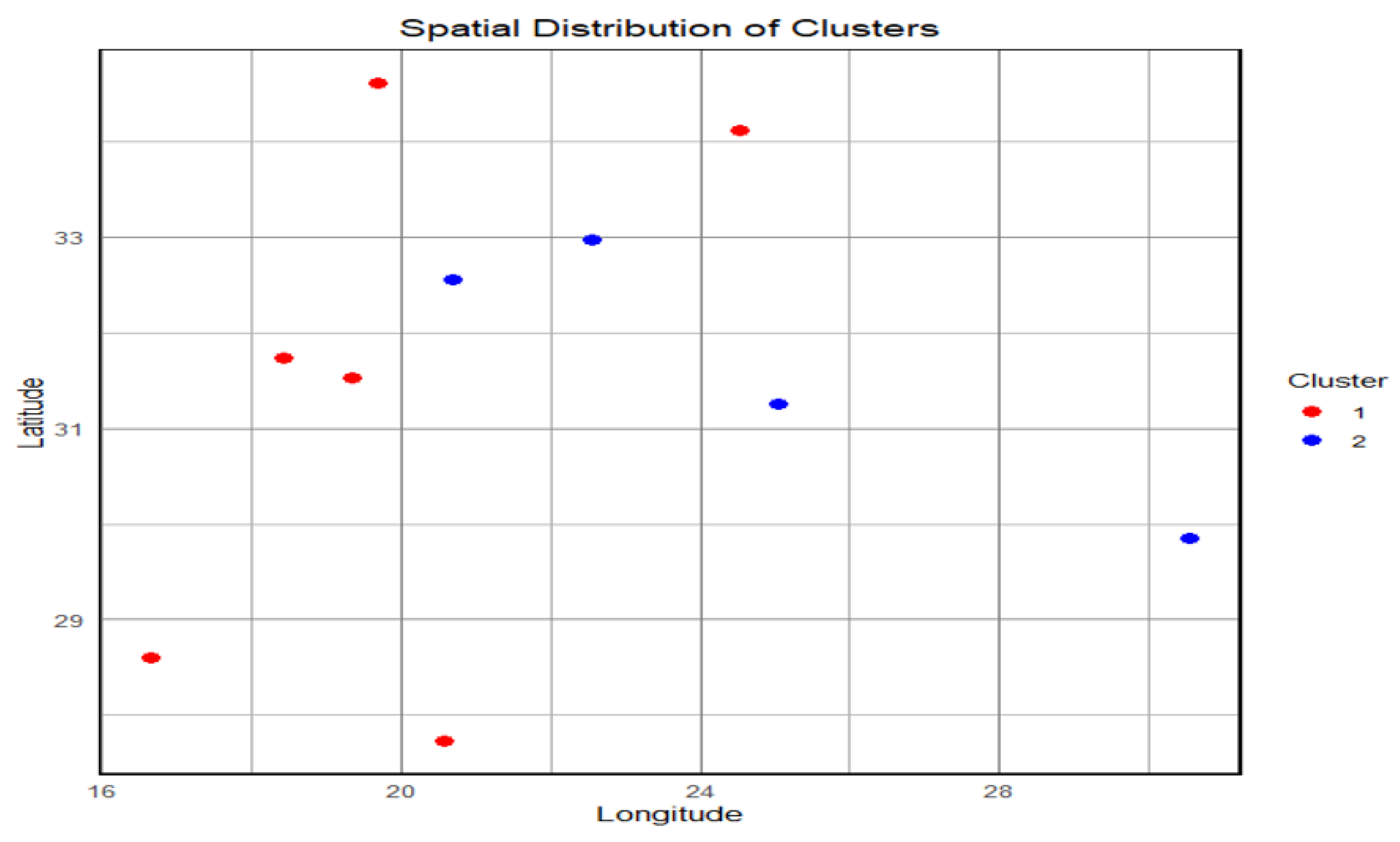

Spatial Distribution of Clusters

The scatter plot

Figure 1 illustrates the spatial distribution of clusters based on station coordinates in terms of latitude and longitude. The two clusters are visually distinct—Cluster 1 (shown in red) is primarily located in the western region with lower longitude values. In contrast, Cluster 2 (shown in blue) is positioned toward the eastern part of the study area. Lastly, the coverage of the spread of Cluster 1 stations reveals a rather wide latitude range, demonstrating greater dispersion, unlike the Cluster 2 stations, which are few and geographically concentrated. This spatial differentiation is reflected in the clusters obtained, underscoring the importance of the geographical factor in their formation and highlighting climatic differences among the WASA stations.

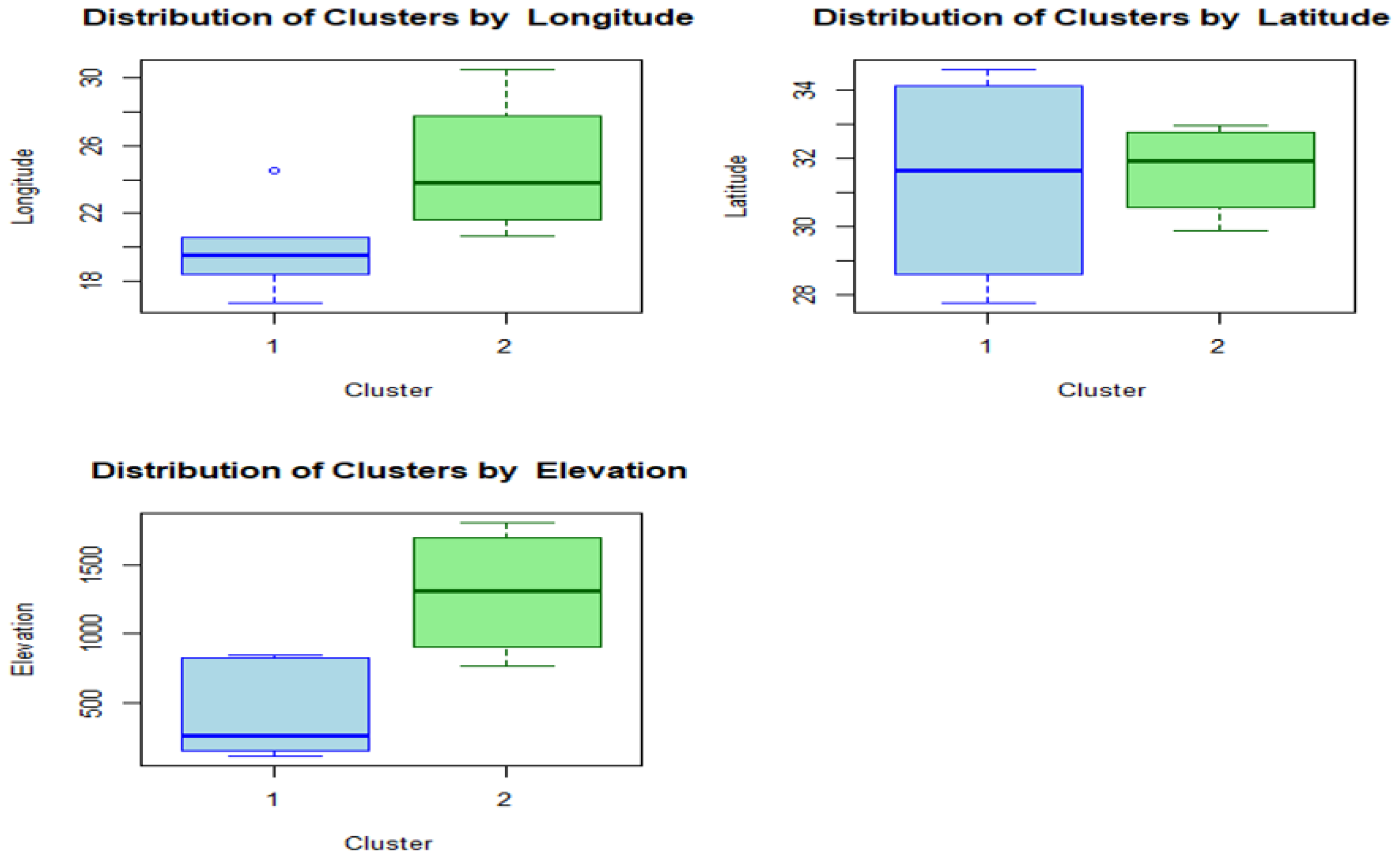

Figure 2 displays the spatial and topographical characteristics of two clusters based on their longitude, latitude, and elevation status. The longitude plot shows that stations in Cluster 1 are primarily in a lower-latitude zone, whereas those in Cluster 2 are located farther east, with higher longitudes. The plot showing the distribution based on latitude shows that while Cluster 1 covers a broader zone, extending further into the northern latitudes, Cluster 2 stations are concentrated within a narrower zone of lower latitudes. Finally, based on elevation, a notable difference between the two clusters is observed in

Figure 2. In this regard, stations within Cluster 1 are concentrated in lower-elevation zones, whereas those within Cluster 2 are concentrated in higher-elevation zones. Overall,

Figure 2 shows notable differences between clusters, thus supporting a spatial coherence between clusters based on longitude, latitude, and elevation status.

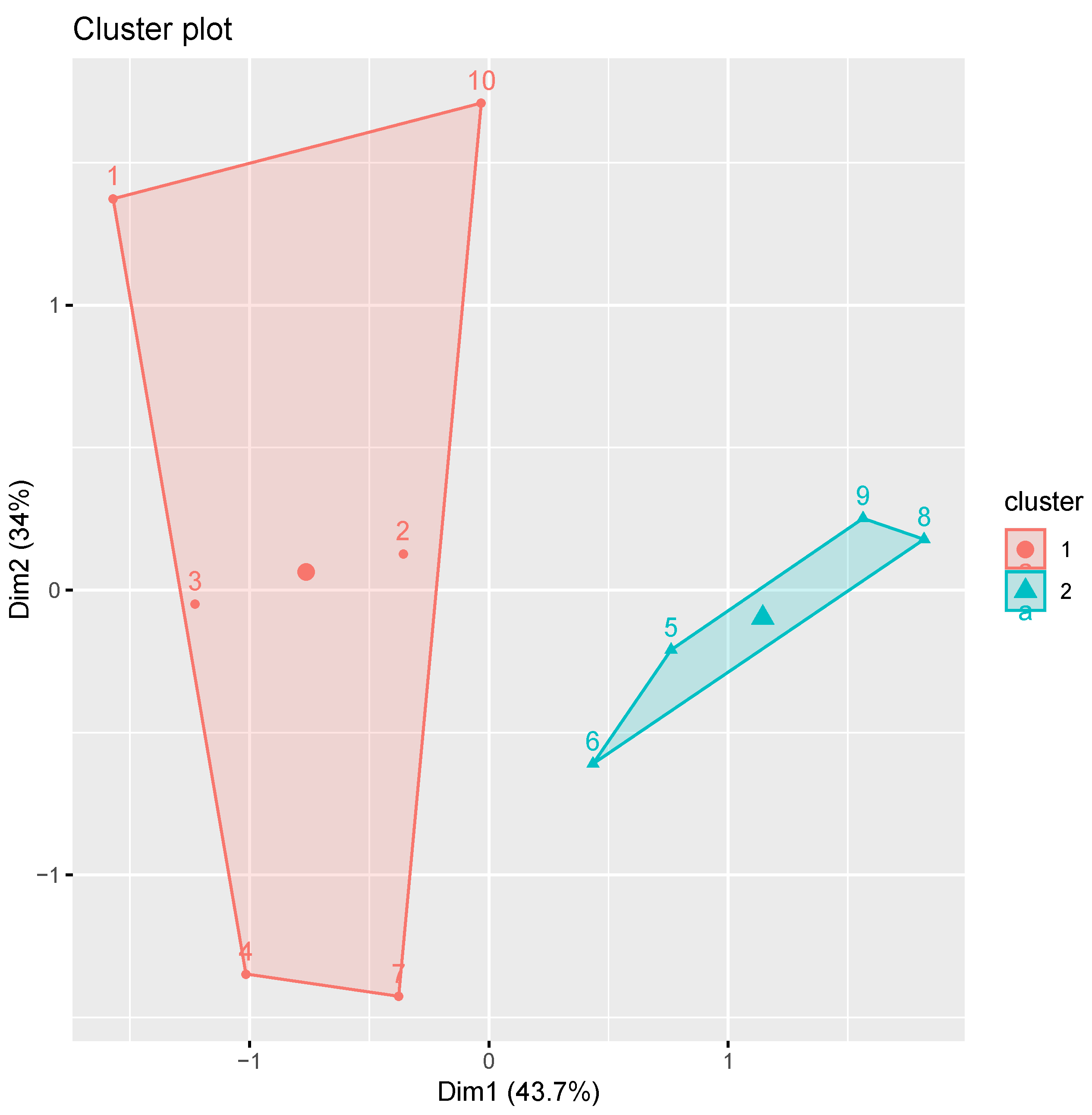

Figure 3 includes two clusters, 1 and 2, with each cluster included in a polygon that denotes the cluster boundary. Here, Dim1 accounts for 43.7% of the variance, while Dim2 accounts for 34%. These values add up to over 77%. Thus, it is evident that this plot includes most of the clusters. In

Figure 3, it is evident that the spatial distribution of the clusters is demonstrated through hierarchical methods. All the clusters generated by the dendrogram are clearly separated, demonstrating that hierarchical clustering effectively distinguishes between them. In this plot, it is evident that the clusters are well separated in Dim1, indicating that it is an important dimension for distinguishing between them.

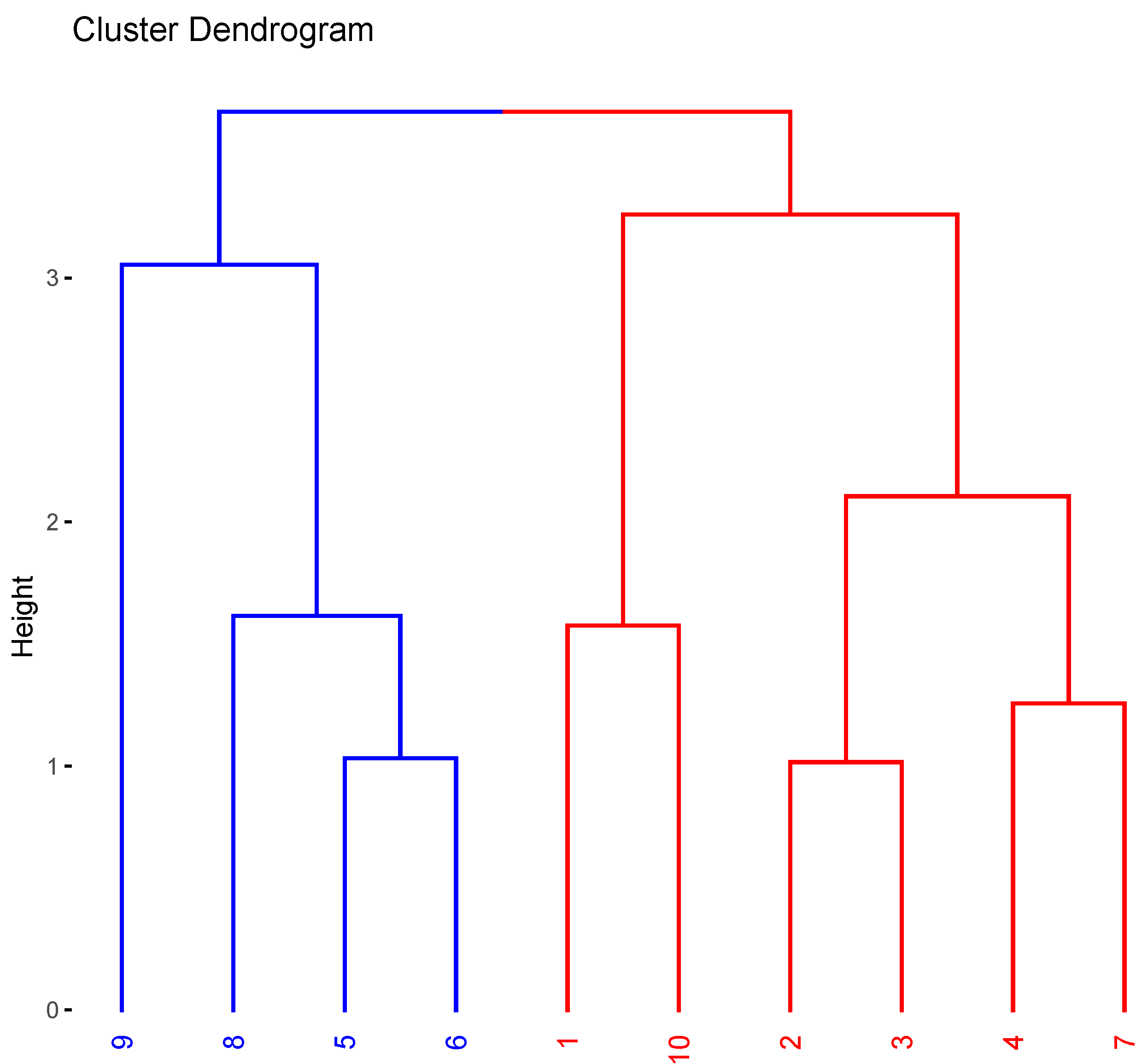

Figure 4 shows a dendrogram for clustering ten different stations labelled from 1 to 10. The vertical axis is labelled “Height,” indicating the degree of dissimilarity or the distance at which clustering occurs. The degree of dissimilarity is higher for points clustered at higher positions than for those clustered at lower positions. From the given dendrogram, two clusters are marked within dashed boxes. The first cluster consists of stations 9, 8, 5, and 6, whereas the second cluster consists of stations 1, 10, 2, 3, 4, and 7.

Multivariate Exploratory Data Analysis

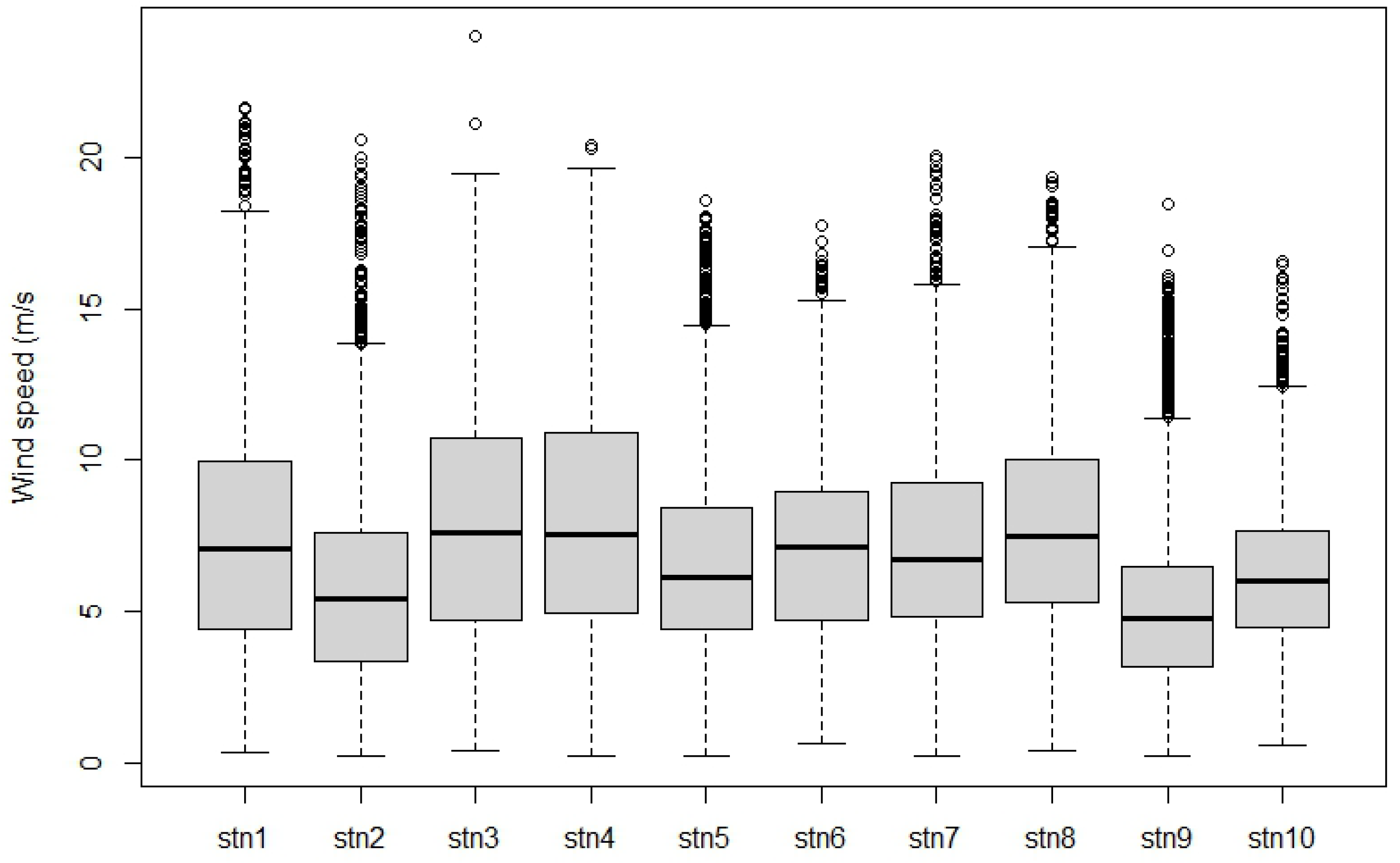

Table 3 lists the summary statistics of wind data across stations. Many stations exhibit moderate to high variability, with median wind speeds typically 6–7.5 m/s. The highest wind activity comes from Stations 3 and 4, while Station 9 is the calmest. The maximum wind speed is close to 24 m/s, suggesting occasional high-wind events.

Also, the average values across the stations are quite different as indicated in

Table 3. Alternatively, for Station 1 (stn1), the mean is higher than the median, suggesting the data may be right-skewed. Also, the difference between Q3 and the maximum is very high, suggesting the presence of outliers or a long tail.

Figure 5 is a box plot showing the distribution of wind speed (in m/s) across 10 stations (stn1 to stn10). Each box shows the central 50% of the data (interquartile range), the line within the box shows the median wind speed, and the dots represent outliers. Stations such as stn4, stn6, and stn7 exhibit higher wind speeds and greater variability, while others, such as stn1, stn2, and stn8, have lower, more consistent wind speeds.

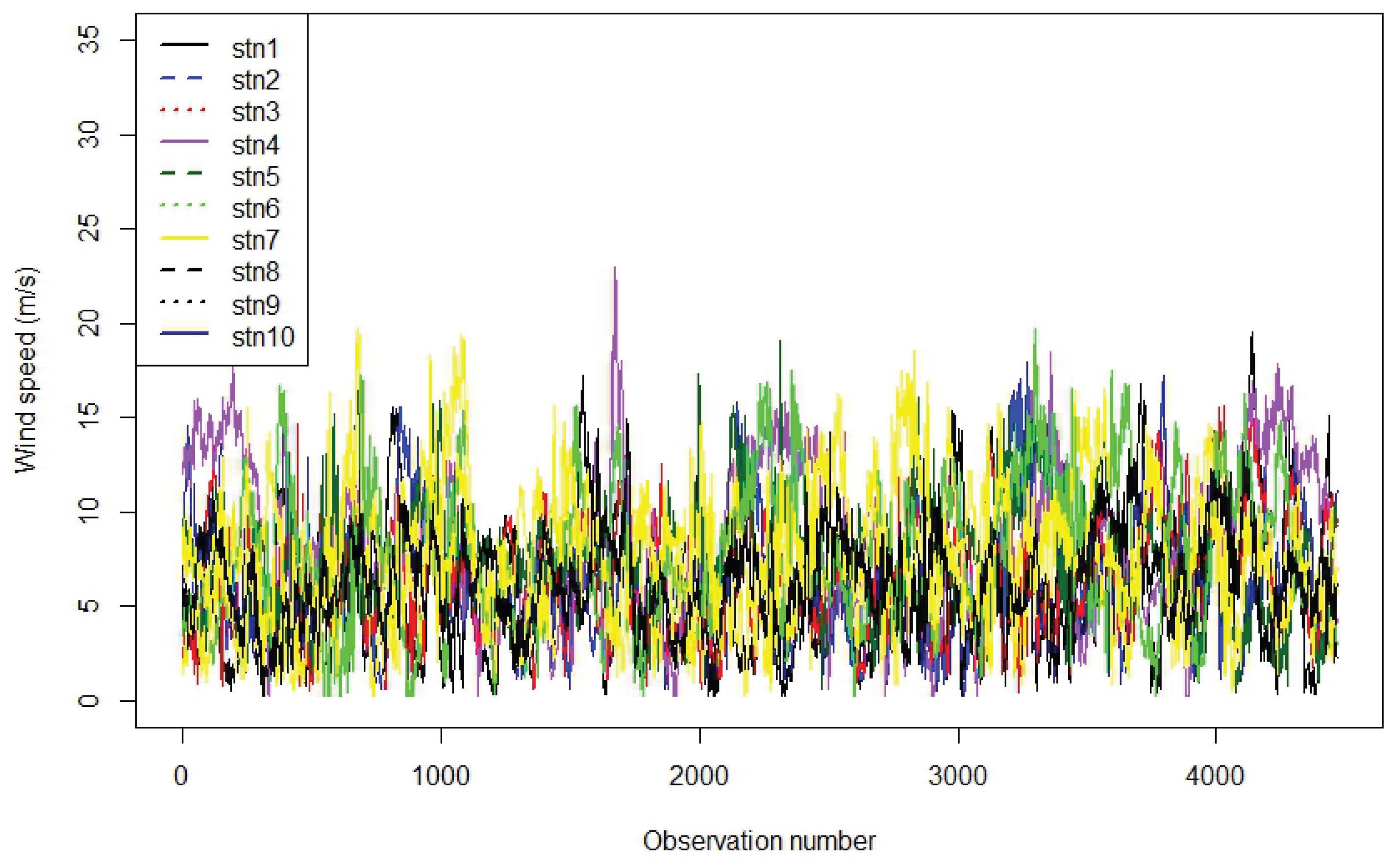

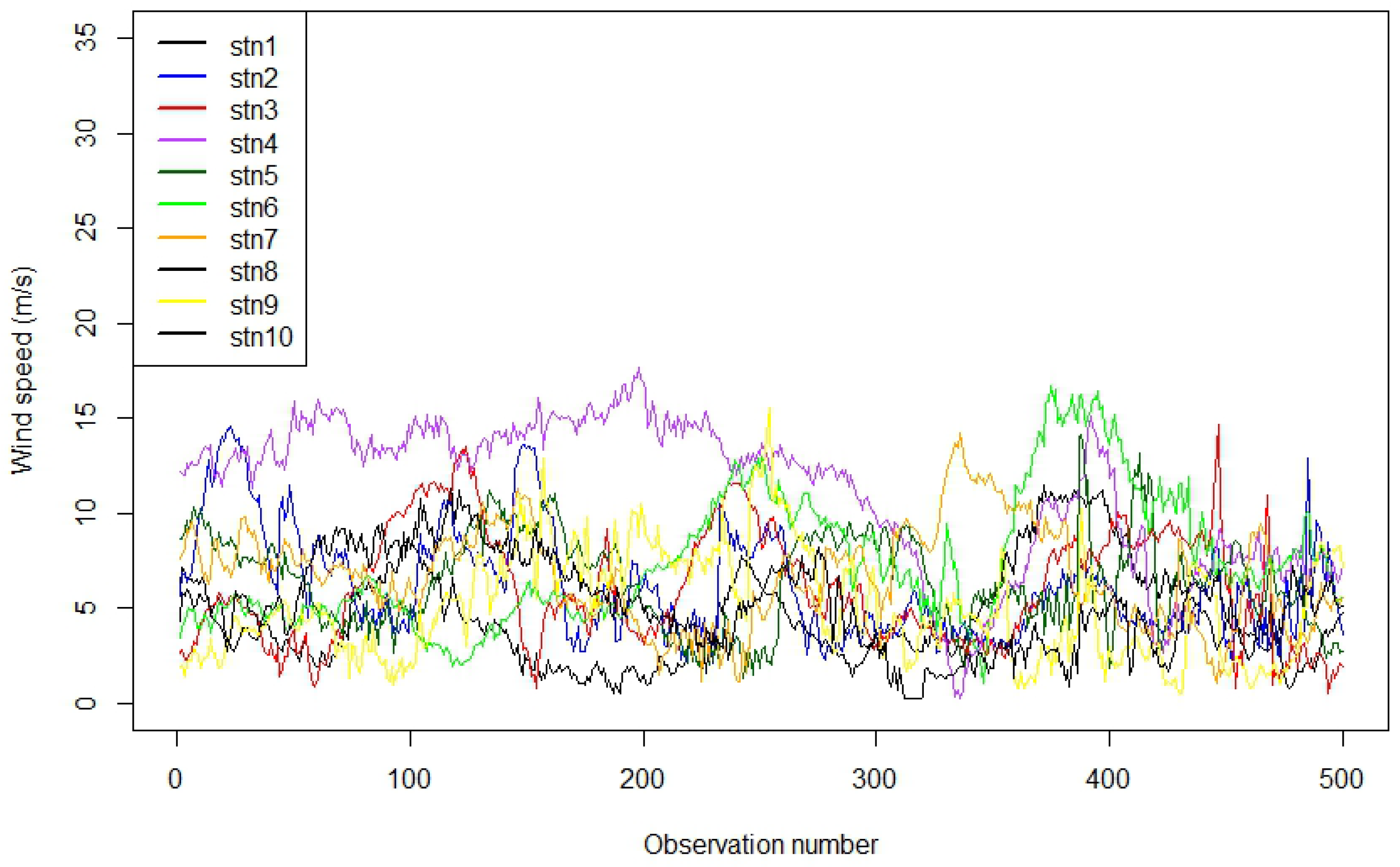

The plot

Figure 6 is a time series line plot showing the wind speed (in m/s) recorded at 10 different stations over a series of observations. A sample of 500 was taken to improve visualisation of wind speed. Each line represents the variation in wind speed at a specific station, showing temporal fluctuations and variability. The overlapping lines illustrate how wind speed trends differ and coincide between stations during the observed period.

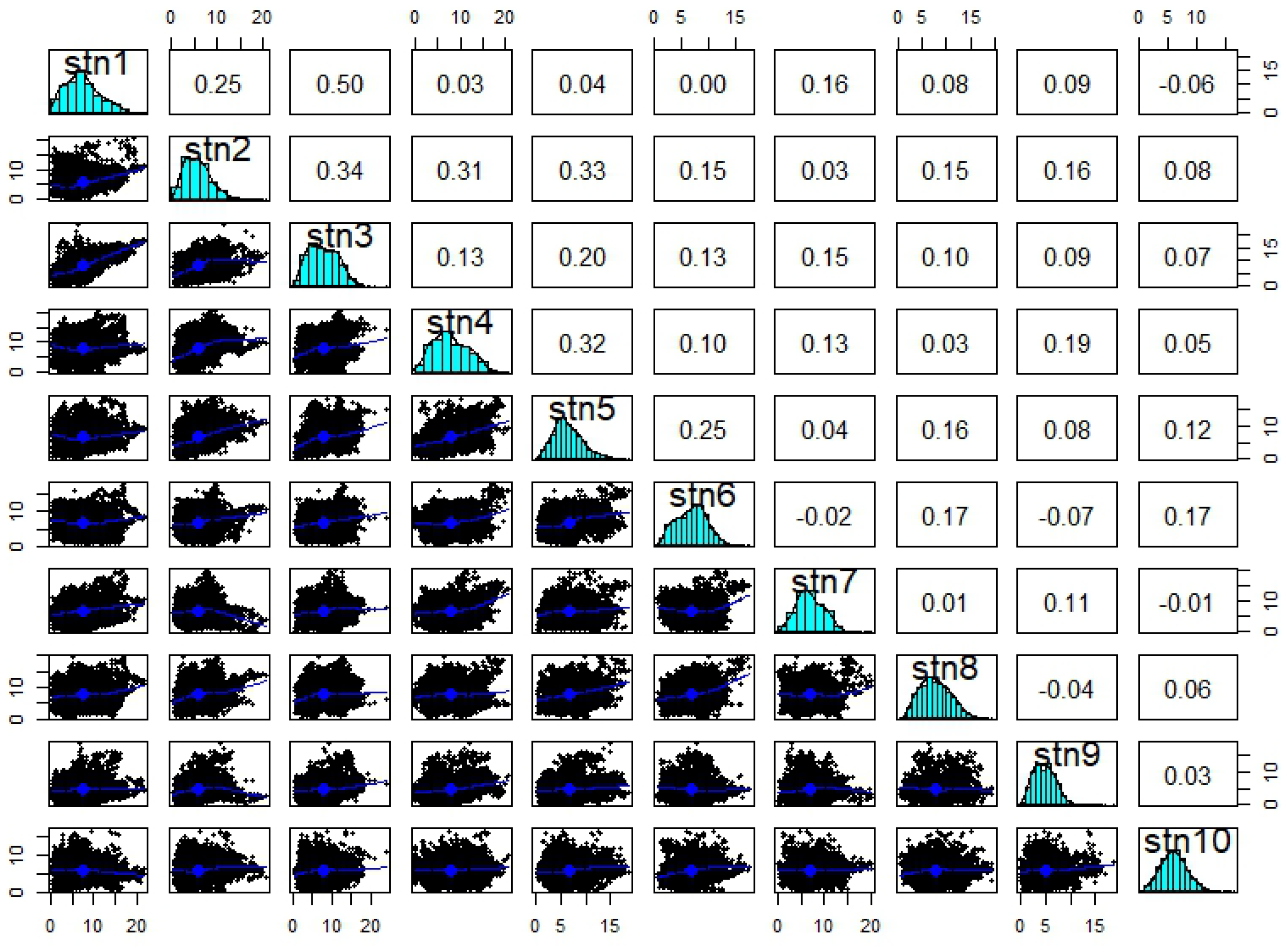

Figure 7 shows a scatterplot matrix combined with histograms and correlation coefficients for wind speed data. This plot is useful for understanding the pairwise relationships and dependencies among wind speed measurements across different stations. The histograms show the wind speed distribution for each station. The scatterplots show pairwise relationships between wind speeds at different stations. Each plot has a fitted smooth curve (blue line) to show trends. Correlation coefficients between wind speeds at pairs of stations are also presented. These values quantify the strength and direction of linear relationships (positive, negative, or near zero). The correlation coefficients are mostly positive but low to moderate (generally below 0.4), indicating a weak linear association. Stations such as stn1 and stn2, or stn2 and stn3, have somewhat higher correlations.

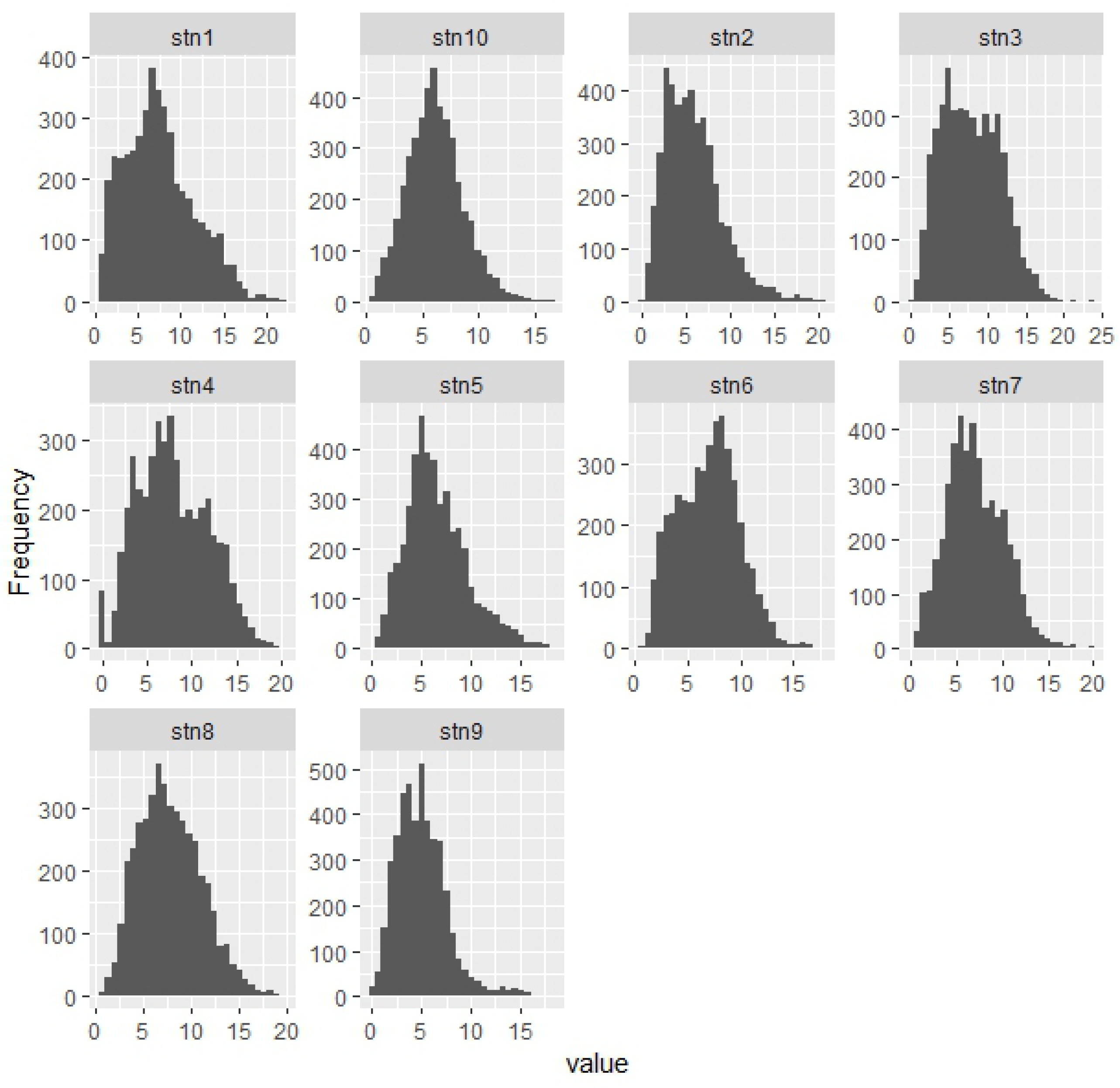

Figure 8 gives a clear visualisation of how wind speed is distributed at each station. Most stations experience wind speeds typically between 0 and 15 m/s, with varying frequencies. Differences in histogram shape suggest variations in local wind-speed patterns across stations.

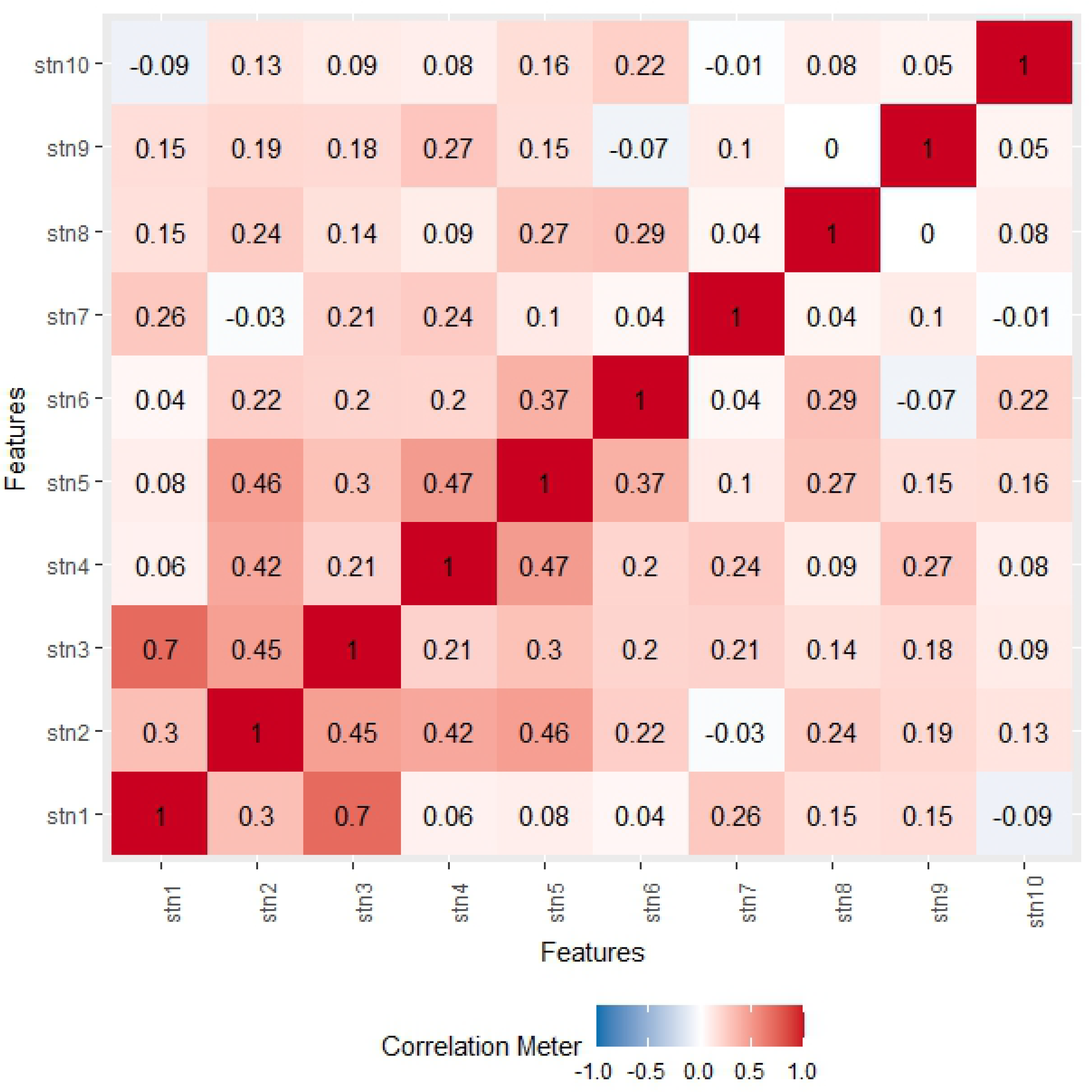

Figure 9 shows the correlation between stations. From the plot, stations 1 and 2 show a strong positive relationship. As the wind speed of station 1 increases, so does that of station 3.

3.2. Results for the Models

The results for the models are presented in the following sections in two stages. Firstly, section 3.2.1 presents the results for Gaussian Process (GP) models applied to all possible combinations of train-test stations, providing a comprehensive evaluation of predictive performance and metrics such as RMSE, NLPD, CVG, and acceptance rate . These results establish the baseline behavior of the GP models across clusters and highlight the effects of station selection and spatial coverage.

In contrast,

Section 3.2.2 evaluates the hybrid Linear–Gaussian Process framework. Here, a linear regression model is first estimated to capture large-scale spatio-temporal trends, and a GP is subsequently applied to the residuals to model local deviations. Within this second stage, a variety of covariance structures are rigorously assessed, including Matérn functions with smoothness parameters

, as well as exponential and Gaussian covariances. The final covariance choice is determined based on validation performance. Because the hybrid model explicitly separates linear and residual components, the train–test splits, validation folds, and reported metrics differ from

Section 3.2.1. Metrics such as

are not always relevant for residual modeling, and certain summary metrics (e.g., CRPS) are computed only where meaningful for the hybrid framework. This structure ensures that the evaluation in

Section 3.2.2 reflects the performance of the hybrid approach in capturing both large-scale trends and local residuals, rather than replicating the exhaustive GP-only analysis.

3.2.1. Results for the Linear and GP Models

Variable Selection

Relaxed Lasso is a variation of the Lasso (Least Absolute Shrinkage and Selection Operator) regression method, designed to improve variable selection by reducing the bias introduced by Lasso’s strong penalty. In the first step, Lasso is applied to select a subset of relevant variables by shrinking some coefficients exactly to zero. Then, in the second step, a less-penalised or unpenalised regression (like OLS) is fit only on the selected variables, allowing the model to estimate their coefficients more accurately. This two-stage process helps maintain Lasso’s variable selection strength while improving the accuracy and interpretability of the prediction by relaxing the amount of shrinkage applied.

Forecast Evaluation

We perform GP modelling using the spTimer package in R, and we use an MCMC approach to estimate the posterior distributions of the model parameters. The following MCMC settings were used: number of iterations (N): 2,000; burn-in period: 300 iterations; thinning interval: 1; number of chains: 3; and reporting interval (report): 3. Such settings mean that the program was run for 2,000 iterations, of which the initial 300 were considered as burn-in to minimise the impact of initial values. Thinning of 1 means that the entire set of post-burn samples is utilised for posterior analysis. Running the chains enables convergence diagnosis, ensuring proper parameter evaluation. The report = 3 settings ensure that the algorithm reports every 3rd iteration, thus enabling tracking of the execution of the sampling function. Such settings ensure an appropriate trade-off between computational efficiency and the sample size required to approximate the posterior distribution.

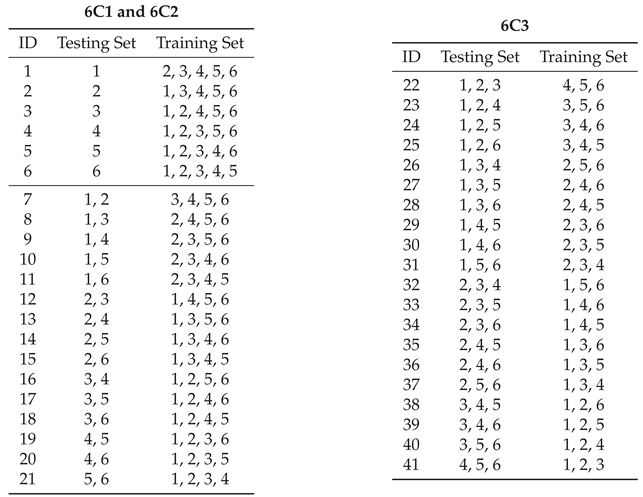

Training and testing for cluster 1

In cluster 1, we use the ratio

to generate all possible combinations of data for the test set, with test set sizes ranging from 1 to 3 samples. We used the configurations

,

and

, which resulted in 41 unique train–test partitions. In each configuration, the selected subset of stations served as the test set and the remaining stations were used for training. The objective of this exhaustive enumeration is to evaluate the robustness of spatial generalisation with respect to the specific choice of held-out stations. By considering every possible hold-out combination, we eliminate randomness due to fold assignment and provide a complete assessment of how sensitive model performance is to station selection. The combinations resulting from the testing and training sets are in

Table 4.

Results for Cluster 1

Table 5 summarizes predictive performance across all 41 spatial hold-out configurations. When a single station is held out (leave-one-station-out validation), the model is trained on five stations and evaluated on one. As expected, this setting yields the lowest RMSE, MAE, NLPD, and CRPS values because the training set is largest and the extrapolation domain is minimal. When two stations are held out, predictive performance degrades moderately across all proper scoring rules. This reflects both the reduced amount of spatial information available for training and the increased geographic extent of the test domain. The most challenging setting occurs when three stations are held out. In this case, the model is trained on only half of the available stations and must extrapolate to a substantially larger unseen spatial region. Consequently, RMSE, MAE, CRPS, and NLPD increase consistently in relation to cases of one and two-stations.

This monotonic degradation pattern indicates that performance is sensitive to the degree of spatial data removal rather than to any specific station combination. Importantly, coverage (CVG) remains stable across configurations, suggesting that predictive uncertainty is well calibrated even as extrapolation difficulty increases. The variability observed within each group (for example, across different three-station subsets) reflects heterogeneity in spatial information content across stations, highlighting that some station combinations are inherently more informative for spatial interpolation than others. Therefore,

Table 5 quantifies how predictive accuracy deteriorates as the spatial extrapolation task becomes more challenging. In this sense, the table illustrates the sensitivity of model performance to the degree of spatial data removal, providing a structured robustness assessment of spatial extrapolation performance. The metric

quantifies the relative contribution of each train-test combination within the fusion scheme. Specifically, for combination

j,

is defined as

where

represents the weight or influence assigned to combination

j based on its predictive performance. CVG and other metrics are used to determine

in a manner that emphasizes well-performing combinations. The resulting

values are expressed as percentages, highlighting which combinations contribute the most to the overall fused prediction. It represents the acceptance rate in the GPR fusion scheme, highlighting the train–test combinations that contribute most reliably to the final predictive ensemble. The metric

further highlights the combinations that contribute the most within the fusion scheme, with certain station sets such as 2, 3, 3–5, 3–6, 2–5–6, receiving higher relevance scores, suggesting that these combinations provide more informative predictors for the model.

Prediction Plots

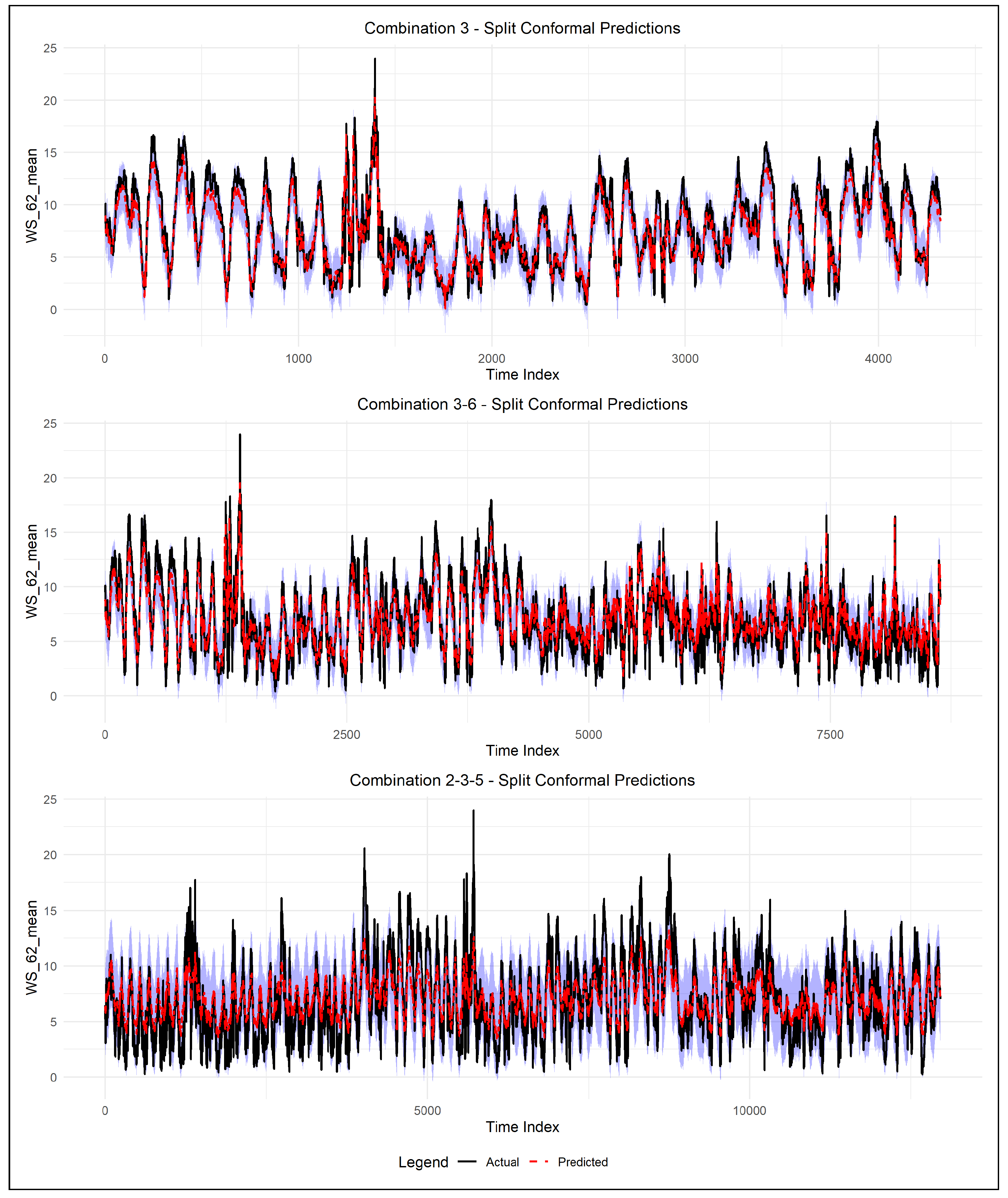

As the combined station counts increase, accuracy gradually deteriorates. At the same time, pairwise combinations tend to report higher NLPD, wider prediction intervals, and greater RMSEs as model complexity increases, due to greater spatial variability. This phenomenon is reinforced when visualised within

Figure 10, where combined stations report wider bands of conformal prediction, less smooth trends for the mean trajectories, while the prediction intervals report perfectly well-calibrated performance across the different combinations, reporting the desired true coverage of 95% as affirmed by the CVG values reported within

Table 5.

Importantly, the adaptive extension of the prediction interval in the regime of high wind variability, evident from

Figure 10, shows the capability of the split conformal method in handling the dynamic uncertainty without sacrificing the guarantees. These examples reveal an interesting balance between the sharpness of predictions and the level of aggregation, indicating that although aggregation can be valuable, it might also entail a penalty in the loss of local wind dynamics, which is of principal importance.

Testing and Training Sets

The training set is the data used to train the model, allowing it to learn patterns and relationships. In contrast, the testing set is a separate portion of data used to assess how well the model performs on new, unseen inputs. By splitting data into these two sets, we can ensure the model learns effectively without simply memorising the data, helping to avoid overfitting and giving a more accurate measure of how the model will perform in real-world scenarios.

Table 6 shows the test-train sets for cluster 2.

To evaluate the model’s performance and generalisation, two data-splitting strategies were employed, each using combinations of four spatial locations. We used the two configurations , which denote combinations in which subsets of four components are split into training and testing sets. These combinations provide a systematic way to assess the model’s robustness under varying data availability scenarios and to evaluate predictive performance across different spatial partitions.

Results for Cluster 2

Table 7 presents a comparative evaluation of the performance of the model in various training and testing configurations using key statistical metrics: Root Mean Square Error (RMSE), Mean Absolute Error (MAE), Continuous Ranked Probability Score (CRPS), probability of prediction interval coverage (PICP), Negative Log Predictive Density (NLPD), normalised average width of prediction interval (PINAW) and normalised average deviation of prediction interval (PINAD).

From

Table 8, among all configurations, Combination 1 achieves the best overall performance, yielding the lowest values of NLPD, RMSE, MAE, and CRPS, together with the narrowest prediction intervals (PINAW). Combination 2 shows comparable but slightly inferior performance, while Combination 3 and Combination 4 exhibit noticeably higher error metrics and wider prediction intervals. Pairwise station combinations generally lead to higher prediction errors and greater uncertainty than single-station cases, with Combination 2–3 showing the weakest performance across most metrics.

Across all configurations, the empirical coverage (CVG) remains close to the nominal level of 0.95, indicating that the probabilistic forecasts are well calibrated regardless of the station combination. The relative improvement metric indicates consistent performance gains over the linear baseline, with the largest improvements observed for single-station configurations.

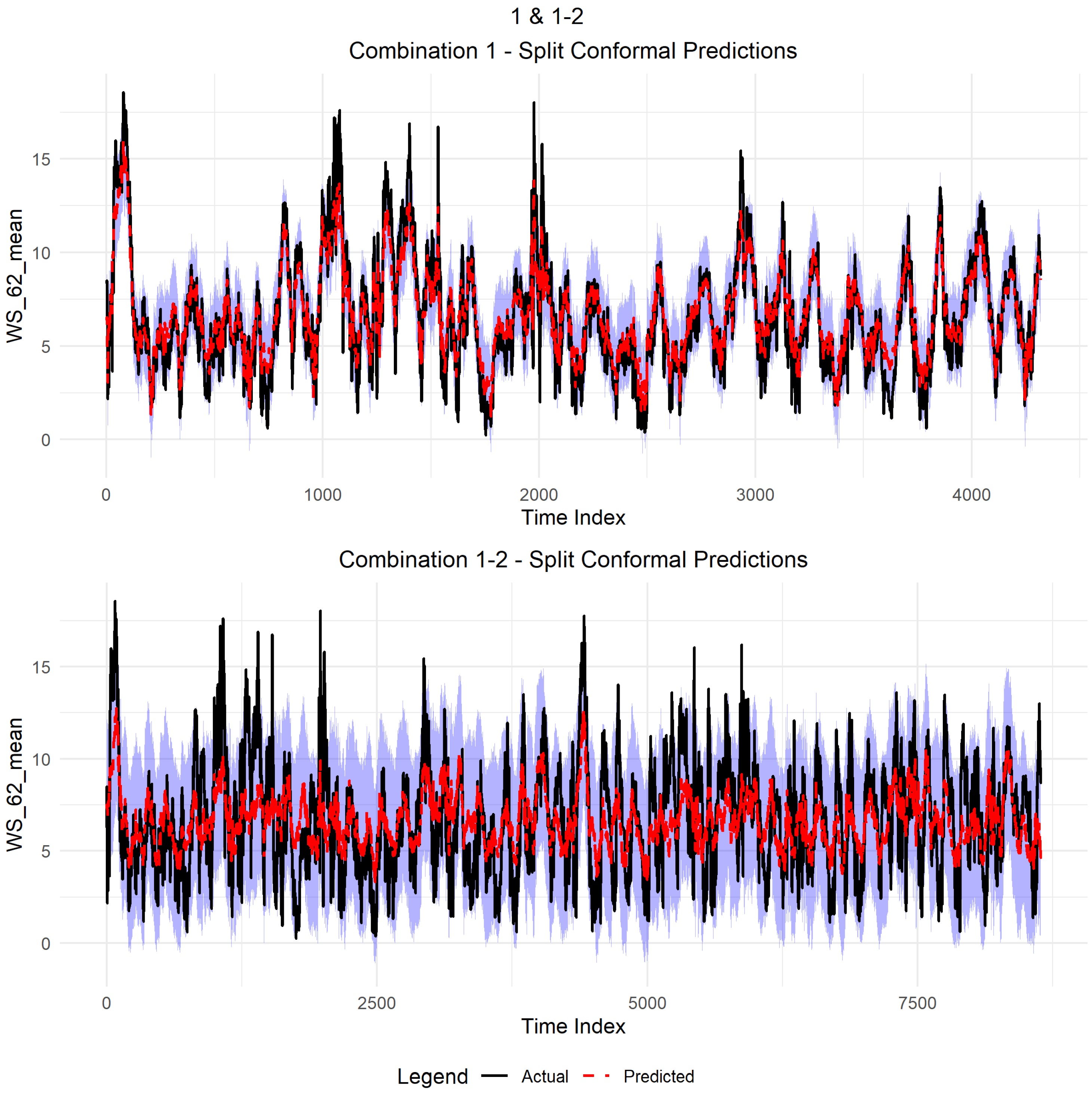

Figure 11 presents the split conformal prediction results for Combination 1 and Combination 1–2, while the corresponding quantitative performance metrics are reported in

Table 8. For Combination 1, the predicted mean closely tracks the observed wind speed, with relatively narrow prediction intervals, consistent with the low RMSE (1.15), MAE (0.88), CRPS (0.76), and PINAW (0.25) values reported in the table. In contrast, Combination 1–2 exhibits wider prediction intervals and increased variability in the predicted mean, which aligns with the higher RMSE (2.44), MAE (1.97), CRPS (1.39), and PINAW (0.51) observed in

Table 8. In both cases, the empirical coverage shown in

Figure 11 is consistent with the nominal level, in agreement with the CVG value of approximately 0.95 reported in the table. The split conformal prediction method provides prediction intervals (shown as shaded regions) that effectively capture the variability in the observed wind speed data (black line). The predicted values (red dashed line) closely follow the actual measurements, and the uncertainty bands adapt over time, widening during more volatile periods and narrowing during more stable periods. This shows that split conformal prediction generates well-calibrated, dynamic-width intervals that reflect the true uncertainty in predictions across disparate time indices.

3.2.2. Results for the Hybrid Linear-Gaussian Process

Validation Setup

The proposed hybrid modelling framework was evaluated using a two-fold validation strategy. A leave-one-station-out cross-validation approach was used to evaluate model performance. In each iteration, data from one wind station were withheld for validation, while the remaining stations were used for model training. This procedure was repeated until each station had served as the validation site once. For cluster 1 (total sample size 25914), each validation set comprised 16.7% of the available observations (4319 samples per split) and for cluster 2 (total sample size 17276), each validation set comprised 25% of the available observations (4319 samples per split). For each split, a two-stage process was used. First, a linear regression model was estimated to account for large-scale spatiotemporal effects, followed by a GP analysis of the residuals. A variety of covariance structures were rigorously evaluated within the second stage, including several Matérn covariance functions with smoothness and , an exponential covariance, and a Gaussian covariance. The best-performing covariance structure is based on validation performance.

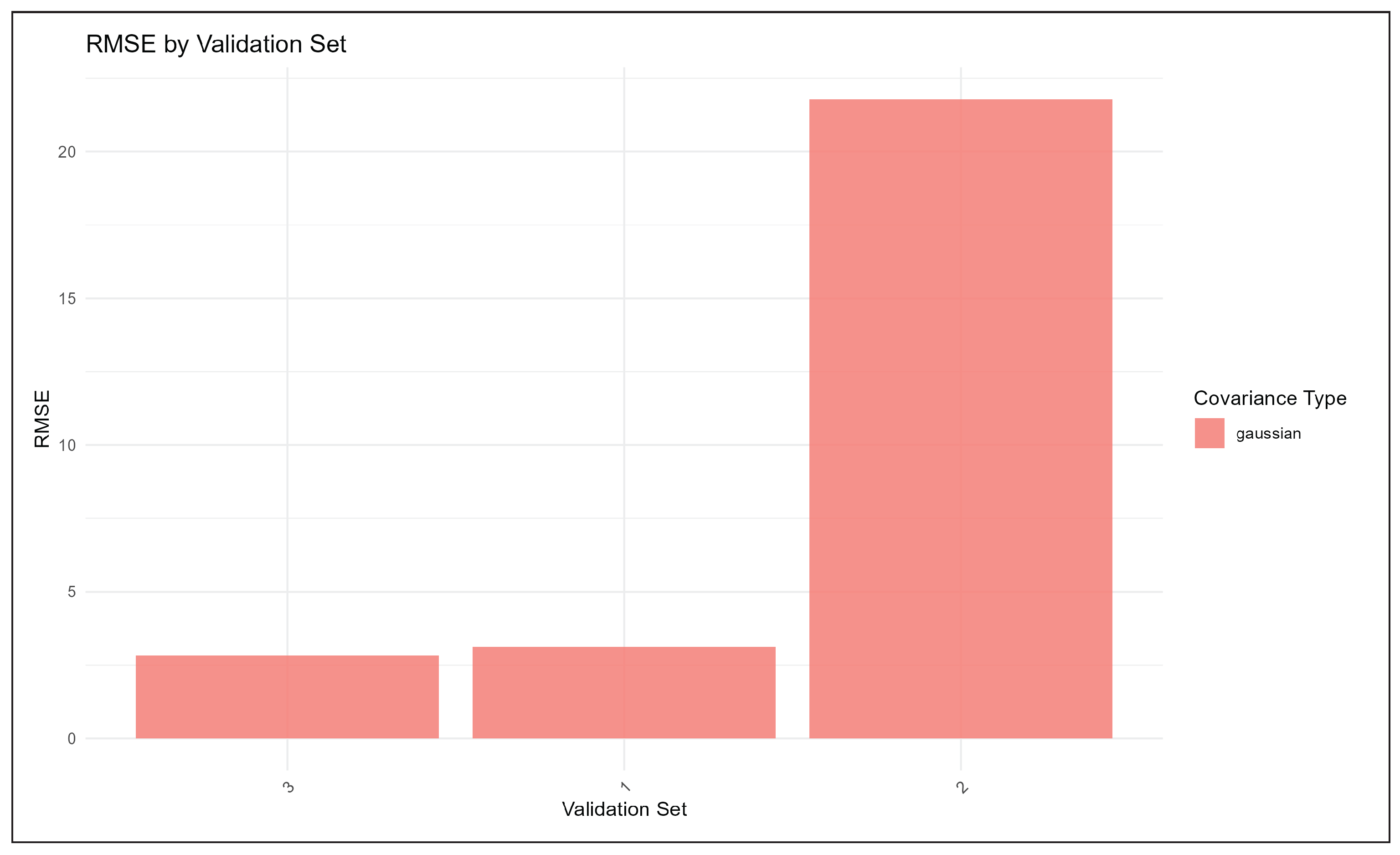

Covariance Selection and Residual Structure

In all three model validation cases, the Gaussian (squared exponential) covariance was selected as optimal. This suggests that the residuals of Cluster 1 could be best modelled as having a stationary covariance structure. Additionally, the negative improvement in the GP fold indicates that the residuals’ spatial structure is neither strong nor stationary, resulting in little improvement from the GP method. Covariance-based feature selection cannot always adequately compensate for residual heterogeneity.

Probabilistic Forecast Quality

The hybrid method provided well-calibrated uncertainty estimates. The coverage (CVG) was kept at 0.95 for all the folds, and the PINAW values ranged from 0.465 to 2.511. Low PINAD values across most folds indicate little bias in interval centring. This shows that even when point prediction was not significantly improved, the GP component still provided good predictions; hence, decisions could be made accordingly.

Implications for Wind Speed Forecasting

The results for Cluster 1 should be kept in mind for the practical application of the wind forecast method. In particular, the usefulness of the hybrid GP modelling method depends on the spatial coherence of the residuals; a lack of heterogeneity in the residuals could prevent efficient RMSE improvement within the clusters. The selection of covariance should be based on validation performance, but this may require non-stationary and/or multi-scale kernels for more accurate terrain-effect modelling. Probabilistic forecasts remain robust, and a small improvement in point predictions underscores the scope for risk-informed decision-making with hybrid models, especially in wind energy applications. The findings from cluster 1 highlight that modelling strategies should vary across clusters, accounting for trends and residual spatial structures, especially in complex terrain and heterogeneous winds.

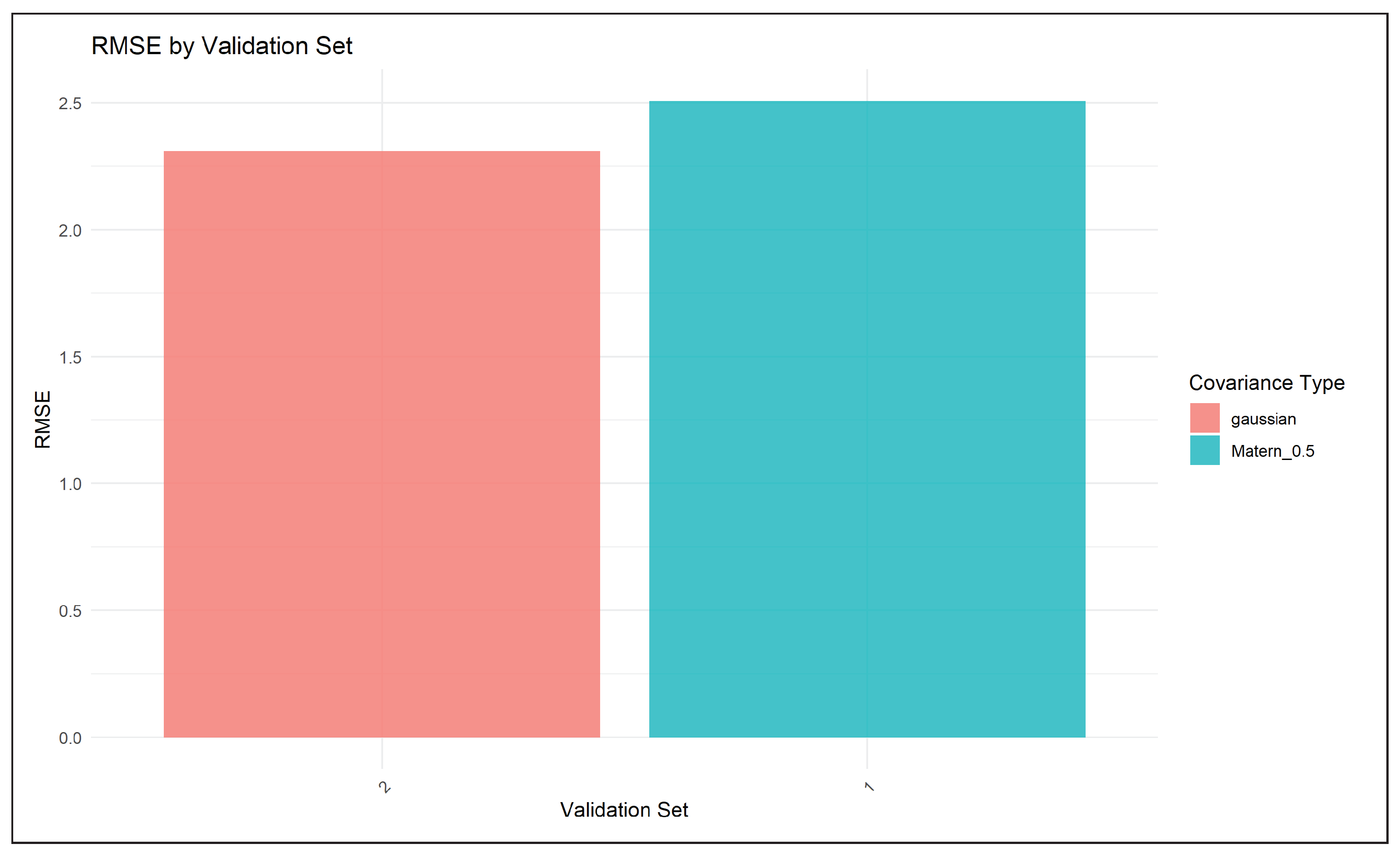

Covariance Selection and Residual Structure

Different optimal covariance structures were selected based on the validation splits reported by the machine-learning algorithm, using the automatic covariance structure selection method implemented in the code. In the first validation set, the Matérn covariance structure with was consistently selected, while in the second set, the Gaussian covariance structure was preferred. The Matérn covariance structure essentially corresponds to a rough, even non-differentiable, stochastic process, which is typically preferred for modelling abrupt changes in the underlying dynamics, for instance, those characterising localised variability in the wind field. The optimal structure found for the first set suggests that the dynamics of the residual wind field were characterised by particularly rough variability, potentially linked to factors such as gustiness and rapid regime changes. On the contrary, the optimal choice of the Gaussian kernel was observed in the second set, where the proportion of the original variability explained by the linear model was also particularly large.

Probabilistic Forecast Quality

Aside from point prediction accuracy, the hybrid models also demonstrated excellent probabilistic performance. Also, the Matérn performed best in terms of the smallest negative log predictive density, well-centred prediction intervals, and almost nominal empirical coverage of about 95%. Even though the prediction intervals generated by the Gaussian kernel were narrower and the CRPS was slightly reduced, the model also showed some underdispersion, indicating overconfidence in the predictions. These findings reveal a balance between point prediction accuracy and probabilistic calibration, with rougher covariance structures yielding a more realistic quantification of uncertainty in wind speed predictions.

Implications for Wind Speed Forecasting

The results show the capability of hybrid linear-GP approaches with adaptive covariance selection in spatio-temporal prediction of wind speed while being physically consistent. The linear model demonstrates its capability to capture larger trends, while the GP model captures the localised stochastic component. The tendency toward rough covariance structures supports the non-smooth nature of the underlying wind-speed processes, justifying the use of Matérn kernels in this context. The presented model offers greater prediction accuracy, more precise quantification of uncertainty, and greater interpretability of predictions than traditional linear models.

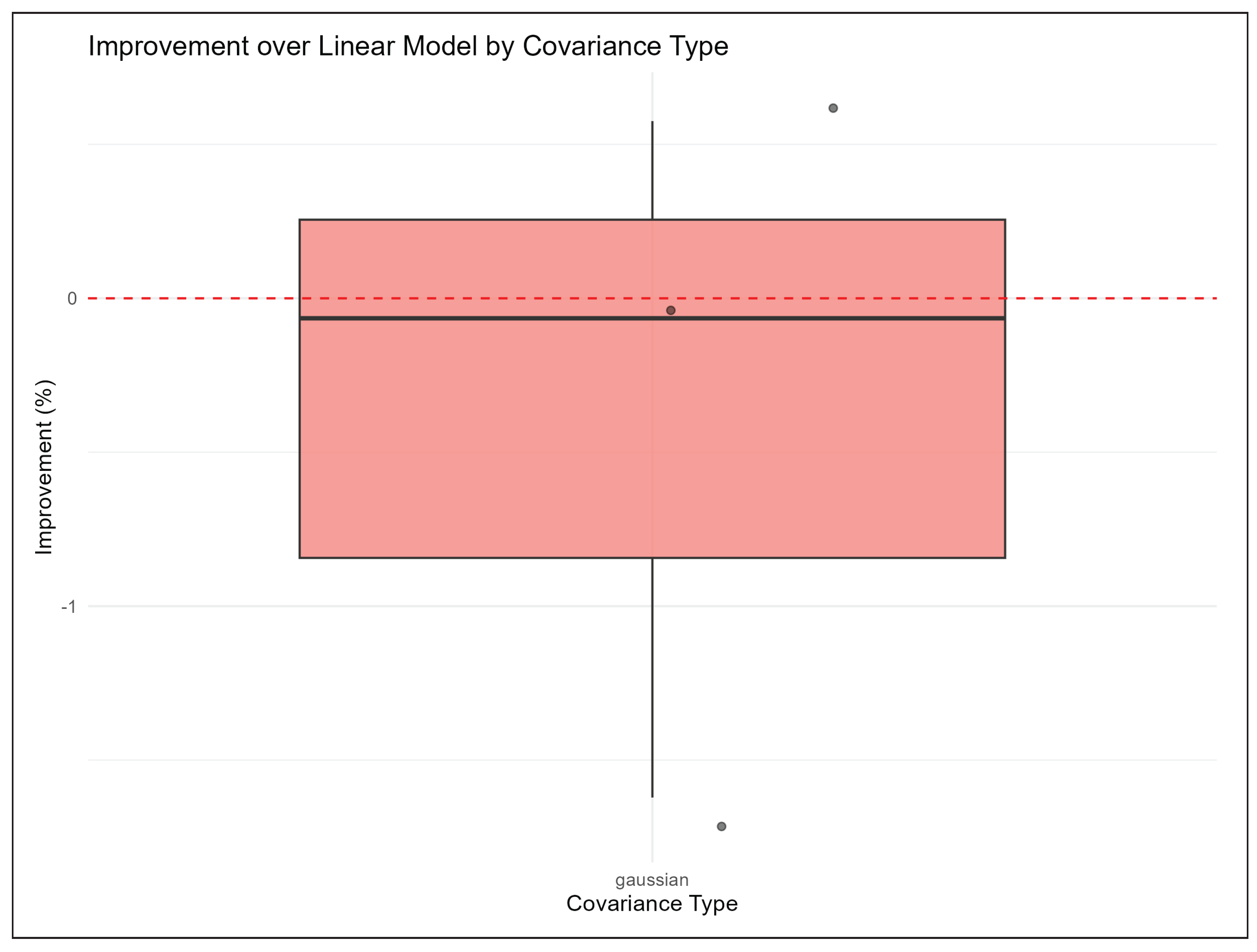

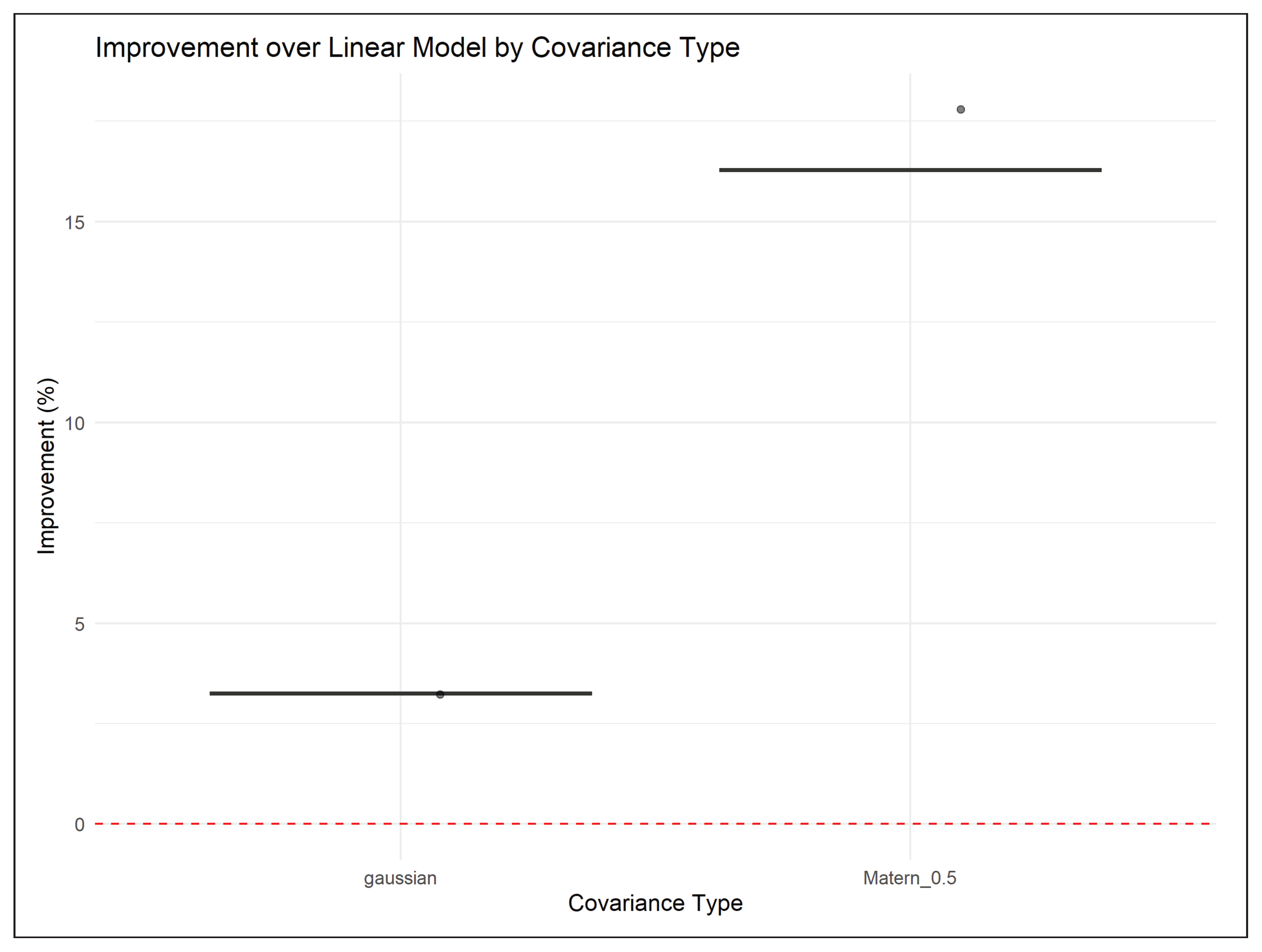

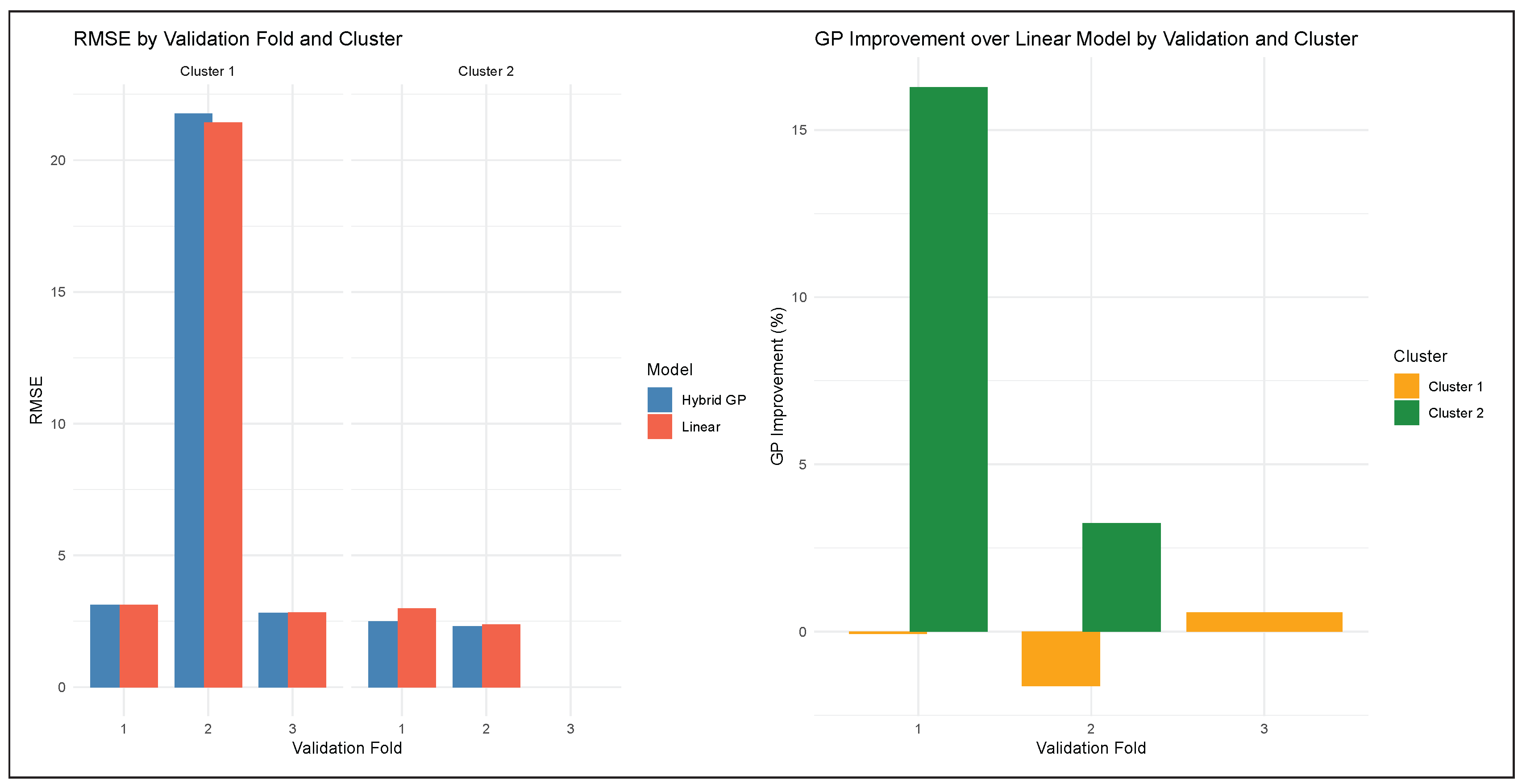

As shown in

Figure 12, the figure compares the predictive performance of the Linear and Hybrid GP regression models across two data clusters and various validation sets. The left-hand side of the figure illustrates a representation of the Root Mean Square Error (RMSE) for the Linear Model and the Hybrid GP model. As is apparent, the RMSE is a vital measure that effectively quantifies error. As such, lower error values are desirable compared to higher ones. This figure effectively compares the relative performance of including the GP model in improving overall wind speed prediction across different data clusters. On the other hand, the right-hand side of

Figure 12 demonstrates the percentage improvement of the Hybrid GP Model over the basic Linear Model. The inclusion of the GP Model would be beneficial if the value is positive. In contrast, a negative value would indicate cases where the GP Model does not effectively improve prediction accuracy.

From the appendix, figure A1 presents the time series plot for the independent variable windspeed. Figures A2-A5 shows the results of the hybrid model from both clusters with the covariances selected as well as the improvement from the linear model.

4. Discussion

4.1. Discussion of Results

The proposed hybrid framework was tested using two different clusters of wind speed. Although the improvements were significant in the second cluster, the results of the first cluster show very different behaviour. In fact, the hybrid framework improved over the linear baseline in only one of the three validation splits, with an average change in RMSE of . In one of the validation splits, the GP’s performance significantly deteriorated, and the RMSE exceeded 20. The selection procedure reliably identified the Gaussian kernel as the optimal one for this cluster. This confirms our previous assumption that the variability present in this cluster was mostly smooth and weakly correlated. On the other hand, marginal improvements and even degradations in performance suggest that this wind speed variability lacks sufficient spatio-temporal structure for a GP-based model. Thus, we have successfully demonstrated the limitations of employing GP-based residual modelling techniques and shown how this particular framework can identify them through this covariance selection and validation structure.

From an operational perspective, these results reinforce the relevance of regime-aware modelling approaches. Excessive and indiscriminate use of complex stochastic models may incur unnecessary computational cost and degrade forecast quality.

4.2. Limitations and Future Work

Firstly, the stationarity of the process within clusters, as assumed by the Gaussian Process Residual model, may not account for sudden regime changes characteristic of the atmosphere. This issue may be particularly concerning for Cluster 1, as suggested by the validation results. Secondly, the Gaussian Process (GP) method may be sensitive to sparsity/outliers, as evidenced by occasional decreases in model performance as measured by validation. However, it should be noted that this issue is mitigated by adaptive covariance selection. Lastly, although the two-stage method may be more interpretable and efficient, it does not use any physics-based constraints.

Future work may involve generalising the method to non-stationary and non-separable covariance functions, using anisotropic functions that depend on wind direction, and developing approximations of the GP method for operational use.

5. Conclusions

We had two fundamentally different wind regimes obtained by clustering the wind stations. For cluster 1, the linear model is already near-optimal, Residuals are weak, noisy, or unstable and the GP often degrades performance. Smooth kernels selected, but were not very helpful. For Cluster 2 (strong spatio-temporal dependence, rough residuals), GP provides large gains, and Matérn kernels dominate. This leads to the conclusion that the effectiveness of Gaussian process residual modelling is regime-dependent and varies across wind speed clusters.

This study applied a hybrid linear–Gaussian Process model to daily wind speed data from South African stations, clustered by elevation and geographic location. Key conclusions are: Cluster 1 (low-to-mid elevation) showed mixed GP improvements due to heterogeneous residuals, but probabilistic forecasts were reliable. Cluster 2 (high elevation) experienced consistent improvements, with GP capturing coherent residual patterns and enhancing both point and probabilistic predictions. Covariance selection is critical; Gaussian and Matérn 0.5 kernels were optimal depending on residual structure. The hybrid model effectively quantifies forecast uncertainty, making it valuable for risk-aware wind energy management.

Future work should explore non-stationary or multi-scale kernels, as well as additional topographic or meteorological features, to further improve predictive performance in heterogeneous terrain.

Author Contributions

Conceptualisation, THT and CS.; methodology, THT.; software, THT.; validation, THT, CS, TBD and SN; formal analysis, THT; investigation, THT, CS, TBD and SN.; data curation, THT; writing—original draft preparation, THT; writing—review and editing, THT, CS, TBD and SN; visualisation, THT.; supervision, CS, TBD and SN; project administration, CS, TBD and SN. All authors have read and agreed to the published version of this manuscript.

Funding

This research received no external funding.

Data Availability Statement

Acknowledgments

The authors thank the anonymous reviewers for their helpful comments on this paper.

Conflicts of Interest

The authors declare no conflicts of interest

Abbreviations

The following abbreviations are used in this manuscript:

| MAE |

Mean Absolute Error |

| MASE |

Mean Absolute Scaled Error |

| RMSE |

Root Mean Squared Error |

| MCMC |

Markov Chain Monte Carlo |

| CRPS |

Continuous Ranked Probability Score |

| PICP |

probability of prediction interval coverage |

| NLPD |

Negative Log Predictive Density |

| PINAW |

normalised average width of prediction interval |

| PINAD |

normalised average deviation of prediction interval |

Appendix A. Supplementary Plots

Figure A1.

Time series plot for all the stations.

Figure A1.

Time series plot for all the stations.

Hybrid model plots

Figure A2.

RMSE for cluster 1

Figure A2.

RMSE for cluster 1

Figure A3.

RMSE for cluster 2

Figure A3.

RMSE for cluster 2

Figure A4.

Improvement for cluster 1

Figure A4.

Improvement for cluster 1

Figure A5.

Improvement for cluster 2

Figure A5.

Improvement for cluster 2

Appendix B. Modelling specification-An Extensive Hierarchical Bayesian Formulation with MCMC Sampling.

Appendix B.1. Hybrid Model Specification

Let

denote the response variable wind speed at location

and time

. The complete model is:

where:

is a vector of covariates

are regression coefficients

is a zero-mean Gaussian process

is the measurement error (nugget effect)

is the covariance function with parameters

Appendix B.2. Two-Stage Estimation Procedure

Appendix B.2.1. Stage 1: Linear Regression

The very first attempt at modelling a spatio-temporal response variable is to consider the linear regression models. First, estimate the linear component via ordinary least squares:

The linear predictions are:

Residuals for GP modelling:

Appendix B.2.2. Stage 2: Gaussian Process on Residuals

Model the residuals as:

where

.

Appendix B.3. Covariance Structures

Appendix B.3.1. General Form of Covariance Functions

For spatio-temporal modelling, we consider separable covariance functions:

where

is the marginal variance.

Appendix B.3.2. Matérn Family of Covariance Functions

The

Matern covariance function is defined as:

where:

is the Euclidean distance

is the smoothness parameter

is the range parameter

is the variance parameter

is the modified Bessel function of the second kind

Appendix B.3.3. Special Cases

- 1.

Exponential covariance ():

- 2.

- 3.

- 4.

Gaussian/Squared Exponential ():

Exponential covariance () models rough processes with less smoothness.

Gaussian covariance () assumes very smooth processes.

Matern covariance with intermediate balances smoothness and flexibility.

Appendix B.3.4. Rational Quadratic Covariance

where

controls the smoothness.

Appendix B.4. Likelihood and Estimation

Appendix B.4.1. Gaussian Process Likelihood

For

n observations

, the log-likelihood is:

where is the covariance matrix with entries .

Appendix B.4.2. Maximum Likelihood Estimation

The MLE estimates are obtained by:

Appendix B.4.3. Predictive Distribution

For a new location

, the predictive distribution is Gaussian:

with:

where

.

Appendix B.5. Hierarchical Bayesian Formulation

Appendix B.5.1. Complete Bayesian Model

Appendix B.5.2. Posterior Distribution

Appendix B.5.3. Gibbs Sampling

- 1.

- 2.

- 3.

-

Sample covariance parameters (Metropolis-Hastings):

Appendix B.6. Model Comparison and Selection

Appendix B.6.1. Information Criteria

Appendix B.6.2. Cross-Validation

Appendix B.7. Uncertainty Quantification

Appendix B.7.1. Prediction Intervals

For a new location

, the predictive distribution of the response is Gaussian. The

prediction interval:

where

. This provides both a

point prediction (

) and an

uncertainty measure (

) for the new location.

Appendix B.8. Conformal Prediction

- 1.

Compute residuals:

- 2.

Find -quantile:

- 3.

Prediction interval:

Appendix B.9. Computational Aspects

Appendix B.9.1. Scalability Challenges

Exact GP: . Solutions:

Low-rank approximations: ,

Sparse covariance methods

Stochastic Variational Inference

Appendix B.9.2. Numerical Stability

Appendix B.10. Theoretical Properties

Appendix B.10.1. Consistency

Under regularity conditions, as

:

Appendix B.10.2. Asymptotic Normality

For fixed-domain asymptotics:

where

is the Fisher information matrix.

References

- Yang, M.; Jiang, Y.; Che, J.; Han, Z.; Lv, Q. Short-Term Forecasting of Wind Power Based on Error Traceability and Numerical Weather Prediction Wind Speed Correction. Electronics 2024, vol. 13(no. 8), 1559. [Google Scholar] [CrossRef]

- Szostek, K.; Mazur, D.; Drałus, G.; Kusznier, J. Analysis of the Effectiveness of ARIMA, SARIMA, and SVR Models in Time Series Forecasting: A Case Study of Wind Farm Energy Production. Energies 2024, vol. 17(no. 19), 4803. [Google Scholar] [CrossRef]

- Xu, J.; Xiao, Z.; Lin, Z.; et al. System bias correction of short-term hub-height wind forecasts using the Kalman filter. Protection and Control of Modern Power Systems 2021, vol. 6, Article 37. [Google Scholar] [CrossRef]

- Liu, Z.; Guo, H.; Zhang, Y.; Zuo, Z. A Comprehensive Review of Wind Power Prediction Based on Machine Learning: Models, Applications, and Challenges. Energies vol. 18(no. 2), 350, 2025. [CrossRef]

- Che, G.; Zhou, D.; Wang, R.; Zhou, L.; Zhang, H.; Yu, S. Wind Energy Assessment in Forested Regions Based on the Combination of WRF and LSTM-Attention Models. Sustainability 2024, vol. 16(no. 2), 898. [Google Scholar] [CrossRef]

- Bessa, R. J.; Miranda, V.; Gama, J. The Role of Predictive Distributions in Modern Wind Power Forecasting Frameworks. International Journal of Forecasting 2022, vol. 38(no. 3), 601–615. [Google Scholar] [CrossRef]

- Elshaboury, N.; Elmousalami, H. Wind speed and power forecasting using Bayesian optimised machine learning models in Gabal Al-Zayt, Egypt. Scientific Reports vol. 15(Article 28500), 2025. [CrossRef]

- Yang, Q.; Huang, G.; Li, T.; Xu, Y.; Pan, J. A Novel Short-Term Wind Speed Prediction Method Based on Hybrid Statistical-Artificial Intelligence Model with Empirical Wavelet Transform and Hyperparameter Optimization. Journal of Wind Engineering and Industrial Aerodynamics 2023, 239, 105499. [Google Scholar] [CrossRef]

- Cai, H.; Jia, X.; Feng, J.; Li, W.; Hsu, Y.-M.; Lee, J. Gaussian Process Regression for Numerical Wind Speed Prediction Enhancement. Renewable Energy 2020, vol. 146, 2112–2123. [Google Scholar] [CrossRef]

- Heaton, M. J.; Gelfand, A. E.; Vecchia, D. J.; Guinness, J. R. A Case Study Competition Among Methods for Analysing Large Spatial Data. Journal of Agricultural, Biological and Environmental Statistics 2019, vol. 24(no. 3), 398–425. [Google Scholar] [CrossRef] [PubMed]

- Wang, B.; Yan, L.; Rong, Q.; Chen, J.; Shen, P.; Duan, X. Dynamic Gaussian Process Regression for Spatio-Temporal Data Based on Local Clustering. Chinese Journal of Aeronautics 2024, vol. 37(no. 12), 245–257. [Google Scholar] [CrossRef]

- Sigauke, C.; Chandiwana, E.; Bere, A. Spatio-Temporal Forecasting of Global Horizontal Irradiance Using Bayesian Inference. Applied Sciences 2023, vol. 13(no. 1), 201. [Google Scholar] [CrossRef]

- Chen, Y.; et al. Hybrid Forecasting Method for Wind Power Integrating Spatial Correlation and Corrected Numerical Weather Prediction. Applied Energy 2021, vol. 293, 116951. [Google Scholar] [CrossRef]

- Smith, J.; et al. Probabilistic Wind Power Forecasting for Newly-Built Wind Farms Based on Multi-Task Gaussian Process Method. Renewable Energy 2023, vol. 217, 119054. [Google Scholar] [CrossRef]

- Dimitrov, S.; Li, Q.; Sanchez, M. Toward Integrated Spatio-Temporal Wind Forecasting: Addressing Turbine Wake Effects. Wind Energy Science 2023, vol. 8(no. 1), 101–117. [Google Scholar]

- Wang, Y.; Hu, Q.; Meng, Z. Deep Learning-Based Wind Speed Forecasting with Temporal Feature Extraction. Renewable Energy 2022, vol. 189, 731–744. [Google Scholar] [CrossRef]

- CSIR. WASA Data Portal. Available online: https://wasadata.csir.co.za/wasa1/WASAData (accessed on 2025).

- Hopkins, B.; Skellam, J. G. A new method for determining the type of distribution of plant individuals. Annals of Botany 1954, vol. 18(no. 2), 213–227. Available online: https://academic.oup.com/aob/article-abstract/18/2/213/277917. [CrossRef]

- Meinshausen, N. Relaxed Lasso. Computational Statistics & Data Analysis 2007, vol. 52(no. 1), 374–393. [Google Scholar] [CrossRef]

- Williams, C. K. I.; Rasmussen, C. E. Gaussian Processes for Machine Learning; MIT Press: Cambridge, MA, 2006; Available online: http://www.gaussianprocess.org/gpml/.

- Gneiting, T.; Raftery, A. E. Strictly Proper Scoring Rules, Prediction, and Estimation. Journal of the American Statistical Association 2007, vol. 102(no. 477), 359–378. [Google Scholar] [CrossRef]

Figure 1.

Spatial distribution of station clusters.

Figure 1.

Spatial distribution of station clusters.

Figure 2.

Distribution of clusters by coordinates and elevation.

Figure 2.

Distribution of clusters by coordinates and elevation.

Figure 3.

Hierarchical cluster plot.

Figure 3.

Hierarchical cluster plot.

Figure 4.

The dendrogram.

Figure 4.

The dendrogram.

Figure 5.

Boxplot of the distribution of wind speed (in m/s) across all stations.

Figure 5.

Boxplot of the distribution of wind speed (in m/s) across all stations.

Figure 6.

Time series plot for all the stations.

Figure 6.

Time series plot for all the stations.

Figure 7.

Correlation plot for all the stations.

Figure 7.

Correlation plot for all the stations.

Figure 8.

Histogram of all the stations.

Figure 8.

Histogram of all the stations.

Figure 9.

Correlation plot.

Figure 9.

Correlation plot.

Figure 10.

Prediction plots for cluster 1 high performing stations.

Figure 10.

Prediction plots for cluster 1 high performing stations.

Figure 11.

Prediction plots for cluster 2 high performing stations.

Figure 11.

Prediction plots for cluster 2 high performing stations.

Figure 12.

Comparison of predictive performance between Linear and Hybrid GP models across clusters and validation sets.

Figure 12.

Comparison of predictive performance between Linear and Hybrid GP models across clusters and validation sets.

Table 1.

Comparison of wind speed forecasting methods.

Table 1.

Comparison of wind speed forecasting methods.

| Method |

Temporal Focus |

Spatial Awareness |

Uncertainty Quantification |

Computational Cost |

Key Limitations |

| NWP Models [1] |

Medium-long range |

Low |

No |

High |

Systematic errors, limited resolution |

| SARIMA [2] |

Short term |

None |

No |

Low |

Poor with non-linear data |

| Kalman Filters [3] |

Short term |

Low |

Limited |

Low-medium |

Linearity assumptions |

| ML/DL [4,5] |

Short-medium |

None-medium |

No |

High |

Black-box, site-specific, data-hungry |

| Bayesian Models [6,7] |

Short-medium |

None-medium |

Yes |

High-very high |

Computationally intensive, calibration needed |

| GPR [8,9] |

Short term |

Medium |

Yes |

Very high () |

Scalability issues |

| Hybrid Linear-GPR [9,10] |

Short term |

Medium |

Yes |

Medium-high |

Assumes uniform spatial benefit |

| Spatio-temporal GPR/Kriging [9,11] |

Short term |

High |

Yes |

Very high |

Data density requirements |

| Cluster-based Hybrid (Proposed) |

Short term |

High |

Yes |

Medium |

Regime identification, clustering sensitivity |

Table 2.

Station data with clusters.

Table 2.

Station data with clusters.

| Stn no |

StationCode |

StationName |

lon |

lat |

elev |

cluster |

| 1 |

WM1 |

Alexander Bay |

16.66441 |

28.60188 |

152 |

1 |

| 2 |

WM2 |

Calvinia |

19.36075 |

31.52494 |

824 |

1 |

| 3 |

WM3 |

Vredendal |

18.41992 |

31.73051 |

242 |

1 |

| 4 |

WM5 |

Napier |

19.69245 |

34.61192 |

288 |

1 |

| 5 |

WM6 |

Sutherland |

20.69124 |

32.55680 |

1581 |

2 |

| 6 |

WM7 |

Beaufort West |

22.55667 |

32.96672 |

1047 |

2 |

| 7 |

WM8 |

Humansdorp |

24.51436 |

34.10997 |

110 |

1 |

| 8 |

WM9 |

Noupoort |

25.02838 |

31.25254 |

1806 |

2 |

| 9 |

WM12 |

Eston |

30.52871 |

29.85026 |

770 |

2 |

| 10 |

WM19 |

Upington |

20.56833 |

27.72670 |

848 |

1 |

Table 3.

Summary statistics for sensor readings (stn1 to stn10).

Table 3.

Summary statistics for sensor readings (stn1 to stn10).

| Statistic |

stn1 |

stn2 |

stn3 |

stn4 |

stn5 |

stn6 |

stn7 |

stn8 |

stn9 |

stn10 |

| Min |

0.3818 |

0.2533 |

0.3869 |

0.2075 |

0.2406 |

0.6305 |

0.2344 |

0.4235 |

0.2112 |

0.6037 |

| 1st Qu. |

4.4349 |

3.3906 |

4.7480 |

4.9616 |

4.4436 |

4.6944 |

4.8155 |

5.3201 |

3.1822 |

4.4880 |

| Median |

7.1031 |

5.4118 |

7.5978 |

7.5278 |

6.1062 |

7.1340 |

6.7533 |

7.4886 |

4.8019 |

6.0382 |

| Mean |

7.5087 |

5.8444 |

7.8269 |

7.9377 |

6.6211 |

6.9727 |

7.0867 |

7.8101 |

5.0163 |

6.1670 |

| 3rd Qu. |

9.9906 |

7.5868 |

10.7213 |

10.9338 |

8.4525 |

8.9410 |

9.2498 |

10.0568 |

6.4848 |

7.6730 |

| Max |

21.6708 |

20.5868 |

23.9921 |

20.3895 |

18.5664 |

17.7714 |

20.0661 |

19.3474 |

18.4819 |

16.5804 |

Table 4.

Testing and Training Set Combinations (Grouped into 6C1–6C2 and 6C3).

Table 4.

Testing and Training Set Combinations (Grouped into 6C1–6C2 and 6C3).

Table 5.

Performance metrics for different station combinations (compact view).

Table 5.

Performance metrics for different station combinations (compact view).

| Combination |

NLPD |

PINAW |

PINAD |

RMSE |

MAE |

CRPS |

CVG |

(%) |

| 1 |

1.9772 |

0.2690 |

-0.0156 |

1.4581 |

1.1639 |

0.8857 |

0.9500 |

22.00 |

| 2 |

1.8799 |

0.2134 |

0.0367 |

1.2051 |

0.9906 |

0.7701 |

0.9500 |

92.05 |

| 3 |

1.8407 |

0.1984 |

-0.0194 |

1.2138 |

0.9260 |

0.7491 |

0.9500 |

67.55 |

| 4 |

2.0453 |

0.3163 |

-0.0301 |

1.6529 |

1.3192 |

0.9794 |

0.9500 |

22.35 |

| 5 |

1.9173 |

0.2382 |

0.0035 |

1.2164 |

0.9596 |

0.7881 |

0.9500 |

26.35 |

| 6 |

1.9280 |

0.3274 |

0.0339 |

1.3307 |

1.0546 |

0.8254 |

0.9500 |

24.35 |

| 1-2 |

2.1802 |

0.2640 |

0.0161 |

1.4966 |

1.2269 |

1.0074 |

0.9500 |

41.44 |

| 1-3 |

2.3404 |

0.4258 |

-0.0366 |

2.4553 |

1.9306 |

1.3918 |

0.9500 |

23.15 |

| 1-4 |

2.2288 |

0.3677 |

-0.0321 |

1.9697 |

1.5561 |

1.1681 |

0.9500 |

23.05 |

| 1-5 |

2.2467 |

0.3432 |

-0.0107 |

1.8504 |

1.4508 |

1.1399 |

0.9500 |

20.25 |

| 1-6 |

2.1970 |

0.3022 |

0.0059 |

1.6391 |

1.3080 |

1.0537 |

0.9500 |

18.50 |

| 2-3 |

2.1310 |

0.2640 |

0.0088 |

1.6682 |

1.3634 |

1.0251 |

0.9500 |

18.45 |

| 2-4 |

2.3002 |

0.4221 |

0.0162 |

2.2910 |

1.8898 |

1.3245 |

0.9500 |

20.40 |

| 2-5 |

2.1372 |

0.2512 |

0.0273 |

1.3396 |

1.0761 |

0.9412 |

0.9500 |

32.80 |

| 2-6 |

2.2779 |

0.3443 |

0.0768 |

1.9823 |

1.7042 |

1.2087 |

0.9500 |

32.35 |

| 3-4 |

2.1903 |

0.3035 |

-0.0388 |

1.8522 |

1.4662 |

1.1113 |

0.9500 |

53.75 |

| 3-5 |

2.1291 |

0.2397 |

-0.0206 |

1.3993 |

1.0840 |

0.9480 |

0.9500 |

36.75 |

| 3-6 |

2.1276 |

0.2310 |

0.0016 |

1.4057 |

1.0887 |

0.9475 |

0.9500 |

40.00 |

| 4-5 |

2.4069 |

0.5244 |

-0.0276 |

2.6420 |

2.1088 |

1.5004 |

0.9500 |

23.25 |

| 4-6 |

2.3480 |

0.4647 |

-0.0063 |

2.4049 |

1.9453 |

1.3858 |

0.9500 |

19.20 |

| 5-6 |

2.3853 |

0.5165 |

0.0261 |

2.5984 |

2.0662 |

1.4700 |

0.9500 |

26.70 |

| 1-2-3 |

2.6265 |

0.5341 |

0.0010 |

3.2953 |

2.6461 |

1.8635 |

0.9500 |

25.95 |

| 1-2-4 |

2.4450 |

0.4465 |

-0.0037 |

2.4452 |

1.9569 |

1.4516 |

0.9500 |

20.55 |

| 1-2-5 |

2.4384 |

0.3841 |

0.0179 |

2.1756 |

1.7505 |

1.3661 |

0.9500 |

18.95 |

| 1-2-6 |

2.4819 |

0.4118 |

0.0482 |

2.3775 |

1.9584 |

1.4631 |

0.9500 |

19.45 |

| 1-3-4 |

2.5240 |

0.5029 |

-0.0570 |

2.9707 |

2.3361 |

1.6811 |

0.9500 |

23.30 |

| 1-3-5 |

2.5704 |

0.5219 |

-0.0346 |

3.0764 |

2.4197 |

1.7442 |

0.9500 |

23.60 |

| 1-3-6 |

2.4954 |

0.4413 |

-0.0099 |

2.5856 |

2.0518 |

1.5300 |

0.9500 |

22.00 |

| 1-4-5 |

2.4914 |

0.4787 |

-0.0374 |

2.5171 |

1.9611 |

1.5019 |

0.9500 |

21.15 |

| 1-4-6 |

2.4247 |

0.3955 |

-0.0178 |

2.1086 |

1.6635 |

1.3356 |

0.9500 |

20.00 |

| 1-5-6 |

2.4990 |

0.4158 |

0.0050 |

2.2558 |

1.7919 |

1.4351 |

0.9500 |

26.40 |

| 2-3-4 |

2.4937 |

0.4780 |

-0.0137 |

2.9242 |

2.3500 |

1.6535 |

0.9500 |

20.95 |

| 2-3-5 |

2.3653 |

0.3322 |

0.0066 |

2.0923 |

1.6908 |

1.2898 |

0.9500 |

18.45 |

| 2-3-6 |

2.4715 |

0.4477 |

0.0390 |

2.8127 |

2.3023 |

1.6068 |

0.9500 |

24.30 |

| 2-4-5 |

2.5664 |

0.5905 |

0.0107 |

3.0813 |

2.5013 |

1.7589 |

0.9500 |

26.65 |

| 2-4-6 |

2.7089 |

0.6601 |

0.0401 |

3.5722 |

2.9373 |

2.0517 |

0.9500 |

25.00 |

| 2-5-6 |

2.5753 |

0.5879 |

0.0690 |

3.1232 |

2.5483 |

1.7855 |

0.9500 |

27.80 |

| 3-4-5 |

2.4724 |

0.4564 |

-0.0501 |

2.6810 |

2.0913 |

1.5440 |

0.9500 |

21.00 |

| 3-4-6 |

2.4396 |

0.3782 |

-0.0241 |

2.2505 |

1.7764 |

1.3871 |

0.9500 |

19.40 |

| 3-5-6 |

2.4585 |

0.4047 |

-0.0005 |

2.4462 |

1.9551 |

1.4616 |

0.9500 |

24.65 |

| 4-5-6 |

2.6000 |

0.5781 |

-0.0017 |

2.9612 |

2.3805 |

1.7331 |

0.9500 |

27.75 |

Table 6.

Testing and Training Set Combinations for cluster 2.

Table 6.

Testing and Training Set Combinations for cluster 2.

| No. |

Testing Set |

Training Set |

| 1 |

1 |

2, 3, 4 |

| 2 |

2 |

1, 3, 4 |

| 3 |

3 |

1, 2, 4 |

| 4 |

4 |

1, 2, 3 |

| 5 |

1, 2 |

3, 4 |

| 6 |

1, 3 |

2, 4 |

| 7 |

1, 4 |

2, 3 |

| 8 |

2, 3 |

1, 4 |

| 9 |

2, 4 |

1, 3 |

| 10 |

3, 4 |

1, 2 |

Table 7.

Model performance for LM and GPR across station combinations.

Table 7.

Model performance for LM and GPR across station combinations.

| s.index |

Model |

RMSE |

MAE |

CRPS |

PICP |

NLPD |

PINAW |

| 1 |

LM |

0.8198 |

0.6321 |

0.4530 |

0.9454 |

1.2241 |

0.1728 |

| |

GP |

1.1313 |

0.8616 |

1.3826 |

0.999 |

1.8957 |

0.507 |

| 2 |

LM |

0.8283 |

0.6421 |

0.4585 |

0.9474 |

1.2346 |

0.1852 |

| |

GP |

1.2536 |

1.003 |

1.3806 |

0.999 |

1.9227 |

0.5429 |

| 3 |

LM |

0.8199 |

0.6212 |

0.4495 |

0.9379 |

1.2241 |

0.1658 |

| |

GP |

2.1206 |

1.6249 |

1.2953 |

0.9506 |

2.1743 |

0.4679 |

| 4 |

LM |

1.2005 |

0.7890 |

0.5963 |

0.8946 |

1.8832 |

0.1708 |

| |

GP |

2.8746 |

2.4061 |

1.3586 |

0.9215 |

2.4990 |

0.5225 |

| 1,2 |

LM |

0.8202 |

0.6300 |

0.4521 |

0.9463 |

1.2245 |

0.1732 |

| |

GP |

2.4598 |

1.9836 |

1.5065 |

0.9024 |

2.6392 |

0.5685 |

| 1,3 |

LM |

1.5695 |

1.3962 |

1.0527 |

0.5676 |

2.8405 |

0.1596 |

| |

GP |

2.1250 |

2.6561 |

1.9941 |

0.8330 |

2.9306 |

0.5220 |

| 1,4 |

LM |

1.1129 |

0.7302 |

0.5433 |

0.9166 |

1.7300 |

1.1689 |

| |

GP |

2.7990 |

2.2896 |

1.7487 |

0.9099 |

2.7441 |

0.6694 |

| 2,3 |

LM |

0.8231 |